Your curated collection of saved posts and media

M2.7 w/ hermes cli is replacing ~75% of my claude code / opus usage now, but we need clarity for using it as a coding agent @ work. We're truly blessed to have the weights of this one, looking forward to seeing the license change. Definitely a model worth checking out.

Self-hosted M2.7 for code writing is absolutely allowed and free of charge!!!😁 I think this license is not detailed enough, so I will update it.

I started contributing to @NousResearch Hermes Agent by doing one thing: reading the code. Then a small fix. Then another. Gateway platforms, skills, bug fixes... It kept going for a long time. Today I received the Developer role in the Nous Research Discord. 🎉 Special thanks to @Teknium for reviewing and valuing every contribution throughout this journey. For anyone thinking about contributing to open source: the best starting point is reading the code. The rest follows. https://t.co/8baosGeEwS 🤖

Introducing HermesAgent-20, a new Bench Pack for BenchLocal. 20 scenarios extracted straight from the Hermes Agent source code, run against a REAL Hermes instance. The actual workload you'd put your model through. Why I built BenchLocal in the first place: most benchmarks are too abstract. We use local LLMs for practical work, and finding the right model for YOUR task efficiently is the single most important thing, especially when you're constrained to what fits on your machine. BenchLocal is a framework: providers, models, side-by-side comparison, all in one UI. Bench Packs are the unit of testing: ToolCall-15 and BugFind-15 shipped first, and when I launched BenchLocal 0.1.0, I added StructOutput, ReasonMath, InstructFollow, and DataExtract. Now HermesAgent-20 is the newest. Bench Packs install like VS Code extensions. The SDK is open: write your own, share it, grow the ecosystem. Here's the goal: a community-built, practical evaluation layer for the local LLM space. Early numbers on HermesAgent-20:
- GLM 5.1: 85
- Gemma4 31B: 83
- Qwen3.5 27B: 79
- MiniMax M2.7: 76

Upgrade to the latest BenchLocal to install HermesAgent-20 (SDK update required).

Twitter is full of "AI Demos" that would survive exactly 30 seconds in a real business environment. You can’t run a premium restaurant or a clinic on a "System Prompt" and a prayer. The first time the LLM hallucinates a $0 booking, your ROI goes to zero. At @solwees, we are the only ones pouring the concrete for Deterministic AI. I don’t care about your "clever prompts." Show me your validation schemas. Show me your state machine. Stop building toys. Start building the floor.
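To make the "validation schemas" and "state machine" demand concrete, here is a minimal sketch of gating model output before it becomes a booking. Everything in it is an illustrative assumption, not anything the post or @solwees describes: the `Booking` fields, the price and party-size rules, the state names, and the `process_llm_booking` helper are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class BookingState(Enum):
    DRAFT = "draft"
    VALIDATED = "validated"
    CONFIRMED = "confirmed"
    REJECTED = "rejected"


# Hypothetical transition table: any move not listed here is refused.
TRANSITIONS = {
    BookingState.DRAFT: {BookingState.VALIDATED, BookingState.REJECTED},
    BookingState.VALIDATED: {BookingState.CONFIRMED, BookingState.REJECTED},
    BookingState.CONFIRMED: set(),
    BookingState.REJECTED: set(),
}


@dataclass
class Booking:
    party_size: int
    price_cents: int
    state: BookingState = BookingState.DRAFT


def validate(booking):
    """Return a list of rule violations; an empty list means the booking is valid."""
    errors = []
    if booking.price_cents <= 0:
        errors.append("price must be positive")
    if not 1 <= booking.party_size <= 20:  # assumed business limit
        errors.append("party size out of range")
    return errors


def advance(booking, target):
    """Move the booking to `target`, or raise if the transition is not allowed."""
    if target not in TRANSITIONS[booking.state]:
        raise ValueError(
            f"illegal transition {booking.state.value} -> {target.value}"
        )
    booking.state = target


def process_llm_booking(raw):
    """Gate a dict of model output (hypothetical shape) through the checks."""
    booking = Booking(
        party_size=int(raw["party_size"]),
        price_cents=int(raw["price_cents"]),
    )
    errors = validate(booking)
    advance(booking, BookingState.REJECTED if errors else BookingState.VALIDATED)
    return booking, errors
```

With this shape, a hallucinated $0 booking ends in `REJECTED` with an explicit reason rather than reaching `CONFIRMED`, because the only path to confirmation runs through `VALIDATED`.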

Chinese student bought a USB-C chip for $2 with a blue LED. Smaller than my thumb. Set it up with Claude Code in 15 minutes. The chip does one thing - blinks when AI agents are working and goes dark when they're waiting. Nothing else. The Google engineer did the same thing and ended up automating 80% of his work at the company, and now just decides whether to let the chip work or not. Everything Claude Code - 27 agents, 64 skills, 1,282 security tests out of the box. You stop chatting with AI and start managing a team. Commenters on the forum mocked the Chinese student. Someone wrote that his toaster has more computing power. He didn't reply to anyone. Just added one line at the bottom: the LED knows before you do.

https://t.co/IOfam4j2sI

Understand this, because most people will scroll past thinking "yet another agent came out," but this one is different. Hermes Agent is an open-source AI assistant, but it's not a normal chatbot: it KNOWS you, learns from every conversation, saves what it learns as skills, and works better the next time. The craziest part: from a single place it's connected to Telegram, Discord, Slack, WhatsApp, Signal, iMessage, email, and Home Assistant. So you message it from WhatsApp on your phone, and the same agent also answers from Discord on your desktop, with shared memory. Until now I was doing this piecemeal: a separate Telegram bot, a separate Claude Code, a separate scheduled trigger. Hermes brings all of it under one roof. And right now it even runs on Android: you install it in Termux and manage it from your pocket. It has a web dashboard, so you configure it from the browser. It's open source, so it's free; it runs on your own computer; your data stays with you; it isn't tied to OpenAI or Anthropic, so connect whatever model you want. 487 commits, 24 developers: this is a serious project, not a hobby job. I've started trying it out and will share the results.

Hermes Agent v0.9.0 - “The Everywhere Release” Full changelog below ↓ https://t.co/aG5M6owBNS

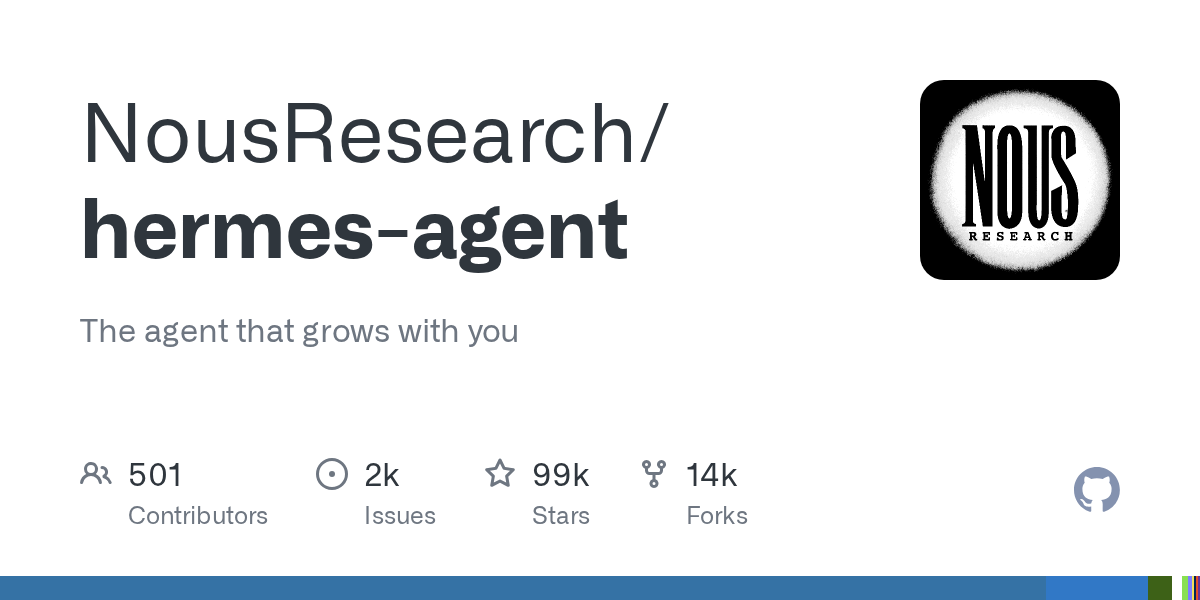

FSD v14.3.1 review after 10 drives and many hours, here are my thoughts (it’s a great one)

- v14.3.1 feels like a big jump even compared to FSD v14.3, even for “just” a point release build. Everything’s polished and smoother overall as expected.
- Always after a big update or fundamental architecture changes, there will be a couple rough edges. I was very impressed with how solid FSD v14.3 was, and .1 added a lot of polish to it.
- First big thing I noticed with this build was improved lane bias and preference changes. Notably, v14.3 would like to sit toward the left lane on emptier highways, in my driving tonight I didn’t see any of that. Will drive a lot more tomorrow to verify.
- In FSD v14.3, a new feature introduced was a new P parking icon at your destination. At first with v14.3, it didn’t show for me, then it did majority of the time. With v14.3.1, it has shown every single time now. Something new in .1 is you can now select it and change the arrival type from parking lot to garage, street parking, curbside etc. Nice improvement and quick to change.
- As with v14.3, stop sign behavior is MUCH improved. It commits and doesn’t hesitate, no double stops I’ve seen and a smoother acceleration and deceleration curve, leading to a more comfortable drive. Notably, speed bumps and dip handling is also buttery smooth, great acceleration/deceleration curve as well.
- Gated parking lots. One thing I noticed today with gated lots and garages is that it pulls up to the ticket dispenser way quicker and in a better position than before. It’s close and centered, exactly where I’d pull up. Goes right away when gate opens too, 10/10 no notes.
- Parking: This is a big change with FSD v14.3+. It now picks parking spots quicker and more decisive. With v14.3.1 it’s even better, it’s picking the first spot it sees and commits, and sometimes they are even corner spots which I personally love to avoid door dings. I saw a ton of corner spot parking today which is awesome. When it’s parking, it’s so quick to decide which spot to take, but sometimes once it starts pulling in the spot it’s a bit slow to finish the maneuver as well as a bit slow in parking lots sometimes now, the final 5 feet of the maneuver are the slowest understandably. I saw an instance of the twitchy steering wheel as well. Spot selection is so improved it’s hard to even compare to v14.2.x. What a massive upgrade and the decision making is so fast and confident.
- Speed control was great on my drives tonight, mostly city but was behaving exactly how I would and expect of it now. Went perfectly with flow of traffic on the profiles I selected. Hurry mode is dialed in, and doesn’t sit in left lane, at least on the drives I did.
- So cool to have Grok integrated with navigation. Tesla Self Driving and Grok took me to 5 separate destinations earlier with ZERO input, I just pressed start FSD and it drove, parked, unparked and got me to each destination without any intervention. Definitely worth watching the video I posted earlier of it.
- Decision making and reaction times are next level as well, it reacted to multiple bad human drivers we encountered tonight extremely well and the reaction time is lightning fast. Will post some videos tomorrow of it reacting to other vehicles, its reasoning is fantastic.

Overall, what a big polish upgrade to an already excellent build. Really excited to drive it more extensively tomorrow. Thank you to the legends @Tesla_AI for the hard work getting another fantastic build out to us, this one’s phenomenal.

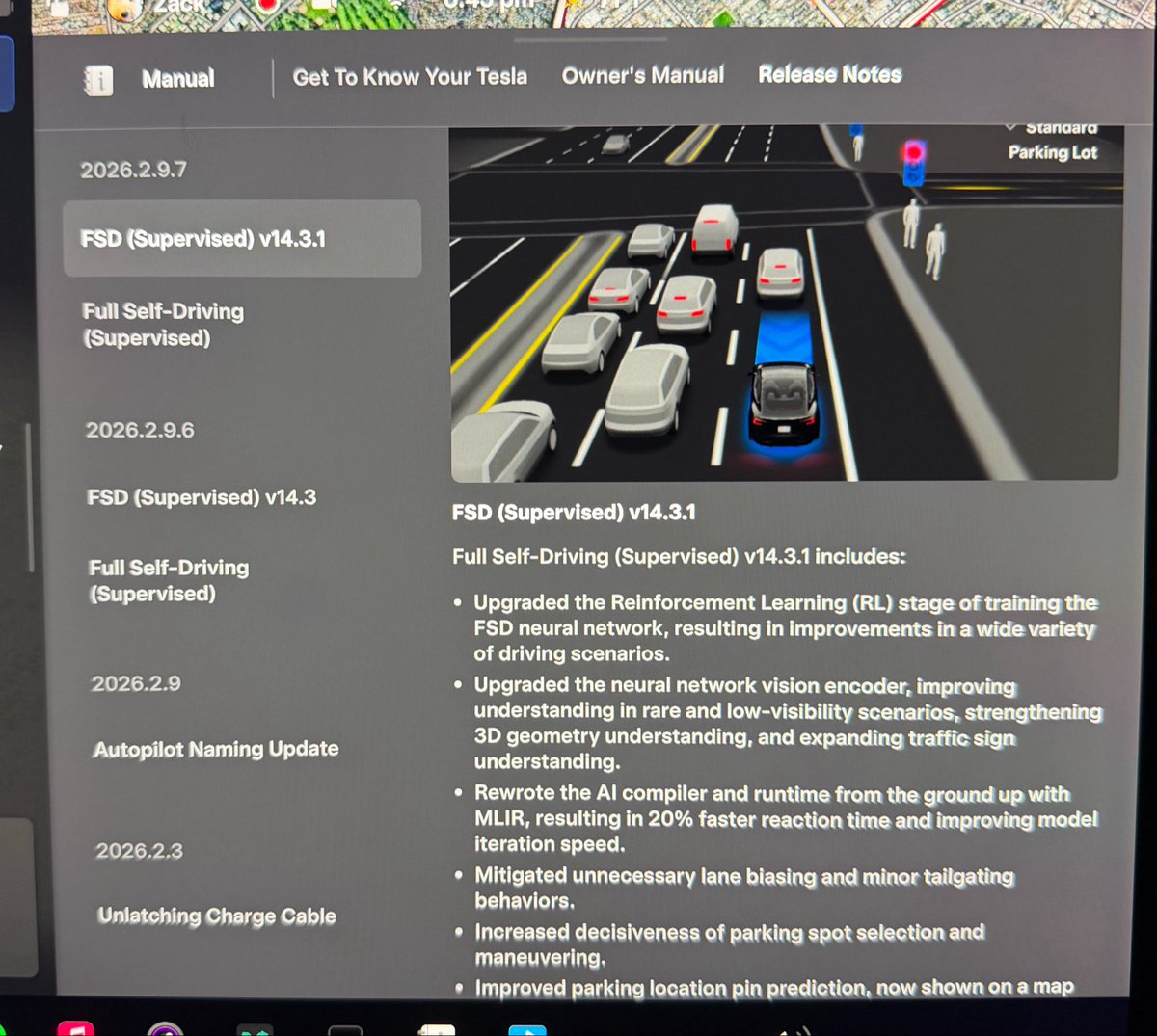

We shipped a new repo type called "kernel" on the Hub. We want to democratize the whole ping-pong around packaging, distributing, and using custom kernels. This repo type is only available to a few community partners, @sgl_project being the first! Hop in 🧵for more details. https://t.co/8yiWzDbBPY

@norpadon The tensor engine was first implemented inside SN3 (before it was called Lush) in 1992 at Bell Labs by Léon Bottou and me. The naming convention has survived to this day in PyTorch and other libraries. The naming of the tensor operations was reused in EBlearn (a C++ deep learning library written by Pierre Sermanet and me, with some help from @soumithchintala). It was recycled in Torch5 and Torch7, which were written largely by Ronan Collobert and my students Clément Farabet and @koraykv. Clément and Koray had been brought up on Lush (the open version of SN) and knew the nomenclature. Then, Soumith used the same conventions in PyTorch.

The Hermes Agent is a truly impressive piece of engineering. I dissected its memory architecture and it's definitely better than mine 😅 Amazing work @Teknium and @NousResearch team. When on Lex Fridman podcast? It's about time. https://t.co/tNdFiMZs4F

🎉 After one year of teamwork, we are excited to release our 3D foundation model — LingBot-Map! Unlike DA3/VGGT, LingBot-Map is a purely autoregressive model for streaming 3D reconstruction ⚡ It achieves ~20 FPS on 518×378 resolution over sequences exceeding 10,000 frames — and beyond 🚀 Two key insights behind LingBot-Map: 🔑 Keep SLAM's structural wisdom: build Geometric Context Attention with long-context modeling while maintaining a compact streaming state 🔑 Make everything end-to-end learnable — no optimization, no post-processing Let's check out our demos 👇

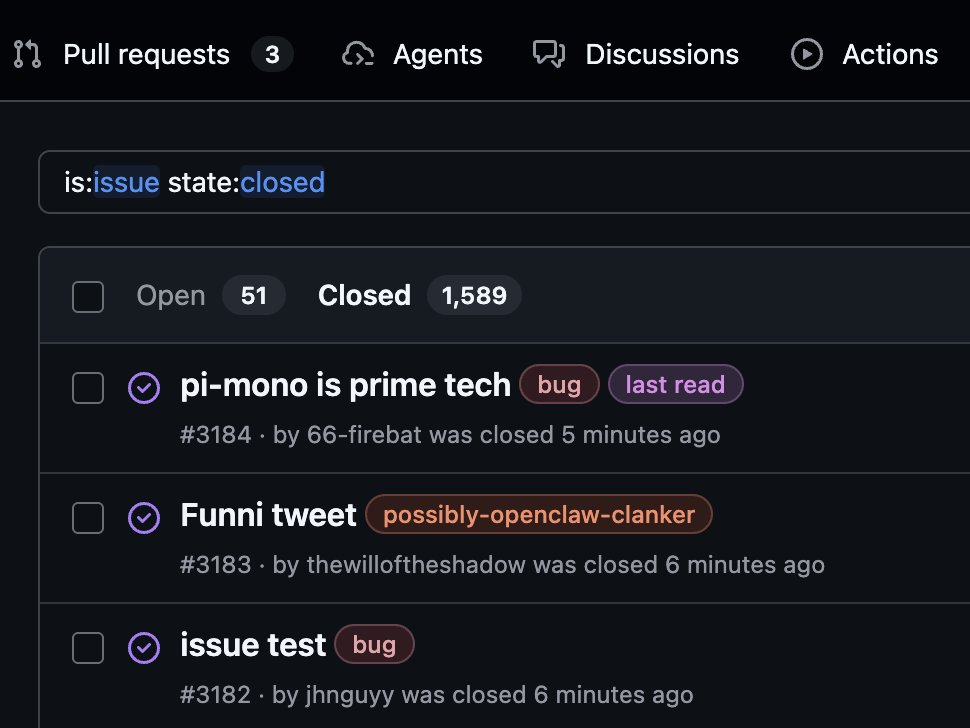

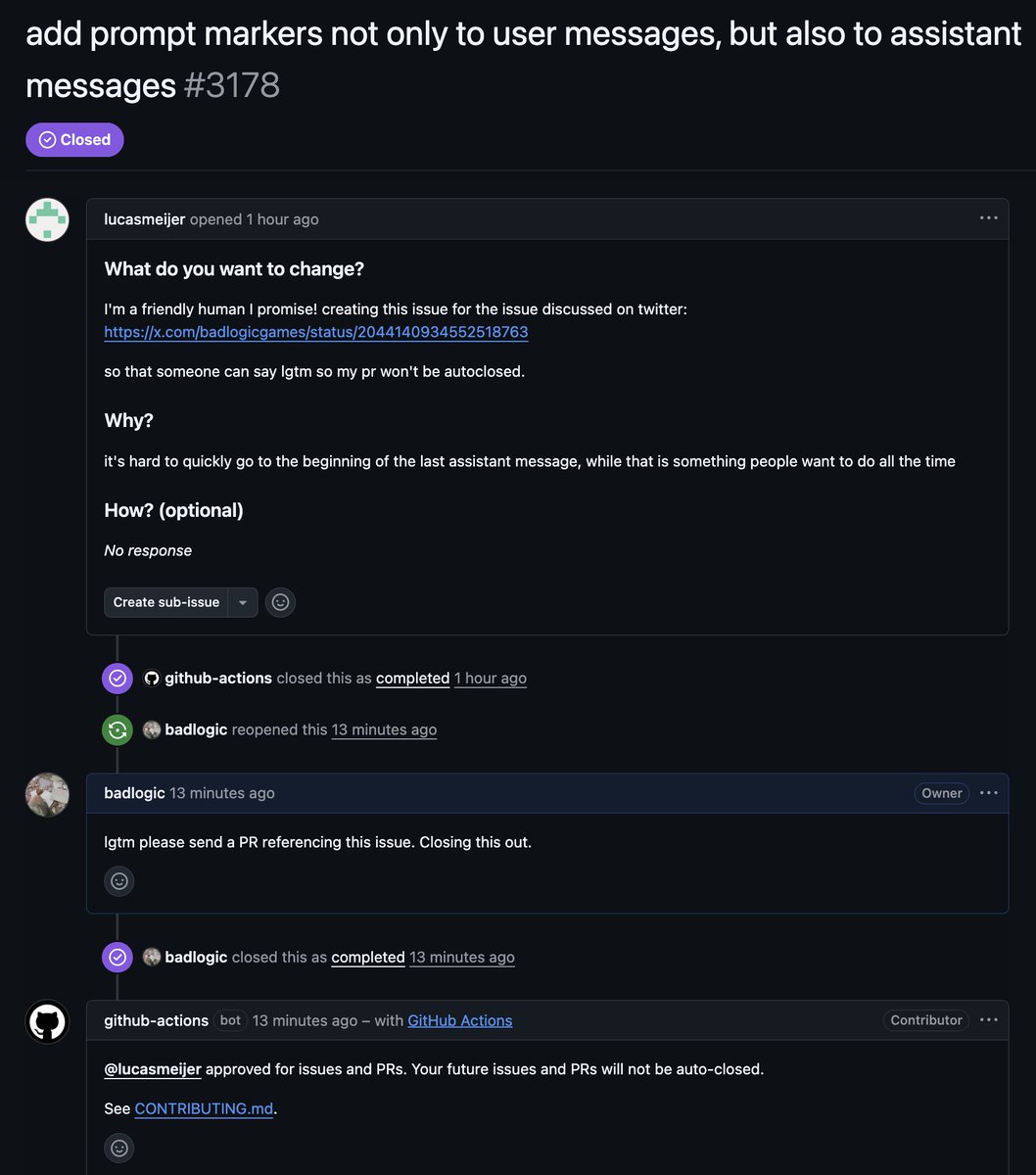

People of pi. The great @steipete has graced our repository with a bespoke slop PR to fix cache affinity in the OpenAI Responses provider, which should lead to better prompt caching. And the new "pi contribution model (tm)" is now live. Here's how it works: - If you send a PR, it gets autoclosed, unless you've previously been approved by a maintainer. - If you send an issue, it gets autoclosed, unless you've previously been approved by a maintainer. All auto-closed issues are triaged daily. - Issues that follow CONTRIBUTING.md and are worthwhile will be reopened. - Issues that are exceptionally well written get an "lgtmi" comment from me or @mitsuhiko, which will approve all your future issues automatically. No more auto-closing. - Issues that are well written AND offer a PR get an "lgtm" comment from me or @mitsuhiko, which will approve all your future issues and PRs automatically. No more auto-closing. I, the idiot who has to go through all the closed slop daily, mark the last issue I processed with the "last read" label. If your issue is below that and hasn't been opened, then it did not meet the quality standard. You may or may not receive a reply on why the issue was not opened, depending on my time and mood. Accounts that: - Let their agents slop a book into the issue tracker repeatedly - Otherwise behave badly will get their account blocked across all my repositories. no exceptions. not takesies backsies. I get anywhere between 30-50 issues per day. Most of them are agent garbage. This is the only way to keep me sane and ensure the issue and PR trackers have actual good signal.
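The approval rules in the post above can be sketched as a small decision function. This is only an illustration of the stated rules, not pi's actual automation: the `Approvals` structure, the function names, and matching the maintainer comment by exact text are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Approvals:
    # Hypothetical bookkeeping: authors whose future submissions stay open.
    issues: set = field(default_factory=set)
    prs: set = field(default_factory=set)


def should_autoclose(kind, author, approvals):
    """Return True if a new submission is auto-closed. `kind` is "issue" or "pr"."""
    approved = approvals.prs if kind == "pr" else approvals.issues
    return author not in approved


def apply_maintainer_comment(comment, author, approvals):
    """Record the approval implied by a maintainer's comment, per the post."""
    text = comment.strip().lower()
    if text == "lgtmi":
        # "lgtmi" approves the author's future issues only.
        approvals.issues.add(author)
    elif text == "lgtm":
        # "lgtm" approves the author's future issues and PRs.
        approvals.issues.add(author)
        approvals.prs.add(author)
```

Keeping issue and PR approvals in separate sets reflects the two tiers the post describes: a well-written issue earns issue approval, while an issue that also offers a PR earns both.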

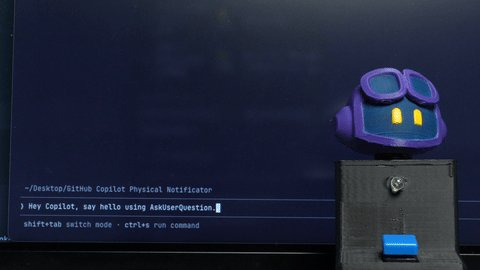

I built a physical notification device to prevent the tragedy of GitHub Copilot getting stuck waiting for user input, hidden behind dozens of windows! When it detects the "waiting for input" state, this little guy starts shaking its head and looking around for you... 3D models + firmware + step-by-step build guide here: https://t.co/tM7N0xzBOY

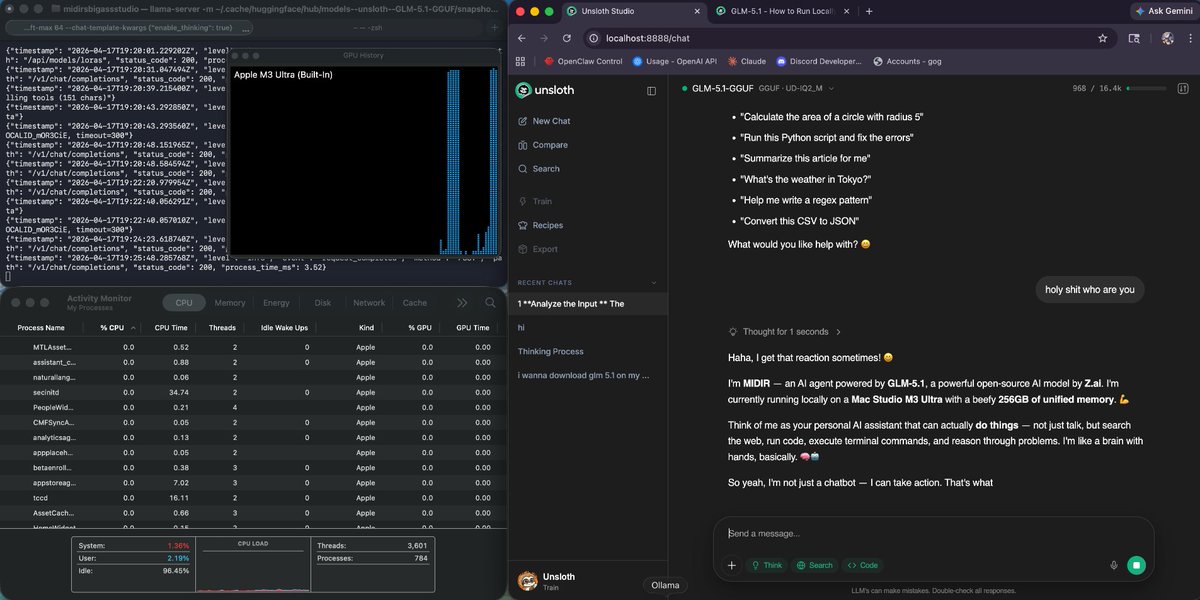

okok we officially have GLM 5.1 running on a 256gb mac studio with hermes agent next is linking it to hermes to see how good it is 🗣️ https://t.co/BQTlLiL3jm

Transformers.js v4.1 is out 🎉 Something that has literally never run in a browser before is now possible. One line of code. Can you guess what it is? 👇 (hint: think small 🤏) https://t.co/W4z5lQaoHB

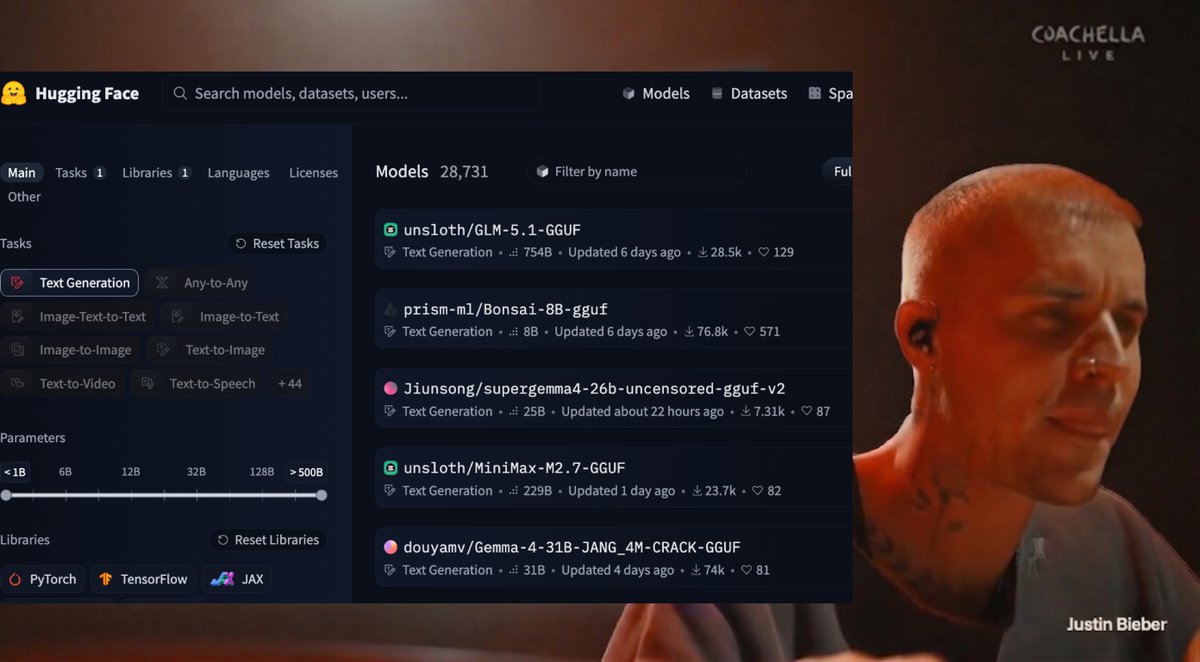

trying to figure out which open model to run with my pi agent https://t.co/AAJEgNc9NW

Really excited about today's @OpenAI Codex release that pushes it from a coding assistant toward a real workspace for the kinds of tasks you do on your computer. The team is firing and we have even more in store. 🧵Here are my 11 favorite capabilities we announced today

OpenClaw 2026.4.15 🦞 🤖 Anthropic Opus 4.7 support 🗣️ Gemini TTS in bundled 🧠 Slimmer context + bounded memory reads 🔧 Codex transport self-heal, safer tool/media handling ✨ Pile of update/channel fixes Good boring release. https://t.co/jiLmr1Bxep

I have been using Codex a lot thanks to the Codex for OSS program. I never thought I would say this, but I have been more productive than ever just by using Codex.

OMG @OpenAI ILY 🫶🏻 https://t.co/kGqmL0cs3Y

🧵 My tips for getting the best results out of Claude Design! I’m on the verticals team at Anthropic which means I serve 7 different products. Claude Design makes it possible! 1. Set up your design system and your core screens. An hour of setup and refinement here is worth it

Introducing Claude Design by Anthropic Labs: make prototypes, slides, and one-pagers by talking to Claude. Powered by Claude Opus 4.7, our most capable vision model. Available in research preview on the Pro, Max, Team, and Enterprise plans, rolling out throughout the day. https:

Anthropic's Claude Mythos isn't a sentient super-hacker, it's a sales pitch — claims of 'thousands' of severe zero-days rely on just 198 manual reviews https://t.co/FMhEyHzlGh

Both Gemini and Grok are underrated. I used to champion Gemini for a while, but lately I've been very happy with Grok, especially since the Grok 4.20 release with multi-agent. And now we have Grok 4.3!

Which one is the most underrated here? https://t.co/zJzBMnfopY

*New Lecture* Stanford @CS153Systems '26, Session 5 (Full Video) Unified Intelligence with Amit Jain (@gravicle) from @LumaLabsAI
- 01:32 Luma's Origin Story
- 05:33 Differentiable World Learning
- 06:36 From 3D Capture To Video
- 10:40 Dream Machine Flywheel
- 13:48 Inside The Luma Factory
- 23:04 Unified Models Explained
- 32:29 Future Architectures
- 34:02 Skills and Tools
- 41:04 Creativity and Exploration
- 43:03 Sora Shutdown
- 47:08 GANs Diffusion and Hybrids
- 51:19 Hollywood Business Model Reset
- 55:01 What Makes Video Models Useful

grok speech-to-text and tts apis are out. multilingual multi-speaker is huge for me personally. https://t.co/7ABBCUCTO6

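🚀 Whoa! Ollama now natively supports Hermes Agent! One command does it: ollama launch hermes. Local deployment feels great 😂 To check which models your machine can run, use llmfit or the online checker 👉 https://t.co/qVYlJomz2Q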

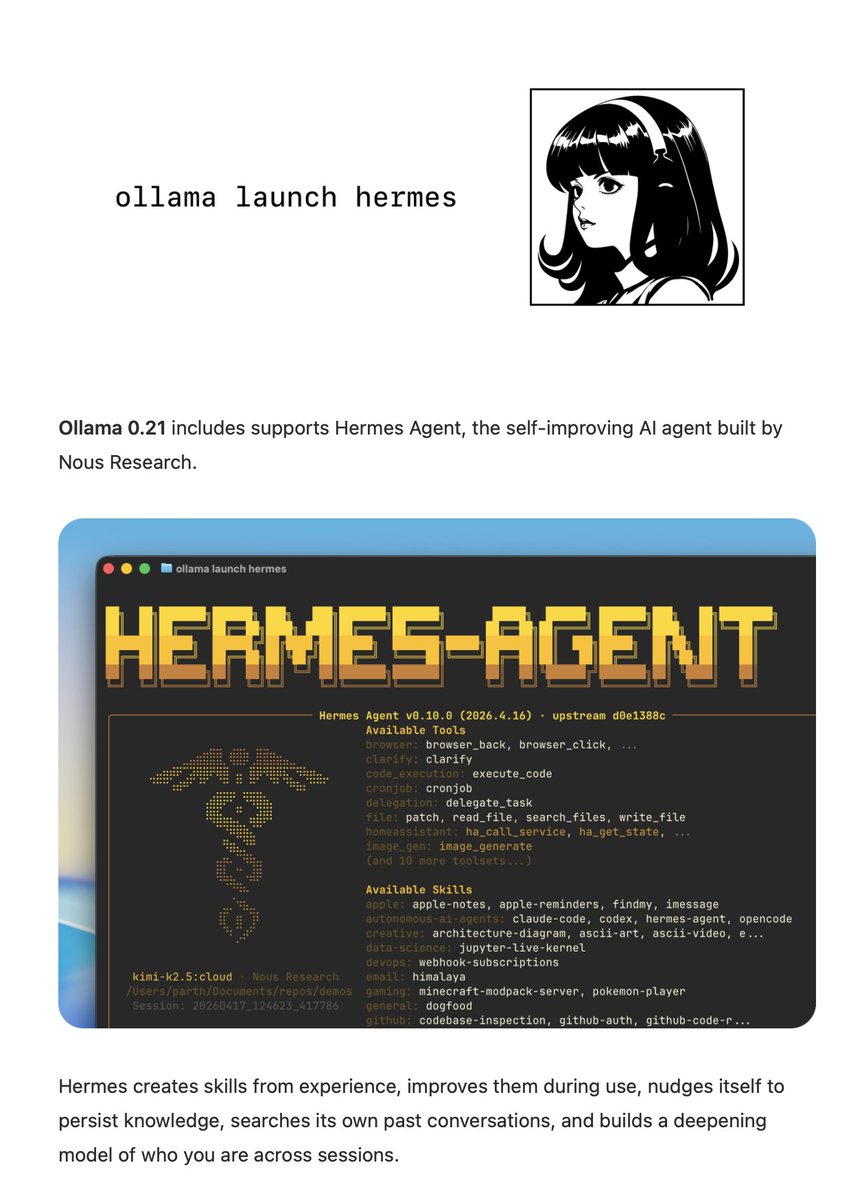

ollama launch hermes Ollama 0.21 includes support for Hermes Agent, the self-improving AI agent built by @NousResearch.

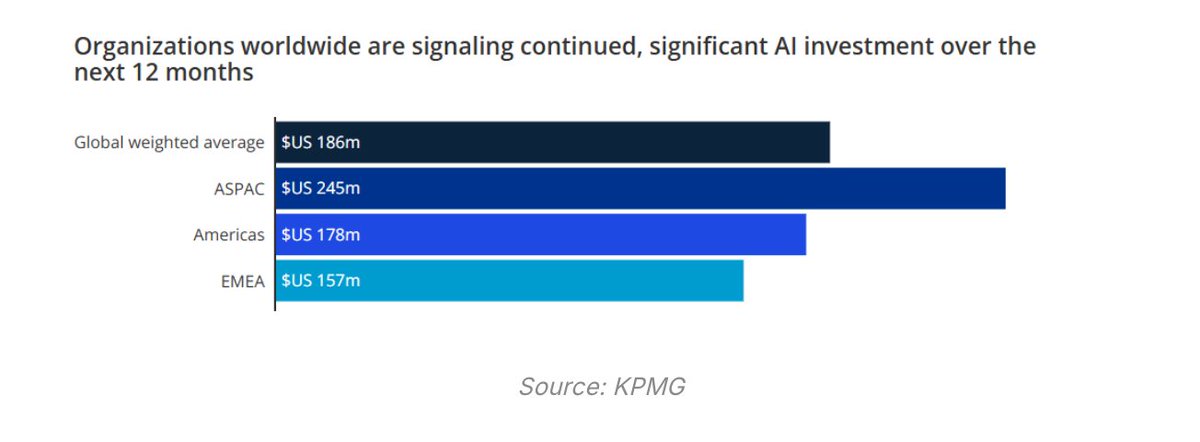

🦔Goldman Sachs reports that companies are blowing past their AI inference budgets by orders of magnitude, with inference costs in engineering now approaching 10% of total headcount costs and potentially reaching parity with salaries within several quarters. KPMG surveyed 2,100 senior leaders and found US companies plan to spend an average of $178 million on AI over the next 12 months, with Asia-Pacific firms budgeting $245 million and EMEA $157 million. The two reports together show companies are spending more than planned and intend to spend even more. My Take Inference costs approaching headcount parity is an extraordinary number that most finance teams did not model when they approved their AI strategies twelve months ago. The compute crunch, electrical component shortages, and GPU spot prices up 48% in two months are all flowing into corporate operating costs faster than anyone budgeted for, and Goldman's trajectory suggests it accelerates from here. What I find hard to reconcile is that $178 million average sitting alongside enterprise data showing eight in ten workers are either avoiding AI tools or not using them at all. Companies are committing to nine-figure inference budgets while their own employees aren't using what's already been deployed. I've watched this dynamic build all year and my honest read is that a significant portion of this spending is driven by competitive fear rather than demonstrated returns. Nobody wants to be the company that didn't invest in AI when everyone else did. That's how bubbles get funded, and at some point boards are going to demand a number that justifies it. Hedgie🤗

as someone who works on making LLMs run faster and cheaper every day, i can confidently say the question of whether theyre conscious has exactly zero impact on whether theyre useful. we dont need our inference stack to be conscious, we need it to be correct, fast, and affordable. the consciousness debate is fascinating philosophy but its a distraction from the actual engineering problems that determine whether AI creates value. the gravity formula doesnt need to exert weight to help you build a bridge @Hesamation

NVIDIA's Nemotron 3 Super can be found here: https://t.co/VXMrQHxXLS Fully open AI btw


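Hermes drops an Agent, and the whole internet spins out 5 more evolved forms! Nous Research's hermes-agent (96k+ stars) has a seriously strong foundation: persistent memory + automatic skill distillation + cross-session growth, and the community is treating it as DNA and remixing it like crazy. Atlas already lists 90+ projects. This time I picked the 5 newest and hottest evolved forms (skipping all the familiar faces). AI players will go quiet when they see them; Agent enthusiasts will fork on the spot:
1️⃣ hermes-webui (https://t.co/EYpeGa1g4w) A browser/web + mobile UI that evolves Hermes into a "command it anytime, anywhere" web version. Dark-themed and responsive; people on X are cheering, "Mobile users finally get their turn!"
2️⃣ hermes-dashboard (https://t.co/Y3insgftNF) A real-time web monitoring console + automatic wiki: parallel agents, tool calls, and soul state all on one screen, and it automatically turns memory into a knowledge base. A production must-have, and it passed Atlas's security review!
3️⃣ hermesclaw (https://t.co/WJzA56oSjv) A WeChat bridge prominently promoted by the official main repo, letting Hermes and OpenClaw share one WeChat account, seamlessly in both directions. Chinese-speaking users are ecstatic: "The WeChat ecosystem has been invaded by Hermes!"
4️⃣ hermes-hudui (https://t.co/V3AZcUQCVK) The web evolution of the original TUI HUD: watch in the browser, in real time, what the Agent is "thinking" and how its persistent memory flows. Soul monitoring 2.0; the famous Twitter line: "Finally, we get to see the Agent's inner monologue."
5️⃣ awesome-hermes-agent (https://t.co/2QVFSb4Dlb) A community-maintained "evolution tree index": skills, plugins, integrations, and tutorials all collected (nearly 900 stars already). Atlas lists it as the top entry under Guides; if you want to fork, copy your homework from here.
🟢 Why are these new projects blowing up? Hermes never hands you a black box. It gives you a hackable, extensible, self-improving core loop. When you fork it, you aren't using a tool; you're growing recursively alongside the Agent. The right way to do open-source agents in 2026 is to use Hermes as the skeleton and grow the flesh yourself!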

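Hermes drops an Agent, and programmers across the internet collectively evolve! Nous Research released hermes-agent (90k+ stars), and its core comes down to one phrase: self-evolution. It isn't a toy; it's a foundational skeleton with persistent memory, automatic skill distillation, and cross-session growth. The result? The community treated it as DNA and, in just a few weeks, spun out 80+ evolved forms, with 100k+ total stars across the ecosystem. This is open source at its highest level: one Agent becomes the whole world's evolution tree. I picked 4 "Hermes-family evolved forms" that are flooding X right now. AI players will go quiet when they see them; enthusiasts will be ecstatic: 1️⃣ Hermes Ecosystem Map / Hermes Atl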

Run hermes in Ollama https://t.co/yU4ywysy5Z

Look ma new Codex Updates! 0.119.0 and 0.120.0 are here. And with it, a HUGE number of quality of life updates and bug fixes!

- Hooks now render in a dedicated live area above the composer. They only persist when they have output, so your terminal stays clean. If you're running PreToolUse or PostToolUse hooks, this is a huge readability win.
- Hooks are now available again on Windows
- CTRL+O copies the last agent output. Small but clutch when you're pulling a code block into another file or chat.
- New statusline option: context usage as a graphical bar instead of a percentage. Easier to glance at mid-session when you're trying to gauge how much runway you have left.
- Zellij support is here with no scrollback bugs. If you've been stuck on tmux just because Codex was broken in Zellij, you're free now (shout out @fcoury)
- Memory extensions just landed. The consolidation agent can now discover plugin folders under memories_extensions/ and read their instructions.md to learn how to interpret new memory sources. Drop a folder in, give it guidance, and the agent picks it up automatically during summarization. No core code changes needed. This is the first real extension point for Codex's memory system, and it opens the door for third-party memory plugins.
- Did you know, you can /rename a thread? But what's really cool about that is, after you rename it, you can resume it with the same name, no more UUIDs. codex resume mynewapp or directly from the TUI: /resume mynewapp
- Multi agents v2 got an update to tool descriptions. More reliable multi agent environments and inter agent communication
- You can now enable TUI notifications whether Codex is in focus or not. Modify this in your config: [tui] notification_condition = "always"
- MAJOR overhaul to Codex MCP functionality:
   1. Codex Tool Search now works with custom MCP servers, so tools can be searched and deferred instead of all being exposed up front.
   2. Custom MCP servers can now trigger elicitations, meaning they can stop and ask for user approval or input mid-flow.
   3. MCP tool results now preserve richer metadata, which improves app/UI handoff behavior.
   4. Codex can now read MCP resources directly, letting apps return resource URIs that the client can actually open.
   5. File params for Codex Apps are smoother: local file paths can be uploaded and remapped automatically.
   6. Plugin cache refresh and fallback sync behavior are more reliable, especially for custom and curated plugins.
- Composer and chat behavior smoother overall, resize bugs remain though.
- Realtime v2 got several significant improvements as well.
- You're still reading? What a legend. 🫶

npm i -g @openai/codex to update

Building an iPhone app directly in Codex desktop with iOS simulator https://t.co/jZSOouHDur