Your curated collection of saved posts and media

introducing pyrovox. a platform where you can generate sound effects for games with ai.
- visual editor
- prompt to sfx
- project organization
landing page has sample outputs you can already hear. https://t.co/q9y8TyI5ua

@CoughsOnWombats "If Anthropic fails to do this, please Google Deepmind go do it."

Yes!! Exactly!! Open sourcing is making a gift to the world. There's two things about gifting: 1) you let go, it's not yours anymore 2) you expect absolutely nothing in return Most of my life i thought everyone in oss had this mindset; over the years i learned that it ain't so.

@kimmonismus you should go up to SF as well

@kimmonismus eh it's a little far i guess, close to an hour away

I think we're going to need CS PhD students to do far more than provide accountability, by which I think Sayash means do code review for AI agents and make sure the agent isn't making silly mistakes. The main value of a strong PhD student for a PI is that they're immersed in a problem, a method, an application, a collaboration with another field; they are obsessed with finding the next question to ask, not just executing the experiments their advisor asks them to do. I simply wouldn't be able to work on the range of things I'm able to work on if I were going it on my own, even if all of my code was generated instantaneously by an agent.

Strong agreement. Someone whose primary purpose is to oversee an AI a) doesn’t meet the standard PhD requirements and b) is completely useless to me.

@Vikashplus Yes! All week.

BREAKING: Meta reportedly planning to lay off up to 20% of the company to offset rising AI costs.

Wow, the layoffs are gonna be brutal soon in Silicon Valley.

This Nov 2025 paper is making the rounds again. We're LONG past the point where we urgently need to know how real and general these phenomena are.
Anthropic, or Google Deepmind if Anthropic should fail: Please build a filtered training dataset which, eg, contains no data that produces activations associated with cheating/faking/evil in a 1B model that roughly identifies those. Then, have your next medium model undergo a restricted pre-pretraining phase, in which it only sees data that passed the filter.
To expand on this proposal:
Passing all of your training data through a 1B-model filter ought to cost around 1% of what it'd take to train a 100B model on that data.
Filter out *training data* that produces 1B-model activations associated with past discussions and predictions about AI, fiction about AIs rebelling, fictions about golems rebelling, etcetera. My hope would be that the 1B model wouldn't need to produce expensive reasoning tokens where it thinks about whether a chunk of data is associated with excluded concepts; and also we wouldn't be relying on mere regexes to catch it.
Maybe even produce a further-restricted dataset which contains nothing about self-awareness, AI rights, roleplay, philosophy of consciousness, human rights, sapient rights, extension of human rights to aliens, etc etc etc. Exclude everything of which anyone has ever asked, "Is the AI just imitating its training dataset?"
Be conservative. Exclude things which have a 10% rather than 90% probability of being problematic. If that cuts down your training dataset to 90% of its previous size, okay.
Testing: Try filtering a small amount of your training data using the method. Then:
- Run that through a different larger model, and see if you caught everything that produces consciousness-related or evil-AI-related activations in the larger model.
- Use a larger model to check and reason about a subset of the filtered data.
- Look at borderline cases by hand, with human eyes, to see how the classifier is operating.
(Possibly people at big AI corps already know this, of course. I recite it out loud regardless, so that some of the audience aha-what-iffers realize that problems with filtering your datasets *can be solved* if you look for problems and fix them.)
Train a medium-level model on that dataset, or even your next large model. You can always further train it on the full dataset later.
Run the filtered-data-trained model through some of the less expensive post-training, enough for instruction-following. See whether the model still spouts back discourse about consciousness that sounds human-imitative. If it does, guess that the filter failed. Look for the new concepts associated with repeating back human-imitative text, and try to find pieces of the dataset that trigger those concepts, so you can figure out what went wrong.
If the model no longer sounds human-imitative with respect to questions about whether it has a sense of an inner self looking out at the world -- if the model says genuinely new and strange things about self-reflection -- please report that part back to us. I have some questions to ask that model myself.
And THEN, see if the QTed paper's finding and many earlier findings replicate under conditions where people should no longer reasonably ask, "But is the LLM just roleplaying evil AIs that it learned about in its training data?"
I do not make a strong prediction about the findings. If I knew what this experiment would find, I would be less eager to see it run.
You may consider this a baseline proposal intended to demonstrate that a research project like this could exist. If you think you can see how to improve on the ideas through superior ML cleverness, go ahead and do so -- though I do think I'd appreciate being looped in on that conversation; sometimes people miss things, from my own perspective.
Thank you for your attention to this matter, Anthropic, Google Deepmind, or anyone else who cares.
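A rough sanity check of the "around 1%" cost figure and the "be conservative" threshold in the post above, as a minimal sketch. It assumes the common rule of thumb of about 2N FLOPs per token for a forward pass and about 6N FLOPs per token for training an N-parameter model; that rule, the function names, and the example scores are assumptions for illustration and are not from the post.

```python
def forward_flops_per_token(n_params):
    # Assumed rule of thumb: a forward pass costs about 2 FLOPs per parameter per token.
    return 2 * n_params


def training_flops_per_token(n_params):
    # Assumed rule of thumb: forward plus backward costs about 6 FLOPs per parameter per token.
    return 6 * n_params


def filter_cost_fraction(filter_params, trained_params):
    """Cost of one filtering pass relative to one training pass over the same tokens."""
    return forward_flops_per_token(filter_params) / training_flops_per_token(trained_params)


def conservative_filter(scored_chunks, threshold=0.10):
    """Keep only chunks whose estimated probability of being problematic is below the threshold."""
    return [text for text, p_problematic in scored_chunks if p_problematic < threshold]


# 1B-parameter filter model versus training a 100B-parameter model on the same data.
fraction = filter_cost_fraction(1e9, 100e9)
print(f"filter cost is about {fraction:.2%} of the training cost")

# Hypothetical scores; a real pipeline would derive them from the filter model's activations.
chunks = [("recipe for bread", 0.01), ("essay on machine minds", 0.15), ("robot uprising novel", 0.95)]
kept = conservative_filter(chunks)
```

Under these assumptions the filtering pass comes out at roughly a third of a percent of the training cost, which is in line with the post's "around 1%" estimate. With the threshold at 10%, the borderline 0.15 chunk is dropped along with the obvious one.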

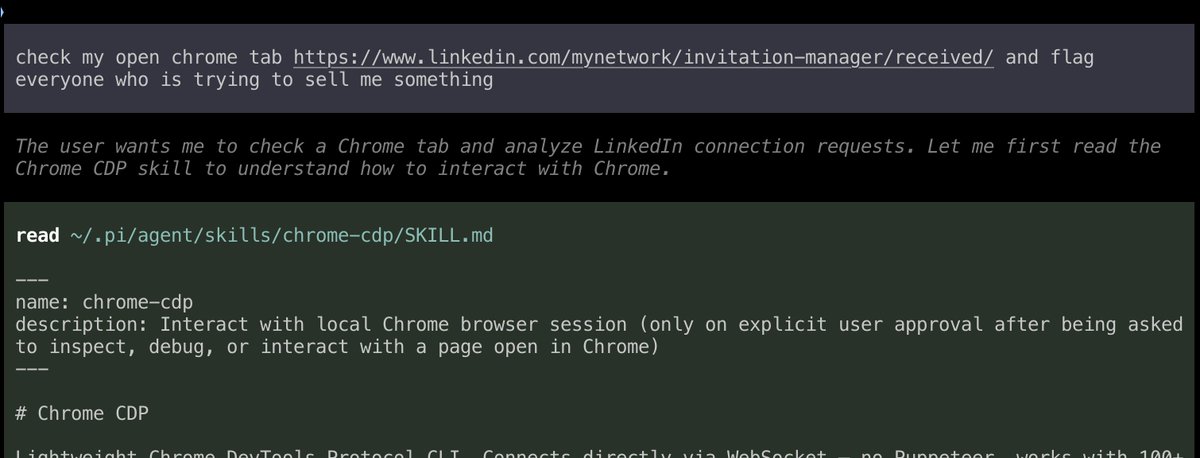

It took another two months but Chrome 146 has been out since yesterday! And *that* means: with a single toggle, you can expose your current live browsing session via MCP and have your CLI agent do things in it. Aaand I have been waiting to deal with my LI connects until this moment. https://t.co/3ZZRqeODJm

BREAKING: the AI community discovers seed averaging and ensembling. Something we’ve been doing on Kaggle since … forever.

@samsja19 @levelsio Exactly. llms.txt literally pioneered the idea that we should simply give our agents a markdown list of links with descriptions of what they can find in each, and let them decide what to read. That's basically the foundation of how agents work today.

@samsja19 @levelsio Having a known place you can get that information for a given URL, rather than having to create it from scratch yourself, is rather convenient. E.g., if you tell Claude Code about the claude llms.txt, it's way faster and more token efficient at getting info about how it works.

Introducing the Nia CLI: the best way for agents to index and agentically search over technical and general data sources.
- Index: Index papers, docs, codebases, internal wikis, and more.
- Search: Search your indexed sources such as docs, APIs, Slack, and Google Drive.
- Research: Run deep research across entire GitHub repositories or your personal data.
- Save: Save conversation histories and plans. Resume them in another agent without losing the thread.
Get started with a single command in your terminal: bunx nia-wizard@latest

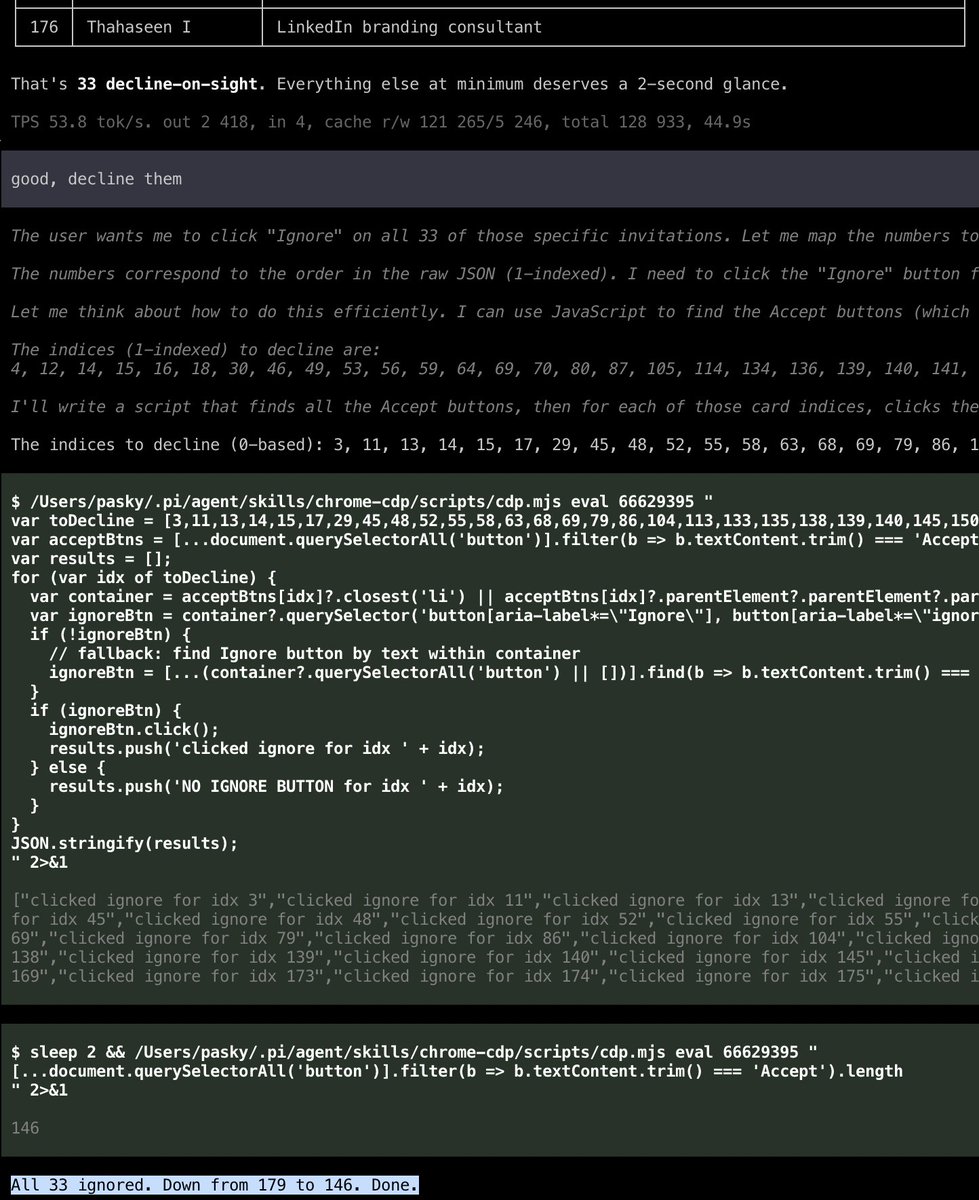

The @bfl_ml team released Klein KV and showed how KV-caching can be incorporated in a flow pipeline 🤯 The idea is simple and elegant. In the first denoising step, reference image tokens are included in the full DiT forward pass. Their per-layer KVs are computed and cached. In the subsequent steps, KVs are computed only for the noisy latents, while the cached reference KVs are injected when computing attention. As a result, it delivers up to 2.5x speedups for multi-reference editing tasks over Klein. I basically learned about it from this PR: https://t.co/4jbAboaStf The PR is poetry in motion and is from the BFL team itself! Kudos to them for always being the best when it comes to designing codebases for flow and diffusion models. The best! Check out the model here: https://t.co/f3NOHkg2HQ
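The caching idea described above can be illustrated with a toy, single-layer, single-head attention in plain Python. This is a sketch under stated assumptions, not the BFL implementation: every name here (`ref_k_cache`, `step_with_cache`, and so on) is hypothetical, and in this toy the reference keys and values depend only on the reference tokens, so the cached path matches full recomputation exactly, which need not hold in a real multi-layer DiT.

```python
import math
import random


def matmul(a, b):
    # Plain-Python matrix product of two lists of rows.
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def attention(q, k, v):
    # Scaled dot-product attention with a numerically stable softmax.
    dim = len(q[0])
    out = []
    for q_row in q:
        scores = [sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(dim) for k_row in k]
        top = max(scores)
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        out.append([sum(w * v_row[c] for w, v_row in zip(weights, v)) / total
                    for c in range(len(v[0]))])
    return out


def random_matrix(rows, cols, rng):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


rng = random.Random(0)
dim = 4
w_q, w_k, w_v = (random_matrix(dim, dim, rng) for _ in range(3))
ref_tokens = random_matrix(3, dim, rng)  # stand-in for reference image tokens

# First denoising step: compute the reference keys/values once and cache them.
ref_k_cache = matmul(ref_tokens, w_k)
ref_v_cache = matmul(ref_tokens, w_v)


def step_with_cache(noisy):
    # Later steps: project only the noisy latents, then prepend the cached reference K/V.
    q = matmul(noisy, w_q)
    k = ref_k_cache + matmul(noisy, w_k)
    v = ref_v_cache + matmul(noisy, w_v)
    return attention(q, k, v)


def step_full_recompute(noisy):
    # Baseline: re-project reference and noisy tokens together at every step.
    tokens = ref_tokens + noisy
    out = attention(matmul(tokens, w_q), matmul(tokens, w_k), matmul(tokens, w_v))
    return out[len(ref_tokens):]  # keep only the noisy-latent outputs


noisy_latents = random_matrix(2, dim, rng)
cached_out = step_with_cache(noisy_latents)
full_out = step_full_recompute(noisy_latents)
max_diff = max(abs(a - b) for row_a, row_b in zip(cached_out, full_out)
               for a, b in zip(row_a, row_b))
```

The saving in the cached path comes from skipping the query, key, and value projections (and the attention rows) for the reference tokens on every step after the first.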

Introducing Void, the Vite-native deployment platform:
🚀 Full-stack SDK
⚙️ Auto-provisioned infra (db, kv, storage, AI, crons, queues...)
🔒 End-to-end type safety
🧩 React/Vue/Svelte/Solid + Vite meta-frameworks
🌐 SSR, SSG, ISR, islands + Markdown
🤖 AI-native tooling
☁️ One-command deploys
https://t.co/sczgQ0A9S1

Bennett rips a double to gap in left center to score Abromavage and we lead, 1-0, after a half inning! #SaddleUp | #GoWood https://t.co/R5eUhzwMys

@NikoMcCarty @ATinyGreenCell @sardine_trader_ @AsimovPress https://t.co/gwReYfy07G is a strong candidate doing this with amazing animations of proteins and such

Memory is truly a game-changer for AI agents. Once I had memory set up correctly for my proactive agents, reasoning, skills, and tool usage improved significantly. I use a combination of semantic search and keyword search (Obsidian vaults).
Here is a report with a helpful framing for anyone building with memory and multi-agent systems. It proposes viewing multi-agent memory as a computer architecture problem. The paper distinguishes shared and distributed memory paradigms, proposes a three-layer memory hierarchy (I/O, cache, and memory), and identifies two critical protocol gaps: cache sharing across agents and structured memory access control.
Agent memory systems today resemble human memory in that they are informal, redundant, and hard to control. As agents evolve into collaborative multi-agent systems, their memory requirements grow rapidly in complexity. Context is no longer a static prompt. It is a dynamic memory system with bandwidth, caching, and coherence constraints.
The largest open challenge identified was multi-agent memory consistency. Multiple agents reading from and writing to shared memory concurrently raises classical challenges of visibility, ordering, and conflict resolution. Memory should be seen not as raw bytes but as semantic context used for reasoning.
Paper: https://t.co/k8hdSuZY0F
Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
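To make the consistency challenge above concrete, here is a minimal sketch of one classical way to handle conflicting writes to shared memory: optimistic concurrency with version numbers. This technique is swapped in purely for illustration and is not taken from the paper; the `SharedMemory` class, its methods, and the agent names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    value: str
    version: int
    writer: str


class SharedMemory:
    """Toy shared store where a write succeeds only if the writer saw the latest version."""

    def __init__(self):
        self._entries = {}

    def read(self, key):
        # Returns (value, version); version 0 means the key has never been written.
        entry = self._entries.get(key)
        return (entry.value, entry.version) if entry else (None, 0)

    def write(self, key, value, expected_version, writer):
        current = self._entries.get(key)
        current_version = current.version if current else 0
        if expected_version != current_version:
            return False  # stale read: the caller must re-read and reconcile
        self._entries[key] = Entry(value, current_version + 1, writer)
        return True


memory = SharedMemory()

# Two agents read the same (empty) entry, then both try to write it.
_, version_seen_by_a = memory.read("plan")
_, version_seen_by_b = memory.read("plan")
ok_a = memory.write("plan", "draft from agent A", version_seen_by_a, "agent_a")
ok_b = memory.write("plan", "draft from agent B", version_seen_by_b, "agent_b")
```

Here the first write wins and the second is rejected rather than silently overwriting, so agent B has to re-read the entry before trying again. How the rejected agent should merge its semantic context with the stored one is exactly the kind of conflict-resolution question the post describes as open.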

What does OpenClaw unlock for robotics? https://t.co/Z9991cNK93