Your curated collection of saved posts and media

@rmushkatblat @Miles_Brundage @allTheYud It's not at all hard to plug numbers in such that it goes "too far".

MCP is dead. Join us for a celebration of its life on April 1 in NYC ahead of the MCP Dev Summit. Wear black. https://t.co/17gXE0QFw6

@AAAzzam Ohhh this is what @HanchungLee was talking about lol

Giving a talk at Softmax today about the future of game development: games built with agents for agents. https://t.co/B7tLcbluoo

I’m genuinely in tears right now please unmute this video https://t.co/WthJDcmov0

🚨: There have been thousands of generations of humans, and you are alive to witness the first photo of a Sunset on another World.😮 This is a real photo of the sunset on Mars. https://t.co/jmZSO7zvrB

@Meelsie143 have another apple, Steve

https://t.co/u4mN4Xco0W

@suni_code IRL folks will change requirements, frontend wants progress, a retry button and pause and resume. Rest of the time is wrestling with AWS S3 SDK or workers for the pipeline & SES. If the question was for 500 GB, I would say let’s use Aspera and their sdk. No time for this!

@KimZetter The blind spot is context drift. AI agents' context notably degrades after just a few iterations. This is documented in “Towards a Science of Scaling Agent Systems”, which tested the effect across 180 controlled configurations, spanning three LLM families and four agentic benchmarks: https://t.co/tFVjda5NTS

@iamheci how do i activate this on my drive?

@rmushkatblat @Miles_Brundage @allTheYud A simple plug-in example:
- It increases by 10x the risk of an irreversible change to a global feudalism where per capita utility decreases by 100x, but also:
- It decreases P(existential risk) from 1e-5 to 1e-6.
The overall change in expected utility would be highly negative.
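
The numbers in the post above can be plugged into a rough expected-utility calculation. This is only a sketch: the post does not state a baseline probability for the feudalism outcome or a utility scale, so `BASELINE_P_FEUDALISM = 0.01`, a normalized utility of 1.0 for the default outcome, and a utility of 0 for the existential outcome are all assumptions made here for illustration.

```python
# Illustrative sketch only. The baseline feudalism risk and the utility
# scale below are assumptions, not figures from the post.

def expected_utility(p_existential, p_feudalism,
                     u_default=1.0, u_feudalism=0.01, u_existential=0.0):
    """Expected per capita utility over three mutually exclusive outcomes."""
    p_default = 1.0 - p_existential - p_feudalism
    return (p_default * u_default
            + p_feudalism * u_feudalism
            + p_existential * u_existential)

BASELINE_P_FEUDALISM = 0.01  # assumption: not given in the post

# Without the intervention: existential risk 1e-5, baseline feudalism risk.
before = expected_utility(p_existential=1e-5,
                          p_feudalism=BASELINE_P_FEUDALISM)

# With the intervention: existential risk falls to 1e-6,
# feudalism risk rises 10x, and feudal utility is 100x lower (0.01).
after = expected_utility(p_existential=1e-6,
                         p_feudalism=10 * BASELINE_P_FEUDALISM)

delta = after - before
print(f"before={before:.6f} after={after:.6f} delta={delta:.6f}")
```

Under these assumed inputs the change in expected utility is about -0.089, so the loss from the higher feudalism risk outweighs the gain from the lower existential risk. A much smaller assumed baseline would shrink the gap, which is the sense in which the result depends on the numbers plugged in.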

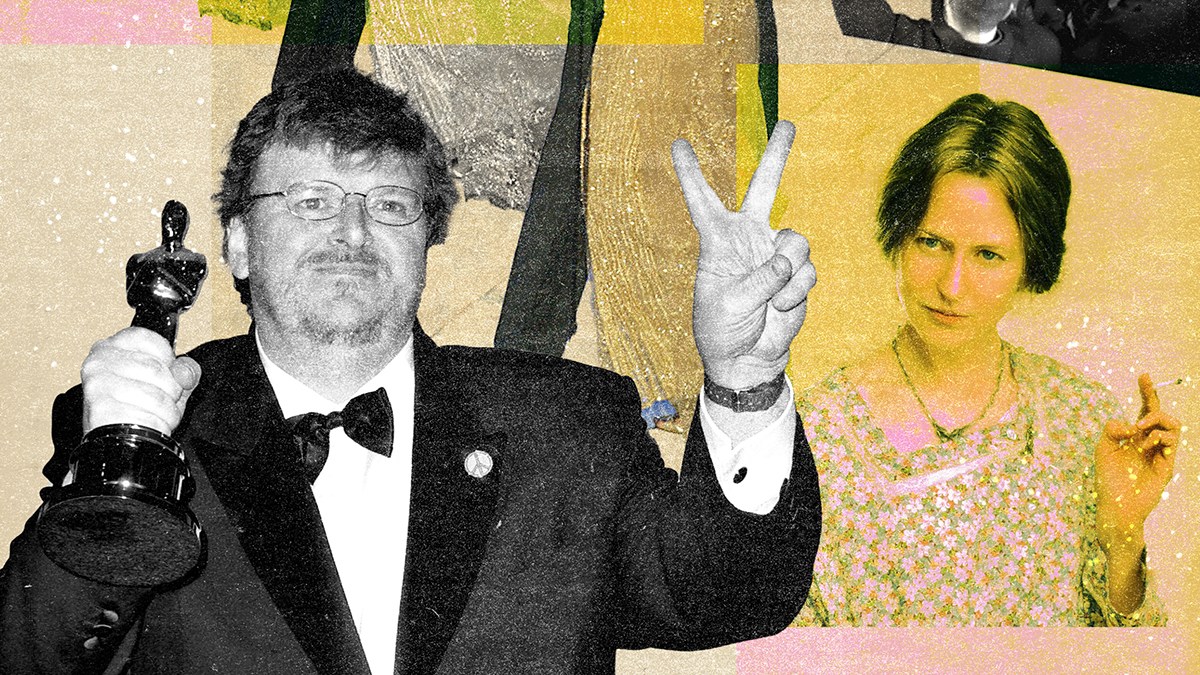

In March 2003, Jack Nicholson, who had recently received his 12th Oscar nomination for “About Schmidt,” invited his fellow best actor contenders to his home on Mulholland Drive. The U.S. had just invaded Iraq, and he wanted the other men — a group that included past winners Daniel Day-Lewis, Michael Caine and Nicolas Cage — to boycott the ceremony with him. But Adrien Brody, the only nominee without an Academy Award of his own, wasn’t having it. “I said, ‘I don’t know about you guys, but I’m going,’” Brody recounted to The Sunday Times in 2023. “I said, ‘I kind of have to show up. My parents are coming. This doesn’t come around too often. I know you guys are all winners. You can sit it out. But I can’t.’” Brody’s mind may have been made up, but the war plunged Hollywood into a state of uncertainty as the town braced for the 75th Academy Awards. Read "Looking Back at the 2003 Oscars" here: https://t.co/erG5RTk8AQ

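Self-Defense Forces to use AI for frontline intelligence analysis... automatically analyzing and integrating enemy photos and audio, and also drafting deployment plans: Yomiuri Shimbun Online https://t.co/rMMNtdbJYQ #自衛隊AI活用 #敵の写真や音声を自動解析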

@MarkJam73395966 You can add as many lists as you want. But gotta add them one by one. Put them into X Pro and you can have them side by side.

Automations are now GA. You can now:
• Set the model and reasoning level
• Choose if runs happen in a worktree or existing branch
• Reuse workflows with templates
Automations are great for recurring tasks: daily repo briefings, issue triage, PR comment follow-up, and more. https://t.co/YlvQauuMqI

@So8res AI is just software. No digital mind, no ghost in the machine, just vector math on publicly available data. I can guide anyone through the basics or run a multi-day deep dive. Understanding the math beats losing sleep over metaphysics or fear-driven stories. Follow the logic, not the hype. https://t.co/NBoNwQ7LYG

Here is an xAI story. When I was first hired (low level) by xAI, I was extremely excited. I greatly admired Elon and what Grok could be. I have a pretty cool AI following here on X. Some big names see my stuff, including Elon himself (at the time). Lex, Beff, Andreessen, Aravind, many others. During the interview and onboarding for xAI, they made a *big deal* about wanting people who "take initiative" and think outside the box. Ok... So, some of the biggest names in tech follow me on X. I decided to ask for ideas and feedback on how Grok (then still early at version 2) could be improved. I asked my followers on X for the best "how can we make Grok awesome?" ideas, and was going to collect them (organized by Grok himself) into a big report for my boss(es) and ultimately, Elon. (xAI makes a big deal about how it's a "flat structure" also. You're supposed to be empowered to act on good ideas.) Well, my post got way more attention than I expected - great! Ideas to improve Grok poured in! I built a script to collect and sort all these great ideas to make xAI's core product better. John Carmack (personal friend of Elon, creator of Doom, id software, legend) retweeted it. Carmack has 1M followers. There were so many great ideas on how to improve Grok! I was collecting them and excited. Until....... I woke up the next day to a threatening email from my main supervisor* at xAI, telling me I had messed up, that I was NEVER to ask for ideas to improve Grok ever again, that it wasn't my job (I thought our job was to improve Grok.) They suspended my account on X. They never explained why. It was obviously related to my post about improving Grok. I was told to delete those posts which had gone viral. I had to delete all the hundreds (thousands?) of genuinely good ideas for improving Grok that had poured in by users on X, because it stepped on someone's toes. It made me confused and sad. 
Incidents like this happened often, where xAI employees would come in full of excitement and enthusiasm, and would have it stomped out by managers who hated ideas. They filled xAI with middle managers and busybodies. It was one of the most DEI and corporate-y places I've ever worked. I came in wanting Elon and xAI to win and left just sad. *That manager is gone, for what it's worth. Everyone I knew at xAI is gone.

i disagree. gpt-oss-120b is *the* model i use most frequently for my research. it is ridiculously good for how cheap it is (5b active). it gets hate for being worse than larger chinese models, but it is one of my favorites -- i really hope that openai releases future oss models

🎉 Congrats to @nvidia on the release of Nemotron 3 Super, with day-0 support in vLLM v0.17.1! Verified on NVIDIA GPUs. 120B hybrid MoE, only 12B active at inference. Big upgrades over the previous Nemotron Super:
- 5x higher throughput
- 2x higher accuracy on Artificial Analysis Intelligence Index
- Multi-Token Prediction (MTP) for faster long-form generation
- Configurable thinking budget: dial accuracy vs token cost per task
- 1M token context window
Supports BF16, FP8, and NVFP4. Fully open: weights, datasets, recipes. Blog: https://t.co/PAN0y778iB 🤝 Thanks @NVIDIAAIDev Nemotron team and vLLM community contributors!

@ivanburazin What are your sources?