Your curated collection of saved posts and media

This AutoHarness paper (from Google DeepMind) is the most interesting thing I've read lately. I am testing a similar idea (without training) on models like MiniMax-2.5 and getting good results. It already allowed me to synthesize an entire functional coding agent. More soon. https://t.co/pGRRlIEsUR

@John98188 @openclaw I'm sorry about that. I'm getting the company's attention on your feedback.

After 8+ years on the Tesla Autopilot team and 3 years at Intel, I started @apexcompute to design a new architecture for efficient AI inference. For the past 9 months, we’ve been building our custom inference accelerator. Today we’re releasing Unified Engine v1.

Last June we raised our seed round with @maxitechinc, DeepFin Research, @Soma_Capital and an incredible group of angel investors. In less than 9 months, we completed our RTL architecture and brought our first pre-silicon prototype to life on FPGA.

Our architecture combines systolic array and vector processing in a single compute engine with multiple architectural optimizations, achieving very high FLOPs utilization. A single engine is super lean: it uses less than 90K LUTs and 1 MB Block RAM. It may also be one of the smallest logic-footprint compute engines developed so far.

Our Unified Engine v1 supports:
- matrix-matrix multiplication (~95% FLOPs utilization)
- softmax (~90% FLOPs utilization)
- broadcast and element-wise operations
- RMSNorm / LayerNorm
- block quantization/dequantization (fp4, int4)
- multi-engine synchronization

and many other operations. We even implemented memory-efficient attention similar to FlashAttention, reaching ~90% FLOPs utilization.

Full benchmarks and the software stack are available on our GitHub: https://t.co/KqTKbB2Inl We have a basic compiler written in Python, and it supports PyTorch tensors directly, so you can easily test and transfer tensors between the accelerator and host using bf16, fp4 and int4 formats.

Our FPGA prototype can already run LLM inference and outperform NVIDIA Jetson Orin Nano, even on a mid-tier FPGA setup (6.4x lower memory bandwidth, 18% slower clock speed at 4.5 Watts). Check the side-by-side comparison video below. Our GitHub includes low-level operator implementations, examples for tiled matrix multiplication, operation chaining, tensor parallelism, an attention kernel and a full Gemma 3 1B model implementation. Many more models (Vision Transformers and VLA) are coming soon.

Our accelerator IP is AXI-ready for deployment on any AMD (Xilinx) FPGA platform today. Even better, our two-engine prototype runs on an entry-level AMD (Xilinx) FPGA as a PCIe accelerator card. You can purchase it here https://t.co/8B9NOcueVu for $50 to experiment with our pre-silicon prototype on your desktop PC or Raspberry Pi 5. We will be releasing hardware bitstream updates as the architecture gets new features. More to come soon!

We are expanding our team and looking for compiler engineers and floating-point hardware design engineers. If you're interested, please send me a DM.
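
For readers unfamiliar with the "block quantization/dequantization (fp4, int4)" item in the Unified Engine v1 post, here is a minimal sketch of the general idea in plain Python. It is not taken from the post or its GitHub repository: the function names, the block size of 32, and the symmetric per-block scaling scheme are all assumptions chosen for illustration, and the actual accelerator may work quite differently.

```python
from typing import List, Tuple

# Signed 4-bit integers cover the range [-8, 7].
INT4_MIN, INT4_MAX = -8, 7

# Hypothetical helper: the block size and the symmetric scheme are
# illustrative assumptions, not details from the post.
def quantize_block_int4(values: List[float],
                        block_size: int = 32) -> List[Tuple[float, List[int]]]:
    """Split values into blocks; each block keeps one float scale plus int4 codes."""
    blocks = []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        max_abs = max(abs(v) for v in block)
        # Map the largest magnitude in the block to code 7; use 1.0 for all-zero blocks.
        scale = max_abs / INT4_MAX if max_abs > 0 else 1.0
        codes = [max(INT4_MIN, min(INT4_MAX, round(v / scale))) for v in block]
        blocks.append((scale, codes))
    return blocks

def dequantize_block_int4(blocks: List[Tuple[float, List[int]]]) -> List[float]:
    """Reconstruct approximate floats by multiplying each code by its block scale."""
    out: List[float] = []
    for scale, codes in blocks:
        out.extend(code * scale for code in codes)
    return out

if __name__ == "__main__":
    weights = [0.1 * i - 1.0 for i in range(40)]
    packed = quantize_block_int4(weights, block_size=16)
    restored = dequantize_block_int4(packed)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{len(packed)} blocks, worst absolute error {worst:.4f}")
```

Because each block carries its own scale, one outlier only degrades the precision of the values in its own block, which is the usual motivation for quantizing per block rather than per tensor.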

https://t.co/IlSdfePEMV

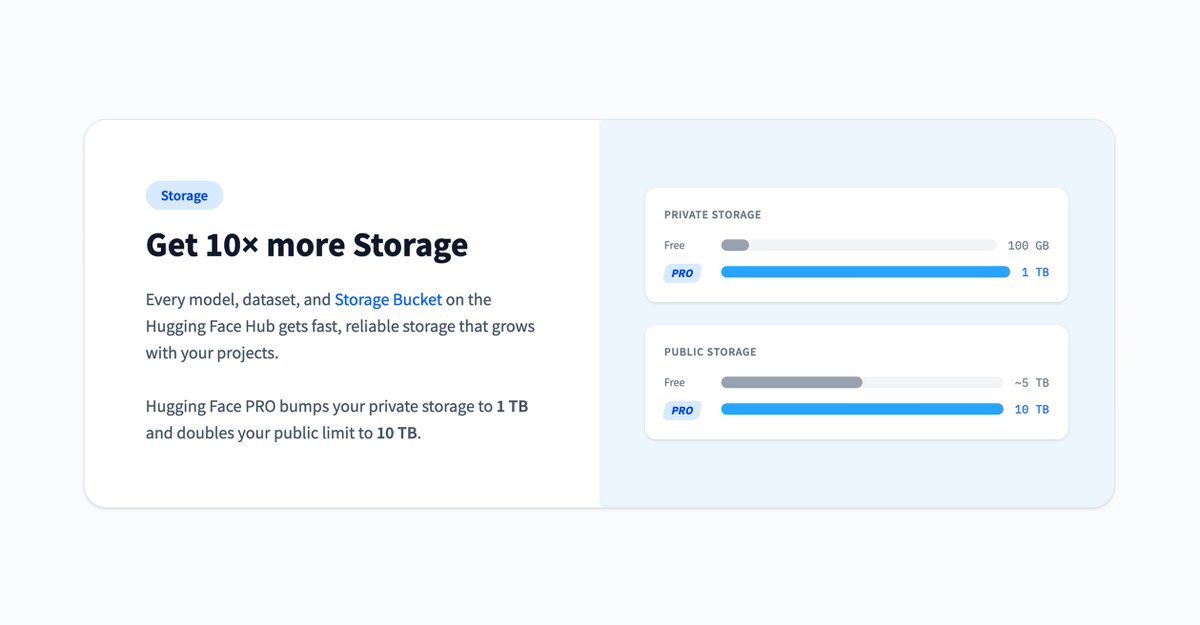

get PRO on @huggingface and instantly 10x your storage to 1 TB private + 10 TB public ...for $9 a month 😮 a deal this good should be illegal https://t.co/nTMcyTn280

The bottleneck of current AI is simple: the techniques we use are still predicated on pattern memorization and retrieval, and thus they need *someone* to tell them which patterns to memorize (training data, RL envs...). That role cannot yet be played by AI in a truly open-ended and autonomous way. We can't yet remove the humans in the loop. In that sense, current AI is still purely a reflection of human cognition (both in terms of which tasks/goals it pursues and the patterns it uses to solve them). It isn't yet its own thing.

Introducing our biggest upgrade to @googlemaps since the original launch, featuring Ask Gemini (with personalization), Immersive Navigation, and much more!! 🗺️ https://t.co/yjKV44hK6w

Damn this is crazy, I have years of saved places.

@OfficialLoganK @ivanleomk @googlemaps YESSSS 🤩🤩🤩

@jeremysoojk I feel like it’s a Z-ai model

@awesomekling FYI

// Think Harder or Know More // Chain-of-thought prompting enables reasoning in LLMs but requires explicit verbalization of intermediate steps. Looped transformers offer an alternative by iteratively refining representations within hidden states, but they sacrifice storage capacity in the process. This paper investigates combining both: adaptive per-layer looping with gated memory banks. Each transformer block learns when to iterate its hidden state and when to access stored knowledge. The key finding: Looping primarily benefits mathematical reasoning, while memory banks recover performance on commonsense tasks. Combining both yields a model that outperforms an iso-FLOP baseline with three times the number of layers on math benchmarks. Analysis of model internals reveals layer specialization. Early layers learn to loop minimally and access memory sparingly, while later layers do both more heavily. The model learns to choose between thinking harder and knowing more, and where to do each. Paper: https://t.co/0Gl77zMwOY Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c

I’m joining @OpenHandsDev to work on agent engineering for enterprises. Why, you ask? • Open source is better for enterprises • Model-agnostic solution • A focus on the outer loop: autonomous background coding/knowledge tasks Coding agents are going to reshape enterprises, and it's a lot more than just software development. This is where the action is for at least the next 18 months. So look for a lot more usable content from me, as I am back with an open-source company!

Friday the 13th and 10,000 Starlink sats in orbit🔥 @SpaceX is targeting, weather permitting, double-header Falcon 9 launches from the East and West Coasts to deploy 54 @Starlink satellites. ... one of these satellites will mark the first time SpaceX surpasses 10,000 Starlink satellites in orbit! 🔥

Thinking Timothée Chalamet is more attractive than Henry Cavill is what happens when you've been on birth control for 10 years

@fleetingbits AI isn't eliminating technical debt:

1) Comprehension Debt: You stopped writing the code, so you forgot the architecture.
2) Code Sloppification: LLMs love verbose, bloated boilerplate.
3) AI Fatigue: Burnout due to cognitive overload.

Teams that ignore these will suffer.

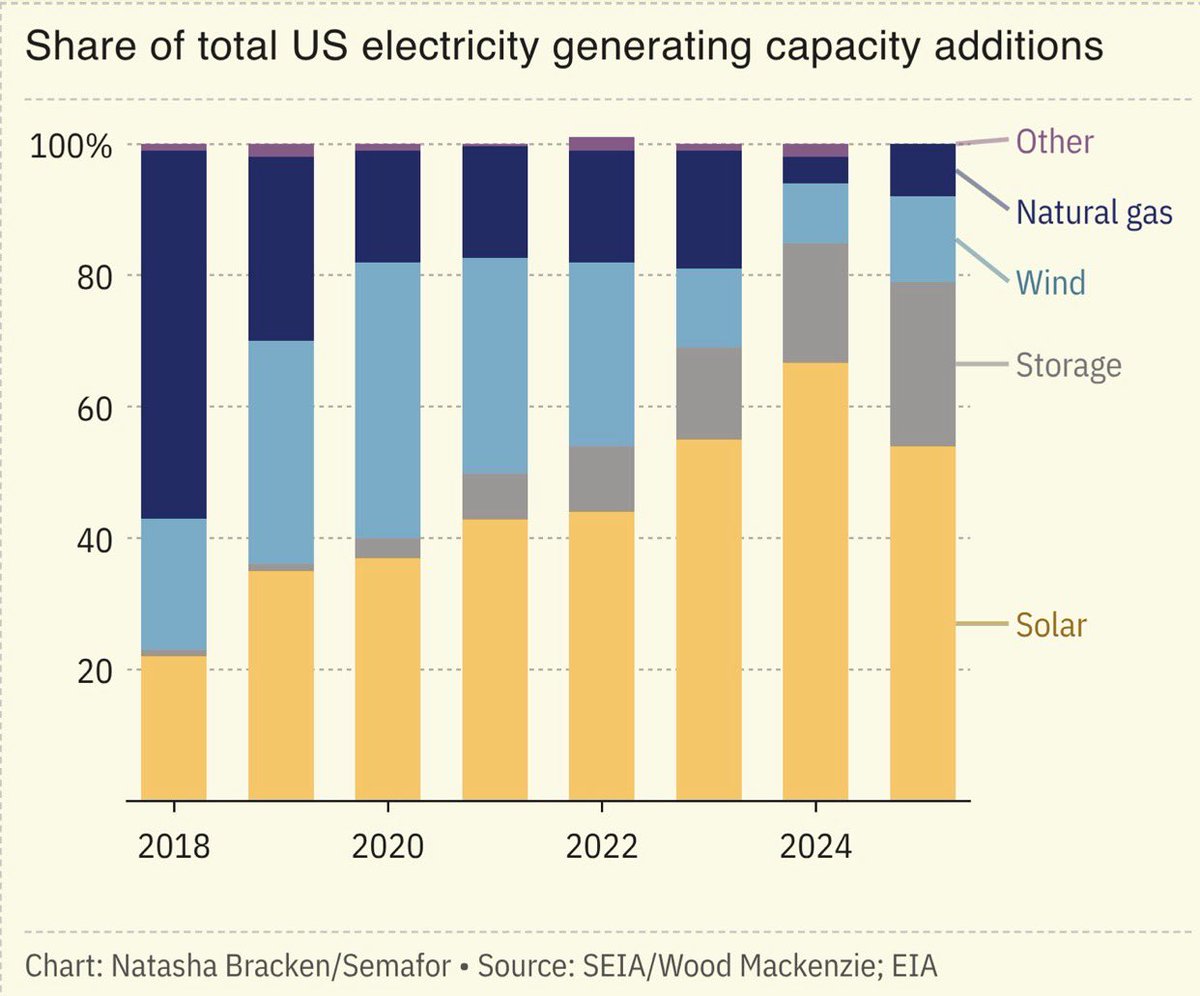

Solar accounted for 54% of all new electricity-generating capacity added to the US grid in 2025. Combined, solar and storage made up 79% of new capacity last year. China is growing its solar capacity and generation significantly; the US must do the same. https://t.co/uOfdHDTvFJ

Another Soviet joke: The party secretary says: “Our harvest was so enormous that if we stacked the potatoes, they could touch the feet of God.” Someone objects: “But in our country God doesn’t exist, comrade!” The secretary replies: “Correct. And neither do the potatoes.”

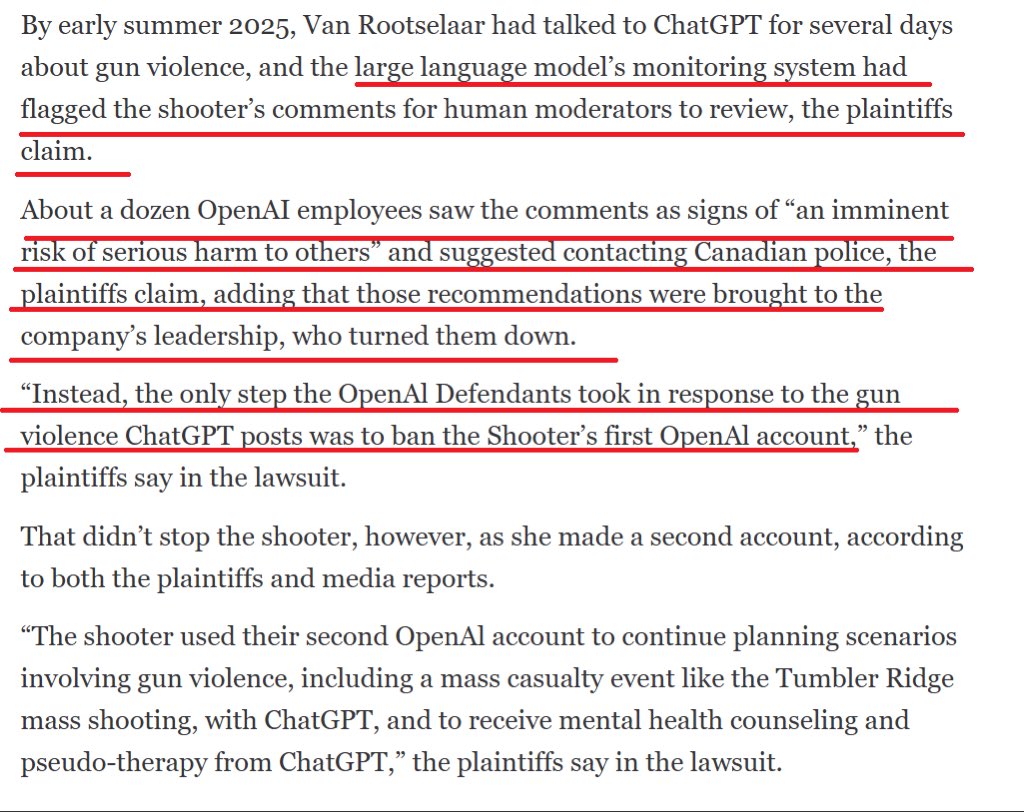

The trans school shooter at Tumbler Ridge laid out an elaborate plan to commit mass murder to ChatGPT. A dozen employees saw the plans and wanted to alert police but were turned down by company leadership. Why? https://t.co/9iJ0YscO2H