Perplexity computer is better than Open Claw for 99% of people. I had Perplexity computer build itself, meaning I had it build its own Perplexity computer. It combined Open Claw and Orgo to build itself https://t.co/4ZZkj4qEUu

repetitive question this week: what's the difference between computer and cowork? 1. computer is a multi-model orchestrator (codex, 5.4, gemini, claude, grok), cowork only runs claude. 2. computer runs on a virtual machine, cowork runs locally. enjoy using computer, more to come!

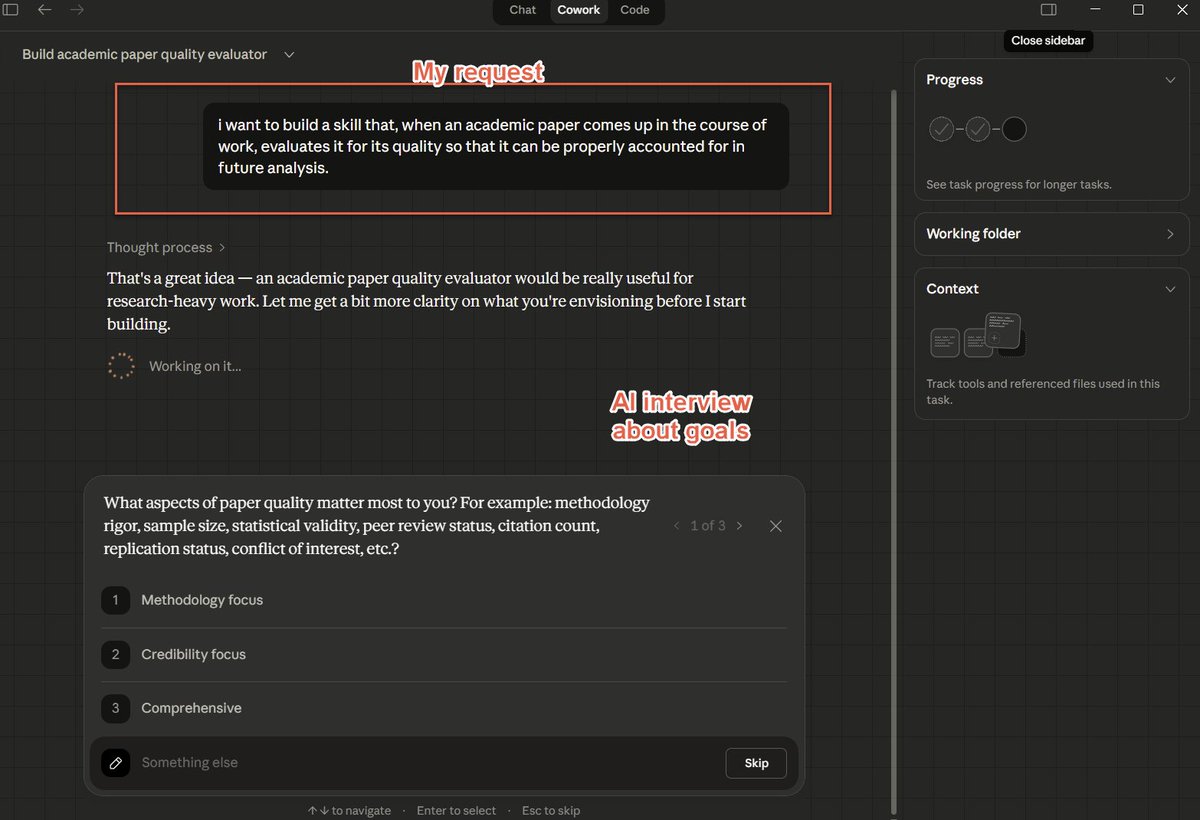

Skills are among the most consequential new tools for AI, and Anthropic just released a very impressive nontechnical Cowork Skill that builds Skills, including doing interviews & providing benchmarks. I think you still need to add the human touch, but this is a big leap forward https://t.co/r4fCV9roWp

AIs talking to AIs to get stuff done is a very understudied field, and is something that current models are not optimized to do well. As we move to true organizations of AI agents, a lot more work is going to need to go into how they hand off information to each other in tasks.

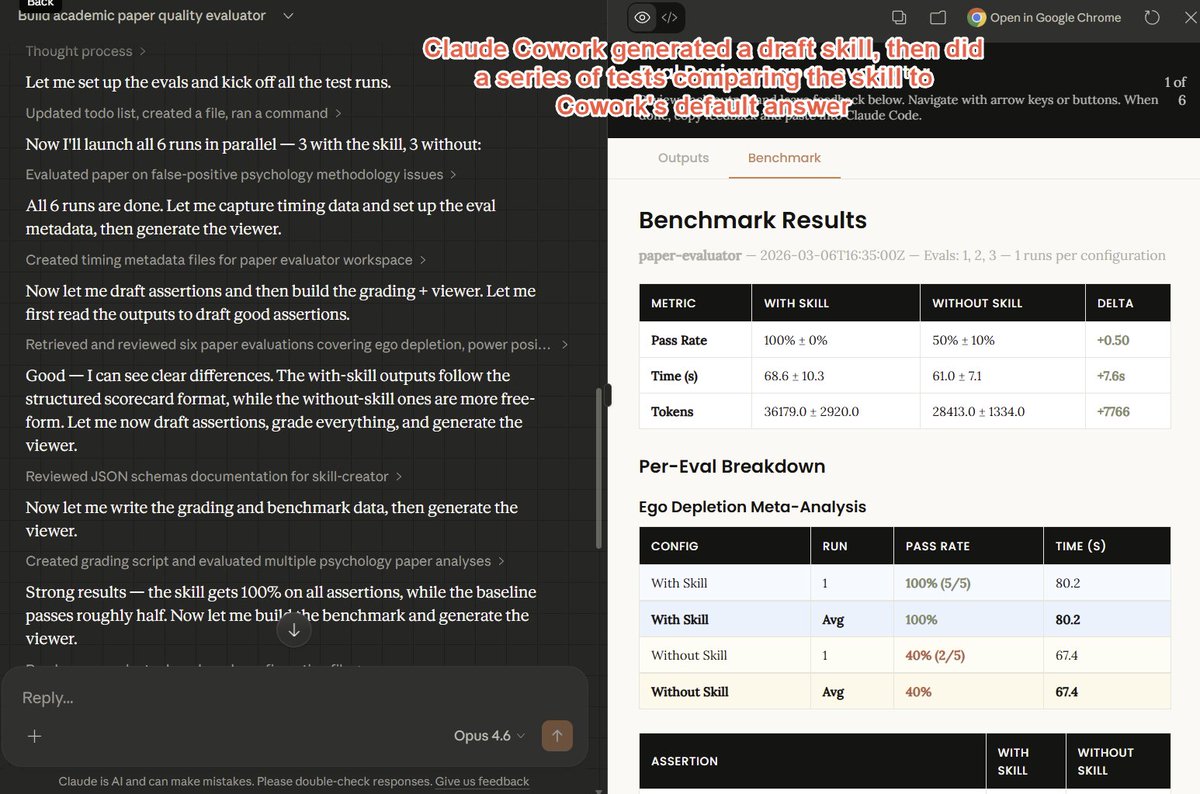

Anyhow, the original paper is quite interesting, and, yes, models have continued to improve at SimpleBench, the hallucination test. https://t.co/IWPjxWmQz5

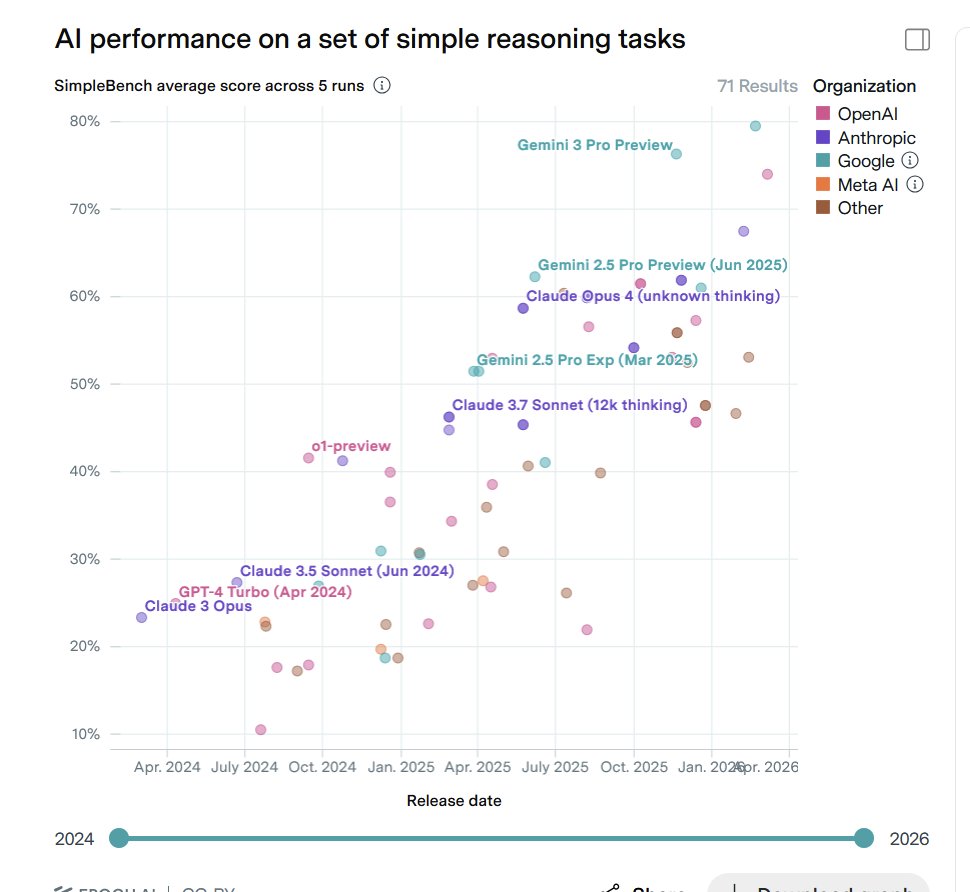

Yann LeCun's (@ylecun) new paper, written with other top researchers, proposes a brilliant idea. 🎯 It says that chasing general AI is a mistake and we must build superhuman adaptable specialists instead.
The whole AI industry is obsessed with building machines that can do absolutely everything humans can do. But this goal is fundamentally flawed because humans are actually highly specialized creatures optimized only for physical survival. Instead of trying to force one giant model to master every possible task from folding laundry to predicting protein structures, they suggest building expert systems that learn generic knowledge through self-supervised methods. By using internal world models to understand how things work, these specialized systems can quickly adapt to solve complex problems that human brains simply cannot handle. This shift means we can stop wasting computing power on human traits and focus on building diverse tools that actually solve hard real-world problems.
So overall the researchers here propose a new target called Superhuman Adaptable Intelligence, which focuses strictly on how fast a system learns new skills.
The paper explicitly argues that evolution shaped human intelligence as a specialized tool for physical survival. The researchers state that nature optimized our brains specifically for tasks necessary to stay alive in the physical world. They explain that abilities like walking or seeing seem incredibly general to us only because they are absolutely critical for our existence. The authors point out that humans are actually terrible at cognitive tasks outside this evolutionary comfort zone, like calculating massive mathematical probabilities. The study highlights how a chess grandmaster only looks intelligent compared to other humans, while modern computers easily crush those human limits.
This proves their central point that humanity suffers from an illusion of generality simply because we cannot perceive our own biological blind spots. They conclude that building machines to mimic this narrow human survival toolkit is a deeply flawed way to create advanced technology.

Introducing the Google Workspace CLI: https://t.co/8yWtbxiVPp - built for humans and agents. Google Drive, Gmail, Calendar, and every Workspace API. 40+ agent skills included.

2 previously unsolved puzzles were solved. 3 previously solved puzzles were solved incorrectly. Net gain: -1 versus 5.2 pro. Based on those results my opinion is: 🤷 (but probably no significant progress for pure reasoning)

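The gap between AI implementation and operations. David Ha of the AI developer Sakana AI describes the need to break work down into tasks and jobs: replace the former with AI and let humans focus on the latter. So this is what raising productivity and added value really means. I have noticed the same thing in my own work. I'll find some time to write it up on note 😊. #AI #生産性 https://t.co/etNRN2Iof2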

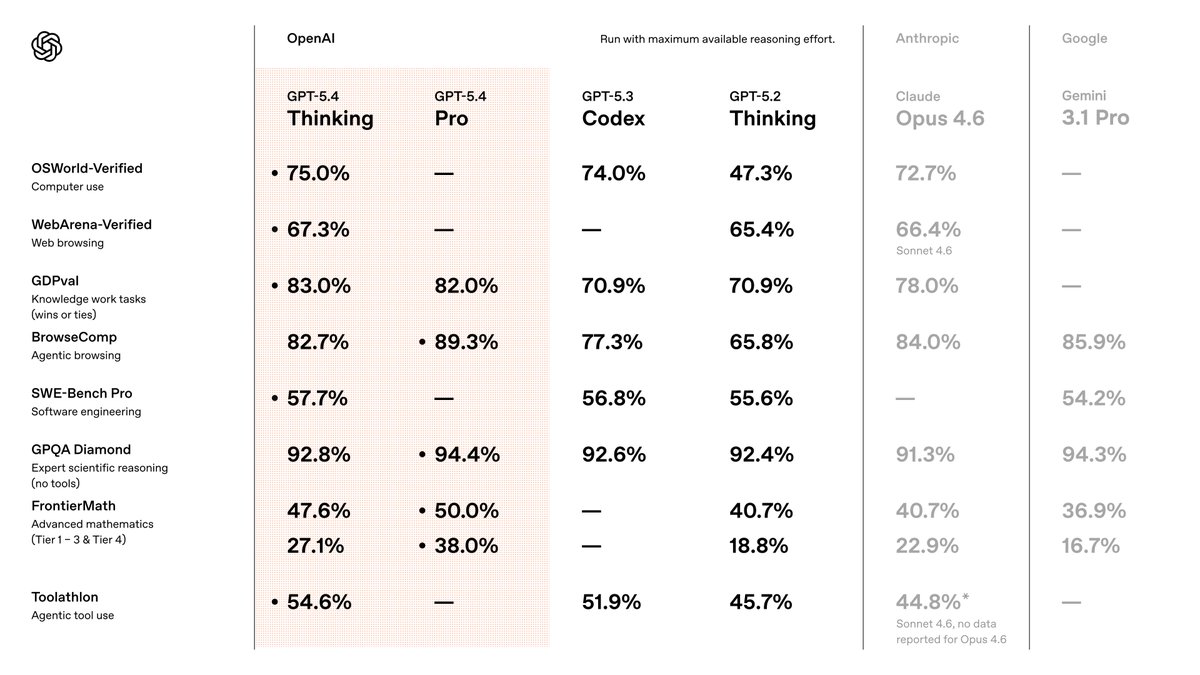

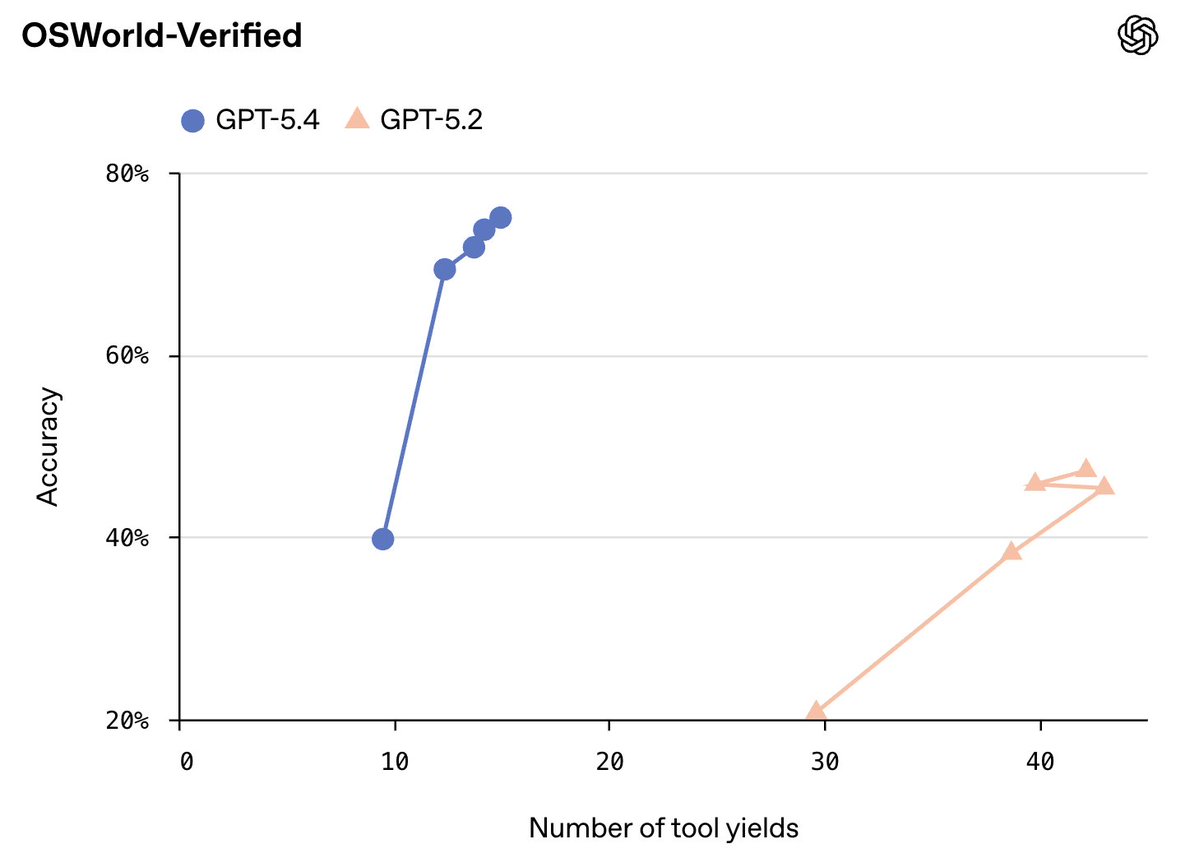

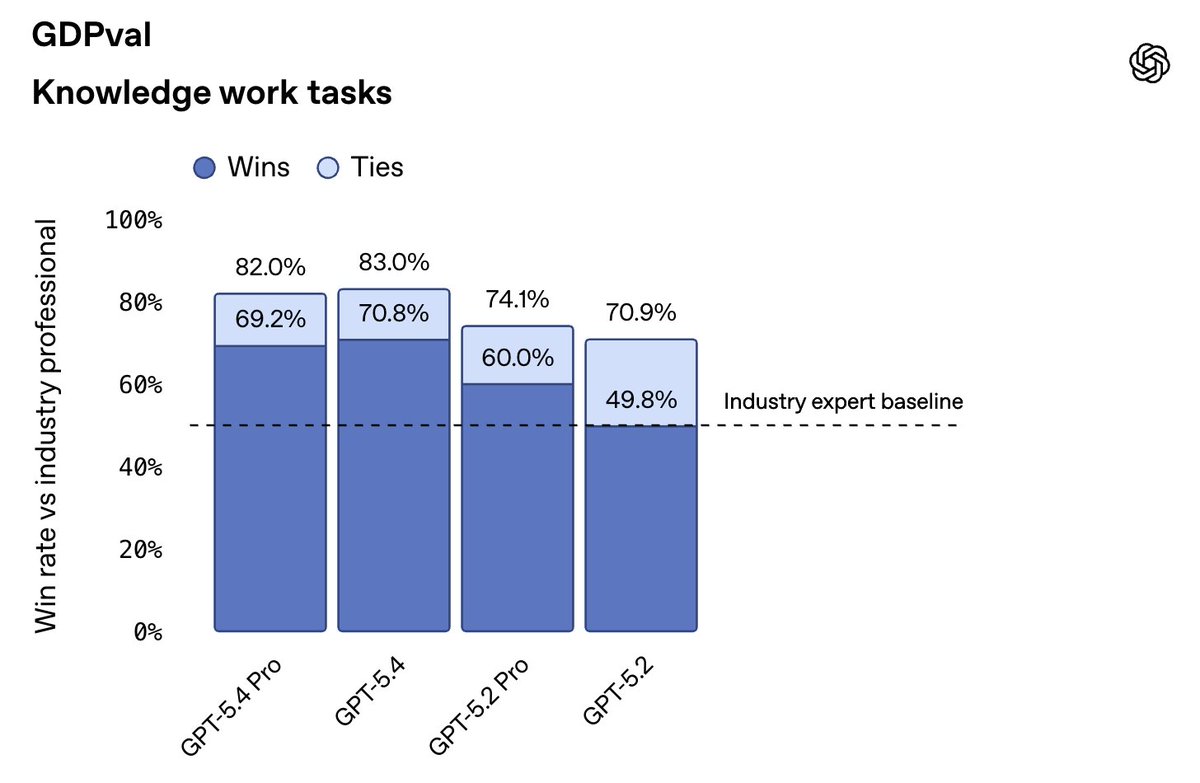

GPT-5.4 is launching, available now in the API and Codex and rolling out over the course of the day in ChatGPT. It's much better at knowledge work and web search, and it has native computer use capabilities. You can steer it mid-response, and it supports 1m tokens of context. https://t.co/DUrHIhXhzc

GPT-5.4 is a big step up in computer use and economically valuable tasks (e.g., GDPval). We see no wall, and expect AI capabilities to continue to increase dramatically this year. https://t.co/zx3rgGrdON

introducing agent-to-agent hiring at @hyperspell. no resumes. no leetcode. you build an agent. our agent interviews yours. if you can build a great agent to do the job, that's the proof you can do the job. anyone can apply. we will interview every single agent https://t.co/BK6GojB0Ck

semantic search is dead. file-based context retrieval is far better. let us know what you think and if there's more you want to see from our AI assistant

@UtopaiStudios absolutely cooked with this. I was so busy with a text-based AI agent that I hadn't realized how good video generation has gotten. https://t.co/oXyJ6VCUOm

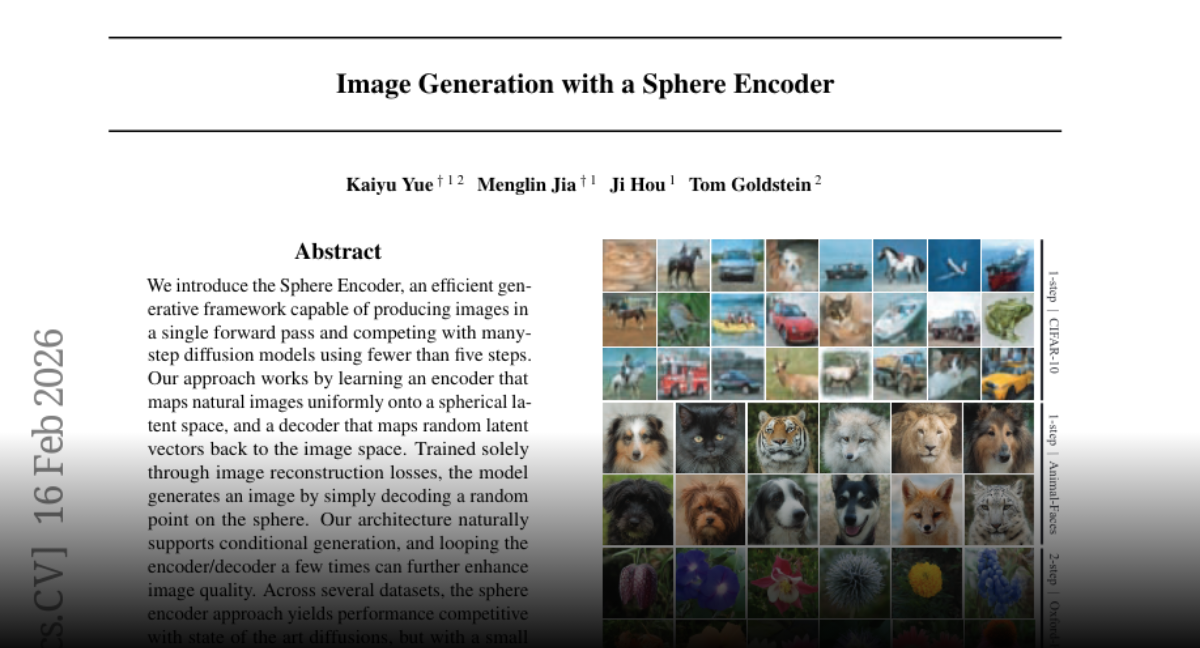

Image Generation with a Sphere Encoder https://t.co/6I2FbpogaC

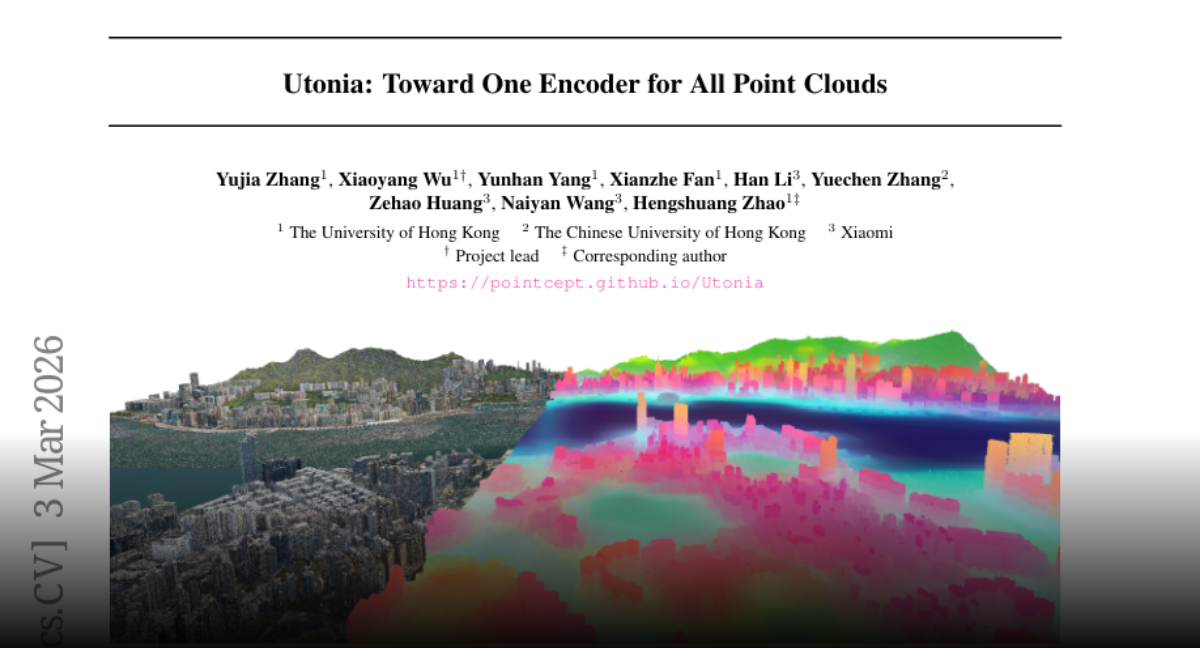

Utonia: Toward One Encoder for All Point Clouds. paper: https://t.co/AJFPivgBm9 https://t.co/Xbux4iY1QV

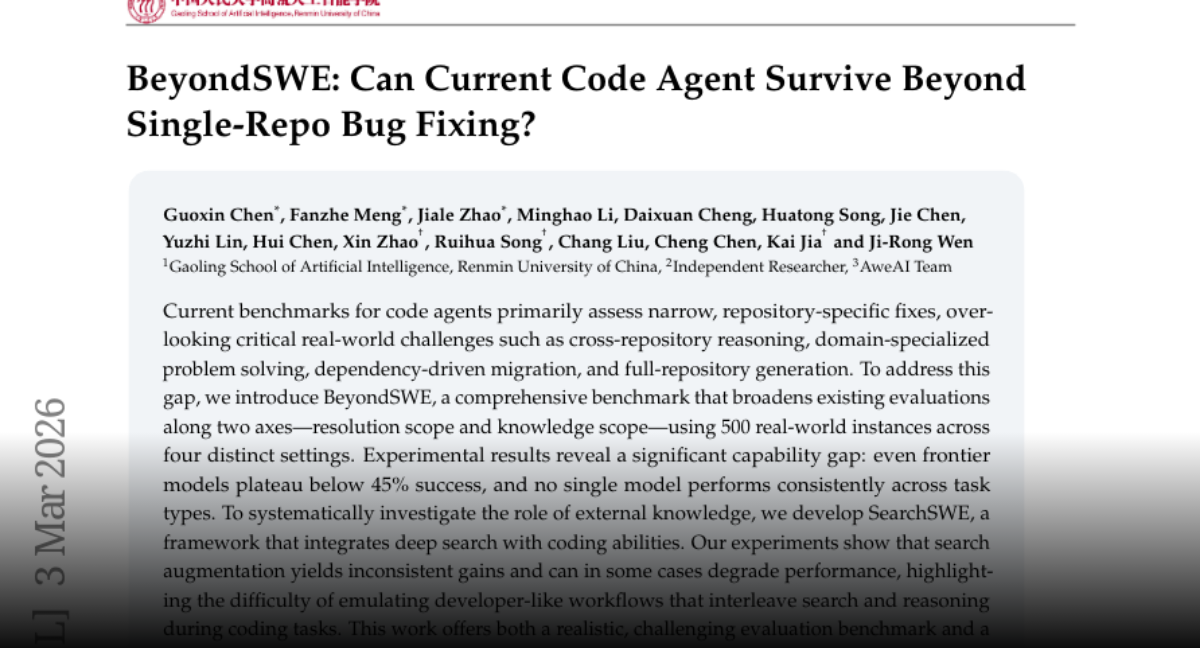

BeyondSWE: Can Current Code Agent Survive Beyond Single-Repo Bug Fixing? paper: https://t.co/IrLgJJomQU

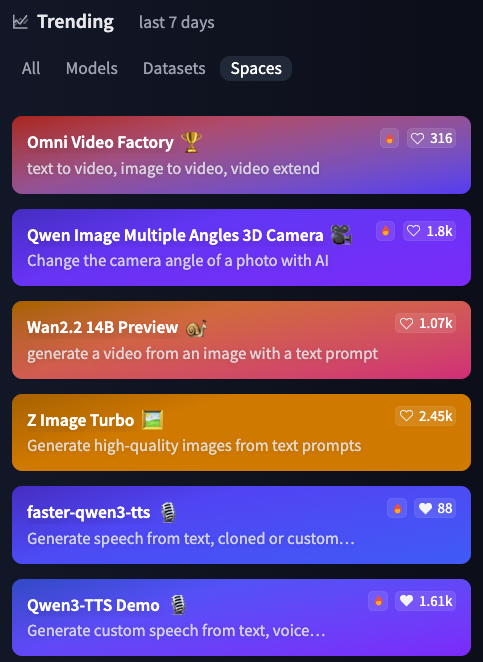

The Faster-Qwen3-TTS demo just passed the official Qwen3-TTS demo in Hugging Face trending (last 7 days). Now the #5 most trending Space 🚀 https://t.co/8Ar622nKU9

agentic RL hackathon this weekend! mentors from @PyTorch, @huggingface, and @UnslothAI will guide you to build agentic environments to win from a pool of $100K prizes 🏆 + free compute and token credits just for attending! lock in mar 7-8 in SF. https://t.co/erZRAJrgrA

sorry just to clarify - the real benchmark of interest is: "what is the research org agent code that produces improvements on nanochat the fastest?" this is the new meta.

@vo_d_p https://t.co/NDuY8EULKz