Your curated collection of saved posts and media

@adriannalakatos @fdotinc @DarioAmodei @elonmusk @ilyasut @zoink @ShaanVP @rauchg @paulg @steipete @ericbahn @karpathy @MrSiqi @bridgitmendler @tankots @mntruell @martin_c @peterthiel @mcuban @rpetersen @tonyzzhao Those would all be awesome. Your talks are great for founders, as you know. It would be great if you could livestream them, but I understand that would make some speakers less willing to share the real insights and troubles they faced inside their companies.

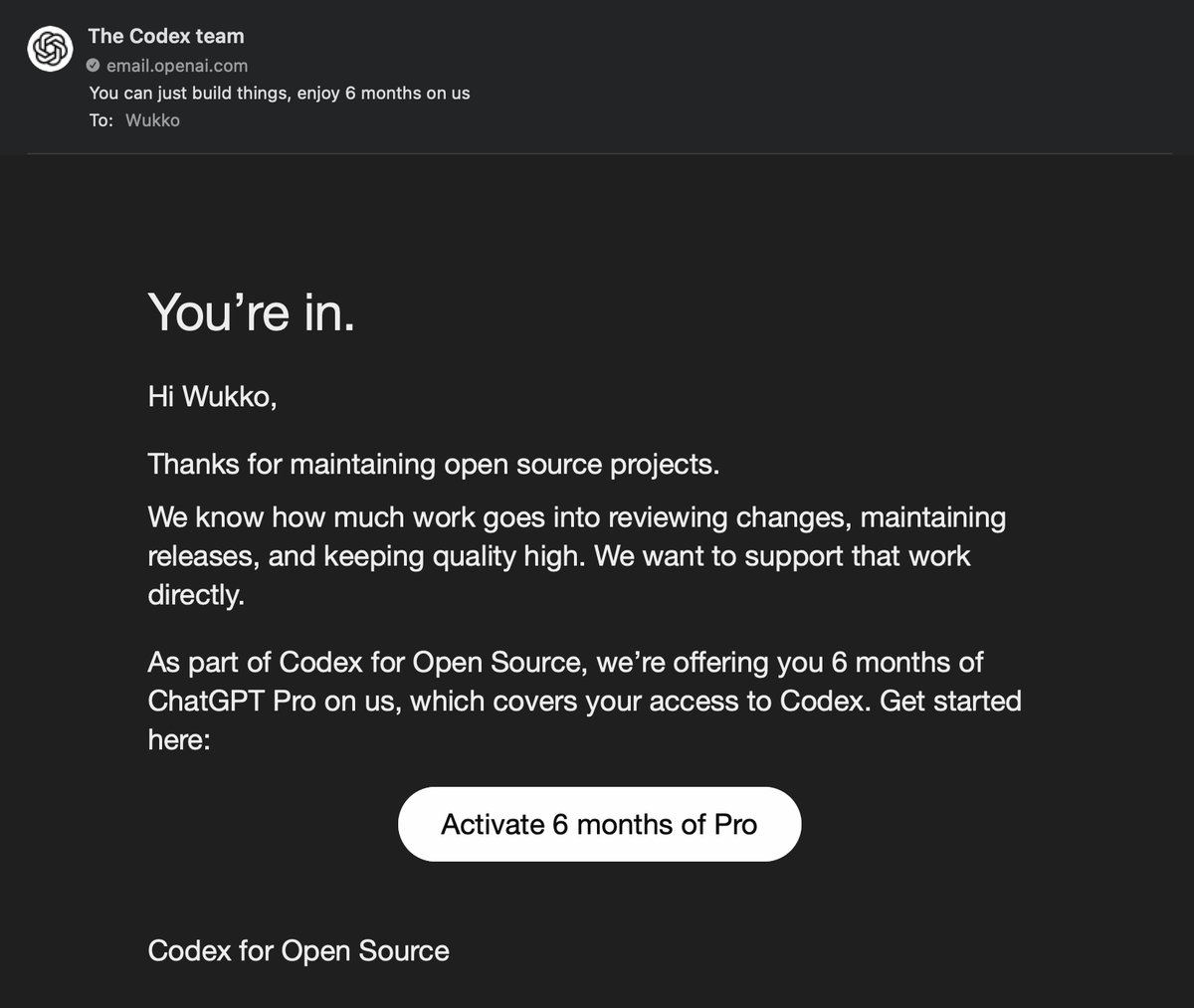

So pleased to have been accepted into the Codex for Open Source program. Thanks to @reach_vb and the team at OpenAI for putting this together, and for supporting builders and maintainers and "getting" Open Source code. Let's go build! https://t.co/iFdwDc1yui

If your GitHub looks like this, please apply. https://t.co/zN5U890eBx

This is one of the ideas that was so colossally stupid that I'm forced to question the intelligence of everyone involved

@negroprogrammer still fun-employed I see…

New: OpenAI saw the AI coding revolution coming years ago, but was beaten to market by Anthropic. This is how OpenAI got into this position, and how a small Codex team spent the last year racing to build a billion-dollar competitor to Claude Code. (Yes, Codex now has >$1B in ARR.) https://t.co/cgbrPintHj

People started emailing my personal address to get credits. Thanks, @interaction, for being the bouncer. https://t.co/09tTAgDvYg

Thank you @OpenAIDevs https://t.co/pSsMAevPpg

Chat, any of these good? https://t.co/UO1RnHZYlp

❤️ @OpenAI https://t.co/WDKwr1bjn1

yay i got free codex for open source https://t.co/OixB7wPZVx

i would so dearly love to get to read what grammarly's lawyers had to say about all of this

@EndWokeness @quinnrob76 Insanity that this is happening in NYC

Perplexity Computer now runs on your portfolio. Connect your brokerage accounts securely through @Plaid, then ask Computer to make a personal terminal that's always on. https://t.co/ZbCR0APeNZ

@parmita Rofl maybe it's time to try the Chinese LLMs 😭

@miolini @ronbrachman No

@jgoldfein Awesome to have you join us in this exciting adventure!

Yann just bet a billion dollars that the entire industry is building on the wrong foundation.

Large language models predict the next word. They're trained on text, so they understand language. But the real world isn't made of words. It's made of continuous sensor data: camera feeds, touch, sound. And most of that data is unpredictable. You can't predict every pixel in a video the way you predict the next token in a sentence. Generative models fail here because they try to predict everything, including noise.

AMI Labs is building world models using JEPA (a method LeCun proposed in 2022 that learns abstract representations of reality and predicts in that compressed space, not in raw pixels). Action-conditioned versions let AI simulate the consequences of actions before taking them. That's not generation. That's understanding.

This unlocks AI that can operate in the physical world without hallucinating:
1. Robotics that plans multi-step actions
2. Healthcare devices where errors kill patients
3. Industrial process control under safety constraints
4. Wearables that adapt to real-time sensor input

If JEPA works at scale, the next wave of AI companies won't fine-tune LLMs. They'll train world models on sensor data. AMI Labs' CEO already predicts every startup will rebrand as a "world model company" within six months. The architecture war is starting.
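
The idea of predicting "in that compressed space, not in raw pixels" can be illustrated with a toy sketch. Everything in it is an assumption for illustration: the fixed linear "encoders", the hypothetical helper names `jepa_loss` and `pixel_loss`, and the 4-value input standing in for pixels. It is not AMI Labs' or LeCun's implementation, and it omits training as well as the mechanisms real JEPA models use to avoid representational collapse. It only shows that the loss is computed between embeddings, so detail the encoder discards (here, small pixel-level noise) costs nothing.

```python
# Toy JEPA-style objective (illustrative assumption, not a real architecture).
# Encoders and predictor are fixed linear maps given as lists of rows.

def matvec(w, x):
    """Multiply matrix w (a list of rows) by vector x."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]

def squared_error(a, b):
    """Sum of squared differences between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

def jepa_loss(context, target, enc_ctx, enc_tgt, predictor):
    """Predict the target's embedding from the context's embedding."""
    s_x = matvec(enc_ctx, context)     # embedding of the observed context
    s_y = matvec(enc_tgt, target)      # embedding of the target
    s_y_hat = matvec(predictor, s_x)   # prediction made in embedding space
    return squared_error(s_y_hat, s_y)

def pixel_loss(prediction, target):
    """Generative-style loss: compare raw values directly."""
    return squared_error(prediction, target)

# Hypothetical encoder: averages neighboring "pixels", discarding fine noise.
ENC = [[0.5, 0.5, 0.0, 0.0],
       [0.0, 0.0, 0.5, 0.5]]
IDENTITY = [[1.0, 0.0],
            [0.0, 1.0]]

context = [1.0, 1.0, 2.0, 2.0]
noisy_target = [1.2, 0.8, 2.1, 1.9]   # same structure, plus pixel-level noise

latent = jepa_loss(context, noisy_target, ENC, ENC, IDENTITY)
raw = pixel_loss(context, noisy_target)
print(f"latent loss: {latent:.4f}, pixel loss: {raw:.4f}")
```

Here the latent loss is zero while the pixel loss is about 0.1, which matches the intuition in the post: a model judged in embedding space is not penalized for failing to predict noise. In a real system the encoders are learned, and a trivial constant embedding would also score zero, which is why collapse prevention matters.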

Everybody is talking about recursive self-improvement (RSI) and meta learning. Here is my old 2020 talk about this [1]. It has aged well. Example: humans still define the starts & ends of trials of many modern meta learners. My RSI systems since 1994 LEARN to (re)define them [2]!

[1] Meta Learning Machines in a Single Lifelong Trial (talk for workshops at ICML 2020 and NeurIPS 2021, based on earlier talks since 1994). Abstract: the most widely used machine learning algorithms were designed by humans and thus are hindered by our cognitive biases and limitations. Can we also construct meta learning algorithms that can learn better learning algorithms so that our self-improving AIs have no limits other than those inherited from computability and physics? This question has been a main driver of my research since I wrote a thesis on it in 1987 [2]. Here I summarize our work on meta reinforcement learning with self-modifying policies in a single lifelong trial (since 1994), and mathematically optimal meta-learning through the self-referential Gödel Machine (since 2003). Many additional publications on meta-learning since 1987 can be found in the RSI overview [2].

[2] J. Schmidhuber (AI Blog, 2020-2025). 1/3 century anniversary of first publication on recursive self-improvement (RSI) and meta learning machines that learn to learn (1987). For its cover I drew a robot that bootstraps itself.
1992-: gradient descent-based neural meta learning.
1994-: meta reinforcement learning with self-modifying policies.
1997: meta RL plus artificial curiosity and intrinsic motivation.
2002-: asymptotically optimal meta learning for curriculum learning.
2003-: mathematically optimal Gödel Machine.
2020-: new stuff!

@Dawson1320 @elonmusk It will create many businesses. Yup! But we are a few years away from that. Buying a Cybercab is first. :-)

@hamza_benyamina i would argue that's exactly where MCPs shine. but there is more to it, and i find them very helpful for things like orchestration and routing, which agents in production depend on

https://t.co/HcCun1424f