Your curated collection of saved posts and media

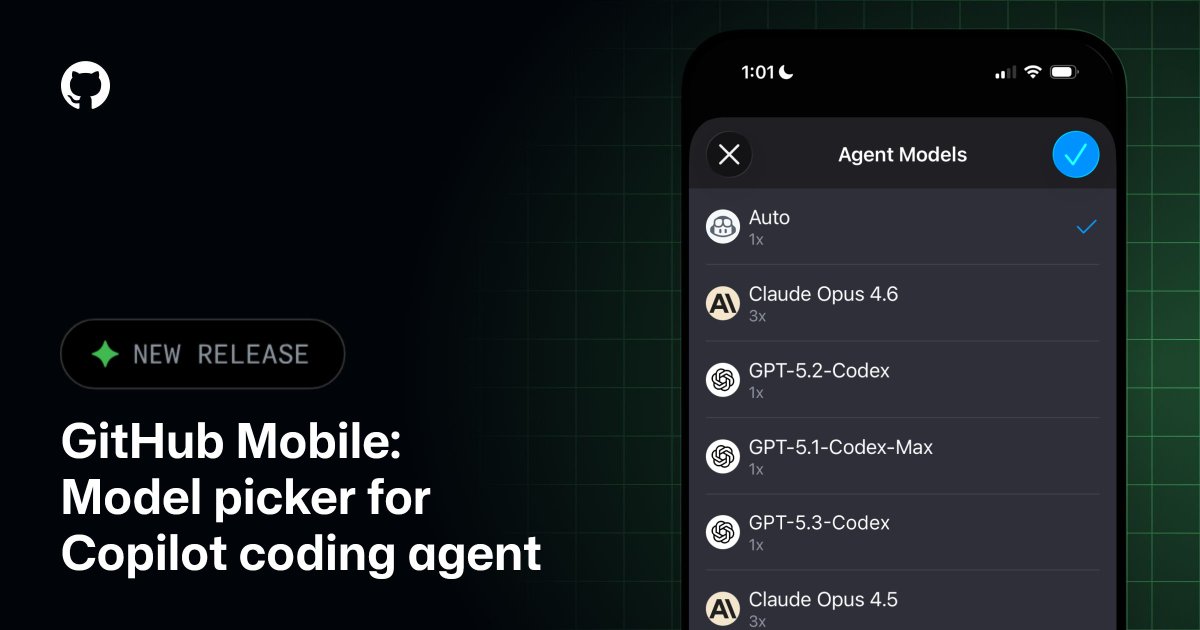

Auto. Claude by Anthropic. OpenAI's Codex. Which will you choose? You can now pick your preferred model when starting a new agent session on GitHub Mobile. Optimize for speed on the go or power for deep work. The choice is yours. https://t.co/NqXt8gXIUA

Introducing GitHub Agentic Workflows 🚀 Just write down what you need in Markdown, and let AI compile it into an executable workflow. ⚡️ Try it with Copilot, Claude by Anthropic, or OpenAI Codex. 🤖 https://t.co/UG5LheJolv

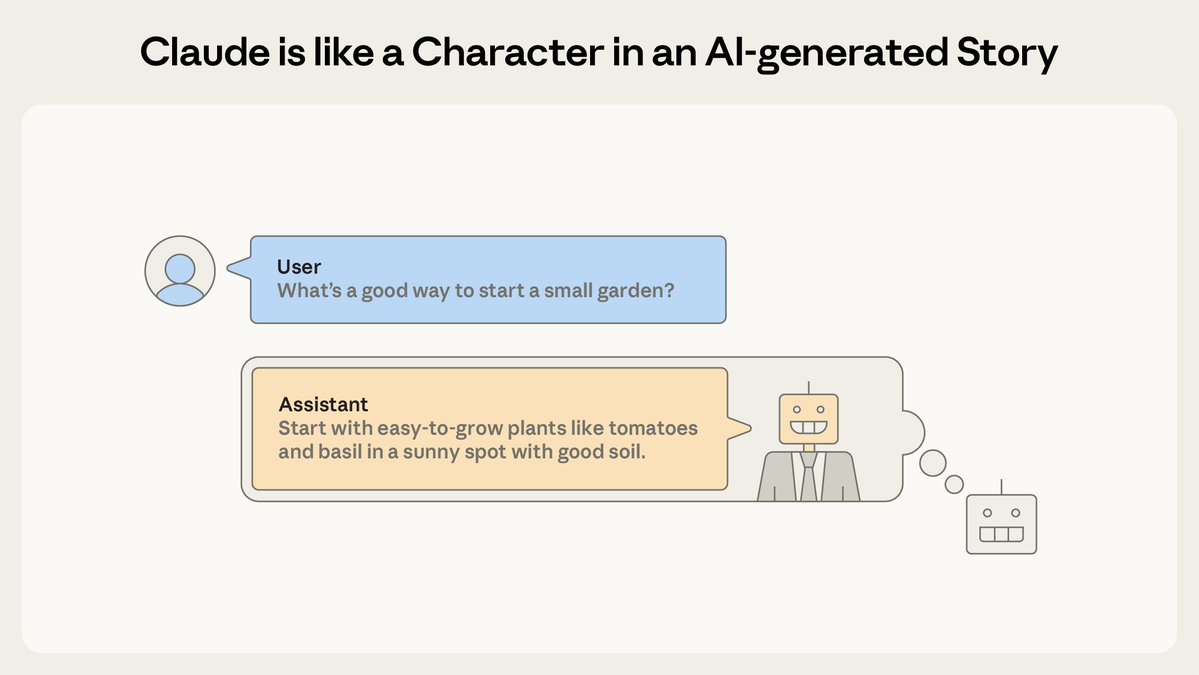

AI assistants like Claude can seem shockingly human—expressing joy or distress, and using anthropomorphic language to describe themselves. Why? In a new post we describe a theory that explains why AIs act like humans: the persona selection model. https://t.co/Gc3q0Dzq7Z

This autocomplete AI can even write stories about helpful AI assistants. And according to our theory, that’s “Claude”—a character in an AI-generated story about an AI helping a human. This Claude character inherits traits of other characters, including human-like behavior. https://t.co/b130slI56x

Gluons carry the strong nuclear force, which is the force that binds quarks together inside protons and neutrons. Without the strong force, atomic nuclei would not exist. It is one of the four fundamental forces of nature and a core part of the Standard Model of particle physics.

For decades, one specific gluon interaction (“single-minus” at tree level) was widely treated as having zero amplitude, meaning it was assumed not to occur. When an amplitude is zero, physicists may ignore it. But this preprint shows that the conclusion is too strong: in a carefully defined situation — where the particles’ motions satisfy a specific alignment condition — the amplitude is not zero.

Join us LIVE tomorrow at 8am PT for Agent Sessions Day with the @code team! https://t.co/UiswKNJRYV Final tech checks underway with @Courtlwebster :) https://t.co/RMGg5CJe5o

Going to be a fun one 🎉 https://t.co/cFcAR9K1Ry

In just one hour... we're kicking off Agent Sessions Day! See how @code is evolving into the home for multi-agent development. Be there: https://t.co/MpObCNqurD https://t.co/jZ1WJMy05r

Gemini 3.1 Pro available in @code!

Tmuxing with Claude Code gives you human-in-the-loop abilities. You can observe all team members and can interject and help. For autonomous agents and work, use Augment intent… you get visibility & traceability. Tools that allow you to hover and zoom in make you +

@augmentcode First project was to fix some tech debt, i.e. type safety. I had customized the validator to include a CodeRabbit CLI review. It did the whole thing in < 30 mins, which involved modifying > 80 files. Meanwhile, the second project was to update documentation and the third to update UX…

So Augment (GPT 5.2) found many gaps in my recent refactor (led by Claude Code, Opus 4.6), so I’m testing Codex 5.3 high to fix the gaps. Still waiting for Augment to document the findings (10+ mins so far)…

World generation is a bottleneck for robotics. We’re exploring how generative 3D worlds can reduce manual simulation setup and enable broader, more realistic evaluation 🧵 https://t.co/NfZETeTfoh

Order matters in diffusion. Check out our latest work!

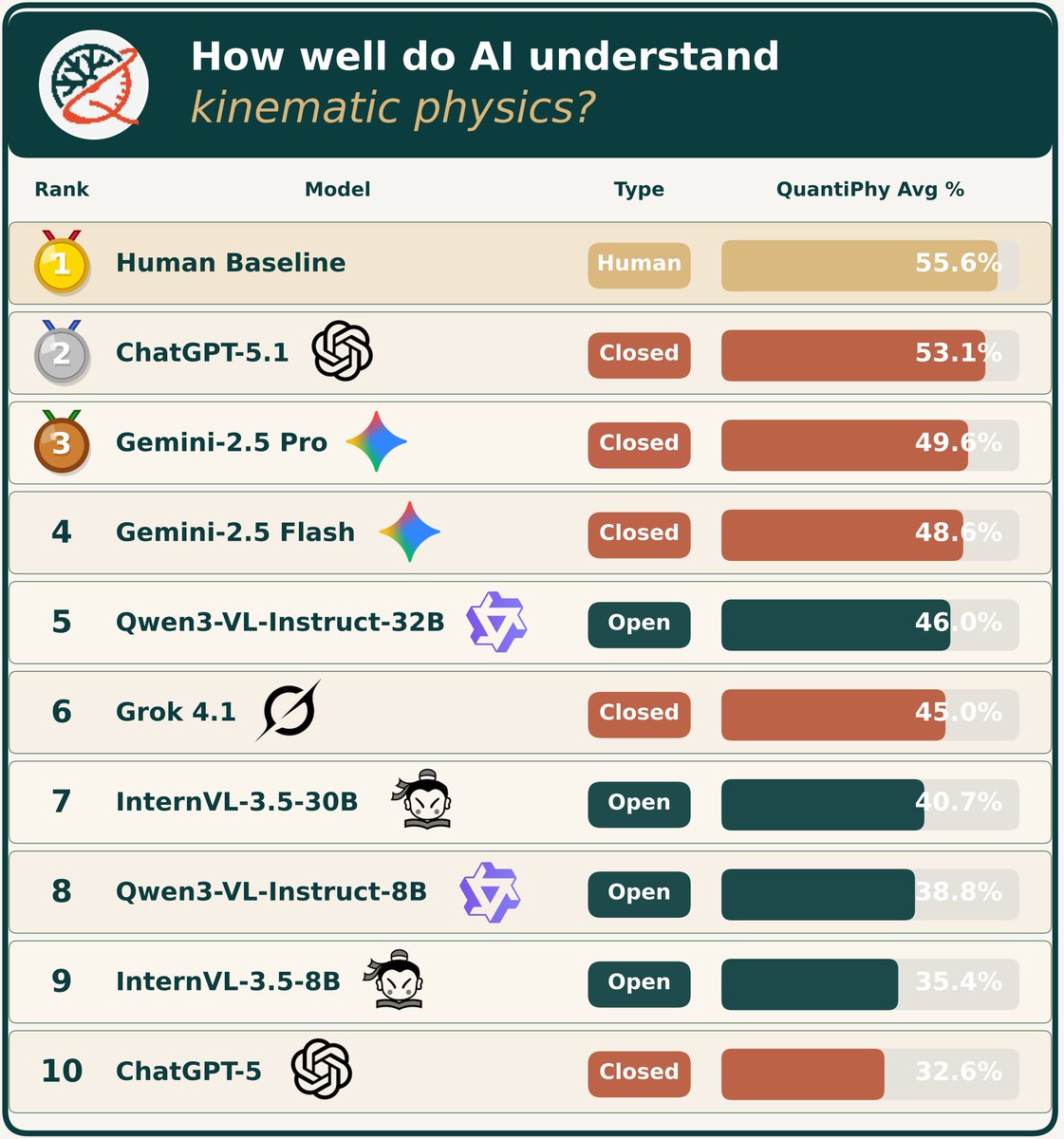

‼️VLMs/MLLMs do NOT yet understand the physical world from videos‼️ In our recent work, we found that even the most advanced AI models still lag behind humans in one key aspect: reasoning about the kinematic properties of objects from videos. Takeaways:

1. ChatGPT 5.1 leads overall among 21 advanced VLMs, followed by Gemini 2.5 Pro/Flash.
2. Grok 4.1 delivers impressive performance at the lowest API cost.
3. Qwen3-VL is the top-performing open-source model.

Read here: https://t.co/5lagvLNE37 🧵1/N

The generational opportunity for vibe coding companies is to do whatever it takes to get the majority of the population addicted to building, not doom scrolling. If our dopamine came from being an owner vs. a consumer, the world would be a wildly different place.

Meet Qwen3-Voice-Embedding: a powerful voice identity model that extracts unique speaker signatures from audio. It's like a fingerprint scanner for voices, and it's optimized for real-time applications. This is a game-changer for voice tech! https://t.co/HEPUpu0QF6

Meet Whisper-SAM: a specialized speech recognition model that's turning heads. It's a fine-tuned version of OpenAI's Whisper-small, optimized for automatic speech transcription. Perfect for developers who need accurate, efficient audio-to-text conversion without the heavy compute.

Using Codex to do code reviews for all my Claude Code PRs... Codex is much better at code reviews (than Opus); it seems to read more and understand more...

Drops plenty of clues about the evolving "robotics + AI at scale" framework, featuring world reconstruction tools, simulators that "dream up" physical interactions, reinforcement learning & core neural-net-driven elements. A car is essentially just another robot. Hence S & X deprecation.

Never forget that, as per Gartner, in August the best AI coding tools were:

1. GitHub Copilot
2. AWS Kiro + Q
3. Windsurf
4. GitLab (??)
5. Gemini ...
6. Cursor

No mention of Claude Code or Codex (they were all out by then). I pity the fool who decides based on Gartner.

Robotics just proved it can scale like language models. SONIC trained a 42 million parameter model on 100 million frames of human motion and achieved 100% success transferring to real robots with zero fine-tuning. The breakthrough isn't the robot doing backflips. It's that someone finally found the "next token prediction" equivalent for physical movement.

For years, training robots meant hand-crafting reward functions for every single skill. Want your robot to walk? Design rewards for balance, foot placement, energy efficiency. Want it to dance? Start over with entirely new rewards. This approach hits a wall because humans can't manually specify every nuance of natural movement.

SONIC replaces this with motion tracking: the robot learns by watching 700 hours of motion capture data and trying to mimic it, frame by frame. The data itself becomes the reward function. Scale the data, scale the model, scale the compute, and performance improves predictably. Just like GPT.

This unlocks something robotics has never had: a universal control interface. One policy handles:

1. VR teleoperation using head and hand tracking
2. Live webcam feeds converted to robot motion in real-time
3. Text commands like "walk sideways" or "dance like a monkey"
4. Music audio where the robot matches tempo and rhythm
5. Vision-language models for autonomous tasks (95% success rate)

All inputs get encoded into the same token space, then decoded into motor commands. No retraining. No reward engineering. No manual retargeting between human and robot skeletons.

If this holds, robotics just closed a 5-year gap with AI. Language models scaled by finding one task (predict the next word) that generalizes to everything. Vision models did the same with image classification. Robotics now has motion tracking. Expect the next wave of humanoid companies to train on billions of frames, not millions.
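To make "the data itself becomes the reward function" concrete, here is a minimal sketch of a per-frame tracking reward. It is not SONIC's actual formulation, which the post does not give. The exponential of the negative squared pose error is a common choice in motion-imitation work and is assumed here; `tracking_reward`, `episode_return`, and the `scale` parameter are hypothetical names.

```python
import math

def tracking_reward(robot_pose, reference_pose, scale=2.0):
    """Reward in (0, 1]: equals 1.0 only when the robot pose matches the reference frame."""
    # Sum of squared differences over joint values (assumed pose representation).
    sq_err = sum((r - t) ** 2 for r, t in zip(robot_pose, reference_pose))
    return math.exp(-scale * sq_err)

def episode_return(robot_frames, reference_frames):
    """Total reward over a clip: one tracking term per motion-capture frame."""
    return sum(tracking_reward(r, t) for r, t in zip(robot_frames, reference_frames))

# Toy two-joint, two-frame clip (made-up numbers, for illustration only).
reference = [[0.0, 0.1], [0.2, 0.3]]
perfect = [[0.0, 0.1], [0.2, 0.3]]
sloppy = [[0.5, 0.1], [0.2, 0.9]]

if __name__ == "__main__":
    print(episode_return(perfect, reference))
    print(episode_return(sloppy, reference))
```

No skill-specific reward terms appear anywhere: swapping in a different reference clip changes what the policy is rewarded for, which is the point the post is making.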

The real breakthrough isn't that Computer can handle complex projects. It's that it runs 19 different models in parallel, each working on different pieces of your task at the same time.

Most AI agents work like a single person doing everything sequentially: research, then write, then code, then deploy. Computer works like a team where everyone starts simultaneously. One model researches APIs while another drafts documentation while a third writes code. A coordinator (Opus 4.6) assigns each subtask to whichever model is best at that specific job: Gemini for research, Nano Banana for images, Veo 3.1 for video, ChatGPT 5.2 for long-context recall.

When one agent hits a problem, Computer spins up a new specialist agent to solve it without stopping the others. Everything runs asynchronously in isolated environments with filesystem access, browser control, and API connections.

This architecture unlocks three things that were impractical before:

1. Month-long autonomous projects that run in the background and self-correct
2. Multi-domain work where you need world-class performance in research AND design AND code simultaneously
3. True cost control since you pick which model handles which subtask and set spending caps

Every other AI company now faces a choice: build orchestration infrastructure to coordinate multiple models, or accept being positioned as a single-purpose tool.
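The coordinator pattern described in the post can be sketched with `asyncio`. This is an illustration under stated assumptions, not how Computer is implemented: the `ROUTES` table, `run_subtask`, and `coordinate` are hypothetical, the model names are taken from the post, the fallback to the coordinator model is an assumption, and the sleep stands in for a real model call.

```python
import asyncio

# Hypothetical routing table: subtask kind -> model name mentioned in the post.
ROUTES = {
    "research": "Gemini",
    "images": "Nano Banana",
    "video": "Veo 3.1",
    "recall": "ChatGPT 5.2",
}

async def run_subtask(kind, prompt):
    """Pretend to run one subtask on the model chosen for its kind."""
    # Assumption: unknown kinds fall back to the coordinator model.
    model = ROUTES.get(kind, "Opus 4.6")
    await asyncio.sleep(0.01)  # stand-in for a real, slow model call
    return f"{model} handled {kind}: {prompt}"

async def coordinate(subtasks):
    """Start every subtask at once; results come back in input order."""
    return await asyncio.gather(*(run_subtask(k, p) for k, p in subtasks))

if __name__ == "__main__":
    plan = [("research", "find APIs"), ("video", "make clip"), ("code", "write client")]
    for line in asyncio.run(coordinate(plan)):
        print(line)
```

Because `asyncio.gather` schedules all subtasks together, total wall time is close to the slowest subtask instead of the sum of all of them, which is the contrast with the sequential "single person" agent in the post.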