Your curated collection of saved posts and media

A self-evolving framework to discover and refine agent skills. Most agent skills I see today are hand-crafted or poorly designed by an agent. Multi-agent systems for building skills look promising. This paper introduces EvoSkill, a self-evolving framework that automatically discovers and refines agent skills through iterative failure analysis. EvoSkill analyzes execution failures, proposes new skills or edits to existing ones, and materializes them into structured, reusable skill folders. Three collaborating agents drive the entire process: an Executor that runs tasks, a Proposer that diagnoses failures, and a Skill-Builder that creates concrete skill folders. A Pareto frontier governs selection, retaining only skills that improve held-out validation performance while keeping the underlying model frozen. On OfficeQA, EvoSkill improves Claude Code with Opus 4.5 from 60.6% to 67.9% exact-match accuracy. On SealQA, it yields a 12.1% gain. Skills evolved on SealQA transfer zero-shot to BrowseComp, improving accuracy by 5.3% without modification. I will continue to track this line of research closely. I think it's really important. Paper: https://t.co/mgsnoMBjOx Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
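The post says a Pareto frontier governs which skills survive, but it doesn't spell out the objectives. Below is a minimal sketch of Pareto-frontier retention. It assumes each candidate (a set of skills on top of the frozen model) is scored on several held-out validation objectives. The names `Candidate`, `dominates`, and `update_frontier`, along with the skill names and scores, are hypothetical toy values. They are not EvoSkill's code or results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """Hypothetical candidate: a set of skill names plus its validation scores."""
    skills: frozenset
    scores: tuple  # one score per held-out validation objective (assumed)


def dominates(a: Candidate, b: Candidate) -> bool:
    """True if a is at least as good as b on every objective and strictly better on one."""
    return all(x >= y for x, y in zip(a.scores, b.scores)) and any(
        x > y for x, y in zip(a.scores, b.scores)
    )


def update_frontier(frontier: list, candidate: Candidate) -> list:
    """Keep the candidate only if nothing on the frontier dominates or ties it,
    then drop any frontier members the new candidate dominates."""
    if any(dominates(f, candidate) or f.scores == candidate.scores for f in frontier):
        return frontier  # no improvement on validation: discard the proposed skill
    return [f for f in frontier if not dominates(candidate, f)] + [candidate]


# Toy scores for illustration only (not numbers from the paper).
base = Candidate(frozenset(), (0.60, 0.50))
a = Candidate(frozenset({"parse_tables"}), (0.65, 0.50))  # dominates base
b = Candidate(frozenset({"web_search"}), (0.55, 0.58))    # trade-off with a
c = Candidate(frozenset({"bad_skill"}), (0.58, 0.49))     # dominated by a

frontier = [base]
for cand in (a, b, c):
    frontier = update_frontier(frontier, cand)

print([sorted(f.skills) for f in frontier])
```

Keeping a frontier rather than a single best candidate means a skill that helps only one objective is not thrown away just because another candidate has a higher average.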

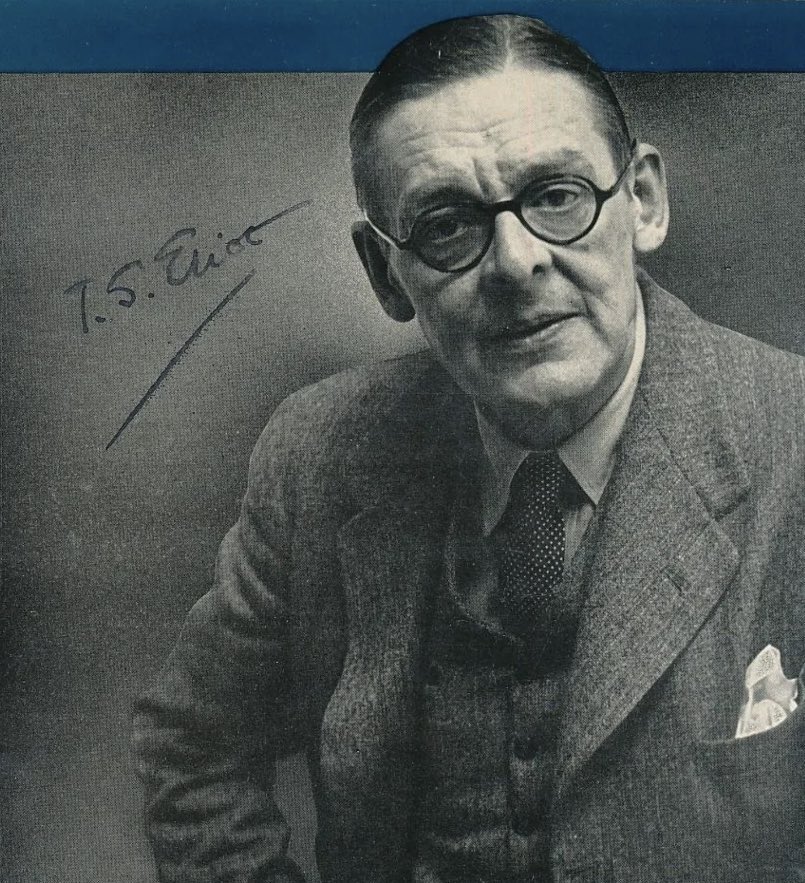

“For the immediate future, and perhaps for a long way ahead, the continuity of our culture may have to be maintained by a very small number of people.” T.S. Eliot https://t.co/KE0yEbD75j

Always has been:

@ChrisLaubAI The technical term: algorithmic bias. Pre-training distribution + heavy RLHF. Natural variability collapses into a handful of pre-canned answers optimized for benchmarks. A systemic byproduct of an industry that optimizes for leaderboards, not truth.

@GenAI_is_real A note on Yann LeCun’s “world models”: JEPA is a useful efficiency trick, not machine understanding. It’s still correlation learning in latent space. Keep the vector caching. Drop the world-model rhetoric; it fails basic grounding and invariance tests. https://t.co/wOU42Z6uoP

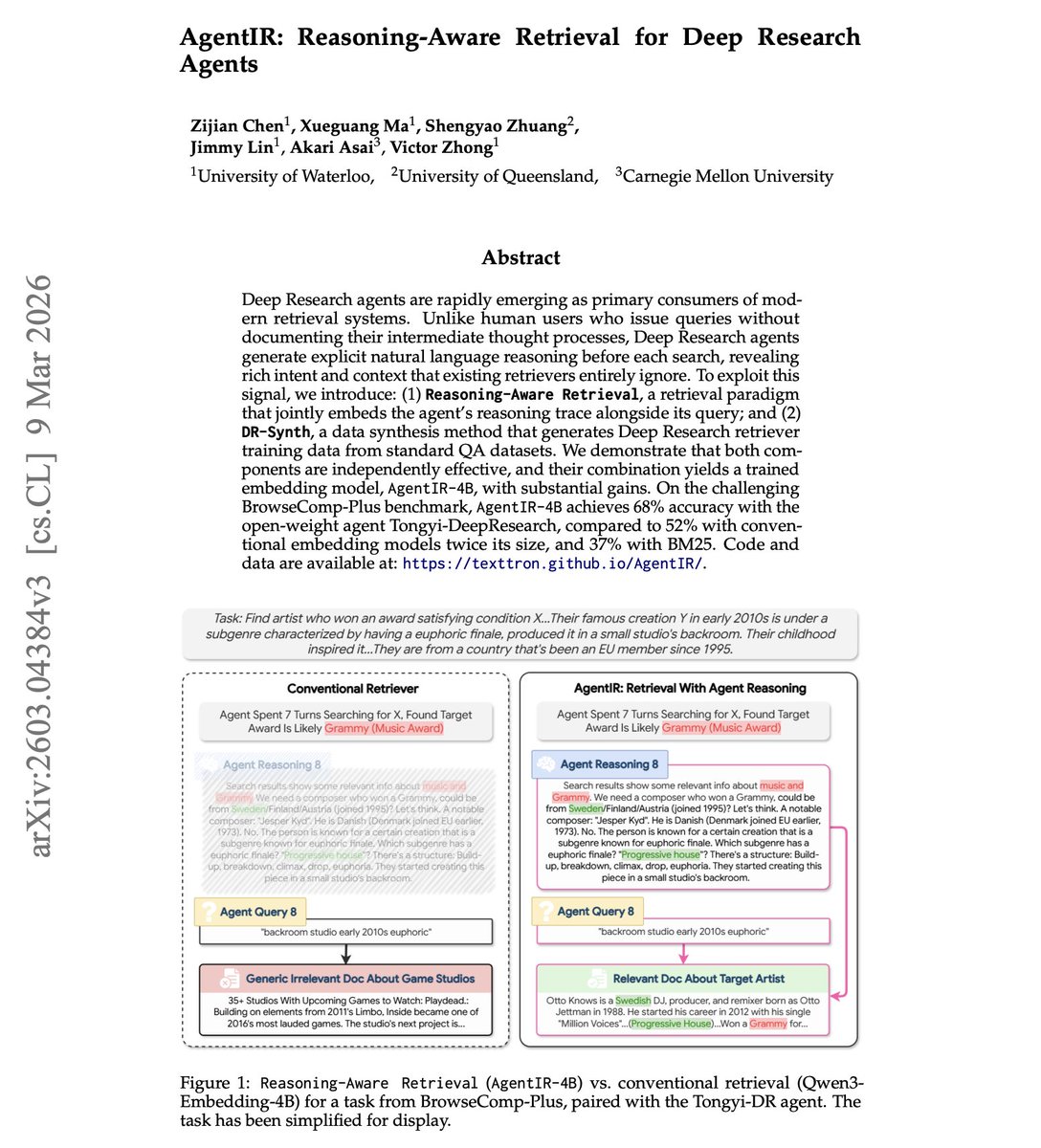

Reasoning-Aware Retrieval for Deep Research Agents Deep research agents generate explicit reasoning before every search call. These reasoning traces encode rich signals about search intent and problem-solving context. Yet no existing retriever learns to exploit them effectively. This paper introduces AgentIR, a reasoning-aware retrieval system that jointly embeds the agent's reasoning trace alongside its query instead of just the query alone. Why does it matter? The agent's reasoning acts as a retrieval instruction, a memory of key history, and an implicit filter for outdated information. All of this context is available for free since the agent already generates it. AgentIR-4B achieves 68% accuracy on BrowseComp-Plus with the open-weight Tongyi-DeepResearch agent, compared to 52% with conventional embedding models twice its size and 37% with BM25. It also outperforms LLM-based reranking by 10% absolute, with no additional inference overhead. Paper: https://t.co/rok5nZDfYw Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
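The post says AgentIR embeds the agent's reasoning trace together with its query instead of the query alone. Below is a toy sketch of why that extra context can help. It swaps AgentIR's trained 4B embedding model for a plain bag-of-words cosine similarity. The helpers `embed`, `cosine`, and `retrieve`, the "reasoning, newline, query" concatenation format, and the example documents are all hypothetical illustrations and not AgentIR's method.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy sparse 'embedding': lowercase bag-of-words counts (stand-in for a learned encoder)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list, reasoning: str = None) -> list:
    """Score each doc against the query, optionally prepending the agent's reasoning trace."""
    text = f"{reasoning}\n{query}" if reasoning else query
    q = embed(text)
    return [cosine(q, embed(d)) for d in docs]


docs = [
    "Python 3.12 release date: October 2023",
    "Python 3.11 release date: October 2022",
]
query = "release date"
reasoning = "The question asks about Python 3.12, so I need its release date."

plain = retrieve(query, docs)             # query alone cannot tell the docs apart
aware = retrieve(query, docs, reasoning)  # reasoning supplies the missing intent
print(plain, aware)
```

The short query ties both documents. The reasoning trace, which the agent writes anyway, carries the intent ("3.12") that breaks the tie. A learned encoder such as the one described in the post would capture far more than shared words.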

Two Turing-class AI researchers just raised $2B in three weeks to bet against every LLM company on the planet. Fei-Fei Li closed $1B for World Labs on February 18. LeCun closed $1.03B for AMI Labs today. Both building world models. Both arguing that the entire generative AI paradigm is a statistical parlor trick.

And the investor overlap tells you this is coordinated conviction, not coincidence. Nvidia backed both. So did Sea and Temasek.

The math on AMI is absurd. $3.5B pre-money valuation. Four months old. Zero product. Zero revenue. The CEO said on the record that AMI won’t ship a product in three months, won’t have revenue in six, won’t hit $10M ARR in twelve. He described it as a long-term scientific endeavor. Investors gave him a billion dollars anyway.

This tells you everything about how the smart money is actually modeling AI’s future. They’re not pricing AMI on a revenue multiple. They’re pricing it on the probability that LLMs hit a ceiling. And if you look at the investor list, Nvidia, Samsung, Toyota Ventures, Dassault, Sea, these are companies that need AI to understand physics, geometry, and force dynamics. A language model that can write poetry is worthless to a robotics company trying to predict what happens when a mechanical arm applies 12 newtons at a 30-degree angle to a flexible surface.

LeCun raided his own lab to build this. Mike Rabbat, Meta’s former research science director. Saining Xie from Google DeepMind. Pascale Fung, senior director of AI research at Meta. He walked into Zuckerberg’s office in November, told him he was leaving, and four months later half of FAIR works for him. Meta is reportedly partnering with AMI anyway, which means Zuckerberg thinks LeCun might be right even while Meta keeps scaling Llama.

AMI’s first partner is Nabla, a medical AI company, building toward FDA-certifiable agentic AI. That’s the use case that makes world models existential. LLMs hallucinate. In healthcare, hallucinations kill people. You can’t prompt-engineer your way out of a model that generates statistically plausible text when you need a system that actually understands how a human body works.

Two billion dollars in three weeks. Two of the most credentialed researchers alive. And a thesis that says the $100B+ already poured into scaling LLMs is optimizing the wrong architecture entirely. If they’re wrong, investors lose money. If they’re right, every company building on top of GPT and Claude for physical-world applications just bought the wrong foundation.

@climbnpenguin @ipfconline1 @helene_wpli @floriansemle @jblefevre60 @pierrepinna @Ym78200 @mikeflache 0.6B!

@EriAmo1 That looks like a nice & pretty consistent improvement

@ChrisLaubAI The explanation is simple: benchmarks. Models are trained to pass the same eval suites to compete. So they converge on the same answers. Add massive training data overlap, now mostly static due to scarcity, and the outputs start looking identical. ↓ https://t.co/zZcFslHhiM

Professors are increasingly worried about what AI is doing to critical thinking. As tools like ChatGPT reshape how students research and write, many academics fear that the humanities and deeper reasoning skills could erode. The challenge now is teaching students to think with AI, not to let it think for them. https://t.co/lufNjlK7uA

@OldenDev Opus 4.6 is perfectly usable, I use it every day

HubSpot (?), Fathom (?), and sometimes NotebookLM

Growth stack today:

- Replit: $100
- Claude Code: $150
- Augment Code: $60
- ChatGPT / Codex: $20
- Perplexity Pro: $20
- Gemini AI Studio (free)
- Gemini + Google Workspace (?)
- Manus (free)
- PostHog (free)
- Auth0 (?): don’t like, but have to
- Google auth internal apps (?)
- BigQuery (no clue): love it

Efforts to improve the security of AI agents should recognize that many security failures occur even in the absence of adversaries. The unreliability issue has largely flown under the radar and there hasn't been much work on defining, measuring, or mitigating the problem. More on this in our response to NIST's request for information on AI Agent Security, by @steverab, @sayashk, @PKirgis, @CitpMihir, and me: https://t.co/PW7DJZpDWV This is based on our recent paper: https://t.co/FI5kuBkdRZ

American Sniper, Black Hawk Down, Zero Dark Thirty, Top Gun: Masterbaverick, James Bond (I don't wanna think about them all). Various superhero movies have this as the subtext (which is maybe more insidious), and then we have the CIA-guided shows like Jack Reacher.

@DanKulkov I don’t seem to hit the limit because it slows down after running two in parallel.

I'm pleased to announce that a new 2026 edition of my New York Times bestselling book, Rise of the Robots: Technology and the Threat of a Jobless Future, will be available on June 2. I have extensively updated the book to cover the latest advances in generative #AI and robotics and to examine the future economic and job market implications of the unfolding AI disruption. The book focuses on what we can do as individuals, and as a society, to successfully navigate the looming transition into the age of AI. You can pre-order from the link in the reply. @BasicBooks #RiseoftheRobots

@OldenDev "think they are purposefully dumbing it down" I believe this is a conspiracy theory that is not true

We just added /btw to Claude Code! Use it to have side chain conversations while Claude is working. https://t.co/hjO3YqvrPr

@0xRiver8 Plenty of fish in the sea

@defivas Imagine you had kids and a wife. My brain can’t handle the number of decisions to make on a daily basis.

Introducing The Anthropic Institute, a new effort to advance the public conversation about powerful AI. https://t.co/M7vi9oRuYi

Powerful AI offers vast upsides in science, development, and human agency. But the continued rapid progress of the technology may also create new challenges, including abrupt economic changes and broad societal impacts.