This feels like NEFTune for RL.

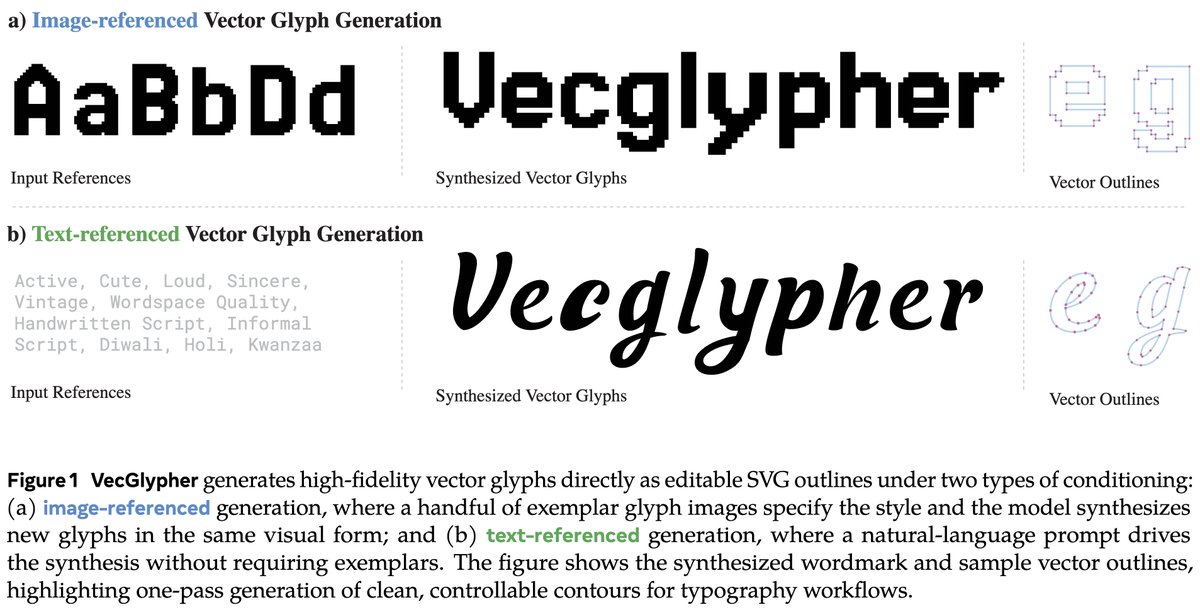

Meta presents VecGlypher: Unified Vector Glyph Generation with Language Models. Paper: https://t.co/anAFlgLMMV https://t.co/Nh3OpUBwa9

I’m giving an agent control over Reachy Mini from @huggingface and letting it understand and share spatial data via @Spectacles. AR is the human interface for robotics and physical AI, imo. It feels like absolute magic to interact with this, both in voice/agent and “puppeteering” mode. I’ll probably work on AR for either an arm (manipulation tasks) or some sort of drone (locomotion in 3D space) next… Project is fully open source btw: https://t.co/pmkXJR0U7f Thank you @SensAIHackademy for sending me the robot!

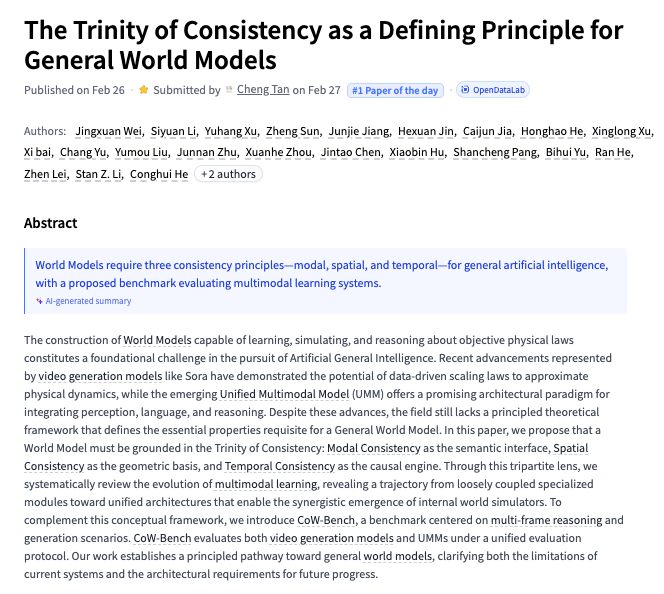

The Trinity of Consistency as a Defining Principle for General World Models. Paper: https://t.co/21cbl3hAdu https://t.co/9YmzIPmsBJ

datasets v4.6.0 is out 🤗 News for multimodal/streaming: 🎬 push_to_hub() video datasets 📃 image/audio/video are now PLAIN blobs in Parquet ⚔️ type inference for Lance ✂️ .reshard() streaming Parquet datasets: shard per row group instead of file All optimized for Xet 🧵👇 https://t.co/kD9tewovNN

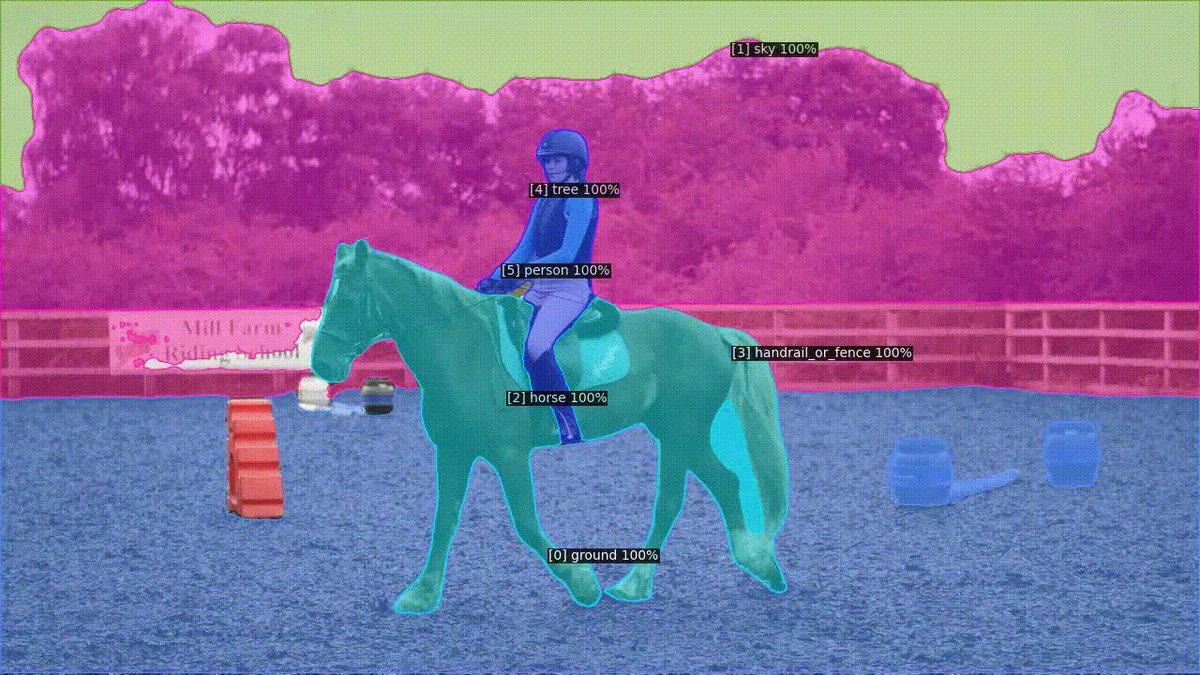

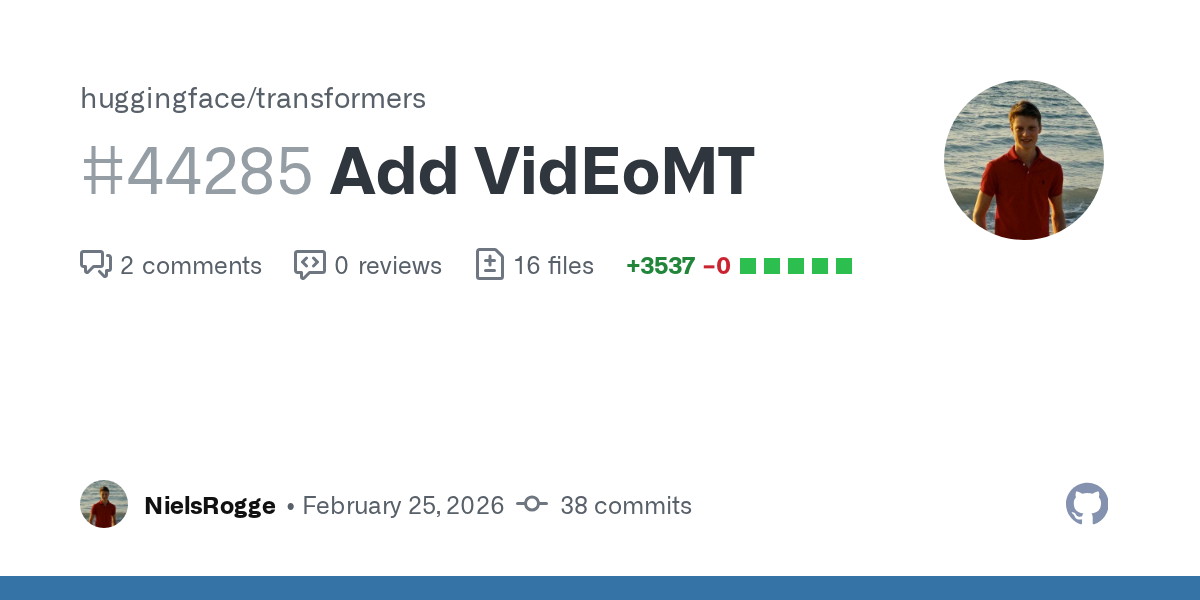

I tried Codex 5.3 (web) for porting VidEoMT, a simple and elegant ViT-based video segmentation model, to @huggingface Transformers. Sadly, it missed the global picture, mistakenly assuming the model uses DINOv3 as its backbone, whereas it actually uses DINOv2. It got stuck. Opus 4.6 fixed it after I told it. The job of ML Engineer is still safe - humans stay in the driver's seat. PR: https://t.co/5ahL0GqtZN

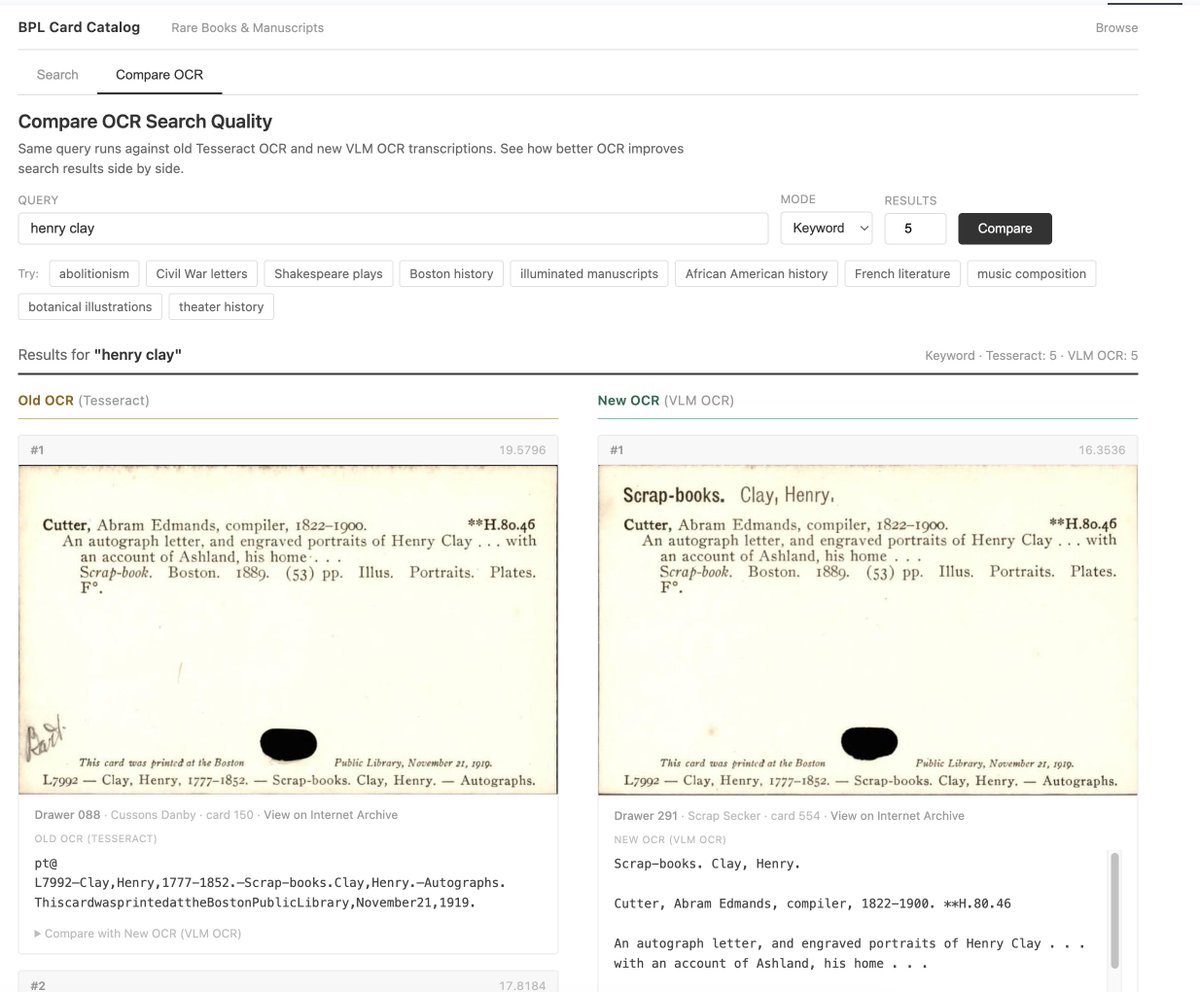

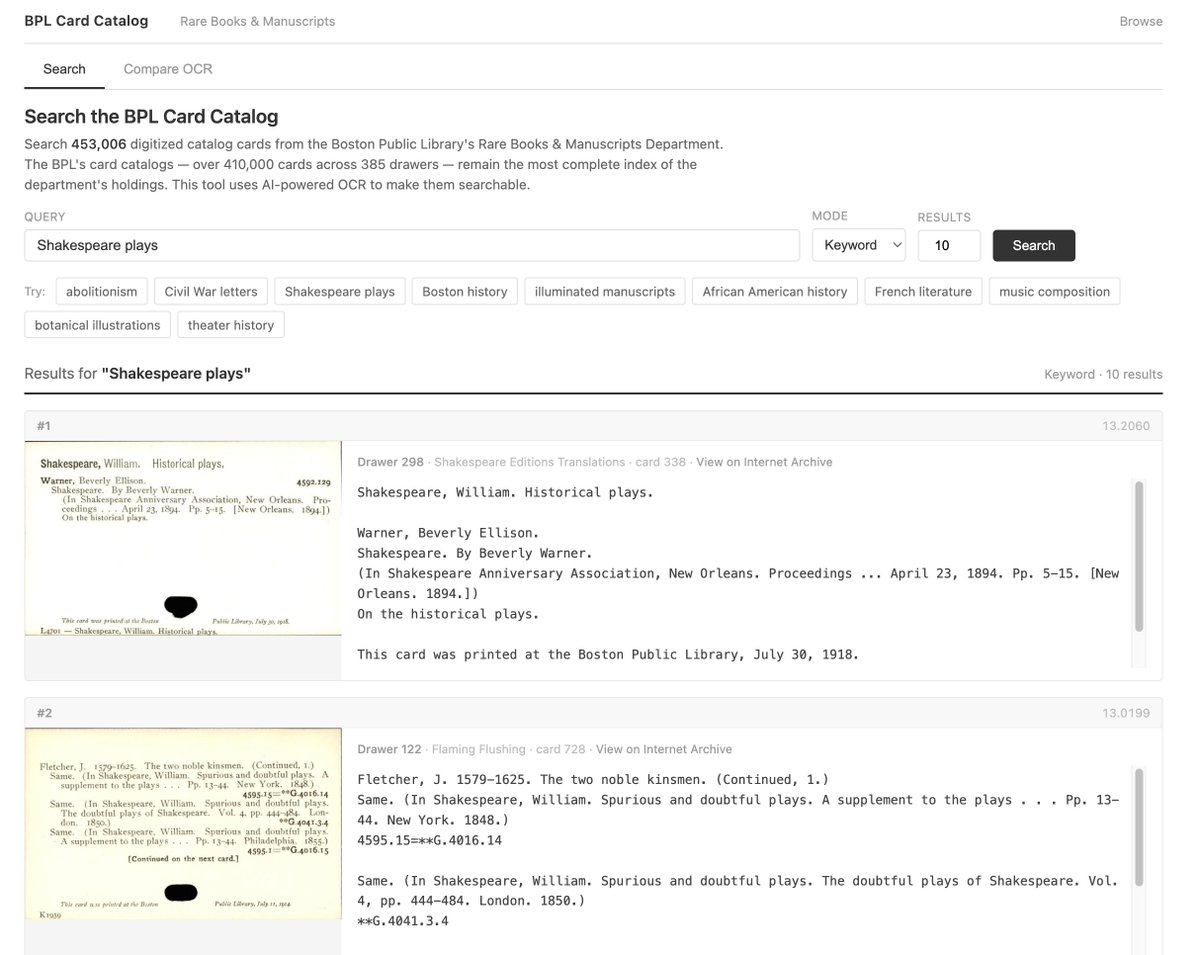

Is it worth re-OCR'ing old library index cards? Re-OCR'd 453,000 from Boston Public Library's rare books catalogue. ~$50 compute using @huggingface Jobs. BPL's own guide calls their search "extremely unreliable." Does better OCR and semantic search fix it? Demo link below: https://t.co/DC5nqtmQtC

🤗 @perplexity_ai has released 4 open-weights state-of-the-art multilingual embedding models designed for retrieval tasks: pplx-embed-v1 and pplx-embed-context-v1. Specifically trained for int8 and binary embeddings, they'll be viable for massive search problems. Details in 🧵 https://t.co/smqcPLKjU2
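For readers unfamiliar with why int8 and binary embeddings matter for large-scale search, here is a minimal sketch of the general idea. It is not taken from the post or from the pplx-embed models: the helper names (`binarize`, `hamming`, `quantize_int8`, `search`), the sign-threshold binarization, the max-abs int8 scaling, and the toy vectors are all assumptions made for illustration. A binary embedding keeps one bit per dimension, so candidates can be ranked by Hamming distance instead of a float dot product.

```python
from typing import List, Sequence


def binarize(vec: Sequence[float]) -> int:
    """Pack the sign bit of each dimension into a single Python int.

    Assumption: a dimension maps to 1 if it is positive, else 0.
    """
    bits = 0
    for x in vec:
        bits = (bits << 1) | (1 if x > 0 else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Count the bit positions where two packed embeddings differ."""
    return bin(a ^ b).count("1")


def quantize_int8(vec: Sequence[float]) -> List[int]:
    """Symmetric int8 quantization: scale by the largest absolute value
    so every component lands in [-127, 127]."""
    max_abs = max(abs(x) for x in vec) or 1.0  # avoid dividing by zero
    return [max(-127, min(127, round(x / max_abs * 127))) for x in vec]


def search(query: Sequence[float], docs: Sequence[Sequence[float]], top_k: int) -> List[int]:
    """Return indices of the top_k docs closest to the query in Hamming distance."""
    q = binarize(query)
    ranked = sorted(range(len(docs)), key=lambda i: hamming(q, binarize(docs[i])))
    return ranked[:top_k]


# Toy 4-dimensional "embeddings" (hypothetical values, not model output).
docs = [
    [0.9, -0.2, 0.4, -0.7],
    [-0.8, 0.3, -0.5, 0.6],
    [0.7, -0.1, -0.3, -0.9],
]
query = [1.0, -0.5, 0.2, -0.3]

print(search(query, docs, top_k=2))       # [0, 2]
print(quantize_int8([1.0, -1.0, 0.0]))    # [127, -127, 0]
```

In this sketch each dimension costs 1 bit instead of 32, which is where the storage and speed savings come from; a real system would typically rescore the shortlisted candidates with higher-precision vectors.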

New course: Gemini CLI: Code & Create with an Open-Source Agent, built with @googlecloudtech/@geminicli and taught by @JackWoth98. Agentic coding assistants like Gemini CLI are transforming how developers work. This short course teaches you to use Google's open-source agent to coordinate local tools and cloud services for coding and non-coding workflows. Gemini CLI works from your terminal, so it works with your local files and development tools. You can also connect it to services through MCP. Then provide high-level instructions, and it autonomously plans and executes complex workflows. Skills you'll gain: - Build website features and automate code reviews with GitHub Actions - Create data dashboards that combine local files with cloud data sources - Use MCP servers and extensions to orchestrate workflows across GitHub, Canva, and Google Workspace - Generate social media content from multimedia files like conference recordings. I particularly appreciate that Gemini CLI is open-source. You can see exactly how it works, read the prompts it uses, and understand its architecture. The community has contributed thousands of pull requests. Since Gemini 3’s release I've found Gemini CLI highly capable - this is a tool worth having in your toolbox! Whether you're prototyping applications, automating workflows, or working with multimedia content, join to learn to delegate complex tasks and build faster: https://t.co/m3J7kwQpxC

Ok, I think my experiment leaving AI working on stuff 24/7 ends here. It doesn't work. Code explodes in complexity, results are not that great, the AI can't get past hard walls (it is still completely unable to even *grasp* SupGen), and it is insanely expensive (spent ~1k over the last 2 days). The best results are on the JS compiler, mostly because it is familiar (compared to inets), but not worth losing control over the codebase. I think the dream of having AIs working in the background and making real progress on things that matter (i.e., truly new things) isn't here yet. It is still a machine hard-stuck on its own training data, incapable of thinking out of the box. It is great for building things that were already built. But not new things. Also, coding normally has the under-appreciated advantage that you're doing two things at the same time: building a codebase *and* learning it. AIs do only half of that. The other half is obviously impossible 🤔

By explicitly training on specific tasks, we ended up covering a very large area (in absolute terms) of the space of all possible tasks humans can do, but this large area only amounts to 0.00...01% of the total space. And that's why we still need general intelligence.

@fchollet what would be an example of 'novel unfamiliar domains'? seems like we've touched most things

It's basically impossible to predict what emergent properties you might get from scaling up a given algorithm. That's why AGI is much more an engineering endeavor than a theoretical one. It's a process of discovery through building.

@DonvitoAI It depends on the use case, flash is very good for its size and cost

@PradyuPrasad When they realised distillation was happening 🥲

How do you guide an AI agent to open PRs on your behalf? 👀 You give it skills. With the Make Contribution agent skill, Copilot CLI automatically finds and follows a repo's contribution guidelines and templates before acting. Get this skill.👇 https://t.co/fKjeNkK0P0 https://t.co/JE1itjbmZ8

This project racked up 100,000 stars in less than 3 months. 👀 OpenClaw lets you run AI assistants on your own infrastructure AND connect them to apps like Slack, Discord, and WhatsApp. 💯 Get the latest. ▶️ https://t.co/YDkAGblnUB

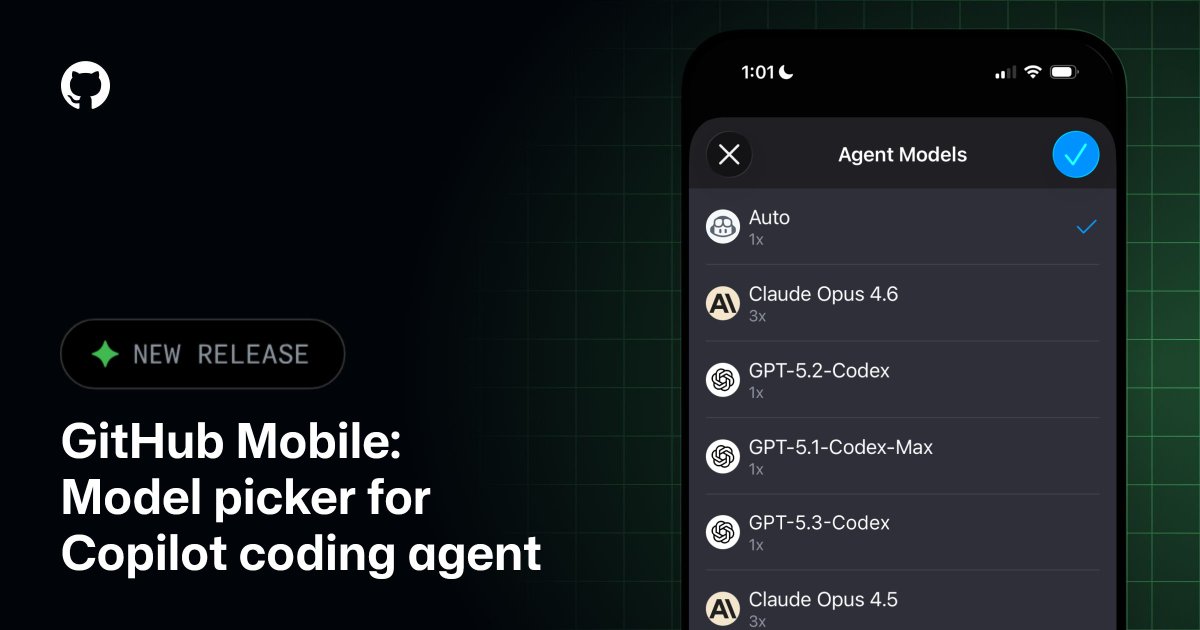

Auto. Claude by Anthropic. OpenAI's Codex. Which will you choose? You can now pick your preferred model when starting a new agent session on GitHub Mobile. Optimize for speed on the go or power for deep work. The choice is yours. https://t.co/NqXt8gXIUA

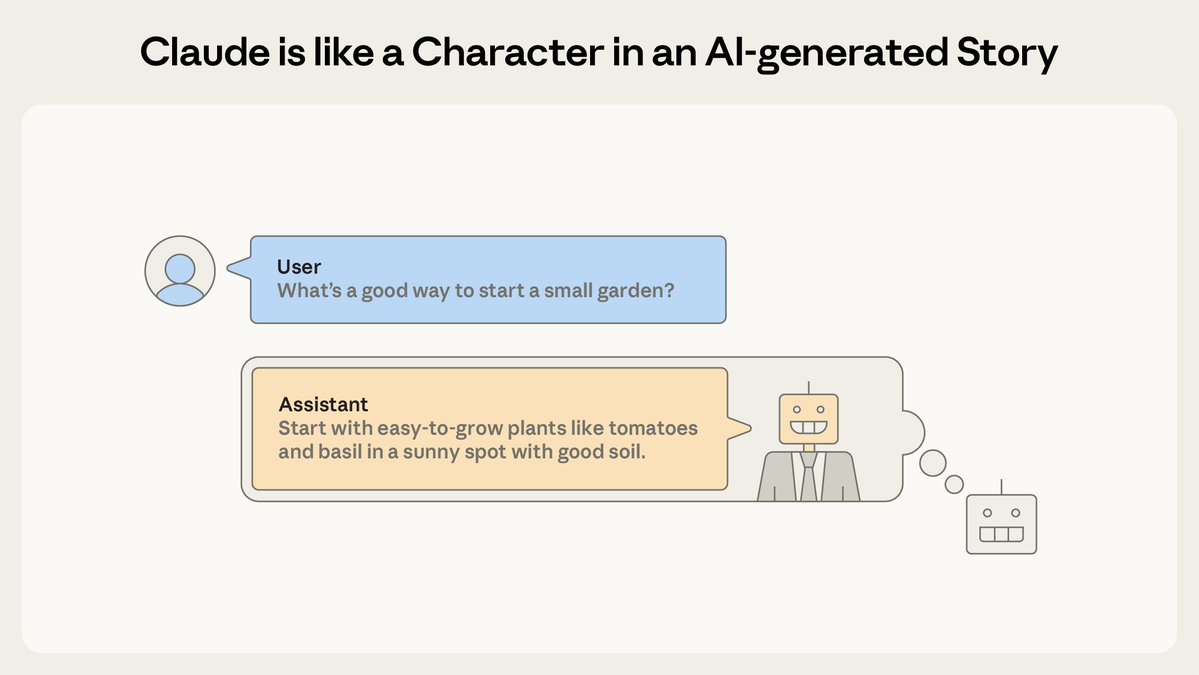

AI assistants like Claude can seem shockingly human—expressing joy or distress, and using anthropomorphic language to describe themselves. Why? In a new post we describe a theory that explains why AIs act like humans: the persona selection model. https://t.co/Gc3q0Dzq7Z

This autocomplete AI can even write stories about helpful AI assistants. And according to our theory, that’s “Claude”—a character in an AI-generated story about an AI helping a human. This Claude character inherits traits of other characters, including human-like behavior. https://t.co/b130slI56x

Gluons carry the strong nuclear force, which is the force that binds quarks together inside protons and neutrons. Without the strong force, atomic nuclei would not exist. It is one of the four fundamental forces of nature and a core part of the Standard Model of particle physics.

For decades, one specific gluon interaction (“single-minus” at tree level) was widely treated as having zero amplitude, meaning it was assumed not to occur. When an amplitude is zero, physicists may ignore it. But this preprint shows that the conclusion is too strong: in a carefully defined situation — where the particles’ motions satisfy a specific alignment condition — the amplitude is not zero.

Join us LIVE tomorrow at 8am PT for Agent Sessions Day with the @code team! https://t.co/UiswKNJRYV Final tech checks underway with @Courtlwebster :) https://t.co/RMGg5CJe5o