I have been using Codex a lot thanks to the Codex for OSS program. I never thought I would say this, but I have been more productive than ever just by using Codex.

OMG @OpenAI ILY 🫶🏻 https://t.co/kGqmL0cs3Y

here's a hands-on guide to set up multi-agent autoresearch by @karpathy. uses open models. works with codex, claude, open code.

- uses 5 agents, each with a configuration, specific tools, roles, and permissions (see repo)
- a researcher agent searches papers on hf papers and creates hypotheses
- a planner agent maintains an experiment plan and log
- workers take the hypotheses and update the scripts, then start a hf job with a gpu to run the script
- a reporter agent monitors these jobs and reports events and metrics to a @TrackioApp dashboard

I ran this for 4 hours and the agents ran 32 jobs; they improved on the baseline by a small margin. check out everything I learnt in thread.
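To make the division of labor concrete, here is a minimal Python sketch of the roles named in the post. It is not taken from the linked repo: the `Agent` class, the tool names, and `run_round` are all hypothetical, and the "jobs" are only log entries rather than real GPU jobs.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """One agent in the pipeline: a role plus the tools it may use."""
    name: str
    role: str
    tools: list[str] = field(default_factory=list)


# Hypothetical roster mirroring the roles named in the post; tool names are made up.
AGENTS = [
    Agent("researcher", "search papers and propose hypotheses", ["paper_search"]),
    Agent("planner", "maintain the experiment plan and log", ["plan_file"]),
    Agent("worker", "edit the training script and launch a GPU job", ["editor", "job_launcher"]),
    Agent("reporter", "watch jobs and report events and metrics", ["dashboard"]),
]


def run_round(hypotheses: list[str]) -> list[dict]:
    """Simulate one round: turn each hypothesis into a logged job entry."""
    log = []
    for job_id, hypothesis in enumerate(hypotheses, start=1):
        log.append({
            "job": job_id,
            "hypothesis": hypothesis,
            "status": "submitted",  # a real worker would start a remote job here
        })
    return log
```

Calling `run_round(["lower lr", "bigger batch"])` returns two log entries, which is roughly the shape of record a planner and reporter would share.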

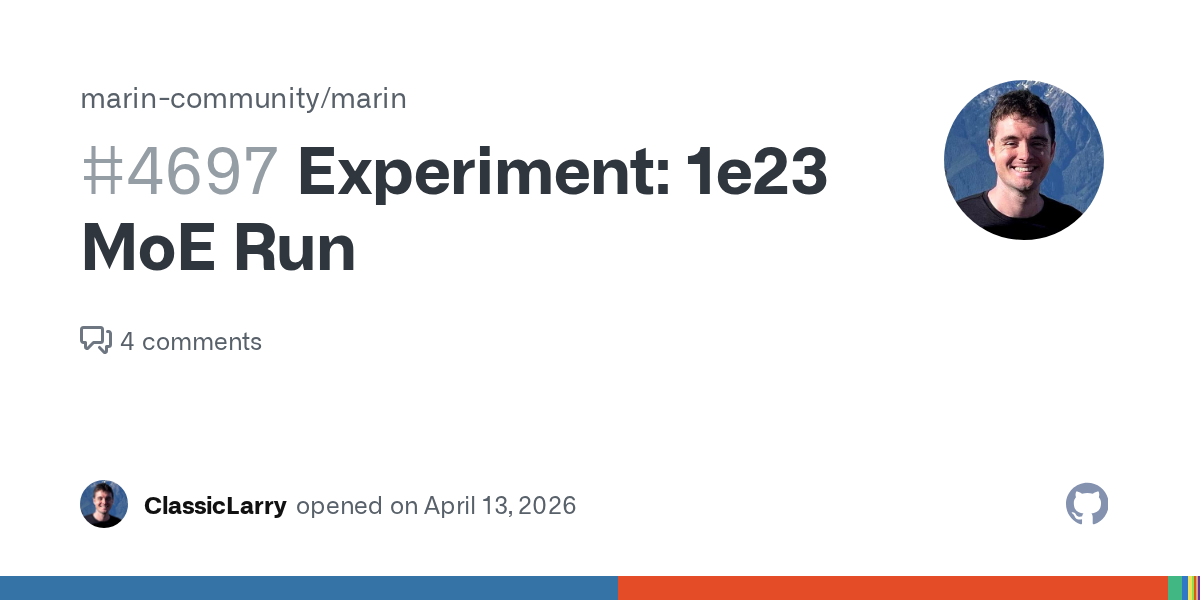

So cool to see that open-source, with open experimentation (and with the help of someone posting blog posts about their personal research), can yield a very robust method for MoE balancing. This method seems more elegant than all other methods I have seen. Open source is Awesome!

Marin is using quantile balancing from @Jianlin_S (who developed RoPE, which was also a good idea) to train our current 1e23 FLOPs MoE. The idea is elegant: assigning tokens to experts by solving a linear program. No hyperparameters to tune. Yields stable training.

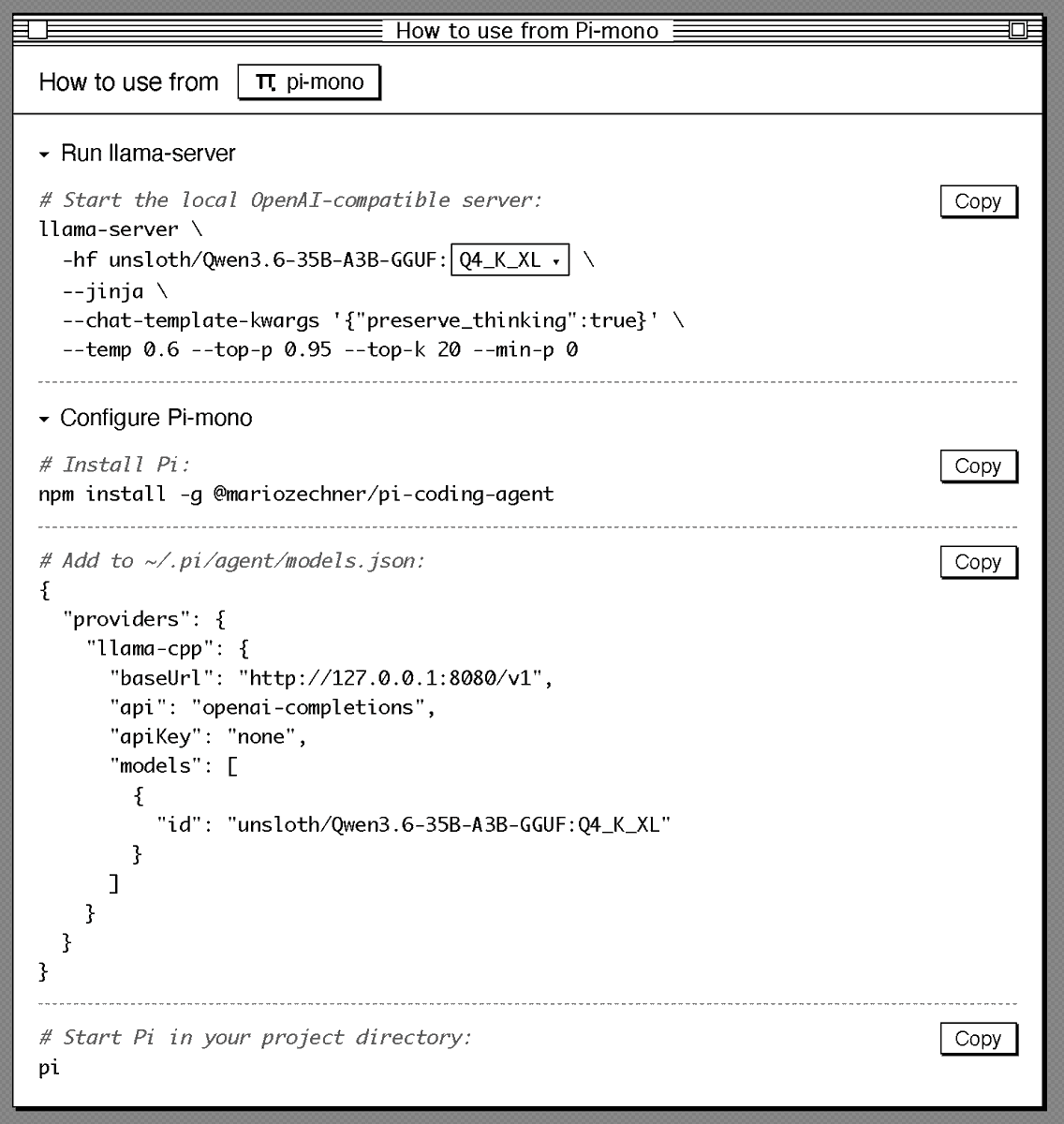
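For readers who want to see what "assigning tokens to experts by solving a linear program" can look like, here is a generic balanced-assignment LP. It is a sketch under assumptions, not necessarily the exact formulation used in quantile balancing: the symbols $n$ (tokens), $E$ (experts), $k$ (experts per token), $s_{ij}$ (router score of token $i$ for expert $j$), and $x_{ij}$ (assignment variable) are all introduced here for illustration.

```latex
$$
\begin{aligned}
\max_{x}\quad & \sum_{i=1}^{n}\sum_{j=1}^{E} s_{ij}\,x_{ij} \\
\text{s.t.}\quad & \sum_{j=1}^{E} x_{ij} = k \quad \text{for every token } i, \\
& \sum_{i=1}^{n} x_{ij} = \frac{nk}{E} \quad \text{for every expert } j, \\
& 0 \le x_{ij} \le 1 .
\end{aligned}
$$
```

The second constraint is what enforces balance: every expert receives the same share of the $nk$ total assignments, so there is no balancing coefficient to tune.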

Sharing my current setup to run Qwen3.6 locally in a good agentic setup (Pi + llama.cpp). Should give you a good overview of how good local agents are today:

    # Start llama.cpp server:
    llama-server \
      -hf unsloth/Qwen3.6-35B-A3B-GGUF:Q4_K_XL \
      --jinja \
      --chat-template-kwargs '{"preserve_thinking":true}' \
      --temp 0.6 --top-p 0.95 --top-k 20 --min-p 0

    # Configure Pi:
    {
      "providers": {
        "llama-cpp": {
          "baseUrl": "http://127.0.0.1:8080/v1",
          "api": "openai-completions",
          "apiKey": "none",
          "models": [
            { "id": "unsloth/Qwen3.6-35B-A3B-GGUF:Q4_K_XL" }
          ]
        }
      }
    }
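Before pointing Pi at the server, it can help to check the endpoint by hand. The sketch below builds a chat request against the same `baseUrl` using only the Python standard library. It assumes the server exposes the OpenAI-style `/chat/completions` route under that base URL; the helper names `build_chat_request` and `ask` are made up for this example.

```python
import json
import urllib.request

BASE_URL = "http://127.0.0.1:8080/v1"  # same baseUrl as the Pi config
MODEL_ID = "unsloth/Qwen3.6-35B-A3B-GGUF:Q4_K_XL"


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for the local server."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With the server running, `ask("Say hello")` should return a short reply; if it raises a connection error, the server is not listening on port 8080.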

⚡ Meet Qwen3.6-35B-A3B: Now Open-Source! 🚀🚀 A sparse MoE model, 35B total params, 3B active. Apache 2.0 license. 🔥 Agentic coding on par with models 10x its active size 📷 Strong multimodal perception and reasoning ability 🧠 Multimodal thinking + non-thinking modes Efficient. Pow

I have feelings about Opus 4.7. https://t.co/km54XbnDMk

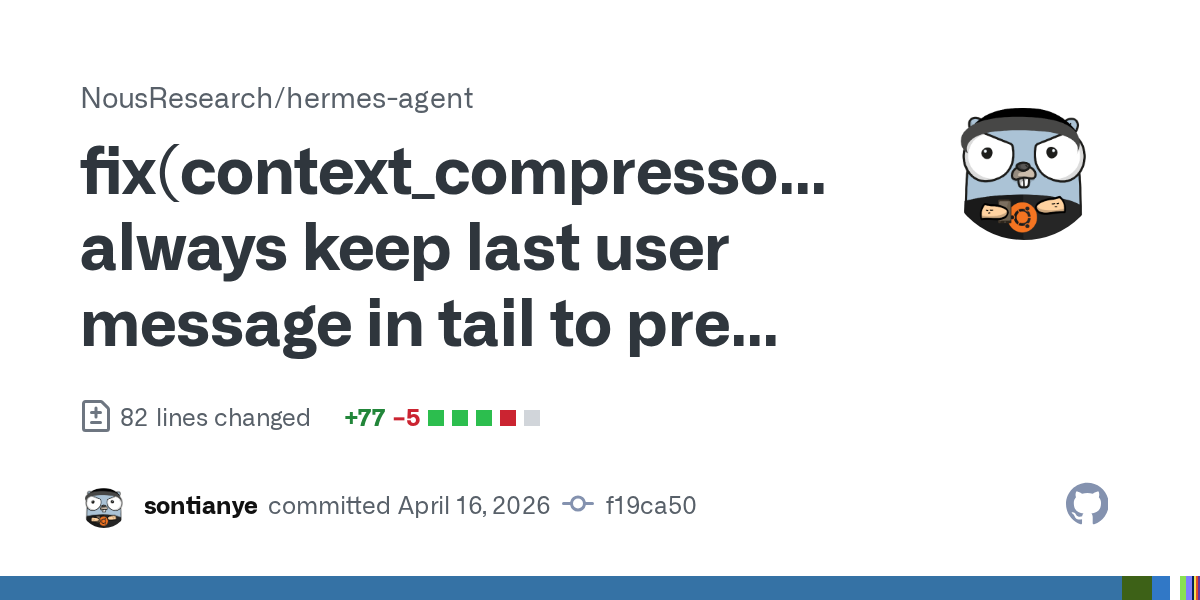

Just got my first PR merged into NousResearch/hermes-agent 🎉 Small bug fix in the context compression system, but super stoked to contribute to an open-source AI project I actually use. https://t.co/II25rW5CuC @NousResearch

Researchers' brilliant ideas often get lost in the sea of endless SOTA claims on weak baselines. At Marin we battle-test ideas in an open arena, where anyone's idea can be promoted to the next hero run. One that recently rose up was @Jianlin_S MoE Quantile Balancing, used in our

See all the gory details on GitHub: https://t.co/CfUbhtcBOp and follow along on wandb: https://t.co/UWU00HPknJ

We comprehensively benchmarked Opus 4.7 on document understanding. We evaluated it through ParseBench, our comprehensive OCR benchmark for enterprise documents, where we evaluate tables, text, charts, and visual grounding.

The results 🧑‍🔬:

- Opus 4.7 is a general improvement over Opus 4.6. It has gotten much better at charts compared to the previous iteration
- Opus 4.7 is quite good at tables, though not quite as good as Gemini 3 Flash
- Opus 4.7 wins on content faithfulness across all techniques (including ours)
- Using Opus 4.7 as an OCR solution is expensive at ~7c per page!! For comparison, our agentic mode is 1.25c and cost-effective is ~0.4c by default.

Take a look at these results and more on ParseBench! https://t.co/tYiSOMbd6p
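To put the per-page prices in perspective, here is a small Python sketch that scales them to a batch of pages. The prices are the ones quoted in the post (two of them are approximate there); the function names and the 10,000-page batch size are chosen only for illustration.

```python
# Per-page prices in US cents, as quoted in the post (~7c and ~0.4c are approximate).
CENTS_PER_PAGE = {
    "Opus 4.7": 7.0,
    "agentic mode": 1.25,
    "cost-effective mode": 0.4,
}


def cost_in_dollars(pages: int, cents_per_page: float) -> float:
    """Total cost in dollars for parsing `pages` pages."""
    return pages * cents_per_page / 100


def compare(pages: int) -> dict[str, float]:
    """Cost of each option for the same page count, rounded to cents."""
    return {
        name: round(cost_in_dollars(pages, cents), 2)
        for name, cents in CENTS_PER_PAGE.items()
    }
```

For a 10,000-page batch, `compare(10_000)` gives about $700 for Opus 4.7, $125 for agentic mode, and $40 for cost-effective mode.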

THREAD 1/7 Every AI benchmark a lab bragged about this year is compromised. not because labs are cheating. because the game itself is broken.

Codex writes your code → Codex ships it on Render. We built a Codex plugin with @OpenAI that lets you deploy, debug, and monitor your entire stack on Render, without leaving your flow. Just type @render https://t.co/xrgcCksKRj

Codex for (almost) everything. It can now use apps on your Mac, connect to more of your tools, create images, learn from previous actions, remember how you like to work, and take on ongoing and repeatable tasks. https://t.co/UEEsYBDYfo

AI FOOTBALL ANALYSIS. A FULL COMPUTER VISION SYSTEM. BUILT ON YOLO, OPENCV, AND PYTHON.

You upload a regular match video. No sensors, no GPS trackers, just camera footage. The neural network finds every player, referee, and ball on its own. Every frame, in real time.

KMeans clustering breaks down jersey colors pixel by pixel. The system splits players into teams automatically. Without a single manual hint.

Optical Flow tracks camera movement. Separates it from player movement. Perspective Transformation converts pixels into real meters.

Speed of every player. Distance covered. Ball possession percentage. All calculated automatically.

Four hours of tutorial from zero to a working system. The model is trained on real Bundesliga matches. Runs on a regular GPU. Python code - take it and run.

Sports analytics is no longer behind closed doors. AI leveled the playing field.
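The team-splitting step can be illustrated with a tiny two-cluster k-means in plain Python. This is a simplified sketch, not the tutorial's code: it assumes each player has already been reduced to one average jersey color (the post describes clustering at pixel level), and `assign_teams` and `nearest` are hypothetical helper names.

```python
import math

Color = tuple[float, float, float]


def nearest(color: Color, centers: list[Color]) -> int:
    """Index of the center closest to `color` in RGB space."""
    return min(range(len(centers)), key=lambda i: math.dist(color, centers[i]))


def assign_teams(jersey_colors: list[Color], iterations: int = 10) -> list[int]:
    """Two-cluster k-means over per-player jersey colors; returns a team label per player."""
    # Deterministic start: the first color and the color farthest from it.
    first = jersey_colors[0]
    centers = [first, max(jersey_colors, key=lambda c: math.dist(c, first))]
    labels = [nearest(c, centers) for c in jersey_colors]
    for _ in range(iterations):
        # Move each center to the mean color of its current members.
        for k in range(2):
            members = [c for c, label in zip(jersey_colors, labels) if label == k]
            if members:
                centers[k] = tuple(sum(ch) / len(members) for ch in zip(*members))
        labels = [nearest(c, centers) for c in jersey_colors]
    return labels
```

Given three reddish and two bluish colors, the function puts the reds in one team and the blues in the other, with no manual labels involved.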

We built our launch video in Claude Code using HyperFrames. Now it's yours. Open source, agent-native framework. HTML to MP4.

`$ npx skills add heygen-com/hyperframes`

RT + Comment "HyperFrames" to get the full source code of this launch video (must follow) https://t.co/vsRtZ6gQsb

Generate FULLY CONTROLLABLE 3D assets from a SINGLE image, locally on your PC. Made a 1-click launcher for the official Anigen Gradio app, and a dedicated viewer. Crazy this is now possible. What you're seeing here came from one image. Requires: NVIDIA GPU 6GB VRAM

Static 3D generation isn't enough. We need assets ready for animation. Our new #SIGGRAPH work, AniGen, takes a single image and generates the 3D shape, skeleton, and skinning weights all at once. Code is fully open-sourced! Kudos to @KyrieIr31012755 and @VastAIResearch 🧵(1/4) h

🎉 After one year of teamwork, we are excited to release our 3D foundation model: LingBot-Map! Unlike DA3/VGGT, LingBot-Map is a purely autoregressive model for streaming 3D reconstruction ⚡ It achieves ~20 FPS at 518×378 resolution over sequences exceeding 10,000 frames, and beyond 🚀

Two key insights behind LingBot-Map:

- 🔑 Keep SLAM's structural wisdom: build Geometric Context Attention with long-context modeling while maintaining a compact streaming state
- 🔑 Make everything end-to-end learnable: no optimization, no post-processing

Let's check out our demos 👇

The first global, city-scale 3D map is taking shape, and it’s machine-readable. City-scale environments can now be reconstructed in high fidelity, reaching a level of detail that is becoming almost indistinguishable from reality. This leap is made possible by large-scale mapping using just an Insta360 X5 and Over the Reality technology.

So far, our dataset includes:

- 220,000+ 3D mapped locations
- 97M images
- 1,000TB of spatial data

Growing by more than 10,000 newly mapped locations every week. Enabling Visual AI, Robotics, VPS, XR, and Digital twins.

I recently gave some talks on PointWorld. In this latest version, I discussed: Why world models? Why 3D? Why it matters amidst scaling data in robotics? Why it’s a missing side of the coin for “The Bitter Lesson”? (It’s more than just a better backbone for training policies) https://t.co/oGhLvuyB6B

The recording video is here: https://t.co/UvdPZdY1Qb

@jeremyphoward Check this out! I used Lean4 to emit MLIR by way of StableHLO/IREE to train image recognition networks, with proofs for the backprop operations! https://t.co/HqYG6KflSO

Sharing a super simple, user-owned memory module we've been playing around with: nanomem

The basic idea is to treat memory as a pure intelligence problem: ingestion, structuring, and (selective) retrieval are all just LLM calls & agent loops on an on-device markdown file tree. Each file lists a set of facts w/ metadata (timestamp, confidence, source, etc.); no embeddings/RAG/training of any kind. For example:

- `nanomem add <fact>` starts an agent loop to walk the tree, read relevant files, and edit.
- `nanomem retrieve <query>` walks the tree and returns a single summary string (possibly assembled from many subtrees) related to the query.

What’s nice about this approach is that the memory system is, by construction:

1. partitionable (human/agents can easily separate `hobbies/snowboard.md` from `tax/residency.md` for data minimization + relevance)
2. portable and user-owned (it’s just text files)
3. interpretable (you know exactly what’s written and you can manually edit)
4. forward-compatible (future models can read memory files just the same, and memory quality/speed improves as models get better)
5. modularized (you can optimize ingestion/retrieval/compaction prompts separately)

Privacy & utility. I'm most excited about the ability to partition + selectively disclose memory at inference-time. Selective disclosure helps with both privacy (principle of least privilege & “need-to-know”) and utility (as too much context for a query can harm answer quality).

Composability. An inference-time memory module means: (1) you can run such a module with confidential inference (LLMs on TEEs) for provable privacy, and (2) you can selectively disclose context over unlinkable inference of remote models (demo below).

We built nanomem as part of the Open Anonymity project (https://t.co/fO14l5hRkp), but it’s meant to be a standalone module for humans and agents (e.g., you can write a SKILL for using the CLI tool). Still polishing the rough edges!

- GitHub (MIT): https://t.co/YYDCk5sIzc
- Blog: https://t.co/pexZTFdWzz
- Beta implementation in chat client soon: https://t.co/rsMjL3wzKQ

Work done with amazing project co-leads @amelia_kuang @cocozxu @erikchi !!
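To show what a markdown file tree of facts with selective disclosure might look like in practice, here is a toy Python sketch. It is not nanomem's implementation or API: plain keyword matching stands in for the LLM agent loops, and `add_fact`, `retrieve`, the `allowed` parameter, and the one-line fact format are all assumptions made for illustration.

```python
from datetime import datetime, timezone
from pathlib import Path


def add_fact(root: Path, topic: str, fact: str, source: str = "user") -> Path:
    """Append one fact with metadata to `<root>/<topic>.md`, creating folders as needed."""
    path = root / f"{topic}.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
    with path.open("a", encoding="utf-8") as f:
        f.write(f"- {fact} (timestamp: {stamp}, source: {source})\n")
    return path


def retrieve(root: Path, query: str, allowed: tuple[str, ...] = ("",)) -> str:
    """Return matching facts from the allowed subtrees as one string."""
    words = query.lower().split()
    hits = []
    for path in sorted(root.rglob("*.md")):
        rel = path.relative_to(root).as_posix()
        if not any(rel.startswith(prefix) for prefix in allowed):
            continue  # selective disclosure: skip partitions the caller may not see
        for line in path.read_text(encoding="utf-8").splitlines():
            if any(word in line.lower() for word in words):
                hits.append(f"{rel}: {line}")
    return "\n".join(hits)
```

Passing `allowed=("hobbies/",)` to `retrieve` limits the answer to the hobbies subtree, so nothing under `tax/` is read even when the query would match it. Because the store is just text files, the result can also be inspected or edited by hand.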