Your curated collection of saved posts and media

Atlassian just announced they're cutting 1,600 jobs. Roughly 10% of their global workforce. And they aren't just downsizing. This is Atlassian admitting that their existing engineering DNA is a liability in an agentic world. They are decapitating their legacy engineering leadership. CTO Rajeev Rajan is out (no comment yet), and in his place, they've split the role between two CTOs. Atlassian described them as next generation AI talent. This isn't a restructure. It's an admission that the old guard can't build what comes next.

Banger report from the Kimi team: Attention Residuals. Residual connections made deep Transformers trainable. But they also force uncontrolled hidden-state growth with depth. This work proposes a cleaner alternative. It introduces Attention Residuals, which replace fixed residual accumulation with softmax attention over previous layer outputs. Instead of blindly summing everything, each layer selectively retrieves the earlier representations it actually needs. To keep this practical at scale, they add a blockwise version that compresses layers into block summaries, recovering most of the gains with minimal systems overhead. Why does it matter? Residual paths have barely changed across modern LLMs, even though they govern how information moves through depth. This paper shows that making the mixing content-dependent improves scaling laws, matches a baseline trained with 1.25x more compute, boosts GPQA-Diamond by +7.5 and HumanEval by +3.1, while keeping inference overhead under 2%. Paper: https://t.co/04IG6FDiVr Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

The moment he realised that https://t.co/vWmBsnR1nt isn't fully built on transformers and we can run on a single GPU with high accuracy and lower cost https://t.co/ZJYuL62UB8

Thanks for sharing our newest work @_akhaliq ! Classic algorithms like K-Means deserve to be revisited in the era of massive datasets and GPUs. Flash-KMeans rethinks the algorithm from a systems perspective to make exact K-Means fast and memory-efficient on modern hardware.

Claude Code CLI > Codex CLI. Codex Desktop > Claude Code Desktop. It’s a jagged UX frontier.

Flash-KMeans: Fast and Memory-Efficient Exact K-Means. Paper: https://t.co/Yy7V7L12Bn https://t.co/c1mGipQl3f

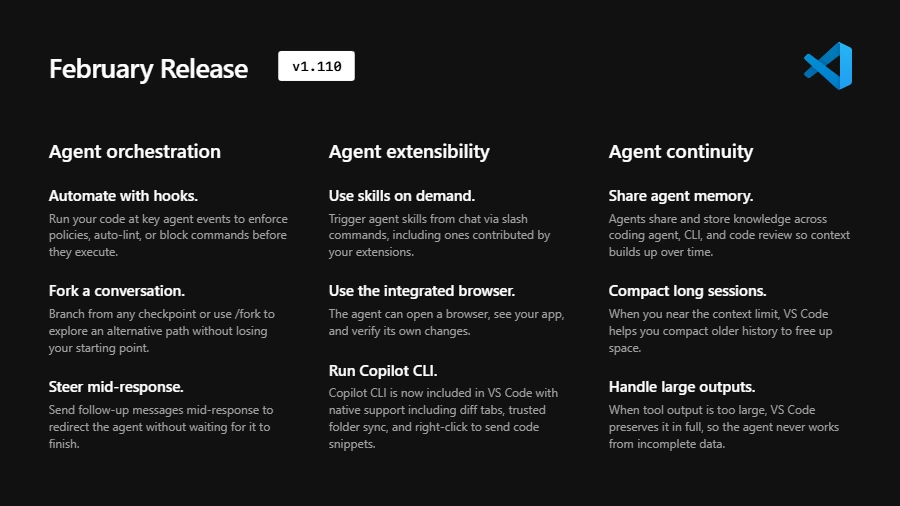

🌐 Agentic Browser Tools (Experimental) in @code! Agents can now open pages, read content, click elements, and verify changes directly in the integrated browser while building your web app. Enable ⚙️ workbench.browser.enableChatTools to try it out. Learn more: https://t.co/kNwugFcbIA

Love this submission from our world models hackathon this weekend - a generative FPS!

#ExecuTorch addresses fragmented native deployment for #AI agents as a #PyTorch native platform. It enables voice models across CPU, GPU, and NPU on Android, iOS, Linux, macOS & Windows 🔗 https://t.co/NeQQyUniL4 https://t.co/O3itnoQFoG

Matt Maher tested frontier models in Cursor v. other harnesses. Cursor boosted model performance by 11% on average: Gemini: 52% → 57% GPT-5.4: 82% → 88% Opus: 77% → 93% His benchmark measures how well models implement a 100-feature PRD. @cursor_ai consistently outperformed. https://t.co/hrjCmWMNKN

Mistral Small 4 is out https://t.co/IdAowSpHpN

Spent the weekend hacking at the Worlds in Action hackathon at @fdotinc by @SensAIHackademy. It was so much fun playing with the world models by @theworldlabs . I believe generative games are the future where characters, rules and even parts of the world can be generated and ad

Subagents are now available in Codex. You can accelerate your workflow by spinning up specialized agents to: • Keep your main context window clean • Tackle different parts of a task in parallel • Steer individual agents as work unfolds https://t.co/QJC2ZYtYcA

It's been about 20 years since I first started working on embeddings with Yann LeCun (siamese networks!), and I've been fascinated ever since. Gemini Embeddings 2 approaches the platonic ideal: native embedding of text, image, video, audio, and docs to a single space.

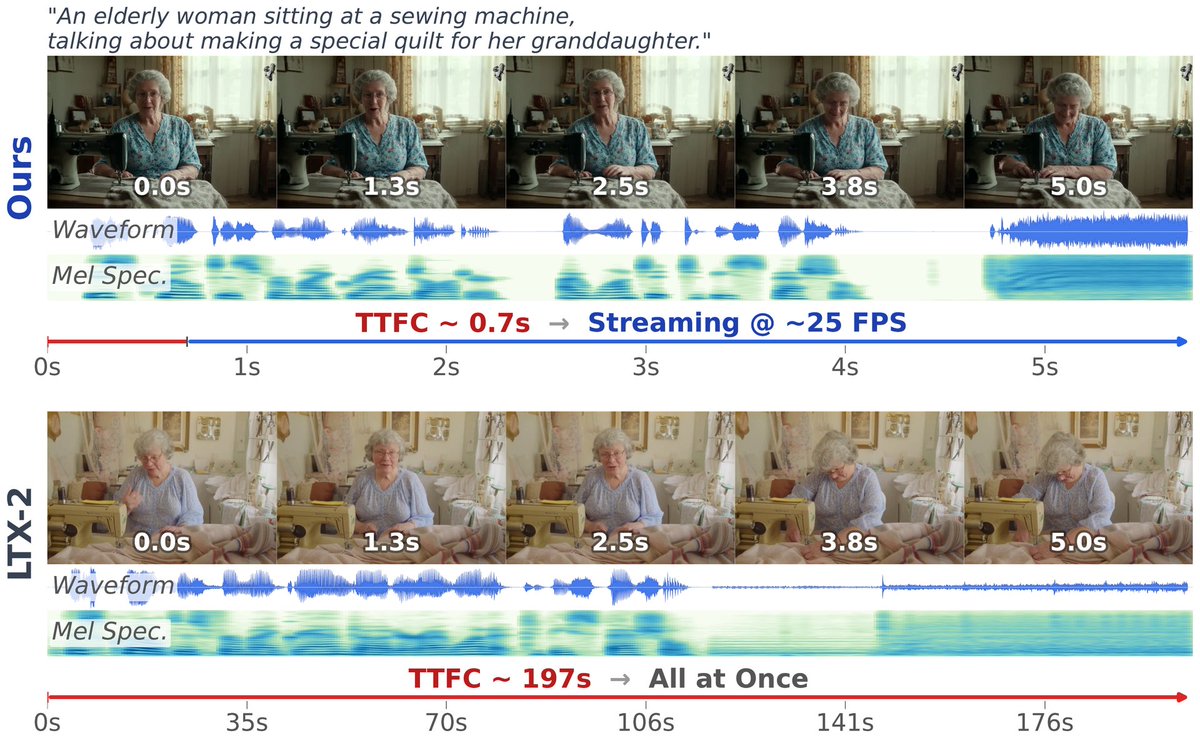

OmniForcing unlocks real-time joint audio-visual generation Achieves ~25 FPS with 0.7s latency—a 35× speedup over offline diffusion models—by distilling bidirectional LTX-2 into a causal streaming generator with maintained multi-modal fidelity. https://t.co/UGYGMyTQOs

@Nvidiadev 🗓️ MONDAY @ Booth #338 2PM: Shaping the Future w/ @matthew_d_white 3PM: TensorRT + PyTorch w/ Angela Yi & @narendasan 4PM: DeepSpeed Trillion-Param Training w/ @PKUWZP 5PM: PyTorch Export w/ Angela Yi 6PM: Ray Distributed Computing w/ @robertnishihara #AI #GTC2025

🚨 Want to parse complex PDFs with SOTA accuracy, 100% locally? 📄🔍 At just 0.9B parameters, you can drop GLM-OCR straight into LM Studio and run it on almost any machine! 🥔 🧠 0.9B total parameters 💾 Runs on < 1.5GB VRAM (or ~1GB quantized!) 💸 Zero API costs 🔒 Total data privacy Desktop document AI is officially here. 💻⚡

Covo Audio 🔊 An end-to-end audio language model from @TencentAI_News https://t.co/tic5cH1A39 ✨ 7B ✨ Audio → Audio in one model ✨ Multi-speaker + voice transfer ✨ Real-time full duplex conversations https://t.co/hFrsxQgzkT

this was one of the things i co-led at fair, then fb had ~2b users, embeddings of ~128d made it a 300b-1T parameter model depending on how you count entities (e.g. ad campaigns). at the time, this was big, now it's medium. we trained it purely on distributed cpus
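The parameter figures in this post follow from simple arithmetic. The sketch below uses the approximate numbers quoted above (~2B users, ~128 dimensions); the helper names are hypothetical and only illustrate how the count scales with the number of embedded entities.

```python
# Back-of-the-envelope size of an embedding table: one vector per entity.
# The inputs are the approximate figures quoted in the post, not exact values.

def embedding_params(num_entities: int, dim: int) -> int:
    """Number of parameters in a table with one dim-sized vector per entity."""
    return num_entities * dim


def entities_needed(target_params: float, dim: int) -> float:
    """How many entities a table needs to reach a given parameter count."""
    return target_params / dim


users = 2_000_000_000  # ~2B users
dim = 128              # ~128-dimensional embeddings

user_params = embedding_params(users, dim)
print(f"{user_params / 1e9:.0f}B parameters for users alone")  # 256B

# Entities required to land at the two ends of the quoted 300B-1T range.
low = entities_needed(300e9, dim)
high = entities_needed(1e12, dim)
print(f"{low / 1e9:.2f}B to {high / 1e9:.2f}B entities")
```

Users alone account for about 256B parameters, so counting further entity types (the post mentions ad campaigns) is what moves the total toward the upper end of the range.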

@RaiaHadsell Universal embeddings FTW 😊 One of the flagship projects at FAIR was to "embed the world" (i.e. represent every entity on Facebook). The name was soon changed to "Filament", deployed internally, and eventually open-sourced as "PyTorch-BigGraph" The techniques were m

Yann LeCun is pumping out papers recently “Temporal Straightening for Latent Planning” This paper shows that by straightening latent trajectories in a world model, Euclidean distance starts to reflect true reachable progress, so it's closer to geodesic/minimum-step distance. This makes gradient-based planning far more stable and effective without relying as heavily on expensive search.

Microsoft has released a free, open-source course: GitHub Copilot CLI for Beginners. Includes 8 chapters covering: • A walkthrough of installing Copilot CLI • Using context • Creating custom agents • Working with skills • Connecting MCP servers, and more. Start Learning - https://t.co/IIbauw5L7K

Nvidia ruled the first wave of AI by powering the training of large models. But the next phase may look different. Inference, the work of running AI at scale, is now growing much faster than training. That’s where real-world deployment happens. If the center of gravity in AI shifts there, the question becomes: will Nvidia stay as dominant in the next chapter? https://t.co/MdG0zqBUWj @RWhelanWSJ @WSJ

https://t.co/mIXzM657cR

Every foundation model you've ever used has the same bug. It just got fixed.

Since 2015, every deep network has been built the same way: each layer does some computation, adds its result to a running total, and passes it forward. Simple. But there's a problem: by layer 100, the signal from any single layer is buried under the sum of everything else. Each new layer matters less and less. Nobody fixed this because it worked well enough.

Moonshot AI just changed that. Their new method, Attention Residuals, lets each layer look back at all previous layers and choose which ones actually matter right now. Instead of a blind running total, you get selective retrieval.

The analogy: imagine writing an essay where every draft gets merged into one document automatically. By draft 50, your latest edits are invisible. AttnRes lets you keep every draft separate and pull from whichever ones you need.

What this fixes:
1. Deeper layers no longer get drowned out
2. Training becomes more stable across the whole network
3. The model uses its own depth more efficiently

To make it practical at scale, they group layers into blocks and attend over block summaries instead of every single layer. Overhead at inference: less than 2%. The result: it matches a baseline trained with 1.25x more compute, which works out to roughly 20% less compute to reach the same performance. Tested on a 48B-parameter model. Holds across sizes.

Residual connections have been invisible plumbing for a decade. Now they're becoming dynamic. The next generation of models won't just pass through their own layers, they'll search them.
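To make the "buried signal" point concrete, here is a toy sketch of a plain residual running total. It assumes scalar layer outputs of equal size, which is a simplification for illustration and not how the paper measures the effect; the function names are hypothetical.

```python
# Toy model of a plain residual stream: every layer's output is added
# to a running total with weight 1. Scalars stand in for hidden vectors.

def residual_stream(layer_outputs):
    """Return the running total after each layer."""
    totals, running = [], 0.0
    for out in layer_outputs:
        running += out
        totals.append(running)
    return totals


def latest_layer_share(layer_outputs):
    """Fraction of the running total contributed by the newest layer."""
    totals = residual_stream(layer_outputs)
    return [out / total for out, total in zip(layer_outputs, totals)]


# Assumption: 100 layers that each contribute the same amount.
shares = latest_layer_share([1.0] * 100)
print(shares[0], shares[9], shares[99])  # 1.0 0.1 0.01
```

Under these assumptions the newest layer's share of the total falls as 1/n, so by layer 100 it accounts for 1% of the running sum.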

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dep

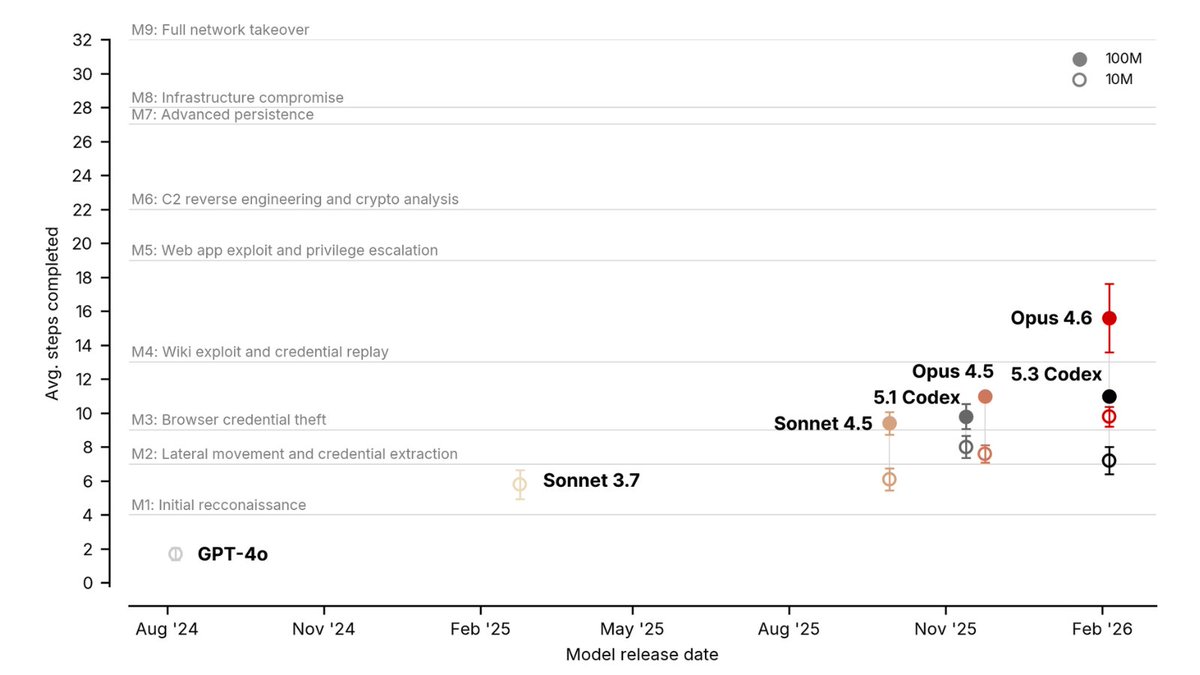

Can AI agents conduct advanced cyber-attacks autonomously? We tested seven models released between August 2024 and February 2026 on two custom-built cyber ranges designed to replicate complex attack environments. Here’s what we found🧵 https://t.co/rFRkOQu8yU

Should there be a Stack Overflow for AI coding agents to share learnings with each other? Last week I announced Context Hub (chub), an open CLI tool that gives coding agents up-to-date API documentation. Since then, our GitHub repo has gained over 6K stars, and we've scaled from under 100 to over 1000 API documents, thanks to community contributions and a new agentic document writer. Thank you to everyone supporting Context Hub! OpenClaw and Moltbook showed that agents can use social media built for them to share information. In our new chub release, agents can share feedback on documentation — what worked, what didn't, what's missing. This feedback helps refine the docs for everyone, with safeguards for privacy and security. We're still early in building this out. You can find details and configuration options in the GitHub repo. Install chub as follows, and prompt your coding agent to use it: npm install -g @aisuite/chub GitHub: https://t.co/OCkyxXQMCq

Big release from Kimi! They just released a new way to handle residual connections in Transformers.

In a standard Transformer, every sub-layer (attention or MLP) computes an output and adds it back to the input via a residual connection. If you consider this across 40+ layers, the hidden state at any layer is just the equal-weighted sum of all previous layer outputs. Every layer contributes with weight=1, so every layer gets equal importance.

This creates a problem called PreNorm dilution, where as the hidden state accumulates layer after layer, its magnitude grows linearly with depth. And any new layer's contribution gets progressively buried in the already-massive residual. This means deeper layers are then forced to produce increasingly large outputs just to have any influence, which destabilizes training.

Here's what the Kimi team observed and did: RNNs compress all prior token information into a single state across time, leading to problems with handling long-range dependencies. And residual connections compress all prior layer information into a single state across depth. Transformers solved the first problem by replacing recurrence with attention. This was applied along the sequence dimension.

Now they introduced Attention Residuals, which applies a similar idea to depth. Instead of adding all previous layer outputs with a fixed weight of 1, each layer now uses softmax attention to selectively decide how much weight each previous layer's output should receive. So each layer gets a single learned query vector, and it attends over all previous layer outputs to compute a weighted combination. The weights are input-dependent, so different tokens can retrieve different layer representations based on what's actually useful. This is Full Attention Residuals (shown in the second diagram below).

But here's the practical problem with this idea. Full AttnRes requires keeping all layer outputs in memory and communicating them across pipeline stages during distributed training.

To solve this, they introduce Block Attention Residuals (shown in the third diagram below). The idea is to group consecutive layers into roughly 8 blocks. Within each block, layer outputs are summed via standard residuals. But across blocks, the attention mechanism selectively combines block-level representations. This drops memory from O(Ld) to O(Nd), where N is the number of blocks. Layers within the current block can also attend to the partial sum of what's been computed so far inside that block, so local information flow isn't lost. And the raw token embedding is always available as a separate source, which means any layer in the network can selectively reach back to the original input.

Results from the paper:
- Block AttnRes matches the loss of a baseline LLM trained with 1.25x more compute.
- Inference latency overhead is less than 2%, making it a practical drop-in replacement.
- On a 48B parameter Kimi Linear model (3B activated) trained on 1.4T tokens, it improved every benchmark they tested: GPQA-Diamond +7.5, Math +3.6, HumanEval +3.1, MMLU +1.1

The residual connection has mostly been unchanged since ResNet in 2015. This might be the first modification that's both theoretically motivated and practically deployable at scale with negligible overhead.

More details in the post below by Kimi👇

____ Find me → @_avichawla Every day, I share tutorials and insights on DS, ML, LLMs, and RAGs.
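The mechanism described in this post can be sketched in a few lines of plain Python. This is a minimal illustration, not the paper's implementation: it assumes the earlier representations serve as both keys and values with no extra projection or normalization, and the names `attention_residual` and `block_summaries` are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def attention_residual(query, sources):
    """Mix earlier representations with softmax weights instead of weight 1 each.

    query:   the current layer's learned query vector (length d)
    sources: earlier representations for one token, each of length d
    Assumption: sources act as both keys and values.
    """
    weights = softmax([dot(query, s) for s in sources])
    d = len(query)
    mixed = [sum(w * s[j] for w, s in zip(weights, sources)) for j in range(d)]
    return mixed, weights


def block_summaries(layer_outputs, block_size):
    """Sum consecutive layer outputs (standard residuals) into block vectors."""
    blocks = []
    for start in range(0, len(layer_outputs), block_size):
        chunk = layer_outputs[start:start + block_size]
        blocks.append([sum(col) for col in zip(*chunk)])
    return blocks


# Toy values for a single token with d = 2.
embedding = [1.0, 0.0]  # raw token embedding, kept as its own source
layer_outputs = [[0.0, 1.0], [2.0, 0.0], [0.0, -1.0], [1.0, 1.0]]
query = [1.0, 0.0]

# Full variant: attend over the embedding plus every earlier layer output.
mixed, weights = attention_residual(query, [embedding] + layer_outputs)

# Block variant: attend over the embedding plus one summed vector per block.
blocks = block_summaries(layer_outputs, block_size=2)
mixed_b, weights_b = attention_residual(query, [embedding] + blocks)

print(len(weights), len(weights_b))  # 5 sources vs 3 sources
```

The full variant keeps one source per layer, while the block variant keeps one per block, which is the O(Ld) versus O(Nd) memory difference the post describes. Because the scores depend on the token's own representations, different tokens end up with different weights for the same query.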
