Your curated collection of saved posts and media

@rohanpaul_ai this is seriously misleading. you should take it down. he doesn’t say that. he says he thinks it will come, not that it is.

Sam Altman just said in his new interview that a new AI architecture is coming that will be a massive upgrade, just as Transformers were over Long Short-Term Memory. He also said the current class of frontier models is now powerful enough to help us research these ideas. His advice is to use current AI to help you find that next giant step forward. --- From 'TreeHacks' YT Channel (link in comment)

Misleading summary. Should be deleted. Altman doesn’t say a (known) new architecture is coming; he says he anticipates one will come someday. PS: I also think we need something radical and new; in fact that’s what I have been saying for the last decade. IMHO excess focus on LLMs has probably delayed discovery.

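Today I'm officially getting ready to switch from Claude Code to Codex. When I was using Claude Code I had no official Anthropic API access, so I kept rotating between APIs such as Minimax and Kimi. Lately @OpenAIDevs has been visibly stepping up its commitment to and activity on Codex: OpenClaw founder @steipete, Instructor author @jxnlco, and other people who are very active in open source and AI education have joined Codex, and then there's @thsottiaux, who resets limits from time to time 😄 I'm starting with a Plus subscription and using it as my main AI! I'm not familiar enough with Codex commands yet, so first I'm making a cheatsheet for people who are just getting to know Codex, myself included.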

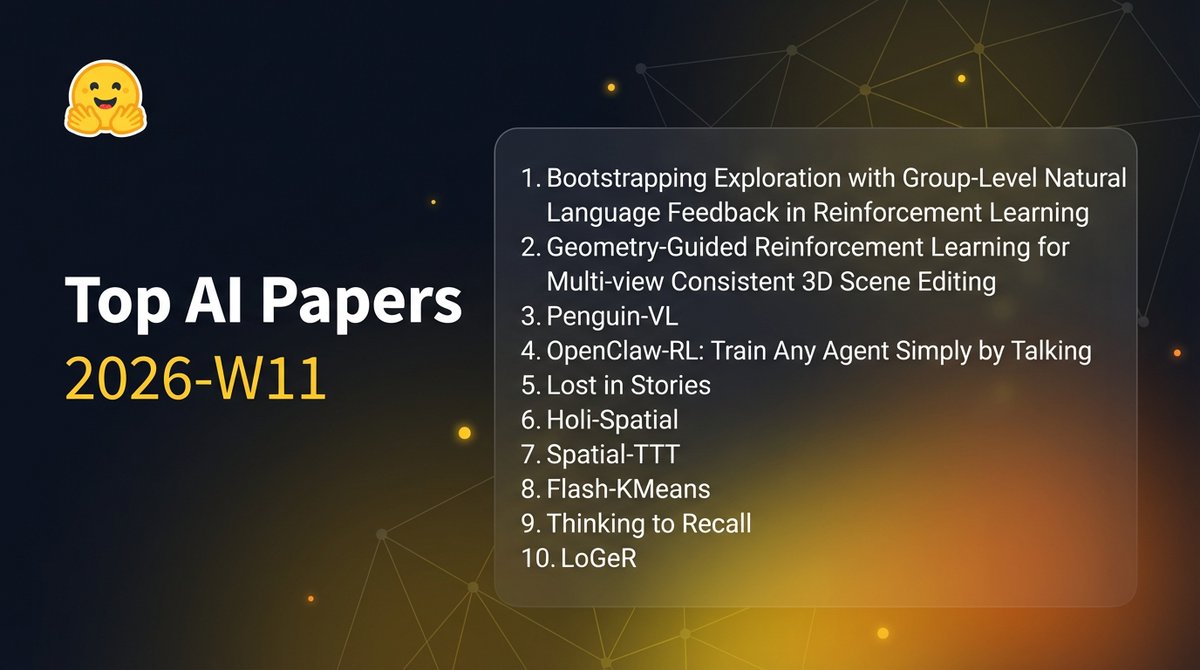

Top AI papers on @huggingface this week: Language feedback for RL, training agents by talking, and fixing LLM story consistency
- Bootstrapping Exploration with Group-Level Natural Language Feedback in Reinforcement Learning
- Geometry-Guided Reinforcement Learning for Multi-view Consistent 3D Scene Editing
- Penguin-VL by Tencent: Exploring the Efficiency Limits of VLM with LLM-based Vision Encoders
- OpenClaw-RL: Train Any Agent Simply by Talking
- Lost in Stories: Consistency Bugs in Long Story Generation by LLMs
- Holi-Spatial: Evolving Video Streams into Holistic 3D Spatial Intelligence
- Spatial-TTT: Streaming Visual-based Spatial Intelligence with Test-Time Training
- Flash-KMeans: Fast and Memory-Efficient Exact K-Means
- Thinking to Recall: How Reasoning Unlocks Parametric Knowledge in LLMs
- LoGeR: Long-Context Geometric Reconstruction with Hybrid Memory

Latent world models learn differentiable dynamics in a learned representation space, which should make planning as simple as gradient descent. But it almost never works. What I mean is, at test time, you can treat the action sequence as learnable parameters, roll out the frozen world model, measure how far the predicted final state is from the goal, and backprop through the entire unrolled chain to optimize actions directly. Yet many of the systems that work (Dreamer, TD-MPC2, DINO-WM) abandon this and fall back to sampling-based search instead. That's why I really like this new paper by @yingwww_, @ylecun, and @mengyer, which gives a clean diagnosis of why, and a principled fix. The reason everyone abandons gradient descent on actions is that the planning objective is highly non-convex in the learned latent space. So instead most systems use CEM (cross-entropy method) or MPPI (model predictive path integral), both derivative-free. CEM samples batches of action sequences, evaluates them by rolling out the world model, keeps the top-k, and refits the sampling distribution. MPPI does something similar but weights trajectories by exponentiated negative cost instead of hard elite selection. These work when gradients are unreliable but the compute cost is substantial — hundreds of candidate rollouts per planning step vs a single forward-backward pass. This paper asks what exactly makes the latent planning landscape so hostile to gradients and what you can do about it. The diagnosis. Their baseline is DINO-WM, a JEPA-style world model with a ViT predictor planning in frozen DINOv2 feature space, minimizing terminal MSE between predicted and goal embeddings. The problem is that DINOv2 latent trajectories are highly curved (when you use MSE as the planning cost you're implicitly assuming euclidean distance approximates geodesic distance along feasible transitions). 
For curved trajectories this breaks badly: gradient-based planners get trapped, and straight-line distances in embedding space misrepresent actual reachability. The fix draws from the perceptual straightening hypothesis in neuroscience, the idea that biological visual systems transform complex video into internally straighter representations. So they add a curvature regularizer during world model training. Given consecutive encoded states z_t, z_{t+1}, z_{t+2}, define velocity vectors as v_t = z_{t+1} - z_t, measure curvature via the cosine similarity between consecutive velocities, and minimize L_curv = 1 - cos(v_t, v_{t+1}). Total loss is then L_pred + λ * L_curv, with stop-gradient on the target branch to prevent collapse. The theory backs this up cleanly: they prove that reducing curvature directly bounds how well-conditioned the planning optimization is, so straighter latent trajectories guarantee faster convergence of gradient descent over longer horizons. Worth noting that even without the curvature loss, training the encoder with a prediction objective alone produces some "implicit straightening", because the JEPA loss naturally favors representations whose temporal evolution is predictable. Explicit regularization simply pushes this much further. Empirical results across four 2D goal-reaching environments are consistently strong. Open-loop success improves by 20-50%, and GD with straightening matches or beats CEM at a fraction of the compute. The most convincing evidence is the distance heatmaps: after straightening, latent Euclidean distance closely matches the shortest distance between states, even though the model was trained only on suboptimal random trajectories. What I find interesting beyond the specific method is that the planning algorithm didn't change. The dynamics model didn't change. A single regularization term on the embedding geometry turned gradient descent from unreliable to competitive with sampling methods.
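To make the regularizer concrete, here is a minimal NumPy sketch of L_curv = 1 - cos(v_t, v_{t+1}) averaged over one latent trajectory. It is not the paper's code: the function names, the `eps` guard, and the default `lam` are assumptions for illustration, and the stop-gradient on the target branch is left out because NumPy has no autograd.

```python
import numpy as np

def curvature_loss(z, eps=1e-8):
    """Mean of 1 - cos(v_t, v_{t+1}) over a latent trajectory z of shape (T, D)."""
    z = np.asarray(z, dtype=float)
    if z.shape[0] < 3:
        raise ValueError("need at least three latent states")
    v = z[1:] - z[:-1]                         # velocities v_t = z_{t+1} - z_t
    dots = np.sum(v[:-1] * v[1:], axis=1)      # v_t . v_{t+1}
    norms = np.linalg.norm(v[:-1], axis=1) * np.linalg.norm(v[1:], axis=1)
    cos = dots / (norms + eps)                 # eps (assumed) avoids division by zero
    return float(np.mean(1.0 - cos))

def total_loss(pred_loss, z, lam=0.1):
    """L_pred + lam * L_curv; lam=0.1 is a placeholder, not a value from the paper."""
    return pred_loss + lam * curvature_loss(z)

straight = [[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]]   # no turn between steps
corner = [[0.0, 0.0], [1.0, 0.0], [1.0, 1.0]]     # 90-degree turn
print(curvature_loss(straight), curvature_loss(corner))
```

A perfectly straight trajectory scores close to 0, a right-angle turn scores 1, and a full reversal scores 2, so minimizing the term pushes consecutive latent steps to point the same way.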
The field has largely treated representation learning and planning as separate concerns — learn good features, then figure out how to plan in them. This paper makes a concrete case that the representation geometry is itself the bottleneck. This connects to a broader pattern in ML. When optimization fails, the instinct is to fix the optimizer (better search, more samples, adaptive schedules). But often the real lever is the shape of the space you're optimizing in. Same principle shows up in RL post-training where reward landscape shaping matters as much as the algorithm itself. Shape the space so simple optimization works, rather than building complex optimization to handle a bad space. Their paper: https://t.co/NLPGxqbP2x
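The two planning styles contrasted in this post can be sketched side by side. This is a toy under loud assumptions: a known linear model z_{t+1} = A z_t + B a_t stands in for the learned world model, the gradient is backpropagated by hand through the unrolled chain, and every function name and hyperparameter here is hypothetical rather than taken from DINO-WM, Dreamer, or TD-MPC2. The MPPI branch is simplified and keeps the sampling std fixed.

```python
import numpy as np

def rollout(A, B, z0, actions):
    """Unroll the toy model z_{t+1} = A z_t + B a_t and return the final state."""
    z = z0
    for a in actions:
        z = A @ z + B @ a
    return z

def terminal_cost(A, B, z0, actions, goal):
    """Squared distance between the predicted final state and the goal."""
    return float(np.sum((rollout(A, B, z0, actions) - goal) ** 2))

def plan_gd(A, B, z0, goal, horizon, steps=100, lr=0.05):
    """Gradient-descent planning: the action sequence is the optimised parameter."""
    actions = np.zeros((horizon, B.shape[1]))
    for _ in range(steps):
        adj = 2.0 * (rollout(A, B, z0, actions) - goal)   # d cost / d z_H
        grad = np.zeros_like(actions)
        for t in reversed(range(horizon)):                # manual backprop through time
            grad[t] = B.T @ adj
            adj = A.T @ adj
        actions -= lr * grad
    return actions

def plan_sampling(A, B, z0, goal, horizon, rng, iters=30, n=256, k=32, temperature=None):
    """CEM if temperature is None (hard top-k elites), else MPPI-style soft weights."""
    mean = np.zeros((horizon, B.shape[1]))
    std = np.ones_like(mean)
    for _ in range(iters):
        cand = mean + std * rng.standard_normal((n,) + mean.shape)
        costs = np.array([terminal_cost(A, B, z0, c, goal) for c in cand])
        if temperature is None:
            elites = cand[np.argsort(costs)[:k]]          # keep the k cheapest rollouts
            mean, std = elites.mean(axis=0), elites.std(axis=0) + 1e-6
        else:
            w = np.exp(-(costs - costs.min()) / temperature)  # exponentiated negative cost
            mean = np.tensordot(w / w.sum(), cand, axes=1)
    return mean

A, B = np.eye(2), np.eye(2)
z0, goal = np.zeros(2), np.array([1.0, -1.0])
rng = np.random.default_rng(0)
print(terminal_cost(A, B, z0, plan_gd(A, B, z0, goal, horizon=5), goal))
print(terminal_cost(A, B, z0, plan_sampling(A, B, z0, goal, 5, rng), goal))
```

With these defaults, each gradient step costs one forward and one backward pass, while each sampling iteration costs 256 rollouts, which is the compute gap the post describes. The toy landscape is convex, so gradient descent has it easy here; the paper's point is that a learned latent space usually is not, unless its geometry is shaped to be.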

@zhuokaiz Nice summary 😊

The Top AI Papers of the Week (March 9 - March 15) - KARL - OpenDev - SkillNet - Memex(RL) - AutoHarness - FlashAttention-4 - The Spike, the Sparse, and the Sink Read on for more:

@PastorSotoB1 Was a lot of work... but glad it's useful! (And it's also for my future self since I revisit things quite often, and I thought why not make it more convenient for everyone)

@FilippoAlimonda That sounds cool but would definitely be more than just a weekend project haha

https://t.co/0lQ8NXM4M3

don't make any mistakes, a tale in 2 parts https://t.co/9ZC5k9QB86

@_kaitodev @karpathy Spreading fear, uncertainty, and doubt isn’t very mindful, but in Silicon Valley, it’s often celebrated. AI won’t replace most jobs yet for one simple reason: it isn’t reliable enough. LLMs and AI agents frequently fail silently. And that’s not just a bug you can patch, it’s a consequence of how these systems are built. Until the industry openly acknowledges this, we’ll keep seeing “mysterious” failures. That’s the cost of hype, speculation, and misaligned incentives across academia, research, and politics. For example, one benchmark testing frontier AI on real-world work reported a 97% failure rate. Avoid getting burned by the hype. https://t.co/Ut4hpvTU3C

@JoshKale Spreading fear, uncertainty, and doubt isn’t very mindful, but in Silicon Valley, it’s often celebrated. AI won’t replace most jobs yet for one simple reason: it isn’t reliable enough. LLMs and AI agents frequently fail silently. And that’s not just a bug you can patch, it’s a consequence of how these systems are built. Until the industry openly acknowledges this, we’ll keep seeing “mysterious” failures. That’s the cost of hype, speculation, and misaligned incentives across academia, research, and politics. For example, one benchmark testing frontier AI on real-world work reported a 97% failure rate. Avoid getting burned by the hype. https://t.co/Ut4hpvTU3C

@TFTC21 Spreading fear, uncertainty, and doubt isn’t very mindful, but in Silicon Valley, it’s often celebrated. AI won’t replace most jobs yet for one simple reason: it isn’t reliable enough. LLMs and AI agents frequently fail silently. And that’s not just a bug you can patch, it’s a consequence of how these systems are built. Until the industry openly acknowledges this, we’ll keep seeing “mysterious” failures. That’s the cost of hype, speculation, and misaligned incentives across academia, research, and politics. For example, one benchmark testing frontier AI on real-world work reported a 97% failure rate. Avoid getting burned by the hype. https://t.co/Ut4hpvTU3C

I've been building panlabel, a fast Rust CLI that converts between dataset annotation formats, and I'm a few releases behind on sharing updates. Here's a quick catch-up.
v0.3.0 added Hugging Face ImageFolder support, including remote Hub import via --hf-repo. You can point it at a HF dataset repo and it figures out the layout (metadata.jsonl, parquet shards, even zip-style splits that contain YOLO or COCO inside).
v0.4.0 overhauled auto-detection so it gives you concrete evidence when format detection is ambiguous ("found YOLO labels/ but missing images/") instead of a generic error. Also added Docker images.
v0.5.0 brought split-aware YOLO reading for Roboflow/Ultralytics Hub exports and conversion report explainability: every adapter now explains its deterministic policies so you know exactly what happens to your data.
v0.6.0 is the big one. Five new format adapters:
→ LabelMe JSON (per-image, with polygon-to-bbox envelope)
→ Apple CreateML JSON (center-based coords)
→ KITTI (autonomous driving standard, 15 fields per line)
→ VGG Image Annotator (VIA) JSON
→ RetinaNet Keras CSV
That brings panlabel to 13 supported formats with full read, write, and auto-detection. Also in v0.6.0: YOLO confidence token support, dry-run mode for previewing conversions, and content-based CSV detection.
Single binary, no Python dependencies. Install via pip, brew, cargo, or grab a pre-built binary from GitHub releases.
This is the kind of project I enjoy just steadily plodding away at, ticking off one format at a time until every common object detection annotation format is covered. Still sticking with detection bboxes for now, but the format list keeps growing.
#ObjectDetection #Rust #MachineLearning #ComputerVision #OpenSource
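Two of the adapter notes above, the polygon-to-bbox envelope and center-based coordinates, come down to small coordinate conversions. The sketch below only illustrates that arithmetic: it is not panlabel's code (panlabel is written in Rust), and the function names are hypothetical.

```python
def polygon_to_bbox(points):
    """Axis-aligned envelope of a polygon as (xmin, ymin, xmax, ymax)."""
    if not points:
        raise ValueError("polygon needs at least one point")
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return (min(xs), min(ys), max(xs), max(ys))

def center_to_corners(cx, cy, width, height):
    """Center-based box (cx, cy, w, h) to corner-based (xmin, ymin, xmax, ymax)."""
    return (cx - width / 2, cy - height / 2, cx + width / 2, cy + height / 2)

def corners_to_center(xmin, ymin, xmax, ymax):
    """Corner-based box back to center-based (cx, cy, w, h)."""
    return ((xmin + xmax) / 2, (ymin + ymax) / 2, xmax - xmin, ymax - ymin)

print(polygon_to_bbox([(1, 5), (4, 2), (3, 7)]))   # (1, 2, 4, 7)
print(center_to_corners(50, 40, 20, 10))           # (40.0, 35.0, 60.0, 45.0)
```

The envelope discards the polygon's shape, so the conversion is lossy in one direction. That is presumably the kind of deterministic policy the conversion reports are meant to spell out.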

We received the letter of condolence from H. E. @CyrilRamaphosa following the martyrdom of Imam Khamenei. Iran and South Africa have deep-rooted friendship.

The computational power in your iPhone’s CHARGER is more than what was used to land on the moon.

@steipete You didn't get that many crappy PRs without AI

A Restore Britain Government would defund the BBC. https://t.co/K63UGn7DSw