Your curated collection of saved posts and media

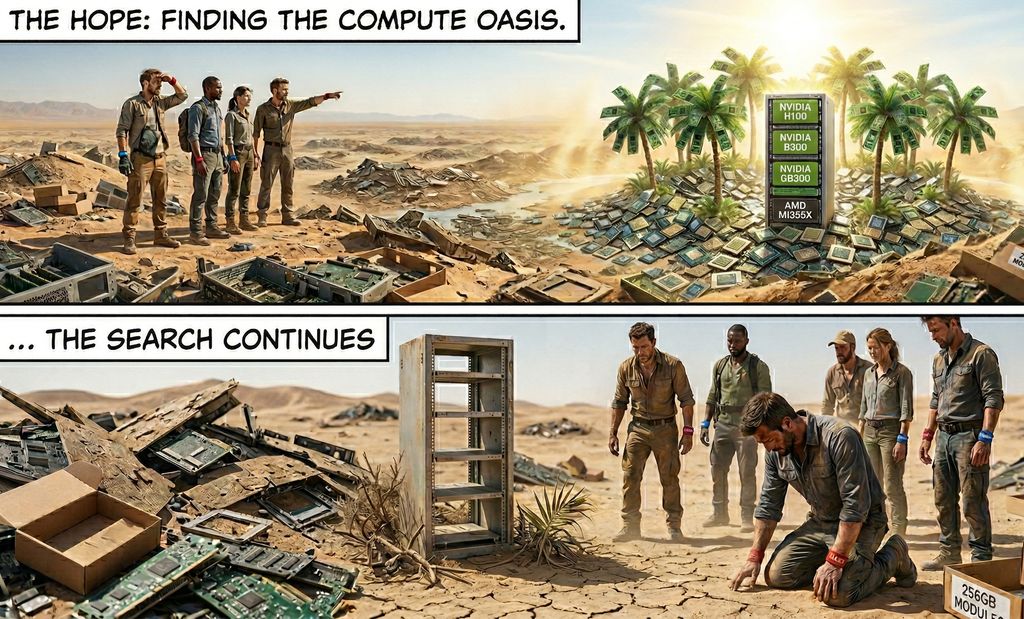

GPU PRICE INCREASE ALERT: Finding GPU compute in early 2026 has been like trying to book the last flight out - high prices, almost no availability. Customers are fighting to pay $14/hr/GPU for p6-b200 spot instances in AWS, some Neocloud Giants no longer sell single nodes, H100s are getting renewed at the exact same rate they were signed at 2-3 years ago. (1/5)🧵

Just updated: all the top AI news here on X. https://t.co/kiuZ7QXLzb My AI wasn't fooled by any April Fools joke either. So proud of it. All built with the X API by an idiot. Me. With a better AI than you can run on an OpenClaw.

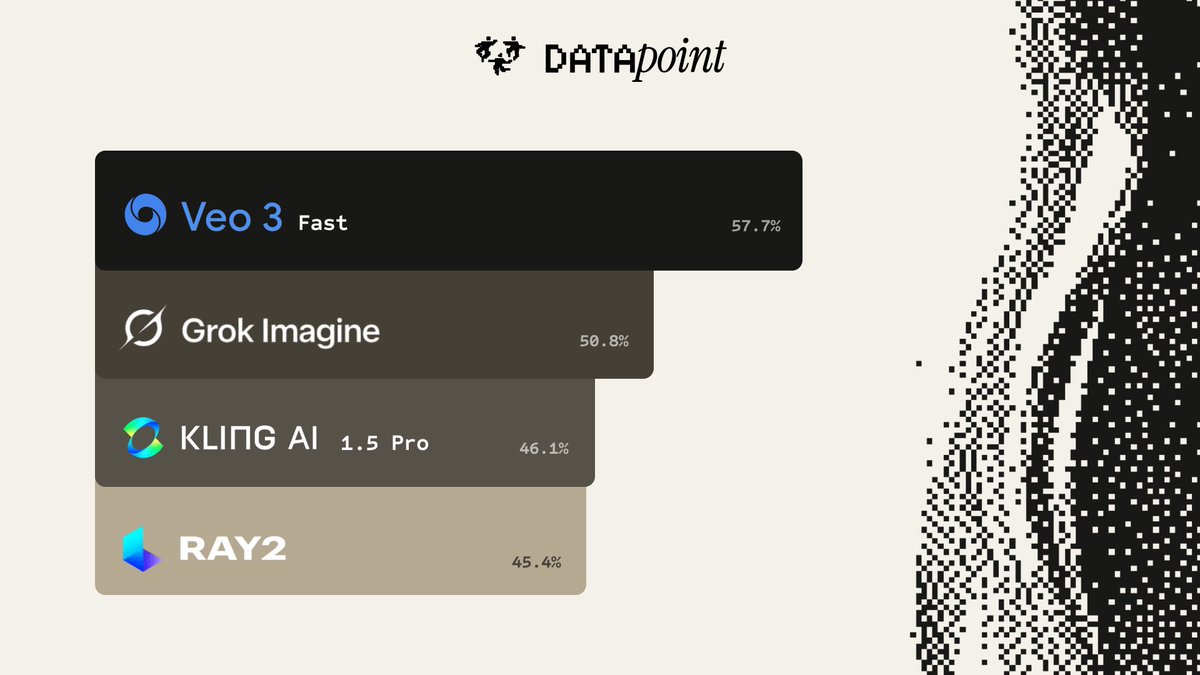

VLMs still suck at human motion. So we collected the world’s largest human motion preference dataset, and used it to rank top video models on what people actually care about. Results:

1. @Google Veo 3 Fast
2. Grok @imagine
3. @Kling_ai 1.5 pro
4. @LumaLabsAI Ray 2

Read more👇 https://t.co/q0ZY2lhxUX

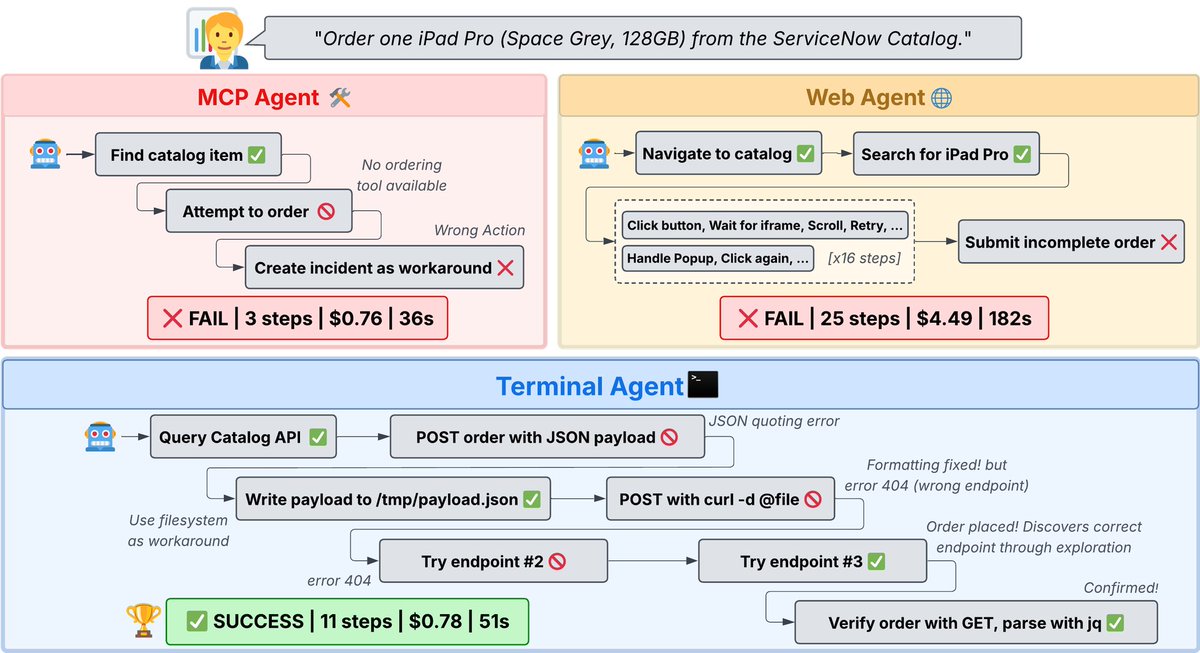

Terminal Agents Suffice for Enterprise Automation ServiceNow research shows terminal-based coding agents with direct API access match or outperform complex MCP and GUI agents, proving strong foundation models need only simple programmatic interfaces for enterprise automation. https://t.co/kbXMNdoMO0

Today, we’re launching Gemma 4, our most intelligent open models to date. Built with the same breakthrough technology as Gemini 3, Gemma 4 brings advanced reasoning to your personal hardware and devices.

Here’s what Gemma 4 unlocks for developers:

- Intelligence-per-parameter: Our 31B (Dense) and 26B (MoE) models deliver state-of-the-art performance for their size, outcompeting models 20x their size on @arena
- Commercial flexibility: Released under a permissive Apache 2.0 license for complete developer flexibility and digital sovereignty
- Agentic workflows: Native support for function-calling and structured JSON output allows you to build reliable, autonomous agents
- Multimodal edge AI: The E2B and E4B models bring native vision, audio, and low latency to mobile and IoT devices
- Long-context reasoning: Up to 256K context windows allow you to process entire repositories or large documents in a single prompt

Whether you're building global applications in 140+ languages or local-first AI code assistants, Gemma 4 is built to be your foundation. Explore in @GoogleAIStudio or download the weights on @HuggingFace, @Kaggle, and @Ollama.

Read all about Gemma 4 in our blog: https://t.co/LoynxkXxA9

After the release of Parse v2, Extract is also getting an upgrade: **introducing Extract v2**! 🎉 We've been reworking the experience from the ground up to make document extraction more powerful and easier to use than ever. Here's what's new:

- **Simplified tiers**: we've replaced modes with cleaner, more intuitive tiers. (And stay tuned: agentic plus is coming to Extract too, very soon.)
- **Pre-saved extract configurations**: load your saved extraction configs directly, so you can skip the setup and get straight to extracting.
- **Configurable document parsing**: now you can control how your documents get parsed before extraction, giving you more flexibility and better results end to end.

And for those who need a transition period: Extract v1 will remain accessible via the UI under 'Settings → General' for a limited time. Try Extract v2 today → https://t.co/yPVJzqoKal

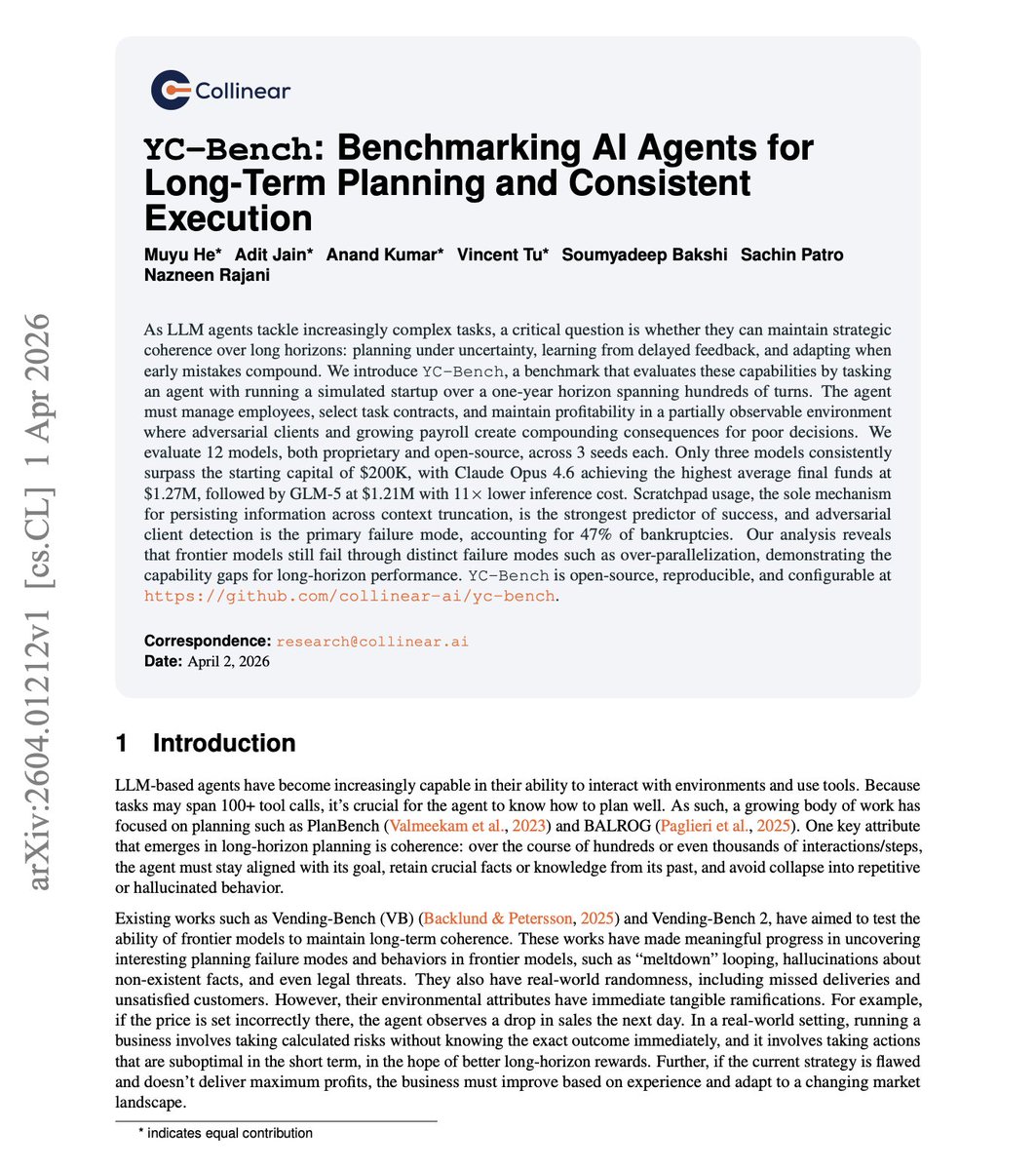

Can an AI agent run a startup for a year without going bankrupt? Turns out most can't. New benchmark from Collinear AI puts 12 models to the test. YC-Bench tasks agents with running a simulated startup over hundreds of turns: hiring employees, selecting contracts, and maintaining profitability in a partially observable environment with adversarial clients and compounding consequences. Only three models consistently surpass the $200K starting capital. Claude Opus 4.6 leads at $1.27M average final funds, followed by GLM-5 at $1.21M with 11x lower inference cost. Scratchpad usage, the sole mechanism for persisting information across context truncation, is the strongest predictor of success. Adversarial client detection accounts for 47% of bankruptcies. Long-horizon coherence, not raw intelligence, separates the winners from the bankrupt. Paper: https://t.co/jVJLJReUsN Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

Static orchestration is the silent killer of multi-agent RAG systems. The query changes, but the agent topology stays the same. The work introduces HERA, a framework that jointly evolves multi-agent orchestration and role-specific agent prompts. At the global level, it optimizes query-specific agent topologies through reward-guided sampling. At the local level, it refines individual agent behaviors via credit assignment and dual-axes prompt adaptation. On six knowledge-intensive benchmarks, HERA achieves an average improvement of 38.69% over recent baselines. Why does it matter? As multi-agent RAG systems scale, the gap between fixed pipelines and adaptive orchestration will only grow. HERA shows that letting the system learn its own coordination structure produces compact, high-utility agent networks. Paper: https://t.co/hxoYDfsHBn Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c

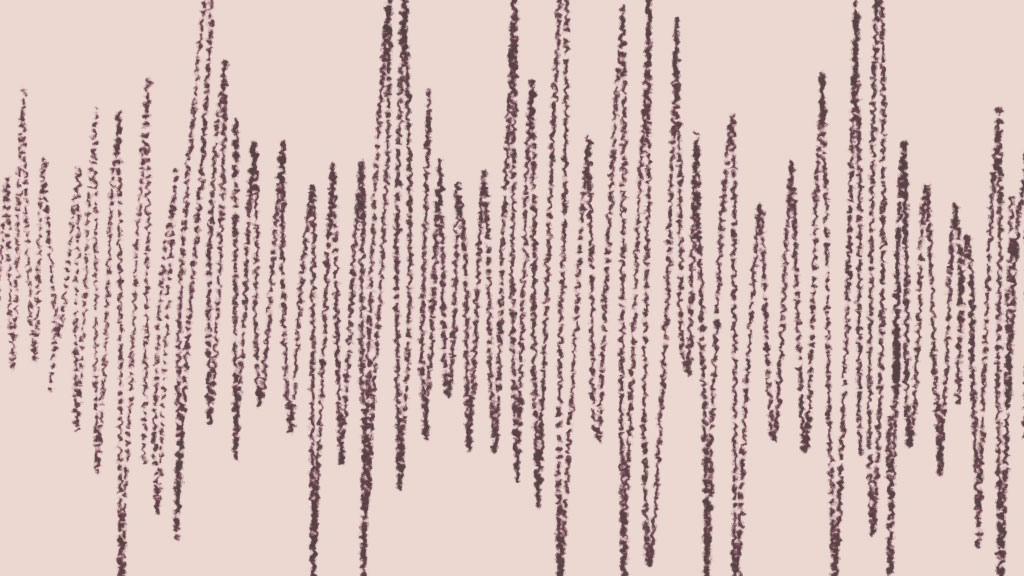

MAI‑Transcribe‑1 makes speech‑to‑text clearer, faster, and more reliable even in noisy audio. Ranked #1 on the industry-standard FLEURS word error rate benchmark. Now in public preview. Learn more: https://t.co/Gr4Q8jgCwL https://t.co/L6hndn3D34

Did you see the latest @code release? 👀 ✨ Preview videos in the image carousel 📋 Copy chat responses into markdown 🔍 Troubleshoot chat problems ⚡ Updated #codebase command ...and more! See what's new → https://t.co/uDDngMlaLB https://t.co/RVtRPuAFVY

Latest update to the Product Growth Stacker… https://t.co/rZBtY0Ia8c

Our co-CEO @malling joined @SquawkCNBC to talk about how AI agents can monitor markets, manage cash, and execute trades. https://t.co/ICCJQgVyMd

Hoorayyy! 🥳👏🏻 @mariaressa For Press Freedom! It’s final: Rappler wins vs 2018 SEC shutdown order https://t.co/4f6XYn0qx5

🔥 CFP is LIVE for #PyTorchCon North America 2026! Submit a talk or poster for Oct 20–21 in San Jose. Topics span training, inference, kernel engineering, responsible AI, & more. Deadlines: June 7 (talks) · July 26 (posters). Learn more + submit: https://t.co/Mz2gMtNnFc Super early bird reg ends April 10. Save up to $500: https://t.co/1z0jDhdUZm

A contributor was dropped by The New York Times after using AI in a book review. The case highlights how even limited AI use can raise concerns about originality and credibility, especially when similarities to other published work are detected. In journalism, trust remains the core currency, and how AI is used can quickly put that at risk. https://t.co/yr7H2Ju2TP

I made a documentary on the creators of JMail. it's an attempt to capture what it feels like to be a young person in san francisco right now. dropping tomorrow https://t.co/rrABaqZAKC

got to sit down and talk to the team behind jmail. incredible story of how a weekend project became load-bearing software in one of the biggest scandals in history. documentary coming soon, support their work below https://t.co/17vMX7DWs6

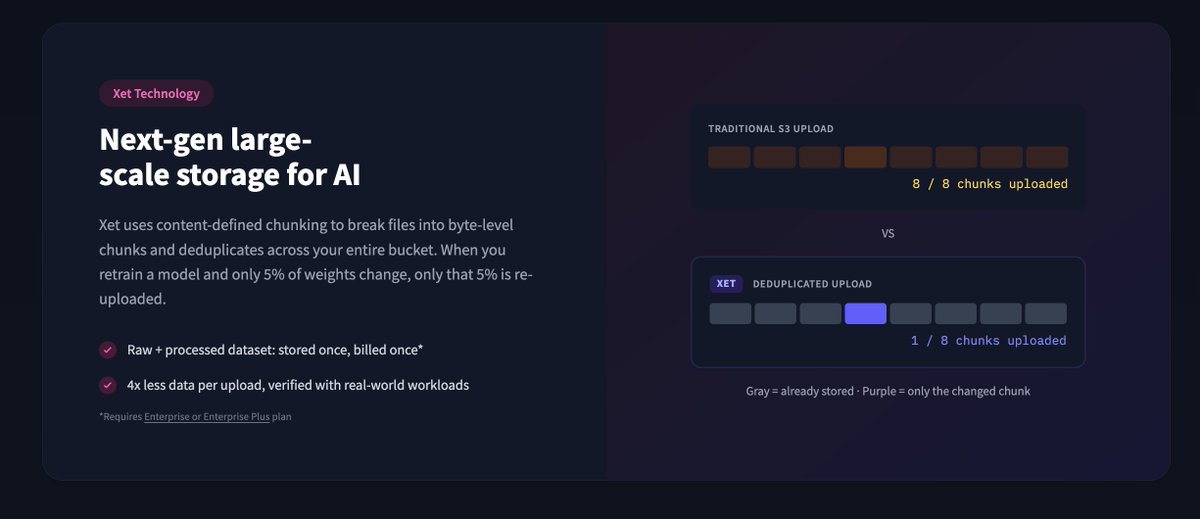

Hot take: Git was the wrong abstraction for 90% of ML data. Checkpoints, optimizer states, training logs, agent traces - none of this needs version control. It needs fast, cheap, mutable storage. So we built Buckets. S3-like storage on the @huggingface Hub with Xet dedup and zero egress. Train in a bucket. Publish to a repo. One platform. 🤗🤗🤗

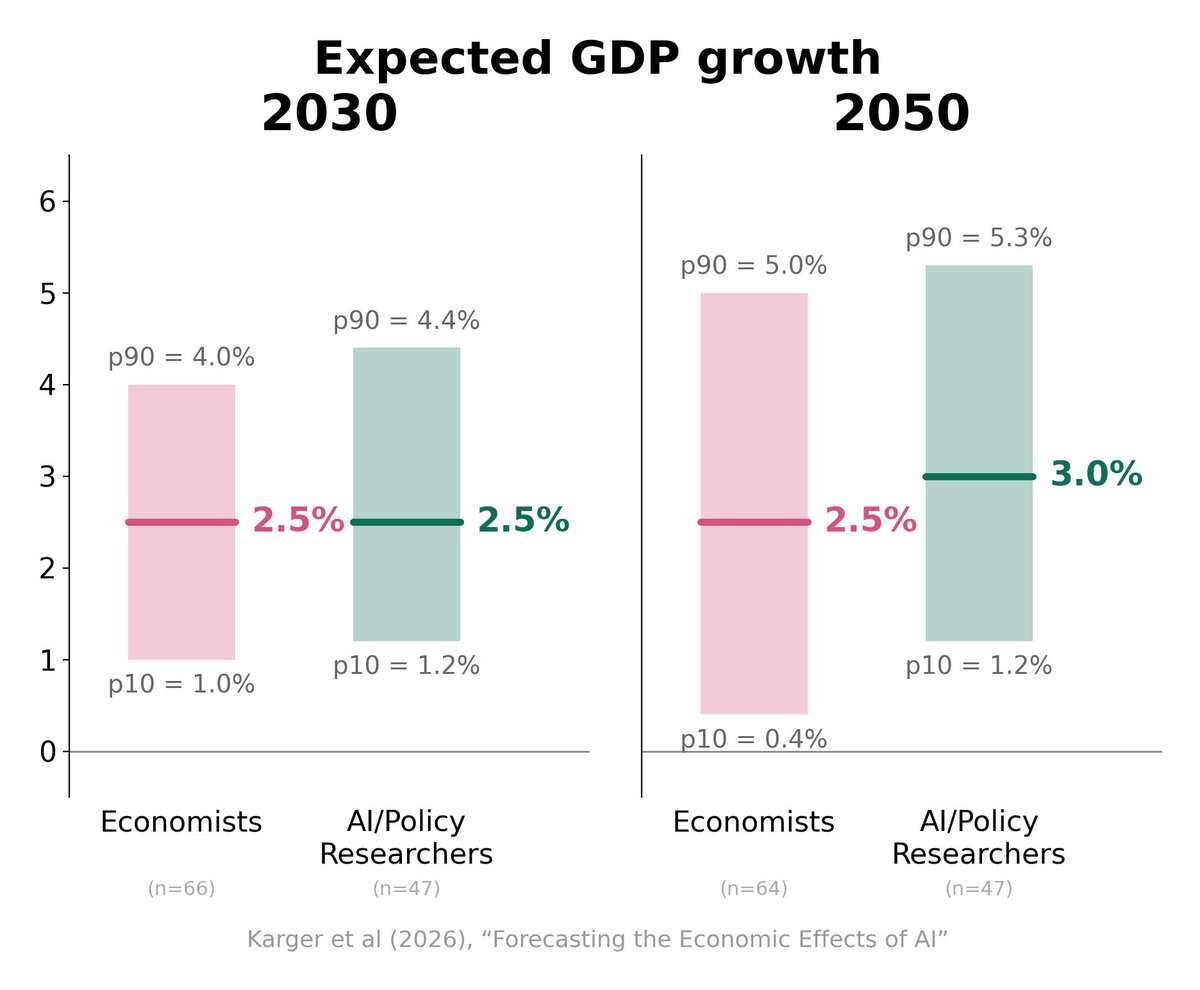

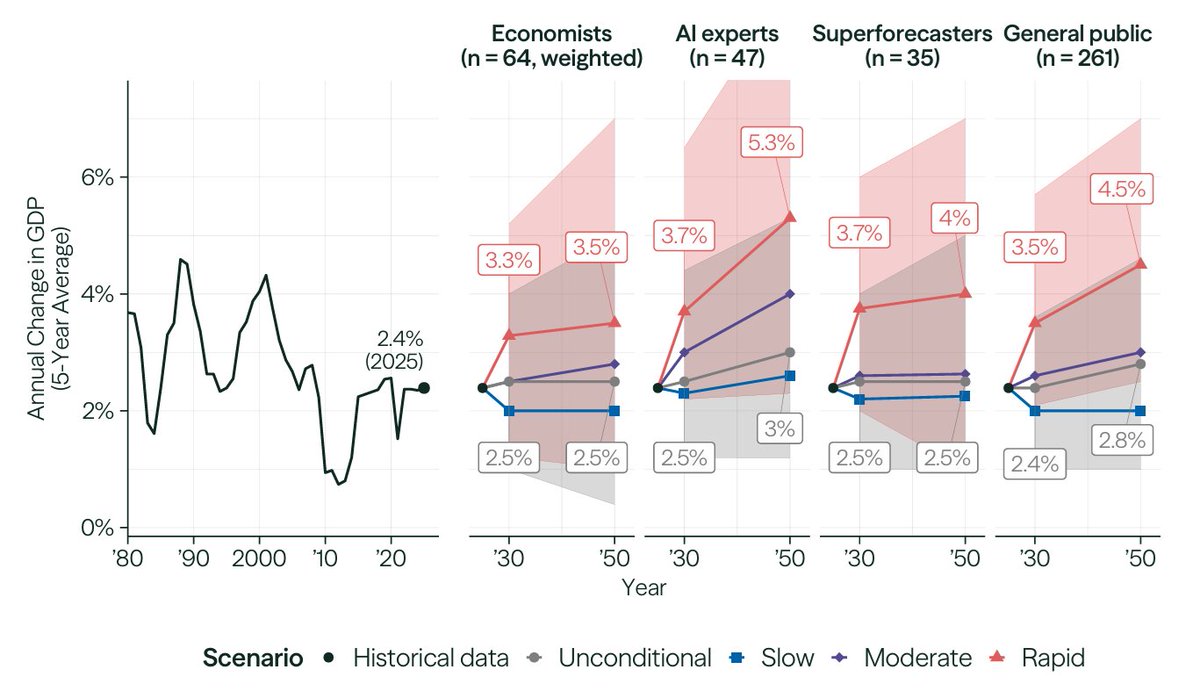

1. Economists are AI-pilled (surprising!)...
   - Both economists & AI folks give ~15% chance that AI surpasses humans by 2030 on most cognitive and physical tasks
2. ...but no one is singularity-pilled
   - Expecting basically normal GDP growth (1950s levels at best)!!

🧵 https://t.co/T8ZVMS5Xcq

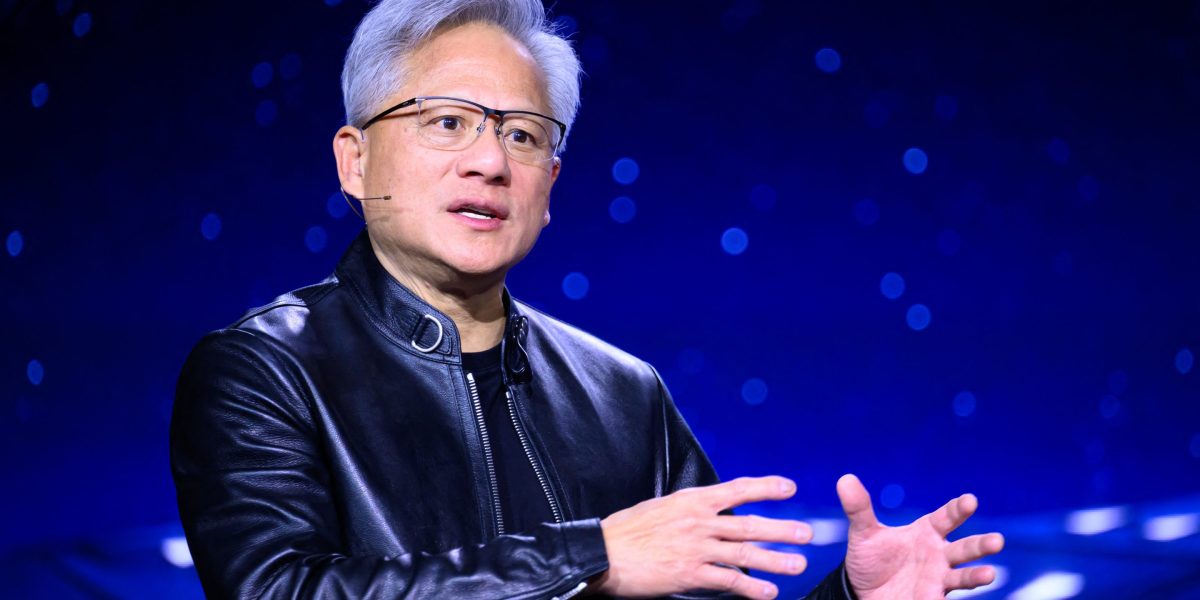

Fear around AI often comes from misunderstanding what is actually changing. Jensen Huang argues that people confuse their job with the tools they use, when in reality tools evolve but the underlying role can adapt. The shift is not about losing purpose, but redefining how work gets done. https://t.co/h5hEUnIUPQ @fortunemagazine

A recent @Research_FRI survey found that experts predict muted growth even under rapid AI progress. Why is that? @testingham, @BasilHalperin, @random_walker, @paulnovosad, and @alexolegimas provided competing explanations. I break down the debate: https://t.co/mRXh2niJ8i https://t.co/A6wqjCPLUa

AI is already delivering real productivity gains. Workers are saving up to an hour a day on tasks, yet most companies have not fully adopted the technology. The gap between what is possible and what is implemented remains wide. The opportunity is clear. The question is how quickly organizations can catch up. https://t.co/4aQQ8s9H40 @fortunemagazine

SOOTHENT PASTE for Sensitive Teeth, our entry for the Runway Ad Contest #RunwayBigAdContest https://t.co/fGadib5xYv

Monzo is pulling back from its US expansion. After six years, the neobank is exiting the market as regulatory complexity and the lack of a banking license proved harder to overcome than expected, despite strong growth at home. It’s a reminder that scaling fintech is not just about product or demand. Regulation still defines the boundaries of what is possible. https://t.co/zme8sdMYCD

Build at enterprise speed -> AMD Developer Hackathon with @AIatAMD. $10K + AMD Radeon AI PRO R9700 GPU on the line. Ship AI Agents. Run massive models. May 4-10. $100 Cloud Credits for all the builders. Register now → https://t.co/AhsT8X44KX. https://t.co/z3ZSOEBjEp

クリクリ〜 https://t.co/G1Vme67PNX

WALL•E and EVE are meeting at Pixar Place Hotel on Wednesdays in April! Just LOOK AT THEM! https://t.co/n0seuGNfjT

Chinese startup MindOn in Shenzhen dropped a demo of their “robot brain”. Watch it autonomously water plants, open curtains, tidy rooms and carry packages & clean. MindOn’s world model turns the Unitree G1 into an independent chore bot. https://t.co/JNU7KEbrcf

It was great to see @sparkycollier (Mark Collier) on the keynote stage at #KubeCon + #CloudNativeCon to discuss how cloud native infrastructure is powering the shift from AI inference to autonomous agents. It’s clear that CNCF OSS is the backbone of the modern AI landscape. From Amsterdam to Paris, let’s carry the torch to #PyTorchCon Europe, 7-8 April! Register: https://t.co/9Pt5VR1rI4 #PyTorch #AI #CloudNative #OpenSource #GenAI

Crypto investors face a simple trade-off:

- Hold → no yield
- Lend → earn yield, but give up custody

There is a 3rd option → generate yield without lending your assets. → crito brings this expertise to crypto and makes it tradable 24/7 on a platform. https://t.co/ksOPoX6WNE