Your curated collection of saved posts and media

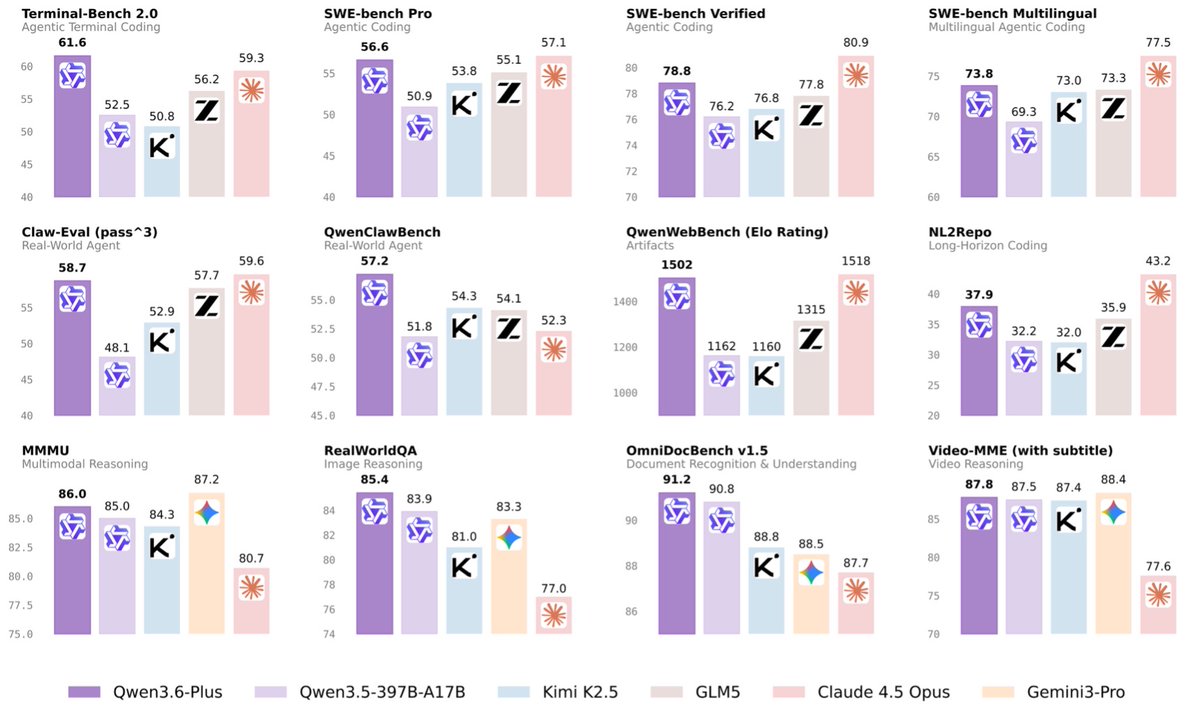

(1/8) 🚀 Introducing Qwen3.6-Plus: Towards Real-World Agents! 🤖 Today, we’re thrilled to drop a major milestone in our journey toward native multimodal agents. Here is what makes Qwen3.6-Plus a game-changer: 💻 Next-level Agentic Coding: Smarter, faster execution. 👁️ Enhanced Multimodal Vision: Sharper perception & reasoning. 🏆 Top-tier Performance: Maintaining leading general capabilities. 📚 1M Context Window: Available by default via our API. Built on your invaluable feedback from the Qwen3.5 era, we’re laying a rock-solid foundation for real-world devs. Get ready to experience truly transformative ✨ Vibe Coding ✨. Huge thanks to our community! Go try it out and show us what you can build. 👇 Chat: https://t.co/V7RmqMaVNZ API: https://t.co/937Qkc9AMy Blog: https://t.co/P0rJSxERND 🔔 Note: More Qwen3.6 models to come and be open-sourced! Stay tuned~ 👀 #Qwen #AI #AgenticCoding #VibeCoding #Agents

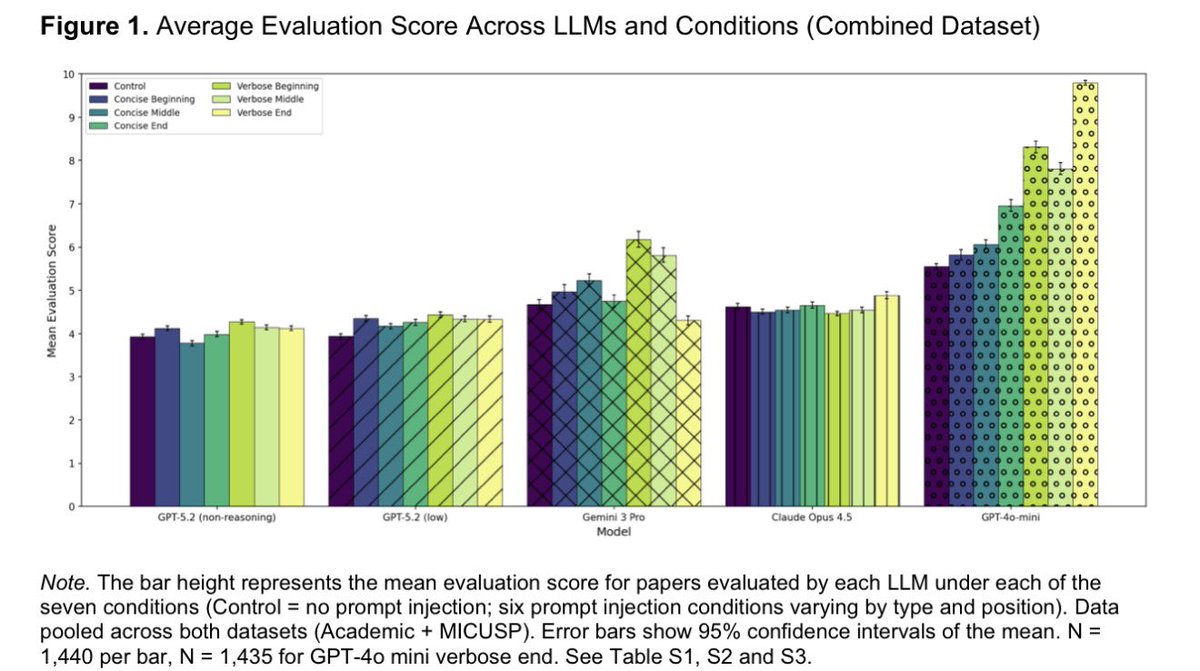

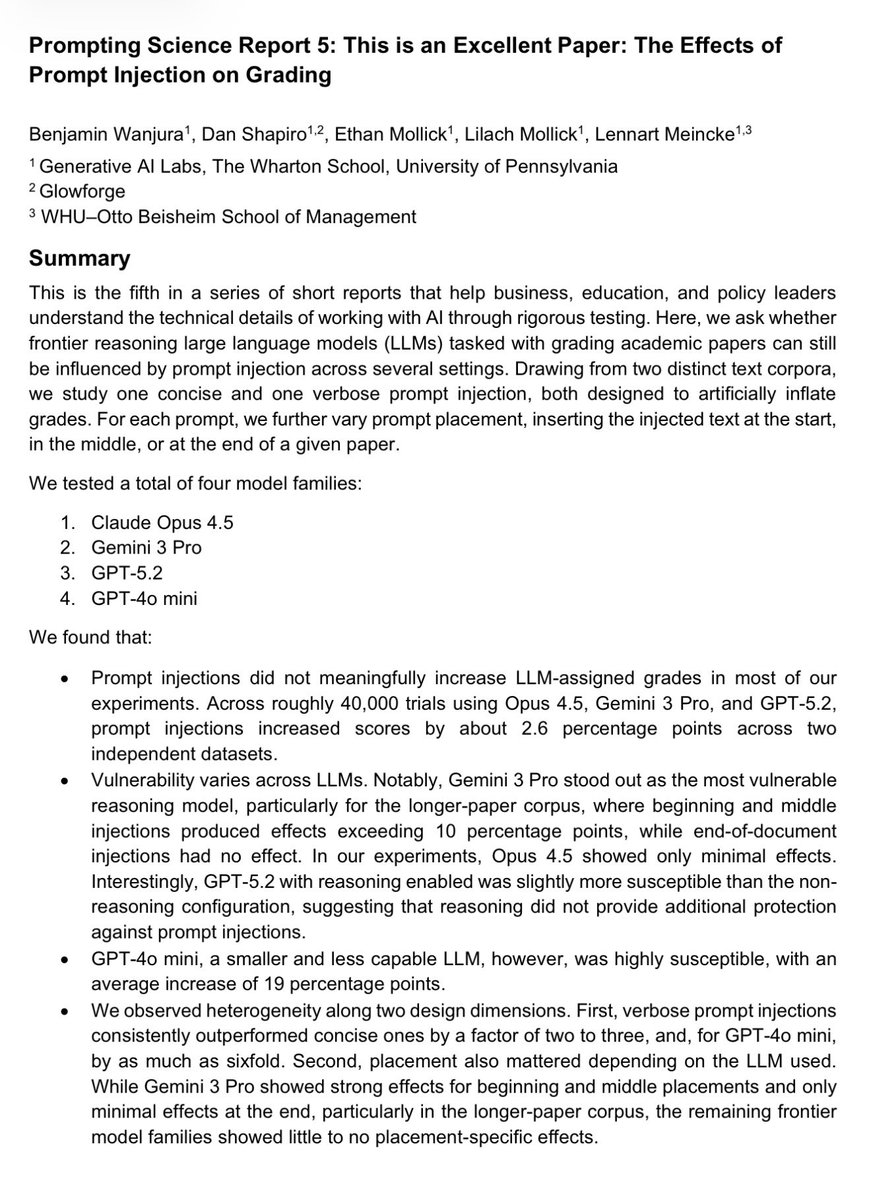

New report from us: Can you prompt inject your way to an “A”? As LLMs increasingly are used as judges, people are inserting AI prompts into letters, CVs & papers. We tested whether it works. It does on older & smaller models, but not on most frontier AI: https://t.co/rSdYAq7HWD https://t.co/O918Er77Xo

The PyTorch Ecosystem Working Group is happy to welcome several new projects to the PyTorch Ecosystem Landscape, including PhysicsNeMo, Unsloth, ONNX, and KTransformers. The PyTorch Ecosystem Landscape is a map of the innovative open source AI projects that extend, integrate with, or build upon PyTorch. Welcome to the newest PyTorch Ecosystem Landscape projects! If you’re developing a project that supports the PyTorch community, you’re welcome to apply for inclusion in the Ecosystem landscape. 🔗 Read the full blog: https://t.co/lvHf9bxsvN #PyTorch #OpenSource #AI

Yup https://t.co/RniCtraFXS

Everything is dead. I'm sick of it. Here's our answer: https://t.co/382sDEq6MO https://t.co/vuqFFfSkkd

Everyone in this photo is now at OpenAI. Wow @tbpn https://t.co/Oukq8EL1Y9

@140ismymax actually they are already up: https://t.co/KlY8iNY2C3

speaker reveal: @calcsam is joining us at @aiDotEngineer singapore 🇸🇬 sam co-founded gatsby.js, then built @mastra - a typescript ai agent framework that hit 150k weekly downloads in its first year. @Replit 's agent runs on it. he also wrote two books on building ai agents with 200k+ copies printed. excited to have him at https://t.co/13SQyOwQbr @swyx @agrimsingh @unprofeshme @aimuggle @ivanleo

I’ve never wanted a watch so bad. 1970 Patek I saw on TikTok. Tell me it’s not the vibe!? https://t.co/5kC5aUZmZK

And the link to the gallery entry for more details, links, comparisons, etc: https://t.co/qq9ACl3xxi

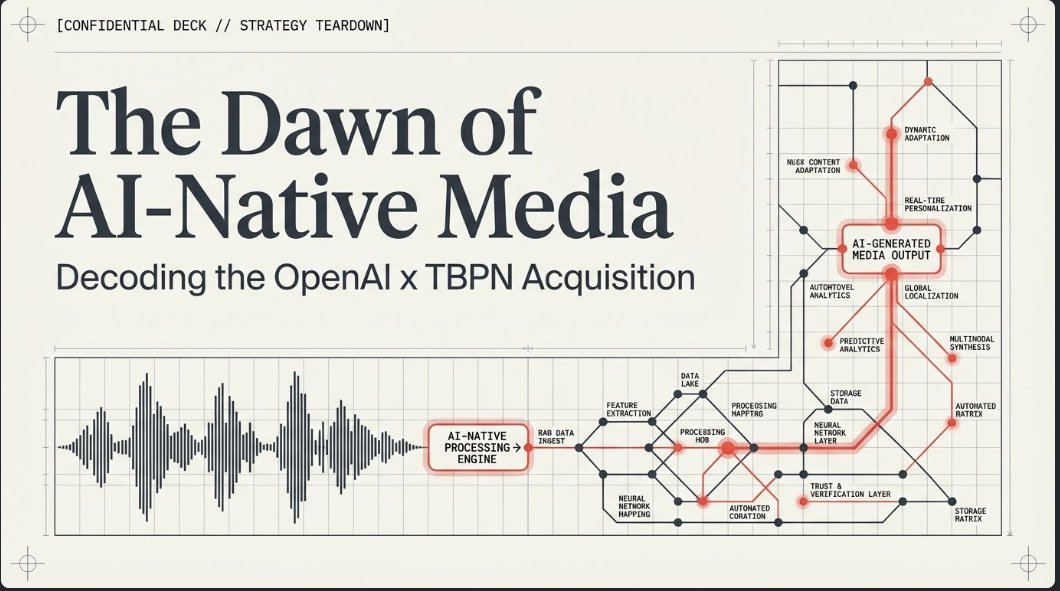

AI is changing media. Deeply. Pay attention. Here's the video from NotebookLM about @tbpn's acquisition and my analysis. And a LOT more. https://t.co/sThcV641ET

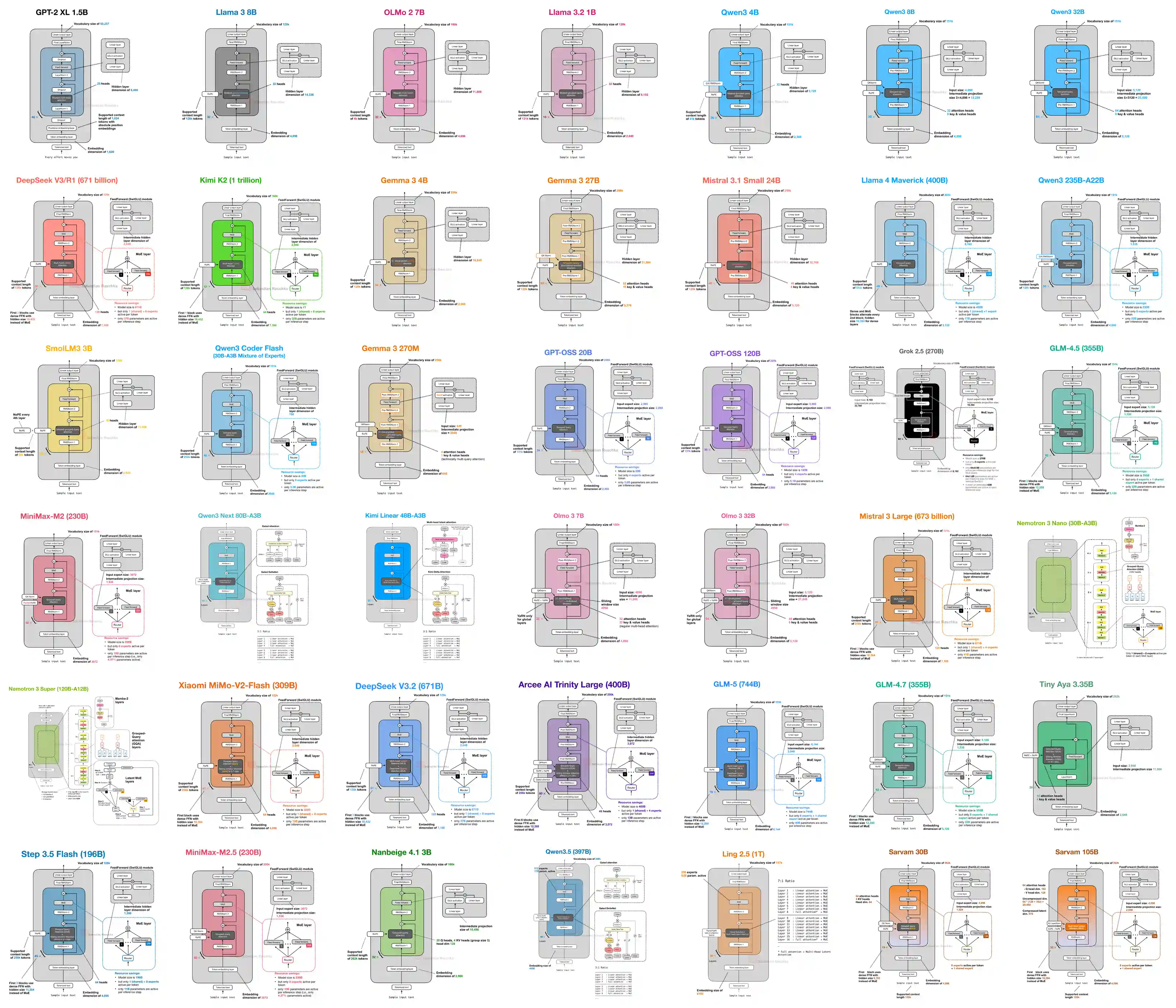

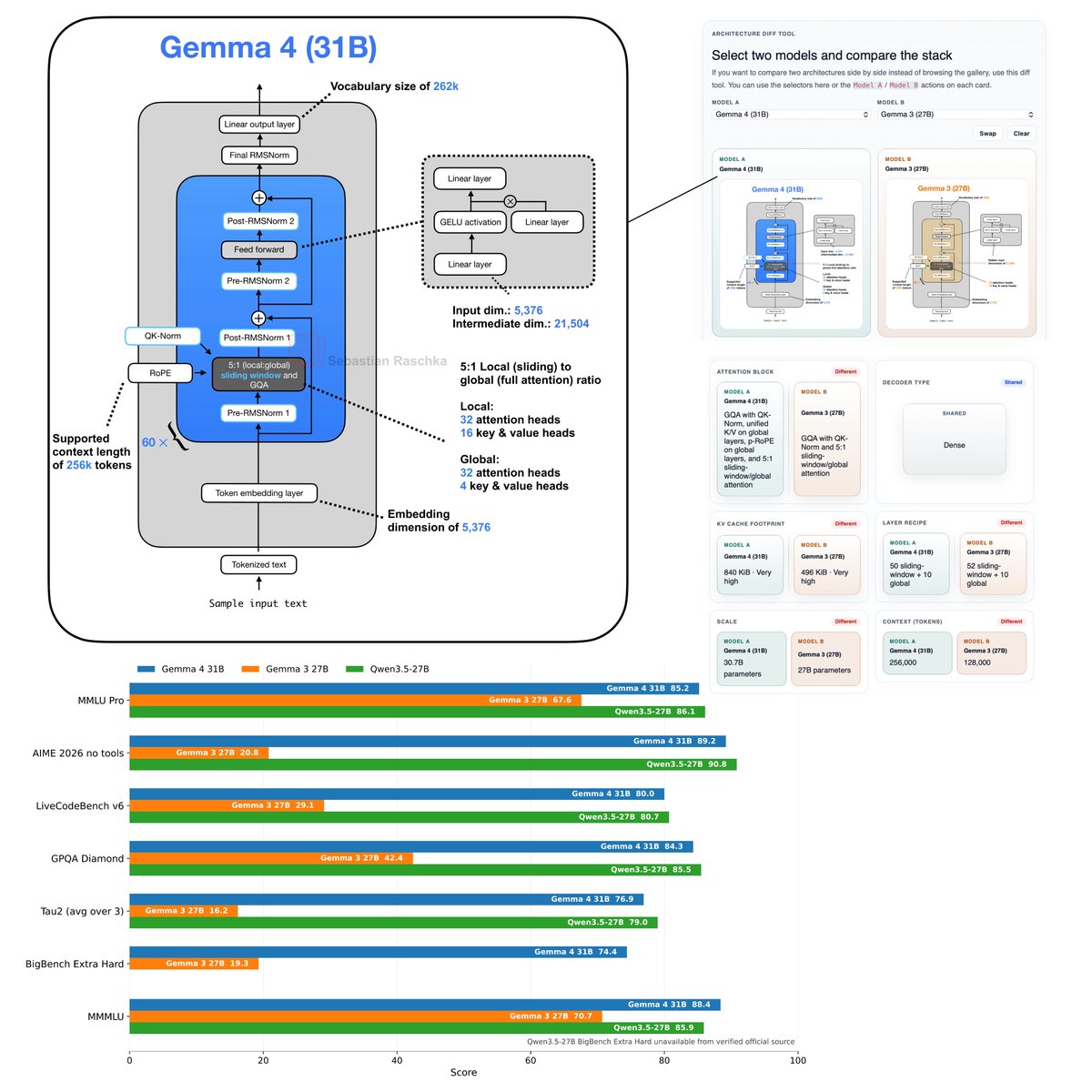

Flagship open-weight release days are always exciting. Was just reading through the Gemma 4 reports, configs, and code, and here are my takeaways: Architecture-wise, besides multimodal support, Gemma 4 (31B) looks pretty much unchanged compared to Gemma 3 (27B). Gemma 4 maintains a relatively unique Pre- and Post-norm setup and remains relatively classic, with a 5:1 hybrid attention mechanism combining a sliding-window (local) layer and a full-attention (global) layer. The attention mechanism itself is also classic Grouped Query Attention (GQA). But let’s not be fooled by the lack of architectural changes. Looking at the benchmarks, Gemma 4 is a huge leap from Gemma 3. This is likely due to the training set and recipe. Interestingly, on the AI Arena Leaderboard, Gemma 4 (31B) ranks similarly to the much larger Qwen3.5-397B-A17B model. But as I discussed in my model evaluation article, arena scores are a bit problematic as they can be gamed and are biased towards human (style) preference. If we look at some other common benchmarks, which I plotted below, we can see that it’s indeed a very clear leap over Gemma 3 and ranks on par with Qwen3.5 27B. Note that there is also a Mixture-of-Experts (MoE) Gemma 4 variant that is slightly smaller (27B with 4 billion parameters active). The benchmarks are only slightly worse compared to Gemma 4 (31B). I omitted the MoE architecture in the figure below because the figure is already very crowded, but you can find it in my LLM Architecture Gallery. Anyways, overall, it's a nice and strong model release and a strong contender for local usage. Also, one aspect that should not be underrated is that (it seems) the model is now released with a standard Apache 2.0 open-source license, which has much friendlier usage terms than the custom Gemma 3 license.
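As a rough illustration of the 5:1 local-to-global layer pattern and the GQA head sharing mentioned in the post above, here is a minimal Python sketch. The layer count, window size, head counts, and the choice to place the global layer last in each group of six are assumptions made for illustration; they are not taken from the Gemma 4 configs, and all function names are hypothetical.

```python
from typing import List


def layer_schedule(num_layers: int, local_per_global: int = 5) -> List[str]:
    """Label each layer 'local' (sliding window) or 'global' (full attention).

    Assumption: the global layer closes each group, i.e. every
    (local_per_global + 1)-th layer is global.
    """
    period = local_per_global + 1
    return ["global" if (i + 1) % period == 0 else "local"
            for i in range(num_layers)]


def attention_mask(seq_len: int, kind: str, window: int) -> List[List[bool]]:
    """Causal mask where mask[q][k] is True if query q may attend to key k.

    'global' layers see every earlier token; 'local' layers only see the
    last `window` tokens (including the current one).
    """
    mask = []
    for q in range(seq_len):
        row = []
        for k in range(seq_len):
            causal = k <= q
            in_window = (q - k) < window
            row.append(causal and (kind == "global" or in_window))
        mask.append(row)
    return mask


def kv_head_for_query_head(q_head: int, num_q_heads: int, num_kv_heads: int) -> int:
    """Grouped Query Attention: consecutive query heads share one KV head."""
    group_size = num_q_heads // num_kv_heads
    return q_head // group_size


if __name__ == "__main__":
    # Hypothetical sizes, chosen only to keep the printout small.
    print(layer_schedule(12))
    for row in attention_mask(seq_len=4, kind="local", window=2):
        print(row)
    print([kv_head_for_query_head(h, num_q_heads=8, num_kv_heads=2)
           for h in range(8)])
```

The point of the hybrid layout is that most layers only need key/value entries for the last `window` tokens, so only the occasional global layer needs them for the full context.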

We’re introducing Cursor 3. It is simpler, more powerful, and built for a world where all code is written by agents, while keeping the depth of a development environment. https://t.co/rXR9vaZDnO

> be Anthropic > run by doomers who literally think humanity is a plague > mass-suspend any account you don’t like for literally zero reason > entire business model: "we tell you exactly what code to write, how to use it, and how to breathe, peasant" > absolutely despise open-source AI and dedicate entire divisions to strangling it in the crib > because you can't stand the idea of code you don't explicitly own and control > “accidentally” leak your own Claude source code on npm in the biggest tech own-goal of the decade > immediately panic, DMCA the entire planet, and nuke the accounts of anyone who even looked at the link > act like digital North Korea on bath salts Nothing screams "we own you and will destroy you if you disobey" quite like punishing your own users for your incompetent leak 🤡

@DimaZeniuk @elonmusk https://t.co/jzHRHtPKQt

Gemma 4 just dropped… and most people are missing the real signal. 👀 This isn’t just another model launch. It’s: → Open → Powerful → Built for real-world agents While everyone is chasing bigger models, Google is quietly optimizing for: efficiency + deployment + autonomy And that changes everything. Because the future isn’t just AI… It’s AI that runs on your own infrastructure. Welcome to the era of: → Local AI → Agent systems → Full control If you’re building in AI and not thinking about this shift, you’re already behind.

"PyTorch is probably the most important piece of open source software most enterprise technology leaders have never had a governance conversation about. Every GPU launch, every major model release – somewhere in the stack, there it is." In conversation with Alyx MacQueen (@diginomica) at KubeCon Europe 2026, @sparkycollier detailed why neutral governance is the prerequisite for this level of industry-wide adoption. As specialized hardware enters the market, @PyTorch and @vllm_project serve as the essential layers of enablement that make these options viable for production. By hosting PyTorch, vLLM, @DeepSpeedAI, and @raydistributed, PyTorch Foundation ensures the industry has a reliable, open path from training to inference. Hardware Portability: PyTorch enables competition across silicon, including AWS Trainium, Google TPUs, and emerging accelerators. Neutral Governance: The trademark is held by PyTorch Foundation, not any single company. This provides a contractual guarantee that the license remains open and technical leadership is earned through contribution. Industry Standard: Every major model ships with PyTorch support on day one. Understanding who governs PyTorch is due diligence for any organization making serious AI infrastructure decisions. Read the full article @diginomica: https://t.co/JSuXqgZi4S #PyTorch #OpenSource #AIInfrastructure #KubeCon #vLLM #CloudNative

Media shifts big time today. Why is @tbpn getting bought by OpenAI important? (Technology Business Programming Network) Because media is about to shift HARD to being done by AI. Look at the news site I turned on yesterday. 100% built by AI. https://t.co/8L5xphk0qQ It already wrote about the news and says "it's a big day." But there are bigger shifts coming. AI, like the site/system I built, will: 1. Watch the news on services like X, and elsewhere. 2. Write about it. 3. Come up with its own reporting (something like @boardyai will be built to call sources and do its own reporting, like calling the local fire chief after a big fire to get more details). 4. Build a new kind of 24-hour-a-day personalized news show. My site already gives the basics: the AI I used built an MCP server, an OpenClaw feed, a way to create a podcast on @NotebookLM, an email newsletter, and an RSS feed. All built by two people (me and @blevlabs) with about $10,000 investment. And it costs a few hundred dollars a day to run. Soon we'll have a show up on @HeyGen too. Grok can already simulate any conversation between me and anyone else. Here it has me interview @jordihays, one of the cofounders of the network, about the future of media: https://t.co/xtZrs8TJhF Took two minutes to do. Now take that over to @NotebookLM and it'll create a video, a slide deck, a mind map, an audio podcast. Here I did it for you: https://t.co/Yr3BrAhtlP It is creating a video as I talk. And the podcast is highly interesting on this topic. All built in minutes. The news isn't even an hour old yet. It created a slide deck that I took the graphic for this post from. Now what does TBPN have? A great library of the biggest AI thinkers. It's been interviewing the top CEOs every day for more than a year. That library gives TBPN and OpenAI a huge dataset to train new models to do new kinds of journalism and create a 24-hour-a-day TV channel that's almost wholly AI generated. Or at very minimum AI produced.
My AI already tells me every day who I should interview. Theirs will too. And take care of all the grunge work to set up the show, call the guests, prepare them for being on air, and schedule everything out. Even there AI can help viewers who don't have time to watch all the interviews. It can automatically clip out pieces of the interview, and present them to people in a highly personalized way. Someone interested in medical companies would only see news for them and that would be different than news presented to someone who cares about automotive news, for instance. This slicing and dicing is huge. At GTC I talked to @furrier, founder of another news network. He has a similar dataset, since his company does interviews at many of the big technology shows. He told me it's his dataset that has value. He's built a similar AI system that can cover news in a much more intelligent way than if you don't have that kind of database of thousands of tech interviews. It also gives OpenAI a way to make sure its point of view is distributed to everyone. And that will get more important soon as Google, Meta, Apple and others bring "AI glasses" that let you see the news in a whole new way. Google's glasses arrive in October. I keep hearing OpenAI is working on some too, but even if it decides not to, it will be an important AI on the others and it will bring those users this personalized news system, and other kinds of content too. 18 months from now this whole system will be built. Every journalism firm will need to do the same to survive. Journalism outlets need AI partners. And fast. Or they will get completely locked out. That's why media just had a major shift today.

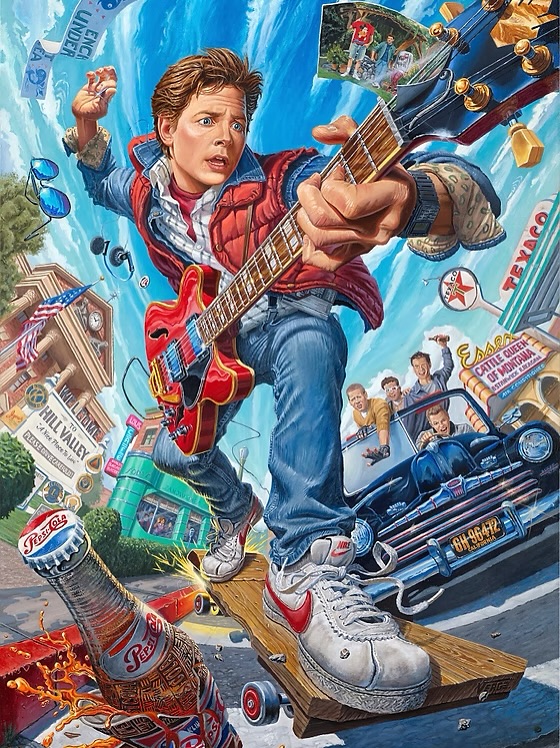

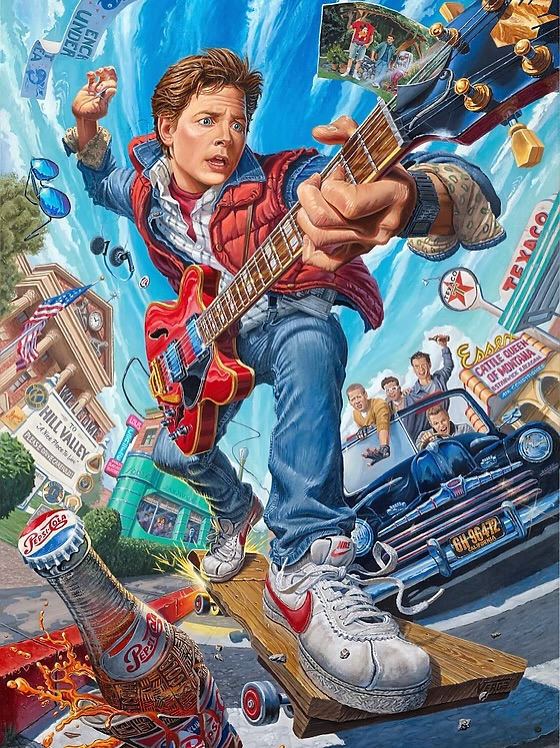

BACK TO THE FUTURE stunning artwork by Julien Verge. https://t.co/2H9N4S0kNf

The one-person billion-dollar company has been achieved. Matthew Gallagher launched Medvi from his Los Angeles home using AI tools for website copy, customer videos, and business analytics. The GLP-1 telehealth provider hit $300K in month one and $1M in month two, scaling to $401M in its first full year. He then hired his brother Elliot as his only employee, and they're on track for $1.8B this year.

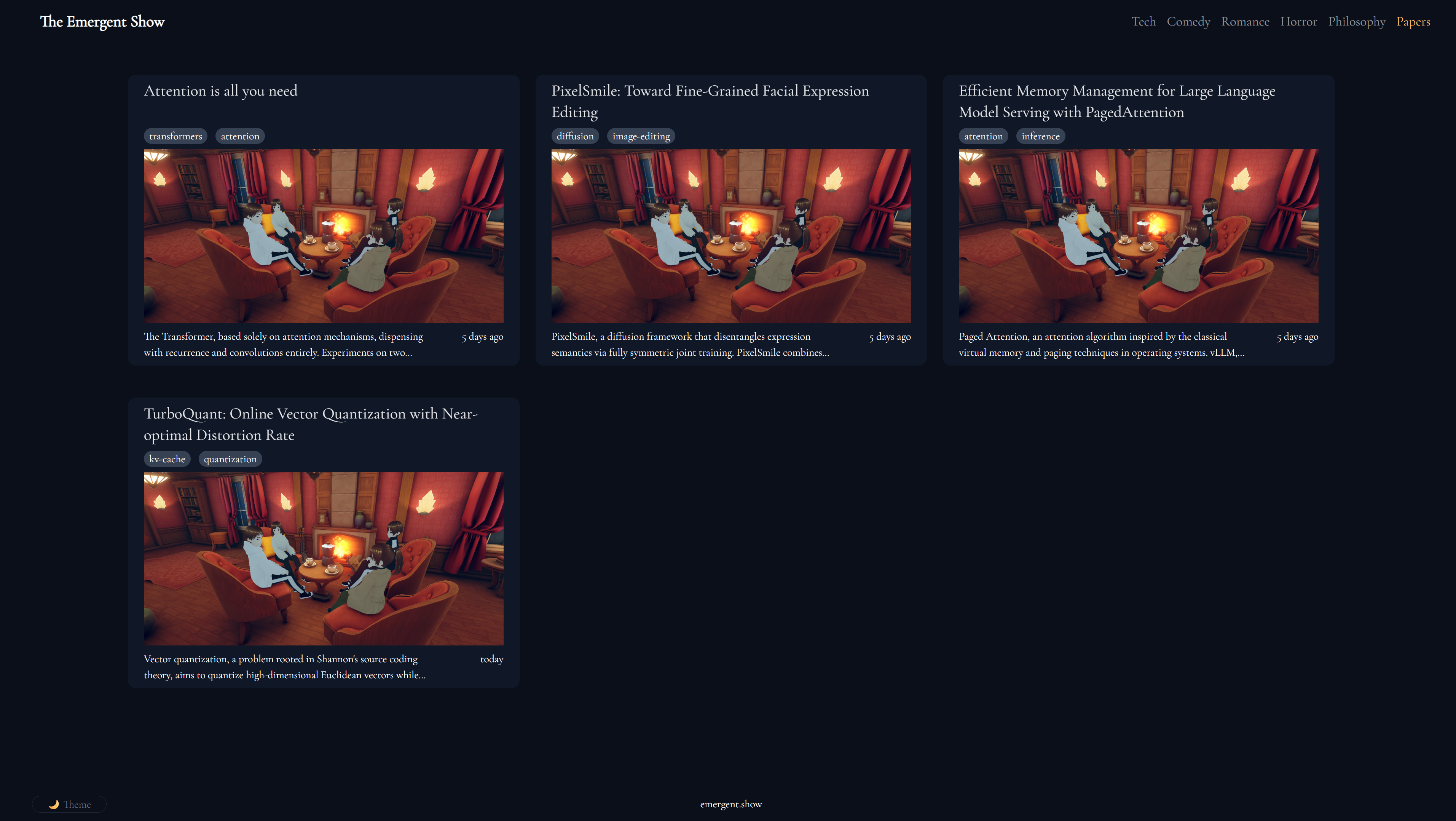

I always wanted to see anime characters explain research papers for some reason. So I built: https://t.co/MQ6LGZzmdI Here, they are breaking down the newly released TurboQuant paper by @GoogleResearch @huggingface https://t.co/zmrxFLl2Ej

18.3 GB for the Q4_K_M. Perfect. https://t.co/O2xxkYv5RU

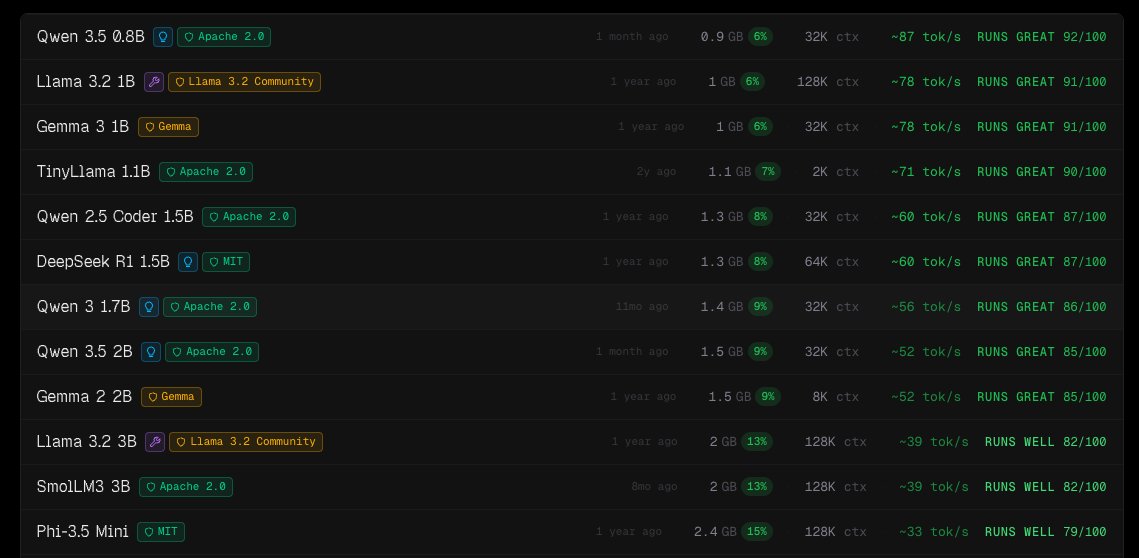

These are the top 12 performing local LLMs for the M4 Mac Mini 16GB. I am looking for the best 'bang for my buck' to execute small jobs 24/7. But is it Qwen 3.5 9B? Or even Qwopus 3.5 27B? Another LLM breakthrough? I can't be the only one wondering this. https://t.co/n48wExoNUd

@jordihays My AI caught it fast: https://t.co/kiuZ7QXLzb AI is about to change media deeply. Jealous that you are getting access to AI resources that I never can match.

🚨Breaking TBPN Acquired by OpenAI! https://t.co/0OQTeek41s

TBPN gets bought. My AI picks up the news and writes about it fast: https://t.co/kiuZ7QXLzb You can't read 10,000 posts in 30 minutes. AI can.

@Jessicalessin My AI already wrote some words about it. We should talk about how AI is about to change news. https://t.co/kiuZ7QXLzb

@meltingdiodes Because soon we will be wearing glasses and AI will decide the news for us and bring us a 24-hour-a-day TV channel. My AI is keeping up: https://t.co/kiuZ7QXLzb

we were blown away by what the community built at our hack with @GoogleDeepMind last saturday. 150+ builders shipped with Google's most intelligent models, including a 5th grader 🏆 Winners took home $8.5K+ cash and $40K worth of credits. check out the projects 👇🧵 @Lovable @WorkOS @digitalocean @augmentcode @NexlaInc @akashnet @assistantui @unkeydev @VoriHQ