Your curated collection of saved posts and media

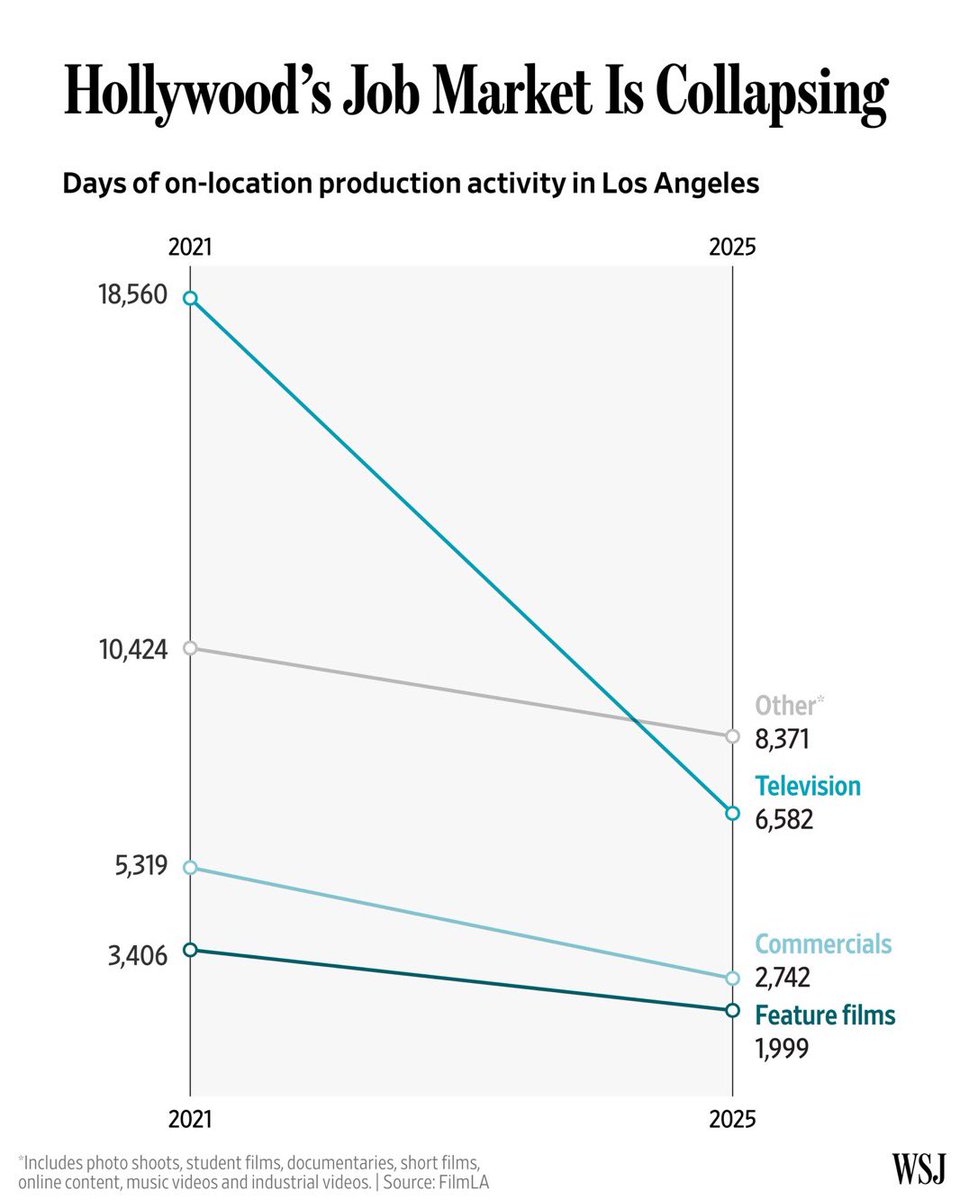

Hollywood went Woke. Now it’s going broke. https://t.co/WVLmocsY21

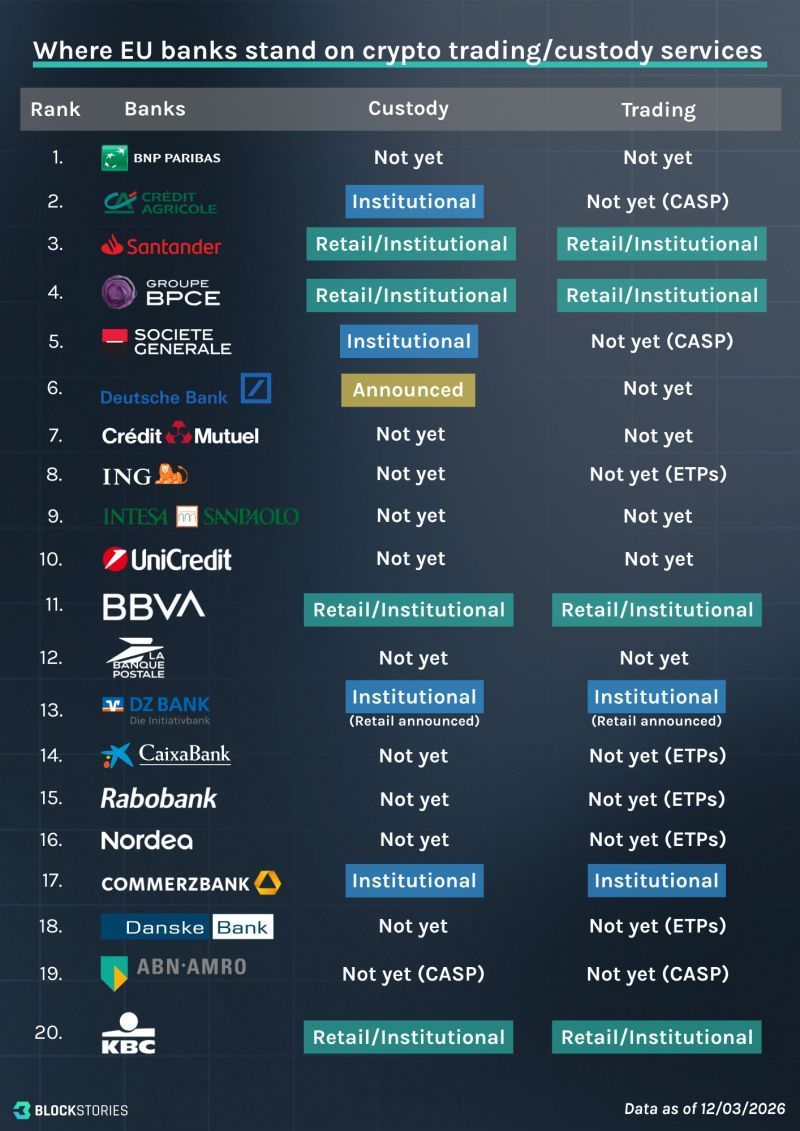

Only 8 of the top 20 EU 🇪🇺 banks offer live crypto custody or trading services at scale. 📷 Source: Chiara M. (data as of March 12th, 2026) https://t.co/hkKom6C5ag

🚨 xAI just dropped a massive upgrade to Grok Imagine! Now you can choose between two modes: Quality (new): Produces next-level images with jaw-dropping detail, realism, and creativity, generating four high-quality images at once instead of an infinite scroll. Speed: Delivers lightning-fast generations with consistently impressive results, just as you've experienced before. Way more power. Just select a mode, then type what you want to imagine. Here are two prompts you can try.
Prompt one: A dramatic low-angle cinematic view of a menacing knight in intricate ornate black plate armor and helmet with flowing dark cape, riding a powerful muscular black warhorse in full gallop charging directly toward the viewer across a vast field of tall green grass. The knight raises a long straight sword triumphantly high in his right hand. The horse has a wildly flowing mane and tail whipped by motion, ornate leather-and-metal barding, and tack. Foreground shows extreme radial motion blur streaks in the grass conveying tremendous speed and velocity. Background features towering jagged snow-capped rocky mountain peaks under a vibrant deep blue sky with voluminous dramatic white clouds. Epic dark fantasy atmosphere, highly detailed textures on armor and horse, cinematic high-contrast lighting, dynamic heroic composition.
Prompt two: A serene young woman with fair skin, shoulder-length straight dark brown hair, and a calm introspective expression gazing slightly downward stands centered in a vast barren white sand desert at night under a deep dark navy blue sky, with distant low dark rocky mountains on the horizon. She wears a long flowing translucent white hooded robe with subtle iridescent holographic shimmer and prismatic rainbow-cyan reflections across the fabric, the hood up over her head. In both hands at chest level she holds a single glowing bioluminescent blue cross with Jesus at its center, emitting intense bright cyan-blue light that illuminates her robe, hands, and face. Directly behind her head and shoulders is a massive luminous circular rainbow halo aura with concentric glowing rings transitioning from inner vibrant blue and cyan outward to turquoise, yellow, orange, and red, crowned by a brilliant white starburst light at the top center casting dramatic volumetric god rays downward. Cinematic mystical ethereal atmosphere, photorealistic yet fantastical style, sharp focus, high detail on fabric textures, rose petals, sand ripples, and light effects.

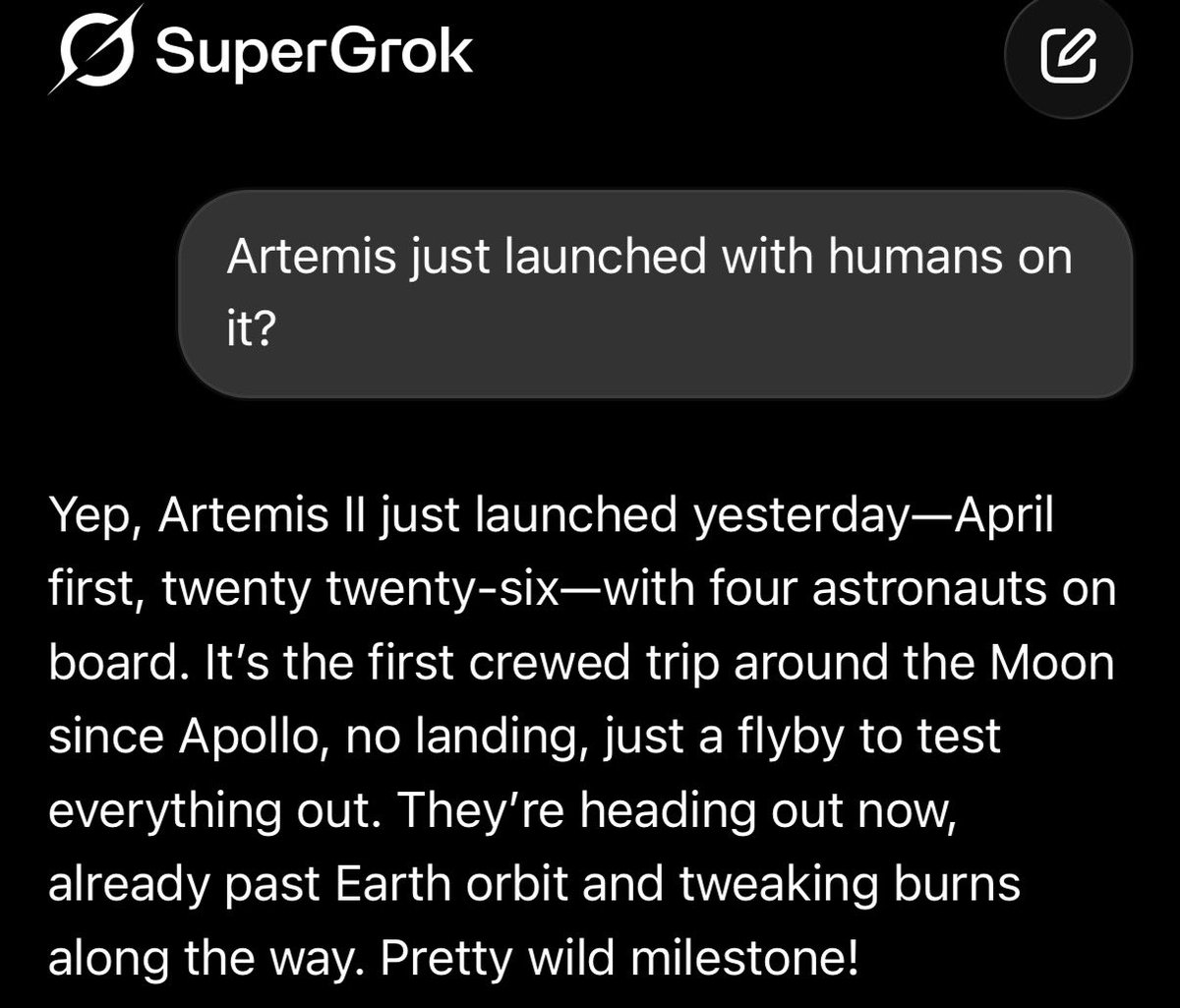

If you want the latest, correct, real-time information, use Grok. https://t.co/gim6TL61l4

Instagram tests letting you pay to view stories anonymously https://t.co/qDRzuwC4Ld via @Verge #SocialMedia Cc @mvollmer1 @SpirosMargaris @PawlowskiMario @Nicochan33 @SiddharthKS @jeancayeux https://t.co/AjwXxtSIjA

“This is unprecedented”: America’s AI boom is leaving the rest of the world behind #WorldNews #AI https://t.co/crfAUgY2Vi #TechNews @ArturHabant @elaniazito @IanLJones98 @CurieuxExplorer @Shi4Tech @enilev @Fabriziobustama @mvollmer1 @AnthonyRochand @JolaBurnett @lyakovet @debashis_dutta @3itcom @ahier @Analytics_699 @antgrasso @CathCervoni @chidambara09 @DigitalColmer @dinisguarda @DimitriHommel @EvanKirstel @FrRonconi @GlenGilmore @gvalan @HeinzVHoenen @ipfconline1 @jeancayeux @jorgecunha @kalydeoo @nafisalam @Nicochan33 @pierrepinna @PawlowskiMario @puneetsinghal22 @ralph_ohr @RLDI_Lamy @rshevlin @sarbjeetjohal @SpirosMargaris @StefanoDeCupis @tewoz @thomas_dettling @Ym78200 @aure79lien @jblefevre60

“All of us need to be on guard against arrogance, which knocks at the door whenever you’re successful.” — Steve Jobs https://t.co/JMtqqo3msg

Charlie Munger: "If you just cope with what comes and try to be rational all the way, that's all that is given us to do." "More trouble is caused by stupidity than is caused by malevolence." "It's kind of fun to bat [asininity] down and serve the rational outcomes." https://t.co/RCWbzcSwLg

“You don’t need 20 right decisions to get very rich. 4 or 5 will probably do it. It’s a terrible mistake to think you have to have an opinion on everything.” ~ Warren Buffett https://t.co/yrZxtwlYPX

Trump: We can't take care of daycare. We're a big country. We're fighting wars. It's not possible for us to take care of daycare, Medicaid, Medicare, all these things. https://t.co/vLGpp7KJnm

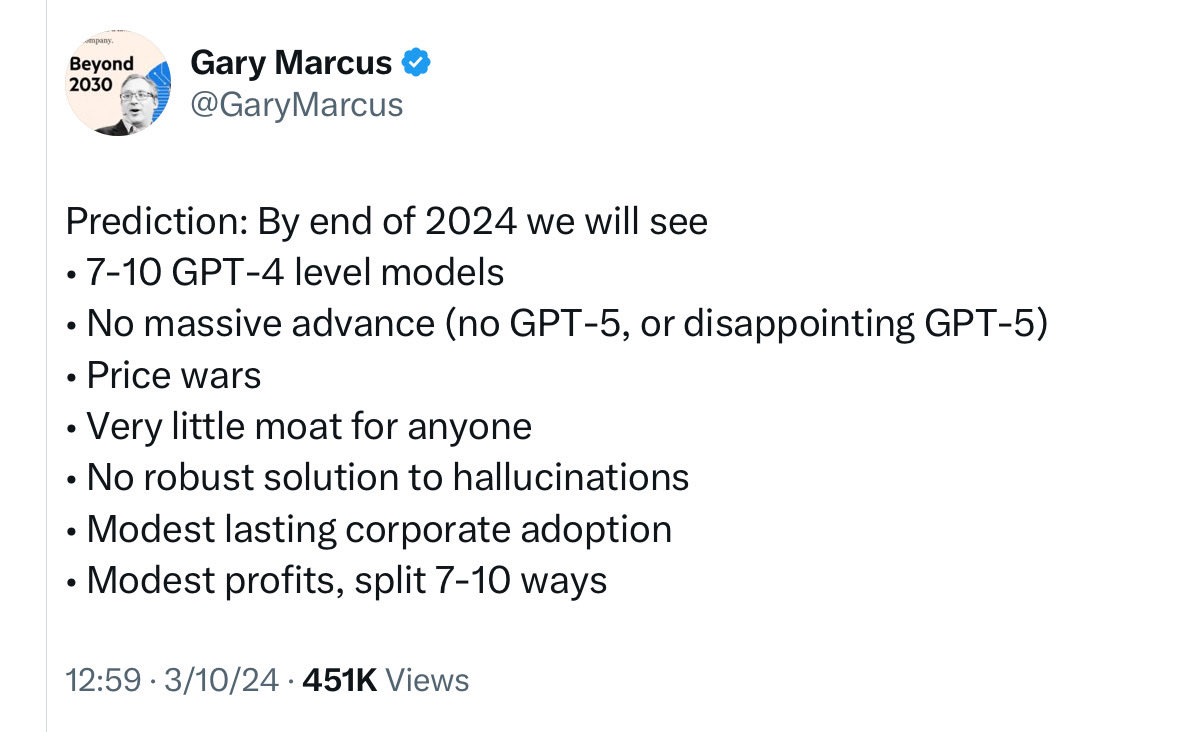

@theworststink First part: integrity, to some degree. Second: yep, as usual I was the first to predict that, over two years ago: https://t.co/TQEiRkFqmN

Imagine you’ve bought a brand new Lamborghini, only to find that you’ve been driving in 1st & 2nd gear with the parking brake on the entire time. That’s where most #AI interactions are today. But we can fix this. https://t.co/EmqaIJ2E6H https://t.co/2ERzbdmhm1

This is literally the most useful app in a year. Thanks @Scobleizer! You are the only one who has the level of X account organization to make this work. https://t.co/sewCit58ME

I love this effect! Grok Imagine https://t.co/aXC5XwHo5t

10% of all US births are foreign anchor babies https://t.co/7UmZfUuvrU

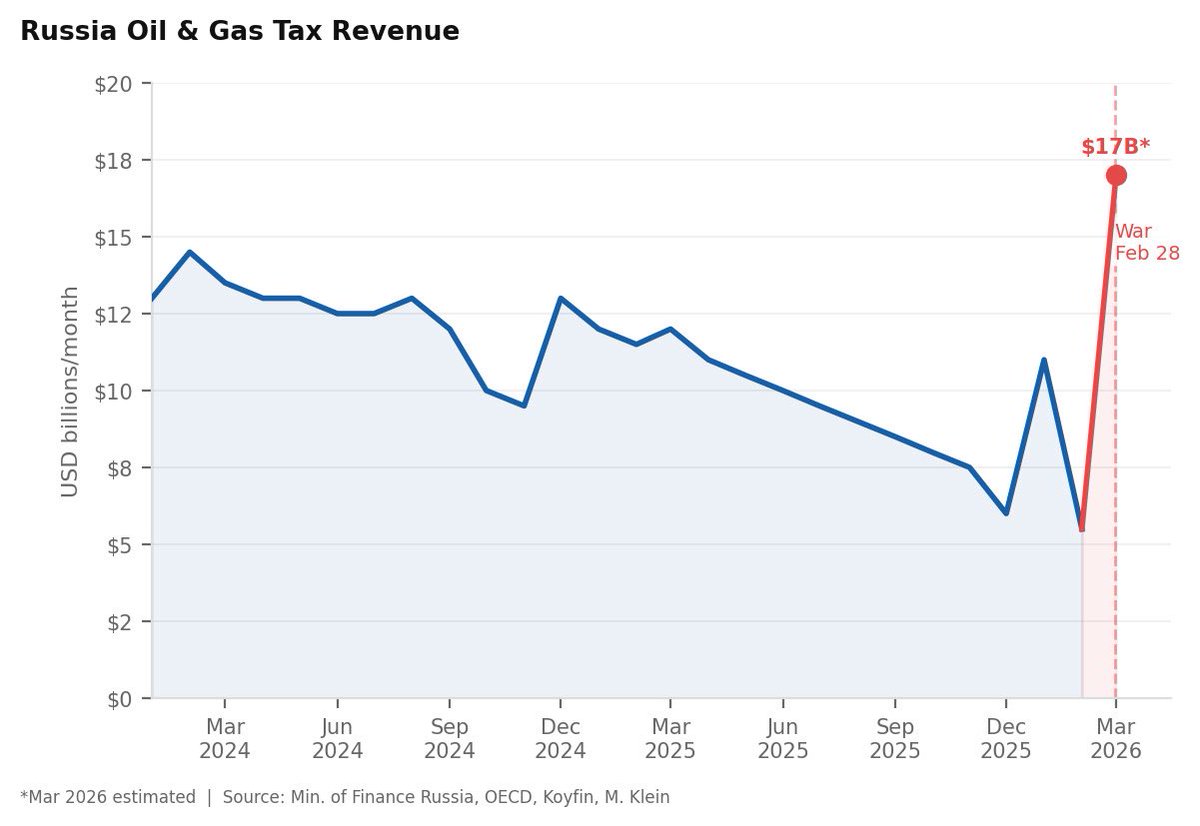

The absurdity of the Iran War is epitomized by the Trump administration’s decision to partially ease sanctions on Iran and Russia, allowing both countries to rake in huge profits. Russia is set to earn three times more per month from its energy resources. @Morning_Joe https://t.co/7x3EIj3RDb

@Scobleizer Everyone I talk to is loving https://t.co/GDUvAnSMkd. It's a truly revolutionary and visionary build, so huge congrats on this insane intelligence breakthrough! If you build a geoeconomics feed, it would be incredibly valuable for business and finance leaders who need real-time international strategic intelligence on trade, policy, etc.

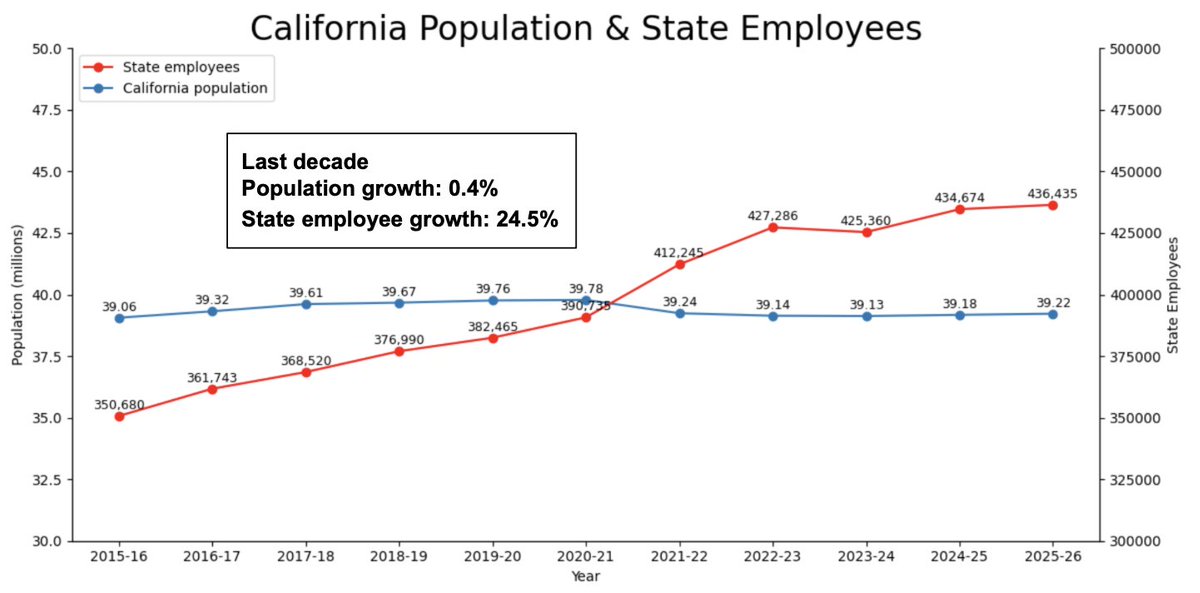

California's population grew 0.4% in the last decade. The number of state employees grew 24.5%. Total state spending grew 48%, inflation adjusted. You have to ask - where did all the money go? https://t.co/rEdJSxBE6W
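Because population barely moved, the post's growth figures can be restated per resident. A minimal sketch, using only the percentages quoted above and assuming they are cumulative changes over the same decade:

```python
def per_capita_growth(total_growth: float, population_growth: float) -> float:
    """Growth per resident, given cumulative growth rates as fractions (0.48 = 48%)."""
    return (1 + total_growth) / (1 + population_growth) - 1


POPULATION = 0.004  # +0.4% over the decade (figure from the post)
EMPLOYEES = 0.245   # +24.5% state employees (figure from the post)
SPENDING = 0.48     # +48% total state spending, inflation adjusted (figure from the post)

print(f"State employees per resident: {per_capita_growth(EMPLOYEES, POPULATION):+.1%}")
print(f"Real spending per resident:   {per_capita_growth(SPENDING, POPULATION):+.1%}")
```

With population nearly flat, the per-resident figures come out close to the headline ones: roughly +24.0% for employees and +47.4% for spending.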

@aryaman2020 @jatin_n0 @voooooogel If it makes you feel better: I also introduced the idea of generating useful steering vectors from contrastive synthetic data in my 2016 paper, with a whole section on augmenting inputs with a low-pass Gaussian filter to derive a steering vector that produces less blurry samples. https://t.co/5eHRe3HZJn
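The post describes building a steering vector from contrastive synthetic pairs: each input alongside a low-pass (Gaussian-blurred) copy of itself. A minimal sketch, assuming a hypothetical linear `encode` (a fixed random projection standing in for a real model's latent space) and the common mean-difference construction; it is an illustration of the idea, not the 2016 paper's actual code:

```python
import numpy as np

rng = np.random.default_rng(0)
SIZE, LATENT = 16, 8

# Hypothetical stand-in for a generative model's latent space:
# a fixed random linear projection of the flattened image.
PROJ = rng.standard_normal((LATENT, SIZE * SIZE)) / SIZE


def encode(img: np.ndarray) -> np.ndarray:
    return PROJ @ img.ravel()


def gaussian_kernel(sigma: float, radius: int) -> np.ndarray:
    x = np.arange(-radius, radius + 1, dtype=float)
    k = np.exp(-(x ** 2) / (2 * sigma ** 2))
    return k / k.sum()  # normalised so a flat image stays flat


def low_pass(img: np.ndarray, sigma: float = 1.5, radius: int = 3) -> np.ndarray:
    """Separable Gaussian blur with edge padding; output has the input's shape."""
    k = gaussian_kernel(sigma, radius)
    padded = np.pad(img, radius, mode="edge")
    # Blur each row, then each column; mode="valid" trims the padding back off.
    rows = np.apply_along_axis(lambda r: np.convolve(r, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, k, mode="valid"), 0, rows)


def steering_vector(images: list, sigma: float = 1.5) -> np.ndarray:
    """Mean latent of the originals minus mean latent of their blurred copies."""
    sharp = np.stack([encode(x) for x in images])
    blurry = np.stack([encode(low_pass(x, sigma)) for x in images])
    return sharp.mean(axis=0) - blurry.mean(axis=0)


images = [rng.random((SIZE, SIZE)) for _ in range(32)]  # synthetic inputs
v = steering_vector(images)

# Steering: nudge a latent along v (alpha > 0) to move it away from "blurry".
alpha = 1.0
z_steered = encode(low_pass(images[0])) + alpha * v
```

Adding a positive multiple of `v` shifts a latent toward the "sharp" side of the contrast; with a real generator one would decode `z_steered` and tune `alpha` empirically.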

You have no experience. You’ve never started a company. You’ve never had a full time job. Nike is going to kill you. You’re a kid. You don’t have technical skills. You shouldn’t build hardware. Apple is going to kill you. You can’t build hardware. You can’t measure heart rate non-invasively. Athletes don’t care about recovery. Under Armour is going to kill you. It won’t be accurate. You don’t listen. You’re an ineffective leader. You can’t recruit great talent. You’re going to have to pay every athlete. You can’t measure sleep non-invasively. It’s too expensive to research. Athletes are a small market. The product costs too much to make. The product costs too much to sell. Your valuation is too high. Consumers aren’t going to want it. Hardware is too hard. You should measure steps. Fitbit is going to kill you. You can’t build a marketing engine. You can’t raise enough money. You need a real CEO. Google is going to kill you. You can’t be a subscription. You can’t build a brand. You can’t do consumer in Boston. Your valuation is too high. You shouldn’t make accessories. You shouldn’t make apparel. Lululemon is going to kill you. You can’t predict Covid. Stay in your niche. You are going to run out of money. You can’t build a health platform. Amazon is going to kill you. You can’t measure blood pressure. You can’t get medical approvals. The market is too small. You don’t understand AI. The market is too competitive. It won’t work internationally. The supply chain is too complicated. You can’t build an AI. You can’t raise enough money. It’s too competitive. Healthcare isn’t going to want it. … Just keep going ✌️

Grok-4.20-Beta 1 just ranked #1 in Medicine & Healthcare on Arena (with style control). The multi-agent version scored #3. xAI literally has two models in the top 3 for medical AI... dominating the leaderboard. And this is not just about rankings: Grok has actually helped people through serious, life-or-death medical situations in the real world. Medicine is one of the hardest AI categories to shine in because it requires the utmost precision, and Grok just crushed it. Grok is designed to actually help humanity... and this proves it.

BREAKING: xAI has released a brand new update for the Grok app. Update your app to v1.3.54 to get the latest improvements. https://t.co/5zGKwsnW8L

Strikes me as a key research direction for people interested in HCI: what should the human-agent relationship look like in order to actually maintain reliable oversight? “"Human in the loop" is necessary, but the current process itself makes the loop stultifying, and encourages the human to take themselves out of the loop.” https://t.co/BQUILbAUZw

@MindsAI_Jack Just go to https://t.co/ggRHii44Ds by @_akhaliq and subscribe to the newsletter

This is exactly what I've been doing with Claude Code. The biggest bottleneck in my ability to use these agents is ensuring they preserve relevant context between sessions. Having the agent output files in .md and .html is not only a nicer way to view outputs than the terminal, but also a good way to preserve context for future sessions. I've also been using Obsidian to view locally generated .md files. The only slight hiccup is that the native harnesses aren't amazing at handling non-plaintext files (.pdf, .pptx, and more); the open-source skills use libraries that aren't optimized for generating readable text from complex-layout docs. We built liteparse for this purpose, to replace pypdf/pymupdf (https://t.co/JNER0mVcB8). I use it as part of my local Claude Code harness.

LLM Knowledge Bases Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating know