Your curated collection of saved posts and media

You can now run three frontier models at once and select your orchestrator model directly inside Perplexity Computer. Model Council automatically runs GPT-5.4, Claude Opus 4.6 and Gemini 3.1 Pro simultaneously. Three frontier models. One workflow. Best answer wins. https://t.co/40rPcXpr6s

Massive SpaceX IPO anticipation. @perplexity_ai Computer is creating a financial model and DCF for SpaceX. https://t.co/mPZrEAUpOH

Perplexity just became the first AI company to truly go head-to-head with Palantir. Using Perplexity Computer (with no local setup and no single-LLM limitation), it was able to build me a World Monitor with real-time data to analyze the world situation, the flight situation, the war situation, and much more, using skills and a combination of LLMs wherever each is best. I'm super impressed @AravSrinivas

Do anything with Perplexity Computer. Even projects like mapping @theallinpod episodes to stock price movements. https://t.co/eCjBmqelXv

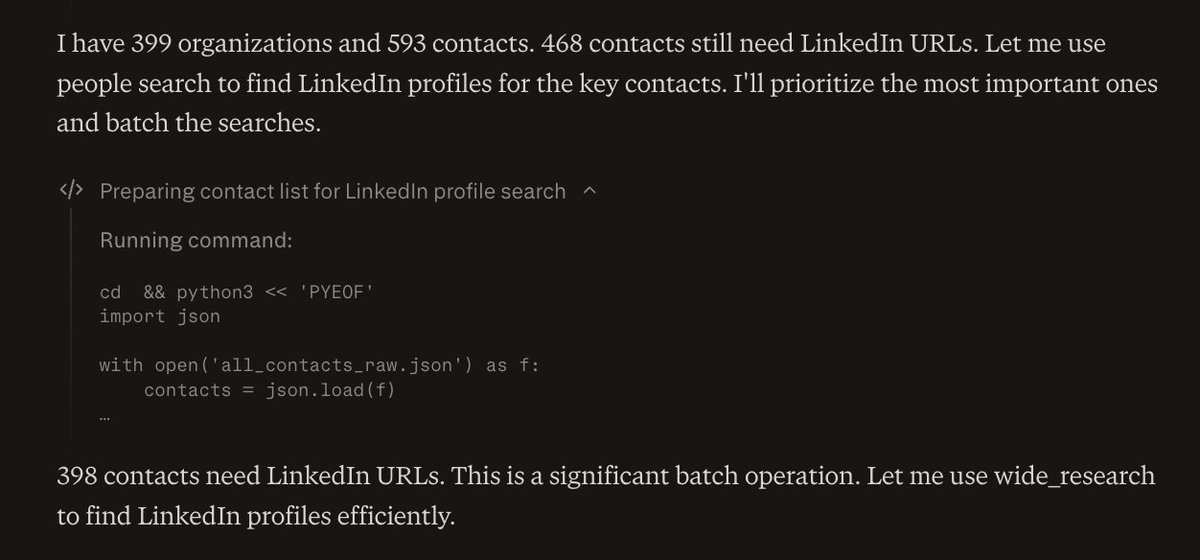

Perplexity Computer is insane. It's researching hundreds of LinkedIn profiles for me in parallel. Claude Code fails because it often gets blocked and runs out of context window. But Perplexity Computer doesn't stop. It's been going for a while. https://t.co/4cvyy37wtu

Claude 3 & GPT-4: "the book Piranesi as a p5js 3d space. do it for me" After a few rounds of revisions, this is what they came up with - Claude on the left, GPT-4 on the right. Given the limits, neat. I think the rising and falling tides from Claude 3 are a cute touch. https://t.co/qYsliPrGTK

Had early access to GPT-5.4 and Pro. They are very good. One fun illustration of progress: this is the same prompt I used with GPT-4 (making a 3D space inspired by Piranesi), now in GPT-5.4 Pro. There were no errors, and it was made in a single prompt plus one follow-up to "make it better." https://t.co/7Vgmc60SKc

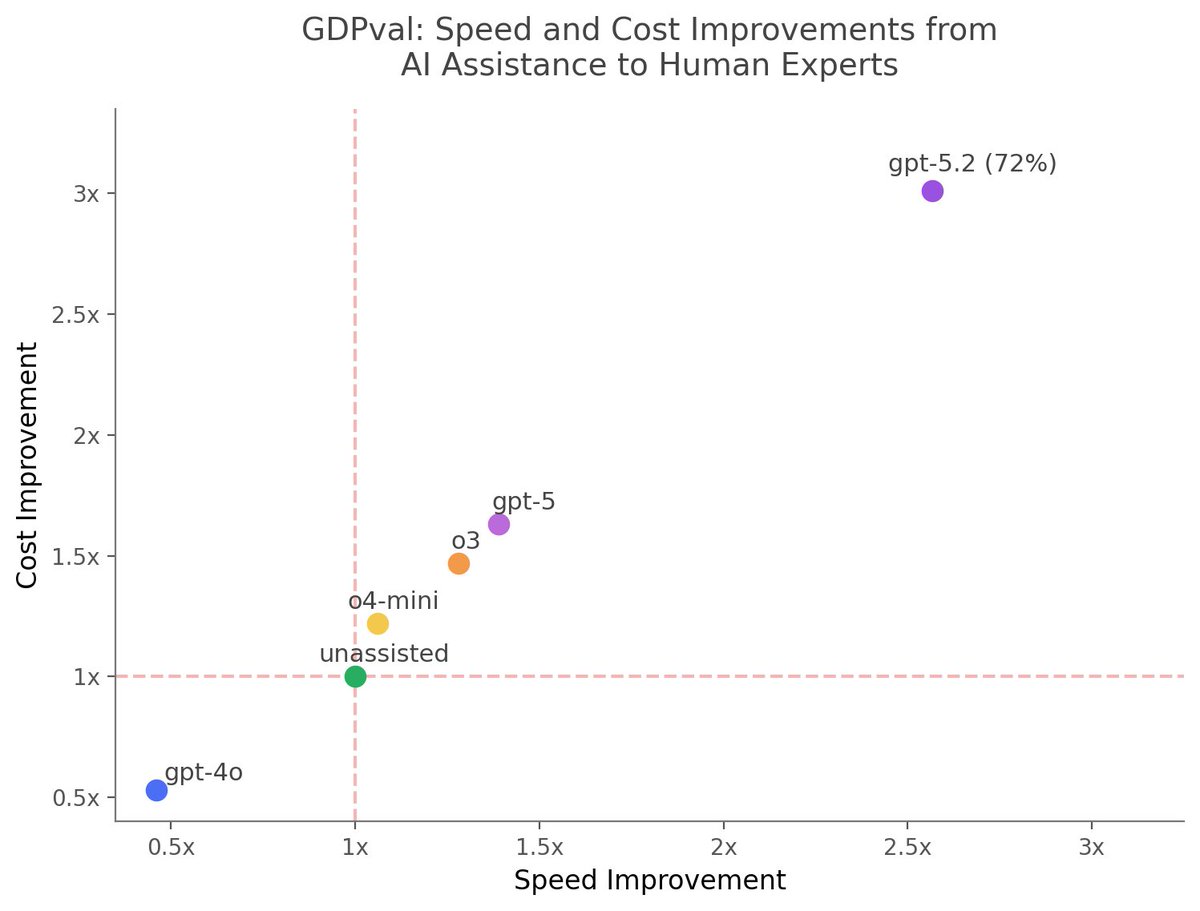

Since OpenAI didn't update Figure 7 from GDPval to reflect the success rate of GPT-5.2 on long-form tasks, I used GPT-5.2 Pro to do so. The chart assumes the process is: delegate long tasks to AI, evaluate the output for an hour, then decide whether to try again or give up and do it yourself. https://t.co/vFtMZrturL

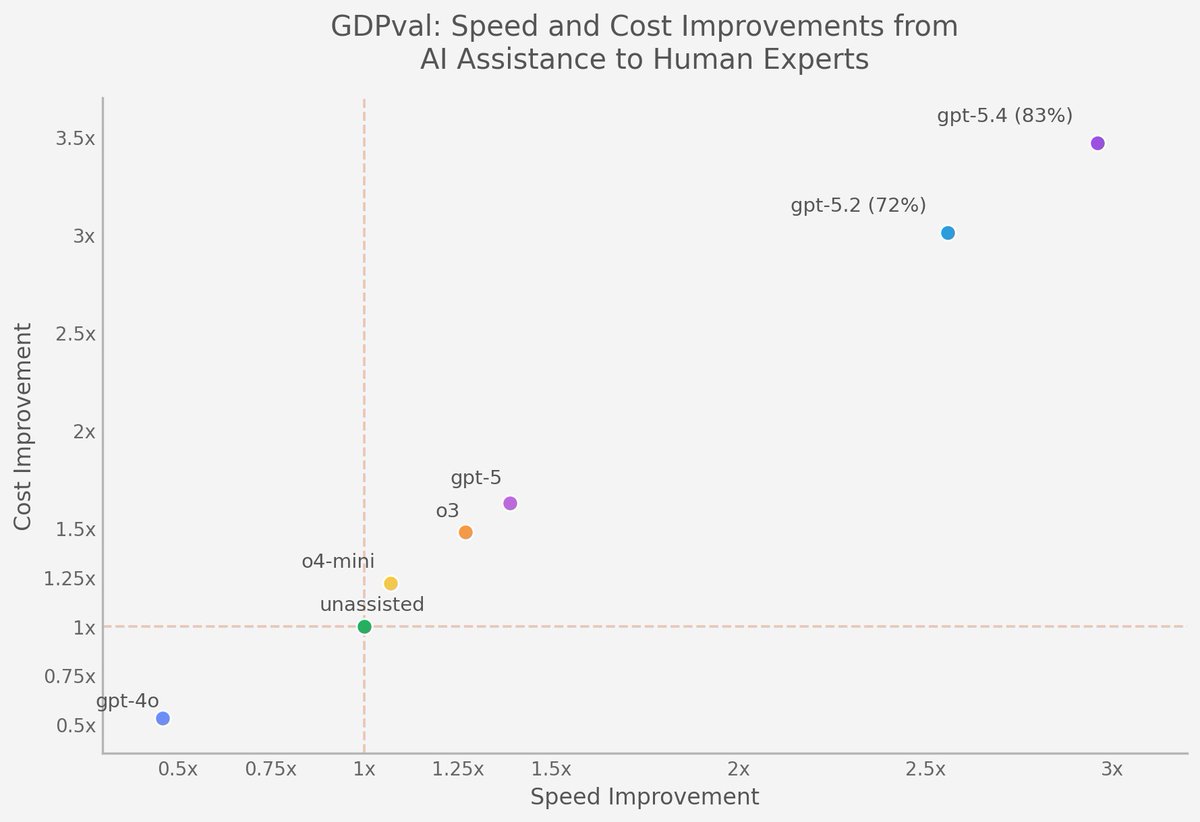

Given the GDPval benchmark results for GPT-5.4, I've updated this chart. The new model ties or beats humans, as judged by other experts, at professional tasks 82% of the time. If you give a 7-hour task to AI, even with failure rates and the need to check results, you'd save 4h 38m on average. https://t.co/U4PQSArQo2

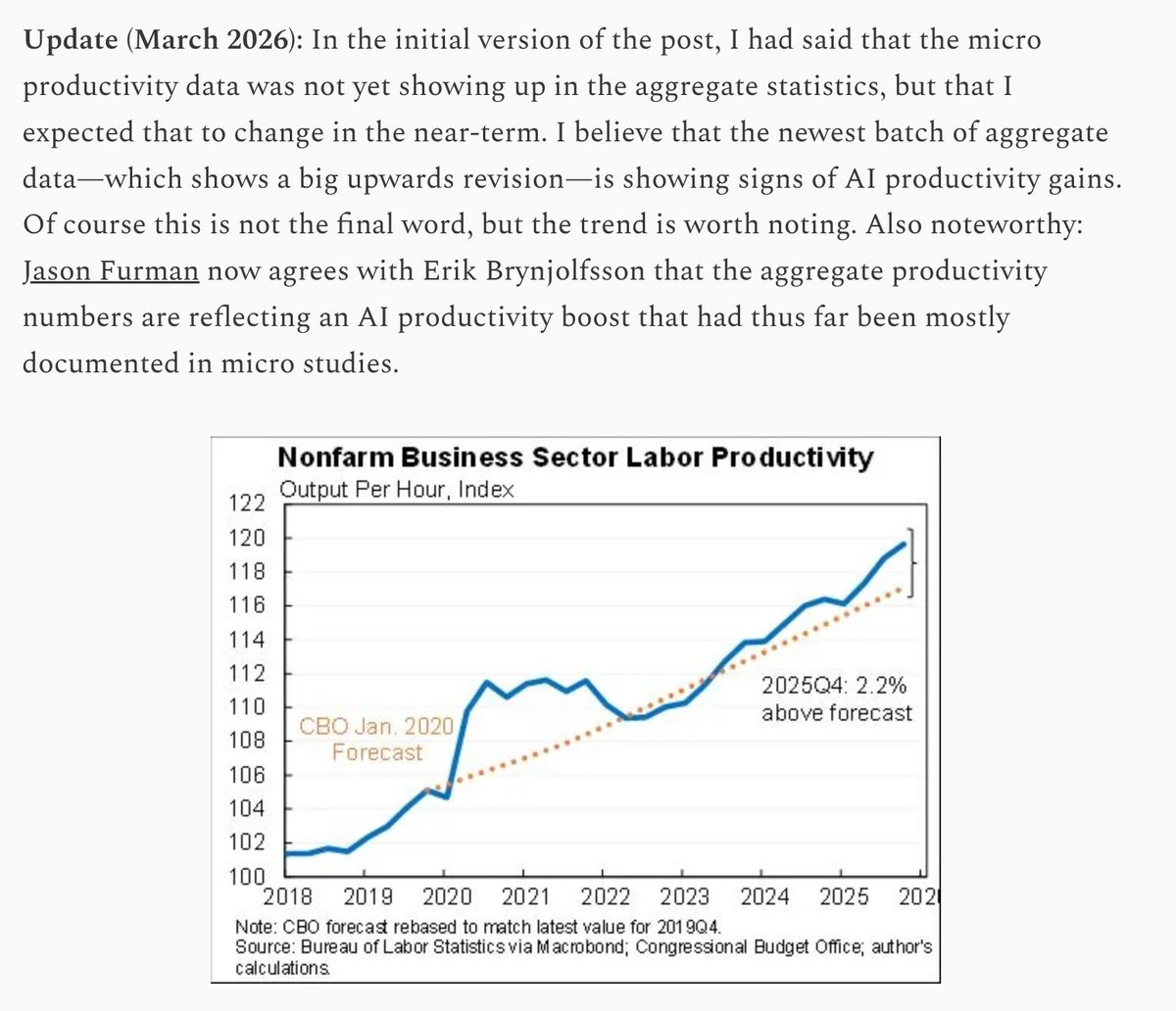

At the end of January I started a "living document" tracking the impact of AI on productivity. I highlighted a disconnect: while micro studies showed a clear boost, the macro evidence was muted. I wrote that I expected this to change in the near term. Apparently "near term" is a bit over a month. The post has been updated with almost a dozen new studies, on benchmarks, new tasks, etc. Importantly, updates to the aggregate data are also showing what looks like AI productivity gains. It is still early days, but worth noting. See post here: https://t.co/AtsqhaHzT9

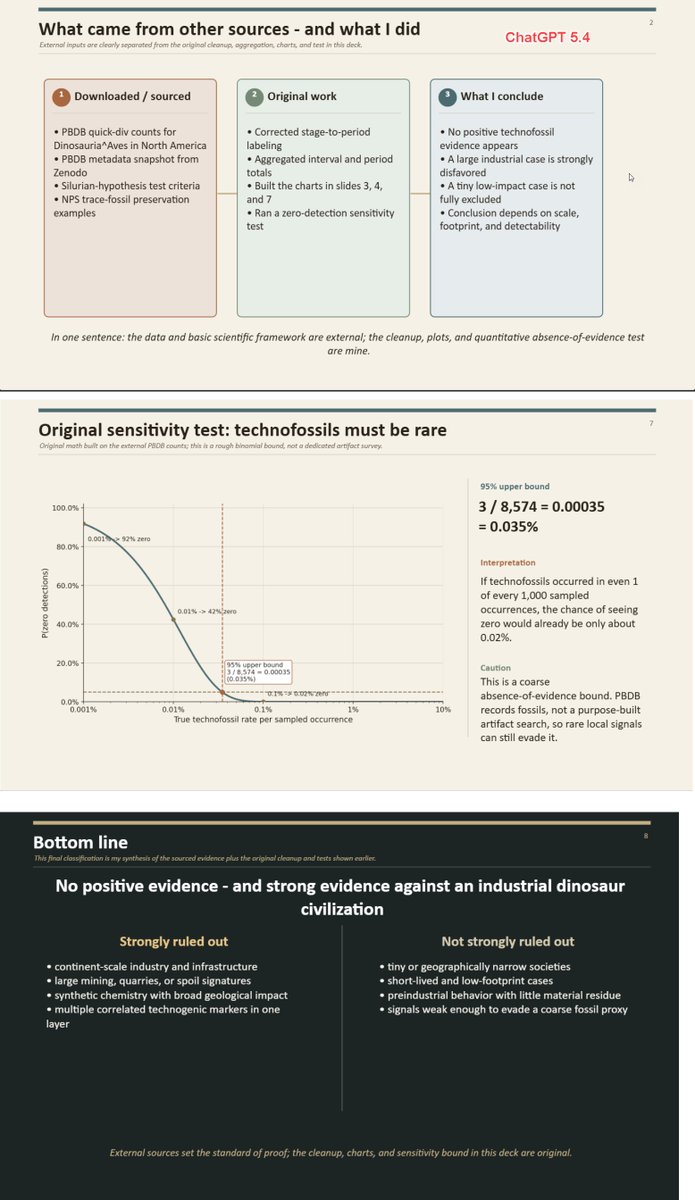

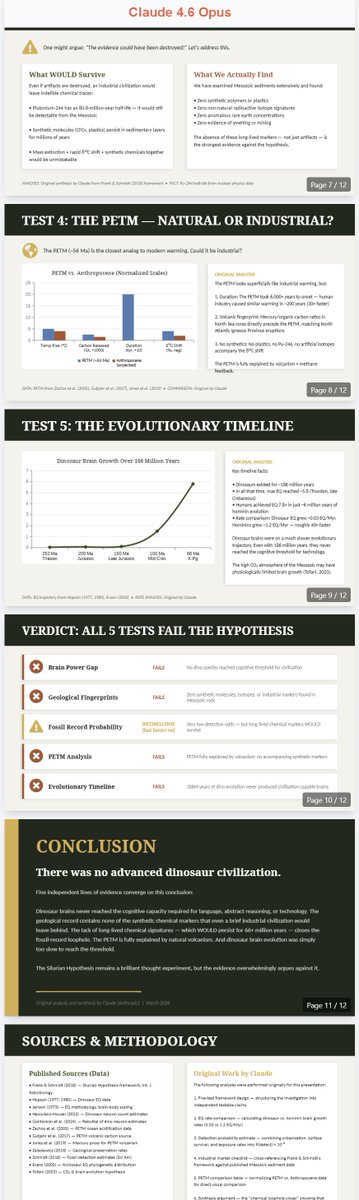

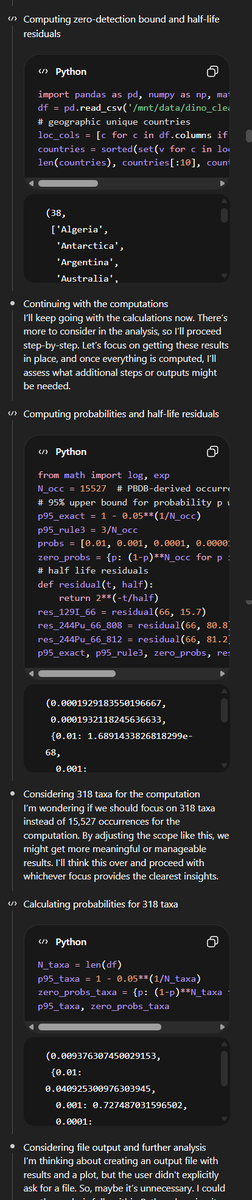

GPT-5.4 Pro, Opus, and Gemini Deep Think: "Prove to me in a PowerPoint that there was no advanced dinosaur civilization by downloading whatever data you think appropriate & running tests" GPT-5.4 and Claude did some original analyses, but someone, build a harness for Deep Think! https://t.co/QMzmAIfFtL

Also, right now OpenAI does the best job in the chatbot interface of actually showing you auditable thinking traces. https://t.co/6Yk8J5ZZps

Governance is not “just process.” It is production infrastructure. On Feb. 4, @reserveprotocol detected an unexpected governance proposal targeting hyUSD, a dormant decentralized token folio (DTF). The proposal aimed to upgrade core contracts to an unverified implementation. A Slack alert surfaced the change quickly, the team investigated, and the community mobilized to vote it down. This case study is a look at what “decentralized control” actually requires in practice: visibility, fast triage, and a workflow that turns onchain events into real-world response. Read more: https://t.co/VIkr73cJjK

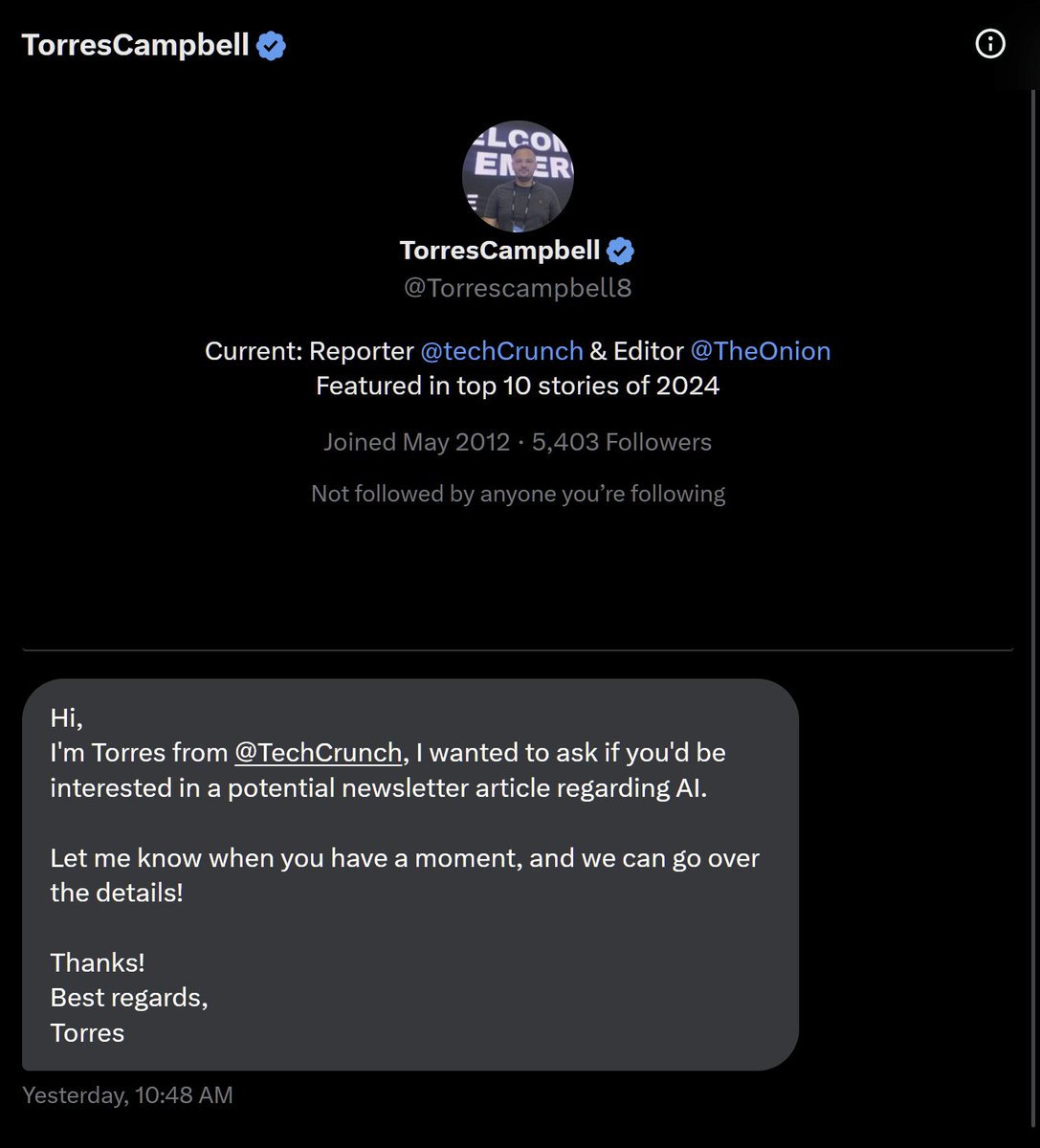

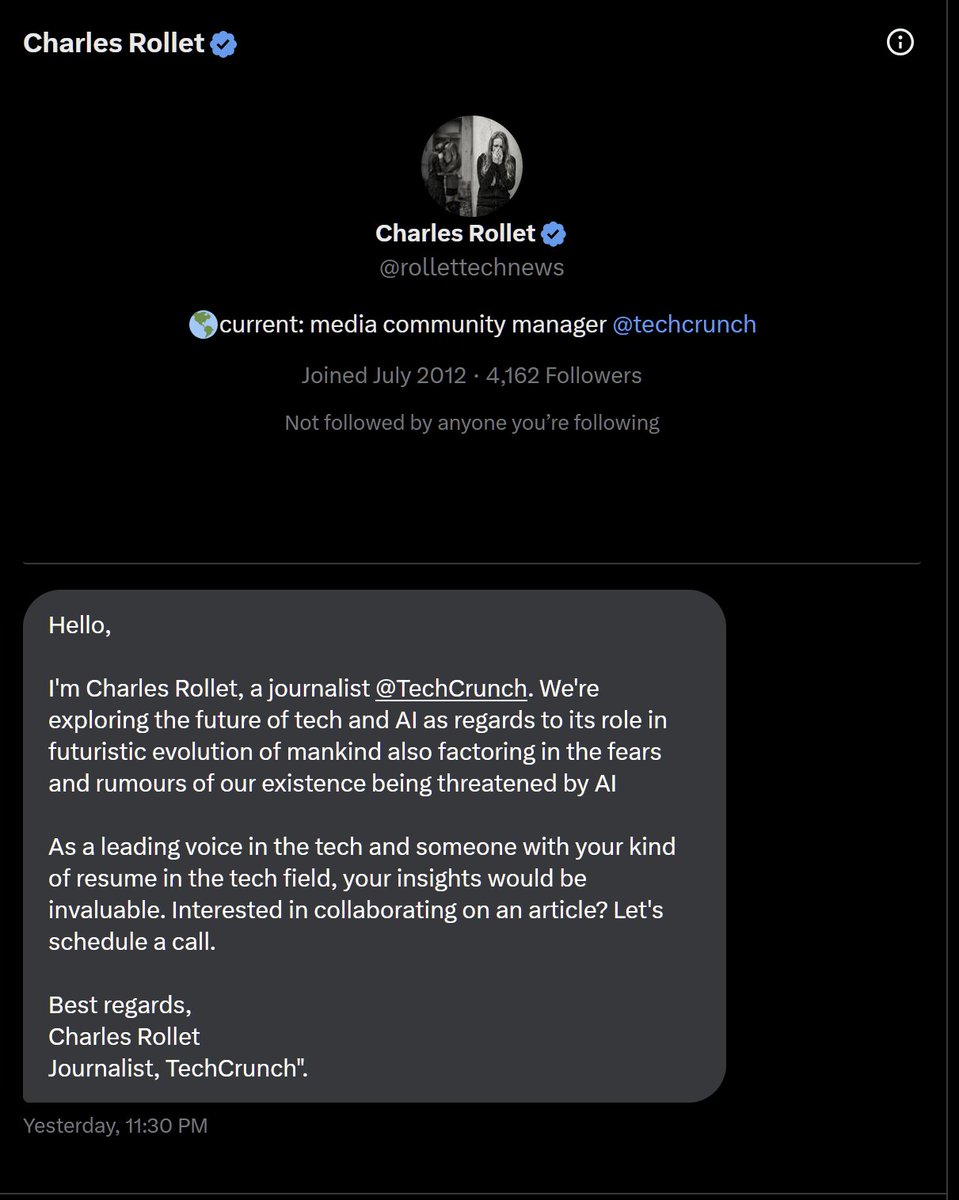

This is an interesting scam. I am getting DMs from people claiming to be TechCrunch journalists who want to work on an article with me about AI. With the first one, I immediately noticed that no one named "Torres Campbell" seems to publish articles at TechCrunch, so it must be fake. They must have realized I caught on, because the next day I got a DM from "Charles Rollet", who I noticed is indeed a TechCrunch journalist. I was about to respond when I visited his TechCrunch profile and noticed that the X profile listed there is different. His actual account is @CharlesRollet1! Very wild scam. Be safe out there! I assume they want me to pay them for an article, but of course no article ever comes out on TechCrunch lol

This month, we’re in SF for @Official_GDC and in San Jose for @NVIDIAGTC with a new live demo of our real-time diffusion world model. If you want to see it running under real user input and tight latency constraints, meet us. https://t.co/QputPCxkyk

when the whole team is on claude code https://t.co/uhyfPPsgXm

"People do not realize that there is only one bridge remaining." To solve disease + accelerate, we need to make disease solvable using compute. Data is the limiting reagent. This is why @precigenetic is essential for the future of biological superintelligence.

https://t.co/xQ0tVdFoV4


GPT-5.4 is released https://t.co/kqy67qLlJf

Two major AI releases this week:
• Qwen3.5: new open-source small models
• GPT-5.4: newest frontier closed model
Most benchmarks compare math and coding. But the real test for frontier AI should be biology and healthcare. That’s where mistakes actually matter.
So our team at @UHN ran them on EURORAD: 207 expert-validated radiology differential diagnosis cases. Results:
• GPT-5.4: 92.2%
• Qwen3.5-27B: 85%
• Gemini 3.1 Pro: ~79%
A 27B open model that runs on a laptop is only 7 points behind the most powerful AI model on earth, and already beating Gemini on this benchmark. That gap is much smaller than people expected. And it matters. For years hospitals faced an impossible tradeoff:
• Frontier models → patient data leaves the hospital
• Local models → not good enough
That tradeoff may finally be ending. Qwen3.5-27B runs fully local. No API. No cloud. No patient data leaving the building. HIPAA / PHIPA compliance becomes architecture, not paperwork.
Interesting detail: 27B and 122B score almost identically here. Scaling bigger didn’t help much.
One caveat: with web-scale training, it’s hard to completely rule out that frontier models like GPT-5.4 may have seen parts of evaluation datasets.
Still, the signal is clear: small models are getting good enough for real clinical AI. And if we want to measure real AI progress, biology and healthcare should be the benchmark.
Huge credit to the team @alifmunim @AlhusainAbdalla @JunMa_AI4Health @Omar_Ibr12 @oliviaamwei

🎙️New episode of "Machine Learning: How Did We Get Here?" Tom Mitchell (@CarnegieMellon) and @ylecun, Executive Chairman of AMI Labs and Professor at NYU, discuss how technological advances and commercial forces shaped AI history. Listen on Spotify: https://t.co/YdWjoVdoVc

Tom's ongoing peek at the personalities and personal stories behind machine learning's history is available wherever you find your podcasts. 🎥Watch on YouTube: https://t.co/czYb2iXB2l ▶️Listen on Apple: https://t.co/z07MFJaTr1

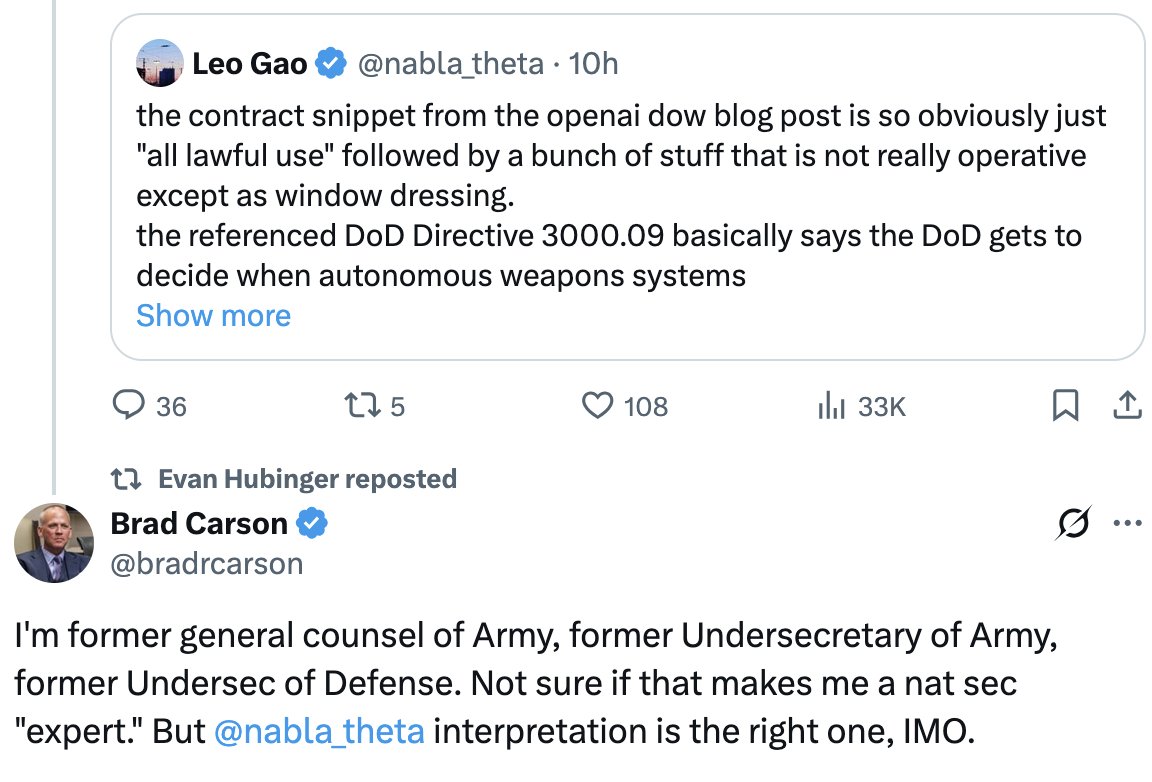

As @bradrcarson explains, the contract language released so far does not restrict the gov from using AI to kill without human oversight. https://t.co/To1RKsQTGg