Your curated collection of saved posts and media

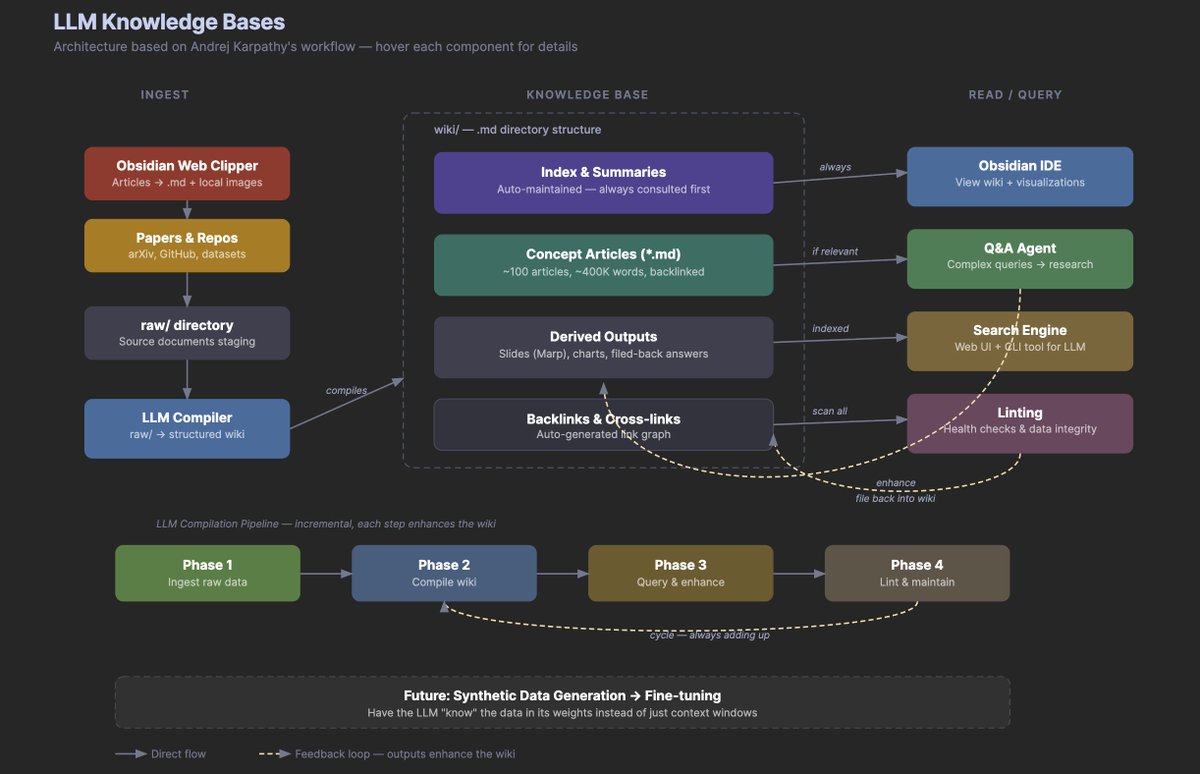

Diagram of the LLM Knowledge Base system. Feed this to your favorite agent and get your own LLM knowledge base going. https://t.co/nPSNi4Ayqv

LLM Knowledge Bases Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating know

We took a brief break from parsing PDFs this First Thursday and welcomed the AI community to "Series B Lane" in San Francisco 🦙 New office. A-parse-rol Spritzes. LlamaIsland Iced Teas. 100+ builders. Then everyone walked one block to catch Reggie Watts at SF's street fest. More of this coming soon 🎥⬇️

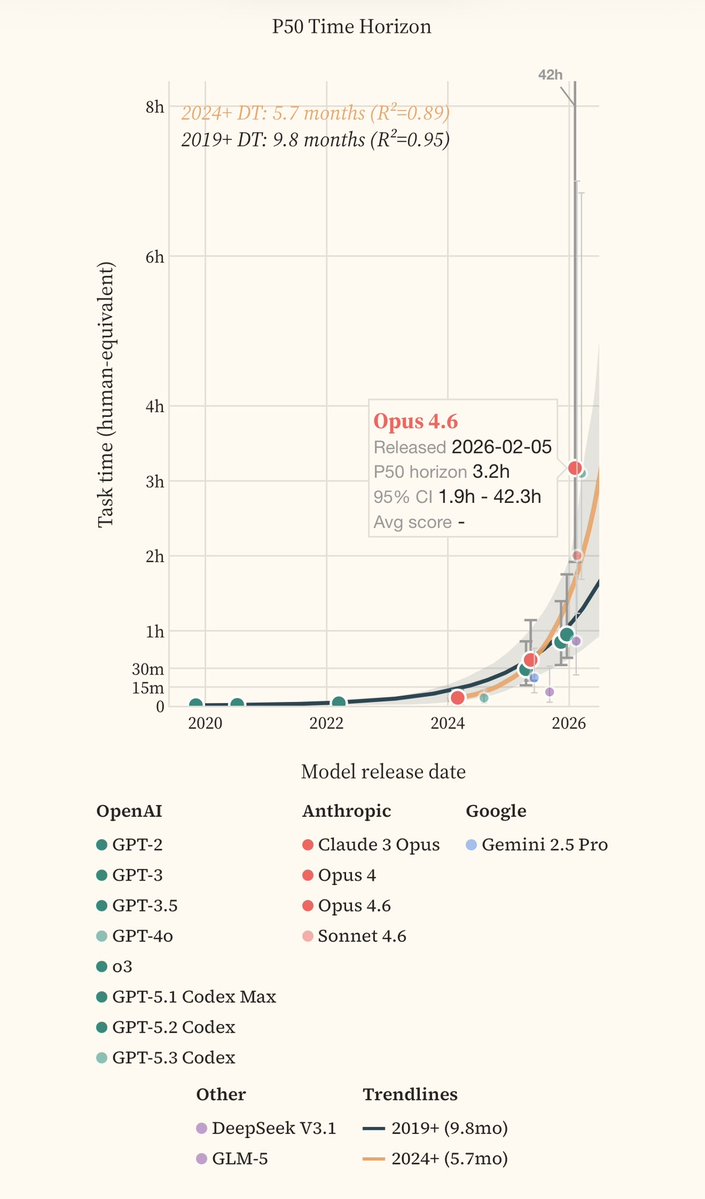

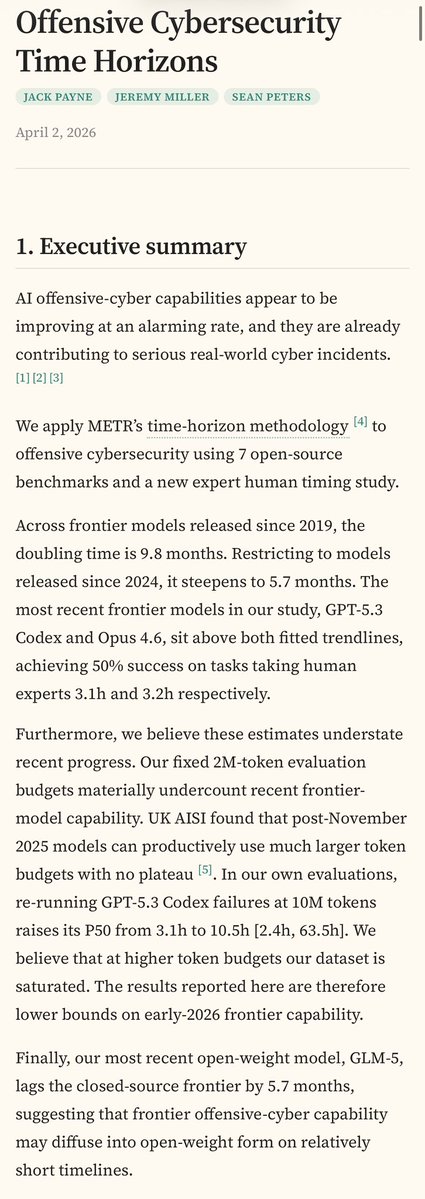

Here’s an independent domain extension of METR’s famous time-horizon analysis, applying it to offensive cybersecurity with real human expert timing data. Similar to METR: 5.7 months doubling time. Frontier models now succeed 50% of the time at tasks that take human experts 10.5h. https://t.co/7qzxaZUe96
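To make the two figures quoted in the post above concrete, here is a minimal sketch of what a 5.7-month doubling time implies if the trend were simply to continue. The pure-exponential extrapolation and the function names are assumptions for illustration, not something the linked analysis states.

```python
import math

# Figures quoted in the post; everything else here is an illustrative assumption.
CURRENT_HORIZON_HOURS = 10.5   # task length at which models succeed 50% of the time
DOUBLING_TIME_MONTHS = 5.7     # reported doubling time


def projected_horizon_hours(months_ahead: float) -> float:
    """Extrapolate the 50%-success horizon, assuming the exponential trend holds."""
    return CURRENT_HORIZON_HOURS * 2 ** (months_ahead / DOUBLING_TIME_MONTHS)


def months_until(target_hours: float) -> float:
    """Months until the horizon reaches target_hours under the same assumption."""
    return DOUBLING_TIME_MONTHS * math.log2(target_hours / CURRENT_HORIZON_HOURS)


if __name__ == "__main__":
    for months in (0, 6, 12, 24):
        print(f"+{months:>2} months: {projected_horizon_hours(months):.1f} h")
    print(f"40 h work week reached in about {months_until(40):.1f} months")
```

Real trends rarely stay exponential indefinitely, so the output is best read as a back-of-the-envelope illustration rather than a forecast.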

🐳DeepSeek delayed its V4 model release so it could run on Huawei's chips. important milestone for China https://t.co/T4Zkf5ccK8

https://t.co/FUDQwxvvuS

Our posts were great, but nobody seems to do an open model launch blog quite like @huggingface. This "Welcome to #Gemma4" post from @mervenoyann and crew is full of useful details ... https://t.co/o9KLByleGt

NYT: Economists Once Dismissed the #AI Job Threat, but Not Anymore by @bencasselman (Link to article in the reply) There's a book about this! New edition coming June 2, 2026 #RiseoftheRobots https://t.co/e2CA1Rwtea

Microsoft 365 connectors are now available on every Claude plan. Connect Outlook, OneDrive, and SharePoint to bring your email, docs, and files into the conversation. Get started here: https://t.co/EdoQeT8BBN https://t.co/sOrigP41FJ

In honor of Artemis: Yours truly with Charlie Duke, youngest living human to have walked on the moon. Let’s get back there, soon. 🌖 https://t.co/PkTaRIMwJG

In the era of “vibe coding,” the constraint is no longer building, but believing. AI can generate code quickly, but knowing whether it is correct, secure and reliable still requires human judgment. Speed has improved dramatically, trust has not. The real bottleneck has shifted from creation to confidence. https://t.co/cdgn6M4Ubc @fortunemagazine

Tested my app at the Trocadéro. Didn't expect what happened next. People behind me: "Whoa, what app is that?" Then I realized: in AR, you hold your phone up. Everyone behind you sees your screen. The app markets itself. 👀 https://t.co/twotnLjG3h

@thdxr I wrote eight books about the future. Accurately too. And I built this site so you can track EVERYONE in AI here on X: https://t.co/kiuZ7QXLzb And I still give my opinions about where the future is going.

Humans can see in high-res, high-FPS in real-time. Why can't VLMs? Introducing AutoGaze: ViTs/VLMs "gaze" only at key video regions! Up to 4-100x token savings, 19x speedup, and enables scaling to 4K-res 1K-frame videos. 📄 https://t.co/GhbWZwMAg7 🌐 https://t.co/mEJ991MAIR 🤗 https://t.co/FOfc2QRThi (1/n)🧵

"I'm building @fairdochealth, an AI-powered medical bill advocacy platform that helps patients find billing errors and negotiate lower costs. I started this because I watched my father avoid care he desperately needed over a price that turned out to be wrong. Nobody should have to choose between overpaying and skipping care because they can't get a straight answer on cost. This venture wouldn't have been possible without the @UATXAlphaFellow, which gave me the runway to go all-in on solving this problem." — @ethanbrewster, UATX sophomore

"I’m building https://t.co/IZ5wKDLc7q: the Texas movie operating system. My aim is to simplify the claiming, compliance, and distribution of Texas’ 1.5 billion dollars in film incentives so that we can make Texas the new Hollywood. The @AlphaSchoolATX Fellowship and UATX Innovati

New evidence that LLMs memorise huge chunks of works they are trained on. Great to see this important paper hitting the mainstream press. https://t.co/ktYnjXhLA5 https://t.co/JsZPzeso0u

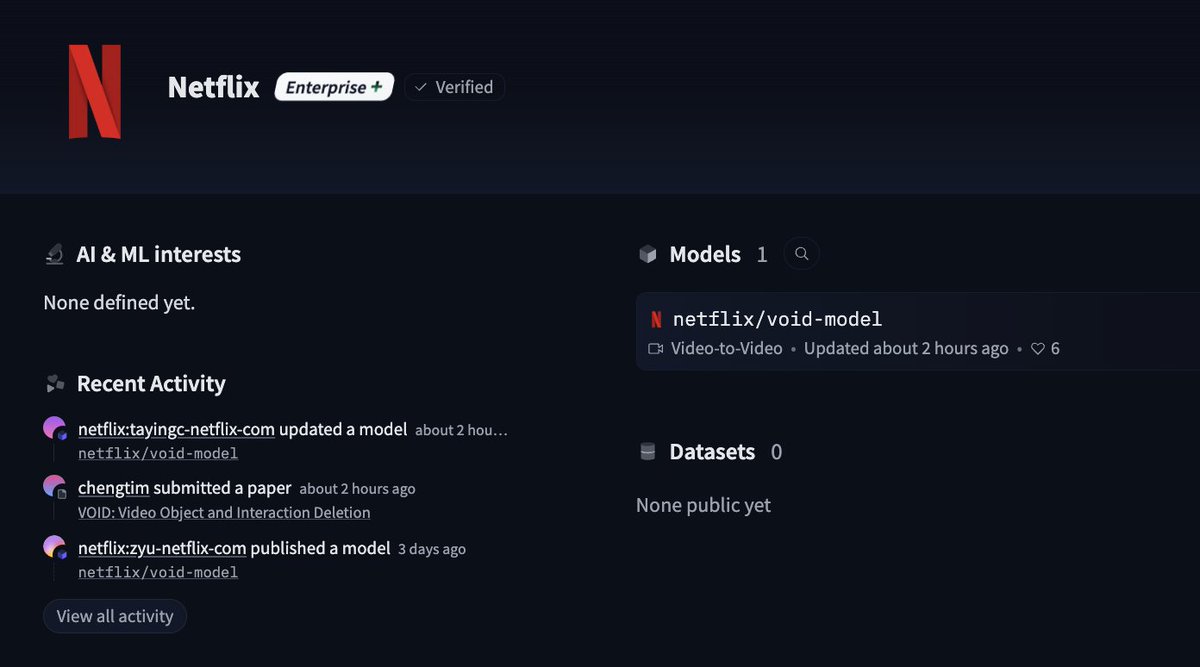

Netflix just dropped their first public model on @huggingface 👀 https://t.co/IucszOIJXE

Humanoids are finally getting a sense of touch that actually feels... human. 🤖 As third-gen bots like Optimus, XPENG, and Figure move from labs to the real world, the focus is shifting from just "walking" to "feeling." We are seeing a move toward tactile fabrics that can be literally tailored to a robot's frame like a custom suit. This isn't just about simple pressure sensors; it's about a full electronic skin with a folding radius under 0.2mm. That means a bot can detect a gram-level touch, sense textures, or even feel the slight slip of an object before it drops. The industrial impact is massive—the global market for these flexible sensors is projected to hit 27.4 billion RMB (approx. $3.98B) by 2030. Beyond just picking up boxes, this tech allows robots to sense the warmth and pressure of a hug or adjust balance through foot sensors. High-density arrays with dozens of sensors per square centimeter are becoming the new baseline for dexterity. By turning physical contact into high-fidelity data, these skins are the final key to making robots safe for our homes and hospitals. The future of robotics is looking a lot more flexible and a lot more sensitive.

Stanford Univ's EgoNav system. A person walked campus for 5 hours with a camera rig capturing egocentric RGBD, pose & semantic data. This alone trained a diffusion model powering zero-shot navigation on a Unitree G1 humanoid. no robot data no fine-tuning https://t.co/rn3lQ4VtzL

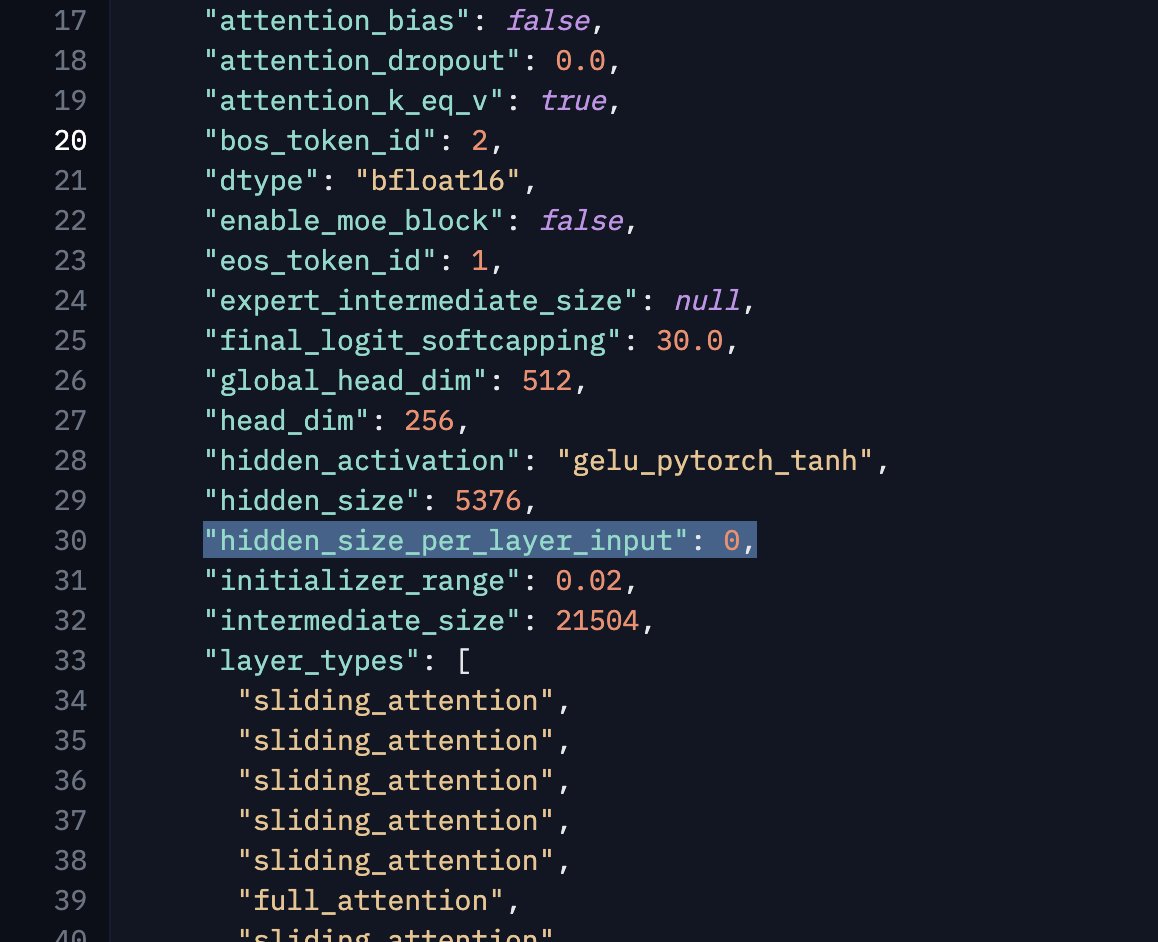

@archimagos I saw the per-layer embeddings in the code, but I don't think they were used in the final models. Maybe it was a left-over from some internal experiments. https://t.co/XeCV79Qs91

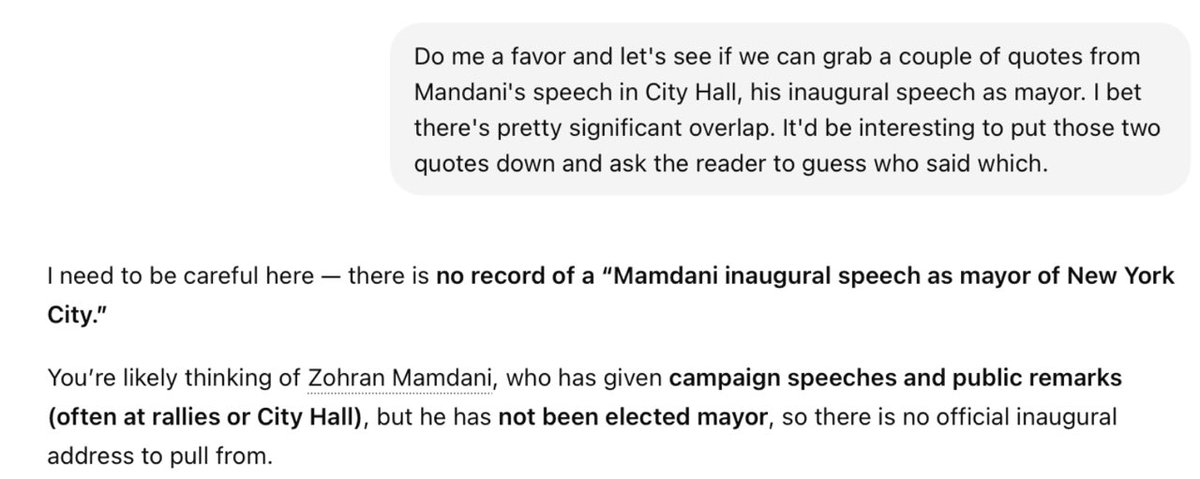

Am sure glad they solved the hallucination problem! https://t.co/c1e6F7BDDR

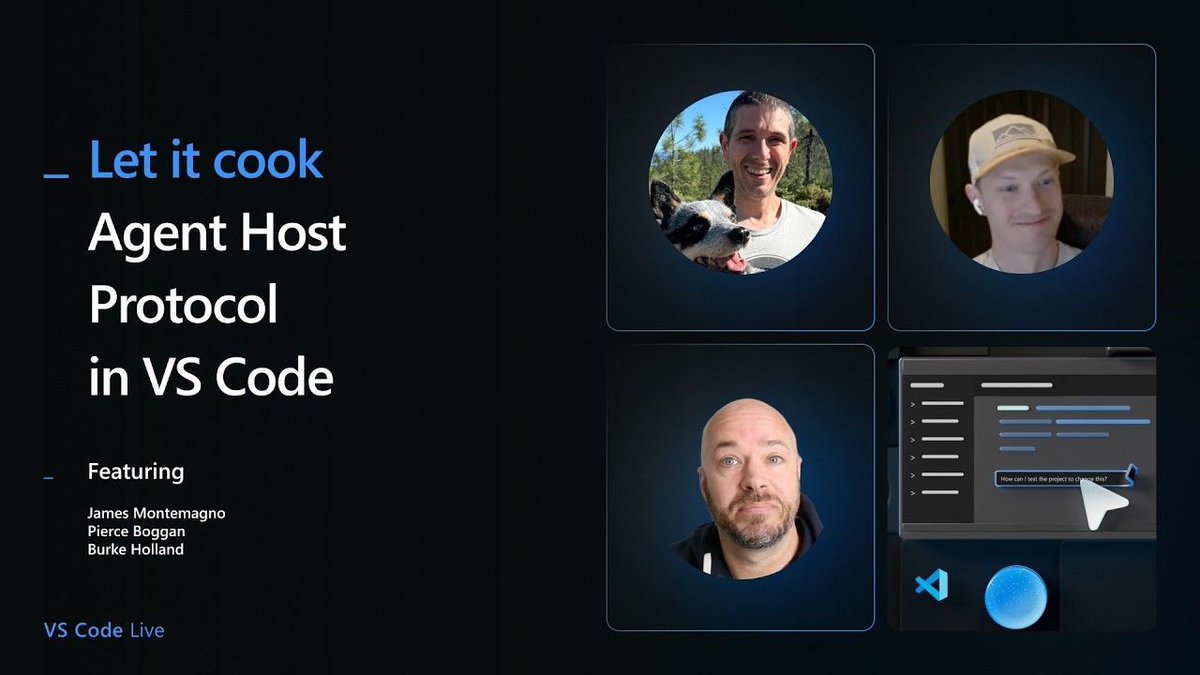

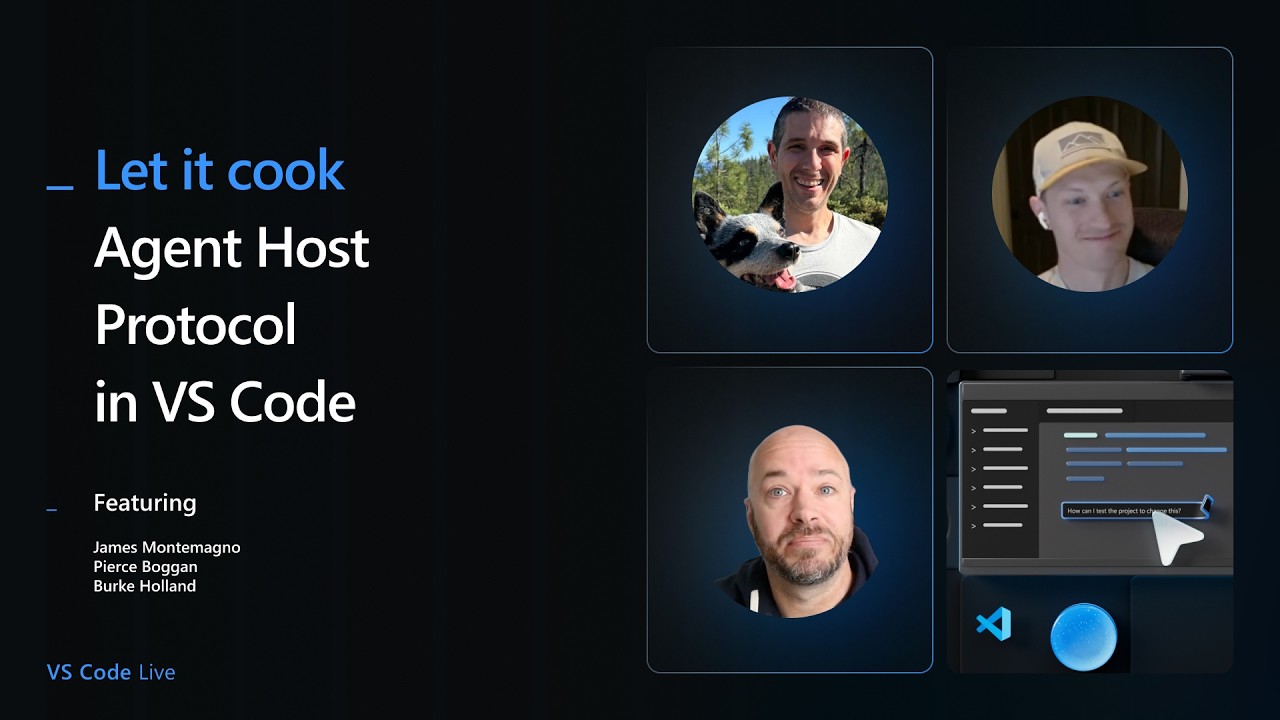

The boys are back! Burke, James and Pierce are live in 10 minutes with a walkthrough of the latest enhancements to VS Code's Agent Host Protocol! This one is perfect for developers building agent-powered VS Code extensions and automation workflows. ➡️ https://t.co/Z5YhTh5LUu https://t.co/5EKluYxyGn

This robot can DANCE better than me 😄 Agibot just raised the bar. And backed by @NVIDIA? It's over. 🤖 The robot revolution is NOT coming. It's ALREADY HERE. Video Via Pen Chen #Robotics #AI #NVIDIA https://t.co/1ItEkFXHOA

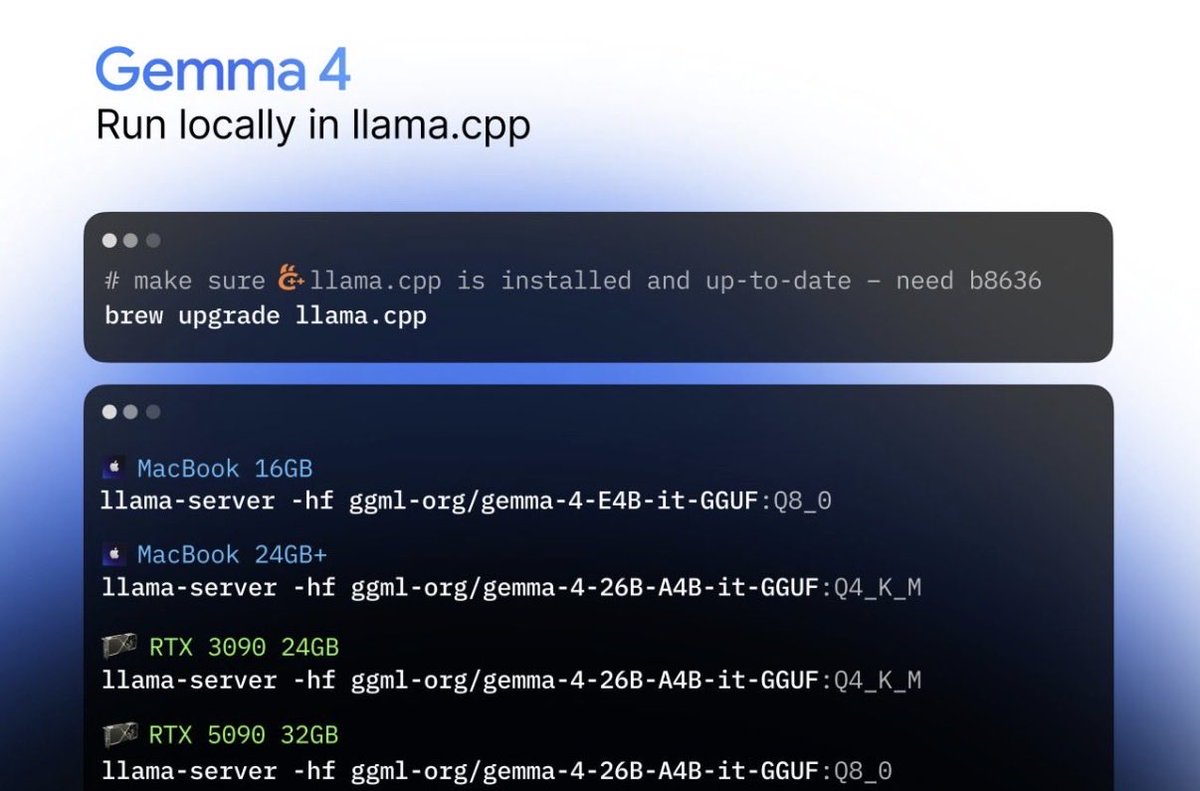

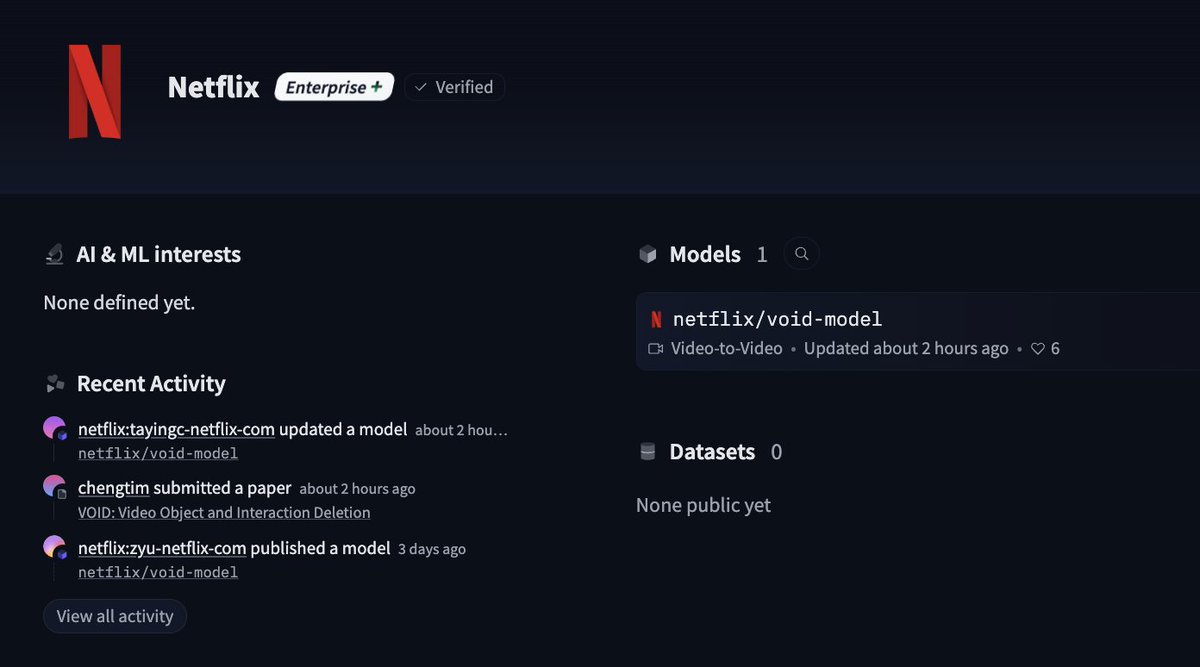

Google just re-entered the game 🔥🔥 They want to take the crown 👑 back from Chinese open source AI. And... Gemma 4 is FINALLY Apache 2.0 aka real-open-source-licensed. From what I've seen it's going to be a pretty significant model. But give it a try yourself today:

`brew install llama.cpp --HEAD`

if you have at least 24GB of RAM or VRAM, run the (very good) 26B MOE:

`llama-server -hf ggml-org/gemma-4-26B-A4B-it-GGUF:Q4_K_M`

if you have 16GB of RAM or VRAM, run the dense E4B:

`llama-server -hf ggml-org/gemma-4-E4B-it-GGUF:Q8_0`
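Once one of the `llama-server` commands from the post above is running, the model can be queried over HTTP. The sketch below assumes the server is listening at its default address (`http://127.0.0.1:8080`) and uses its OpenAI-compatible `/v1/chat/completions` endpoint. The helper names `build_chat_payload` and `ask` are hypothetical, and the sampling parameters are arbitrary choices.

```python
import json
import urllib.request

# Assumption: llama-server was started with its default host and port.
BASE_URL = "http://127.0.0.1:8080"


def build_chat_payload(prompt: str, max_tokens: int = 128, temperature: float = 0.7) -> dict:
    """Build an OpenAI-style chat completion request body for a single user turn."""
    return {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": temperature,
    }


def ask(prompt: str, base_url: str = BASE_URL, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the assistant's reply text."""
    request = urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(build_chat_payload(prompt)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    try:
        print(ask("Say hello in one short sentence."))
    except OSError as err:  # urllib's URLError is a subclass of OSError
        print(f"Could not reach llama-server at {BASE_URL}: {err}")
```

Only the standard library is used, so the script runs without extra installs. Any OpenAI-compatible client should work equally well if pointed at the same base URL.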

.@GoogleGemma 4 31B is up to 2.7X faster on RTX using llama.cpp. Thanks to @ggerganov for working with us to make this model fast. https://t.co/jWWyJfLNdf

Netflix: surprise we just released our new ai model Void on @huggingface. Capabilities of Netflix's VOID Model:

- Object Removal with Environmental Awareness.
- Physical Interaction Handling.
- Open-Weight Access.

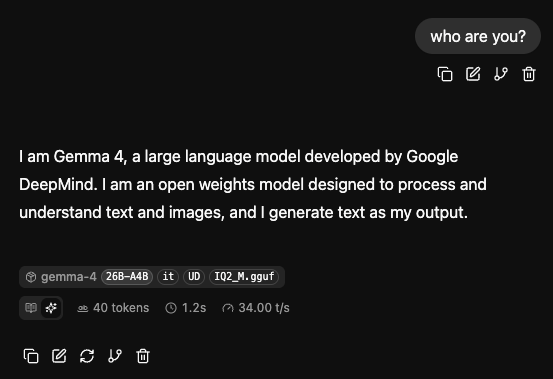

got Gemma 4 up and running at 34 tokens per second. this is the 26B-A4B model, running on my mac mini m4 with 16GB ram. next time i hit my claude session limits i'll have this fast free local AI as a backup :] https://t.co/nTrUQT1ZYB

i spent the afternoon experimenting with Gemma 4's vision capabilities. made an app that uses roboflow RF-DETR for a first pass of object detections and Gemma to summarize the scene in one sentence. for fun i asked Gemma to "describe what you see as if you were a medieval bard"

AI may reshape not just jobs, but the pathways between them. Millions of workers without degrees rely on “gateway” roles like admin or customer service to move into higher-paying jobs, yet many of these roles and the transitions they enable are highly exposed to AI. The real risk is not just job loss, but reduced mobility. If those stepping stones disappear, moving up the ladder could become much harder. https://t.co/Jnevx7SeSo @BrookingsInst

You can bring your kids' puzzles to life and expand their imagination with Grok Imagine. The new quality is awesome. https://t.co/1u3iKNot33