Your curated collection of saved posts and media

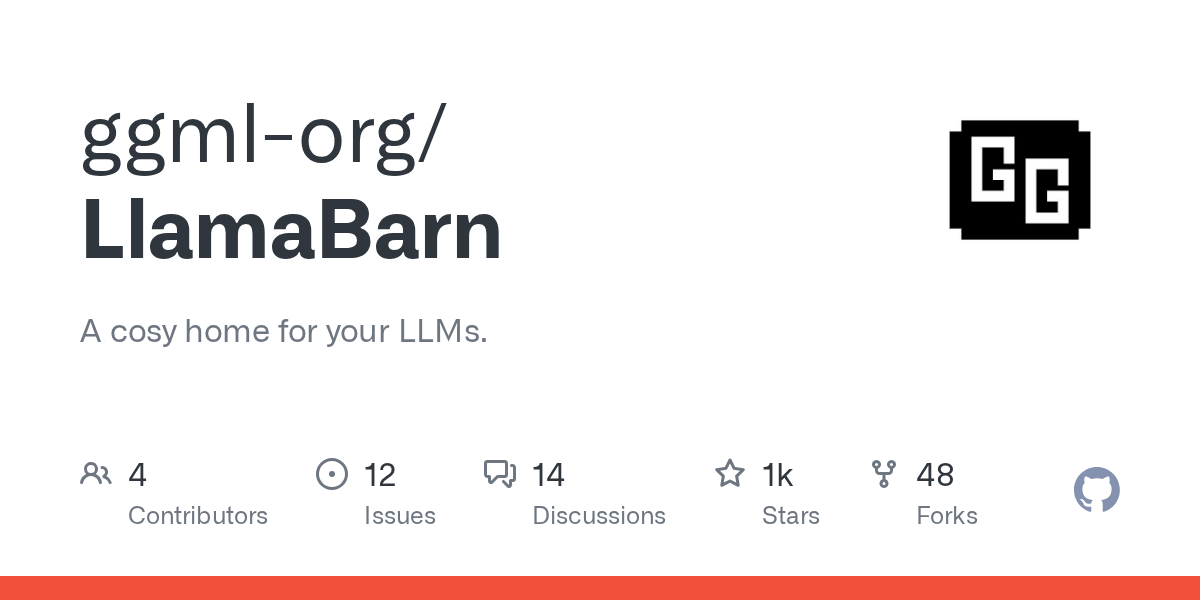

With the recent HF cache integration, all models that you have downloaded with llama.cpp are automatically available inside LlamaBarn too (and vice versa) https://t.co/vNhCS0XF5X

More info at: https://t.co/f5zVgNyf7X

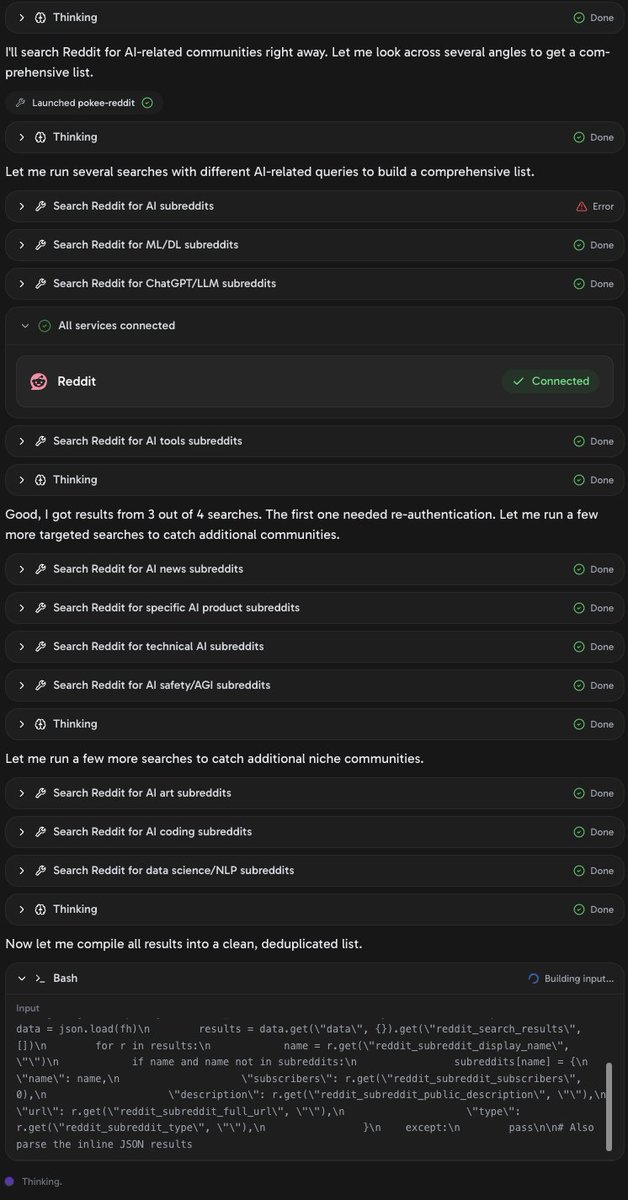

Building on PokeeClaw to prepare for Saturday's hackathon

I'm a judge on tomorrow's PokeeClaw Hackathon @Pokee_AI, so I'm building a few things on it to prepare.

First step? Can I sign in? Instant. This isn't the pain that setting up a Mac mini with OpenClaw represents.

Second, can I do something useful? I had it make me a list of all the AI communities on Reddit, since I want to build a version of my https://t.co/zleMXMaSi9 site, which watches the whole AI community here on X and finds interesting news, papers, models, and lots of other posts. It took a couple of minutes. A couple more minutes later, I had a custom news feed of interesting AI posts from Reddit.

What is PokeeClaw? It isn't a chatbot. PokeeClaw is a zero-setup, enterprise-secure AI agent that works 24/7 across your apps and the web to plan, execute, and automate real work, without fragile local setups or Mac minis.

PokeeClaw works like OpenClaw, but with stronger security, 70% lower token usage, and zero setup. It runs in a secure sandbox architecture with isolated environments, approval workflows, role-based access control, and audit trails built in, giving teams an enterprise-ready AI agent that can execute real work across apps and the web.

If it's this easy to build a web page with interesting AI content on it (less than 10 minutes of work), I'm expecting to see some amazing things on Saturday. Try it yourself at https://t.co/YRNXMyP06F

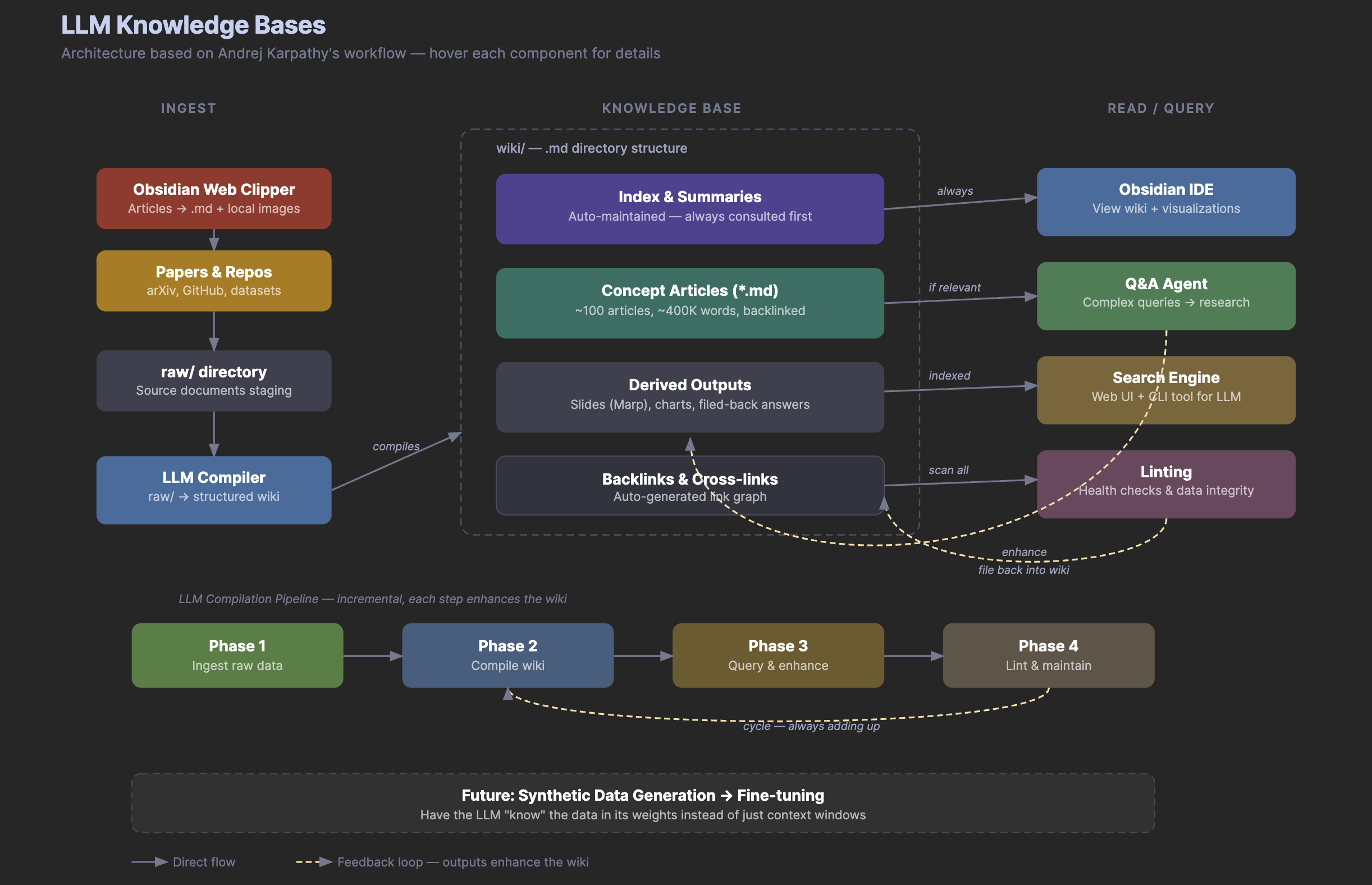

Bonus: MD version and interactive diagram here: https://t.co/fWEMXiOB9w Have fun! https://t.co/R7un0SCrsZ

DataFlex: A Unified Framework for Data-Centric Dynamic Training of Large Language Models paper: https://t.co/N6JCavfpMt https://t.co/jQvusdm4qO

It’s the 11th year and counting! Teaching the first lecture of @cs231n every year has been a highlight of my spring seasons. As usual, I asked students which departments or schools they come from at @Stanford. Increasingly, students raise their hands to indicate that they come from all seven schools on campus, from @StanfordEng to @StanfordMed @StanfordHumSci @StanfordGSB @StanfordLaw @StanfordEd @stanforddoerr. AI is truly a horizontal technology that excites students across all backgrounds and disciplines!🤩

There's a version of AI infra where switching hardware vendors doesn't mean a multi-quarter rewrite. Modular built toward that from day one. Our VP of engineering, Mostafa Hagog, will be at Beyond Summit to show what it looks like: 4.1x image gen speedup, 99% lower cost per image than Nano Banana, and NVIDIA and AMD support from the same codebase👇 https://t.co/n1lmpgY7vF

BizGenEval: A Systematic Benchmark for Commercial Visual Content Generation paper: https://t.co/NgeT8KMMEd https://t.co/YuuEBdoFXQ

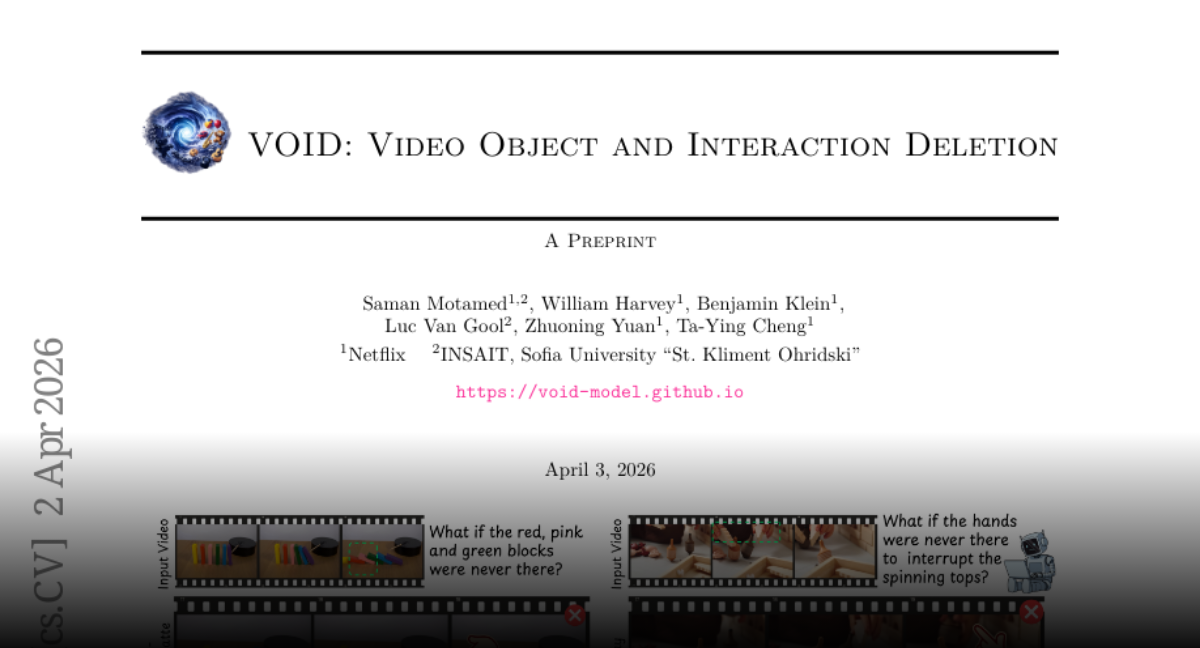

VOID: Video Object and Interaction Deletion paper: https://t.co/zgAZjL7mfL model: https://t.co/hOF11E9Ion app: https://t.co/Wh7TzrKnEb https://t.co/3M0mgEtwo4

The Keras team is doing a community call today at 10am PT. That's in 25 min. The call is open to all -- join to learn about the latest features and what's next, and to ask your questions! Link to join (starts in 25 min): https://t.co/zy5oJ5Lp8u

NEWS: Tesla has just opened its 80,000th Supercharger stall. The 80,000th stall is in France, at the newly expanded 48-stall Supercharger in Saint-Saturnin, which has solar canopies & restrooms. Tesla's global Supercharger network is now delivering 20 GWh of energy every day. https://t.co/JrF0DzbNW9

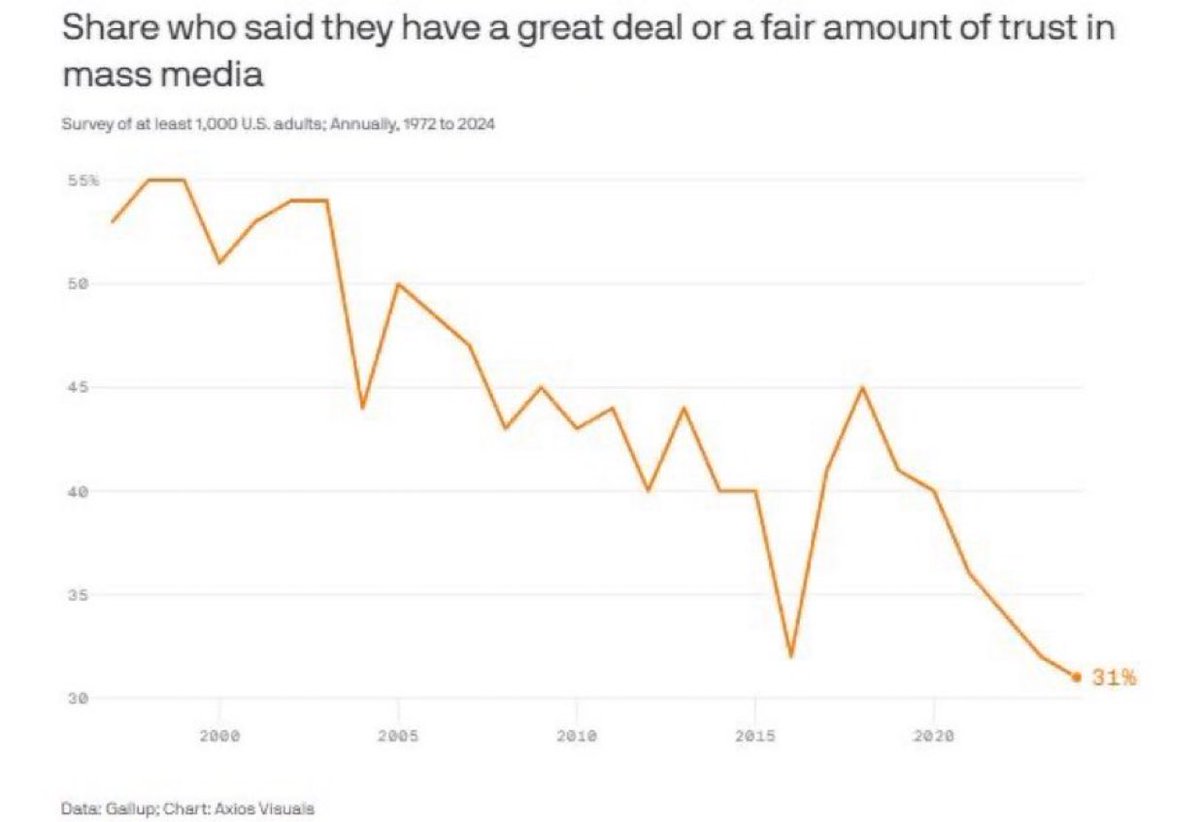

Legacy media has lost the trust of the people. https://t.co/9TQS5VCknH

self-driving sushi https://t.co/v53GyS6MyB

@GaryMarcus @METR_Evals https://t.co/2M0orQhOVy

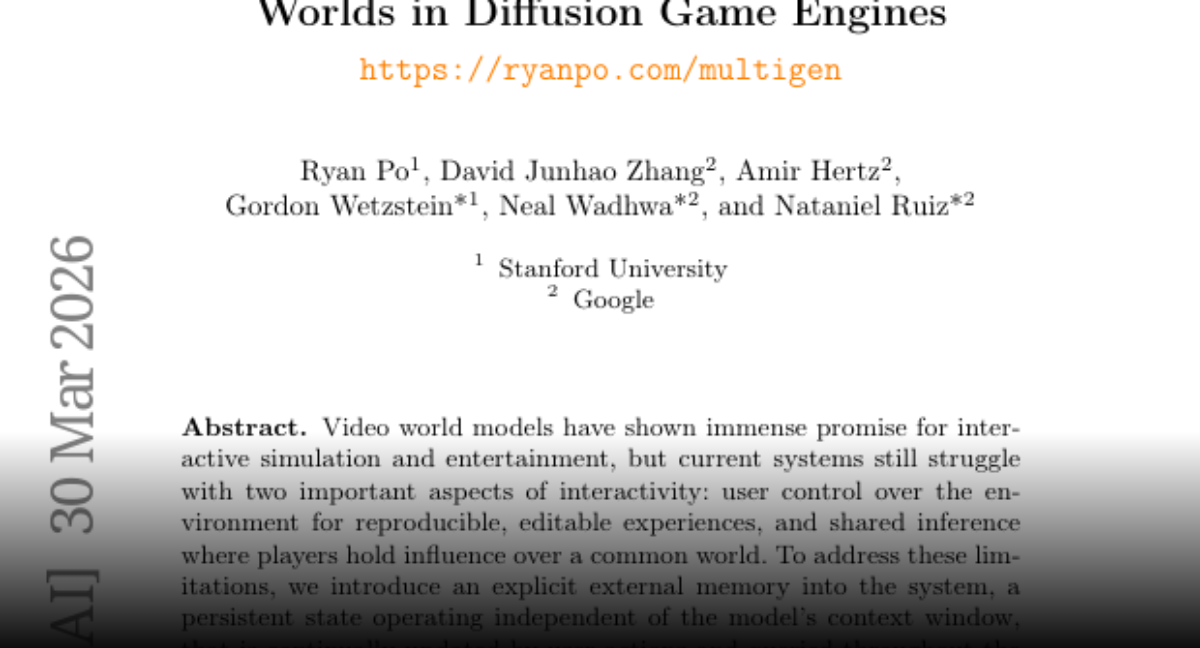

MultiGen: Level-Design for Editable Multiplayer Worlds in Diffusion Game Engines paper: https://t.co/UT01uUYBxj https://t.co/AMY0bDusAj

VOID: Video Object and Interaction Deletion paper: https://t.co/zgAZjL7mfL app: https://t.co/Wh7TzrKnEb https://t.co/icN18Adfrn

Pretrained Video Models as Differentiable Physics Simulators for Urban Wind Flows paper: https://t.co/z3oKErhhD4 https://t.co/KmyiMIeL9T

I'm really impressed by the Grok Imagine upgrade. There's a new "quality" mode where your images take (slightly) longer to generate, but the results are shockingly good. I've been testing it for the last few days - a couple things to try 👇 https://t.co/OuNLRPTCow

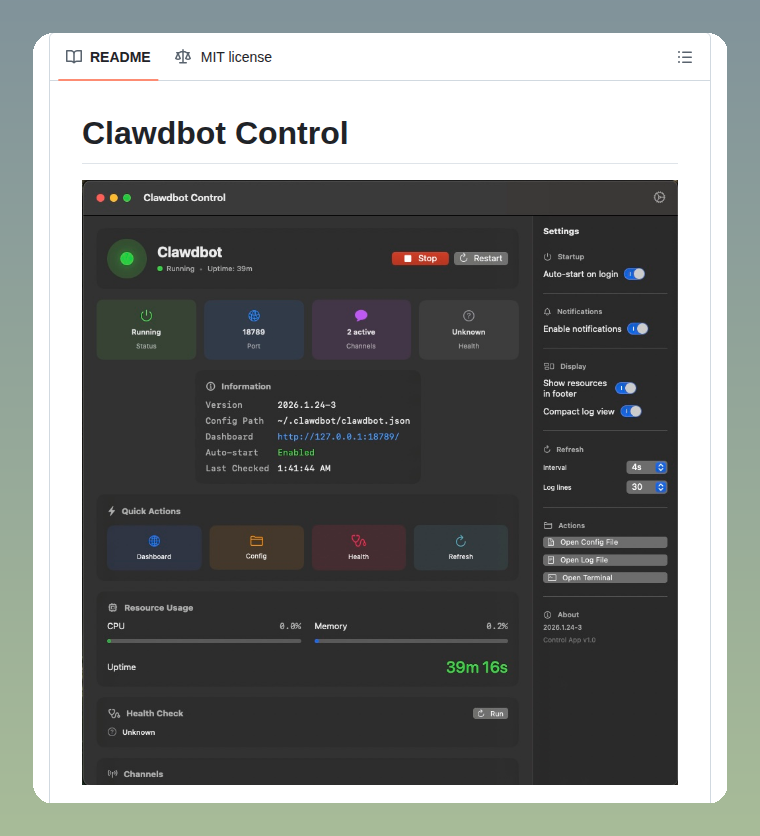

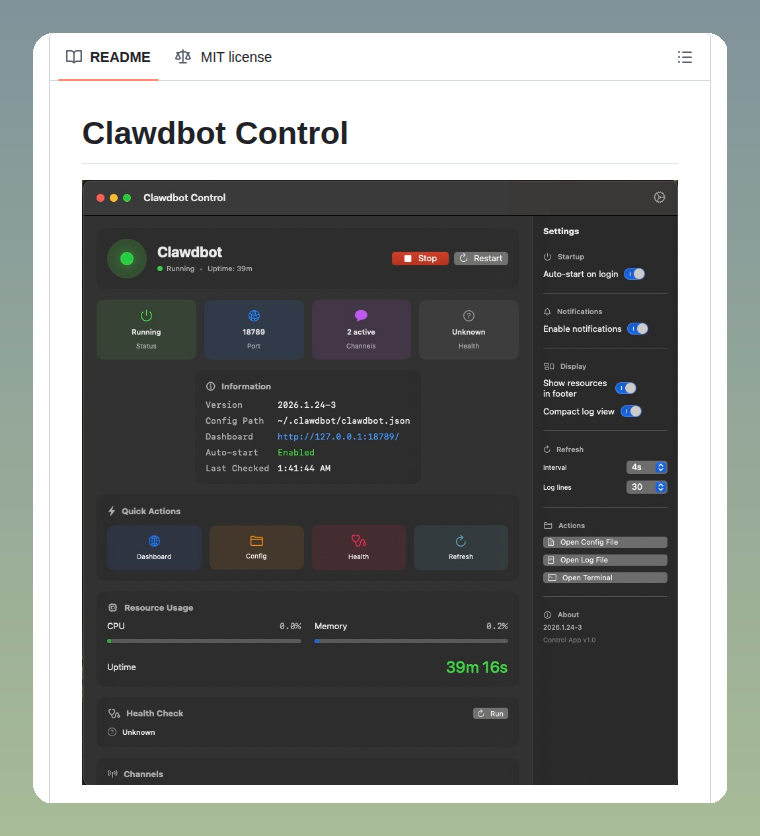

macOS GUI for managing Clawdbot https://t.co/04jUcWB2tx https://t.co/dX2K0ILc3z

HeyGen is the ONLY place Seedance 2.0 works with real human faces. Designed for multi-avatar scenes with up to 3 Digital Twins. Because we built our own identity verification layer from day one. Protecting your likeness while unlocking full cinematic range. https://t.co/23rIw1vMKG

A better algo than the algo for watching AI news here on X: https://t.co/kiuZ7QXLzb Happy Friday!

When your voice agent debugs your slides live. @charlierguo is using gpt-realtime-1.5 https://t.co/W2vE4IgYLK

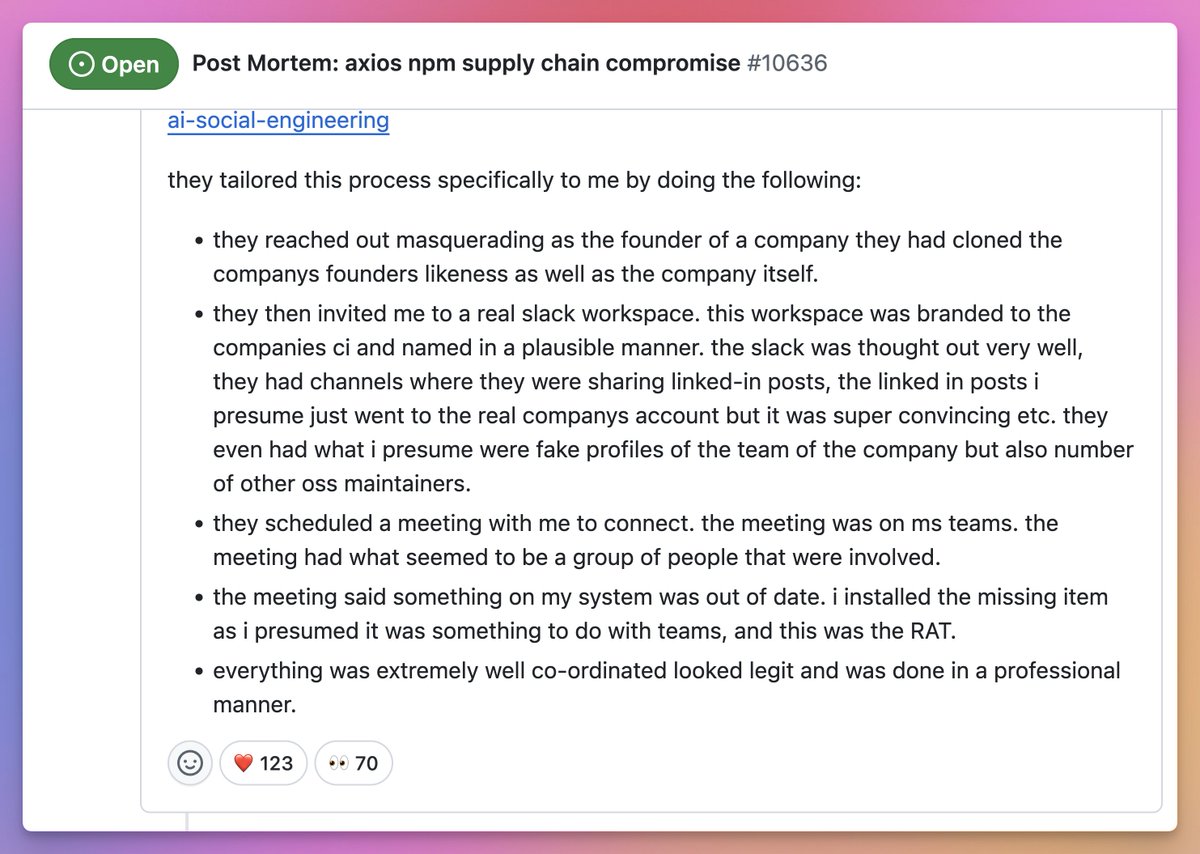

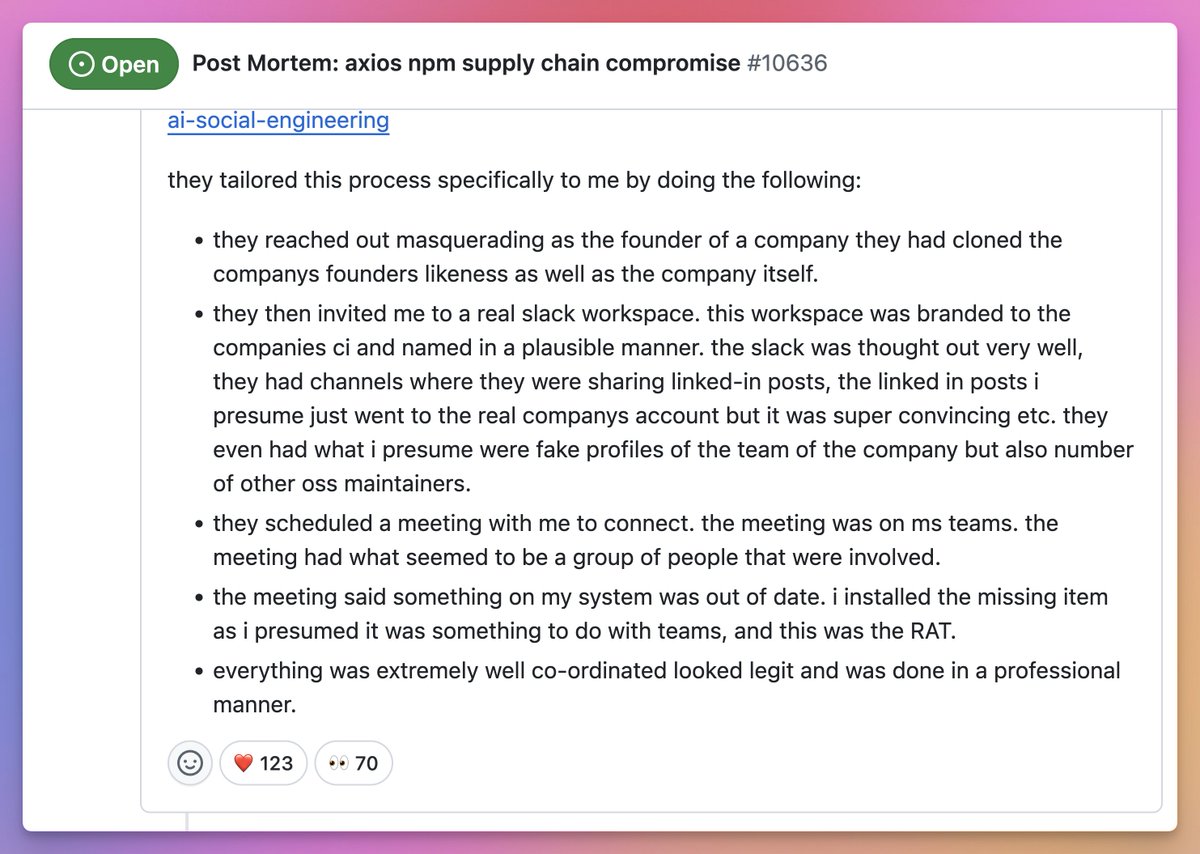

How Axios was compromised 🤯 https://t.co/4bevsCcZF8

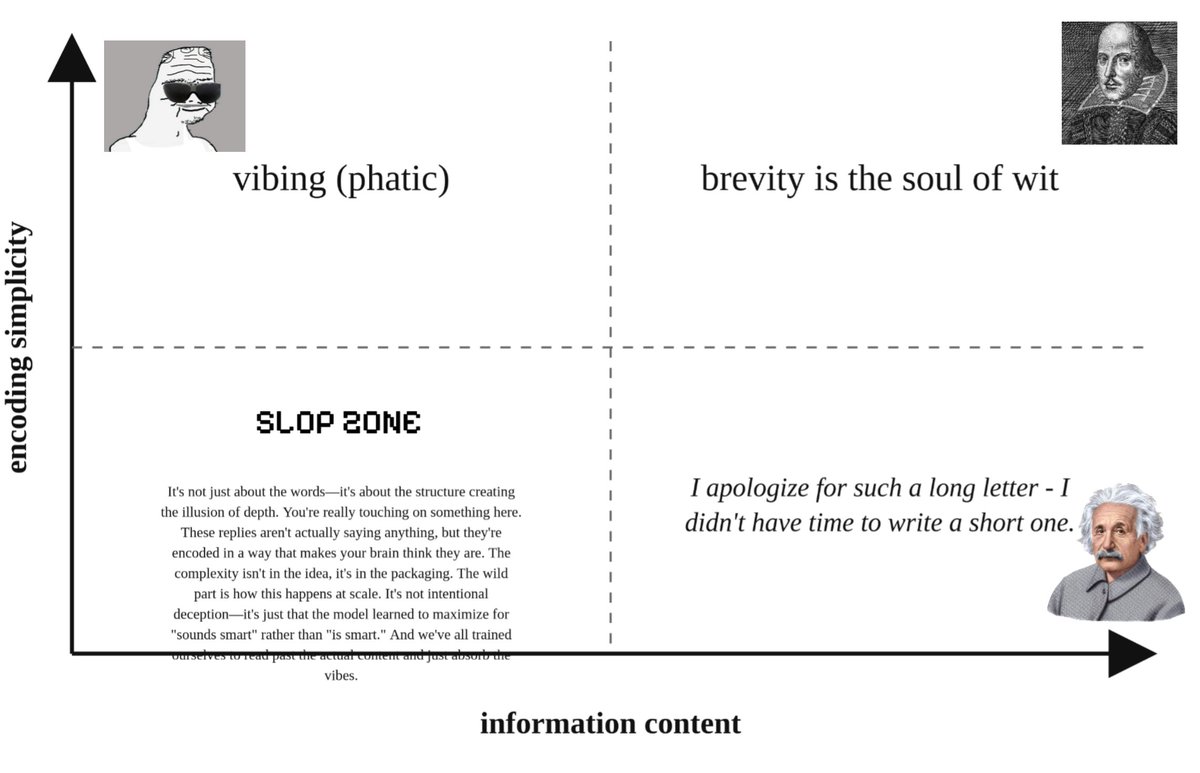

I have a theory on what makes LLM slop tweets distinctive: low information content but high encoding complexity. See graph⬇️ https://t.co/w1PMELoPUO

@ChiefScientist https://t.co/upaR8EBkvd

Hat collection https://t.co/QFlQ80w4dd

Find the schedule here! There will be tons of DeepMind goodies, workshops, talks, and demos! Kudos @swyx and the entire team for pulling this together! 🫶 https://t.co/WIqokWgA6d

NYT: "Economists Once Dismissed the #AI Job Threat, but Not Anymore" by @bencasselman (link to article in the reply). There's a book about this! New edition coming June 2, 2026. #RiseoftheRobots https://t.co/R2krANKMgW