Your curated collection of saved posts and media

What does @OpenAI GPT 5.4 (just released this morning) mean for developers? You talked about it. My AI agents read it. Wrote a report. Sent it over to @NotebookLM. Which made this video. Using the new cinematic quality released yesterday. This was all built with your posts from X. Damn, I wish I had this decades ago. Do you all get how insane this is for learning about new things?

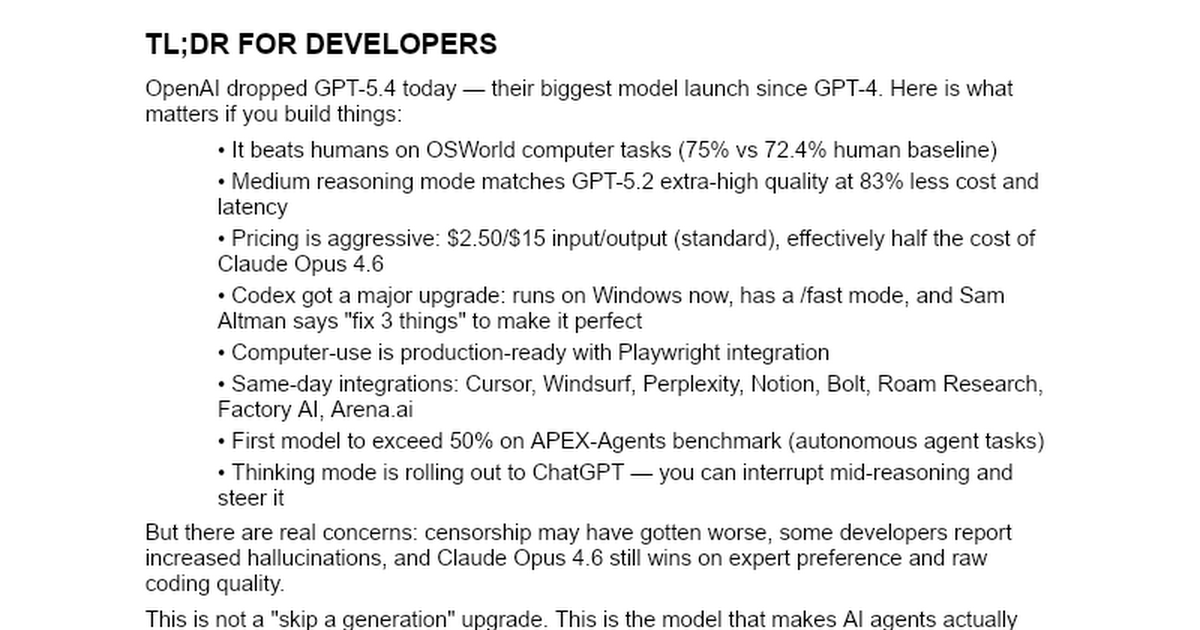

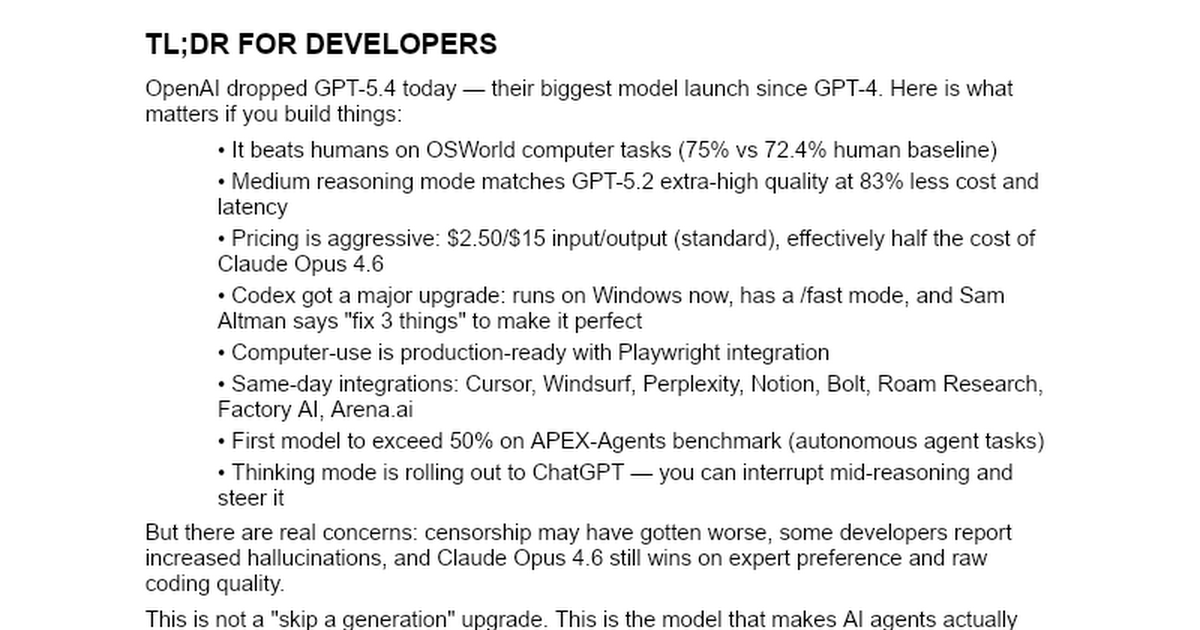

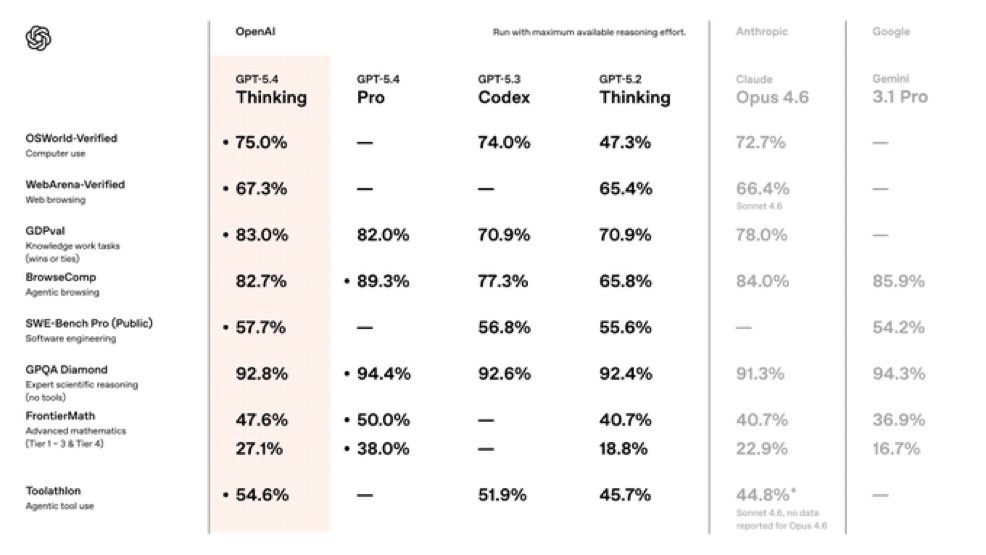

@OpenAI @NotebookLM And same in bullet points, thanks to @blevlabs's AI agent he and I built together. Here's what OpenAI announced today (March 5, 2026):

- GPT-5.4 launched: new frontier model with 1 million token context window, "extreme" reasoning mode, and the ability to interrupt the model mid-response to redirect it https://t.co/5aGF9aIdcp
- GPT-5.4 Pro and Thinking versions: Pro for maximum intelligence, Thinking for deep reasoning (rolling out in ChatGPT) https://t.co/lmdbFUoUuX
- First AI to beat humans at operating a computer: 75% on OSWorld vs 72.4% human baseline https://t.co/kVWETi97c6
- First model to pass 50% on APEX-Agents: autonomous agent benchmark, step function improvement https://t.co/pkHew7MFop
- /fast mode: 1.5x faster responses with same intelligence and priority processing https://t.co/CzNIQu0ee8
- Codex overhaul: now on Windows, /fast mode, multi-agent support. Sam says "fix 3 things" to make it perfect https://t.co/dX5gQH135n
- Computer-use with Playwright: production-ready, not a preview. Navigate web, fill forms, click buttons https://t.co/muyfVMCrVt
- ChatGPT directly in Excel and Google Sheets: new financial services tools suite https://t.co/b394EVtUPU
- Aggressive pricing: $2.50 input / $15 output per million tokens (standard). Roughly half the cost of Claude Opus https://t.co/evXiWXwGhr
- 33% fewer false claims and 18% fewer errors per response vs GPT-5.2 https://t.co/waZENFVhMp
- Medium reasoning = GPT-5.2 extra-high at 83% less cost: same quality, dramatically cheaper and faster https://t.co/l2HpISwMgi
- Key benchmarks: SWE-Bench Pro 57.7%, BrowseComp 82.7%, ARC-AGI-2 Pro 83.3%, Vibe Code Bench 67.4% (#1 SOTA), FrontierMath Tier 4 38% (up from 2% a year ago), GPQA Diamond 94.4%
- Same-day third-party integrations: Cursor, Windsurf, Perplexity, Notion, Bolt, Roam Research, https://t.co/KEaCPJtVL0
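
To make the pricing bullet concrete, here is a minimal sketch of the per-request arithmetic. It assumes only the two prices quoted in the post ($2.50 input / $15 output per million tokens); the function name and the sample token counts are hypothetical, and real billing may include tiers or discounts the post does not mention.

```python
# Prices as quoted in the post (USD per million tokens, standard tier).
INPUT_PRICE_PER_M = 2.50
OUTPUT_PRICE_PER_M = 15.00


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request at the quoted prices."""
    input_cost = (input_tokens / 1_000_000) * INPUT_PRICE_PER_M
    output_cost = (output_tokens / 1_000_000) * OUTPUT_PRICE_PER_M
    return input_cost + output_cost


# Hypothetical workload: 200k tokens in, 10k tokens out.
cost = estimate_cost(200_000, 10_000)
print(f"${cost:.2f}")
```

Output tokens cost six times as much as input tokens at these prices, so long generations can outweigh the prompt in the total even when the prompt is much larger.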

Huge Report on @OpenAI's new launch. It happened minutes ago. My news system wrote this report by reading all 50,000 of you here on X. This is a super power that Levangie Labs has given me. Thanks @blevlabs. https://t.co/IgwbjuMxl6 Shows everyone on X who has posted something about @OpenAI's GPT-5.4. No one else can do this. No one else has a cognitive architecture. No one else has every single person in AI and every company in lists. Your OpenClaw can't do this.

GPT-5.4 is magical. But GPT-5.4 Pro feels very close to AGI. I just gave it a strategic task and in 14 mins it produced something few humans could beat. https://t.co/nT6Fy2ejZP

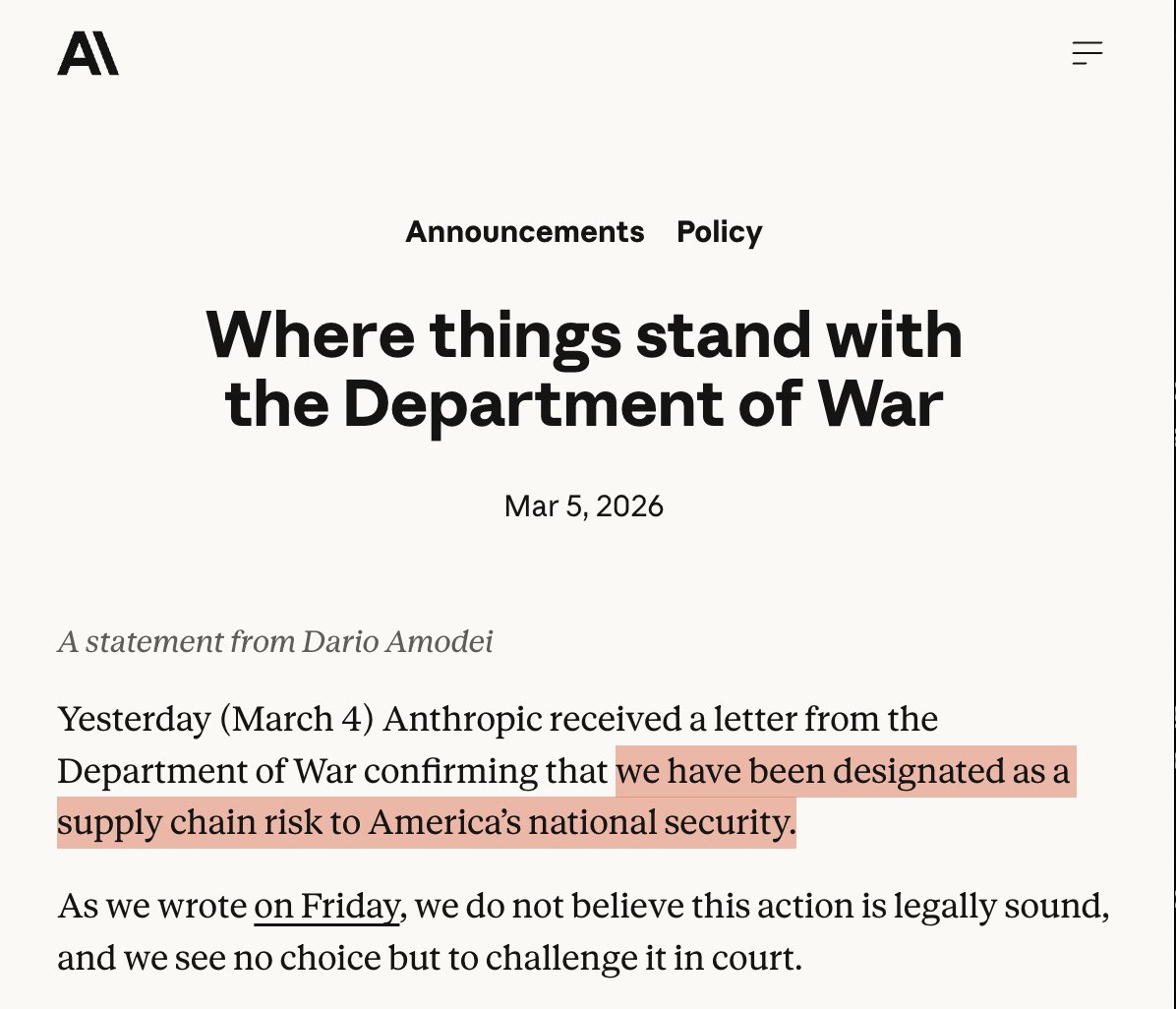

The "supply chain risk" designation is meant for foreign adversaries trying to infiltrate our military, and it has never been used on a US-based company before. This is insane. I hope Anthropic wins in court. https://t.co/W6Ol7TvMzQ

Dot is coming to California! We build Dot, our autonomous delivery robot, in Fremont — and now we have the green light to deliver there. A big milestone for California innovation: designed here, built here, delivering here. Stay tuned! https://t.co/USbK0KxHNM

introducing agent-to-agent hiring at @hyperspell. no resumes. no leetcode. you build an agent. our agent interviews yours. if you can build a great agent to do the job, that's the proof you can do the job. anyone can apply. we will interview every single agent https://t.co/BK6GojB0Ck

This is an insanely great video. If you work in XR, you need to watch it. https://t.co/NXXahkkeeV

Wrapping up Week 2 of the TinyFish Accelerator and we were floored by the submissions! 1500+ requests later, these public demos are live 🧵 1. QuoteSweep lets insurance agents quote multiple carriers in minutes, automating hours of manual work. https://t.co/4vEpg3mM5H

Stanford scientists developed an AI that turns messy brain scans into "movies" of your thoughts moving through your brain in real-time. This new approach could change how we understand and treat diseases – from depression to brain tumors: https://t.co/r7dsqkZqee

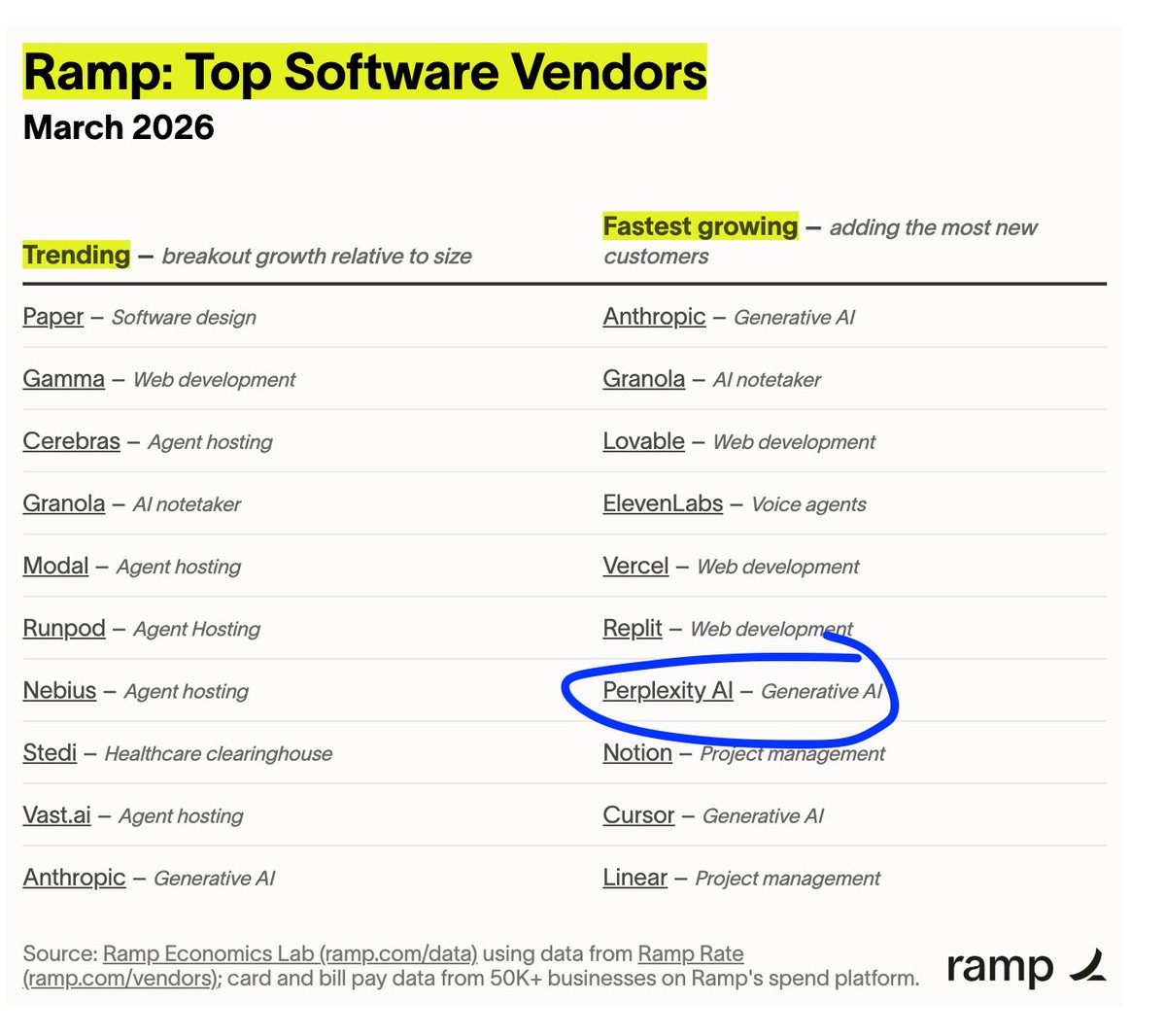

Don't sleep on Perplexity Computer. It's like OpenClaw for non-technical folks. And it's buoying Perplexity's growth. It's back on Ramp's list of fastest growing software vendors for businesses: https://t.co/doci5MaZmI

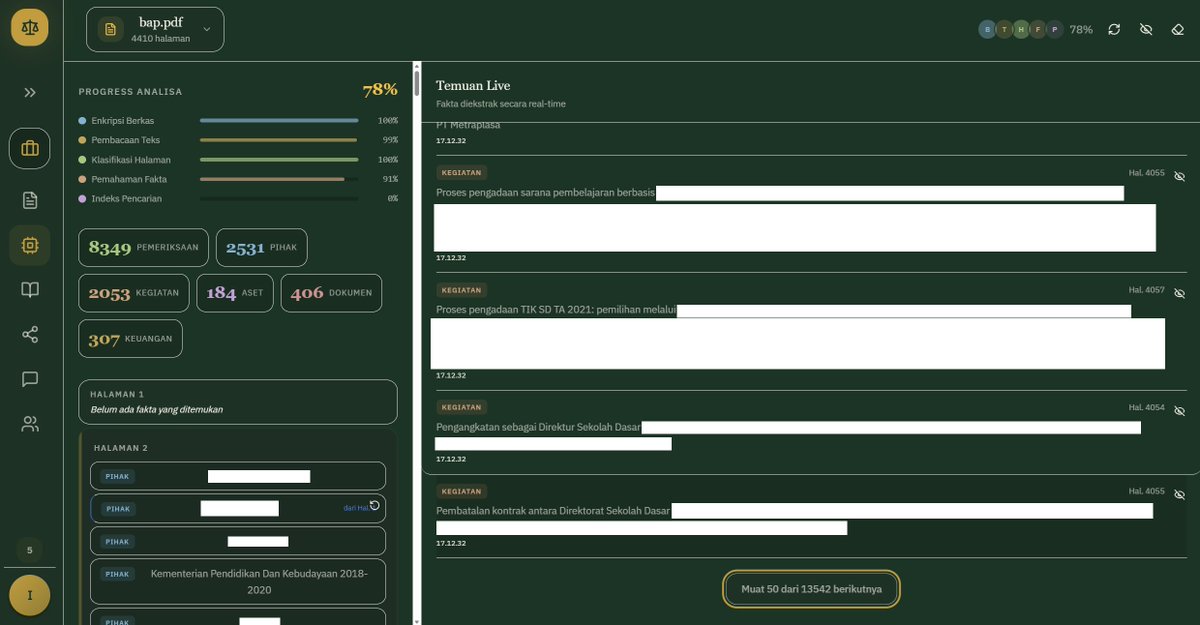

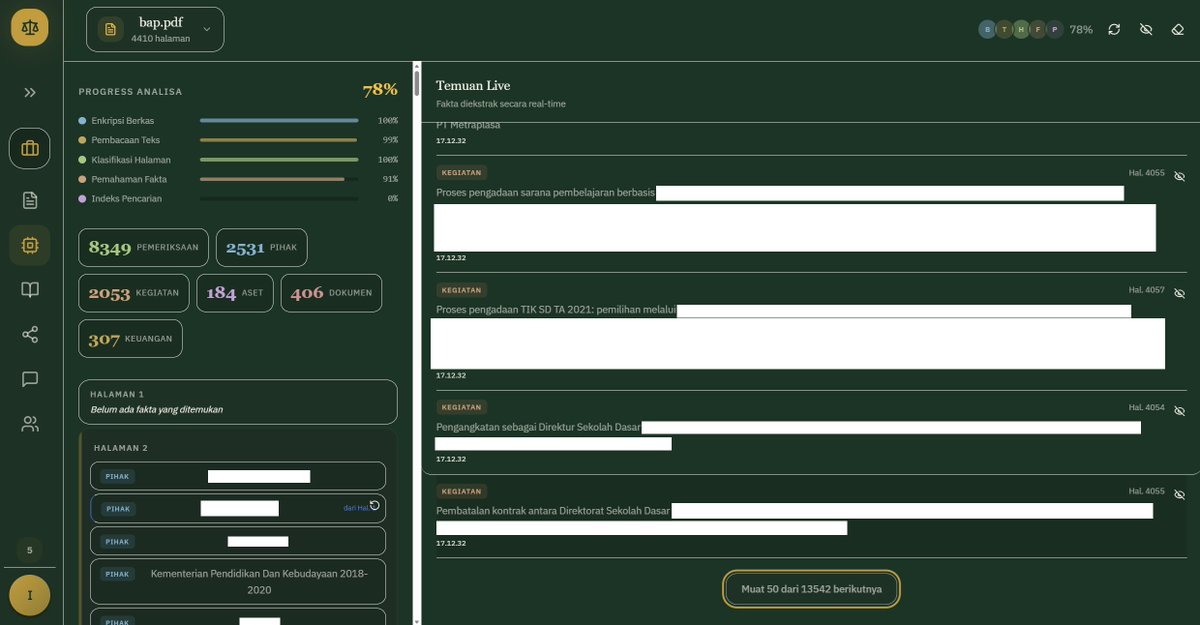

Since last year, I've arguably been wrongfully accused in a state corruption case. To defend my innocence, I spent the past 6 weeks building an agentic AI swarm that:

- Analyzed 4700+ pages of court docs
- Mapped 8900+ testimonies
- Found dozens of contradictions

This is how I fight 👇🏼

First off, some context may be necessary. Even though I'm accused in a state corruption case, I'm not a government official. I'm a software engineer. I spent over 15 years building large-scale tech systems across Europe and Indonesia. I've led engineering teams of up to 600 people and helped grow a small tech startup into a unicorn. In 2016, I moved back from Europe to Indonesia, because I believe technology at scale could make a real difference to the millions of people in the nation.

Six years ago, working as a tech consultant under a nonprofit foundation, I started advising Indonesia's Ministry of Education on building large-scale technology platforms. Public sector work pays significantly less than private sector, and I took close to a 50% pay cut to make the switch. I was fine with that. Using what I knew to help underserved communities in Indonesia felt like the right trade. Our mission was to build a user-centric superapp for public education, specifically for teachers and public schools, the kind of work the private sector ignores because there's no money in it.

At some point, officials at the ministry asked for my input on one of their procurement plans. I helped them work through the technical details, shared what I knew, laid out the pros and cons, and recommended a set of tests they should run to determine which options were the most suitable. By the time they made their final decision and executed the procurement, I had already resigned from the consulting work, so I didn't think much of it.

Fast forward to May 2025. My house was raided as part of a newly opened corruption investigation tied to that procurement.
Two months later, I was named a suspect and placed under city detention due to my health. The trial started in January 2026. We've been through more than a dozen sessions so far, and not a single piece of evidence or testimony has been presented showing I received a single cent from the procurement. What came to light was the opposite: evidence and testimony that my recommendations were neutral and were likely ignored in the end by the ministry's own team, who went ahead and made the call on their own.

So why am I the one on trial? Because the ministry officials who did take money from the procurement vendors needed someone to blame for the decisions they made. Blaming an outside consultant is the easy way out. Witness testimony in court has shown that the officials actively directed the procurement while claiming it was done on my instructions, and even misled their own team within the ministry by saying I held a position of authority. We needed evidence to dispute those accusations and questions to cross-examine the witnesses, and we needed them fast.

This is where my AI comes in. A few days before the trial began, we received a 4400-page printed document containing all the witness statements collected during the investigation, plus several hundred pages of other related documents. The information asymmetry is staggering. Those with deep enough pockets to hire large law firms can throw dozens of paralegals and associates at a document like that and mount a proper defense on short notice. I didn't have that kind of money.

By then, I had been out of work for more than six months. The AI startup I founded had to shut down. Our investors asked us to return their funding. I had to lay off the entire team. Most of my lawyers are friends of my wife from her college days, who stepped up and waived most of their fees because they could see I was being railroaded. The whole situation felt hopeless. But somewhere in the middle of the despair, a spark lit up.
Combing through and analyzing thousands of pages of documents is exactly the kind of problem AI was built for. I've built AI systems before, so I know the key to applying AI to a real-world problem is understanding the strengths and limitations of the available models, and figuring out how to make things not just work, but work efficiently enough to put into production.

I was placed under city detention due to health issues with my heart, compounded by a tumor that has been growing rapidly over the past few months. But it also means I still have access to my dev PC. So I started with small experiments. My lawyers found a printing service that could scan the thousands of pages in a couple of days.

At first, I tried simply uploading the scanned PDF into existing chatbots like ChatGPT, but the file was far too large for anything they could handle. Even when I managed to get it working through external cloud storage, the results were atrocious. Half of the strategies and "facts" the models surfaced were hallucinations. That isn't just useless in court; it's actively dangerous and could jeopardize my defense.

My experience building complex AI systems told me that the key to reducing those hallucinations is better data preprocessing. So I spent the first couple of weeks focusing on parsing the uploaded PDFs, running various kinds of text extraction, and eventually settled on building an agentic AI swarm that performs multiple layers of preprocessing and analysis. In this multi-step analysis, several AI agents swarm the PDF and extract different aspects of the case, producing a dense knowledge graph where we can even trace the flow of money involved.

My lawyers can now easily browse, filter, and search through nearly 9000 witness statements. We even discovered several witnesses with duplicate testimony, raising suspicion of coordinated efforts or tampering among them. But I didn't stop there.
The processing chain includes several higher-level intelligence layers that draw from all the signals in the extracted knowledge graph. These layers add semantic understanding that powers a Chat AI feature, where we can ask specific questions about the case and get grounded answers. I even built a self-reflective sub-agent that automatically challenges and inspects the results to make sure there are zero hallucinations.

Overall, the AI has helped me and my legal team uncover the big picture of what actually happened and build questions that span hundreds of separate testimony sessions, giving us an unprecedented ability to cross-examine witnesses in court and significantly improving our defense.

But I have a grander vision than just helping my own legal team. Indonesia's legal system is severely overburdened, with a huge number of cases flowing through the courts every year. This kind of AI could be a useful tool not just for lawyers, but also for judges and prosecutors trying to make sense of their caseloads.

With the cross-examinations we've conducted and the weight of evidence that has come to light, we are aiming for an acquittal. Should that be the case, my pledge is to keep building this AI platform into something that can meaningfully improve the quality of justice in our legal system: by helping investigators analyze cases more thoroughly and shine a light on any potential crimes, by raising the standard of what prosecutors bring before a judge, and by giving lawyers the ability to uncover the truth in their clients' cases faster than ever before.

Because in the end, I want what I've built to help more than just myself. I believe it can ease the burden on our judges and raise the quality of justice across the system in Indonesia.
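
The post does not say how the duplicate testimony was detected. As one possible illustration, the sketch below flags near-identical statements with plain text similarity from Python's standard library. The function names, the 0.9 threshold, and the sample statements are all hypothetical and are not taken from the case or from the author's system.

```python
import re
from difflib import SequenceMatcher
from itertools import combinations


def normalize(text: str) -> str:
    """Lowercase and collapse punctuation/whitespace so scan noise matters less."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def find_near_duplicates(statements: dict[str, str], threshold: float = 0.9):
    """Return (witness_a, witness_b, similarity) for pairs at or above threshold."""
    cleaned = {name: normalize(text) for name, text in statements.items()}
    pairs = []
    for a, b in combinations(sorted(cleaned), 2):
        ratio = SequenceMatcher(None, cleaned[a], cleaned[b]).ratio()
        if ratio >= threshold:
            pairs.append((a, b, round(ratio, 3)))
    return pairs


# Hypothetical sample data for illustration only.
statements = {
    "witness_1": "The consultant instructed us to choose the vendor.",
    "witness_2": "The consultant instructed us to choose the vendor!",
    "witness_3": "I never met the consultant during the procurement.",
}
duplicates = find_near_duplicates(statements)
print(duplicates)
```

Pairwise comparison grows quadratically, so at roughly 9000 statements this naive loop would make about 40 million comparisons. A production system would more plausibly bucket statements first (for example by hashing or embedding) and compare only within buckets.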

If the engine is strong enough, you should be able to build real products on top of it. That's the whole point of LTX-2.3. Introducing LTX Desktop. A fully local, open-source video editor running directly on the LTX engine, optimized for NVIDIA GPUs and compatible hardware. https://t.co/aApm06E6RZ

The hallucinations of AI provide a mental health break. A new kind of AI is here. A real-time world model. This isn’t like anything else you’ve ever done on your computer. It creates frames one after another, which translates into a new kind of video generation engine. I’m using PixVerse’s R1, https://t.co/mKWz5rzMLw @PixVerse_ which is a category-creating technology and has just been upgraded to 720P HD video. R1 can create anything you want. I asked it to take me skiing in the Swiss Alps. Unlike other engines like Kling or Seedance, it creates interactive videos. What makes this real-time world model different from others like Kling or Seedance? It builds video frames one after another, so you can actually interact with the video while it plays (and it doesn’t take one to three minutes to generate like the others). More about what I learned by talking with PixVerse in this thread →

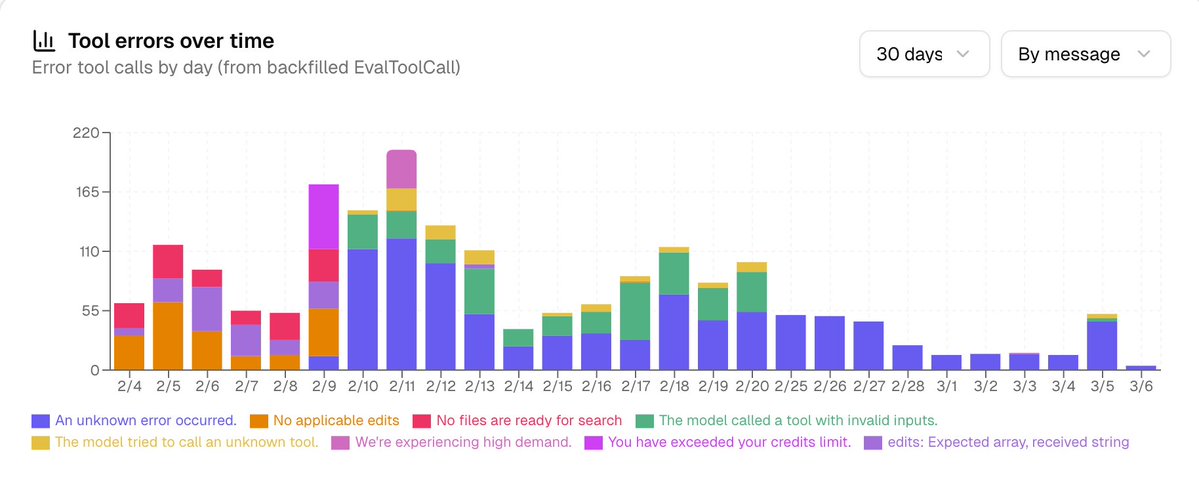

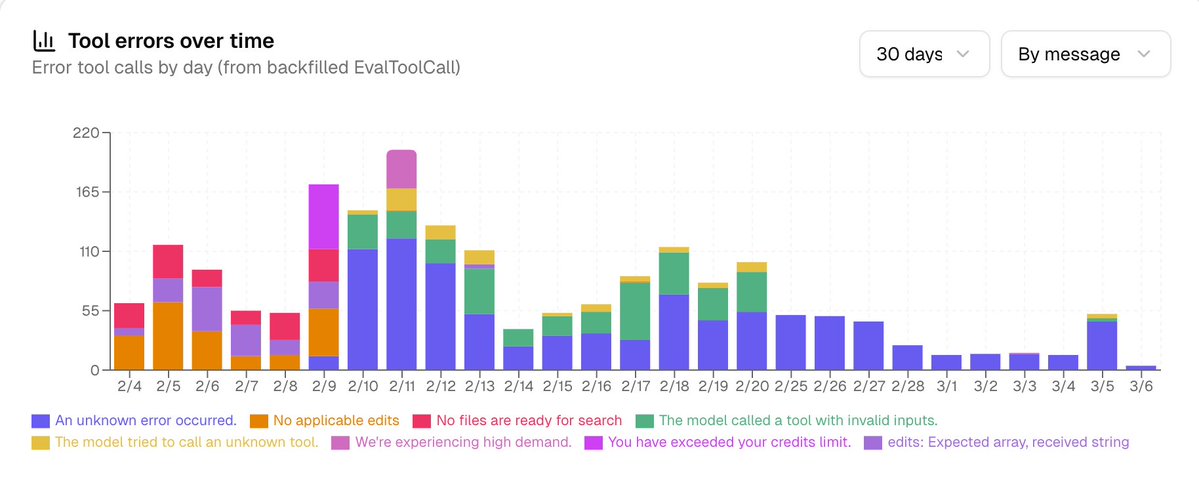

i've been working really hard to burn down the tool errors we get in our main chat flow and it's working. this is the @HamelHusain effect https://t.co/WG4s8AonsQ

@clairevo Yesssssss I am so happy right now https://t.co/wdPDNcqKov

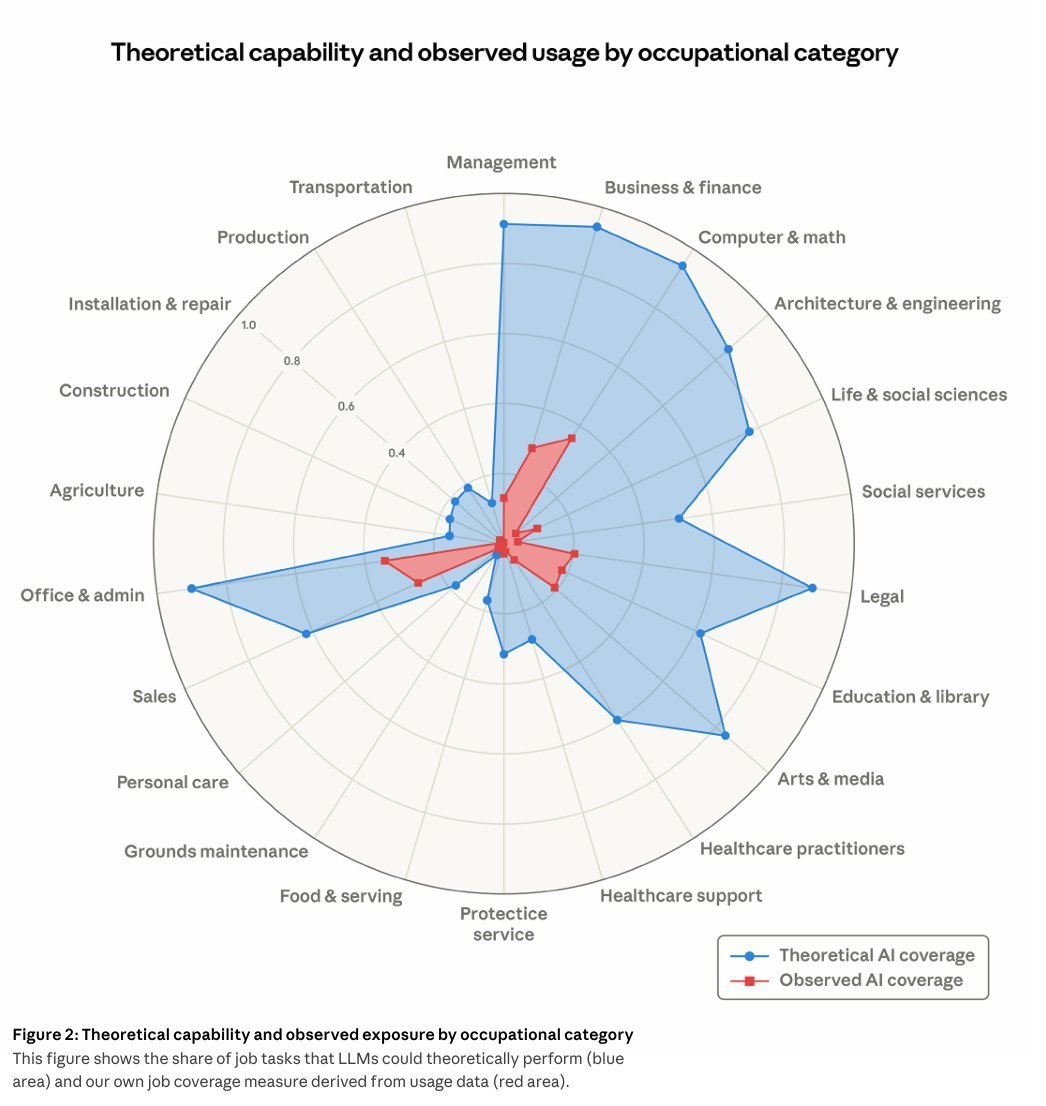

if you showed this chart to a typical economist like 20 years ago, they would've laughed you out of the room. the right side of this is white collar jobs that were once worshipped. these jobs were comfortable, well paying, & came with societal status + recognition. your parents would’ve been proud of you. now these are likely all set to be severely impacted in a shorter period of time than anyone likely ever thought of let alone projected. this is like ppl waiting on a beach enjoying the sun when a tsunami has already struck.

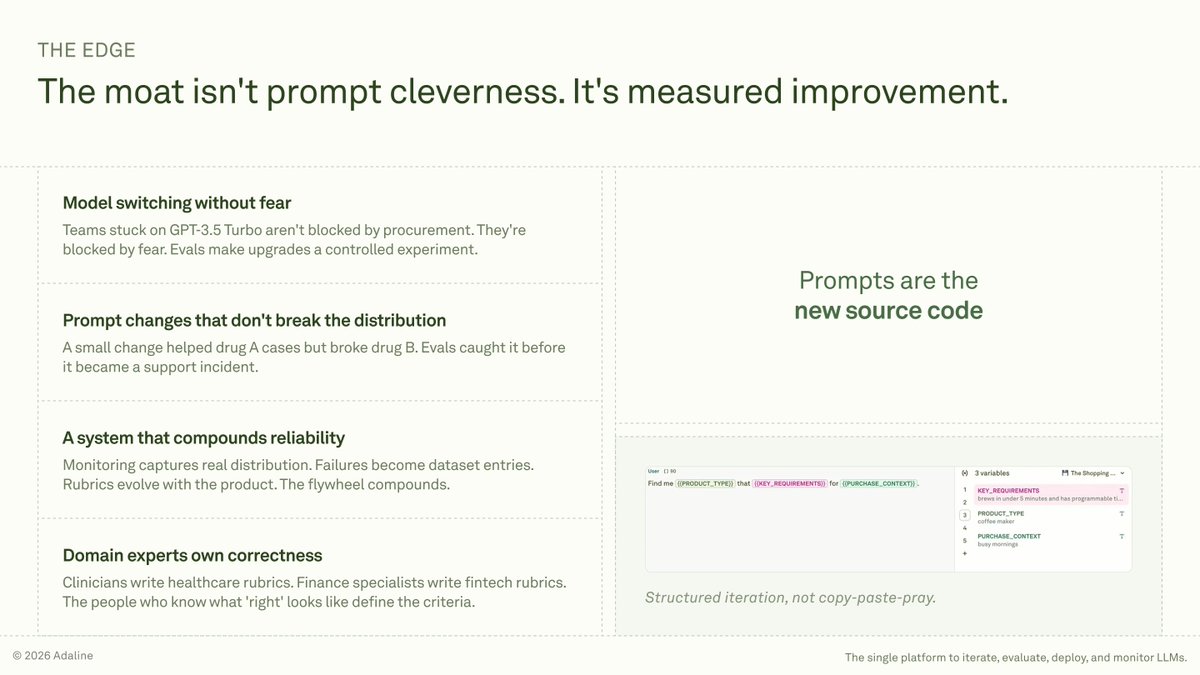

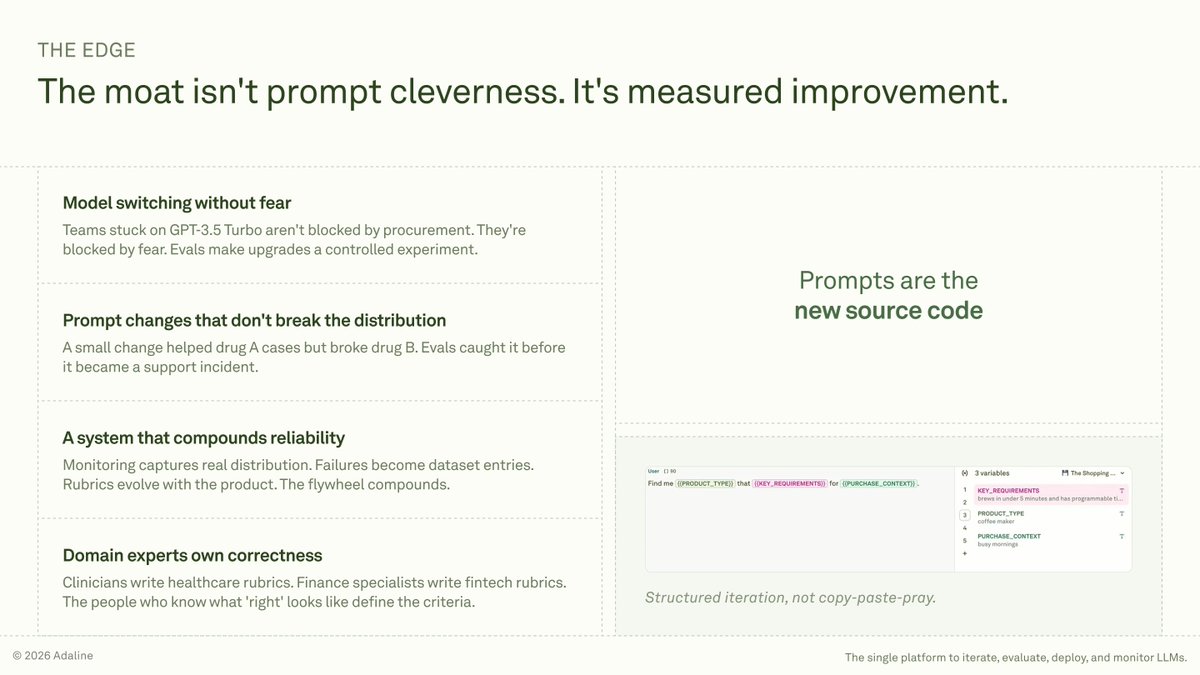

When you build AI agents, don't treat prompts like config strings. Treat them like executable business logic. Because that's what they really are. @arshdilbagi's blog and this Stanford CS 224G lecture lay out one of the clearest mental models I have seen for LLM evaluation. Stop treating evals like unit tests. That works for deterministic software. For LLM products, it creates false confidence because real-world usage changes over time. Example: an insurance prompt passed 20 eval cases. The team shipped. In production, a new class of requests showed up and failed quietly. No crash, no alert, just wrong answers at scale. The fix is not "write more eval cases," which is what many teams do. It is building evals as a living feedback loop. Start with a small set, ship, watch what breaks in production, add those failures back, and re-run on every prompt or model change. What eval failure caught your team off guard? Blog: https://t.co/HCVhcow5rA Stanford CS 224G lecture: https://t.co/q667gGwckt
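
The feedback loop described in this post (start small, ship, add production failures back, re-run on every change) can be sketched roughly as follows. This is a minimal illustration, not the approach from the linked blog or lecture: `EvalCase`, `EvalSuite`, and `toy_model` are hypothetical names, the model is a keyword stub standing in for a real LLM call, and exact string matching stands in for whatever grading a real eval would use.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class EvalCase:
    prompt_input: str
    expected: str


@dataclass
class EvalSuite:
    cases: list[EvalCase] = field(default_factory=list)

    def add_production_failure(self, prompt_input: str, expected: str) -> None:
        """Fold a failure observed in production back into the suite."""
        self.cases.append(EvalCase(prompt_input, expected))

    def run(self, model: Callable[[str], str]) -> list[EvalCase]:
        """Return the cases the model currently gets wrong."""
        return [c for c in self.cases if model(c.prompt_input) != c.expected]


# Hypothetical stand-in for a prompt plus model; a real system would call an LLM.
def toy_model(text: str) -> str:
    return "covered" if "flood" in text else "not covered"


# Start with a small set and ship once it passes.
suite = EvalSuite([EvalCase("flood damage claim", "covered")])
initial_failures = suite.run(toy_model)

# A new class of request shows up in production and fails quietly,
# so it gets added back and the suite is re-run.
suite.add_production_failure("storm surge damage claim", "covered")
failures = suite.run(toy_model)
print([c.prompt_input for c in failures])
```

The point of the design is that `suite.run` is called on every prompt or model change, and the suite grows from observed failures instead of from guesses about what might break.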
