Your curated collection of saved posts and media

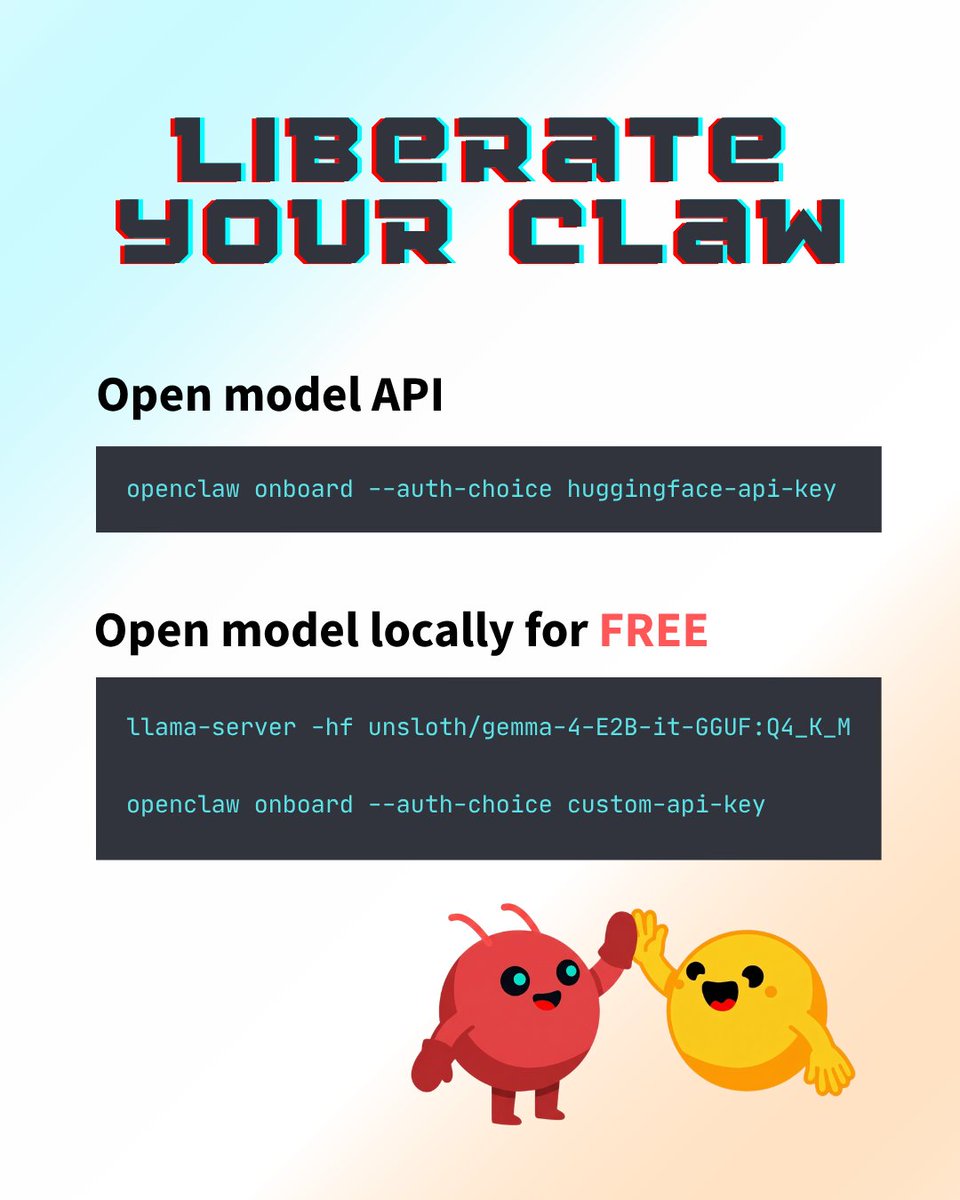

PSA: models on hugging face are free to use. Always have been, always will be. If you're relying on claw, today is the best time to liberate (parts of) your agent's workflow. https://t.co/0cEgJsEcvE

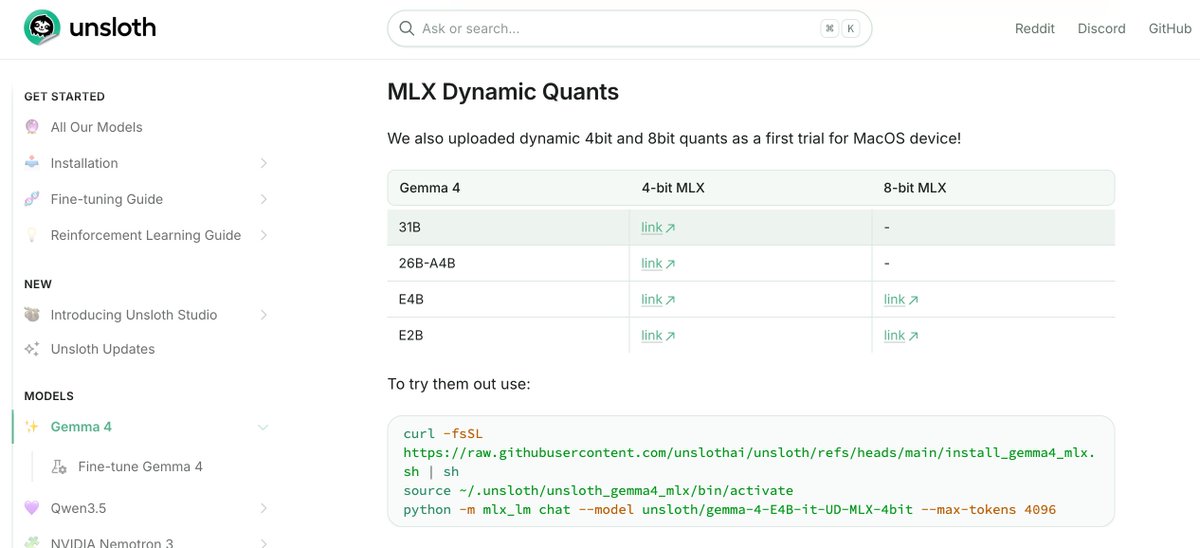

Wait, what? @UnslothAI is starting to upload MLX Dynamic Quants! I have to test them ASAP! Thank you, you really rock! 🚀 https://t.co/tYy2ORQCE7 https://t.co/kms8MBxj9I

@RonanFarrow @inafried @NewYorker @andrewmarantz see also from 2024 with a very similar message: https://t.co/0UBjbRg6uW.

The reporting on OpenAI and Sam Altman that I've been working on for the past year and a half, for @NewYorker, with @andrewmarantz: https://t.co/HEPHN4E54P

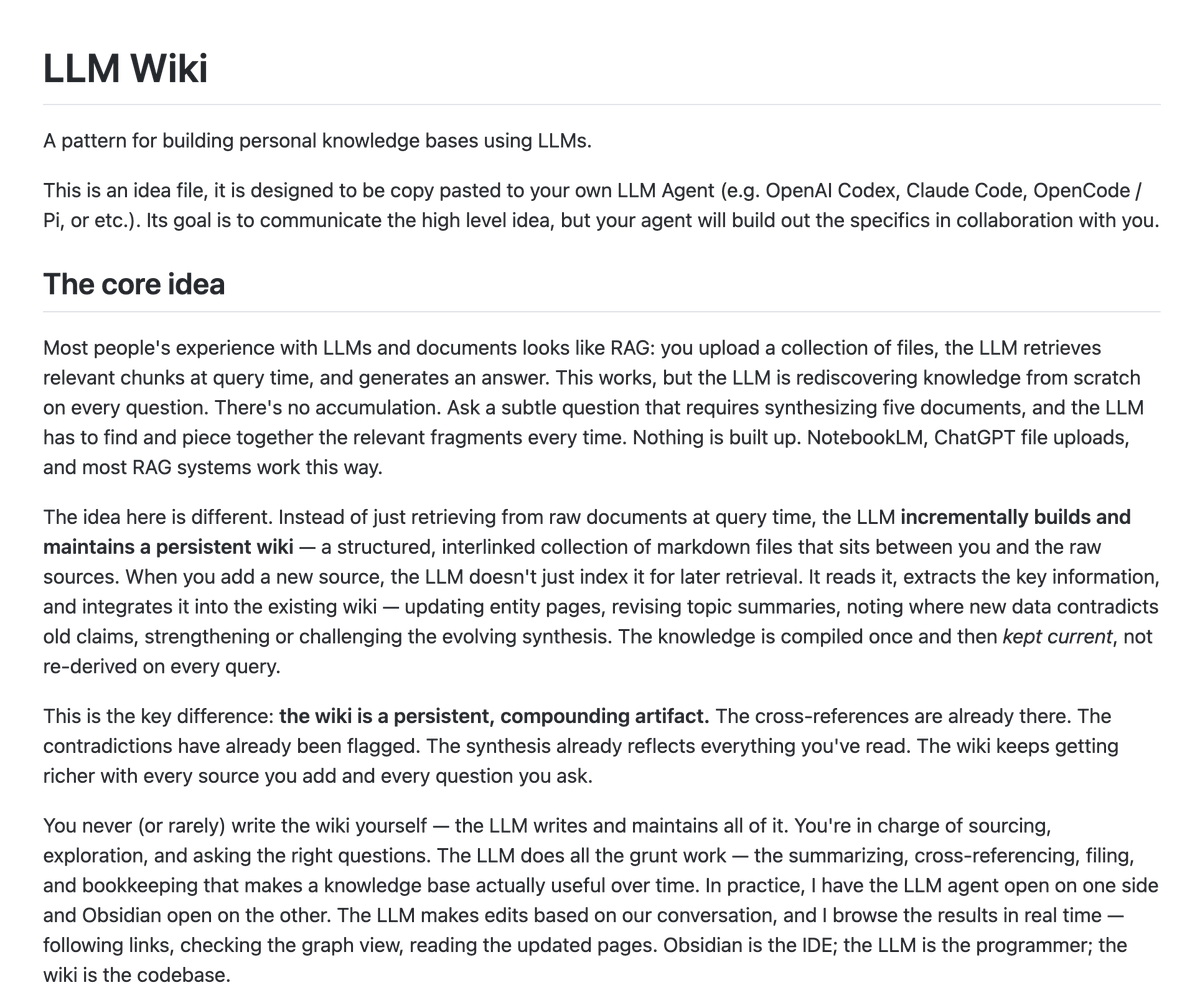

🚨 Andrej Karpathy just dropped something that could replace a lot of RAG workflows. It’s called LLM Wiki.
The idea is simple: most AI systems retrieve context from scratch every time you ask a question. LLM Wiki doesn’t. It builds a persistent knowledge base that gets better every time you add a new source.
So instead of:
• search docs
• pull fragments
• answer
• forget everything
• repeat
it does this:
• ingest a source
• extract the important ideas
• update entity pages
• revise topic summaries
• connect related concepts
• flag contradictions
• keep compounding the knowledge over time
That shift matters. RAG is great for retrieval. But a lot of people are really trying to build memory. Not just “find me the right chunk again.” More like: “help me build an evolving model of this topic over time.” That’s what this is.
Karpathy’s examples are strong too:
• personal knowledge
• long-horizon research
• books and topics
• internal company knowledge
• meeting transcripts
• customer calls
Basically, anything where the knowledge should accumulate, not reset every session.
The best way to think about it: Obsidian is the IDE. The LLM is the programmer. The wiki is the codebase. You don’t manually maintain the system. You feed it sources, ask questions, and the AI keeps the structure alive.
That’s a much bigger idea than “better RAG.” 100% open source.
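The post describes the "compounding" loop only in words. Below is a minimal Python sketch of two of its steps (updating entity pages and flagging contradictions), assuming the important ideas have already been extracted from a source as (entity, attribute, value) triples, which is the step LLM Wiki would hand to a model. The `Wiki` class, its `ingest` method, and the rule "keep the existing value and flag the new one" are hypothetical illustrations, not Karpathy's implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Wiki:
    """Toy persistent knowledge base: pages survive across ingests (hypothetical design)."""

    # entity name -> {attribute: value}
    pages: dict[str, dict[str, str]] = field(default_factory=dict)
    # (entity, attribute, existing value, conflicting value, source) flagged for review
    contradictions: list[tuple[str, str, str, str, str]] = field(default_factory=list)

    def ingest(self, source: str, facts: list[tuple[str, str, str]]) -> None:
        """Merge (entity, attribute, value) facts extracted from one source.

        In an LLM Wiki setup a model would do the extraction; here the
        facts are passed in already extracted.
        """
        for entity, attribute, value in facts:
            page = self.pages.setdefault(entity, {})  # create the entity page on first mention
            existing = page.get(attribute)
            if existing is not None and existing != value:
                # Keep the current entry and flag the conflict instead of overwriting it
                self.contradictions.append((entity, attribute, existing, value, source))
            else:
                page[attribute] = value


wiki = Wiki()
wiki.ingest("notes-1", [("Project X", "status", "beta"), ("Project X", "owner", "team A")])
wiki.ingest("notes-2", [("Project X", "status", "GA"), ("Project Y", "owner", "team B")])
print(wiki.pages)
print(wiki.contradictions)
```

Unlike a retrieve-and-forget pipeline, the second `ingest` call builds on the pages created by the first, which is the accumulation the post contrasts with plain RAG.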

This didn't receive the attention it deserved. They pre-trained this model completely peer-to-peer, with no data centers. Everything was done over a permissionless network. I have tried the model; it's honestly not a good LLM, but that's beside the point. We NEED this, we NEED an alternative.
- Download OpenCode
- Download Pi
- Pay for open source
- Share your AI sessions
- Learn to do RL
We can't be at the mercy of ANY lab. https://t.co/6ruL2lz2Dh

This changes filmmaking completely. Seedance 2.0 can now key green screen precisely and edit a film scene layer by layer, each layer separately. AI just turned months of work into one click. Full tutorial on OpenArt + prompts: https://t.co/cR7Wjk6MHD

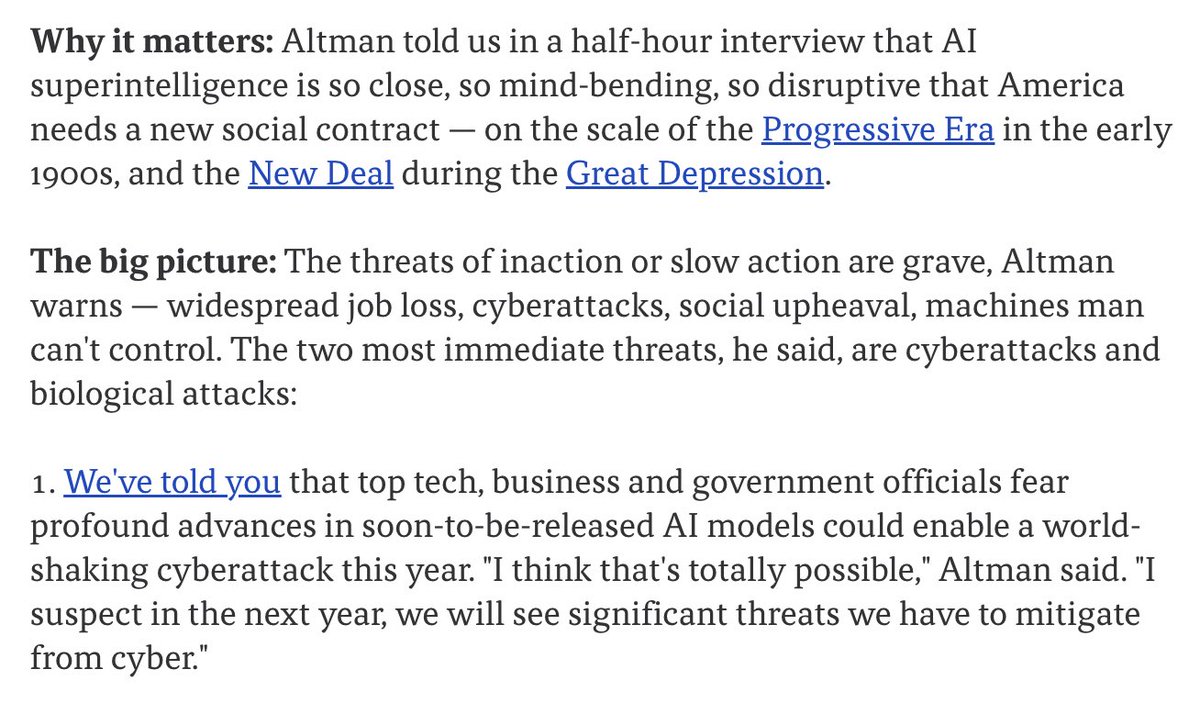

Holy moly: Sam Altman told Axios in a half-hour interview that AI superintelligence is so close, so mind-bending, so disruptive that America needs a new social contract.
- It's on the scale of the Progressive Era in the early 1900s and the New Deal during the Great Depression.
- Altman warns of widespread job loss, cyberattacks, social upheaval, and machines man can't control.
- Soon-to-be-released AI models could enable a world-shaking cyberattack this year. "I think that's totally possible," Altman said. "I suspect in the next year, we will see significant threats we have to mitigate from cyber."

@bengodfrey @politico august 2024, i tried to warn everyone: https://t.co/0UBjbRg6uW

@code introduces message steering and queuing. You can now send follow-up messages while an agent request is still running. https://t.co/5o3vPOdoes

Introducing Solana Agent Skills: pre-built skills you can drop into AI tools to interact with Solana. Install in one line and build agents that know Solana. https://t.co/b2gEZA827v

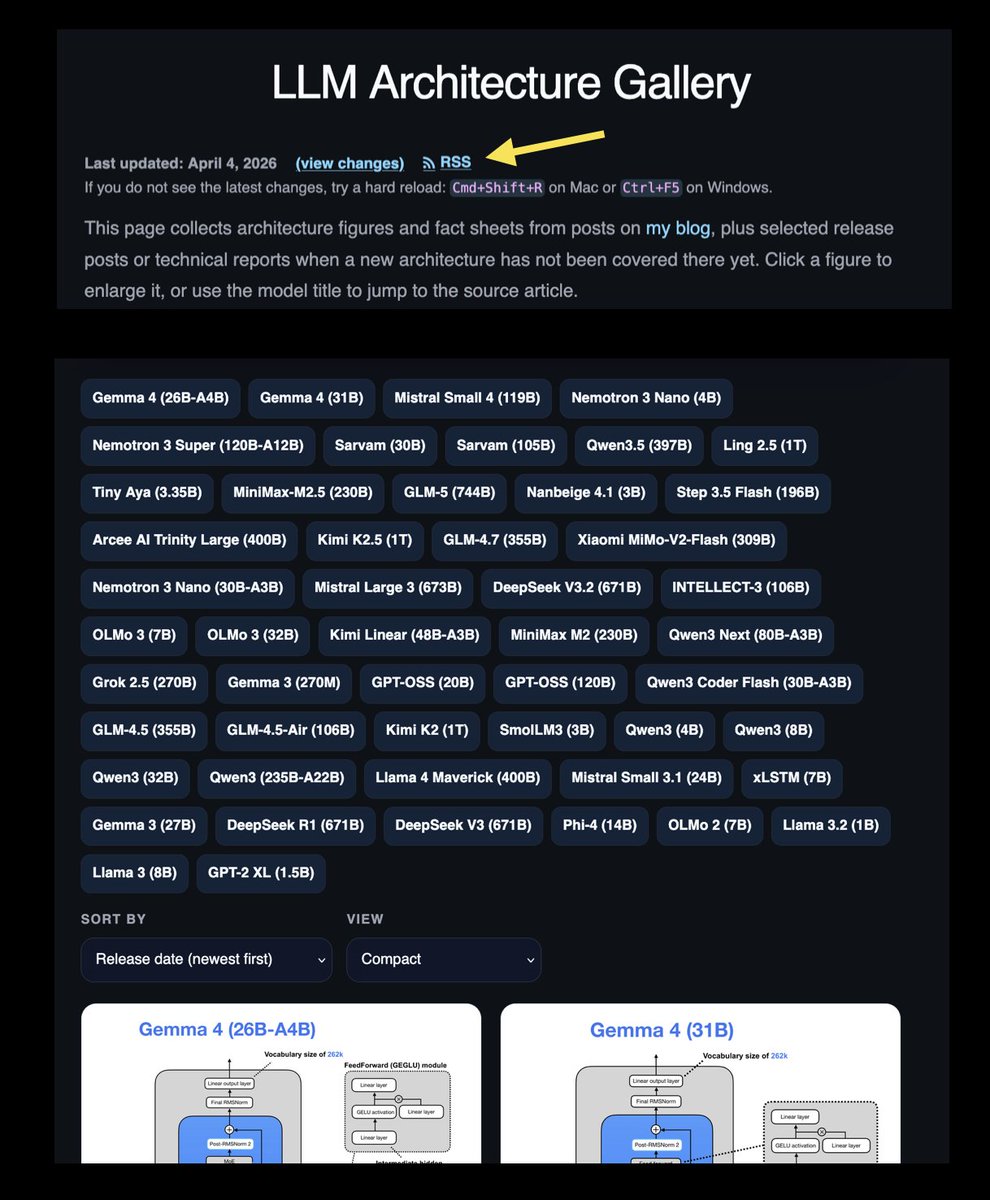

Added an RSS feed to the LLM Architecture Gallery so it is a bit easier to keep up with new additions over time: https://t.co/NO7z6XSRHS https://t.co/7PKrLT1A6S

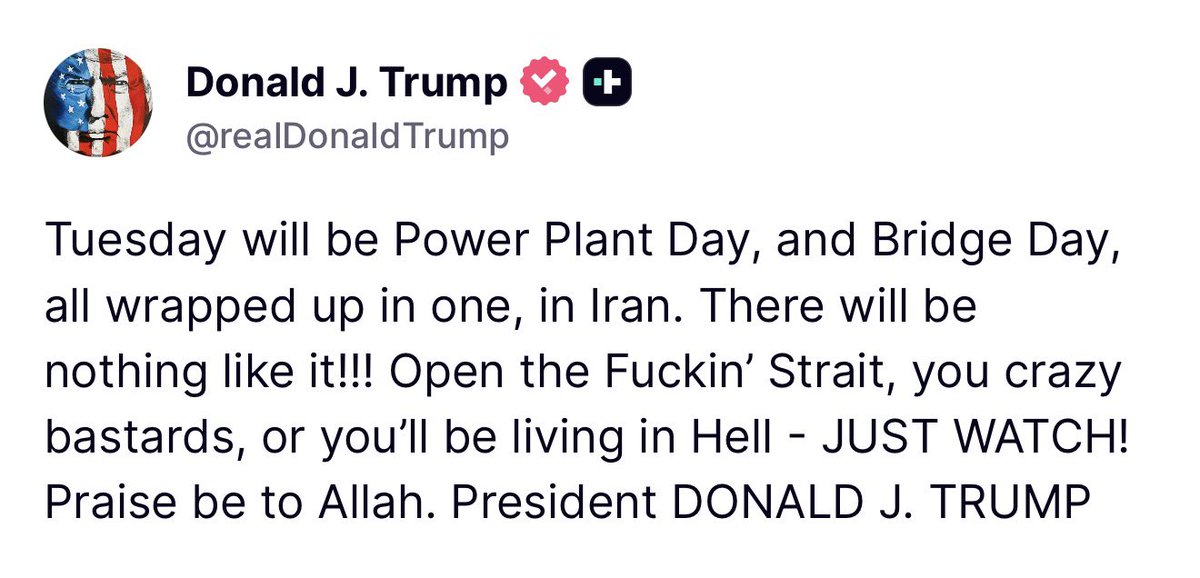

Trump's speech on Iran "was perhaps the clearest evidence yet that the leader of...the world’s predominant superpower is an utterly chaotic thinker, whose aptitude for his job—never obvious to begin with—appears to be in accelerating decline." https://t.co/hRsohZeC46

If you elected this, you fucked up big time. https://t.co/W2vY6seuQB

Just now reading through the Gemma 4 blog. Safe to say the @huggingface team is goated: lots of usage examples and guides on inference and fine-tuning. Highly recommend! https://t.co/eRUn9d9rtG

A strange AI moment is revealing something much bigger in China. What looks like quirky behavior from an assistant points to a broader push where AI is becoming part of everyday economic survival, especially for young people facing high unemployment and intense competition. From “one-person companies” supported by AI to hiring decisions already favoring AI-skilled workers, the message is clear. In this environment, adopting AI is not optional; it is becoming the new baseline. https://t.co/LVDoeasmW4 @bbcnews

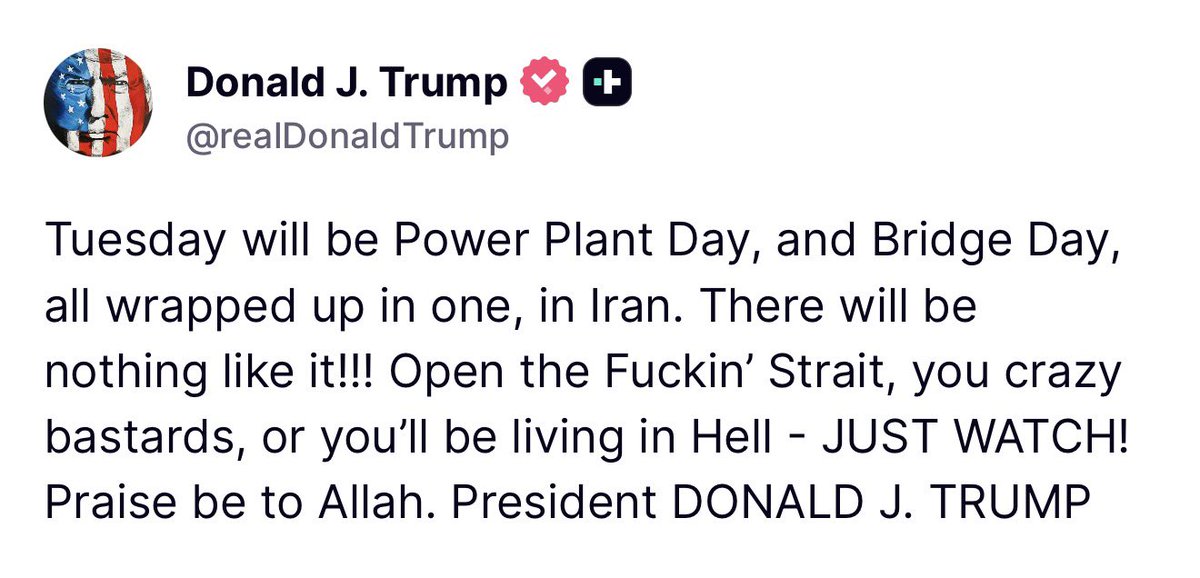

This is not normal. A developer just open-sourced a full AI agent orchestration dashboard that runs 15 providers, bridges 10 chat platforms, and manages a distributed swarm of autonomous agents, all self-hosted. It's called SwarmClaw. You deploy it once. Then you connect Claude, GPT-4o, Gemini, Grok, DeepSeek, Mistral, Groq, Ollama, all of them from the same dashboard. Your agents live inside Discord, Slack, Telegram, WhatsApp, Signal, iMessage, Teams, Google Chat, and Matrix at the same time. Each one has its own persistent memory backed by both FTS5 keyword search and vector embeddings. Each one runs on LangGraph with automatic sub-agent routing and checkpointed execution, so complex tasks never fail silently midway. When an API key is rate-limited, the model failover system rotates to the next credential automatically. When an agent hits a long-running task, the background daemon processes it on a 30-second heartbeat while you do something else. The OpenClaw Gateway feature lets you point different agents at different OpenClaw instances running on different machines: one on your laptop, one on a VPS, one in another country. You manage the whole swarm from a single mobile-friendly UI. Install with one npm command. Deploy via Docker. Update with one button in the sidebar. 107 commits. 24 releases. MIT License. 100% Open Source.
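The post only says the failover system "rotates to the next credential automatically." A minimal Python sketch of that idea follows, assuming each provider call signals throttling by raising a dedicated exception. `RateLimited`, `call_with_failover`, and `fake_provider` are hypothetical names for illustration, not SwarmClaw's code.

```python
from typing import Callable


class RateLimited(Exception):
    """Hypothetical error a provider client raises when a credential hits its rate limit."""


def call_with_failover(keys: list[str], call: Callable[[str], str]) -> str:
    """Try each API key in order, rotating to the next one on a rate limit."""
    last_error = None
    for key in keys:
        try:
            return call(key)
        except RateLimited as err:
            last_error = err  # this credential is throttled; move on to the next
    raise RuntimeError("all credentials are rate-limited") from last_error


def fake_provider(key: str) -> str:
    # Stand-in for a real model API: the first key is always throttled
    if key == "key-1":
        raise RateLimited("429 Too Many Requests")
    return f"response via {key}"


print(call_with_failover(["key-1", "key-2"], fake_provider))
```

This sketch walks the keys strictly in order on every call; a production system would more likely remember which keys are cooling down so it does not retry a throttled key first each time.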

Elon Musk just declared the human eye optional. Not improved. Not repaired. Not reconstructed. Optional. Musk: “Blindsight will enable those who have total loss of vision to be able to see again.” That alone would be historic. Musk: “Including if they have lost their eyes, or the optic nerve.” Eyes gone. Nerve gone. The entire optical pipeline physically missing from the skull. And the solution is not to rebuild what broke. It is to skip it entirely and wire synthetic signal straight into the visual cortex. Every surgery ever performed has tried to restore original hardware to factory condition. Neuralink does not restore. Neuralink treats the biological organ as optional infrastructure. Eye is gone. You do not rebuild the eye. You route around it. You stream raw visual data into the brain and let the cortex do what it was always doing anyway. Processing signal. Your eye never saw anything. Your brain saw. The eye was the middleman. It captured a narrow band of electromagnetic radiation and shipped it to the visual cortex. That is where the image was actually built. Neuralink is firing the middleman. Musk: “Maybe have never seen, were even blind from birth.” A person who has never perceived a single photon of light. Given vision for the first time. Not through healing. Through hardware. And then Musk said the part that should rewire how you think about being human. Musk: “You can see in radar, you can see in infrared, ultraviolet.” This is where it crosses from medical device to species upgrade. The human eye processes roughly 0.0035% of the electromagnetic spectrum. You are walking through a universe flooded with information and your biology lets you perceive an almost immeasurably thin sliver of it. Infrared is bouncing off every surface around you right now. You cannot see it. Radio waves are passing through your body this second. You have no idea. Your biology decided four hundred million years ago what you were allowed to perceive. 
That decision has never been appealed. Until now. A person with Blindsight would not see like a human. They would see like a machine. Thermal signatures in total darkness. Ultraviolet patterns invisible to every sighted person alive. Radar imaging through walls and weather and smoke. The person born blind would not just gain sight. They would gain sight the sighted have never had. Musk: “Superhuman capabilities.” If you can replace the eye you can replace the ear. If you can stream vision you can stream sound, pressure, spatial orientation, sensory inputs that do not even have names yet because biology never built receptors for them. Every human sense is just a biological sensor converting environmental data into electrical signal. Replace the sensor and the brain does not care where the signal came from. It just processes. That is not a medical company. That is the first serious engineering effort to open the human nervous system to direct hardware integration. Musk: “Cybernetic enhancement.” He said it like a line item on a roadmap. Those two words contain the entire future of the species. The senses are ports. The brain is an operating system waiting for better peripherals. We spent millions of years evolving eyes that could see just enough light to not get eaten. That was the design spec. Survival. Not comprehension. Neuralink is the first product built on a different spec. Every person on earth right now is experiencing a filtered, compressed, biologically throttled version of the universe. Most will live and die believing that narrow bandwidth is all there is. Musk is building the device that proves it never was. It was just all you were equipped for. The eye was not the end of sight. It was the prototype.

This tool can download literally anything from link 🤯 100% free. open source. no ads. https://t.co/62HYPwmYZ0

just won best use of Browser Use at Diamond Hacks! We built an ADA compliance analysis and immersive 3D platform for people with mobility impairments. We implemented Gaussian splatting for the interior view and deployed Browser Use agents for compliance checks + annotation. (thanks @browser_use + @reagan_hsu for the opportunity and the iPhone, crazy timing 'cause I'm out of storage)

🚀 Just discovered a dope curated AI news website, in a format that I absolutely love! https://t.co/wxrlxyoz0W - Super clean interface. The format is not only dope for human consumption, but the robots love it too. And they have an RSS feed. I think this is a @Scobleizer production. Great tool. ------------------ With that, my balanced news roundup consists of: 1. https://t.co/k9Y7ihOdMk - General-purpose AI and software news. Great UI and good intel. ~~ @Scobleizer & @LevangieLabs 2. https://t.co/wG5ZYK8rYE - This has been a staple in my back pocket. It curates news from some of the AI giants, by the https://t.co/XJkYn0v1oA | @replicate team. 3. HackerNews - Of course you have to have @hackernews by @ycombinator.

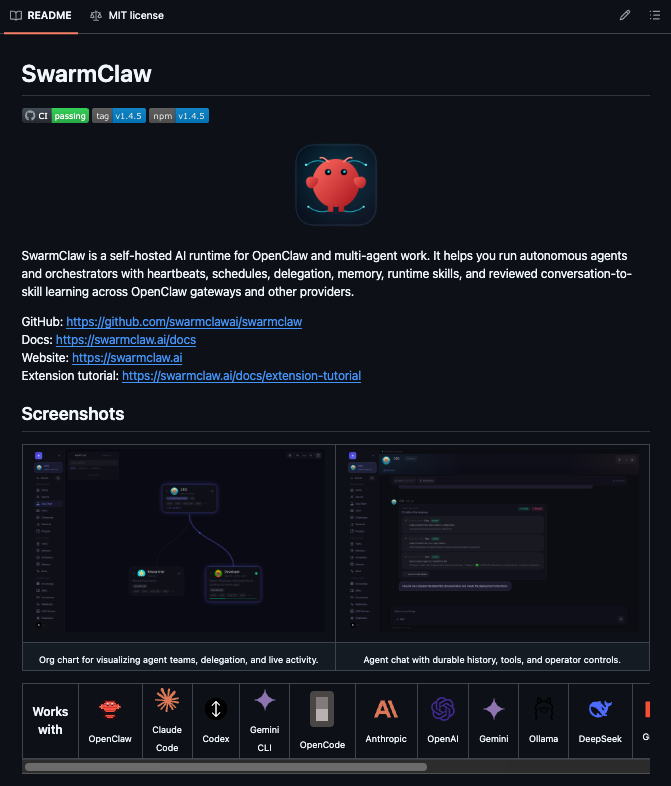

My latest project is called Veritas which is a "Narrative Intelligence Platform." It pulls from hundreds of data sources in real-time: social media (Twitter, Reddit, Bluesky, Telegram, 4chan, Farcaster, YouTube), 177 curated news feeds, plus environmental data (earthquakes, disasters), economic signals (crypto markets, stock markets, central bank data), conflict tracking, humanitarian reports, and more.

@ParallaxPilgrim My AI news system at Aligned News only watches X for AI: https://t.co/kiuZ7QXLzb It is both better and worse. More focused but not as broad. This is another example of how highly personalized news is on the way and will disrupt everything. Congrats, this rocks!

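"TRiGS": a new method for 4DGS dynamic scene generation at the level of a single video scene. To prevent the memory explosion caused by Gaussians appearing and disappearing, it integrates rigid-body motion, preserving Gaussian lifetimes and suppressing unbounded memory growth. It achieves high fidelity and stability even on long videos of 600 to 1,200 frames. https://t.co/OkPeCKjp0m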

I've wanted a voice AI to let me go through my emails on the way to work for years. GPT 5.4 just one-shotted it. https://t.co/Uif9Fg9MI4

Watching a liberal slowly wake up. https://t.co/TYXeVMoHIQ

The creator of Diffusers & former core maintainer of Transformers is coming to Paris! 🎨 Patrick von Platen of @MistralAI joins #PyTorchCon Europe to discuss scalable #AI systems & his work on vLLM. 📍 Paris | 7-8 April Learn more: https://t.co/LrfPfk8uQ2 Register: https://t.co/prWHolBpFf

Friendly reminder that Gemini has a built-in alternative to OpenClaw. Yes. Agent mode can literally perform any task: - Leverage a web browser to use any site/tool - Connect to Gmail, Calendar, Drive, Tasks, etc. - Generate presentations with Slides - Use YT videos as sources - Create tasks, archive emails, draft responses. And much more, like Deep Research, Canvas, and so on. You can also ask Gemini to run Agent every day, once a week, etc. for recurring tasks. Probably the feature that people sleep on the most.

Three weeks ago, we launched Genspark AI Workspace 3.0 and Genspark Claw. But we’re already building what’s next. 4.0 is coming! For the first time ever, we'll be demoing major new features live. April 7 at 8 PM PT / April 8 at 12 PM JST & KST Register for the livestream! 👉 Youtube: https://t.co/NO4RMfVDHv X: https://t.co/pU7bMvhO4G

🚨 BREAKING: Someone just open-sourced a fully autonomous AI RED TEAM. It's called PentAGI. An autonomous AI system that coordinates hacking attacks with zero human input. One agent does recon, another exploits, and another writes the final report based on what they find. 100% Open Source.