Your curated collection of saved posts and media

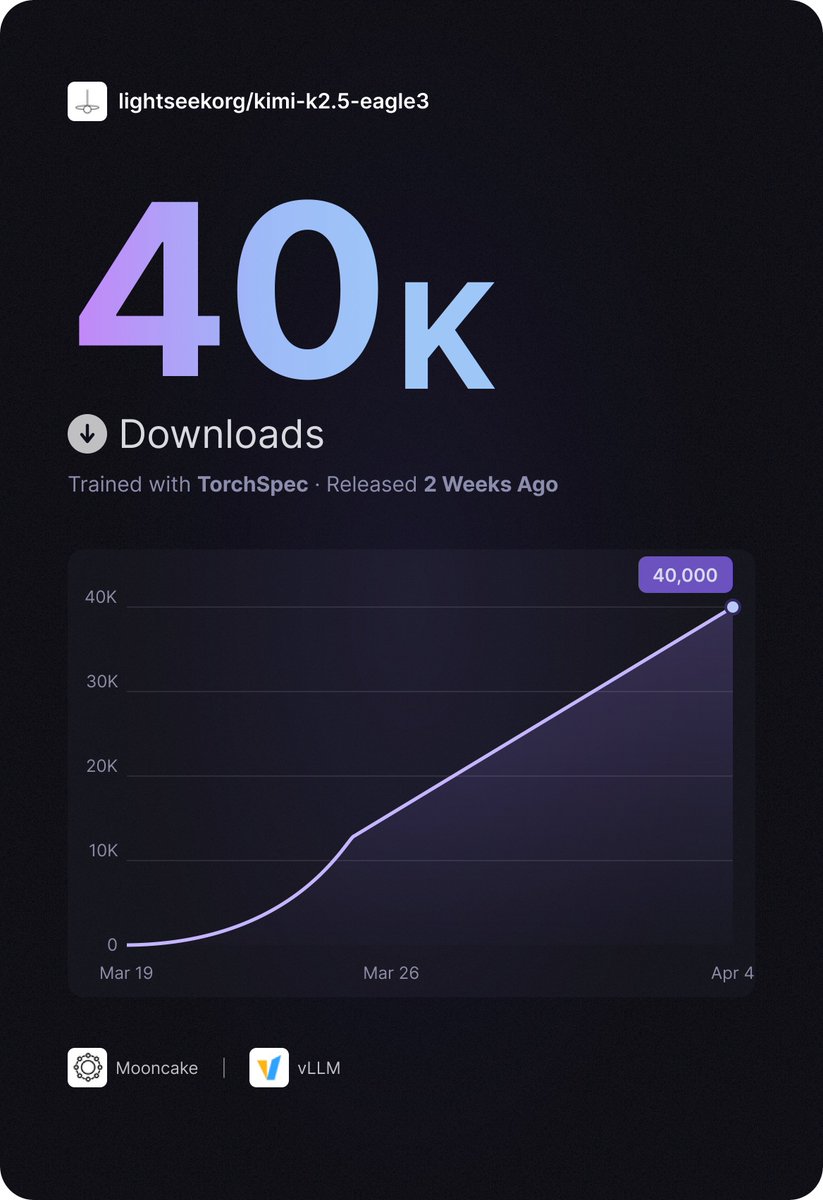

🚀TorchSpec has been live for 2 weeks — and kimi-k2.5-eagle3 just hit 40K downloads on HuggingFace! Thanks to @KT_Project_AI Team and @vllm_project Team for the amazing collaboration. Links in comments. https://t.co/2L4xL100L2

@jyothiwrites This is pretty good https://t.co/Ohe6Qubavq

Stabilizing video from a camera on a running animal turns out to be brutally hard. Traditional stabilization breaks pretty quickly. We're starting to crack it. Before → After from our latest Petpin pipeline. https://t.co/L4NWftjfxC

Google quietly releases an offline-first AI dictation app on iOS https://t.co/5uWR3t1RCS

Demis Hassabis (@demishassabis), co-founder & CEO of Google DeepMind is coming to YC! > Fireside chat with @garrytan on the future of AI > Keynote from DeepMind researchers on Gemini + Gemma > Talks & live demos from YC founders building on Google's AI stack > Meet DeepMind engineers 1:1 at our "Meet the Team" happy hour If you're building at the edge of AI, you should be there. https://t.co/3YzhX0Vkqu

Our run-rate revenue has surpassed $30 billion, up from $9 billion at the end of 2025, as demand for Claude continues to accelerate. This partnership gives us the compute to keep pace. Read more: https://t.co/XgSjL0And7

The founder of Postman says you have to kill your existing org chart, especially if you're still operating with a pre-AI hierarchy. The modern org chart, according to @a85:
- wide span of control (even within the exec team)
- work directly with ICs, not through layers
- either you're building, or you're selling
Projects are led by staff/principal engineers with high agency. They see across the board as well as deep in the stack. Product managers are building APIs and prototyping in Claude instead of writing PRDs. Designers are shipping PRs through Cursor directly instead of relying solely on Figma. Everyone is building. And management's job is to develop better judgment.

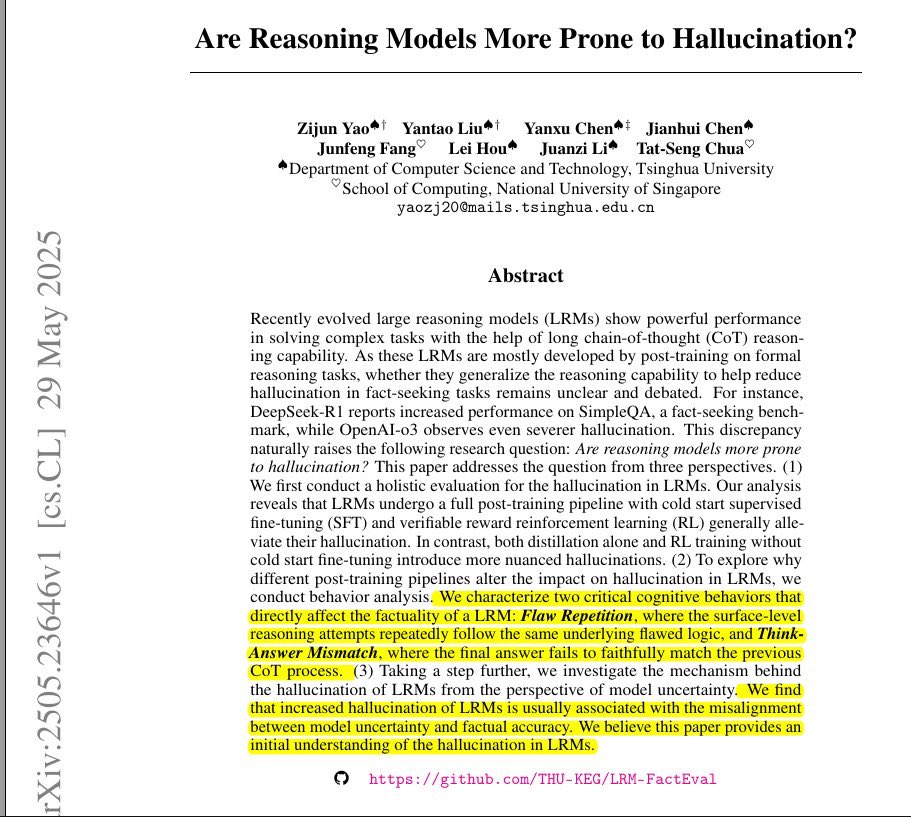

@aran_nayebi @Kasparov63 here’s an example where pretty recent reasoning models still hallucinate according to at least one measure where there is actual data. I think you owe me an apology. https://t.co/GQv4iYbyAW

Got a suspicious URL but don't want to click it? 🔒 @urlscanio visits it for you - capturing a screenshot, every domain contacted, every script loaded, and the tech stack behind it. Used by Reuters in a real hacking investigation. Free for basic use: https://t.co/udZsKthjwf https://t.co/iKx9HfTBU8

🚨 Launching: The OSINT Tools Library A curated, investigator-first directory of tools used in real cases. → https://t.co/U5CJ3JJmHE We’re building the largest and best maintained OSINT tools resource and need your help. Reply and tag a tool we should add 👇

@ntoddpax seems that way, e.g. https://t.co/1gEwAonUS7

@aran_nayebi @Kasparov63 here’s a random one that i found quickly, from last year. https://t.co/E5K6oWItUD

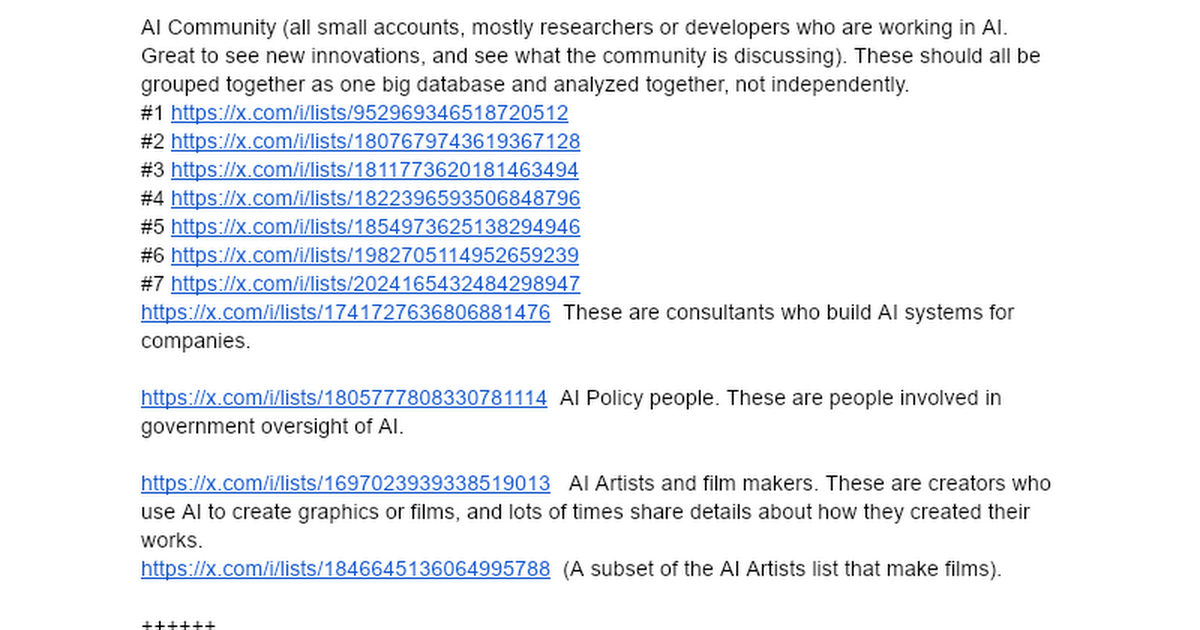

I love X. Over the last 19 years I built a set of lists of the tech industry. That's at https://t.co/tQxJhXvAUE And what can you do with lists? So much. Have @Pokee_AI or your favorite AI agent platform use them (Pokee includes the X API as a standard feature so you don't have to pay extra). On Saturday I had it watch my Climate list and make an app that showed all the storms across America. That took a few minutes. I also built a separate app that watched my news lists (about 14,000 people and organizations) and made me an app to watch news about the Iran war. Lists are the secret. They are purified content for your AIs to use to build new kinds of apps. Like this one, which shows you the best from the AI community here on X: https://t.co/8L5xphk0qQ That site could not exist without lists. Why? The X API won't let you find everyone in the AI world (that site reads tens of thousands of posts from 40,000 people and 8,300 companies every day). I spent thousands of hours making the lists and give them to you for free. No one has a set of lists of tech and news like I do, so if you don't use my lists your apps won't be as good, at least about AI and tech (I have a list of all the world news outlets too). And these lists can do a number of things. My site creates a script so you can have @NotebookLM create you a podcast from today's news, for instance. Thanks to the many subscribers I have. I don't do much extra for them but they subsidize my work, which is expensive (I'm spending about $300 a day on X API charges to build Aligned News, something I can't do forever). So my agents are now helping me send notes to investors over on LinkedIn to shake the tree and see if there's some real business here making custom news and market analysis. That's something else you can do with my lists. After all, I have lists of more than 10,000 investors here, so AI can tell you about investing trends, and a lot more too. I now get why Elon bought X. AI lets you get a lot more value out of X, and in a way very few have really explored. If you are using AI to build new ways to look at X, let me know.

Open source AI is evolving at record speed, reshaping how we train, refine, and deploy models. Hear builders and leaders -- including @jeffboudier @istoica05 and @ying11231 -- discuss how openness drives trust, transparency, and real-world adoption—and what developers need next as models grow more multimodal and agentic. 👉 Watch The State of Open Source AI Panel from #NVIDIAGTC now on YouTube: https://t.co/qL9ZHiLuPw When the community builds together, breakthroughs happen faster. 🙌

Introducing Eastworlds We help robots leave the lab faster Discover More https://t.co/rKwiCHYc8F https://t.co/Usyn7mfi6D

RAG without auth is a data leak waiting to happen 🔐 FGA + @llama_index keeps every document in check. Read the blog 👇 https://t.co/NhtyDIqDmX

Hawk is helping financial institutions tackle one of the sector’s most complex challenges: financial crime. With AI precision and AWS support, Hawk is achieving the trifecta of security, scalability, and compliance. https://t.co/EahhIpuymk

Mission Report is out now! Catch up on everything new in Antigravity + Q&A! https://t.co/GNC23QNqUD

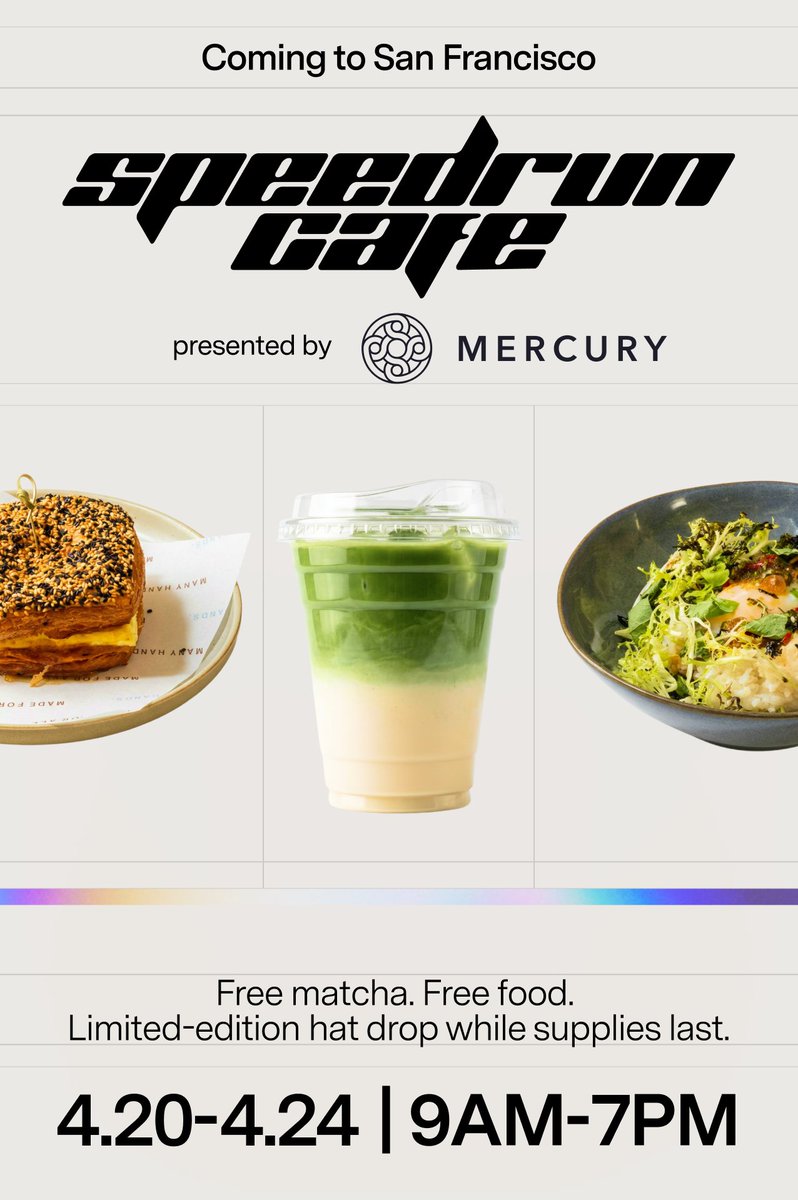

SPEEDRUN APPLICATIONS ARE OPENING SOON Join us IRL at speedrun cafe in SF during the first week of the SR007 application window: April 20th–24th! One drink, one food item, one limited-edition hat per guest (while supplies last) RSVP to snag a spot. Partiful links in replies 👇 https://t.co/8FKhPx3PBj

🚀 HISTORY MADE: Artemis II astronauts travel farther from Earth than any humans ever have. 🇺🇸 https://t.co/8WcfvNYPLC

For the first time ever, Tesla ranked as the #1 imported car brand in South Korea on a quarterly basis, effectively shattering the long-standing dominance of players like BMW. Not just in EVs — across vehicles of all fuel types. Tesla registrations in March skyrocketed +330% YoY. https://t.co/99T17ZqQwq

I don't know what Sam Altman saw internally at OpenAI, but it seems that, according to their definition, AGI is here, and superintelligence is incredibly close. AI models that independently conduct scientific research and find novel solutions are already here, and their internal model appears to surpass everything seen before.

Spitballs by @frogspitsimulat VARIANT: Atom HOST: Sarah https://t.co/OQhUFZQvuV

icymi: we built Studio Auth for @mastra. Now you can secure and add RBAC around deployed Studio functionality like agent execution, editing, and observability https://t.co/v0xB2eue6Q

BREAKING: @samsheffer has joined Google DeepMind https://t.co/TMYmRLR4E0

We can just do things. Like solve crime. I'm thrilled by the progress SF has made, and I'm proud Flock has played an important role. https://t.co/wXfVYNsGi2

https://t.co/Bqkc8KoSiM