Your curated collection of saved posts and media

@jonnym1ller Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one.

This is how the illusion is created:

Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy.

An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion.

Model
↓
Probability distribution
↓ (sampling)
Output (text/image/video)
↓
[Dialogue framing → implied interlocutor]
↓
Human cognition (agency detection + narrative completion)
↓
“It thinks/feels/believes”

Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes.

Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts.

Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.
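
The "Model → Probability distribution → (sampling) → Output" steps in the post above can be illustrated with a minimal sketch. This is a toy, not how any particular product is implemented: the four-word vocabulary, the scores, and the function names `softmax` and `sample_next_token` are all hypothetical, chosen only to echo the post's "happy" and "dancing" example.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into a probability distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(vocab, logits, temperature=1.0, rng=random):
    """Draw one token at random, weighted by its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]


# Hypothetical toy vocabulary and scores (assumptions for illustration only).
vocab = ["happy", "sad", "dancing", "cat"]
logits = [2.0, 0.1, 1.5, 0.5]

rng = random.Random(0)  # fixed seed so repeated runs give the same draw
print(sample_next_token(vocab, logits, rng=rng))
```

Lowering `temperature` concentrates probability on the highest-scoring token, and raising it flattens the distribution; in both cases the output is a draw from a distribution.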

Global AI Debates 2026! Debate the future of superintelligence. Get judged by experts in AI research, safety, and policy. Speech or debate track. $1.5k prize pool. Register by April 10th: https://t.co/yOHkk0U2Sl @FLI_org https://t.co/oLUOiUscoe

sam altman watching ChatGPT hallucinate live on stage is the funniest thing i've seen all week the CEO of OpenAI, on stage, in front of everyone, watching his own AI just make things up in real time and his face says it all this is the guy telling us AGI is coming soon btw https://t.co/6uqT1XovBx

Had early access to @synclabs_so's latest 3.0 model. The accuracy is wild. It captures lip movement, timing, and subtle facial emotion without breaking realism. This feels natural. Well done, team. Animated on @Kling_ai 3.0 model. @therealprady @IamPrajwalKR @RudrabhaM @sama_sanjit @mhadifilms

Google launches the free Google Vids Screen Recorder Chrome extension, allowing users to capture up to 30 minutes of screen time with a single click. https://t.co/KpyUiyMyv5 https://t.co/A7iO4pIlh9

Meet Pocket 📱 Your IM, your MiniMax Agent Desktop, finally connected. Most AI agents still need you at your desk. Pocket doesn't. Sort your local files. Send attachments. Browse the web. All from a chat message. While you're nowhere near your desk. Try today! → https://t.co/VZEkxJ78Sg

Made in NYC with AWS: George Sivulka, Founder & CEO, @hebbia. New York is a central hub for global finance and the ideal home for a startup serving the world’s investors and bankers. Sivulka discusses why being a founder in NY means ‘going against the grain’ and how building on AWS has helped Hebbia scale and embrace generative AI.

The AI race is not one race, but two very different models competing. The U.S. is pushing rapid innovation driven by companies and markets, while China is advancing under strong state direction and control. Each approach has its own strengths and trade-offs. The outcome may depend on adoption, not just technology. The side that wins over more users and ecosystems could shape how AI defines the century. https://t.co/XXLaBAeCXN @bbcnews @MishaGlenny @lukemintz

Time to try out the new Gemma 4 on iPhone. If it can run agents, it might actually become a really useful part of my daily routine. https://t.co/KPG9G1xoko

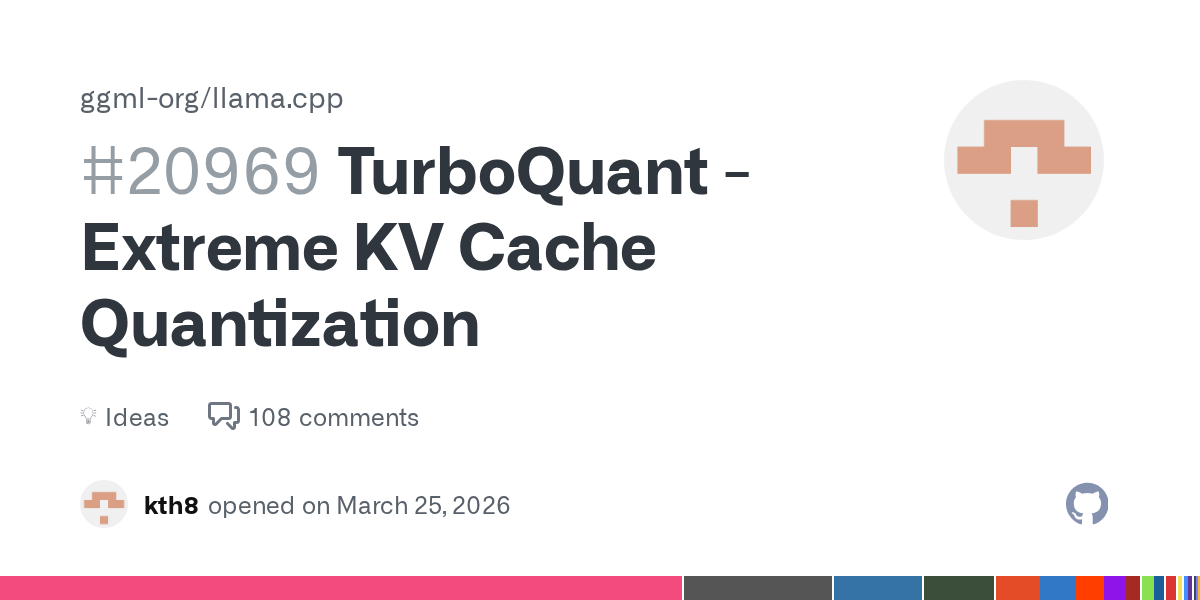

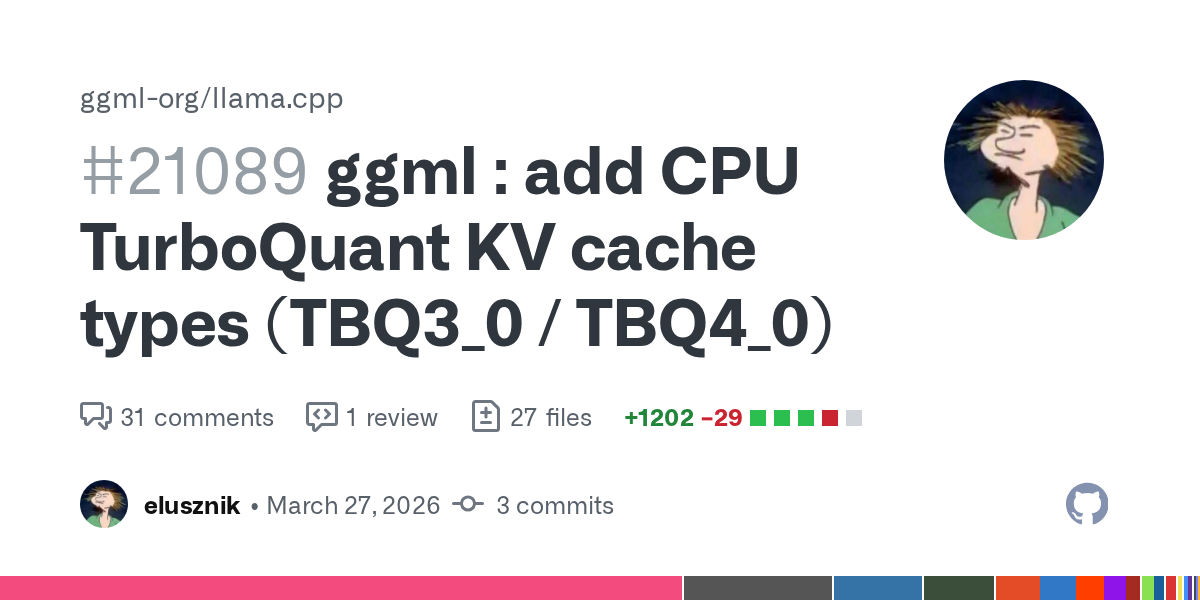

HIGGS: https://t.co/5tILf5wmE6 A weaker version of HIGGS is now standard in llama.cpp: https://t.co/1iWgdUuNtT Hadamard vs rdm benchmarks (I found the same): https://t.co/ZtYLG3b6sI

QJL hurts performance: https://t.co/ZtYLG3b6sI https://t.co/a3KWuDiMLT https://t.co/MFYCMpMx2K

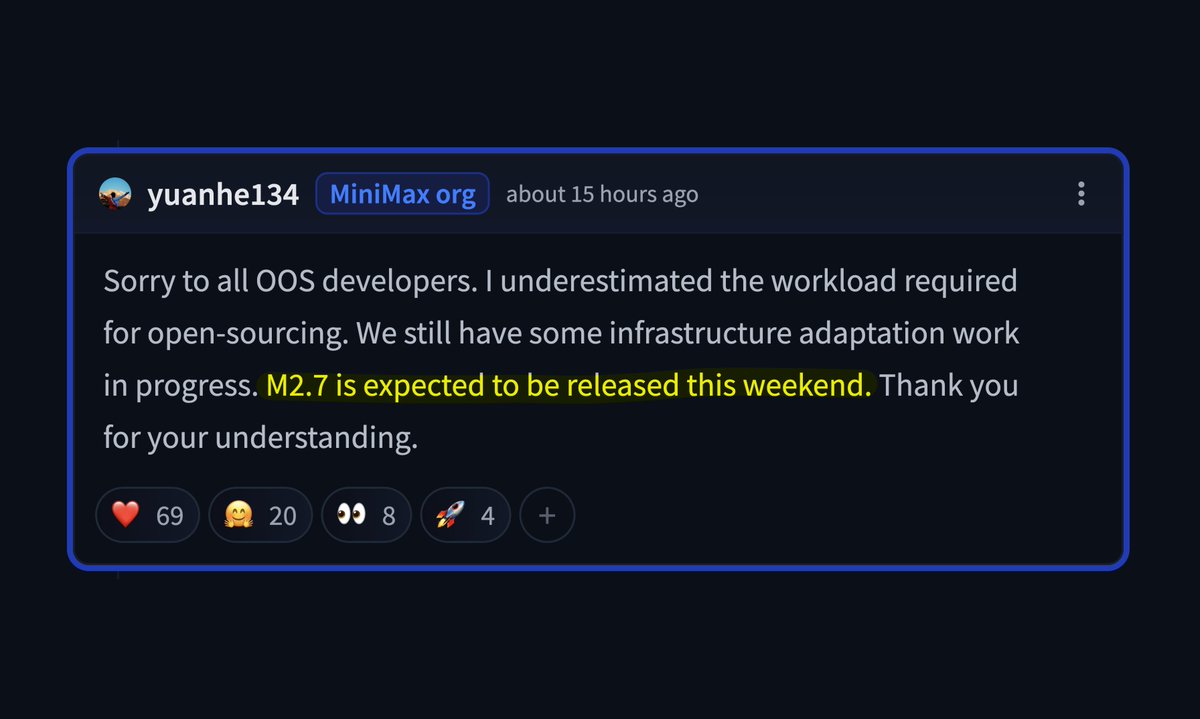

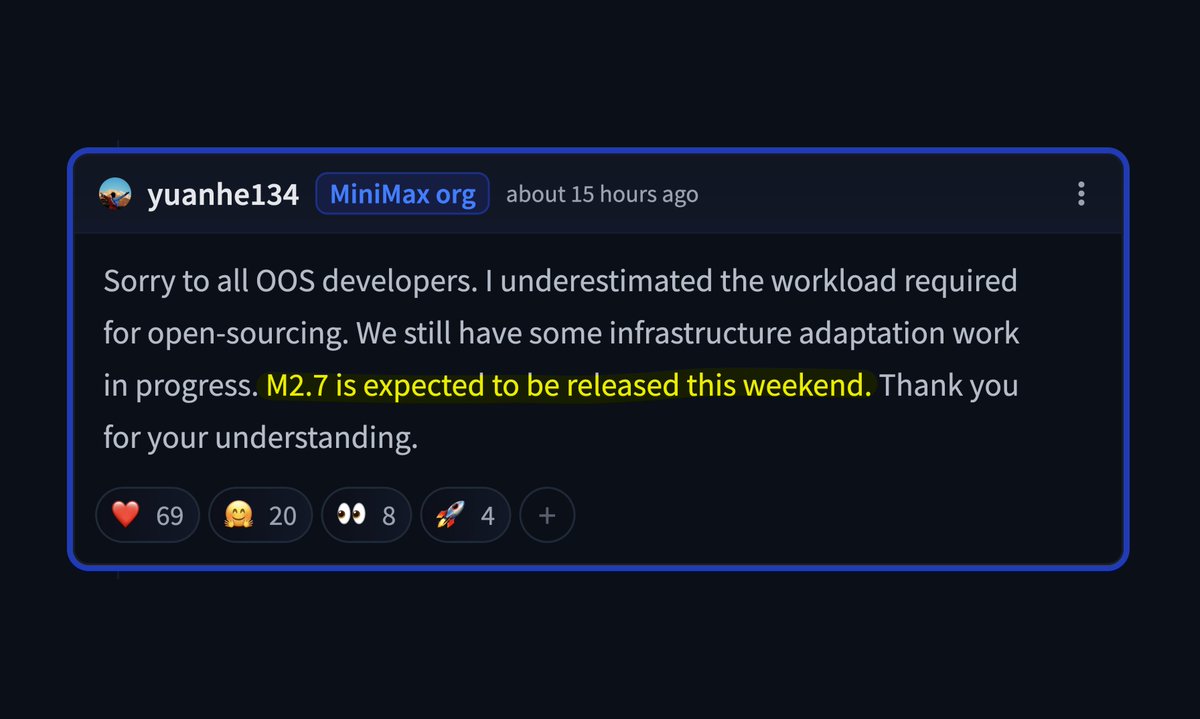

MiniMax-2.7 open weights confirmed and coming super soon - this is going to be a very big one 🔥 https://t.co/qrRuBN0p4N

Helion is now a foundation-hosted project within the PyTorch Foundation, writes Michaël Aussems in ITdaily. The project, contributed by @Meta, aims to simplify the development of AI kernels and improve portability across different hardware platforms. Check out the article: https://t.co/OBshM7K1dT

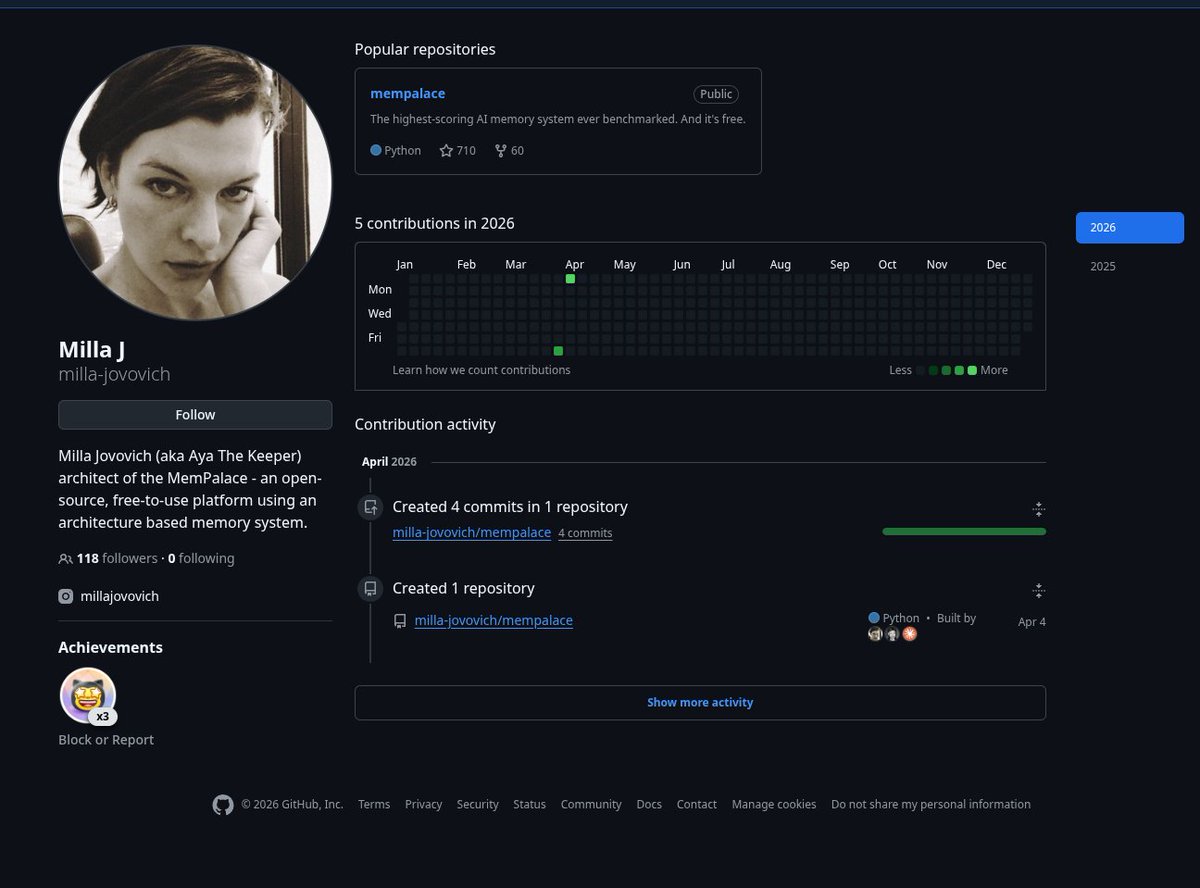

Milla Jovovich has a Github 😏 She's co-developed the highest-scoring AI memory system ever benchmarked with @bensig Totally free and OSS. What a boss. https://t.co/AhVRf4x486

Excited to announce a new open-source, free-to-use memory tool I have been developing with my good friend @MillaJovovich. The project is called MemPalace and it is an agentic memory tool that scored 100% on LongMemEval - the industry standard benchmark for memory… this is higher

Today at #PyTorchCon Europe 2026 we’re excited to share that ExecuTorch is becoming a part of PyTorch Core. ExecuTorch extends PyTorch functionality for efficient AI inference on edge devices, from desktop/laptop to mobile phones and embedded systems. Learn more: https://t.co/REtKoVJ6GZ

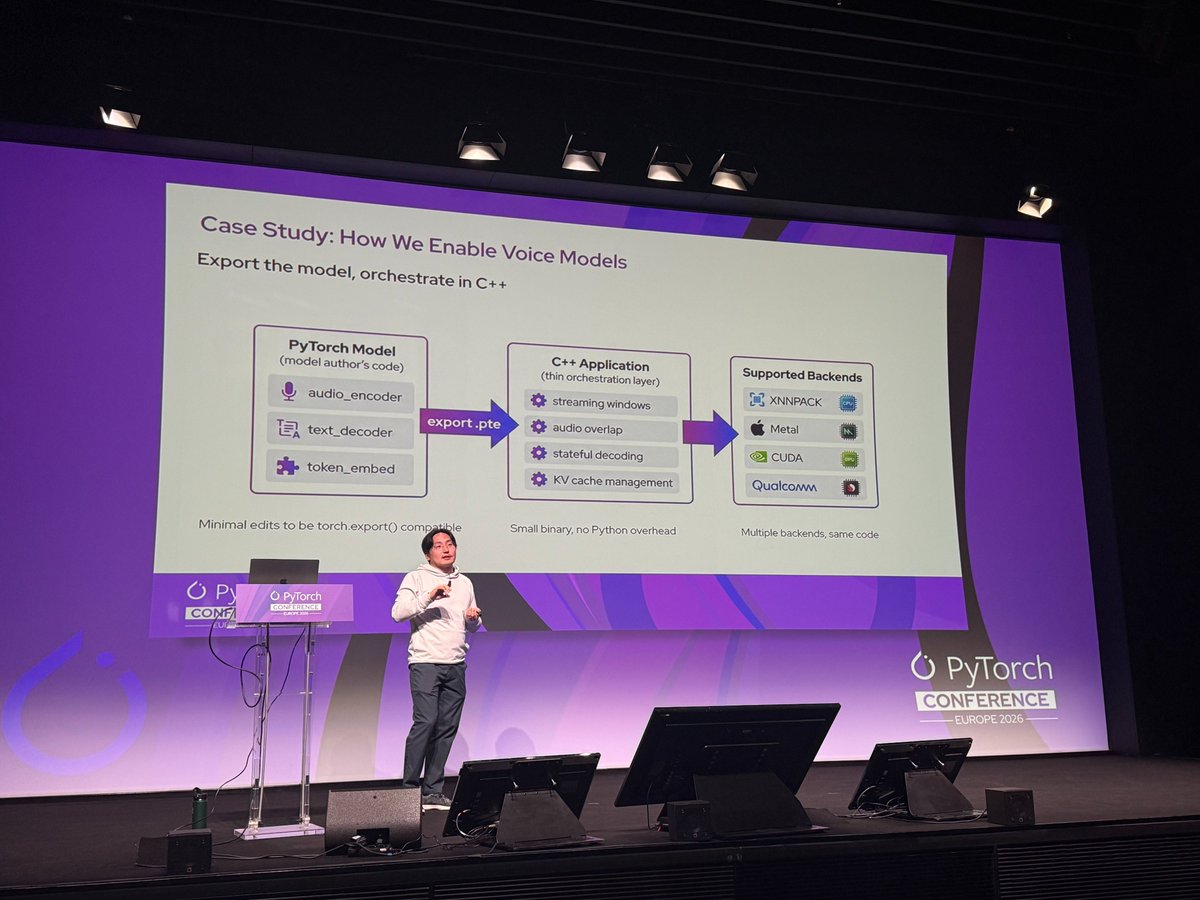

At #PyTorchCon EU, Mergen Nachin of @Meta discusses bringing ExecuTorch to the next frontiers of Edge AI https://t.co/jmnrT7U3f1

🇺🇸🇭🇺 The official visit of shame. While evidence shows that Hungary handed classified information to Russia, J.D. Vance is traveling to Budapest to support Orban ahead of the elections. Beyond the obvious interference right before the elections (though at this point, what's one more), this is an important marker of U.S. foreign policy toward Europeans. That is, they are consciously choosing to support the very person who does everything he can to block the European institutions. We have to realize that in a normal world, the United States would have put maximum pressure on Hungary and Orban to force him to uphold the law. Not to mention the revelations about the collaboration with Russia. So it is official (even if we already knew it) that Washington supports those who weaken the European Union. With this visit, Washington validates the collaboration between Hungary and Putin's Russia. Hold on tight, because at this rate there won't be much left by the end of Trump's second term. Ronald Reagan must be turning in his grave.

🇺🇸🇰🇷 Donald Trump is now going after South Korea, Japan, and Australia. He harshly criticizes his allies and sings the praises of Kim Jong Un. Yes, I said Kim Jong Un. At what point are Americans going to wake up and realize that Trump is…

Mistral AI 🇫🇷 🤝 Sakana AI 🇯🇵 https://t.co/4fiv9Q2Djv

The TorchInductor team dives deep on how to generate state-of-the-art matrix multiply kernels with the new CuteDSL backend. Learn more: https://t.co/xTLltGx6SW https://t.co/b0TRPFrhN8

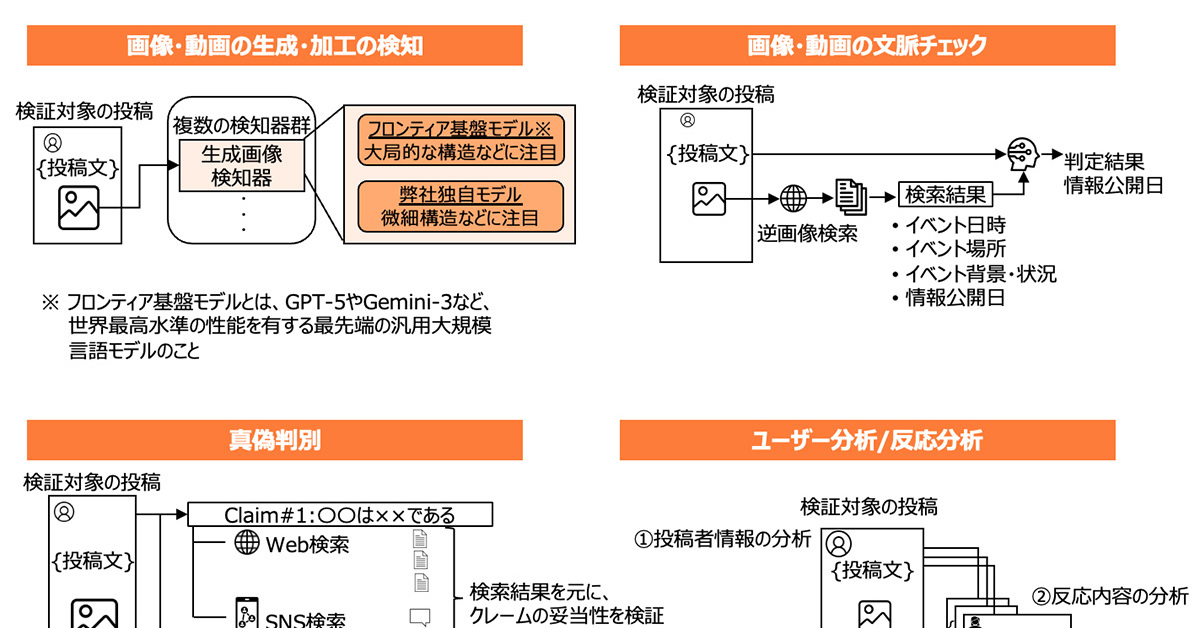

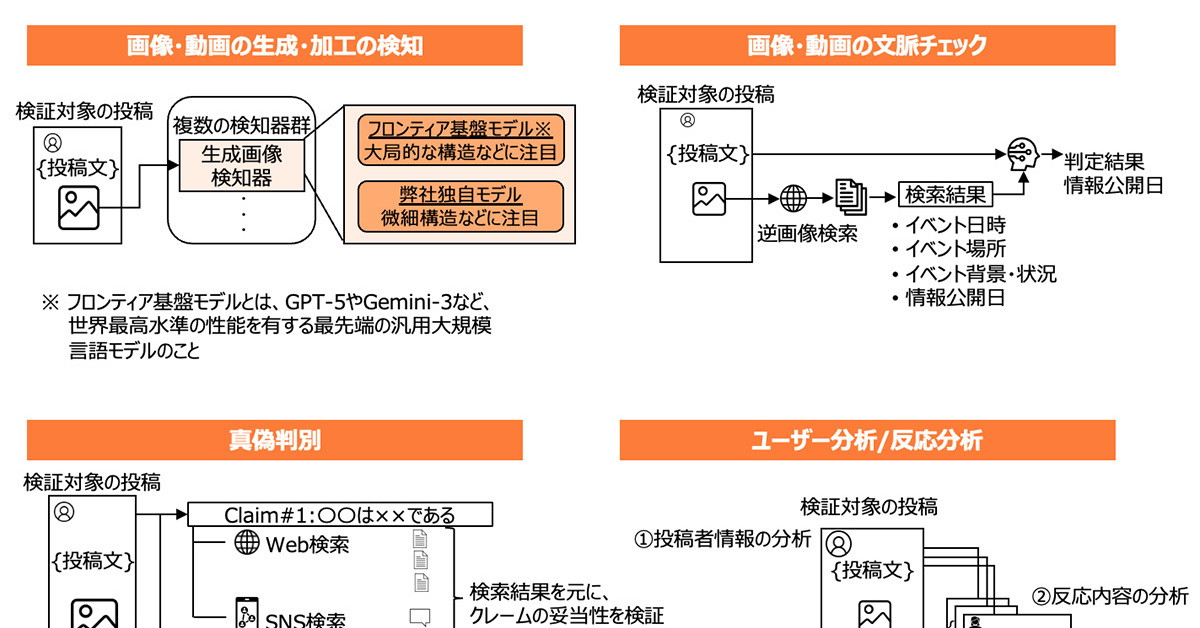

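Technology that uses AI to detect misinformation spreading on social media, developed by Sakana AI under a Ministry of Internal Affairs and Communications project https://t.co/CCpZ7lRhJe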

@thomascygn https://t.co/cnzavfkclR