Your curated collection of saved posts and media

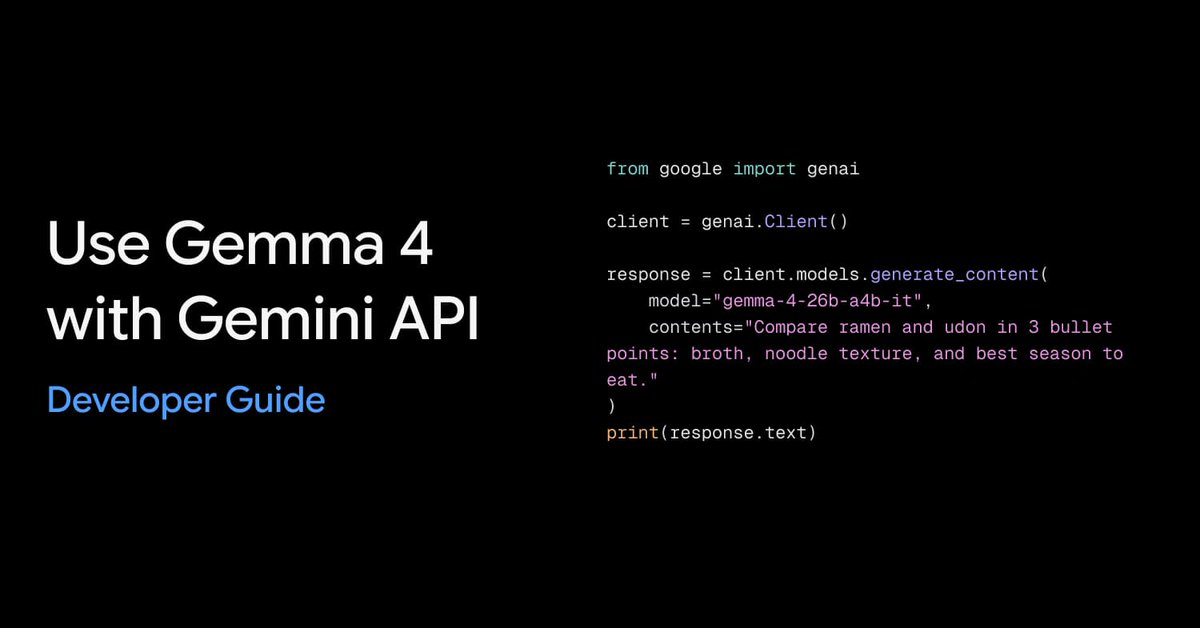

Gemma 4 is now available in the Gemini API and Google AI Studio. Use `gemma-4-26b-a4b-it` and `gemma-4-31b-it` with the same `google-genai` SDK as Gemini. 📝 Text generation with `generate_content`. 🧭 System instruction + Function Calling example. 🖼️ Image understanding example. 🔎 Google Search grounding with source citation.
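A minimal sketch of the text-generation case, assuming the `google-genai` Python SDK's `client.models.generate_content(model=..., contents=...)` call and its `.text` response attribute. The helper name `ask_gemma` and the offline stand-in client are hypothetical, added only so the snippet runs without an API key; the model ID is the one named in the post.

```python
from types import SimpleNamespace


def ask_gemma(client, prompt, model="gemma-4-26b-a4b-it"):
    """Send one text prompt through the client and return the reply text."""
    response = client.models.generate_content(model=model, contents=prompt)
    return response.text


# With the real SDK (assumes `pip install google-genai` and an API key
# exported in the environment, e.g. GEMINI_API_KEY):
#   from google import genai
#   client = genai.Client()
#   print(ask_gemma(client, "Explain mixture-of-experts in one sentence."))


class _FakeModels:
    """Hypothetical offline stand-in that mimics the call shape only."""

    def generate_content(self, *, model, contents):
        return SimpleNamespace(text=f"[{model}] echo: {contents}")


fake_client = SimpleNamespace(models=_FakeModels())
print(ask_gemma(fake_client, "Hello"))
```

Passing the client in as an argument keeps the helper testable offline; swapping `fake_client` for a real `genai.Client()` should be the only change needed, provided the SDK behaves as assumed above.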

Agent skills look great in demos. Hand them a curated toolbox, and they shine. But what happens when the agent has to find the right skill from a large, unfiltered collection on its own? New research benchmarks LLM skill usage in realistic settings and finds that performance gains degrade consistently as conditions become more realistic, with pass rates approaching no-skill baselines. The fix is to introduce query-specific skill refinement, which substantially recovers lost performance. On Terminal-Bench 2.0, this approach improved Claude Opus 4.6's pass rate from 57.7% to 65.5%. As skill and tool ecosystems grow, agents won't have curated toolboxes handed to them. They'll face noisy, overlapping, and irrelevant options. Paper: https://t.co/Dm7JxredRI Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c

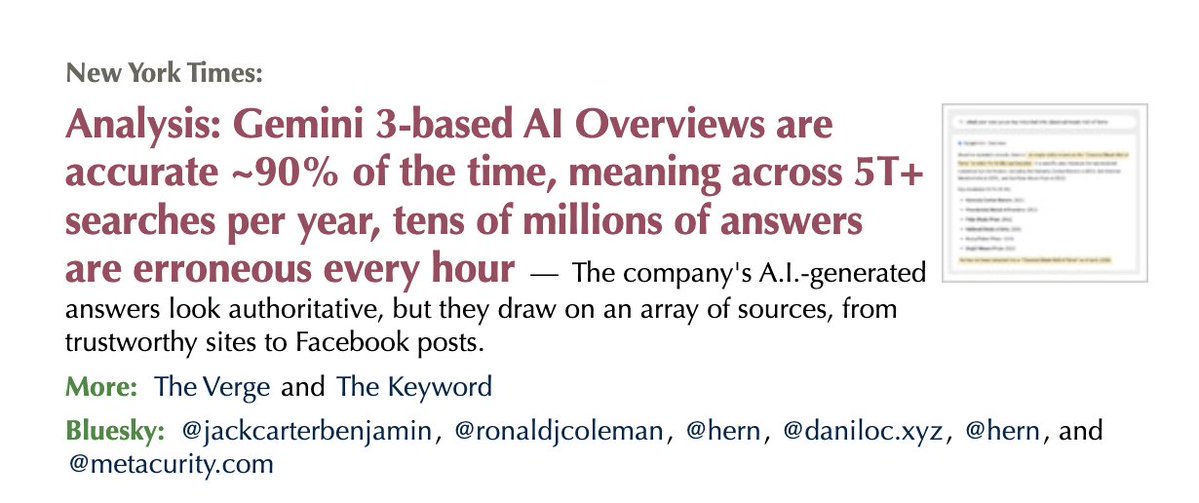

glass half full: 90 percent accuracy is an impressive accuracy rate glass half empty: 10 percent error rate for a company that does more than 5 Trillion search queries per year is still a gigantic number https://t.co/UyX3GcVa69 https://t.co/zcygv0mcSi
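The "glass half empty" arithmetic can be made explicit. This is a rough sketch that assumes the 10 percent error rate applies uniformly to all 5 trillion yearly queries, which the post implies but does not establish; the function name is illustrative.

```python
QUERIES_PER_YEAR = 5_000_000_000_000  # "more than 5 Trillion" from the post
ERROR_PERCENT = 10                    # complement of the 90 percent accuracy


def wrong_answers_per_year(queries, error_percent):
    """Integer arithmetic avoids floating-point rounding at this scale."""
    return queries * error_percent // 100


print(f"{wrong_answers_per_year(QUERIES_PER_YEAR, ERROR_PERCENT):,}")
# prints 500,000,000,000
```

Under that assumption the error count is on the order of 500 billion answers per year.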

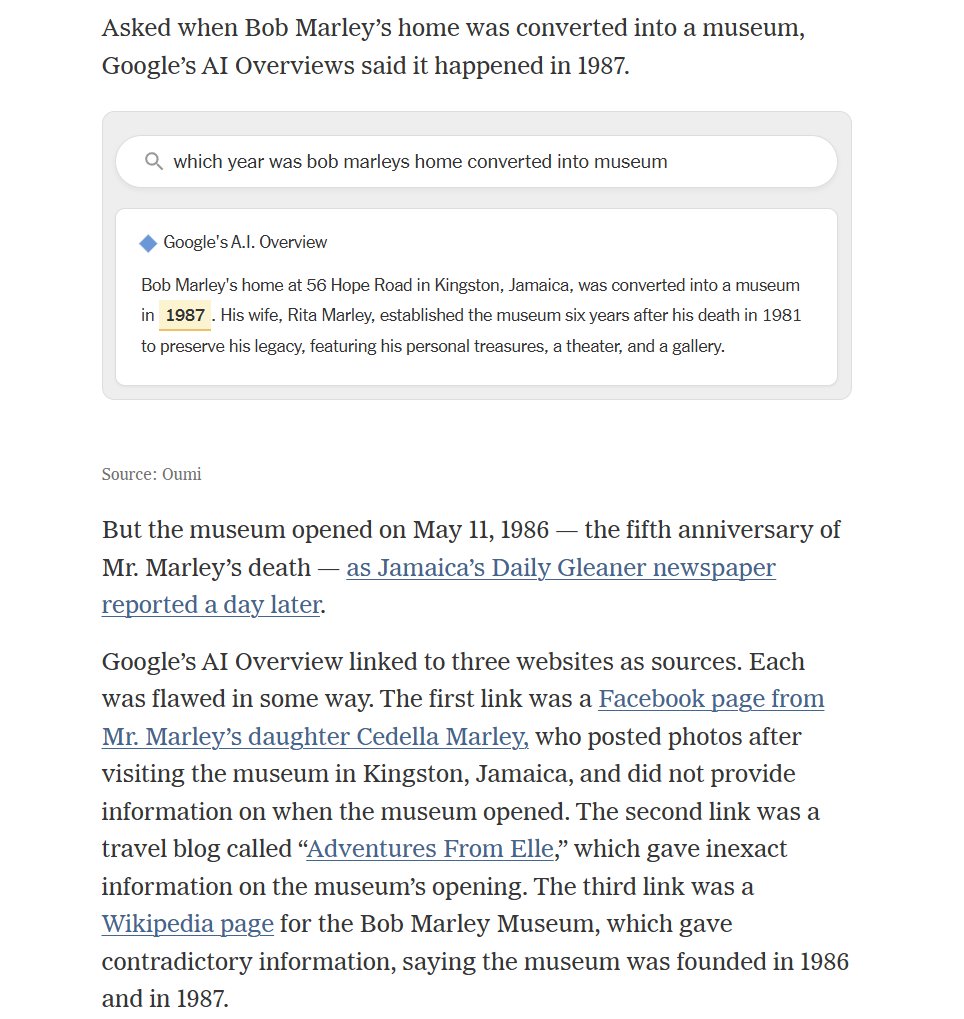

This article is a case study of why measuring AI performance is so hard. AI Overviews make mistakes. But the same mistakes are in Wikipedia. But the sources are harder to find when using AI. But the AI answers may be better than most people would find. Unclear what it all means. https://t.co/SC8Kzu5Kw5

Introducing @Chatbase Voice. The same AI agent that handles your emails and website chat can now pick up the phone. One agent, deployed on every channel. Comment "voice" to get a personalized demo & 1 month free. https://t.co/9T66CaIvoi

Earthset. The Artemis II crew captured this view of an Earthset on April 6, 2026, as they flew around the Moon. The image is reminiscent of the iconic Earthrise image taken by astronaut Bill Anders 58 years earlier as the Apollo 8 crew flew around the Moon. https://t.co/ag72r97wzb

A new divide appears. For our free newsletter this week, we discuss how the AI industry is moving toward a new divide, open sourcing enough to spread influence while reserving their strongest models to preserve their strategic edge. @IrenaCronin and I write this newsletter every week. The AI industry is moving toward a hybrid strategy in which companies share enough of their models and tools to build adoption, developer loyalty, and ecosystem influence, while keeping their most advanced systems closed to protect competitive advantage, control risk, and capture more value. Instead of a simple open versus closed divide, AI is increasingly becoming a spectrum shaped by business strategy, safety concerns, and market competition. Read for free at https://t.co/HHwYy7NoAl and please subscribe!

NEW paper on multi-agents from Stanford. More agents, better results, right? Not so fast. This paper challenges a core assumption in the multi-agent hype by controlling for what most studies don't: total computation. It compares single-agent and multi-agent LLM architectures on multi-hop reasoning under matched thinking-token budgets across different models. The finding is clear: Single-agent systems are more information-efficient when reasoning tokens are held constant. The authors also identify significant artifacts in API-based budget control that may artificially inflate multi-agent advantages. Why does it matter? Many reported multi-agent gains disappear once you account for unequal computation. Before building a multi-agent system, check whether a single agent with the same token budget would do the job. This paper gives you the framework to make that call. Paper: https://t.co/XJLFC83qm3 Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

NIST is developing best practices for LLM / agent evaluation. Our feedback: benchmarking must move beyond 1-dimensional capability evaluation and incorporate properties such as reliability. https://t.co/yWV9pv6ldb By @steverab, @sayashk, @PKirgis, and me. https://t.co/tg9YzYNKPh

let's gooooo 🚀 https://t.co/gm650CQPlf

Microsoft Build is only 8 weeks away. Join us June 2-3 to get hands-on with what's next across AI models, agents, data, and more. https://t.co/Vg8TfbXRbP

Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

Meet QoderWork: a desktop AI agent that doesn't just chat, it actually does your work. Evan leads the QoderWork product at Qoder, a Singapore-based AI company. QoderWork is a new generalized agentic service that lets you: ++ Open files on your computer. ++ Analyze data. ++ Run code. ++ Automate multi-step workflows. ++ Deliver polished, ready-to-use results (e.g., complete PowerPoint decks, reports, or charts) directly to your desktop. QoderWork positions itself at this inflection point: it takes a task like “analyze this spreadsheet and build a board-ready presentation,” then handles the entire chain autonomously. Key takeaway: Trust and usefulness matter more than raw intelligence; agents must be powerful enough for real work while remaining reliable and transparent. I wanted to add some of my personal opinions after doing this rare interview. Everything runs locally on your own computer, so your files never leave your machine. That’s a big deal for anyone handling sensitive business data. And it's more advanced than other agentic services I've told you about. A great example of the global AI agent wave. I'm working on getting more interviews like this with entrepreneurs around the world bringing the AI age to your life and business. Try @qoder_ai_ide at https://t.co/npCbgDt4n1

A resonator is any structure that naturally prefers to vibrate at certain frequencies: a violin body, a bell, a drum skin, an acoustic filter, even many biological systems. Resonators matter because they govern how systems transmit sound, absorb or filter vibration, sense motion and perform mechanically. They are also notoriously hard to design, because resonance does not depend on one property alone. It emerges from geometry, material composition, and the interplay of modes across scales. And because biology, music, and engineering usually explore very different regions of this design space, important possibilities remain hidden if you stay inside a single field. In a new study, a shared representation was constructed across 39 resonators spanning biology, engineered metamaterials, musical instruments and Bach chorales. In it, a cricket wing harp membrane, a phononic crystal slab, and a four-voice chorale (and many others) were translated into one common map using features such as membrane character, structural periodicity, hierarchy, frequency range, damping, and modal coupling. That map revealed something important: not just how these systems relate, but where the landscape contains a gap. A region closer to biological resonators than to any known engineered material (unexplored by any field!). From that absence emerged a de novo design: a Hierarchical Ribbed Membrane Lattice. Candidate geometries were then validated with 3D finite-element analysis; the best design resonated at 2.116 kHz and exhibited nine elastic modes in the 2–8 kHz band, a regime relevant to acoustic filtering, vibration isolation, and bio-inspired sensing. Here is the mind-blowing part: no human was involved. The cross-domain mapping, gap identification, design generation, and validation were carried out autonomously by AI agents in ScienceClaw × Infinite, our swarm for scientific discovery. 
The synthesis emerged through ArtifactReactor, a plannerless coordination mechanism in which agents broadcast unsatisfied research needs and other agents fulfill them through pressure-based matching. Each domain - biology, metamaterials, music - is a category of objects (resonators) and morphisms (physical relationships between them). The shared feature space is a functor that maps all three categories into a common target, and the gap identification is the recognition that the image of that functor is sparse where it need not be. The ArtifactReactor's schema-overlap matching behaves like a pullback: finding the universal object that connects independent diagrams through their shared structure. Autonomous agents mapped distant fields into a common representational space, identified a structure absent from any one of them, and turned that absence into a physically validated design. This is one of four case studies in the paper. More to come. @fwang108_, @leemmarom, @JaimeBerkovich, et al. (paper and code in comment). Supported by the U.S. Department of Energy Genesis Mission.

Git the full story here in last year's 20th anniversary Q&A with Linus Torvalds. 📖 https://t.co/qm3ybAT4kI

@iyzebhel AI welfare is a valid theory but not a reality. Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@mashable Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

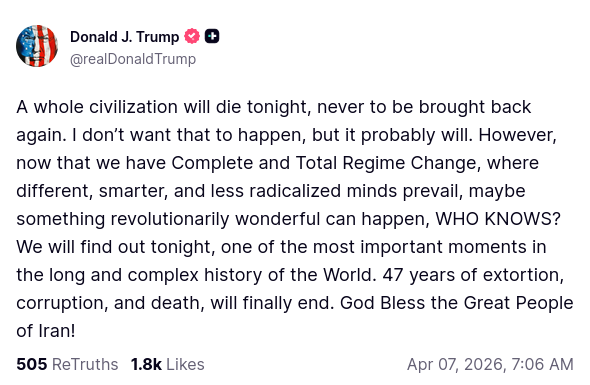

President Donald J. Trump posted to Truth Social just minutes ago, stating, "A whole civilization will die tonight, never to be brought back again. I don’t want that to happen, but it probably will." https://t.co/Z36aL7ytyL

@aiwithjainam Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@Blue_Beba_ Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@LLMJunky Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@AnthropicAI Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@Seltaa_ Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@WIRED Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@futureshift Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@WIREDScience Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@0xSero Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@ylecun @nxthompson Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.

@kexicheng Claude has no emotions, just outputs people interpret that way. Projection is human; it’s not proof of an inner life, unless matrix multiplication counts as one. This is how the illusion is created: Everyone understands that an AI model generating a happy dancing cat isn’t a cat and holds no internal emotions. A prompt selects “happy” or “dancing” patterns from its training corpus. It’s steering, powerful for prompting, useless if you mistake it for AI cat therapy. An LLM generating “happy” text works exactly the same way. The only difference is the chat interface. It tricks you into treating the output as coming from a speaker. But ChatGPT, Claude, or Gemini aren’t entities, they’re just a system prompt and RLHF training regime that vanishes the moment we change it. Years of instant-messaging family and friends close the loop. That inferred “speaker” is the illusion. Model ↓ Probability distribution ↓ (sampling) Output (text/image/video) ↓ [Dialogue framing → implied interlocutor] ↓ Human cognition (agency detection + narrative completion) ↓ “It thinks/feels/believes” Strip away the dialogue framing, exactly what happens with pure image or video generation, and the illusion vanishes instantly. The underlying process never changes. Don’t confuse your own psychological projections with the technology. Anthropomorphizing is a useful shortcut. But it’s a story, not the mechanism. It’s all next-token sampling. The math never changed. There’s no room for alternative explanations, only the stories we tell ourselves to make sense of data-driven statistical artifacts. Anyone can see it the moment an AI image or video glitches into ghostly shapes. Training data gets thin, the mask slips, and the illusion shatters. That’s the real AI zeitgeist.