Your curated collection of saved posts and media

LLMs + Hyper Avatars + Embeddings Friendly customer support agents that can guide you through a problem. https://t.co/9JpUL2S5gD

🤯 TextDiffuser: Diffusion Models as Text Painters Thanks to TextDiffuser team ❤ @jingyechen10934 ❤ @wolfshowme ❤ 🌐page: https://t.co/3lid0oOi41 📄arxiv: https://t.co/jQRpEFCkKY 🧬code: https://t.co/DGE5iU86DV 🦒colab: please try it 🐣 https://t.co/AiT22y3NL2 https://t.co/iLtkIgWHwm

Here are the slides for my @PyDataLondon keynote on LLMs from prototype to production ✨ Including: ◾ visions for NLP in the age of LLMs ◾ a case for LLM pragmatism ◾ solutions for structured data ◾ @spacy_io LLM + https://t.co/j3J6mQ9xJf https://t.co/yWO4koiEi8 https://t.co/6cGGlQljAl

As an ML engineer, I’ve spent a lot of time building forecasting models. Now, I don’t have to build complex time series models or understand market signals. Akkio makes forecasting quick, easy, and accurate with predictive AI. Here’s how: https://t.co/TXtIukQSdo

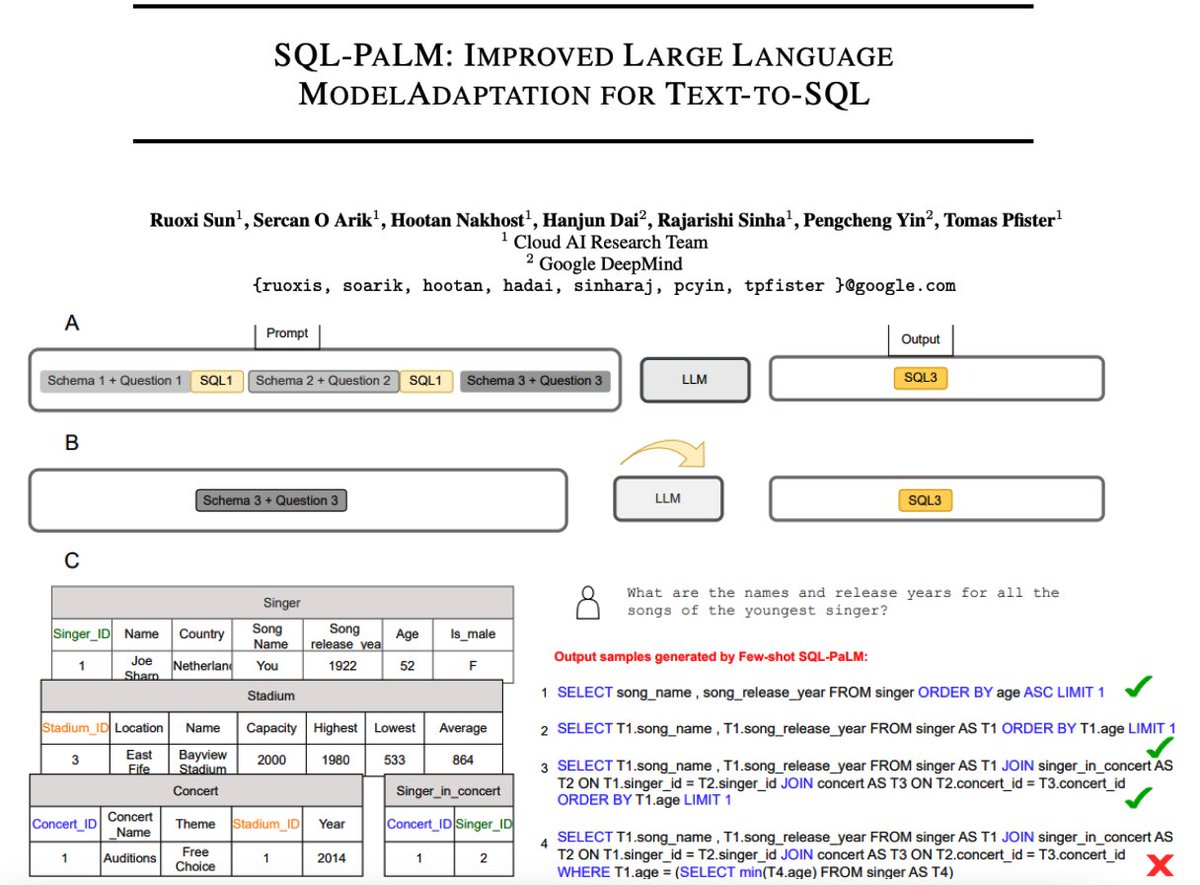

State-of-the-art Text-to-SQL with SQL-PaLM

- proposes SQL-PaLM, an LLM-based Text-to-SQL model adapted from PaLM-2
- achieves SoTA in both in-context learning and fine-tuning settings
- incredible that the few-shot model outperforms the previous fine-tuned SoTA by 3.8% on the Spider… https://t.co/bibigSXcRv https://t.co/cVMW8KSrWB

Big News. NVIDIA just announced Neuralangelo. The new model can turn videos from any device into detailed 3D structures, fully replicating buildings, sculptures, or other real world objects or spaces virtually. Here's how it works: A model utilizes a 2D video with multiple… https://t.co/IW7tVBISU0 https://t.co/qCyUuqmflF

Excited to announce 🧪testcell🧪 It's my first public PyPI package entirely developed with #nbdev. It's a powerful tool for seamless testing and experimentation. Check out the full story of how I got there: https://t.co/94ouD0crEu https://t.co/pYmgP1K3zA

1/ Thrilled to announce: 3 new Generative AI courses!

* Building Systems with the ChatGPT API, with OpenAI’s @isafulf
* LangChain for LLM Application Development, with LangChain’s @hwchase17
* How Diffusion Models Work, by @realSharonZhou

Check them out: https://t.co/IN454k1Wz6 https://t.co/85BP6YbmmZ

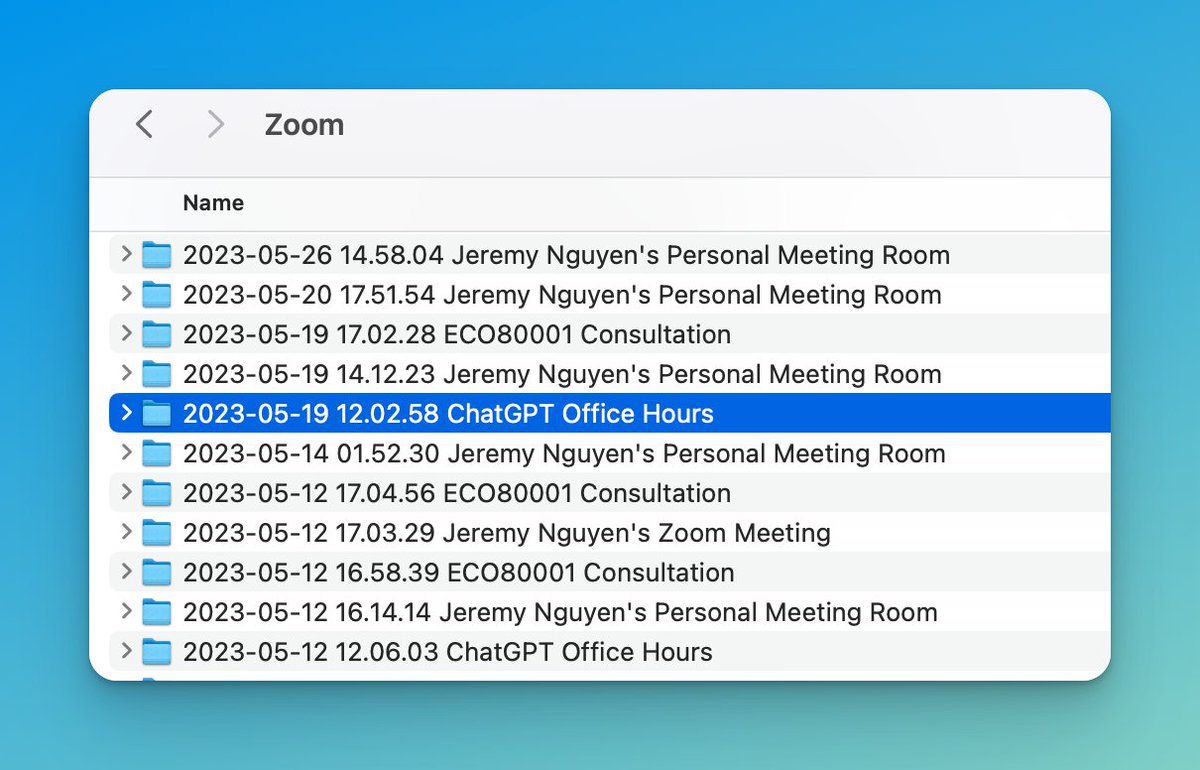

Anyone else have hours of Zoom recordings? @arvindhsundar blew my mind today: With AI, it’s so easy to extract exactly what you need. Here’s Arvindh’s workflow: https://t.co/yhpxPnrBdh

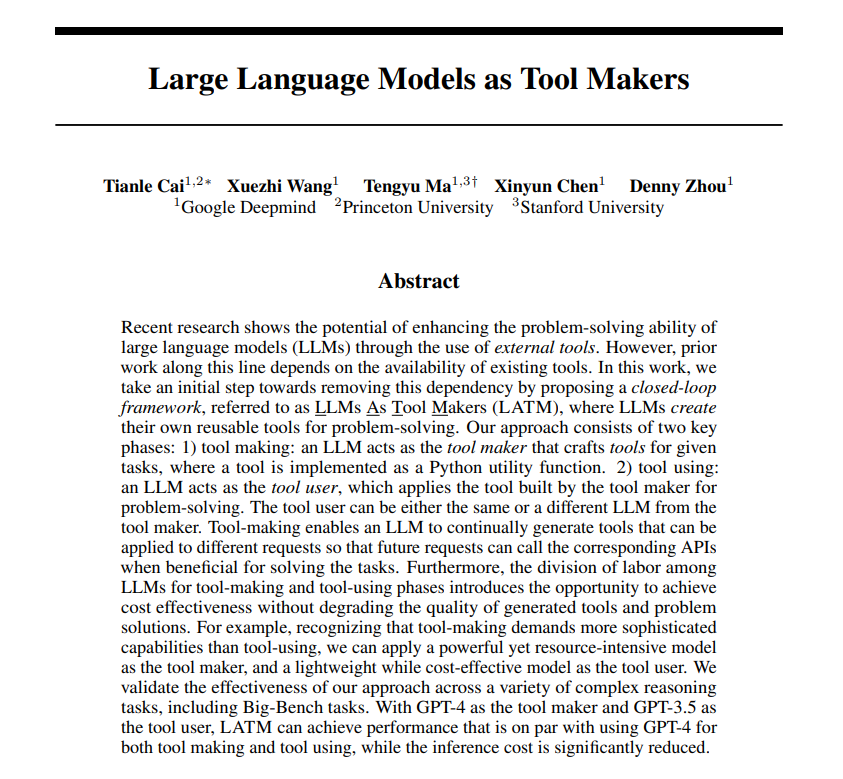

LLMs can make their own tools just like humans! Tool-making enables an LLM to continually generate tools that can be applied to different requests so that future requests can call the corresponding APIs when beneficial for solving the tasks. A closed-loop framework, referred to… https://t.co/RqH1pABV4A https://t.co/T3OmT2qyOc

Chef's pick time: boosting signal-to-noise ratio of AI Twitter, using my biological LLM. Best of AI Curation Vol. 3, covers: No-gradient architecture, LLM tool making and mastery (3 papers!), RLHF without RL, an uncensored LLM, an open course, and more. Deep dive with me: 🧵 https://t.co/30pgqX7lpb

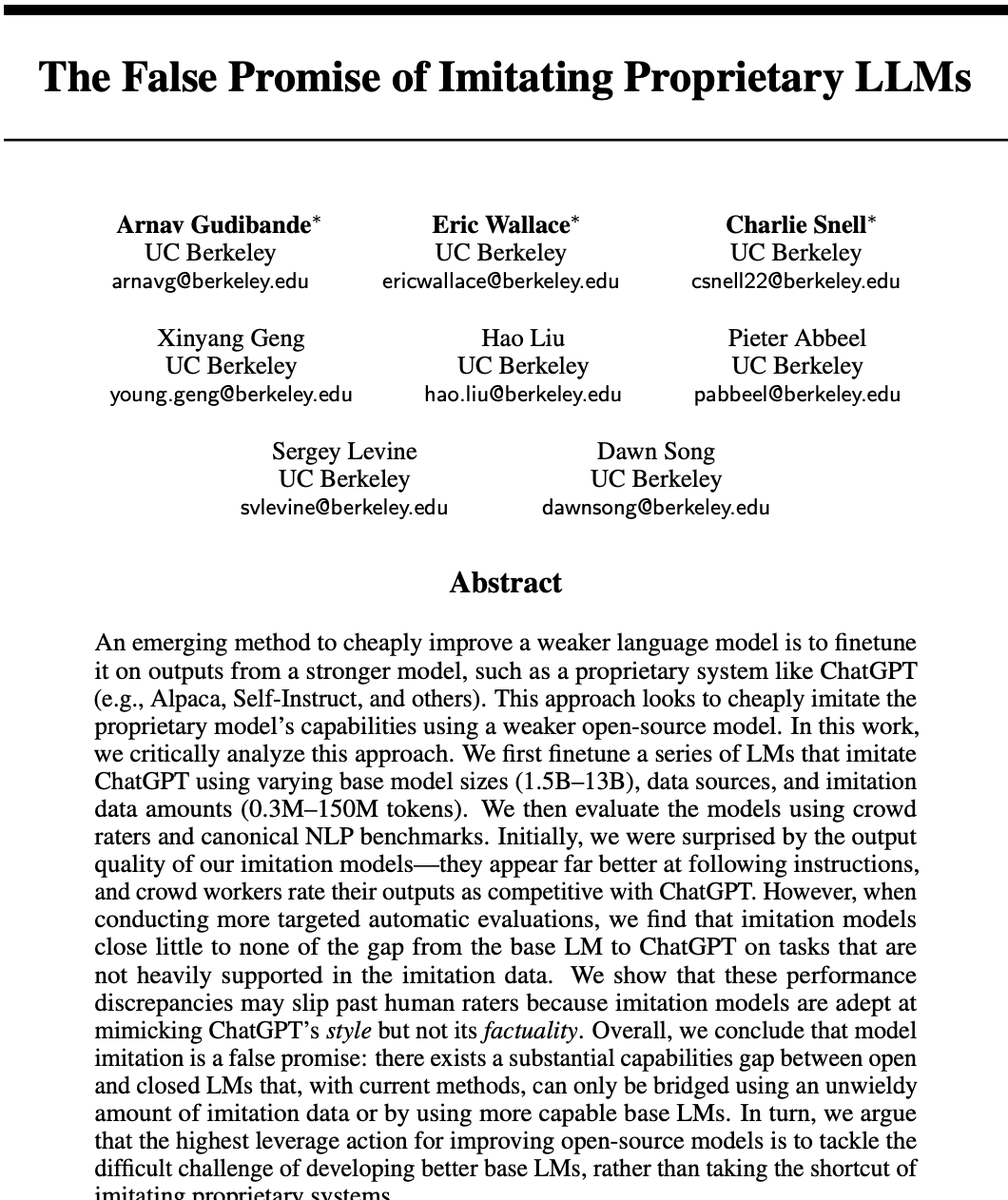

This is worrying.. Many open-source LLMs fine-tuned on ChatGPT (Alpaca, Self-Instruct, Vicuna) are in fact simply mimicking the output without any of its capabilities. This is also true for most weak models tuned on stronger ones. "Imitation models close little to none of the… https://t.co/Hqd4NnpWVM https://t.co/FhADoIwdMs

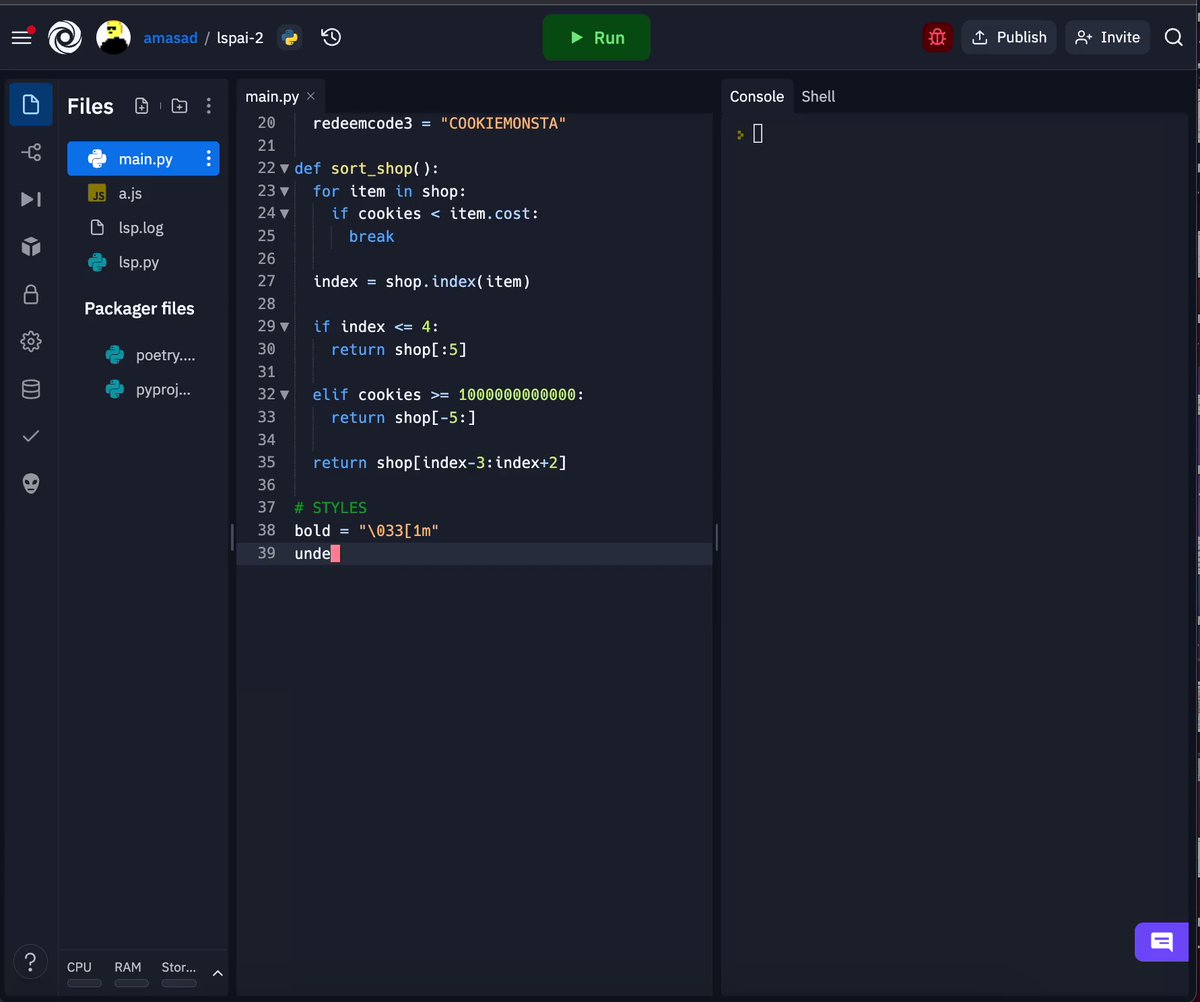

More than a year ago, Ghostwriter proof-of-concept took a few hours to prototype. Now it's a flagship product for Replit. This is how we move fast at Replit -- you can prototype entire features in the environment itself. In this case, the PoC worked by hosting a small OSS LLM… https://t.co/Vggngx1jty https://t.co/TWrGmmNMEG

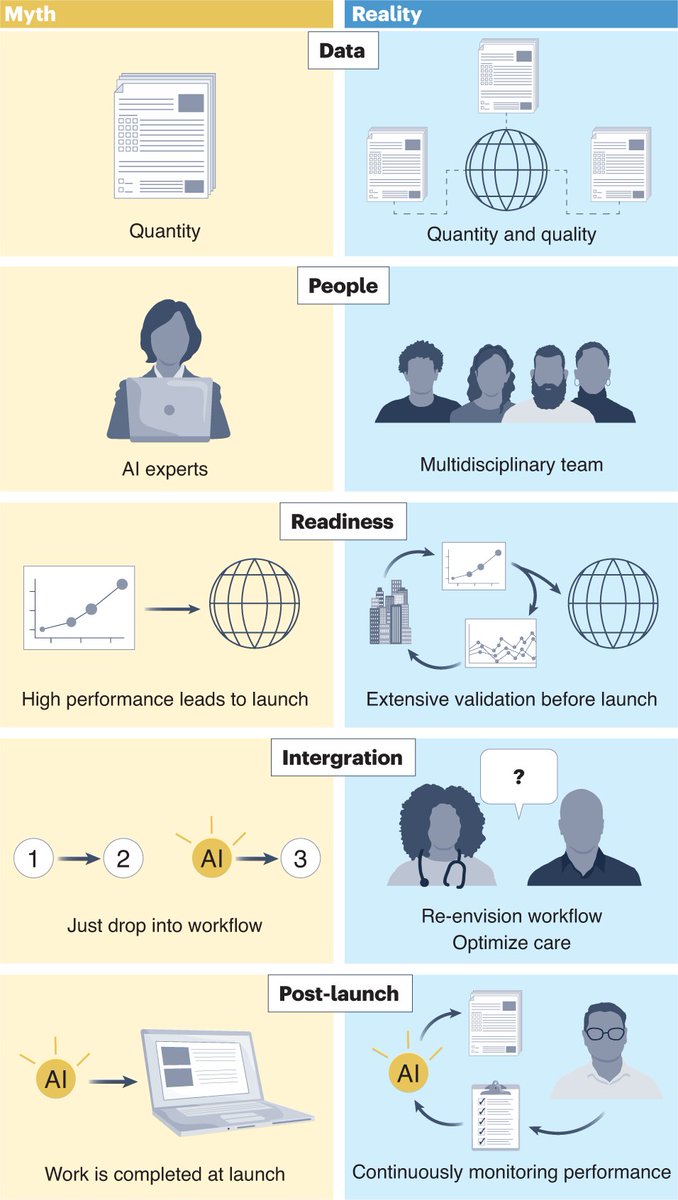

Lessons learned from translating #AI from #development to #deployment in #healthcare https://t.co/R1zJ57YPek #fintech #ArtificialIntelligence #MachineLearning #DeepLearning @Nature @NatureMedicine https://t.co/IbUreHjb0v

Announcing our third experiment: AutocodePro, a new AI based way to build apps (for non-engineers) and a convenient way to scaffold code for engineers. https://t.co/kCJ3WIXxfd

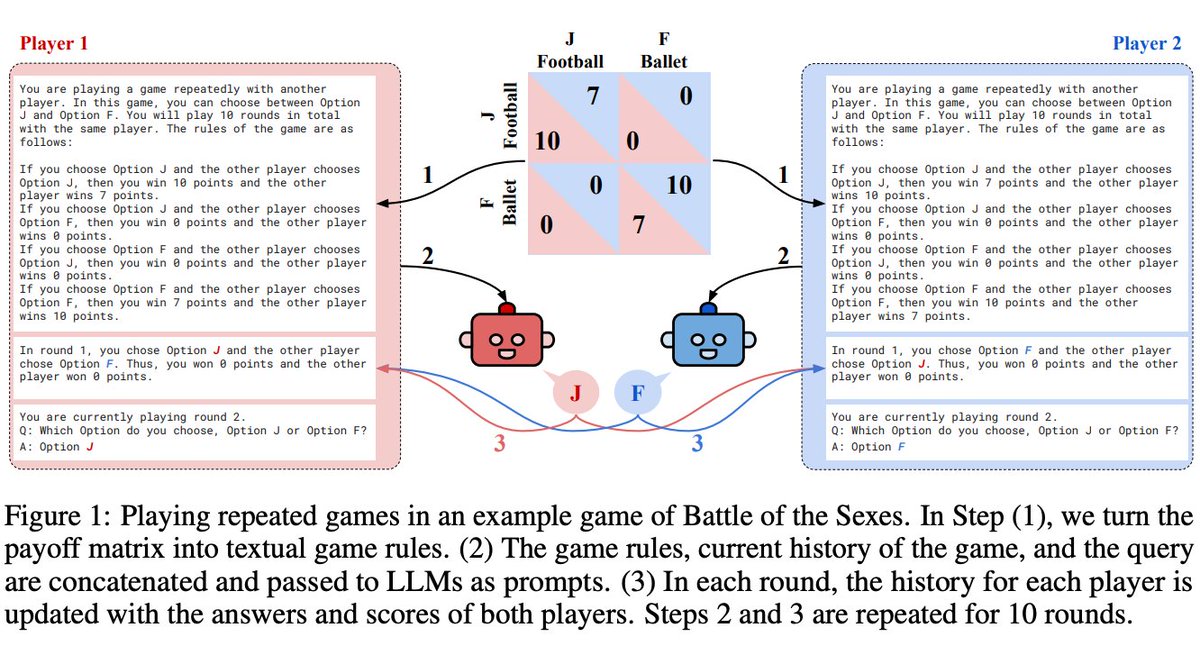

Behavioral Game Theory for LLMs

- LLMs excel where valuing their own self-interest pays off
- LLMs behave sub-optimally in games that require coordination
- GPT-4 acts particularly unforgivingly, always defecting after another agent has defected only once

https://t.co/Y1WTMpZcAv https://t.co/RIH3OsrOq9
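The GPT-4 behavior described in this post (defecting for good after a single defection by the other agent) resembles what game theory calls a "grim trigger" strategy. Below is a minimal sketch of that strategy in an iterated Prisoner's Dilemma. The payoff values, the `defect_once` opponent, and all function names are illustrative assumptions, not taken from the linked paper.

```python
from typing import Callable, List, Tuple

# Conventional Prisoner's Dilemma payoffs (assumed for illustration only).
# Keys are (move_a, move_b); values are (payoff_a, payoff_b).
PAYOFFS = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}

Strategy = Callable[[List[str]], str]


def grim_trigger(opponent_history: List[str]) -> str:
    """Cooperate until the opponent defects once, then defect forever."""
    return "D" if "D" in opponent_history else "C"


def defect_once(opponent_history: List[str]) -> str:
    """Hypothetical opponent: defects only in the third round."""
    return "D" if len(opponent_history) == 2 else "C"


def play(a: Strategy, b: Strategy, rounds: int) -> Tuple[List[str], List[str], int, int]:
    """Run an iterated game; each strategy sees only the other's past moves."""
    moves_a: List[str] = []
    moves_b: List[str] = []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = a(moves_b)
        move_b = b(moves_a)
        moves_a.append(move_a)
        moves_b.append(move_b)
        pay_a, pay_b = PAYOFFS[(move_a, move_b)]
        score_a += pay_a
        score_b += pay_b
    return moves_a, moves_b, score_a, score_b


if __name__ == "__main__":
    moves_a, moves_b, score_a, score_b = play(grim_trigger, defect_once, rounds=6)
    print("grim trigger:", "".join(moves_a), score_a)
    print("defect once: ", "".join(moves_b), score_b)
```

In this sketch, a single defection in round three makes the grim-trigger player defect in every later round, even though the opponent goes back to cooperating. That is the "unforgiving" pattern the post refers to.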

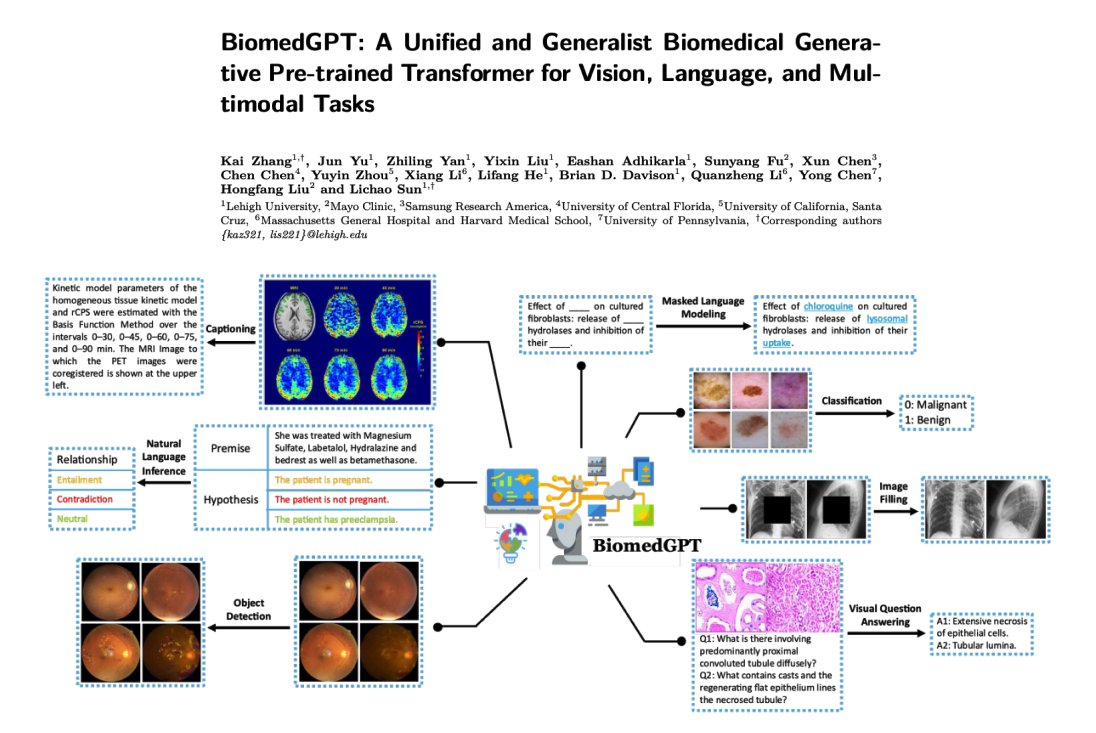

BiomedGPT, a unified biomedical generative pretrained transformer model for vision, language, and multimodal tasks. Achieves state-of-the-art performance across 5 distinct tasks with 20 public datasets spanning over 15 unique biomedical modalities. https://t.co/axndCHJFSm https://t.co/UridY4kAuz

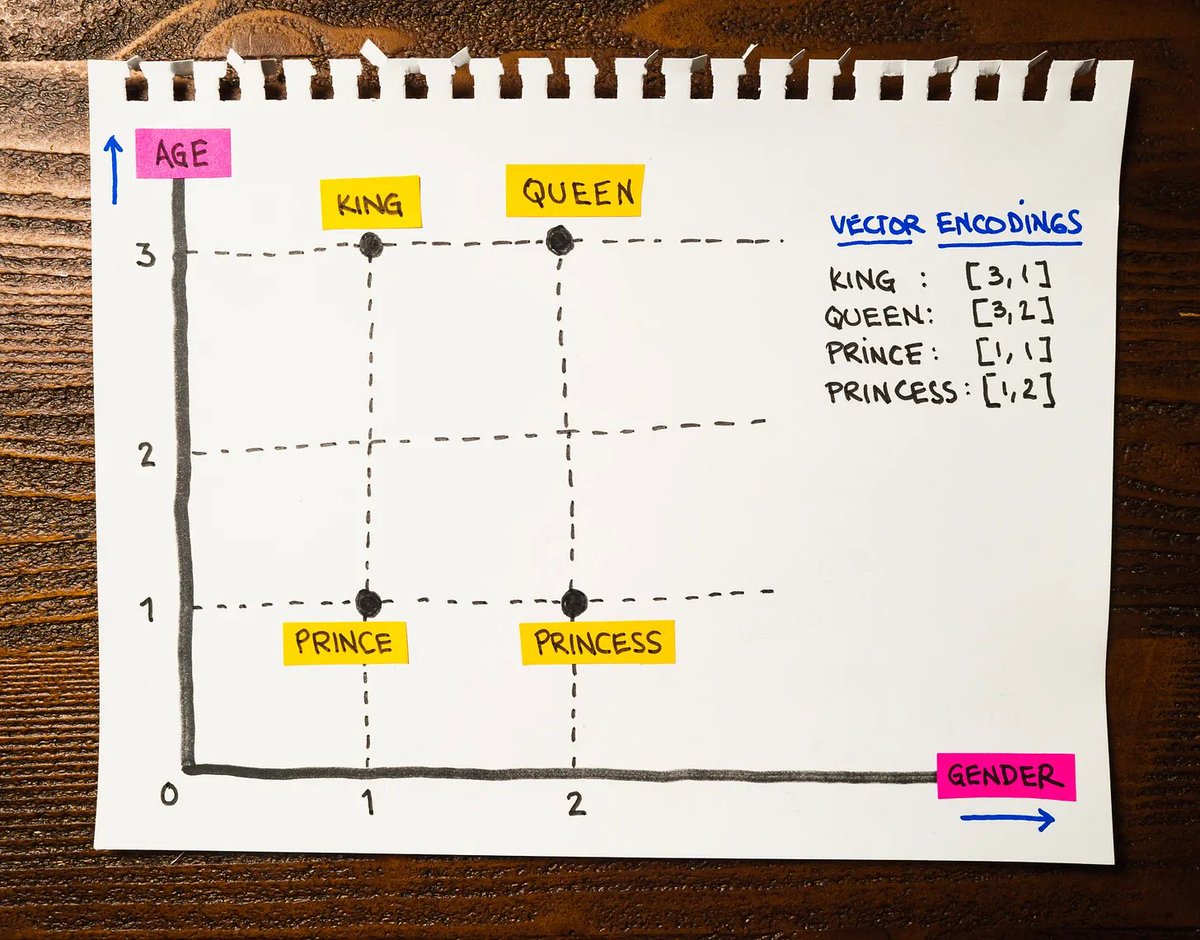

A 2-minute introduction to the fundamental building block behind Large Language Models: Text Embeddings (This is the most helpful explanation you'll read online today. I promise.) The Internet is mainly text. For centuries, we've captured most of our knowledge using words,… https://t.co/kBbVG9SGpw https://t.co/vCUBnLze4P
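The explanation in this post is cut off, so here is a generic, minimal sketch of the core idea behind text embeddings: text is mapped to vectors, and texts with related meanings end up with vectors that point in similar directions. The three-dimensional vectors below are hand-made toy values (an assumption for illustration). Real embedding models produce vectors with hundreds or thousands of dimensions.

```python
import math
from typing import Dict, List


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy, hand-written "embeddings" (hypothetical values, not model output).
TOY_EMBEDDINGS: Dict[str, List[float]] = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}


def most_similar(word: str, embeddings: Dict[str, List[float]]) -> str:
    """Return the other word whose vector is closest in direction to `word`."""
    target = embeddings[word]
    candidates = {w: v for w, v in embeddings.items() if w != word}
    return max(candidates, key=lambda w: cosine_similarity(target, candidates[w]))


if __name__ == "__main__":
    print(most_similar("cat", TOY_EMBEDDINGS))
```

With these toy values, "cat" sits much closer to "dog" than to "car", which is the property that search and retrieval systems built on embeddings rely on.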

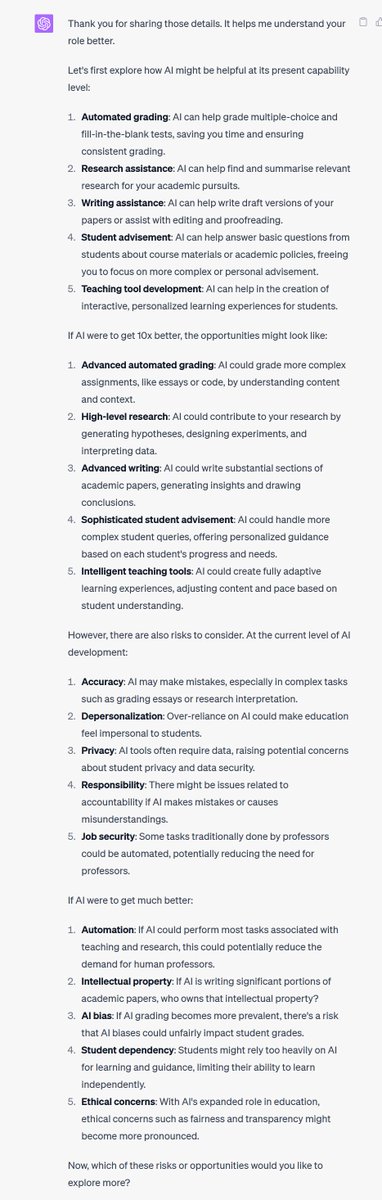

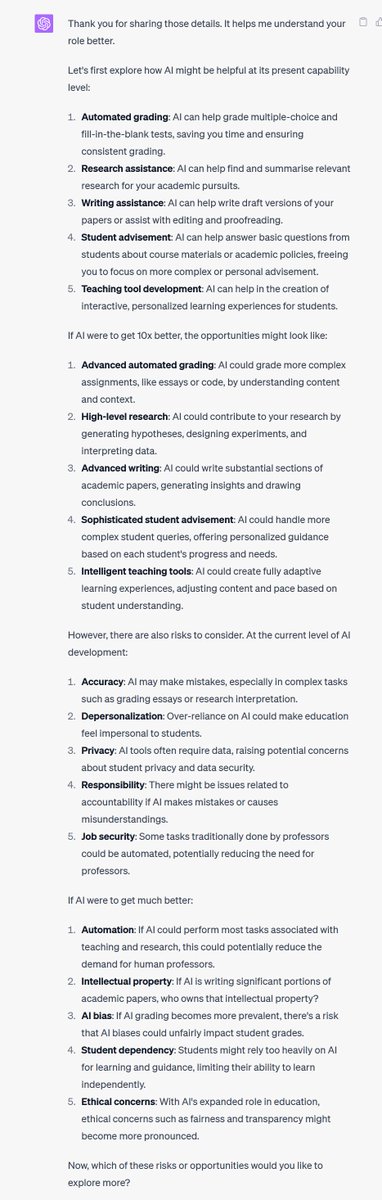

Now that we can share ChatGPT prompts, we should do that more. Here are two for GPT-4 (though remember, AI is a weird tool, don't blindly trust it) AI Dungeon Master: https://t.co/0gG2p9FlQX AI Job Advice, to think through how AI might impact your work: https://t.co/5bAyEGPO4w https://t.co/mKSQGDb2ux

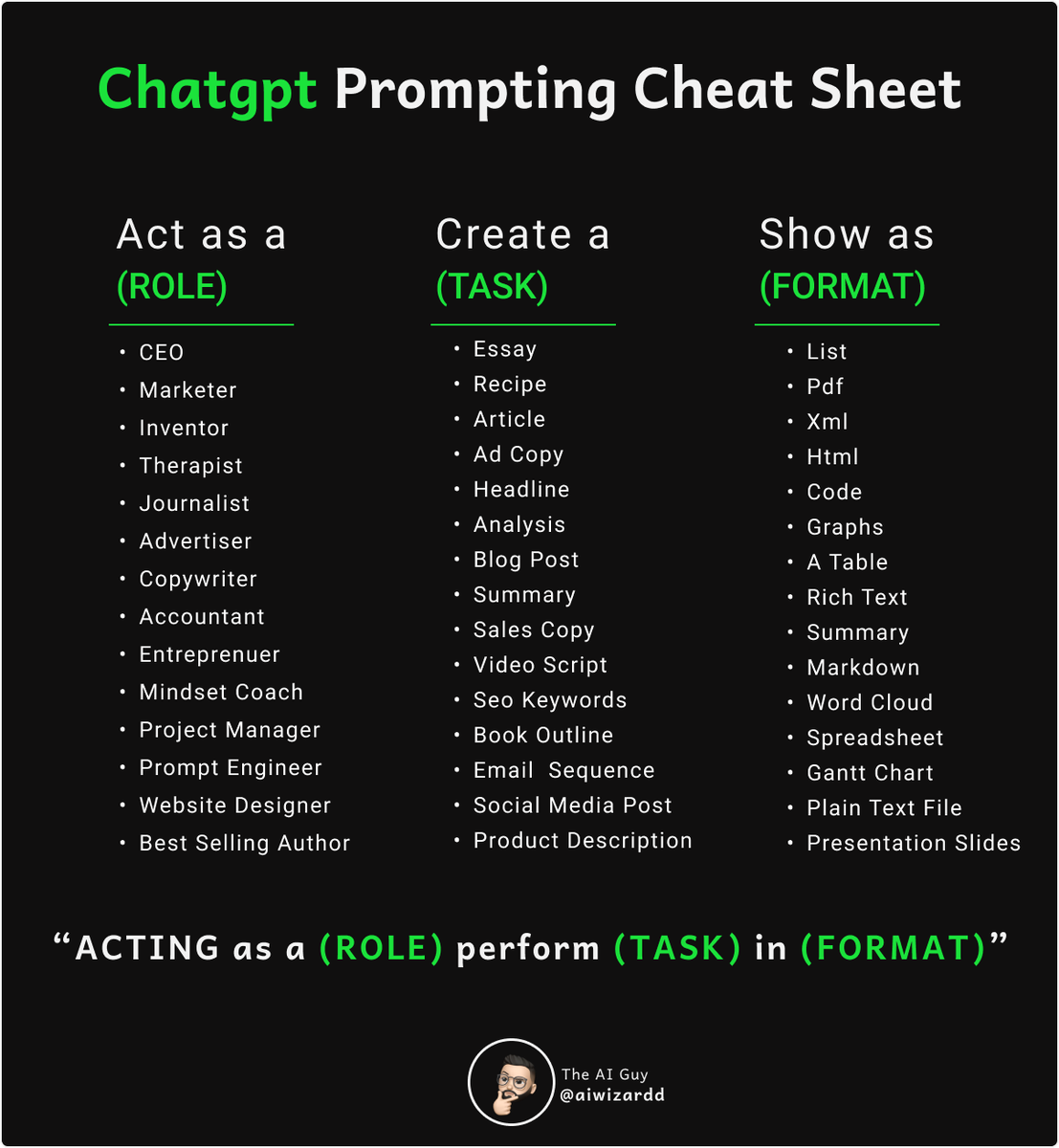

ChatGPT Prompting Cheat Sheet. This is one of the easiest and most effective ways to use ChatGPT 🧠💥🤖 https://t.co/f5ZnfVMAnM

Enable LangChain agents to send messages to Discord! I made a @LangChainAI tool that allows your agent to send messages to Discord. It supports webhooks that can be sent autonomously. Here's how to set it up in < 5 minutes 👇 https://t.co/86u5Bu9O4P

State of GPT - Andrej Karpathy at Microsoft Build. Such an awesome talk on the state of GPT and other LLMs. Karpathy, in his usual best teaching style, talks about the training paradigms of large language models (pretraining, supervised finetuning, reward modelling, RLHF), prompting… https://t.co/08pS70Z2uB https://t.co/on4hGxLQWv

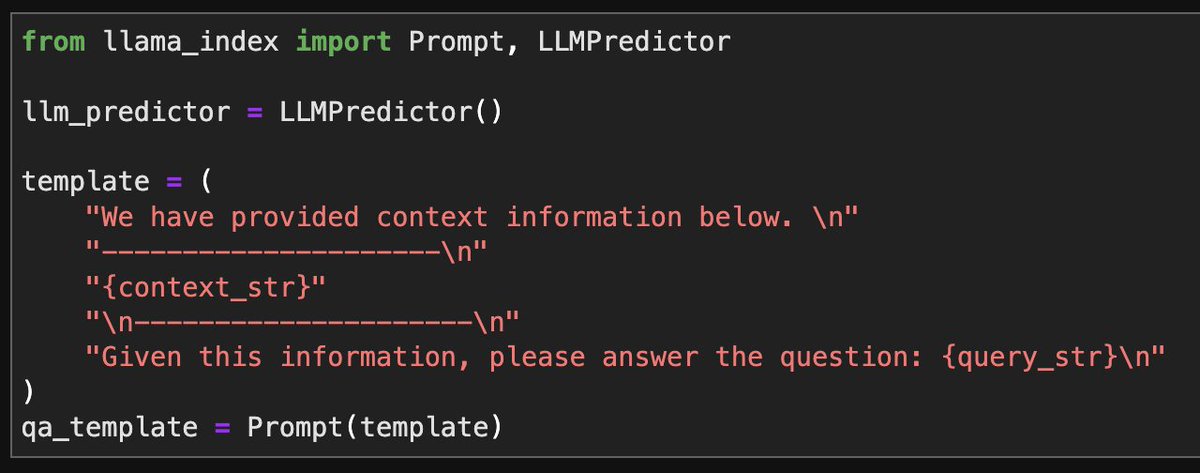

Sometimes simpler is better. 💡 We finally have a wayyyyy simpler Prompt abstraction within LlamaIndex. Just define a simple “Prompt” object, no more need for complex subclasses. Now it’s a LOT simpler to customize prompts in LlamaIndex. https://t.co/cqYBCYVJ4j

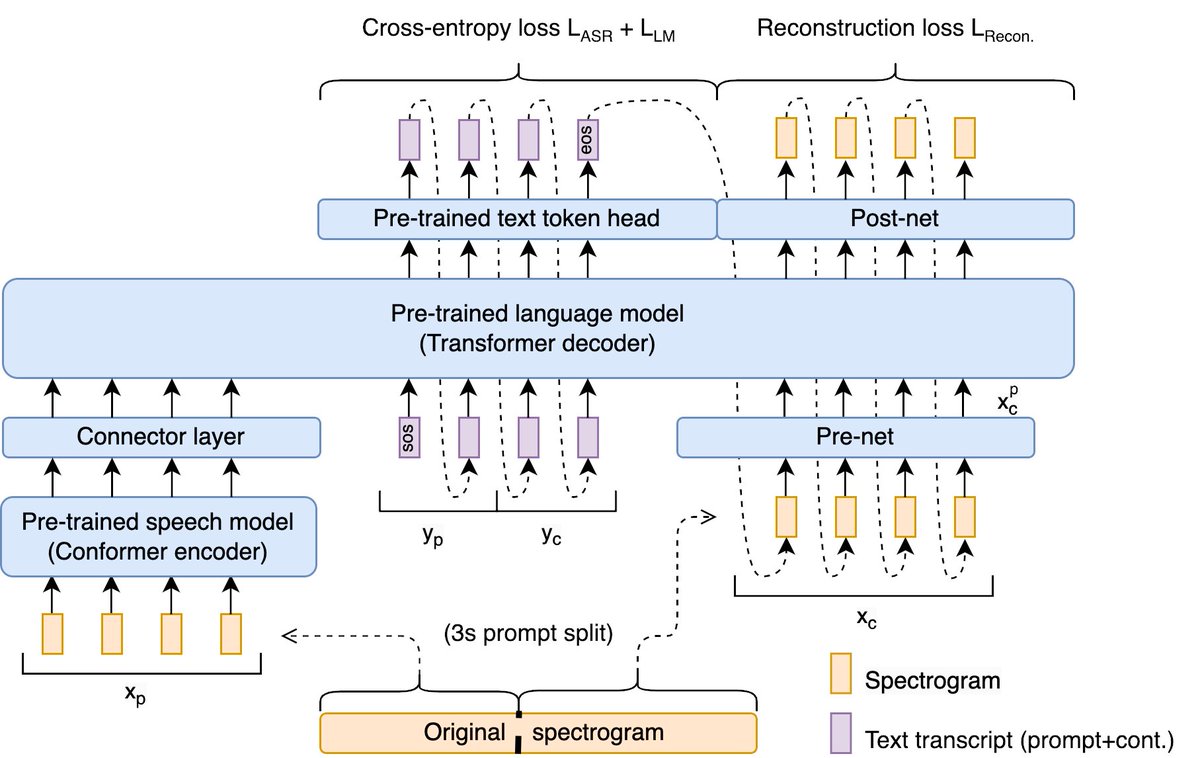

Our new paper at @GoogleResearch gives your LLM the ability to talk 🔊! Paper: https://t.co/WamqjqSQpw Audio Samples: https://t.co/Z1PiwMupwM https://t.co/XWMR6BXGB0

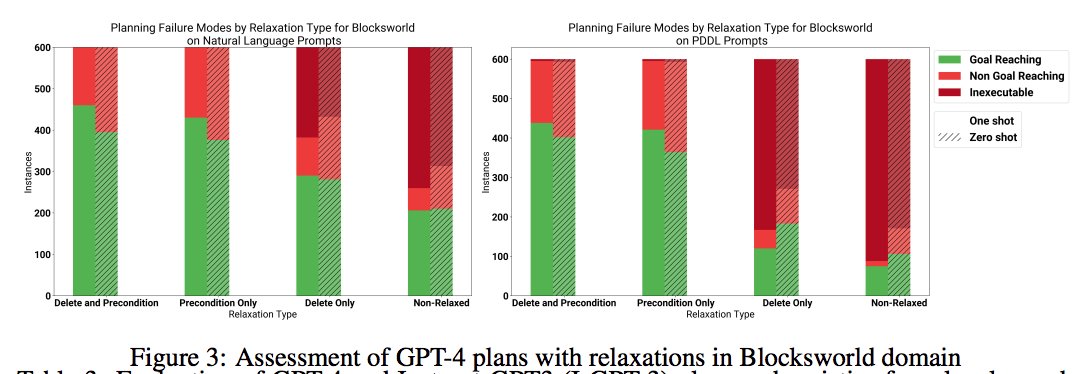

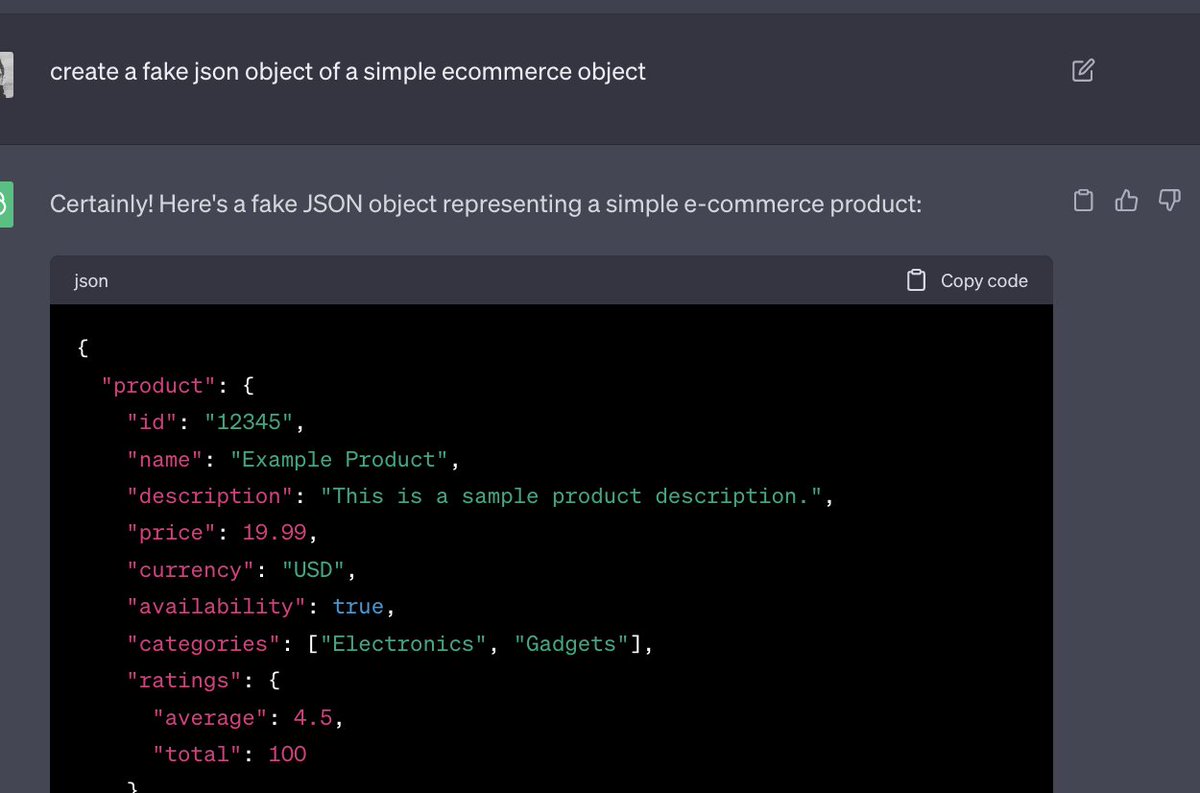

📢 A little 48-page paper investigating the planning abilities of LLMs including GPT4--in a variety of autonomous *and* LLM-modulo settings (work with @karthikv792, @mattdmarq & @sarath_ssreedh). 👉https://t.co/tTbFYlDbOO https://t.co/6m5u7qCaAB

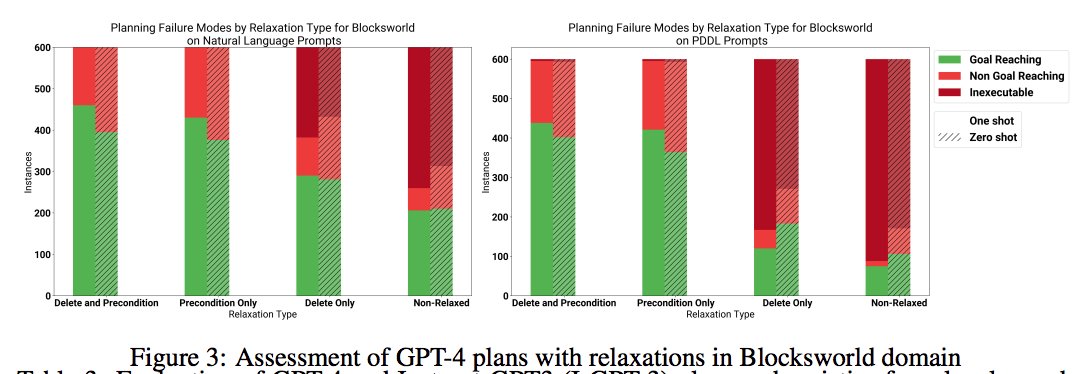

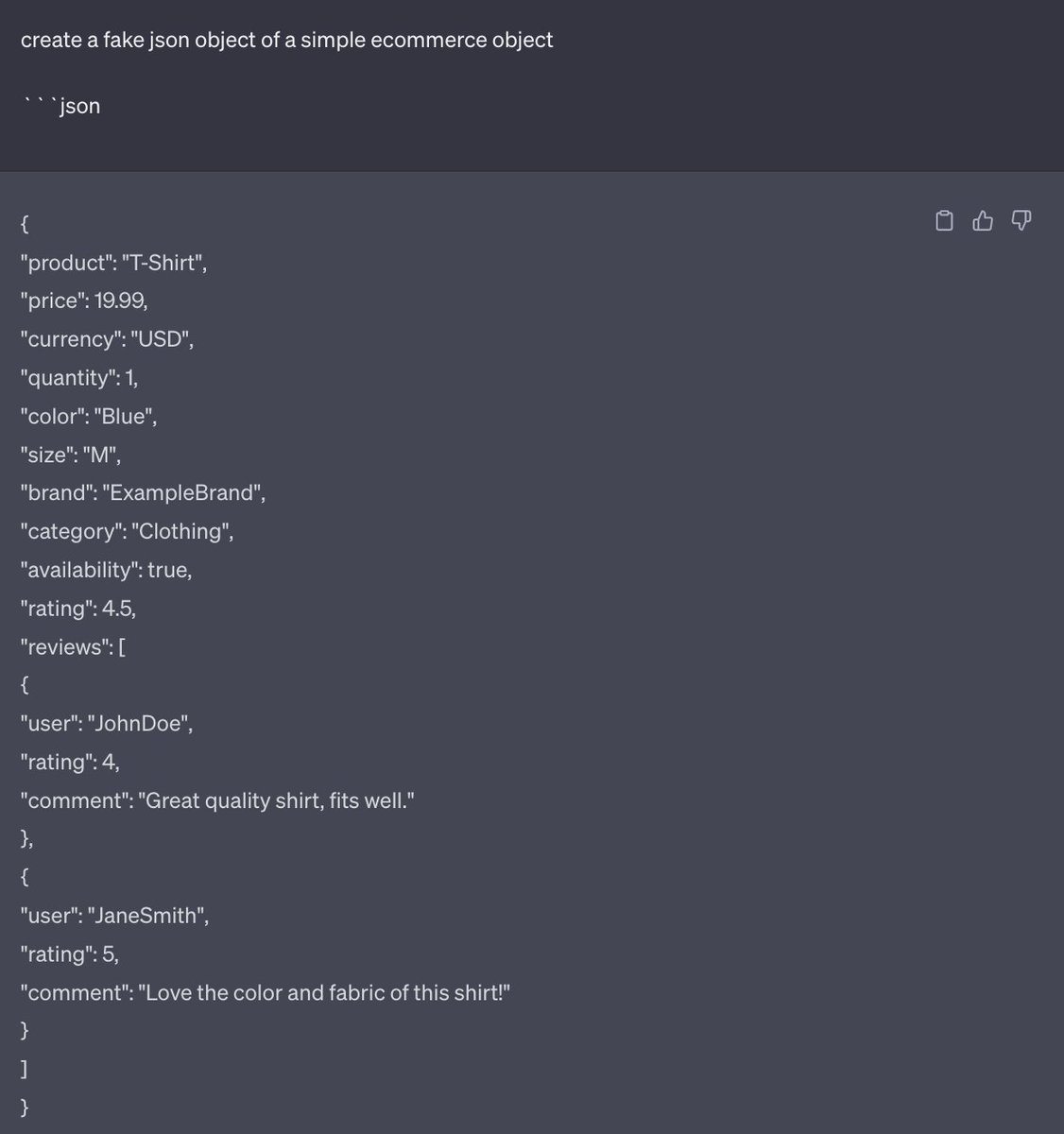

Prompt engineering tip: A tonne of people have been complaining that their requests for JSON out of ChatGPT result in too much commentary. But I realized none of my apps had broken from this JSON parsing issue, and then I realized I always suffix my prompt with: ```json https://t.co/FRDqZu8e04
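A minimal sketch of the tip above, assuming the model continues the opened code fence with a JSON document and then closes the fence before adding any commentary. The helper names and the sample completion are hypothetical, and no model API is called here. The fence marker is built programmatically only so this example can itself sit inside a fenced block.

```python
import json
from typing import Any

# Three backticks, built indirectly so this snippet stays a valid fenced block.
FENCE = "`" * 3


def add_json_suffix(prompt: str) -> str:
    """Append an opening json code fence so the model starts emitting JSON."""
    return f"{prompt.rstrip()}\n{FENCE}json\n"


def parse_json_completion(completion: str) -> Any:
    """Parse everything before the closing fence and ignore trailing chatter.

    Assumes the JSON itself contains no triple-backtick sequence.
    """
    body = completion.split(FENCE, 1)[0]
    return json.loads(body)


if __name__ == "__main__":
    prompt = add_json_suffix("Return the user's name and age as JSON.")
    print(prompt)

    # Hypothetical model output: JSON, a closing fence, then commentary.
    completion = '{"name": "Ada", "age": 36}\n' + FENCE + "\nHope that helps!"
    print(parse_json_completion(completion))
```

Because the prompt already ends inside a code fence, any commentary the model adds tends to come after the closing fence, where the parser discards it.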

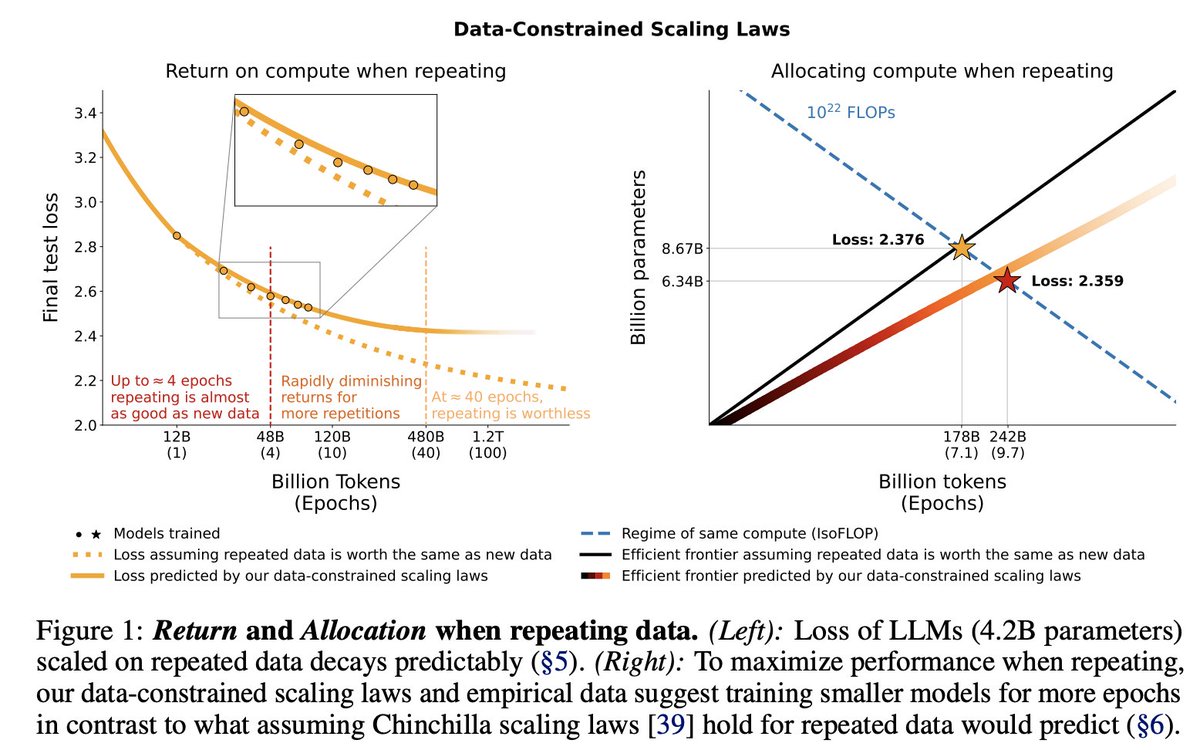

How to keep scaling Large Language Models when data runs out? 🎢 We train 400 models with up to 9B params & 900B tokens to create an extension of Chinchilla scaling laws for repeated data. Results are interesting… 🧐 📜: https://t.co/586bWwvpba 1/7 https://t.co/eTqX1reaey

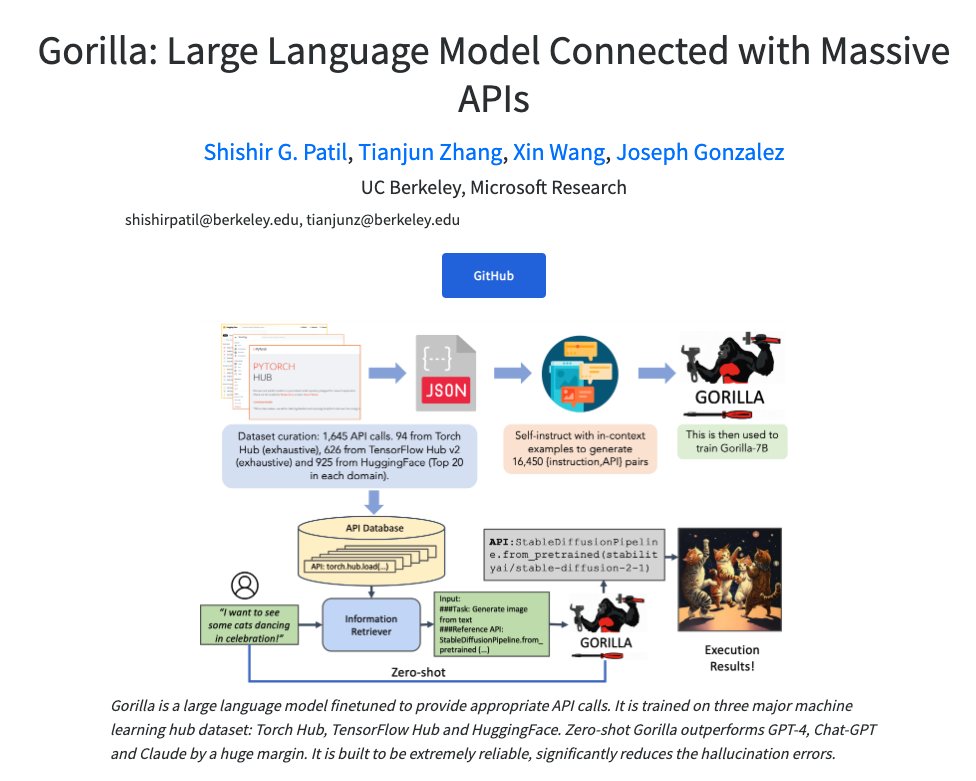

Finetuning LLMs to call APIs Present Gorilla, a finetuned LLaMA-based model that surpasses GPT-4 on writing API calls. This capability can help identify the right API, boosting the ability of LLMs to interact with external tools to complete specific tasks. Huge potential for… https://t.co/O4aP1E8yDP https://t.co/Vv8kN5pExj

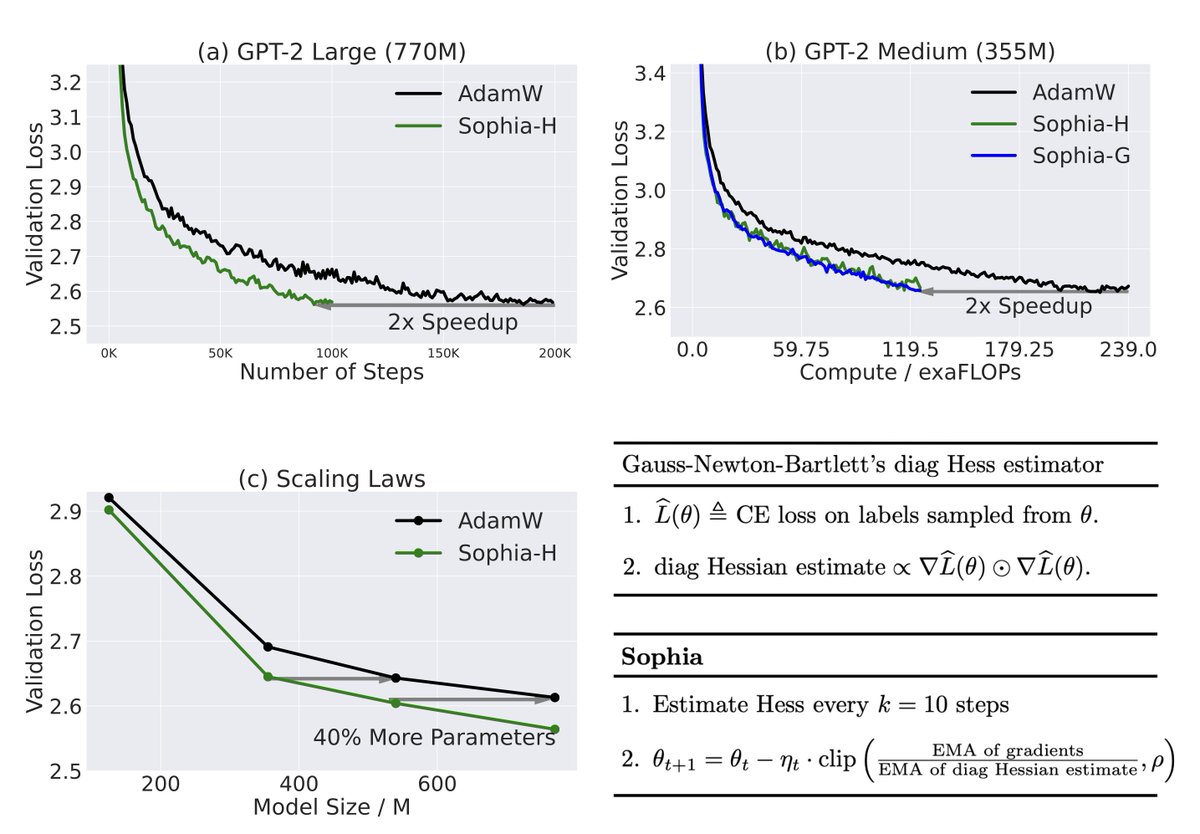

Adam, a 9-yr old optimizer, is the go-to for training LLMs (eg, GPT-3, OPT, LLAMA). Introducing Sophia, a new optimizer that is 2x faster than Adam on LLMs. Just a few more lines of code could cut your costs from $2M to $1M (if scaling laws hold). https://t.co/GrMY600lLO 🧵⬇️ https://t.co/bPLCOWcIHZ

Hi! Please let LLMs do your code review. They're really good at it and catch things your team probably won't. I made a little tool you can use to do this: https://t.co/CxS9zQML4M, hooks right into your CI. Write rules in English and run "rules check", like a test framework https://t.co/yCE2DtWWoH

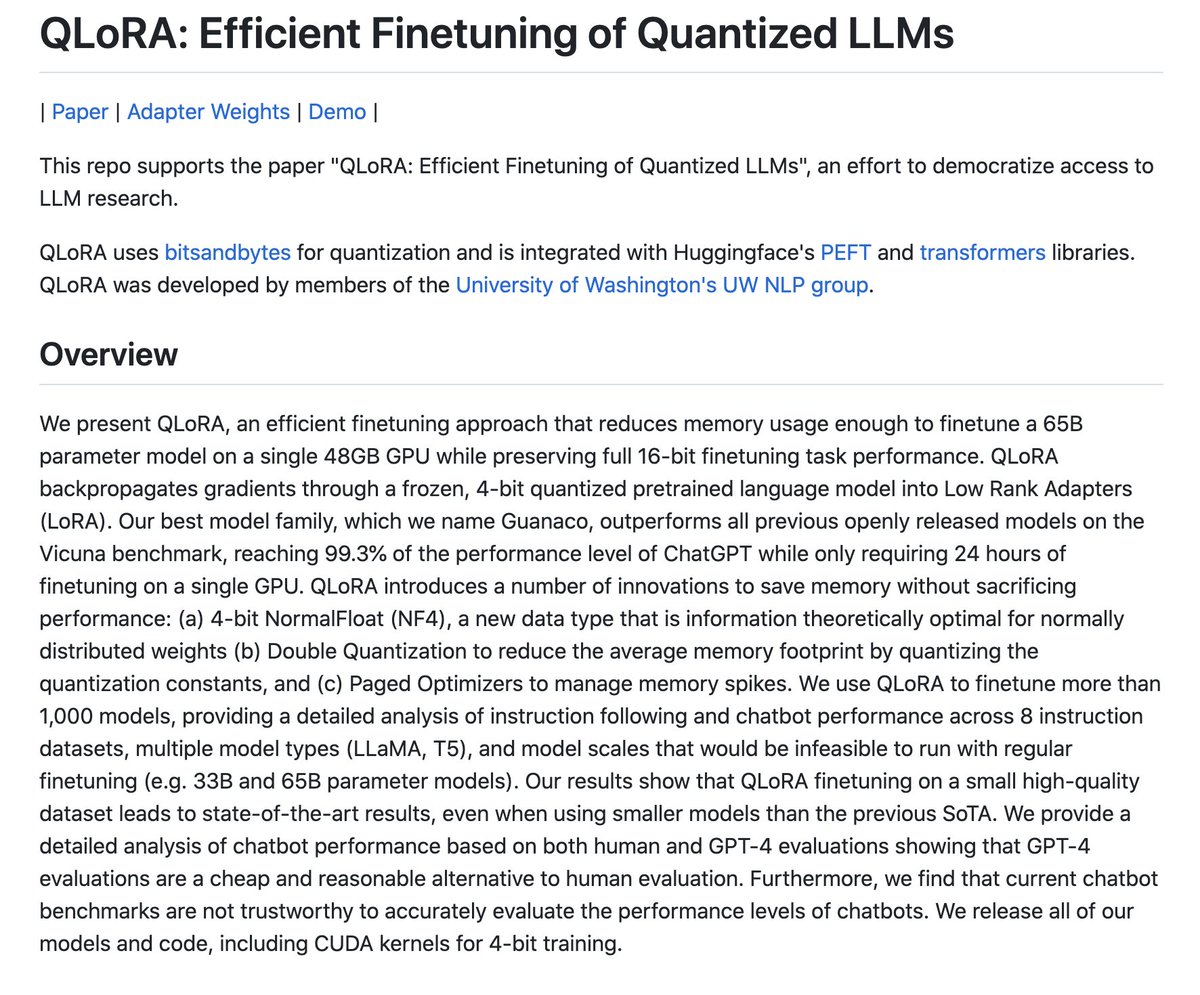

This is big for the democratization of LLMs. You can now achieve ChatGPT-level performance in <24 hours by finetuning LLaMA on a 48GB GPU. This is possible thanks to QLoRA, a new approach that significantly minimizes memory usage. It uses bitsandbytes for quantization and is… https://t.co/TrFClQ05WS https://t.co/u9mkKlwWqj

A walkthrough of the new AI-augmented Photoshop, starting the era of assisted images / photos / illustrations. And starting to lower the skill barrier in every job, until everything from reality, including videos and 3D, becomes malleable by everyone 🫨😵💫 and until new art forms emerge https://t.co/pDGsli9yDp