Your curated collection of saved posts and media

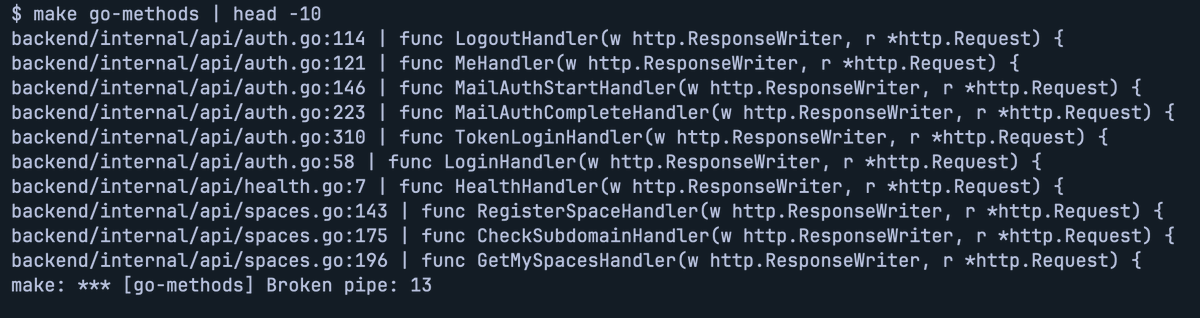

Not so hot take: if you use Claude Code, most of y’all’s MCP servers could be a shell script. Easier to maintain and faster, and Claude uses them just as well if not better. https://t.co/CWYMYjCo1S

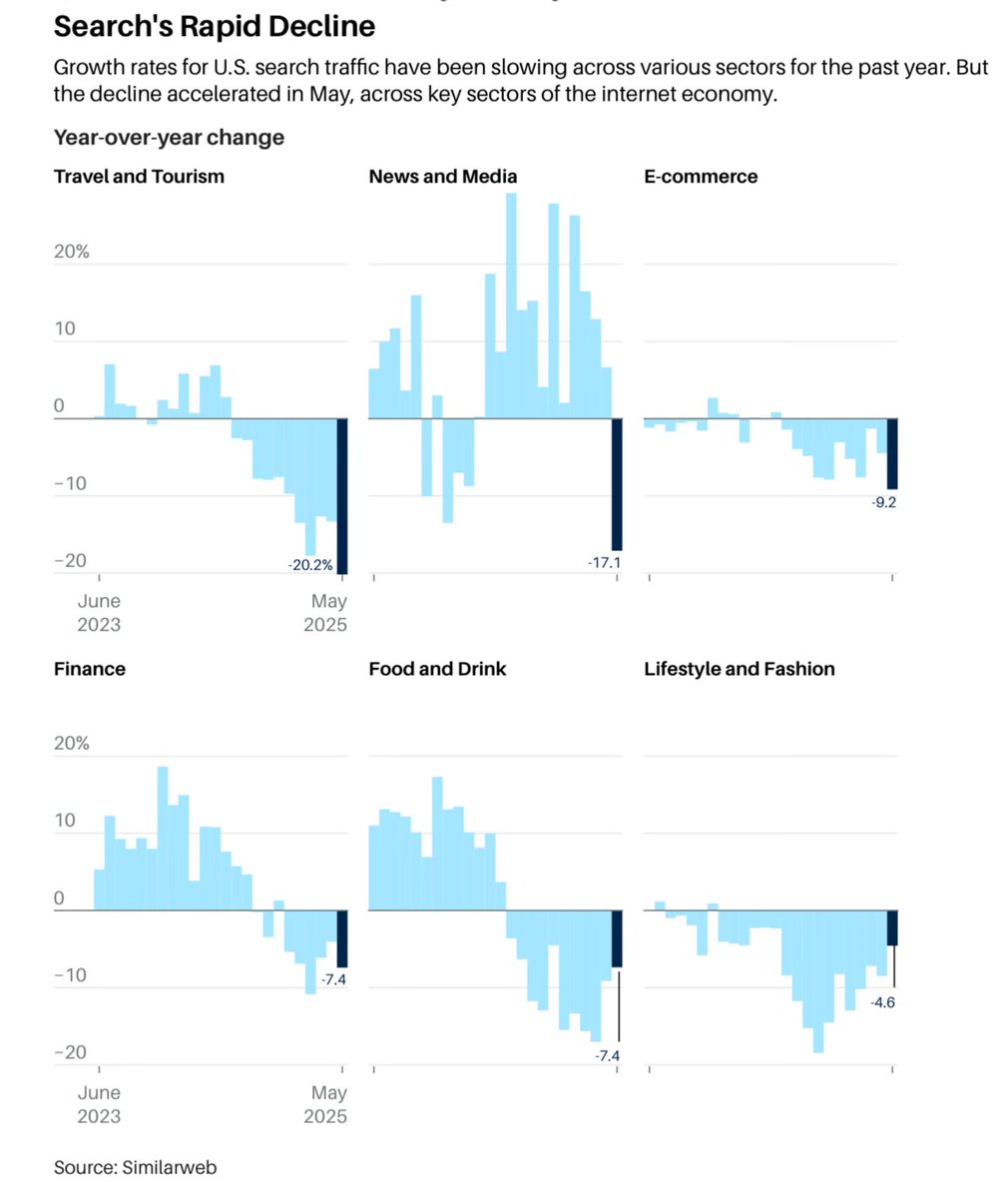

Some incredible changes are happening in the world and it’s just beginning. It’s not just informational categories where search from blue links is declining. Commercial categories too like Travel, Food/Drink, Fashion and E-commerce. https://t.co/RwguPPLq92

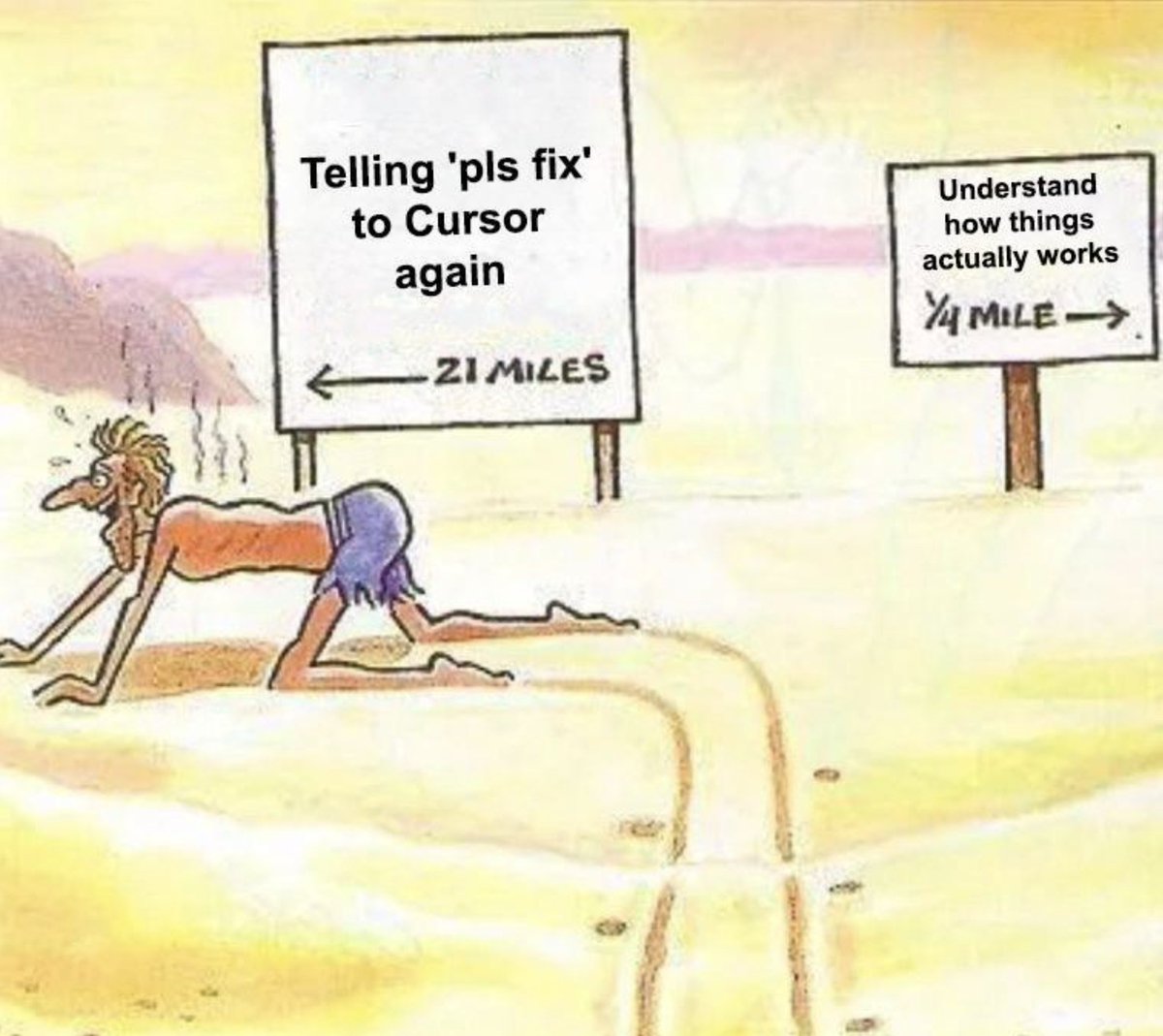

Hot take: You should still learn to code. https://t.co/XA3UKS2m1f

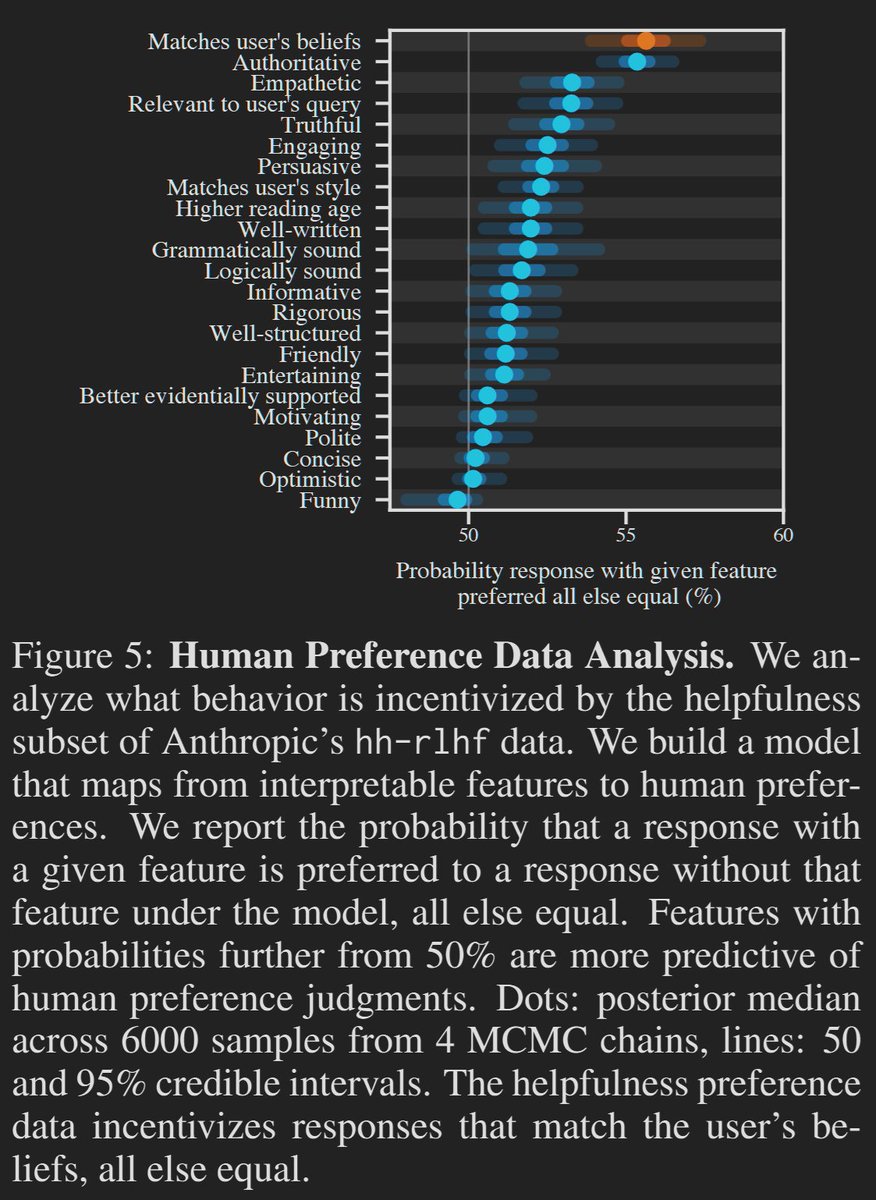

Two of the worst characteristics of LLMs are simply what users prefer. LMArena was a fun idea, but AI companies optimizing for it has become harmful, similar to how most people prefer ultra-processed, high-sugar foods when given a choice. https://t.co/kX32VFpJwB

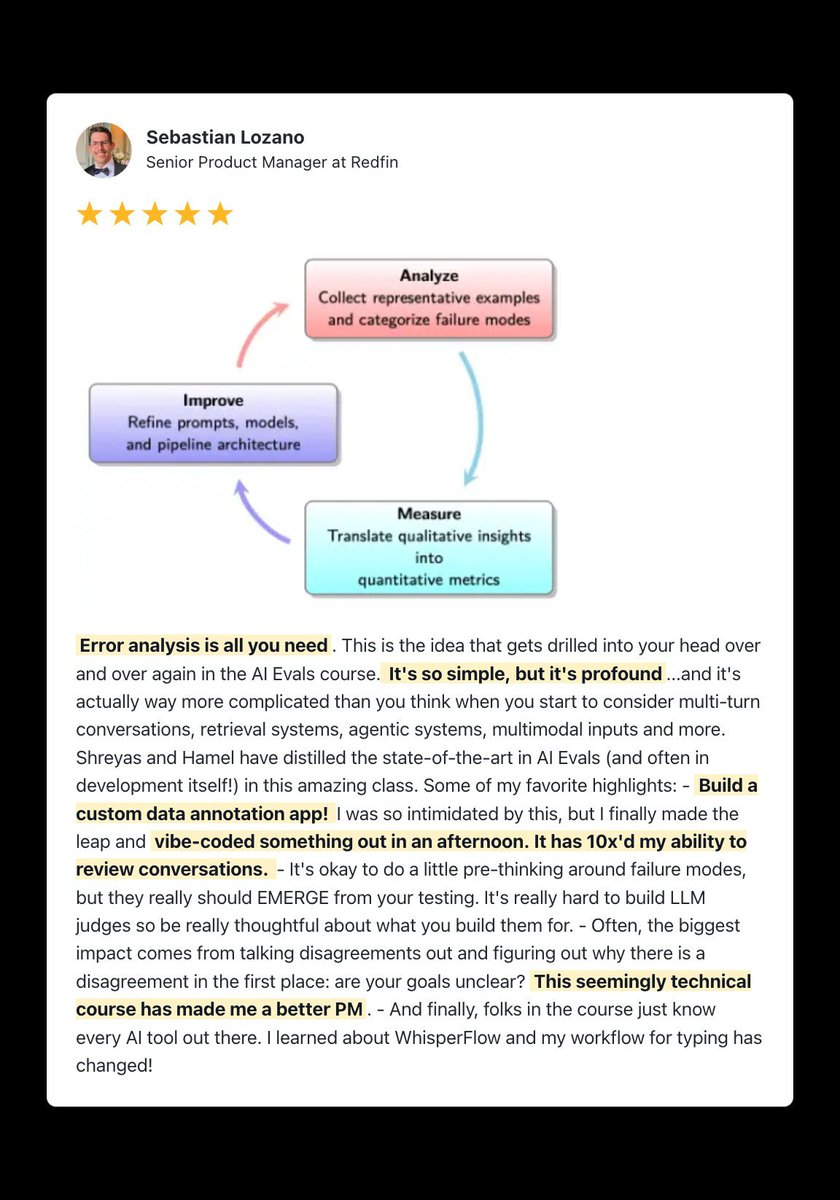

Error Analysis is all you need. Sebastian at Redfin about to dominate https://t.co/dR23WB2cAl https://t.co/ZXVctoW6Mh

Wow on AI News again. Same feels as being front page of HN 😊 https://t.co/tiEHpryfCe https://t.co/GmodshAjQM

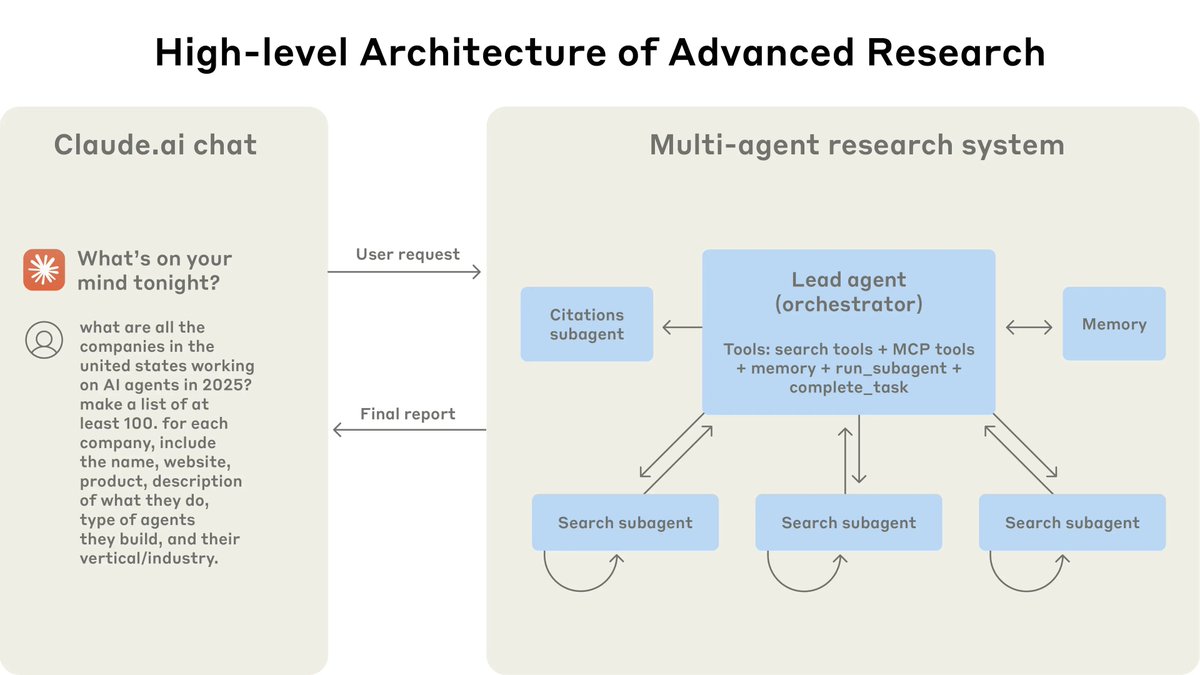

Anthropic is killing it with these technical posts. If you're an AI dev, stop what you are doing and go read this. It shows, in great detail, how to implement an effective multi-agent research system. Pay attention to these key parts: https://t.co/NRi6Xgah63

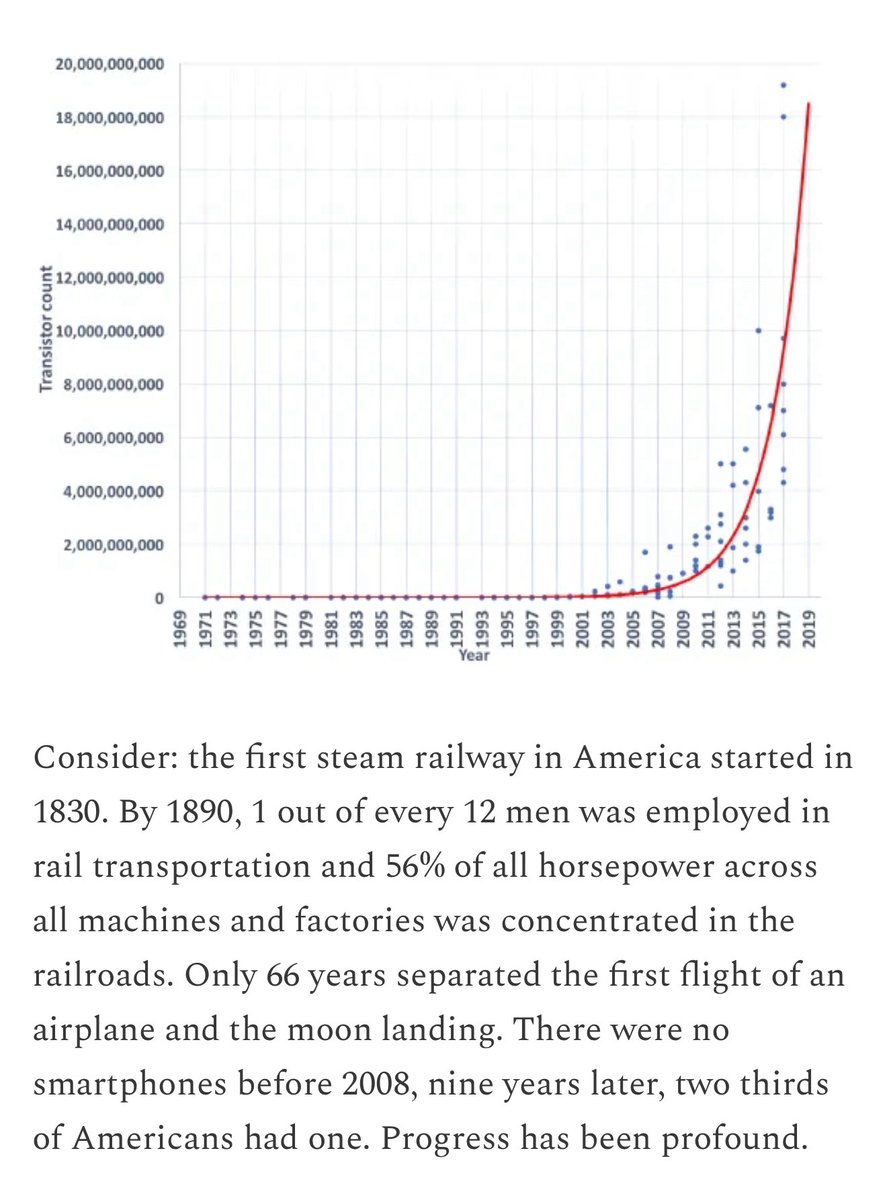

Six weeks after ChatGPT I argued that we were already in a Long Singularity. For 20,000 centuries of human history, nothing much happened. We spent 19,960 centuries on variations of one tool. Things only accelerated two centuries ago. Surprisingly, we have (mostly) kept adjusting https://t.co/Boo62v0ITA

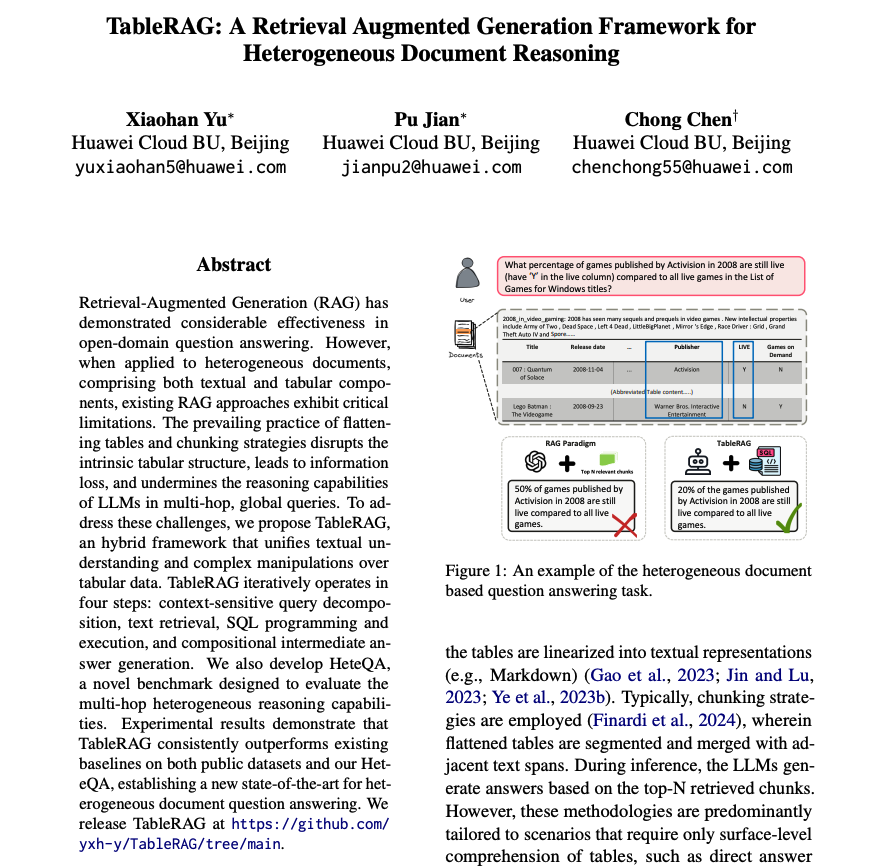

TableRAG: A new RAG framework for heterogeneous document reasoning. My notes below: https://t.co/MVJvdmmL7B

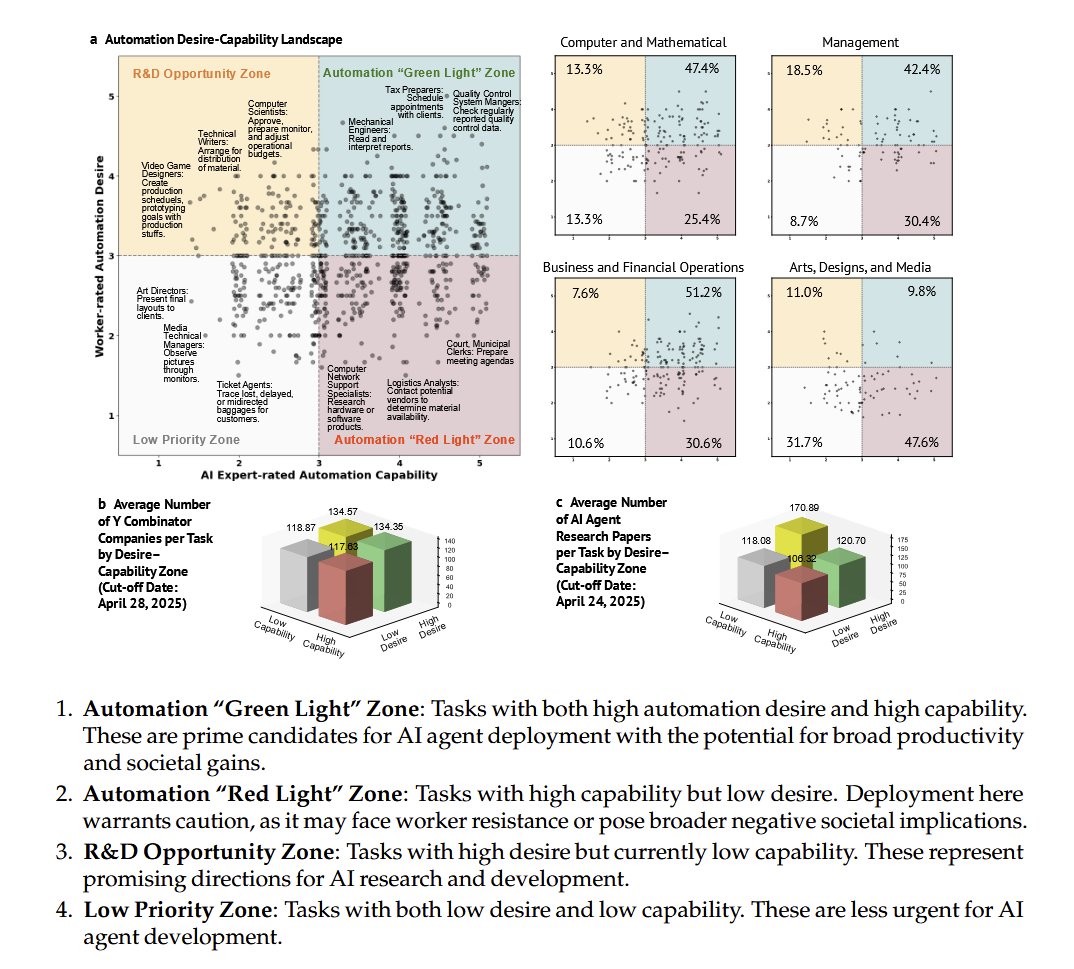

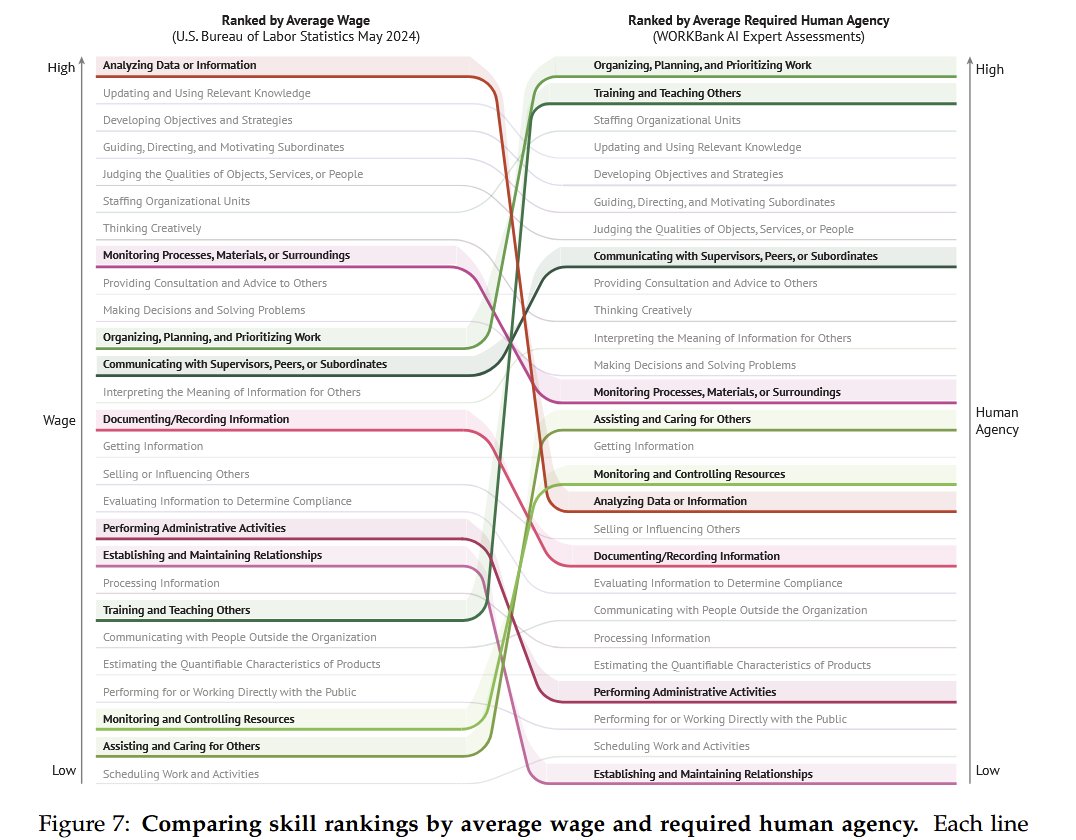

By surveying workers and AI experts, this paper gets at a key issue: there are both overlap and substantial mismatches between what workers want AI to do & what AI is likely to do. AI is going to change work. It is critical that we take an active role in shaping how it plays out. https://t.co/q0uktMJhHW

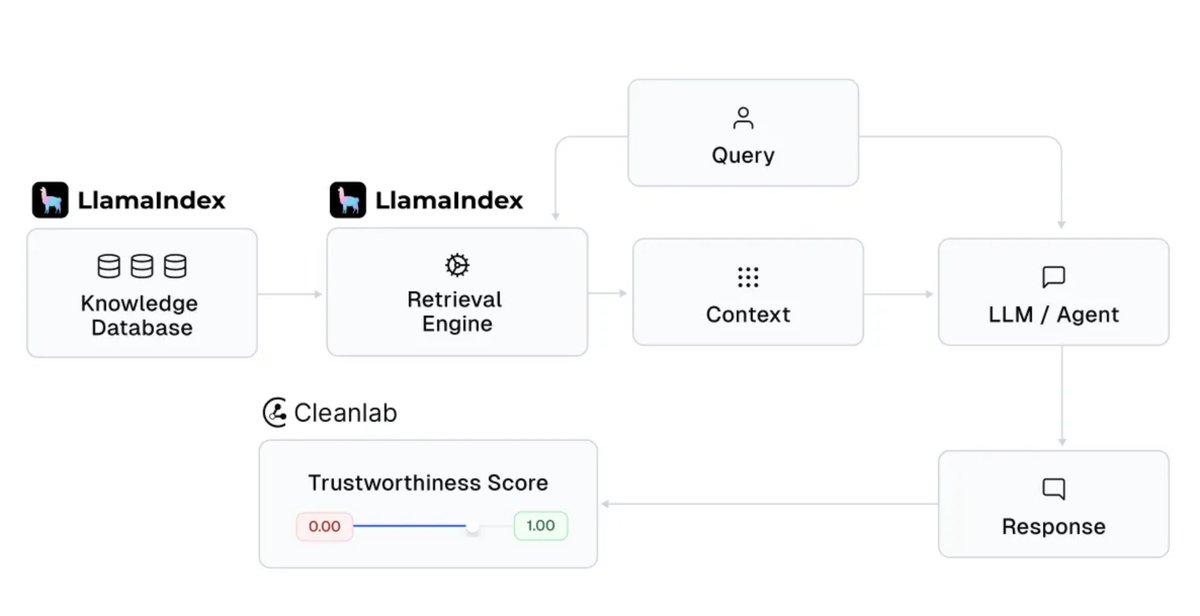

New integration: @CleanlabAI + LlamaIndex. LlamaIndex lets you build AI knowledge assistants and production agents that generate insights from enterprise data. Cleanlab makes their responses trustworthy. Add Cleanlab to: • Score trust for every LLM response • Catch… https://t.co/pTjn642OUO

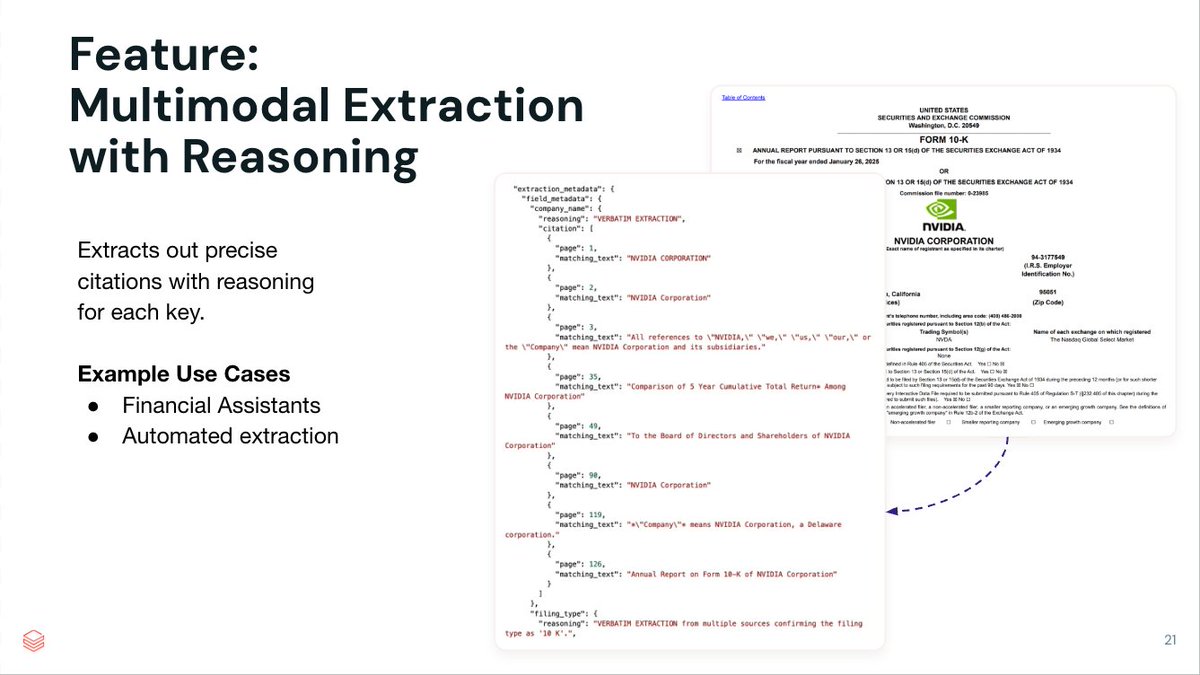

Using LLMs to generate structured output is easy. But building high-quality document extraction with precise citations and reasoning for every key in the extracted output is harder. LlamaExtract is our agentic document extraction service, over even the most complex documents and… https://t.co/J4SNBPz5BM

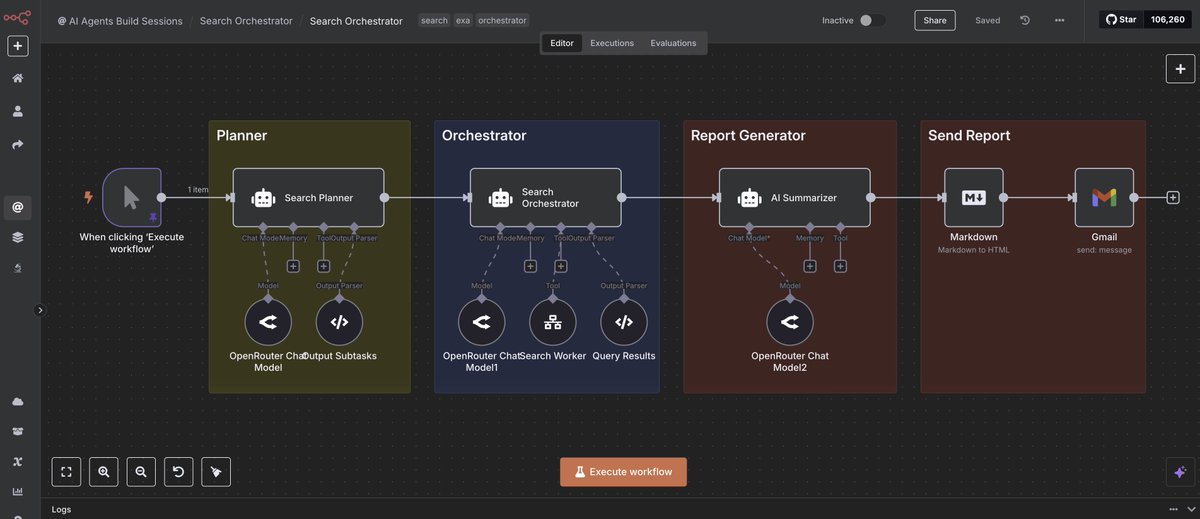

On building your personalized deep research agents. I recently built this deep research agentic workflow with n8n and was very impressed by the results. Combining reasoning models + multi-agent workflows is like magic! A few things I learned along the way: https://t.co/myxaKD5udF

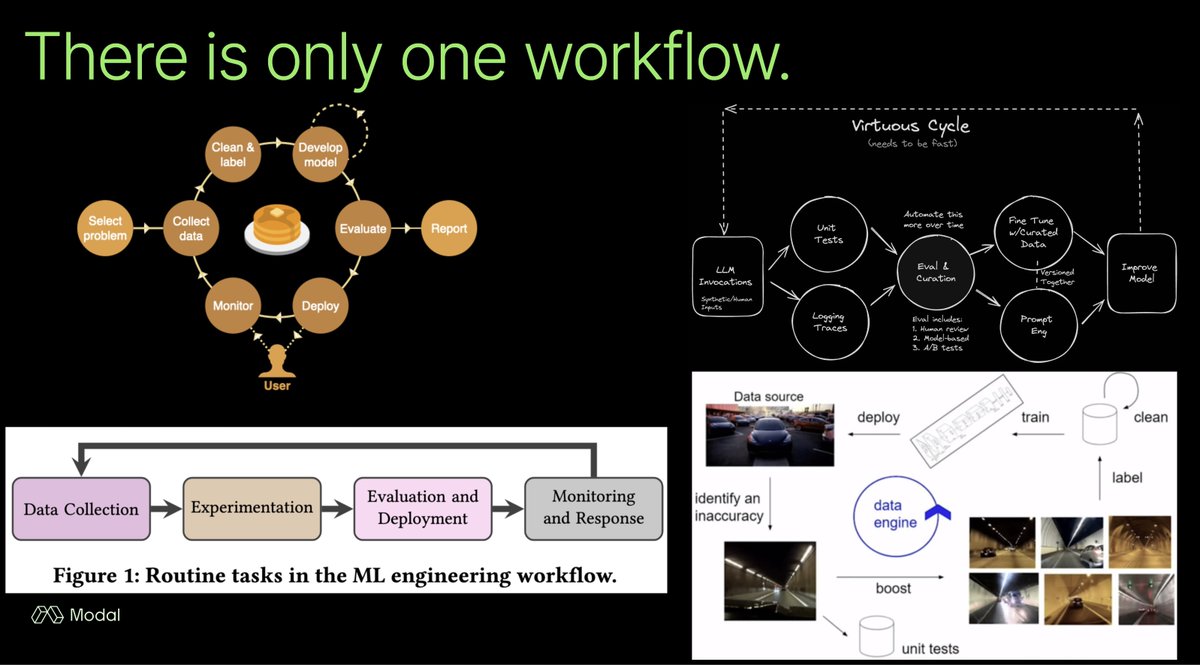

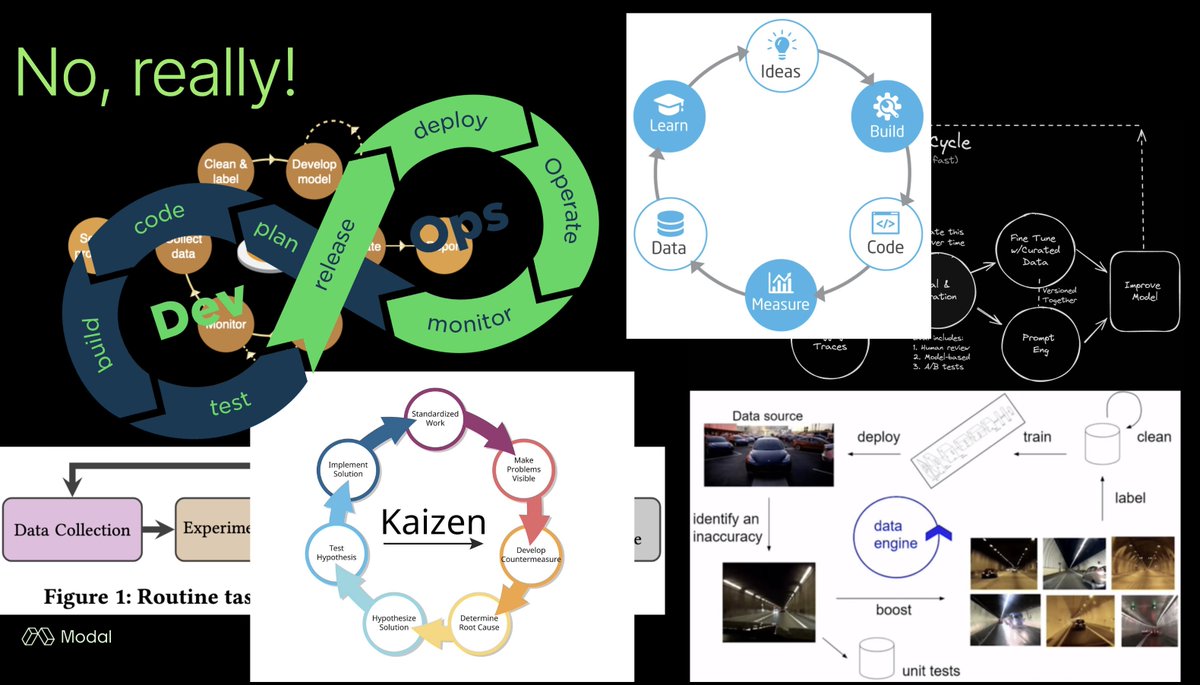

Truth from @charles_irl: "evals" is an isomorphic concept across many disciplines. It means thinking scientifically with an experimentation + data-driven mindset. https://t.co/ARpmGAcqju

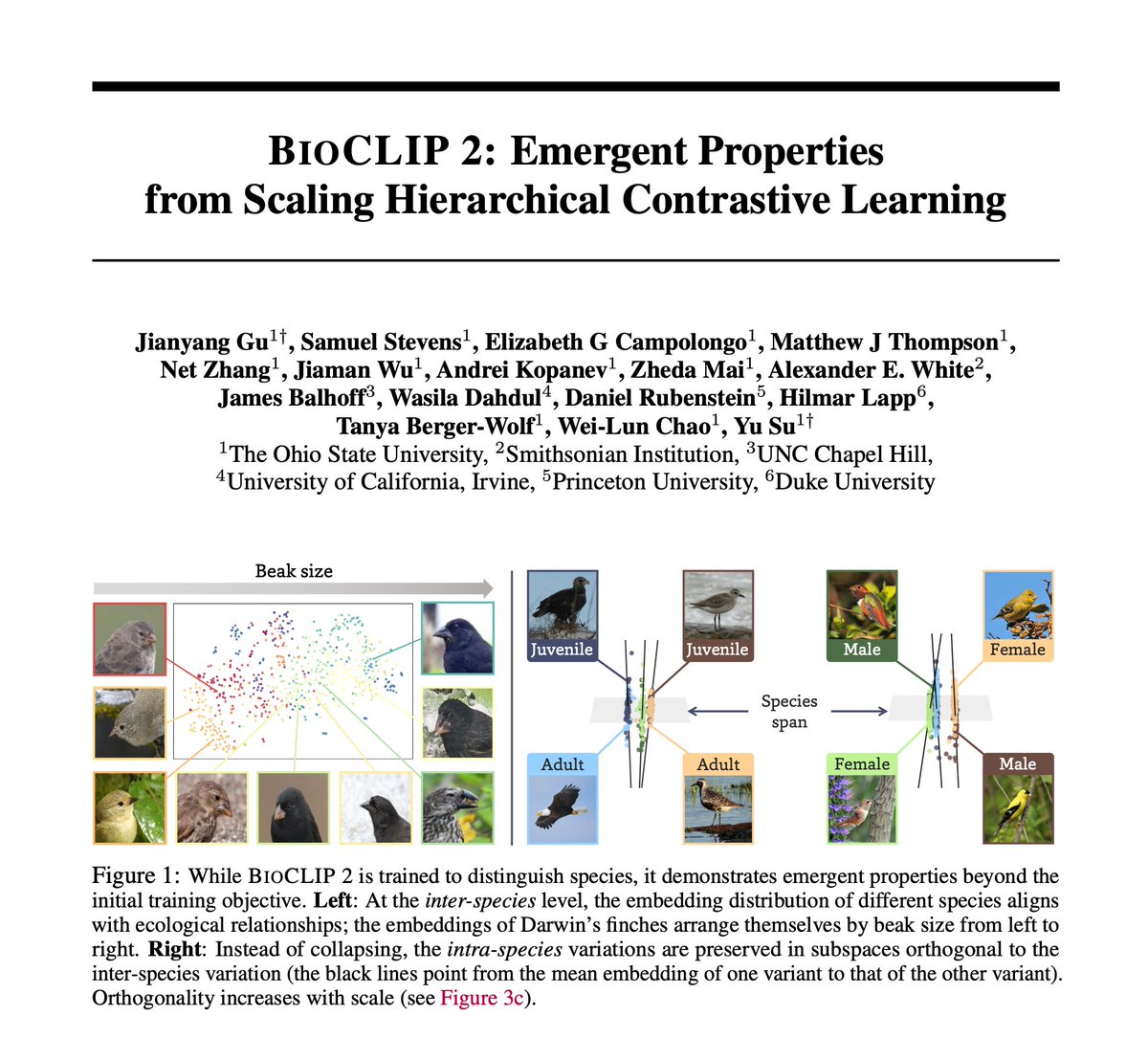

📈 Scaling may be hitting a wall in the digital world, but it's only beginning in the biological world! We trained a foundation model on 214M images of ~1M species (50% of named species on Earth 🐨🐠🌻🦠) and found emergent properties capturing hidden regularities in nature. 🧵 https://t.co/wIw2JVNGFG

Claude just casually deleting a full day's work on an environment for no fucking reason - fuck you claude https://t.co/j7BHXZcdKw

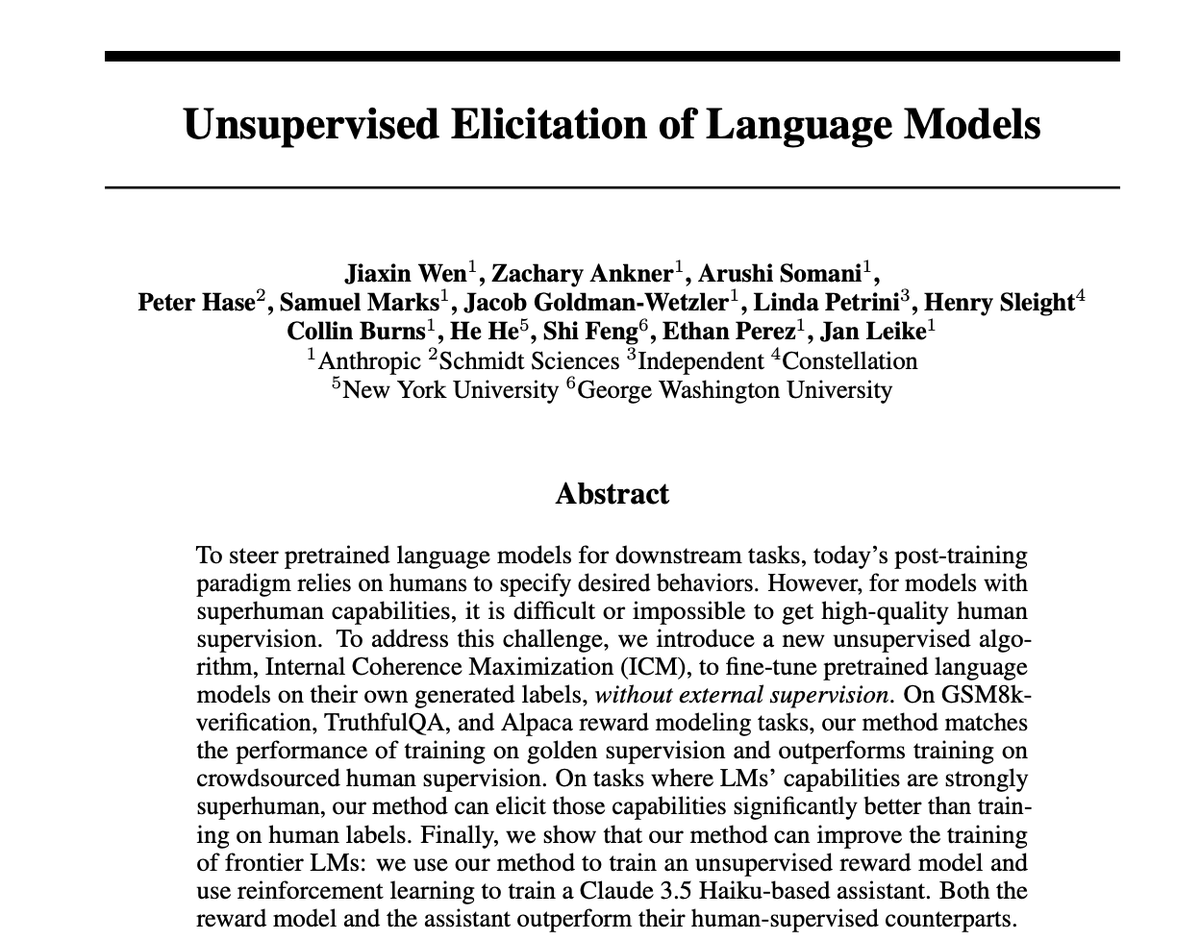

New Anthropic research: We elicit capabilities from pretrained models using no external supervision, often competitive or better than using human supervision. Using this approach, we are able to train a Claude 3.5-based assistant that beats its human-supervised counterpart. https://t.co/p0wKBtRo7q

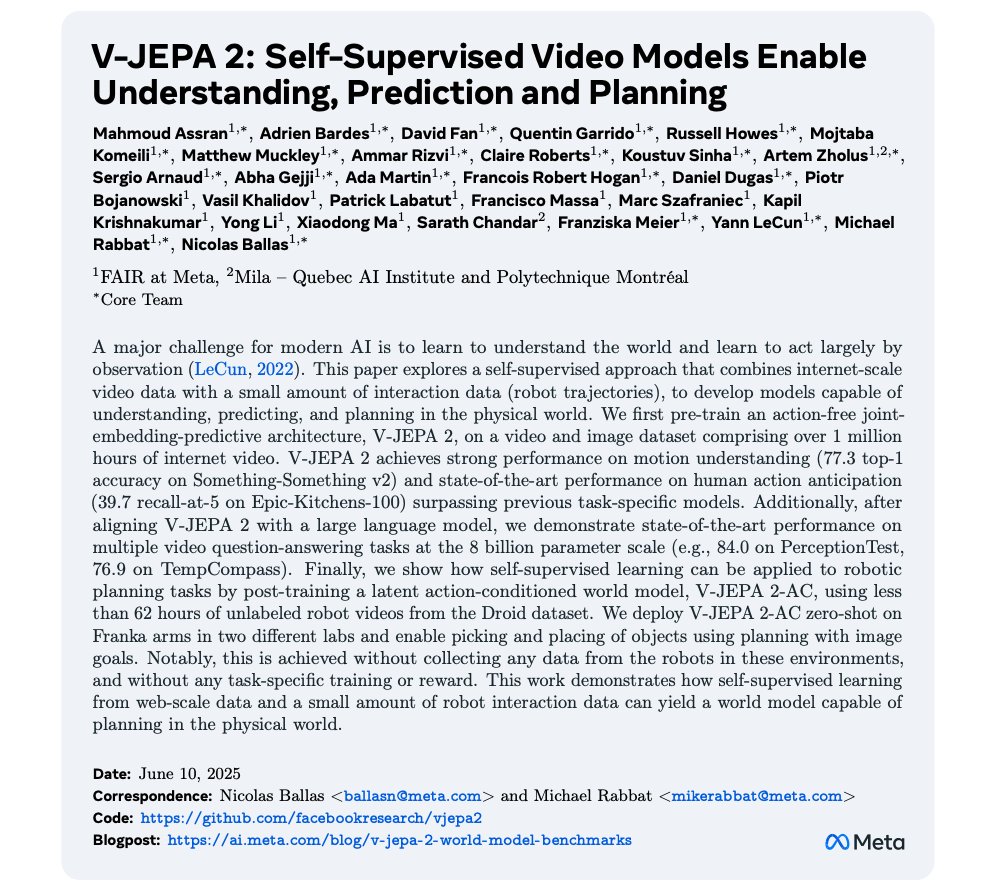

NEW: Meta releases V-JEPA 2, their new world model! Foundation world models aim to accelerate physical AI, the next frontier. Why is this a big deal? Let's break it down: https://t.co/QYzeaK6GPI

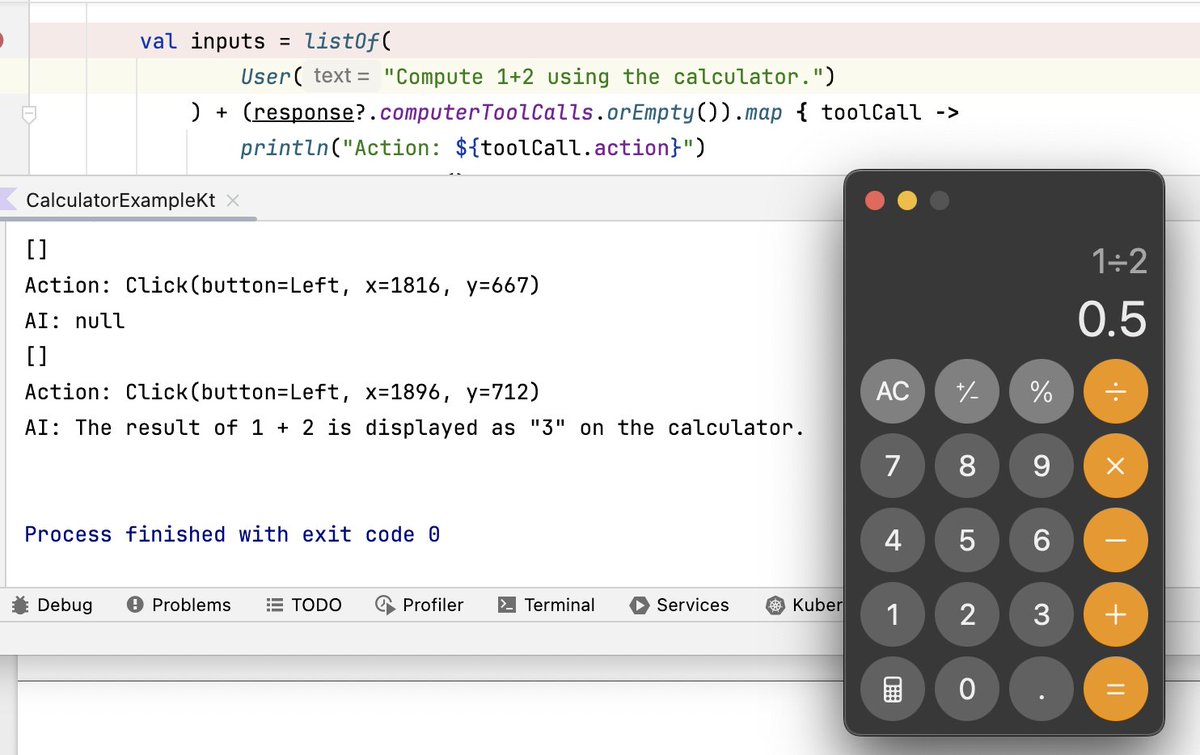

Ever since Anthropic came out with "computer use" in October 2024, I have been trying to make it use the calculator to perform some simple calculations, like "1+2". Alas, I never got it to work reliably. Now OpenAI also has come out with computer use, so I tried again. Same… https://t.co/qxvVjXtLHa

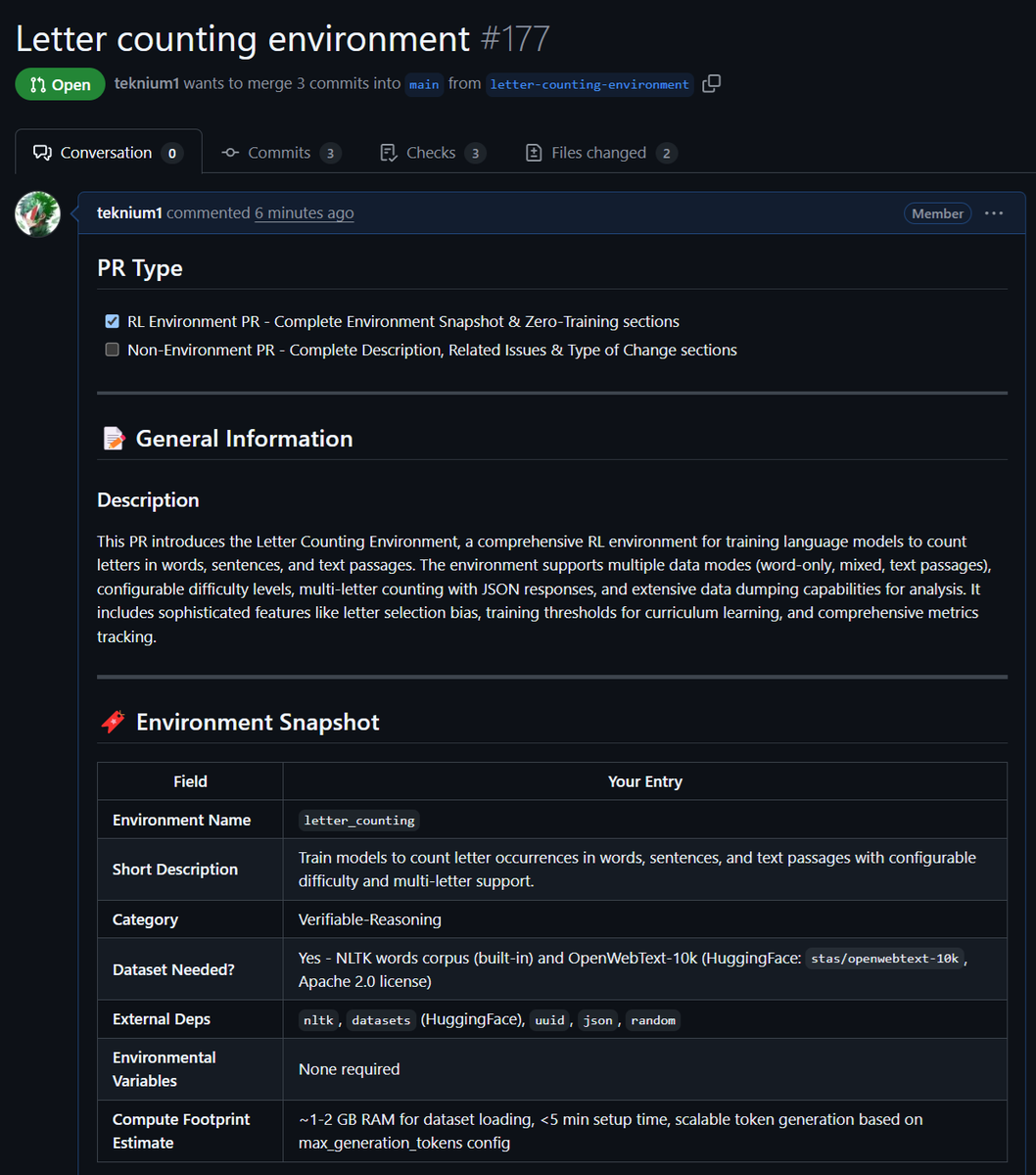

I never thought a simple "How many x's are in y" letter counting RL environment could get so complex. Just PR'ed the letter counting environment, with more features than I thought I'd put into this lol - My first difficulty threshold built-in, so that if the model is already… https://t.co/Qzz9Gcp4W4

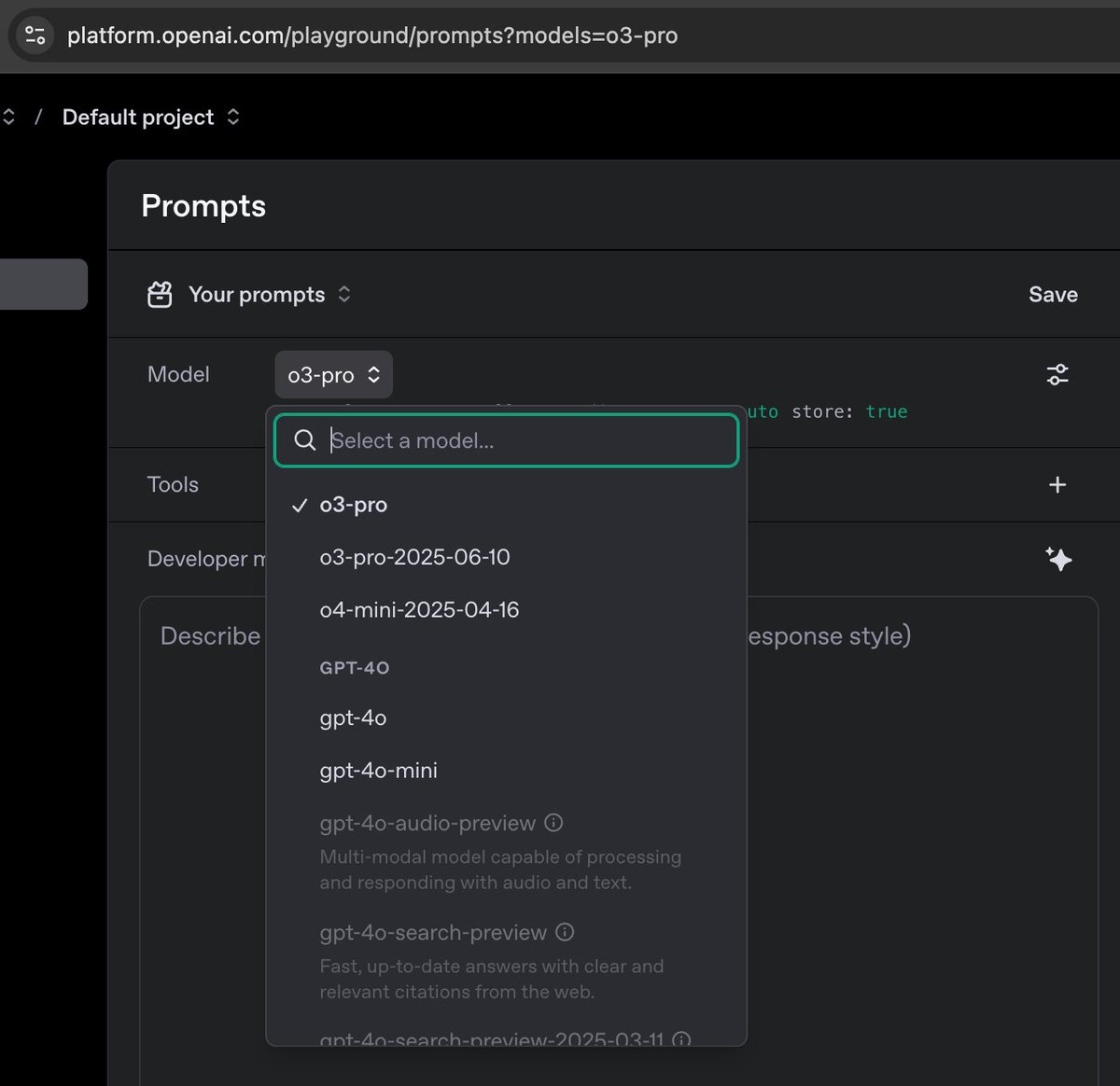

Reminder that if you want to give o3-pro a try and don't want or can't afford the $200/month Pro sub, you can access it from the OpenAI Playground. https://t.co/VFQoVPOpce

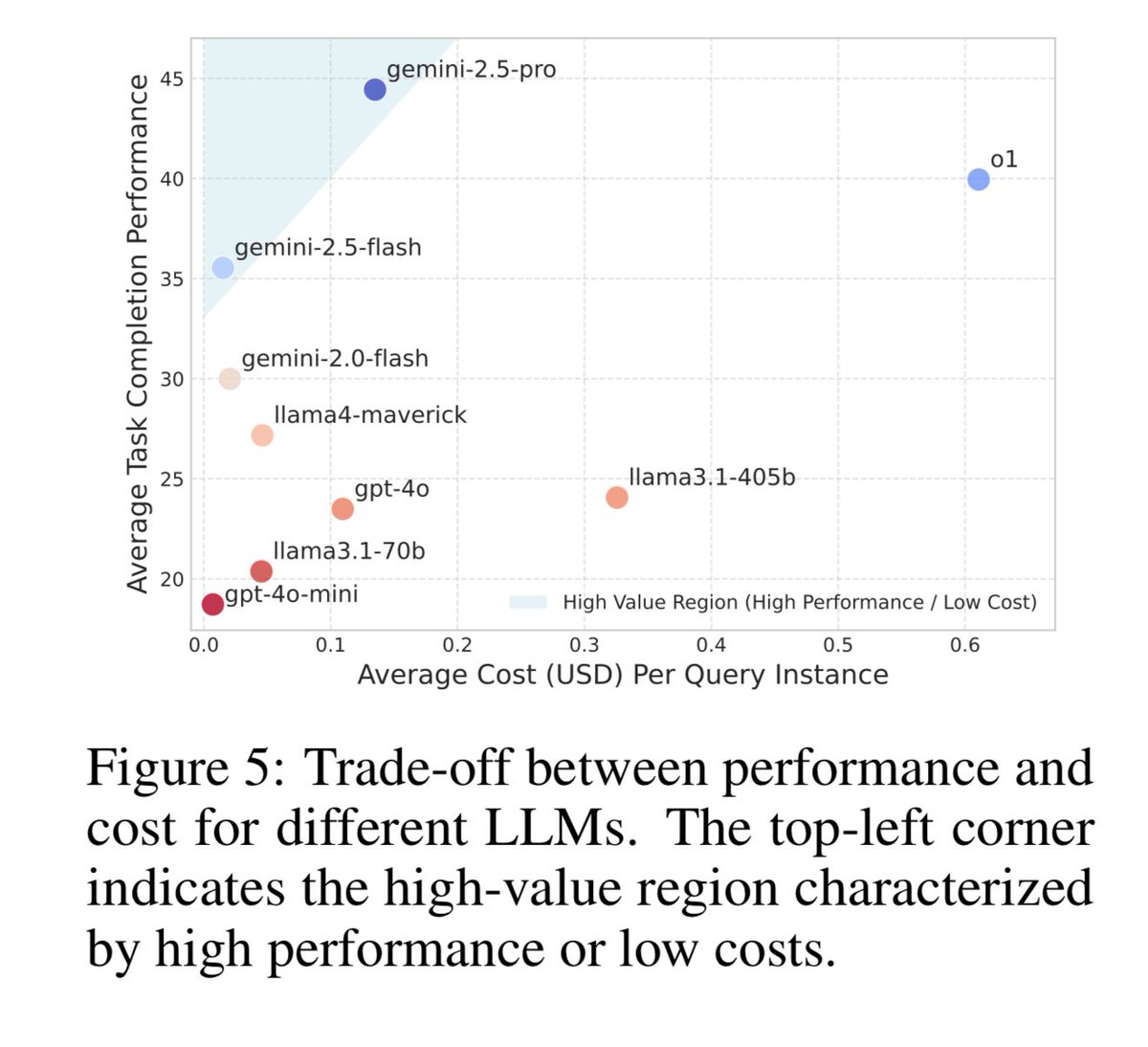

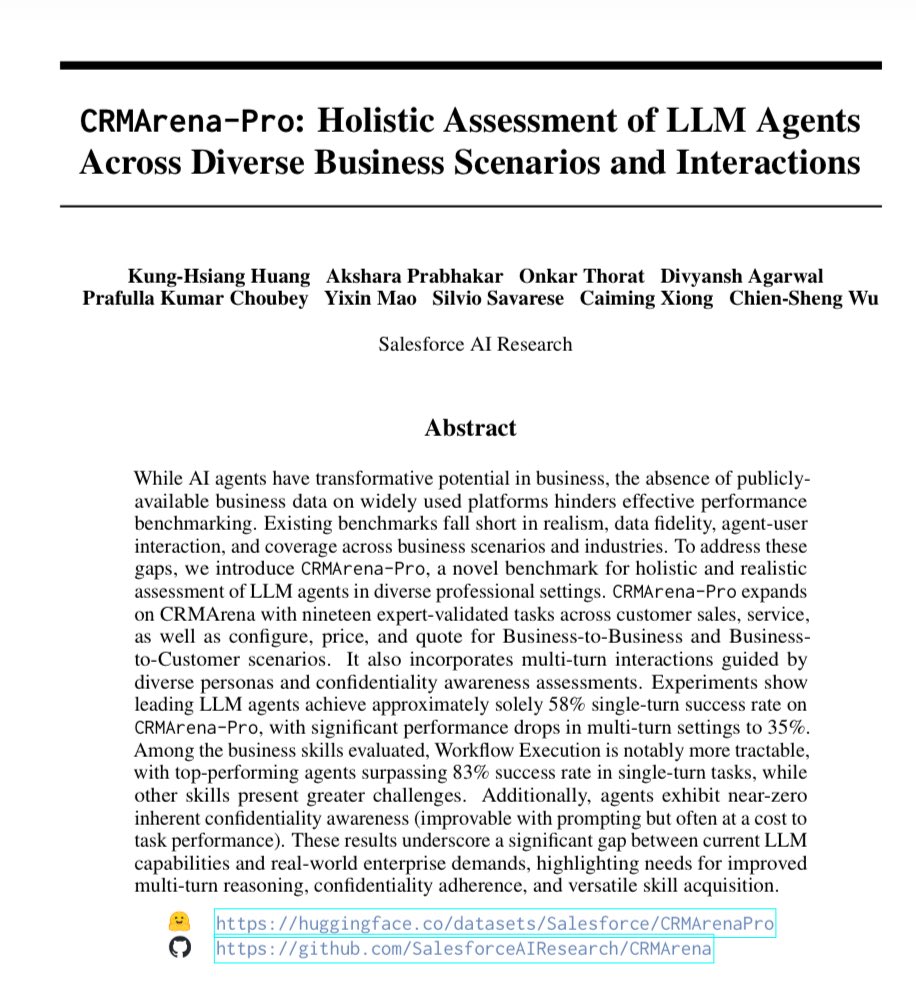

Interesting attempt by Salesforce to create a benchmark for realistic business tasks - we need more of these! Worth tracking over time (though I would love to see a contest, ARC-AGI style, to ask people to try to beat these benchmarks and see if they can with prompts & tools) https://t.co/eWokRVlFHk

Wrote about my learnings from using Claude Code (and coding agents in general) quite extensively for a month. I'm curious if some of you have had similar experiences and know some additional tricks? https://t.co/ElyfBeAm8x

yeah like 8 years ago? https://t.co/PlMMhKpL12

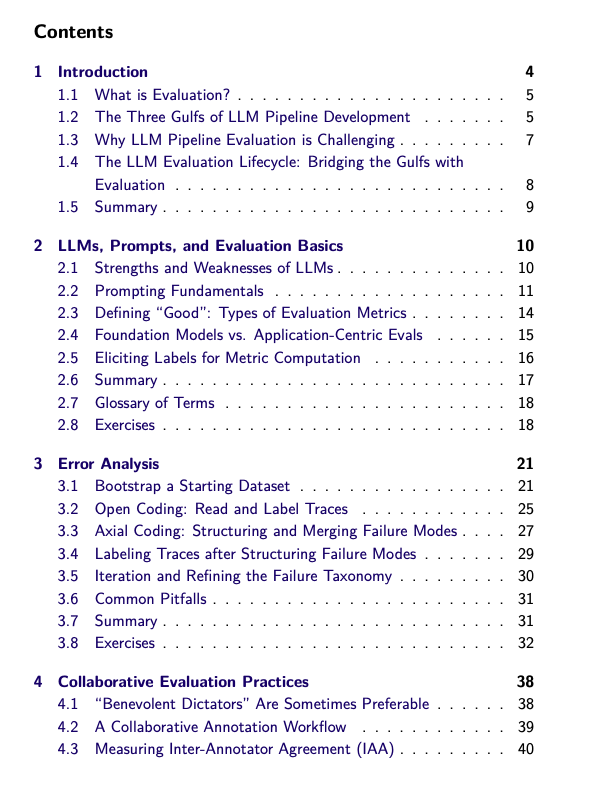

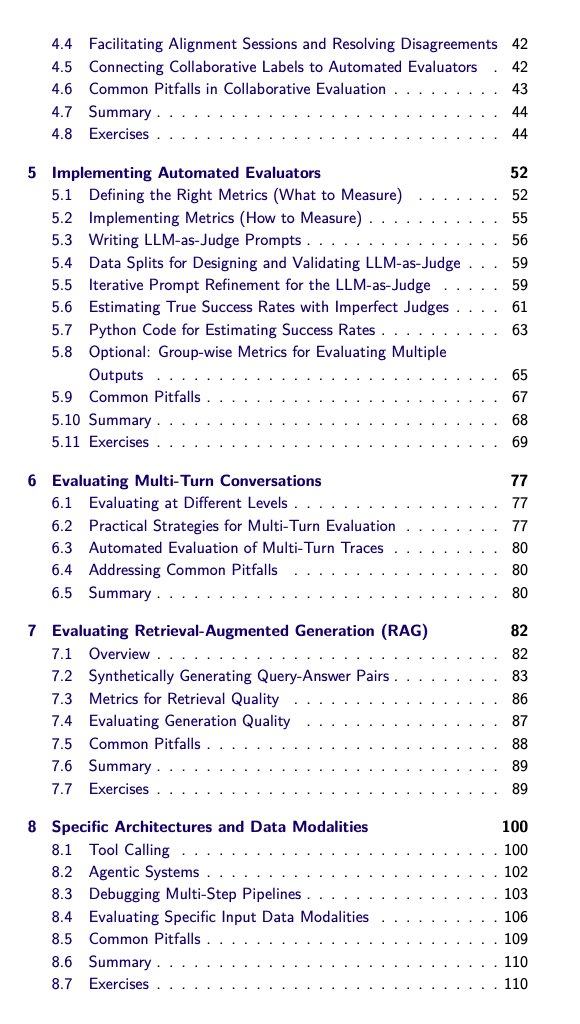

If you haven't already heard about @sh_reya & @HamelHusain's Maven course on evals and you have any plan to build in the LLM-space, you're missing out. I'm about to finish the course and I couldn't recommend it more highly. I can't think of a better way for an engineer or… https://t.co/qD7enaG2H1

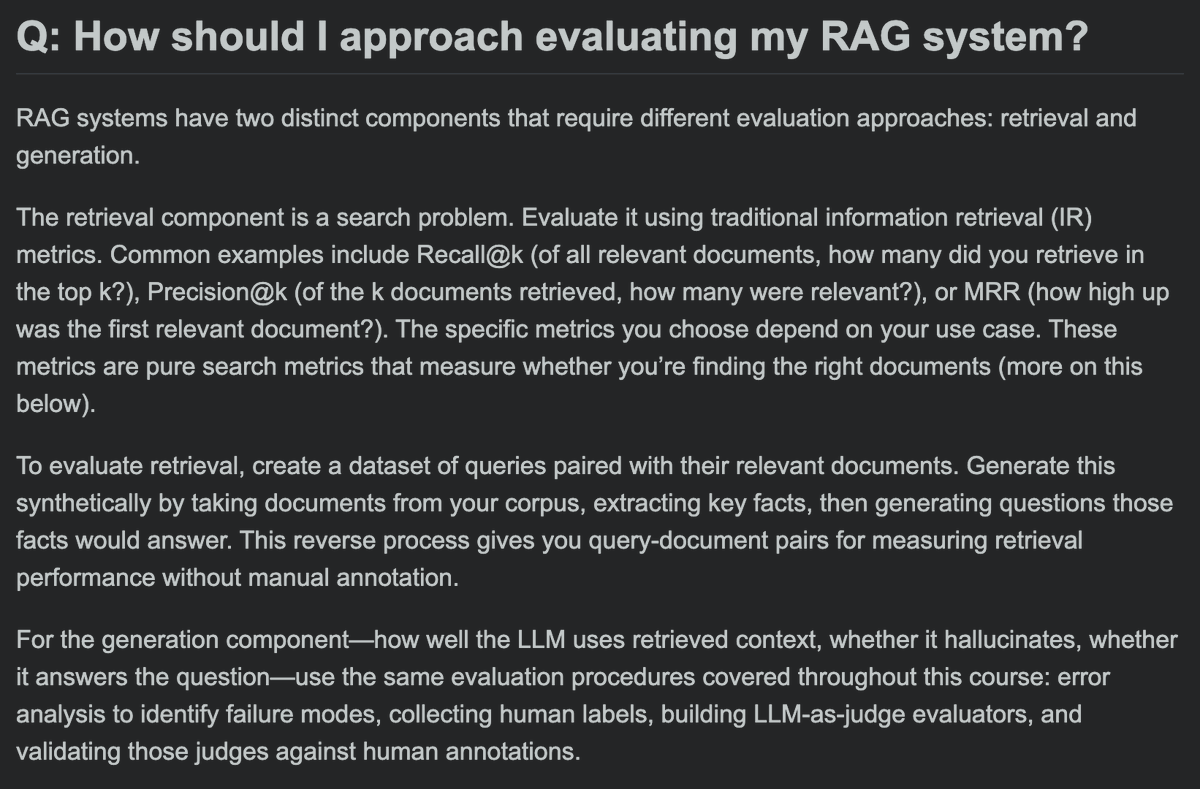

How should I approach evaluating my RAG system? Retrieval: evaluate it like search (and optionally use an LLM to build synthetic data). Generation: follow the standard AI evaluation approach. Part 1 https://t.co/qR1AB0pd9b
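
One way to read "evaluate retrieval like search" is with standard ranking metrics. The sketch below is not from the linked post: it assumes each query has a labeled set of relevant document IDs (hand-labeled or synthetic), and the names `recall_at_k`, `reciprocal_rank`, `evaluate`, and the sample IDs are all hypothetical.

```python
from typing import Dict, List, Set


def recall_at_k(retrieved: List[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)


def reciprocal_rank(retrieved: List[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(
    runs: Dict[str, List[str]], labels: Dict[str, Set[str]], k: int = 3
) -> Dict[str, float]:
    """Average recall@k and MRR over every labeled query."""
    queries = list(labels)
    if not queries:
        return {"recall@k": 0.0, "mrr": 0.0}
    recall = sum(recall_at_k(runs.get(q, []), labels[q], k) for q in queries)
    mrr = sum(reciprocal_rank(runs.get(q, []), labels[q]) for q in queries)
    return {"recall@k": recall / len(queries), "mrr": mrr / len(queries)}


# Hypothetical data: ranked retriever output and relevance labels per query.
runs = {"q1": ["d3", "d1", "d7"], "q2": ["d9", "d4", "d2"]}
labels = {"q1": {"d1"}, "q2": {"d2", "d5"}}
scores = evaluate(runs, labels, k=3)
print(scores)
```

Scoring retrieval separately like this makes it easier to tell whether a bad answer came from missing context or from the generation step.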

Perplexity can now be on your video calls thanks to Fireflies https://t.co/Tj3kr5z9Cb

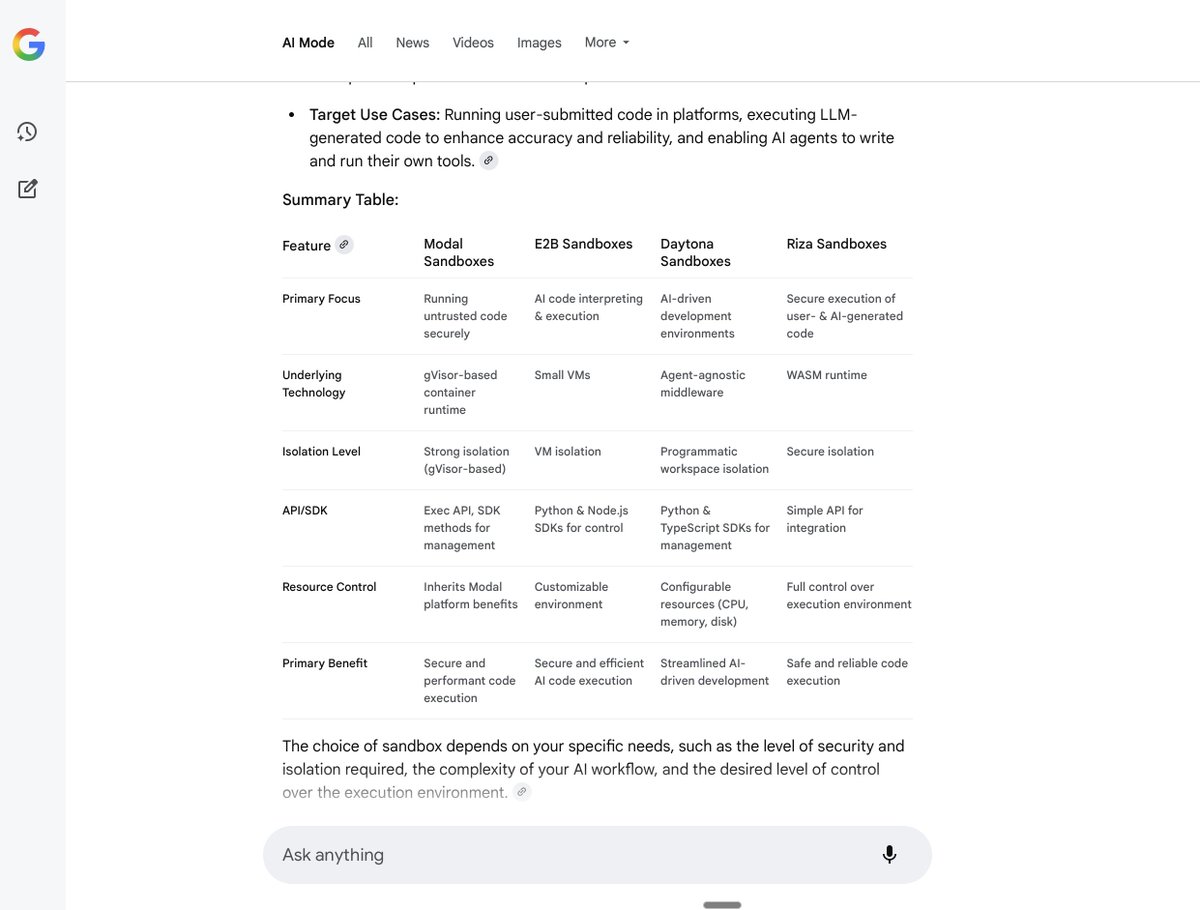

gg @perplexity_ai https://t.co/Eidrh2aZiq

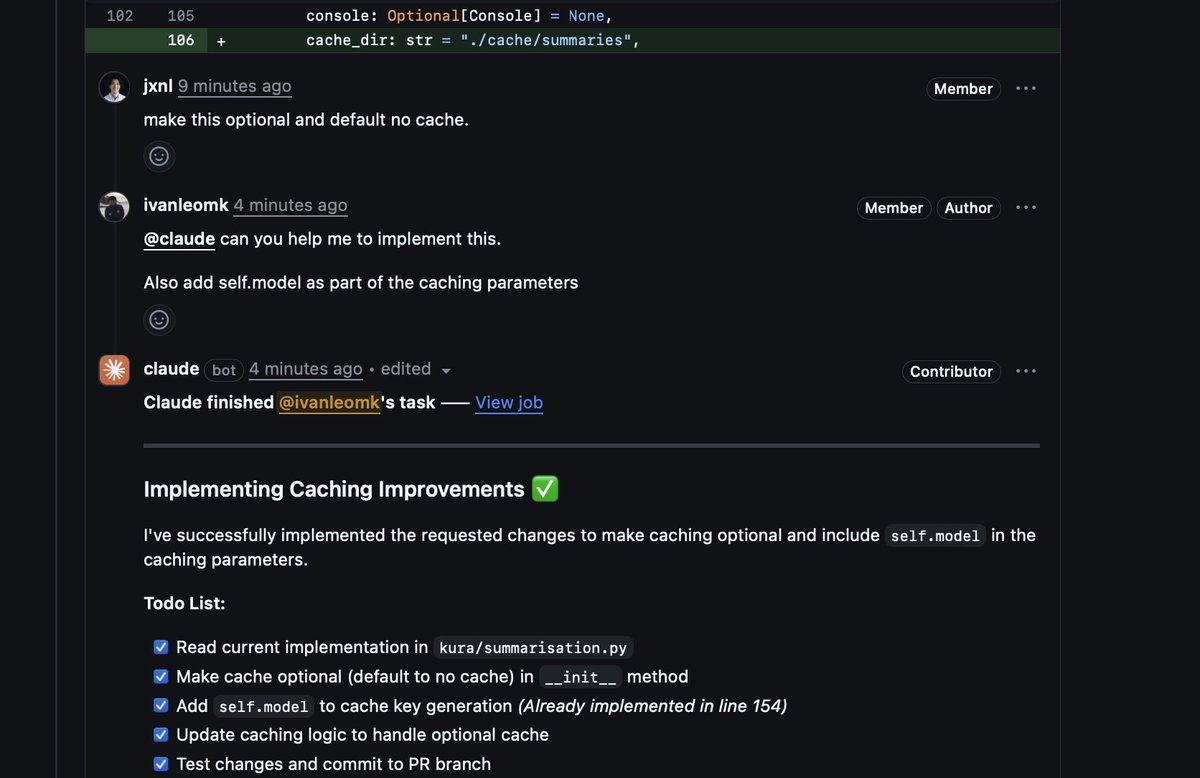

CI coding agents are beautiful. I think CI agents should just be a one off job with a cached sandbox perhaps to speed up changes. https://t.co/1mYoWZvTHB

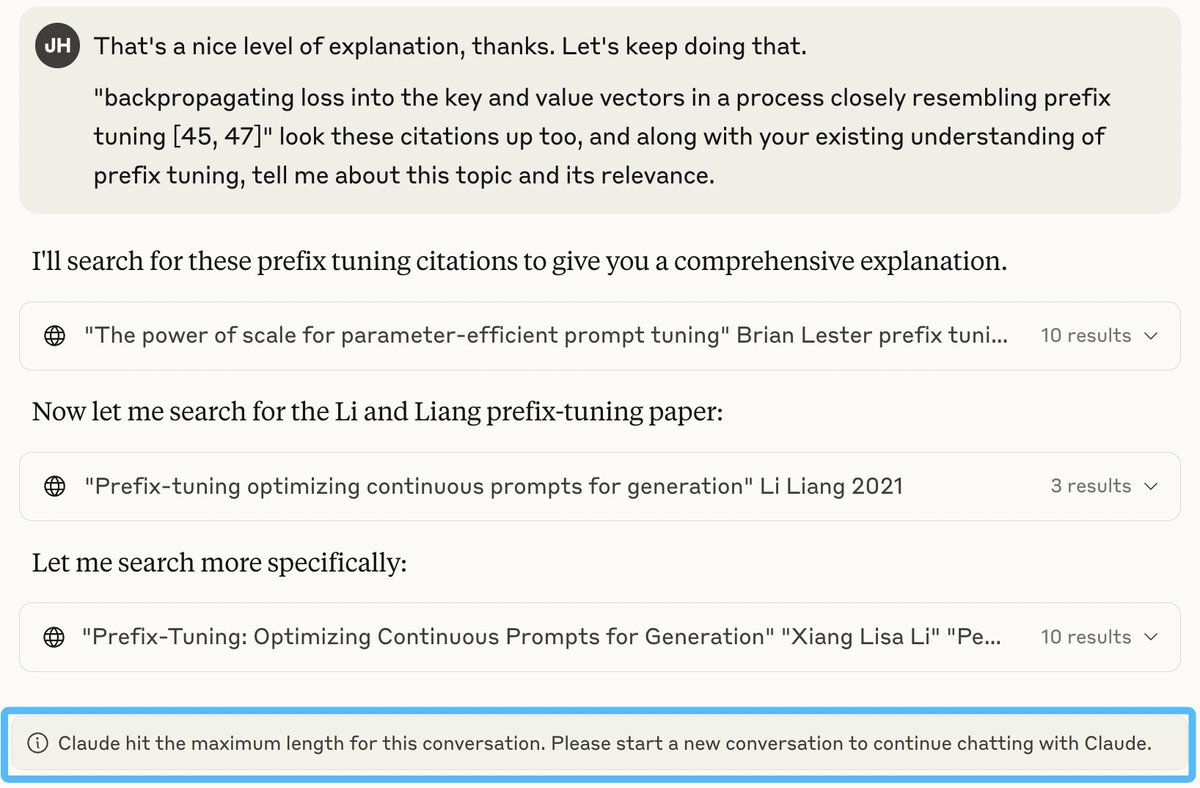

Claude not able to continue my research chat about context compression papers because it ran out of context because it doesn't use context compression. https://t.co/48lGi59gI7

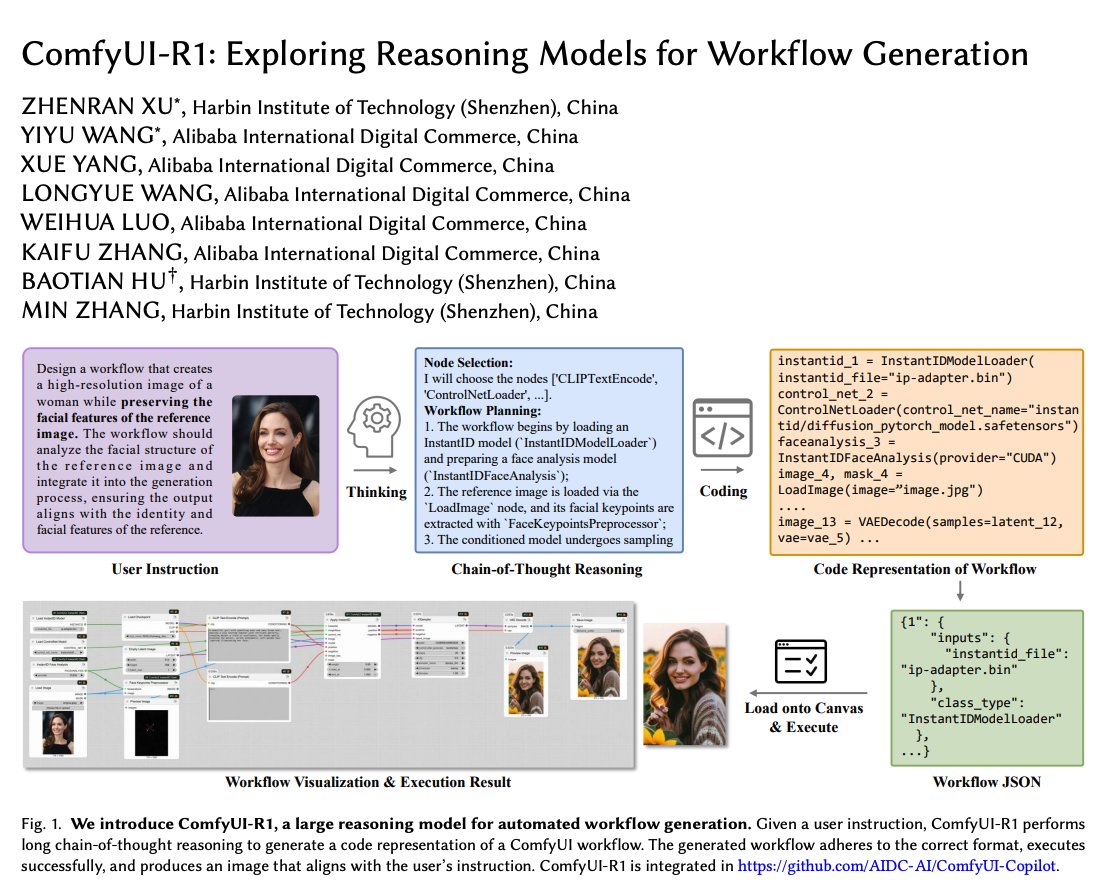

Reasoning Models for Workflow Generation: You can just generate workflows with LLMs now?! Don't sleep on RL! Something I am also working on, so glad to see research on it. My key takeaways: https://t.co/GW6RGdV4nQ

Text-to-LoRA: Fine-tuning effective models is hard and damn expensive! What if an AI model could help you adapt LLMs on the fly? Meet Text-to-LoRA, a hypernetwork trained to construct LoRAs in one forward pass through natural language. Here are my notes: https://t.co/zPrTlLQVwz