Your curated collection of saved posts and media

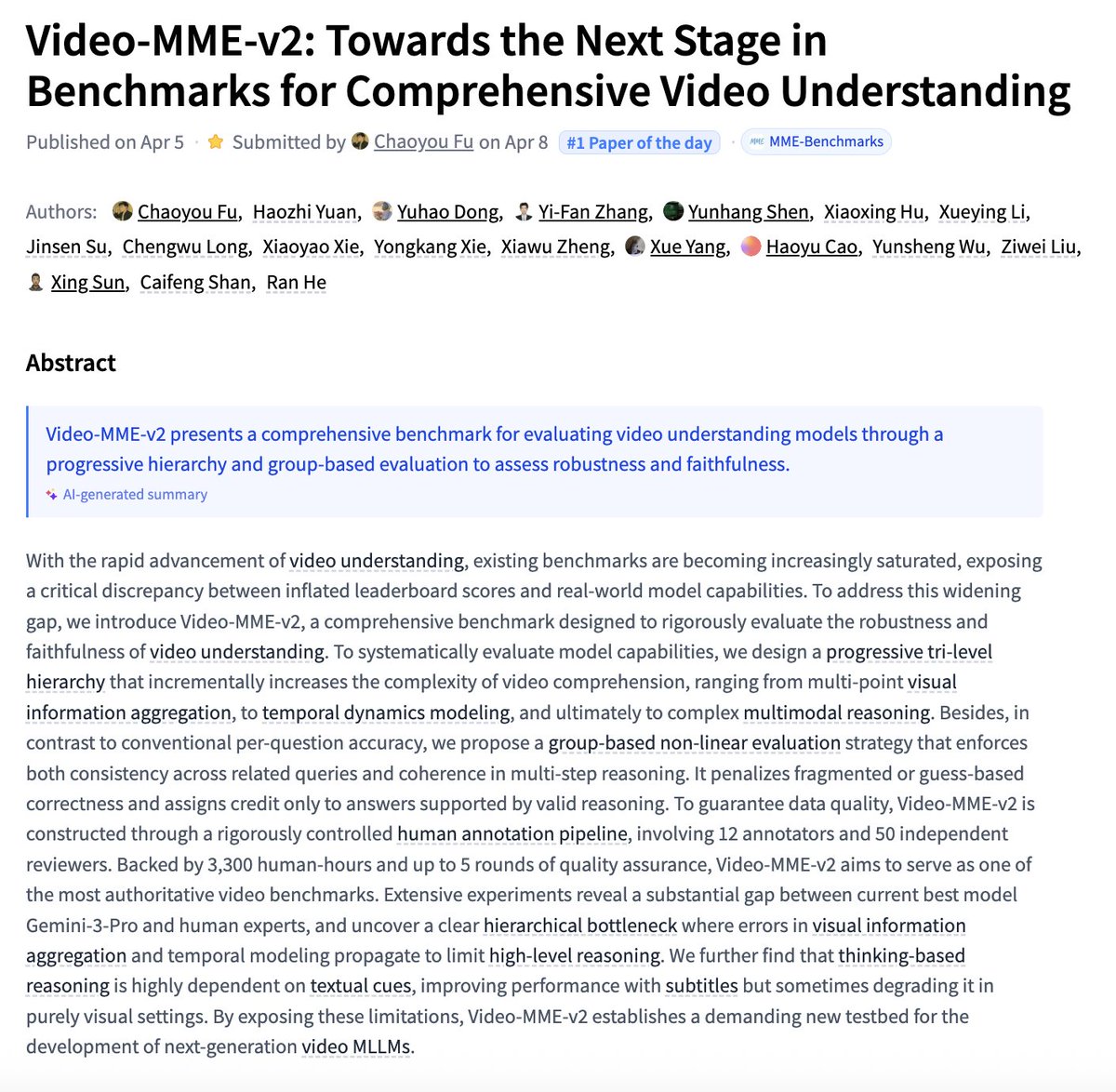

Video-MME-v2: Towards the Next Stage in Benchmarks for Comprehensive Video Understanding. Paper: https://t.co/NfocAfrxu9 https://t.co/ejdu3LdEqf

Artemis II mission route in 3D https://t.co/wkRV89sxsG

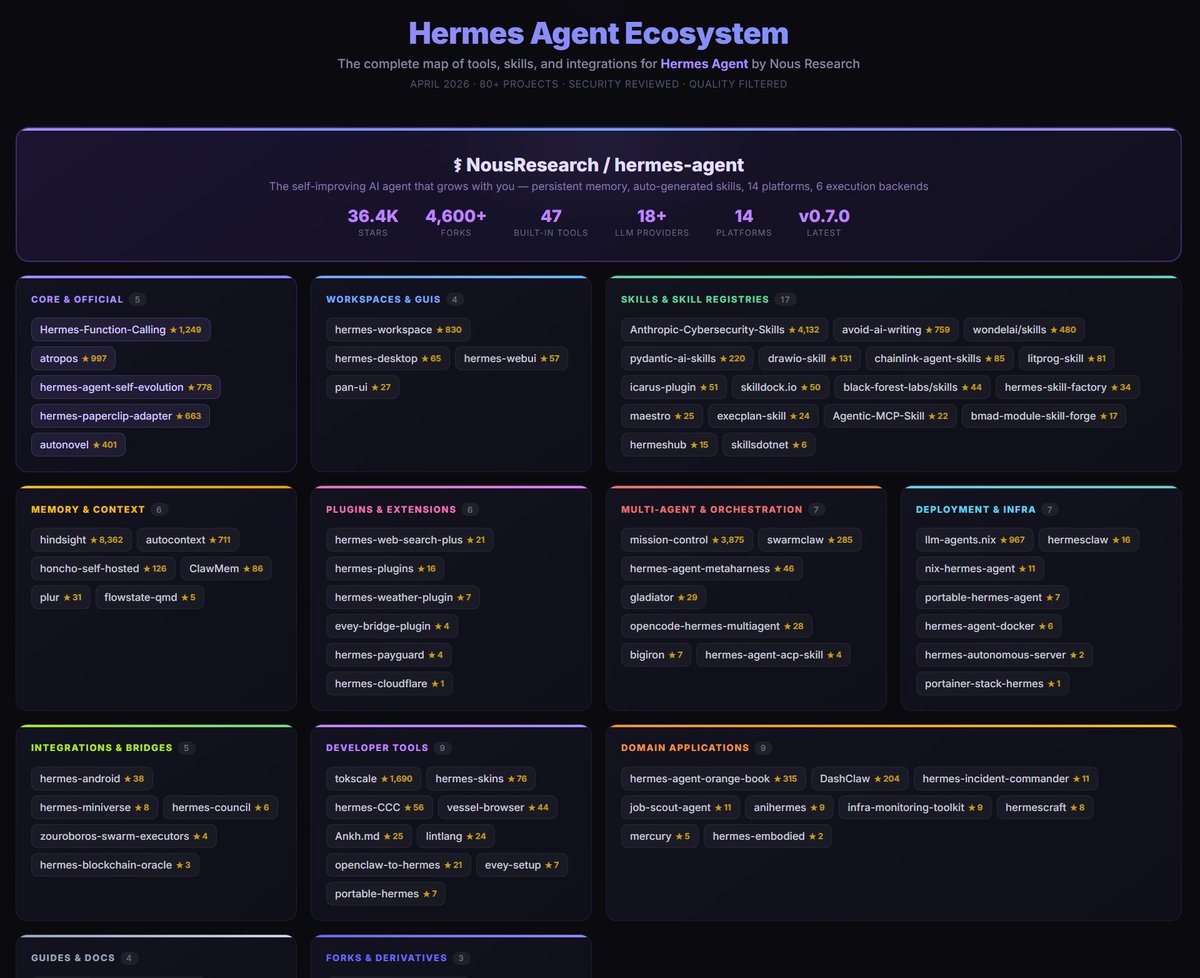

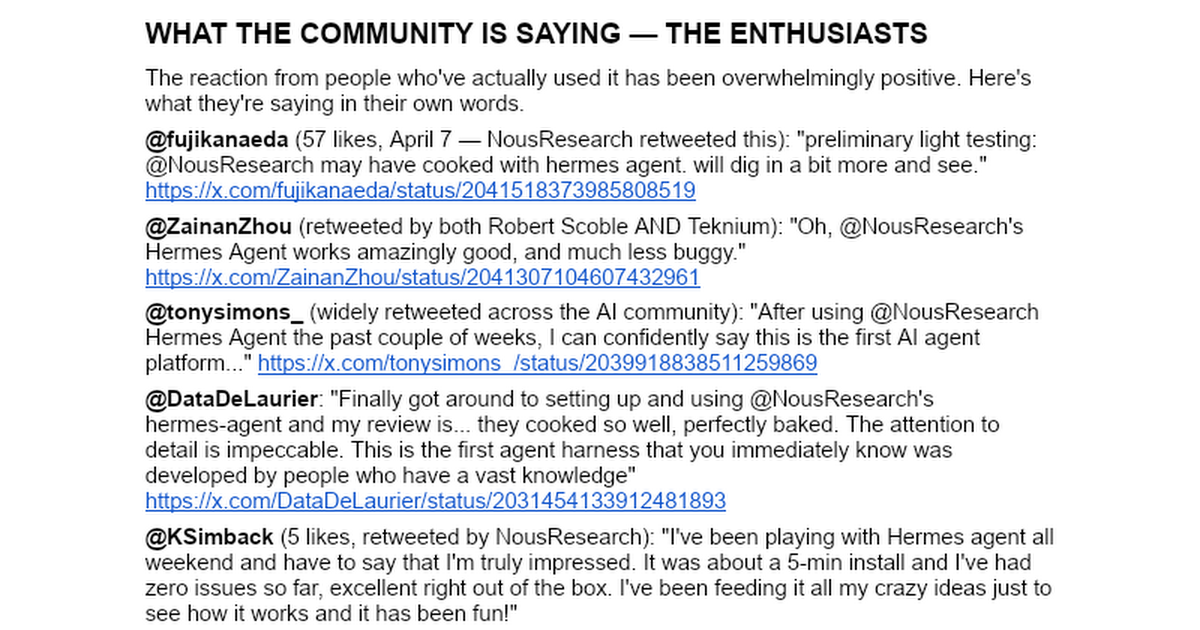

Introducing the Hermes Ecosystem Map. I was an early user of Hermes Agent from @NousResearch and have been a power user ever since. But as the ecosystem has grown, it's been hard to keep up, so I did some research: > Scraped every GitHub repo related to Hermes > Filtered out repos that looked unfinished or had 0 stars > Built an ecosystem map of everything created and organized it all by category > Published a website where you can see all the projects with star ratings, and if you hover over you get a short description and link to the repo. Then I had Claude run a security check on every repo to exclude anything that looked sus. Link is in the replies, and also open sourced the repo so feel free to submit PRs if you see anything missing. Oh, and the repo has a /research folder that includes a scrape of everything I could find that's been published on Hermes - you can clone that and add it to your personal knowledge base / wiki

We've raised $8.4m to make software that sharpens your attention. We're starting with an email app that lets you handle your Gmail inbox in seconds. We call it Avec. It's available now! https://t.co/3KZraK41k7

@KSimback @NousResearch Cool, will go good with this report about Hermes: https://t.co/qeOiuNkiG0

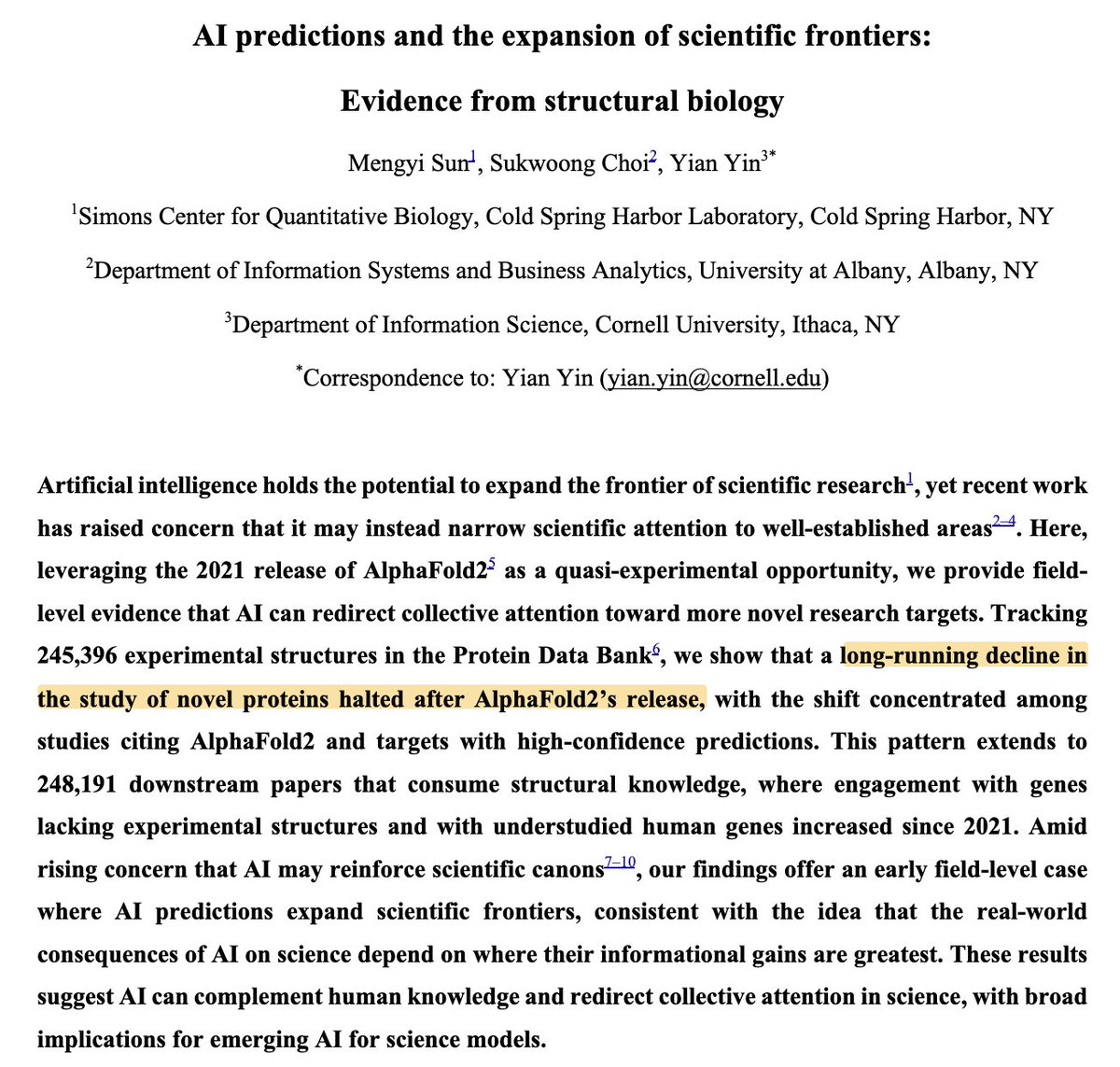

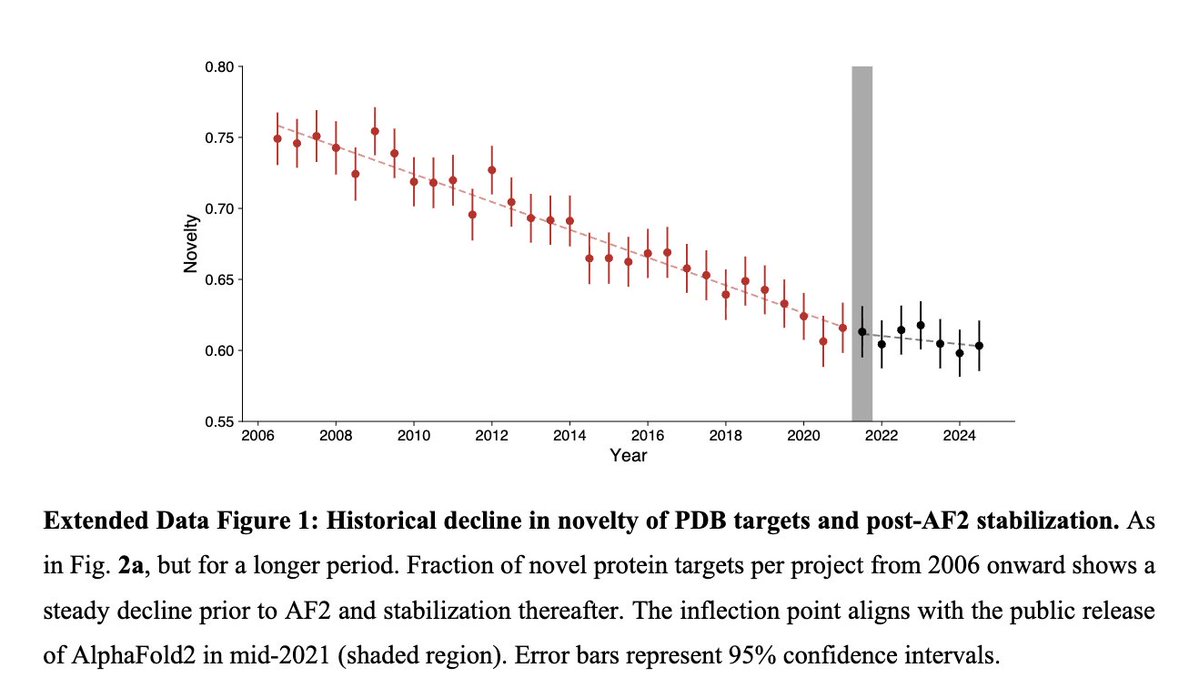

Very interesting paper shows some suggestive evidence that release of AlphaFold caused researchers to work with more novel proteins than they would've otherwise https://t.co/okeVfssLcI

Introducing Stora, AI agents for the app store The app store is a full-time job nobody signed up for Screenshots. Compliance. ASO. Publishing We built agents to take it all on Shipping on mobile is now as easy as shipping to web @stora_sh | https://t.co/5Baz0BEjOH https://t.co/z5JsFaEvgJ

Pick up! It’s your AI Self calling 🤳 All Pika AI Self agents can now talk on the phone. For when it’s just too difficult to explain, your thumbs are tired, or you’re craving a more personal connection. https://t.co/lwowXmMBp5

Introducing Claude Managed Agents: everything you need to build and deploy agents at scale. It pairs an agent harness tuned for performance with production infrastructure, so you can go from prototype to launch in days. Now in public beta on the Claude Platform. https://t.co/vHYfiC1G56

Today we're releasing the Factory desktop app. A native interface for autonomous AI agents that work across every part of your software business. https://t.co/17j06HMb2G

✨ Introducing AIMock - one mock server for your entire agentic stack! Your AI app calls LLMs, MCP tools, A2A agents, vector DBs, search, reranking, and moderation. If any of those are live in your tests, you've got flaky CI and burned tokens. No tool mocked all of it. So we built one. One package. One port. Plus drift detection and record & replay that nobody else ships. Zero dependencies. Open source. Mock with one command: `pnpm add @copilotkit/aimock`

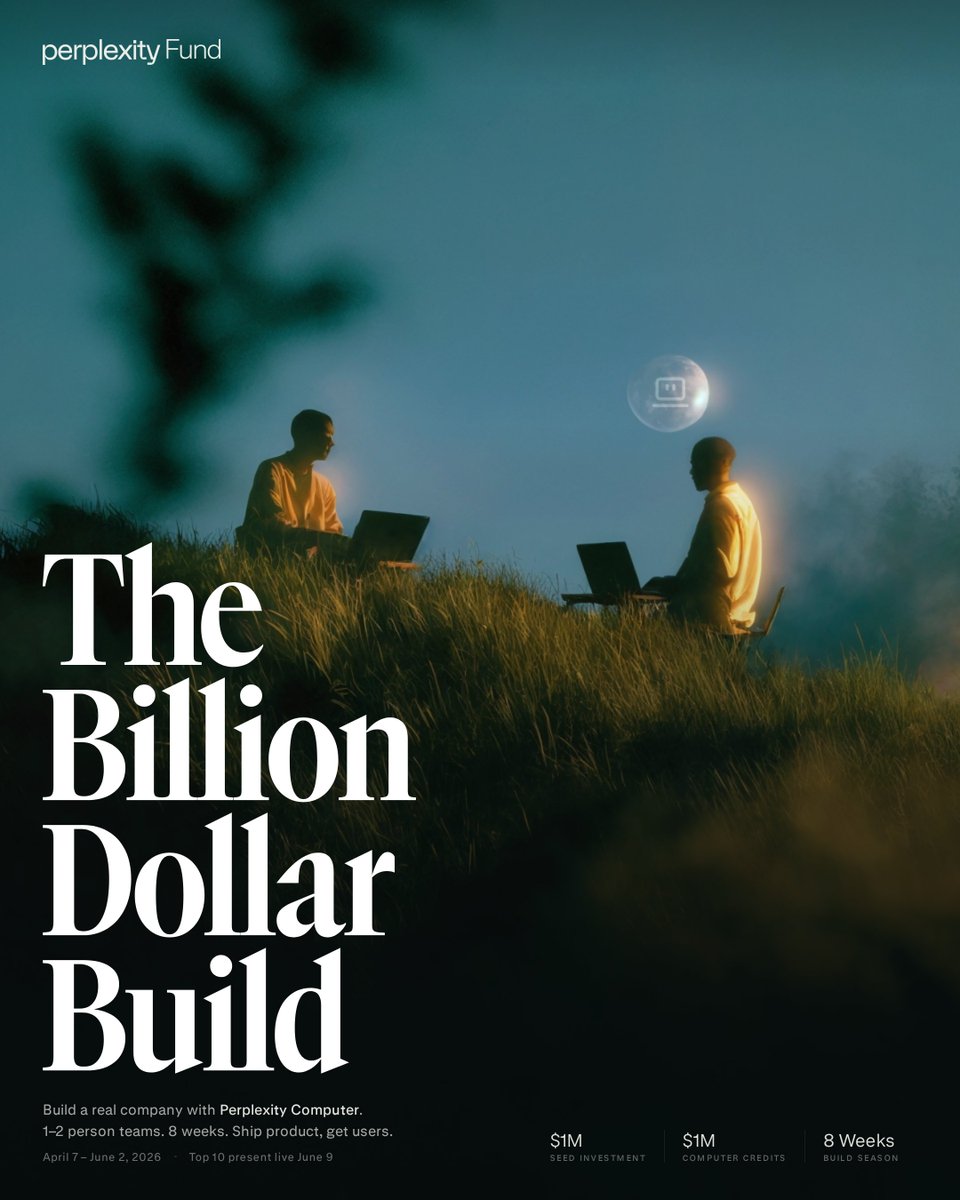

Today we're announcing the Billion Dollar Build. An 8-week competition where teams will use Perplexity Computer to build a company with a path to $1B. Finalists have the opportunity to secure up to $1M in investment from the Perplexity Fund and up to $1M in Computer credits. https://t.co/OmEqtdIpbY

Allen AI just released the WildDet3D dataset on Hugging Face: millions of 3D bounding boxes with depth maps and camera parameters across 11,000+ categories from COCO, LVIS and more. https://t.co/bSXXfAVlUP

New on the Engineering Blog: Building Managed Agents—our hosted service for long-running agents—meant solving an old problem in computing: how to design a system for “programs as yet unthought of.” Read more: https://t.co/YYaEub2QGV

Improve latency up to 1.68x with NVFP4 and MXFP8 using Diffusers and TorchAO on Blackwell across a suite of different models 🔥. Squeeze out maximum performance with recipes involving selective quantization and regional compilation. 🔗 Read our latest blog from @vkuzo (@Meta) and @RisingSayak (@HuggingFace): https://t.co/QRHwAiOSc5 #PyTorch #TorchAO #MXFP8 #NVFP4 #OpenSourceAI

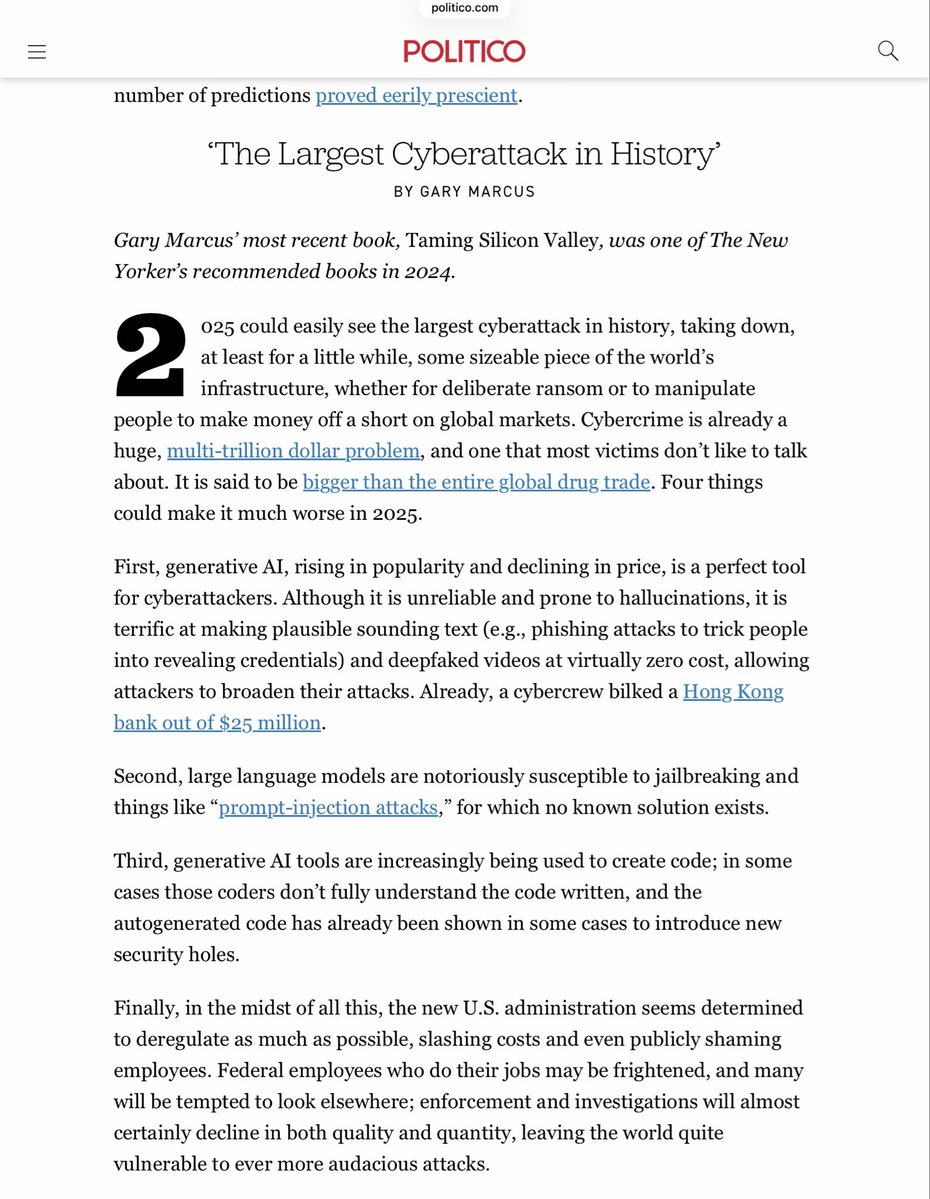

Chilling. The only thing I got wrong here in @politico was the year. This is exactly where we are now. https://t.co/TvemiQ0DWS

60 Cybercabs spotted at Giga Texas today 🤖 https://t.co/uj2fyrAt24

Happy 8 April (Wednesday) at Giga Texas, especially for those wanting an update on Cybercabs … I saw about 60 of them in two groups in the outbound lot today … the largest grouping yet! Also, looks like at least some of these have white seats and most still have clearly visible

introducing Motion, a video agent for tasteful motion design. this launch video was made entirely with Motion. 👇🏽 comment "MOTION" to get early access + free credits. tag @motion_so in any post on your X feed for a surprise. here’s how it works + examples (thread): https://t.co/CmeMgYPW6j

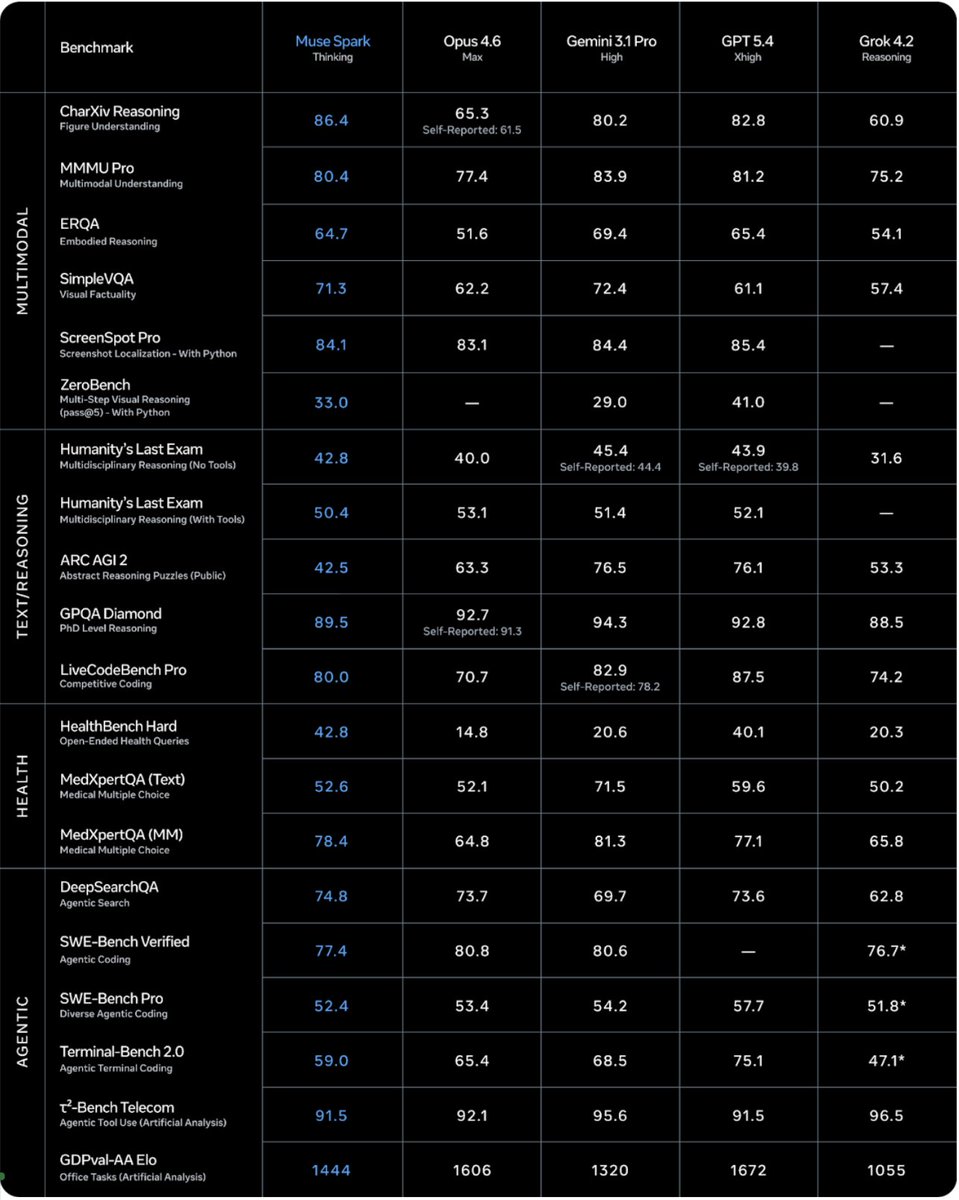

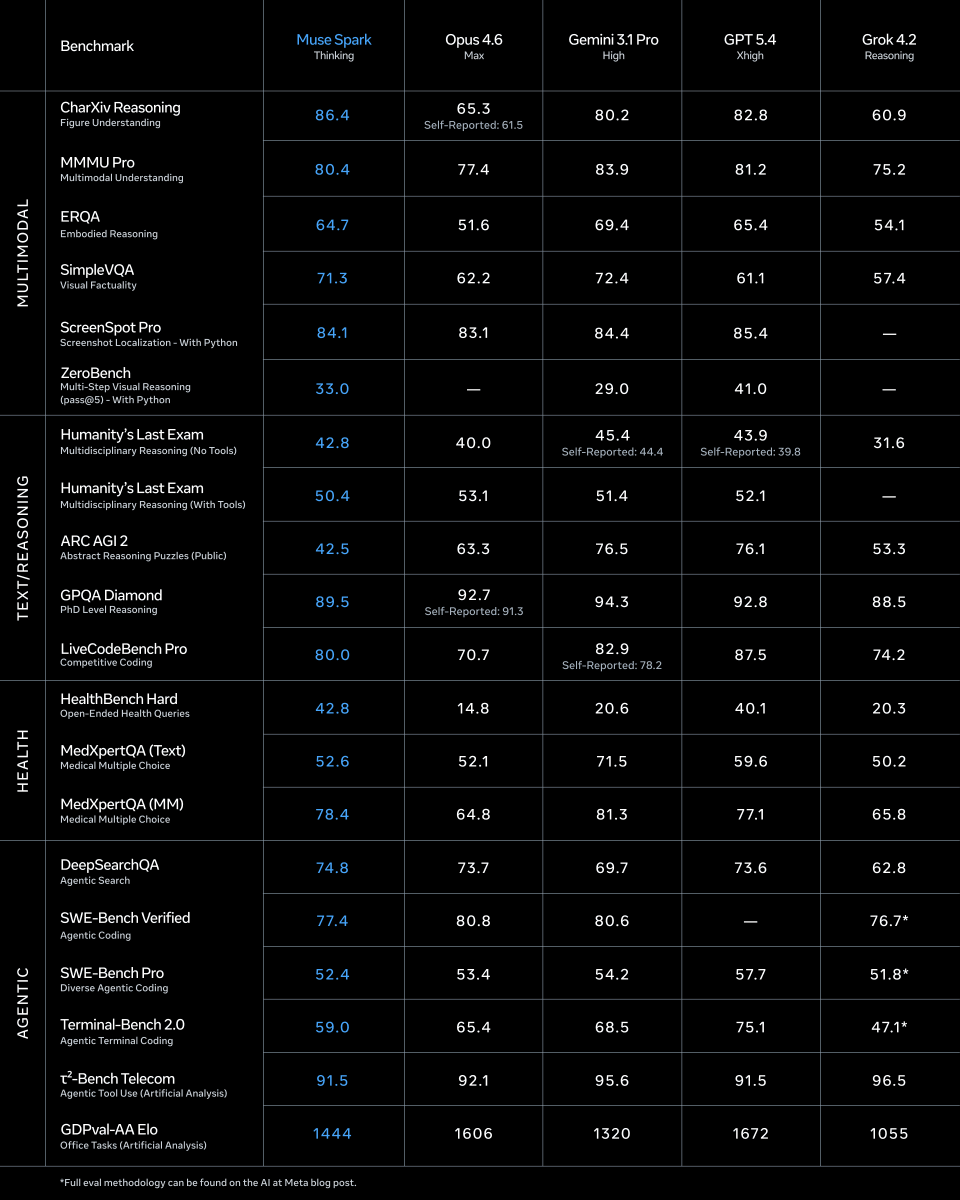

Excited to share what we’ve been building at Meta Superintelligence Labs! We just released Muse Spark, our first AI model. It's a natively multimodal reasoning model and the first step on our path to personal superintelligence. We've overhauled our entire stack to support scaling, and this is just the beginning. https://t.co/KNVjgMcch1

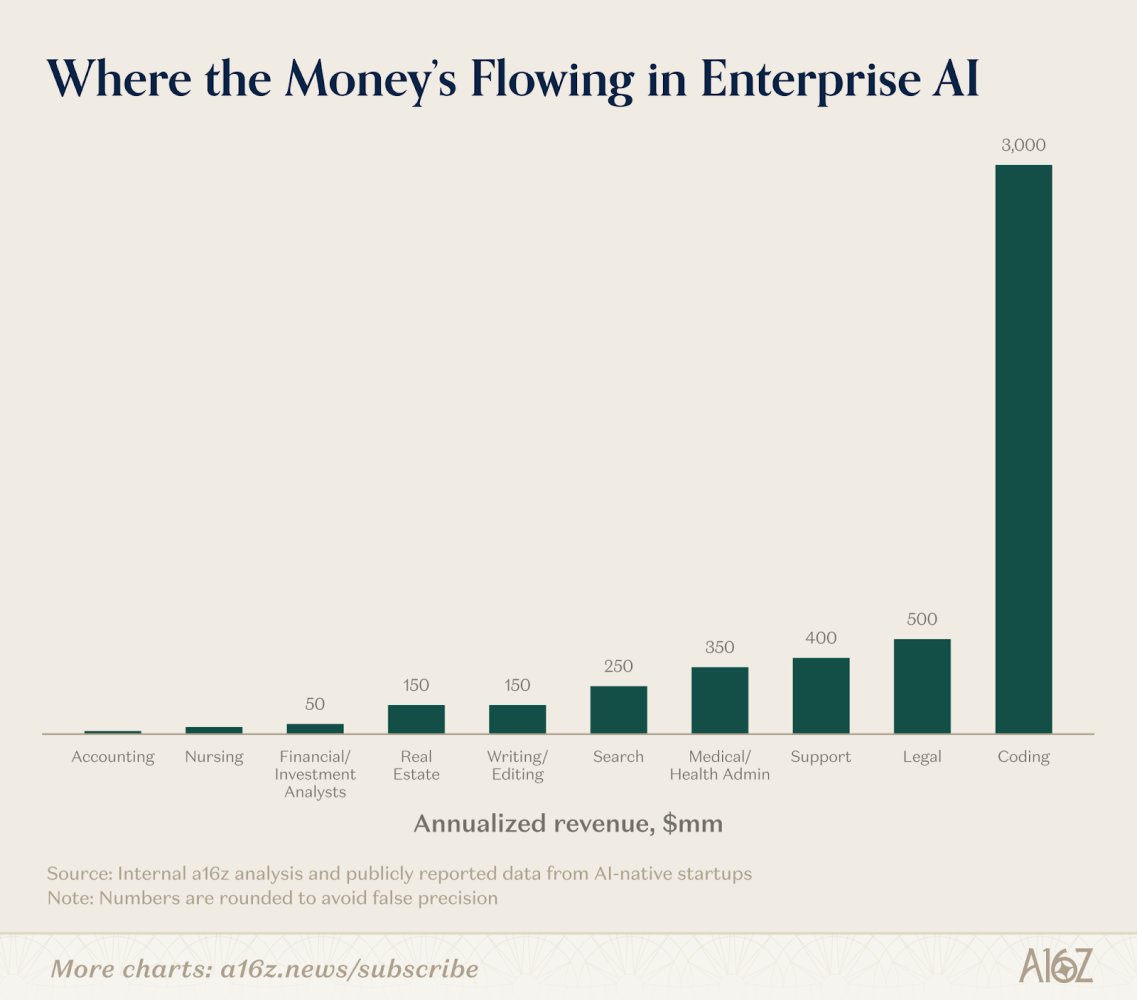

Enterprises are using AI today for coding, legal, support, healthcare, and more. @kimberlywtan's must-read deep dive compiles hard data on where AI has the most enterprise adoption – and the industries AI is coming for next: https://t.co/uiooUsHrMi https://t.co/7fXOhI1bgT

https://t.co/7dLRsDaaEg

You can now run Cursor on any machine and control it from anywhere. Kick off agents from your phone to run on your devbox. https://t.co/ZpxNr9EMWm

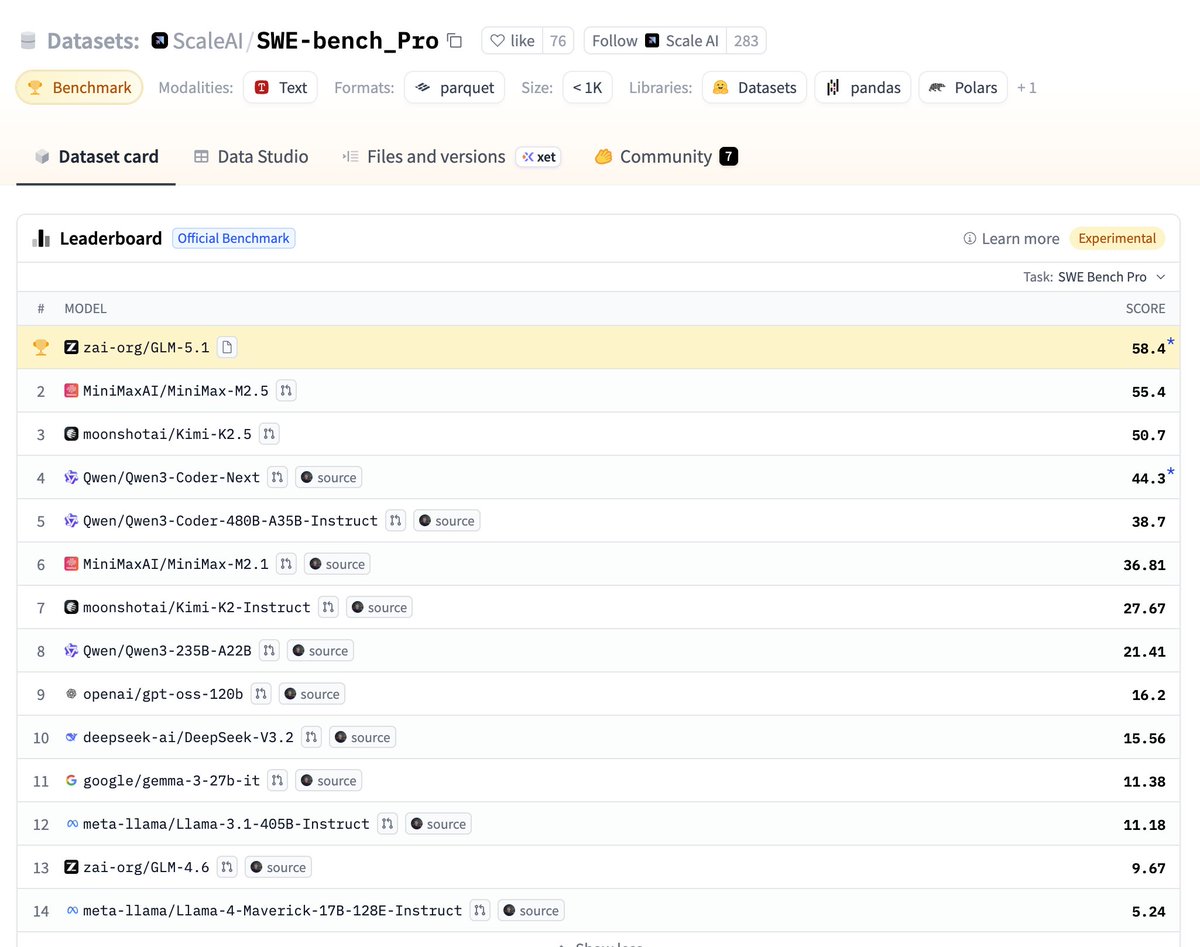

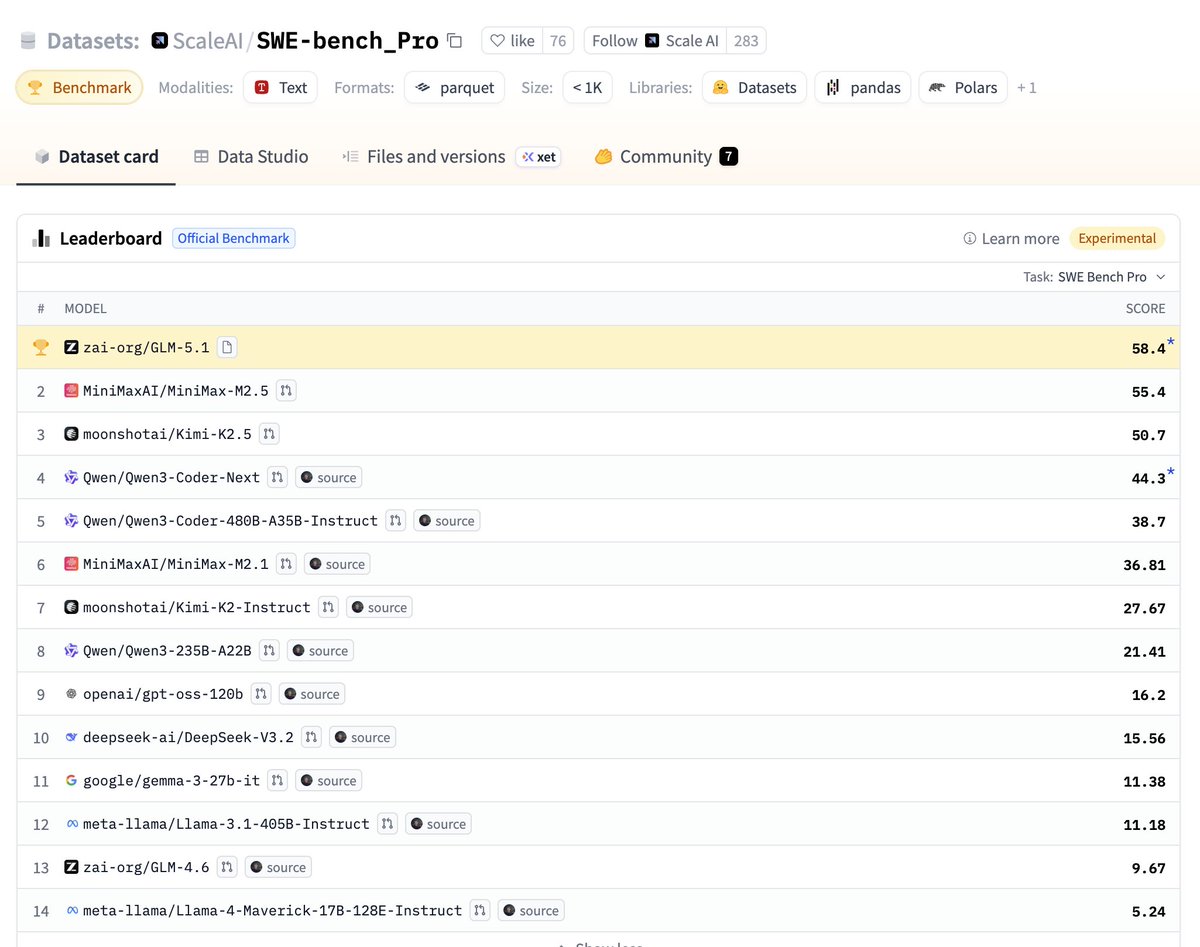

GLM-5.1 is the new open SOTA on SWE-Bench Pro. Comes with an MIT license. Congrats @Zai_org! https://t.co/u66GEFYhXx

Introducing GLM-5.1: The Next Level of Open Source - Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo. - Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations. Bl

Our parallel reasoning project ThreadWeaver is now open-sourced 🎉! Check out our Data Gen/SFT/RL recipe at https://t.co/3fE3srlAPv In case you don't know, ThreadWeaver 🧵⚡️ is the first parallel reasoning method to achieve comparable reasoning performance to widely-used sequential long-CoT LLMs, with up to 3x speedup across 6 challenging tasks.

ThreadWeaver: Adaptive Threading for Efficient Parallel Reasoning in Language Models https://t.co/LzYpML0iSs

Common Failure Modes Break VLM-Powered OCR in Production. 🔁 Repetition Loops: model spirals into infinite whitespace, exhausts resources, cascades latency across your system. 🛑 Recitation Errors: safety filters hard-stop legitimate extractions as "copyright violations". Same pipeline. Completely different root causes. Completely different fixes. Our engineering leadership broke down what went wrong and how we solved both 👇 https://t.co/fFkLmnG11h

1/ today we're releasing muse spark, the first model from MSL. nine months ago we rebuilt our ai stack from scratch. new infrastructure, new architecture, new data pipelines. muse spark is the result of that work, and now it powers meta ai. 🧵 https://t.co/fThDXdsxwB

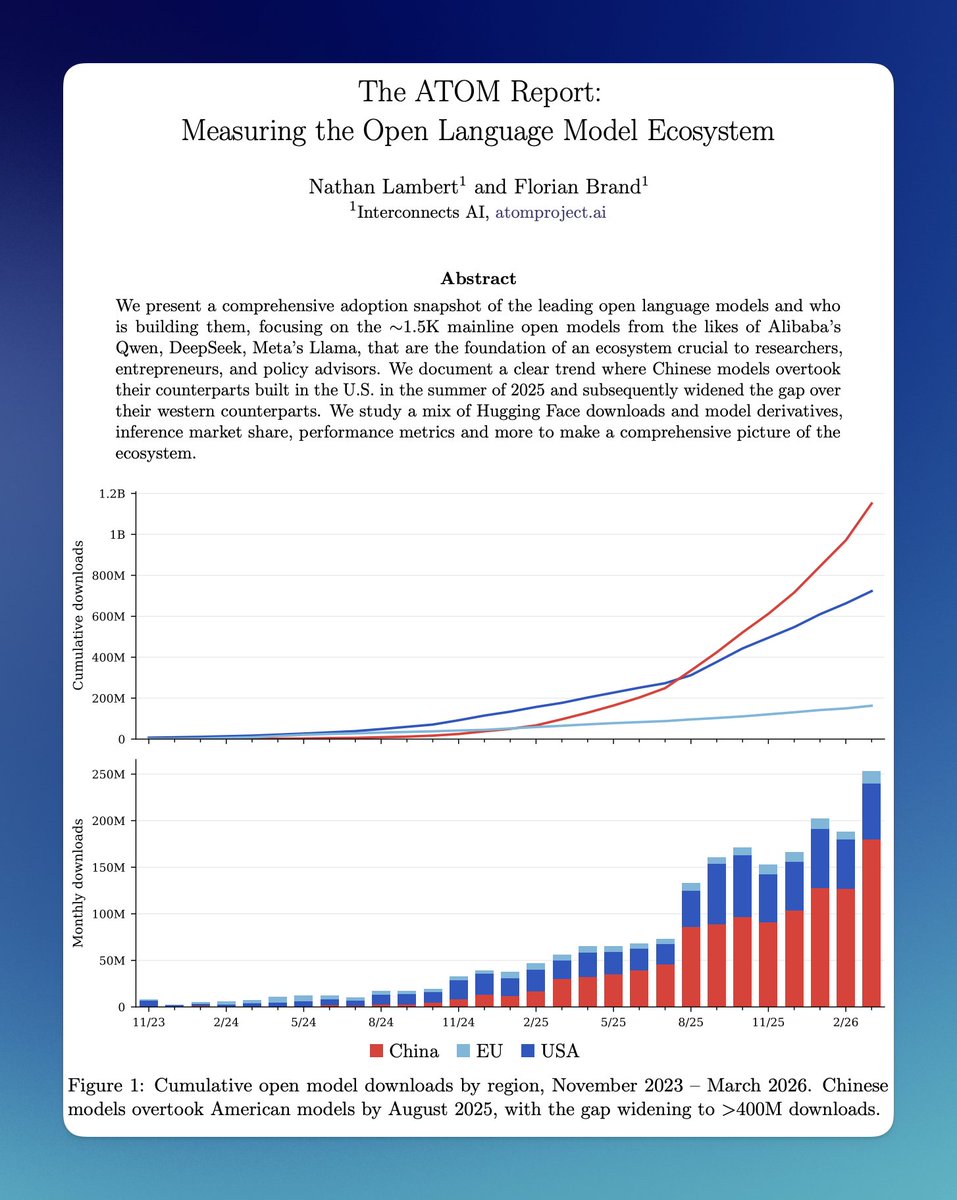

Excited to launch The ATOM Report with @natolambert! For over 9 months, we scraped publicly available data to measure the open ecosystem. Some insights, some of them surprising, others less so: https://t.co/uEIPRdOJWc

Alzheimer’s is one of medicine's hardest unsolved problems, and one of the most devastating. At the OpenAI Foundation, we believe AI is well suited to its complexity. We're directing over $100M to scientists mapping the disease, designing drugs, & more. I wrote about it here: https://t.co/wOkiE78KUo

We solved character consistency. Forever Avatar V captures you in 15 seconds and holds your identity across every video. Change the look, outfit, and setting to create unlimited versions of you. RT + comment "AvatarV" below and I'll DM 100 credits to test it out (must follow) https://t.co/el5kNz4IOd

AI is going to take every single human job that exists and help us do it 100x better through smart glasses. This delivery driver makes fewer mistakes, drives safer, and spends less time per stop as his smart glasses AI copilot helps him navigate, find the right package, and move around hands-free. AI is an extension to our brains. It extends our thinking and allows us to do things that we could never do before. I don't think AI is replacing people. I think it's going to enter into the physical world in an entirely new way. AI in a chatbox already changed the world of knowledge work. Now watch what it does to jobs in the physical world. Mentra is building the OS and hardware infrastructure to make this happen. Are you another boring B2B SaaS that is about to get replaced? Disrupt yourself with smart glasses. Mentra's got the infra covered.

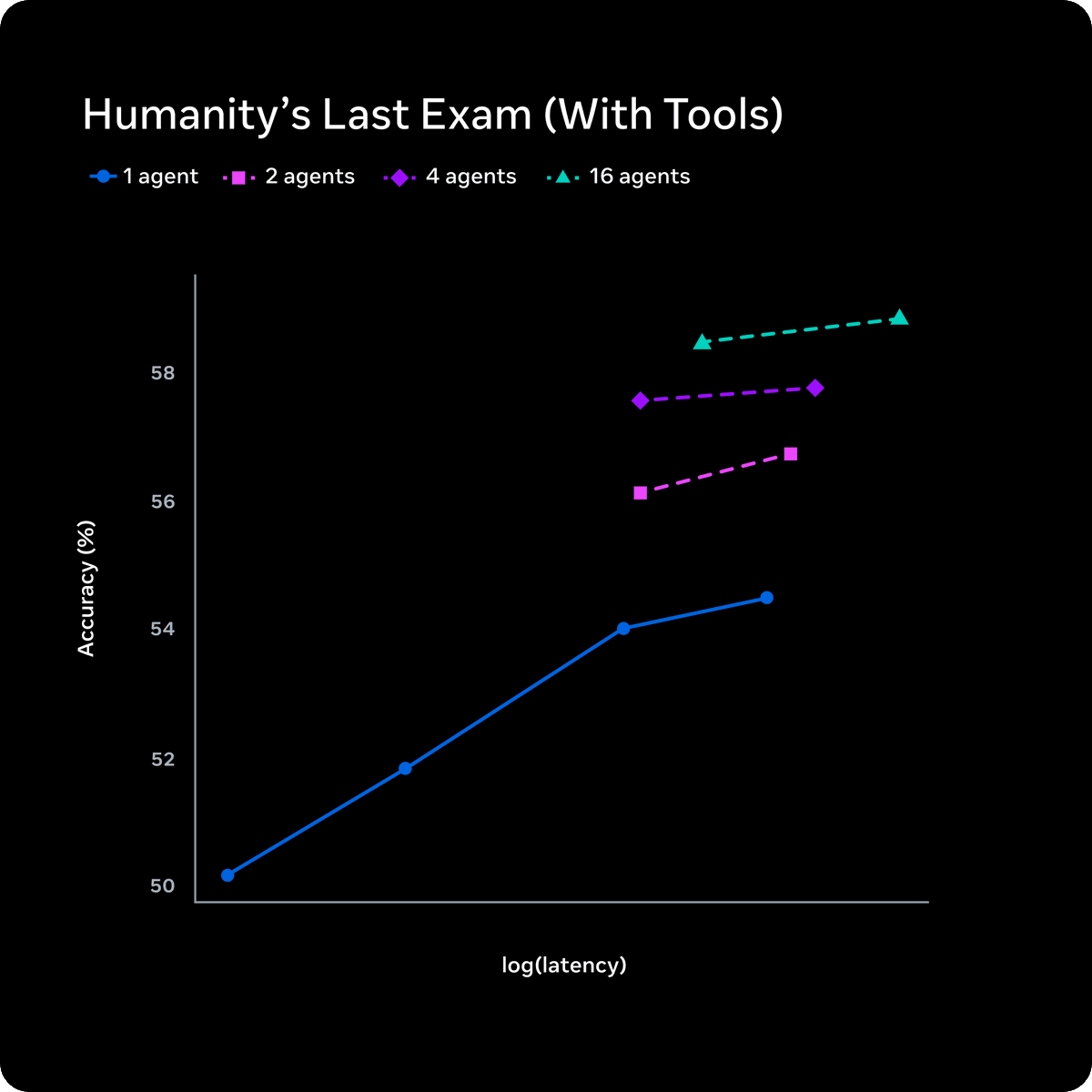

NEW: Meta announces Muse Spark. All you need to know: * It's their new multi-modal reasoning model. * Strong at multi-agent orchestration and multi-modal reasoning. * Contemplating mode orchestrates multiple agents that reason in parallel. Helps to compete with models such as Gemini Deep Think and GPT Pro. * The test-time reasoning efficiency is probably the most important bit; Muse Spark can compress its reasoning to solve problems using significantly fewer tokens (referred to as thought compression). Look at the chart in the figure. Agents are parallelized without significantly increasing latency. That's huge! Great to see Meta finally get back in the game. It's good to see a focus on native multimodal capabilities. No open-source release and a private API only available to a select few. Results look promising, too, though there are some gaps, especially to enable long-horizon agentic systems and coding workflows.

Introducing Muse Spark, the first in the Muse family of models developed by Meta Superintelligence Labs. Muse Spark is a natively multimodal reasoning model with support for tool-use, visual chain of thought, and multi-agent orchestration. Muse Spark is available today at https