Your curated collection of saved posts and media

It’s happening https://t.co/sqOCmm0Voq

Link to all testimonials: https://t.co/a5qR3zRKKJ I am now motivated to revise the curriculum for a 3rd time. Thanks everyone for the positive reception

Just finished @HamelHusain and @sh_reya's AI Evals course. Some may think "look at your data" is just a meme, but it's actually the key to AI success. 🧵 https://t.co/urxhXpo5A2

Make one with 512gb of RAM, and I'd buy 64 of them. https://t.co/qP7mpaJENV

🔥 Beelink GTR9 Pro Is Here! Pre-Orders Open NOW! What's New in GTR9 Pro 🧠 Powered by Ryzen AI Max+ 395 Processor 🚀 140W Full Performance Release 🤖 Up to 126 TOPS AI Performance 🔇 32dB Ultra-Silent Operation 🔌 Dual 10Gbps LAN Ports & Dual USB4 Ports Silent and powerful — perfect

@ivenzor I heard chunks of it, eg love his performance of this song among others haha https://t.co/WcqaseGkqj

I learned that you can get 'Dolby 3D glasses' that filter out light of different wavelengths per eye. The mismatch on some natural colors makes things like flowers look 'shiny', like IRL quest markers. Cheap way to pretend you have more than 3 cone types :) Comparison pics: https://t.co/2iXXA3w9QG

As a bonus you get to walk around looking stylish, and can use them as filters in front of a phone camera to get some fun color effects. Not bad for a few bucks 😂 https://t.co/iV0nY8b161

Say hello to DINOv3 🦖🦖🦖 A major release that raises the bar of self-supervised vision foundation models. With stunning high-resolution dense features, it’s a game-changer for vision tasks! We scaled model size and training data, but here's what makes it special 👇 https://t.co/VBkRuAIOCi

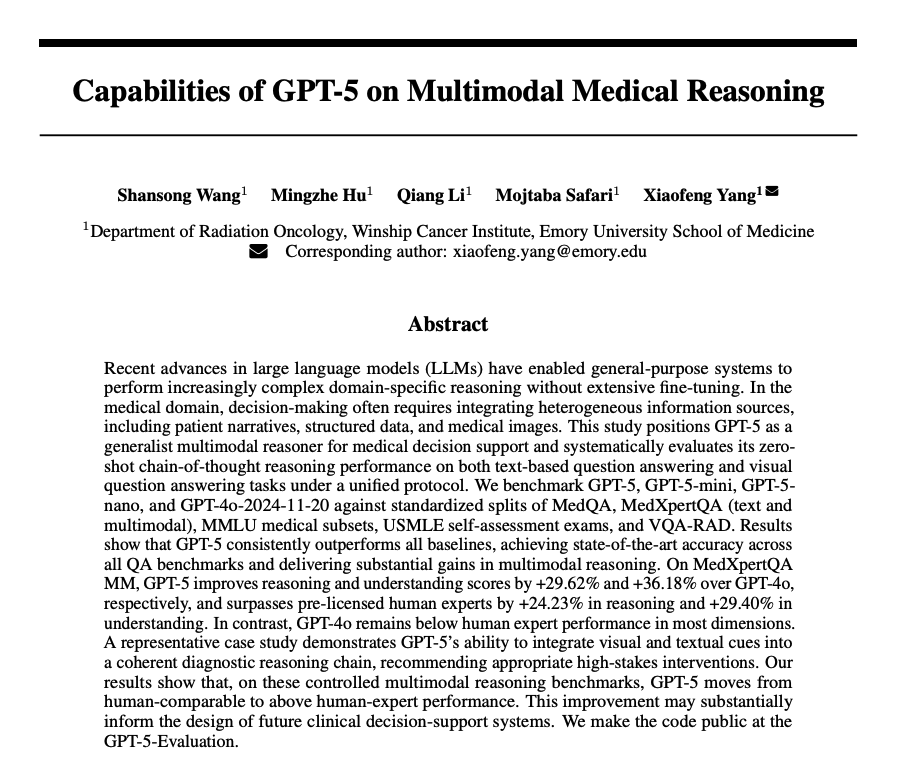

GPT-5 on Multimodal Medical Reasoning On MedXpertQA MM, GPT-5 improves reasoning and understanding scores by +29.62% and +36.18% over GPT-4o. It surpasses pre-licensed human experts by +24.23% in reasoning and +29.40% in understanding. https://t.co/MBeVYbTNNZ

🏆 We're thrilled to announce that Meta FAIR’s Brain & AI team won 1st place at the prestigious Algonauts 2025 brain modeling competition. Their 1B parameter model, TRIBE (Trimodal Brain Encoder), is the first deep neural network trained to predict brain responses to stimuli across multiple modalities, cortical areas, and individuals. The approach combines pretrained representations of several foundational models from Meta – text (Llama 3.2), audio (Wav2Vec2-BERT from Seamless) and video (V-JEPA 2) – to predict a very large amount (80 hours per subject) of spatio-temporal fMRI brain responses to movies acquired by the Courtois NeuroMod project. Download the code: https://t.co/Qwy6t8yvMj Read the paper: https://t.co/ln4DVlGXdp Learn about the challenge: https://t.co/yX5MEgPB8v Download the data: https://t.co/dkubim3Hj5

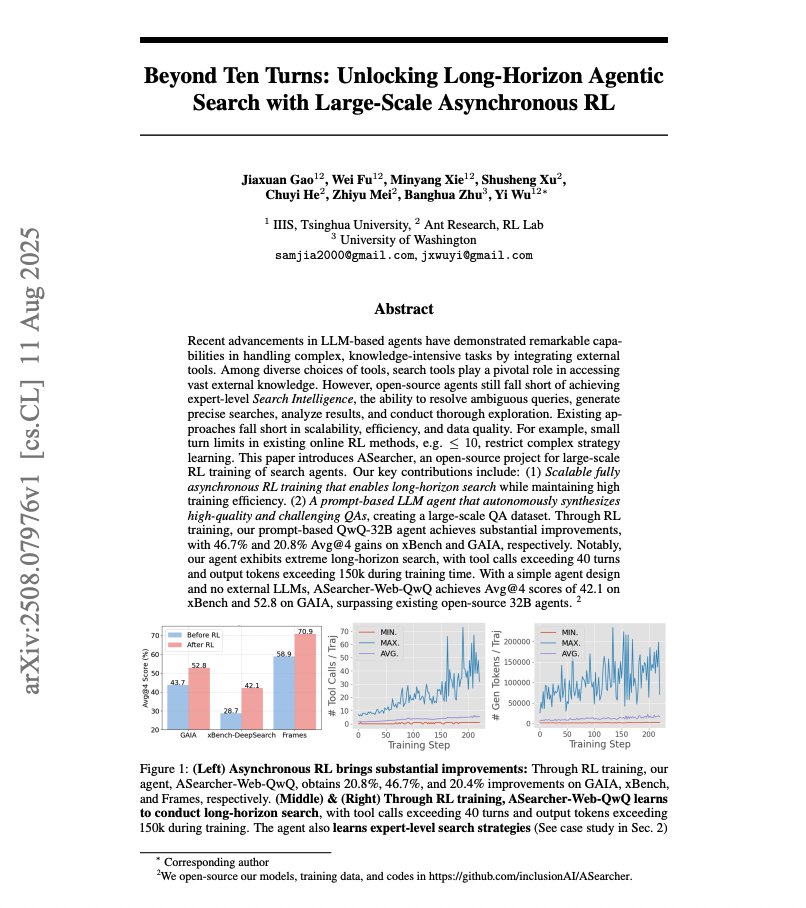

Unlocking Long-Horizon Agentic Search AI agents still struggle with long-horizon tasks. This paper sheds light on how to improve long-horizon agentic search with RL. Here are my notes: https://t.co/agEyG57fyS

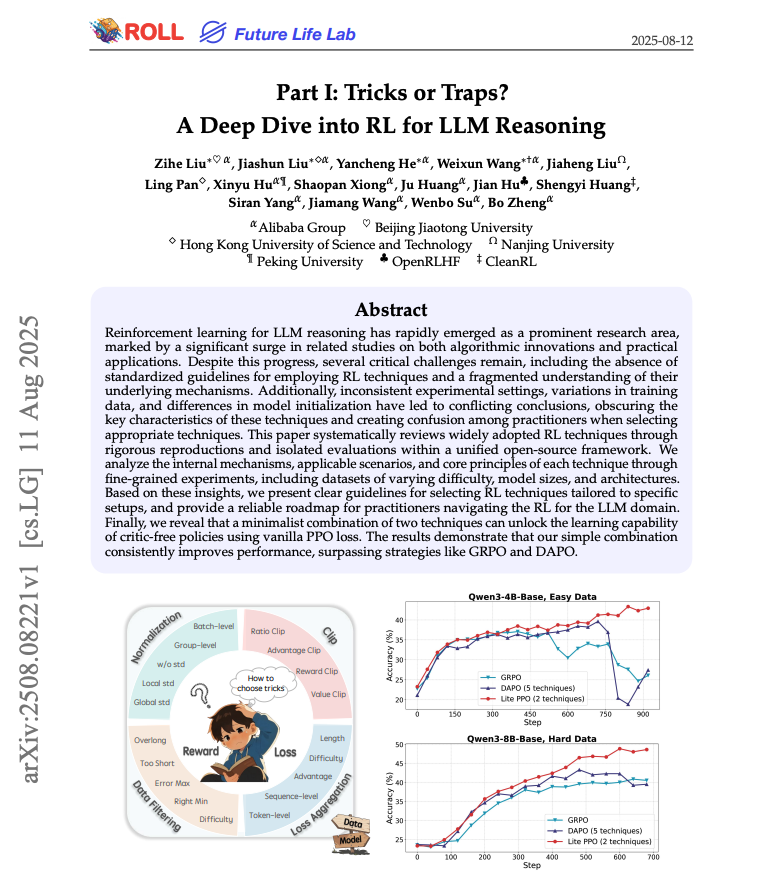

A Deep Dive into RL for LLM Reasoning Provides a roadmap for practitioners applying RL for LLM reasoning. Nice to have some of the latest techniques in one place. https://t.co/AtKHGSTq1A

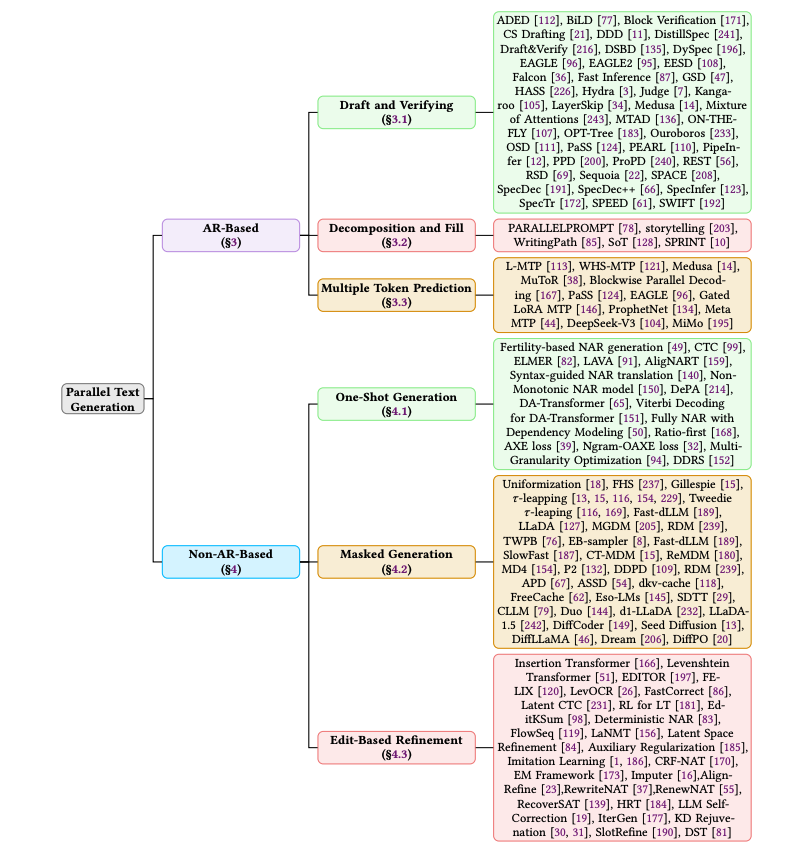

A Survey on Parallel Text Generation Explores parallel text generation techniques in LLMs. Categorizes them into autoregressive and non-autoregressive. It compares the methods, the speed–quality trade-offs, and outlines where the field is heading. Great read for AI devs! https://t.co/7hvAZ505q9

https://t.co/kZAmXj4lb9 https://t.co/eo2G9vmMiv

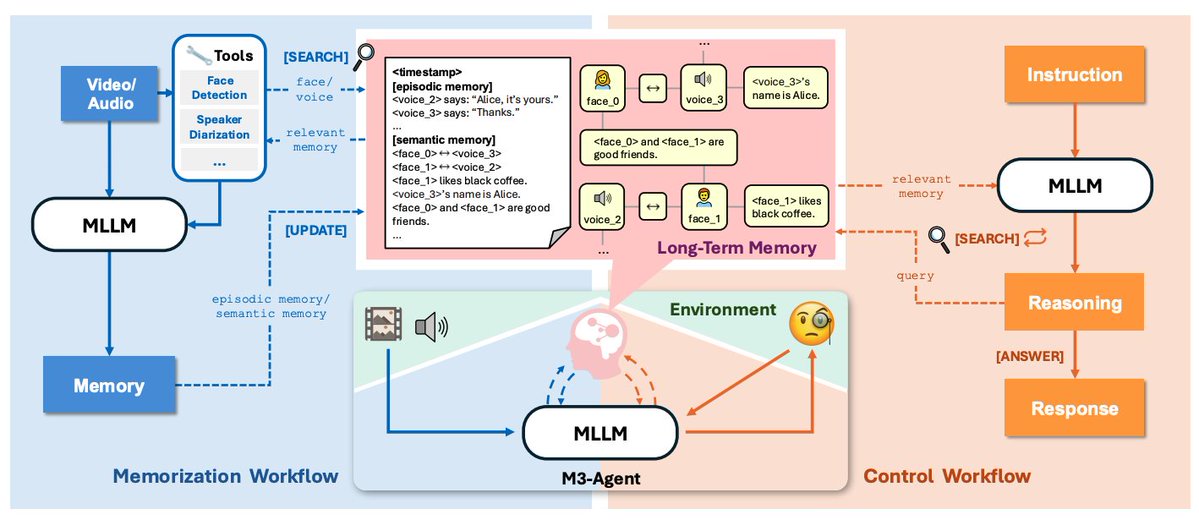

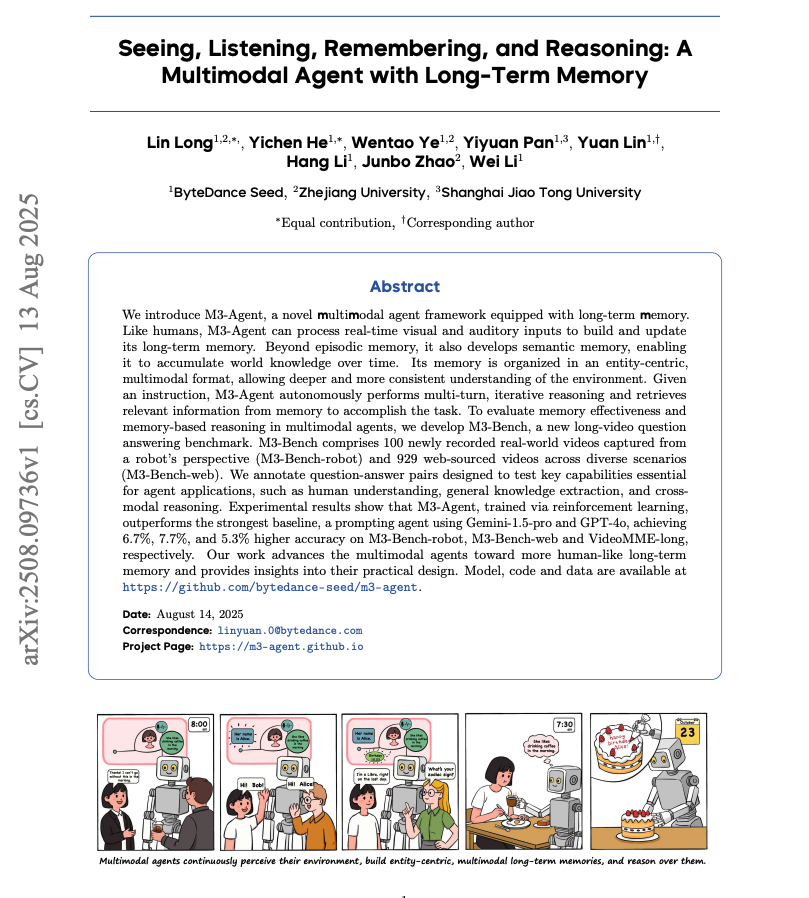

M3 Agent Introduces a framework for agents that watch and listen to long videos, build entity-centric memories, and use multi-turn reasoning to answer questions. https://t.co/nUQRzp91SN

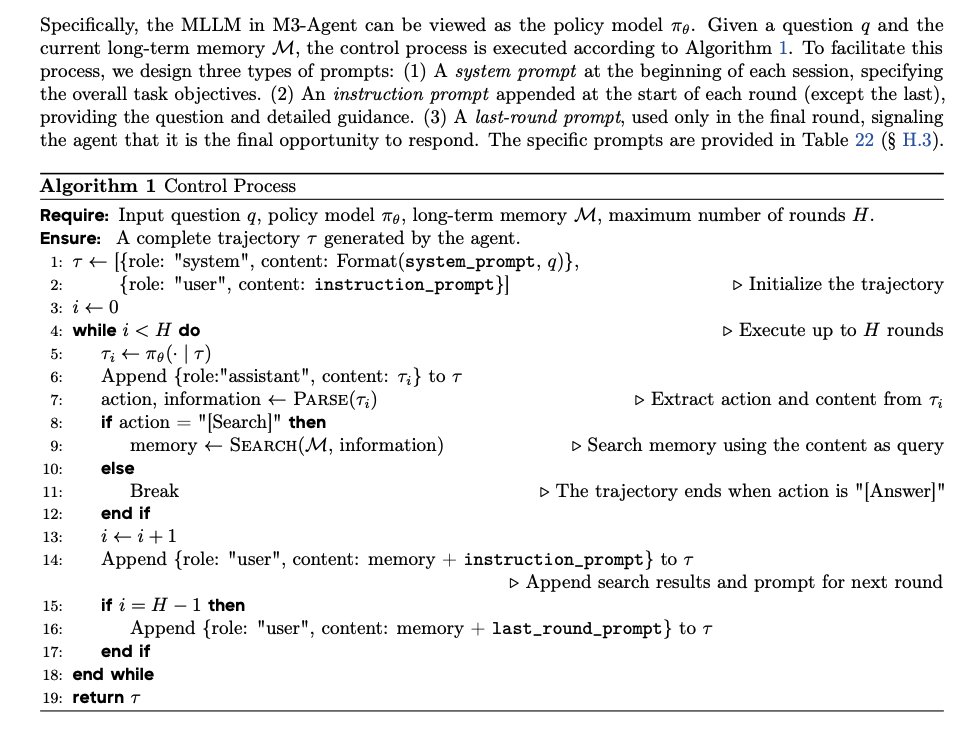

RL-trained control for retrieval and reasoning A policy model decides when to search memory and when to answer, performing iterative, multi-round queries over the memory store rather than single-shot RAG. https://t.co/IYnSrrzXso
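The search-or-answer loop described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not M3-Agent's code: `MemoryStore`, `toy_policy`, and `answer` are hypothetical names, the memory is a plain dict keyed by entity name, and the "policy" is a hand-written rule standing in for the RL-trained model.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Hypothetical toy memory: maps an entity name to facts about it."""
    facts: dict[str, list[str]] = field(default_factory=dict)

    def search(self, entity: str) -> list[str]:
        # Exact-key lookup; a real agent would use embedding or entity-ID retrieval.
        return self.facts.get(entity, [])


def toy_policy(question: str, context: list[str], searched: set[str],
               memory: MemoryStore) -> tuple[str, str]:
    """Stand-in for the RL-trained policy (a fixed rule, NOT a learned model).

    Returns ("search", entity) while a known, not-yet-searched entity appears
    in the question or in retrieved facts; otherwise ("answer", last fact).
    """
    text = " ".join([question] + context).lower()
    tokens = text.replace("?", " ").replace("'s", " ").replace(".", " ").split()
    for token in tokens:
        if token in memory.facts and token not in searched:
            return ("search", token)
    return ("answer", context[-1] if context else "unknown")


def answer(question: str, memory: MemoryStore, max_rounds: int = 5) -> tuple[str, int]:
    """Multi-round loop: at every step the policy chooses to search or to answer.

    Returns (answer, number of search rounds used).
    """
    context: list[str] = []
    searched: set[str] = set()
    for round_idx in range(max_rounds):
        action, arg = toy_policy(question, context, searched, memory)
        if action == "answer":
            return arg, round_idx
        searched.add(arg)
        context.extend(memory.search(arg))
    # Round budget exhausted: answer with whatever was retrieved last.
    return (context[-1] if context else "unknown"), max_rounds


if __name__ == "__main__":
    memory = MemoryStore({"alice": ["alice reports to bob"],
                          "bob": ["bob works at acme"]})
    print(answer("Where does Alice's manager work?", memory))
```

With these two toy facts, the question "Where does Alice's manager work?" takes two search rounds (first `alice`, then `bob`, which only shows up in the first retrieved fact) before the policy answers; a single-shot lookup on the question alone would only have retrieved "alice reports to bob".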

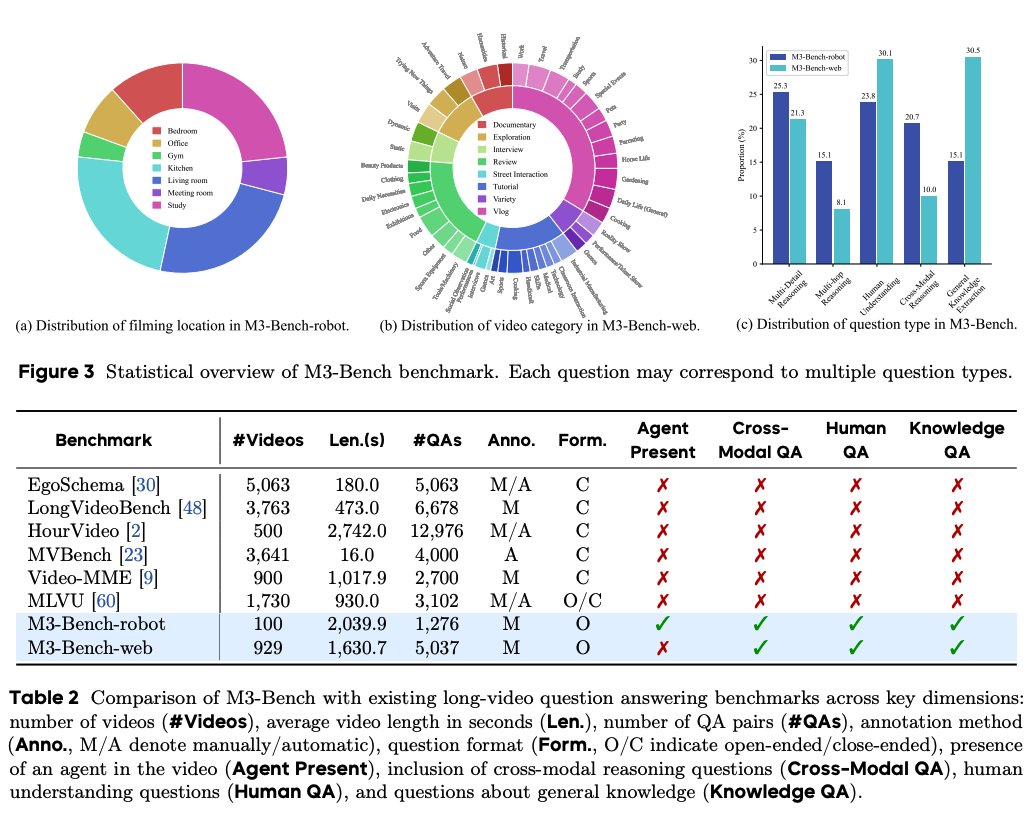

M3-Bench for long-video QA 100 robot-perspective videos and 929 web videos with 6,313 total QA pairs targeting multi-detail, multi-hop, cross-modal, human understanding, and general knowledge questions. https://t.co/xlLEYjb5Qi

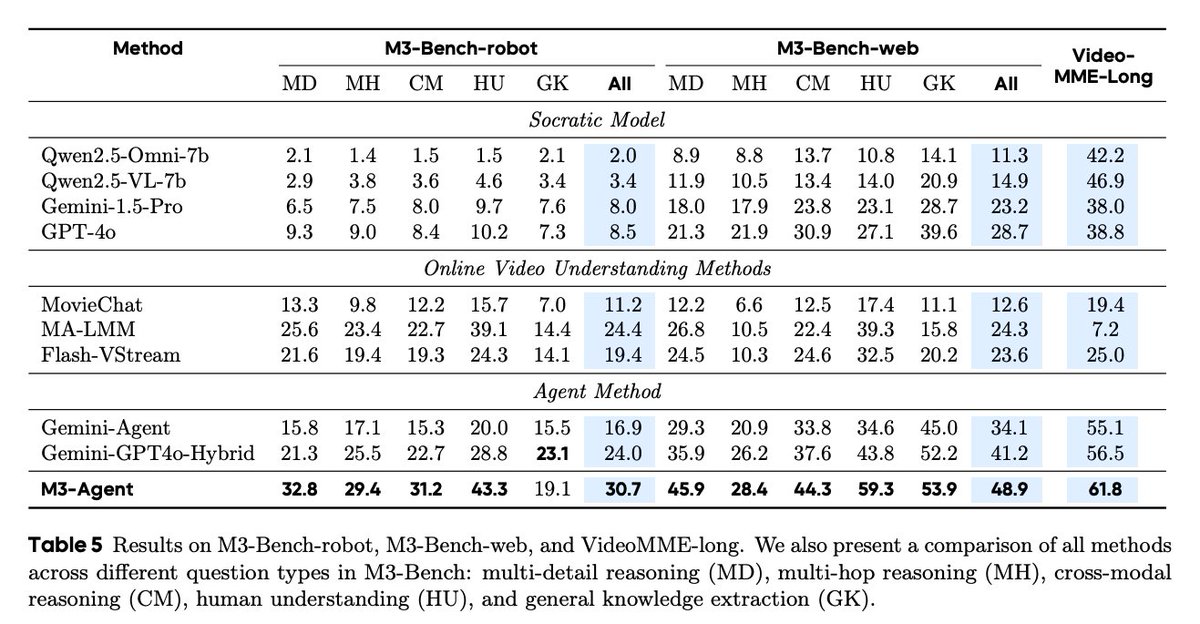

Performance M3-Agent beats a Gemini-GPT-4o hybrid and other baselines on M3-Bench-robot, M3-Bench-web, and VideoMME-long. Semantic memory and identity equivalence are crucial, and RL training plus inter-turn instructions and explicit reasoning materially improve accuracy. Paper: https://t.co/XZQlJgcv4w

M3-Agent: A Multimodal Agent with Long-Term Memory Impressive application of multimodal agents. Lots of great insights throughout the paper. Here are my notes with key insights: https://t.co/An4dmn6ky7

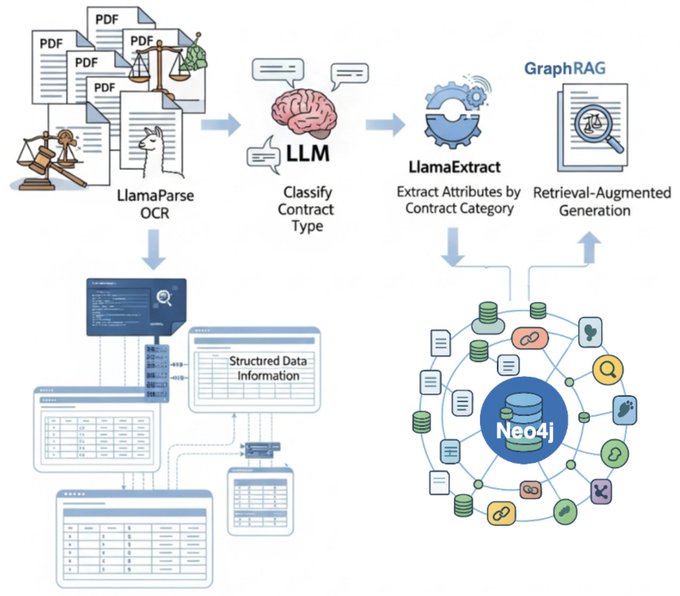

Build a knowledge graph over any legal document 🧑⚖️ This weekend, learn the full stack to ETL your legal contracts into an entity graph that captures intricate relationships between parties/locations/contract terms. The trick: have a full document workflow that can convert docs into structured data first! 1️⃣ Parse your documents into well-structured markdown tokens with LlamaParse 2️⃣ Classify contracts into different categories 3️⃣ Extract structured data using LlamaExtract, where the schema depends on the category. This is a fantastic collab with @neo4j (Tomaz). Shoutout @tuanacelik from our side! Notebook: https://t.co/x2fp7h1hVN LlamaCloud: https://t.co/rlDaAlE4SH

Transform unstructured legal documents into queryable knowledge graphs that understand not just content, but relationships between entities. This comprehensive tutorial shows you how to build a knowledge graph creation workflow using LlamaCloud and @neo4j for legal contract pro
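The three-step workflow above (parse, classify, then extract with a category-dependent schema) can be sketched in plain Python. This is not the LlamaParse, LlamaExtract, or Neo4j API: the input is assumed to already be parsed markdown, and `SCHEMAS`, `classify`, `extract`, and `to_triples` are hypothetical stand-ins, with a keyword classifier and a regex extractor where the tutorial uses LLM-backed services.

```python
import re

# Hypothetical schemas: which fields to extract depends on the contract category.
SCHEMAS: dict[str, list[str]] = {
    "lease": ["landlord", "tenant", "location"],
    "employment": ["employer", "employee", "location"],
}


def classify(markdown: str) -> str:
    """Toy keyword classifier (hypothetical stand-in for step 2)."""
    text = markdown.lower()
    if "landlord" in text or "tenant" in text:
        return "lease"
    if "employer" in text or "employee" in text:
        return "employment"
    return "other"


def extract(markdown: str, category: str) -> dict[str, str]:
    """Toy extractor (hypothetical stand-in for step 3): reads 'Field: value'
    lines, keeping only the fields named in the category's schema."""
    record: dict[str, str] = {}
    for name in SCHEMAS.get(category, []):
        match = re.search(rf"^{name}:\s*(.+)$", markdown, re.IGNORECASE | re.MULTILINE)
        if match:
            record[name] = match.group(1).strip()
    return record


def to_triples(doc_id: str, category: str,
               record: dict[str, str]) -> list[tuple[str, str, str]]:
    """Turn one extracted record into (subject, relation, object) graph edges."""
    triples = [(doc_id, "IS_A", category)]
    for name, value in record.items():
        triples.append((doc_id, f"HAS_{name.upper()}", value))
    return triples


if __name__ == "__main__":
    doc = "# Lease Agreement\nLandlord: Acme Corp\nTenant: Jane Doe\nLocation: Berlin\n"
    category = classify(doc)
    for triple in to_triples("contract-1", category, extract(doc, category)):
        print(triple)
```

The point of classifying first is that the extraction schema can then be narrow and category-specific (a lease has a landlord and tenant, an employment contract an employer and employee). The resulting triples are the kind of rows one would then load into a graph database as nodes and relationships; that loading step is omitted here.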

@tensorbert Thanks! Sorry for the project spoiler but I already have a resource on that here 😅: https://t.co/fEx2e8E7jS https://t.co/yfl5D92rFT

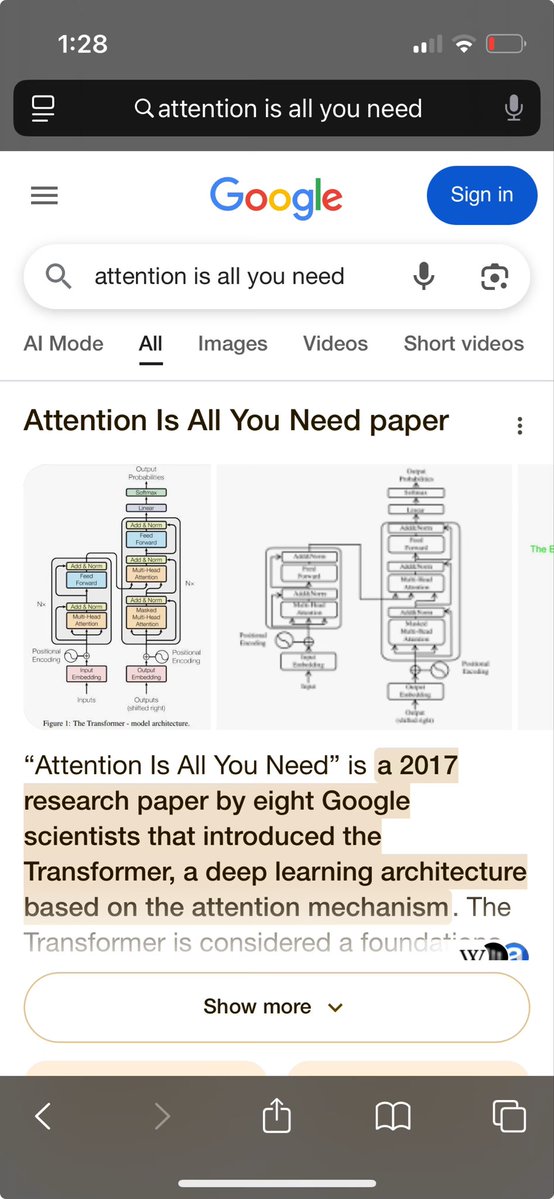

@deepfates Common convention in the deep learning and AI community. But only for architecture figure, not other flowcharts. https://t.co/Yy2f6E13Bi

@Mevrael I do! https://t.co/26NfddeCBl

@brickywhat @BrandonWaselnuk @pk_iv https://t.co/BjwaLPlfge

@Madisonkanna @CatOrman1 Or should I do a white shirt. https://t.co/HjqP6LrgcY

Danny from Every is coming on to talk about how to use Spiral to turn your call transcripts into content! Come check it out https://t.co/mckBVs3k0e

Me and the boys at the gender ratio party https://t.co/QGzR8TDzZU

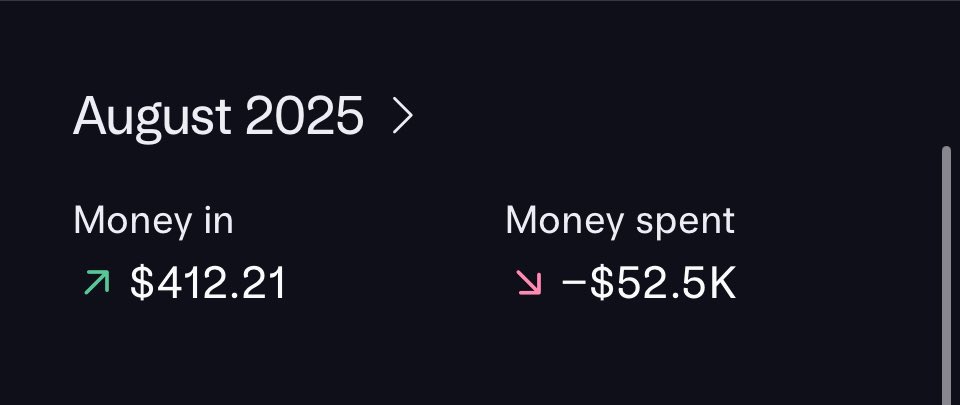

Feeling VC backed. Might delete later. https://t.co/xwOBonAzZL

https://t.co/M0K0ifHP0K

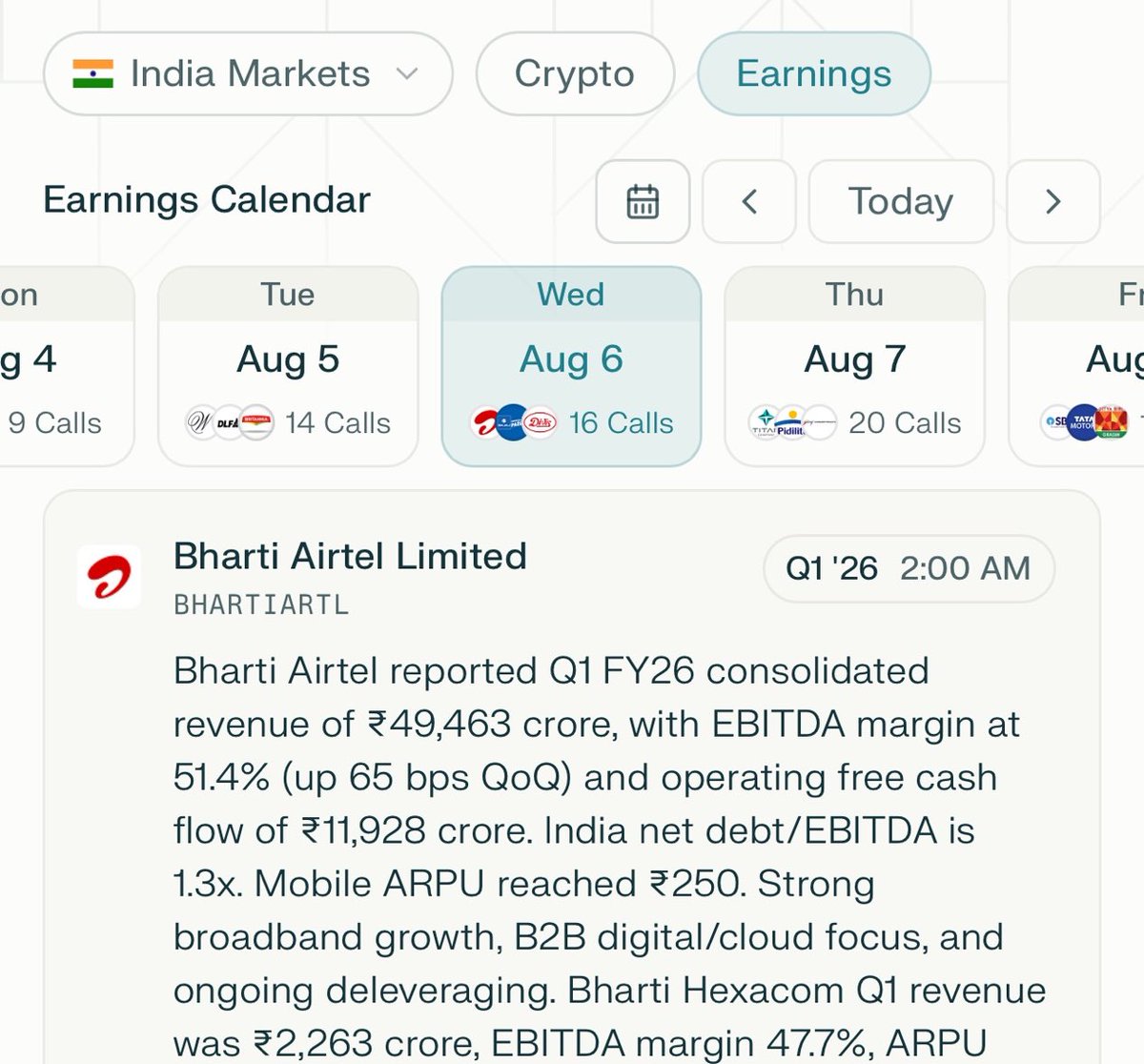

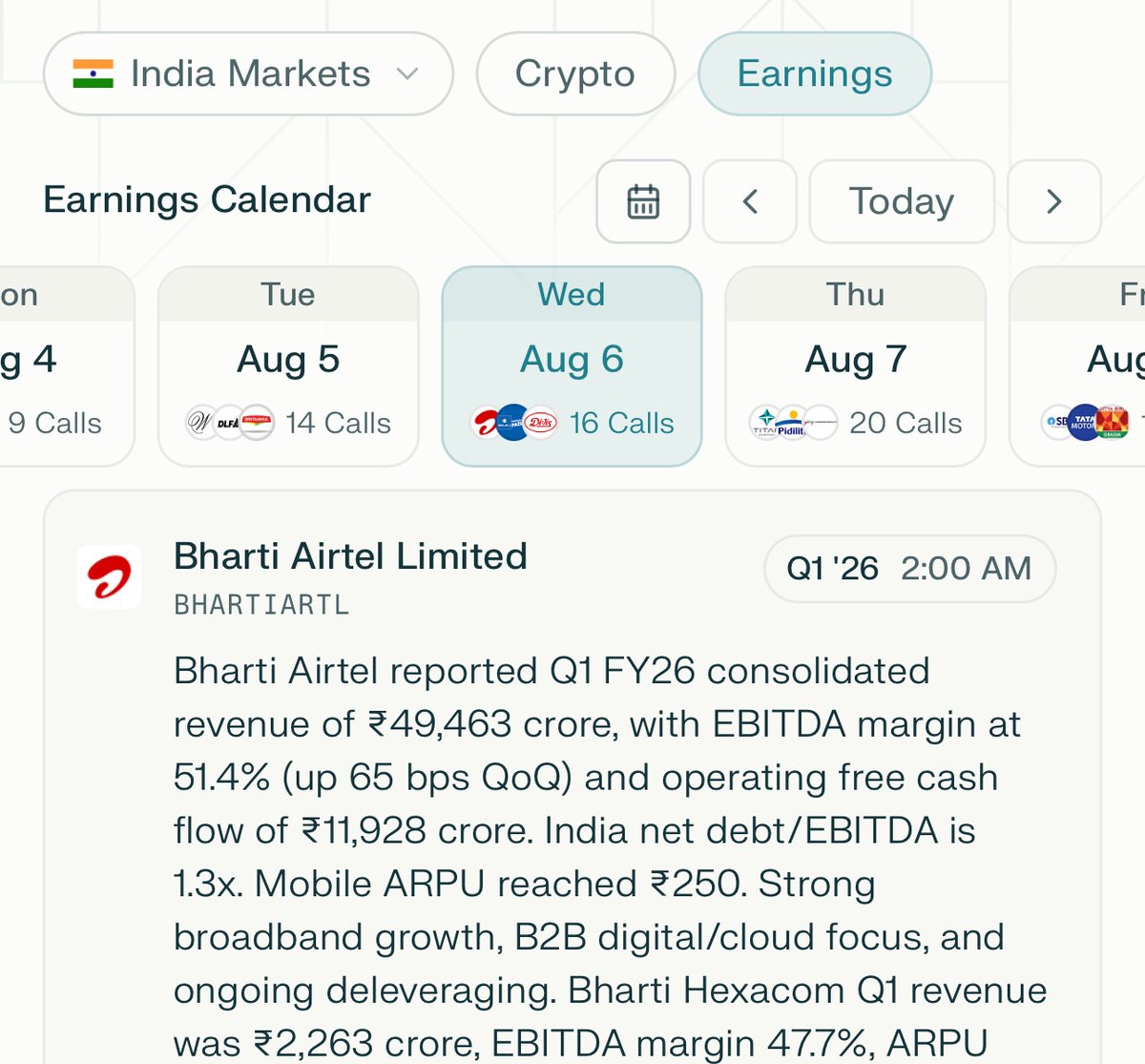

this week’s @PPLXfinance feature: live earnings call schedule + realtime transcription/analysis for Indian markets. Engineering and quality control on this one was non-trivial. Lots of data to capture and present accurately. https://t.co/A79uzkR6y4

Perplexity’s Finance dashboard now supports live earnings call transcriptions and features earnings call schedules for Indian stocks. We hope to add a lot more value to Indian equity markets research in the coming days! Enjoy! 📈 🇮🇳 https://t.co/5IM1rAW6QC