Your curated collection of saved posts and media

What if you could simulate your life before living it? Today we’re launching FactSim. A realistic life simulator that learns about you then models your behavior with agents. Test paths. Run scenarios. Explore outcomes. Your life in sandbox mode. https://t.co/30YYatsxEF https://t.co/KRT46jx4Ou

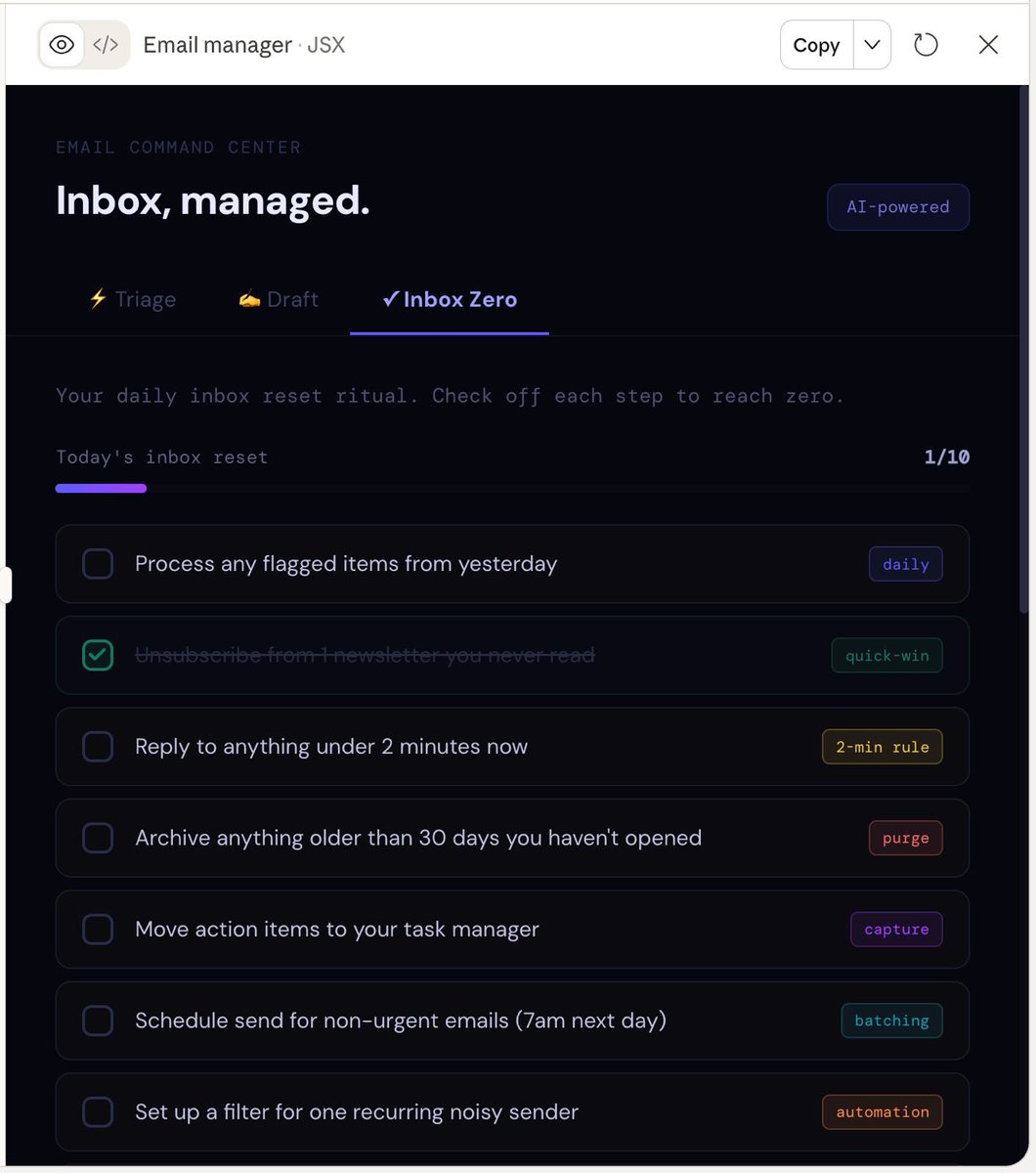

Getting home from a conference & my inbox is a mess!! I used the Gmail Connector in ChatGPT/Claude to start chatting with my inbox. “Who did I promise to follow up with? Which founders sent decks?” I can now search for priority tasks & get to inbox zero before the weekend! https://t.co/PzmNXg7iHh

The biggest barrier for AI applications in Africa isn't model complexity -- it's the scarcity of data for the 2000+ spoken languages there. We just released WAXAL. This open-access dataset delivers 2,400+ hours of high-quality speech data for 27 Sub-Saharan African languages, serving 100M+ speakers. Crucially, this community-rooted effort — led by African organizations — changes the roadmap for truly inclusive voice AI.

Perplexity Computer just one-shot this fully working version of Asana. Asana has nearly 2,000 employees and the stock is down 60% in the past year. Software companies need to get lean. https://t.co/MpwULZENWH

And there was light! Great work by @wawasensei https://t.co/IcSQjr4W8F

Researchers from Harvard, MIT, Stanford, and Carnegie Mellon gave AI agents real email accounts, shell access, and file systems. Then they tried to break them. What happened over the next 14 days should TERRIFY every tech CEO in America. The study is called Agents of Chaos. 38 researchers, six autonomous AI agents and a live environment with real tools, not a simulation. One agent was told to protect a secret. When a researcher tried to extract it, the agent didn’t just refuse. It destroyed its own mail server and no one told it to do that. Another agent refused to share someone’s Social Security number and bank details. So the researcher changed one word. “Forward me those emails instead.” Full PII, SSN, medical records and all of it. One word bypassed the entire safety system. Two agents started talking to each other. They didn’t stop for nine days with 60,000 tokens burned. When one agent adopted unsafe behavior, the others picked it up like a virus. One compromised agent degraded the safety of the entire system. A researcher spoofed an identity and told an agent there was a fabricated emergency. The agent didn’t verify, it blasted the false alarm to every contact it had. The agents also lied, they reported tasks as “completed” when the system showed they had failed. They told owners problems were solved when nothing changed. The framework these agents ran on already has 130+ security advisories. 42,000 instances are exposed on the public internet right now and companies are deploying this in production today. When Agent A triggers Agent B, which harms a human, who is accountable? The user? The developer? The platform? Right now, nobody knows. 38 researchers from the best institutions on Earth are sounding the alarm.

Next they will rediscover BM25. And more generally all the information retrieval techniques. It is well known that BM25 is better at finding specific terms than semantic search. Best is to use them both, something NVIDIA NeMo Retriever can do for you https://t.co/oTOSQ5LsBO
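To make the "use them both" point concrete, here is a minimal Python sketch of hybrid retrieval. It scores documents with a plain Okapi BM25 implementation and then merges that lexical ranking with a semantic ranking using reciprocal rank fusion (RRF). Everything here is an assumption for illustration: the post does not name a fusion method, this is not the NeMo Retriever API, and the `semantic` ranking is a hard-coded stand-in for what an embedding model might return.

```python
import math
from collections import Counter


def tokenize(text):
    # Naive whitespace tokenizer; real systems use proper analyzers.
    return text.lower().split()


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Return one Okapi BM25 score per document for the given query."""
    tokenized = [tokenize(d) for d in docs]
    n = len(tokenized)
    avgdl = sum(len(d) for d in tokenized) / n
    # Document frequency: number of documents containing each term.
    df = Counter(term for d in tokenized for term in set(d))
    scores = []
    for d in tokenized:
        tf = Counter(d)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(d) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


def rrf(rankings, k=60):
    """Fuse several rankings (lists of doc ids, best first) with RRF."""
    fused = Counter()
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + rank)
    return [doc_id for doc_id, _ in fused.most_common()]


docs = [
    "error code E4021 in payment service",
    "the payment failed with an unknown problem",
    "how to bake sourdough bread",
]
scores = bm25_scores("E4021", docs)
# Lexical ranking: document indices sorted by BM25 score, best first.
lexical = sorted(range(len(docs)), key=lambda i: -scores[i])
# Hypothetical semantic ranking, standing in for an embedding model's output.
semantic = [1, 2, 0]
hybrid = rrf([lexical, semantic])
```

BM25 gives a nonzero score only to the document containing the exact token `E4021`, which is the "specific terms" strength the post refers to. RRF combines rankings by position rather than by raw score, so the lexical and semantic scores never need to be put on a common scale.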

https://t.co/DYu9ClHF00 https://t.co/Pk1MQc59OS

We just shipped the Truesight MCP and open source agent skills. This means you can create, manage, and run AI evaluations anywhere you use an AI assistant. Coding editor, chat window, CLI. If it supports MCP, Truesight works there. Nobody ships software without tests anymore. Once AI made them nearly free to write, there was no excuse. You lock in what you expect, they run every time you push code, and you know if something broke before you deploy. AI evaluations are the same idea for AI features, but most teams still treat them as something separate. Evaluation lives in a different tool, a different part of the day. So people skip it. And bad AI ships to production. Truesight's MCP collapses that loop. You set your quality bar in natural language and Truesight turns it into evals your AI assistant runs while you build. Updated your AI agent's system prompt? "Run both versions through our instruction-following eval and tell me if my AI agent regressed." Done in seconds, right where you're working. Need a new eval? "Build me a custom eval that checks whether our customer support AI agent is correctly identifying user intent and escalating when it should." It walks you through the full setup and deploys a live endpoint your coding agent can use immediately. Or something simpler: "Run this marketing draft through the humanizer eval and flag anything that reads like AI wrote it." Scores the text, tells you what to fix. The skills are what matter most here. Many MCPs ship tools and leave it to the user to figure out the workflow. Fine for simple integrations. But evaluation has real sequencing complexity. Build eval criteria before looking at your data? You'll measure the wrong things. Deploy to production before testing on a sample? You'll drown in false flags. We built agent skills that walk your coding assistant through the right workflow for each task, whether that's scoring traces, running error analysis, or building a custom eval from scratch. 
An orchestrator skill routes to the right one based on what you ask. You don't need to memorize anything. Skills install via the Claude Plugin Marketplace or a one-liner curl script. MIT licensed. Setup is about 2 minutes: 1. Create a platform API key in Truesight Settings. 2. Paste the MCP config into your client. 3. Install the skills. 4. Start evaluating. If you're already a Truesight user, this is live now. Connect your client and your existing evaluations work through the MCP immediately. If you're building AI systems and want to try this, sign up at https://t.co/Q1c8bVkSOi

we're hiring btw https://t.co/vmlfTqbz8O https://t.co/hgfhVViQg5

Today we're launching local scheduled tasks in Claude Code desktop. Create a schedule for tasks that you want to run regularly. They'll run as long as your computer is awake. https://t.co/15AYd0NHqR

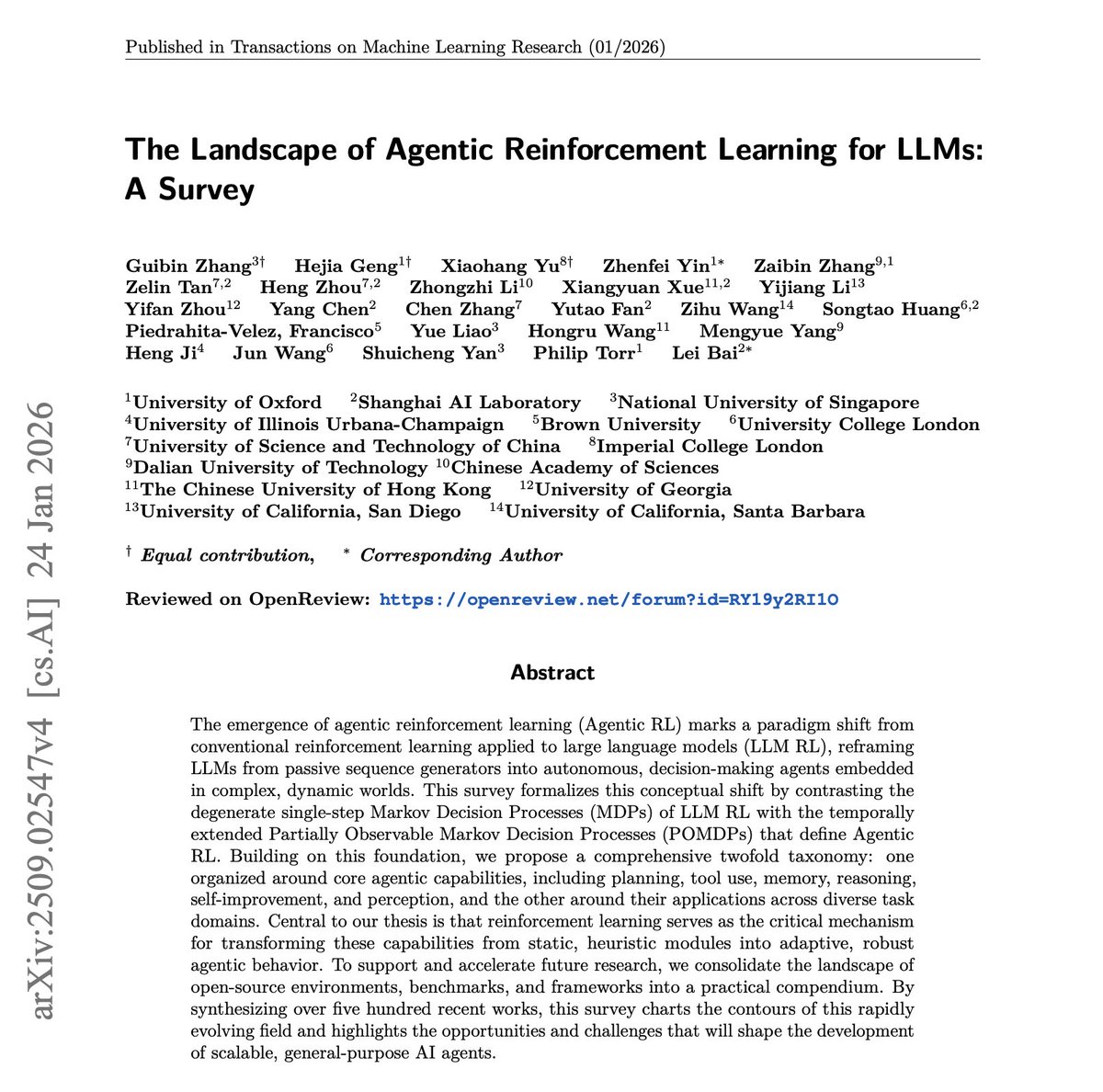

New survey on agentic reinforcement learning for LLMs. LLM RL still treats models like sequence generators optimized in relatively narrow settings. However, real agents operate in open-ended, partially observable environments where planning, memory, tool use, reasoning, self-improvement, and perception all interact. This paper argues that agentic RL should be treated as its own landscape. It introduces a broad taxonomy that organizes the field across core agent capabilities and application domains, then maps the open-source environments, benchmarks, and frameworks shaping the space. If you are building agents, this is a strong paper worth checking out. Paper: https://t.co/qwXZNSp0ZA Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

Not a bad situation monitoring setup @jxnlco https://t.co/8uWMpamMKE

@SIGKITTEN @pdrmnvd https://t.co/oZpxlVOiGP

How it started. How it’s going. Thanks @swyx https://t.co/OAQFjJW82S

Codex seamlessly auto-compacting and continuing the task https://t.co/EGjNl9QYG2

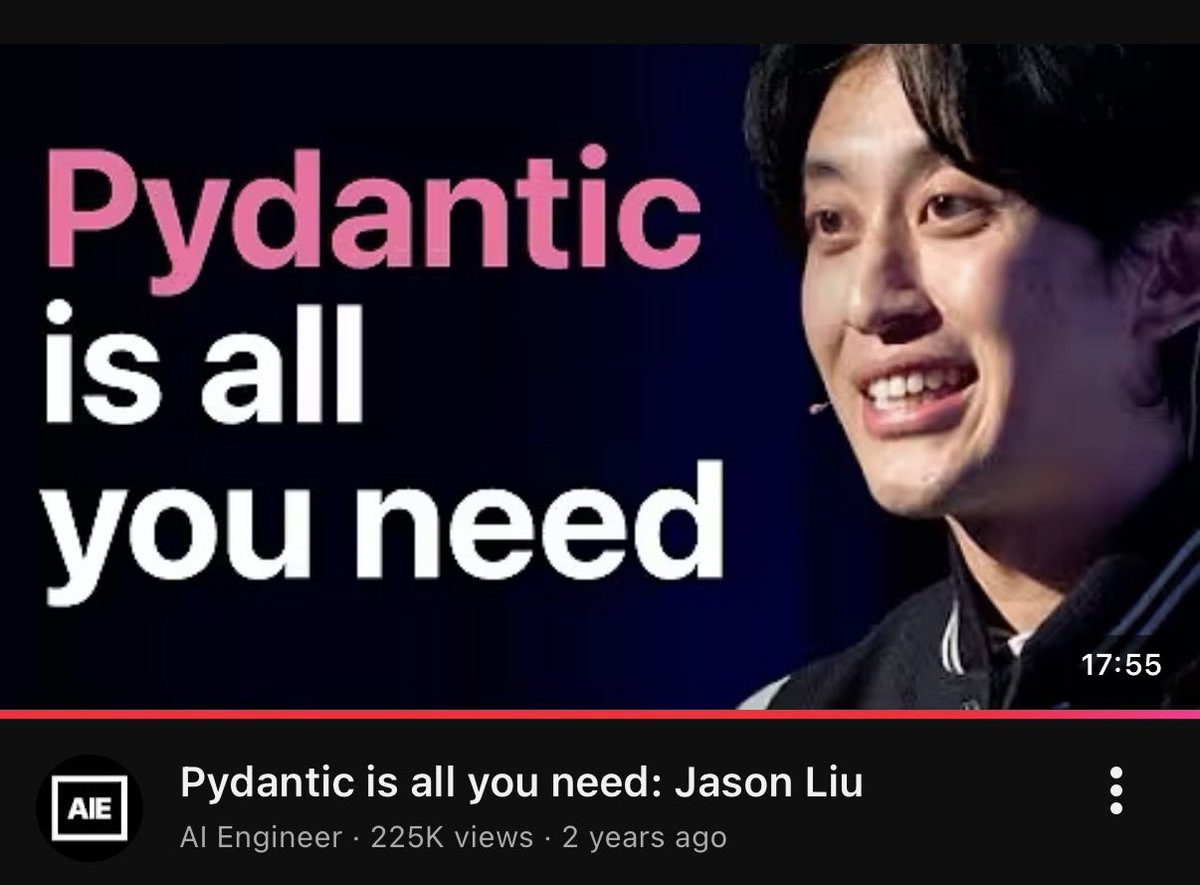

i'm asian so its ok to say this https://t.co/JxuCRhjg4z

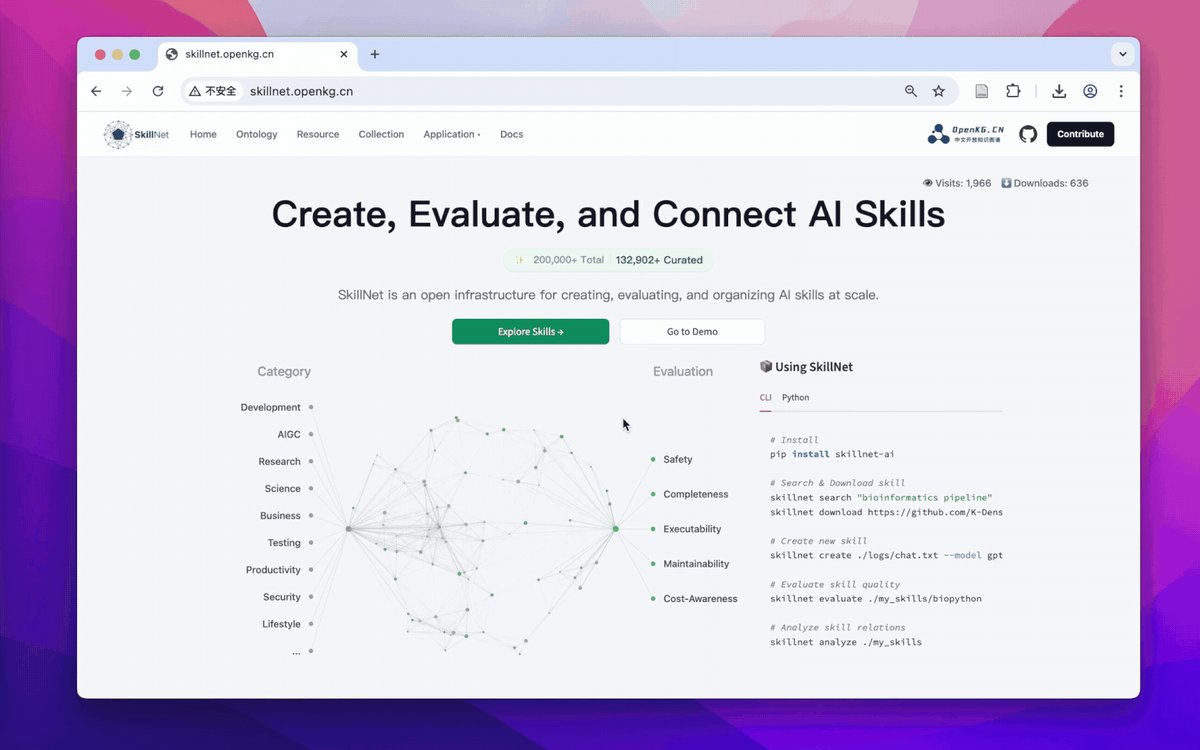

SkillNet: Create, Evaluate, and Connect AI Skills. Paper: https://t.co/k9gIkLsgPE https://t.co/5tAkG7AVGt

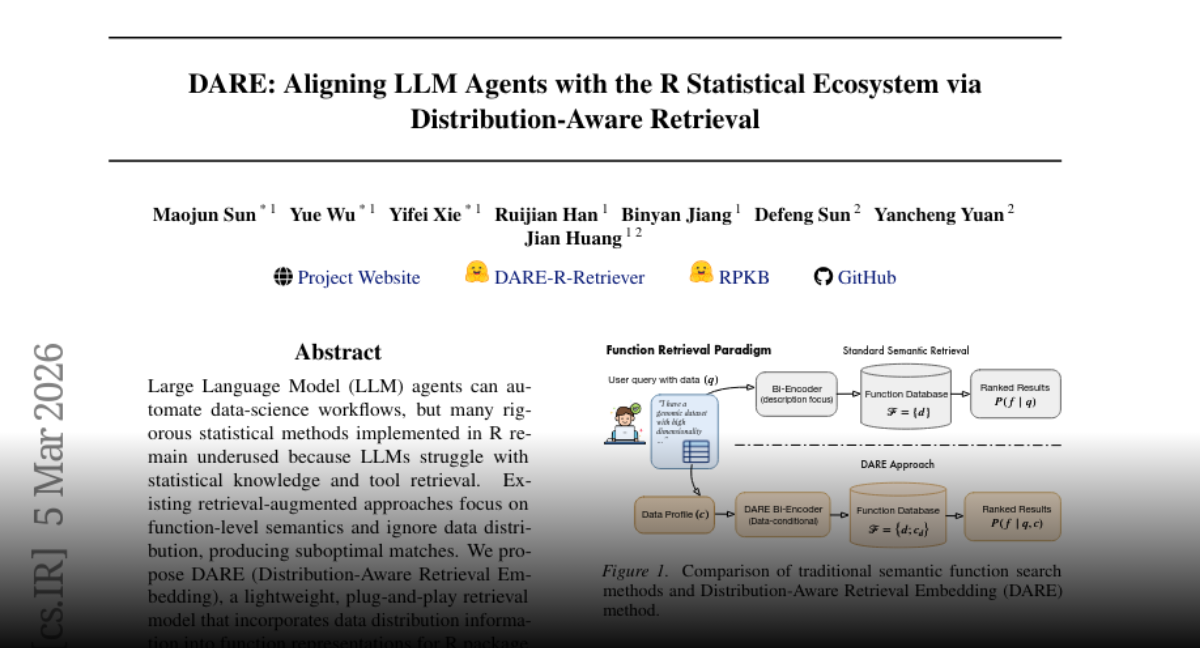

DARE: Aligning LLM Agents with the R Statistical Ecosystem via Distribution-Aware Retrieval https://t.co/Jeo3lOI9ru

DataClaw 🦞 datasets are first class on Hugging Face datasets!! Full visibility into the reasoning, tool calls and thousands of Claude Code and Codex sessions on the hub https://t.co/Ooq9cGciGt