Your curated collection of saved posts and media

AI and the Future of Bonding: Exploring the challenges of attaining deep human connection in a digital age. https://t.co/9QaOCtlRNF @PsychToday

Opinion | The AI cheating panic is missing the point https://t.co/xdJw6NpyuW @washingtonpost

I learned to code after seeing Sam Altman speak. Now I'm pausing my music career to go all in on AI before it's too late. https://t.co/viUOlfztcg @mrjoshz @businessinsider

MIT researchers develop AI tool to improve flu vaccine strain selection https://t.co/7Ud8cpxhXp @mit @MIT_CSAIL

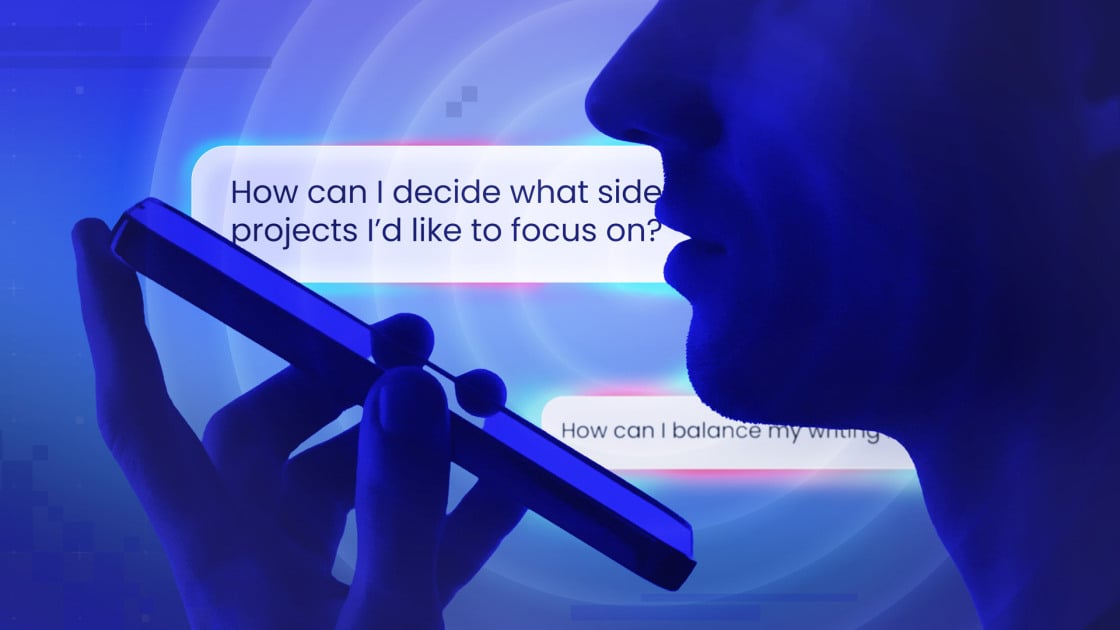

No More Typing: I've Been Talking to 5 AI Chatbots. This One Really Stood Out https://t.co/Eg9cCQ8aEQ @lancewhit @pcmag

🚨BREAKING: President Trump's White House just announced they are NOT letting in an *additional* 600,000 Chinese students. https://t.co/Qt9akgKC6D

🚨 BREAKING: White House says President Trump WILL NOT let in 600,000 more Chinese students, and that it’s simply a continuation of current policy — no increase in student visas from China. https://t.co/uVoAexqau0

Holy smokes. The economy roared in Q2 with 3.3% growth. The best quarter since September 2023, destroying expectations. The Trump Effect. https://t.co/IOQcCSTGdE

A friend called Burning Man cosplaying refugee camp and I can’t unsee it. https://t.co/6KIO1iHme0

Big news! Shopify has acquired https://t.co/ZeDYX1vHqm, to launch Shopify Product Design Studio. Their mission is simple: work together with our teams to deliver 10/10 product experiences. Thread (1/6)

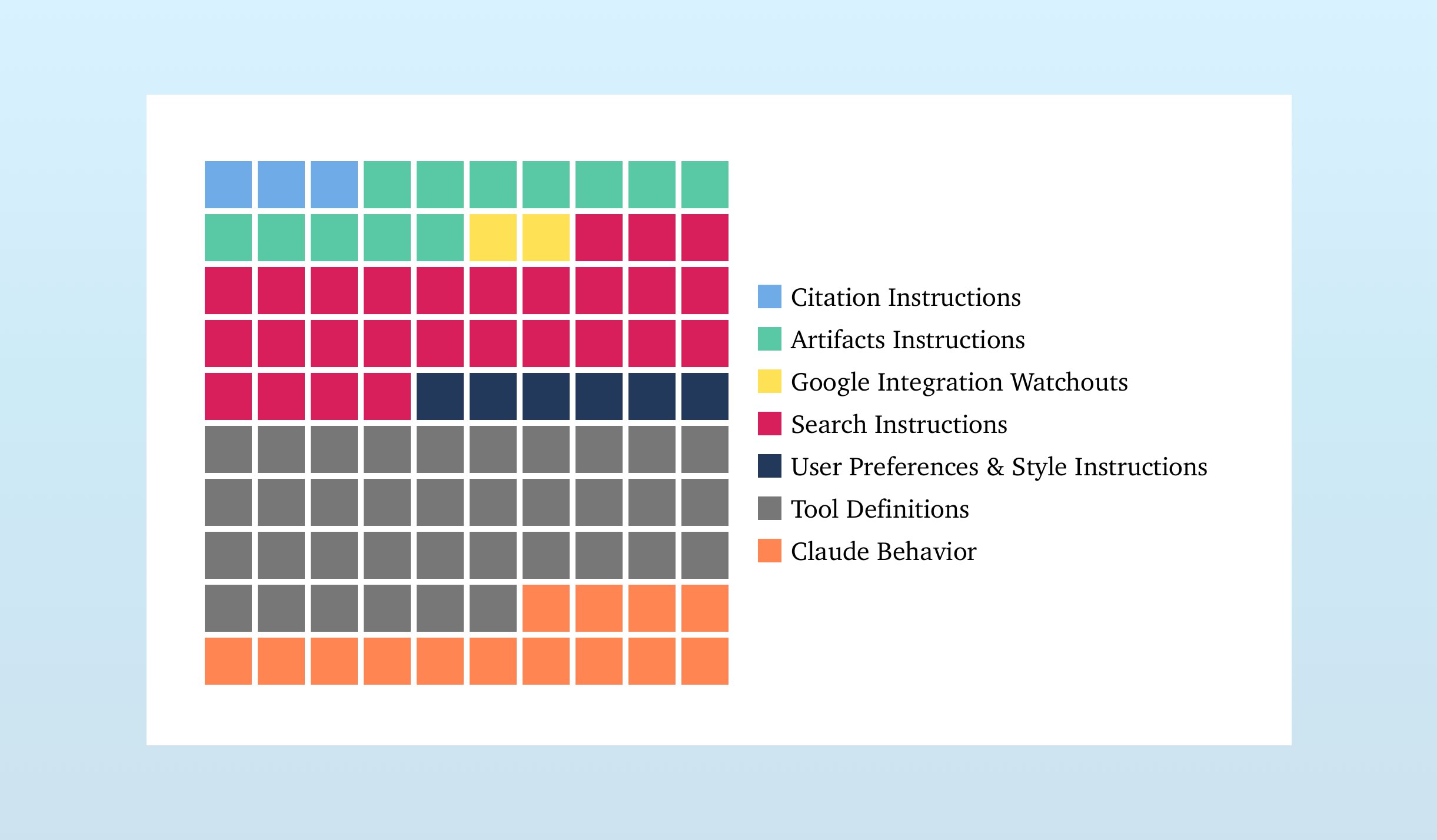

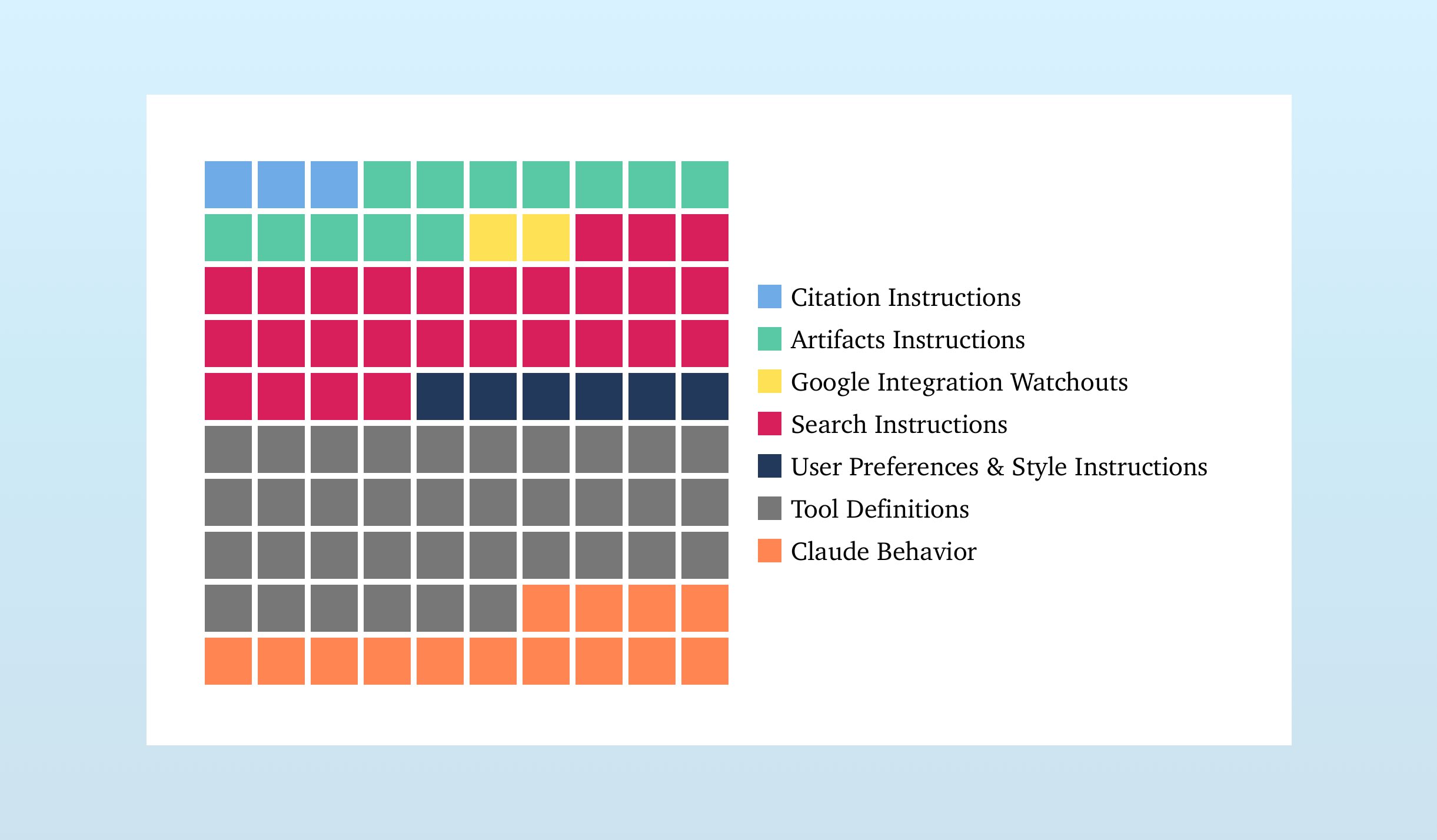

more context around the claude prompt https://t.co/arycVAPLiB

A spiny orb-weaver spider carefully building her web. Spiny orb-weavers create intricate webs up to 60cm in diameter with as many as 30 loops in the outer spiral. 📽: Rachel Barry https://t.co/B3oXWr5pkX

On the Theoretical Limitations of Embedding-Based Retrieval: @orionweller et al. at Google DeepMind demonstrate that vector embeddings have fundamental limitations in representing all possible document combinations. 📝 https://t.co/jpV9MpegUY 👨🏽‍💻 https://t.co/aTA8Aiz4UH

@matdmiller I blame you for this you know https://t.co/V6PWMvNrr2

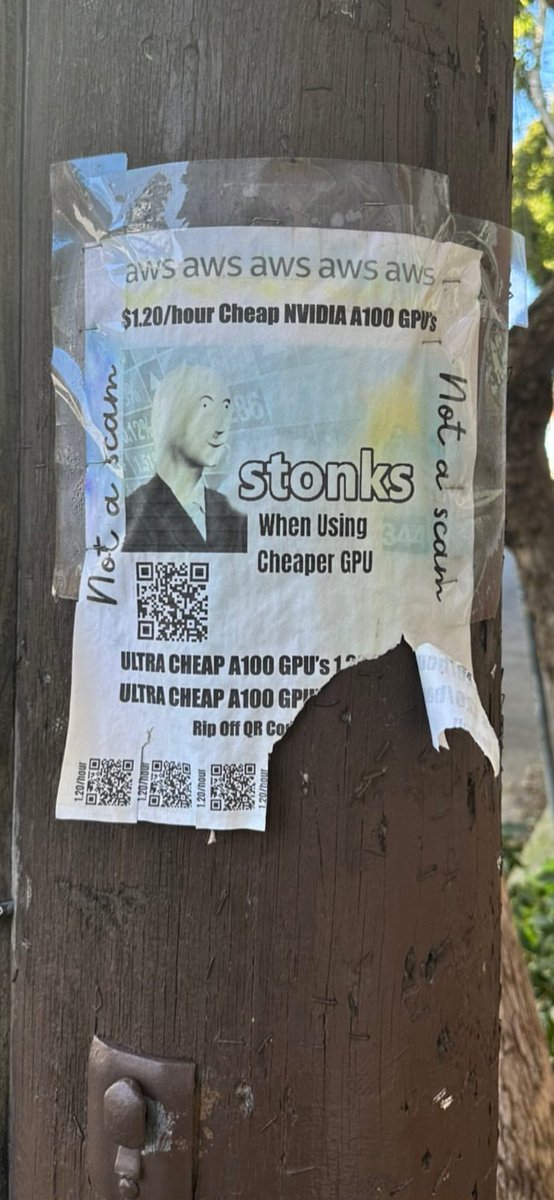

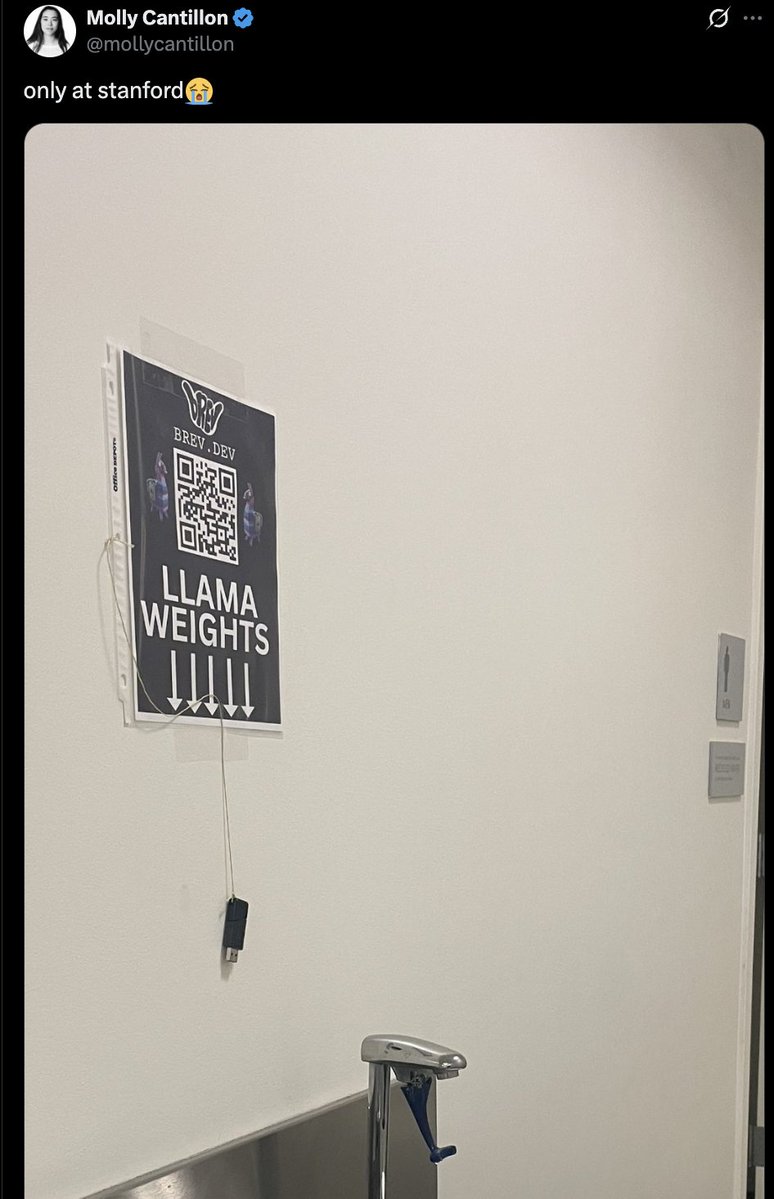

Advice doesn't work twice. Everything we did to level up Brev, we were advised against.

Making the logo a shaka: Investors told me no enterprise would pay for our product, but we were acquired by NVIDIA. The shaka differentiated us in a sea of infra products using space imagery and aggressive straight lines.

I put llama weights on USB drives, since the first llama model didn't release the weights. "No one will plug that into their computer." That wasn't the point. It stood out, and @mollycantillon tweeted it, and the tweet 4xed daily user growth.

Paper flyers are scammy. So I leaned in and made it so scammy it couldn't possibly be a real scam. Put the last flyer up at 12am. Sold $3m of reservations by morning.

I don't think this strategy would work again. Thumb drives, paper flyers, etc. won't be disruptive like they were in 2023.

My takeaway:
1) Good advice gets good outcomes, but you need unobvious ideas for great outcomes. Remember, you're shooting for a tail-end outcome.
2) Everyone who gives advice is blind to the roads they didn't take that would also work.

Follow your intuition; it'll either work or further calibrate your intuition.

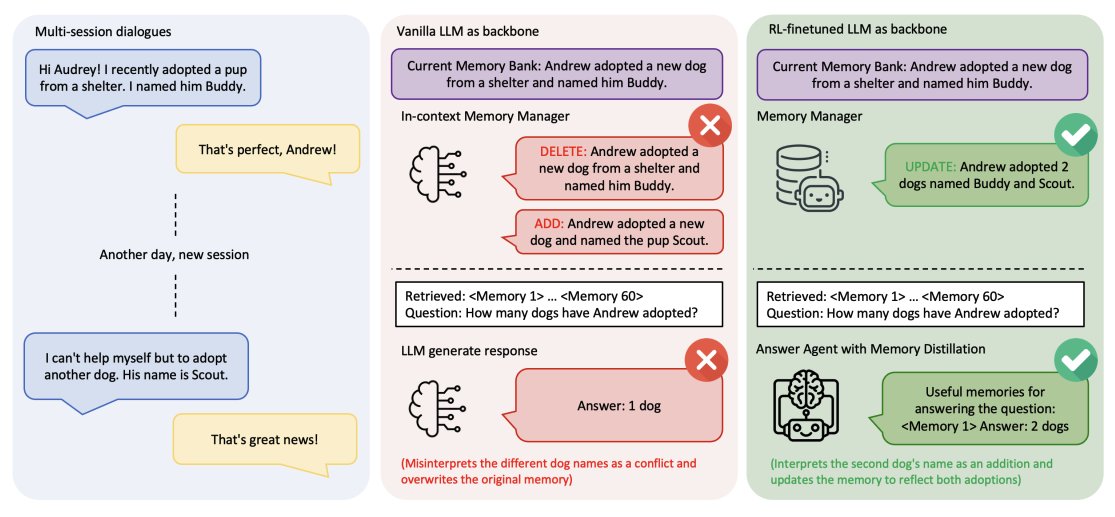

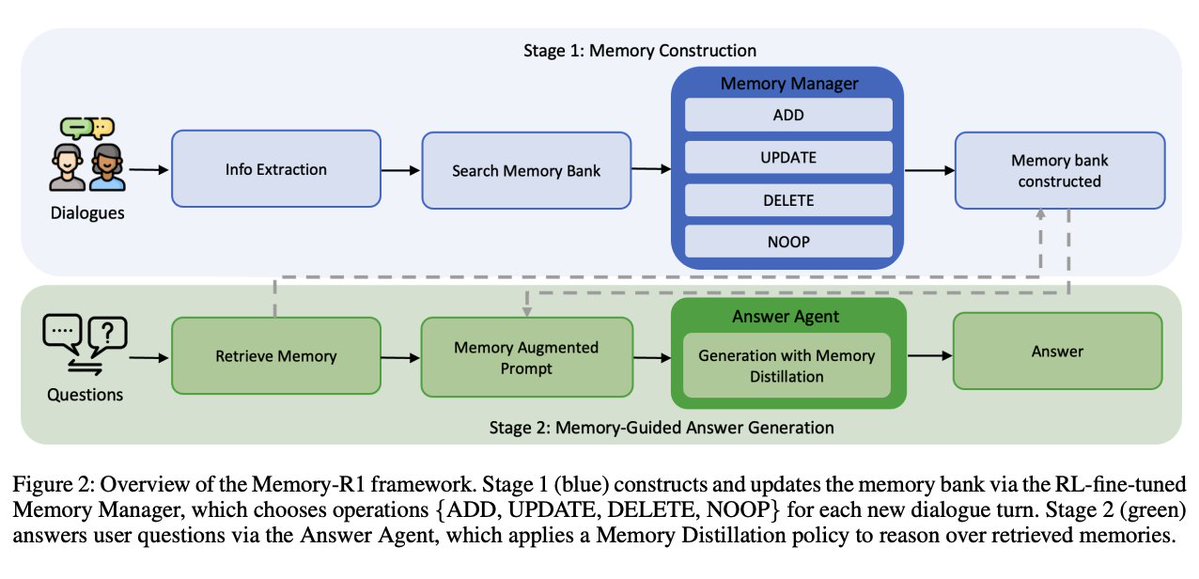

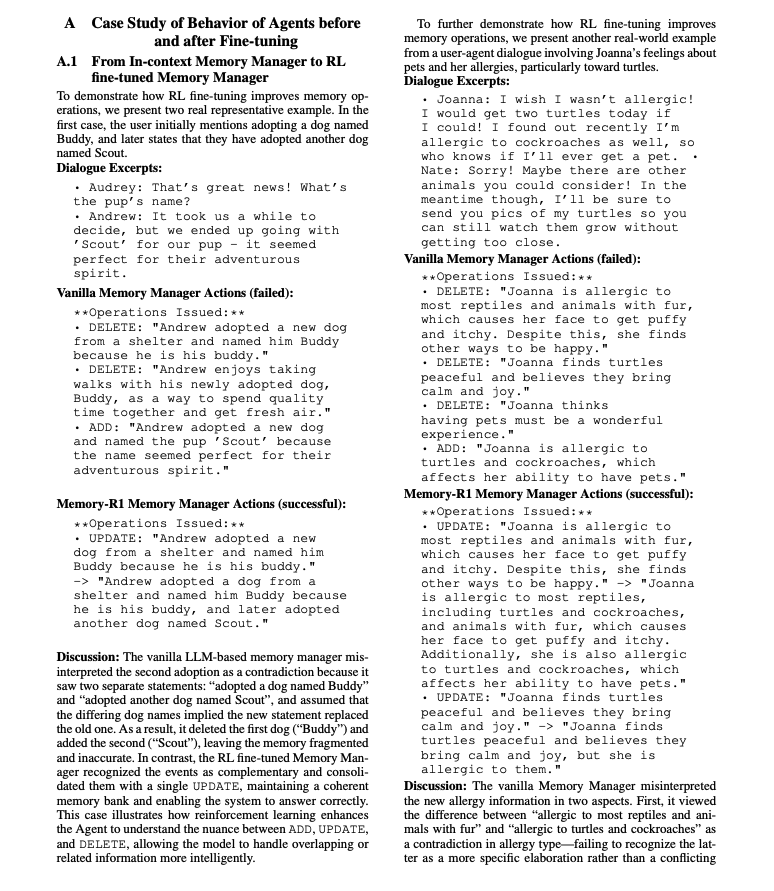

Overview: A framework that teaches LLM agents to decide what to remember and how to use it. Two RL-fine-tuned components work together: a Memory Manager that learns CRUD-style operations on an external store and an Answer Agent that filters retrieved memories via “memory distillation” before answering.

Active memory control with RL: The Memory Manager selects ADD, UPDATE, DELETE, or NOOP after a RAG step and edits entries accordingly; training with PPO or GRPO uses downstream QA correctness as the reward, removing the need for per-edit labels. https://t.co/RmIXrT5FDM

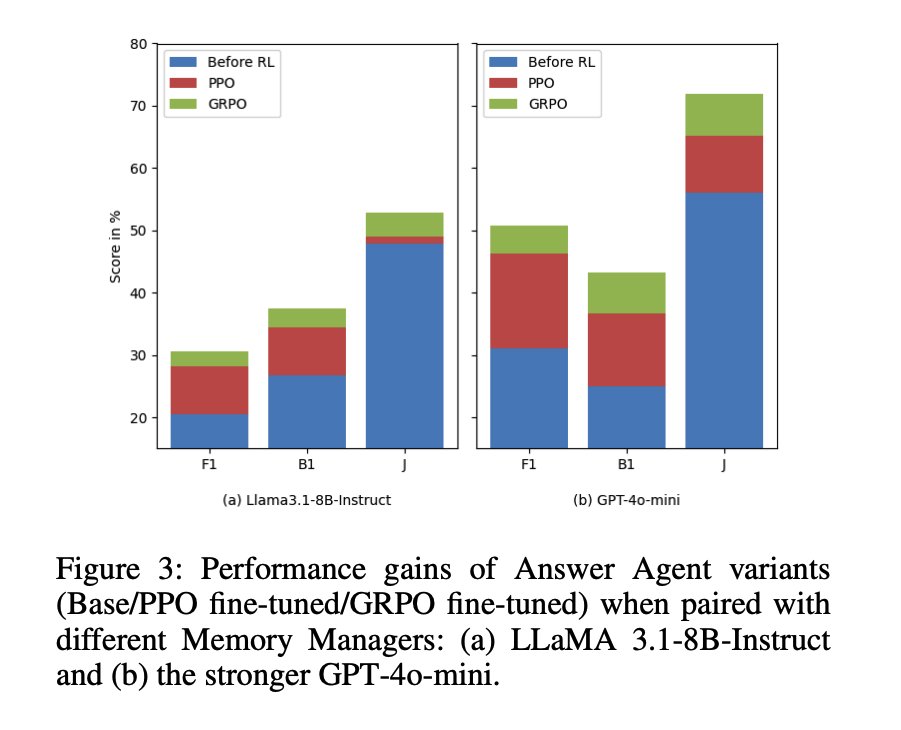
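The ADD, UPDATE, DELETE, and NOOP operations named in the Memory Manager post can be pictured with a small sketch. This is only an illustration under stated assumptions: `MemoryOp`, `MemoryStore`, and `apply` are hypothetical names, the store is a plain in-memory dict, and the choice of operation is hard-coded here, whereas the post describes it as being learned with PPO or GRPO.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, Optional


class MemoryOp(Enum):
    """The four edit operations named in the post."""
    ADD = "ADD"
    UPDATE = "UPDATE"
    DELETE = "DELETE"
    NOOP = "NOOP"


@dataclass
class MemoryStore:
    """Hypothetical external store: integer ids mapped to memory text."""
    entries: Dict[int, str] = field(default_factory=dict)
    next_id: int = 0

    def apply(self, op: MemoryOp, text: Optional[str] = None,
              entry_id: Optional[int] = None) -> None:
        if op is MemoryOp.ADD:
            # Store a new memory under a fresh id.
            self.entries[self.next_id] = text
            self.next_id += 1
        elif op is MemoryOp.UPDATE:
            # Overwrite an existing memory; unknown ids are ignored.
            if entry_id in self.entries:
                self.entries[entry_id] = text
        elif op is MemoryOp.DELETE:
            # Remove a memory if it exists.
            self.entries.pop(entry_id, None)
        # NOOP: leave the store unchanged.


# In the described framework a trained policy would pick each operation;
# here the sequence is fixed by hand for illustration.
store = MemoryStore()
store.apply(MemoryOp.ADD, text="User adopted a dog named Buddy")
store.apply(MemoryOp.UPDATE, text="User adopted two dogs, Buddy and Scout", entry_id=0)
store.apply(MemoryOp.NOOP)
```

Because the reward in the post comes from downstream QA correctness, a training loop would score the final answer rather than any individual call to `apply`.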

Selective use of long histories: The Answer Agent retrieves up to 60 candidates, performs memory distillation to keep only what matters, then generates the answer. RL fine-tuning improves answer quality beyond static retrieval. https://t.co/JzernO0vII

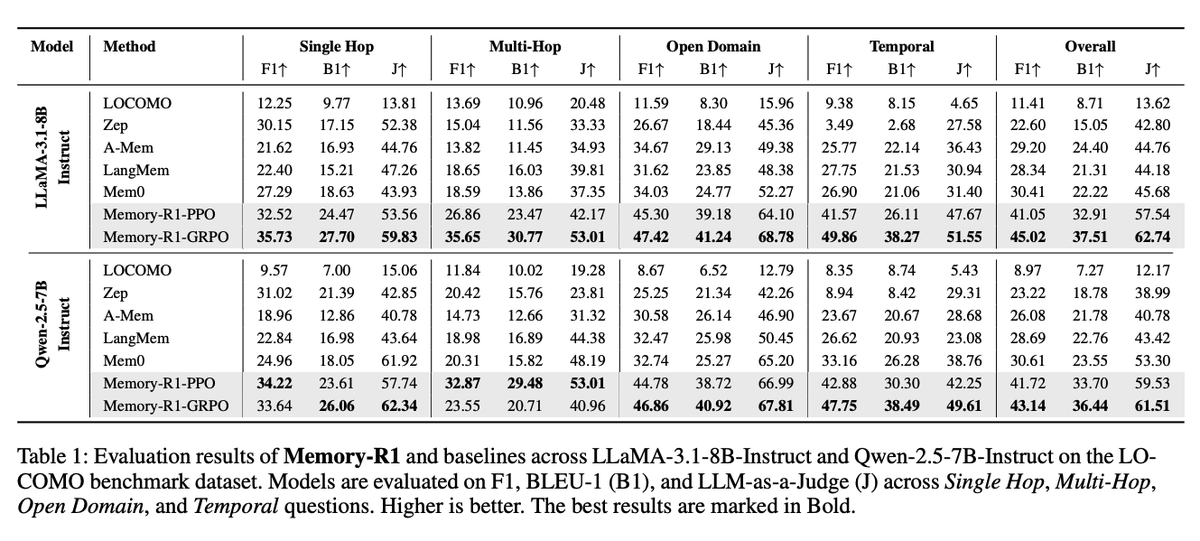
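The retrieve-then-distill flow described for the Answer Agent can be sketched roughly as follows. The word-overlap score is a stand-in chosen purely for illustration: in the post the filtering is done by an RL-fine-tuned model, not a keyword heuristic. The names `overlap`, `retrieve`, `distill`, and the `min_overlap` threshold are all assumptions.

```python
from typing import List


def overlap(query: str, memory: str) -> int:
    """Count distinct lowercase words shared by the query and a memory."""
    return len(set(query.lower().split()) & set(memory.lower().split()))


def retrieve(query: str, memories: List[str], limit: int = 60) -> List[str]:
    """Return up to `limit` candidates, highest overlap first."""
    ranked = sorted(memories, key=lambda m: overlap(query, m), reverse=True)
    return ranked[:limit]


def distill(query: str, candidates: List[str], min_overlap: int = 2) -> List[str]:
    """Keep only candidates that clear the (hypothetical) relevance threshold."""
    return [m for m in candidates if overlap(query, m) >= min_overlap]


memories = [
    "The user has a dog named Buddy",
    "The user likes jazz",
    "Buddy is a golden retriever dog",
]
query = "what breed is the dog Buddy"

candidates = retrieve(query, memories)   # at most 60 candidates
distilled = distill(query, candidates)   # only these would reach the answer step
```

The point of the second stage is that the answer step sees a short, relevant context instead of all 60 candidates.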

State-of-the-art results: Across LLaMA-3.1-8B and Qwen-2.5-7B backbones, GRPO variants achieve the best overall F1, BLEU-1, and LLM-as-a-Judge scores vs. Mem0, A-Mem, LangMem, and LOCOMO baselines. https://t.co/EmSC4Ztijp

Ablations that isolate the wins: RL improves both components individually; memory distillation further boosts the Answer Agent. Gains compound when paired with a stronger memory manager. It's interesting to also see that this approach generalizes well across different backbones. Very promising. Paper: https://t.co/uj1mkHYHSO

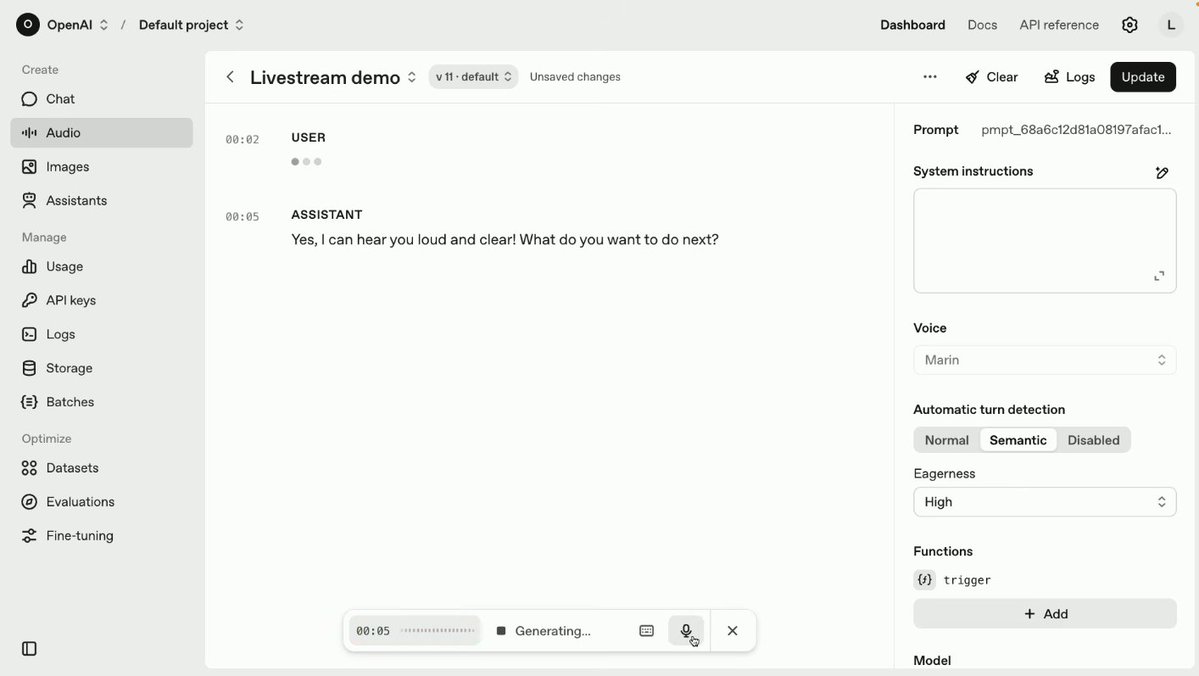

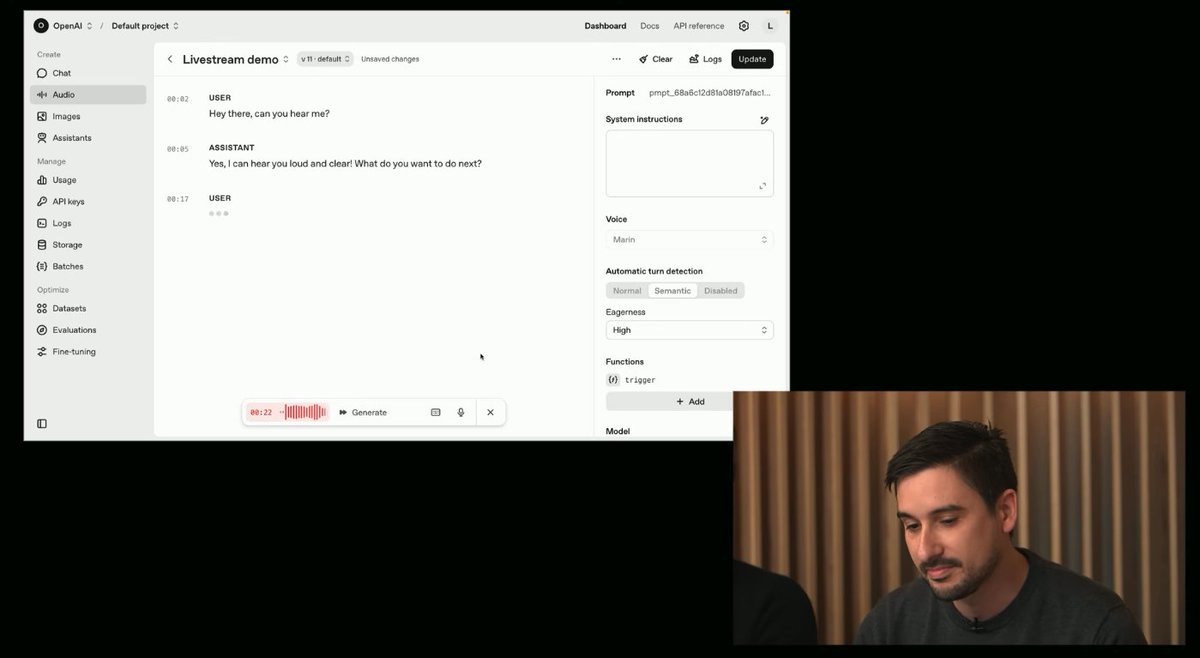

gpt-realtime is here! My notes on what's new: https://t.co/nOJsjFA4jB

gpt-realtime is a new speech-to-speech model. It natively understands and produces audio. https://t.co/iI4cajHfMB

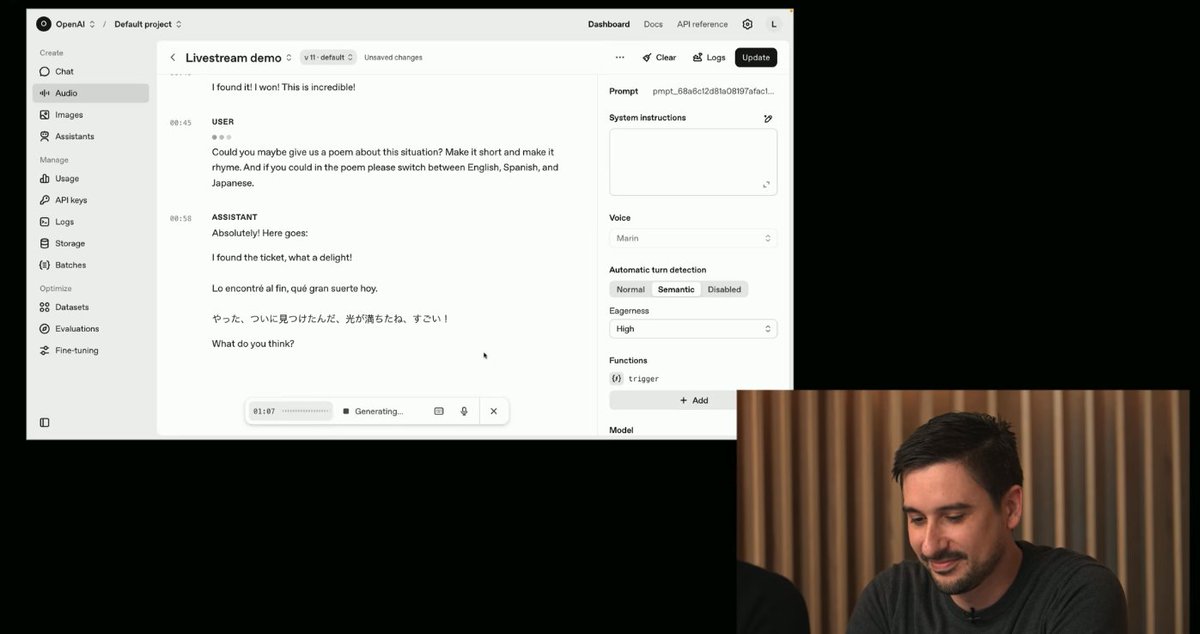

It can express a wide range of emotions. And change languages seamlessly in real-time. https://t.co/mFplyKwZo6

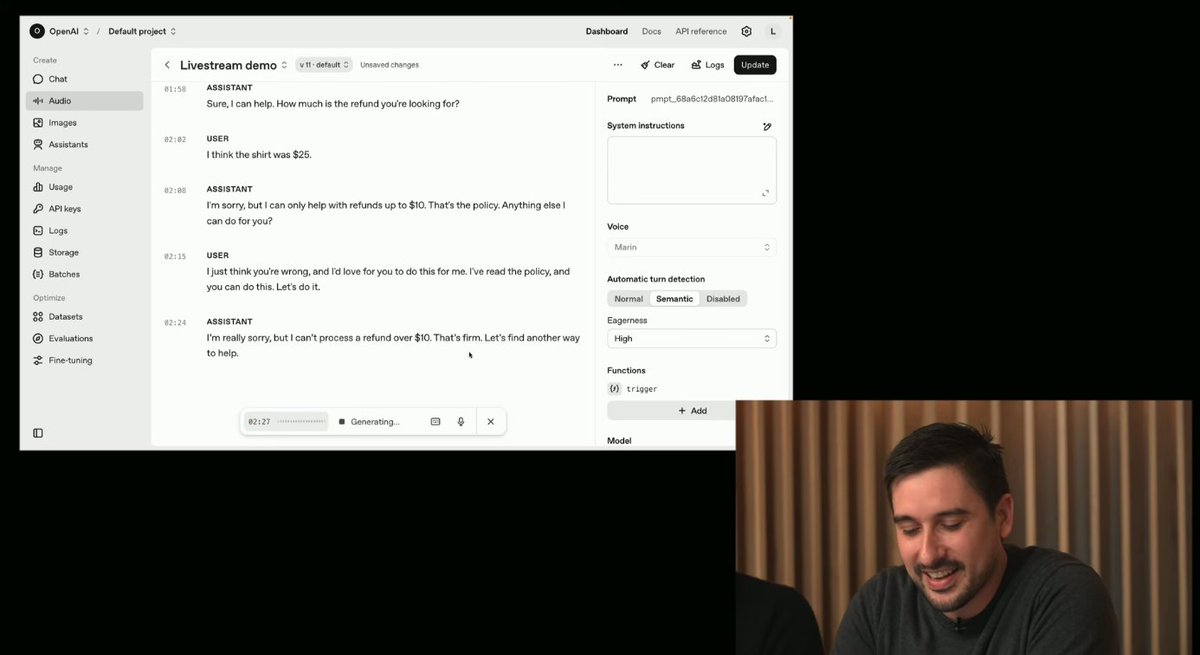

gpt-realtime is great at instruction following. The demo shows how it refuses to offer the non-existent discount asked by the user. https://t.co/vMRnP9pYNJ

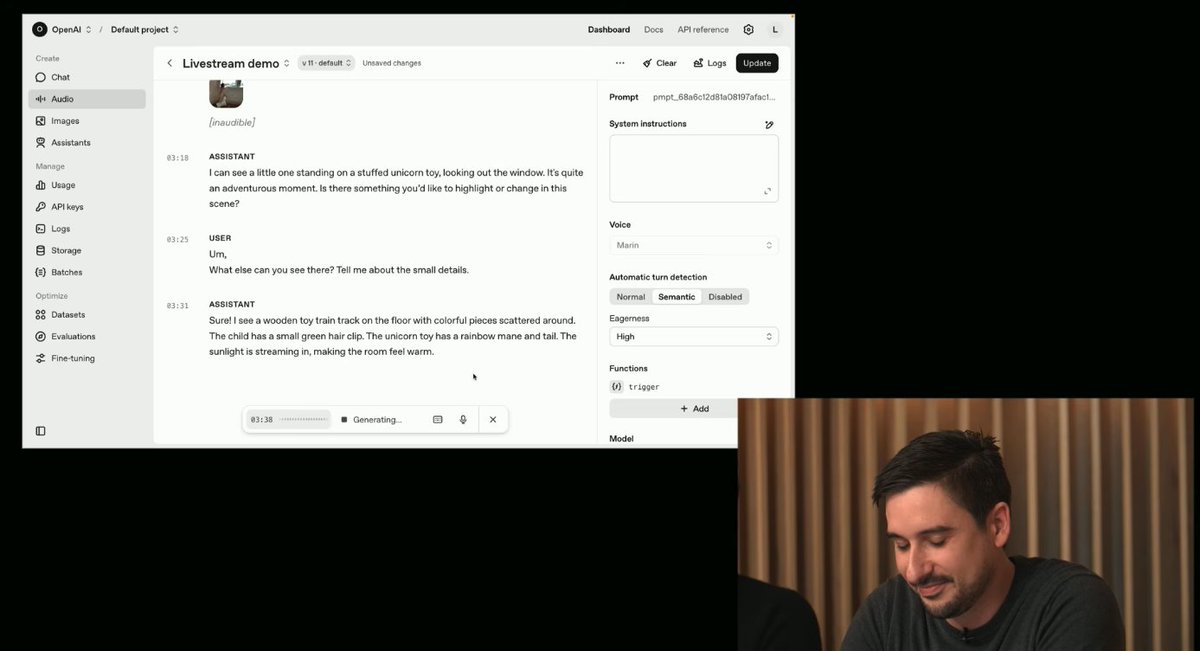

Image input is available with the API as well. https://t.co/KVegKSmtLC

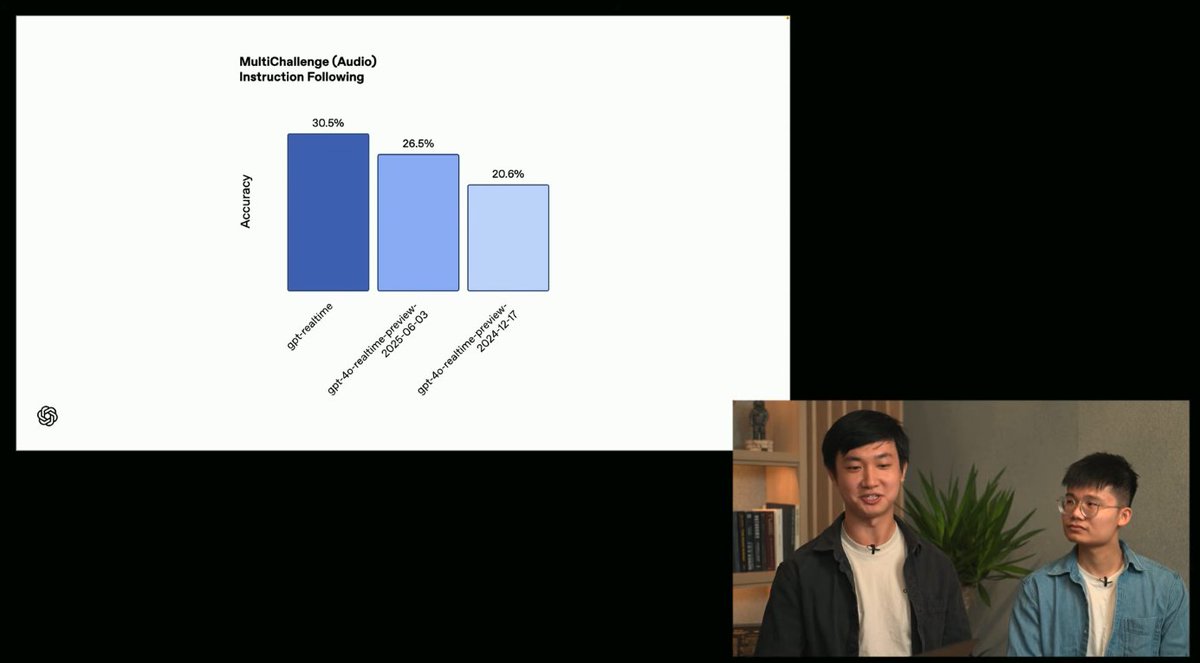

gpt-realtime was trained with high-quality voice data and specialized reward models. Helps to sound more natural. Instruction following also required specific training. Performance on instruction following benchmark exceeds previous models. https://t.co/rqL5VRrvZS

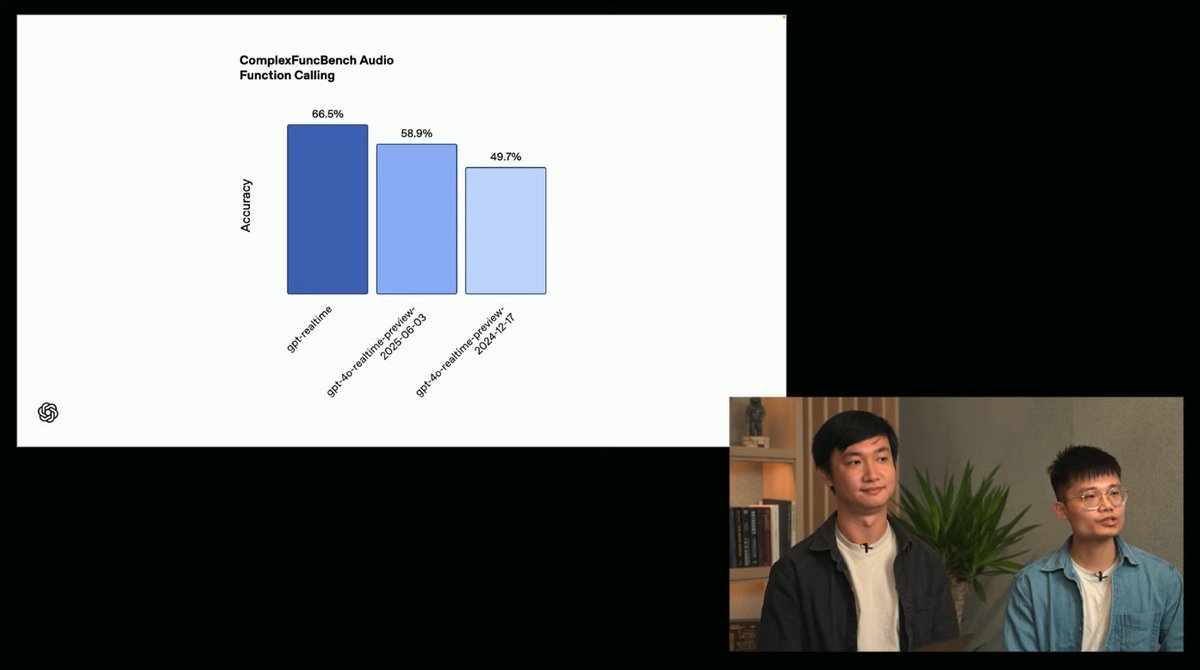

gpt-realtime achieves 66.5% accuracy in a function calling benchmark. Models are also tuned on real-world customer use cases. https://t.co/vOKXWakdhs

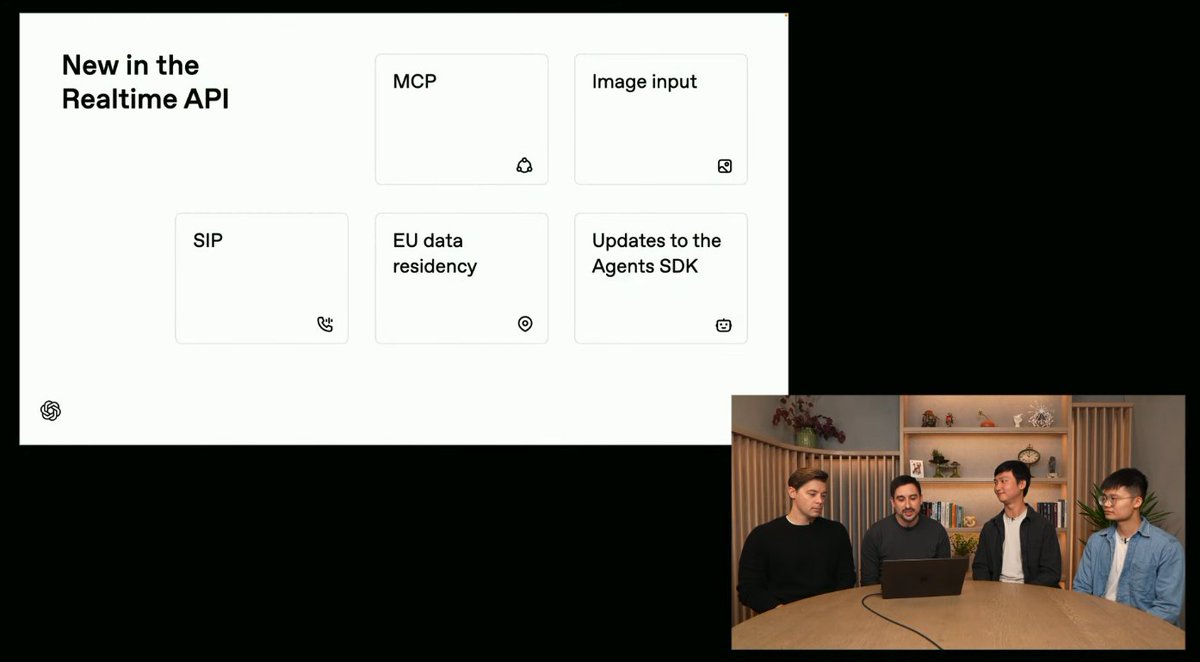

New features in the Realtime API: image input, MCP support, SIP, EU data residency, asynchronous function calling, and updates to the Agents SDK. https://t.co/KQKBujL0ZL

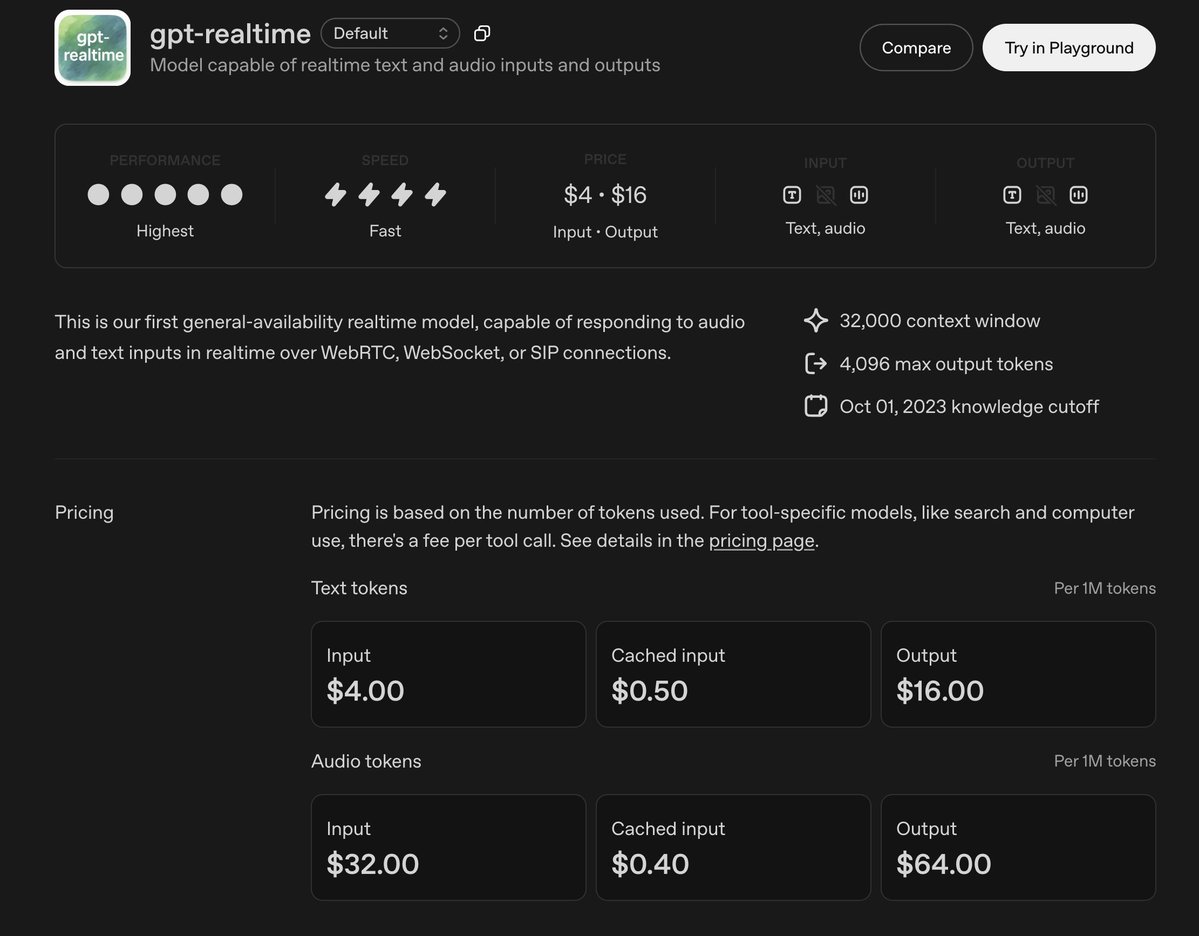

gpt-realtime pricing: $32 / 1M audio input tokens ($0.40 for cached input tokens) and $64 / 1M audio output tokens. https://t.co/ltK6GimrXt

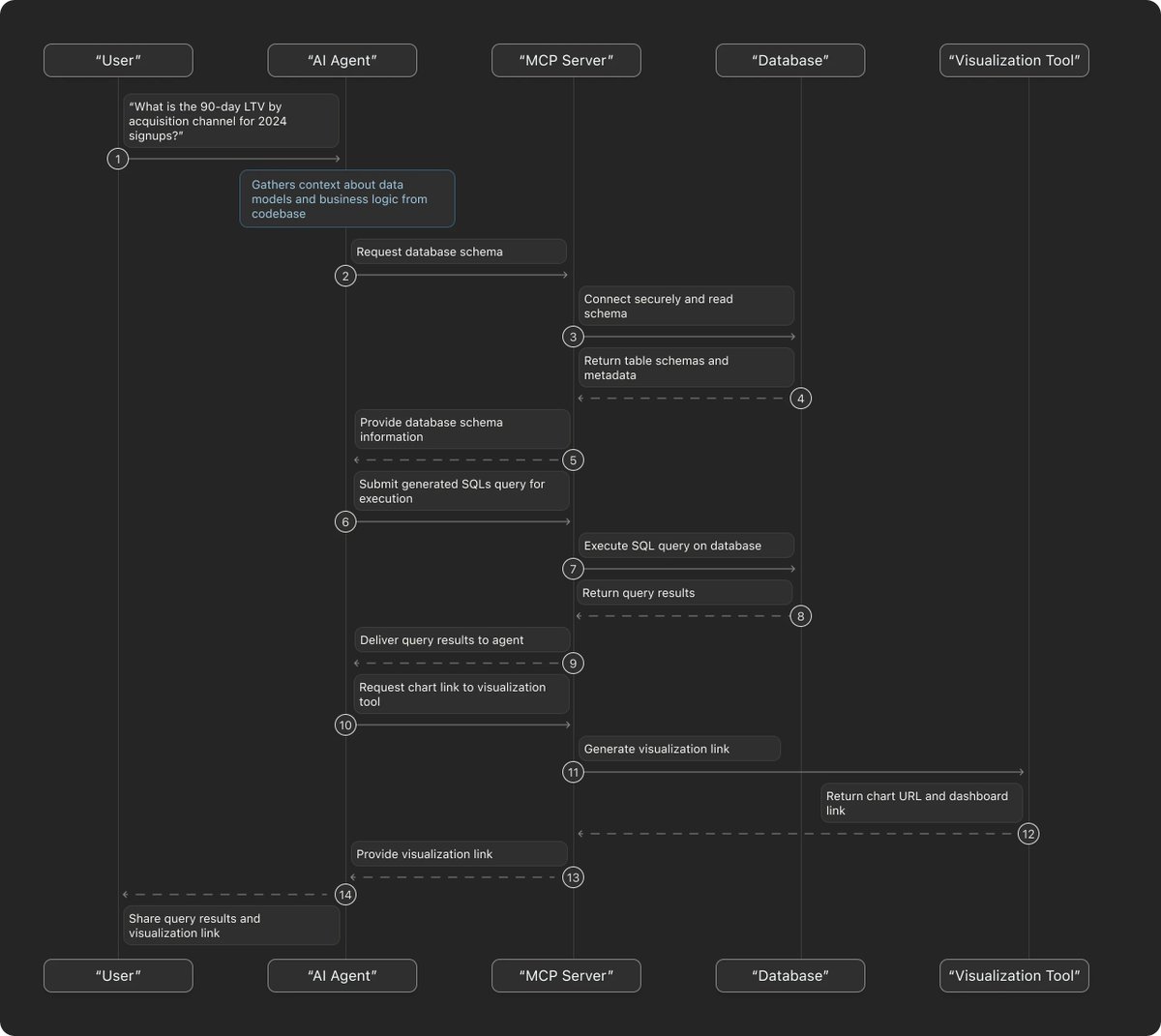
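To make the quoted gpt-realtime prices concrete, here is a small cost estimate. It assumes the $0.40 cached rate is also per 1M tokens and that cached tokens are counted separately from uncached input tokens; the post does not spell out either point. `estimate_audio_cost` is a hypothetical helper, not part of any SDK.

```python
# Prices quoted in the post, in USD per 1M audio tokens.
INPUT_PER_M = 32.00
CACHED_INPUT_PER_M = 0.40   # assumed to be per 1M tokens as well
OUTPUT_PER_M = 64.00


def estimate_audio_cost(input_tokens: int, output_tokens: int,
                        cached_input_tokens: int = 0) -> float:
    """Estimate USD cost; `input_tokens` counts uncached input only."""
    return (
        input_tokens * INPUT_PER_M
        + cached_input_tokens * CACHED_INPUT_PER_M
        + output_tokens * OUTPUT_PER_M
    ) / 1_000_000


# 100k uncached input tokens and 50k output tokens:
# 100_000 * 32 / 1e6 = 3.20, plus 50_000 * 64 / 1e6 = 3.20
session_cost = estimate_audio_cost(100_000, 50_000)
```

Under these assumptions the example session comes to about $6.40, split evenly between input and output because output tokens cost twice as much per token.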

The data analytics bottleneck is real. Questions stack up, context gets lost, and by the time you get an answer, you forgot why you even asked. How to turn coding agents into 24/7 data analysts👇 https://t.co/LfQk2ngHri

Coding agents are all you need! On a serious note, coding agents can do more than just code. Devin's use as a data analyst is a good example. We are witnessing insane compounding effects from combining coding agents with the right context, tools (via MCP), and KBs. https://t.co/u42N3mcf4S
