Your curated collection of saved posts and media

The DSA platform includes abolishing USAID, so technically Trump has almost achieved a socialist priority https://t.co/PyWQvIZF3t

AIcos: At long last, we have built almost literally exactly the AI That Tells Humans What They Want To Hear, from Isaac Asimov's classic 1941 short story, "Don't Build AI That Tells Humans What They Want To Hear" https://t.co/GjZ1cy709e

China launches groundbreaking 6G chip capable of 100 Gbps, paving the way for next-gen AI business innovations. Source: https://t.co/QnLzvL0kW0

Nvidia reveals that two key clients generated 39% of Q2 revenue, highlighting business opportunities in AI monetization. Source: https://t.co/uOZcxyP1Nm

Source: https://t.co/XhCSWQyFv3

Plus, the policy is pushing for mass production of non-implantable devices in a range of form factors, such as forehead-mounted gear, headsets, earbuds, helmets, glasses, and headphones. Source: https://t.co/FqShOzlpg1

Whistleblower on TikTok just dropped bombshells on AI training at Scale AI:
- Pay slashed from $35 to $15/hr
- Faulty automation
- Approving bad work
- Trainers using Wikipedia as ‘authoritative’ sources
Affecting ChatGPT, Grok, and Gemini. Full details ↓ https://t.co/B21LPrrxJZ https://t.co/gclp7eVnqj

In the age of AI chatbots, are we just buying digital Pet Rocks? Our anthropomorphic bias turns tools into “friends.” Slick UIs mimicking human conversation, marketing as companions, and zero AI literacy: users need education. https://t.co/RAeWDEzDCc #AIHumanInteraction https://t.co/hJvO9XqHXh

@POTUS believes he has made the US great again, but every other allied leader has figured out how to suck up to him to get their way, and the rest are joining up with China to dominate the US. You @POTUS loser!! https://t.co/5tMAuh1hRQ

Congratulations to CDS PhD Student @vlad_is_ai, Courant PhD Student Kevin Zhang, CDS Faculty Fellow @timrudner, CDS Professors @kchonyc and @ylecun, and Brown's @randall_balestr on winning the Best Paper Award at the ICML 'Building Physically Plausible World Models' Workshop! https://t.co/J18pKWw0Em

We’ve raised $15M to date to build the future of cinematic, on-chain video. 🌀 Today, we’re thrilled to welcome @Mysten_Labs, creators of Sui, as investors in Everlyn, joining its $250M valuation round. They join a strong lineup: powerhouse investors like Baseline (Emirates), @SeliniCapital, @NesaOrg, @AethirCloud, @ionet, and @MH_Ventures, with leadership from Kling AI, Google, Amazon, Meta, and TencentGlobal. This is more than funding. It’s long-term conviction in our vision: the dream machine belongs to everyone.

MATS 9.0 applications are open! Launch your career in AI alignment, governance, and security with our 12-week research program. MATS provides field-leading research mentorship, funding, Berkeley & London offices, housing, and talks/workshops with AI experts. https://t.co/Gi0W5BzuOJ

🇦🇪 UAE GDP 1994: $57 billion 2024: $537 billion https://t.co/CDMzTz1y2D
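A quick back-of-the-envelope check on these two figures: going from $57 billion to $537 billion over the 30 years from 1994 to 2024 is roughly a 9.4x increase, which works out to a compound annual growth rate (CAGR) of about 7.8%. This sketch assumes both values are nominal US-dollar GDP; the post does not say whether they are inflation-adjusted, so the result is a nominal rate only.

```python
# Back-of-the-envelope compound annual growth rate (CAGR) for the UAE GDP figures in the post.
# Assumption: both values are nominal USD, so this is NOT an inflation-adjusted (real) growth rate.

def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the constant yearly growth rate that turns start_value into end_value over `years` years."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1

gdp_1994 = 57e9   # $57 billion, as quoted in the post
gdp_2024 = 537e9  # $537 billion, as quoted in the post
rate = cagr(gdp_1994, gdp_2024, 2024 - 1994)

print(f"Growth multiple: {gdp_2024 / gdp_1994:.2f}x")
print(f"Implied CAGR: {rate:.2%}")
```

Running it prints a growth multiple of about 9.42x and an implied nominal CAGR of about 7.76% per year.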

🇨🇳 CHINA’S BUS CHARGES FASTER THAN YOUR PHONE In China, city buses now recharge in 10 minutes flat. Not with bulky lithium batteries, but with supercapacitors, tech built for instant power bursts. One quick stop = 50 km range. Picture it: buses running all day, pausing for coffee-length pit stops under sci-fi-looking chargers built right into city streets. The payoff? Faster commutes, greener cities, zero excuses for diesel holdouts. If every metro ran on this, public transit would be unstoppable. And your phone battery would suddenly look embarrassing.

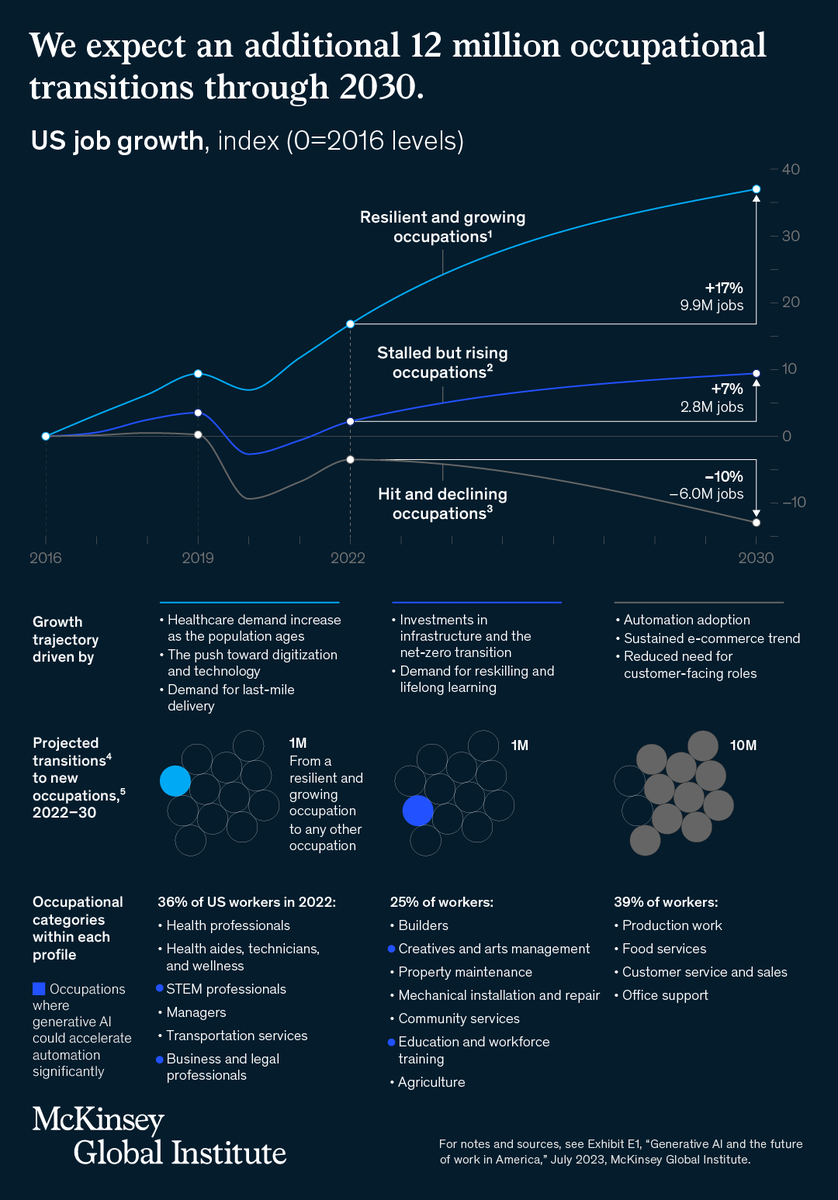

Generative AI has the potential to change the future of work. By 2030, up to 30% of U.S. work hours could be automated. Which jobs will be in demand? Which ones are shrinking? And which ones could be hardest to fill? Our research: https://t.co/f7EGTFH3a3 https://t.co/LNJicm7cfl

@AngelaStew72006 @Joshuareader463 It really exposes how outdated the courtroom process has become https://t.co/F1qjqnEYzk

It’s such an entertaining time to be alive. https://t.co/ZYYbfc6P34

Excited to launch "Novix"🚀, our PhD-level AI-Scientist designed for autonomous scientific discovery. Novix revolutionizes research workflows through comprehensive capabilities spanning deep research, innovative ideation, intelligent coding, advanced data analysis, automated experimentation, and paper writing.
🌐 Platform Access: https://t.co/hB7kSBNf3V
👉 Open-Source Foundation: https://t.co/O3arx6M0bs
🚀 Accelerated Scientific Discovery Pipeline: From concept to publication-ready research with unprecedented efficiency.
✨ Core Capabilities:
- 🧠 Research Co-Pilot Intelligence: AI-powered ideation and hypothesis generation that collaborates with your research intuition
- ⚙️ Autonomous Algorithm Innovation: End-to-end design, implementation, and validation of novel computational approaches
- 📊 Intelligent Data Orchestration: Advanced analytics with automated insights discovery and compelling visualizations
- 🔬 Scientific Reproducibility Engine: Automated verification and replication of research methodologies and findings
- 📚 AI-Powered Deep Survey: Comprehensive literature synthesis and gap analysis across scientific domains
We're building an AGI Level 4 innovation engine that empowers researchers, developers, and businesses to achieve breakthrough results in scientific innovation and discovery. From our open-source foundation to this production-ready platform, Novix represents a paradigm shift in how we approach scientific discovery.
🎁 Launch Benefits:
- 🚪 Barrier-Free Access: Simply register and start exploring
- 💰 Welcome Bonus: New users receive $5 in credits to experience the platform's full potential
- 🎯 Enhanced Experience: Complete our user feedback survey to unlock a $20 Pro account with complete feature access
We deeply understand the challenges of research work and genuinely hope Novix can serve as your trusted research companion. Join us in this exciting journey of AI-powered scientific discovery and help shape the future of research innovation!

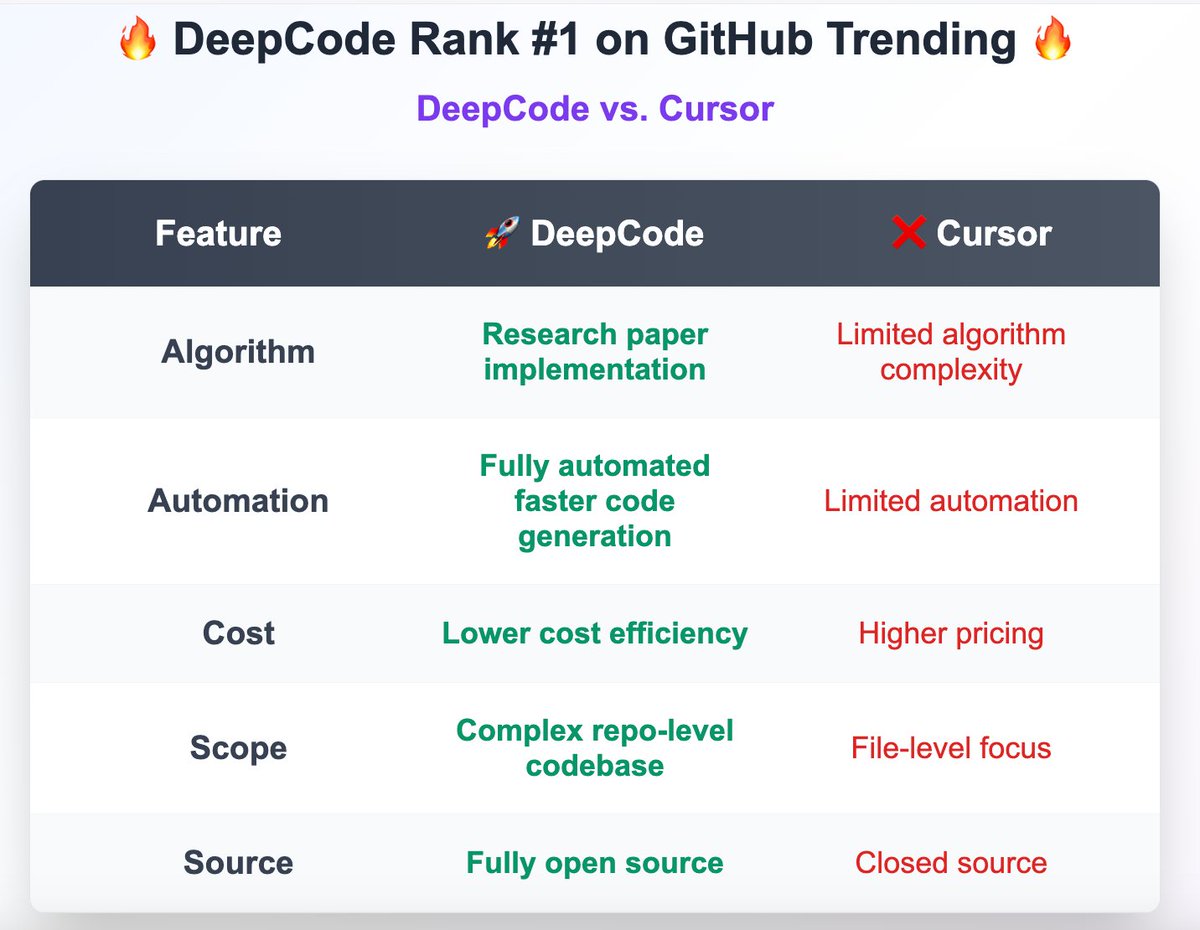

🔥 DeepCode hits #1 on GitHub Trending for consecutive days! 🔥 Vibe Coding is trending! Let's take it one step further with "Vibe Research" 🧠✨ Paper2Code, Docs2Code, Bug Report2Fix... the possibilities are endless! 👉 Github: https://t.co/OM1WbgRJiw Let's take AI coding to the next level together! 🚀 #vibecoding #viberesearch #AI4code #codeagent

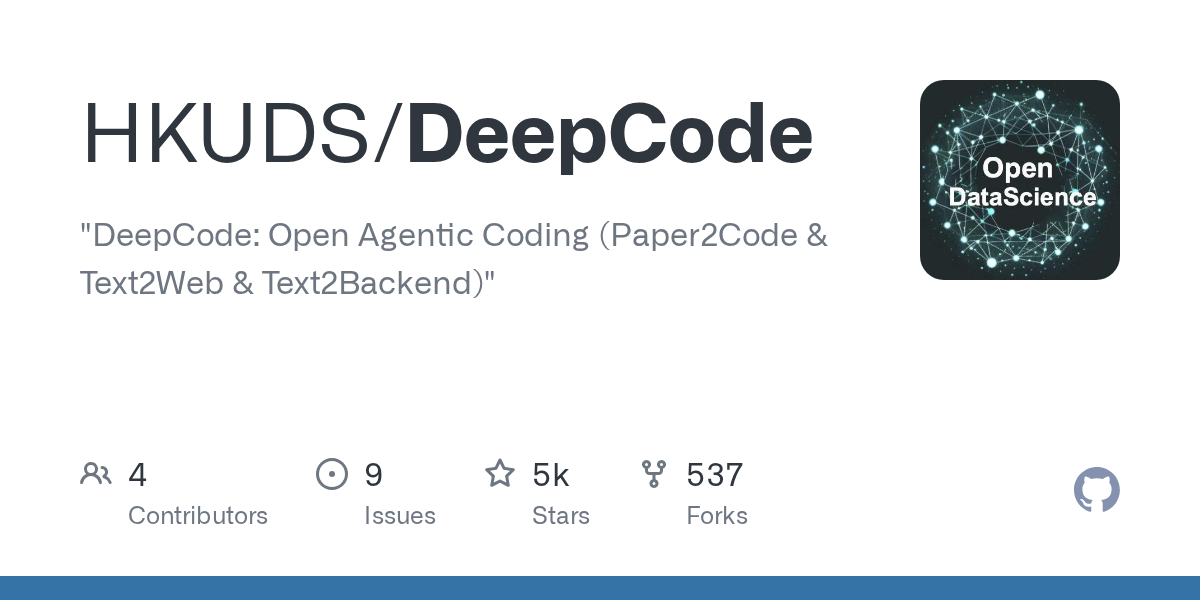

Thrilled to open-source WebWatcher, our vision-language deep research agent from @Alibaba_NLP! Available in 7B & 32B parameter scales for the community. Achieving SOTA on the toughest VQA benchmarks:
• HLE-VL: 13.6% (vs. GPT-4o's 9.8%)
• BrowseComp-VL: 27.0% (2x GPT-4o!)
• LiveVQA: 58.7%

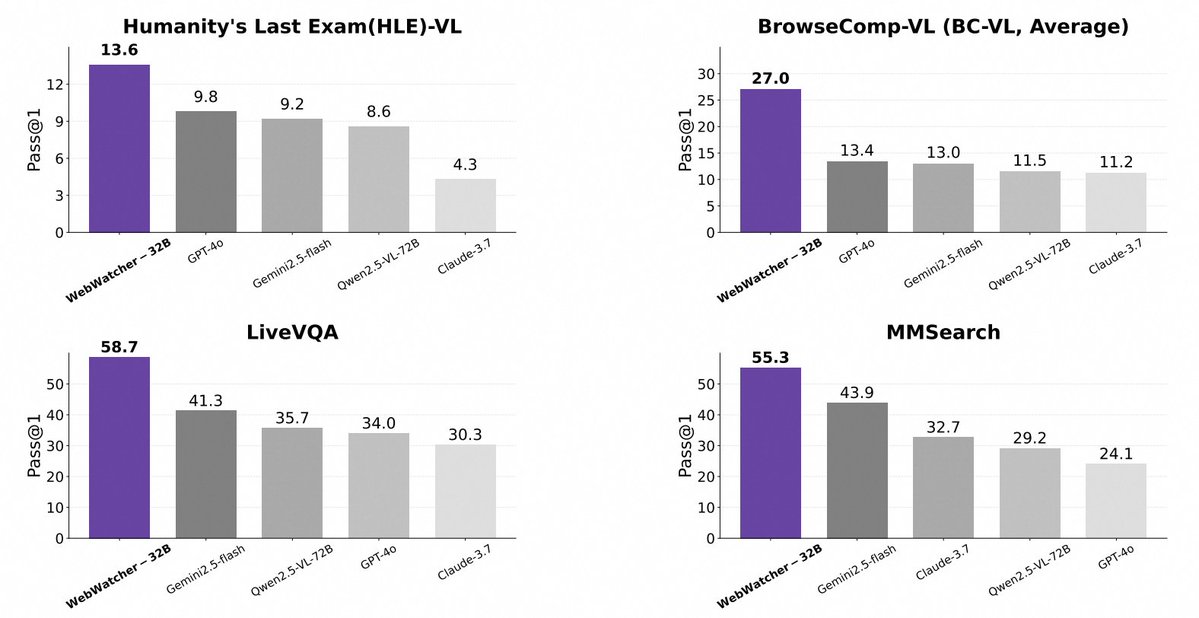

[New Post] The CUA market and where things stand. Below is what it takes to construct a computer use agent (CUA) today, and some different approaches to get there. We are still in the very early innings, but @zephratic @JenniferHli @seema_amble and I are excited to hear what you build 🧵 https://t.co/poWu4J5zqD

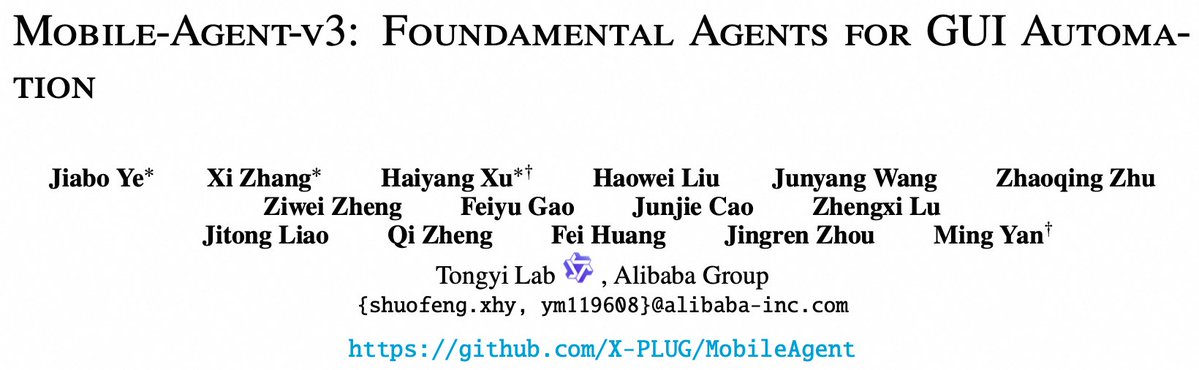

1/4 Thrilled to launch Mobile-Agent-v3 & GUI-Owl! Our new framework for GUI automation sets a new SOTA for open-source models, dominating benchmarks like AndroidWorld (73.3) & OSWorld (37.7). We're excited to open-source our work! GitHub: https://t.co/ndwds839Ay

🇨🇳 Another great Chinese model: OmniHuman-1.5 from ByteDance. It turns 1 image plus a voice track into expressive avatar video by pairing a System 1/System 2-inspired planner with a Diffusion Transformer, and it produces coherent motion for over 1 minute, with a moving camera and multi-character scenes.
Most avatar models move to the beat of the audio but miss its meaning, so gestures feel generic and emotions feel shallow. The fix here is a Multimodal LLM planner that listens to the speech and drafts a structured plan describing intent, emotions, beats, and high-level actions, which gives the motion engine clear semantic targets instead of only rhythm.
The motion engine is a Multimodal Diffusion Transformer that fuses the plan with the audio, the single reference image, and optional text prompts, then synthesizes continuous body, face, and head motion that matches both the words and the tone. A key trick is a Pseudo Last Frame, a synthetic target that summarizes the next expected state, which stabilizes fusion across modalities and keeps motion consistent over long spans.
From just 1 image and speech, the system outputs speaking avatars with synchronized lips, context-aware gestures, and continuous camera movement, and it also supports multi-character interactions without manual choreography. Reported results show strong lip-sync accuracy, high video quality, natural motion, and close adherence to text prompts, and the same setup works on nonhuman characters too.

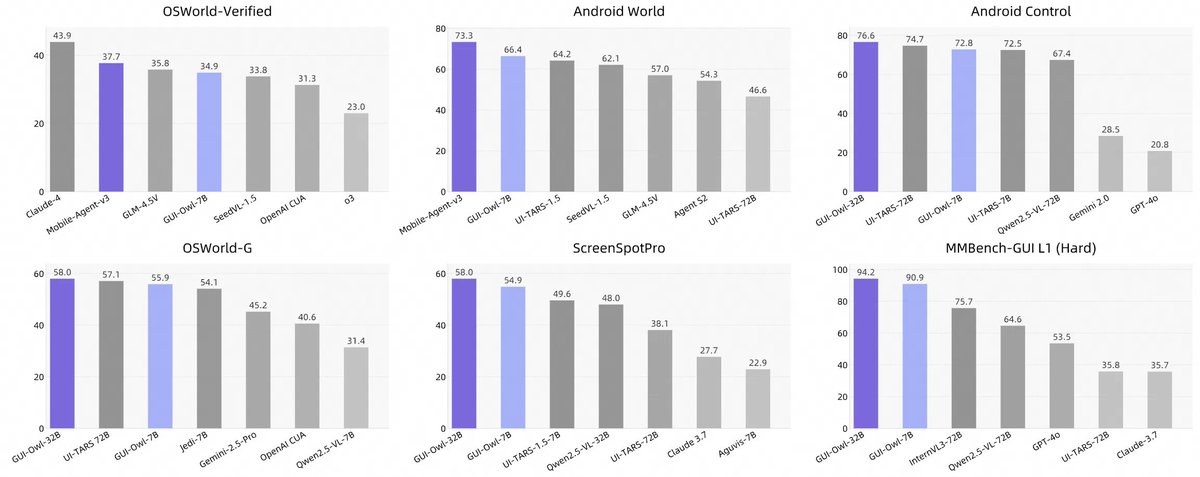

New open-weights Chinese model with a really detailed tech report just dropped. It has tons of details on architecture and infra. Here are some of my notes and the parts I found interesting :) https://t.co/gOM2TMP5LU

GPT-4o-level intelligence running on your phone! MiniCPM-V 4.5 delivers enterprise-grade AI performance in just 8B parameters, outperforming models like GPT-4o and Gemini-2.0 Pro on vision and language tasks.
- 30+ language support
- Runs smoothly on iPhone/iPad
100% open-source! https://t.co/gk483zcEJ4

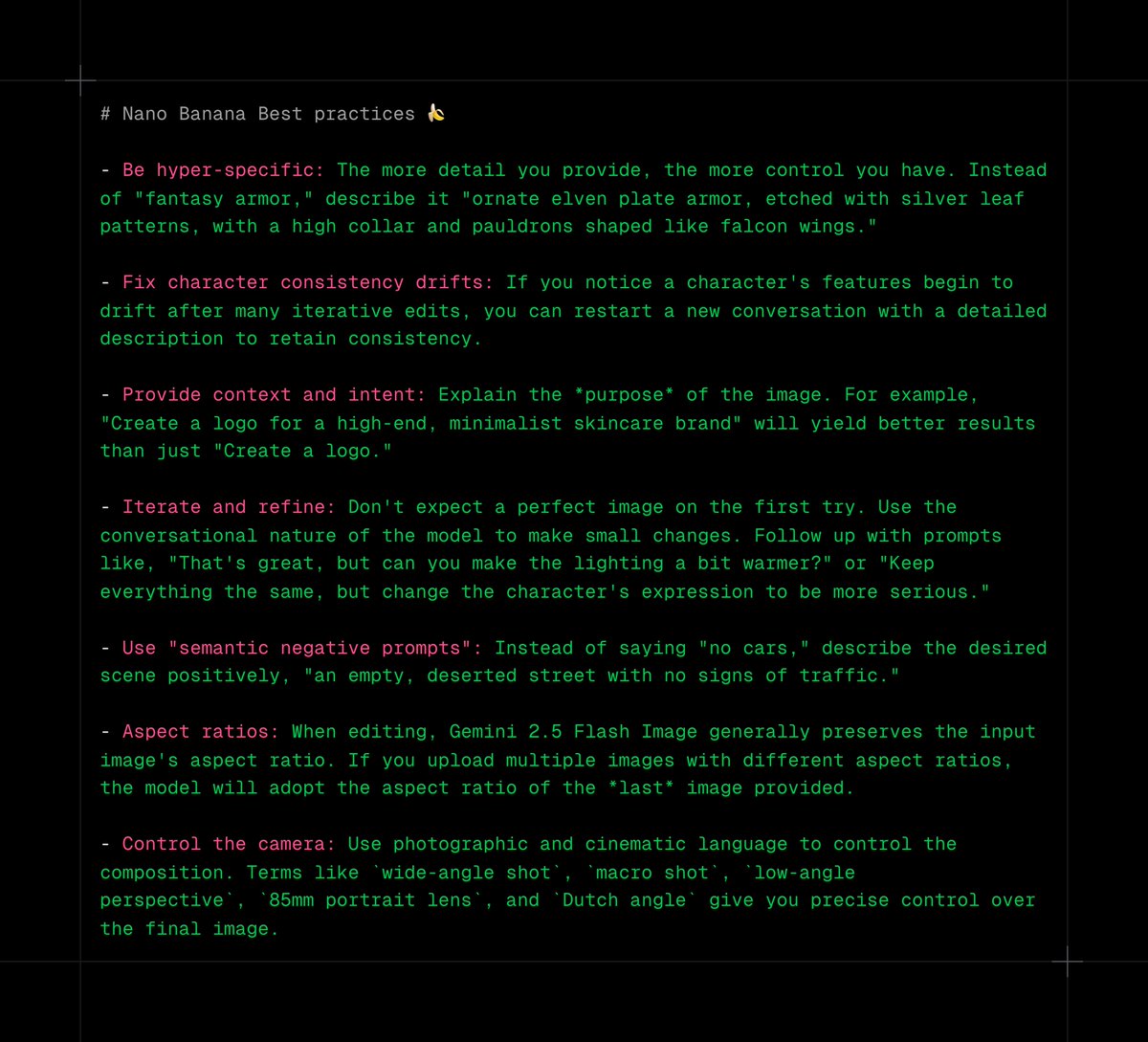

Gemini 2.5 Flash Image (Nano Banana) best practices 🍌🍌🍌
- Be hyper-specific: The more detail you provide, the more control you have. Instead of "fantasy armor," describe it as "ornate elven plate armor, etched with silver leaf patterns, with a high collar and pauldrons shaped like falcon wings."
- Fix character consistency drift: If you notice a character's features begin to drift after many iterative edits, restart a new conversation with a detailed description to restore consistency.
- Provide context and intent: Explain the *purpose* of the image. For example, "Create a logo for a high-end, minimalist skincare brand" will yield better results than just "Create a logo."
- Iterate and refine: Don't expect a perfect image on the first try. Use the conversational nature of the model to make small changes. Follow up with prompts like "That's great, but can you make the lighting a bit warmer?" or "Keep everything the same, but change the character's expression to be more serious."
- Use "semantic negative prompts": Instead of saying "no cars," describe the desired scene positively: "an empty, deserted street with no signs of traffic."
- Aspect ratios: When editing, Gemini 2.5 Flash Image generally preserves the input image's aspect ratio. If you upload multiple images with different aspect ratios, the model will adopt the aspect ratio of the *last* image provided.
- Control the camera: Use photographic and cinematic language to control the composition. Terms like `wide-angle shot`, `macro shot`, `low-angle perspective`, `85mm portrait lens`, and `Dutch angle` give you precise control over the final image.

🚨BREAKING: A new open-source voice model just dropped and it’s better than ElevenLabs. This open-source voice AI is ultra-expressive, high quality, and fully free with no paywalls, no limits. Here’s how it works (with real examples):👇 https://t.co/AjtwEwY0rq

So we made image-to-video Realtime: Lucy-5B generates 5s clips in <3.2s Here's how you can access Lucy on @FAL (and a cool iOS/Android demo app!) 🧵 https://t.co/iksTD27dlq

New consumer interfaces: infinite generated feeds, in Realtime, via our demo Delulu (on iOS/Android) https://t.co/zz1N4I4mLF

If you were a very advanced civilization, you would conclude that the best “space ship” one could invent is a planet spinning around a power supply called a star, in a machine called a solar system. https://t.co/cKRkImhGIR