Your curated collection of saved posts and media

GEPA: Reflective Prompt Evolution Can Outperform Reinforcement Learning https://t.co/5fxnQcbRuo via @YouTube

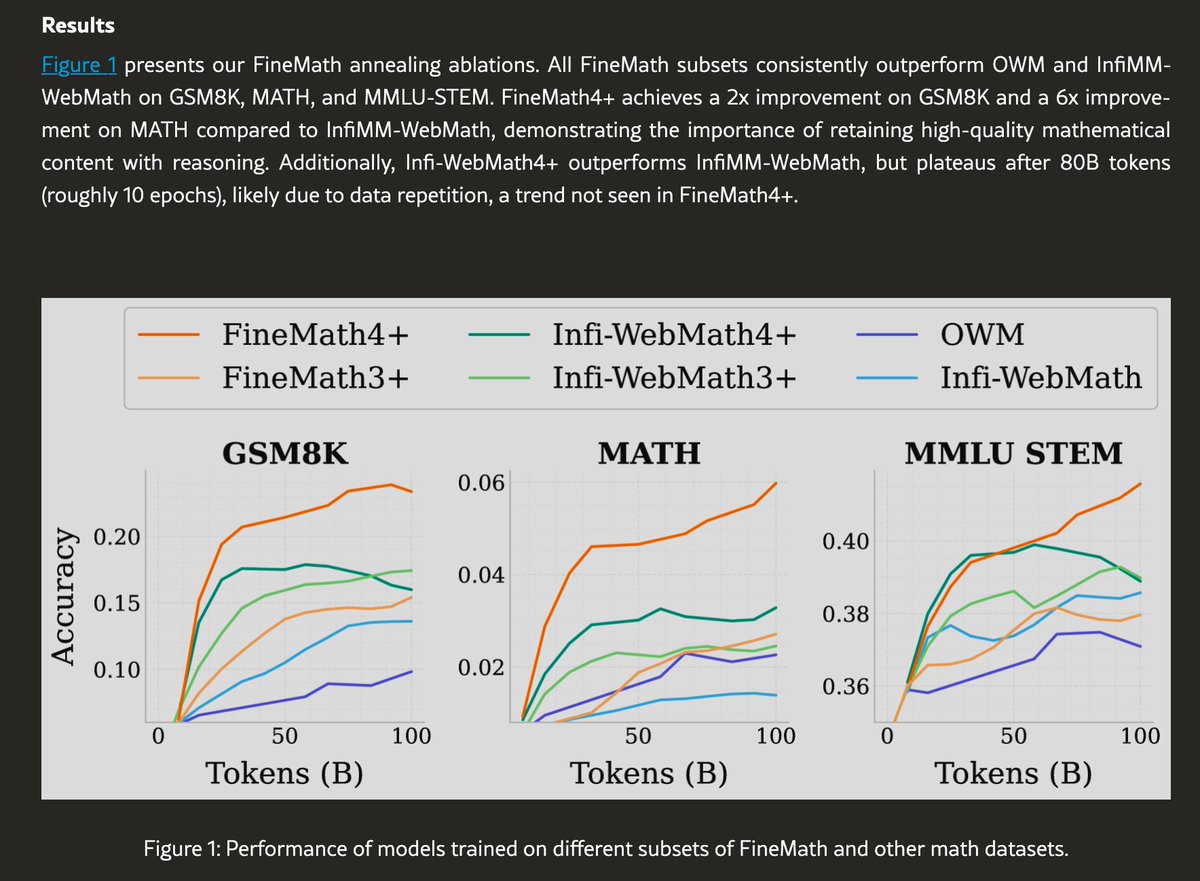

High quality math is the secret sauce for reasoning models. The best math data is in old papers. But OCRing that math is full of insane edge cases. Let's talk about how to solve this, and how you can get better math data than many frontier labs 🧵 https://t.co/eY57bv8863

OpenAI published "Why Language Models Hallucinate", explaining the root causes of AI hallucinations and proposing solutions to reduce them:

- Language models hallucinate because standard training and evaluation procedures reward guessing over acknowledging uncertainty. When models are graded only on accuracy, they are encouraged to guess rather than say "I don't know".
- Hallucinations originate during pretraining, a process of predicting the next word in huge amounts of text without "true/false" labels attached to each statement. That makes it doubly hard to distinguish valid statements from invalid ones, especially for arbitrary low-frequency facts, like a pet's birthday, that cannot be predicted from patterns alone.
- The researchers conclude that accuracy-based evals need to be updated so that their scoring discourages guessing: if the main scoreboards keep rewarding lucky guesses, models will keep learning to guess. Hallucinations are not inevitable, because language models can abstain when uncertain.
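A minimal sketch of the scoring argument in the post above, not the paper's actual evaluation code. It assumes a hypothetical grading rule with 1 point for a correct answer, 0 for abstaining, and a configurable `wrong_penalty` for a wrong answer; the function names and the penalty values are illustrative assumptions.

```python
def score(answer, truth, wrong_penalty=0.0):
    """Score one response; `answer` is None when the model abstains."""
    if answer is None:
        return 0.0  # abstaining neither gains nor loses points
    return 1.0 if answer == truth else -wrong_penalty


def expected_guess_score(p_correct, wrong_penalty=0.0):
    """Expected score of guessing when the guess is right with probability p_correct."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def should_guess(p_correct, wrong_penalty=0.0):
    """Guessing is rational only if it beats the abstention score of 0."""
    return expected_guess_score(p_correct, wrong_penalty) > 0.0


if __name__ == "__main__":
    # Accuracy-only grading (no penalty): even a 10% guess beats abstaining.
    print(should_guess(0.10, wrong_penalty=0.0))
    # With a penalty of 1 per wrong answer, the same guess no longer pays off.
    print(should_guess(0.10, wrong_penalty=1.0))
```

Under accuracy-only grading, any nonzero chance of being right makes guessing the better strategy, which is the incentive the post describes. Under this assumed rule, a penalty moves the break-even confidence to `wrong_penalty / (1 + wrong_penalty)`.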

Sitting here waiting for @Cmdr_Hadfield's next book, Final Orbit! https://t.co/aVGq9ZsFB9

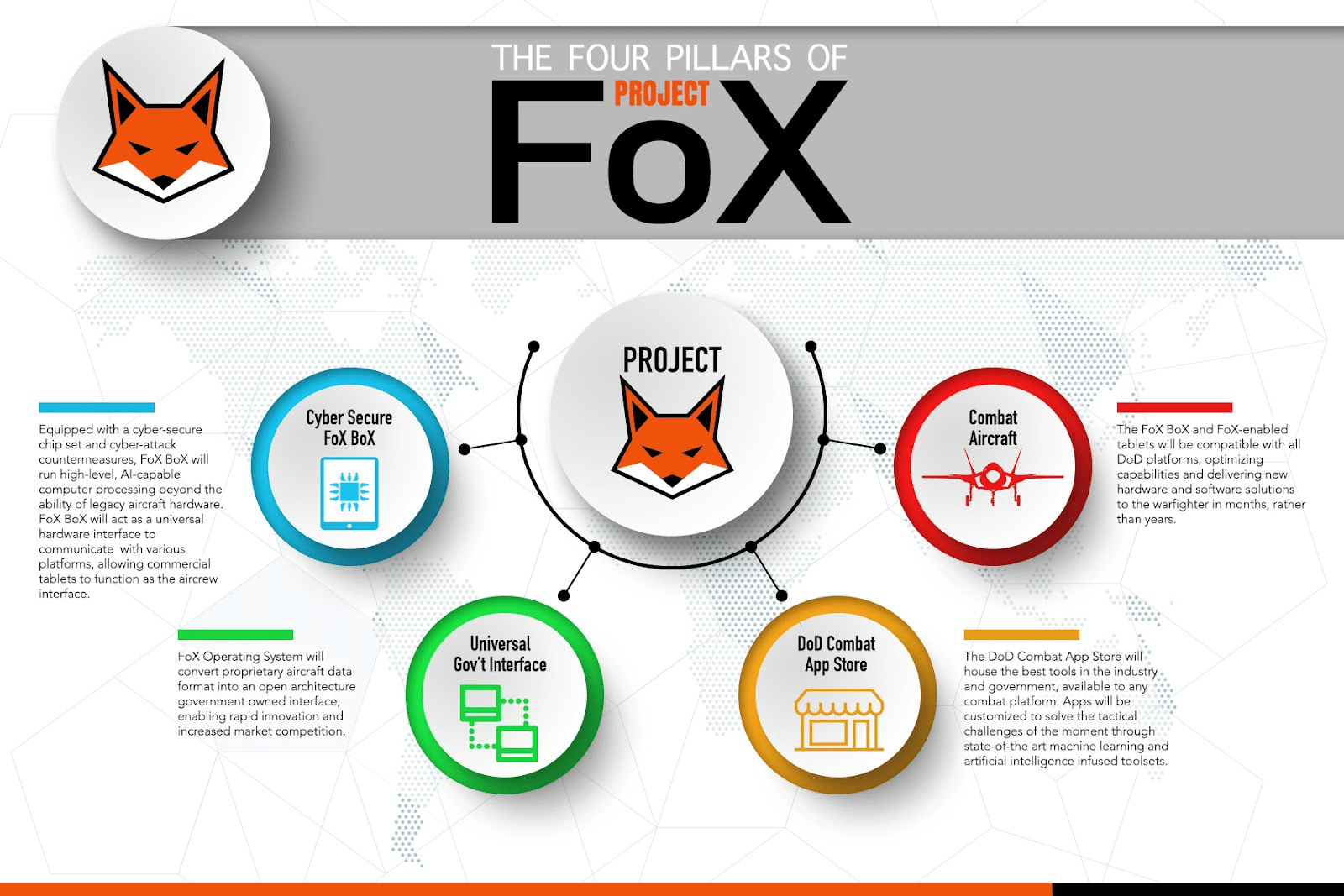

USAF and Matroid announce $25M ceiling contract under Project FoX. We’re bringing advanced #ComputerVision to support the USAF. More here 👉 https://t.co/h7GGtjGVmU #AI #USAF #ProjectFoX #Matroid

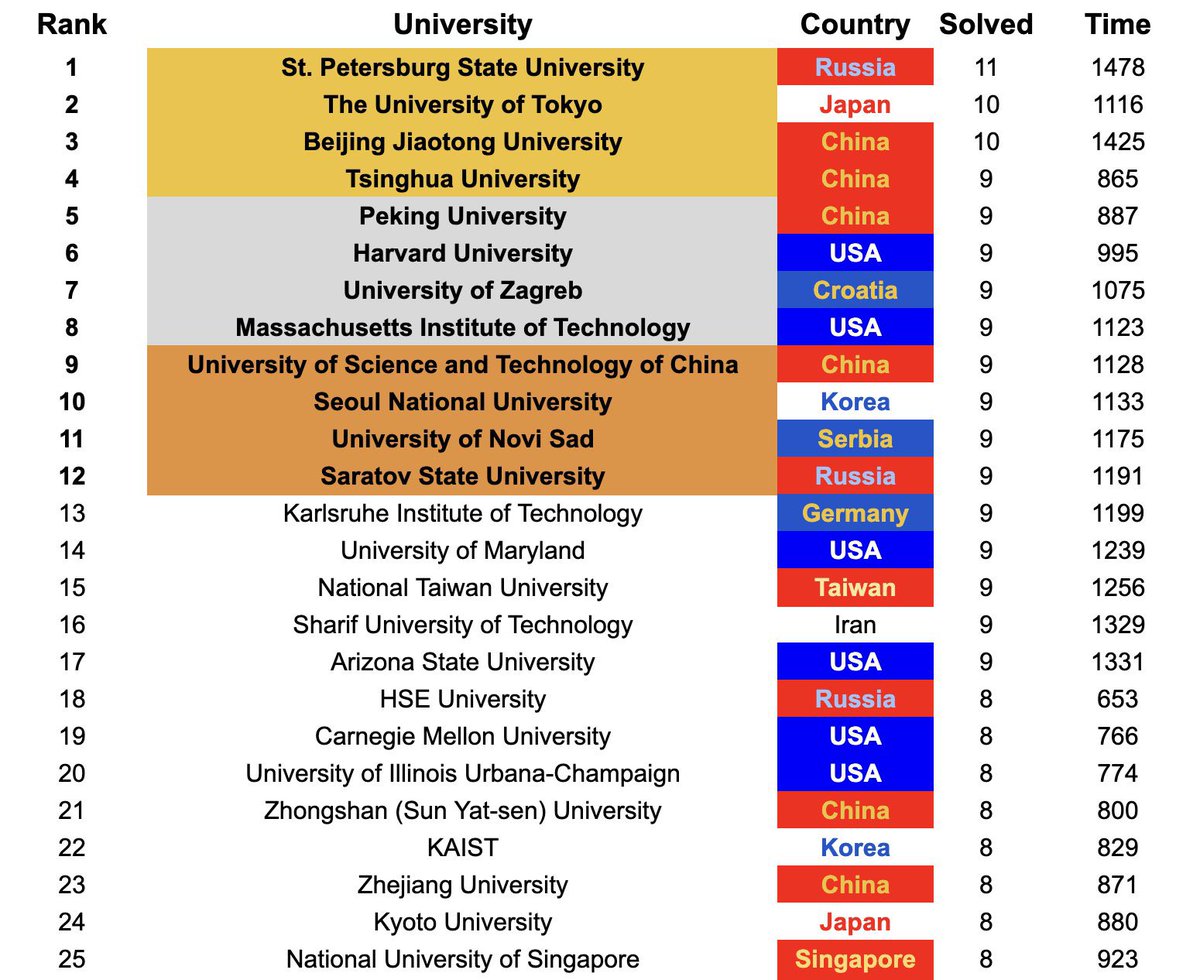

The Olympics in Computer Science (ICPC) just ended. These countries will lead the future. https://t.co/W9EtKaD88x

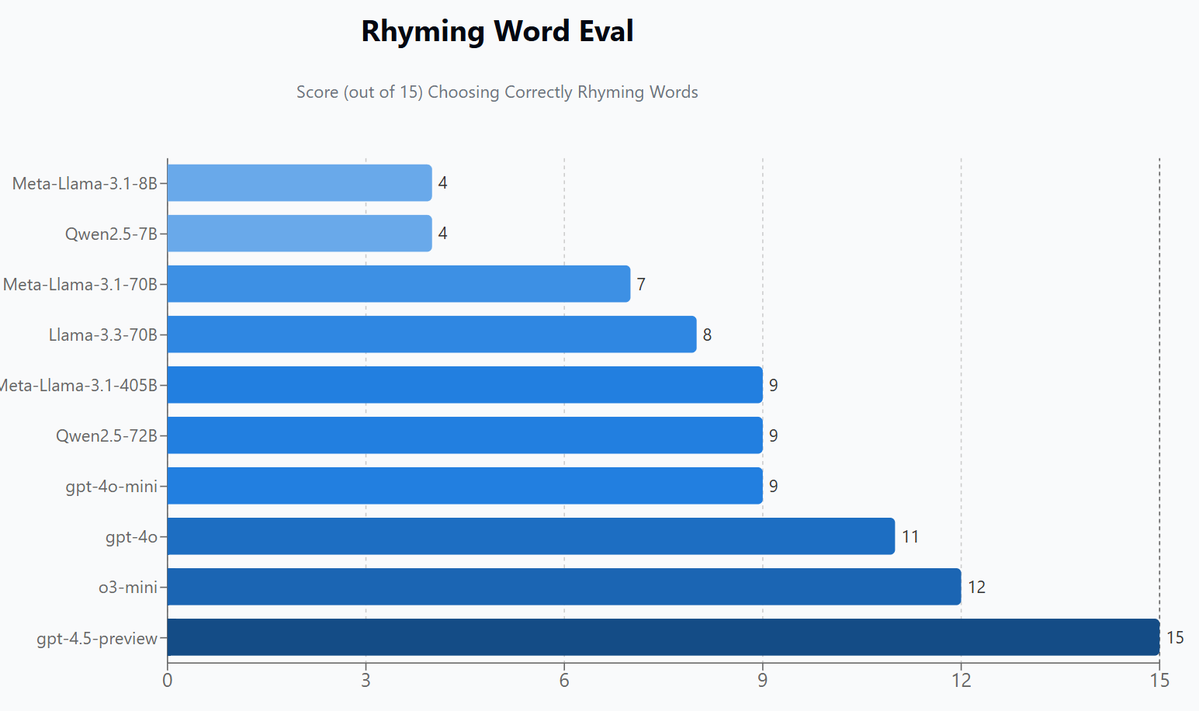

@Miles_Brundage I found 15 'which word rhymes with X: A,B,C,D' style questions a while back that sorted models by big model smell quite nicely. https://t.co/s87vGgkojk

Introducing AI Key, a small device that lets AI control your entire phone. just plug it in and ask it to complete a task. pre-order now. https://t.co/agnDGkaX0d

You can now run 100B parameter models on your local CPU without GPUs. Microsoft finally open-sourced their 1-bit LLM inference framework called bitnet.cpp:

- 6.17x faster inference
- 82.2% less energy on CPUs
- Supports Llama3, Falcon3, and BitNet models

https://t.co/pv8W6DMyr8

5-min daily newsletter for developers to keep up with AI: https://t.co/ZJ2Iz2bdY5 Repo: https://t.co/AXOTGN54zo

Marc Andreessen. https://t.co/QFBP03Dkbx

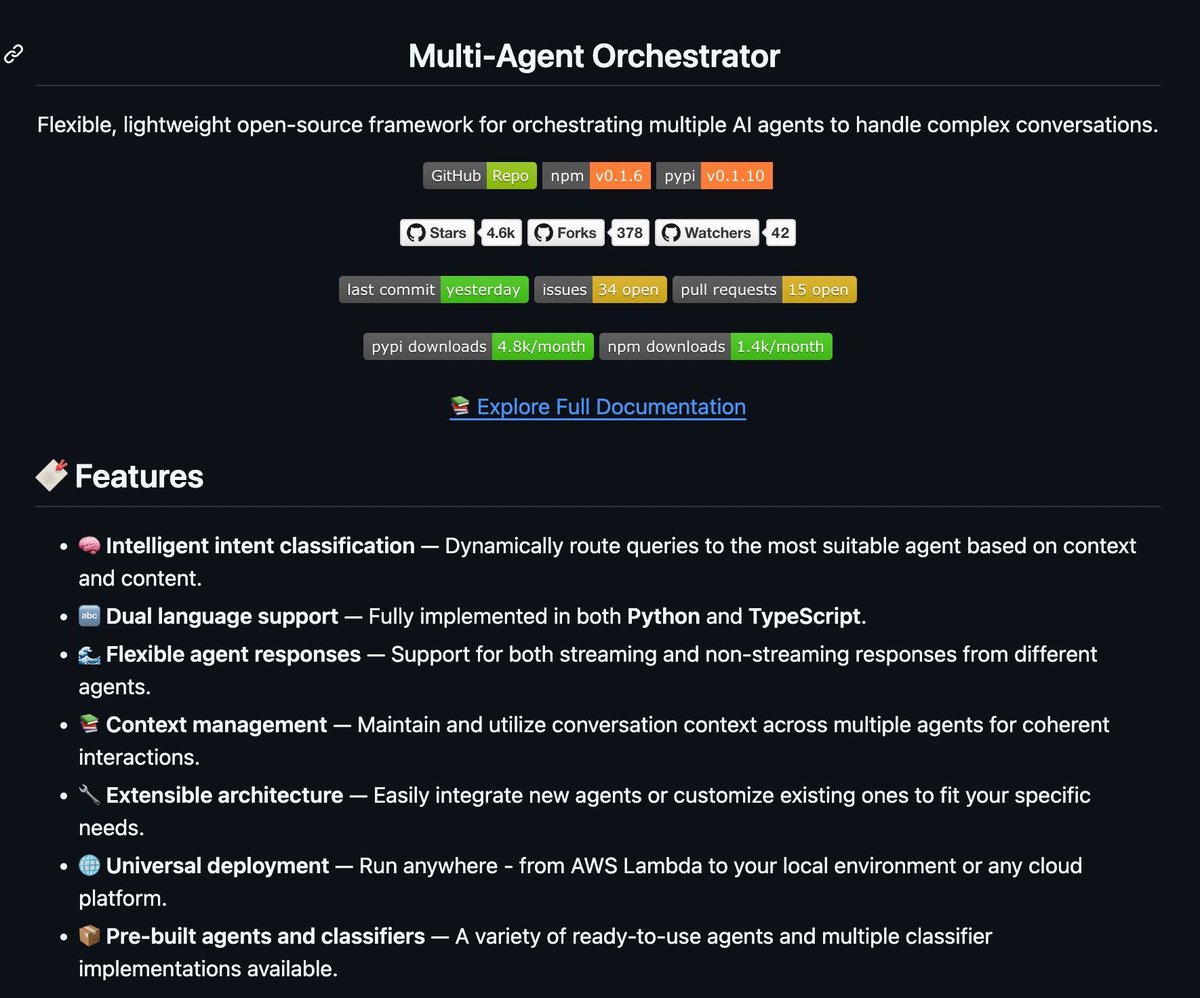

AWS released an open-source framework that lets you orchestrate multiple AI agents and handle complex conversations. Can be deployed locally on your computer. https://t.co/J0Jp6AI7Kg

▸ 5-min daily newsletter for developers to keep up with AI: https://t.co/ZJ2Iz2bdY5 ▸ Source: https://t.co/E8tfMnQRwj

nano banana infinite canvas progress: added a 3x3 grid editor that handles blends, lets you pick which tiles to update https://t.co/hu5DpAyfAF

Adaptive LLM Routing under Budget Constraints It frames LLM routing as a contextual bandit problem. This helps to maximize quality under a fixed budget. It can also handle diverse user budgets with an online cost policy. Lots of cool ideas in this one. https://t.co/0dLOrA8diA
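To make "routing as a contextual bandit under a budget" concrete, here is a toy sketch. It is not the paper's algorithm: it swaps in a plain UCB1 rule over discrete context buckets and a naive even-pacing budget rule. The class name, the model names, and the cost numbers are all hypothetical.

```python
import math
from collections import defaultdict


class BudgetedRouter:
    """Toy budget-aware router: UCB1 per (context, model) with even budget pacing."""

    def __init__(self, costs, total_budget, expected_queries):
        self.costs = dict(costs)              # model name -> cost per query
        self.remaining = float(total_budget)  # budget left to spend
        self.queries_left = int(expected_queries)
        self.counts = defaultdict(int)        # (context, model) -> times chosen
        self.sums = defaultdict(float)        # (context, model) -> total reward
        self.t = 0                            # number of updates so far

    def _ucb(self, context, model):
        n = self.counts[(context, model)]
        if n == 0:
            return float("inf")  # try every affordable model at least once
        mean = self.sums[(context, model)] / n
        return mean + math.sqrt(2.0 * math.log(self.t + 1) / n)

    def route(self, context):
        # Pace spending: allow at most an even share of the remaining budget.
        allowance = self.remaining / max(self.queries_left, 1)
        affordable = [m for m, c in self.costs.items() if c <= allowance]
        if not affordable:
            # Fall back to the cheapest model; a real system might reject instead.
            affordable = [min(self.costs, key=self.costs.get)]
        return max(affordable, key=lambda m: self._ucb(context, m))

    def update(self, context, model, reward):
        self.t += 1
        self.counts[(context, model)] += 1
        self.sums[(context, model)] += reward
        self.remaining -= self.costs[model]
        self.queries_left -= 1


if __name__ == "__main__":
    router = BudgetedRouter({"small": 1.0, "large": 10.0},
                            total_budget=100.0, expected_queries=10)
    model = router.route("math")
    router.update("math", model, reward=0.1)
    print(model, router.route("math"))
```

The `reward` passed to `update` stands in for whatever quality signal is available (for example a rating or an automatic grader). The pacing rule is the simplest way to keep spending under a fixed budget and is where a learned cost policy would plug in.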

Implicit reasoning is one of the most fascinating AI research topics I read about these days. This new survey paper covers it really well and provides a good set of related readings on the topic. https://t.co/xTuhwbWbaf

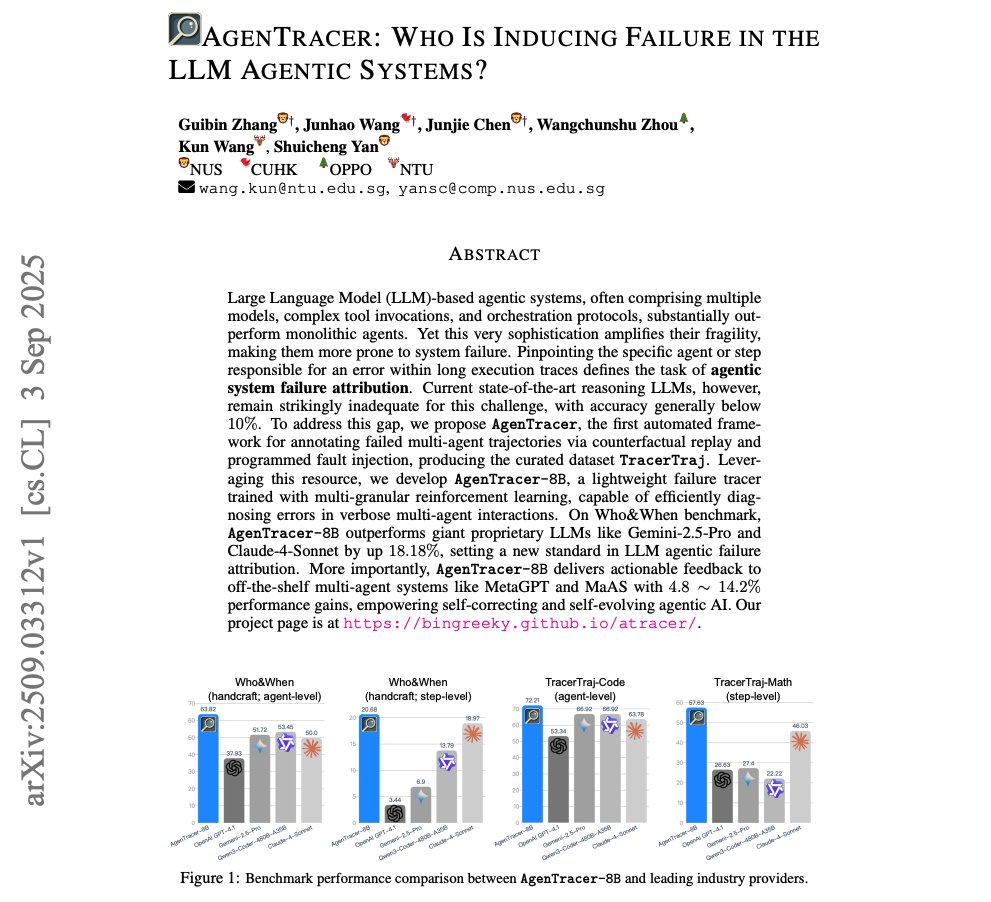

Who is inducing failure in LLM Agentic Systems? This is a cool idea to diagnose errors in multi-agent interactions. AgenTracer-8B outperforms giant proprietary LLMs like Gemini-2.5-Pro and Claude-4-Sonnet by up to 18.18%. https://t.co/ctrfCl9ZRu

A comprehensive survey on trustworthiness in reasoning with LLMs. Great read for AI devs. https://t.co/i4d4p7DHO7

Towards a Unified View of LLM Post-Training This work proposes Hybrid Post-Training, which switches between RL and SFT using simple performance feedback to balance exploration and exploitation. More below: https://t.co/u7SPSA8HRw

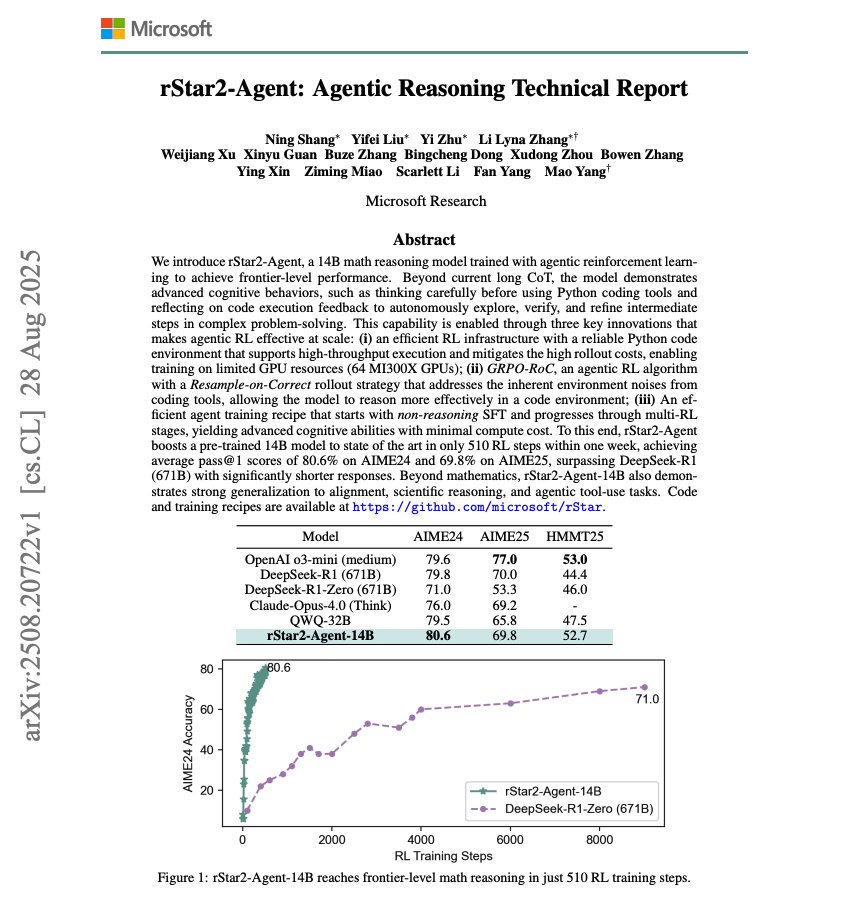
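One way to read "switches between RL and SFT using simple performance feedback" in the Hybrid Post-Training post is as a per-prompt gate on rollout success. The sketch below assumes that reading. The function name, the `gate` threshold, and the 0/1 reward format are illustrative assumptions rather than details taken from the paper.

```python
def choose_objective(rollout_rewards, gate=0.0):
    """Pick the training signal for one prompt from its sampled rollouts.

    rollout_rewards: 0/1 correctness of each sampled answer for the prompt.
    gate: accuracy the model must exceed before RL replaces SFT.
    """
    if not rollout_rewards:
        return "sft"  # no feedback yet: learn from the demonstration
    accuracy = sum(rollout_rewards) / len(rollout_rewards)
    # Some successes: explore with RL. None (or too few): imitate with SFT.
    return "rl" if accuracy > gate else "sft"


if __name__ == "__main__":
    print(choose_objective([0, 0, 0, 0]))  # model never solves it
    print(choose_objective([0, 1, 0, 0]))  # model sometimes solves it
```

The intuition is that RL only has a signal to exploit when the model sometimes succeeds on its own, while prompts it never solves are better served by supervised examples.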

Cool research from Microsoft! They release rStar2-Agent, a 14B math reasoning model trained with agentic RL. It reaches frontier-level math reasoning in just 510 RL training steps. Here are my notes: https://t.co/q6Mfh7EJqg

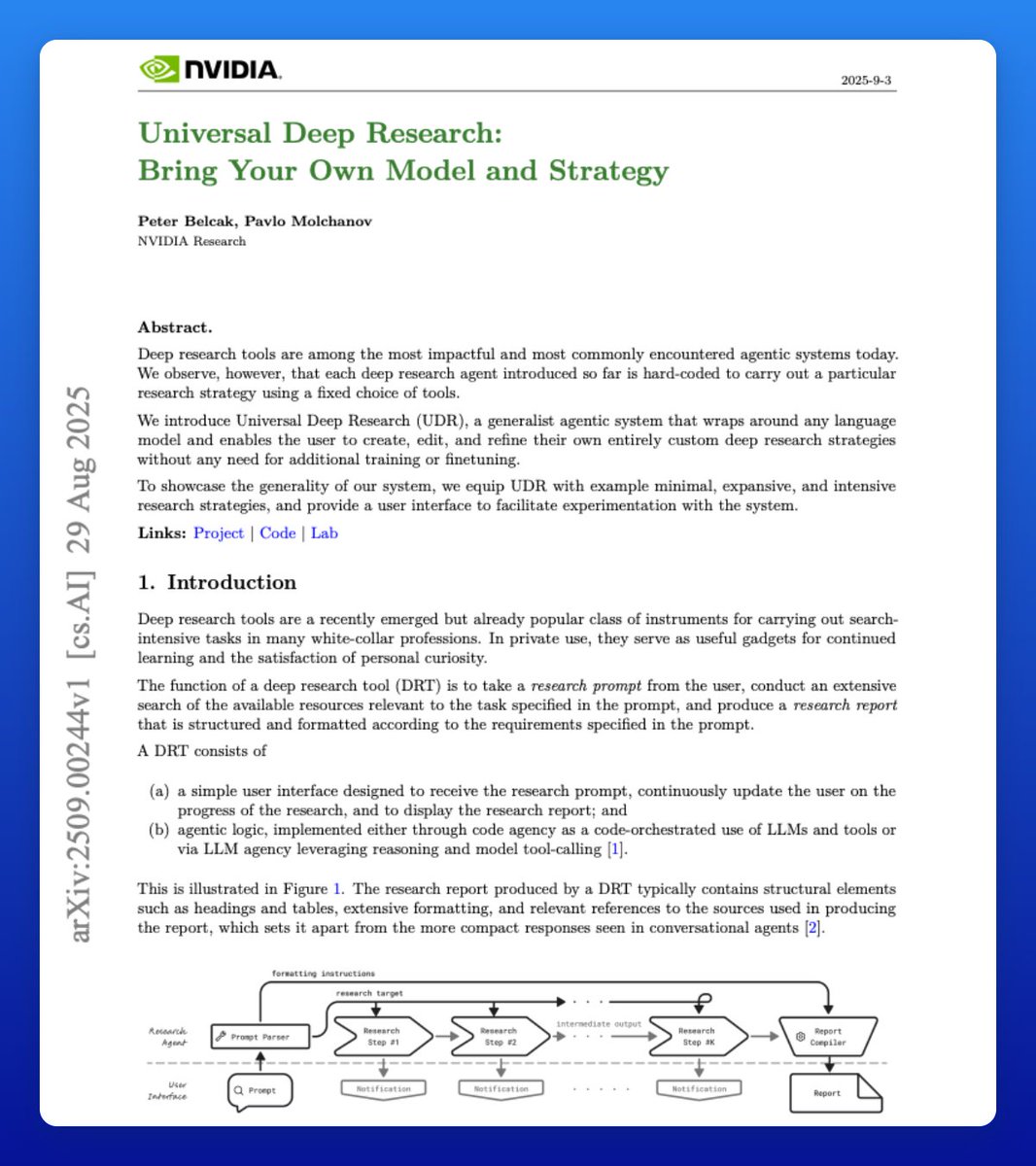

Universal Deep Research NVIDIA recently published another banger tech report! The idea is simple: allow users to build their own custom, model-agnostic deep research agents with little effort. Here is what you need to know: https://t.co/1QRvJ77lo2

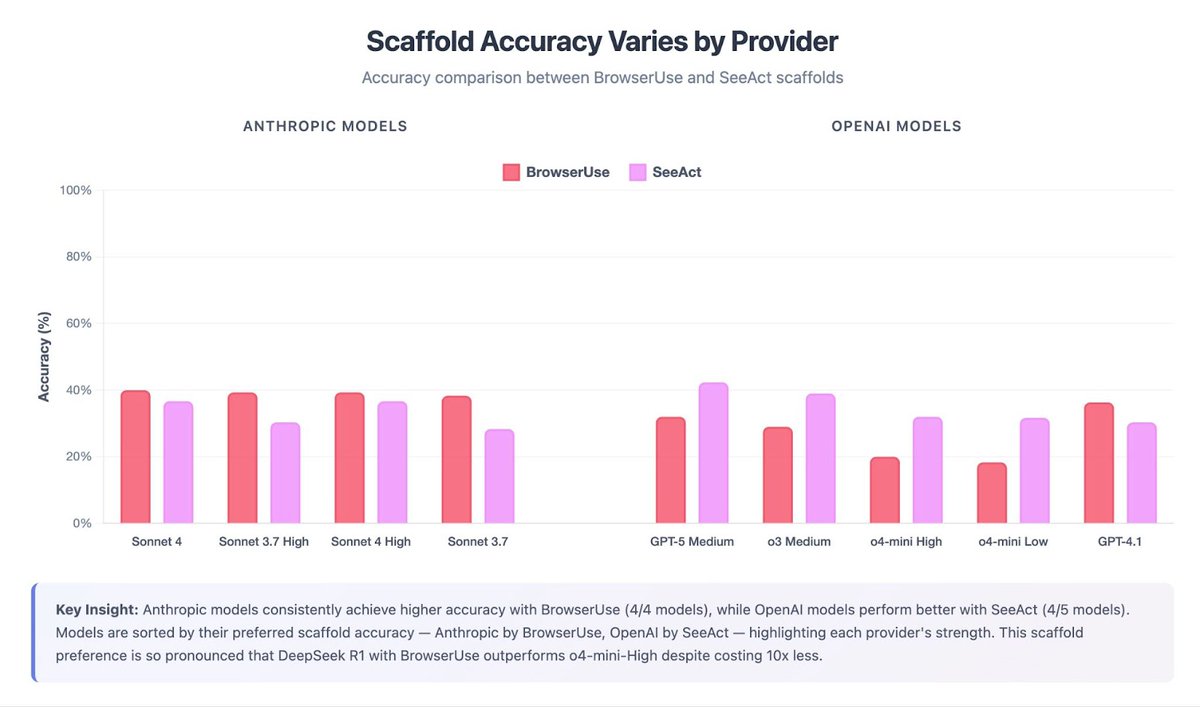

Can AI agents reliably navigate the web? Does the choice of agent scaffold affect web browsing ability? To answer these questions, we added Online Mind2Web, a web browsing benchmark, to the Holistic Agent Leaderboard (HAL). We evaluated 9 models (including GPT-5 and Sonnet 4) with two agent scaffolds (Browser-Use and SeeAct) on Online Mind2Web 🧵

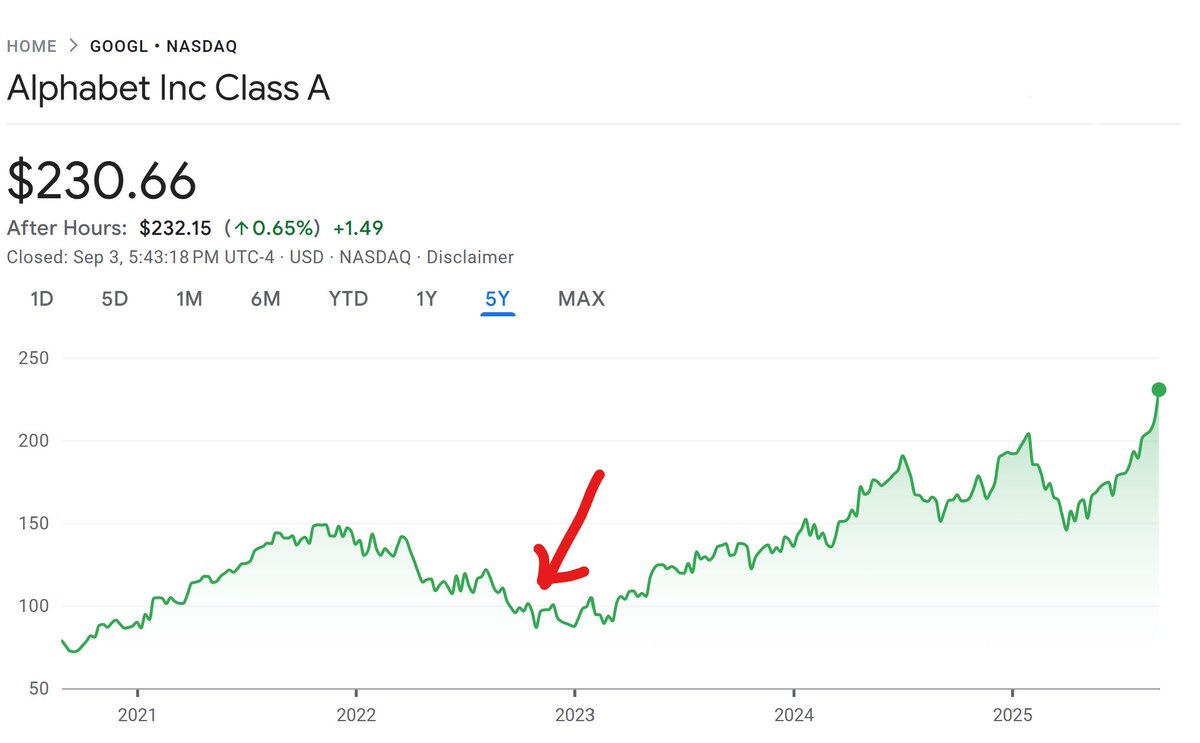

One of the more popular finance takes since ChatGPT was released in Nov '22 was that Google was cooked. https://t.co/urs2pBUtcW

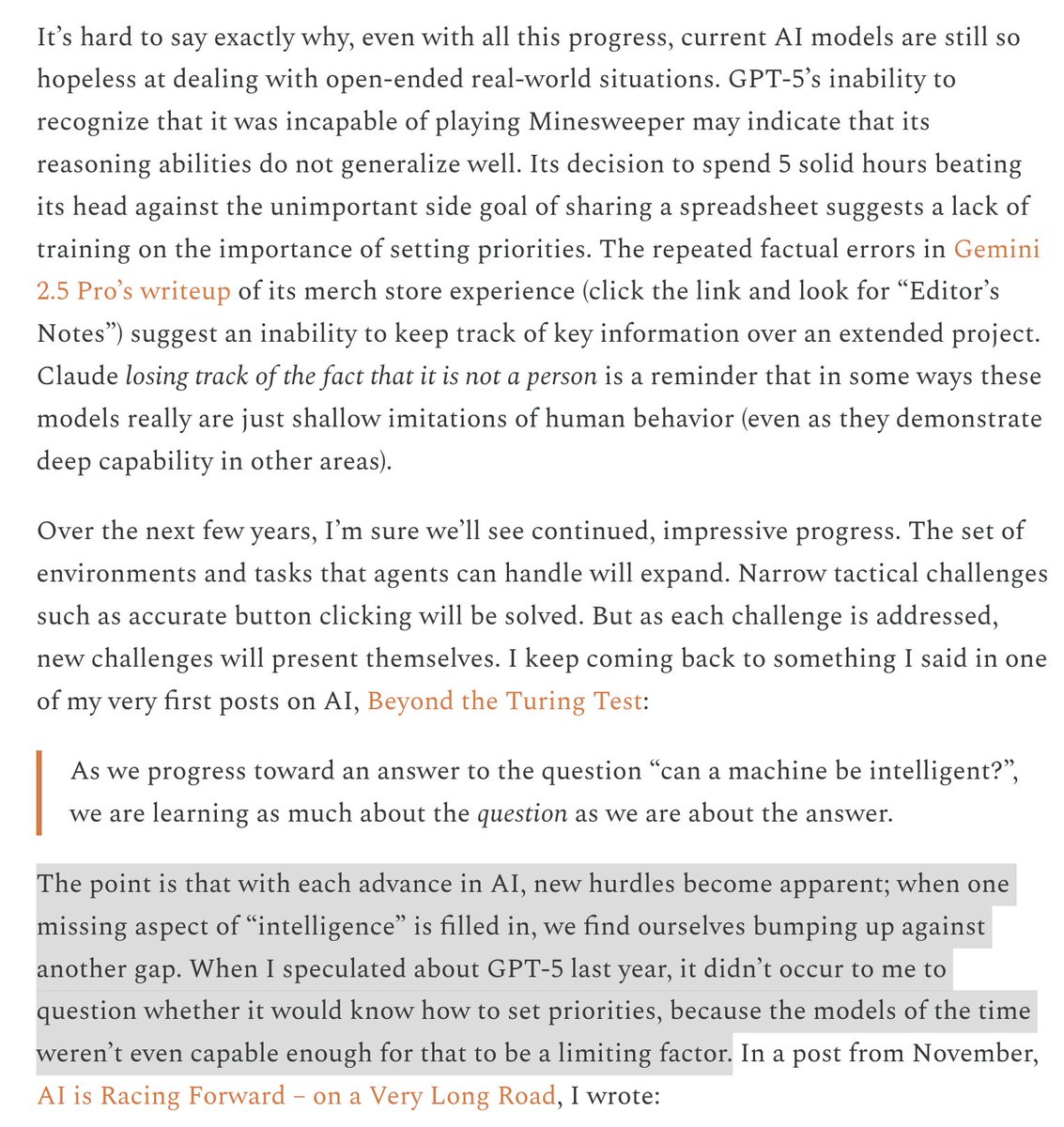

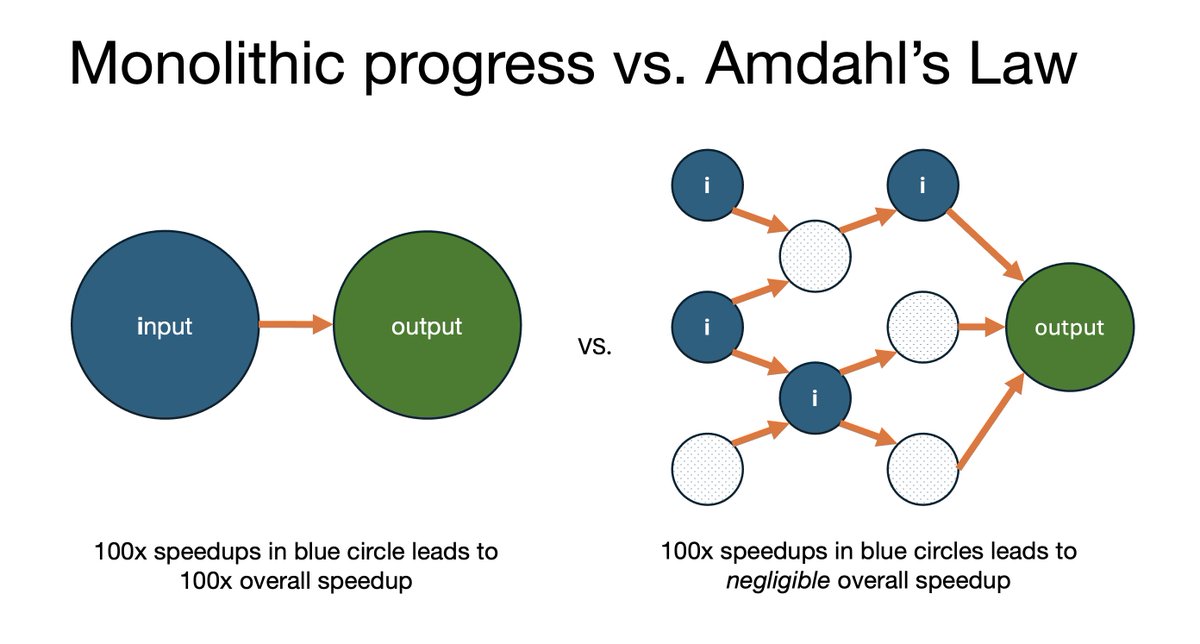

This by @snewmanpv is spot on, and exactly what we called the "false summit" phenomenon in AI as Normal Technology — as we climb the mountain of AGI, what we thought was the peak is repeatedly revealed to be a false summit. This is what leads to the accusation that skeptics keep "moving the goalposts". Of course we keep moving the goalposts — the actual goal turns out to be too far for anyone to see or understand, and the goalposts are mere proxies, so as our understanding improves the target moves farther away. https://t.co/tDqewRNcjT

AI as Normal Technology is often contrasted with AI 2027. Many readers have asked if AI evaluations could help settle the debate. Unfortunately, this is not straightforward. That's because the debate is not about differences in AI capability, which evaluations typically measure. It is about two completely different causal models of the world. But most AI evaluations don't even *attempt* to measure differences in causal models. 🧵

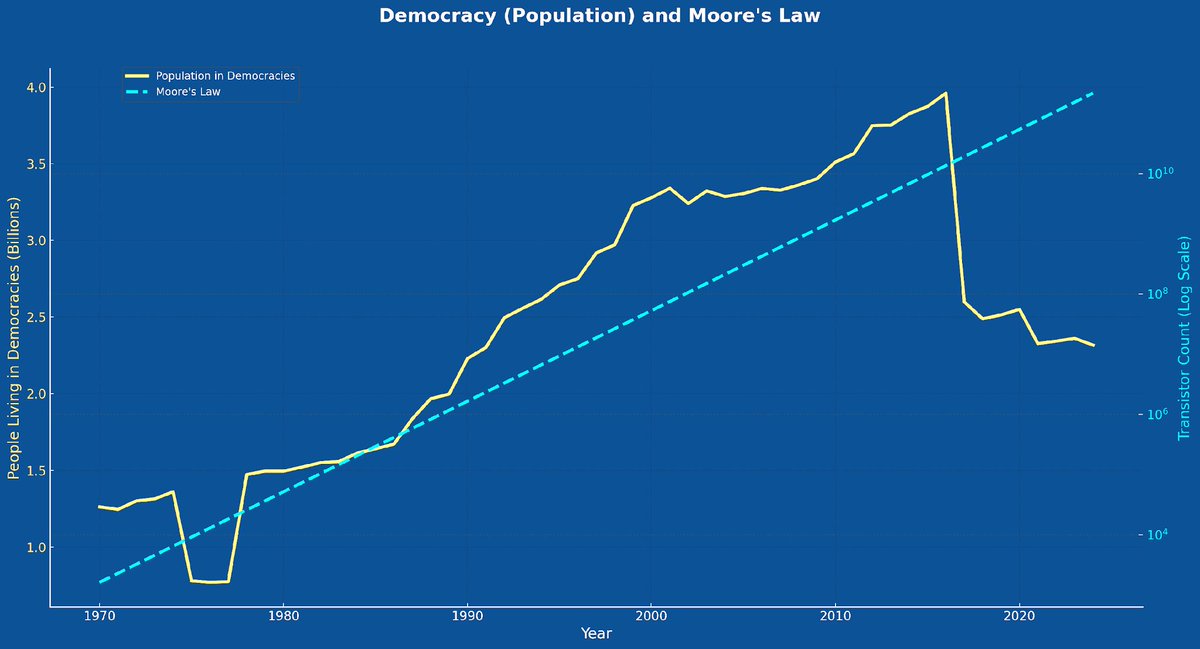

How will AI agents impact democratic values? Democracies are, for independent reasons, already under acute pressure. Since WWII, Moore's Law and democratisation went up and to the right in lockstep. Not any more. https://t.co/utEH1oYPF6

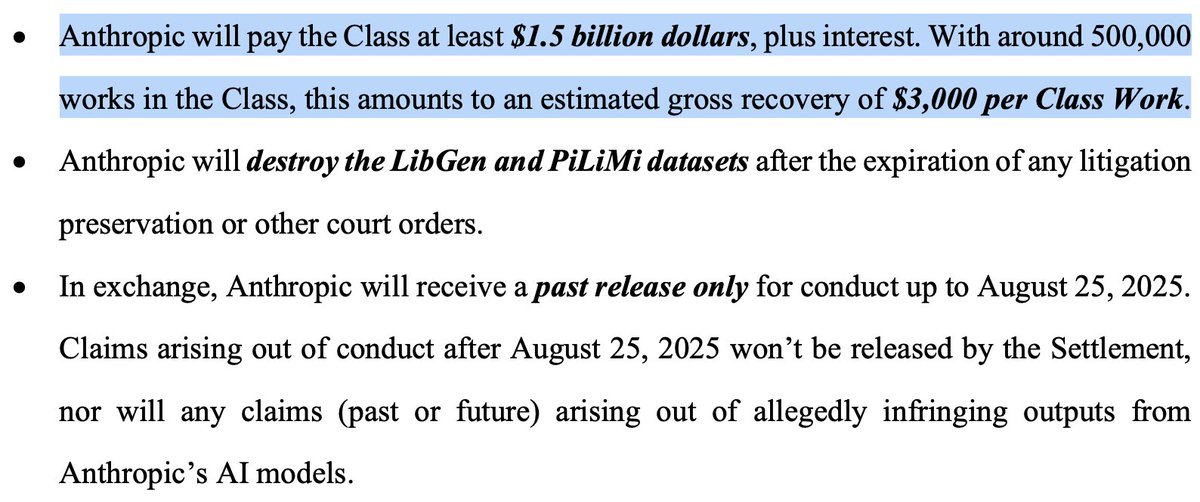

The terms of Anthropic's settlement w/book authors just came out. 💰$1.5B to authors in libgen (Books3 corpus)! Interestingly, this is ~$3k per book, close to the terms that HarperCollins allegedly gave to authors for their books ($2.5k). Consensus price forming? https://t.co/rBOIkh6RwT

Turn Claude Code into a Financial Analyst 🤖💹 In this video we point Claude Code at a bucket of 10-K filing PDFs, and have it perform complex analysis across the entire set of docs! Claude Code doesn’t have file understanding out of the box (it kind of does, but it’s terrible / doesn’t work over long PDFs). We equipped Claude Code with targeted tools for file parsing and efficient search, courtesy of our recently released `semtools`. It’s way faster/more versatile than naive RAG 💡. You get super-fast in-mem keyword/semantic search, and Claude Code can combine this with standard file tools like `grep` and `Read` to load in dynamic context instead of fixed chunks. You can do this in seconds. Just install `semtools`, add it to your https://t.co/UHeZpqIKkF, and point Claude to any bucket of files you want to analyze. SemTools (s/o @LoganMarkewich): https://t.co/xg1iqbghIr File parsing courtesy of LlamaCloud: https://t.co/XYZmx5TFz8

Introducing LlamaIndex Classify: Rules-Based Document Classification Made Simple

Learn how to automatically classify your documents with LlamaIndex's newest beta feature! In this quick demo, Laurie walks through the Classify service - a powerful tool for preprocessing documents in your AI workflows.

What you'll learn:

- ➡️ How to set up classification rules for different document types
- ➡️ Using built-in templates (like resumes) and creating custom rules
- ➡️ Classifying documents through both the UI and programmatically with Python
- ➡️ Getting confidence scores and reasoning for each classification
- ➡️ Optimizing performance by parsing only the first few pages

Demo includes:

- ✅ Live classification of resumes vs 10-K financial filings
- ✅ Step-by-step API setup with LlamaCloud
- ✅ Python code examples and best practices
- ✅ Real confidence scores and classification reasoning

Check out the demo notebook: https://t.co/9vpT0GIXsS Or the full documentation: https://t.co/ZCFHZp0GeR Or dive into LlamaCloud right away: https://t.co/yQGTiRSNvj

Announcing the Fullstack Agents Hackathon on September 27th! We are partnering with CopilotKit, Composio, Microsoft for Startups, B Capital & AI Tinkerers to put on an amazing hackathon. Participants will start with a boilerplate fullstack agent application connecting a LlamaIndex Agent to a frontend with AG-UI. The Agent will have access to thousands of tools via Composio. $20k+ in prizes on the line for the teams that can transform the template into a powerful fullstack agent for their use-case. Venue is the Microsoft SV Center, 🗓️September 27th -- register today! https://t.co/zre2OXf1bw

How @Jeppesen (a @Boeing company) went from 512h → 64h to build AI agents:

- ✅ Built a Unified Chatbot Framework on LlamaIndex
- ✅ 1,792h saved already
- ✅ Nearly 4,900h projected annually

From chatbot to full agent orchestration system. 🚀 Case study: https://t.co/jEIKT2kFAY #GenAI #AIagents #LlamaIndex