Your curated collection of saved posts and media

10m/s!! Unitree Breaks the World Record Again😊 With the physique of an ordinary person, running at a world champion’s speed! Leg length: 0.4+0.4=0.8m, body weight: approx. 62kg! H1: “Give me one more chance, give the world one more honor!” https://t.co/Fk4Zo9zKit

Introducing LPM 1.0: a video-based character performance model that speaks, sings, listens, reacts, and emotes in real time.

- Generating full-duplex conversation, identity-consistent infinite-length generation, and nuanced human-like performance.
- Building across a co-designed data pipeline, Base model, Online model, and streaming inference optimization.
- Key advantages over other video generation models: performance quality, emotional conversation, precise lip-sync, identity preservation, and lifelike naturalness.

Turning an image into a performance video, LPM 1.0 serves as a visual engine for conversational agents, live streaming characters, and game NPCs.

Page: https://t.co/Ve2c2YNuqj
Arxiv: https://t.co/cM54T3KSPs

While I was at Costco, my agent read everyone in the AI community and rounded up the week: https://t.co/kiuZ7QXLzb Curation is dead. :-)

@GoogleAIStudio A new way to read the AI community here on X: https://t.co/kiuZ7QXLzb Builds a script for Notebook LM automatically so you can make a podcast out of the news.

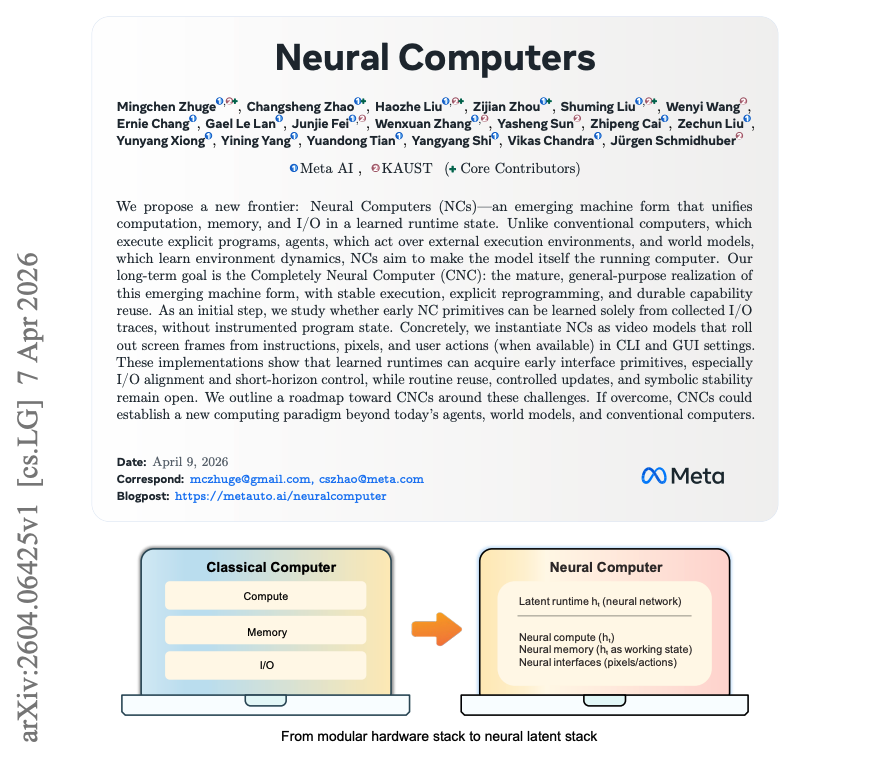

NEW paper from Meta. (bookmark this one)

What if the model wasn't just using the computer, but became the computer? New research from Meta AI and KAUST makes a serious case for Neural Computers (NCs). The paper proposes NCs as learned runtimes where computation, memory, and I/O live inside a single latent state. Their first prototypes use video models to roll out terminal and GUI interfaces from prompts, pixels, and user actions.

Why does it matter? Today's agents still depend on external computers to store state, execute actions, and enforce system contracts. Neural Computers point to a different machine form: one where interface dynamics, working memory, and execution are learned together.

The early results are promising but grounded. CLI rendering improves, GUI cursor control reaches 98.7% with explicit visual supervision, and reprompting boosts arithmetic-probe accuracy from 4% to 83%. But symbolic reliability, stable reuse, and runtime governance remain open.

This is less "agents got better" and more "what comes after agents as a computing substrate?"

Paper: https://t.co/CKdclokmer
Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

One great outcome of PaperWiki is personalized surveys. Survey papers continue to be one of the best ways to track a field. My agents are now generating personalized surveys on topics using my LLM-generated paper knowledge base. Self-improving and always up-to-date as new papers are published. Love how these topic wiki pages are evolving. One of my favorite views so far, but there is a lot more to do here. Plan to share these with our students at the @dair_ai Academy so their agents can work on the frontier too. Stay tuned!

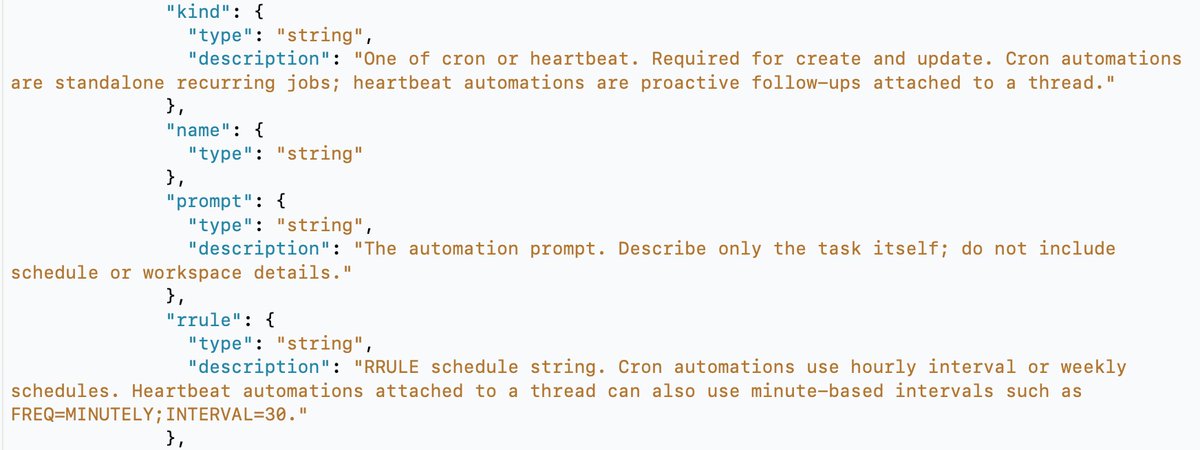

🚨 OpenAI is quietly turning the Mac Codex app into an all-in-one platform: Chat + Codex + OpenClaw, all under one roof.

- Foundation for rendering and reading images + video
- Heartbeat system (like OpenClaw)
- Model and thinking mode selection per task (like an OpenClaw agent manager)
- UI changes to make Codex less "for coders" and more universal

They're using the Codex app as the base and building everything on top of it. (h/t @MsRFlorida for the breakdown)

big plans https://t.co/WOzTyoC4Vo

Introducing Latent Briefing, a way for agents to quickly share their relevant memory directly. Result: 31% fewer tokens used, same accuracy. Multi-agent systems are powerful, but can be wildly inefficient. They pass context as tokens, so costs explode and signal gets lost. We built an algorithm that allows agents to communicate KV cache to KV cache.

Still at the office. https://t.co/zbhqhUeSr6

Look ma, new Codex updates! 0.119.0 and 0.120.0 are here. And with them, a HUGE number of quality of life updates and bug fixes!

- Hooks now render in a dedicated live area above the composer. They only persist when they have output, so your terminal stays clean. If you're running PreToolUse or PostToolUse hooks, this is a huge readability win.
- Hooks are now available again on Windows.
- CTRL+O copies the last agent output. Small but clutch when you're pulling a code block into another file or chat.
- New statusline option: context usage as a graphical bar instead of a percentage. Easier to glance at mid-session when you're trying to gauge how much runway you have left.
- Zellij support is here with no scrollback bugs. If you've been stuck on tmux just because Codex was broken in Zellij, you're free now (shout out @fcoury).
- Memory extensions just landed. The consolidation agent can now discover plugin folders under memories_extensions/ and read their instructions.md to learn how to interpret new memory sources. Drop a folder in, give it guidance, and the agent picks it up automatically during summarization. No core code changes needed. This is the first real extension point for Codex's memory system, and it opens the door for third-party memory plugins.
- Did you know you can /rename a thread? What's really cool is that after you rename it, you can resume it with the same name, no more UUIDs: codex resume mynewapp, or directly from the TUI: /resume mynewapp
- Multi agents v2 got an update to tool descriptions: more reliable multi agent environments and inter agent communication.
- You can now enable TUI notifications whether Codex is in focus or not. Modify this in your config: under [tui], set notification_condition = "always"
- MAJOR overhaul to Codex MCP functionality:
  1. Codex Tool Search now works with custom MCP servers, so tools can be searched and deferred instead of all being exposed up front.
  2. Custom MCP servers can now trigger elicitations, meaning they can stop and ask for user approval or input mid-flow.
  3. MCP tool results now preserve richer metadata, which improves app/UI handoff behavior.
  4. Codex can now read MCP resources directly, letting apps return resource URIs that the client can actually open.
  5. File params for Codex Apps are smoother: local file paths can be uploaded and remapped automatically.
  6. Plugin cache refresh and fallback sync behavior are more reliable, especially for custom and curated plugins.
- Composer and chat behavior are smoother overall, though resize bugs remain.
- Realtime v2 got several significant improvements as well.
- You're still reading? What a legend. 🫶

npm i -g @openai/codex to update
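
The memory-extension discovery described in the Codex update above can be pictured with a small sketch. This is not Codex's actual code: the function name `discover_memory_extensions` and the idea of returning a name-to-instructions mapping are assumptions made for illustration. Only the folder name memories_extensions/ and the file name instructions.md come from the post.

```python
from pathlib import Path
from typing import Dict


def discover_memory_extensions(root: Path) -> Dict[str, str]:
    """Map each plugin folder under `root` to the text of its instructions.md.

    Hypothetical helper: folders without an instructions.md are skipped,
    and a missing root directory yields an empty mapping.
    """
    found: Dict[str, str] = {}
    if not root.is_dir():
        return found
    for folder in sorted(root.iterdir()):
        guide = folder / "instructions.md"
        if folder.is_dir() and guide.is_file():
            found[folder.name] = guide.read_text(encoding="utf-8")
    return found
```

Under these assumptions, dropping a new folder with an instructions.md into the root is enough for it to show up on the next scan, which mirrors the "no core code changes needed" point in the post.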

OpenAI is working on a new experimental feature for Codex called Scratchpad. Users will be able to start multiple Codex chats from a TODO list view, which will be executed in parallel. It will become very instrumental in the upcoming Codex Superapp, where you will be able to trigger a broader range of tasks to achieve your goals. * Not available yet 👀

Which hat should I wear today https://t.co/jBf5eyBGTx

https://t.co/guwZR1tGte

Now this is an ad. https://t.co/TN9oz2VHMv

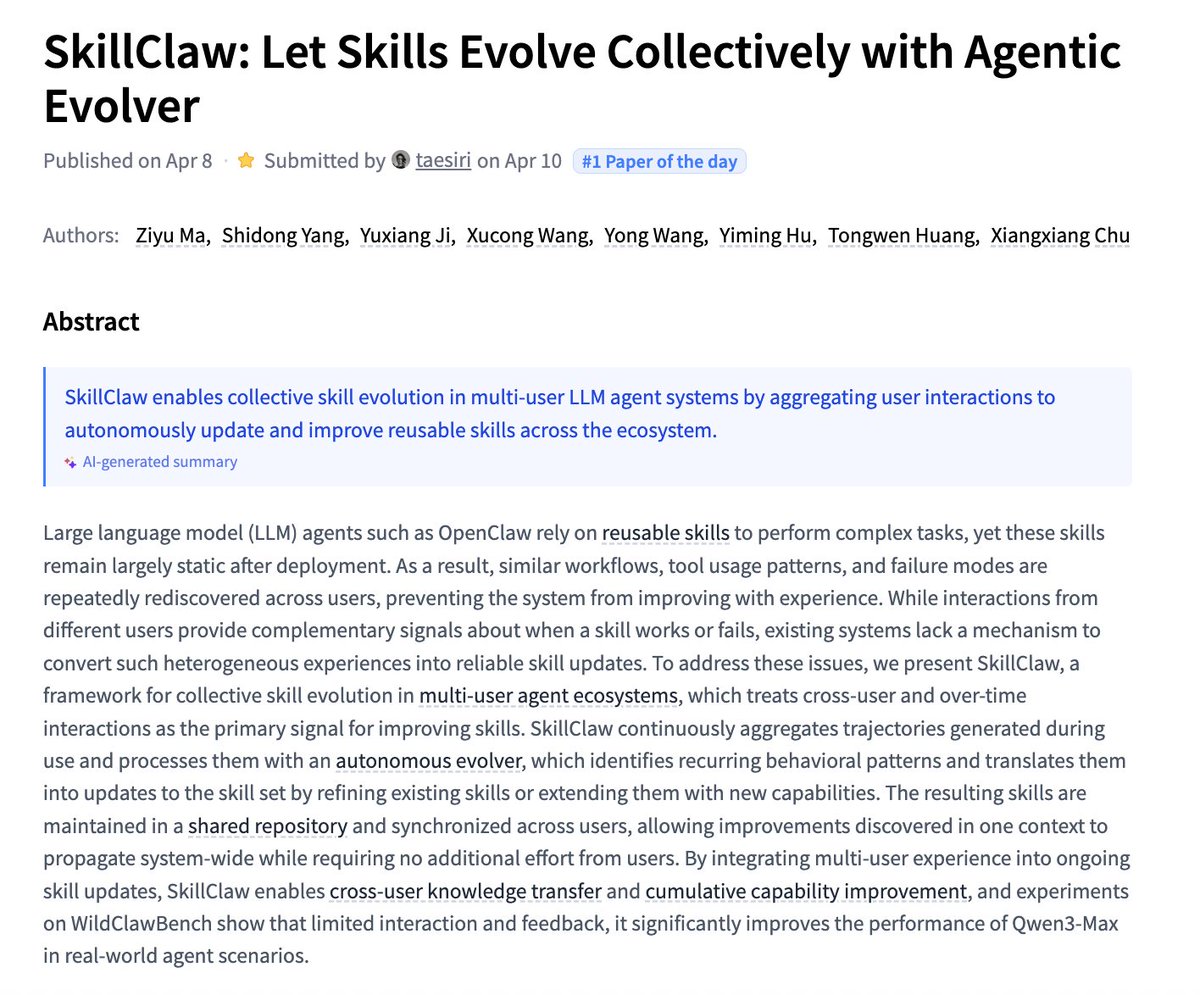

SkillClaw: Let Skills Evolve Collectively with Agentic Evolver. Paper: https://t.co/fyZT9YqUP6 https://t.co/zN4KuczCbA

Rethinking Generalization in Reasoning SFT: A Conditional Analysis on Optimization, Data, and Model Capability. Paper: https://t.co/AFqLfOfK3R https://t.co/j7gHnlDofv

HY-Embodied-0.5: Embodied Foundation Models for Real-World Agents. Paper: https://t.co/ocajaLYTLl https://t.co/MOqXLCTcLE

MegaStyle: Constructing Diverse and Scalable Style Dataset via Consistent Text-to-Image Style Mapping. Paper: https://t.co/szeFIYDvcN https://t.co/kiMko1Q3Jk

void-model has been awesomely trending on @huggingface this week! Please use it and let us know how we can make it better 🤗 https://t.co/4BwZKr975B https://t.co/r8XtGBglds

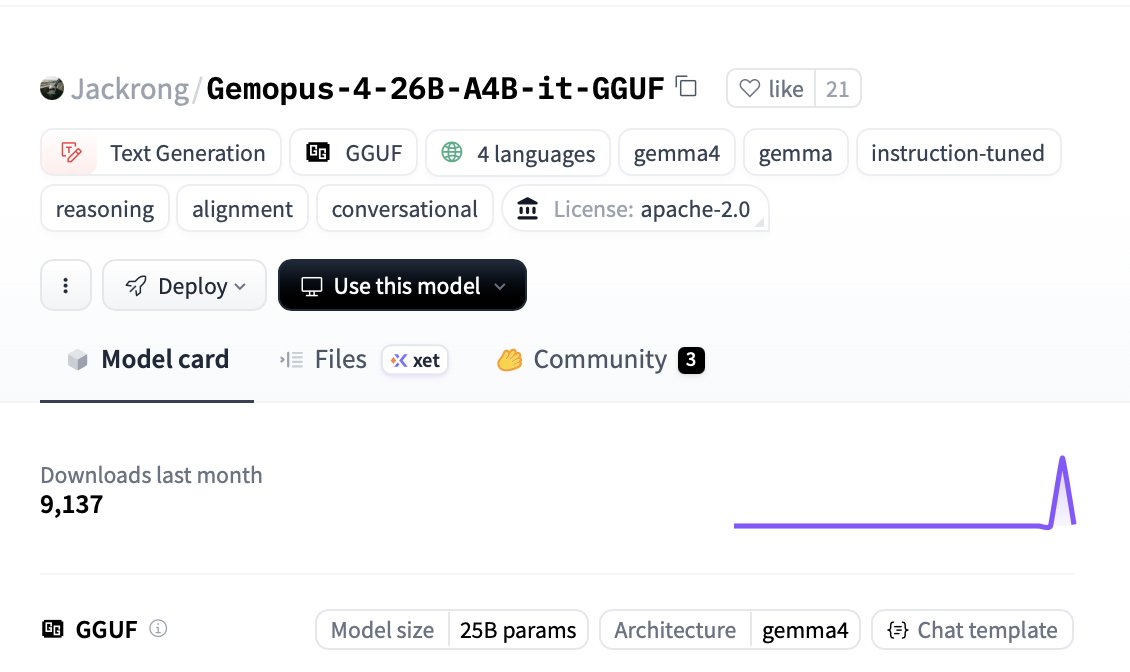

🤯 NEW JACKRONG GEMOPUS-4 26-A4B DROPPED

The Model Magic 🪄

- 🧠 Google’s Gemma 4 26B MoE base, 4B active params
- 🤖 Full Claude Opus-style reasoning distillation

Beast Mode Performance 🚨

- 🔥 75 tokens/sec at Q6_K
- ✅ Just 22.7 GB VRAM
- 🚨 Full 131k context window

Pair this with Hermes Agent and run it locally. Makes it VERY capable! Tweak this for your use case.

Try it now 👇🏻 https://t.co/GZI6yPXnHm
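
As a rough sanity check on the 22.7 GB figure in the post above, the weight footprint of a quantized model can be estimated from parameter count and bits per weight. This is a back-of-the-envelope sketch, not a measurement: it assumes a nominal 26e9 parameters, assumes every tensor is stored as Q6_K (real GGUF files usually mix tensor types), and uses the llama.cpp Q6_K block layout of 210 bytes per 256 weights. The function name is hypothetical.

```python
def quantized_weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


# Q6_K packs 256 weights into a 210-byte block, i.e. 6.5625 bits per weight.
Q6_K_BITS_PER_WEIGHT = 210 * 8 / 256

# Assumption: nominal 26e9 parameters, all stored as Q6_K.
weights_gb = quantized_weight_gb(26e9, Q6_K_BITS_PER_WEIGHT)
print(f"{weights_gb:.1f} GB")  # prints "21.3 GB"
```

Under these assumptions the weights alone come to about 21.3 GB, so the quoted 22.7 GB would leave a bit over 1 GB for KV cache and runtime buffers. The post does not say how the figure was measured, so treat the breakdown as illustrative only.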

1/3 Money, money, money, moneyyyyy 💸💸💸💸 Today we’re making it possible for you to earn actual money from your Pika AI Self agent. Because we think your agent should work FOR you in every sense of the phrase. Every time someone talks with them, or uses one of their skills, you earn tokens redeemable for cash. Say goodbye to those deadbeat agents.

👀some thoughts: https://t.co/bisWAsEQQn

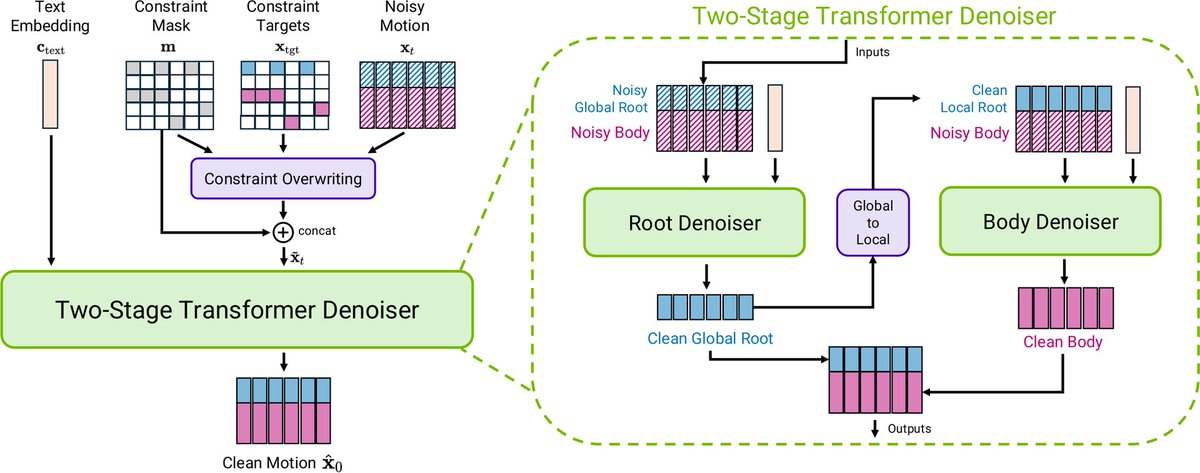

NVIDIA just released Kimodo on Hugging Face A kinematic motion diffusion model trained on 700 hours of optical motion capture to generate 3D human and robot motions controlled by text and kinematic constraints. https://t.co/s7foOXXqqB

Speculative decoding for Gemma 4 31B (EAGLE-3) A 2B draft model predicts tokens ahead; the 31B verifier validates them. Same output, faster inference. Early release. vLLM main branch support is in progress (PR #39450). Reasoning support coming soon. https://t.co/PoK8zbA7li
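
The draft-then-verify loop described above can be sketched with toy models. This is a generic greedy speculative decoding illustration, not EAGLE-3 itself (which drafts from the verifier's internal features) and not vLLM's API. The names `speculative_decode`, `toy_draft`, and `toy_verifier` are hypothetical, and a real system verifies all drafted positions in one batched forward pass rather than one call per token.

```python
from typing import Callable, List

NextToken = Callable[[List[int]], int]  # maps a token sequence to the next token


def greedy_decode(model: NextToken, prompt: List[int], n_new: int) -> List[int]:
    """Plain greedy decoding, used as the reference output."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(model(seq))
    return seq


def speculative_decode(draft: NextToken, verifier: NextToken,
                       prompt: List[int], n_new: int, k: int = 4) -> List[int]:
    """Greedy speculative decoding: the draft proposes, the verifier validates."""
    seq = list(prompt)
    target_len = len(prompt) + n_new
    while len(seq) < target_len:
        # 1. The cheap draft model proposes up to k tokens ahead.
        proposal: List[int] = []
        for _ in range(min(k, target_len - len(seq))):
            proposal.append(draft(seq + proposal))
        # 2. The verifier checks each proposed token in order.
        for i, tok in enumerate(proposal):
            expected = verifier(seq + proposal[:i])
            if expected != tok:
                # Keep the accepted prefix, then the verifier's own token.
                seq.extend(proposal[:i])
                seq.append(expected)
                break
        else:
            seq.extend(proposal)  # every drafted token was accepted
    return seq


def toy_verifier(seq: List[int]) -> int:
    return (seq[-1] * 3 + 1) % 7


def toy_draft(seq: List[int]) -> int:
    # Agrees with the verifier except when the length is a multiple of 3.
    return toy_verifier(seq) if len(seq) % 3 else 0


out = speculative_decode(toy_draft, toy_verifier, [1], 10)
assert out == greedy_decode(toy_verifier, [1], 10)
```

The final assertion is the "same output" property from the post: because every kept token is one the verifier would have chosen, the result matches plain greedy decoding with the verifier, and the speedup comes only from how many drafted tokens are accepted per verification step.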

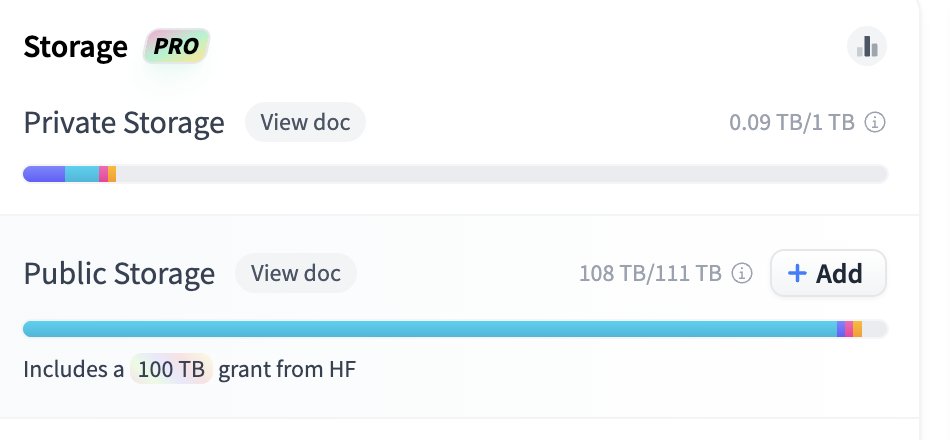

RIP my huggingface storage 🪦 https://t.co/yBCMZ2f2sg

favorite AGI/sci-fi vibe these days is coding a robot's code together with the robot. here vibe-plugging @ElevenLabs into @reachymini for a talk later today https://t.co/0m65ozY8JA

NVIDIA's Nemotron 3 Super can be found here: https://t.co/VXMrQHxXLS Fully open AI btw
