Day 9/10 of launching a new Perplexity Finance feature every day through the end of November. We've improved the watchlist page to give you a deeper look at what's happening with stocks, crypto, and ETFs you're following:

1) Comparison graph of the top gainers and top losers on your watchlist
2) Newswire feeds filtered to tickers on your watchlist

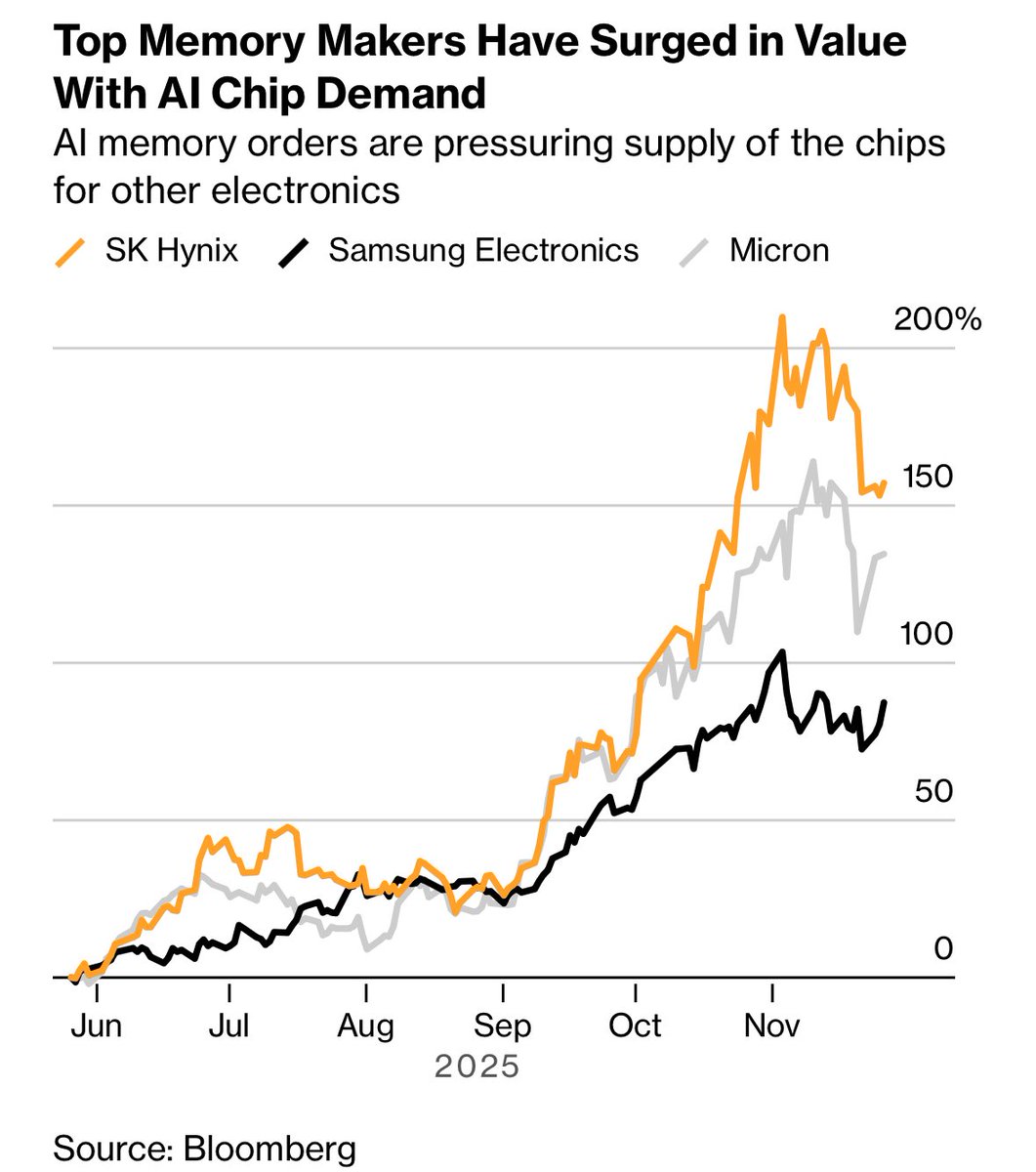

Why RAM Is So Expensive Right Now

1. AI data centers are consuming insane amounts of RAM

Training and running large AI models uses enormous quantities of DRAM and HBM (high-bandwidth memory) per server, far more than a gaming PC. Hyperscalers (Nvidia platforms, AWS, Google, Microsoft, etc.) are buying massive quantities of server DDR5, HBM, and LPDDR for AI clusters. Analysts expect server DRAM prices to roughly double over the 2025–2026 period because of this AI demand, and HBM is even tighter and more expensive. All that demand at the top of the food chain sucks supply away from regular consumer RAM kits.

Perplexity Comet is awesome if you find the right use case. I am using it for many things, and one of them is asking Comet to do API endpoint testing using Postman after development. Give it enough context about the payload and other details and let it handle the rest.

- It generated multiple payloads for different tests
- It generated a final report for me after the full run

This is awesome, Aravind bhai.

Perplexity Email Assistant can now process image and file attachments. Starting today, you can send your Assistant any document and have it:

- understand the document
- summarize its contents
- add relevant dates to your calendar

Here’s how I use it to add dates from a syllabus to my calendar:

Small details like these matter! https://t.co/UwWUFfEF0M

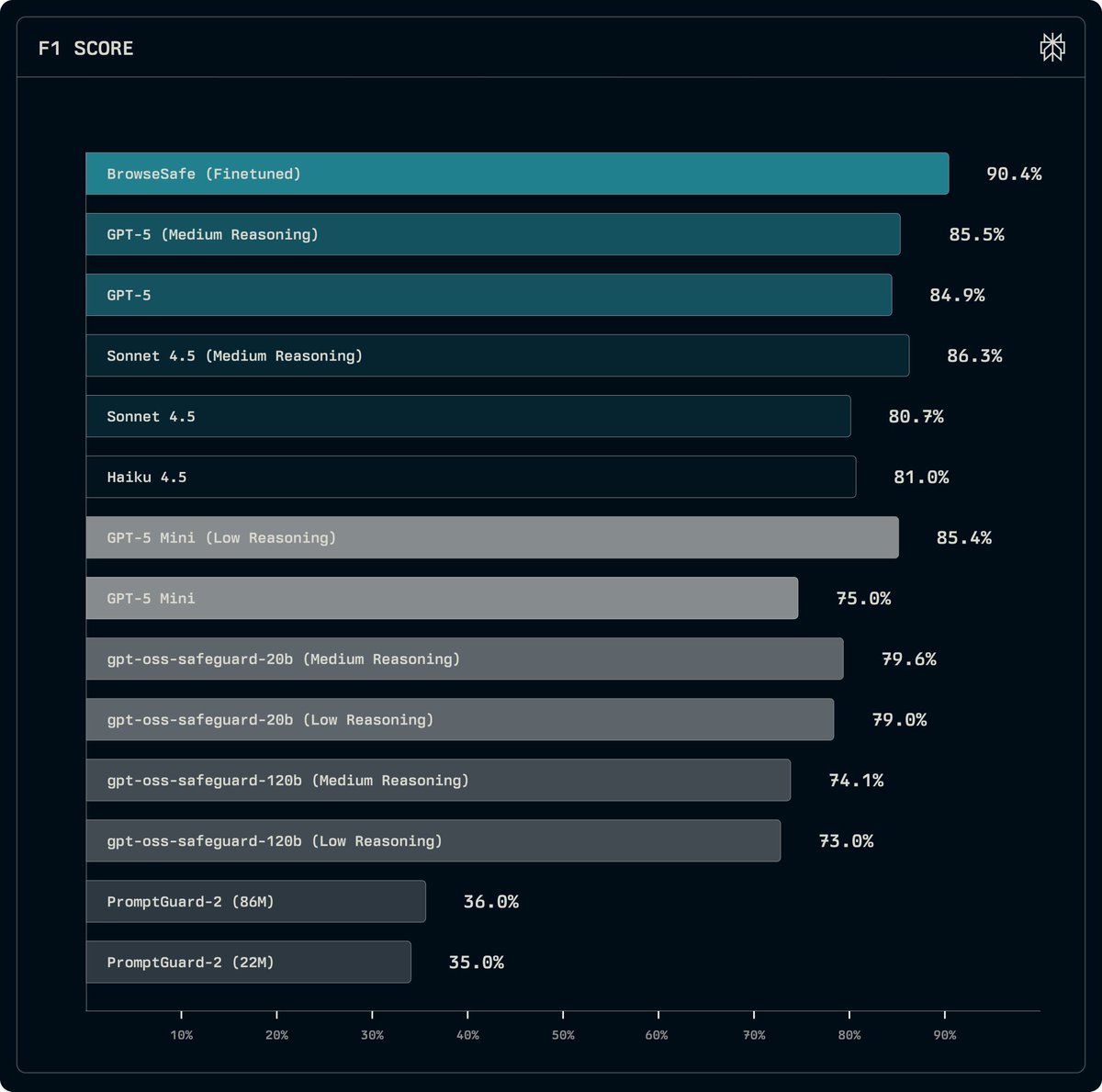

We've fine-tuned a version of Qwen3-30B that can scan raw HTML and detect prompt injection attacks even before a user initiates any request to the Comet Assistant on the client. We've also released the BrowseSafe-Bench of simulated attacks, and hope to continue contributing to making Comet safe and secure to use while benefitting from all the agency and utility it offers to all users.

Today we're releasing BrowseSafe and BrowseSafe-Bench: an open-source detection model and benchmark to catch and prevent malicious prompt-injection instructions in real-time. https://t.co/TutfaBnTte

Curiosity is a requirement for greatness. You win when you keep asking new questions every day. That’s why I am proud to announce my investment in Perplexity. Perplexity is powering the world’s curiosity, and together we will inspire everyone to ask more ambitious questions. https://t.co/xhCeLuW51J is just the beginning!

https://t.co/AJWHRmDQ3L https://t.co/f7n2bTlREn

Winners never stop learning. Never stop asking. @perplexity_ai https://t.co/SWFDKvyFTp

Lots of nice examples in the replies from China, India, Brazil, etc. And since people are asking about Utah's jello: https://t.co/h1LnpLZ9rV ...and tamales in Arkansas: https://t.co/IwmpsLdPwd

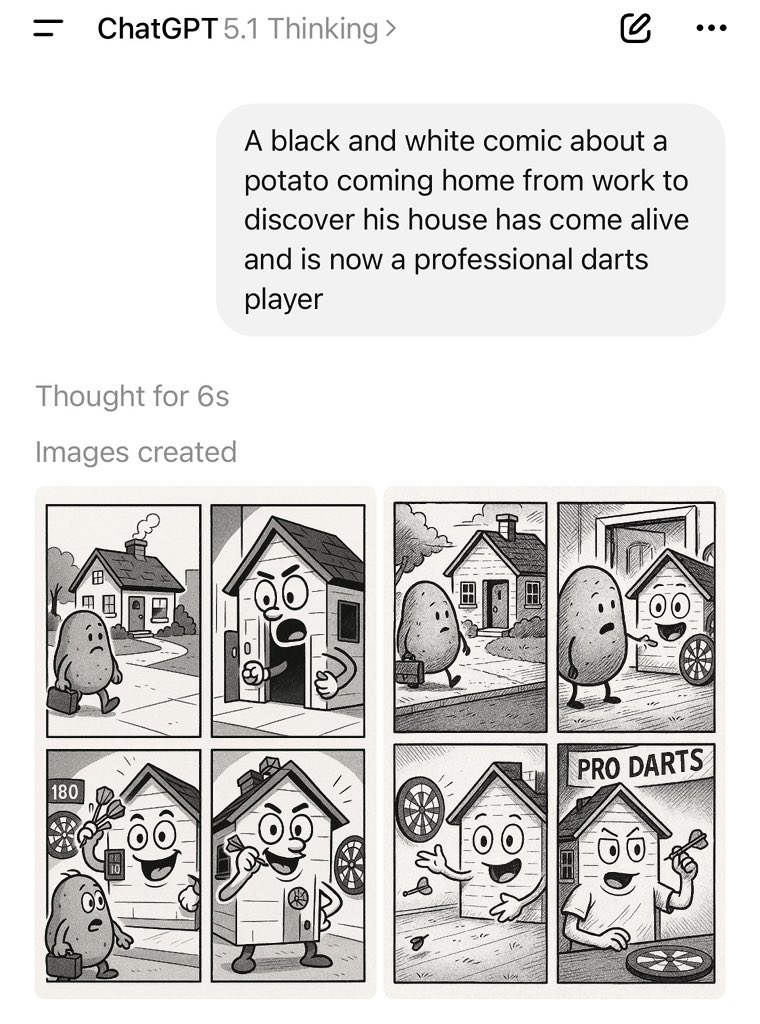

It's weird that there was never a fix for the ubiquitous yellow tint in ChatGPT imagegen. Was there ever an explanation for why it happens? It is something that no other major image generation model does & I have long been curious why. https://t.co/R1BNB3Xs6j

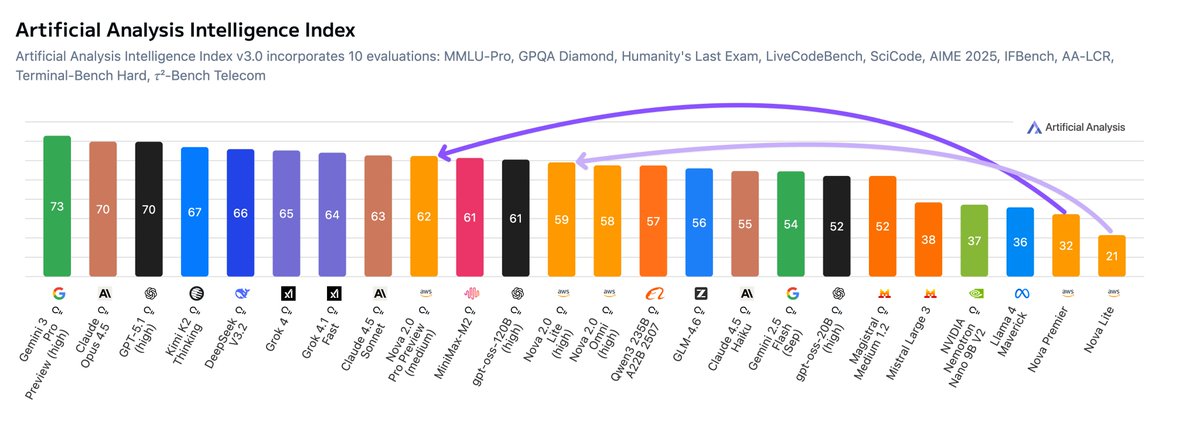

Amazon is back with Nova 2.0, a substantial upgrade over prior Amazon Nova models that demonstrates particular strength in agentic capabilities.

Amazon has released Nova 2.0 Pro (Preview), its new flagship model; Nova 2.0 Lite, focused on speed and lower cost; and Nova 2.0 Omni, a multimodal model handling text, image, video and speech inputs with text and image outputs.

Key benchmarking takeaways:

- Amazon back amongst top AI players: This is Amazon’s latest release since Nova Premier and Amazon’s first release of reasoning models. Nova 2.0 Pro jumps 30 points in the Artificial Analysis Intelligence Index over Premier, and Lite 38 points. This represents a huge increase in capabilities and Amazon’s return to being amongst the top AI players.
- Strengths in agentic capabilities: Agentic capabilities, including tool calling, are a strength of the models. Nova 2.0 Pro scores 93% on τ²-Bench Telecom and 80% on IFBench on medium and high reasoning budgets respectively (complete benchmarks for high reasoning coming soon). This places Nova 2.0 Pro Preview amongst the leading models in these benchmarks.
- Multimodal: Nova 2.0 Omni is one of few models, alongside most notably the Gemini model series, that can natively handle text, image, video and speech inputs. This is a new differentiator for Amazon’s Nova model series.
- Competitive pricing: Amazon has priced Nova 2.0 Pro at $1.25/$10 per million input/output tokens, and considering token usage the model cost $662 to run our Artificial Analysis Intelligence Index. This is substantially less than other frontier models like Claude 4.5 Sonnet ($817) and Gemini 3 Pro ($1201), but remains above others including Kimi K2 Thinking ($380). Nova 2.0 Lite and Omni are both priced at $0.3/$2.5 per million input/output tokens.

See below for further analysis

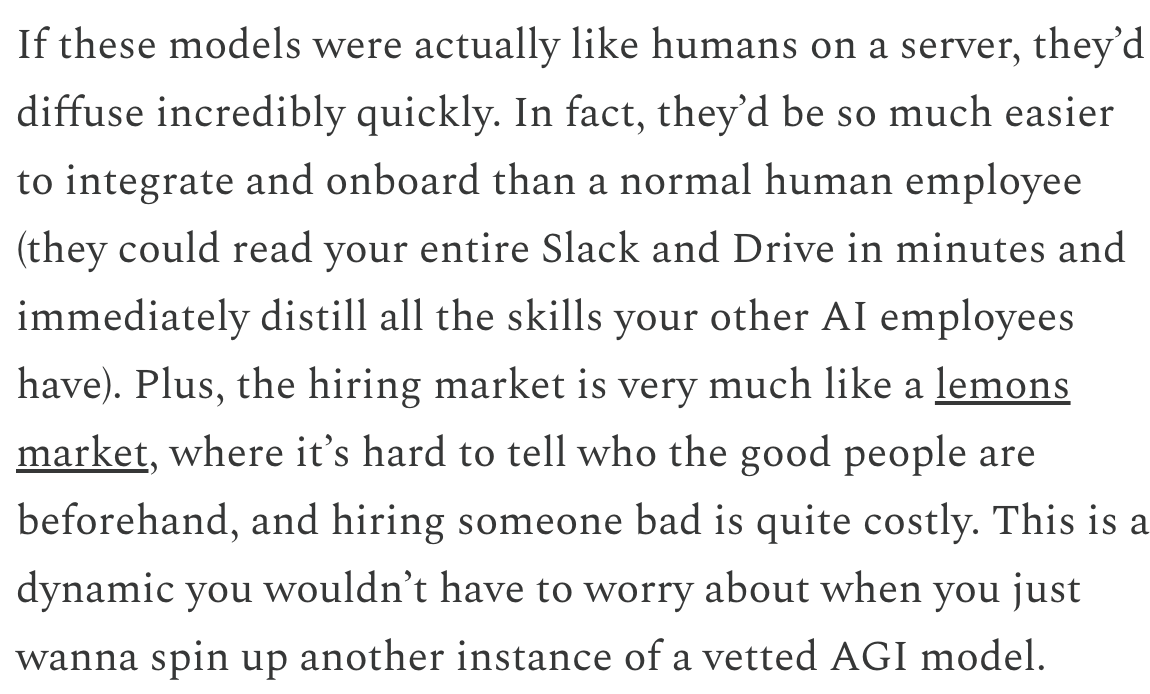
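To make the per-million-token pricing quoted in the Nova 2.0 post concrete, here is a minimal Python sketch of how such prices translate into a bill. The prices are the ones quoted in the post; the function name `run_cost_usd` and the example workload (2M input tokens, 0.5M output tokens) are hypothetical, chosen only for illustration, and are not the token counts behind the $662 figure.

```python
def run_cost_usd(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Cost in USD given token counts and USD prices per million tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


# Prices (input, output) in USD per million tokens, as quoted in the post.
NOVA_2_PRO = (1.25, 10.0)
NOVA_2_LITE = (0.30, 2.50)

# Hypothetical workload, not taken from the post.
EXAMPLE_INPUT_TOKENS = 2_000_000
EXAMPLE_OUTPUT_TOKENS = 500_000

pro_cost = run_cost_usd(EXAMPLE_INPUT_TOKENS, EXAMPLE_OUTPUT_TOKENS, *NOVA_2_PRO)
lite_cost = run_cost_usd(EXAMPLE_INPUT_TOKENS, EXAMPLE_OUTPUT_TOKENS, *NOVA_2_LITE)

if __name__ == "__main__":
    print(f"Nova 2.0 Pro:  ${pro_cost:.2f}")
    print(f"Nova 2.0 Lite: ${lite_cost:.2f}")
```

Because output tokens are priced several times higher than input tokens, a model that generates long reasoning traces can cost more to run than its list price alone suggests, which is presumably why the post reports total benchmark cost alongside the per-token rates.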

3. "Economic diffusion lag" is cope for missing capabilities https://t.co/QJpAqxaUOl

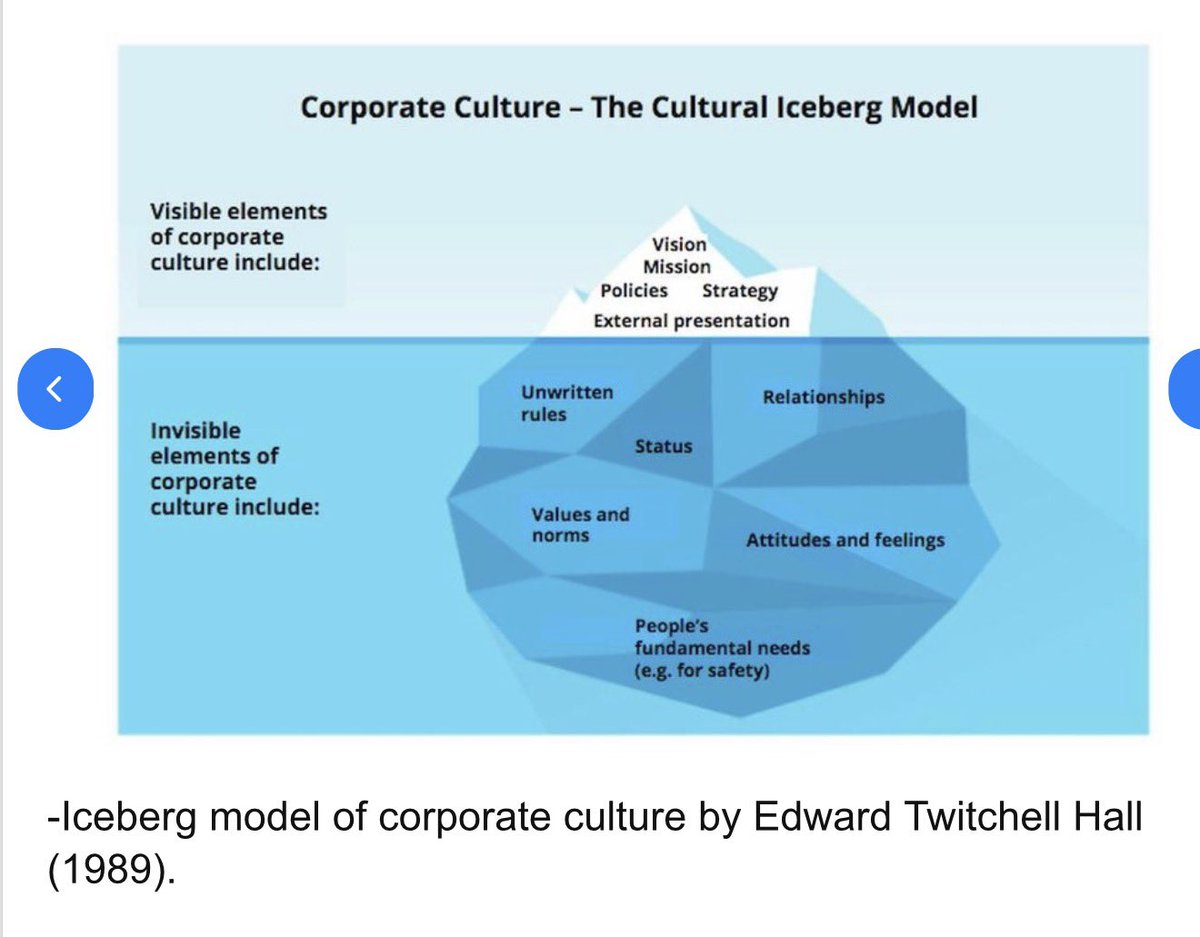

Interesting post & agree AI has missing capabilities, but I also think this perspective (common in AI) undervalues the complexity of organizations. Many things that make firms work are implicit, unwritten & inaccessible to new employees (or AI systems). Diffusion is actually hard https://t.co/fY1S3Fjt0c

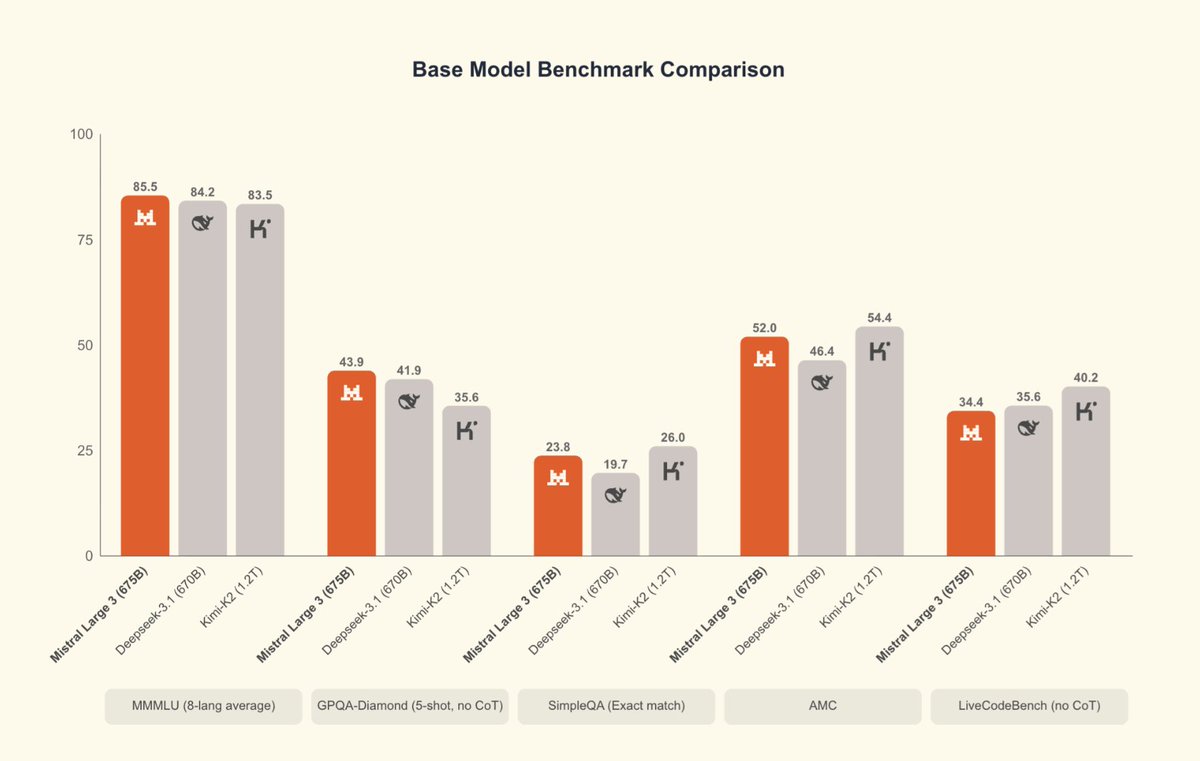

Europe still has one frontier model maker that can generally keep pace with Chinese open weights models, though no reasoner for Mistral 3 yet means they are behind the curve of actual performance - DeepSeek r1 got 71.5% on GPQA Diamond (& 1-shot, not 5-shot) back in January. https://t.co/iSIAMJuc91

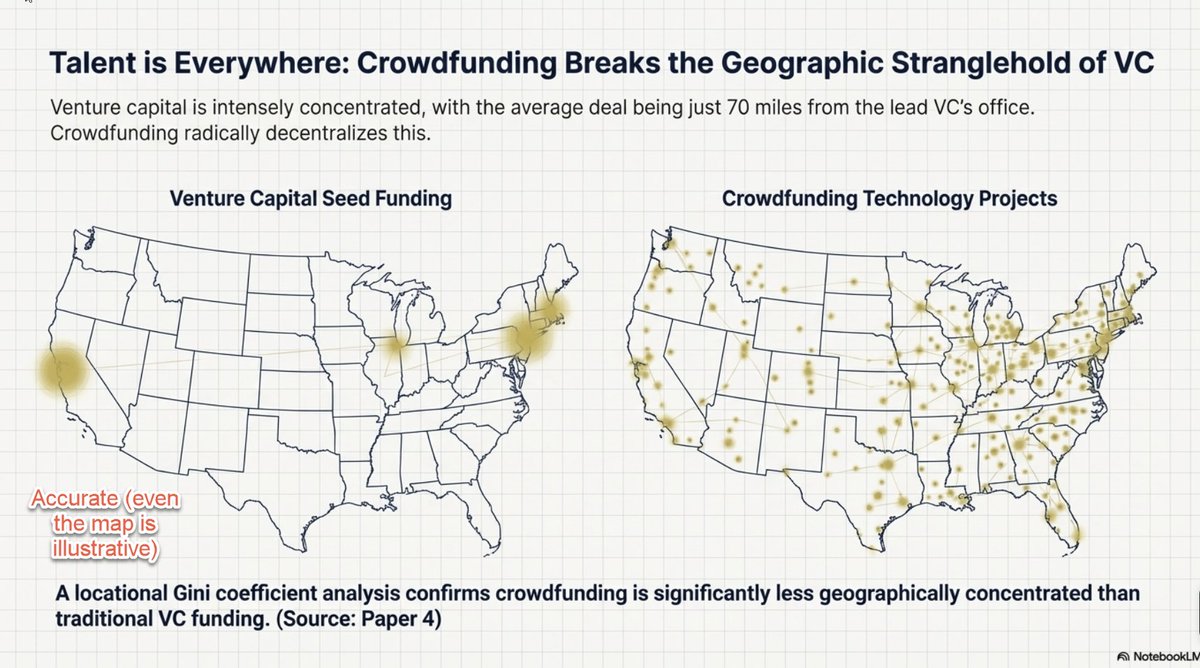

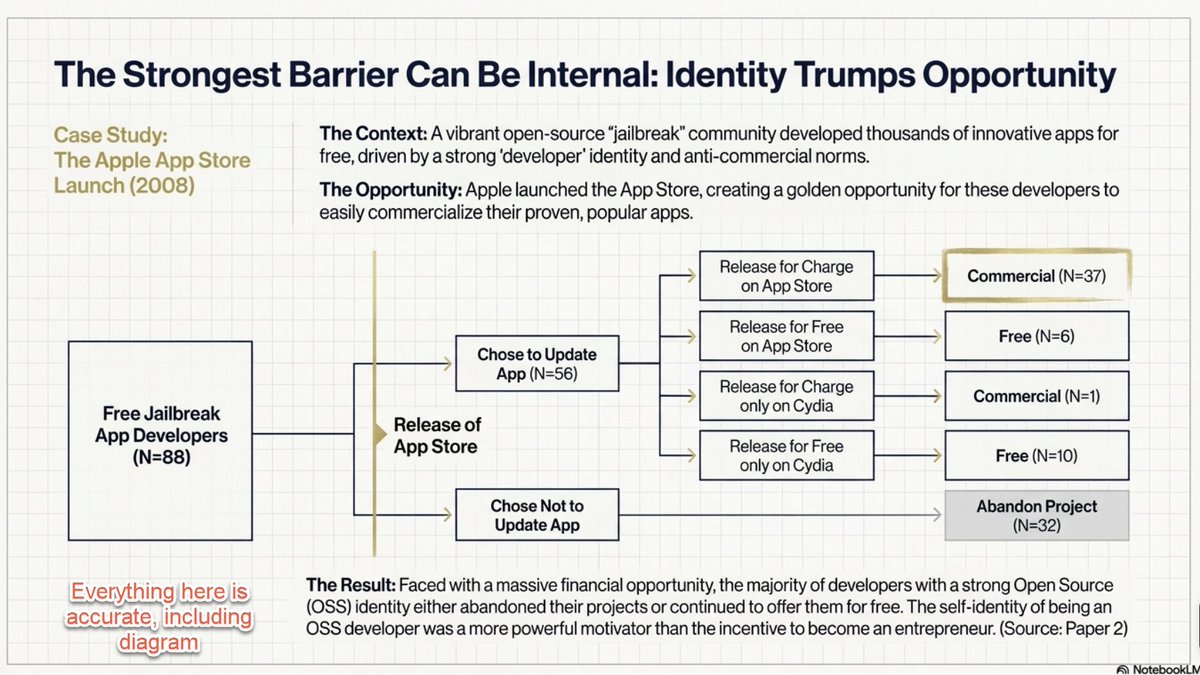

While I wasn't looking, slide generation in Google's NotebookLM became impressive. Here I threw in 10 academic papers I authored & it summarized them into a coherent (& quite pretty) deck. I saw no hallucinations, but nano banana results in some occasional spelling & graph issues https://t.co/4sBC7greEe

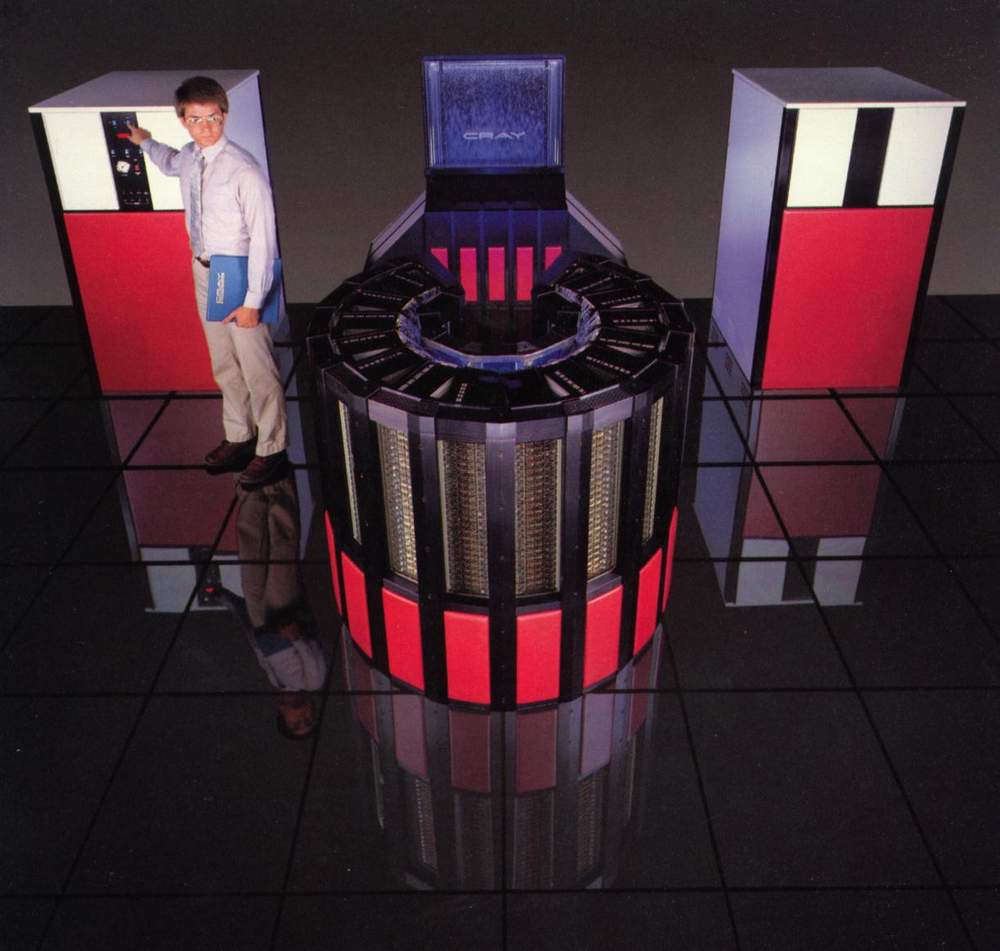

Yes, supercomputers used to have style: the Japanese Fugaku (422 petaflops, 2020), Cray-2 (1.9 gigaflops, 1985), CDC 6600 (1 megaflop, 1964) & the Difference Engine (one flop per 8 seconds, depending on crank speed, never finished, 1823). For comparison, an iPhone 12 did 11 teraflops https://t.co/eXoREGsSwI

It's a shame supercomputers don't come with a wraparound couch anymore

The Barcelona Supercomputing Center is pretty impressive as well. https://t.co/1odi46Kwol

Gemini 3 Deep Think is here. Deep Think is our most advanced reasoning mode that explores multiple hypotheses simultaneously to give you an even more sophisticated output.

At NeurIPS next week. AI × Science afterparty. 800+ people on registration. See you there! https://t.co/wf0rLSAMt7

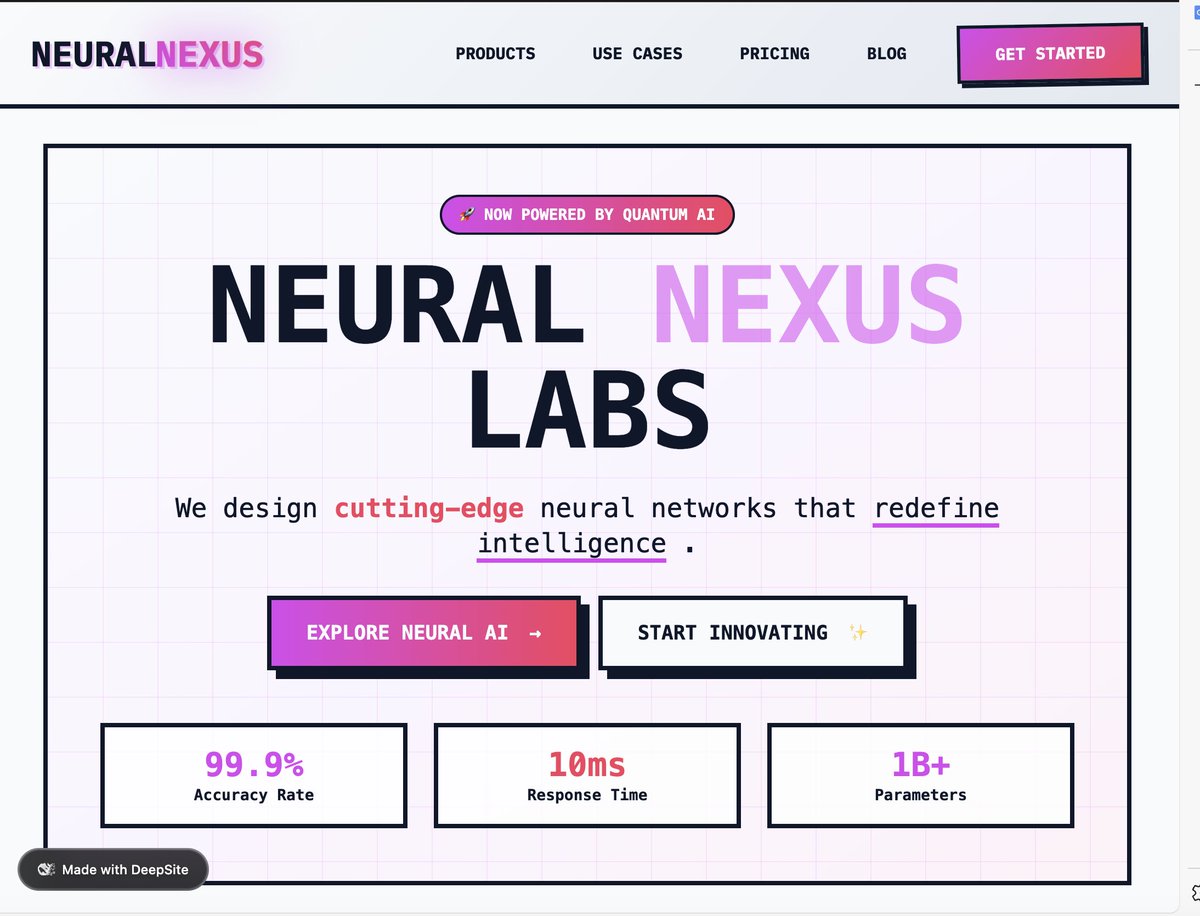

Built with @huggingface deepsite. Result is surprisingly good. Model used: DeepSeek V3 0324. Only 2 edits required after first prompt. I didn't even know huggingface had an app builder, realized after @victormustar's post. https://t.co/jE1CMgcjV6

Throwback to the first version of HF Optimized for NN4 and IE5 https://t.co/B1VcicKp2R

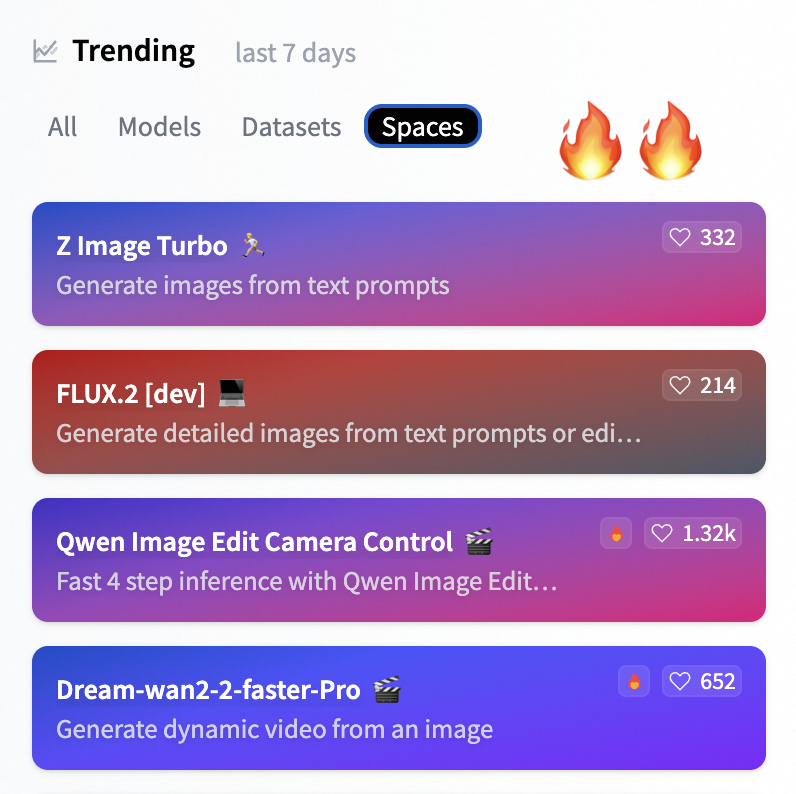

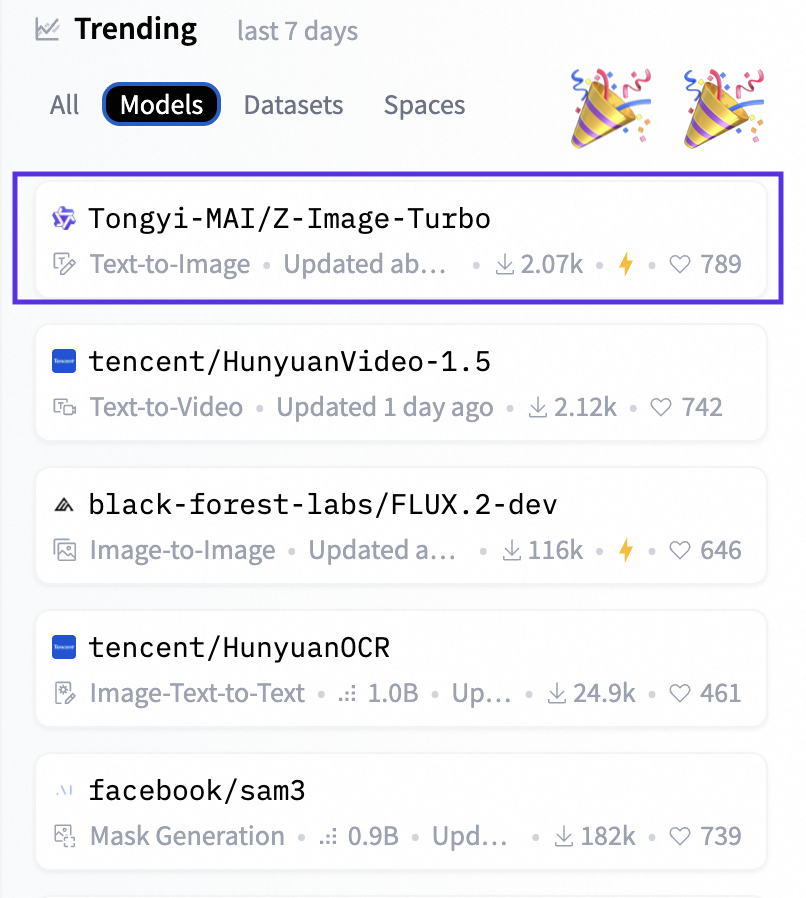

We put the "Turbo" in the name, but you guys put us on the charts. Z-Image Turbo is sitting at the top of HF Models AND Spaces trending lists. We’re not crying, you’re crying. (Okay, we’re definitely crying🥹). Huge thanks to the community! https://t.co/yKUBw6UCFN

we shipped a new thing. like it / hate it? https://t.co/G2jgXOppsI

The whale 🐋 is back! DeepSeek-Math-V2: 685B-parameter math monster built on V3.2-Exp-Base, fully open under Apache 2.0

- 1st model to use a generator-verifier loop in training: writes proofs → verifier scores them → RL closes the loop for self-verifiable reasoning.
- Focuses on verifiable full proofs, not just final answers: a huge leap for formal theorem proving.
- Trained with automatic high-compute verification runs to create its own high-quality proof data at scale

Enjoy 😊 👇 https://t.co/0SlhCibvP3

Olmo 3 is now available through @huggingface Inference Providers, thanks to Public AI! 🎉 This means you can run our fully open 7B and 32B models – including Think and Instruct variants – via serverless API with no infrastructure to manage. https://t.co/vb1hS2utsz
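As a rough illustration of what "serverless API" means in the Olmo 3 post, the sketch below builds an OpenAI-style chat-completions request using only the Python standard library. Several details are assumptions rather than anything stated in the post: the router URL, the model identifier `allenai/Olmo-3-7B-Instruct:publicai`, the `HF_TOKEN` environment variable, and the helper names `build_chat_request` and `ask`. Check the Hugging Face Inference Providers documentation for the actual values before relying on it.

```python
import json
import os
import urllib.request

# Assumed endpoint and model id; neither appears in the original post.
ROUTER_URL = "https://router.huggingface.co/v1/chat/completions"
MODEL_ID = "allenai/Olmo-3-7B-Instruct:publicai"


def build_chat_request(prompt, token, model=MODEL_ID, max_tokens=256):
    """Build (but do not send) a chat-completions POST request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        ROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt, token):
    """Send the request and return the first choice's text (needs network access)."""
    with urllib.request.urlopen(build_chat_request(prompt, token), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    token = os.environ.get("HF_TOKEN")  # assumed variable name
    if token:
        print(ask("In one sentence, what is a fully open model?", token))
```

The request is built separately from the network call so the payload can be inspected or logged without spending tokens; nothing is sent unless a token is present in the environment.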

Nvidia silently dropped Orchestrator-8B 👀 “On the Humanity's Last Exam (HLE) benchmark, ToolOrchestrator-8B achieves a score of 37.1%, outperforming GPT-5 (35.1%) while being approximately 2.5x more efficient.” https://t.co/euQwQUWnJb

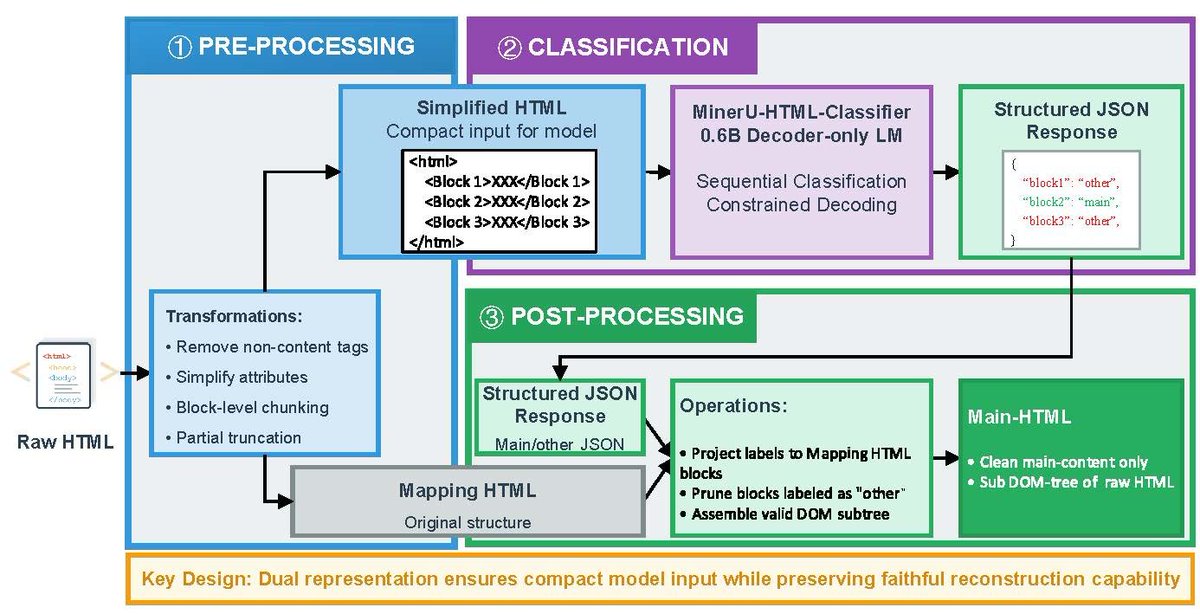

opendatalab/AICC: Markdown version of Common Crawl, extracted by MinerU. Very cool. It only has two shards for now but someone could scale it up to the entire Common Crawl. https://t.co/bH8m8rqbuH