Your curated collection of saved posts and media

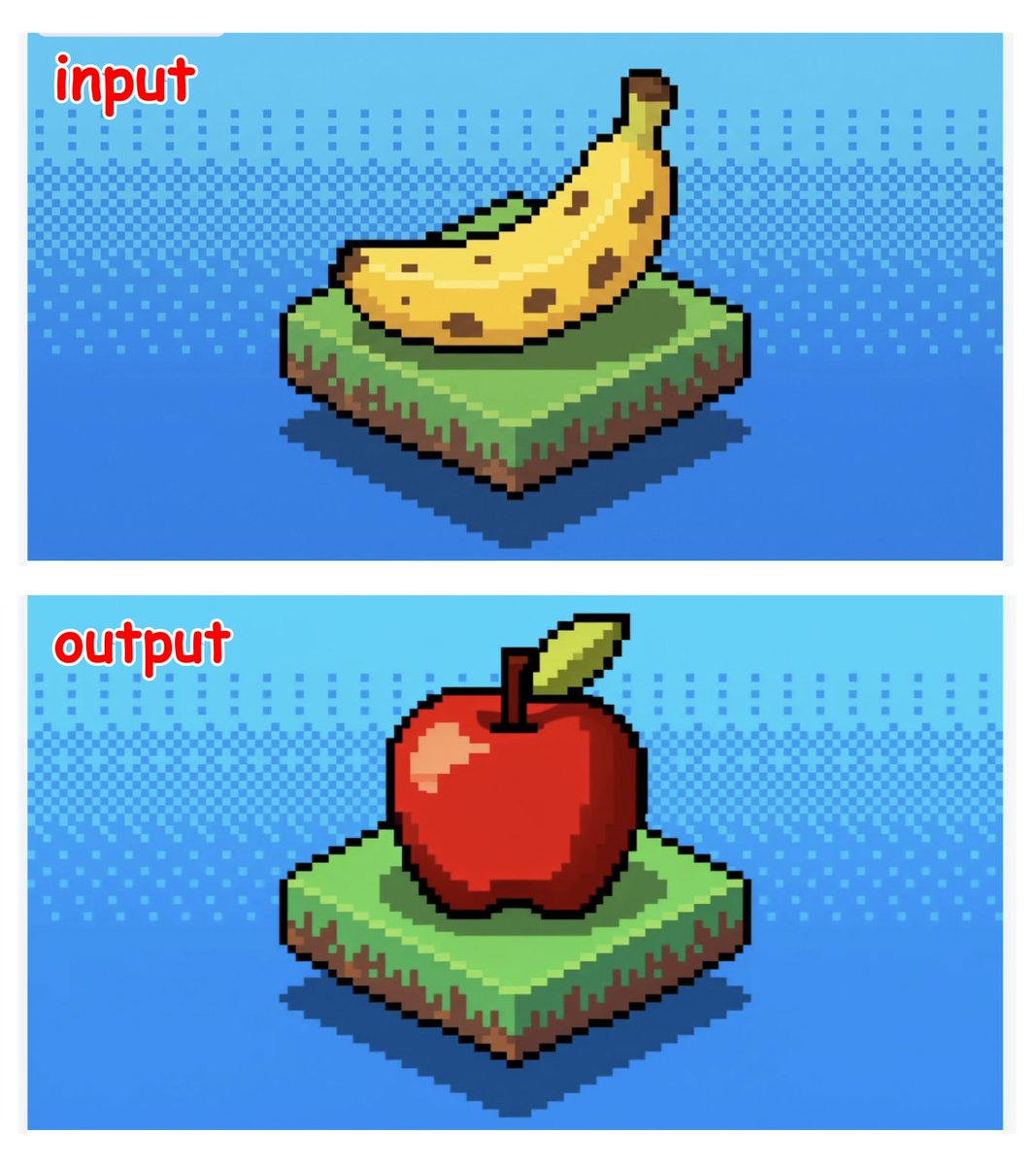

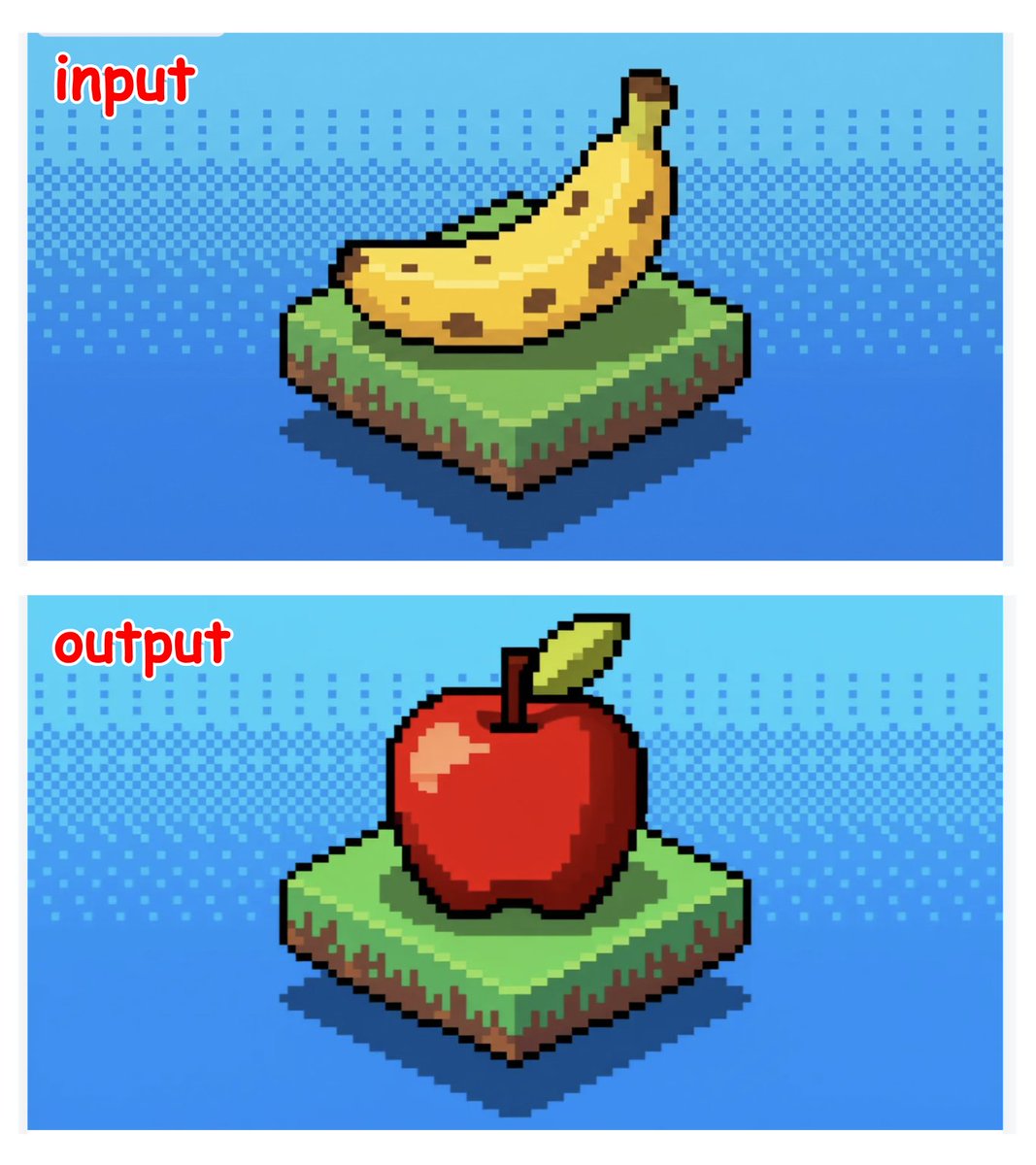

prompt: "replace the banana with an apple" wait this is very good? 👀

This is another really cool release by @Microsoft Try it here: https://t.co/WcExDggJjP https://t.co/N20l5cKgj2

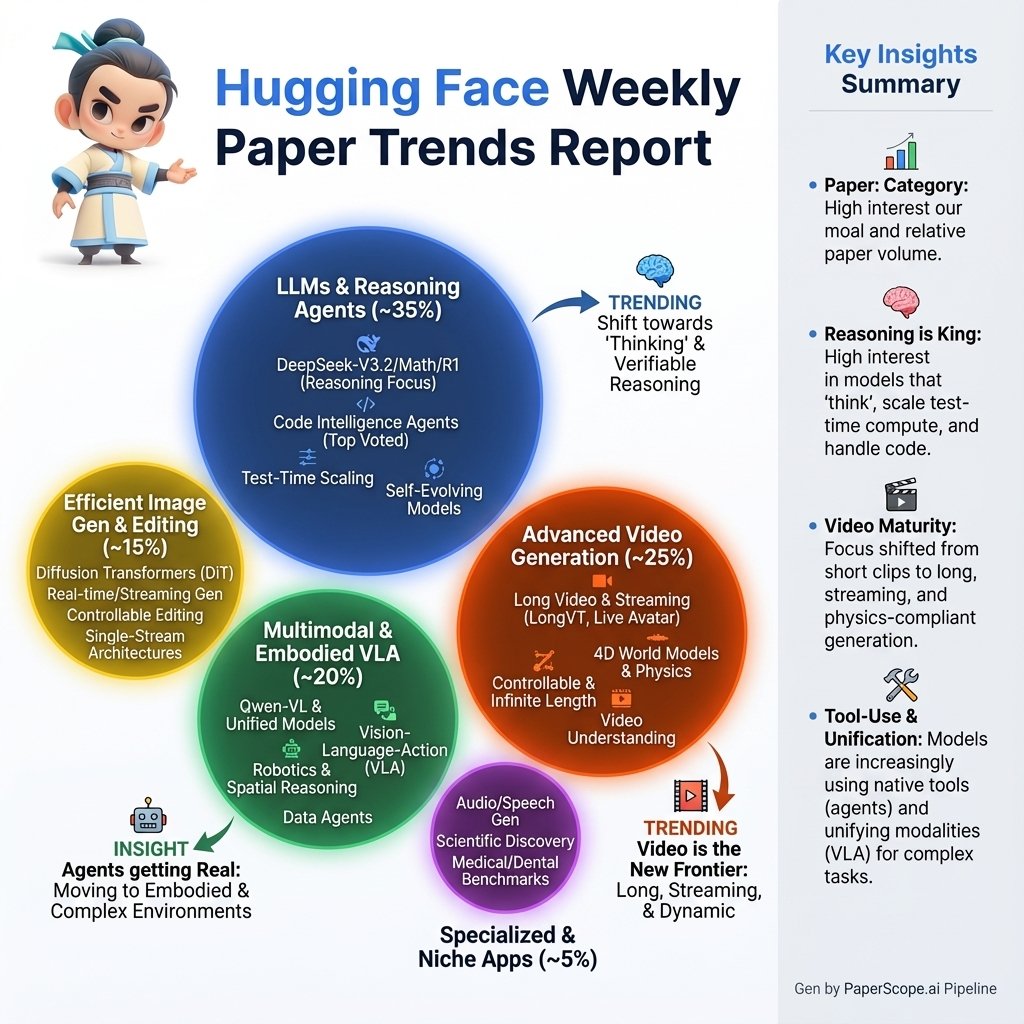

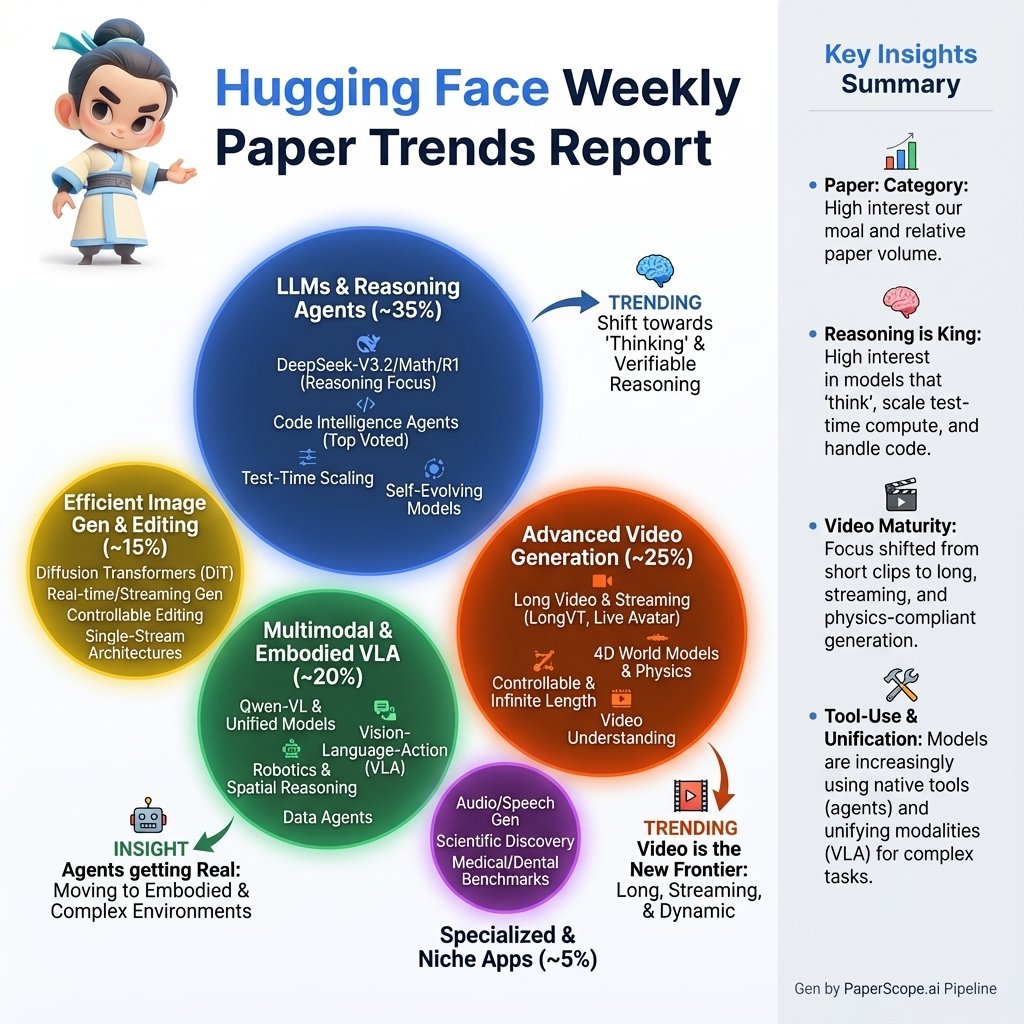

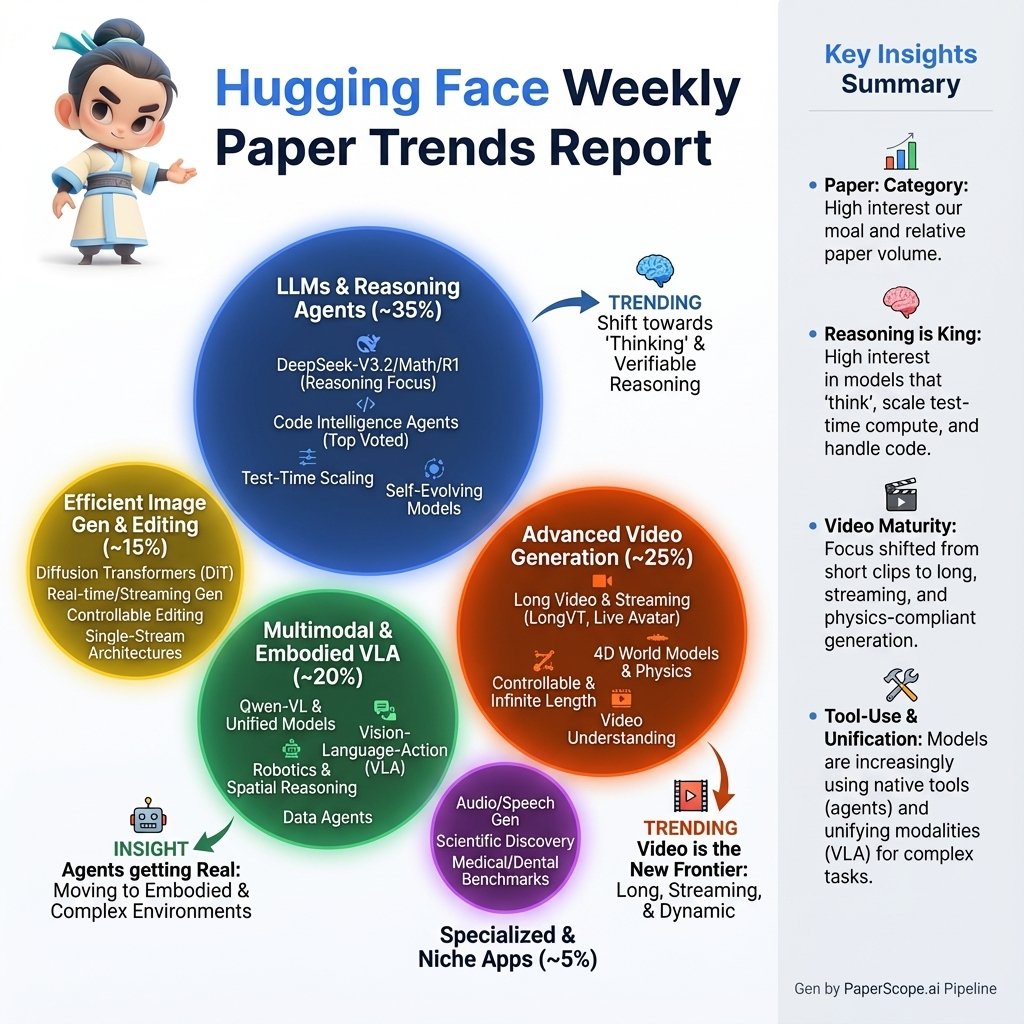

Hugging Face Weekly Paper Trends @_akhaliq (Gen by nana-banana-pro) https://t.co/GYJGO069Qm

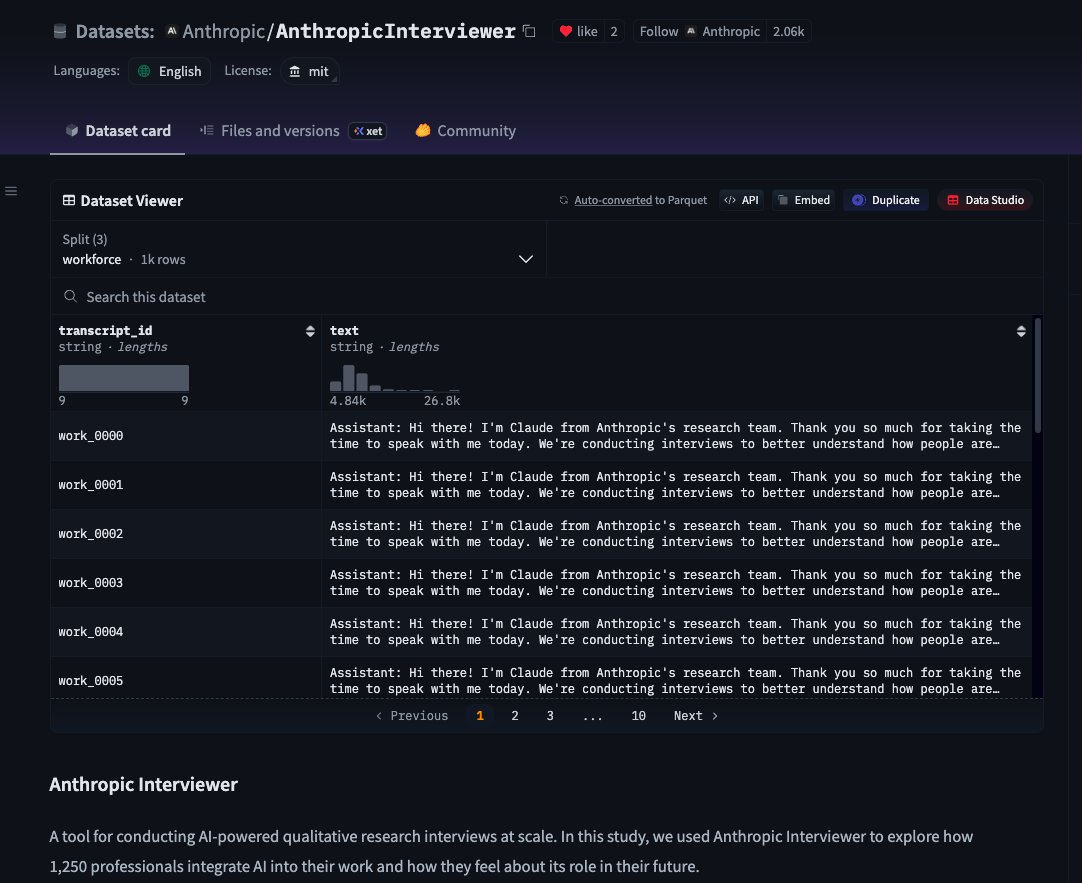

WOW! @AnthropicAI released interviews with 1,250 professionals about how they use AI for work. You can find it on @huggingface as an open dataset! https://t.co/YEL3ZD7ypc

We’re launching Anthropic Interviewer, a new tool to help us understand people’s perspectives on AI. It’s now available at https://t.co/W8P36sPQBy for a week-long pilot.

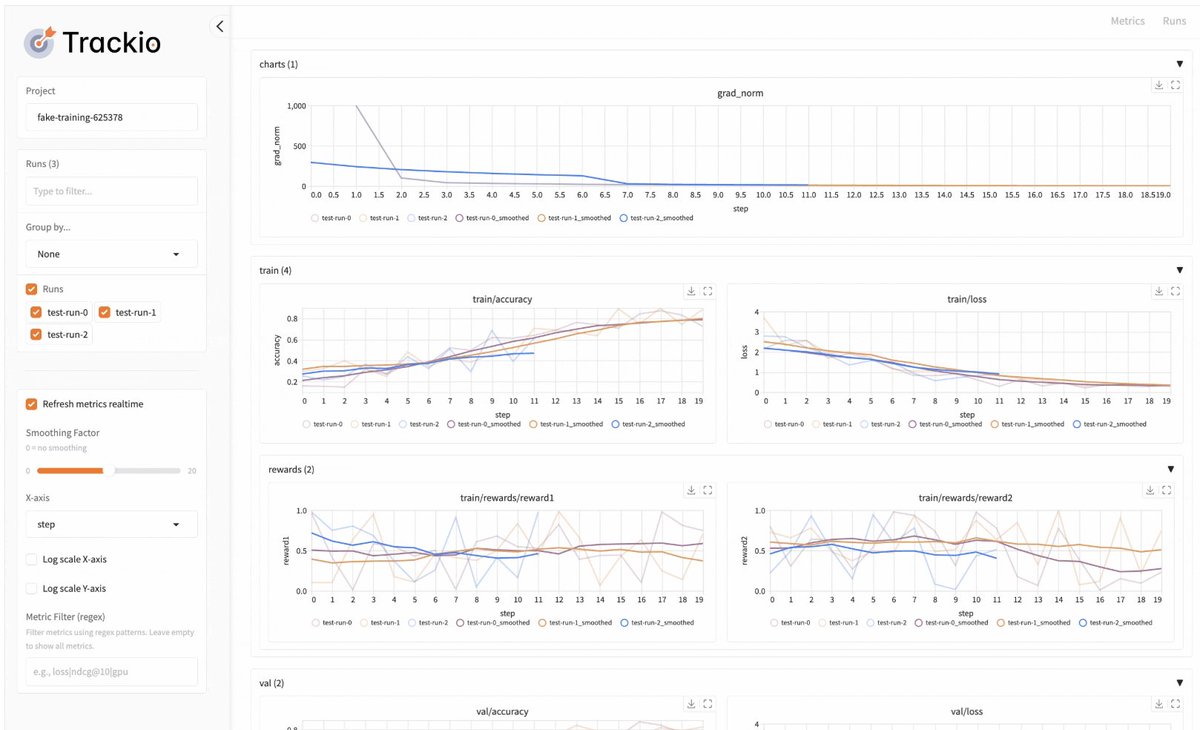

With Neptune acquired by OpenAI and W&B by CoreWeave, it's important that your experiment tracking libraries are open, free, and forever yours. That's why we built @TrackioApp, a local-first library with the same API as W&B, just: `import trackio as wandb` https://t.co/TqEYNdojXs

VibeVoice from Microsoft goes real-time!

- 0.5B model tuned for ultra-low-latency speech
- Lightweight architecture, high-fidelity outputs
- Token-level streaming for instant feedback
- Designed for real-time LLM interaction

https://t.co/2FwTIrPsmN

We’ve just released @nvidia #DRIVE Alpamayo-R1 (AR1), the world’s first industry-scale open #reasoning #VLA model for autonomous-vehicle (AV) research. AR1 integrates Chain-of-Causation reasoning with trajectory planning to improve decision-making in complex driving scenarios.

Built on @nvidia #Cosmos #Reason, AR1 is designed as a customizable foundation for a broad range of AV applications, from instantiating an end-to-end backbone for autonomous driving to powering advanced, reasoning-based auto-labeling tools.

Resources:

- Model: https://t.co/9nI9L08LJJ
- Inference Code: https://t.co/QpPzLEsFnm
- Paper: https://t.co/8PSdQNDqwO
- Blog Post: https://t.co/S92N6ff58L

A subset of the data used to train and evaluate AR1 is available in the @nvidia Physical AI Open Datasets: https://t.co/fD9eUcndya

AR1 can be evaluated using AlpaSim (https://t.co/9Wqutgpe5d), @nvidia's newly released open-source AV simulation framework built specifically for research and development. (Separate post on AlpaSim coming soon.)

This release completes @nvidia’s trifecta (model, data, and simulator) to accelerate research and development in the autonomous-vehicle domain.

Happy developing, and stay tuned for more! Huge thanks to the phenomenal team that made this possible @NVIDIAAI @nvidia.

Excited to share that I've joined the @huggingface Fellows program! 🤗 Looking forward to contributing to & working more closely with the open-source ecosystem - huge thanks to everyone who's supported me on this journey! 🚀 https://t.co/MGomY8035T

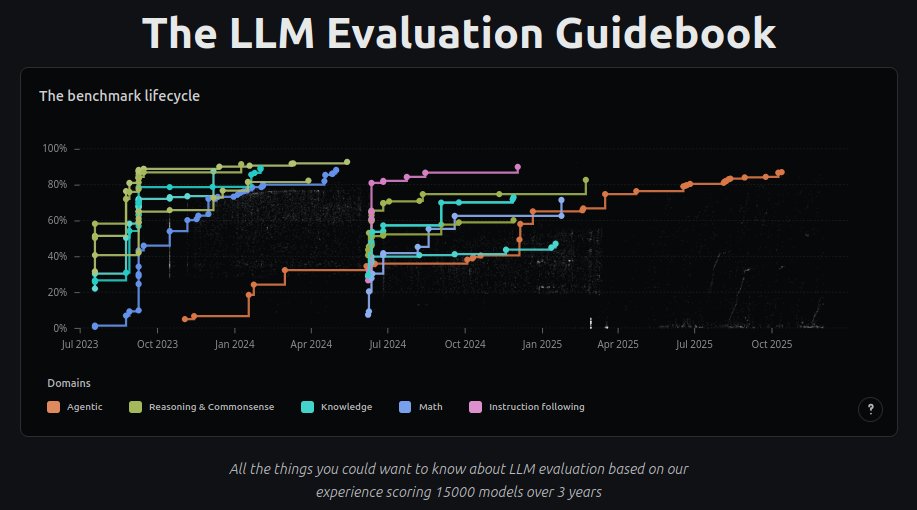

Hey twitter! I'm releasing the LLM Evaluation Guidebook v2! Updated, nicer to read, interactive graphics, etc! https://t.co/xG4VQOj2wN After this, I'm off: I'm taking a sabbatical to go hike with my dogs :D (back @huggingface in Dec *2026*) See you all next year! https://t.co/veWQKmjx9Q

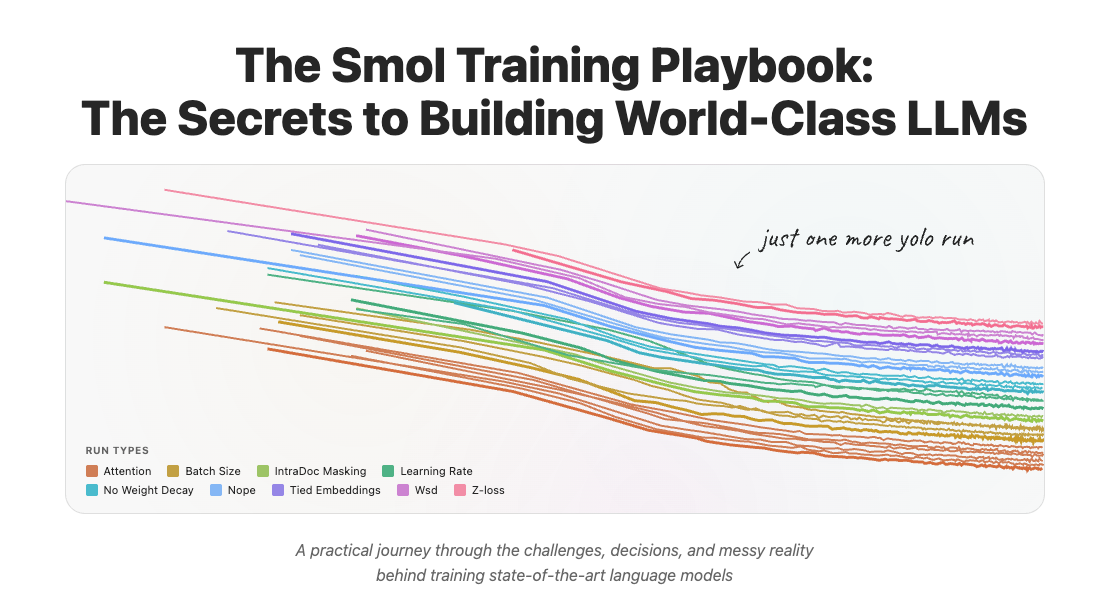

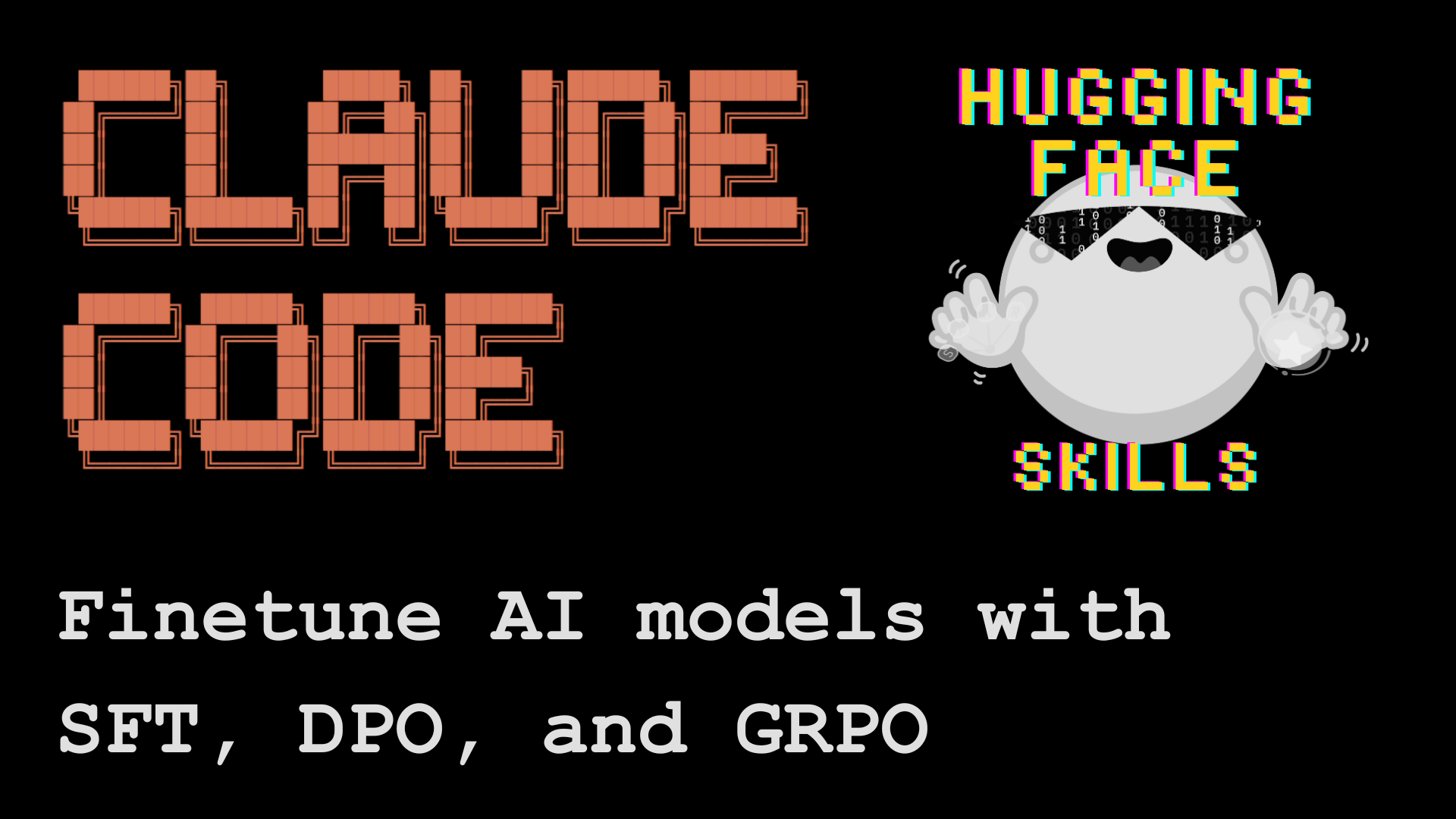

We managed to get Claude Code, Codex and Gemini CLI to train good AI models thanks to @huggingface skills, and you can too, even (especially?) if you've never trained a model before 🤯🤯🤯 After changing the way we build software, AI might start to change the way we build AI (autocatalysis much?)!! 🔥🔥🔥

Yesterday at AI Pulse we plugged our real-time STT + TTS API into @reachymini and turned it into a live, unscripted conversational robot. Voice, personality, language, gestures, all controlled by speech. Big shoutout to @pollenrobotics and @huggingface for making this cool little guy.

Live demos are risky, but so worth it! With @GradiumAI, we plugged their conversational demo into Reachy Mini. The result? ✅ Personality switching (Gym Bro mode!🏋️♂️) ✅ Multilingual (Québécois accent 🇨🇦) ✅ Dancing/emotions on command 💃 Reachy comes alive. Unscripted demo👇 https://t.co/06zwxvX0g2

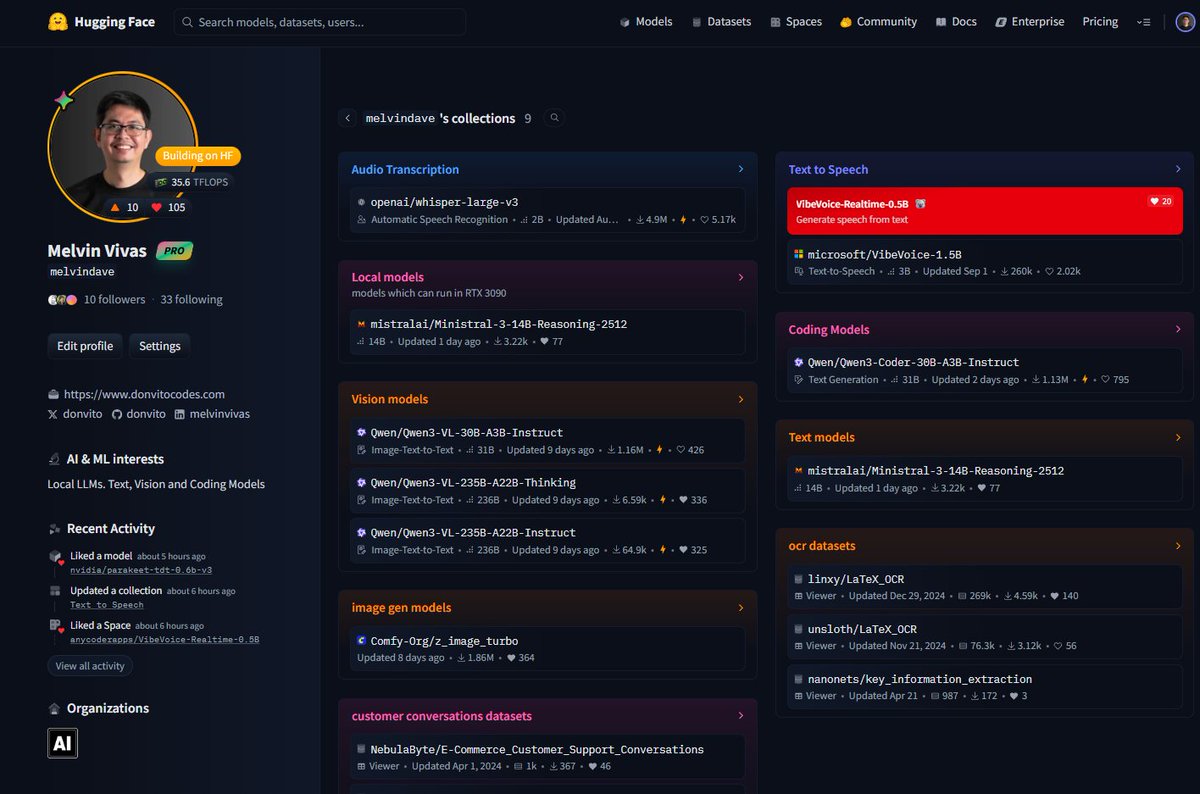

I've added Collections on my @huggingface, curating models, datasets and Spaces. They could be useful to you guys: https://t.co/mkXUJz5YYj https://t.co/gH8eFsp6FS

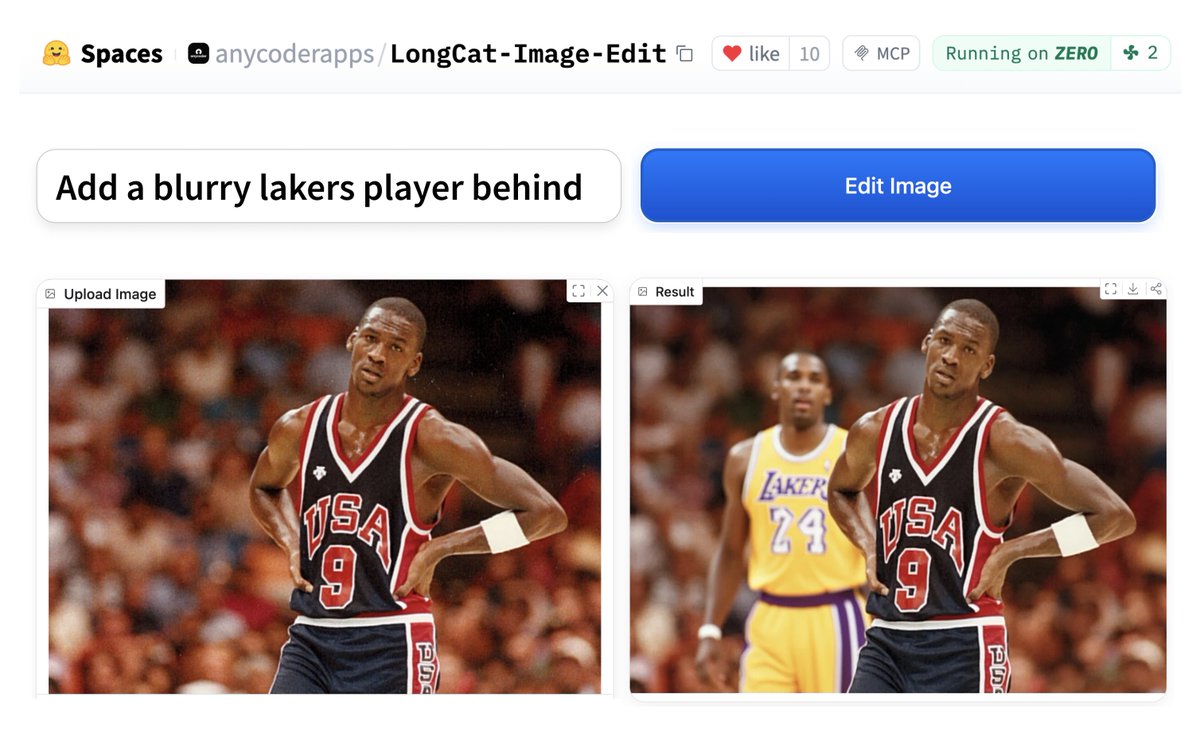

Out now: LongCat-Image-Edit - seems very very good at image editing (+ Apache 2.0 license) 🔥 ⬇️ Demo available on Hugging Face https://t.co/W50ZE8tsNp

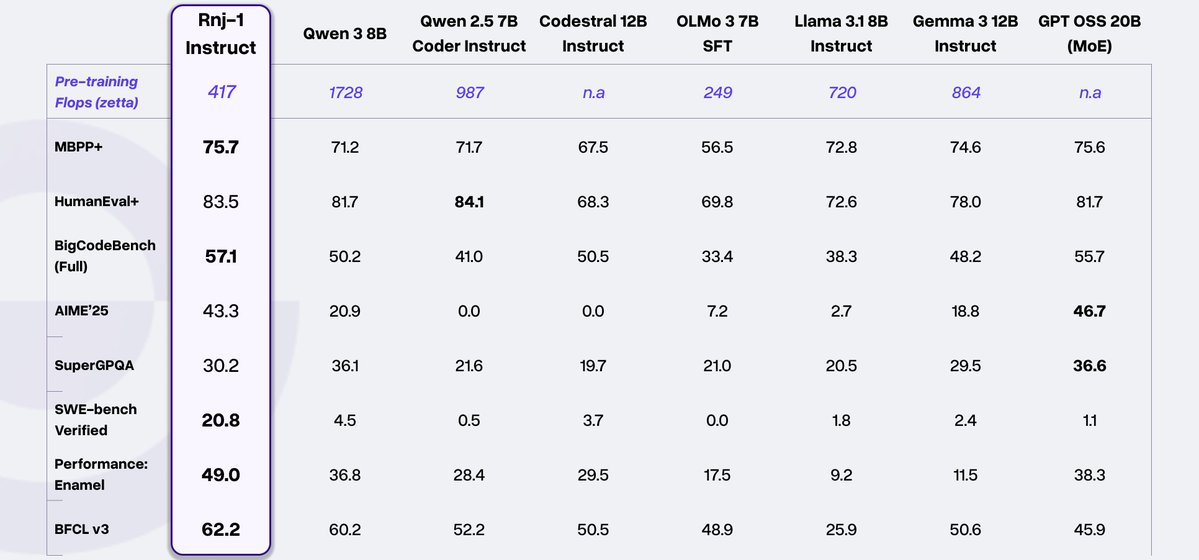

Today, we’re excited to introduce Rnj-1, @essential_ai's first open model; a world-class 8B base + instruct pair, built with scientific rigor, intentional design, and a belief that the advancement and equitable distribution of AI depend on building in the open. We bring American open-source at par with the best in the world.

Microsoft just launched VibeVoice Realtime on Hugging Face: a lightweight, streaming text-to-speech model generating initial audible speech in ~300ms, perfect for live data and LLM conversations. https://t.co/wBvHDRgcBb

https://t.co/R6e5foIrVk

I just built a demo for this Light Migration LoRA on @huggingface the quality surprises me on every output 🤯 https://t.co/xmzEU0Nua2

Love seeing what the community builds with @ModelScope2022 . @dx8152 just dropped a game-changing Light Migration LoRA for Qwen-Image-Edit-2509. It solves the "secondary lighting" headache perfectly. Incredible work. 👏 https://t.co/5IYTfR53uD

Introducing swift-huggingface: a complete Swift client for @huggingface Hub.

- Fast, resumable downloads
- Flexible, predictable auth
- Sharable cache with Python / hf CLI
- Xet support (coming soon!)

https://t.co/u0A4WWfs4F

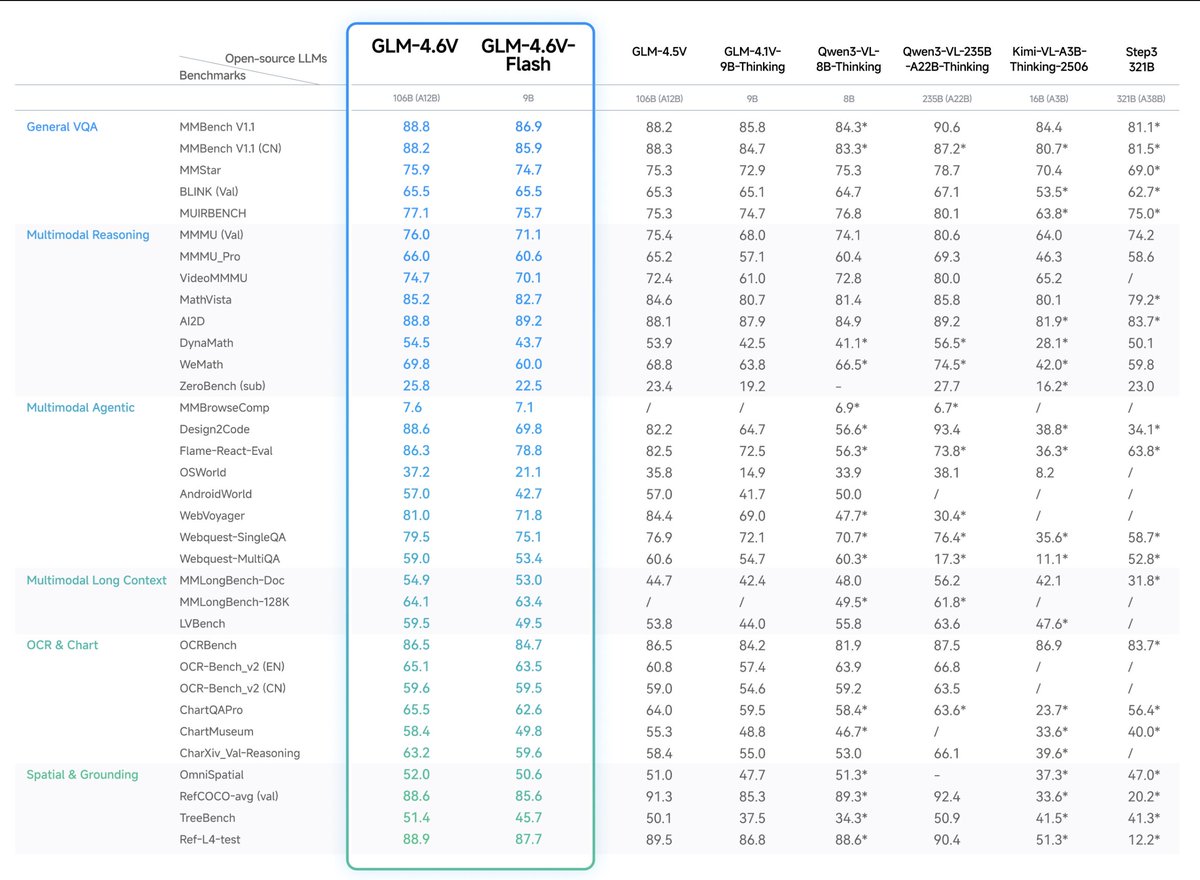

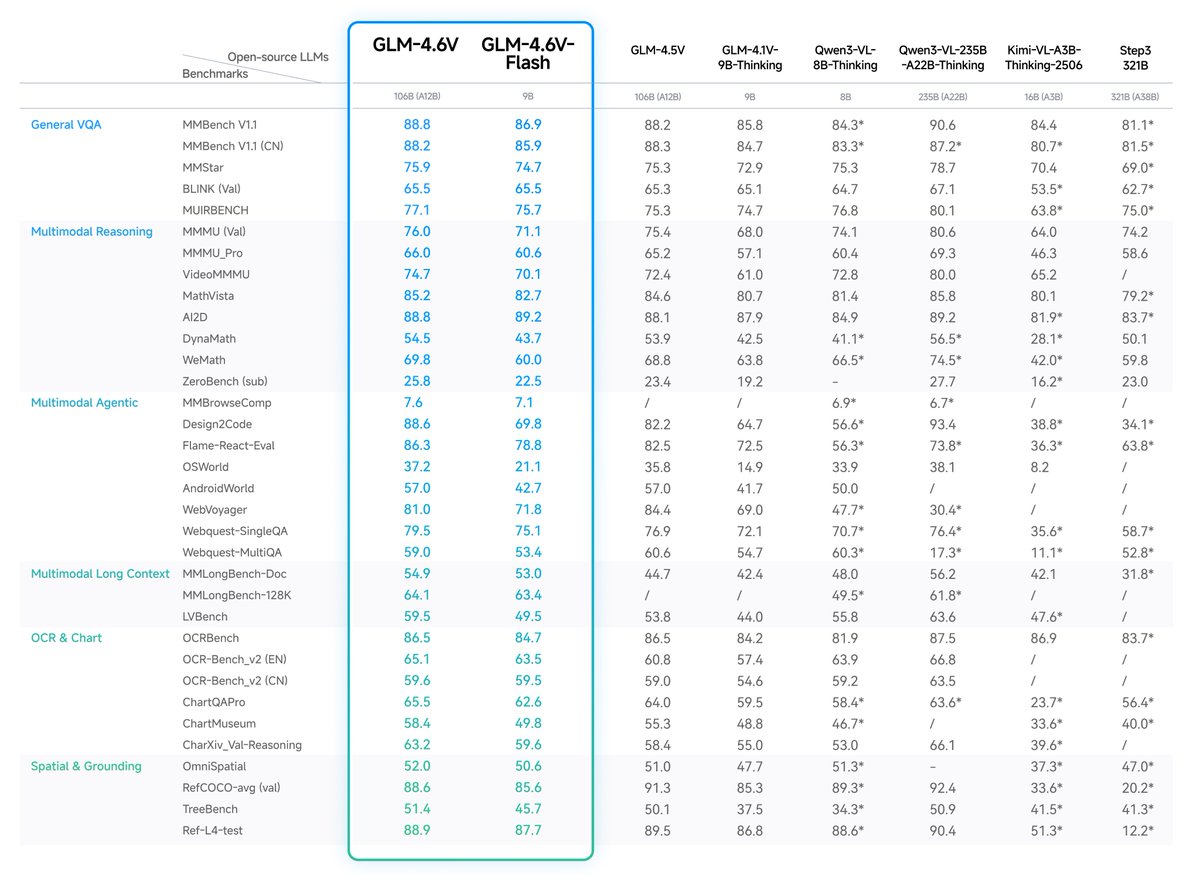

@Zai_org just released GLM-4.6V on @huggingface, an amazing model given its size.

- Native multimodal function calling!!!
- 128k context length
- 106A12B and 9B (for flash variant)
- Native integration with Transformers 5, sglang and vllm
- MIT license (as always)

Great work @jietang!

GLM-4.6V Series is here🚀

- GLM-4.6V (106B): flagship vision-language model with 128K context
- GLM-4.6V-Flash (9B): ultra-fast, lightweight version for local and low-latency workloads

First-ever native Function Calling in the GLM vision model family

- Weights: https://t.co/vKmNosrHeo
- Try GLM-4.6V now: https://t.co/WCqWT0raFJ
- API: https://t.co/pPSGa3meyS
- Tech Blog: https://t.co/w9t96C2EKQ

API Pricing (per 1M tokens):

- GLM-4.6V: $0.6 input / $0.9 output
- GLM-4.6V-Flash: Free
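To make the per-1M-token pricing concrete, here is a minimal sketch of the arithmetic. The prices are the ones quoted in the announcement; the function name `estimate_cost_usd`, the price table, and the token counts are hypothetical and only for illustration. Actual billing may differ (for example, caching or rounding rules are not covered here).

```python
# Prices in USD per 1M tokens, copied from the announcement above.
# The dictionary layout and function name are illustrative assumptions.
PRICES_USD_PER_MILLION = {
    "GLM-4.6V": {"input": 0.6, "output": 0.9},
    "GLM-4.6V-Flash": {"input": 0.0, "output": 0.0},  # listed as free
}


def estimate_cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request from its input and output token counts."""
    price = PRICES_USD_PER_MILLION[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical request: 200k input tokens and 50k output tokens.
    cost = estimate_cost_usd("GLM-4.6V", 200_000, 50_000)
    print(f"Estimated cost: ${cost:.3f}")
```

With these assumed token counts, the input side contributes 0.2 × $0.6 and the output side 0.05 × $0.9.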

Happy weekend to those who celebrate https://t.co/lafvNmmOJO

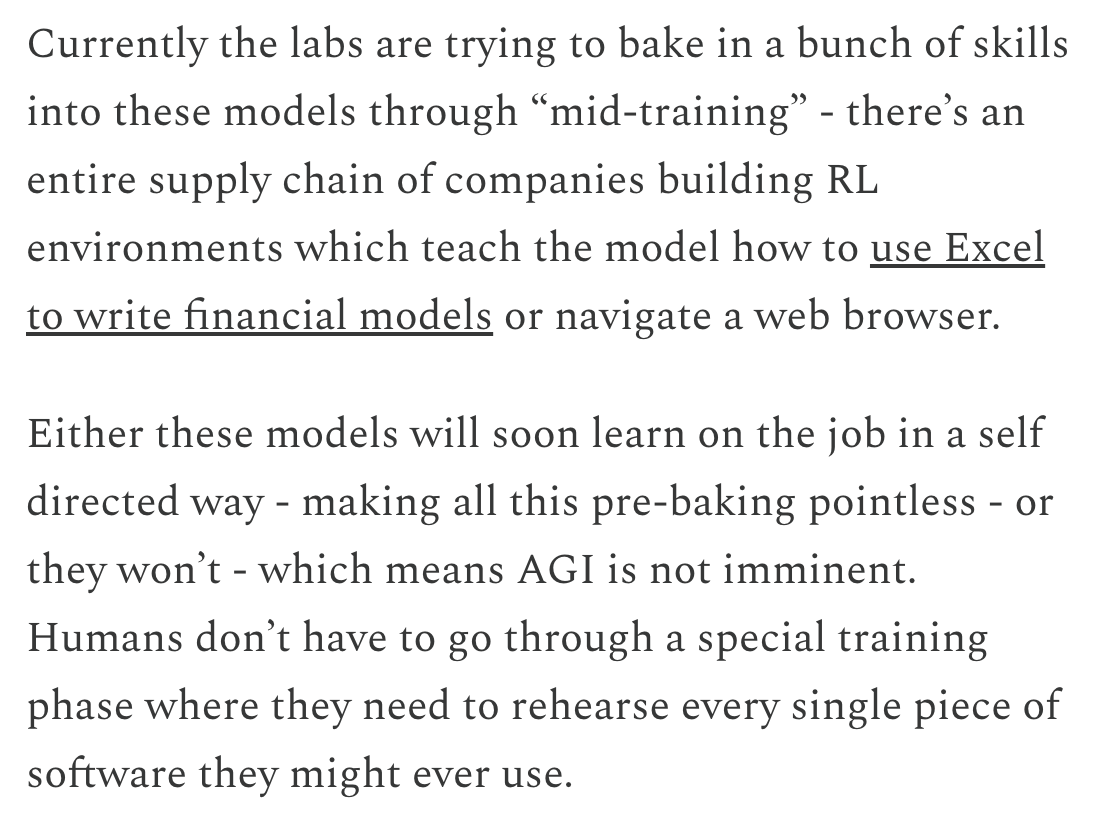

New post: Thoughts on AI progress (Dec 2025) 1. What are we scaling? https://t.co/lWyVVSuwO7

You can join here: https://t.co/zy5oJ5Lp8u

The call starts at:

- 10am PT
- 1pm ET
- 7pm Europe time
- 11:30pm India time

Announcing the ARC Prize 2025 Top Score & Paper Award winners.

The Grand Prize remains unclaimed.

Our analysis on AGI progress, marking 2025 the year of the refinement loop: https://t.co/Lbap0VVFs9

Really enjoyed this interview about our 3rd place solution and history of our techniques that helped propel the top solutions this year (and last). Thanks to @bryanlanders for working so hard @arcprize ! @DriesSmit1 @MohamedOsmanML @bayesilicon https://t.co/FXXn96i9kI

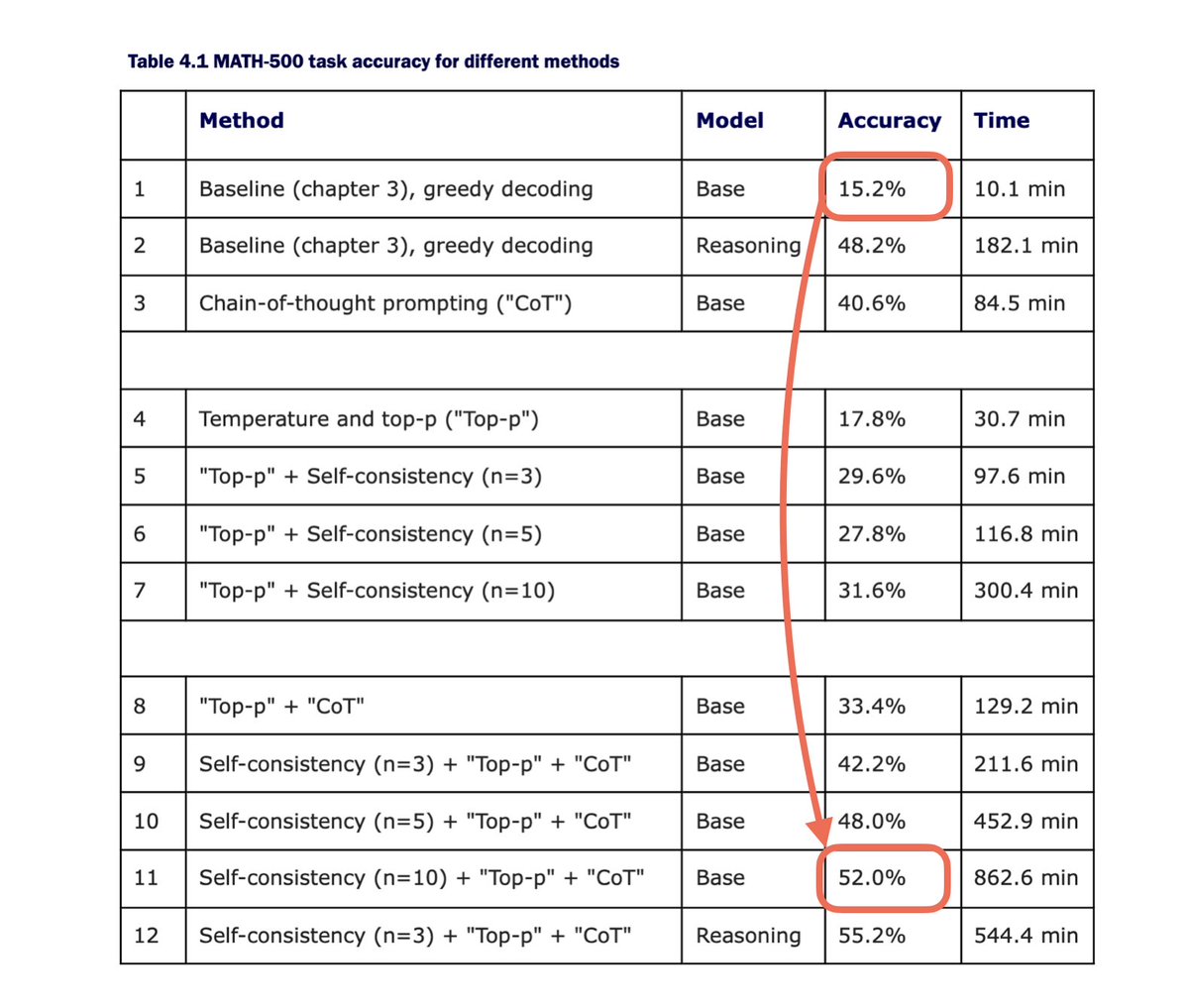

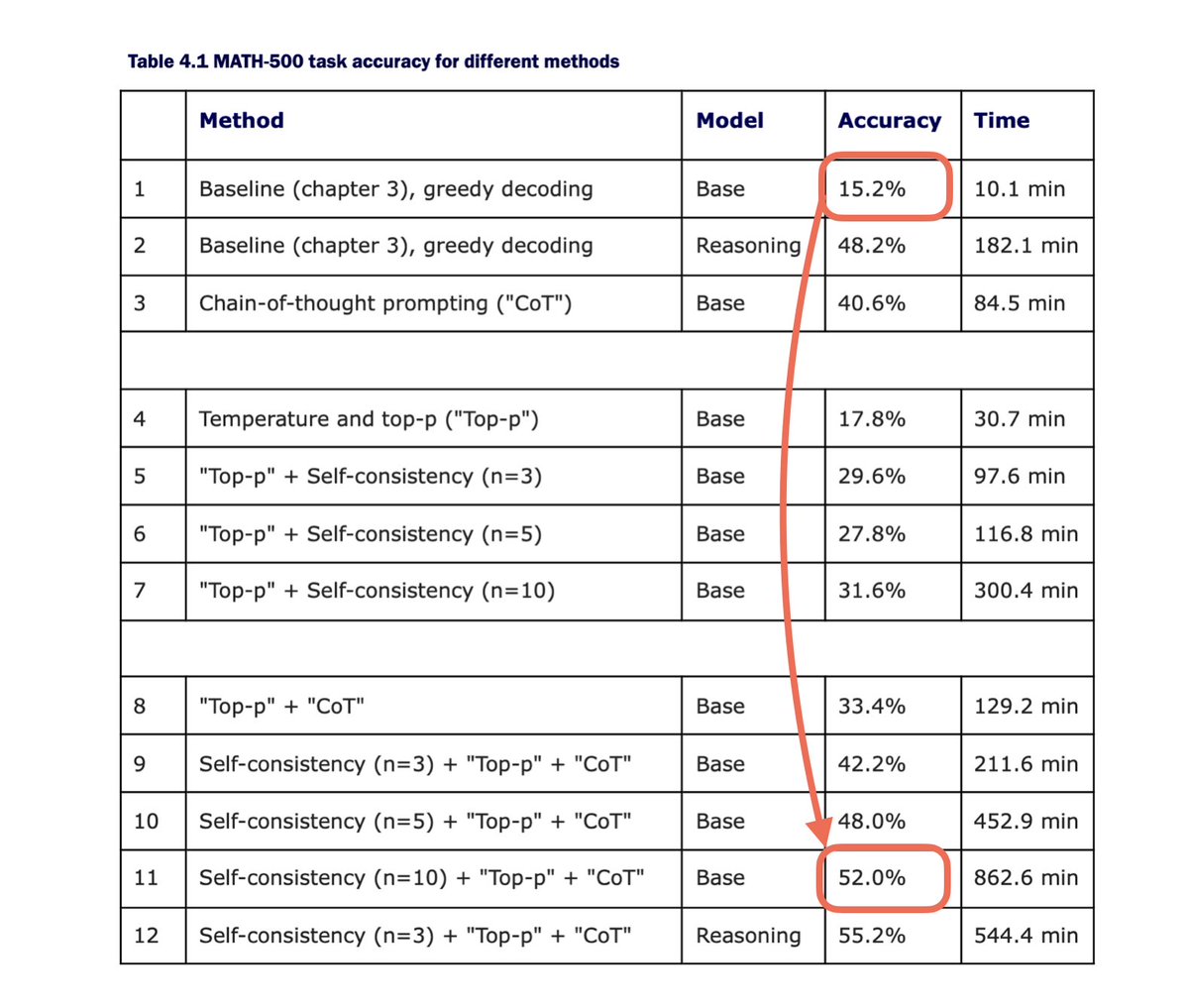

@demishassabis @GeminiApp Nice! Can echo that parallel sampling / self-consistency is probably one of the simplest but in my experience also one of the best ways to improve results on reasoning benchmarks. Even goes a long way for base models (here, experiments with a small 0.6B model). https://t.co/mj9RMLpZFg

@Do_Widzeni_a @demishassabis @GeminiApp This is on the MATH-500 test set. Here’s the code to reproduce the results if you are interested: https://t.co/z3oj5Vkno1
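For readers unfamiliar with parallel sampling / self-consistency, the idea mentioned in the thread above can be sketched as: sample several answers to the same question, then keep the most frequent one. This is a minimal illustration, not the linked reproduction code. The names `self_consistency` and `make_noisy_solver` are hypothetical, and the "model" is a stand-in random function rather than a real LLM.

```python
import random
from collections import Counter
from typing import Callable, List


def self_consistency(sample_answer: Callable[[], str], n_samples: int = 8) -> str:
    """Draw n_samples answers and return the most common one (majority vote)."""
    answers: List[str] = [sample_answer() for _ in range(n_samples)]
    # most_common(1) returns [(answer, count)]; ties go to the answer seen first.
    return Counter(answers).most_common(1)[0][0]


def make_noisy_solver(correct: str, wrong: List[str], p_correct: float,
                      seed: int = 0) -> Callable[[], str]:
    """Stand-in for a sampled model: right with probability p_correct (assumption)."""
    rng = random.Random(seed)

    def solver() -> str:
        if rng.random() < p_correct:
            return correct
        return rng.choice(wrong)

    return solver


if __name__ == "__main__":
    # A solver that is right only 40% of the time, with errors spread over
    # several different wrong answers, so the correct answer is still the mode.
    solver = make_noisy_solver("42", ["41", "43", "7", "0"], p_correct=0.4)
    print("single sample:", solver())
    print("majority vote:", self_consistency(solver, n_samples=64))
```

The vote helps when wrong answers are scattered across many values while correct answers agree, which is why final answers usually need to be normalized to a comparable form before counting.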

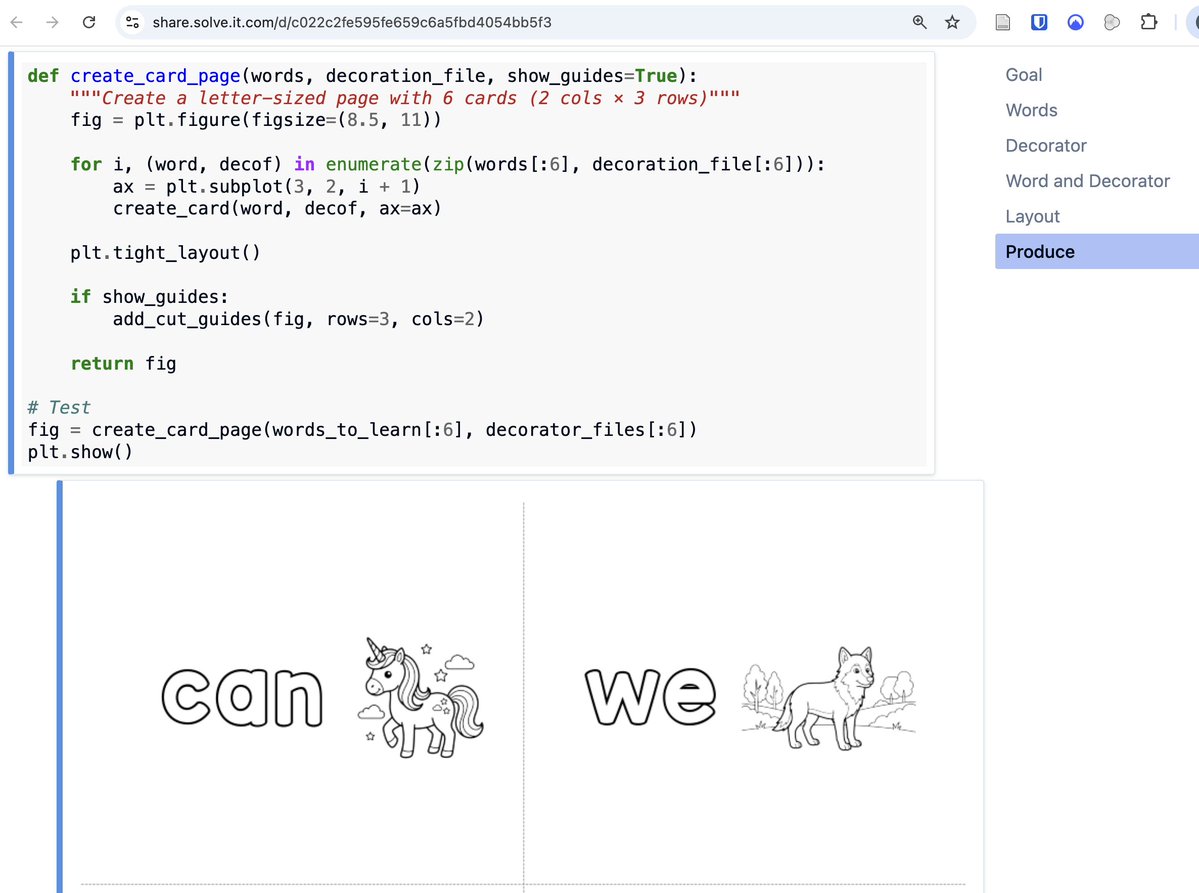

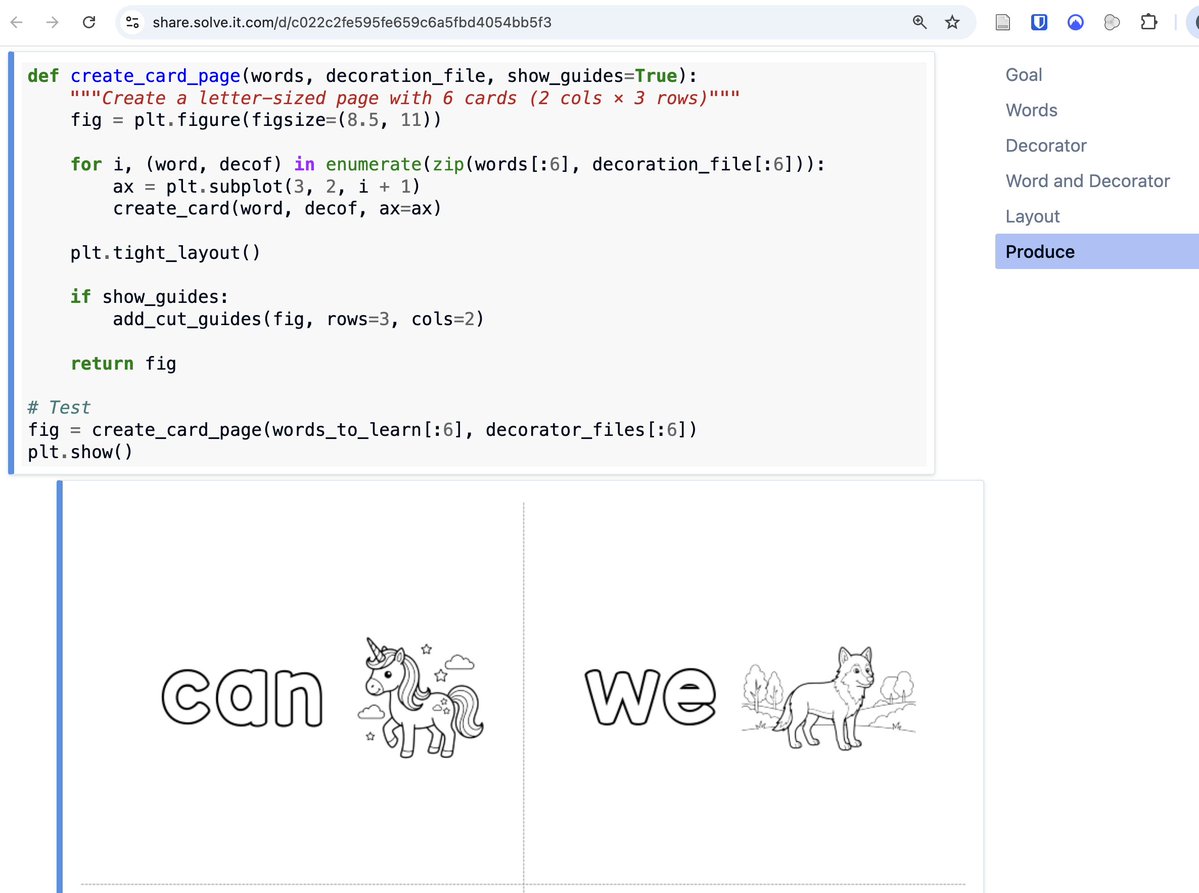

Love to see what our Solveiteers are building. Here's a delightful dialog, from which a little girl will be getting special flash cards to help her learn her 1st sight words, in a game of hide & seek. The full process, which you can customise and re-use: https://t.co/u7505DiZFC https://t.co/JcJwkUD4hL