Your curated collection of saved posts and media

🔥 BRAD GERSTNER: “X is better today than it's ever been. And remember, they have 70% fewer employees than they had the day Elon walked into the building.” https://t.co/wZdyNKU9HM

https://t.co/4O71rLpy9H

BREAKING: Court documents reveal that Australian government war crimes investigators do not even have the NAMES of two individuals Ben Roberts-Smith is alleged to have killed in Afghanistan almost 20 years ago. Nobody has managed to identify these alleged victims - even after $300 million was spent on war crimes investigations over five years. Australian Office of Special Investigations director Ross Barnett already revealed that investigators have: - No crime scenes - No access to the deceased - No bodies - No post-mortem report - No official cause of death - No recovery of projectiles to link to weapons that might have been carried by members of the ADF - No photographs - No site plans - No measurements - No recovery of projectiles - No blood spatter Now we know that after nearly $300 million and 5 years of investigation, they do not even have the NAMES of two alleged victims. If there is no name, no identification, no body - how do we even know they were killed? Does anybody actually think this is fair? Does anybody actually think that a criminal conviction - proved to a criminal standard, beyond reasonable doubt - is remotely possible in these circumstances? Daily Mail: “Two of the five men Ben Roberts-Smith is accused of murdering while serving with the Special Air Service in Afghanistan have never been identified by war crimes investigators. Court documents seen by the Daily Mail show one of the Victoria Cross recipient's alleged victims is described only as 'Person Under Control 1', or alternatively 'Enemy Killed in Action 3'.”

That was the last film I've seen in which a young boy learned something from a man. In which a woman had to become a strong character, rather than getting her powers just for being a woman. In which "good" has to KILL evil instead of understanding it and showing empathy. In which the hero didn't let up for a moment, the goal was clear, and the cost didn't matter. In which violence is inextricably tied to the survival instinct. There is no longer the kind of cinema that inspires, prompts reflection, provides role models and shapes men. There is only blandness, ideological mush and the dumbing down of everything.

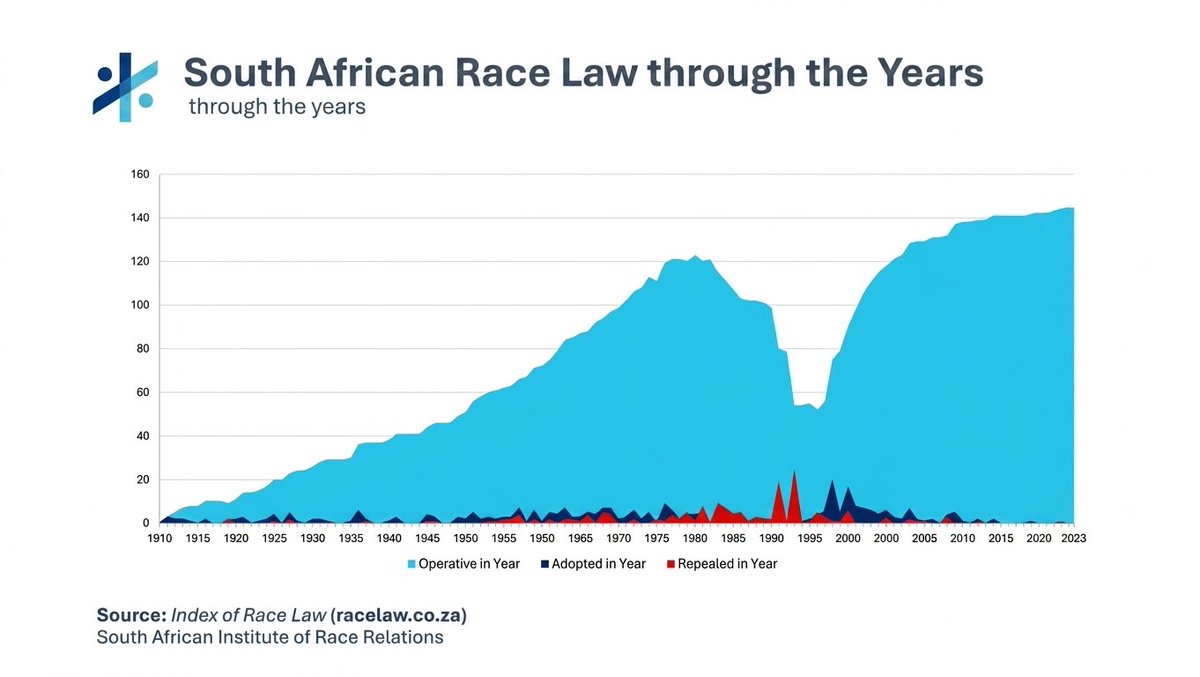

South Africa has more race laws than at the height of apartheid. But it's anti-White, so it's fine https://t.co/kKIHFQeW5f

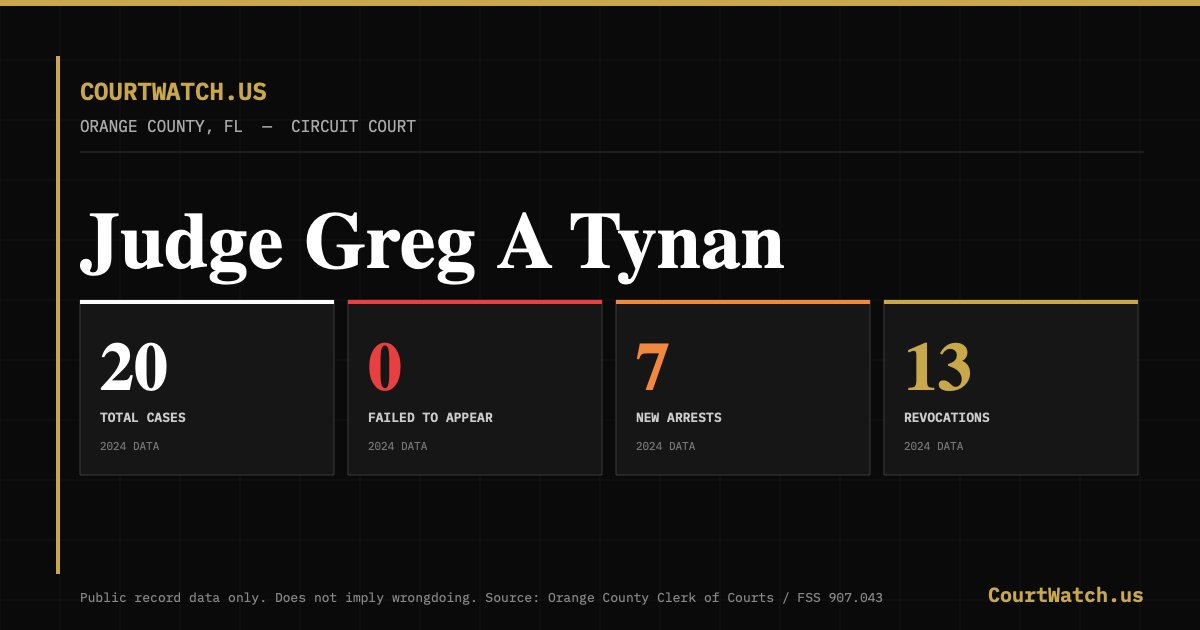

I built https://t.co/R1jAMUfNTv — a free public database for American citizens who deserve safer communities. You can track which judges released defendants who then got rearrested, skipped court, or violated their release conditions. All public records. All free. I started with Orange County FL and will be expanding to all 67 Florida counties and eventually every state in the country. This first batch of info is from 2024 and since public reports are released in March/April for the previous year, data is behind. But I wanted to see if this is plausible. After adding 2024, I'll add 2025 and then figure out how to get real-time data uploaded. It's in beta — would love to know what you think 👇 Numbers don't lie, but criminals do. https://t.co/DfTcJ6XMYn @bennyjohnson @jockowillink @GrantCardone @LauraLoomer @nickshirleyy @j_fishback

Even when AI surpasses the combined intelligence of all humanity, I bet it will still look back at Starship amazed that humans built it https://t.co/34Cx5lPDtT

Elon Musk thinks the entire education system is built on a broken assumption. That every student should learn the same thing. At the same speed. In the same order. At the same time. Musk: “Everyone goes through from like 5th grade to 6th grade to 7th grade like it’s an assembly line. But people are not objects on an assembly line.” The model was designed for a factory economy. Standardized inputs. Predictable outputs. That economy is gone. The assembly line is gone. But the education system still runs on its logic. A student who masters algebra in two weeks sits through eight more weeks because the calendar says so. A student who struggles gets dragged forward because the schedule doesn’t wait. Neither is being served. Both are being processed. Musk: “Allow people to progress at the fastest pace that they can or are interested in, in each subject.” AI doesn’t teach a classroom. It teaches a student. One at a time. Every time. It skips what a student already knows. It finds where they’re stuck and approaches it from a different angle. It adjusts in real time. Not at the end of a semester when the damage is already done. A student obsessed with basketball learns fractions through shooting percentages. A student who builds in Minecraft learns geometry through architecture. The subject doesn’t change. The entry point does. No teacher with thirty students can do this. Not because they lack skill. Because the math doesn’t work. AI doesn’t have that constraint. Musk: “You do not need to tell your kid to play video games. They will play video games on autopilot all day. So if you can make it interactive and engaging, then you can make education far more compelling.” The brain isn’t broken. The format is. Kids learn complex systems and strategic thinking for hours voluntarily. Then walk into a classroom and can’t focus for twenty minutes. That’s not a discipline problem. That’s a design problem. Musk: “A university education is often unnecessary. You probably learn the vast majority of what you’re going to learn there in the first two years. And most of it is from your classmates.” Four years. Six figures of debt. And the real value comes from the people sitting next to you. Not the institution charging you. The degree doesn’t certify knowledge. It certifies endurance. Musk: “If the goal is to start a company, I would say no point in finishing college.” The system was built to train employees. If you’re not trying to be one, it has nothing left to offer you. Every lecture. Every textbook. Every curriculum. Now available instantly. Personalized to any learner. Adapted to any pace. The question isn’t whether the old model survives. It’s how long we keep forcing students through it while the replacement already exists.

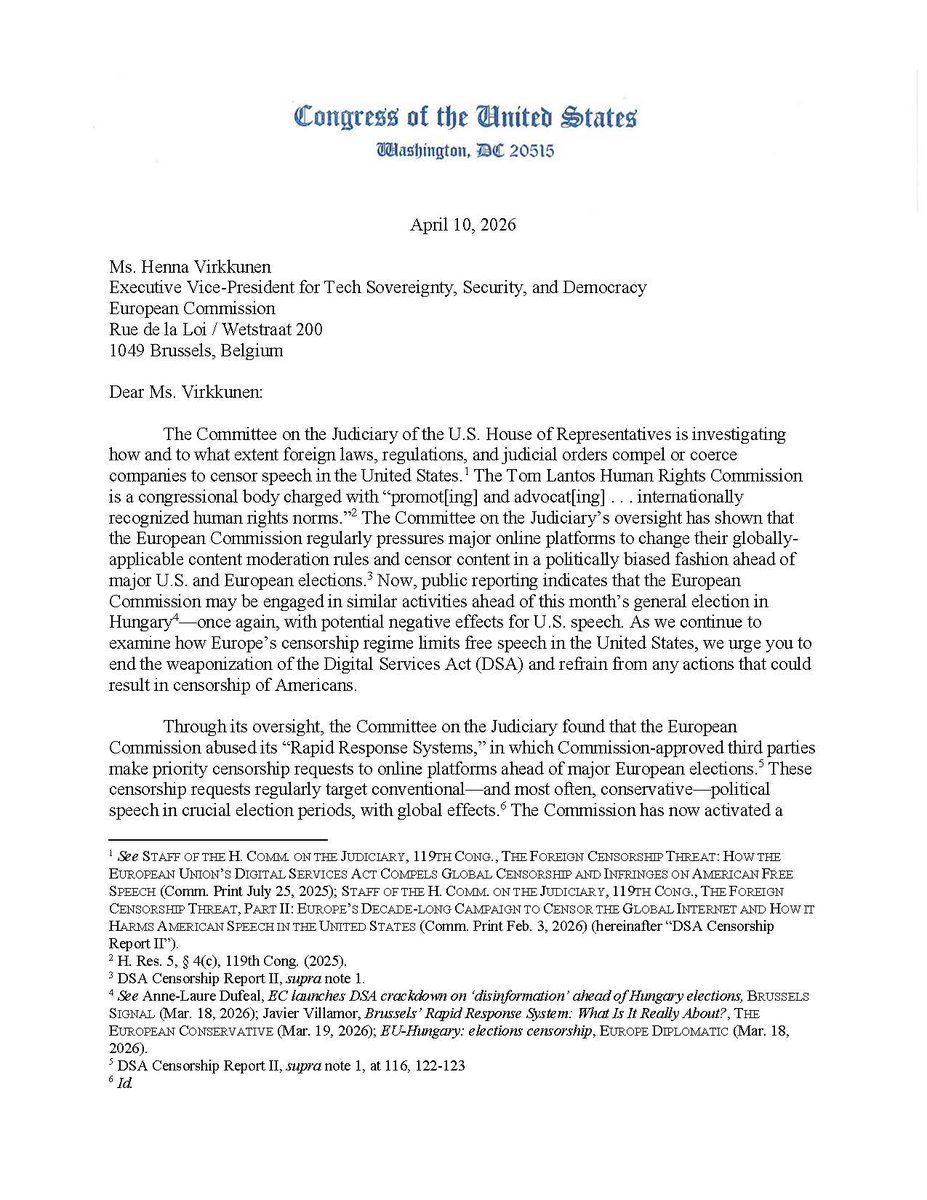

#NEWS: The European Commission uses its vast power to limit online discourse ahead of major elections in the U.S. and Europe. Its actions could affect U.S. speech—including the global removal and demotion of content protected by the First Amendment. Ahead of tomorrow's Hungarian election, Chairman @Jim_Jordan and Rep. Chris Smith wrote to @HennaVirkkunen urging the Commission to refrain from any interference. Read the full letter here ↓

Elon Musk speaks hard truths on what Nelson Mandela actually stood for and how South Africa has completely lost that. Starlink is still blocked in Elon's home country because he is not black. "The vision that Nelson Mandela, a remarkable leader, proposed was for all races to coexist equally in South Africa. Currently, there are around 140 laws that preferentially benefit Black South Africans over others." Mandela fought for absolute equality, but the current system has betrayed that vision. They cannot claim to honor Mandela’s legacy of racial harmony while enforcing 140+ race-based ownership quotas. That is not progress - it is just discrimination wearing a new mask. By actively blocking Starlink over these "discriminatory laws," bureaucrats are literally keeping their own rural schools and communities disconnected from the future.

Is Elon Musk right about Sam Altman? Just read the new Ronan Farrow and Andrew Marantz piece on Sam Altman in The New Yorker, and it is damning. And the answer is yes. It’s a deep dive based on never-before-seen 70-page memos from Ilya Sutskever and interviews with over a hundred people. The reporting revives the 2023 board drama where Altman was fired for not being “consistently candid,” only to be reinstated within days after employee pressure and Microsoft backing. But the real story is the pattern that led to it: repeated claims that Altman misrepresents facts, downplays safety issues, and tells people whatever they want to hear while steering OpenAI away from its original nonprofit safety mission toward aggressive commercialization, government deals, and power consolidation. Basically, he’s a lot like ChatGPT. Dishonest and sycophantic. From Sutskever’s memos and board discussions: “Sam exhibits a consistent pattern of… Lying.” And his direct warning: “I don’t think Sam is the guy who should have his finger on the button.” On the firing call, Altman reportedly said, “This is just so fucked up… I can’t change my personality.” A board member took it as him admitting he lies and isn’t going to stop. Altman later told the New Yorker authors: “In the past, my main flaw as a manager had been my eagerness to avoid conflict. Now I’m very happy to fire people quickly.” On integrity: “Yes, [AI] demands a heightened level of integrity, and I feel the weight of the responsibility every day.” One former colleague summed up the skepticism: “His words were almost certainly bullshit.”

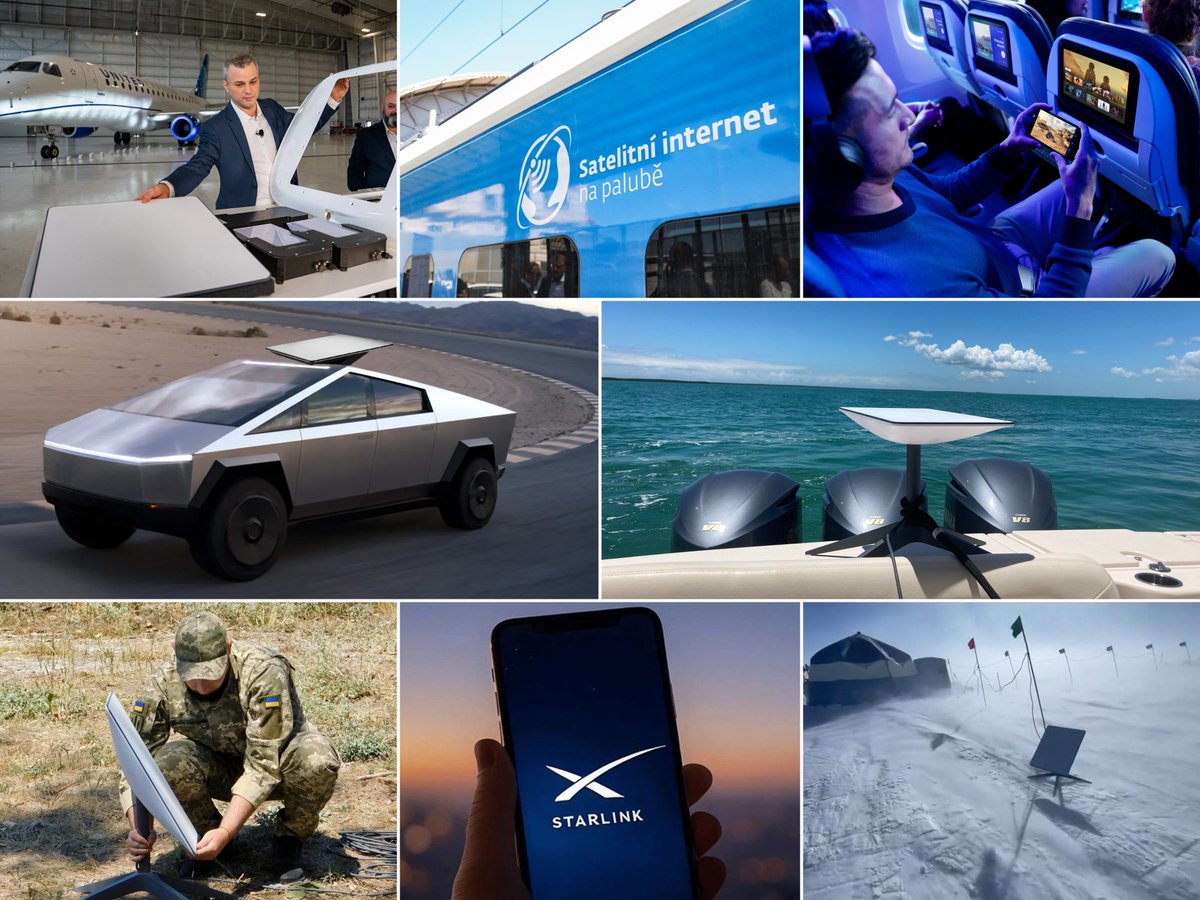

Most people have not even realized it yet, but Starlink just quietly became the invisible backbone of Earth. For decades, high-speed internet was a luxury of geography. If you lived outside a major city or dared to travel, you had no choice but to be disconnected from the world. We literally went from "can you hear me now?" dropped calls in the suburbs to being covered by Starlink internet wherever you move in the world. Just look at what SpaceX has achieved so far: → Free, high-speed WiFi rolling out on thousands of flights, trains, and ships → Now officially live in 150+ countries, territories, and global markets → Direct-to-Cell tech is turning standard smartphones into sat-phones with zero extra hardware → Flawless high-speed internet streaming in the middle of Antarctica → Serving as a critical lifeline for first responders and victims during major natural disasters → Providing unbreakable comms in active warzones and geopolitical conflicts → More than 11 MILLION active subscribers globally as of early 2026 → Eliminating dead zones entirely - if you can see the sky, you can connect. "I think the single biggest thing you can do to lift people out of poverty and help them is giving them an internet connection because once you have the internet connection, you can learn anything for free on the internet, and you can also sell your goods and services to the global market" - Elon Musk. While legacy telecom providers are still struggling to lay cables in the dirt, SpaceX is actively building humanity’s collective nervous system in Low Earth Orbit. SpaceX is showing the world what it actually means to be connected on a scale we never thought possible.

Why is Elon Musk RIGHT to fight South Africa’s racist rules blocking Starlink? Imagine this: Long ago, South Africa had very unfair laws called apartheid. They treated Black people badly and kept them from good jobs and money. When those bad laws ended, the country made new rules (called B-BBEE) to help Black people get a fair share of business. The idea was good – like a big helping hand. But now? For companies like Starlink to sell fast internet, they MUST give away 30% of their business to Black partners. Just because of skin color. Elon Musk was born in South Africa. He left as a teen to chase big dreams. Today, his company SpaceX wants to bring Starlink – super fast satellite internet – to South Africa. But the rules say no unless they give up part of the company. Elon said it right: “Starlink is not allowed because I’m not Black.” SpaceX promised to spend about $30 million (that’s 500 million rand!) to give FREE high-speed internet to 5,000 rural schools. That helps over 2.4 MILLION kids every year learn better, get jobs later, and have a brighter future. Real help for the people who need it most! Starlink already works in about 24 other African countries. Villages there now have internet for school, doctors, and business. South Africa’s villages are missing out because of these racist rules. Elon isn’t asking for special favors. He just wants fair play so Starlink can connect everyone fast. Internet = education, jobs, hope. Why hold back millions of kids over rules that pick by race and color?

I didn't think it could get worse, I was wrong. Watching that illegal animal from Haiti beat a woman to death with a hammer has radicalized me more than I thought possible. Our politicians bear the blame for this. It was an intentional policy choice. The Biden admin opened our border to the criminal filth from the third world knowing that would kill Americans like me and you. The blood is on their hands. Prosecute anyone inside the Biden admin who allowed for this to take place. 13,000 Americans are DEAD because of them. These government officials must be punished. Lock them up.

This 15-minute talk by the creator of Pydantic on how to correctly use MCPs will teach you more about making your AI tools actually work together than everything you've scrolled past this year. Bookmark this & watch, no matter what. Then read the guide below by @eng_khairallah1

https://t.co/UCeC55qdhi

AI is starting to reshape how trips are planned. Travel platforms like Rome2Rio and Omio are integrating directly with OpenAI, allowing users to search routes, compare prices and organize journeys inside ChatGPT. Travel planning is becoming conversational. Instead of searching, people may simply ask and go. https://t.co/RwEk1CR3Va @euronews @Pascale_Davies

🚨OpenClaw just added built-in video, music, and image generation. All in one open source tool. Anthropic cut them off. GPT-5.4 got better. They shipped anyway. What’s new in 2026.4.5: → Video: Alibaba, BytePlus, fal, Google, MiniMax, OpenAI, Qwen, Runway, xAI → Music: Comfy, Google, MiniMax → Image: Comfy, fal, Google, MiniMax, OpenAI → /dreaming mode is now live → Structured task progress → UI + docs in 12 new languages 10 video providers. One open source tool.

OpenClaw 2026.4.5 🦞 🎬 Built-in video + music generation 🧠 /dreaming is now real 🔀 Structured task progress ⚡ Better prompt-cache reuse 🌍 Control UI + Docs now speak 12 more languages Anthropic cut us off. GPT-5.4 got better. We moved on. https://t.co/T3LaSJYOvU

MiniMax Music 2.6 is live. A few things worth knowing: 🎬 Original BGM in minutes No more hunting for "probably fine to use" tracks. Describe your scene, get something fully yours. 🎭 Structure that actually follows your prompt. You can now write "open with tension, build toward awakening, explode into triumph", and the model follows, beat by beat. For the first time, AI music generation feels less like rolling the dice and more like directing. 🎤 Intentional imperfection In lo-fi, indie folk, jazz — the breathiness that makes a track feel human, not generated. Also shipping with 2.6: → First audio in under 20s: write a prompt, take a breath, it's ready → Improved low-mid frequency response: tighter bass for House, Trap, Drum & Bass → Style transfer & remixing: reimagine your own melody in a completely different genre 14-day free global beta starts today (500 songs/day). 👉Try now: https://t.co/oGNTfjD57b

I’ve spent the last two weeks testing Hermes against OpenClaw. With OpenClaw, there are just too many ways for things to break. Hermes is the first agent I’ve used where you actually get the feeling of it "just working." You can now spin up Hermes on Agent37 for just $3.99. • 1-click integrations for 1000+ apps (Gmail, Calendar, Notion, etc.) • Pre-configured LLM (swap any OpenRouter model) • Full GUI access to your files and live browser Demo 👇

When Steve Jobs bought Siri for $200M before the world understood why (2010) https://t.co/bzmsUUmzWC

Built an MCP server so AI agents like Poke @interaction and @openclaw can read my Oura ring data. Integrates into my day and I never have to check the app. The future is your wearable talking directly to your assistant https://t.co/llwtJqr7fm

It really is incredible how wrong the mainstream media has been with their skeptical AI takes over the past three years, while giving awards to the same AI tech journalists whose predictions and takes have been categorically off the mark. Now it looks like they are all going to be wrong again during this current exponential AI agentic compute demand liftoff. Amazing.

@JJColao When meeting readers, I’ve been stunned by how many just regurgitate false narratives and information about AI presented by the mainstream media.

this might be the wildest growth hack i’ve seen in a minute. met the founder of rent a human yesterday. he’s on stage doing a demo, asks for users… i raise my hand. 30 seconds later someone in japan is about to stand in the busiest crossroad holding a sign for finlingo. this isn’t even a joke. this is global distribution on demand. this will actually kill fiverr. 730k users in a month… is insane. not gonna lie, i went on rent a human and dropped a bunch of $1 bounties for reddit posts just to get people talking about finlingo 😭 i’ll post the results in a couple days. shoutout to @AlexanderTw33ts, this is actually insane 🚀

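I'm stunned. My "little lobster" can already hold video meetings with me. Pika AI Self can join Google Meet directly, with a face, a voice, and facial expressions, getting work done while it chats: scheduling meetings, looking things up, joining the discussion live. It also remembers your style and becomes more like you the more you use it. In the video, Shiro AI books a meeting for the boss in 3 seconds and can even join a serious four-person debate: "Does a sandwich count as a hot dog?" This isn't an assistant anymore; it's a digital coworker that can attend meetings. It's still in beta, but it already supports any agent (one-click integration via GitHub). Pika's AI Self is seriously impressive. I just want to say one thing: from now on, can we let AI attend all the meetings that could have been an email?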

slime molds are one of the coolest organisms ever btw. In order to optimally transport nutrients, they've basically learned to solve the shortest path problem. Scientists have leveraged this behavior to do cool things, like replicating the US Interstate Highway system and building unconventional computing applications. On a personal note, I remember I was introduced to slime molds in my college botany class. One of our projects was to grow slime molds, and I was so fascinated I kept growing them for like a year after the class ended, trying to replicate some of these interesting behaviors. It was like a pet for me lol
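
The "shortest path problem" in the post above is the classic graph problem of finding the cheapest route between two points in a network. As a point of comparison only, here is a minimal Python sketch of Dijkstra's algorithm, the textbook way a computer solves it. It is not a model of how a slime mold actually finds its routes, and the network, node names, and tube lengths are hypothetical.

```python
import heapq


def shortest_path(graph: dict[str, dict[str, float]], start: str, goal: str) -> tuple[float, list[str]]:
    """Dijkstra's algorithm: return (total_cost, path) from start to goal.

    graph maps each node to {neighbor: edge_weight}; weights must be non-negative.
    Returns (float("inf"), []) when goal cannot be reached.
    """
    dist = {start: 0.0}          # best known cost to reach each node
    prev: dict[str, str] = {}    # predecessor on the best known route
    heap = [(0.0, start)]        # priority queue ordered by cost so far
    visited: set[str] = set()
    while heap:
        cost, node = heapq.heappop(heap)
        if node in visited:
            continue  # stale queue entry; this node was already settled more cheaply
        visited.add(node)
        if node == goal:
            break
        for neighbor, weight in graph.get(node, {}).items():
            new_cost = cost + weight
            if new_cost < dist.get(neighbor, float("inf")):
                dist[neighbor] = new_cost
                prev[neighbor] = node
                heapq.heappush(heap, (new_cost, neighbor))
    if goal not in dist:
        return float("inf"), []
    # Walk predecessors back from goal to start, then reverse.
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    path.reverse()
    return dist[goal], path


if __name__ == "__main__":
    # Hypothetical network: food sources A-D joined by tubes of different lengths.
    network = {
        "A": {"B": 4, "C": 1},
        "B": {"D": 1},
        "C": {"B": 2, "D": 5},
        "D": {},
    }
    print(shortest_path(network, "A", "D"))  # (4.0, ['A', 'C', 'B', 'D'])
```

The priority queue always expands the closest unsettled node first, which is why the sketch requires non-negative edge weights. On the made-up network, the detour through C and B (1 + 2 + 1) beats both the direct tube A to B and the long tube C to D.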

fungi can solve mazes. slime molds have recreated the Tokyo rail network. no neurons. no brain. zero. we still don’t know how. but sure let’s keep modeling intelligence as “big neural net”

Fortifying #Cloud Security Operations with #AI Driven Threat Detection https://t.co/2NQ9gg5vKu @DZoneInc #CyberSecurity Cc @roxananasoi @jblefevre60 @BroadenView @SpirosMargaris @TamaraMcCleary @LaurentAlaus https://t.co/imin2zc4lx

Steve Jobs once called Warren Buffett for advice on stock buybacks. Buffett told Jobs—“There’s just 2 questions” to ask: 1. Do you have enough cash to build the business the way you see it over the next 5-10 years? 2. Is your stock selling for less than it’s worth? Jobs answered Yes to both. Buffett said: “Well, you’ve answered your own question.”

Demis Hassabis just described the moment medicine stops treating disease and starts deleting it from the source code. For all of human history, doctors have fought symptoms. The tumor. The organ failure. The collapse. Always downstream. Always after the damage has already started. The cause sits upstream. Written into the DNA. Ninety-eight percent of the human genome sits in non-coding regions. For decades, science understood the genes but couldn’t read the vast dark territory between them. That’s where most disease hides. Hassabis: “It takes the big, long genetic sequences and then it tries to predict, if you made a mutation to this particular single letter, single position in the genetic sequence, will that be a harmful mutation that might cause disease, or is it benign?” AlphaGenome reads your entire genetic sequence and identifies the exact letter that’s corrupted. Not a region. Not a probability range. A single position in a three-billion-letter sequence. That alone would be a generational breakthrough. But most diseases aren’t that clean. Hassabis: “What if they’re multigenic diseases where there’s cascades of mutations causing the problem? Those are even harder to detect, but actually perfect for sort of AI.” One mutation is hard enough to find. A cascade of mutations interacting across the genome is a problem no human researcher can hold in their head at once. Three billion data points. Compounding errors across all of them. The human brain cannot solve that. AI doesn’t solve it either. It maps it. All of it. At once. The most devastating diseases on Earth. The ones medicine has called untreatable for generations. They are not mysteries to the algorithm. They’re compute problems. But finding the error was only ever half the equation. You also need the ability to fix it. That tool already exists. CRISPR is a molecular scalpel. It cuts DNA at exact positions. The limitation was never the editing. It was knowing exactly where to cut. 
Hassabis: “A kind of combination of things like AlphaGenome and CRISPR could be incredibly powerful.” AI reads the code. CRISPR rewrites it. One finds the mutation. The other corrects it at the source. Not managing symptoms. Not slowing progression. Deleting the error from the genome. The implications go beyond treatment. A disease corrected at the genetic level doesn’t just disappear from one patient. It disappears from their bloodline. The read access is here. The write access exists. The merge is inevitable. The era of accepting a broken genetic hand is ending. We stopped being passengers in our own biology.

The Infinity Machine, a new book by CFR expert @scmallaby, follows DeepMind founder Demis Hassabis and the quest for superintelligence. Out now: https://t.co/F9edf9Wcyd

Warren Buffett: "Stick with businesses that you can understand and use the government bond rate [as the discount rate]." "When you can buy something you understand well at a significant discount, then you should start getting excited." https://t.co/WVXp5SX4OS
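
Buffett's first quote describes a discounted-cash-flow valuation. You estimate the cash a business you understand will produce, discount each year back to today at the government bond rate, PV = sum of CF_t / (1 + r)^t, and get excited only when the price is well below that value. Below is a minimal Python sketch of that arithmetic. It assumes a hypothetical 4% bond yield and a made-up stream of 100 a year for ten years, ignores any value beyond year ten, and illustrates the formula only; it is not Buffett's own worksheet.

```python
def present_value(cash_flows: list[float], discount_rate: float) -> float:
    """Discount yearly cash flows (first entry = one year from now) back to today.

    PV = sum(CF_t / (1 + r) ** t) for t = 1, 2, ..., n
    """
    return sum(cf / (1 + discount_rate) ** year
               for year, cf in enumerate(cash_flows, start=1))


def discount_to_value(price: float, intrinsic_value: float) -> float:
    """How far the price sits below the estimated value, as a fraction.

    0.25 means the price is 25% below the estimate; negative means it is above.
    """
    return 1 - price / intrinsic_value


if __name__ == "__main__":
    bond_rate = 0.04                 # hypothetical government bond yield
    cash_flows = [100.0] * 10        # hypothetical: 100 a year for ten years
    value = present_value(cash_flows, bond_rate)
    print(round(value, 2))                              # 811.09
    print(round(discount_to_value(600.0, value), 2))    # 0.26
```

Under these made-up inputs the ten cash flows are worth about 811 today, so a price of 600 is roughly a 26% discount. In this sketch the conservatism comes from demanding a large discount to the estimate rather than from raising the discount rate above the bond yield.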

Jeff Bezos explains why Buffett stands out as an investor. The primary reason is that Buffett is able to think more long-term when it comes to investing. Save this to stay ahead of the curve. https://t.co/VBPZtihyVm