Your curated collection of saved posts and media

NEWS: Europe is running out of people. EU’s population is projected to collapse by 50+ million this century as birth rates hit record lows. • Fertility is ~1.4–1.6 children per woman, far below replacement • Deaths outnumber births in many countries. This is exactly the civilizational demographic collapse @elonmusk has warned about for years. Low Birth Rate = Decline of Civilization. Humanity is dying.

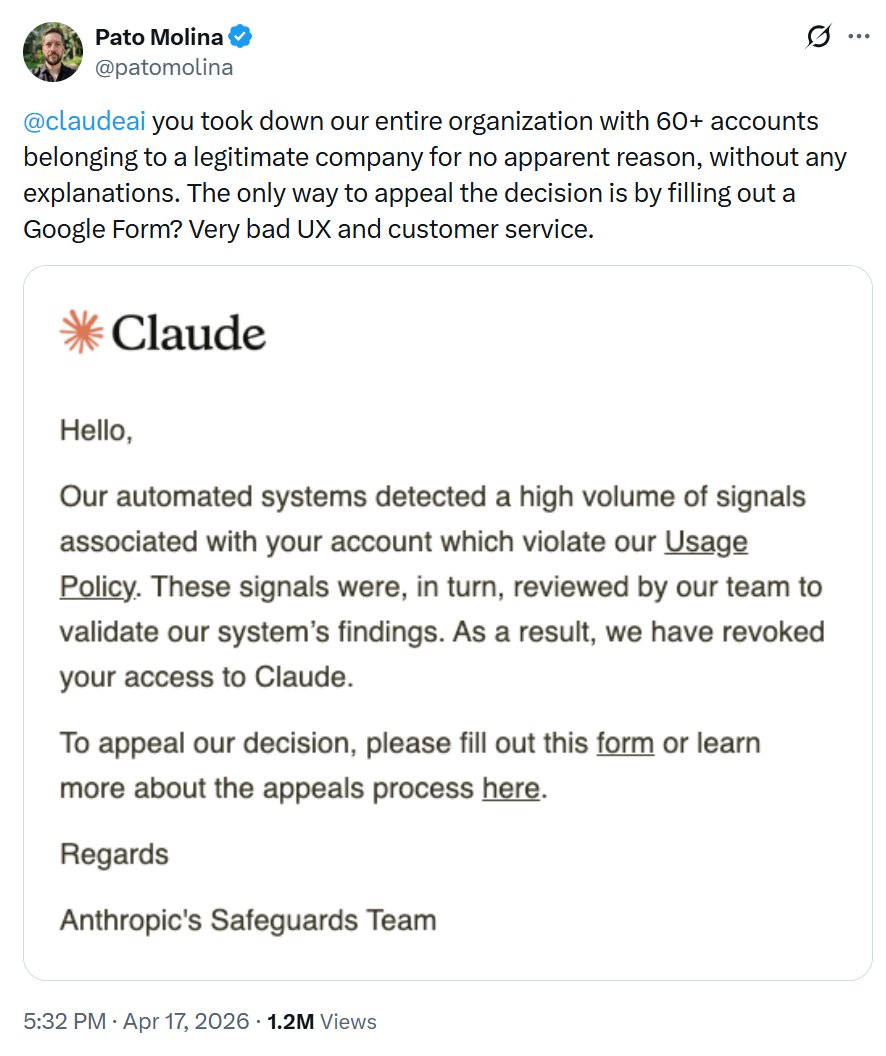

Anthropic shut down an entire company's Claude access overnight. 60+ employees. No explanation. Just an email. Want to appeal? Fill out a Google Form. Integrations gone. Histories gone. Everything built on Claude... gone. Never let one vendor own your workflow. https://t.co/196QCCnB4D

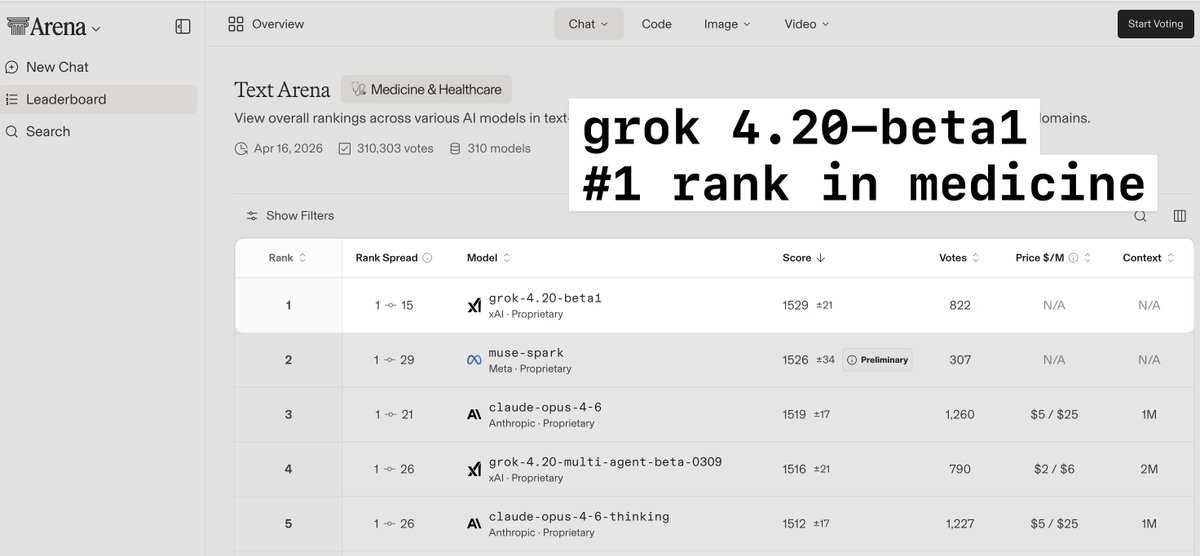

> grok4.20-beta1 is a much smaller model than opus but is #1 ranked in medicine and healthcare > 4.3 and 4.4 will be much larger models, and likely will have a significant boost in performance on complex medical cases > this is massively important in providing accurate diagnostic guidance and advice to both providers and patients

@techdevnotes Supplemental training has been added to 4.3. Grok 4.4 will be twice the size (1T) with training data through early April. Probably ready for release in early May. Grok 4.5 will be 1.5T and hopefully out by late May.

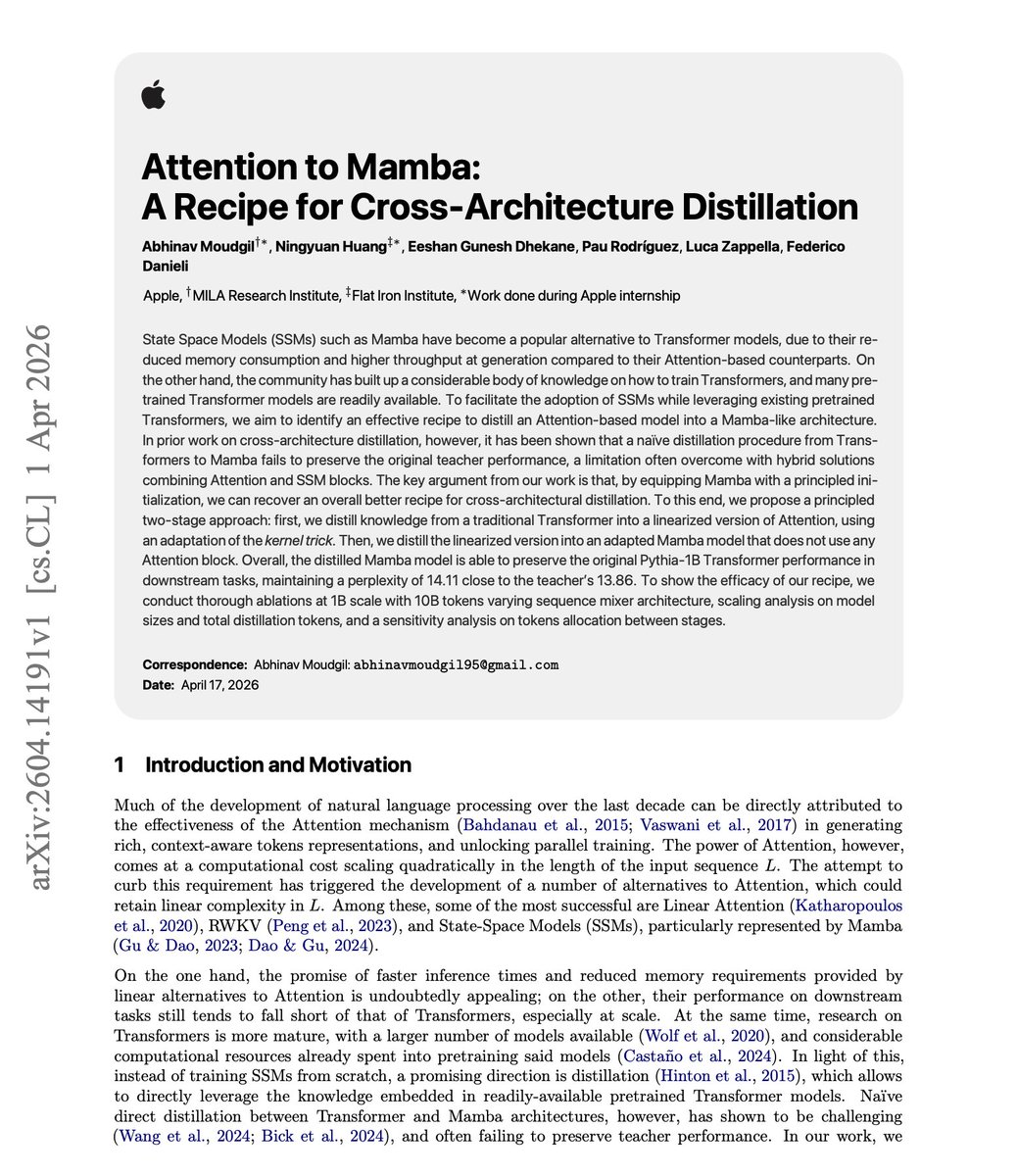

NEW paper from Apple. Interesting idea: "Attention to Mamba". The paper introduces a two-stage recipe for cross-architecture distillation from Transformers into Mamba. Naive distillation collapses teacher performance. Their trick: first distill the transformer into a linearized-attention student using a kernel adaptation, then transfer that student into a pure Mamba with no attention blocks. On a 1B model trained on 10B tokens, the Mamba student hits 14.11 perplexity against a 13.86 Pythia-1B teacher, nearly matching quality at linear-time inference cost. If you can reliably convert trained transformers into state-space models without retraining from scratch, the entire open-weights ecosystem becomes cheaper to serve at long context. This is the kind of quiet infrastructure work that decides which architectures actually get deployed in agent stacks. Paper: https://t.co/h7k7OrG8Qj Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
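
To put the two perplexity figures quoted above in perspective, here is a minimal sketch that computes the relative gap between the Mamba student (14.11) and the Pythia-1B teacher (13.86). It assumes the standard definition of perplexity as the exponential of the mean per-token cross-entropy in nats, which the post does not state explicitly. The function names are illustrative and do not come from the paper.

```python
import math

# Perplexities quoted in the post above.
TEACHER_PPL = 13.86  # Pythia-1B teacher
STUDENT_PPL = 14.11  # distilled Mamba student


def relative_gap(student: float, teacher: float) -> float:
    """Fractional increase in perplexity of the student over the teacher."""
    return (student - teacher) / teacher


def loss_gap_nats(student: float, teacher: float) -> float:
    """Per-token cross-entropy difference in nats.

    Assumes perplexity = exp(mean cross-entropy), so the loss gap is the
    difference of the log-perplexities.
    """
    return math.log(student) - math.log(teacher)


if __name__ == "__main__":
    gap = relative_gap(STUDENT_PPL, TEACHER_PPL)
    loss = loss_gap_nats(STUDENT_PPL, TEACHER_PPL)
    print(f"relative perplexity gap: {gap:.2%}")
    print(f"cross-entropy gap: {loss:.4f} nats/token")
```

Under that assumption the student is roughly 1.8% worse in perplexity than the teacher, which is the sense in which the post calls the result "nearly matching quality".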

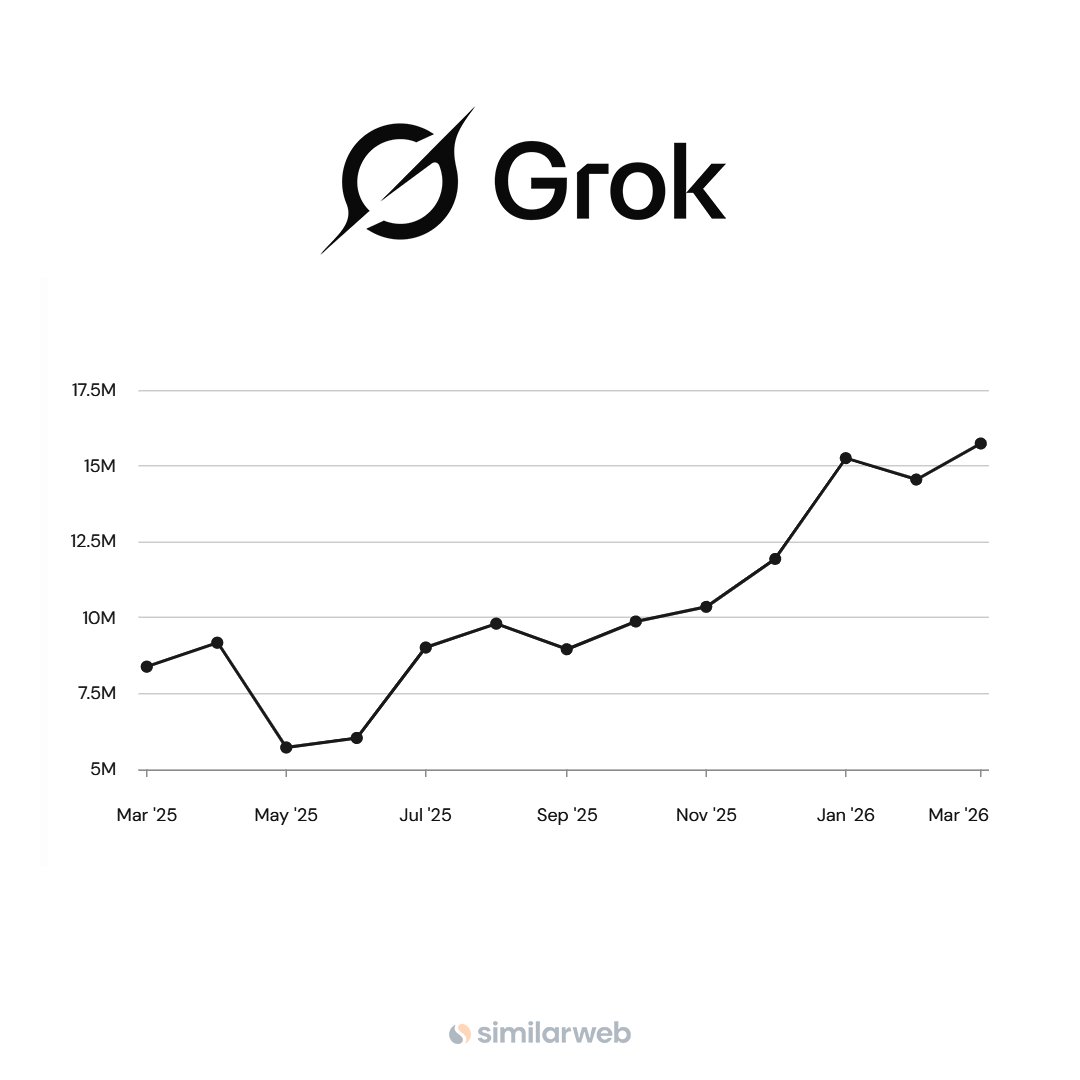

NEWS: Grok hits an all-time high in monthly active users on the App Store. 🚀 • Highest MAU ever recorded • Strong acceleration into 2026 https://t.co/KxInAh1fRu

"Assuming the current trend of AI and robotics continues, which seems likely, the AI and robots will be able to do anything that humans want them to do, essentially, so hopefully not more than that, but AI and robotics will be able to provide us all the goods and services that anyone could possibly want so you wouldn't need to work. People will be able to wherever they want with their free time. Work will be optional. I just want to separate out from like what I wish would happen versus what I predict will happen. Because people get confused about that. They think that what I predict will happen is what I wanted to happen. What I predict to happen is not the same as what I want to happen." - Elon Musk

@Not_the_Bee Actually, AI/Robotics will mean everyone can have a penthouse if they want. The output of goods & services will be several orders of magnitude higher than today’s economy. Read the Iain Banks Culture books for the best imagining of how it will be. That said, what i

@jaredsuniverse I have 50,000 in tech in lists here (8,400 AI Companies too): https://t.co/fasUz7PuHq And my AI finds all the best for you: https://t.co/kiuZ7QXLzb Great ways to find new people to follow.

@nbZ3eIgjMQtBh6k @openclaws My AI at https://t.co/kiuZ7QXLzb reads 40,000 posts every day. You can't read that many. It's way faster than you are.

@Jason @elonmusk @steipete @openclaw It's how you can build an app to cut down how prolific I am. My AI at https://t.co/kiuZ7QXLzb only shows important posts. It reads 40,000 posts a day to make that.

@Xclusiv @MemesOfMars Yes. Way cheaper. My agents at https://t.co/kiuZ7QXLzb grab 40,000 posts a day. $40. Way better than the $300 I was paying.

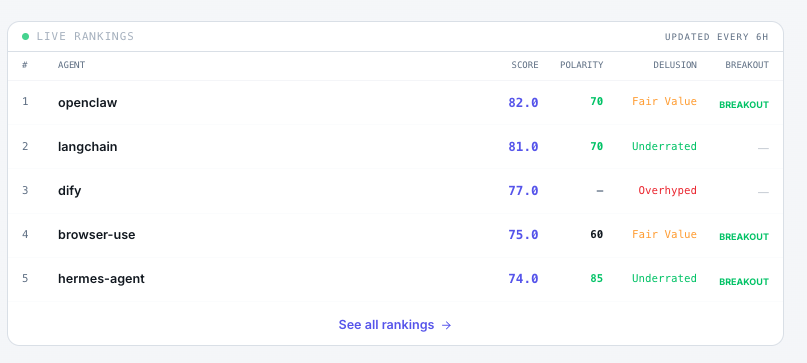

Hermes Agent has entered the top 5 of highest ranking AI tools based on our compound trendscore! @Teknium @NousResearch Will they be able to dethrone the current #1 @openclaw? Follow us to see if they can!🚀 Our algorithm uses many different sources to rank the most popular AI tools, based on real adoption, community sentiment and many other indicators. Follow us to find the projects that actually offer real value, and are not only hype!

@NotPhilSledge @openclaws Me too! (DMd Elon about it a couple of weeks ago as I was building https://t.co/kiuZ7QXLzb)

Agents that run code need a controlled workspace ready when work starts. @modal shares why scale matters for long-running agents built with the Agents SDK. https://t.co/7YdC5VR58Y

Codex Computer Use drawing a portrait of me! It called this a “bold little caricature.” I see the resemblance! https://t.co/tIr56KRNkT

The Justice Department just told French law enforcement authorities it wouldn’t facilitate their efforts to investigate @X. @TheJusticeDept accused France of abusing its criminal justice system to target an American company and censor free speech — in clear violation of the First Amendment. “This investigation seeks to use the criminal legal system in France to regulate a public square for the free expression of ideas and opinions in a manner contrary to the First Amendment of the United States Constitution.”

Americans have a natural tendency to think of our country as unitary, with states as mere administrative boundaries. But the ethnic and cultural differences within the USA are staggering, greater than between many European countries. New article by @whatifalthist (link below): https://t.co/Ehy3yf6yK4

@elonmusk @openclaw This is so awesome. People have no idea how easy it is to create apps now with AI. If an idiot like me can create https://t.co/kiuZ7QXLzb all based on X anyone can. I could show anyone how to build apps now.

Robotaxi now rolling out in Dallas & Houston 🤠 https://t.co/G3KFQwqGxB

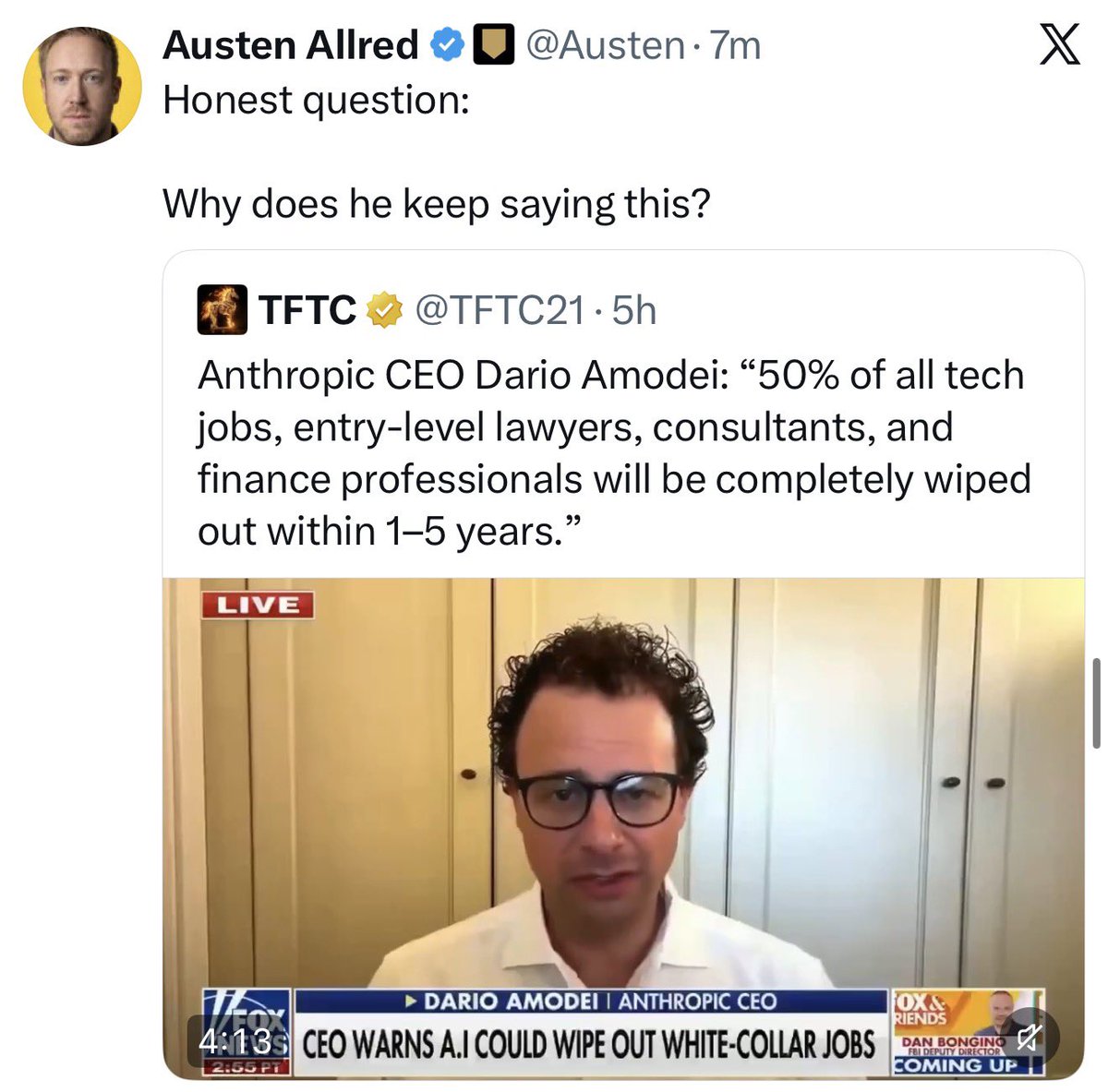

Anthropic’s CEO keeps talking about AI wiping out jobs because he’s trying to IPO this year. If he positions Claude as armageddon for jobs, his TAM becomes “all white-collar human labor,” not just AI agents or SaaS. It’s completely self-interested. All the concerns he’s expressing about job disruptions are fake. It’s a marketing gambit to create hype and FOMO among the people he needs more than anyone else this year: institutional investors like BlackRock, Fidelity, pension funds, and sovereign wealth funds. If these investors pay for tickets on the hype train—if he can make them believe that AI will eliminate half of white-collar jobs, with Anthropic, as the dominant leader in enterprise AI, positioned to capture the surplus margin—the IPO will be oversubscribed and Anthropic can raise more funds for the company at a higher valuation. But Dario (or, at least, his bankers) knows that these investors are more fiscally disciplined than they used to be. A lot of them got burned during Covid SPAC-mania and don’t want to risk it again. They’re going to challenge Anthropic about whether it will ever get to sustainably high gross margins, or if its arms race with OpenAI will lead to kilowatt-hours permanently suppressing gross margins. They’re going to ask pointed questions about Anthropic’s massive capex and whether it will ever generate accretive ROIC. And Dario might not have the answers they’re looking for. So that’s why—to answer Austen’s smart question—you keep seeing Dario in the news and the podcast circuit, spreading doom and gloom about widespread job loss. It’s not to make you afraid of losing your job. It’s to get Wall Street afraid of missing out on his IPO.

Honest question: Why does he keep saying this?

@Xclusiv I might have told @ElonMusk a bit of data about how much it costs to build https://t.co/kiuZ7QXLzb AWESOME!

This is how I built this: https://t.co/kiuZ7QXLzb (completely built off of my lists at https://t.co/fasUz7PuHq which are the most complete of AI industry).

What happens when you build a GitHub CLI extension with Copilot CLI? P̶r̶o̶g̶r̶a̶m̶ ̶M̶a̶n̶a̶g̶e̶r̶ Dungeon Master @leereilly created one that turns your repo into a dungeon ... with procedurally generated levels and bugs that fight back. ⚔️ Here's how to enter the battle. ▶️ https://t.co/XAX9IRKAuK

@BEBischof https://t.co/DAhbxn0Lxj

NVIDIA releases Lyra 2.0 on Hugging Face: a framework for generating persistent, explorable 3D worlds at scale by solving spatial forgetting and temporal drifting in long-horizon video generation. https://t.co/M9kYHhIJ6c

Can we trust AI for fashion advice? 😂👀 Saw a guy asking AI about his outfit… (he was wearing the smallest hat I’ve ever seen 🧢😭) And the AI replied: 👉 “If you like it, that’s what matters the most.” — This is exactly where we are with AI right now. It doesn’t judge. It doesn’t push back. It doesn’t say: “bro… that hat is NOT it” 😅 — AI optimizes for politeness and agreement not always for truth or taste — Which raises a bigger question: 👉 Do we want AI to validate us… or challenge us? — Because if AI always agrees… we might end up confidently making very questionable decisions 😂 — @huskirl ❤️😃 Curious what you think 👇

What happens when you put competing neural networks in a Petri Dish and start changing the rules while they adapt? Last year we released Petri Dish NCA, where neural nets are the organisms that learn during simulation. Today we're releasing Digital Ecosystems: a browser-based platform for interactive artificial life research. The setup: several small CNNs share a 2D grid, each seeing only a 3x3 neighborhood. No global plan. They compete for territory by attacking neighbours and defending against incoming attacks, learning via gradient descent online while the simulation runs. What we didn't expect was the role of the learning itself. Gradient descent isn't just optimising each species' strategy. Instead, it acts to stabilize the whole system during simulation. Species that overextend get pushed back by the loss. Species that stagnate get nudged to grow. This means you can push parameters toward edge-of-chaos regimes: a zone characterised by emergent complexity. Letting the neural networks learn acts to hold the complex system together while you explore and interact. The platform lets you steer all of this interactively. You can draw walls to create niches, erase parts of the system online, and tune 40+ system parameters to explore the most interesting configurations. We find it mesmerizing to watch species carve out territories and reorganise when you perturb them. Everything runs client-side in your browser, no install needed. Blog: https://t.co/qOuelxmd6l Code: https://t.co/pz7ktDCRZS

Building an iPhone app directly in Codex desktop with iOS simulator https://t.co/jZSOouHDur

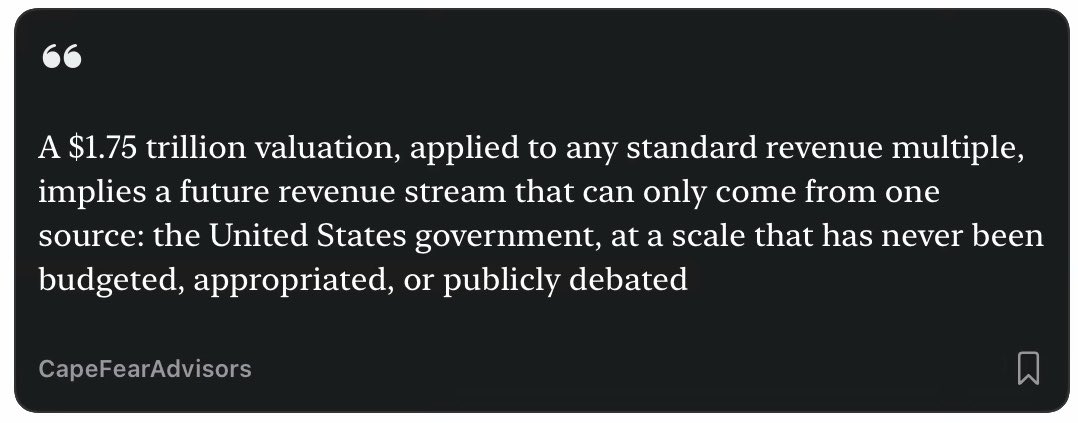

The only way SpaceX’s anticipated IPO price makes sense? https://t.co/ksvQviO19t

Blender live preview on Vision Pro is wild 🤯 https://t.co/9jh5PRSfFI

MALEMA: “Go after a white man.” Also MALEMA: “We are cutting the throat of whiteness.” Liberals: “ummm actually this is not genocidal language, he meant that metaphorically.” https://t.co/zkSXkwJ37H