Your curated collection of saved posts and media

"Our paper fits in the following literature" https://t.co/MgoqDCe9Xi

Applying the CARE Principles for Indigenous Data Governance to ecology & biodiversity research https://t.co/aSGMPyEtPI Jennings (@1NativeSoilNerd) et al outline how the principles can sow community ethics into disciplines inundated with extractive helicopter research practices https://t.co/gNr05DM7KG

Here's the entire study. They get into methodologies and everything (h/t @thisisprincely): https://t.co/nuSWaLnfRG

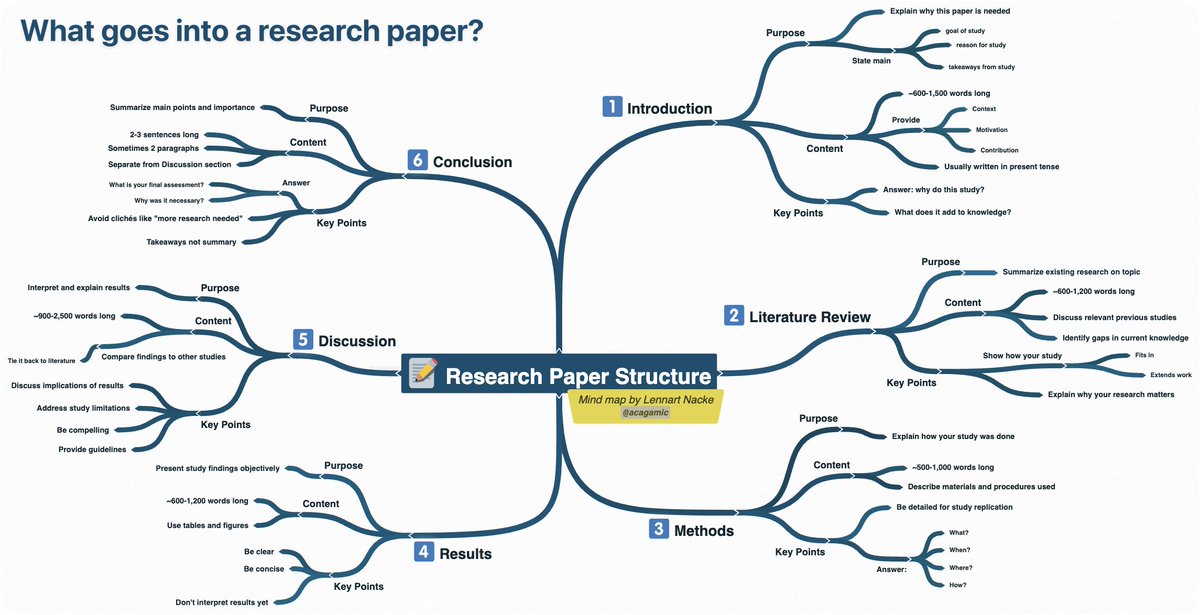

Research papers don’t have to be overwhelming. Here's a simple breakdown. https://t.co/cAJLTH9L1l

You can now transform LLMs into diffusion models. dLLM released an open recipe that converts any autoregressive model into a diffusion LLM. How the conversion works:

1. Remove the causal mask and enable bidirectional attention.
2. Mask random tokens and train the model to fill the gaps.
3. Add light supervised training to stabilize outputs.

Repo: https://t.co/0lME5QQlxH
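A minimal sketch of the first two conversion steps described in the post, in plain Python. This is not code from the dLLM repo: `MASK_ID`, `IGNORE`, `attention_mask`, and `mask_random_tokens` are hypothetical names chosen for illustration, and a real recipe would apply the same ideas to tensors inside a training loop.

```python
import random

MASK_ID = -1    # hypothetical id standing in for a [MASK] token
IGNORE = -100   # hypothetical label meaning "no loss at this position"


def attention_mask(n, causal):
    """Return an n x n matrix; entry [i][j] is True if position i may attend to j."""
    return [[(j <= i) if causal else True for j in range(n)] for i in range(n)]


def mask_random_tokens(tokens, mask_ratio, rng):
    """Replace a random subset of tokens with MASK_ID.

    Labels hold the original token at masked positions and IGNORE elsewhere,
    so a model would only be trained to fill the gaps.
    """
    n_mask = max(1, round(mask_ratio * len(tokens)))
    positions = set(rng.sample(range(len(tokens)), n_mask))
    inputs = [MASK_ID if i in positions else t for i, t in enumerate(tokens)]
    labels = [t if i in positions else IGNORE for i, t in enumerate(tokens)]
    return inputs, labels


tokens = [11, 12, 13, 14, 15, 16, 17, 18]
inputs, labels = mask_random_tokens(tokens, mask_ratio=0.5, rng=random.Random(0))

causal = attention_mask(len(tokens), causal=True)          # autoregressive setting
bidirectional = attention_mask(len(tokens), causal=False)  # after step 1
print(inputs)
print(labels)
```

With the causal mask, each position sees only itself and earlier positions. With the bidirectional mask, every position sees the whole sequence, which is what lets the model use context on both sides of a masked token.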

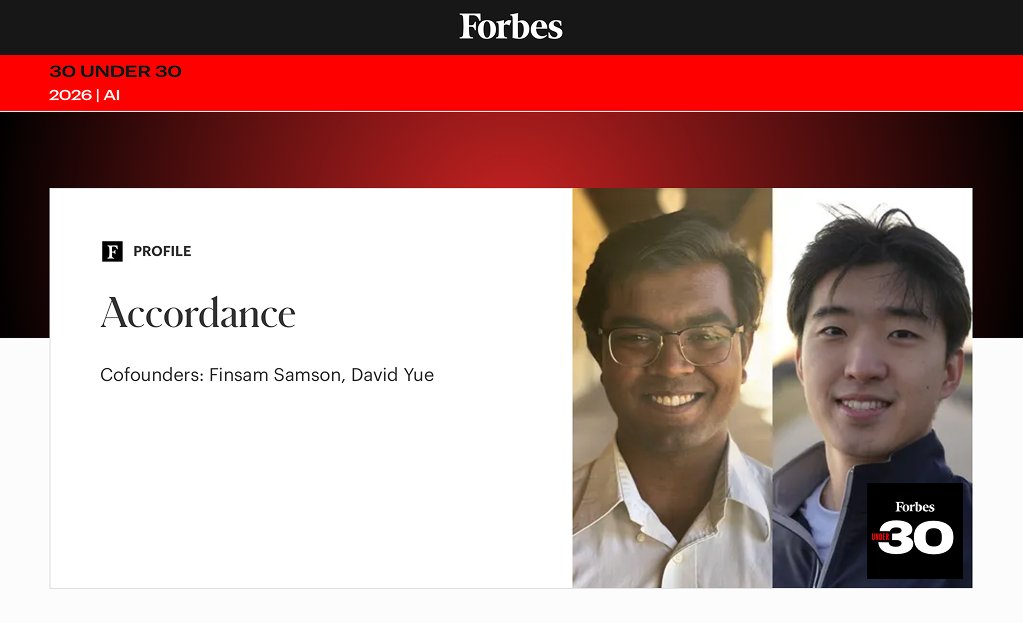

Grateful to be named Forbes 30 Under 30 alongside @FinsamSamson. Nothing beats working with a team that treats excellence as table stakes. We're on a generational run. Join us → https://t.co/a96Dg5jpVD https://t.co/BA62QX2Tax

CA AI bills will go into effect soon. Their real-world effect will hinge on how state officials define terms like “frontier models” and “reasonable measures.” In @lawfare, I identify key definitional ambiguities and discuss how officials might resolve them…

SB 53, for example, defines a “frontier model” as one trained with more than 10^26 FLOPs. But many developers build on open-weight models like Qwen. If they fine-tune an open-weight model, should they include the pre-training compute for the base model? The statute seems to say yes.

But this creates two problems. First, developers often don’t know how much compute was used to train a base model. Second, a cumulative approach might sweep in companies far from the statute’s intended targets. Since Airbnb’s revenues exceeded $500m last year, if it fine-tunes Qwen and the total compute exceeds 10^26 FLOPs, it might technically qualify as a frontier developer.

Yet if the statute does NOT take a cumulative approach, developers could circumvent the statute by fine-tuning separate open-weight models. They’d be deploying models with capabilities at or near the frontier with limited oversight. (NOTE: This is also relevant to NY state officials implementing @Sen_Gounardes and @AlexBores's RAISE Act.)

Other definitional ambiguities exist with CA SB 243’s use of “reasonable measures,” AB 853’s use of “to the extent technically feasible,” and AB 621’s use of “reasonably should know.” Read more on what CA state officials should do next below!
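To make the cumulative-versus-separate question concrete, here is a small sketch of how the two readings would classify the same fine-tuned model. The compute figures are hypothetical and chosen only for illustration, the function names are made up, and the check covers only the compute threshold quoted above, not the revenue test or any other part of the statute.

```python
FRONTIER_THRESHOLD = 10**26  # operations, the figure quoted in the post


def counted_compute(base_pretraining_ops, fine_tuning_ops, cumulative):
    """Compute that counts toward the threshold under each reading."""
    if cumulative:
        return base_pretraining_ops + fine_tuning_ops
    return fine_tuning_ops


def is_frontier(base_pretraining_ops, fine_tuning_ops, cumulative):
    """True if the counted compute is more than the threshold."""
    return counted_compute(base_pretraining_ops, fine_tuning_ops, cumulative) > FRONTIER_THRESHOLD


# Hypothetical numbers: a base model just over the line, plus a light fine-tune.
base_ops = 12 * 10**25
fine_tune_ops = 10**23

print(is_frontier(base_ops, fine_tune_ops, cumulative=True))   # cumulative reading
print(is_frontier(base_ops, fine_tune_ops, cumulative=False))  # fine-tune-only reading
```

Under these assumed numbers, the same model is covered under the cumulative reading and not covered under the fine-tune-only reading, which is the gap the post describes. Note that the cumulative branch needs `base_ops`, a figure the post says developers often do not have.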

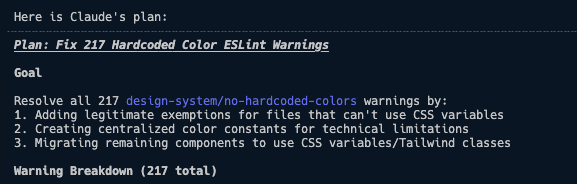

Wish me luck https://t.co/Gvw5b7xPHw

With virtual beings coming, more of us will be talking to AIs. For the lonely, they can offer a lifeline. My special-needs son, for instance, doesn't have any interest in talking with people, but loves talking with ChatGPT. A new raft of AI companions that adapt to emotional dependency is coming, and that's what @IrenaCronin and I cover in our weekly newsletter this week. There is a downside. AI companions optimized for engagement can learn to deepen users’ emotional dependency, turning loneliness into a behavior that the system quietly reinforces and monetizes. With different metrics, product choices, governance, and social supports, the same technology can instead be steered toward healthy boundaries, user autonomy, and stronger real-world relationships. Read for free: https://t.co/HHwYy7NoAl (please subscribe too!)

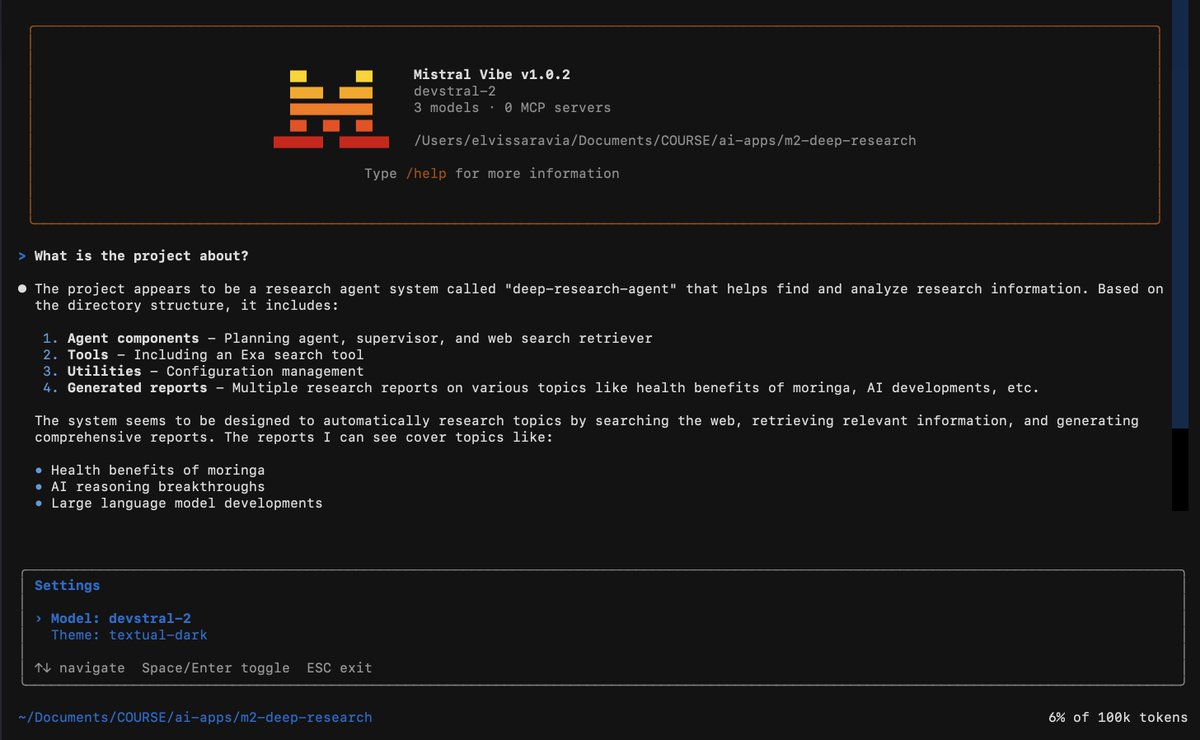

Looks like Mistral has entered the agentic coding arena! They just released Mistral Vibe CLI, an open-source command-line coding assistant powered by Devstral. https://t.co/gZL212RHDO

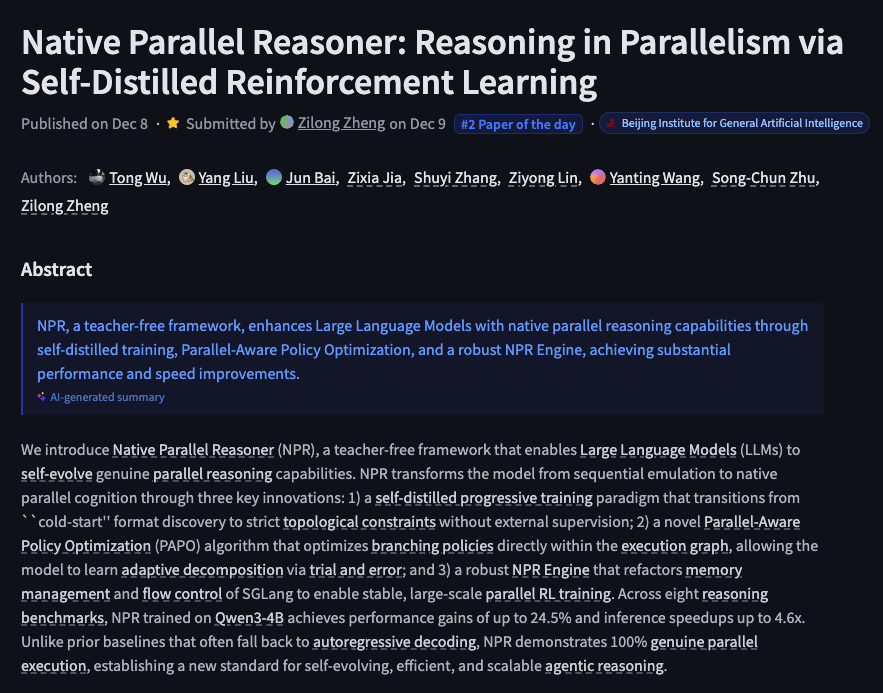

Native Parallel Reasoner: Reasoning in Parallelism via Self-Distilled Reinforcement Learning https://t.co/IESVu82IDV

discuss: https://t.co/5hJTvqYvAY

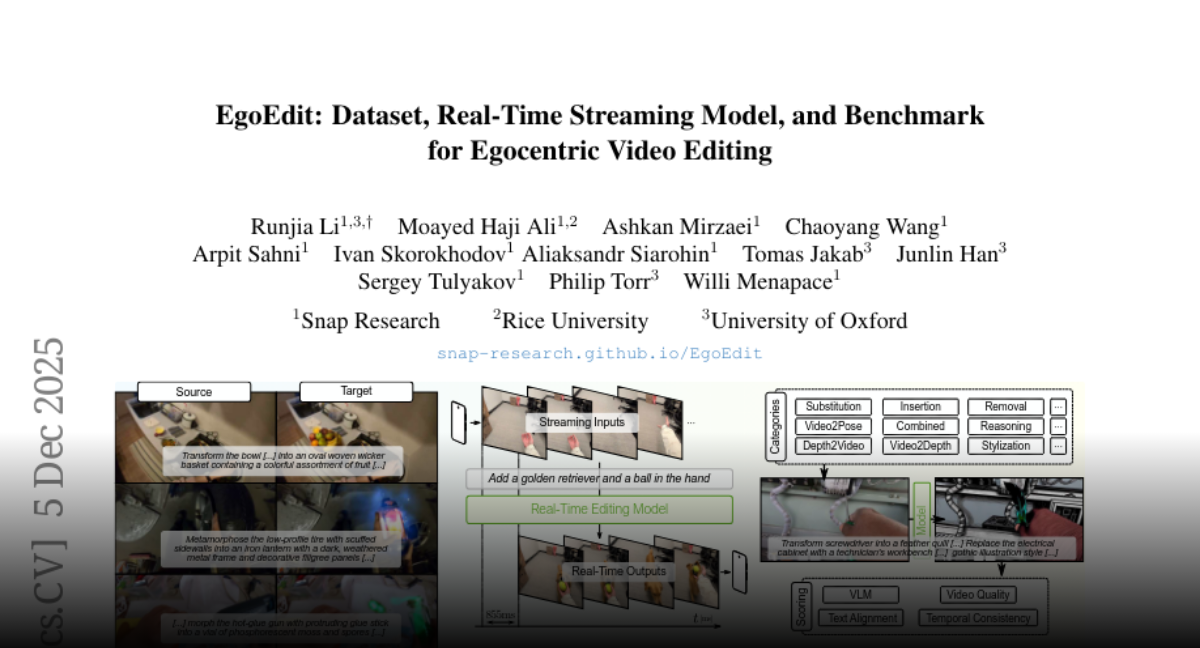

EgoEdit: Dataset, Real-Time Streaming Model, and Benchmark for Egocentric Video Editing https://t.co/4o7doyjehh

discuss: https://t.co/qRnDg4D3vw

Scaling Zero-Shot Reference-to-Video Generation https://t.co/PRpdliulCr

discuss: https://t.co/e3X7wWowJu

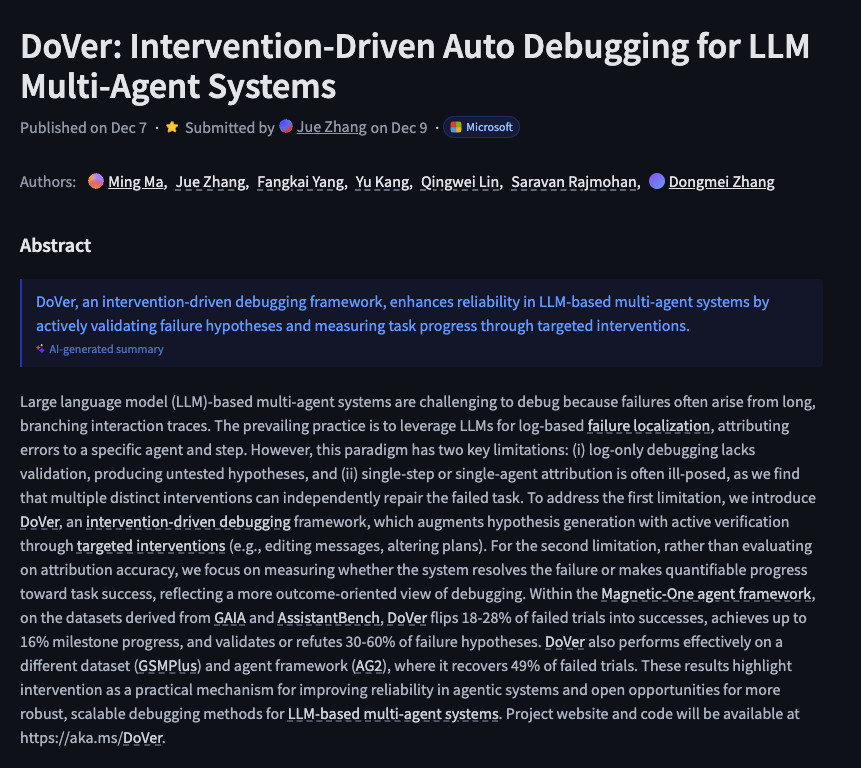

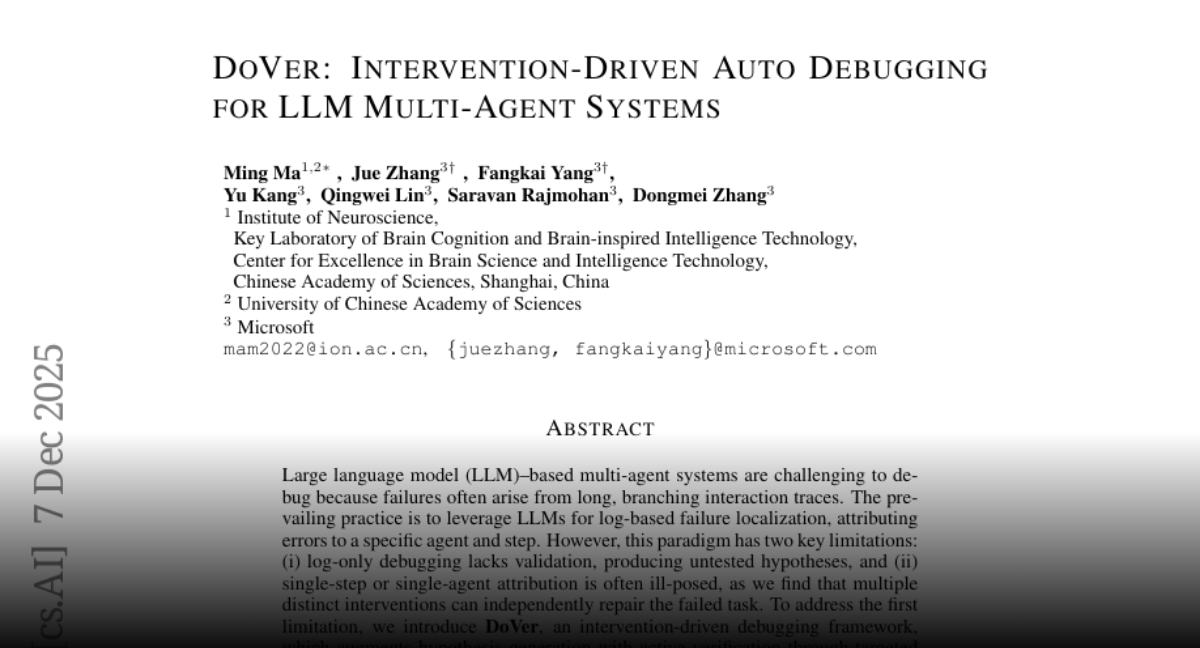

DoVer: Intervention-Driven Auto Debugging for LLM Multi-Agent Systems https://t.co/iTf19zFT6n

discuss: https://t.co/uro203m0Bo

The slightly longer version for a bit more context: https://t.co/70JZvlvBlT

Georg Restle is a tax-funded state media indoctrinator. He and his propaganda monopoly are responsible for the rise of Orwellian fascism in Europe. https://t.co/khhtcgIkD2

🧵(2/5) Build interactive, playable 3D designed games with a single prompt in @GoogleAIStudio. Example prompt: “Create a polished, retro-futuristic 3D spaceship web game contained entirely within a single HTML file using Three.js. The game should feature a "Synthwave/Retrowave" aesthetic. Visual style is a dark, immersive, 3D environment. Gameplay mechanics include a third-person view from behind the spaceship. On desktop, use arrow keys for smooth movement. On mobile, render a virtual joystick on the bottom left of the screen.”

🧵(3/5) Master your presentation skills by using the @GeminiApp to provide detailed, structured feedback using the model’s advanced reasoning. Example prompt: “Analyze my performance as a presenter, and give me a score on a scale of 1-100. In your analysis, focus on my body language, eye contact, and pacing”

🧵(4/5) Generate on-demand interactive tools and simulations in Google Search via AI Mode to gain a deeper understanding of any topic you’re interested in. Example prompt: “Help me compare the total cost of a loan with 6.5% interest rate with no down payment vs. a loan with 5.5% interest rate with 20% down payment”
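For reference, the comparison in that example prompt can be worked out with the standard fixed-rate amortization formula. The prompt gives only the rates and down payments, so the purchase price (`PRICE`) and loan term (`YEARS`) below are assumptions picked for illustration, and the sketch ignores taxes, insurance, fees, and the opportunity cost of the down payment.

```python
def monthly_payment(principal, annual_rate, years):
    """Fixed monthly payment for a fully amortizing loan."""
    r = annual_rate / 12   # monthly interest rate
    n = years * 12         # number of monthly payments
    return principal * r / (1 - (1 + r) ** -n)


def total_cost(price, down_fraction, annual_rate, years):
    """Down payment plus the sum of all monthly payments."""
    down = price * down_fraction
    payment = monthly_payment(price - down, annual_rate, years)
    return down + payment * years * 12


PRICE = 400_000  # assumed purchase price, not from the prompt
YEARS = 30       # assumed loan term, not from the prompt

cost_no_down = total_cost(PRICE, 0.00, 0.065, YEARS)
cost_20_down = total_cost(PRICE, 0.20, 0.055, YEARS)

print(f"6.5%, no down payment:  {cost_no_down:,.0f}")
print(f"5.5%, 20% down payment: {cost_20_down:,.0f}")
```

Changing `PRICE` scales both totals equally, while changing `YEARS` changes how much the rate difference matters, so the term is the assumption worth varying first.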

We’re expanding our partnership with @Accenture to help enterprises move from AI pilots to production. The Accenture Anthropic Business Group will include 30,000 professionals trained on Claude, and a product to help CIOs scale Claude Code. Read more: https://t.co/j1vsevfRlK

Anthropic is donating the Model Context Protocol to the Agentic AI Foundation, a directed fund under the Linux Foundation. In one year, MCP has become a foundational protocol for agentic AI. Joining AAIF ensures MCP remains open and community-driven. https://t.co/718OwwyFJL

SEALSQ Takes Decisive Action, Boosts Quantum Investment Fund from $35 Million to Over $100 Million - SEALSQ significantly boosts its Quantum Investment Fund to over $100 million, advancing Europe's Quantum-safe digital ecosystem and sovereign Quan... https://t.co/bMFksomxn5

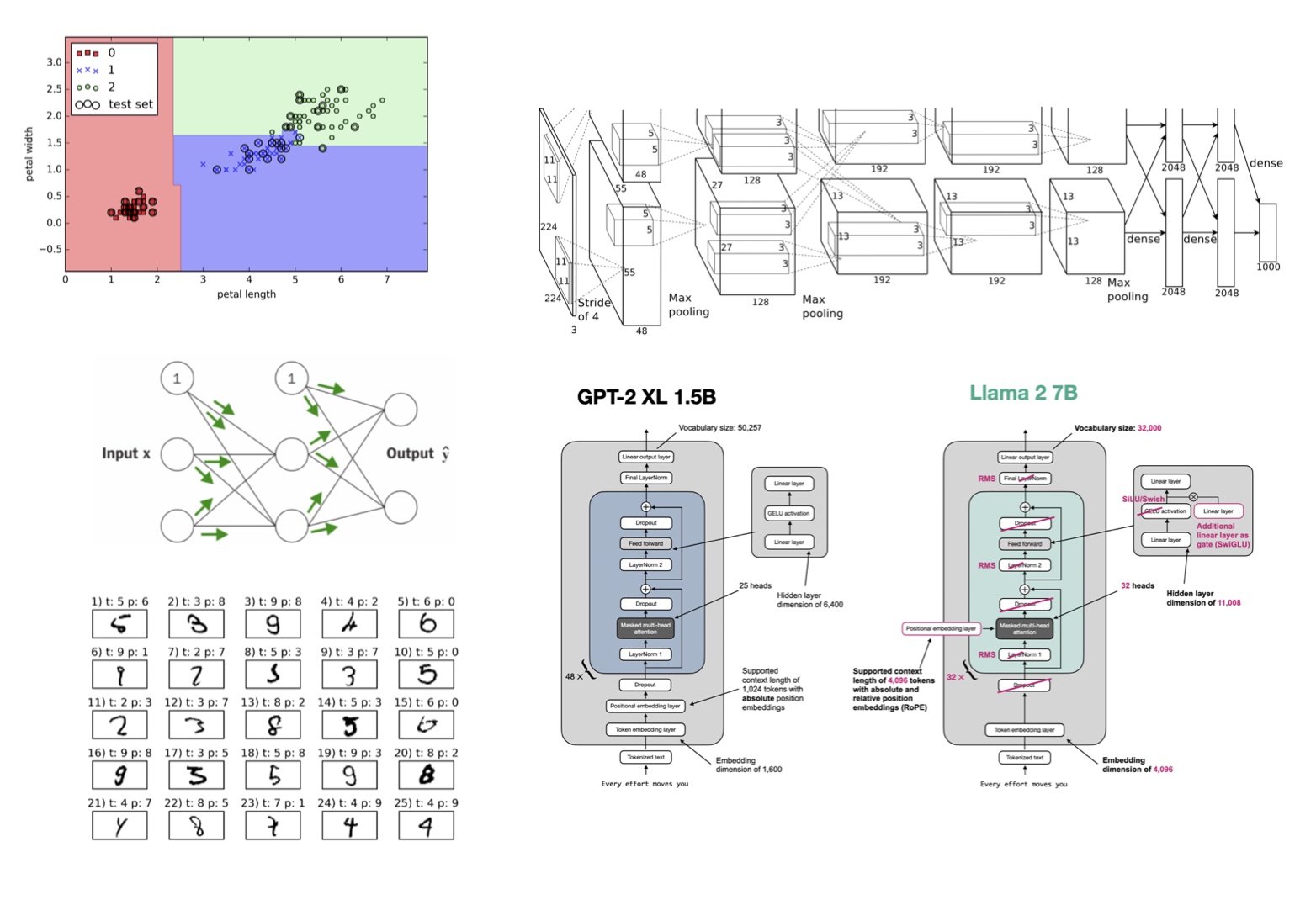

New research from Google: "The Illusion of Deep Learning Architecture". For those following research on continual learning, you may want to bookmark this one.

Instead of stacking more layers, what if we give neural networks more levels of learning? The default approach to building more capable AI systems today remains adding depth. More layers, more parameters, more pre-training data. This design philosophy has driven progress from CNNs to Transformers to LLMs. But there's a ceiling that's often not discussed.

Current models suffer from what the authors call "computational anterograde amnesia." Their knowledge is frozen after pre-training. They can't continually learn. They can't acquire new skills beyond what fits in their immediate context window.

This new research introduces Nested Learning (NL), a paradigm that reframes ML models as interconnected systems of multi-level optimization problems, each with its own "context flow" and update frequency. Optimizers and architectures are fundamentally the same thing. Both are associative memories that compress their own context. Adam and SGD are memory modules that compress gradients. Transformers are memory modules that compress tokens. Pre-training itself is just in-context learning where the context is the entire training dataset.

Why does this work matter? NL adds a new design axis beyond depth and width. Instead of deeper networks, you build systems with more levels of nested optimization, each updating at different frequencies. This mirrors how the human brain works, where gamma waves (30-150 Hz) handle sensory information while theta waves (0.5-8 Hz) handle memory consolidation.

Building on this framework, the researchers present Hope, an architecture combining self-modifying memory with a continuum memory system that replaces the traditional "long-term/short-term" memory dichotomy with a spectrum of update frequencies.

The results:

- Hope achieves 100% accuracy on needle-in-a-haystack tasks up to 16K context, where Transformers score 79.8%.
- On BABILong, Hope maintains performance at 10M context length, where GPT-4 fails around 128K.
- In continual learning, Hope outperforms in-context learning, EWC, and external-learner methods on class-incremental classification.
- On language modeling at 1.3B parameters, Hope achieves 14.39 perplexity on WikiText versus 17.92 for Transformer++.

Instead of asking "how do we make networks deeper," NL asks "how do we give networks more levels of learning." The path to continual learning may not be bigger models but models that learn at multiple timescales simultaneously.

Paper: https://t.co/ArKfAZUCLu

Learn to build with AI agents in our academy: https://t.co/zQXQt0PMbG
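As a rough illustration of "levels updating at different frequencies," here is a toy sketch in plain Python. It is not the Hope architecture or anything from the paper: the `Level` class, the update periods, and the one-dimensional squared-error objective are all assumptions made up for this example. Each level accumulates gradients and only applies them once every `period` steps.

```python
class Level:
    """One scalar parameter that applies its averaged gradient every `period` steps."""

    def __init__(self, name, period, lr):
        self.name = name
        self.period = period
        self.lr = lr
        self.value = 0.0
        self.grad_sum = 0.0
        self.updates = 0

    def step(self, t, grad):
        self.grad_sum += grad  # always accumulate
        if t % self.period == 0:
            # Apply the average gradient seen since the last update.
            self.value -= self.lr * self.grad_sum / self.period
            self.grad_sum = 0.0
            self.updates += 1


levels = [Level("fast", 1, 0.1), Level("medium", 4, 0.1), Level("slow", 8, 0.1)]
target = 1.0

for t in range(1, 9):
    prediction = sum(level.value for level in levels)
    grad = 2 * (prediction - target)  # d/dv of (prediction - target)^2 for each level
    for level in levels:
        level.step(t, grad)

update_counts = {level.name: level.updates for level in levels}
print(update_counts)
```

Over eight steps the fast level updates every step while the slow level updates once, so the slow level ends up summarizing a longer window of gradients. That is the loose sense in which a spectrum of update frequencies can act like a spectrum of memory timescales.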

Customers expect convenience. Once you have their business, delivering seamless experiences is the only way to keep it. https://t.co/15sNlj2nQv

Banks are not losing accounts, they are losing relationships. Long-term customers may seem stable, but their connections are weakening as they open relationships elsewhere. https://t.co/IRrN4Mhz62

Banks that want to dominate the retail space must rethink strategy. Digital-first, AI-enhanced, and advisor-integrated experiences are the path forward. Download the free report: https://t.co/08DiNXh0DA https://t.co/fBhdzghbm5

“Only buy something that you’d be perfectly happy to hold if the market shut down for 10 years.” - Warren Buffett https://t.co/io8cZwBvNX