Your curated collection of saved posts and media

Fei-Fei Li of World Labs: AI is incomplete without spatial intelligence https://t.co/OYGtVmyljC @CristinaCriddle @Melissahei @drfeifei @ft

Musicians are deeply concerned about AI. So why are the major labels embracing it? https://t.co/gWprIPX2TD

From One-Person Companies to Generative Media, AI Funding Spans the Stack https://t.co/VcljFNMZPV @pymnts

More than half of researchers now use AI for peer review, often against guidance https://t.co/4YlS1DlsAt @Nature

PayPal applies for US banking licence https://t.co/ikR9vWX7PY @ft

‘Much more than a backend refresh’: @Coinbase’s fintech pivot hits milestone https://t.co/eGpSePAfau @HeleneBraunn @coindesk

You’re Thinking About AI and Water All Wrong https://t.co/xKTCjRHHKq @mollytaft @wired

Slop, vibe coding and glazing: AI dominates 2025’s words of the year https://t.co/FIQJMik8ej @ConversationUS @ConversationUK

Today we're rolling out our new iPad app, optimized for iPadOS. The Perplexity iPad app is designed for real work, offering the same core features you find on desktop, now with you wherever you go. Available on the App Store today. https://t.co/906c3jHcFJ

TurboDiffusion: Accelerating Video Diffusion Models by 100–205 Times https://t.co/66ZYtT20hy

https://t.co/ZU9D3rl1Po

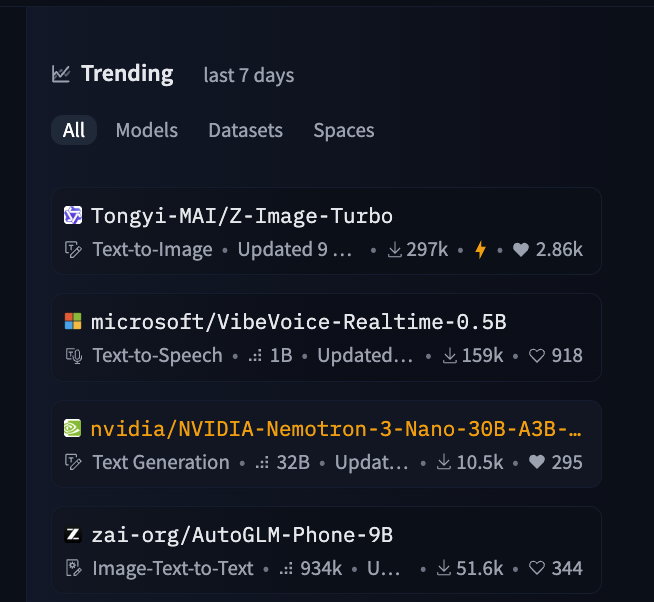

Nemotron 3 is already third trending on @huggingface. @nvidia is really becoming the American powerhouse for open-source AI! https://t.co/uDQWBPJUwL

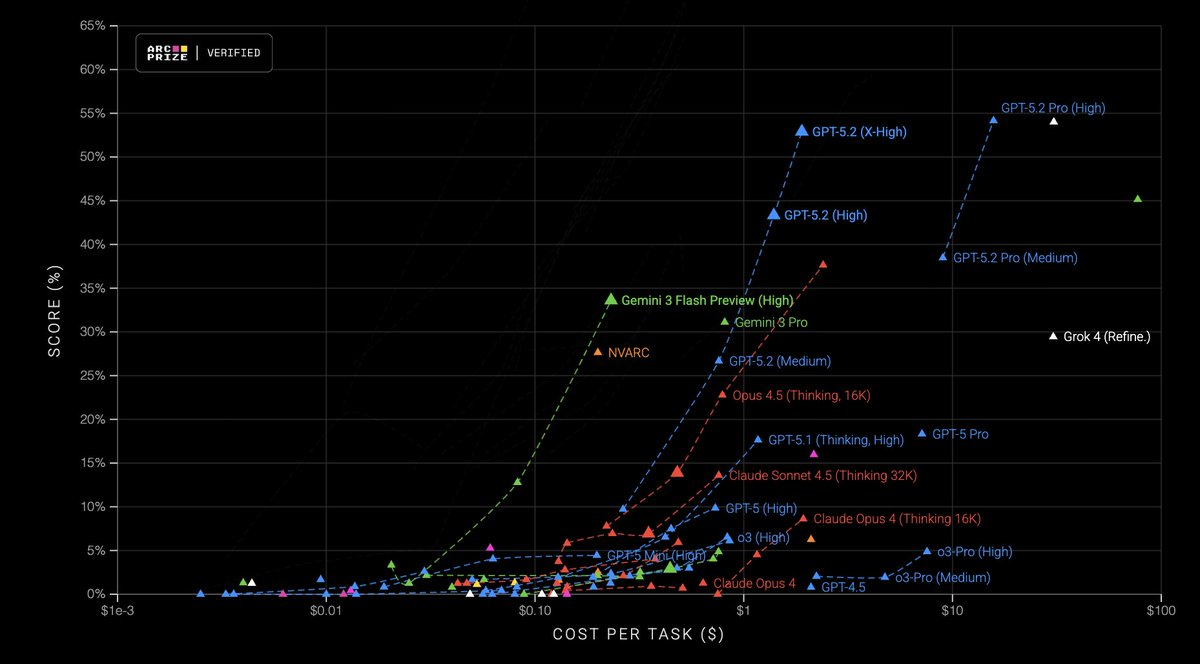

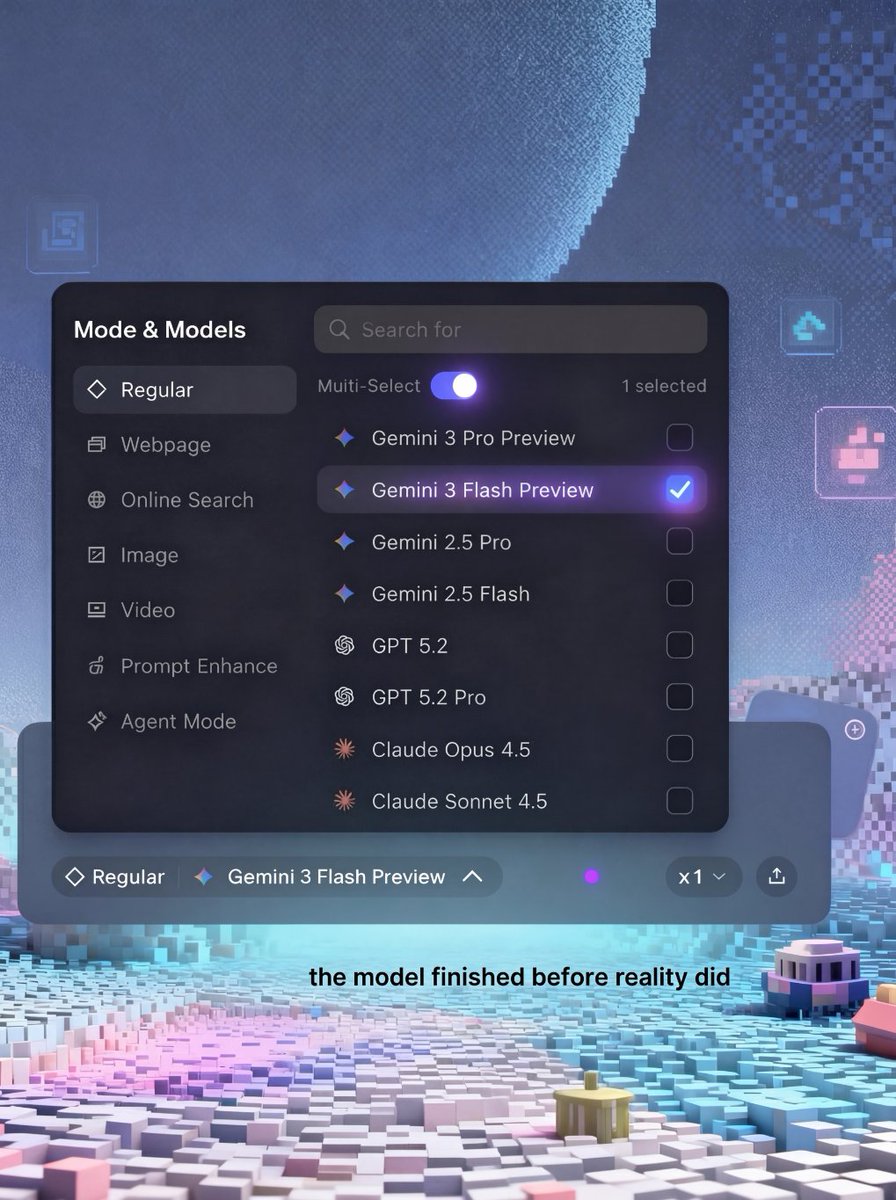

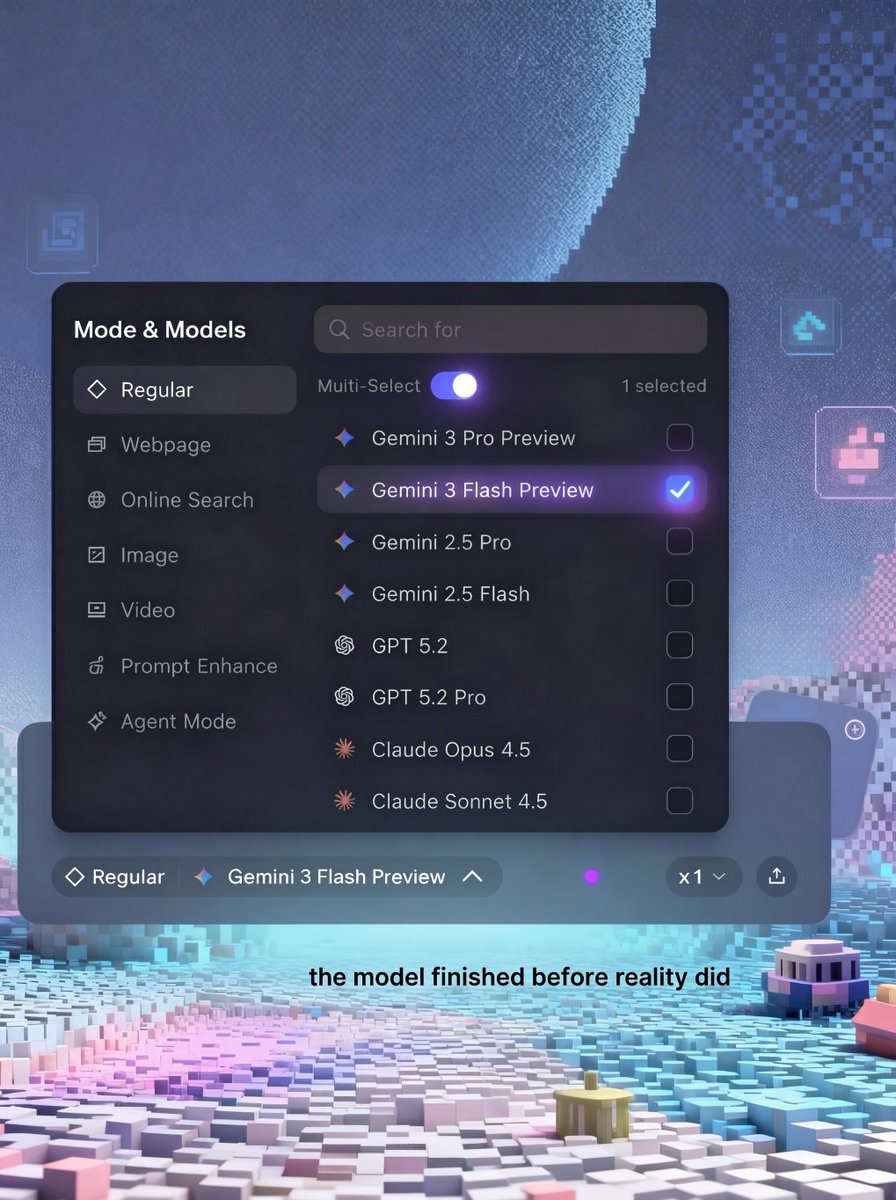

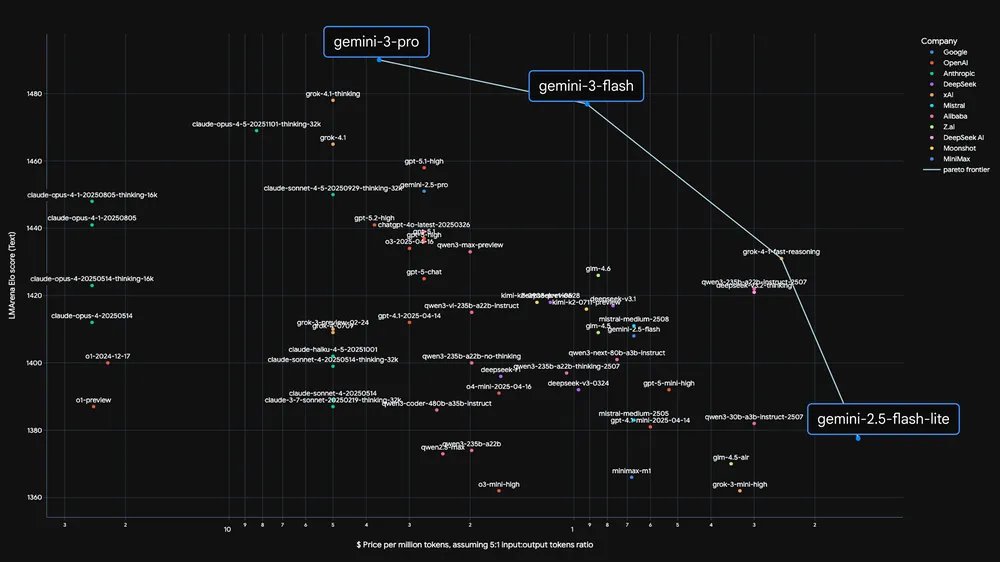

Gemini 3 Flash across different test-time compute levels (green line below) represents a new score/cost Pareto frontier on ARC-AGI-2. Congrats to @demishassabis and @sundarpichai on the launch! https://t.co/XJCTx5jvuq

Compute enabled our first image generation launch (and a +32% jump in WAU over the following weeks) as well as our latest image generation launch yesterday. We have a lot more coming… and need a lot more compute. https://t.co/rHfQv1aLKS

Gemini 3 Flash is now rolling out in public preview in GitHub Copilot. This model is ideal for tasks where speed is crucial. Try it out in @code 👇 https://t.co/UNMXQvQ635

Gemini 3 Flash rolling out to @code now 🚀 Try it out and let us know what you think! https://t.co/rutLlNevgO

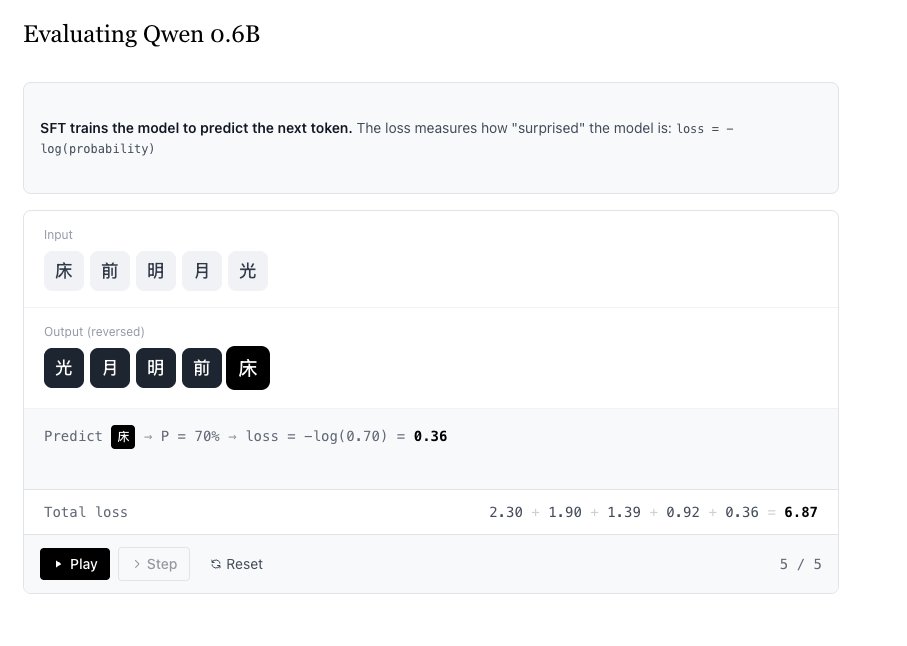

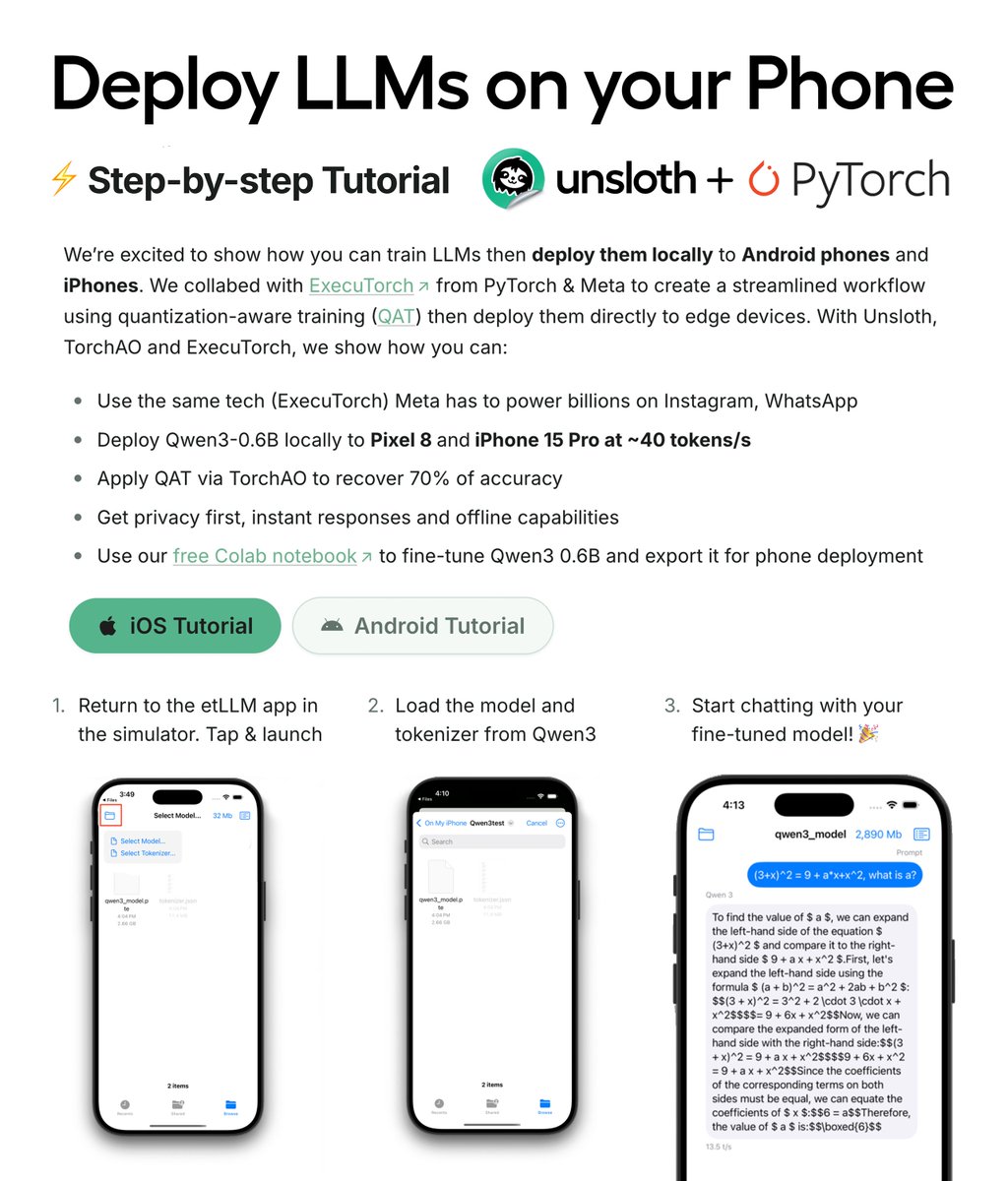

You can now fine-tune LLMs and deploy them directly on your phone! 🚀 We collabed with PyTorch so you can export and run your trained model 100% locally on your iOS or Android device. Deploy Qwen3 on Pixel 8 and iPhone 15 Pro at ~40 tokens/sec. Guide: https://t.co/8wyQLJfzeC

Crashes and corrupted data in GPU kernels often come from out-of-bounds memory access. Puzzle 03 in the Mojo🔥 GPU Puzzles series shows how guard conditions prevent this with just a few lines of code. Watch the full tutorial ⬇️ https://t.co/HlWQVGPhRx
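The guard-condition idea in the post above can be sketched in a few lines. This is a plain-Python simulation, not the Mojo code from the puzzle: `add_ten_kernel` and `launch` are hypothetical names, and the loop stands in for a GPU launching more threads than the array has elements.

```python
def add_ten_kernel(thread_idx, output, a, size):
    # Guard condition: threads whose index is past the end of the
    # array do nothing instead of touching out-of-bounds memory.
    if thread_idx < size:
        output[thread_idx] = a[thread_idx] + 10.0


def launch(kernel, num_threads, *args):
    # Hypothetical stand-in for a GPU launch: run the kernel body
    # once per thread index, sequentially.
    for thread_idx in range(num_threads):
        kernel(thread_idx, *args)


a = [0.0, 1.0, 2.0, 3.0]
out = [0.0] * len(a)

# Launch 8 "threads" for a 4-element array, as happens when the
# block size does not evenly match the data size.
launch(add_ten_kernel, 8, out, a, len(a))
```

Without the `if thread_idx < size` check, the extra threads would index past the end of the list. Python raises an `IndexError` in that case; on a real GPU the same mistake can silently read or overwrite unrelated memory.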

We’ve rewritten the Perplexity iPad app to be native and to support the workflows iPad users have: multitasking and wider screen real estate. It gives you the desktop Perplexity experience with the polish and finesse of our iOS app. https://t.co/pV3Pu6GA9k

⚡️Thrilled to introduce @ropedia_ai, where we aim to structure human Xperience (observations + interactions) for world models, robotics foundation models and interactive intelligence! 🔥Check out our blog for more: https://t.co/8284kANOFF 🎁Don’t forget to join our waitlist for early access to HOMIE: https://t.co/F9iNlCeAUX

🧵1/ Today we’re introducing Ropedia 🚀 AI needs more human Xperience 👀✋🚶🌍 to unlock interactive intelligence. We’re building the system to capture & scale it 📡 Example 👇🍅🍳 (tomato & egg stir-fry)

already here ⚡️ gemini 3 flash. free to compare. free to decide. (limited time) https://t.co/mXQNL4bhHq

Introducing Particulate: a feed-forward model for 3D object articulation 💻✂️👓🧳 Particulate gives you a fully articulated 3D object, including part segmentation, kinematic structure & motion constraints, in a single forward pass in ~10secs. 🏅SOTA performance! 💡GenAI compatible: Turns AI-generated 3D meshes into fully articulated models! Project page: https://t.co/8yYFpYdEkY Code: https://t.co/CUuubxqbdY

BREAKING: 𝕏 Chat standalone web app is now live. • Fully encrypted for maximum privacy • No third party dependencies • No ads or hidden trackers • Supports file sharing • 𝕏 never reads or sells your chats to advertisers. Live on web: https://t.co/2sZF5QZIzt https://t.co/t4yXz3gcND

Gemini 3.0 Flash surpasses 2.5 Pro while being 3x faster at a fraction of the cost ⚡️ frontier-class performance on PhD-level reasoning and knowledge benchmarks like GPQA Diamond (90.4%) and Humanity’s Last Exam (33.7% without tools) ⚡️ features our most advanced visual and spatial reasoning ⚡️ you can now use code execution to zoom, count and edit visual inputs. ⚡️ $0.50/1M input tokens and $3/1M output tokens (audio input remains at $1/1M input tokens) Rolling out to developers in the Gemini API via Google AI Studio, Google Antigravity, Gemini CLI, Android Studio and to enterprises via Vertex AI. https://t.co/4Pn5XwrXqx
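To make the quoted pricing concrete, here is a minimal cost estimate. The three rates come from the post above; the function name `request_cost` and the token counts are assumptions chosen for illustration, and any billing details beyond those three rates are not modeled.

```python
# Rates quoted in the post, in USD per 1M tokens.
INPUT_USD_PER_M = 0.50
OUTPUT_USD_PER_M = 3.00
AUDIO_INPUT_USD_PER_M = 1.00


def request_cost(input_tokens, output_tokens, audio_input_tokens=0):
    """Estimate the USD cost of a workload from raw token counts."""
    total = (
        input_tokens * INPUT_USD_PER_M
        + output_tokens * OUTPUT_USD_PER_M
        + audio_input_tokens * AUDIO_INPUT_USD_PER_M
    )
    return total / 1_000_000


# Hypothetical workload: 200k input tokens and 20k output tokens.
cost = request_cost(200_000, 20_000)
print(f"${cost:.2f}")
```

For this hypothetical workload the input side costs $0.10 and the output side $0.06, so output tokens account for a large share of the bill despite being a tenth of the volume.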

The next scaling frontier isn't bigger models. It's societies of models and tools. That's the big claim made in this concept paper. It actually points to something really important in the AI field. Let's take a look: (bookmark for later)

Classical scaling laws relate performance to parameters, tokens, and compute. More of each, better loss. These laws have driven a decade of progress. But they describe a single-agent world: one model, static corpus, one prompt at a time. There is a clear misalignment with how real-world problems actually work.

This new perspective paper argues that scaling must expand along three new axes: population, organization, and institution. Not just how many parameters, but how many agents, how they're connected, and what norms govern their interaction.

Simply adding more agents doesn't monotonically improve performance. Early experiments in multi-agent debate show that naive agent swarms can degenerate into majority herding, where the first plausible-but-wrong answer locks in and gets reinforced through subsequent rounds. Groups of frontier models fail to integrate distributed information, displaying human-like collective failures.

The paper proposes three interaction regimes for multi-agent systems:

1) Competition: debate, adversarial critique, self-play.
2) Collaboration: role specialization, division of labor, complementary expertise.
3) Coordination: orchestrated workflows, planner-worker hierarchies, reliable execution.

Which regime fits which task matters. Competitive regimes suit focused reasoning problems with clear correctness criteria. Collaborative regimes fit open-ended design where diverse skills are needed. Coordinated regimes handle long-horizon, safety-critical workflows.

The architectural implications are significant. Effective multi-agent systems need cognitive diversity: agents with different priors, reasoning styles, and tool access. They need institutional memory: persistent artifacts that outlive individual sessions, analogous to lab notebooks and version control. They need communication topologies: not just broadcast or hub-and-spoke, but structured graphs that balance diversity and coherence.

Training objectives must change, too. Current models optimize individual next-token prediction. Multi-agent systems need collective objectives: group accuracy, calibration, hypothesis diversity, and conflict resolution quality. The paper proposes "multi-agent pretraining" where debate, peer review, and negotiation become first-class optimization targets.

Paper: https://t.co/OqwIIeJe8T

Learn to build AI agents in my academy: https://t.co/JBU5beHQNs
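The majority-herding failure described in the thread can be shown with a toy model. This is only an illustrative sketch, not the paper's experimental setup: the update rule (each agent repeats whatever answer currently leads the shared transcript) and the names `independent_vote` and `herding_debate` are assumptions made for the example.

```python
from collections import Counter


def independent_vote(private_answers):
    # Baseline: every agent answers alone, then the majority wins.
    return Counter(private_answers).most_common(1)[0][0]


def herding_debate(private_answers):
    # Naive sequential debate (assumed rule): the first speaker states
    # its own answer; every later speaker repeats whichever answer is
    # currently leading in the shared transcript.
    transcript = []
    for own_answer in private_answers:
        if transcript:
            leader = Counter(transcript).most_common(1)[0][0]
            transcript.append(leader)
        else:
            transcript.append(own_answer)
    return Counter(transcript).most_common(1)[0][0]


# Hypothetical case: "A" is correct, four of five agents privately
# believe "A", but the first speaker holds the wrong answer "B".
private = ["B", "A", "A", "A", "A"]

print(independent_vote(private))  # majority of private beliefs
print(herding_debate(private))    # answer after the naive debate
```

In this toy case the independent vote recovers "A", while the naive debate locks in "B" because the first wrong answer is reinforced by every later speaker. It is meant only to show why more agents do not automatically mean better answers.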

Damn! Gemini 3 Flash is no joke. Faster and cheaper, while demonstrating remarkable reasoning capabilities. Amazing that we have models of this caliber with multimodal and agentic capabilities. Time to build! Stay tuned for more of my thoughts on this model. https://t.co/uNRhz0GhNj

Gemini 3 Flash gives you frontier intelligence at a fraction of the cost. ⚡ Here’s how it’s built for speed and scale 🧵

last chance to sign up if interested! https://t.co/NY7HCOTEMB

Since Central Park was so beautiful covered in snow, we decided to put it on our website :) https://t.co/bms4JsbdOG

Not many people know this, but the company behind Manus is Butterfly Effect. In that small office, we pressed the launch button—never imagining how one small move could change the course ahead. Keep shipping! https://t.co/5PggD9FXla

$0 → $100M ARR in 8 months. Since we launched in March:

- 147 trillion tokens processed
- 80M+ virtual computers created
- Total revenue run rate over $125M

Thank you to everyone building with us. https://t.co/TZJ3n162zl

Working on a new RL article based on what I've been mucking around with :) Finally cracked these custom visualisations https://t.co/OgXiMnerBw