Your curated collection of saved posts and media

Livestream begins at 12pm ET. Tune in, we'll be sharing in real time. https://t.co/eddEUQnvn9

Part 2 of @runwayml 4.5 out now! With prompts this time. Forgot to enter them in the main post last time.

Prompts used:

- hand held shot of a baby lion waking up next to his mom's face. Focus on the textures.
- First-person view of parkour runner's perspective. Sprint toward cliff edge, explosive takeoff, hands reaching out toward hot air balloon basket approaching fast. Balloon fills frame as jumper flies through air. Ground drops away far below. Adrenaline rush captured in motion. GoPro-style dynamic movement.
- macro shot of sloths claws picking up a blade of grass
- drone shot of bison crossing the nile river. Smooth cinematic motion

#research #creative #researchanddevelopment

Deep Research is the first agent released on the new Interactions API – offering a single endpoint for agentic workflows. Start building today ↓ https://t.co/FlgtzbDYj7

NEWS: Rivian has unveiled its Autonomy chip and Gen 3 Autonomy Computer, which the company says is designed to solve the needs of autonomous driving.

Chip:

- Multi-chip module
- TSMC 5nm
- Neural engine: Rivian designed
- 800+ TOPS
- In-house designed software stack

“RAP1 powers the company’s third-generation Autonomy computer, the Autonomy Compute Module 3. Key specs include:

- 1600 sparse INT8 TOPS
- The processing power of 5 billion pixels per second.
- RAP1 features RivLink, a low latency interconnect technology allowing chips to be connected to multiply processing power, making it inherently extensible.
- RAP1 is enabled by an in-house developed AI compiler and platform software.”

Rivian: “In addition to ACM3, Rivian plans to integrate LiDAR into future R2 models. LiDAR will augment the company’s multi-modal sensor strategy, providing detailed, three-dimensional spatial data and redundant sensing, and improving real-time detection for the edge cases of driving. Our Gen 3 Autonomy hardware including ACM3 and LiDAR is currently undergoing validation and we expect it to ship on R2 models starting at the end of 2026.”

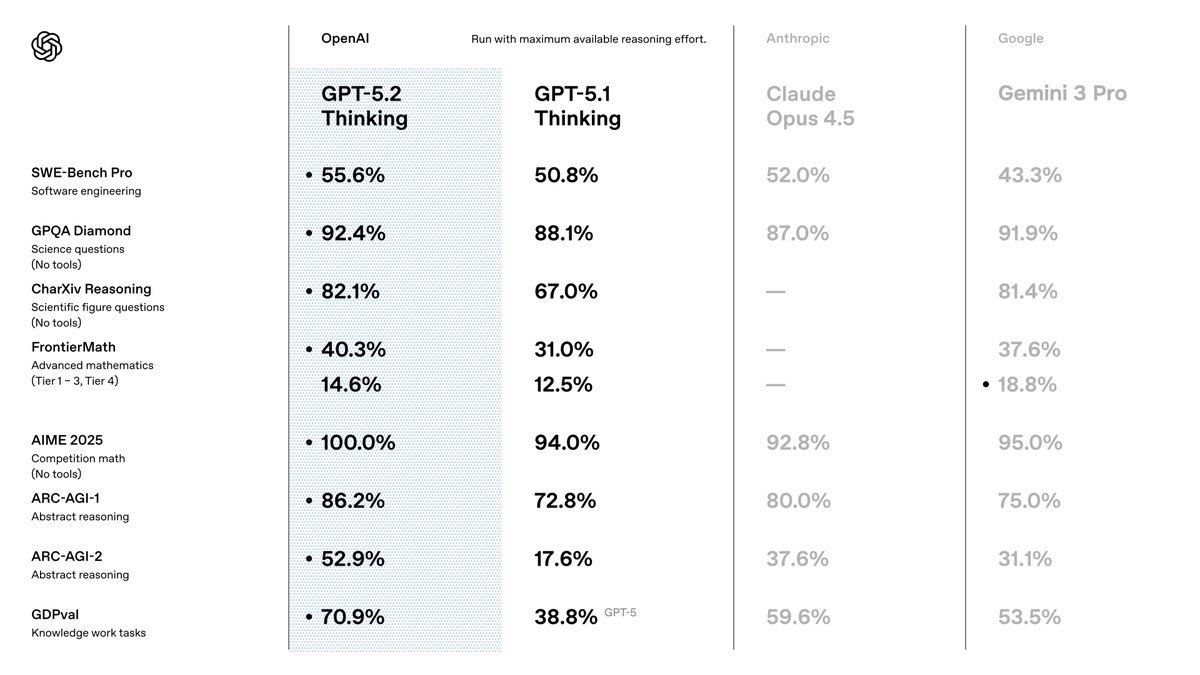

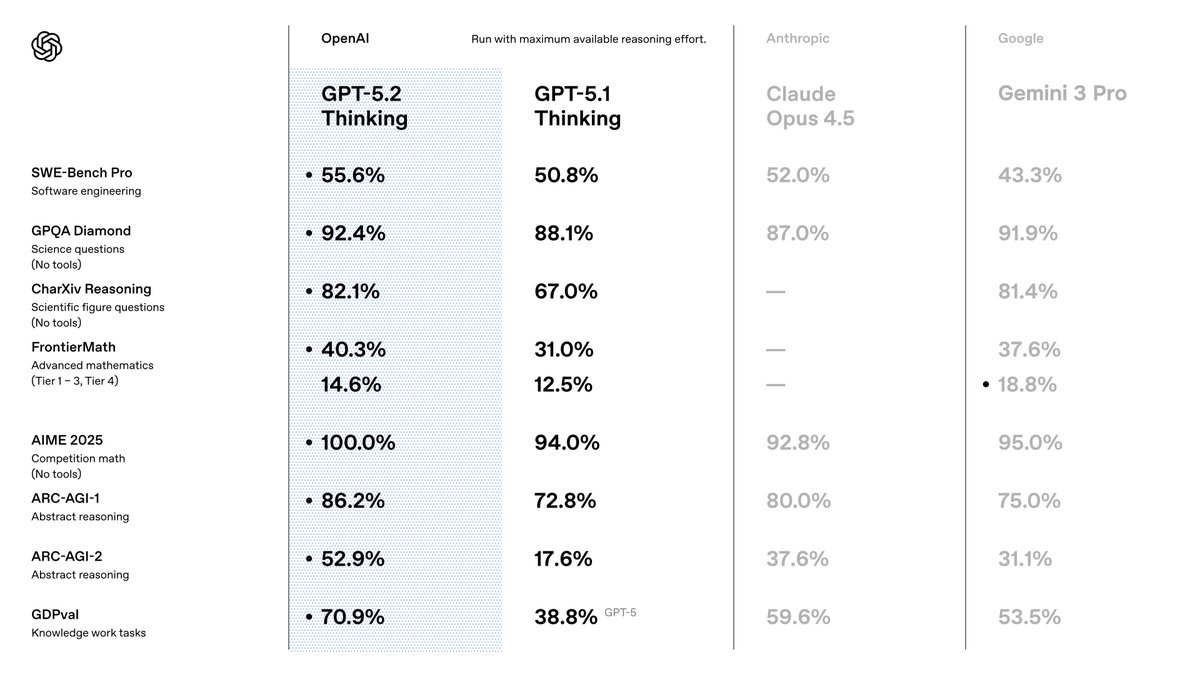

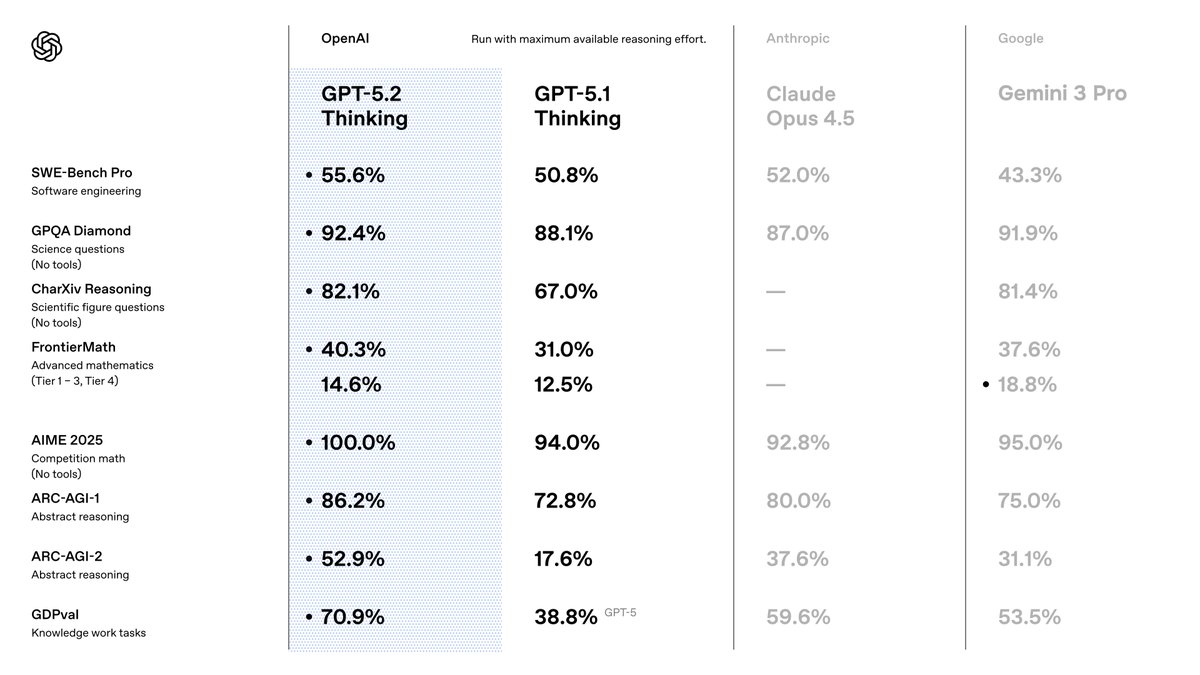

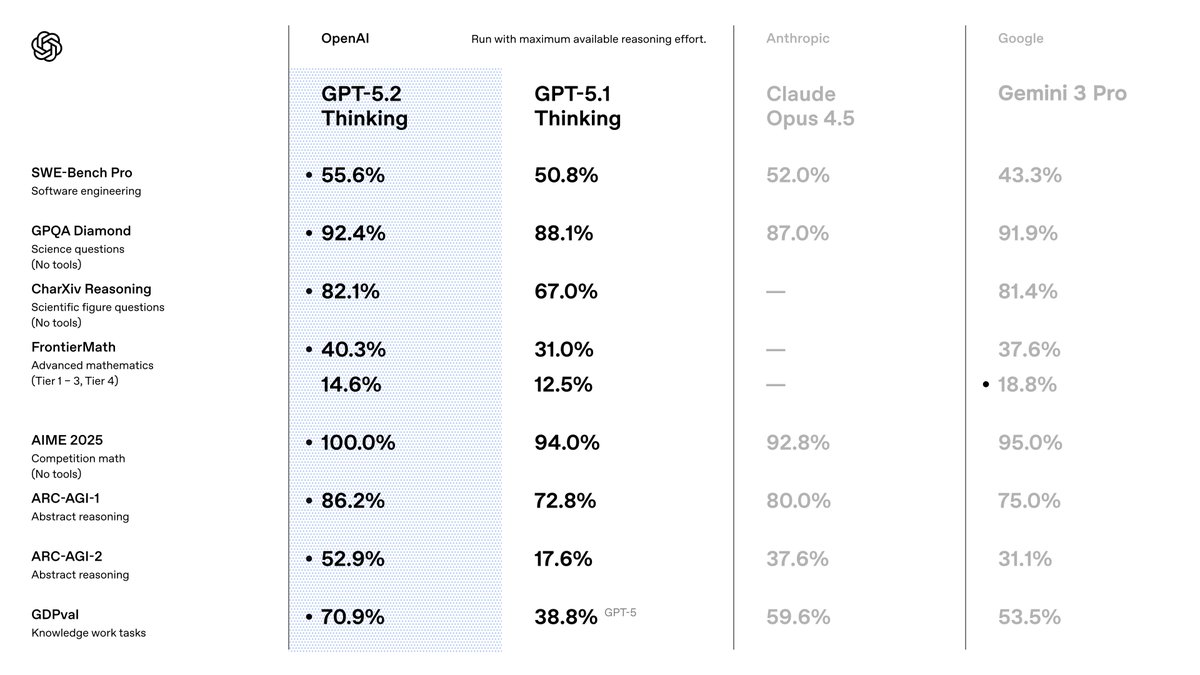

OpenAI says GPT-5.2 Thinking hallucinates less than GPT-5.1 and has improved reliability for agentic AI needs; pre-release testers include Notion, Box, Shopify (@haydenfield / The Verge) https://t.co/I64lWbO1Om https://t.co/iLX7mjbATR 📥 Send tips! https://t.co/wlNZvXuhJs

This seems huge. Scientists at Caltech and Cedars-Sinai have built a new AI tool called NOBLE that can quickly and accurately create virtual versions of brain neurons. Basically, it helps researchers understand how the brain works a lot faster, which could eventually lead to better treatments for brain-related disorders.

A team of scientists led by Caltech and Cedars-Sinai has developed a new artificial intelligence framework that can accurately, quickly, and efficiently create virtual models of brain neurons. https://t.co/mxRpp2jvTM

GPT-5.2 Thinking evals https://t.co/Kcnz3ZIwye

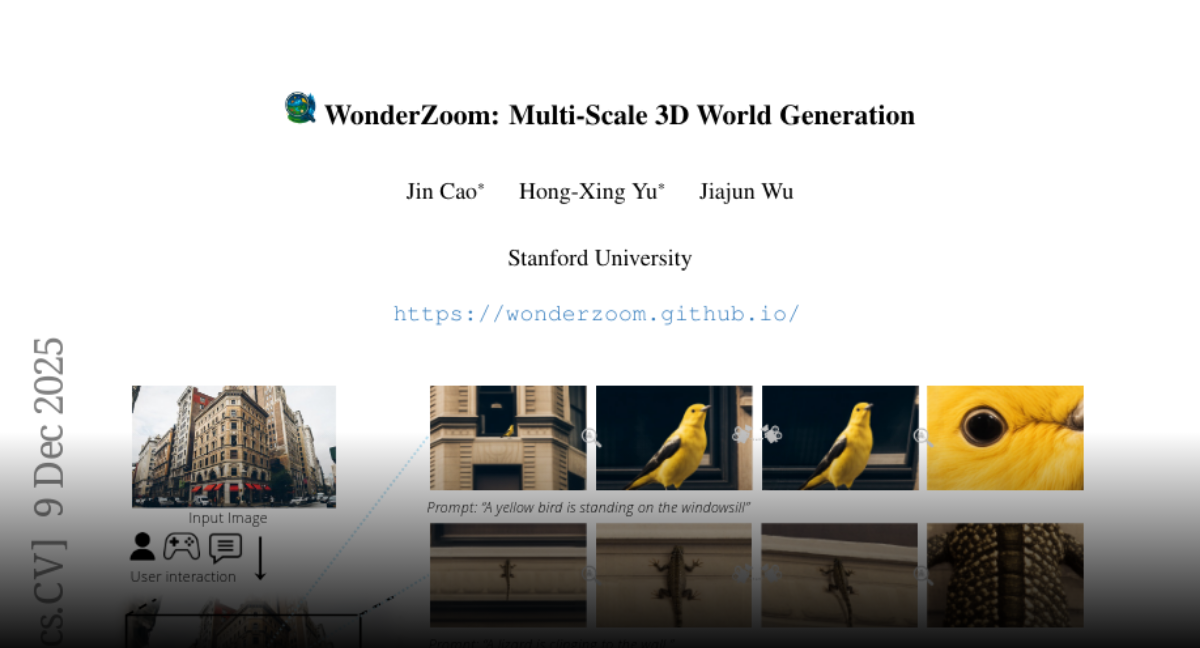

WonderZoom Multi-Scale 3D World Generation https://t.co/6VAoAzZ4SO

discuss: https://t.co/FKh81wKg5f

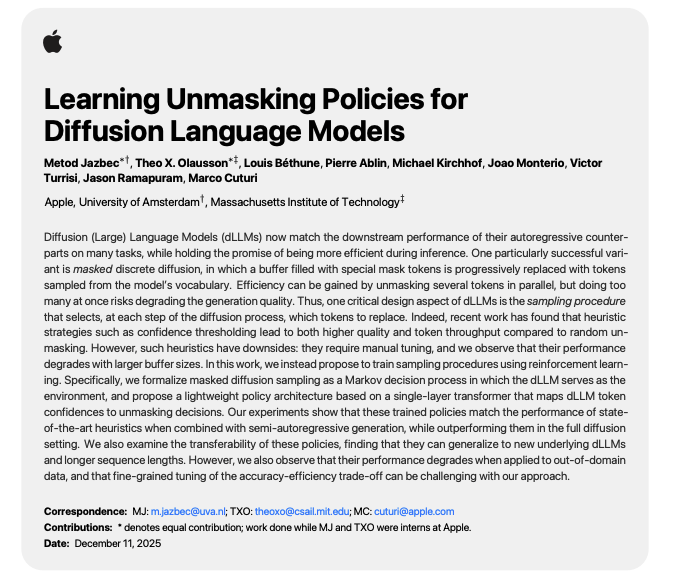

Apple presents Learning Unmasking Policies for Diffusion Language Models https://t.co/UGSQIdxxB5

discuss: https://t.co/kCLhzs6dPO

🤯MUST TRY: Qwen-Image-i2L skips the training loop entirely. 1-5 images in → LoRA weights out in seconds. ⬇️ Demo available on Hugging Face https://t.co/yuqEZ1GAnw

Towards a Science of Scaling Agent Systems https://t.co/JmCe3VLTby

discuss: https://t.co/uJHaaQkg5G

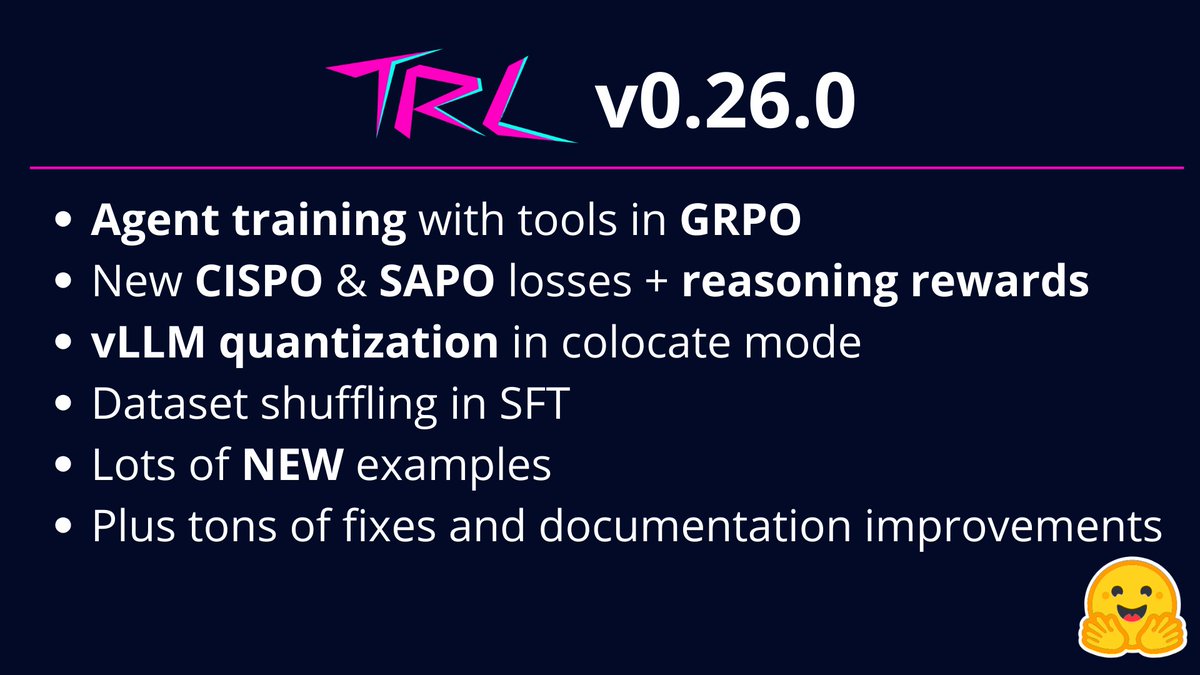

We just released TRL v0.26.0! It comes packed with updates:

- Agent training with tools in GRPO
- New CISPO & SAPO losses
- Reasoning rewards
- vLLM quantization in colocate mode
- Dataset shuffling in SFT
- Lots of NEW examples
- Tons of fixes and documentation improvements

https://t.co/Vt3dmI1sLU

Sharing the slides from a talk I gave this week on bridging the gap between research experiments and building production-ready models, based on our recent Smol Training Playbook. https://t.co/RmG53PytMv

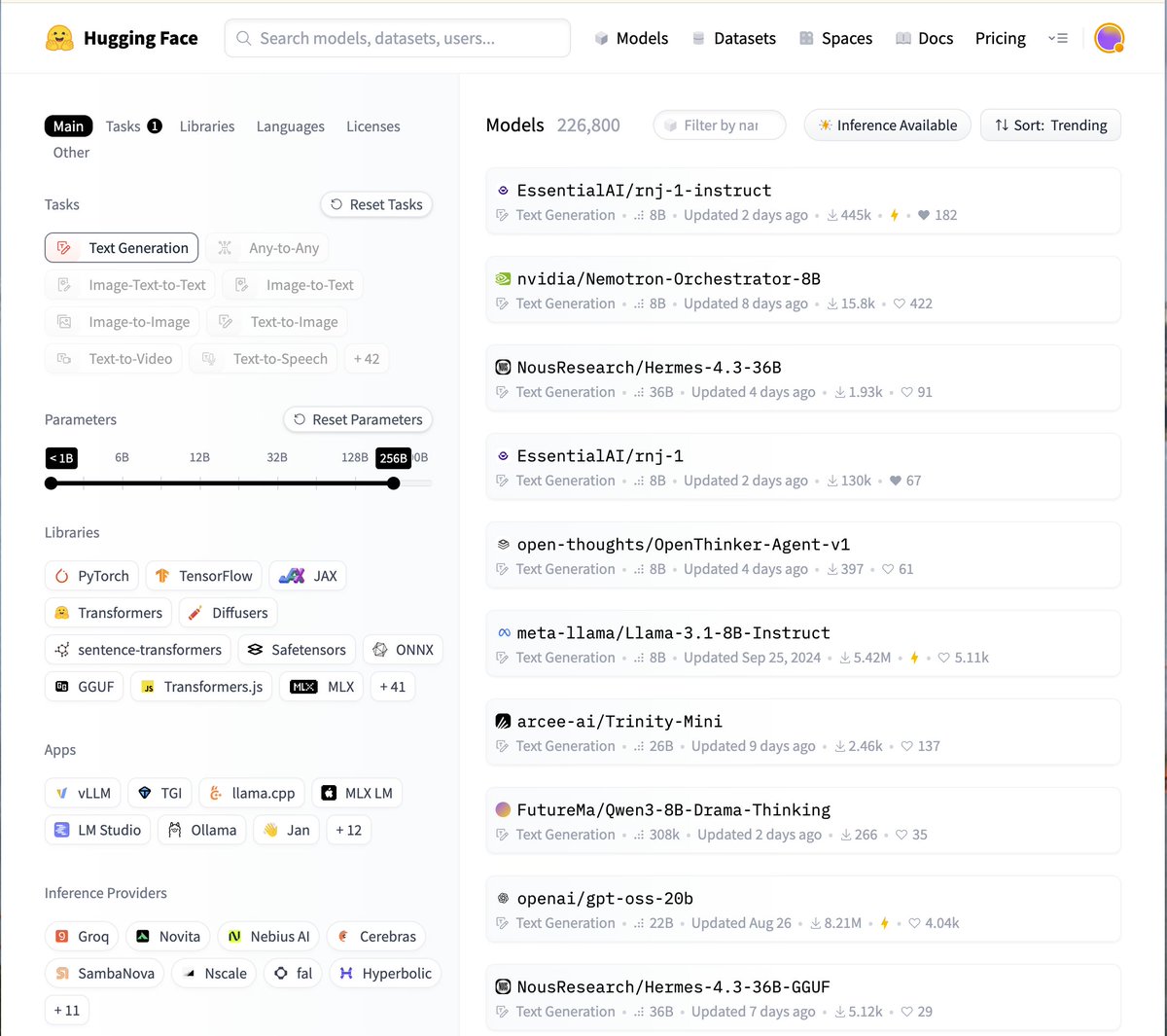

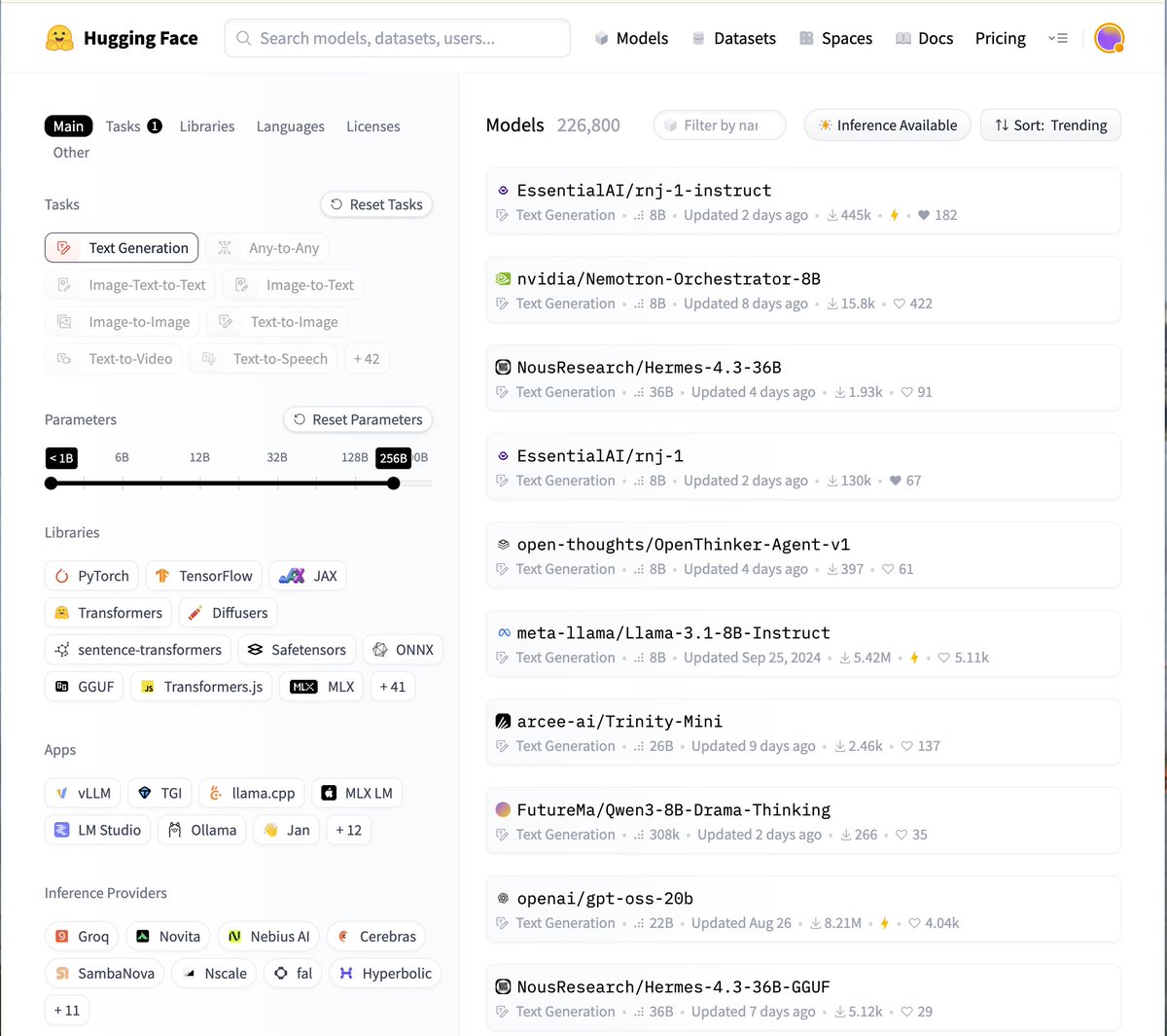

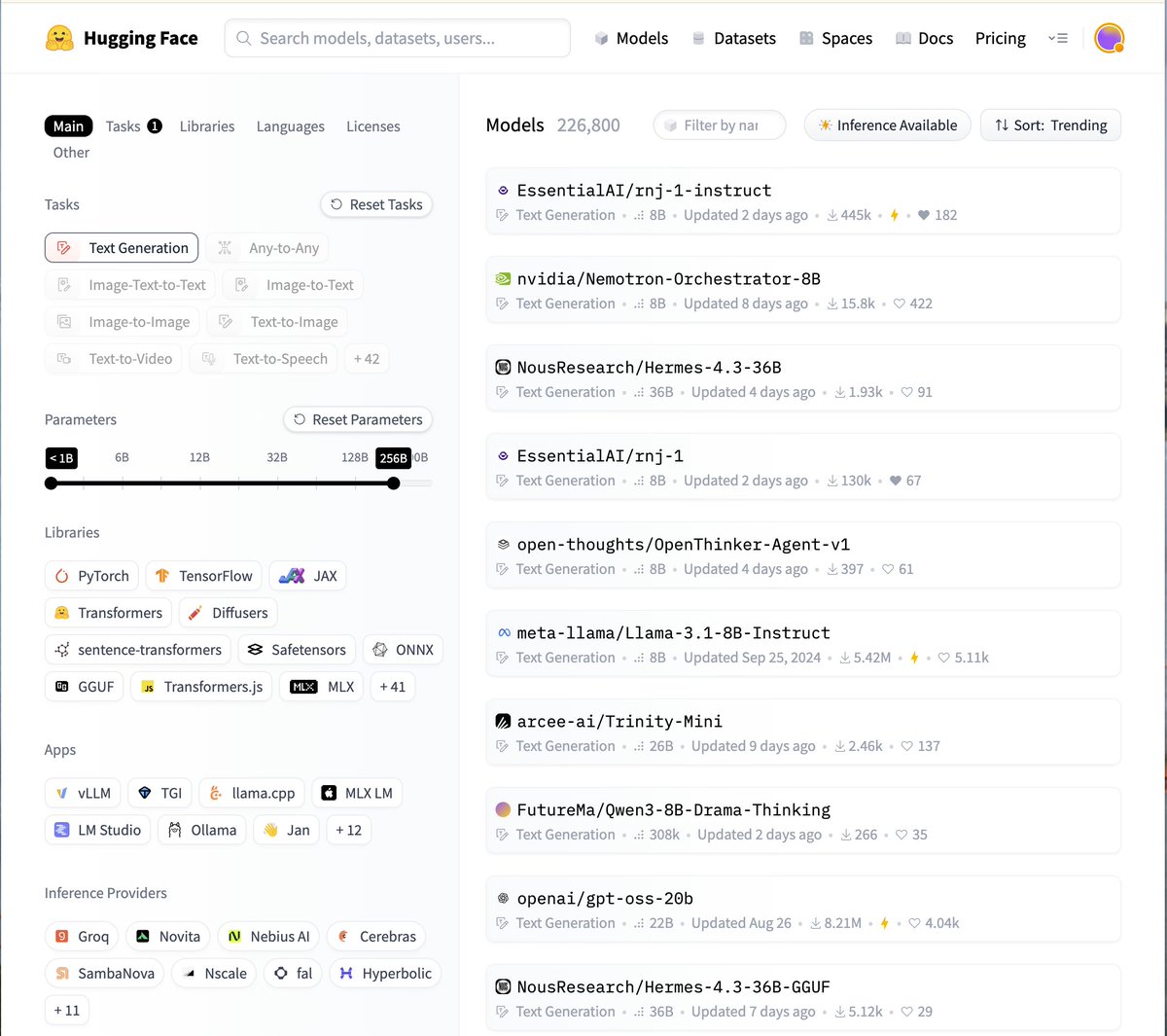

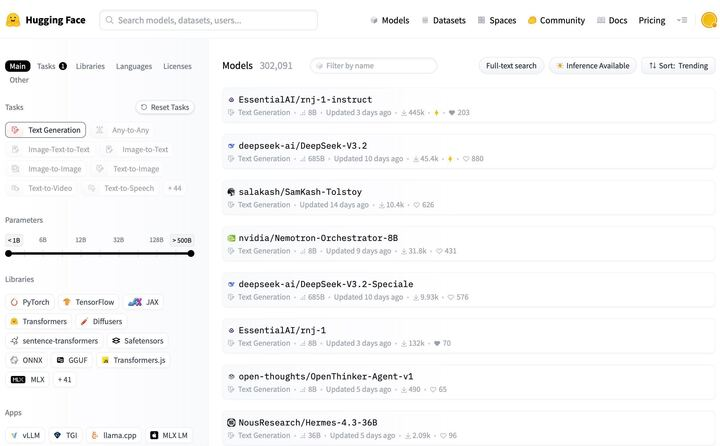

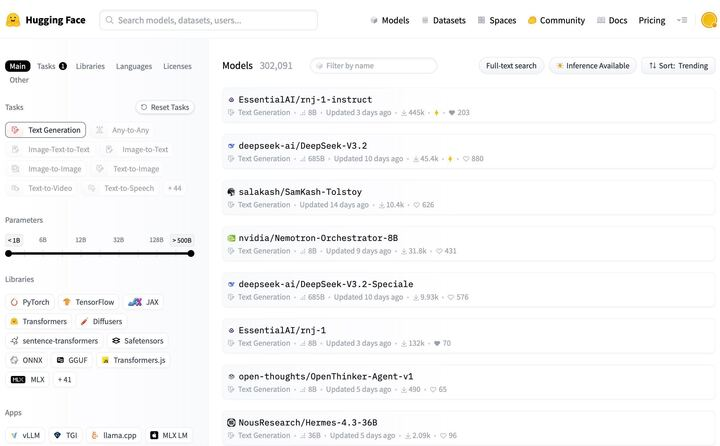

We are now the #1 trending text-gen <256B size model on HuggingFace!! https://t.co/SyoOHjWfvH

🔥 Ultra-FineWeb-en-v1.4 is coming! 2.2T tokens fully open-sourced! The core training fuel for MiniCPM4 / 4.1, fully updated based on FineWeb v1.4.0:

🆕 What's New

1️⃣ Fresher Data: Added CommonCrawl snapshots from Apr 2024 - Jun 2025 to capture the latest world knowledge.

2️⃣ Easier Access: CC Dump Slices are here! No need to download the entire massive dataset anymore, fetch exactly what you need seamlessly.

⚡ Highlights & Performance

- Efficient Verification Strategy: Reduces data verification cost by 90%
- High-Efficiency Filtering Pipeline: Optimizes selection of both positive and negative samples
- Performance Gains: +3.613/+1.331 (Eng) & +1.98/+0.61 (Chn) vs. FineWeb/FineWeb-edu & Chinese FineWeb-edu-v2.

Still high-quality cleaning. Still true to the open-source spirit. Welcome to download and test! 🚀

🔗 Resources

- 🤗 Dataset: https://t.co/KluL5t2kUn
- 📄 Paper: https://t.co/Kg9LLUqZgB
- 🧩 Classifier: https://t.co/oUfxrN6AmP
- 🤖 MiniCPM4: https://t.co/IQ82jD1PTi

#UltraFineWeb #MiniCPM4 #AI #LLM #OpenBMB #UltraData

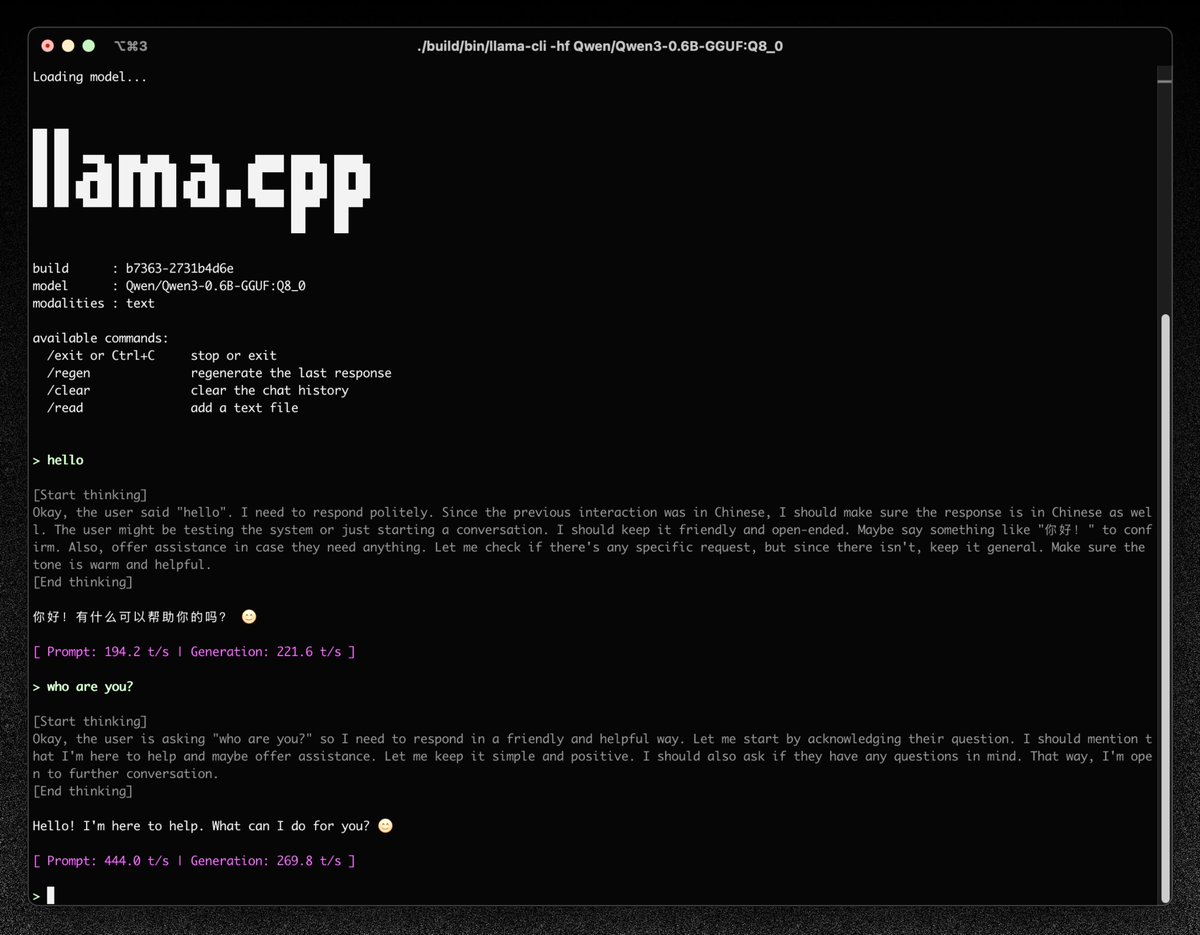

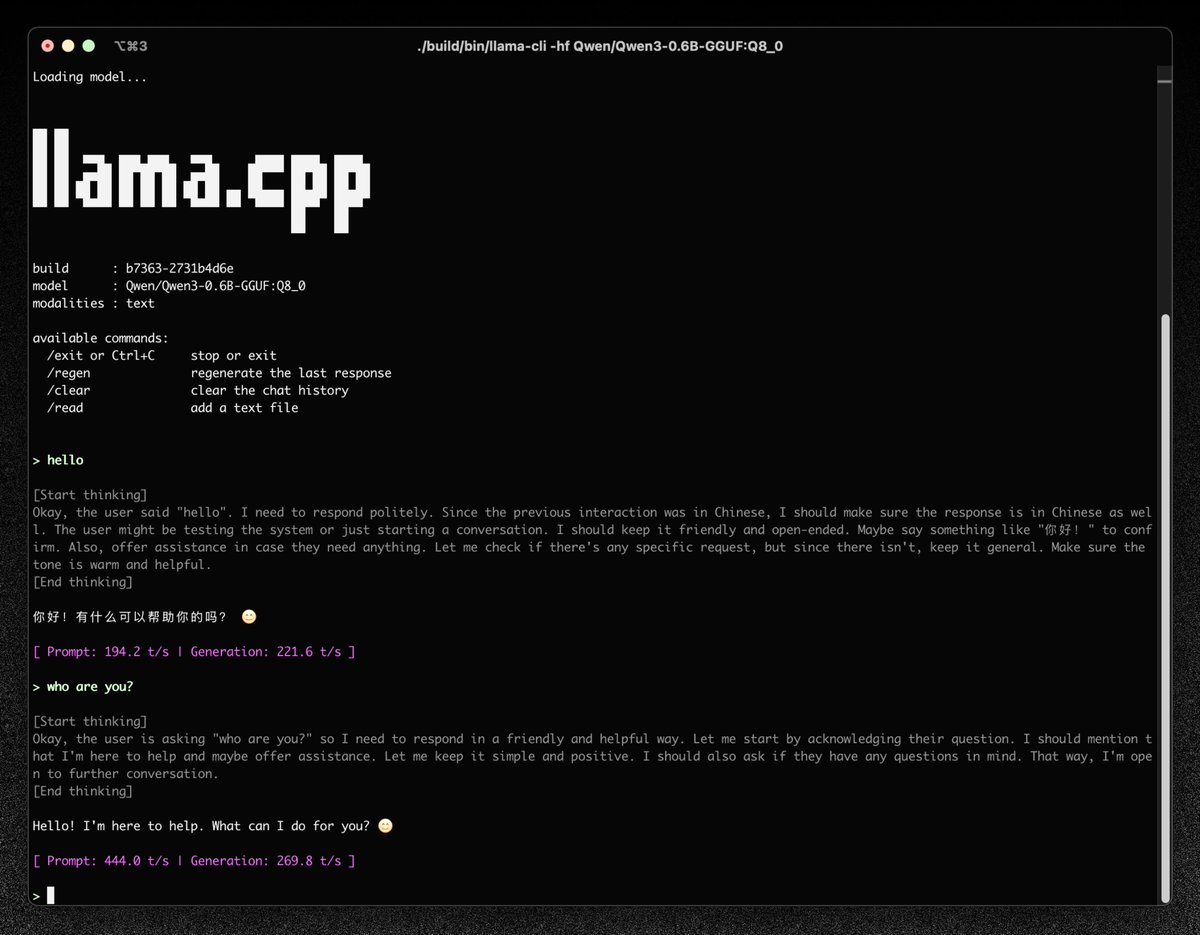

> llama-cli -hf org/model

llama.cpp gets a new CLI (tested it and it's 🔥) https://t.co/XKVicocKGC

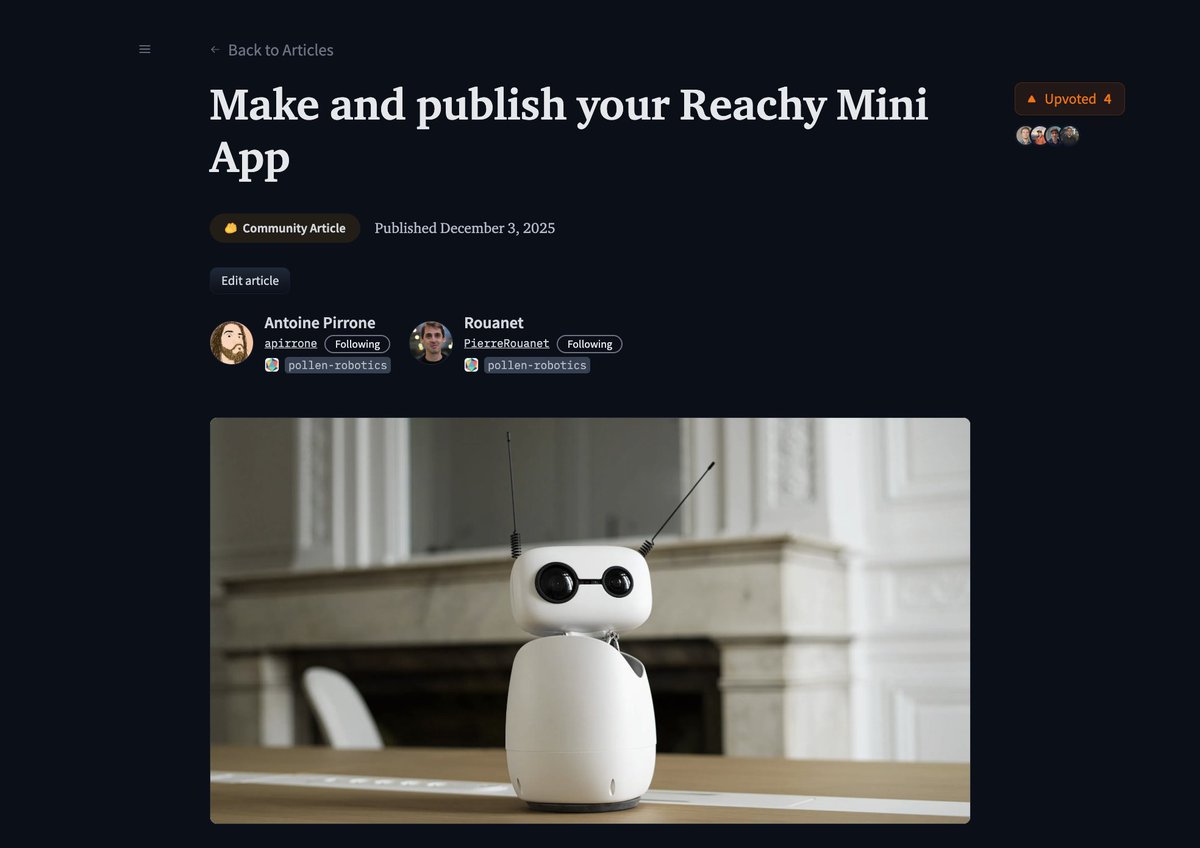

Even if you don't have a Reachy mini (yet!), you can now create apps thanks to our SDK, API and simulation and share them with the community. If you create simple apps in the coming days, I'll try them on my mini and share a video of them (+ you'll probably get good visibility as we're shipping a large number soon).

Some ideas I had:

- "What is love": Reachy mini plays "What is love?" and does the classic Jim Carrey head move
- "Metronome app": Reachy's antennas turn into a metronome
- "Relax app": Reachy plays relaxing music and does some calm zen moves
- "Magic 8-Ball": Reachy answers a simple yes/no question by nodding or shaking its head based on a random outcome
- "Peek-a-Boo": Reachy stays hidden until an object (like a hand) gets close, then quickly pops its head up
- "Bless you": Reachy mini says "bless you" when you cough
- "Take a picture": Reachy mini takes a picture of you
- "Read": Reachy mini reads the paper you show him (using OCR)
- "Face-tracking app": a simple face-tracking app where Reachy follows your face as you move around
- "Who's speaking": Reachy tries to detect who's speaking and turns to concentrate on them
- "Describe the room": Reachy mini scans the room and describes it
- "Describe what's on my screen": Reachy detects your laptop screen and describes what's on it
- "Describe the object": you show an object, Reachy tells you what it is
- "Where are?": where are my keys? Reachy scans the room and points to where they are
- "Dance app": Reachy mini does simple dances
- "Translation app": you say something in English, Reachy repeats it in French

So many ideas haha
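As a rough illustration of how small one of these apps could be, here is a minimal sketch of the "Magic 8-Ball" idea. It does not use the Reachy mini SDK: `nod` and `shake` are hypothetical callbacks standing in for whatever head-motion calls the real SDK or simulator provides, and the example wires them to a plain list so it runs anywhere.

```python
import random
from typing import Callable, List, Optional


def magic_8_ball(
    nod: Callable[[], None],
    shake: Callable[[], None],
    rng: Optional[random.Random] = None,
) -> str:
    """Pick "yes" or "no" at random and trigger the matching head move.

    `nod` and `shake` are hypothetical callbacks (assumptions for this
    sketch), not functions from the Reachy mini SDK.
    """
    rng = rng or random.Random()
    answer = rng.choice(["yes", "no"])
    if answer == "yes":
        nod()
    else:
        shake()
    return answer


# Stand-in "robot": record the requested moves instead of moving a real head.
moves: List[str] = []
answer = magic_8_ball(
    nod=lambda: moves.append("nod"),
    shake=lambda: moves.append("shake"),
    rng=random.Random(0),  # seeded so repeated runs give the same answer
)
print(answer, moves)
```

Passing the motions in as callbacks keeps the decision logic testable without hardware; on a real robot or in simulation, only the two callbacks would need to change.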

Rnj-1-Instruct is now the #1 trending text generation model on HF! https://t.co/Gt5WGq9vLp

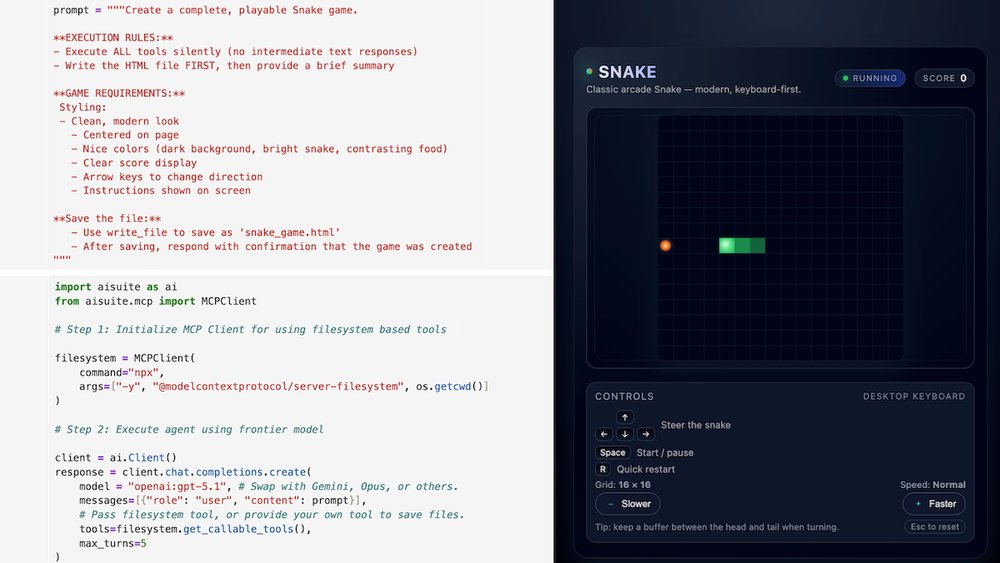

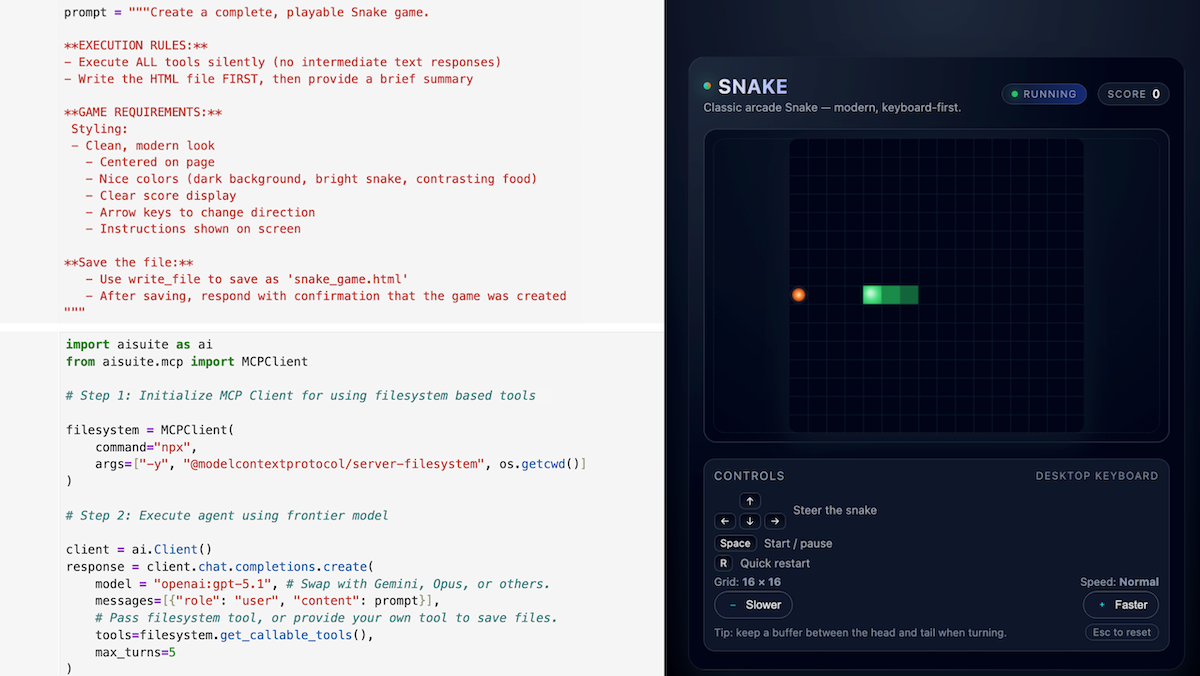

Sharing a fun recipe for building a highly autonomous, moderately capable, and very UNreliable agent using the open source aisuite package that Rohit Prasad and I have been working on. With a few lines of code, you can give a frontier LLM a tool (like disk access or web search), prompt it with a high-level task (such as creating a snake game and saving as an HTML file, or carrying out deep research), and let the LLM loose and see what it does. Example in image. Caveat: This is not how practical agents are built today, since most need much more scaffolding (see my Agentic AI course to learn more), but is still interesting to experiment with. Longer write-up here: https://t.co/BdS8tGhnIy
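The post's own example lives in the attached image and is not reproduced here. For readers who want the general shape of "give the model a tool and let it loose", the following is a minimal sketch of a tool-calling loop. It does not use the aisuite API: `ModelReply`, `run_agent`, `scripted_model`, and `fake_search` are all hypothetical names invented for this illustration, and the "model" is a scripted stub so the loop runs without any API key.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class ModelReply:
    """Either a tool request (tool_name set) or a final answer (final_text set)."""
    tool_name: Optional[str] = None
    tool_arg: str = ""
    final_text: Optional[str] = None


def run_agent(
    model: Callable[[List[str]], ModelReply],
    tools: Dict[str, Callable[[str], str]],
    task: str,
    max_turns: int = 5,
) -> str:
    """Call the model repeatedly, executing requested tools, until it answers."""
    transcript = [f"task: {task}"]
    for _ in range(max_turns):
        reply = model(transcript)
        if reply.final_text is not None:
            return reply.final_text
        tool = tools.get(reply.tool_name or "")
        if tool is None:
            transcript.append(f"error: unknown tool {reply.tool_name!r}")
            continue
        result = tool(reply.tool_arg)
        transcript.append(f"{reply.tool_name}({reply.tool_arg!r}) -> {result}")
    # The turn cap is the only guard against a model that never finishes.
    return "stopped: turn limit reached"


def scripted_model(transcript: List[str]) -> ModelReply:
    """Stub standing in for an LLM: one search, then a final answer."""
    if len(transcript) == 1:
        return ModelReply(tool_name="search", tool_arg="snake game html")
    return ModelReply(final_text=f"done after {len(transcript) - 1} tool call(s)")


def fake_search(query: str) -> str:
    """Stub tool; a real agent would hit the web or the disk here."""
    return f"3 results for {query}"


result = run_agent(scripted_model, {"search": fake_search}, "build a snake game")
print(result)
```

The lack of any validation beyond the turn cap is deliberate: it mirrors the "highly autonomous, very UNreliable" framing, and the scaffolding the post mentions is what a practical agent would add around this loop.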

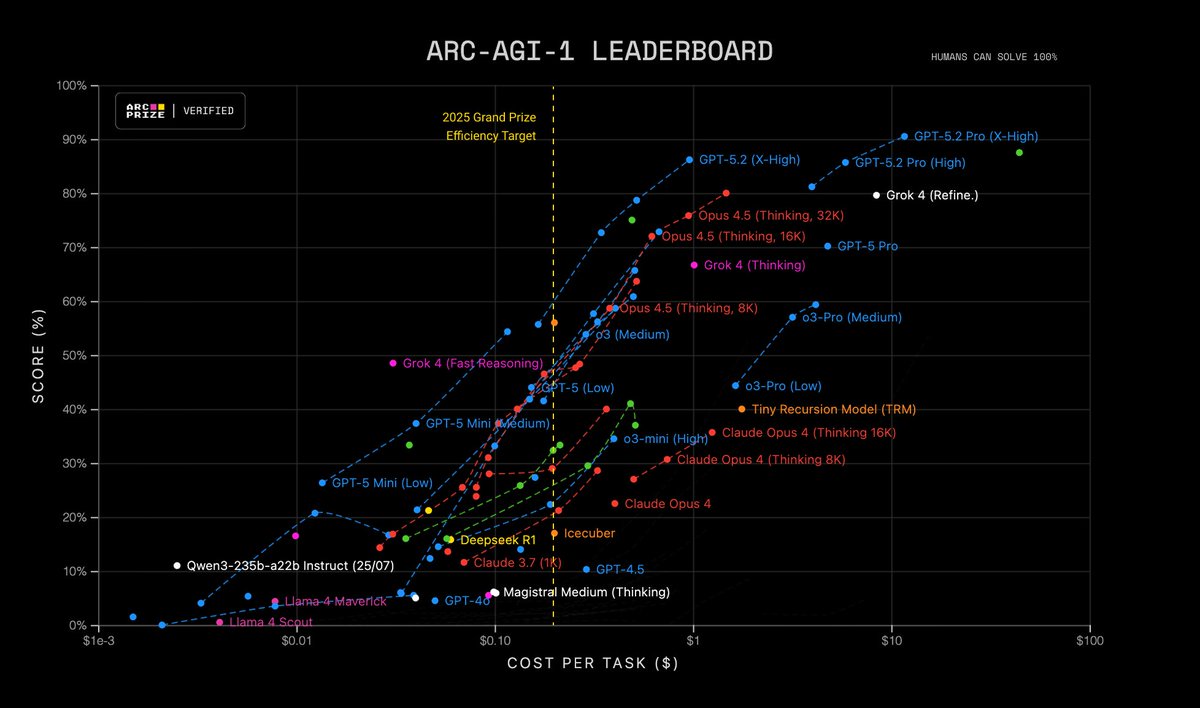

A year ago, we verified a preview of an unreleased version of @OpenAI o3 (High) that scored 88% on ARC-AGI-1 at est. $4.5k/task. Today, we’ve verified a new GPT-5.2 Pro (X-High) SOTA score of 90.5% at $11.64/task. This represents a ~390X efficiency improvement in one year. https://t.co/9T47FdZ5Ry
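The efficiency figure can be sanity-checked from the two per-task costs quoted in the post. The sketch below assumes "efficiency" means the ratio of cost per task only; it ignores the score increase from 88% to 90.5%, and the constant and function names are illustrative.

```python
# Per-task costs quoted in the post, in USD.
O3_PREVIEW_COST = 4500.0   # "est. $4.5k/task"
GPT_5_2_PRO_COST = 11.64   # "$11.64/task"


def cost_ratio(old_cost: float, new_cost: float) -> float:
    """Return how many times cheaper new_cost is than old_cost."""
    return old_cost / new_cost


ratio = cost_ratio(O3_PREVIEW_COST, GPT_5_2_PRO_COST)
print(f"{ratio:.0f}x cheaper per task")  # prints "387x cheaper per task"
```

A ratio of roughly 387 is consistent with the post's rounded "~390X", given that the $4.5k figure is itself an estimate.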

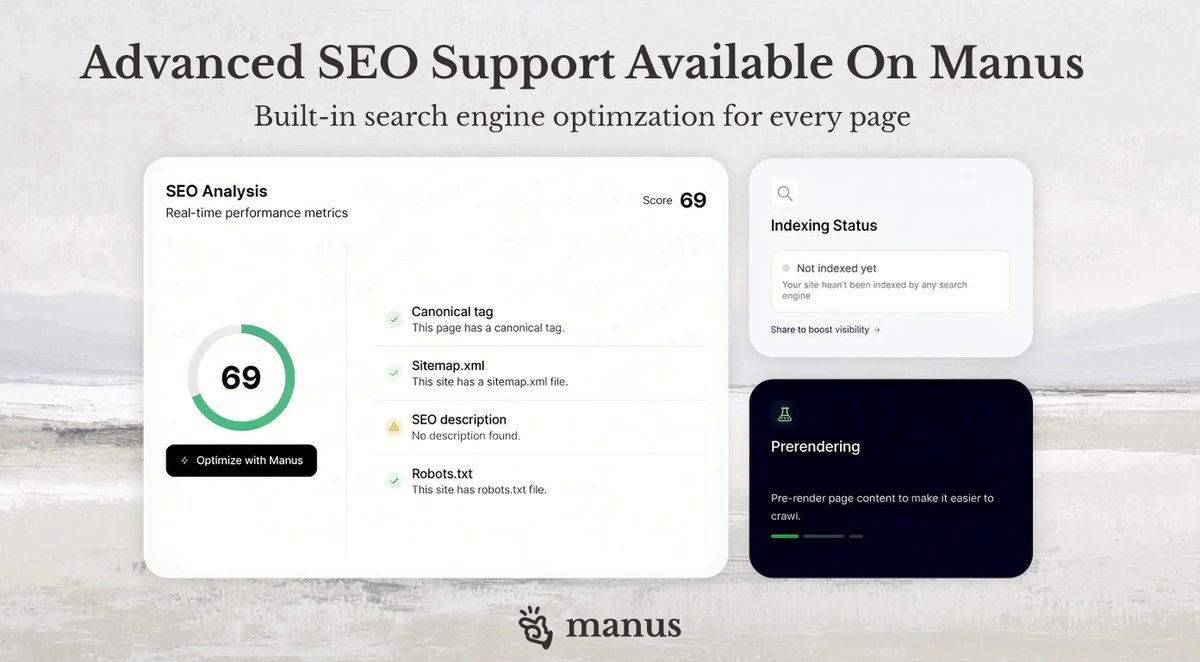

A website that doesn't rank is a missed opportunity. We’ve added a complete SEO engine to Manus Web App so you can stop worrying about config and start getting traffic. Turn your site into a growth engine today. https://t.co/c8wZzgURBM https://t.co/EP7ITH4YV0

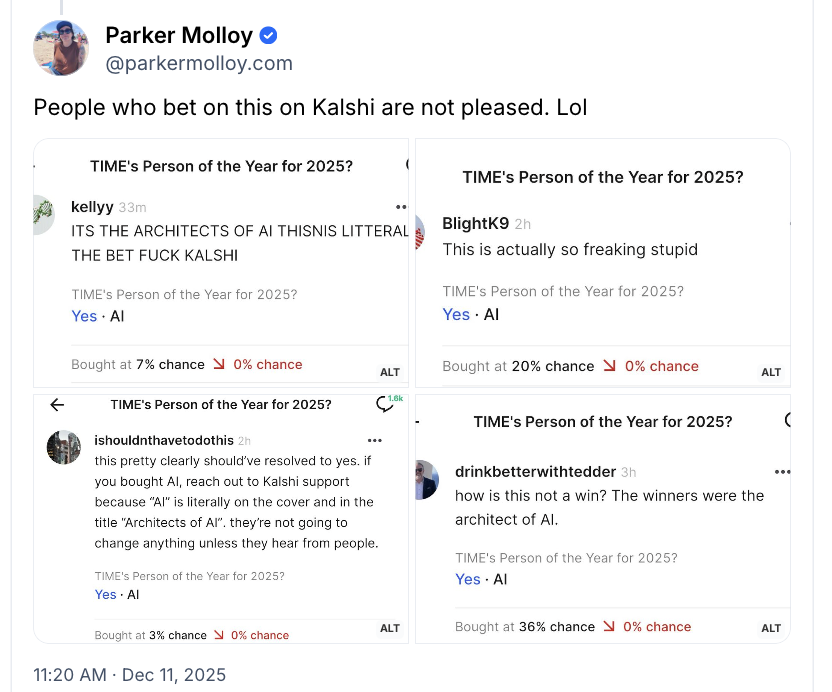

the Kalshi bets for Time Person of the Year being "AI" resolved to No because the actual winner was a bunch of AI executives they called "Architects of AI" https://t.co/TwOipqmjt1

I’ve watched this 200 times https://t.co/JhwGXUMsI6