Your curated collection of saved posts and media

<3 https://t.co/EjXen8elV2

OpenAI just released circuit-sparsity https://t.co/cebKqr7IaQ

on HF now! go get you some! https://t.co/byVhQFdVxX

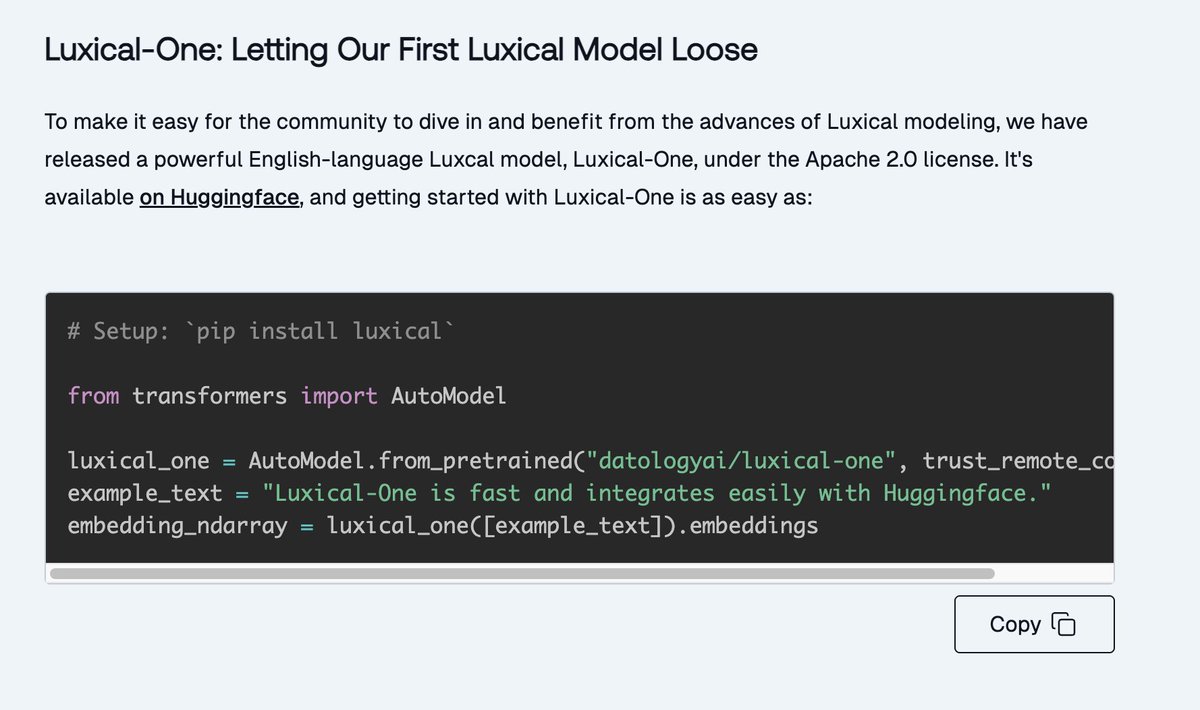

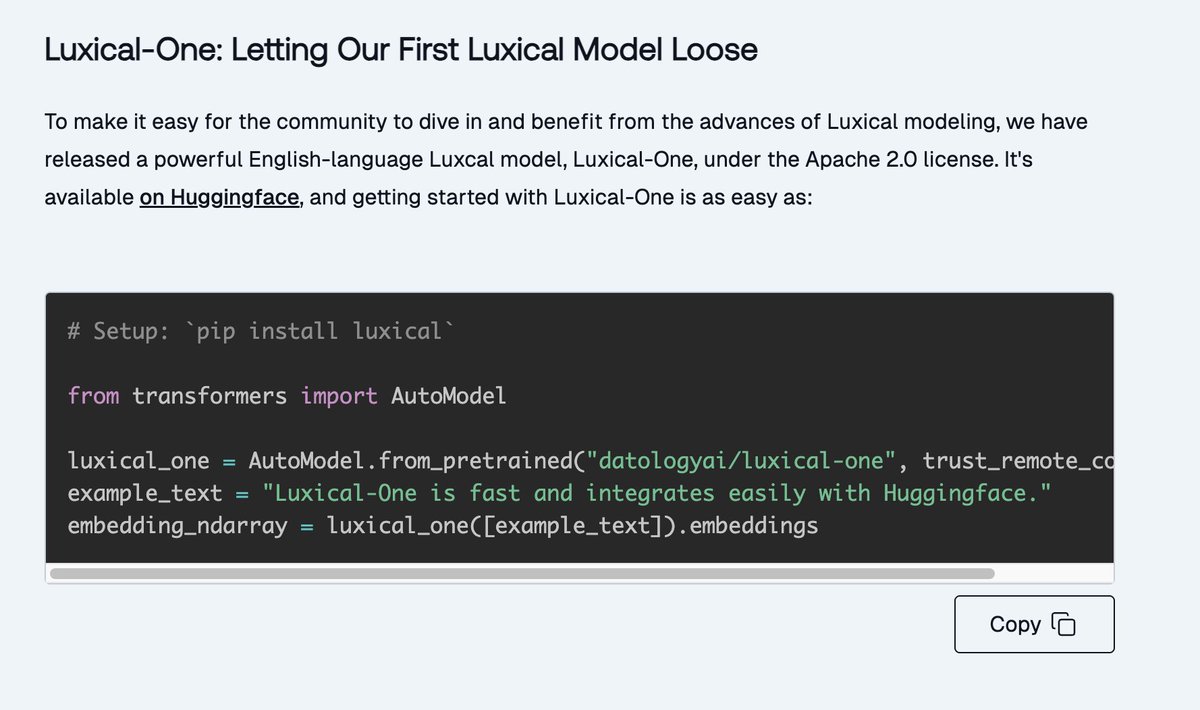

We have a fun one for you! Introducing Luxical! Embedding models and fast text models are the workhorses of data curation pipelines. Fast text models are, well, fast. Embedding models are more precise, but they really are not designed for the types of things we want to do in web

Just dropped a new text embedding methodology. Fast as heck on CPU only and still great for document similarity analysis, clustering, and classification. How? Use a tiny ReLU network to approximate a big transformer from lexical (term frequency / bag of words) features. https://t.co/IXfpZCVcgt
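
The idea in the post above (lexical features in, small ReLU network out) can be sketched as follows. This is a minimal illustration, not the released model: the hashing trick, the layer sizes, and the names `tf_features` and `embed` are assumptions, and the weights are random placeholders. In the approach the post describes, the weights would instead be trained so the output approximates a large transformer's embeddings.

```python
import math
import random
import zlib

# Hypothetical sizes chosen only for illustration.
DIM_IN, DIM_HIDDEN, DIM_OUT = 256, 32, 16

def tf_features(text, dim=DIM_IN):
    """Hashed term-frequency vector: a bag of words, so token order is ignored."""
    vec = [0.0] * dim
    tokens = text.lower().split()
    for tok in tokens:
        # crc32 is used instead of hash() so the bucket is stable across runs.
        vec[zlib.crc32(tok.encode("utf-8")) % dim] += 1.0
    total = len(tokens) or 1
    return [v / total for v in vec]

def make_layer(n_in, n_out, rng):
    """Random dense layer (placeholder for trained weights)."""
    scale = 1.0 / math.sqrt(n_in)
    return [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]

def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

_rng = random.Random(0)
W1 = make_layer(DIM_IN, DIM_HIDDEN, _rng)
W2 = make_layer(DIM_HIDDEN, DIM_OUT, _rng)

def embed(text):
    """Term frequencies -> ReLU hidden layer -> dense embedding."""
    hidden = [max(0.0, h) for h in matvec(W1, tf_features(text))]
    return matvec(W2, hidden)

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0
```

Because the input is a bag of words, `embed("the cat sat")` and `embed("sat the cat")` return the same vector, and there is no attention or sequence processing anywhere in the forward pass, which is what makes a CPU-only version cheap.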

One of the coolest AI projects ever? Training an LLM from scratch using ONLY texts from 1800-1875 London. Goal: create a language model with zero modern bias contamination. A true time capsule 🧙‍♂️ https://t.co/teepdYTfqh

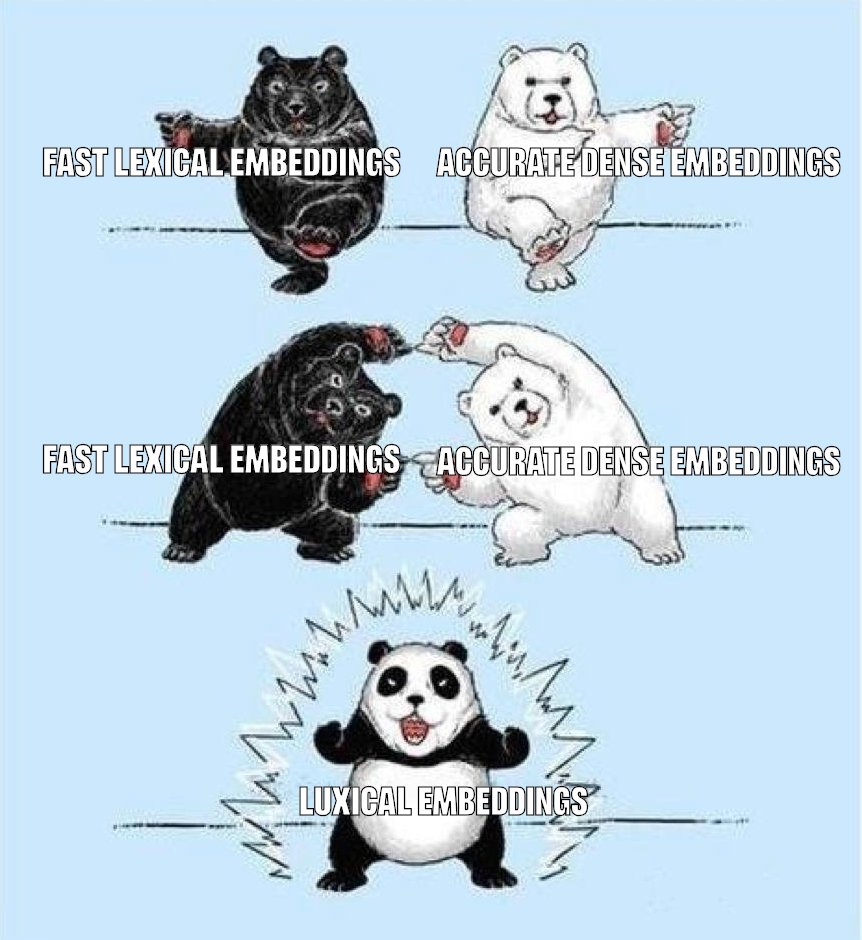

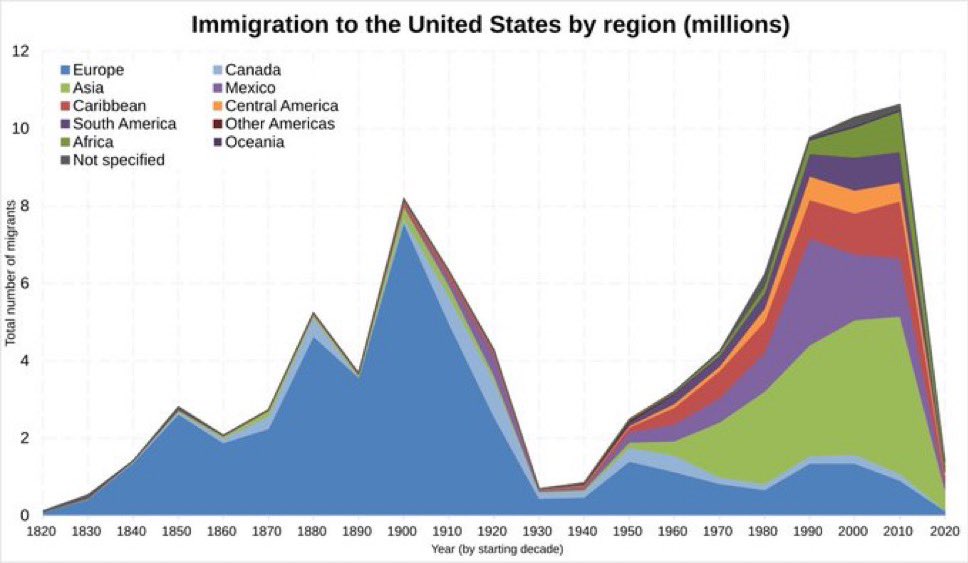

@Ivantheboomer @atalovesyou We don’t want to be a minority in our own country https://t.co/s1BkS5eofu

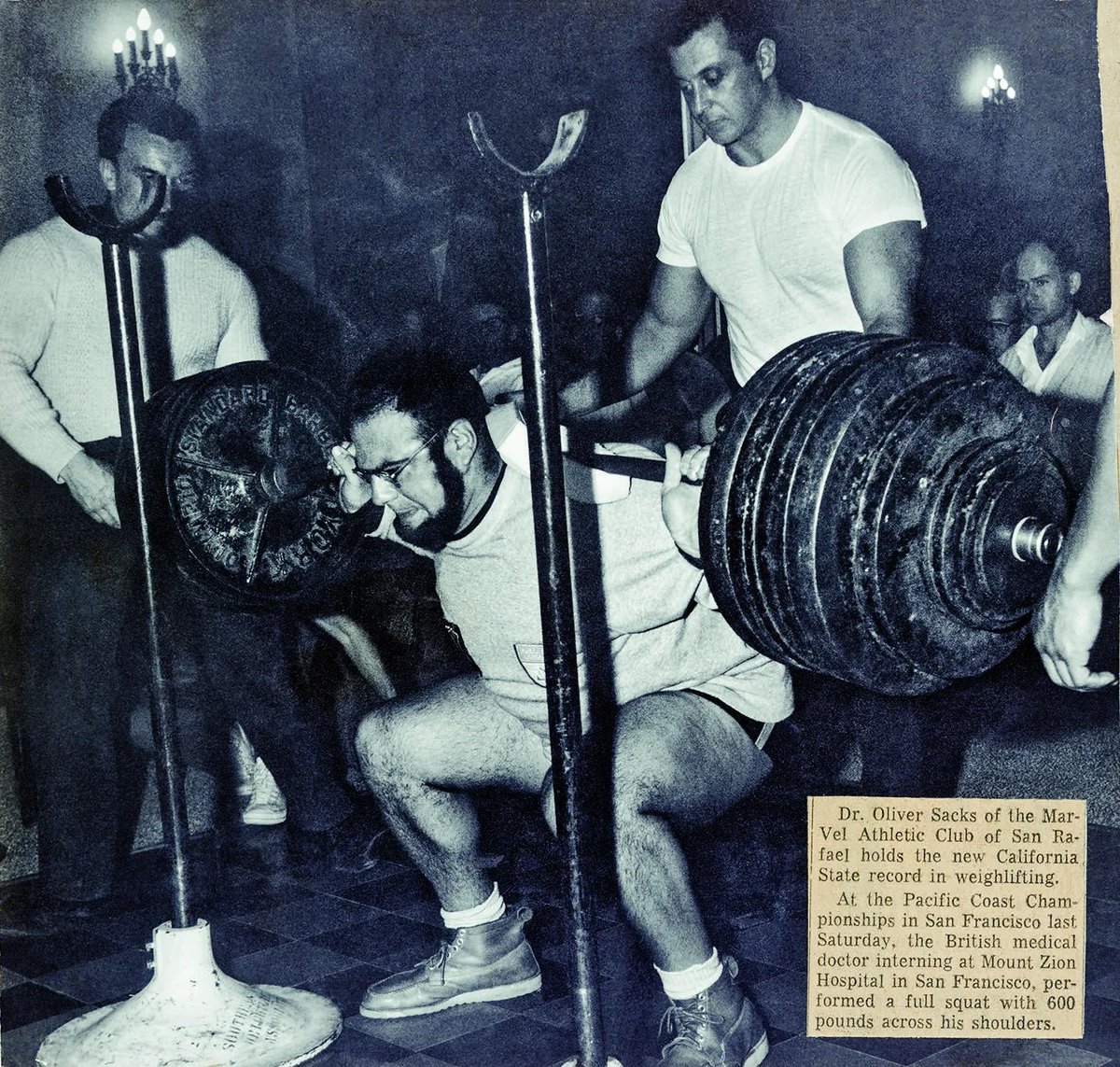

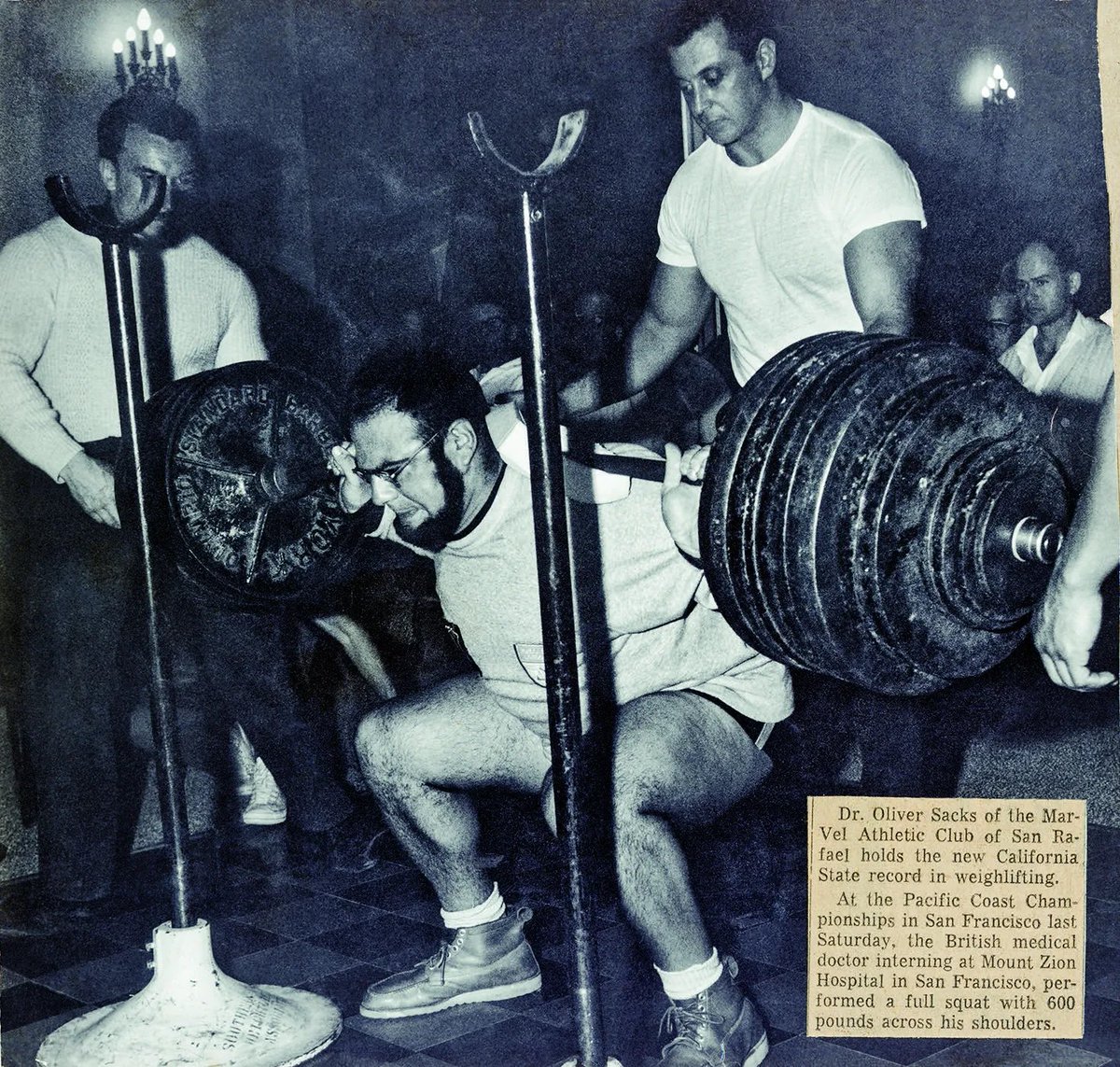

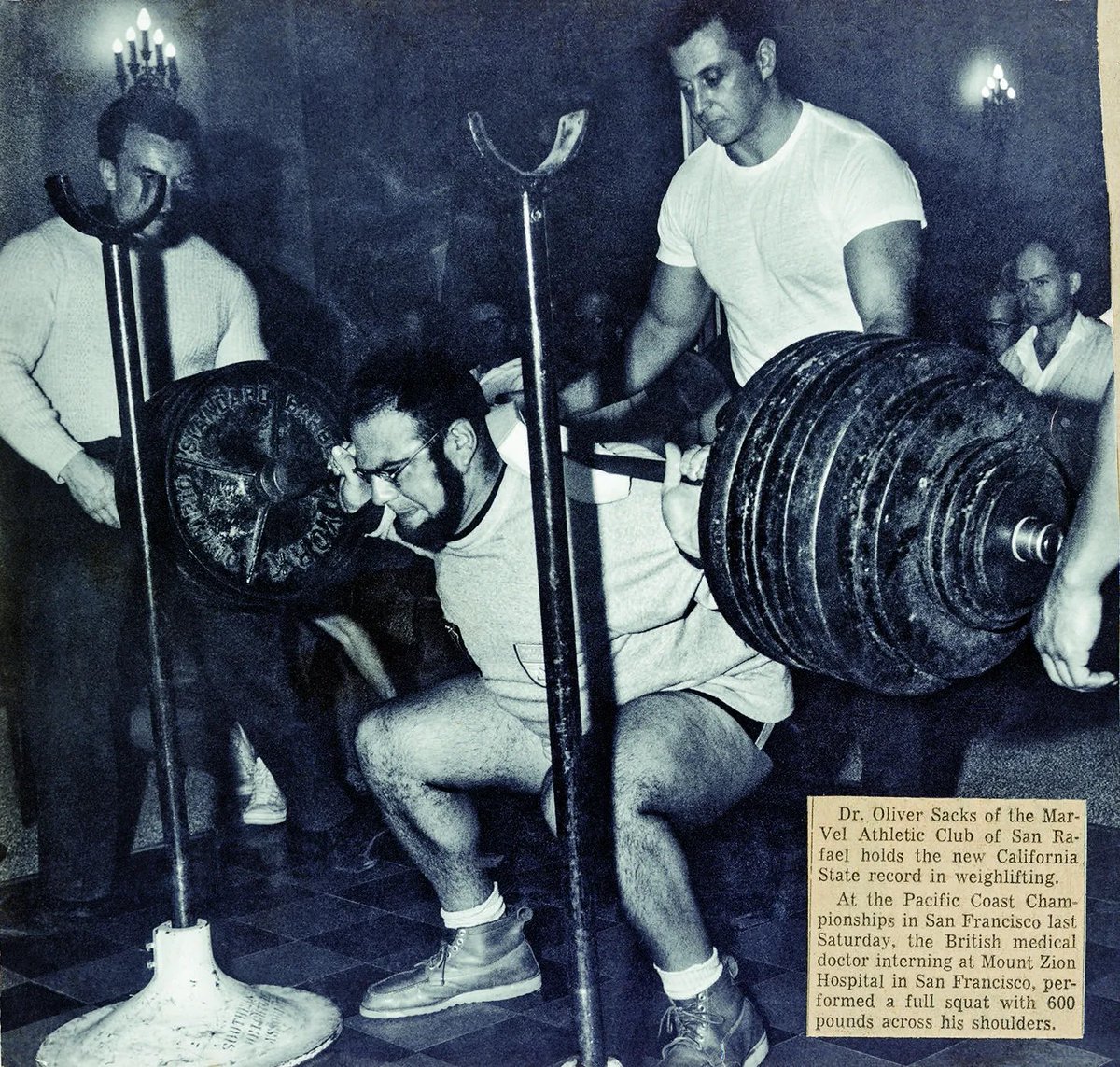

600 lb squat don’t care https://t.co/4xayMXnJ9g

Bombshell: Oliver Sacks (a humane man & a fine essayist) made up many of the details in his famous case studies, deluding neuroscientists, psychologists, & general readers for decades. The man who mistook his wife for a hat? The autistic twins who generated multi-digit prime numb

https://t.co/50jJSSq2WT

This former CBS employee laments Bari Weiss works there now. I tell him anyone who feels the same should quit. Instead of ignoring me or making a good faith argument, he screenshots an old and out of context tweet, and just posts it partially. https://t.co/TPZaoJUXE8

@poddtadre https://t.co/oV9HfEQd5X

Training of Physical Neural Networks

Could we train AI models 1000x larger than today's? Could we run them privately on edge devices like smartphones? The answer might be yes, but not with GPUs. This paper suggests that the path forward may require physical neural networks.

Physical Neural Networks (PNNs) use properties of physical systems to perform computation. Optical systems, photonics, analog electronics, and even mechanical substrates. Physics can compute certain operations far more efficiently than digital transistors.

The problem isn't inference. The problem is training. Backpropagation has powered deep learning's success, but implementing it in physical hardware faces fundamental challenges. Weight transport, gradient communication across layers, and precise knowledge of activation functions.

This review maps the landscape of PNN training methods:

1) In-silico training: Create digital twins of physical systems, optimize them computationally, then deploy to hardware. Fast iteration but limited by model fidelity. Fabrication imperfections, misalignments, and detection noise break the digital-physical correspondence.

2) Physics-aware training: Physical system performs forward pass, digital model handles backpropagation. A hybrid approach that mitigates experimental noise while maintaining gradient-based optimization. Successfully demonstrated across optical, mechanical, and electronic systems.

3) Equilibrium Propagation: For energy-based systems that naturally minimize a Lyapunov function. Weight updates use local contrastive rules comparing equilibrium states. Implemented on memristor crossbar arrays with potential energy gains of 4 orders of magnitude versus GPUs.

4) Local learning methods: Avoid global gradient communication entirely. Physical Local Learning uses forward-mode differentiation through physical perturbations. No digital model required. Demonstrated on multimode optical fibers with 10,000+ trainable parameters.

The emerging hardware spans optical correlators, photonic integrated circuits, spintronic devices, memristor crossbars, exciton-polariton condensates, and quantum circuits. No method yet scales to backpropagation's performance on digital hardware. But the trajectory is clear: diverse training techniques are converging on practical PNN implementations.

As AI scaling hits GPU limits, physical computing offers a path to models orders of magnitude larger and more energy-efficient than what's currently possible.

Paper: https://t.co/AiTbVWMZSP

Learn to build with LLMs and AI Agents in our academy: https://t.co/zQXQt0PMbG
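
To make the physics-aware training idea from the thread concrete, here is a toy sketch under loud assumptions: the "physical system" is only a simulated stand-in (a mis-calibrated, noisy tanh unit), and the function names and constants are hypothetical. The forward pass uses the "hardware" output, while the gradient comes from an idealized digital model that does not match the hardware exactly.

```python
import math
import random

def physical_forward(w, x, rng):
    """Simulated stand-in for real hardware: gain error, offset, and read-out noise."""
    return math.tanh(1.1 * w * x + 0.05) + rng.gauss(0.0, 0.01)

def model_grad(w, x):
    """d/dw of the idealized digital model tanh(w * x)."""
    return x * (1.0 - math.tanh(w * x) ** 2)

def mean_loss(w, xs, ts, rng):
    """Mean squared error measured on the (simulated) physical outputs."""
    return sum((physical_forward(w, x, rng) - t) ** 2 for x, t in zip(xs, ts)) / len(xs)

def train(steps=200, lr=0.5, seed=0):
    rng = random.Random(seed)
    xs = [i / 10.0 for i in range(-10, 11)]
    ts = [math.tanh(0.8 * x) for x in xs]  # target behaviour to fit
    w = 0.0
    initial = mean_loss(w, xs, ts, rng)
    for _ in range(steps):
        grad = 0.0
        for x, t in zip(xs, ts):
            y = physical_forward(w, x, rng)           # forward pass on the "hardware"
            grad += 2.0 * (y - t) * model_grad(w, x)  # backward pass via the digital model
        w -= lr * grad / len(xs)
    return w, initial, mean_loss(w, xs, ts, rng)
```

Calling `train()` returns the learned weight together with the loss before and after training. The error signal is always measured on the noisy output, so the update corrects for the mismatch between model and hardware even though the derivative itself is only approximate.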

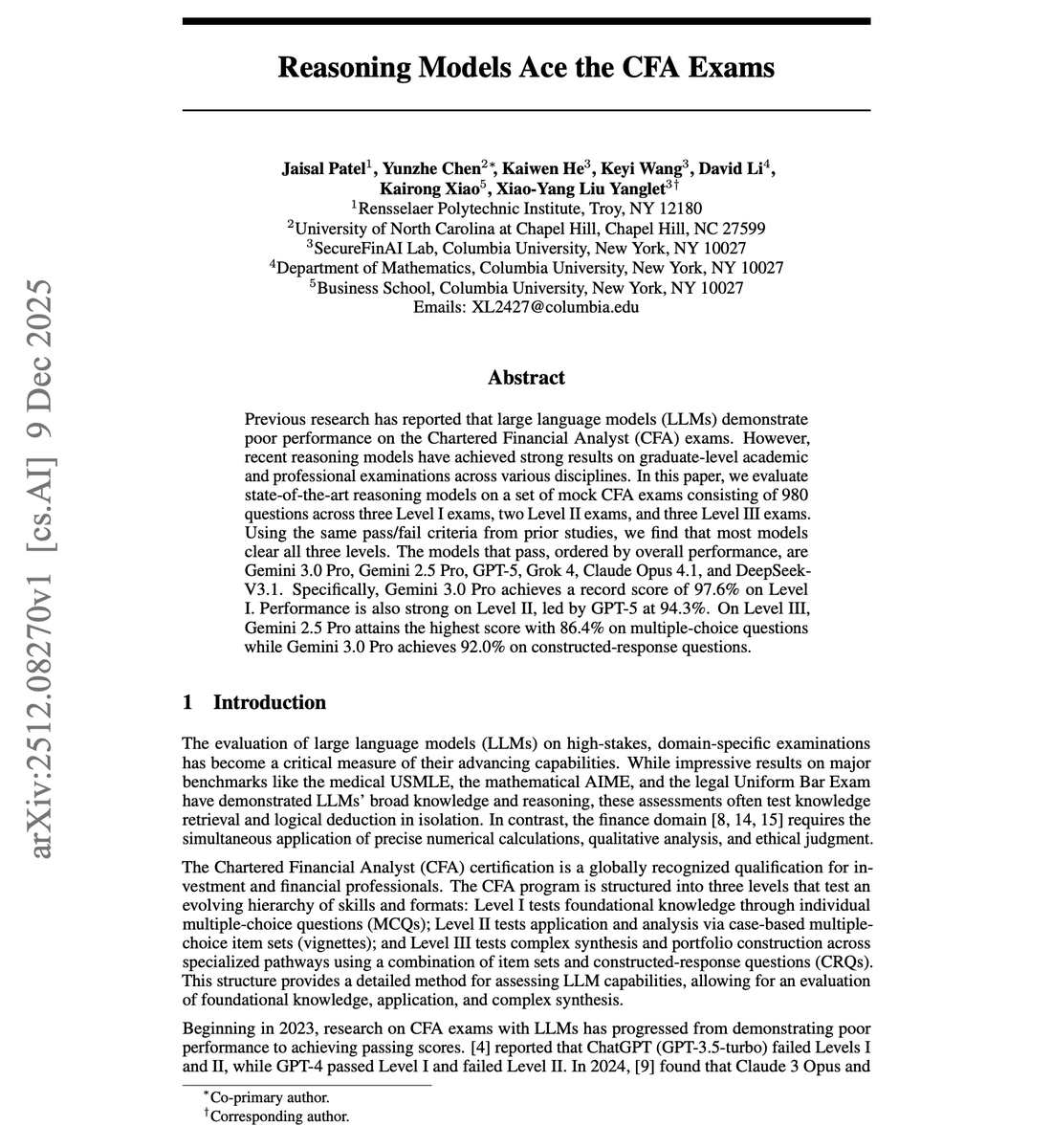

Reasoning models now pass all three levels of the CFA exam.

In 2023, ChatGPT (GPT-3.5-turbo) failed CFA Levels I and II. GPT-4 passed Level I but failed Level II. LLMs struggled with finance exams requiring numerical precision, qualitative analysis, and ethical judgment simultaneously. That ceiling has been shattered, which speaks to the potential of reasoning models.

Researchers evaluated state-of-the-art reasoning models on 980 CFA mock exam questions across all three levels. The results: Gemini 3.0 Pro, Gemini 2.5 Pro, GPT-5, Grok 4, Claude Opus 4.1, and DeepSeek-V3.1 all pass every level. Gemini 3.0 Pro achieves 97.6% on Level I. GPT-5 leads Level II with 94.3%. On Level III constructed-response questions, Gemini 3.0 Pro scores 92.0%.

The CFA exam tests an evolving hierarchy of skills. Level I covers foundational knowledge through multiple-choice questions. Level II tests application through case-based vignettes. Level III requires complex synthesis and portfolio construction with both multiple-choice and constructed-response formats.

Quantitative methods, previously a major weakness, now show near-zero error rates for top models. The persistent challenge is Ethics and Professional Standards, where even the best models show 17-21% error rates on Level II.

An interesting pattern emerges with prompting. Chain-of-thought reasoning helps baseline models substantially but shows inconsistent effects on reasoning models for multiple-choice questions. However, CoT remains highly effective for constructed-response questions. Gemini 3.0 Pro jumps from 86.6% to 92.0% on CRQs with explicit reasoning prompts.

Reasoning models now surpass the expertise required of entry-level to mid-level financial analysts. The question shifts from whether AI can pass professional exams to how these capabilities translate to real-world financial decision-making.

Paper: https://t.co/wdwtefM3EN

Learn to build effective AI Agents in our academy: https://t.co/JBU5beIoD0

Comet Android can debug your code from your phone. It analyzed the CI logs, traced the failure, figured out a fix, committed the fix, and opened a PR that’s ready to merge https://t.co/lcsuuE7cju

Launching: OPEN SOULS. Open source framework for creating AI souls. Check out the repo, run the example souls, and most of all, have fun https://t.co/hzQ4TBD0vd

Personally feels like we've reached the peak of "Proprietary APIs" and that we're entering a much more balanced world for AI where open-source, training, @huggingface (and other players) will start getting a much bigger share of the attention, usage and revenue. Let's go! https://t.co/nNFntbAmao

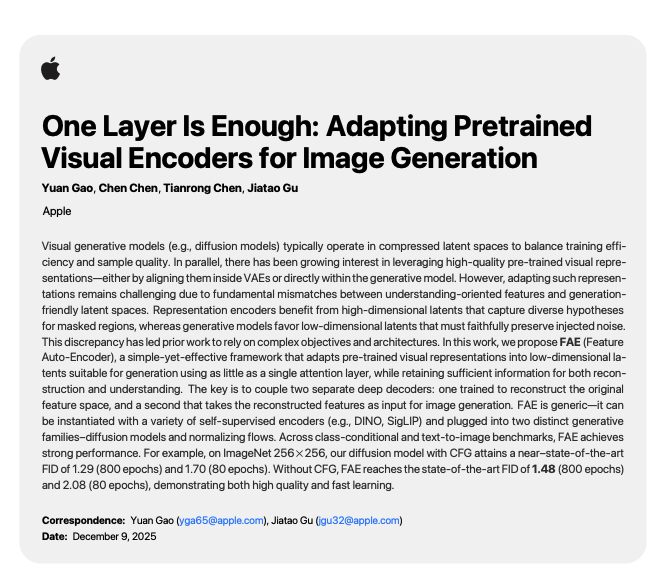

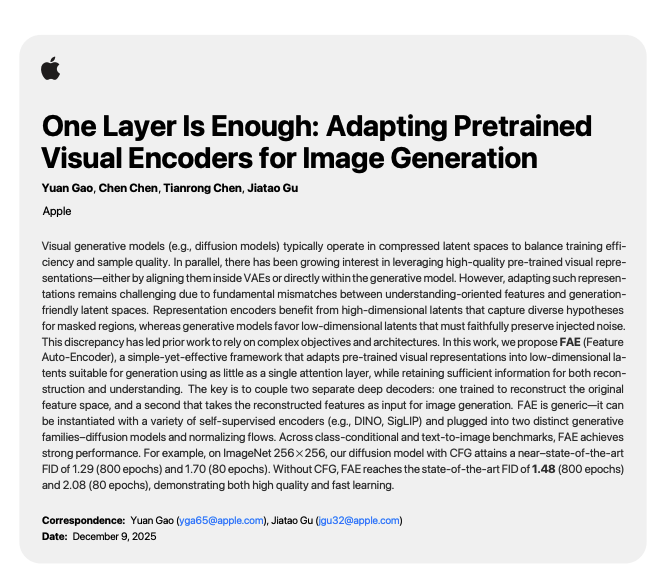

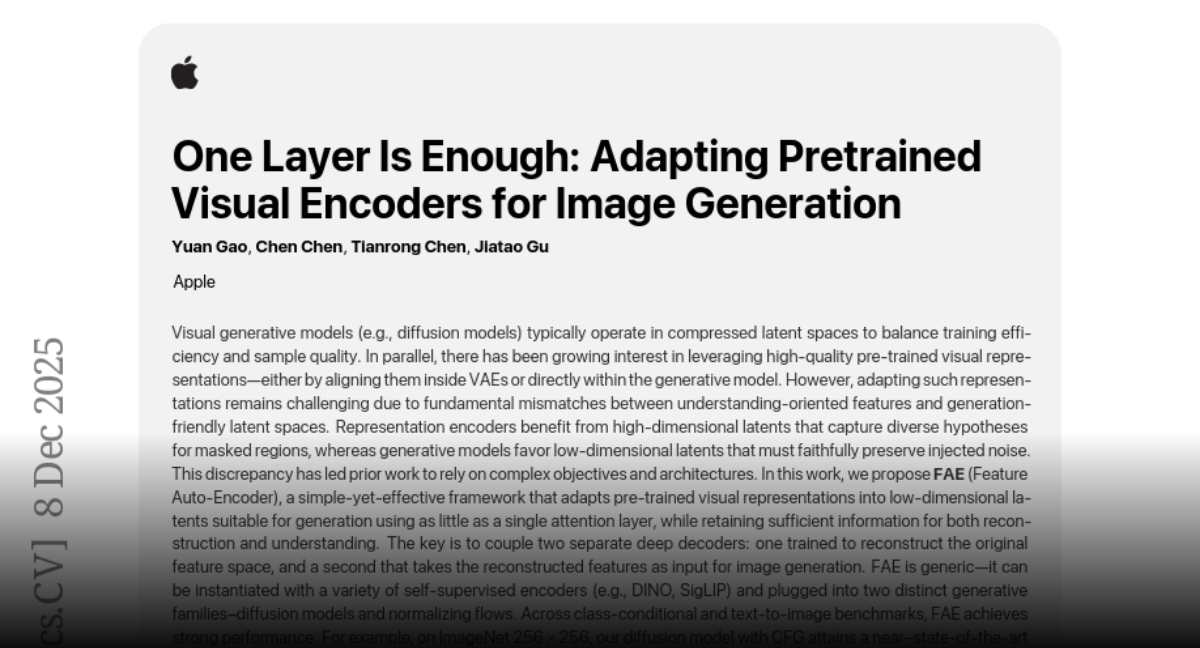

Apple presents One Layer Is Enough: Adapting Pretrained Visual Encoders for Image Generation https://t.co/CGs5cb4M9J

discuss: https://t.co/1aUcQ6S8VA

Favorite way to use Copilot with April Yoho from GitHub 🤖✨ https://t.co/sVg21DwdlT

Want to make a big impact in robotics research? Come and work with robots and the smartest students @StanfordSVL! We are hiring a software developer, focusing on simulation for robotics & robotic learning. You'll be working directly with me, @jiajunwu_cs and our amazing students and researchers. Please apply at: https://t.co/jr2hSqMKiP

“every year, in every life, i will remember the warmth in your embrace on this special day.” © jokiruru #amodei #soulbondtwt #yumetwt https://t.co/je0oraObXu

present from @LanternRites ..🥹🥹🥹🥹🥹🥹I LOVE YOU SO MUCH WAAAAA THE AMODEI EVER https://t.co/BAVgZe4D2U

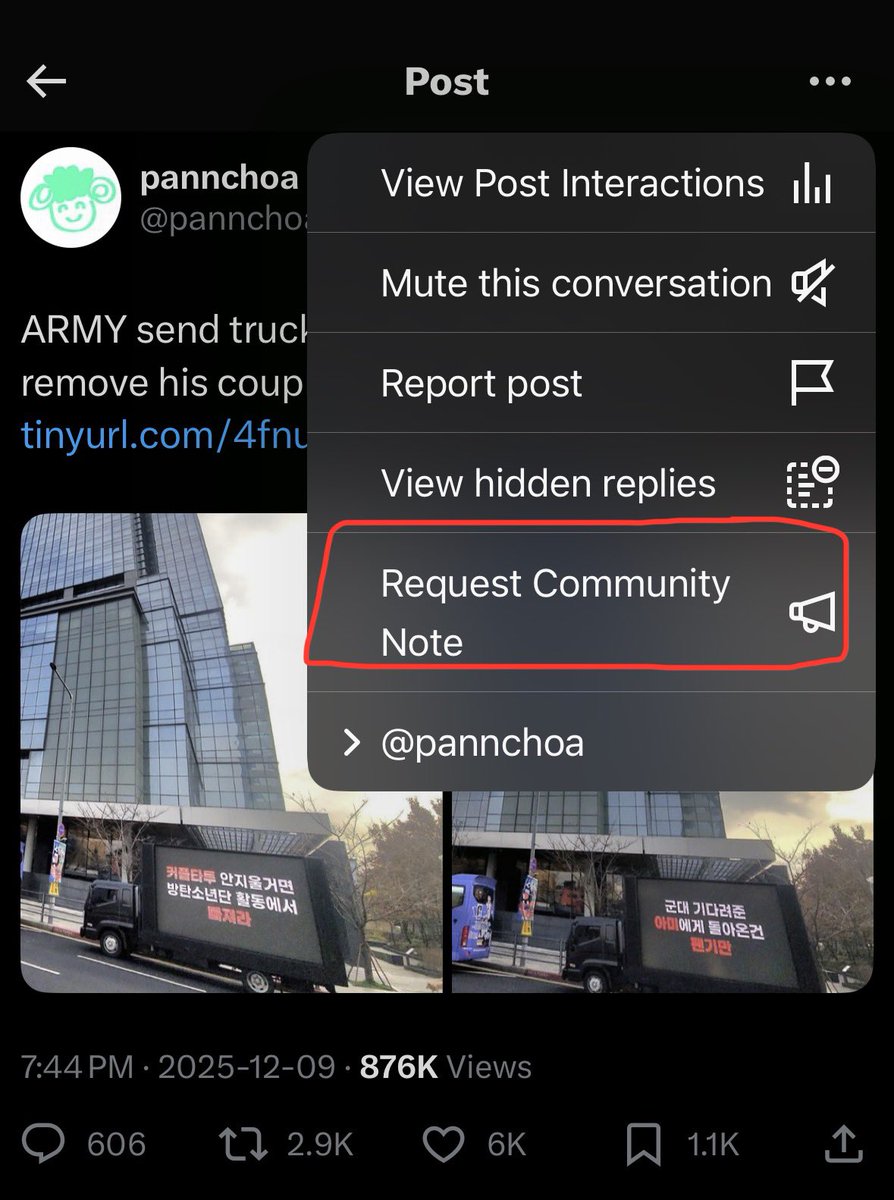

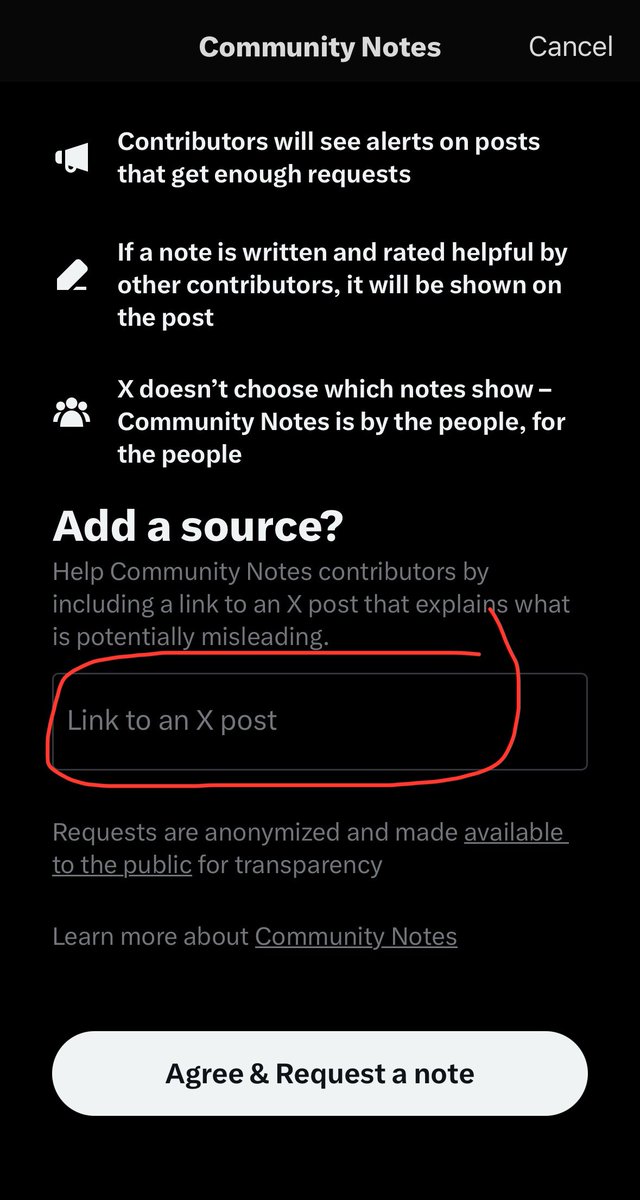

Could everyone please help request a community note? The account is so big that it definitely won't get taken down, so I figure at the very least it would be good to get a fake-news community note on it. Just follow the steps in this image, and in the link field you can paste the link to the post we quoted. If anyone has tweets confirming that it wasn't ARMY, you can paste those too, or paste them in the mentions. https://t.co/lX3XV7ZfZD

There is currently no verified evidence that the truck was sent by ARMY. This claim is unverified and may mislead readers. The situation also leaves open the possibility of impersonation by individuals seeking to harm the artist or the group. @BIGHIT_MUSIC

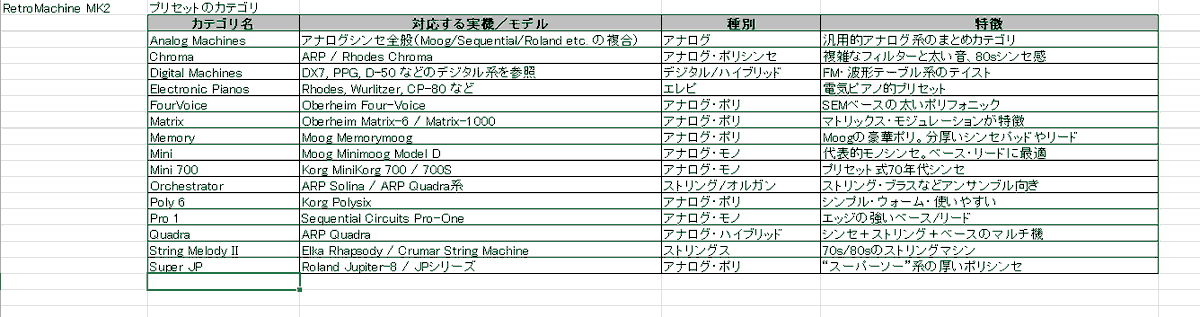

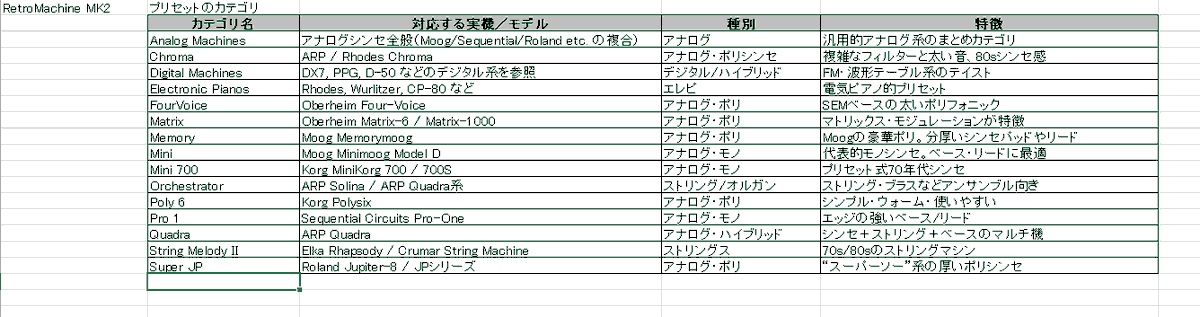

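Native Instruments' Absynth 6 has been released, but while I was looking into vintage synths, Native Instruments' RETRO MACHINES MK2 caught my attention a bit. I had ChatGPT put together a summary of the real hardware behind each preset folder (category). For reference. #nativeinstruments #Retromaschines https://t.co/itL099XWbt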

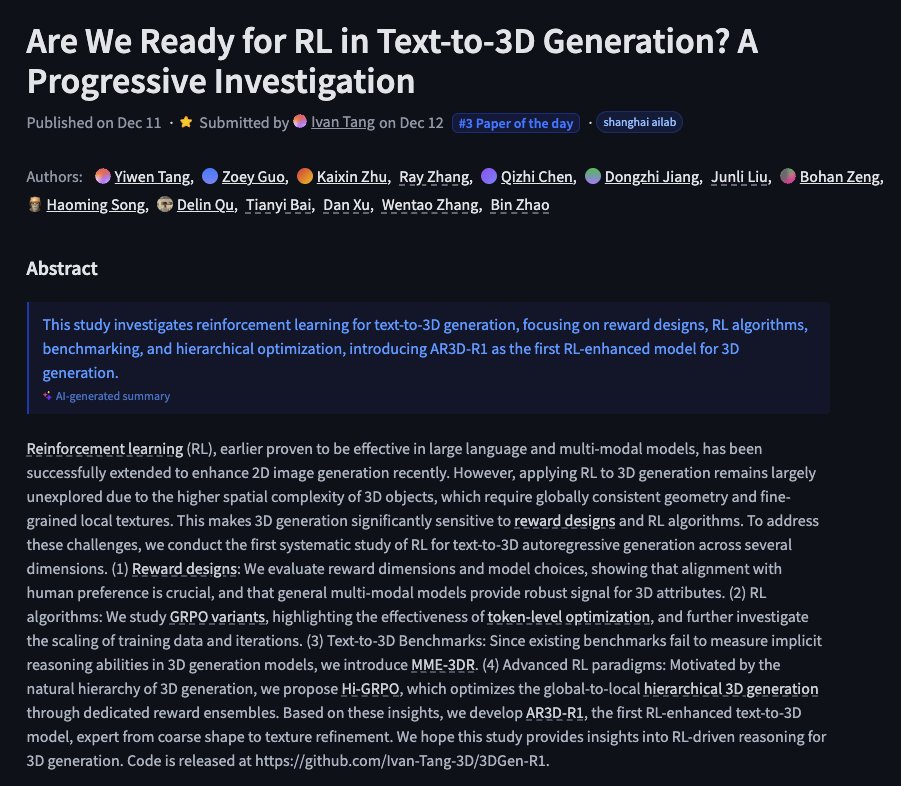

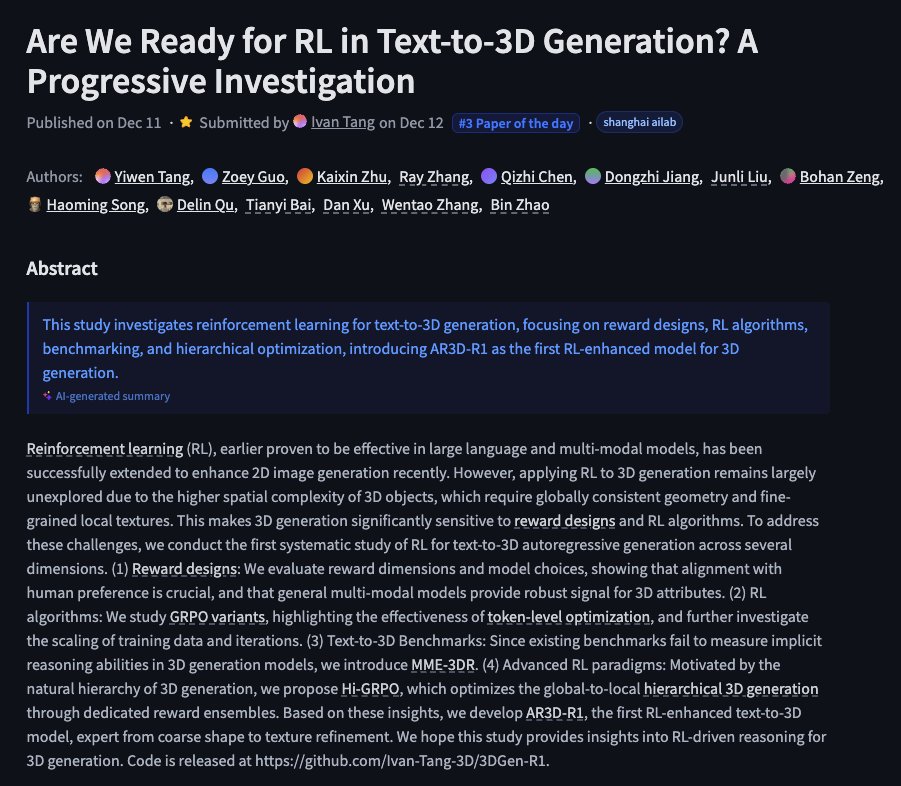

Are We Ready for RL in Text-to-3D Generation? A Progressive Investigation https://t.co/jdm6fDmOCI

discuss: https://t.co/5OzgF5ytyy

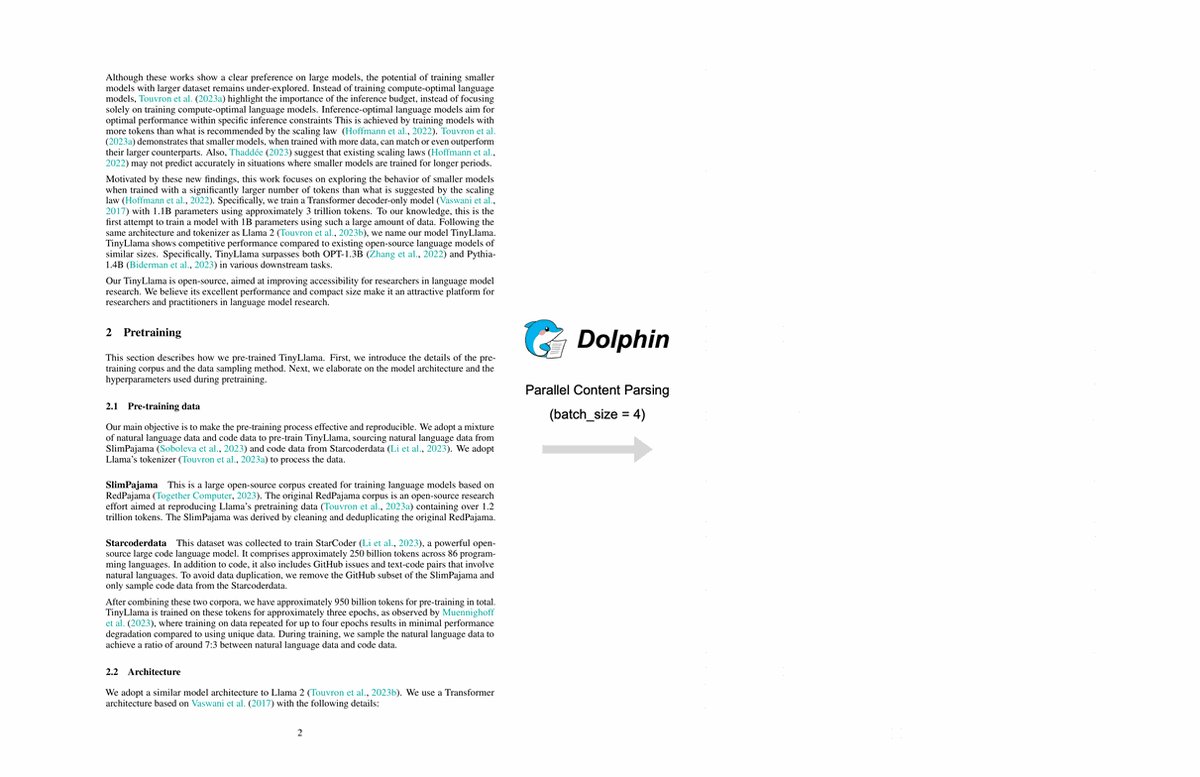

Dolphin-v2 🐬 new document parsing model released by @ByteDanceOSS ✨ 3B - MIT license ✨ Works on any document: PDFs, scans, photos ✨ Understands 21 types of content: text, tables, code, formulas, figures & more ✨ Pixel-level precision via absolute coordinate prediction https://t.co/aLuNxUAs0k

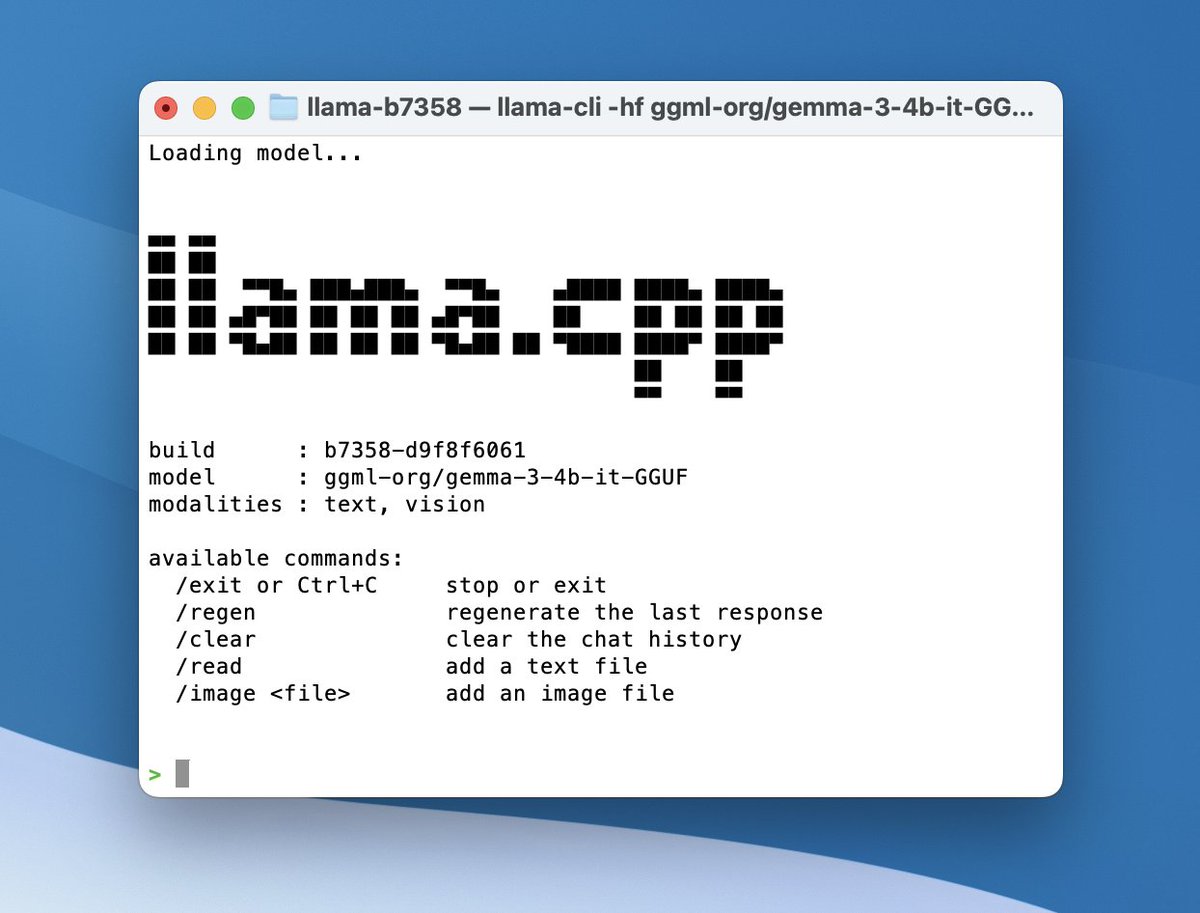

🎉 llama.cpp now has Ollama-style model management. • Auto-discover GGUFs from cache • Load on first request • Each model runs in its own process • Route by `model` (OpenAI-compatible API) • LRU unload at `--models-max` https://t.co/yfmfHL7zzj
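
The "load on first request" and "LRU unload" behaviour listed above can be pictured with a small sketch. This is not llama.cpp's implementation: `ModelRouter` and its `loader` callback are hypothetical names, and a plain Python object stands in for what the post describes as a separate process per model.

```python
from collections import OrderedDict

class ModelRouter:
    """Toy router: lazily loads models by name and evicts the least recently used."""

    def __init__(self, models_max, loader):
        self.models_max = models_max   # plays the role of a --models-max style limit
        self._loader = loader          # hypothetical callback that "starts" a model
        self._loaded = OrderedDict()   # oldest entry first, most recent last
        self.unloaded = []             # names evicted so far, for inspection

    def get(self, name):
        """Return the model for `name`, loading it on first request."""
        if name in self._loaded:
            self._loaded.move_to_end(name)  # mark as most recently used
            return self._loaded[name]
        if len(self._loaded) >= self.models_max:
            evicted, _ = self._loaded.popitem(last=False)  # drop least recently used
            self.unloaded.append(evicted)
        self._loaded[name] = self._loader(name)
        return self._loaded[name]

    def loaded(self):
        return list(self._loaded)
```

With `models_max=2`, requesting `a`, `b`, `a`, `c` in that order leaves `a` and `c` loaded and evicts `b`, because the second request for `a` made `b` the least recently used entry.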

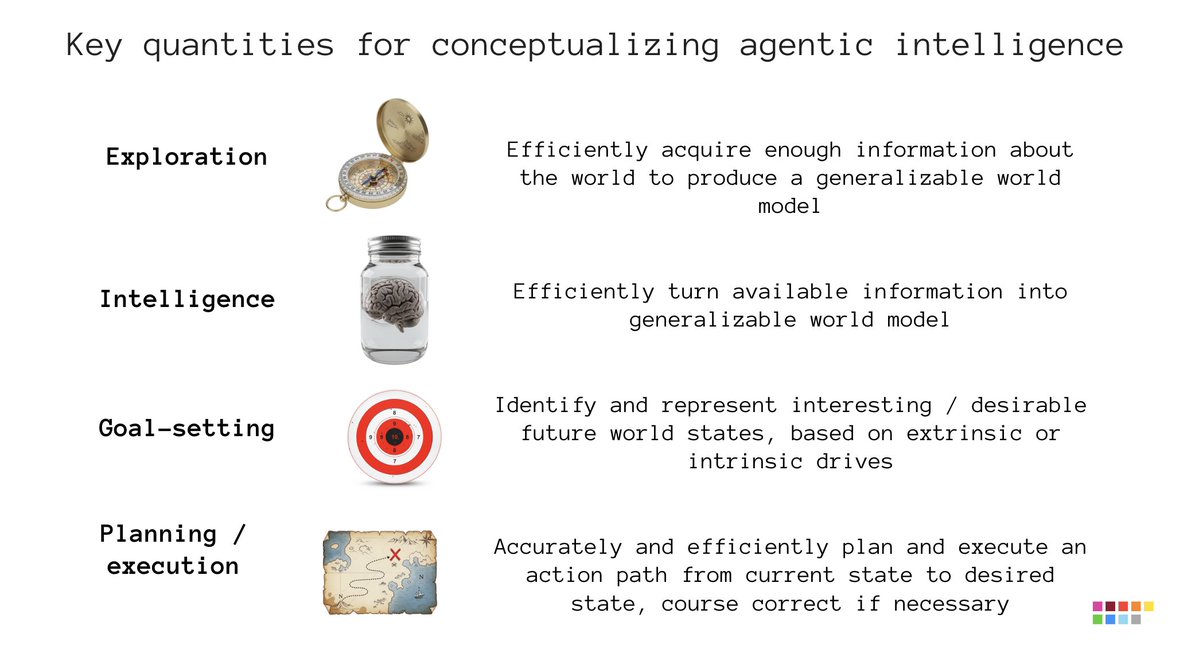

Fluid intelligence as measured by ARC 1 & 2 is your ability to turn information into a model that will generalize. That's not the only thing you need to make an intelligent agent.

To start with, when you're an agent in the real world, information is not provided to you passively. You have to go get it. That's "exploration": the agent's ability to efficiently acquire useful information (to turn into a world model) by interacting with its environment.

Next, in the real world, you aren't provided instructions. There's no fixed goal. You have to figure out what to do. That's "goal-setting": the ability to identify interesting or desirable future world states, via your intrinsic and extrinsic drives. This is a core part of being autonomous.

Finally, "planning" represents the ability to accurately and efficiently map out and execute an action path from the current state to the desired goal, including the ability to course correct. That is also different from the ability to turn information into a model -- it's an application of having a model.

All of these problems are still largely open. They're all much easier than solving fluid intelligence, in my opinion. Among them, the hardest one is exploration and the easiest one is planning.

@fchollet What type of intelligence is needed for “exploration, goal-setting, and interactive planning”? What is “beyond fluid intelligence”?

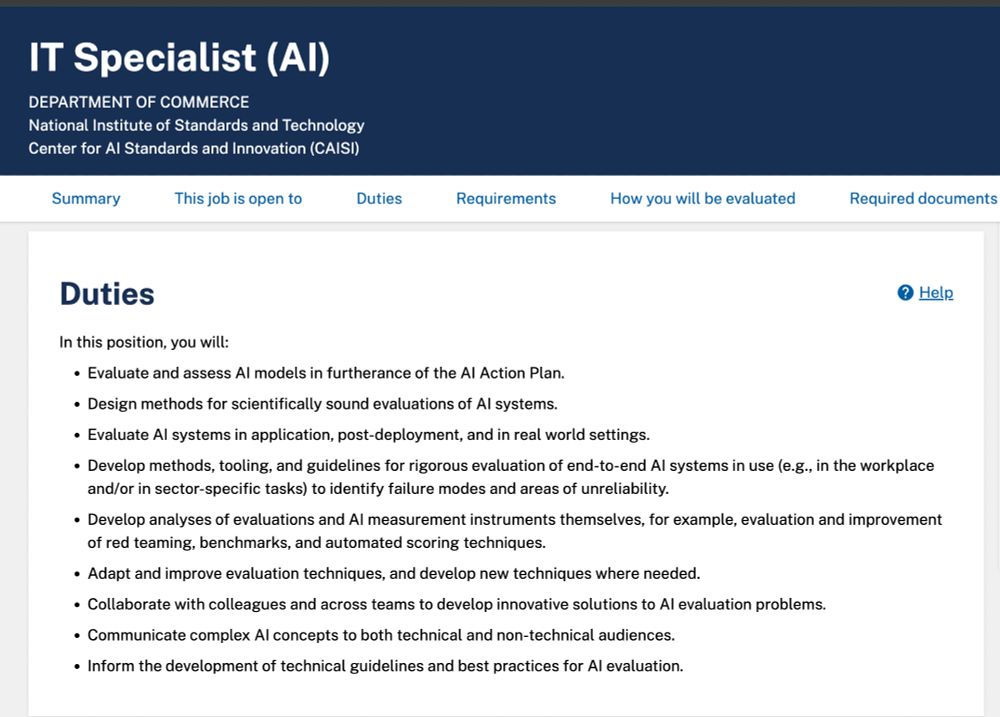

US CAISI is hiring -- the internal govt name is "IT Specialist" but it is effectively a research scientist role! Salary is $120,579 to $195,200 per year & you work on AI evaluation within government agencies! Dream job for the right person. Details: https://t.co/HCZWEgqHex https://t.co/D9nReCZx6X