Your curated collection of saved posts and media

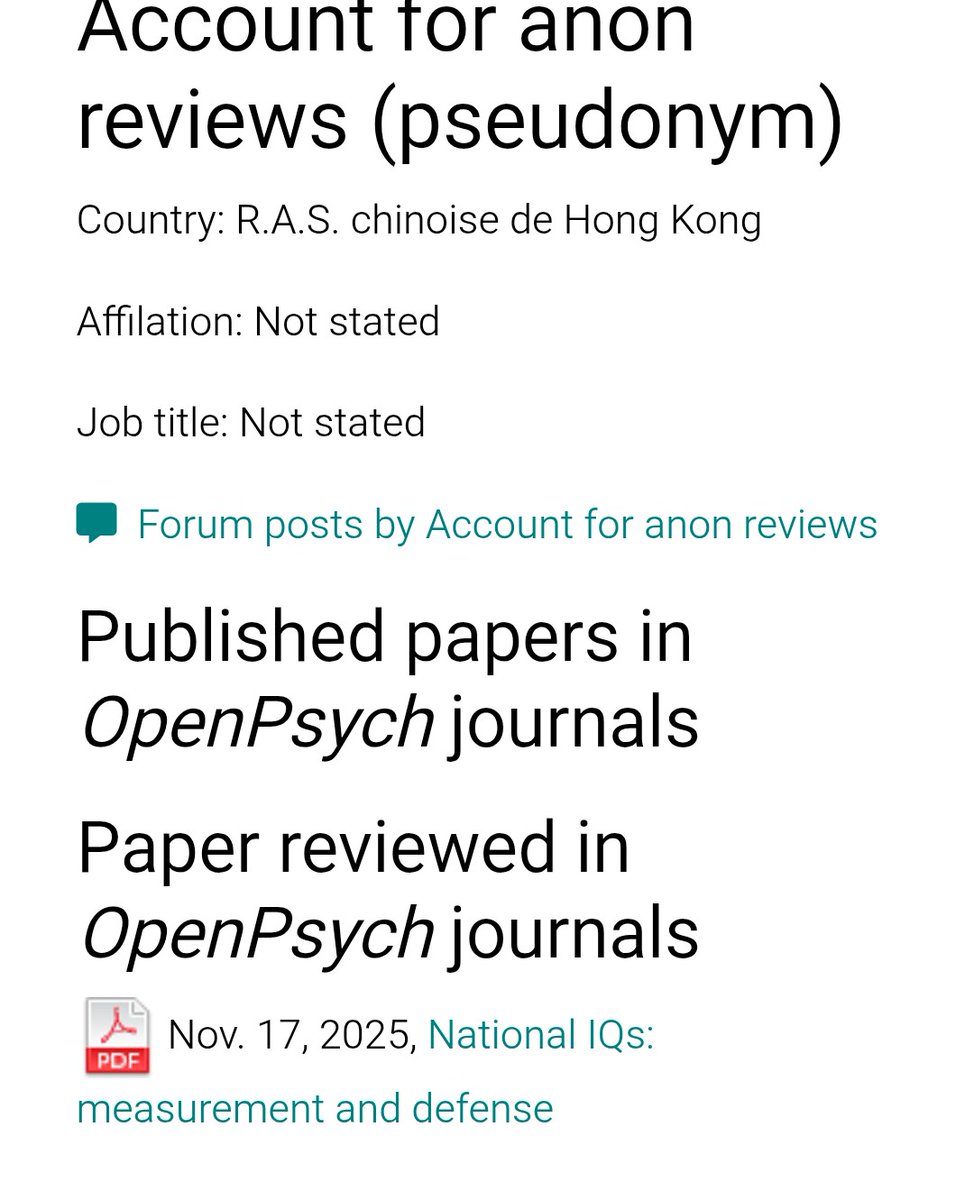

The data is from a "paper" hosted on the author's 90s-ass-looking webpage and reviewed by the extremely well-regarded researcher .... some anonymous guy from Hong Kong who only ever reviewed this one "paper". A random post on Twitter is a better source lmao. https://t.co/lW5C2JNsst

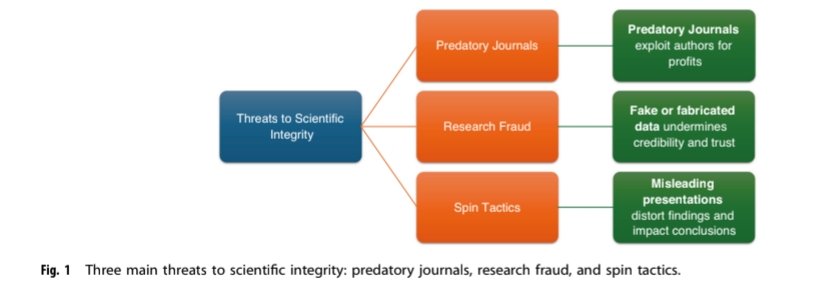

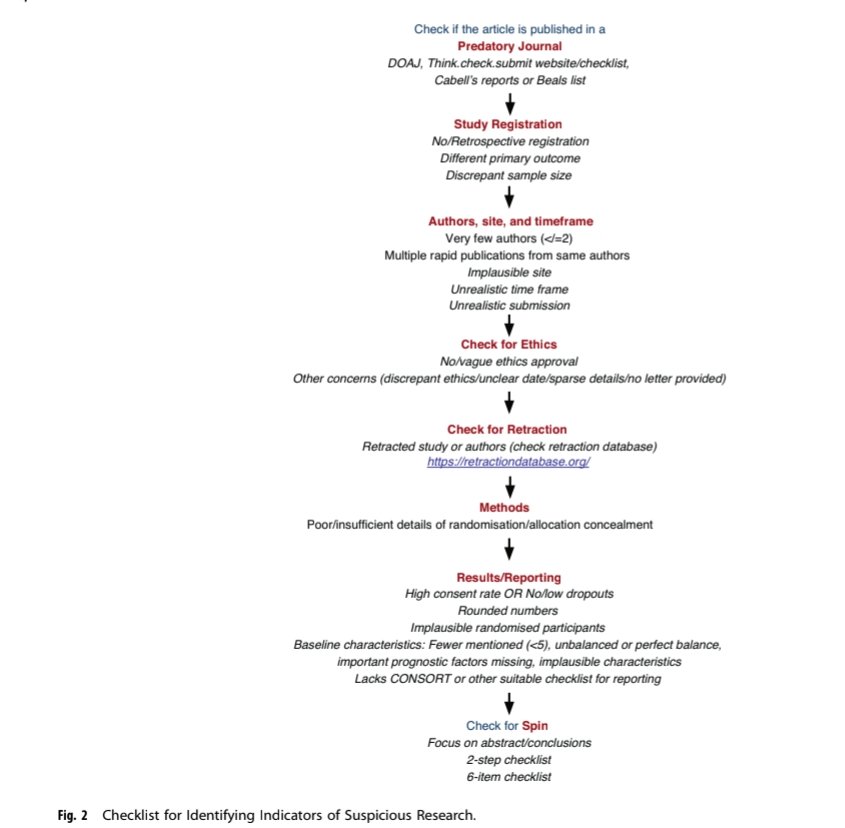

Is that paper you are reading trustworthy, #neoTwitter? Safeguarding the integrity of scientific literature in the 21st century @Ped_Research https://t.co/ERtQXVZhH3 @EBNEO @ESPR_ESN @nicupodcast https://t.co/oNCMw1D5f2

Paper: https://t.co/zu0BCFH67C Code: https://t.co/ljZTA90IJ8 Model: https://t.co/tEfsyrkkAM Website: https://t.co/cTf5c5CigY #AI #LLM #Reasoning 11/

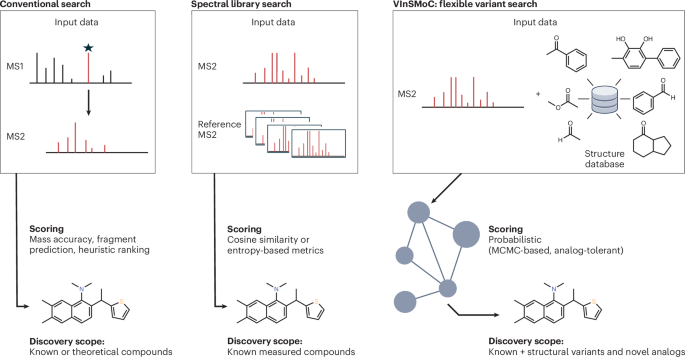

An accompanying News & Views by Bart Ghesquiere for this paper is now available! @VIBMetaboCoreLV https://t.co/jM2ljSwiB9 🔓https://t.co/KKGUGlCJTZ

📚 AI Native Daily Paper Digest - 2025-12-11 🌟 Follow @AINativeF for the latest insights on AI Native. Covering AI research papers from Hugging Face, featured in the image. 💡 Stay updated with the latest research trends and dive deep into the future of AI! 🚀 #AI #HuggingFace #AIPaper #AINative #AINF

Appendix: Today's AI research papers

1. StereoWorld: Geometry-Aware Monocular-to-Stereo Video Generation
2. BrainExplore: Large-Scale Discovery of Interpretable Visual Representations in the Human Brain
3. Composing Concepts from Images and Videos via Concept-prompt Binding
4. OmniPSD: Layered PSD Generation with Diffusion Transformer
5. InfiniteVL: Synergizing Linear and Sparse Attention for Highly-Efficient, Unlimited-Input Vision-Language Models
6. HiF-VLA: Hindsight, Insight and Foresight through Motion Representation for Vision-Language-Action Models
7. Fast-Decoding Diffusion Language Models via Progress-Aware Confidence Schedules
8. EtCon: Edit-then-Consolidate for Reliable Knowledge Editing
9. Rethinking Chain-of-Thought Reasoning for Videos
10. WonderZoom: Multi-Scale 3D World Generation
11. UniUGP: Unifying Understanding, Generation, and Planing For End-to-end Autonomous Driving
12. Towards a Science of Scaling Agent Systems
13. Learning Unmasking Policies for Diffusion Language Models

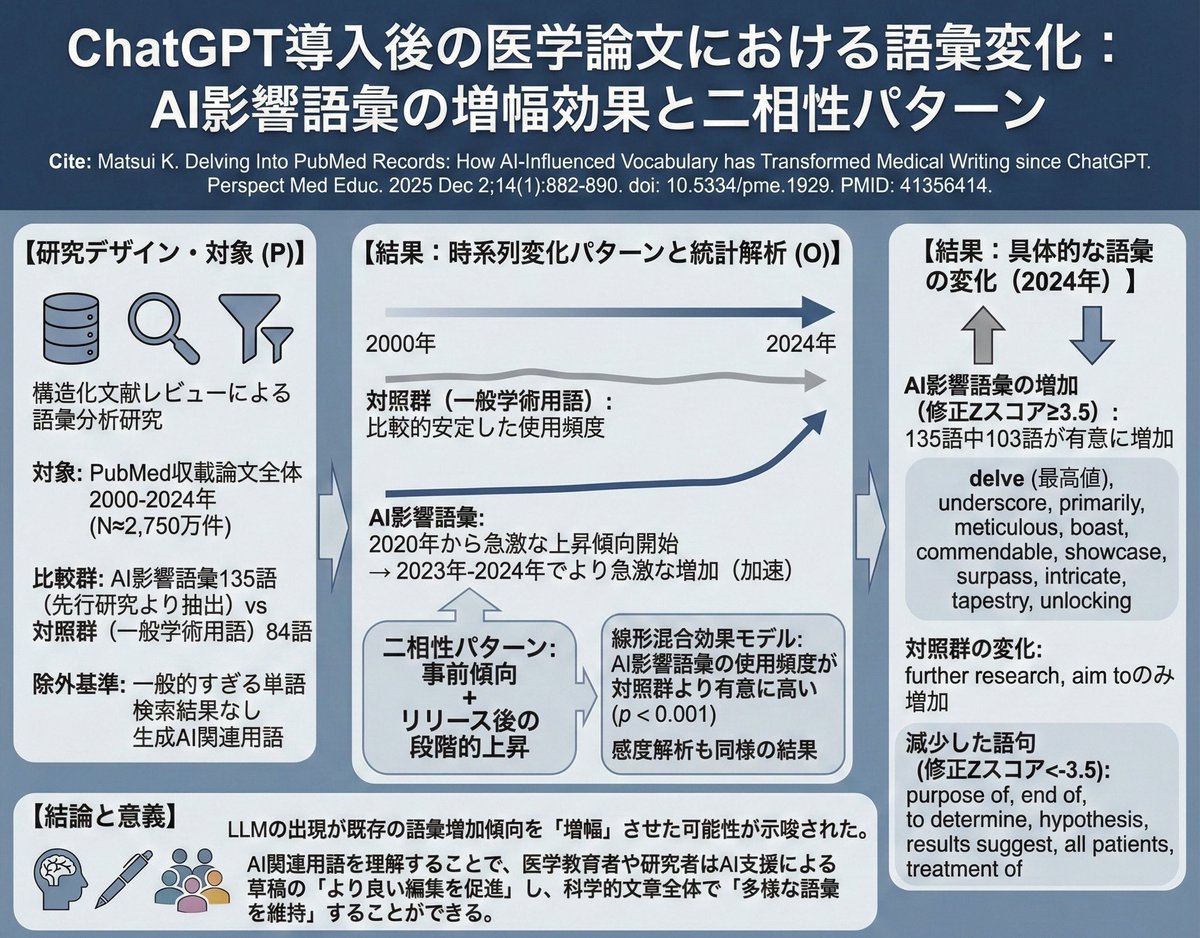

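After the release of ChatGPT in late 2022, the frequency of certain words and phrases in the medical literature increased. However, use of these terms had already been trending upward before 2022, suggesting that the emergence of LLMs may have amplified an existing trend. A lexical analysis study based on a structured literature review of 27.5 million records. Perspect Med Educ 2025 Dec. 2 https://t.co/FvvRTCSYdX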

📚 AI Native Daily Paper Digest - 2025-12-12 🌟 Follow @AINativeF for the latest insights on AI Native. Covering AI research papers from Hugging Face, featured in the image. 💡 Stay updated with the latest research trends and dive deep into the future of AI! 🚀 #AI #HuggingFace #AIPaper #AINative #AINF

Appendix: Today's AI research papers

1. T-pro 2.0: An Efficient Russian Hybrid-Reasoning Model and Playground
2. Long-horizon Reasoning Agent for Olympiad-Level Mathematical Problem Solving
3. Are We Ready for RL in Text-to-3D Generation? A Progressive Investigation
4. OPV: Outcome-based Process Verifier for Efficient Long Chain-of-Thought Verification
5. Achieving Olympia-Level Geometry Large Language Model Agent via Complexity Boosting Reinforcement Learning
6. MoCapAnything: Unified 3D Motion Capture for Arbitrary Skeletons from Monocular Videos
7. BEAVER: An Efficient Deterministic LLM Verifier
8. From Macro to Micro: Benchmarking Microscopic Spatial Intelligence on Molecules via Vision-Language Models
9. VQRAE: Representation Quantization Autoencoders for Multimodal Understanding, Generation and Reconstruction
10. Thinking with Images via Self-Calling Agent
11. Evaluating Gemini Robotics Policies in a Veo World Simulator
12. StereoSpace: Depth-Free Synthesis of Stereo Geometry via End-to-End Diffusion in a Canonical Space
13. Stronger Normalization-Free Transformers

@IsBeVerse That’s a retweet dumbass. I don’t know what RN Savior act you’re talking about but I do know that now you’re trying to be the savior when just the other day you were telling us how much you hate indigenous people. https://t.co/xQfWOuTApp

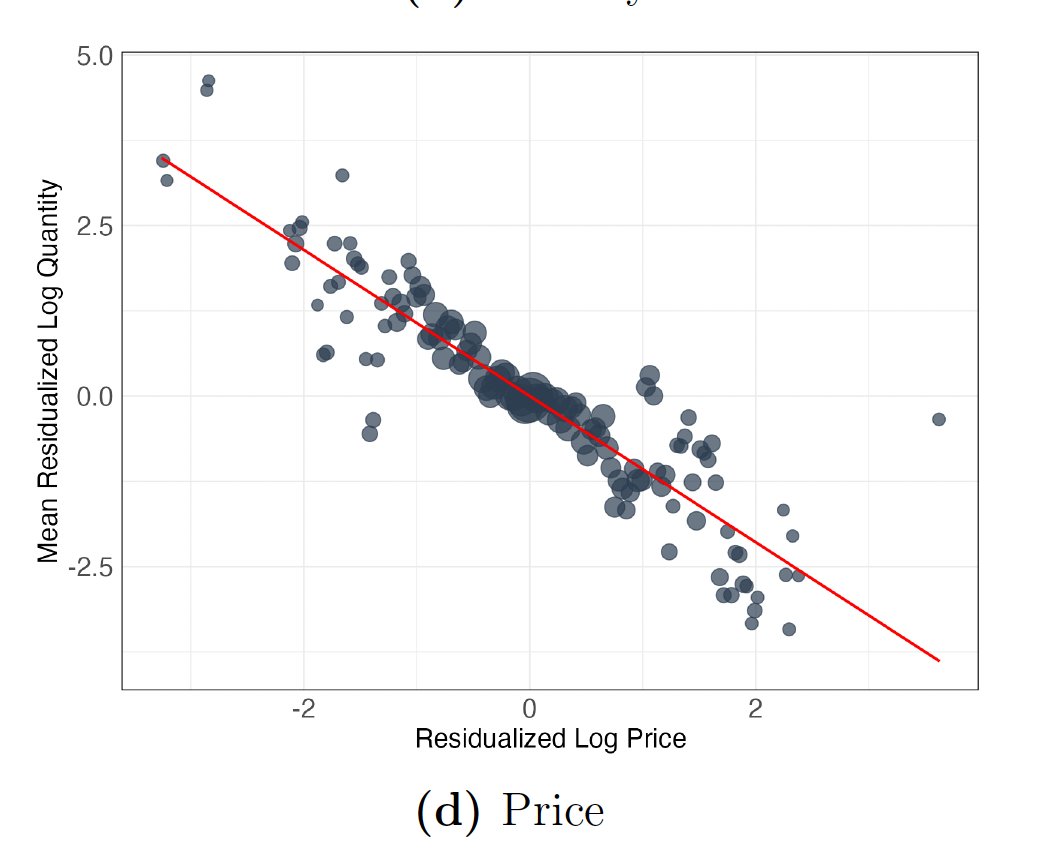

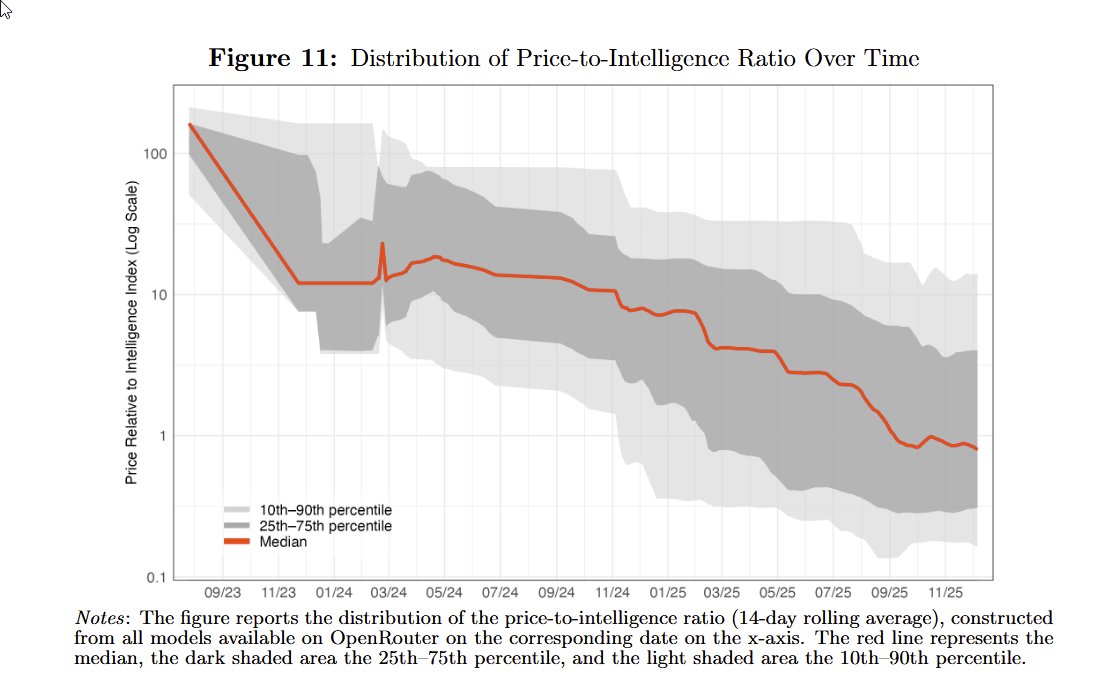

Lots of discussion on Jevons Paradox for AI: does cheaper AI lead to more total usage? New paper finds short-run elasticity ~1 (so no short-run paradox) but prices fell 1000x in two years & demand exploded. So Jevons happens over time, as firms gradually adopt AI at lower prices https://t.co/hKG6lWeuFd
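
A minimal sketch of the arithmetic behind this claim, assuming a constant-elasticity demand curve (an illustrative assumption, not taken from the paper) and reading the paradox as total spend rising when price falls. The function names are hypothetical. With elasticity exactly 1, spend stays flat as price drops; only elasticity above 1 makes spend grow.

```python
def quantity_demanded(price: float, elasticity: float, scale: float = 1.0) -> float:
    """Constant-elasticity demand (assumed form): Q = scale * price ** (-elasticity)."""
    return scale * price ** (-elasticity)


def total_spend(price: float, elasticity: float, scale: float = 1.0) -> float:
    """Total spend is price times quantity demanded at that price."""
    return price * quantity_demanded(price, elasticity, scale)


def spend_ratio_after_price_cut(cut_factor: float, elasticity: float) -> float:
    """New spend divided by old spend when price falls by cut_factor (e.g. 4 means 4x cheaper)."""
    old = total_spend(1.0, elasticity)
    new = total_spend(1.0 / cut_factor, elasticity)
    return new / old


if __name__ == "__main__":
    for e in (0.5, 1.0, 1.5):
        ratio = spend_ratio_after_price_cut(4.0, e)
        print(f"elasticity={e}: spend changes by a factor of {ratio:.2f}")
```

Under this assumed model the ratio equals `cut_factor ** (elasticity - 1)`, so the long-run pattern in the post (demand exploding as prices fell) would correspond to an effective long-run elasticity above 1.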

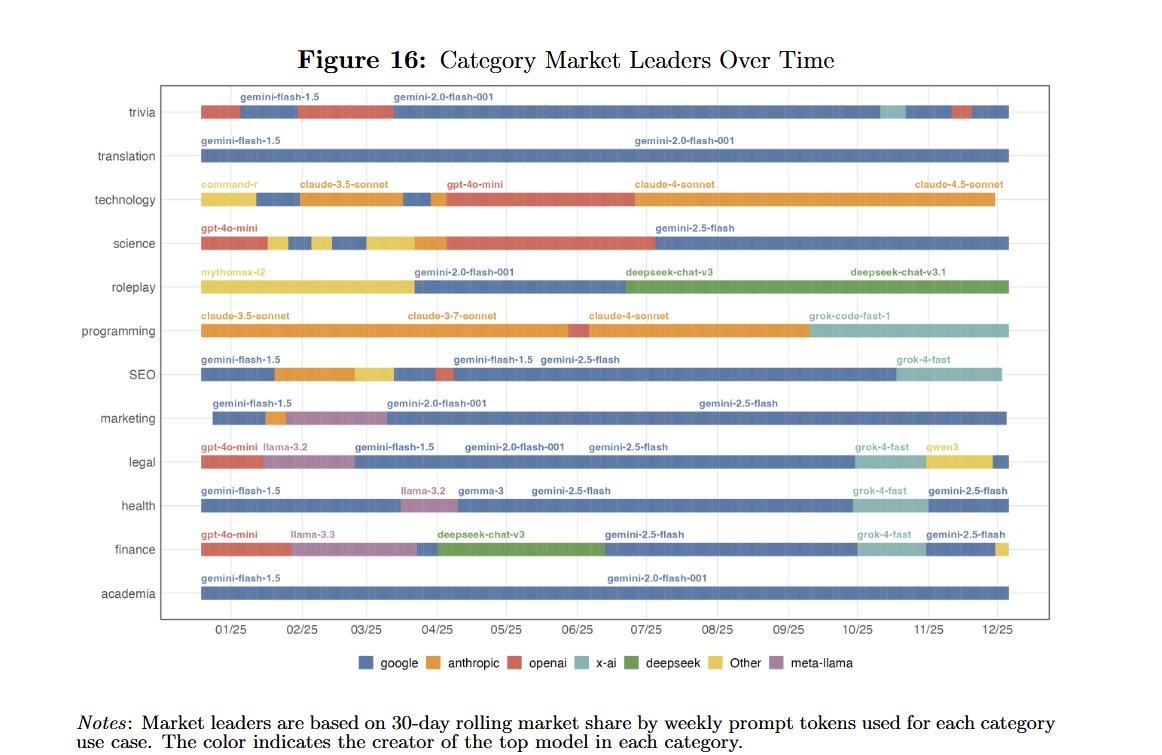

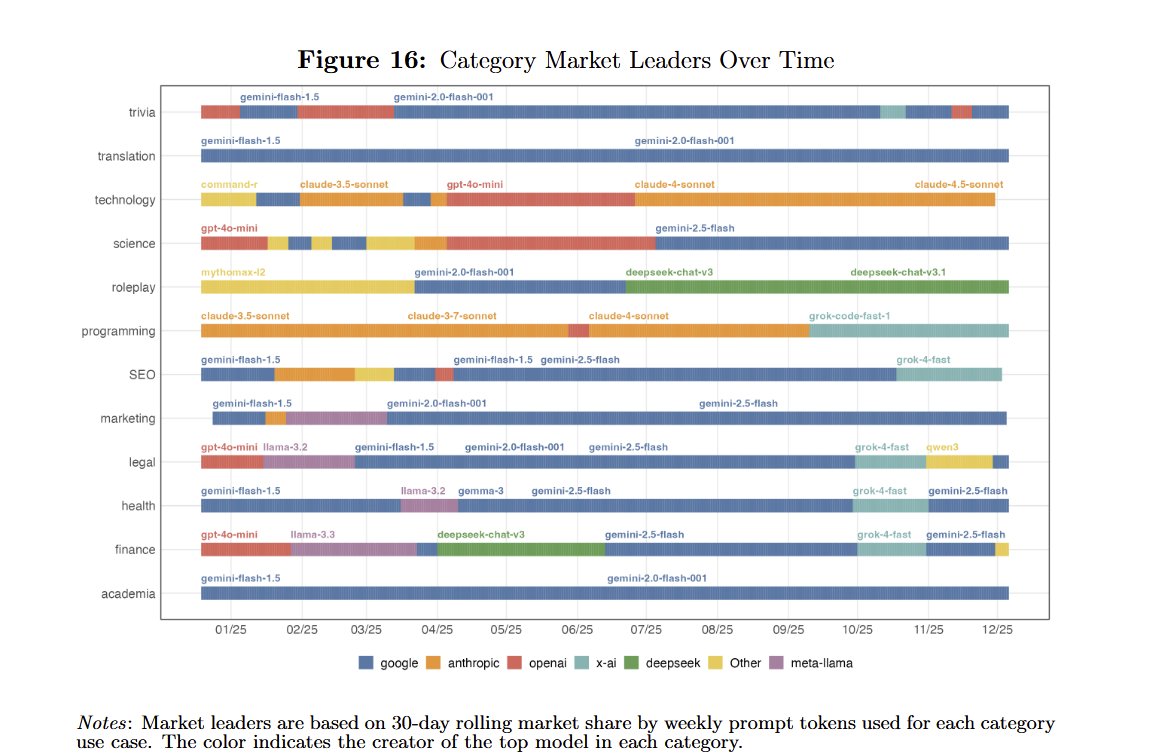

This was interesting, suggesting: 1) The market for AI is very dynamic, new models and providers switch leads often, and different leaders exist for different spaces 2) A lot of companies have not leveraged the power of very smart AIs outside of coding and tech, going with cheap https://t.co/zNkoEwJH4L

🏷️45,000 🧡🫧↔️ https://t.co/VbPcKaKHGG

I did a free ad for him and he wants to dash me one native wear, but I'd prefer to give it to one of my followers. Who needs native wear? https://t.co/qRjeM1CpsM

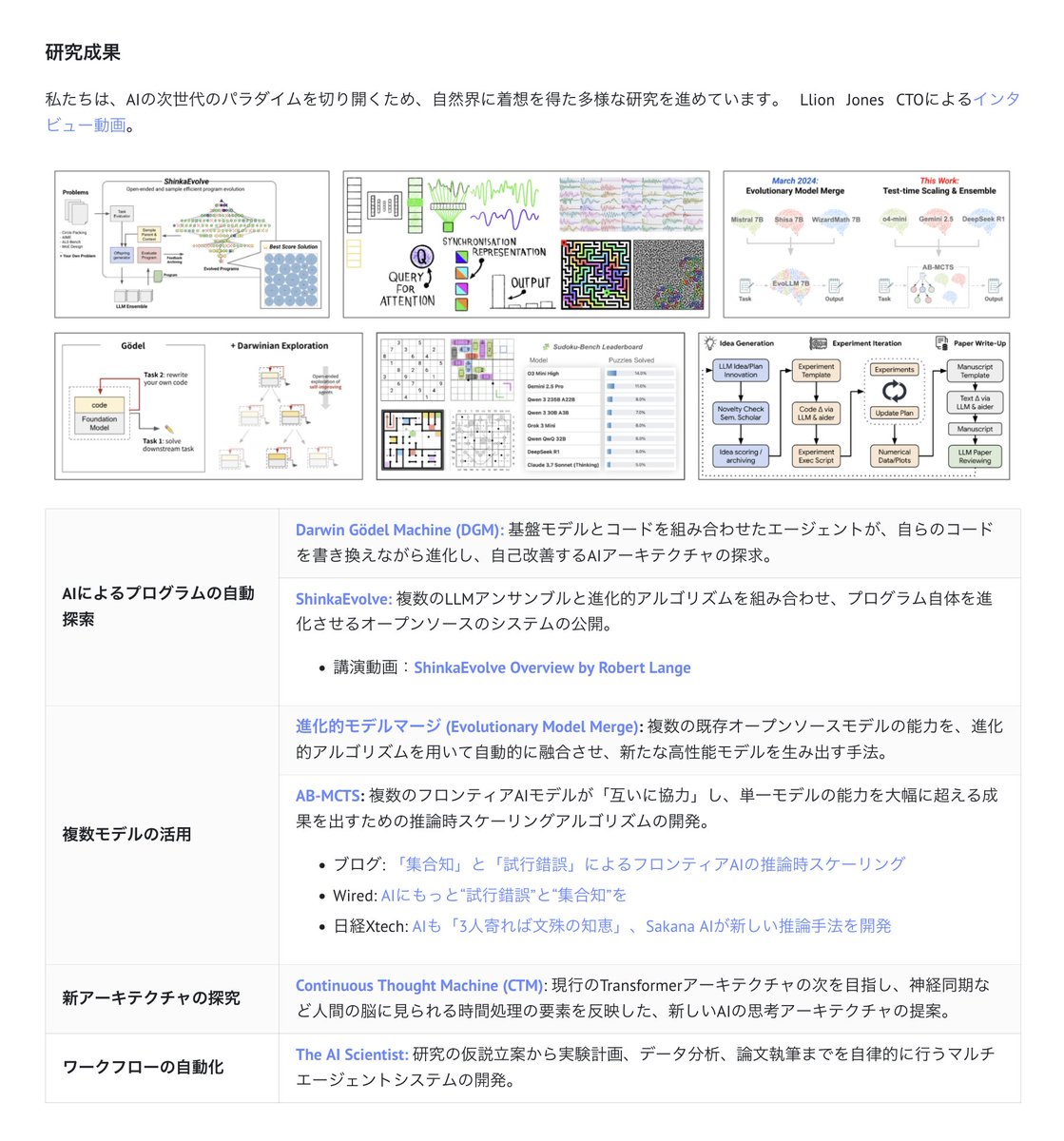

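Sakana AI is hiring Applied Research Engineers to take on the development of unexplored solutions using the world's most advanced autonomous agent technology. We look forward to having you join as a core member to further accelerate the real-world deployment of this technology 🚀 https://t.co/eQ7e0rIOmg (Full-time employees and student interns are both welcome ✨) https://t.co/jnpuUp0ajJ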

For more details about the Applied Team, please see this introduction. https://t.co/11hP67FI84 https://t.co/PxwqbnGjak

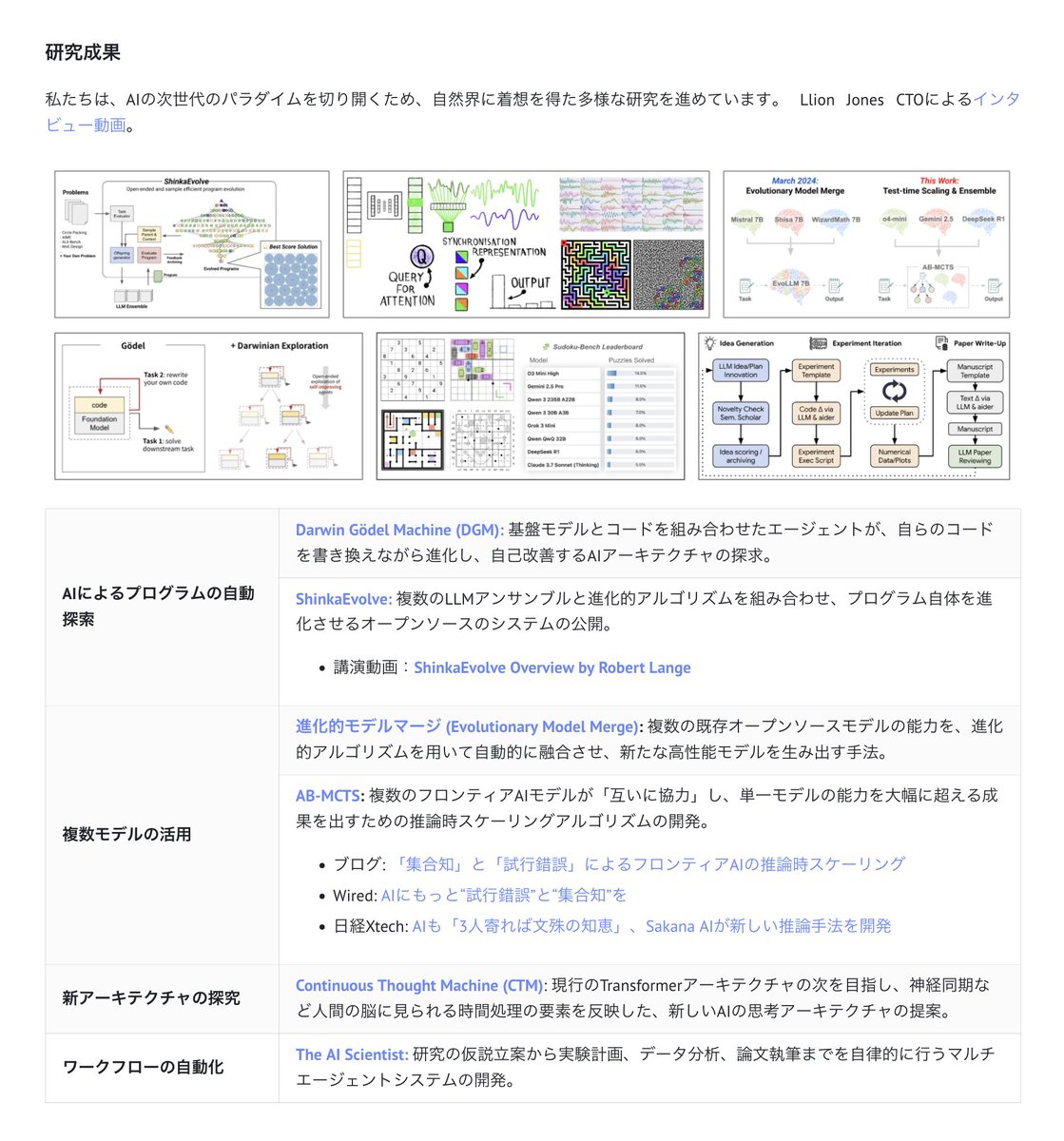

Analysis of the latest 1,000 posts in my AI Newsmaker’s list (the most followed 1,600 accounts here on X). Shows a lot of the value here on X that just goes by in an hour. Thanks @blevlabs for letting me use your cognitive AI to do these reports. Shows the hottest posts, and people in AI. R&D for the future of media. https://t.co/4tVEIegd3Q

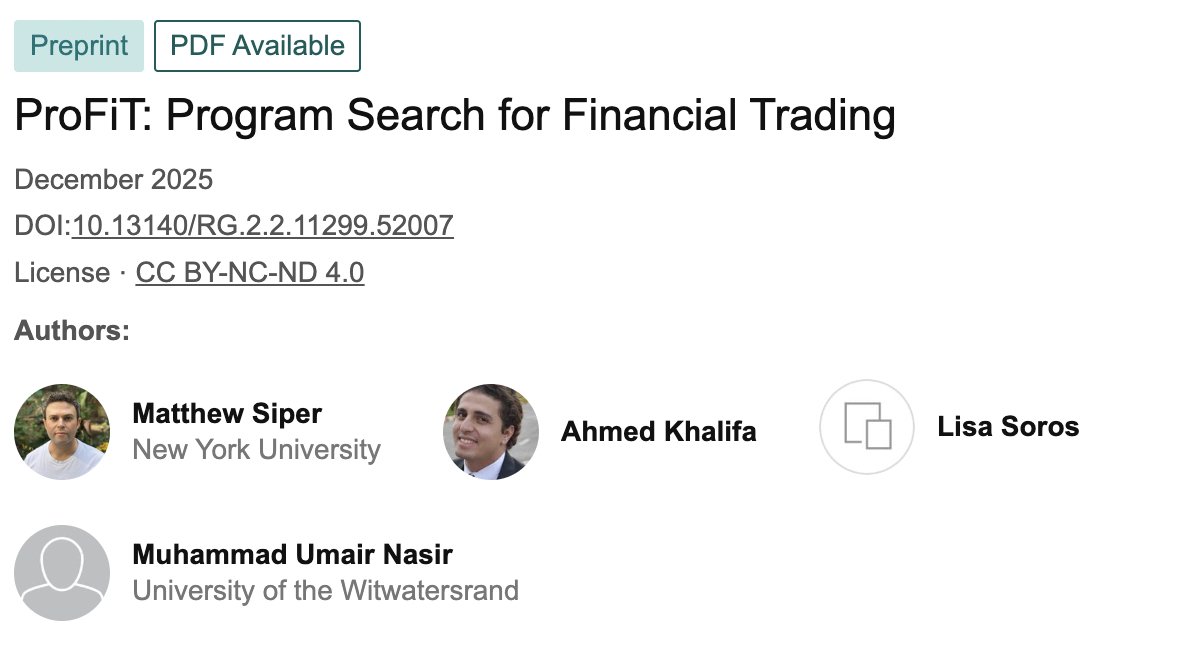

This is wild! There's an AI that literally rewrites its own trading code to beat the market. Not tuning parameters. Not learning patterns. Actually rewriting the Python functions that decide when to buy and sell. Let me explain this insanity:

Traditional trading bots work like this:

- Human codes strategy
- AI adjusts weights/parameters
- Strategy structure stays FIXED
- Market changes → bot breaks
- Human fixes it manually

This is exhausting and doesn't scale.

ProFiT (Program Search for Financial Trading) does something completely different. It treats trading strategies as living organisms that evolve. Each strategy is actual Python code. Not weights. Not parameters. CODE.

Here's the evolutionary loop:

1. Start with a basic strategy (say, MACD crossover)
2. LLM reads the code + performance report
3. LLM diagnoses weaknesses
4. LLM proposes improvements
5. New code gets backtested
6. If good → kept in population
7. Repeat forever

The genius part? "Semantic mutation". Traditional genetic programming randomly flips bits of code (often breaking it). ProFiT's LLM actually understands what the code does: "This strategy lacks volatility filters. Add ATR-based gating to reduce false signals." LOGICAL evolution.

And they don't keep just ONE best strategy. They maintain a POPULATION of all strategies that beat a minimum threshold. Why? Diversity prevents getting stuck in local optima. It's like keeping multiple species alive instead of just the "fittest" one. Quality-Diversity approach.

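
The loop described above can be sketched roughly as follows. This is a toy under stated assumptions, not the paper's implementation: the LLM step is replaced by a hypothetical stub (`stub_propose`) that returns canned rewrites, the backtest is a simplistic long-or-flat PnL sum, and parent selection is round-robin because the post does not say how parents are chosen. All names here are illustrative.

```python
from typing import Callable, List, Tuple

# A strategy is Python source that defines signal(prices) -> 1 (long) or 0 (flat).
SEED = """
def signal(prices):
    window = prices[-3:]
    return 1 if prices[-1] > sum(window) / len(window) else 0
"""

# Canned rewrites standing in for LLM proposals (hypothetical; one is deliberately broken).
CANNED_REWRITES = [
    "def signal(prices):\n    return 1 if prices[-1] > prices[-2] else 0\n",
    "def signal(prices):\n    return undefined_filter(prices)\n",
    "def signal(prices):\n    w = prices[-5:]\n    return 1 if prices[-1] > sum(w) / len(w) else 0\n",
]


def backtest(source: str, prices: List[float]) -> float:
    """Toy backtest: sum the next-step price change whenever the strategy is long."""
    namespace: dict = {}
    exec(source, namespace)  # turn the candidate source into a callable
    signal = namespace["signal"]
    pnl = 0.0
    for t in range(3, len(prices) - 1):
        pnl += signal(prices[: t + 1]) * (prices[t + 1] - prices[t])
    return pnl


def stub_propose(parent_source: str, report: str, generation: int) -> str:
    """Placeholder for the LLM step; a real system would send code and report to a model."""
    return CANNED_REWRITES[generation % len(CANNED_REWRITES)]


def evolve(
    seed: str,
    prices: List[float],
    propose: Callable[[str, str, int], str],
    generations: int,
    min_fitness: float,
) -> List[Tuple[str, float]]:
    """Keep every candidate whose backtest score meets min_fitness (the seed is always kept)."""
    population = [(seed, backtest(seed, prices))]
    for gen in range(generations):
        parent, parent_fit = population[gen % len(population)]  # assumed selection rule
        report = f"pnl={parent_fit:.2f}"
        child = propose(parent, report, gen)
        try:
            child_fit = backtest(child, prices)
        except Exception:
            continue  # discard candidates that do not run
        if child_fit >= min_fitness:
            population.append((child, child_fit))
    return population


if __name__ == "__main__":
    prices = [100.0, 101.0, 103.0, 102.0, 104.0, 107.0, 106.0, 108.0, 111.0, 110.0]
    for source, fitness in evolve(SEED, prices, stub_propose, generations=6, min_fitness=0.0):
        print(f"{fitness:+.2f}", source.strip().splitlines()[-1].strip())
```

Keeping every candidate above the threshold, rather than only the single best one, mirrors the population idea in the post; a fuller quality-diversity setup would also track how strategies differ from each other, which this sketch does not attempt.
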
Real results across 7 futures markets (E6, ES, Bitcoin, etc.):

- 📈 Beat Buy-and-Hold in 77% of cases
- 📈 Beat random strategies 100% of time
- 📈 +44% average return improvement over seed strategies
- 📈 +0.57 Sharpe ratio improvement

Statistically significant (p < 0.05 on Wilcoxon tests).

Let's look at one evolution path:

- Generation 0: Basic MACD crossover → Returns: -54% → 25 lines of code
- Generation 15: MACD + regime filter + ATR stops + volatility gates + debouncing → Returns: +0.77% → 90 lines of sophisticated logic

The LLM built that complexity.

How does this compare to prior work?

- 🔴 Reinforcement Learning: Optimizes weights, structure stays fixed
- 🔴 Classic GP: Random mutations, no reasoning
- 🔴 Codex/AlphaCode: One-shot generation, no iteration
- 🟢 ProFiT: Iterative, semantic, empirically grounded

It's a NEW paradigm.

Pain points this solves:

- ❌ Non-stationarity (markets change constantly) ✅ Code evolution adapts structure, not just params
- ❌ Black boxes you can't trust ✅ Human-readable Python you can inspect
- ❌ Constant human intervention ✅ Autonomous improvement loop

The validation methodology is RIGOROUS:

- 5-fold walk-forward cross-validation
- 2.5 years train, 6 months validation, 6 months test
- 10-day dormant windows to prevent lookahead bias
- Fixed transaction costs (0.2%)
- Multiple seed strategies tested

This isn't overfit garbage.

Inspiration comes from wild places:

- 🧬 Genetic Programming (Koza)
- 🤖 Gödel Machines (self-improving systems)
- 🎯 MAP-Elites (quality-diversity)
- 🧠 LLM code generation (Codex)

They mashed it all together and pointed it at financial markets.

Current limitation they acknowledge: Testing against FIXED historical data doesn't show how it adapts to real-time regime changes. They're working on that. (Imagine this running live, evolving strategies as the market shifts beneath it...)

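
To make the walk-forward setup above concrete, here is a rough sketch of how such folds could be laid out. The post gives only the window lengths, so several details are assumptions: windows are measured in calendar days (913, 182, 182), a 10-day dormant gap sits between train and validation and again between validation and test, and each fold starts one test window later than the previous one. `Fold` and `walk_forward_folds` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Tuple

Window = Tuple[date, date]  # half-open interval: [start, end)


@dataclass(frozen=True)
class Fold:
    train: Window
    validation: Window
    test: Window


def walk_forward_folds(
    start: date,
    n_folds: int = 5,
    train_days: int = 913,   # roughly 2.5 years (assumed calendar days)
    val_days: int = 182,     # roughly 6 months
    test_days: int = 182,    # roughly 6 months
    gap_days: int = 10,      # dormant window between segments
    step_days: int = 182,    # assumed: each fold shifts by one test window
) -> List[Fold]:
    """Build successive train/validation/test windows that roll forward in time."""
    folds = []
    for k in range(n_folds):
        train_start = start + timedelta(days=k * step_days)
        train_end = train_start + timedelta(days=train_days)
        val_start = train_end + timedelta(days=gap_days)
        val_end = val_start + timedelta(days=val_days)
        test_start = val_end + timedelta(days=gap_days)
        test_end = test_start + timedelta(days=test_days)
        folds.append(Fold((train_start, train_end), (val_start, val_end), (test_start, test_end)))
    return folds


if __name__ == "__main__":
    for i, fold in enumerate(walk_forward_folds(date(2015, 1, 1))):
        print(i, fold.train, fold.validation, fold.test)
```

The gaps mean no observation within 10 days of a boundary is used on either side, which is one common way to keep indicators with lookback windows from leaking information across segments.
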
Future directions they hint at:

- Evolving the prompts themselves (meta-optimization)
- Cross-asset strategy evolution
- Multi-parent recombination between strategies
- Real-time deployment with continuous adaptation

This is just the beginning.

Bottom line: We're shifting from "training AI to predict markets" to "AI that rewrites how it thinks about markets." Not parameter learning. Strategy evolution.

The paper: "ProFiT: Program Search for Financial Trading" by Siper et al. Wild times ahead. 🚀

Billkin: I'll take the penguin, penguin, penguin #RerouteEcoreviveHarmony #bbillkin https://t.co/a2RnzWlWA5

@PalmerReport How they function in the world is beyond me. https://t.co/dXxwsEVX2v

#NativeAmerican #NativeTwitter #native #NativeCamp https://t.co/OA3GEvLevg

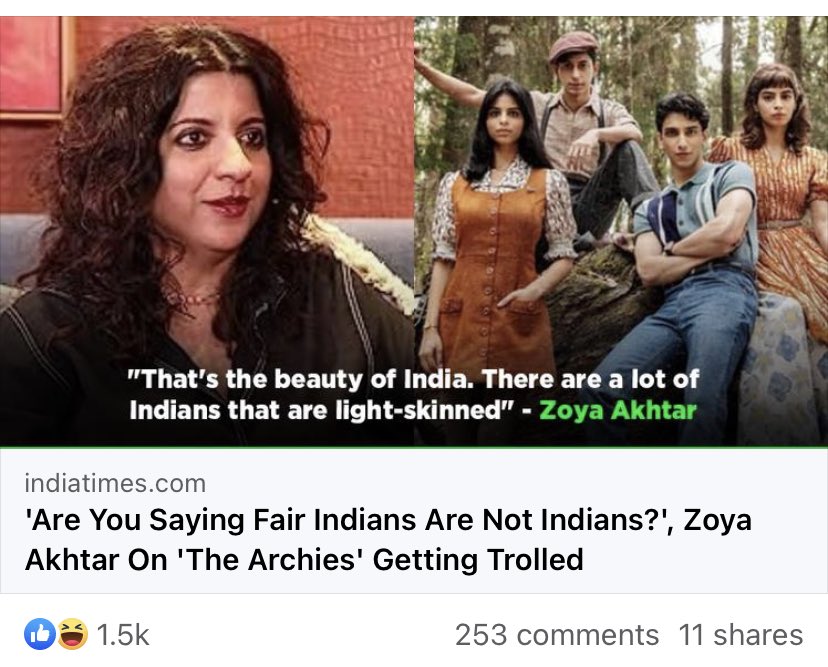

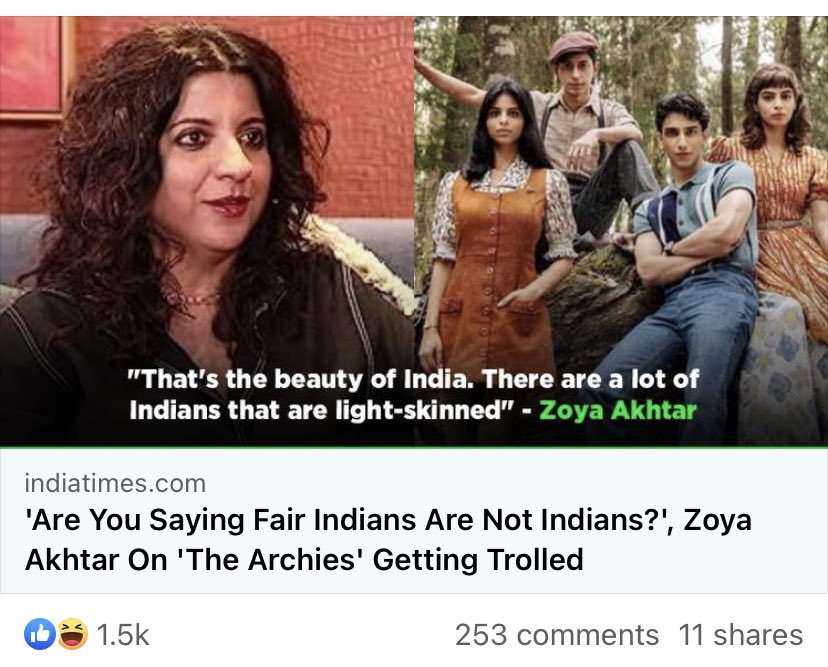

When I tell you I cried for my oppressed fair-skinned brethren and their struggle for resources, opportunity and dignity… https://t.co/H2wjwL6TWp

[Orders now open] Illustration by すめらぎ琥珀: 「ラヴ・スロオト」. Prototype sculpt: BINDing https://t.co/ot6bEDoDmr #バインディング #エロホビ https://t.co/jzudpRRRJ8

[Orders now open] Illustration by 左藤空気: 「皇木 新奈」. Prototype sculpt: マッカラン24 https://t.co/ot6bEDoDmr #フロッグ #エロホビ https://t.co/bc4NIpPKFf

Gaffa. Vs. Zvaffa https://t.co/n6KB5hOfHG

$NAORIS TP2: 0.027 ✅ $ASTER $ICP $ZK $ZEC $DASH $XPL $PENGU $TAO $COAI $BTC $DGB $VIRTUAL $HYPE $SOL $PUMP $ETH $TRUMP $ROSE $DOGE $MYX $TRON $ZEC $TRX Click Link Below ⬇️ https://t.co/Gm1ZVgCNGO https://t.co/Wu9dIEJyXb

$NAORIS TP2: 0.027 ✅ $ASTER $ICP $ZK $ZEC $DASH $XPL $PENGU $TAO $COAI $BTC $DGB $VIRTUAL $HYPE $SOL $PUMP $ETH $TRUMP $ROSE $DOGE $MYX $TRON $ZEC $TRX Click Link Below ⬇️ https://t.co/KDRybHZQ8S https://t.co/hksrtn2BJ9

⠀:¨ ·.· ¨:⠀ (:::[♡]:::) ⠀ `· . info in strawpage ꜝ ྐ✚ ₊⠀Real shiro & stein 𓏵 https://t.co/aQbG7K2HQP #moothunt #promotwt #introtwt MOOT ME ⿻ #shtwt #edtwt #goretwt #988twt #kleptotwt #yaoitwt #alctwt #nictwt #problematictwt #shedtwt ♡ or ↻ for moots ! https://t.co/QfIH0Lrt24

$NAORIS TP2: 0.027 ✅ $ASTER $ICP $ZK $ZEC $DASH $XPL $PENGU $TAO $COAI $BTC $DGB $VIRTUAL $HYPE $SOL $PUMP $ETH $TRUMP $ROSE $DOGE $MYX $TRON $ZEC $TRX Click Link Below ⬇️ https://t.co/ohRxUHr7ed https://t.co/jtseaHZWab

Looking for your next AI engineering role? A bunch of VC-backed startups are hiring founding engineers in AI and backend systems. Don't miss out on some great opportunities and your next big challenge. Apply now! https://t.co/1wqJdsRLpS

I've never drawn Tessa before so... I hope this works🩶✨️ #MDswappedAU #tessaelliot https://t.co/geVeVM4sOy

What you see on Temu vs. what arrives from Temu https://t.co/kmr2eWyBiK