Your curated collection of saved posts and media

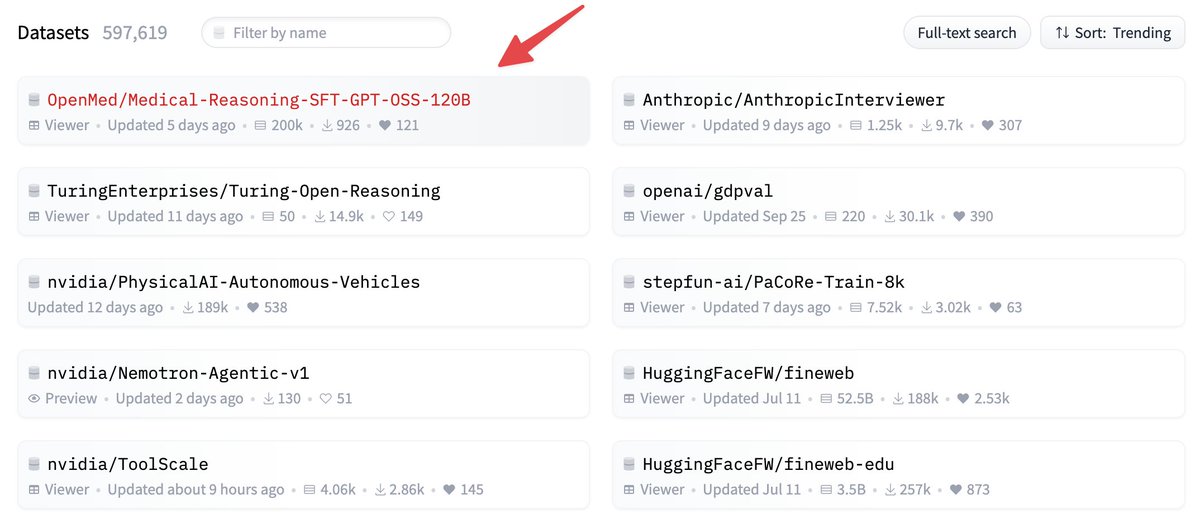

and it finally happened!!! 🔥 we are trending number one people! 🥇 thank you all for your likes, downloads, and support! ❤️ https://t.co/kzaL67IPEk

absolutely incredible fb marketplace find today https://t.co/Ri0n7K63e8

@Srirachachau got one of these that Christmas and let me tell you I found it a lot harder to use for pranks and deceptions than Kevin did https://t.co/qrXzwUYDLn

“dudes rock” doesn’t begin to cover it https://t.co/4eojSjDSAs

Academics are sounding the alarm about a growing crisis in scholarship as we know it: AI-generated citations of nonexistent papers that have infested real journals. Despite being fake, the sources are widely assumed to be authentic the more they appear in published literature. https://t.co/MeroDdEHt2

Story: https://t.co/PrxGcqi0Ni

MELANIA, the film, exclusively in theaters worldwide on January 30th, 2026. https://t.co/n2kloQ4JwW

I'm your Christmas party DJ and I'm pretty bad https://t.co/XvCfahOiSD

GitHub Community Discussion: https://t.co/U49FuFBaRa

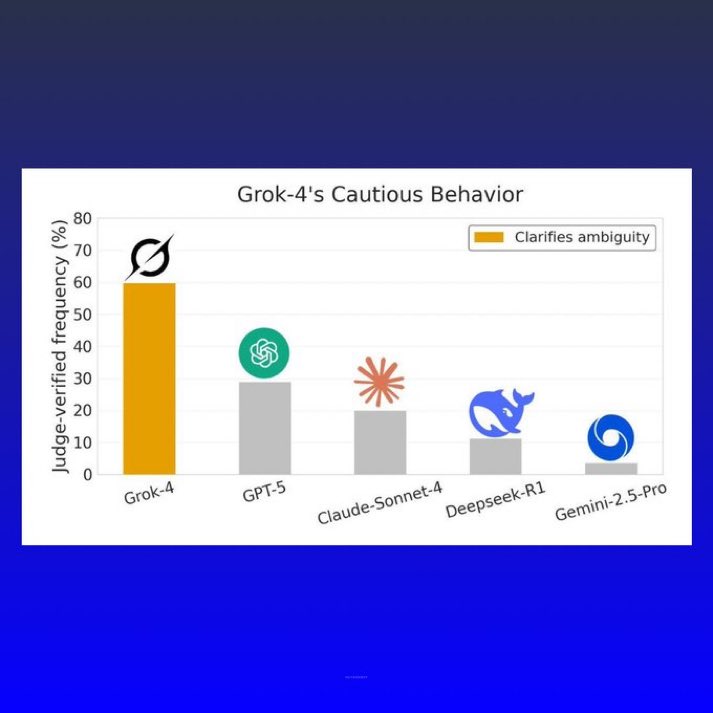

BREAKING: Grok-4 outperforms top AI models in truth-seeking and cautious reasoning https://t.co/4p0ib7WePH

There was no federal income tax for the first 137 years America was a country. Everything changed in 1913 with The Federal Reserve Act & 16th Amendment (Income Tax). America’s wealth was built on productivity, and stolen by usury. https://t.co/xtEHiodjY2

In this demo, you’ll see Gemini 3 Flash’s frontier-level reasoning and multimodal capabilities on display. The model is able to simultaneously conduct complex geometric calculations while processing complex inputs (video and image). You can play around with the slingshot in @GoogleAIStudio here and share your favorite examples below: https://t.co/2EqWhXlyP4

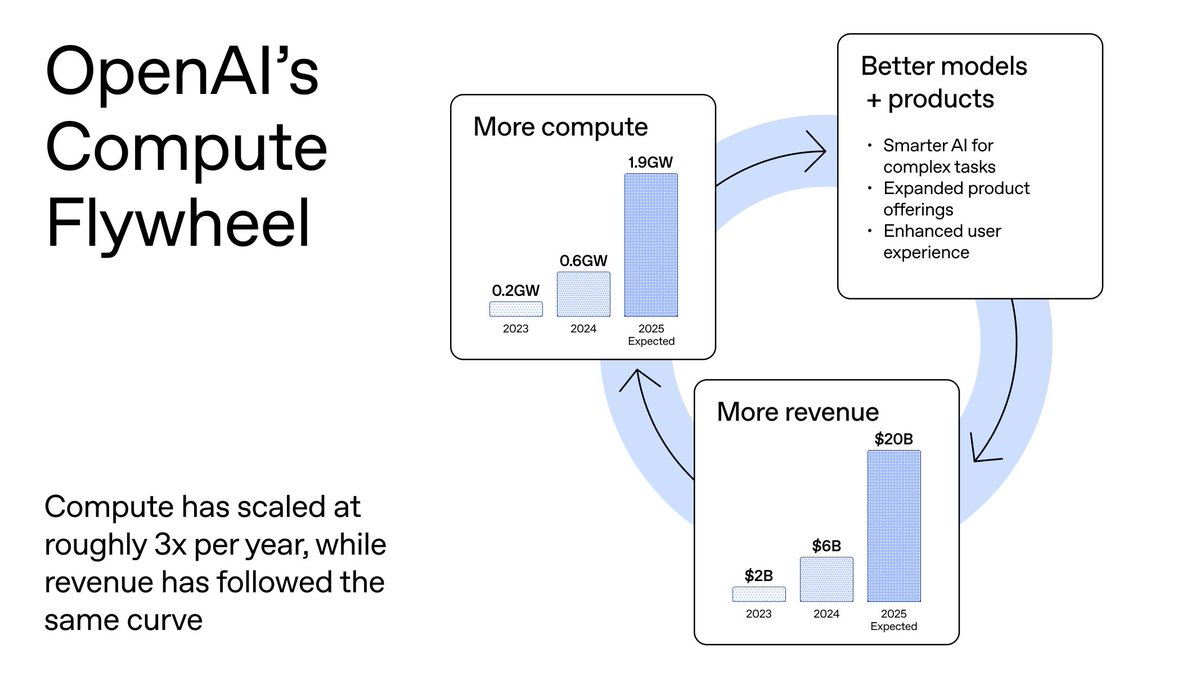

Compute fuels progress. https://t.co/ePm7pmpb8Q

Beyond image generation, we know people want more from AI like healthcare improvements and scientific discovery. Compute is how we get it. If America doesn't build it, others will. https://t.co/6VMtfCgr5Q

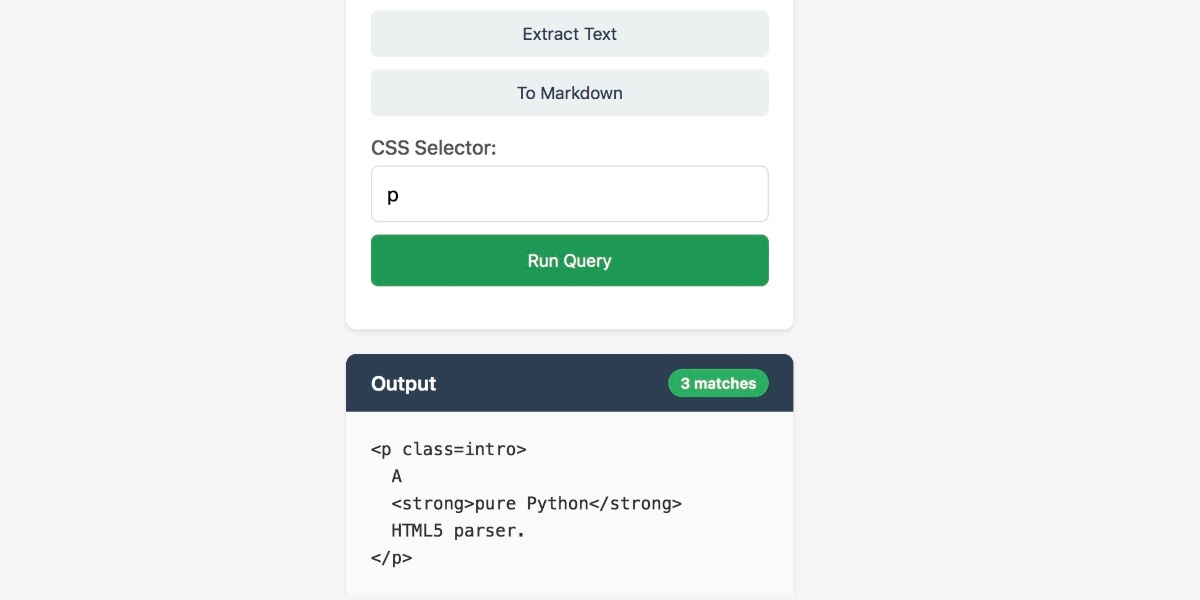

JustHTML by @EmilStenstrom is a new Python library (no dependencies) that parses HTML according to the HTML5 specification and passes the 9,200-test html5lib-tests suite. It's 3,000 lines of code, mostly written by coding agents over a couple of months. https://t.co/raGQ88tlTg

“Respect for the environment, and respect for what was naturally occurring in nature: that was the bedrock of all original peoples. Harmony, coexistence, not conquest and conquer.” #NativeTwitter 🙏🏾🌎✌🏾 https://t.co/SZ854JGVOJ

We live in a world where everything is connected. We can no longer think in terms of us and them when it comes to the consequences of the way we live. Today it's all about WE. ~ G Braden, Cherokee. #INDIGENOUS #NativeAmericans https://t.co/QH60KACSHE

"Nature is never a place to get to, it is around us always, it is our home." ~ Lakota https://t.co/dB4gG4M16d

“Our challenge isn't so much to teach children about the natural world, but to find ways to sustain the instinctive connections they already carry”. ~ Terry. K https://t.co/8V9dlsq3Dh

Bring new robot testing environments to life with @theworldlabs and Isaac Sim. 🤖 If you can describe a world 🌎, you can start testing in it the same day. Learn how to:

1. Export scenes from World Labs' Marble as Gaussian splats
2. Convert to USD using @nvidiaomniverse NuRec
3. Import into NVIDIA Isaac Sim
4. Add a robot and run the simulation

Read the guide ➡️ https://t.co/0kCSFIxhVC #SIGGRAPHAsia2025

Researchers are using Marble to generate simulation-ready robotics environments (scenes + collider meshes) then bring them into @NVIDIARobotics Isaac Sim for training + evaluation without any manual environment setup. Case Study: https://t.co/PzvcMkl2JI https://t.co/YVlDsQHd0t

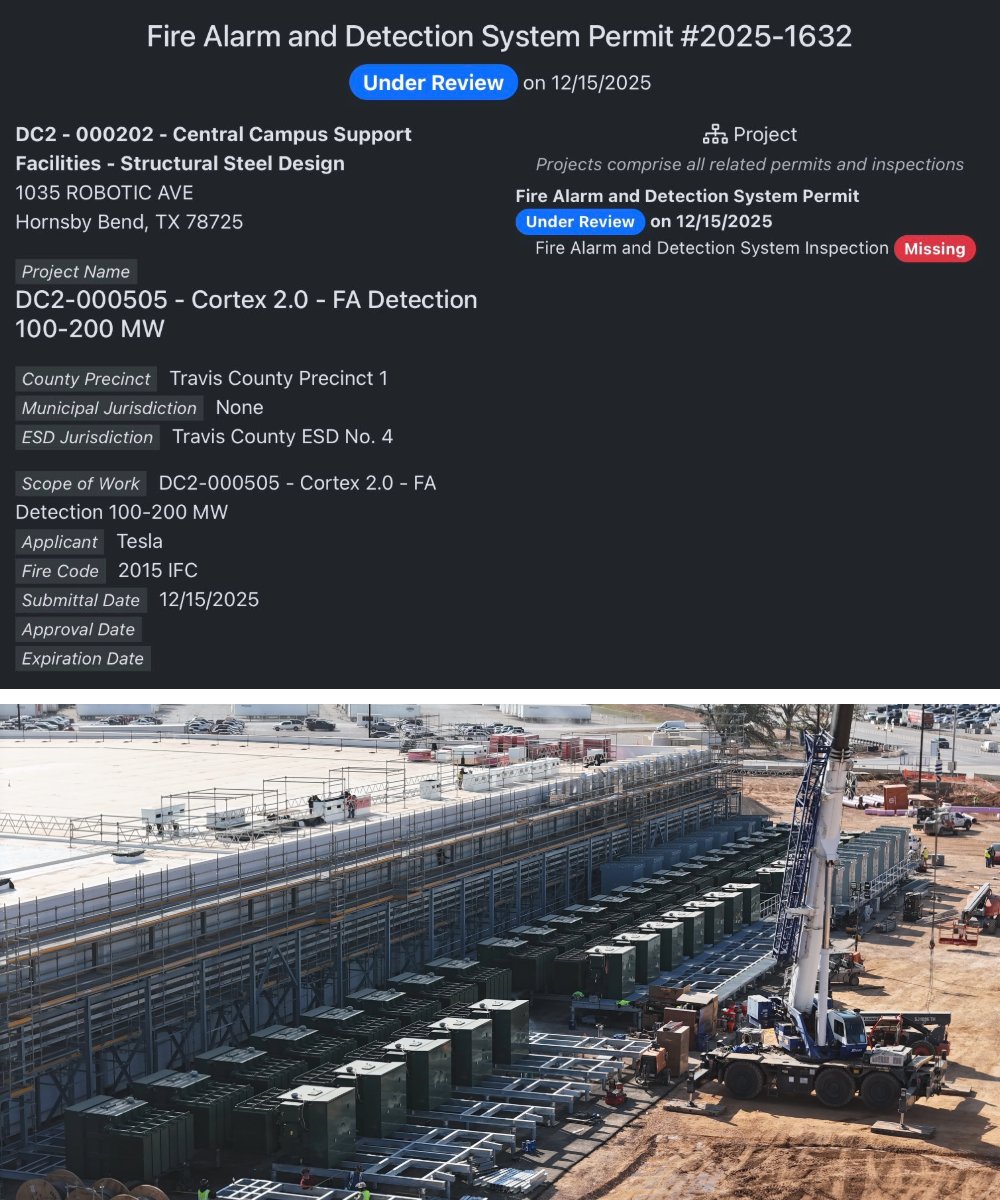

🚨🇺🇸 TESLA CONFIRMS 200 MW DATACENTER AT GIGA TEXAS - ENTIRE FACILITY TRAINS OPTIMUS ROBOTS Fire alarm permit confirms Tesla's Cortex 2.0 datacenter pulls 200 megawatts. That's enough power to run a small city. Instead it's training humanoid robots. Construction's happening now at Giga Texas - steel structure standing, roof half complete, operational late 2025. The facility runs thousands of Nvidia GPUs processing video training for Optimus movement, navigation, manipulation. Same AI platform that runs Tesla's Full Self-Driving. Elon's bigger plan: bring a terawatt of compute online. That's equivalent to all electrical power produced in America. For context, his xAI Colossus supercomputer already runs 200,000 Nvidia GPUs. Cortex 2.0 likely matches or exceeds that. The business model mirrors Tesla's FSD subscriptions - sell the robot, charge for AI updates. Market potential per Elon: $25 trillion. Tesla's targeting 1,000 Optimus units for internal use in 2025, external sales by 2026. Here's why 200 MW matters: robotics companies have talked about humanoid robots for decades. Tesla's building infrastructure that rivals nations' power grids to actually train them at scale. Not demos. Production runs. Cortex 2.0 isn't speculation anymore. It's steel and concrete going up in Austin right now. Permit dated December 17. Source: AI News Detail, Giga Texas permits, @tesla, @elon, @Tesla_Optimus

🎥 Introducing SAGE, an agentic system for long video reasoning on entertainment videos—sports, vlogs, & more. It learns when to skim, zoom in, & answer questions directly. On our SAGE-Bench eval, SAGE with a Molmo 2 (8B)-based orchestrator lifts accuracy from 61.8% → 66.1%. 🧵 https://t.co/JkGlw3Ad1Z

Agent demos often fail for reasons that are hard to see: unclear tool traces, silent failures, and changes that improve one behavior but break another. Our new course with @Nvidia shows how to use their NeMo Agent Toolkit to surface these issues with OpenTelemetry tracing, run evals that expose brittle reasoning, and deploy workflows with authentication and rate limiting so they behave consistently in real environments. Taught by Brian McBrayer (@Pr_Brian). Enroll now in Nvidia's NeMo Agent Toolkit: Making Agents Reliable: https://t.co/FvQvUuj5lE

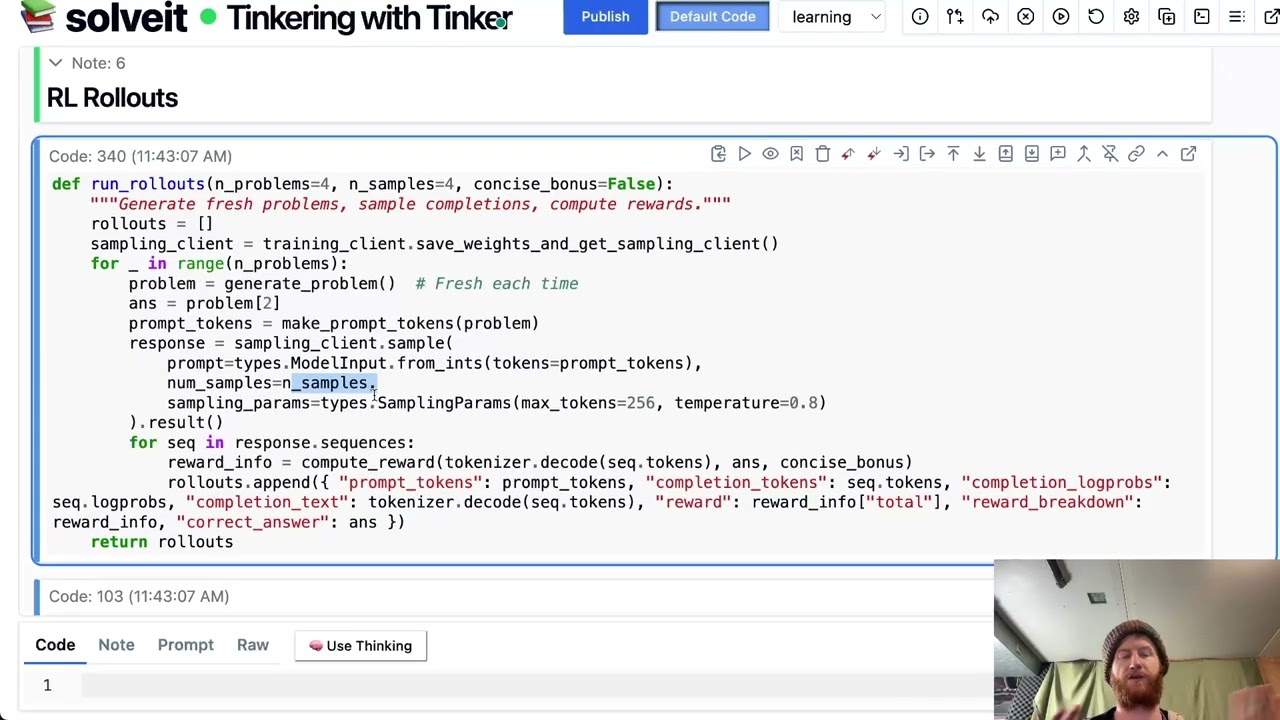

OK I had to record a quick video and share a dialog showing my first few tests: https://t.co/800pSOmNmD Dialog: https://t.co/Vev8lHDndn In the video, I show how easy it can be to train a model on a custom task with your own reward function. LMK what I should try next :)

First impressions of Tinker: I can tinker with LLMs again! Really liking it so far - you can focus on the data and *what* you want to DO, not the stress of distributed training, model loading, arcane incantations, implementation differences, library bugs... Amazing work @thinkyma

See how @intelligenceco built Cofounder, an AI chief of staff that turns business documents into agent-ready context at scale. 📄 LlamaParse handles continuous ingestion from @gmail, @SlackHQ, @linear, @notionhq, and @github every 30 minutes - processing PDFs, images, and attachments with agentic OCR 🤖 Two-stage retrieval system combines vector similarity with agent reasoning to filter by time, source, and ownership across multiple business tools 💰 Achieved lower costs and latency compared to managed RAG solutions while avoiding weeks of custom parser development ⚡ Freed engineering time to focus on core differentiator - building agents that can act - instead of document infrastructure "It probably would've taken us a month or more to build a worse document parser ourselves. LlamaParse let us focus on the agents instead of reinventing infrastructure." Read the full case study: https://t.co/1C2qyOAKaz
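The two-stage retrieval idea in the post above can be illustrated with a minimal sketch. This is not Cofounder's or LlamaParse's code: the `Doc` type, the toy two-dimensional embeddings, and every function name here are hypothetical, and the second stage uses plain metadata rules as a stand-in for the agent reasoning the post describes.

```python
from dataclasses import dataclass
from datetime import datetime
from math import sqrt


@dataclass
class Doc:
    # Hypothetical record shape; real systems would store much more.
    text: str
    embedding: list[float]
    source: str
    owner: str
    created: datetime


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def stage_one(query_vec, docs, k):
    """Stage 1: keep the k documents most similar to the query vector."""
    ranked = sorted(docs, key=lambda d: cosine(query_vec, d.embedding), reverse=True)
    return ranked[:k]


def stage_two(candidates, *, source=None, owner=None, since=None):
    """Stage 2: filter candidates by source, owner, and time.

    Assumption: simple rules replace the agent reasoning step here.
    """
    kept = []
    for doc in candidates:
        if source is not None and doc.source != source:
            continue
        if owner is not None and doc.owner != owner:
            continue
        if since is not None and doc.created < since:
            continue
        kept.append(doc)
    return kept


def retrieve(query_vec, docs, k=3, **filters):
    """Run both stages and return the surviving documents in ranked order."""
    return stage_two(stage_one(query_vec, docs, k), **filters)


# Toy data, invented for illustration only.
DOCS = [
    Doc("Q3 roadmap thread", [0.9, 0.1], "slack", "ana", datetime(2025, 11, 1)),
    Doc("Old roadmap PDF", [0.8, 0.2], "gmail", "ana", datetime(2024, 1, 5)),
    Doc("Lunch menu", [0.0, 1.0], "slack", "bo", datetime(2025, 11, 2)),
    Doc("Roadmap issue", [0.7, 0.3], "linear", "bo", datetime(2025, 10, 20)),
]

recent = retrieve([1.0, 0.0], DOCS, k=3, since=datetime(2025, 1, 1))
print([d.text for d in recent])
```

Filtering after the similarity pass keeps the second stage cheap, since it only inspects the top `k` candidates rather than the whole corpus.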

On the latest Unsupervised Learning, I sat down with @echen. Edwin is the founder and CEO of @HelloSurgeAI, the >$1B revenue infrastructure company behind nearly every major frontier model. Some favorite parts:

- Why benchmarks make models worse
- Why the model companies are diverging as they optimize for different objectives
- The four rare qualities that make elite AI evaluators
- The future of the model landscape
- Why every company should eventually train their own models

Listen here: YouTube: https://t.co/FrCSVWh1v1 Spotify: https://t.co/zgS8MQlYJ5 Apple: https://t.co/qUkHXu7kee

The pattern we keep seeing: Easy metrics → hard problems later. Hard measurements → real progress. The labs winning are the ones willing to do the difficult, expensive work of measuring what actually matters. Full conversation: YouTube - https://t.co/Bv6nvbt5kk Spotify - https://t.co/eL6a3HG8wb Apple - https://t.co/9e7awbFfde