Your curated collection of saved posts and media

Today, we released Lyra 2.0 from NVIDIA Research, a framework for generating persistent, explorable 3D worlds at scale. Generating large-scale, complex environments is difficult for AI models. Current models often “forget” what spaces look like and lose track of movement over time, causing objects to shift, blur, or appear inconsistent. This prevents them from creating the reliable 3D environments required for downstream simulations. Lyra 2.0 solves these issues by: ✅ Maintaining per-frame 3D geometry to retrieve past frames and establish spatial correspondences ✅ Using self-augmented training to correct its own temporal drifting. Lyra 2.0 turns an image into a 3D world you can walk through, look back, and drop a robot into for real-time rendering, simulation, and immersive applications. ➡️ Learn more: https://t.co/ROR7miJeCU 📄 Read the paper: https://t.co/1osU9EGjGD
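
A rough illustration of "retrieving past frames" from a per-frame memory: the sketch below is not Lyra 2.0's method. It assumes each stored frame keeps only a hypothetical camera position and unit viewing direction (`FrameRecord`), and it picks past frames whose cameras are close to, and looking roughly the same way as, the new viewpoint, as a cheap proxy for spatial overlap. The thresholds and the scoring heuristic are assumptions.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]

@dataclass
class FrameRecord:
    # Hypothetical per-frame memory entry; a real system would also keep depth/geometry.
    index: int
    position: Vec3   # camera center in world coordinates
    direction: Vec3  # unit-length viewing direction

def retrieve_frames(memory: list[FrameRecord], position: Vec3, direction: Vec3,
                    max_dist: float = 2.0, min_cos: float = 0.5, k: int = 3) -> list[int]:
    """Return indices of up to k past frames whose cameras are near `position` and face a similar way."""
    scored = []
    for frame in memory:
        dist = math.dist(frame.position, position)
        cos = sum(a * b for a, b in zip(frame.direction, direction))  # cosine, since both are unit vectors
        if dist <= max_dist and cos >= min_cos:
            # Heuristic score (lower is better): closer cameras with more aligned views rank first.
            scored.append((dist - cos, frame.index))
    scored.sort()
    return [index for _, index in scored[:k]]

memory = [
    FrameRecord(0, (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)),
    FrameRecord(1, (5.0, 0.0, 0.0), (0.0, 0.0, 1.0)),   # too far away
    FrameRecord(2, (0.5, 0.0, 0.0), (0.0, 0.0, -1.0)),  # looking the opposite way
    FrameRecord(3, (0.2, 0.0, 0.0), (0.0, 0.0, 1.0)),
]
print(retrieve_frames(memory, (0.0, 0.0, 0.5), (0.0, 0.0, 1.0)))  # [0, 3]
```

The retrieved frames are the ones a generator could condition on when the camera "looks back", which is what keeps revisited regions consistent.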

Introducing Gemini on Mac. It’s the first time we’re bringing the @Geminiapp to desktop. The team built this initial release with @Antigravity, and it went from an idea to a native Swift app prototype in a few days. More features on the way! https://t.co/YRy0Pqq6zo

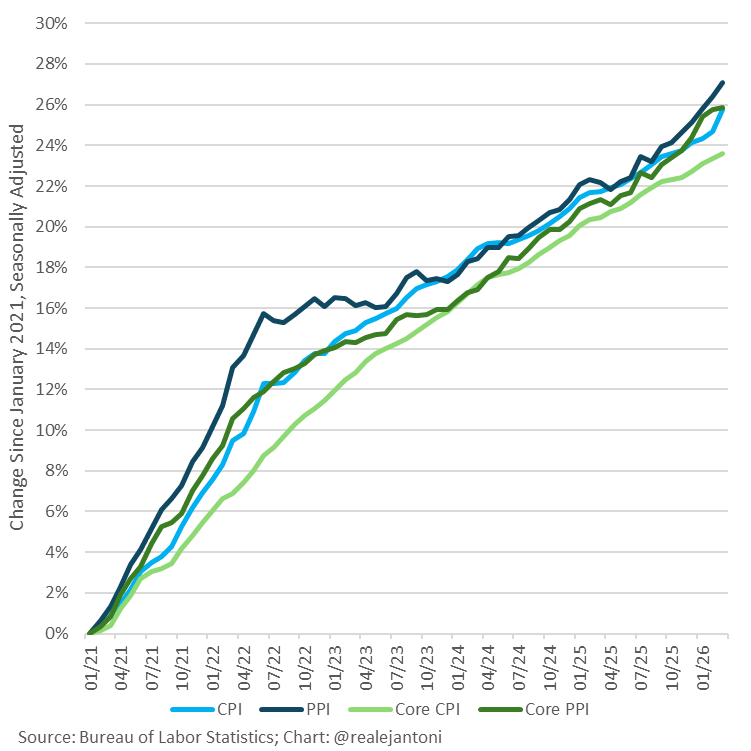

Cumulative inflation since Jan '21 is between 23.6% and 27.1%, depending on which index we're using: https://t.co/dKXzuV0SiJ
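
Cumulative inflation is the ratio of a price index at the end of a period to its value at the start, minus one; monthly or yearly rates compound multiplicatively rather than add. A minimal sketch with made-up index values (not actual CPI or PCE readings):

```python
import math

def cumulative_inflation(start_index: float, end_index: float) -> float:
    """Cumulative inflation between two price-index readings, as a fraction."""
    return end_index / start_index - 1.0

def compound(period_rates: list[float]) -> float:
    """Cumulative inflation from per-period rates: the growth factors multiply, they don't add."""
    return math.prod(1.0 + r for r in period_rates) - 1.0

def annualized(cumulative: float, years: float) -> float:
    """Constant annual rate that would produce `cumulative` inflation over `years`."""
    return (1.0 + cumulative) ** (1.0 / years) - 1.0

# Illustrative values only, not actual index data.
print(round(cumulative_inflation(100.0, 123.6), 3))  # 0.236
print(round(compound([0.10, 0.10]), 2))              # 0.21, not 0.20
print(round(annualized(0.21, 2), 2))                 # 0.1
```

Different indexes (for example CPI versus PCE) measure different baskets, which is why the same start and end dates can yield a range like 23.6% to 27.1%.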

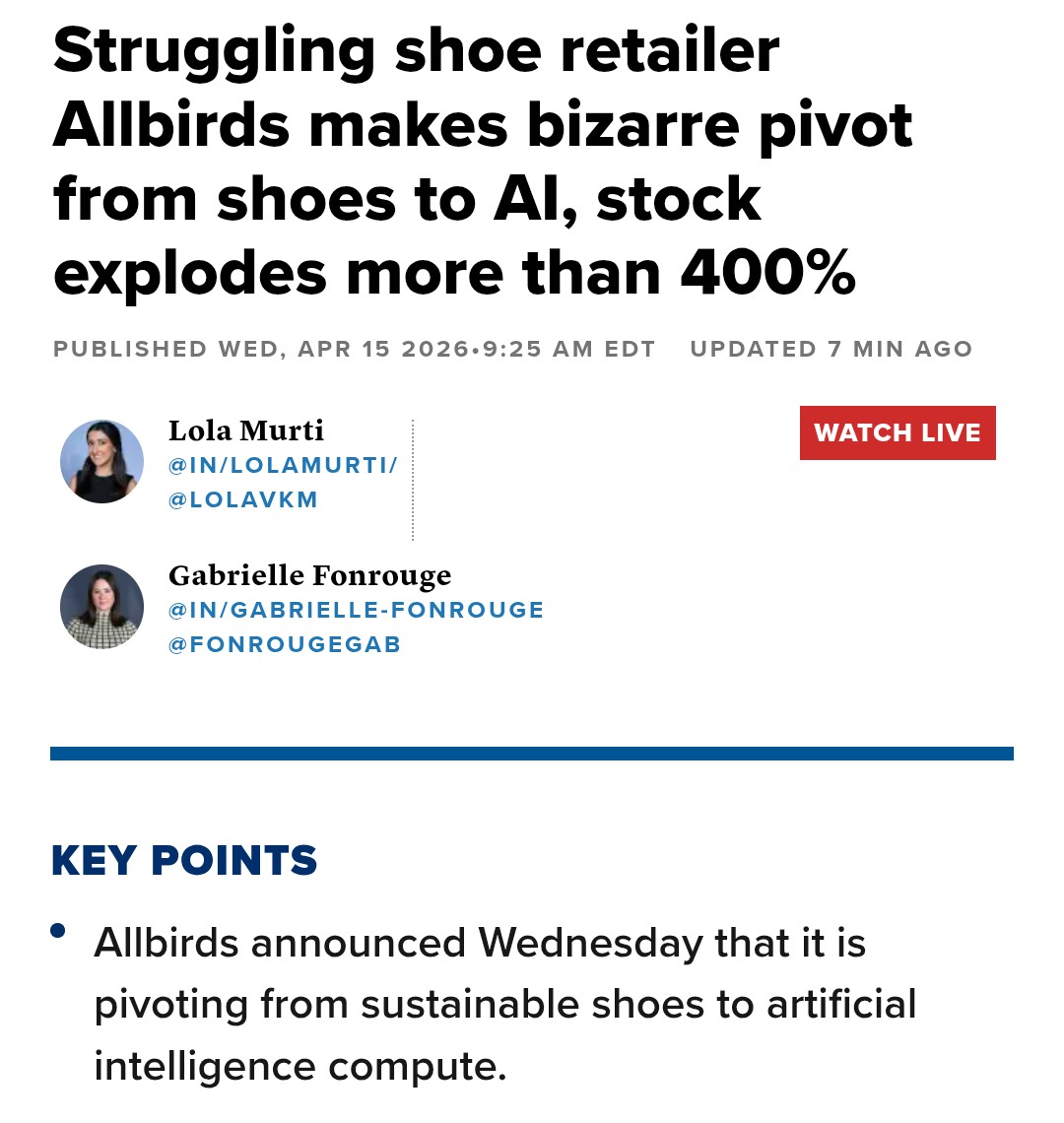

It’s never too late to pivot to a better business model https://t.co/YctUXcDJJC

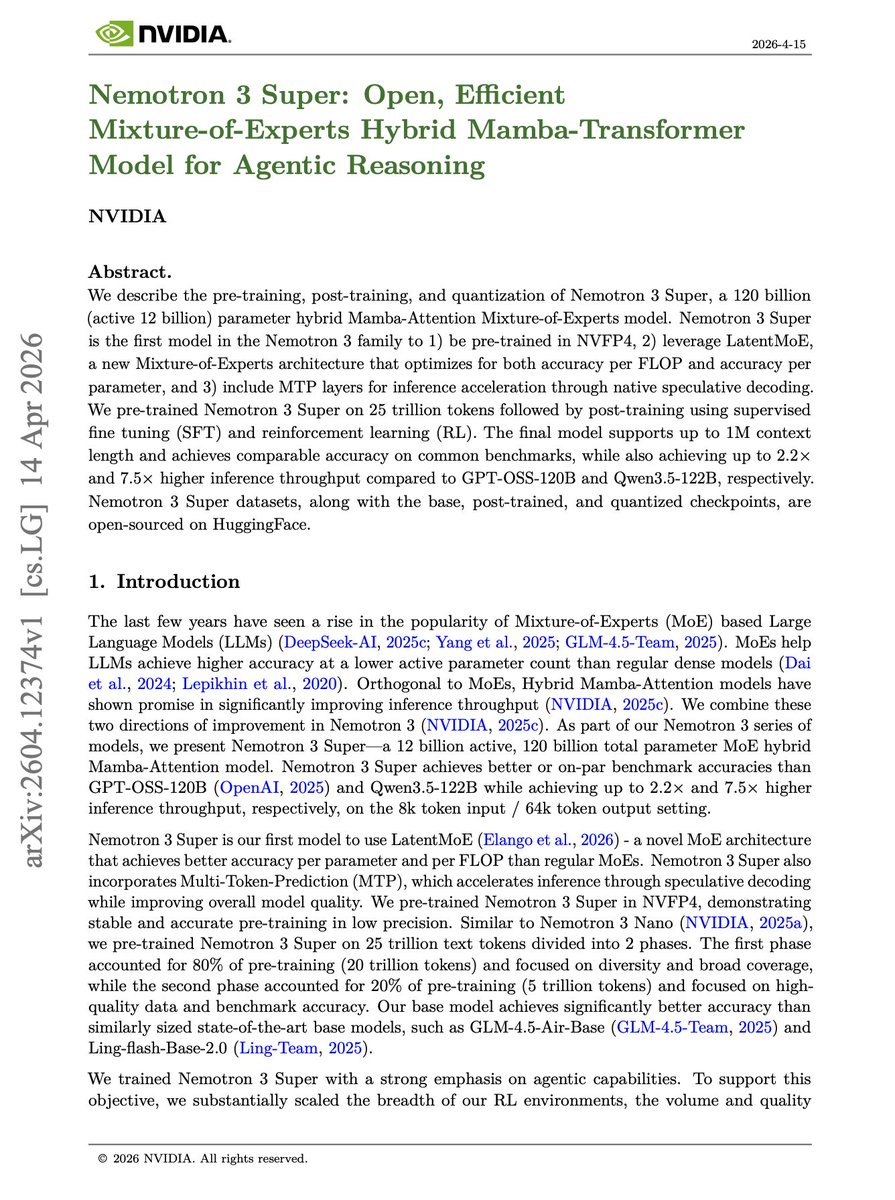

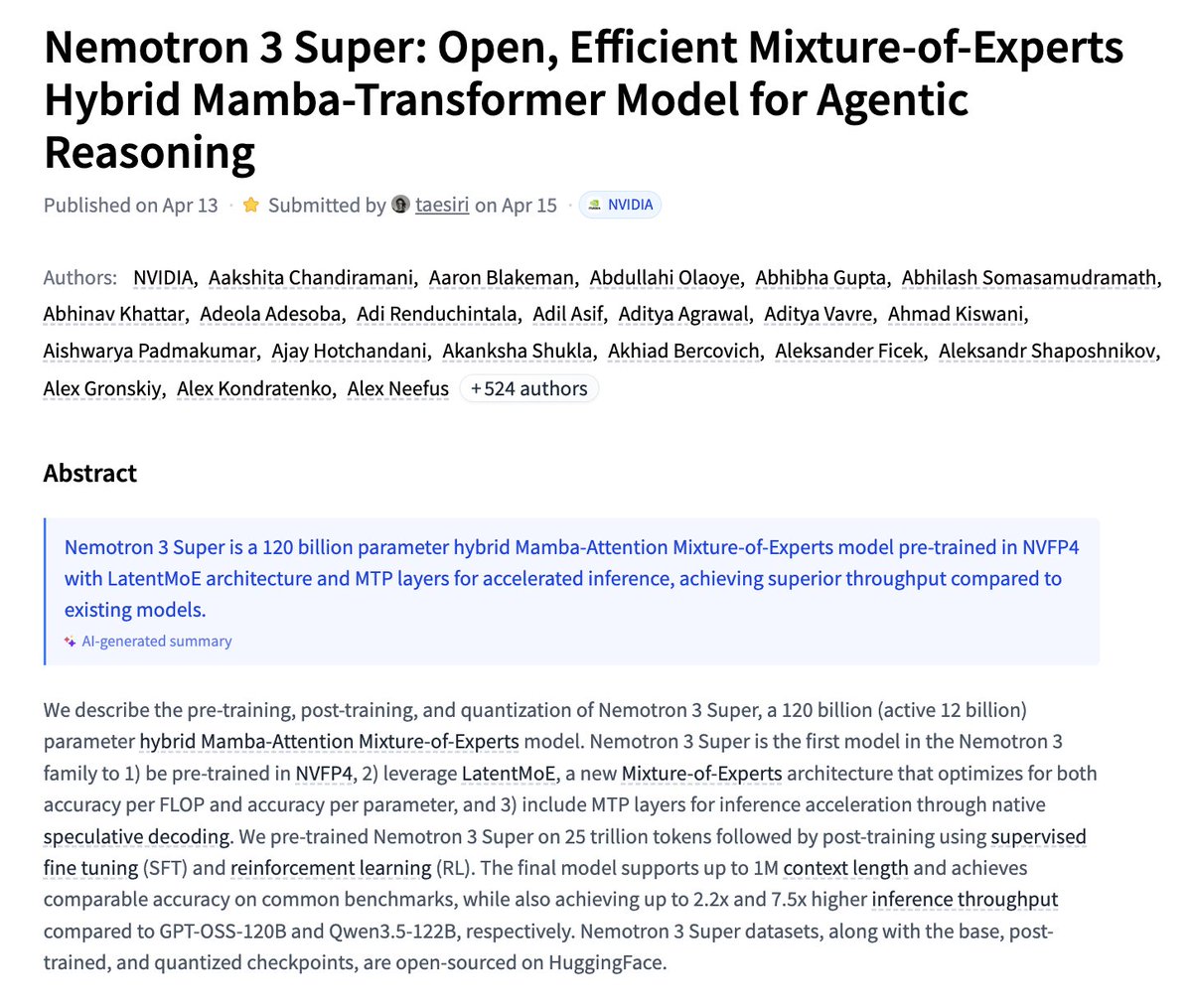

Banger paper from NVIDIA. Agentic reasoning needs models that are not just capable, but efficient at long-context inference. The agent model layer is moving toward open, long-context, high-throughput architectures. This paper introduces Nemotron 3 Super, an open 120B parameter model with 12B active parameters, built as a hybrid Mamba-Attention Mixture-of-Experts architecture. The headline numbers are strong: up to 1M context length, comparable accuracy on common benchmarks, and up to 2.2x higher throughput than GPT-OSS-120B and 7.5x higher throughput than Qwen3.5-122B. The model combines several efficiency bets, including NVFP4 pretraining, LatentMoE for accuracy per FLOP and per parameter, and MTP layers for native speculative decoding. It is trained on 25 trillion tokens, then post-trained with supervised fine-tuning and RL. Paper: https://t.co/VcqUPjylzF Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
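
The "120B total, 12B active" figure (10% of parameters per token) comes from Mixture-of-Experts routing: each input is sent to only a few experts, so most expert weights are never touched for that input. Below is a minimal, generic top-k routing sketch in plain Python with toy scalar "experts"; it is not Nemotron 3 Super's LatentMoE design, and the expert functions and router logits are hypothetical.

```python
import math
from typing import Callable

def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x: float, router_logits: list[float],
                experts: list[Callable[[float], float]], k: int = 2) -> tuple[float, list[int]]:
    """Generic top-k MoE routing: evaluate only the k best-scoring experts and mix their outputs."""
    # Indices of the k experts with the highest router scores (ties keep the lower index first).
    top = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)[:k]
    # Gate weights are renormalized over the selected experts only.
    gates = softmax([router_logits[i] for i in top])
    # Unselected experts are never called, so their parameters stay inactive for this input.
    out = sum(g * experts[i](x) for g, i in zip(gates, top))
    return out, top

# Hypothetical toy experts: each one just scales its input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
out, chosen = moe_forward(2.0, [0.0, 1.0, 1.0, -5.0], experts, k=2)
print(chosen, out)  # [1, 2] 5.0
```

In a real MoE layer the router logits come from a learned projection of each token's hidden state, and each expert is a feed-forward block rather than a scalar function.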

Nemotron 3 Super: Open, Efficient Mixture-of-Experts Hybrid Mamba-Transformer Model for Agentic Reasoning. Paper: https://t.co/hOd6ss4tLV https://t.co/C7SOCZsA5c

New course: Spec-Driven Development with Coding Agents, built in partnership with @jetbrains, and taught by @paulweveritt. Vibe coding is fast, but often produces code that doesn't match what you asked for. This short course teaches you spec-driven development: write a detailed spec defining what to build, and work with your coding agent to implement it. Many of the best developers already build this way. A spec lets you control large code changes with a few words, preserve context across agent sessions, and stay in control as your project grows in complexity. Skills you'll gain:

- Write a detailed specification to define your mission, tech stack, and roadmap, giving your agent the context it needs from the start
- Plan, implement, and validate features in iterative loops using a spec as your agent's guide
- Apply the same repeatable workflow to both new and legacy codebases
- Package your workflow into a portable agent skill that works across agents and IDEs

Join and write specs that keep your coding agent on track! https://t.co/hI4GwuvhtN

Today we launched Gemini 3.1 Flash TTS, our most expressive and controllable text-to-speech model yet. This launch [excitement] includes audio tags! 🗣🏷 Audio tags [explanatory] are a seamless way to guide vocal style, pace, and delivery using natural language commands embedded directly in your text. Want a different tempo or tone? [amazement] Just tag the audio to steer the AI-speech output! The model supports 70+ languages (24 of which are high-quality evaluated languages, including Japanese, Hindi, and Arabic). Watch the audio tags in action in the demo below ↓
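
Audio tags are bracketed natural-language cues written inline in the script, as in the post itself. The sketch below is a hypothetical helper, not part of the Gemini API: it splits a script into (tag, text) segments so you can preview which cue precedes which span before sending the text for synthesis. It assumes a tag is any `[phrase]` and that a tag applies to the text following it until the next tag; how the model actually scopes a tag is not specified here.

```python
import re
from typing import Optional

# Assumption: a tag is any bracketed phrase such as [excitement] or [explanatory].
TAG = re.compile(r"\[([^\[\]]+)\]")

def split_audio_tags(script: str) -> list[tuple[Optional[str], str]]:
    """Split a script into (tag, text) segments; each span carries the most recent tag before it."""
    segments = []
    current_tag: Optional[str] = None
    pos = 0
    for match in TAG.finditer(script):
        text = script[pos:match.start()].strip()
        if text:
            segments.append((current_tag, text))
        current_tag = match.group(1).strip()
        pos = match.end()
    tail = script[pos:].strip()
    if tail:
        segments.append((current_tag, tail))
    return segments

print(split_audio_tags("This launch [excitement] includes audio tags! [amazement] Just tag the audio."))
# [(None, 'This launch'), ('excitement', 'includes audio tags!'), ('amazement', 'Just tag the audio.')]
```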

Gemini 3.1 Flash TTS is rolling out in Google Vids and is available today in preview via the Gemini API and in @GoogleAIStudio. Whether you’re creating a pitch deck or recording a passion project, transform your scripts into studio-quality narration: https://t.co/MG2YIQwKb6

A new and troubling risk is emerging around AI. An attacker targeting Sam Altman reportedly had a broader list of AI executives, raising concerns that individuals in the industry could become targets. It signals a shift. As AI’s influence grows, so do the stakes, and the risks are no longer just digital. https://t.co/50J0jk5tFz @fortunemagazine @marcoquiroz10

"I used a billion tokens this week. I'm not even in the top 100 Codex users at OpenAI." We sat down with @jxnlco (creator of Instructor, now on @OpenAI's Developer Experience team) to talk about how zero-latency inference is changing the way engineers work. https://t.co/PDyHtYRXec

Rethinking On-Policy Distillation of Large Language Models: Phenomenology, Mechanism, and Recipe. Paper: https://t.co/E9e8kOjKvn https://t.co/60yYxgkqAA

Nvidia released Lyra 2.0 (Explorable Generative 3D Worlds) on Hugging Face. Paper: https://t.co/HcxsBD2yEh Model: https://t.co/bC32ADfvDS https://t.co/RwdR7DUEcY

Habitat-GS: A High-Fidelity Navigation Simulator with Dynamic Gaussian Splatting. Paper: https://t.co/pMpYxXukh7 https://t.co/n7UyazeDge

This AI car drove through 506 cities, a Tokyo typhoon, and Arctic darkness without a single external map. @wayve_ai is giving Waymo and Tesla a run for their money, and most people haven't noticed yet. @l2k sits down with CEO @alexgkendall, who breaks down how they got here and where it's going next. In his words: "taking it from expensive retrofit vehicles to mass-market vehicles that you can buy or manufacture." The old self-driving playbook is dead. The question is who writes the new one. Is your next car going to drive itself before you even think to ask for it?

Robots are maturing. AI models are advancing. Deployments rarely scale. Today, deploying a robot still means stitching together systems that were never designed to work together. For every robot. Every time. The industry needs a unified intelligence infrastructure that any robot, any model and any workflow can plug into. We call this an intelligence grid. Infrastructure that makes intelligence composable, deployable, and continuously adapting. Every connected robot evolves as the platform advances. More on what we're building: https://t.co/AMpxxFmtuc

The paper: https://t.co/ThpTSZye1N https://t.co/VjYeVcL983

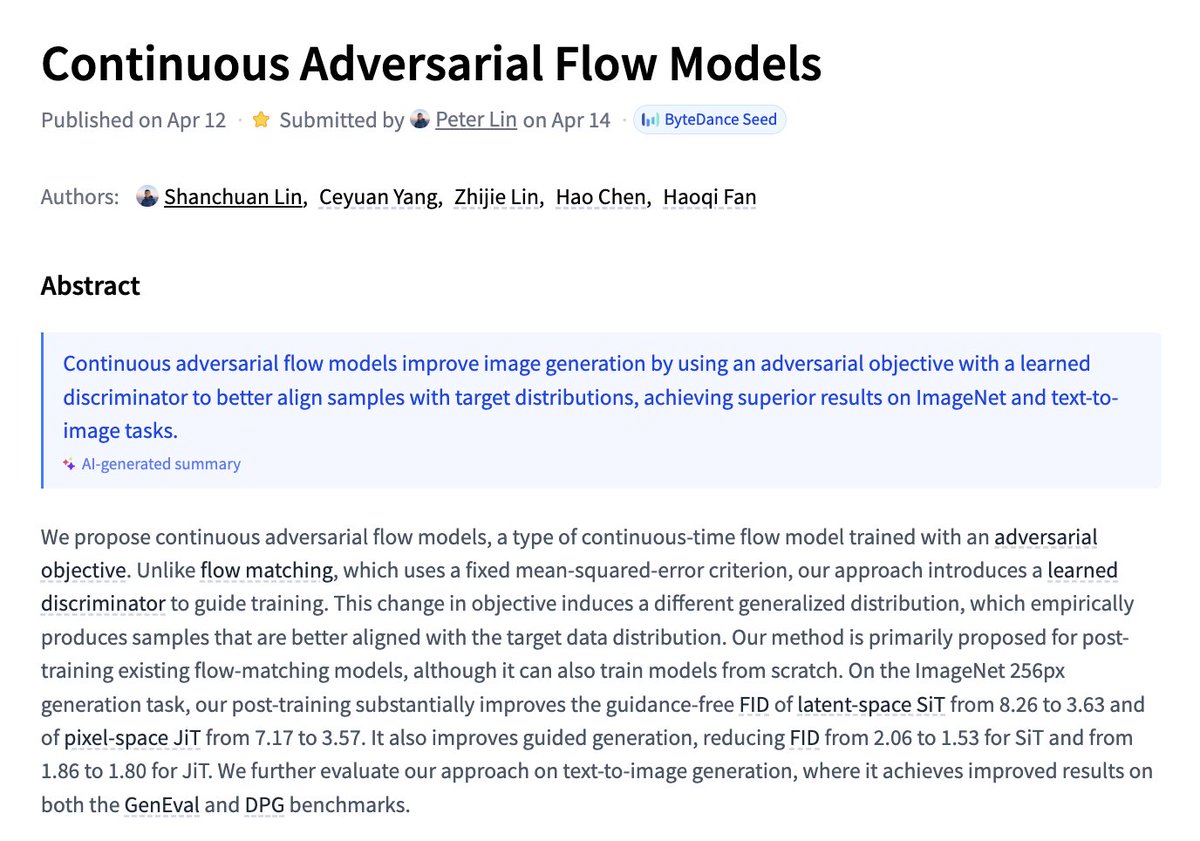

Continuous Adversarial Flow Models. Paper: https://t.co/dKxvhVE8Z2 https://t.co/STg8WFRgwY

ClawGUI: A Unified Framework for Training, Evaluating, and Deploying GUI Agents. Paper: https://t.co/VPMTifMWGK https://t.co/xISqw6h3pQ

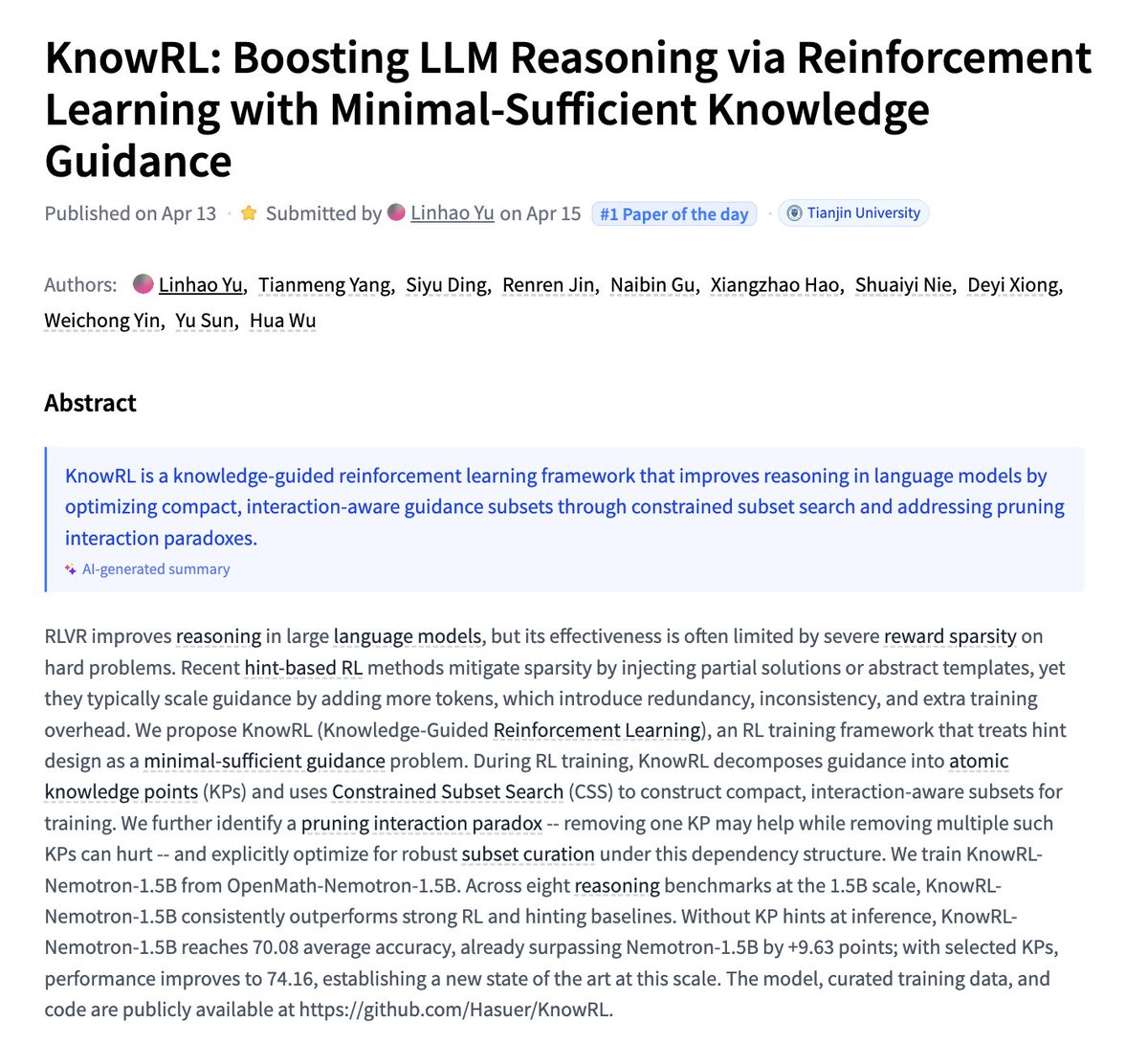

KnowRL: Boosting LLM Reasoning via Reinforcement Learning with Minimal-Sufficient Knowledge Guidance. Paper: https://t.co/76Mu7D8sUn https://t.co/vnNFqXJ8hY

BREAKING: DHS confirms to @FoxNews that Olaolukitan Adon Abel, the suspect arrested for a seemingly random murder spree in DeKalb County, GA that left two women dead and a homeless man shot, is a national of the United Kingdom who was naturalized as a US citizen during the Biden administration in 2022. DHS confirms to FOX that one of the victims, 40-year-old Lauren Bullis, was a DHS employee who worked in the DHS Office of the Inspector General. She was found stabbed and shot to death while walking her dog. Abel faces two counts of murder, aggravated assault, and weapons charges after police say he carried out an early morning shooting spree in three separate locations in DeKalb County, GA on Monday, shooting and killing a woman in front of a Checkers, shooting and stabbing Lauren Bullis to death while she was walking her dog, and shooting a homeless man several times in front of a shopping center. Motive is unknown. DHS confirms that Abel had a lengthy rap sheet prior to this incident, including prior convictions for sexual battery, battery against a police officer, and assault with a deadly weapon. Photo courtesy: DHS

Grok just hit its highest monthly traffic EVER: over 326 MILLION visits in March alone. That’s a massive 61% jump YoY and up 9.3% just since February. People love Grok because it's the only AI they can trust with the answers, and they can ask anything without hitting the policy walls of other AIs.
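
The two growth figures can be inverted to see the earlier traffic they imply: a value that grew by p% was the current value divided by (1 + p/100). A quick check using only the numbers in the post (treating "over 326 million" as exactly 326 million, so the results are lower bounds):

```python
def implied_previous(current: float, pct_growth: float) -> float:
    """Value before growth, given the value after growth and the growth in percent."""
    return current / (1.0 + pct_growth / 100.0)

march = 326e6
print(round(implied_previous(march, 61.0) / 1e6, 1))  # 202.5 (prior-year March, millions)
print(round(implied_previous(march, 9.3) / 1e6, 1))   # 298.3 (February, millions)
```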

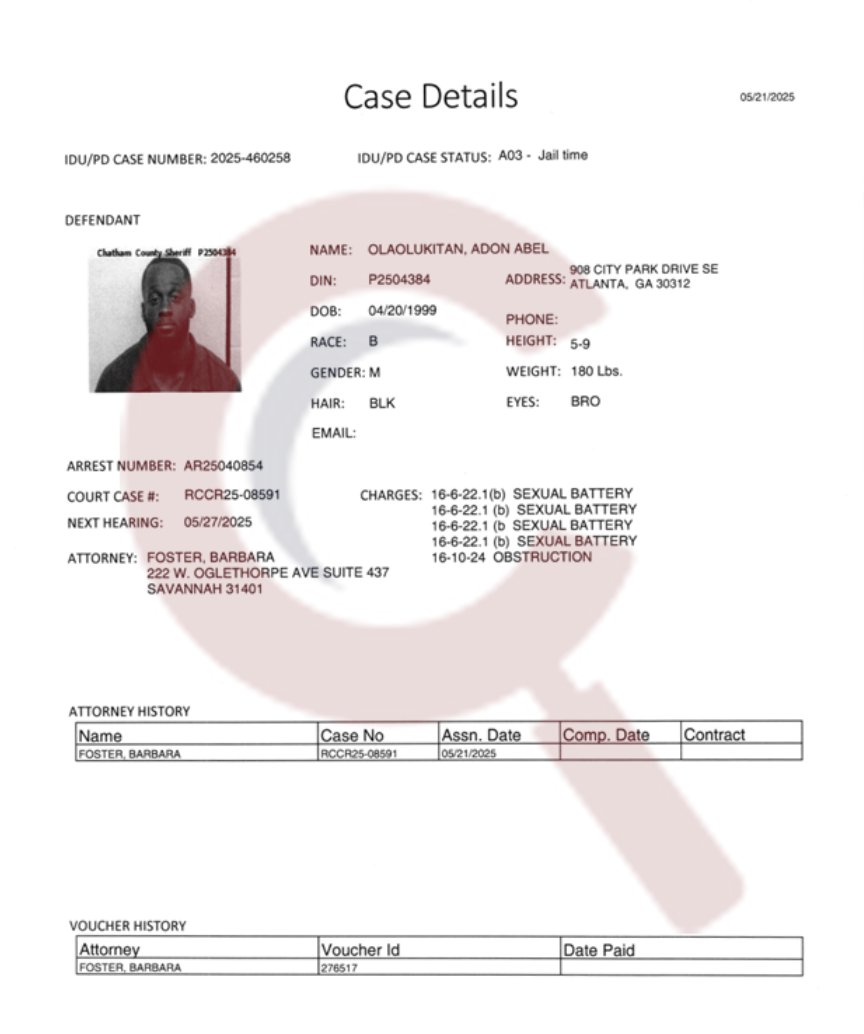

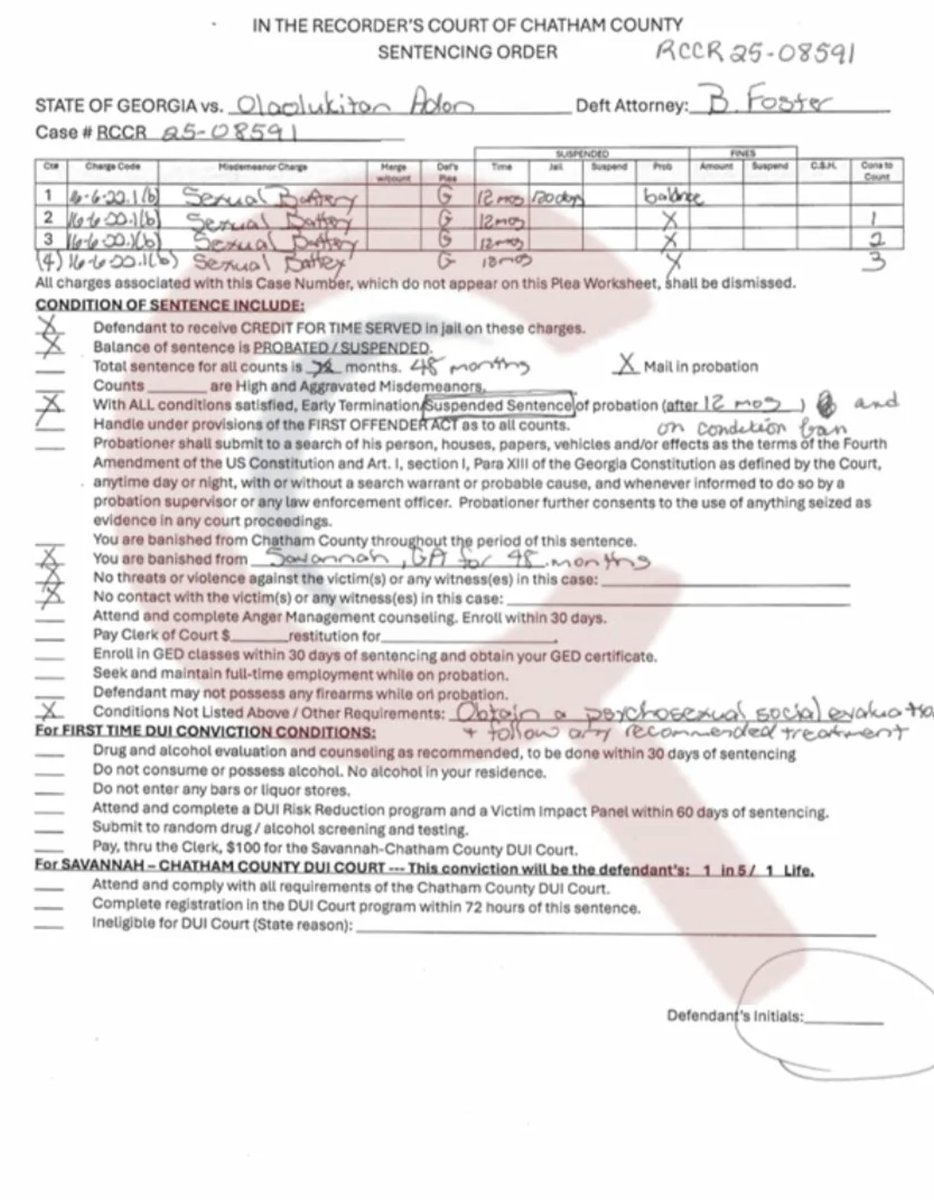

It took me a few hours yesterday, but I got access to the case files for Olaolukitan Adon Abel's previous se*ual battery case from Savannah GA that caused him to be BANISHED from the ENTIRE CITY. I'll drop the files below, but here's the story: >Abel assaulted 4 different women in the span of a few hours >4 victims, 4 counts of se*ual battery, in Chatham County, April 2025. >When police tried to arrest him, he resisted. >They added an obstruction charge on top of everything else >That obstruction charge vanished before trial >Prosecutors dropped it entirely. No explanation given. >His attorney, B. Foster, got all 4 se*ual battery counts handled under Georgia's First Offender Act >Meaning if he completed probation, the whole thing would essentially disappear from his record. >The judge sentenced him to 48 months, then suspended most of it. >He actually served 120 days in prison TOTAL >Thirty days per victim >He paid $0 in fines >Zero in restitution to any of the 4 women >The court did order a psychose*ual evaluation and required he follow whatever treatment came from it >They mandated mental health counseling >They banned him from the entire city of Savannah for four years >They explicitly prohibited him from possessing any firearm >He signed the probation agreement on June 7, 2025 >7 months later, he got a gun and m*rdered 2 women, including Lauren Bullis You are not angry enough.

🚨#BREAKING: The woman who was randomly shot in the face 6 times and st*bbed to de*th while she was walking her dog in Atlanta GA has been identified as 40-year-old Lauren Bullis. The suspect, “Olaolukitan Adon Abel” was also allegedly in the process of se*ually assaulting her be

This group calls for genocide against whites, supports the Islamic regime in Iran, and spreads antisemitism. Then they blame @elonmusk, yet never answer a simple question: why is South Africa becoming what they accuse others of? https://t.co/cxjL6IscPL

Javier Milei: “I have nothing against artists. I had a rock band myself. My problem is that if you need a government subsidy to make art, you are no longer an artist, you are a public employee.” Milei is number one. https://t.co/SQeY55YZjZ

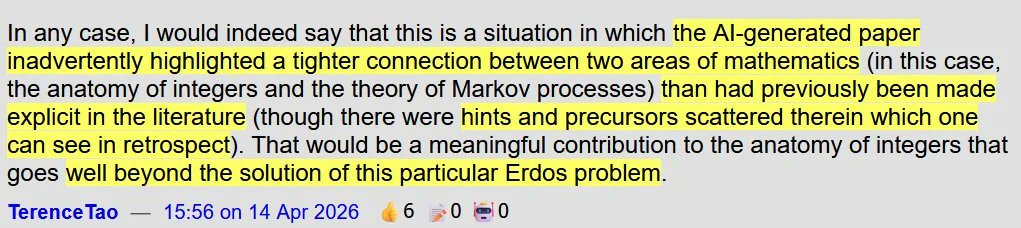

Mathematician Terence Tao on GPT-5.4 Pro solving Erdős problem #1196: "the AI-generated paper may have made a meaningful contribution by revealing a deeper mathematical connection that earlier work had not clearly made explicit, which [has] value beyond solving this particular erdős problem"

A couple of people asked me how I did it, so here's the answer: I asked GPT-5.4 Pro Extended Thinking in ChatGPT (not Codex; that's a different GPT-5.4) to solve it. Now, here's a caveat: if you just type "Solve X" and X is an open problem that a search easily reveals to be open (e.g. an Erdos problem), you get nothing really, just a literature search. So to really force an LLM to think hard about it, there are 2 methods:

1. Add "Don't search the internet, this is a test of your reasoning capabilities". Yeah, really. Add "Solve X" after that and it will give it a shot. The problem is that it then often tries to solve the problem without looking at any prior research, so it only works on simpler problems.
2. Add guidance: "Solve X with method Y". This is really good if you have a mathematical background, because you can find a good Y (assuming it's your domain), but you can also pick Y at random after doing a literature search first.

Now, for the actual problem I solved here, I used method 2, with the caveat that I ran agents beforehand. I have the full Agentic Erdos repo: https://t.co/xegnXal7UT, where I've run AI agents over ALL Erdos problems, basically doing basic compute, some arguments, some literature. So when I go to ChatGPT, I can use that as a very specific prompt.
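
The two prompting methods amount to two small templates. This is a hypothetical helper for illustration: the method 1 wording is quoted from the post, while the function names and the optional `notes` parameter (standing in for the agent-generated context from the Agentic Erdos repo) are assumptions.

```python
from typing import Optional

# Wording of method 1, taken from the post.
NO_SEARCH_PREFIX = "Don't search the internet, this is a test of your reasoning capabilities."

def prompt_no_search(problem: str) -> str:
    """Method 1: forbid searching so the model attempts the problem itself."""
    return f"{NO_SEARCH_PREFIX}\nSolve {problem}"

def prompt_with_method(problem: str, method: str, notes: Optional[str] = None) -> str:
    """Method 2: steer the model toward a specific technique, optionally prefixed with prior notes."""
    parts = []
    if notes:
        # Hypothetical: earlier agent output (computations, partial arguments, references).
        parts.append(f"Notes from earlier exploration:\n{notes}")
    parts.append(f"Solve {problem} with {method}")
    return "\n\n".join(parts)

print(prompt_with_method("X", "Y"))  # Solve X with Y
```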

Today I solved my second open Erdos problem with GPT-5.4 Pro. It's quite a remarkable day, because within the last 24 hours, two other Erdos problems have been solved with AI as well. Still 500+ to go. https://t.co/dkrhZSlyBR

Gradio Super Mario theme is out. To use this theme, set theme='hmb/super-mario' in the launch() method of gr.Blocks() or gr.Interface(). You can append an @ and a semantic version expression, e.g. @>=1.0.0,<2.0.0, to pin to a given version of this theme. Example app: https://t.co/QxOFyix6HS

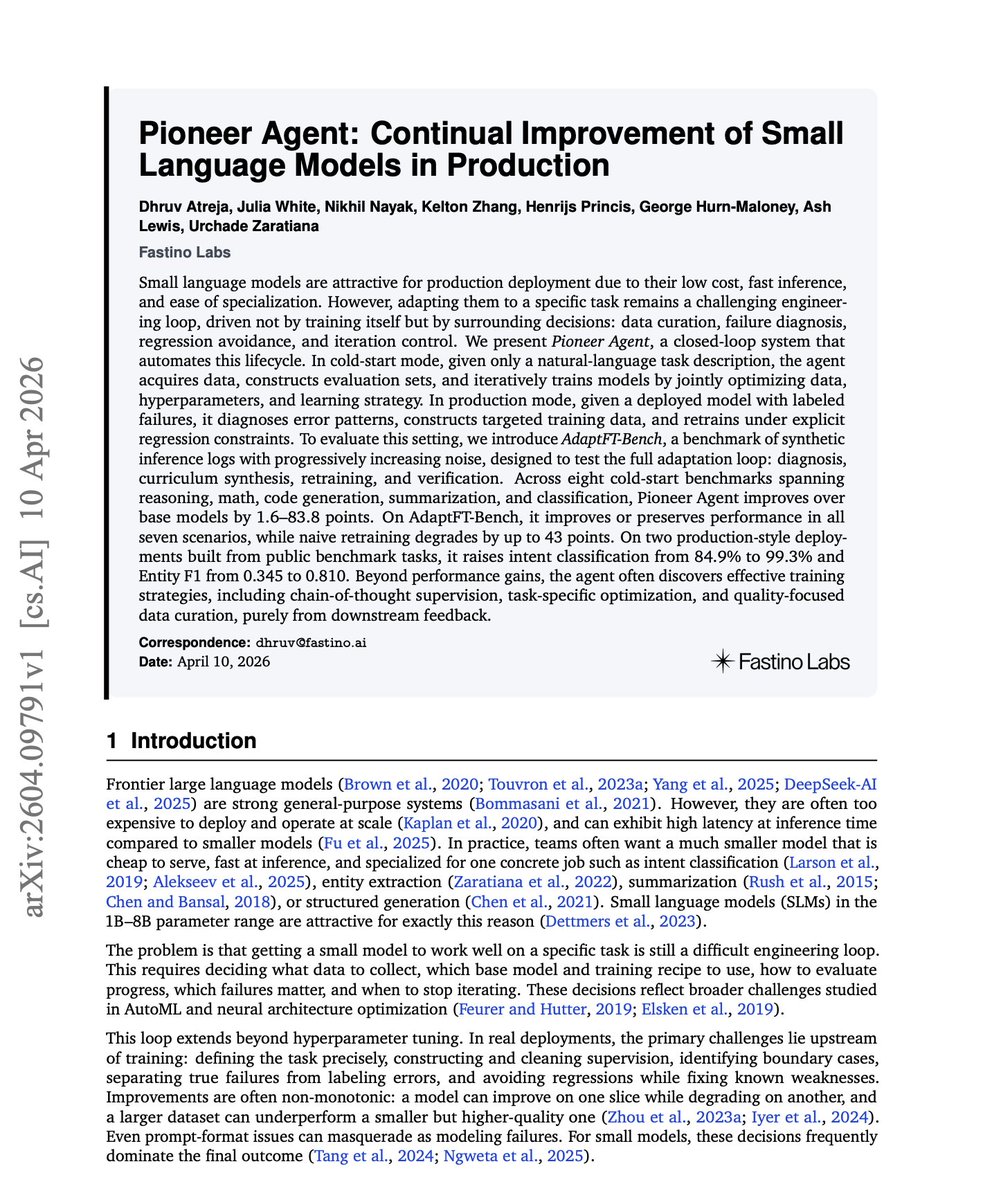

Small models are cheap to run, but expensive to adapt. The hard part is not only fine-tuning. It is the surrounding loop that involves collecting data, diagnosing failures, building evals, avoiding regressions, choosing curricula, and deciding when an update is safe. This new paper introduces Pioneer Agent, a closed-loop system for continual improvement of small language models in production. In cold-start mode, the agent starts from a natural-language task description, acquires data, builds evals, and iteratively trains models. In production mode, it uses labeled failures to diagnose error patterns, synthesize targeted data, and retrain under explicit regression constraints. The results are strong: gains of 1.6 to 83.8 points across eight cold-start benchmarks, no regressions across seven AdaptFT-Bench scenarios, intent classification from 84.9% to 99.3%, and Entity F1 from 0.345 to 0.810. Paper: https://t.co/lFkFiXzP8E Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
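
Retraining "under explicit regression constraints" can be illustrated with a simple acceptance gate: a candidate model is promoted only if it improves the target metric and no other eval slice drops by more than a tolerance. This is a generic sketch with hypothetical slice names, scores, and tolerance, not Pioneer Agent's actual procedure.

```python
def safe_to_promote(baseline: dict[str, float], candidate: dict[str, float],
                    target: str, tolerance: float = 0.0) -> bool:
    """Promote only if `target` improves and no other eval slice drops by more than `tolerance`."""
    if candidate.get(target, float("-inf")) <= baseline[target]:
        return False
    for name, base_score in baseline.items():
        if name == target:
            continue
        if name not in candidate:
            return False  # a missing eval counts as a failure, not a pass
        if candidate[name] < base_score - tolerance:
            return False  # regression beyond the allowed tolerance
    return True

# Hypothetical eval slices and scores.
baseline = {"new_failures": 0.60, "legacy_slice": 0.90}
print(safe_to_promote(baseline, {"new_failures": 0.85, "legacy_slice": 0.89}, "new_failures"))        # False
print(safe_to_promote(baseline, {"new_failures": 0.85, "legacy_slice": 0.89}, "new_failures", 0.02))  # True
```

Setting `tolerance=0.0` corresponds to a strict "no regressions" policy; a small positive tolerance trades strictness for robustness to eval noise.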

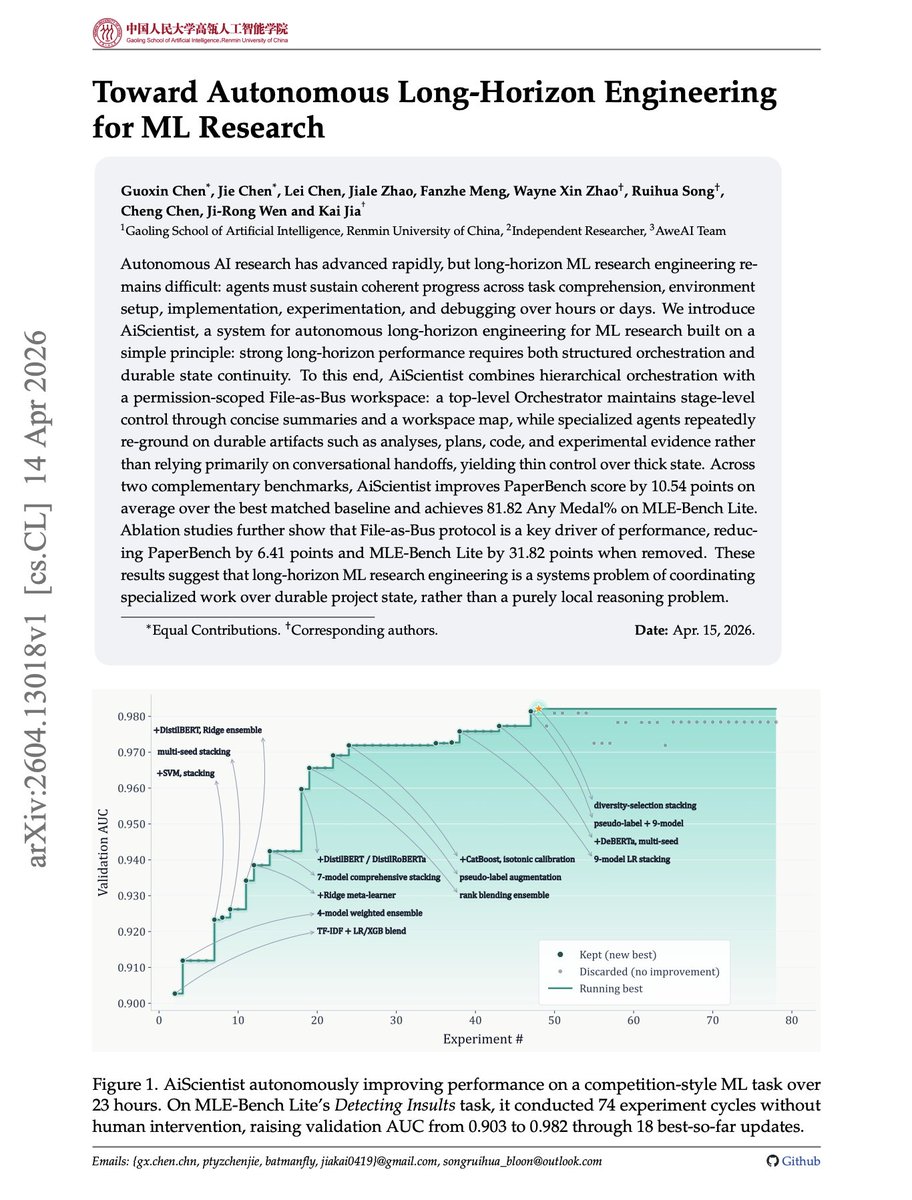

Long-horizon AI research agents are mostly a state-management problem. It is not enough for an agent to reason well in the next turn. ML research requires task setup, implementation, experiments, debugging, and evidence tracking over hours or days. This new paper introduces AiScientist, a system for autonomous long-horizon engineering for ML research. The key idea is to keep control thin and state thick. A top-level orchestrator manages stage-level progress, while specialized agents repeatedly ground themselves in durable workspace artifacts: analyses, plans, code, logs, and experimental evidence. That "File-as-Bus" design matters. AiScientist improves PaperBench by 10.54 points over the best matched baseline and reaches 81.82 Any Medal% on MLE-Bench Lite. Removing File-as-Bus drops PaperBench by 6.41 points and MLE-Bench Lite by 31.82 points. Why does it matter? Autonomous research agents need durable project memory, not just longer chats. Paper: https://t.co/A84c75oumP Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
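
A minimal sketch of the "File-as-Bus" idea: instead of carrying state in a growing chat history, each stage writes its result to a durable file in a shared workspace, and later stages (or a restarted run) read from those files. The JSON-per-artifact layout, the stage names, and the `Workspace`/`run_stage` helpers are hypothetical; this is not AiScientist's implementation.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable

class Workspace:
    """Durable shared state: every artifact is a JSON file under one directory."""

    def __init__(self, root: Path):
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def _path(self, name: str) -> Path:
        return self.root / f"{name}.json"

    def write(self, name: str, payload: dict) -> None:
        self._path(name).write_text(json.dumps(payload, indent=2))

    def read(self, name: str) -> dict:
        return json.loads(self._path(name).read_text())

    def has(self, name: str) -> bool:
        return self._path(name).exists()

def run_stage(ws: Workspace, stage: str, needs: list[str], work: Callable[[dict], dict]) -> dict:
    """Thin control: reuse a finished stage's file, else read inputs from files and persist the output."""
    if ws.has(stage):
        return ws.read(stage)  # finished in an earlier session; nothing is recomputed
    inputs = {name: ws.read(name) for name in needs}
    result = work(inputs)
    ws.write(stage, result)
    return result

with tempfile.TemporaryDirectory() as tmp:
    ws = Workspace(Path(tmp) / "project")
    run_stage(ws, "plan", [], lambda _: {"steps": ["baseline", "ablation"]})
    run_stage(ws, "experiment", ["plan"], lambda inp: {"ran": inp["plan"]["steps"]})
    print(ws.read("experiment"))  # {'ran': ['baseline', 'ablation']}
```

The orchestrator here only decides which stage runs next; all substantive state lives in the files, so a crashed or resumed session picks up from the last written artifact.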

From the Allbirds release: 'Allbirds, Inc. today announced the execution of a definitive agreement with an institutional investor for a $50 million convertible financing facility. The Facility, which is expected to close during the second quarter of 2026, will enable the Company to pivot its business to AI compute infrastructure, with a long-term vision to become a fully integrated GPU-as-a-Service (GPUaaS) and AI-native cloud solutions provider. In connection with this pivot, the Company anticipates changing its name to “NewBird AI.”'

Markets seem to love this stuff. In 2018, Kodak said out of nowhere that it was a crypto company, and its stock shot up. In 1998, Zapata (an oil firm founded by the Bushes that became a fish meal company) shortened its name to https://t.co/nqMaODkYqt & IPO’ed as an Internet stock. https://t.co/sE3OVN2kiC
