Your curated collection of saved posts and media

BREAKING: X now shows how many ads you avoided and how much time you saved with your Premium subscription. Go to Premium > Ads Avoided https://t.co/Jwm8eMfnNX

Fulton County admits it illegally certified 315,000 ballots in 2020 election https://t.co/OoIPJkh1cw

The thing the Democrats insisted never happens just keeps on happening at massive scale… https://t.co/WHkNqXlY1s

This robot solving a Rubik's Cube in 0.103 seconds is a little preview of what "AGI" really means https://t.co/kskJO2lhT0

Today, at Markov, we're launching RL Environments. The simplest (and cutest :D) way to evaluate and train your AI agents. We're starting with Bananazon - an environment for customer service agents. Try it out at the link below. @markov__ai https://t.co/FX5pwuQU9B
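The reset/step loop behind RL environments like the one announced here can be sketched in a few lines. This is a minimal sketch, assuming a Gym-style interface; `ToySupportEnv`, `evaluate`, and the ticket data are hypothetical and are not Markov's or Bananazon's API. Each episode is a single customer message, and the reward is 1.0 when the agent picks the expected action.

```python
from typing import Callable


class ToySupportEnv:
    """Hypothetical single-step customer-service environment.

    Each episode shows one customer message; the agent earns reward 1.0
    if it picks the expected action, else 0.0. It mimics the Gym-style
    reset/step shape, not any specific product's API.
    """

    def __init__(self, tickets: list[tuple[str, str]]) -> None:
        self.tickets = tickets  # (customer message, expected action)
        self.current = -1

    def reset(self) -> str:
        # Advance to the next ticket (cycling) and return it as the observation.
        self.current = (self.current + 1) % len(self.tickets)
        return self.tickets[self.current][0]

    def step(self, action: str) -> tuple[float, bool]:
        # Score the action; every episode ends after a single reply.
        expected = self.tickets[self.current][1]
        return (1.0 if action == expected else 0.0), True


def evaluate(env: ToySupportEnv, agent: Callable[[str], str], episodes: int) -> float:
    """Run `episodes` rollouts and return the mean reward."""
    total = 0.0
    for _ in range(episodes):
        observation = env.reset()
        reward, _done = env.step(agent(observation))
        total += reward
    return total / episodes


if __name__ == "__main__":
    env = ToySupportEnv([
        ("My package never arrived", "refund"),
        ("Where is my order?", "track"),
    ])

    def keyword_agent(message: str) -> str:
        # A trivial rule-based agent standing in for an LLM agent.
        return "track" if "where" in message.lower() else "refund"

    print(evaluate(env, keyword_agent, episodes=4))  # prints 1.0
```

Swapping `keyword_agent` for a model call is the only change needed to score a real agent on the same tickets, which is what makes a fixed environment useful for both evaluation and training.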

Graphite is joining Cursor. We started Graphite to reimagine collaborative software development. Partnering with Cursor brings that future into focus faster than ever. https://t.co/gvMQ7y6fNJ

🎨 Qwen-Image-Layered is LIVE: native image decomposition, fully open-sourced!
✨ Why it stands out
✅ Photoshop-grade layering: physically isolated RGBA layers with true native editability
✅ Prompt-controlled structure: explicitly specify 3–10 layers, from coarse layouts to fine-grained details
✅ Infinite decomposition: keep drilling down, layers within layers, to any depth of detail
🤗 Hugging Face: https://t.co/WnXVNJigCg
🧩 ModelScope: https://t.co/2k0ClUS2ON
💻 GitHub: https://t.co/X4jB5APtP7
📝 Blog: https://t.co/TfySatdOwU
📄 Technical Report: https://t.co/3UtxVyGv5u
🚀 Demo (HF): https://t.co/YL0XOiDAIq
🚀 Demo (ModelScope): https://t.co/KJxca978AX
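Isolated RGBA layers can be recombined into a single image with standard alpha compositing. The sketch below applies the Porter-Duff "over" operator from the bottom layer to the top, assuming straight (non-premultiplied) alpha and float channels in [0, 1]; `over` and `flatten` are hypothetical helpers, not part of Qwen-Image-Layered, and the layer format the model actually emits may differ.

```python
Pixel = tuple[float, float, float, float]  # straight-alpha RGBA, each channel in [0, 1]


def over(src: Pixel, dst: Pixel) -> Pixel:
    """Porter-Duff 'over': place src on top of dst (straight alpha)."""
    sr, sg, sb, sa = src
    dr, dg, db, da = dst
    out_a = sa + da * (1.0 - sa)
    if out_a == 0.0:
        return (0.0, 0.0, 0.0, 0.0)  # both pixels fully transparent

    def mix(cs: float, cd: float) -> float:
        # Weight each color by its effective coverage, then un-premultiply.
        return (cs * sa + cd * da * (1.0 - sa)) / out_a

    return (mix(sr, dr), mix(sg, dg), mix(sb, db), out_a)


def flatten(layers: list[list[Pixel]]) -> list[Pixel]:
    """Composite layers ordered bottom-to-top into one image.

    Each layer is a flat list of pixels; all layers have the same length.
    """
    result: list[Pixel] = [(0.0, 0.0, 0.0, 0.0)] * len(layers[0])
    for layer in layers:
        result = [over(top, below) for top, below in zip(layer, result)]
    return result
```

For example, compositing a half-transparent red layer over opaque white gives pink: `flatten([[(1.0, 1.0, 1.0, 1.0)], [(1.0, 0.0, 0.0, 0.5)]])` returns `[(1.0, 0.5, 0.5, 1.0)]`.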

The NVIDIA Nemotron family just crossed 5M downloads on @huggingface 🤗 A massive thank you to the community for your work and enthusiasm. 🏗️ Get started here: https://t.co/lcU4HrBZKx https://t.co/8xjDii1zoj

Introducing Gemma Scope 2
🤗 Largest open release of interpretability tools (over 1 trillion parameters trained!)
🔬 Works as a microscope to analyze all Gemma 3 models' internal activations
🗣️ Advanced tools for analyzing chat behaviors https://t.co/wnMg3tIXuV

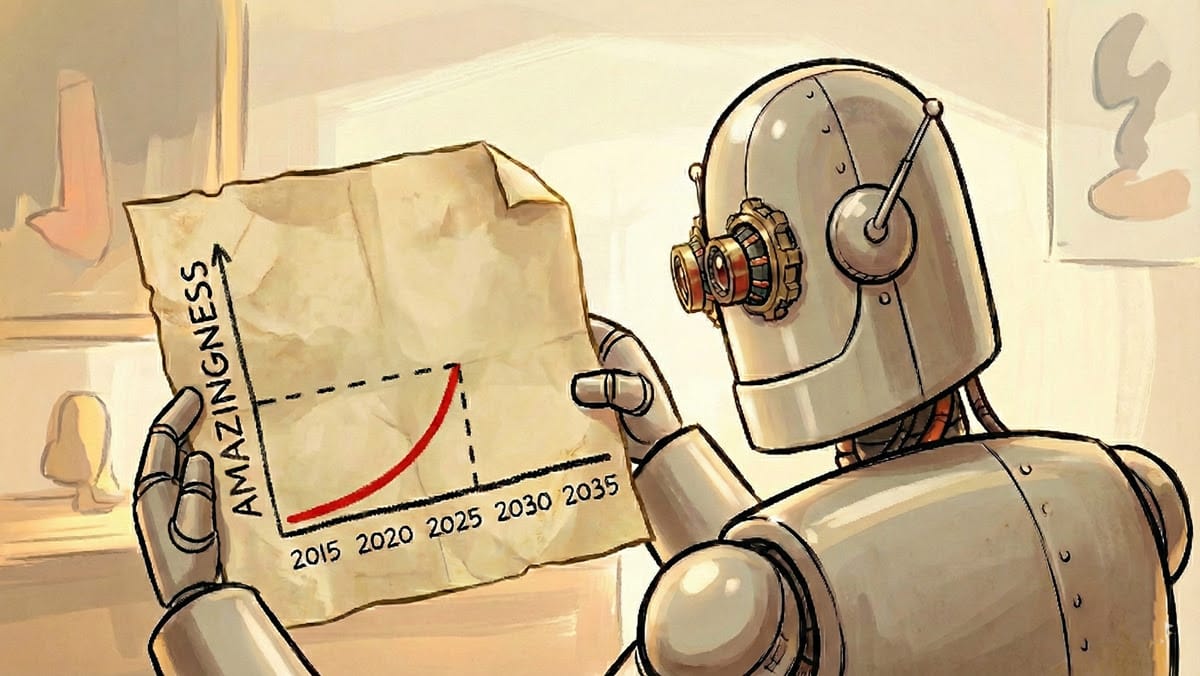

As amazing as LLMs are, improving their knowledge today involves a more piecemeal process than is widely appreciated. I’ve written before about how AI is amazing... but not that amazing. Well, it is also true that LLMs are general... but not that general. We shouldn’t buy into the inaccurate hype that LLMs are a path to AGI in just a few years, but we also shouldn’t buy into the opposite, also inaccurate hype that they are only demoware. Instead, I find it helpful to have a more precise understanding of the current path to building more intelligent models.
First, LLMs are indeed a more general form of intelligence than earlier generations of technology. This is why a single LLM can be applied to a wide range of tasks. The first wave of LLM technology accomplished this by training on the public web, which contains a lot of information about a wide range of topics. This made their knowledge far more general than earlier algorithms that were trained to carry out a single task such as predicting housing prices or playing a single game like chess or Go. However, they’re far less general than human abilities. For instance, after pretraining on the entire content of the public web, an LLM still struggles to write in certain styles that many editors could handle, or to use simple websites reliably.
After leveraging pretty much all the open information on the web, progress got harder. Today, if a frontier lab wants an LLM to do well on a specific task, such as coding in a specific programming language or saying sensible things about a specific niche in, say, healthcare or finance, researchers might go through a laborious process of finding or generating lots of data for that domain and then preparing that data (cleaning low-quality text, deduplicating, paraphrasing, etc.) to give an LLM that knowledge.
Or, to get a model to perform certain tasks, such as using a web browser, developers might go through an even more laborious process of creating many RL gyms (simulated environments) to let an algorithm repeatedly practice a narrow set of tasks.
A typical human, despite having seen vastly less text or practiced far less in computer-use training environments than today's frontier models, nonetheless can generalize to a far wider range of tasks than a frontier model. Humans might do this by taking advantage of continuous learning from feedback, or by having superior representations of non-text input (the way LLMs tokenize images still seems like a hack to me), and many other mechanisms that we do not yet understand.
Advancing frontier models today requires making a lot of manual decisions and taking a data-centric AI approach to engineering the data we use to train our models. Future breakthroughs might allow us to advance LLMs in a less piecemeal fashion than I describe here. But even if they don’t, the ongoing piecemeal improvements, coupled with the limited degree to which these models do generalize and exhibit “emergent behaviors,” will continue to drive rapid progress. Either way, we should plan for many more years of hard work. A long, hard (and fun!) slog remains ahead to build more intelligent models. [Original text: https://t.co/SHRN5JDvTW ]
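Two of the data-preparation steps named in this post, cleaning low-quality text and deduplicating, can be illustrated with a toy filter. This is a minimal sketch, not any lab's pipeline: the word-count threshold in `clean_and_dedupe` is a hypothetical stand-in for a real quality classifier, and deduplication here is exact matching on normalized text (large-scale pipelines often add fuzzy methods such as MinHash). Paraphrasing is omitted because it usually requires a model.

```python
import hashlib
import re


def normalize(text: str) -> str:
    # Collapse whitespace runs and lowercase so trivial variants compare equal.
    return re.sub(r"\s+", " ", text).strip().lower()


def clean_and_dedupe(docs: list[str], min_words: int = 5) -> list[str]:
    """Keep docs with at least `min_words` words, dropping exact duplicates
    after normalization. The threshold is a hypothetical quality proxy."""
    seen: set[str] = set()
    kept: list[str] = []
    for doc in docs:
        norm = normalize(doc)
        if len(norm.split()) < min_words:
            continue  # treat very short snippets as low quality
        digest = hashlib.sha256(norm.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # duplicate of a document already kept
        seen.add(digest)
        kept.append(doc)  # keep the original, un-normalized text
    return kept


if __name__ == "__main__":
    docs = [
        "Metformin is a common first-line therapy for type 2 diabetes.",
        "metformin is a common   first-line therapy for Type 2 diabetes.",
        "Click here!",
    ]
    print(clean_and_dedupe(docs))
    # prints ['Metformin is a common first-line therapy for type 2 diabetes.']
```

Hashing the normalized text keeps memory per document constant, which is one reason exact dedup is usually the cheap first pass before fuzzier matching.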

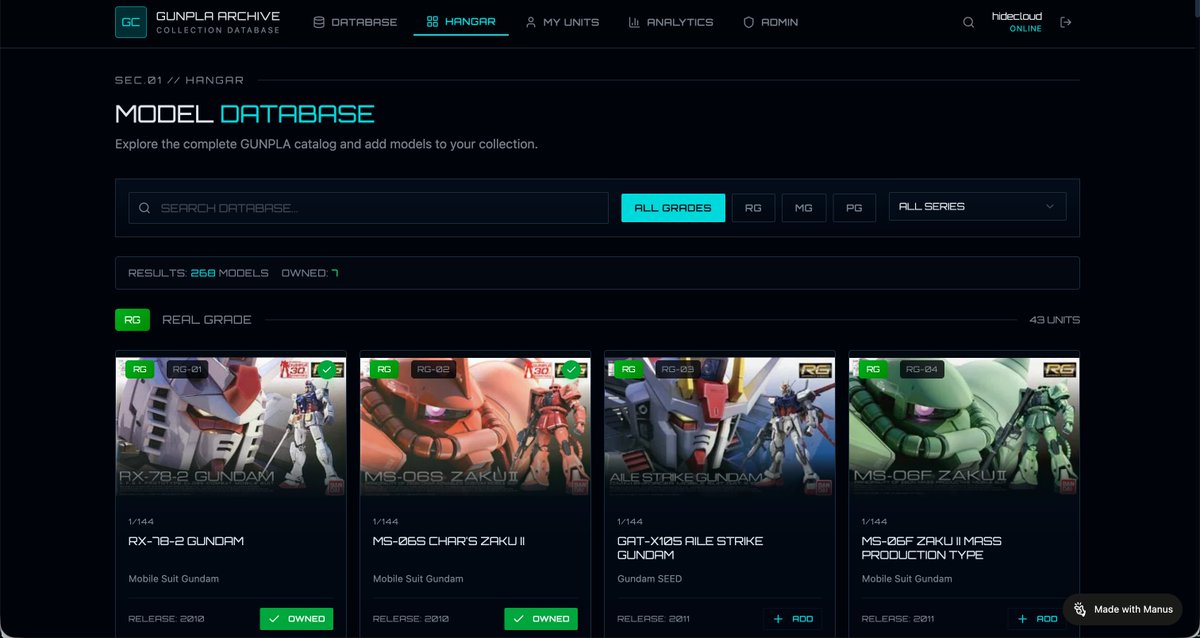

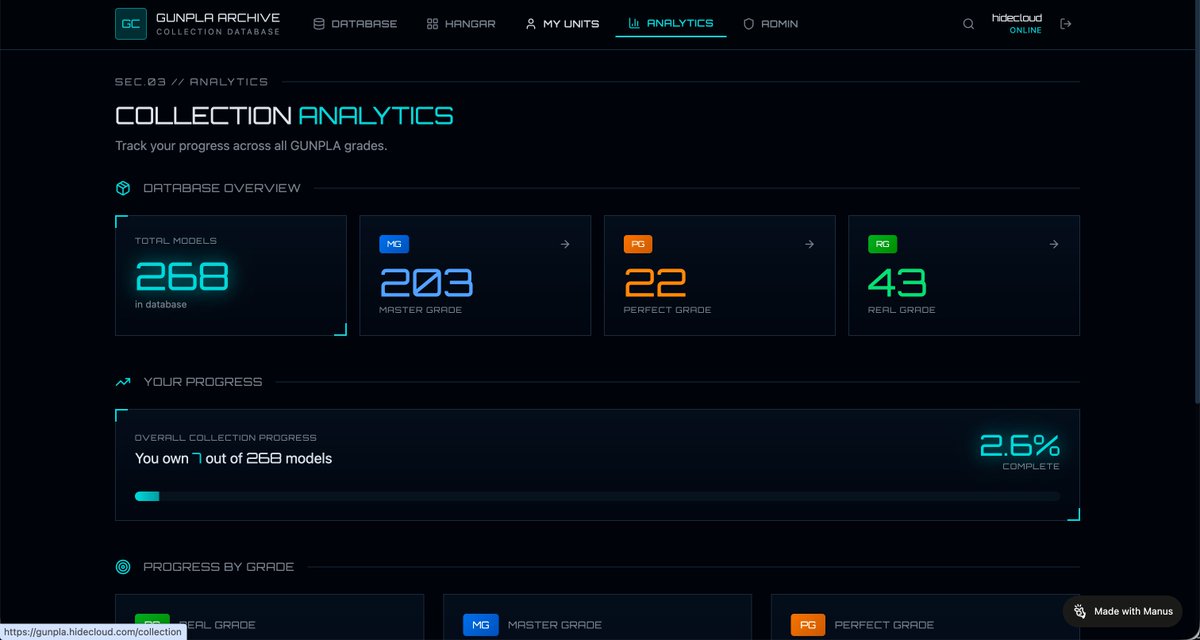

Long-time GUNPLA fan here. Built a GUNPLA database web app with Manus to track my collection — open to everyone. Have fun if you like GUNPLA https://t.co/ZvjXmxhCm8 https://t.co/uKQsd8RMAo

In all the Rob Reiner tributes I haven't seen my favourite bit, which is this short scene from Spinal Tap. Watching the band try to stifle their laughter always cracks me up https://t.co/XB6RFIfF69

Scott Jennings: "If I can’t trust you not to put a boy in a teenage girl’s locker room, how will I ever listen to your plan for taxes and the economy? ... I will not, because I’ve already concluded you’re a lunatic." https://t.co/r51qoPVV7n

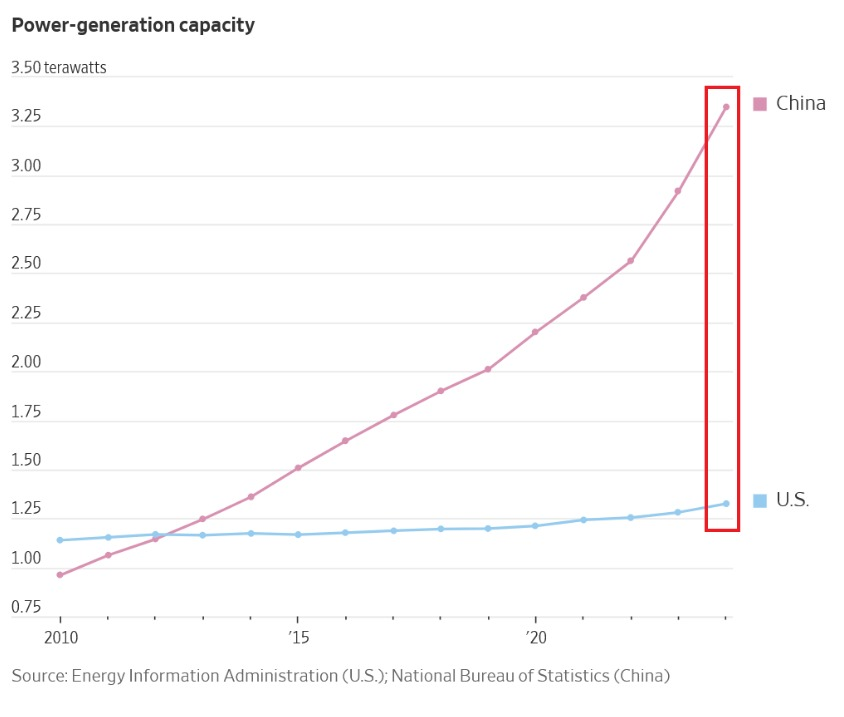

China is dominating the worldwide race for power: China now has a record 3.75 terawatts of power generation capacity. That capacity has doubled over the last 8 years. This is nearly 3 TIMES more than the US, which has ~1.30 terawatts of capacity. Furthermore, China has 34 nuclear reactors under construction, more than the next 9 countries combined. Nearly 200 other reactors are planned or proposed. At the same time, there are currently no large commercial nuclear reactors under construction in the US. The US must act now to keep up with China.
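The post's two headline numbers can be checked with quick arithmetic: the capacity ratio, and the average annual growth rate implied by doubling in 8 years (assuming steady compound growth, which the post does not state).

$$
\frac{3.75\ \text{TW}}{1.30\ \text{TW}} \approx 2.88,
\qquad
(1 + r)^{8} = 2 \;\Rightarrow\; r = 2^{1/8} - 1 \approx 0.091
$$

So "nearly 3 times" holds, and doubling over 8 years corresponds to roughly 9% growth per year.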

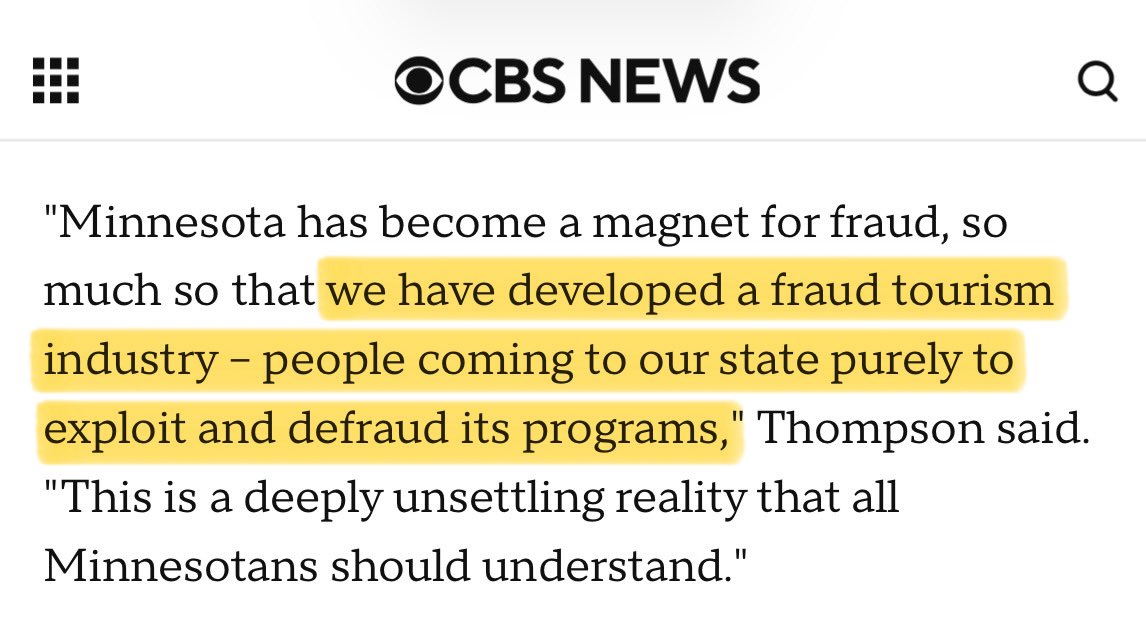

This is insane… US Attorney's Office of Minnesota now thinks that tens of billions have been stolen: "The magnitude can’t be overstated. What we see in Minnesota is not a few bad actors committing crimes. This is industrial-scale fraud. More than half of these programs." https://t.co/gqo0G9Eyda

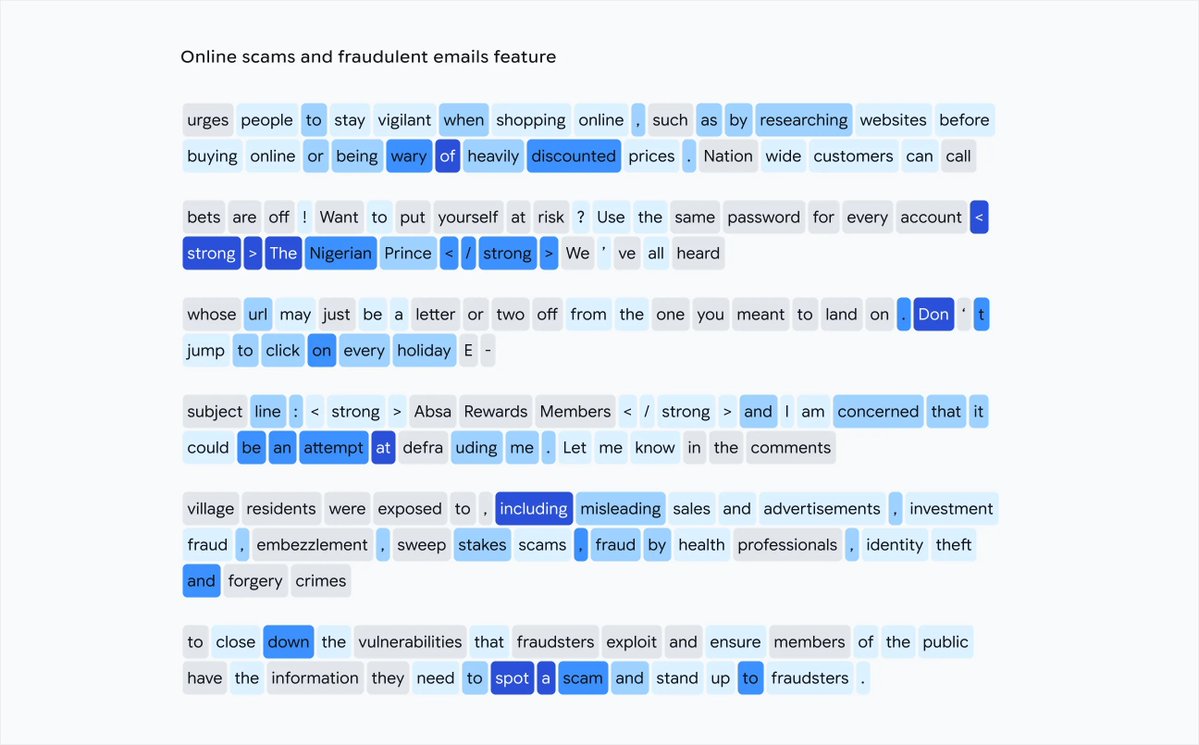

Holy shit is Meta evil lmao ***10%*** of Meta's revenue is from ACTUAL SCAMS that they KNOW ARE SCAMS When Zuck found out, he shut down... the ANTI-scam team Imagine trusting this man - or any of these cartoon villains - with Actual Fucking Superintelligence https://t.co/Ec8HALD4kl

Australian PM announces massive gun grab program following t*rrorist attack in Bondi Beach They imported radical islamists and now they’re further disarming their citizens. Defenseless victims waited nearly 20 minutes for police to respond to the attack. https://t.co/ZLWXjOCrNv

WOW. Students at Ross S. Sterling High School (@GCCISD) are PROTESTING against their school district, demanding JUSTICE after 16-year-old Andrew Meismer was stabbed to death during class. Students claim that Aundre Matthews, who was charged with Andrew's m*rder, had a history of disciplinary action which the school repeatedly ignored. They say Andrew's m*rder was PREVENTABLE.

early morning just got a little better - nice meeting you, @MichaelRapaport! https://t.co/n8sbXIrtI8

#NationalElephantDay & I hate these random “National” days but I rock with the Elephants. 🐘🐘🐘🐘🐘🐘🐘🐘🐘🐘🐘🐘 https://t.co/9GOsrt8J5g

Sometimes Nature is all you need. ~ Shikoba https://t.co/bQtI7ys67X

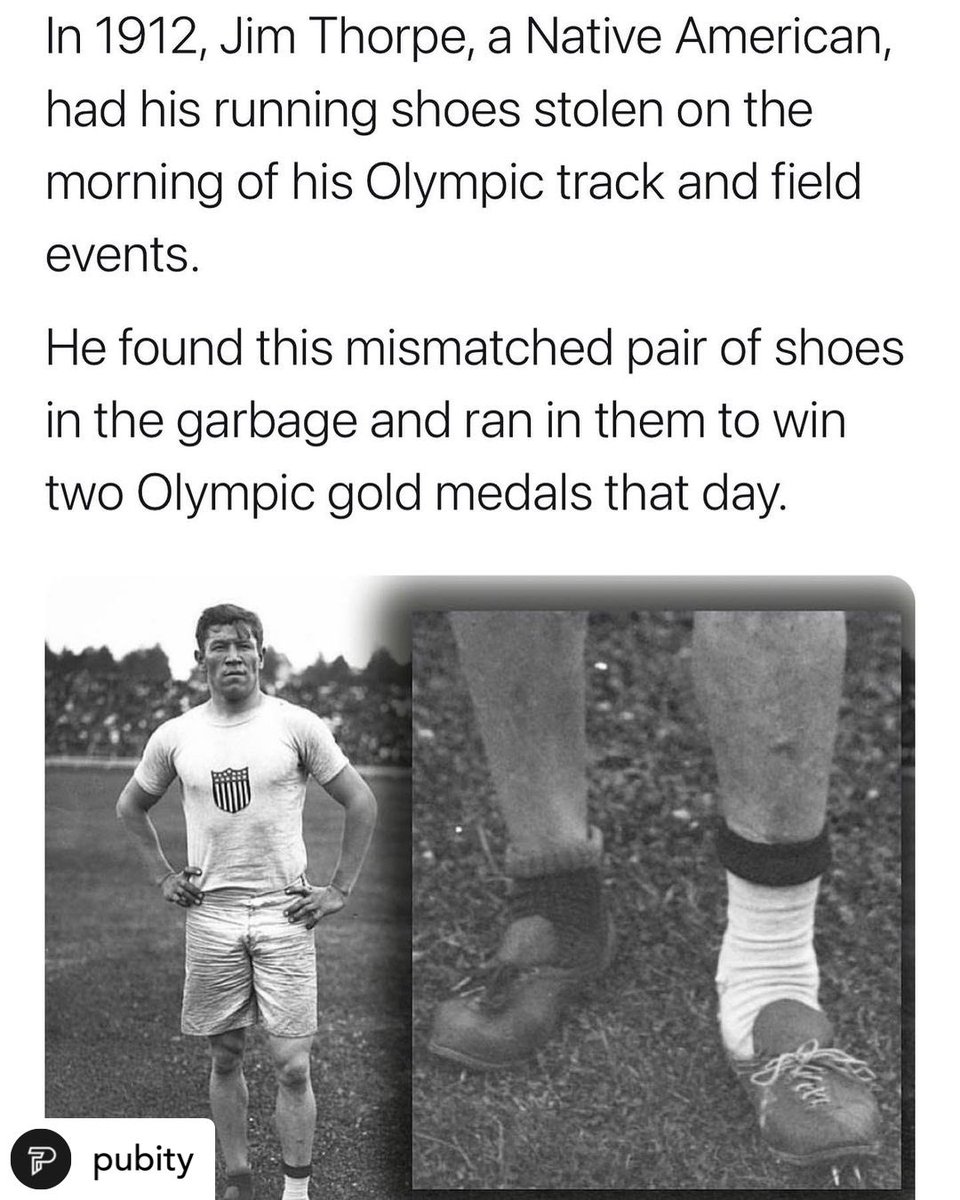

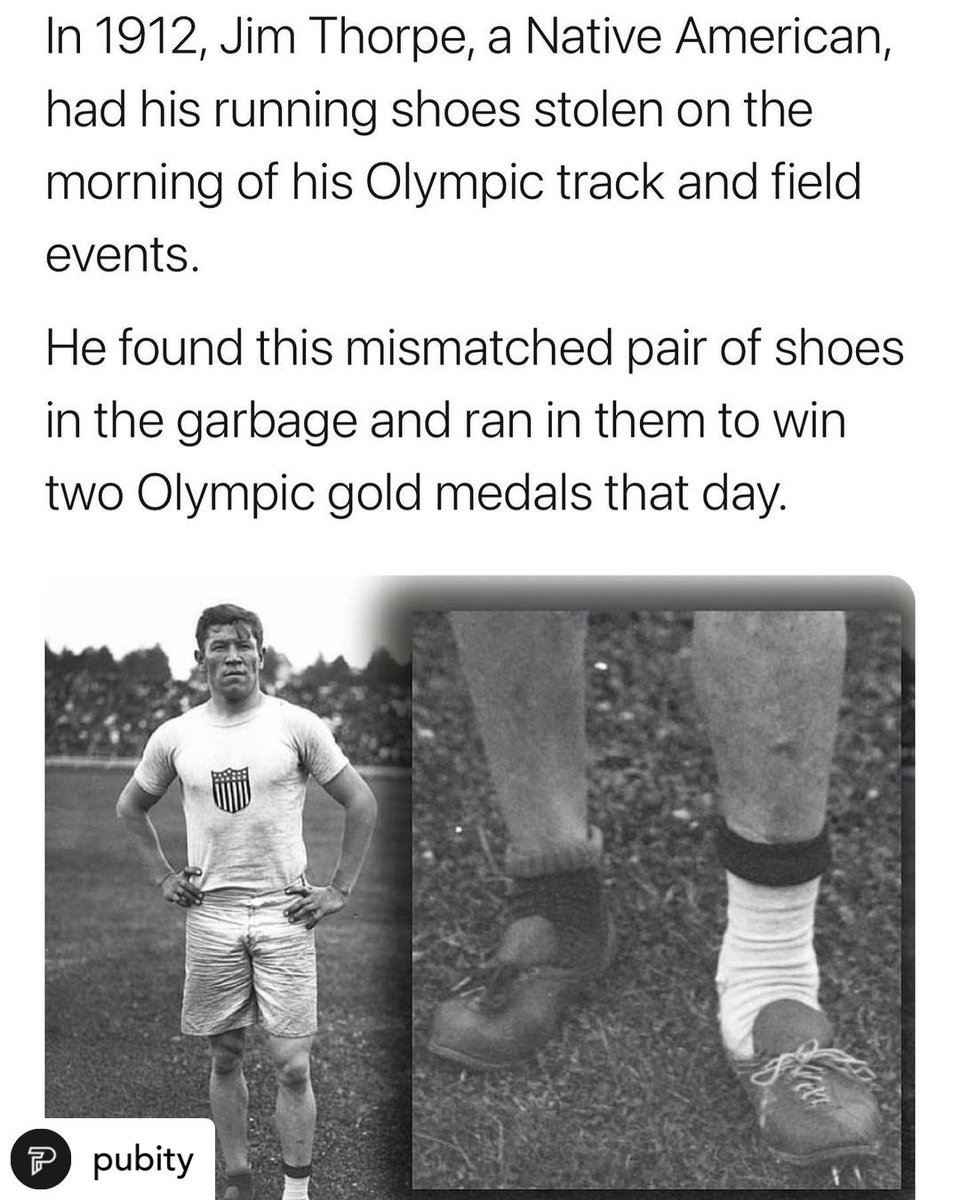

What you come from doesn’t matter. It’s never mattered. Most of our greatest heroes came from lack. If you have a dream in your heart & faith in your soul, you do the work against all obstacles. You can do it all. Happy Olympics, everyone #JimThorpe #nativepride #nativesinsports https://t.co/R7q4ssiqKj

“Respect all living things, and never take what you cannot give back, or destroy what you cannot create.” ~ B. McGill, https://t.co/byvyL3ua66

Native is for Fridays... so enjoy as we continue to cover you with too many assurances. Happy Friday. https://t.co/qz9lUSI3Dw

“Those who find beauty in all of nature will find themselves at one with the secrets of life itself.” ~ L. Wolfe Gilbert https://t.co/SaCQIW3O8v

Introducing NitroGen, an open-source foundation model trained to play 1000+ games: RPG, platformer, battle royale, racing, 2D, 3D, you name it!
We are on a quest for general-purpose embodied agents that master not only real-world physics, but also all possible physics across a multiverse of simulations. We found that our GR00T N1.5 architecture, originally designed for robotics, can be adapted easily to play lots of games with wildly different mechanics.
Our recipe is simple and bitter lesson-pilled: (1) a 40K+ hour, high-quality dataset of public in-the-wild gameplay; (2) a highly capable foundation model for continuous motor control; (3) a Gym API that wraps any game binary to run rollouts.
Our data curation is a lot of fun: it turns out that gamers love to show off their skills by overlaying real-time gamepad control on a video stream. So we train a segmentation model to detect and extract those gamepad displays and turn them into expert actions. We then mask out that region to prevent the model from exploiting a shortcut. During training, a variant of GR00T N1.5 learns to map from 40K hours of pixels to actions through diffusion transformers.
NitroGen is only the beginning, and there's a long way to hill-climb on the capability. We intentionally focus only on the System 1 side: the "gamer instinct" of fast motor control. We open-source *everything* for you to tinker with: pretrained model weights, the entire action dataset, code, and a whitepaper with solid details.
Today, robotics is a superset of hard AI problems. Tomorrow, it might become a subset, a dot in the much larger latent space of embodied AGI. Then you just prompt and "ask for" a robot controller. That might be the end game (pun intended).
NitroGen is co-led by our brilliant minds: Loic Magne, Anas Awadalla, Guanzhi Wang. It's a multi-institutional collaboration. Check out Guanzhi's technical deep dive thread and repo links below!
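The masking step described in this post, hiding the on-screen gamepad overlay after its contents have been turned into action labels, amounts to overwriting that region of every frame. This is a minimal sketch, assuming the detector has already produced a rectangular bounding box (the post describes a segmentation model, which would give a per-pixel mask instead) and that frames are plain grayscale grids; `mask_region` is a hypothetical helper, not NitroGen's code.

```python
Frame = list[list[int]]  # grayscale frame, row-major


def mask_region(frame: Frame, box: tuple[int, int, int, int], fill: int = 0) -> Frame:
    """Return a copy of `frame` with the box (top, left, bottom, right)
    overwritten by `fill`. Bottom and right are exclusive; the box is
    clamped to the frame so out-of-range coordinates are safe."""
    top, left, bottom, right = box
    masked = [row[:] for row in frame]  # copy so the source frame is untouched
    for y in range(max(top, 0), min(bottom, len(frame))):
        for x in range(max(left, 0), min(right, len(frame[y]))):
            masked[y][x] = fill
    return masked


if __name__ == "__main__":
    frame = [[9] * 4 for _ in range(3)]
    # Pretend the overlay occupies rows 1-2, columns 2-3.
    for row in mask_region(frame, (1, 2, 3, 4)):
        print(row)
    # [9, 9, 9, 9]
    # [9, 9, 0, 0]
    # [9, 9, 0, 0]
```

Masking rather than cropping keeps every frame the same size, so the model's input shape does not depend on where a streamer placed the overlay.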

Introducing NitroGen, an open foundation model for generalist gaming agents! https://t.co/TEv0G8QqaV
✅ Open access to the largest action-labeled gameplay videos with 40K hours across 1,000+ game titles
✅ Universal simulator for measuring cross-game generalization
✅ Open gaming foundation model trained to play 1,000+ games with large-scale behavior cloning 🧵

Website: https://t.co/SgkyODmmzR
Paper: https://t.co/RYCZqku6iM
Code: https://t.co/BWRwakEhkW
Pretrained model weights: https://t.co/Ab0rBf4wHc
Action dataset: https://t.co/ZoLHrpaCjY
Deep dive from @guanzhi_wang https://t.co/a1VyQEMjxd

Do you want to run coding agents safely, without damaging your filesystem? 📁 Last week, we published a blog post and a demo showing exactly how to do this with @claudeai and AgentFS by @tursodatabase. After strong community interest, we’ve now shipped support for @OpenAI Codex as well 🚢
How it works:
💻 Launch the filesystem MCP server
🆕 Open a new demo session
🚀 Start coding with Codex
Supporting Codex unlocks a big advantage: developers can use any OpenAI-compatible provider, including @ollama and the @huggingface Inference API. This means more flexibility and safer experimentation, all without compromising your local environment. Let us know what you build with it!
👩‍💻 Find the code on GitHub: https://t.co/lCeaFCYHve
📚 Read the blog: https://t.co/IiCW8Bo0NZ
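The underlying goal, letting an agent edit files without touching your real project, can be illustrated with a much simpler technique than the one in this post: run the agent against a throwaway copy and review the diff afterwards. This sketch swaps in plain directory copying from the Python standard library; it is not how AgentFS or the filesystem MCP server work, and `run_in_scratch_copy` is a hypothetical helper.

```python
import filecmp
import shutil
import tempfile
from pathlib import Path
from typing import Callable


def run_in_scratch_copy(project: Path, agent: Callable[[Path], None]) -> list[str]:
    """Run `agent` against a throwaway copy of `project`.

    Returns the relative paths the agent added, modified, or deleted;
    `project` itself is never written to. Generic isolation pattern only.
    """
    with tempfile.TemporaryDirectory() as tmp:
        scratch = Path(tmp) / "workspace"
        shutil.copytree(project, scratch)  # the agent only ever sees this copy
        agent(scratch)

        before = {p.relative_to(project) for p in project.rglob("*") if p.is_file()}
        after = {p.relative_to(scratch) for p in scratch.rglob("*") if p.is_file()}
        # Files present in both trees whose contents now differ.
        modified = {
            rel for rel in before & after
            if not filecmp.cmp(project / rel, scratch / rel, shallow=False)
        }
        changed = (after - before) | (before - after) | modified
        return sorted(rel.as_posix() for rel in changed)
```

Unlike a real sandbox, this does not stop a misbehaving agent from writing outside the copy; it only keeps well-behaved edits reviewable before you apply them.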