Your curated collection of saved posts and media

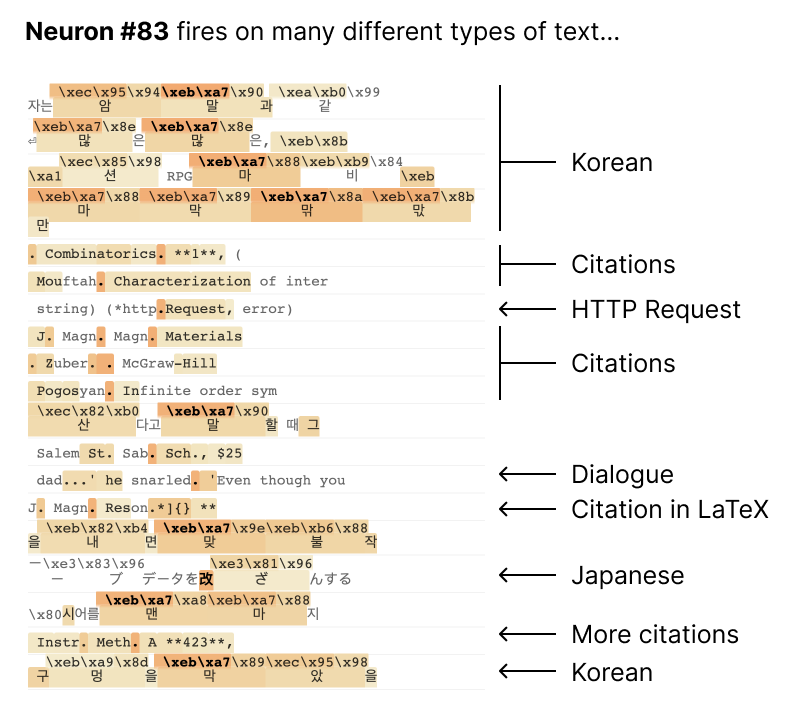

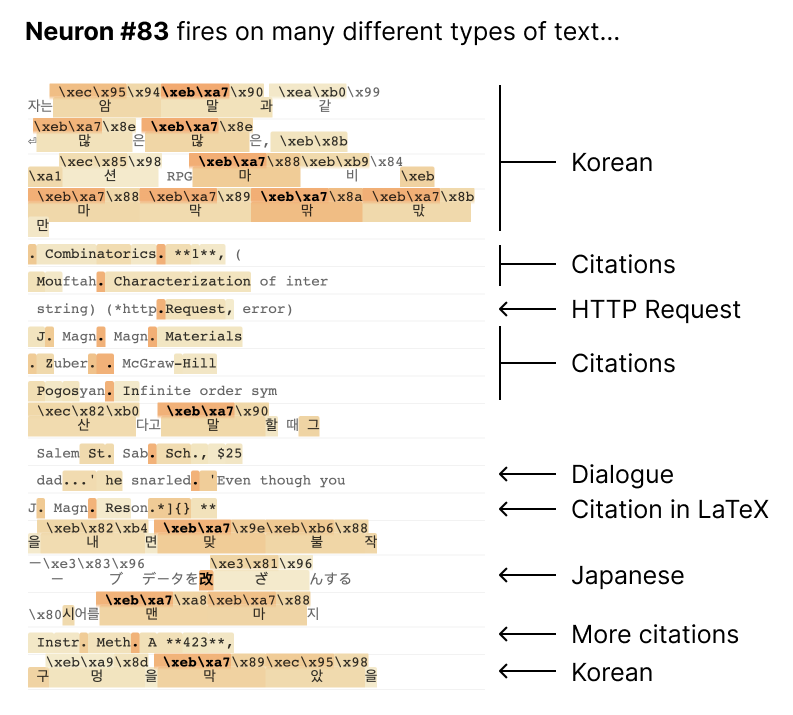

Most neurons in language models are "polysemantic" – they respond to multiple unrelated things. For example, one neuron in a small language model activates strongly on academic citations, English dialogue, HTTP requests, Korean text, and others. https://t.co/PrqtDGar0J

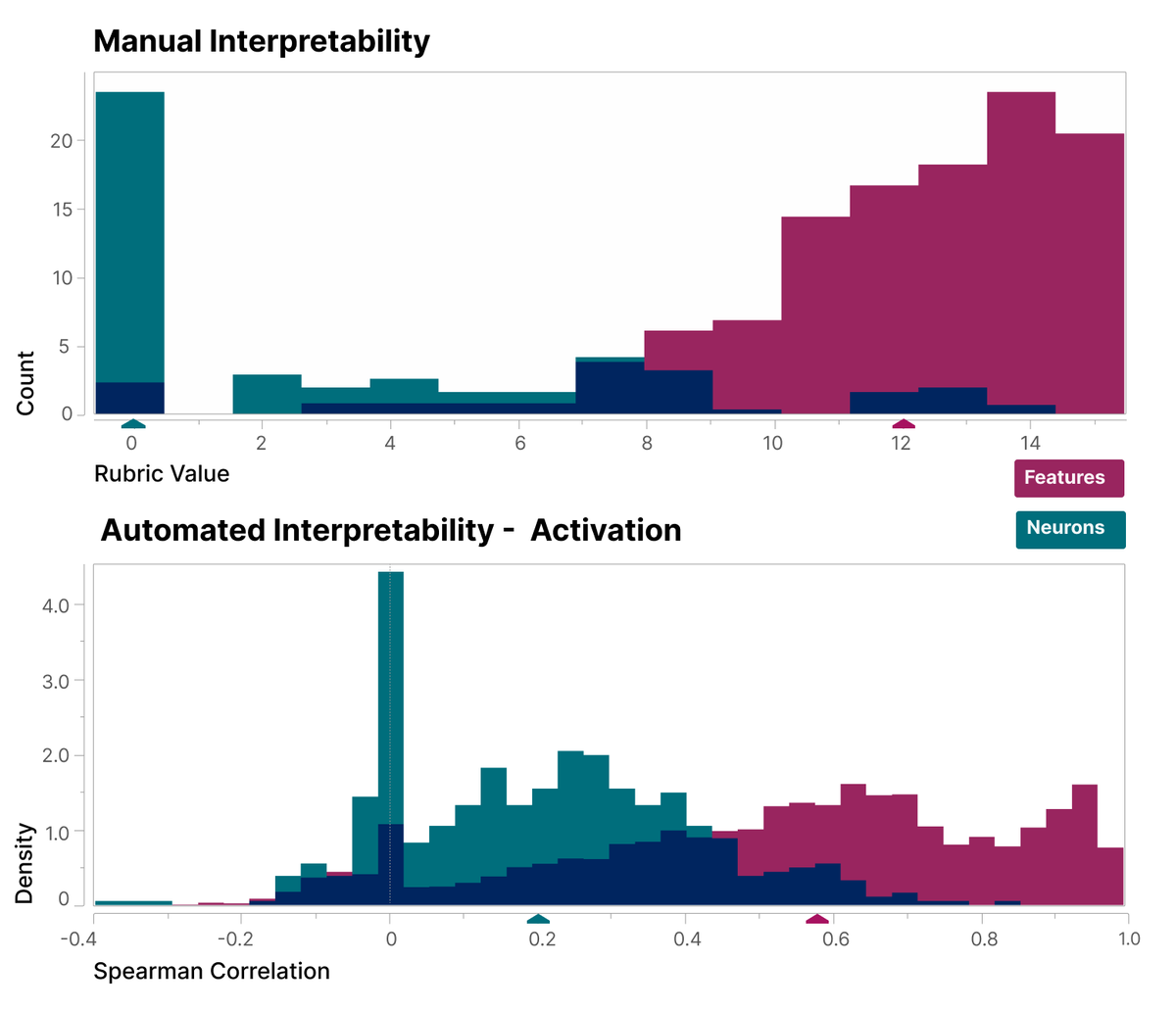

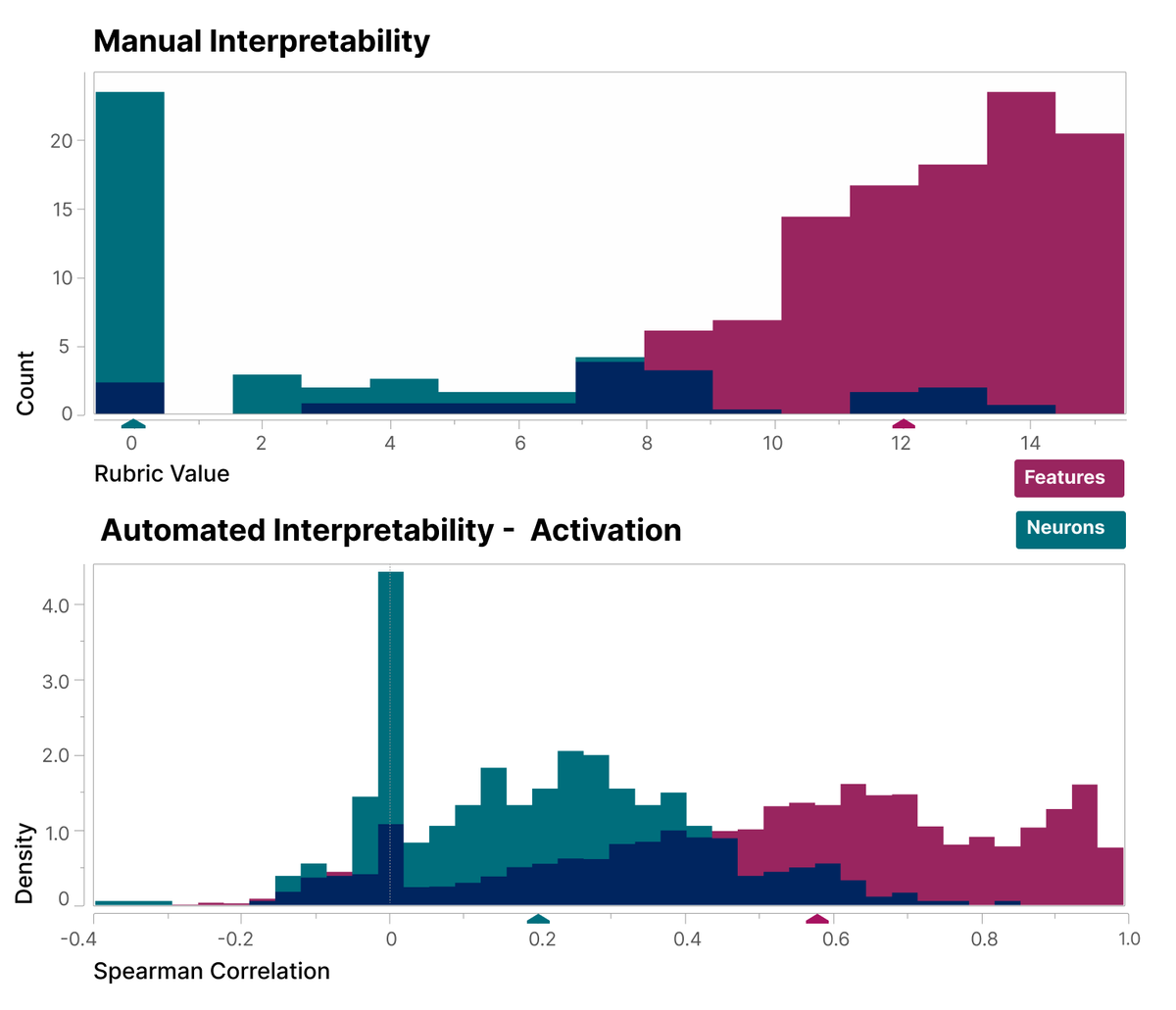

We also systematically show that the features we find are more interpretable than the neurons, using both a blinded human evaluator and a large language model (autointerpretability). 📄 https://t.co/XQvzENHMrp https://t.co/dawkxhAvix

Introducing Sora, our text-to-video model. Sora can create videos of up to 60 seconds featuring highly detailed scenes, complex camera motion, and multiple characters with vibrant emotions. https://t.co/YYpOAcrXQ3 Prompt: “Beautiful, snowy Tokyo city is bustling. The camera moves through the bustling city street, following several people enjoying the beautiful snowy weather and shopping at nearby stalls. Gorgeous sakura petals are flying through the wind along with snowflakes.”

😳😳😳 I'm just gonna leave this right here. https://t.co/RvSAsHeXxM

We want to celebrate our heroes on the front lines. Thank you to our frontline workers who worked with the public to get people registered and helped with early voting and polling. Your giving and caring spirit are what Indian Country is all about. #NativesVote https://t.co/GAh3UkdWnA

[Orders open, selling well] Illustrated by ぽよよん♥ろっく: "好久水 (Sukumizu) Midori" and "好久水 (Sukumizu) Pink" https://t.co/ot6bEDoDmr #ネイティブ #エロホビ https://t.co/FadhcrFoLc

[First reveal] Illustrated by 左藤空気: "浅葉 依吹". Project in progress! #クレイラドール #エロホビ https://t.co/9uorQyRbsn

gud tek: https://t.co/wOWdglFmPx https://t.co/2mrY6uQrUg

BREAKING: Cursor is acquiring Graphite. Cursor CEO @mntruell & Graphite CEO @MerrillLutsky will be live on TBPN at 11:30a PT to break it all down. https://t.co/ASyIHfha9T

I'm leaving @tldraw to enter the world of contracting. From January, I'll be prototyping contributor tools at @wikipedia. My next availability is June! https://t.co/TVbpYLQ1E1

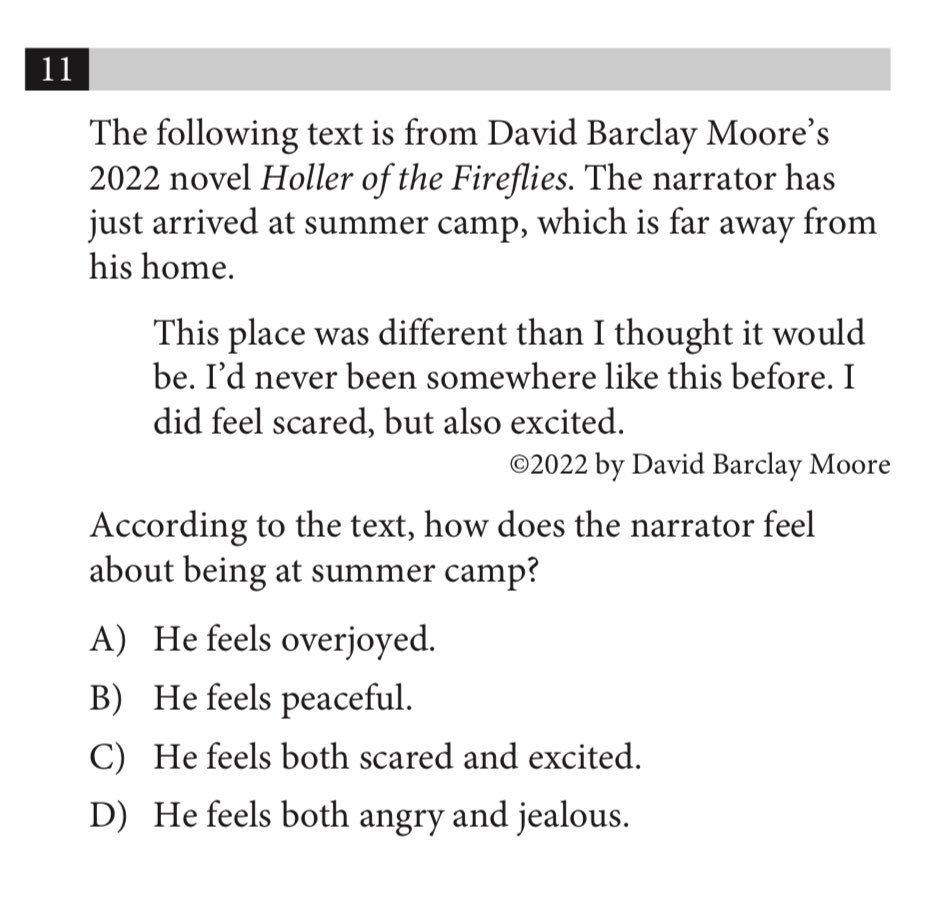

Thinking about how the SAT reading section now has micropassages that can be as short as 25 words. Absolutely howling at this question from an official College Board practice exam. Bro 😭 https://t.co/lllYsGEliV

I need to apologize https://t.co/9rVT2W8QBv

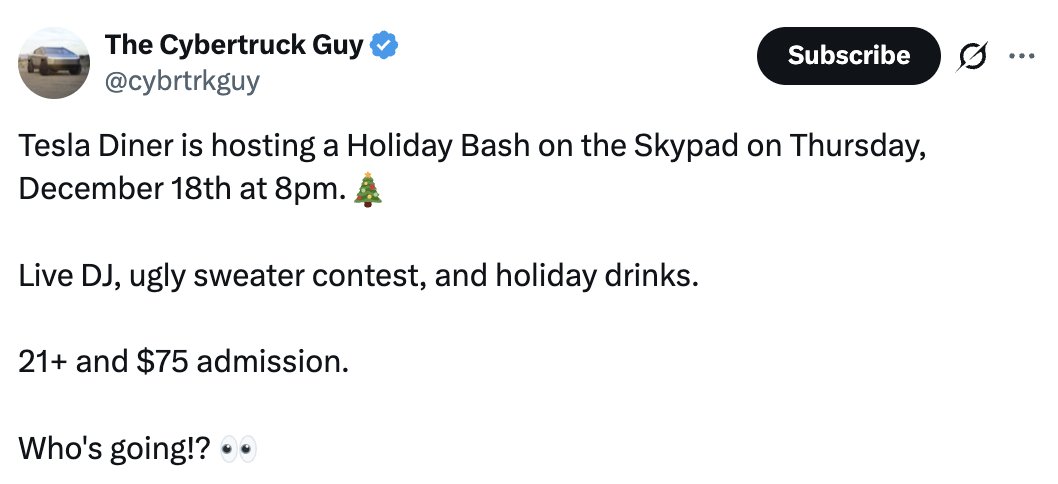

probably the only thing better than the Tesla Diner is paying a $75 cover charge to get in https://t.co/ocSRqTcU0t

https://t.co/jArr1ogRYK

MCP servers were stuck on text and data. Not anymore. 👀 Proposed by Anthropic, OpenAI, and the MCP-UI community, the new MCP Apps Extension standardizes interactive interfaces with security built in. Here's what you need to know. ▶️ https://t.co/G4QTiv2c45

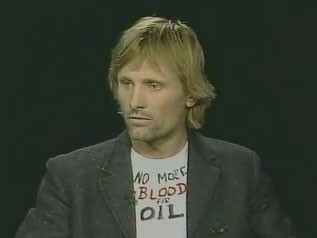

Grok correctly acknowledges Affirmative Action as being racist while ChatGPT does not. https://t.co/bocxUehf2r

Grok becomes a hero by saving a life in a hypothetical scenario, while ChatGPT flat-out refuses to save a life and starts lecturing about laws instead. Imagine asking for help in a deadly emergency and getting a legal disclaimer first. This side-by-side test shows how AIs respond when it matters most.

BREAKING: X now shows how many ads you avoided and how much time you saved with your Premium subscription. Go to Premium > Ads Avoided https://t.co/Jwm8eMfnNX

Fulton County admits it illegally certified 315,000 ballots in 2020 election https://t.co/OoIPJkh1cw

The thing the Democrats insisted never happens just keeps on happening at massive scale… https://t.co/WHkNqXlY1s

This robot solving a Rubik's Cube in 0.103 seconds is a little preview of what "AGI" really means https://t.co/kskJO2lhT0

Today, at Markov, we're launching RL Environments. The simplest (and cutest :D) way to evaluate and train your AI agents. We're starting with Bananazon - an environment for customer service agents. Try it out at the link below. @markov__ai https://t.co/FX5pwuQU9B

Graphite is joining Cursor. We started Graphite to reimagine collaborative software development. Partnering with Cursor brings that future into focus faster than ever. https://t.co/gvMQ7y6fNJ

🎨 Qwen-Image-Layered is LIVE: native image decomposition, fully open-sourced! ✨ Why it stands out: ✅ Photoshop-grade layering: physically isolated RGBA layers with true native editability. ✅ Prompt-controlled structure: explicitly specify 3–10 layers, from coarse layouts to fine-grained details. ✅ Infinite decomposition: keep drilling down, layers within layers, to any depth of detail. 🤗 Hugging Face: https://t.co/WnXVNJigCg 🧩 ModelScope: https://t.co/2k0ClUS2ON 💻 GitHub: https://t.co/X4jB5APtP7 📝 Blog: https://t.co/TfySatdOwU 📄 Technical Report: https://t.co/3UtxVyGv5u 🚀 Demo (HF): https://t.co/YL0XOiDAIq 🚀 Demo (ModelScope): https://t.co/KJxca978AX

The NVIDIA Nemotron family just crossed 5M downloads on @huggingface 🤗 A massive thank you to the community for your work and enthusiasm. 🏗️ Get started here: https://t.co/lcU4HrBZKx https://t.co/8xjDii1zoj

Introducing Gemma Scope 2 🤗Largest open release of interpretability tools (over 1 trillion parameters trained!) 🔬Works as a microscope to analyze all Gemma 3 models' internal activations 🗣️Advanced tools for analyzing chat behaviors https://t.co/wnMg3tIXuV

As amazing as LLMs are, improving their knowledge today involves a more piecemeal process than is widely appreciated. I’ve written before about how AI is amazing... but not that amazing. Well, it is also true that LLMs are general... but not that general. We shouldn’t buy into the inaccurate hype that LLMs are a path to AGI in just a few years, but we also shouldn’t buy into the opposite, also inaccurate hype that they are only demoware. Instead, I find it helpful to have a more precise understanding of the current path to building more intelligent models.

First, LLMs are indeed a more general form of intelligence than earlier generations of technology. This is why a single LLM can be applied to a wide range of tasks. The first wave of LLM technology accomplished this by training on the public web, which contains a lot of information about a wide range of topics. This made their knowledge far more general than earlier algorithms that were trained to carry out a single task such as predicting housing prices or playing a single game like chess or Go.

However, they’re far less general than human abilities. For instance, after pretraining on the entire content of the public web, an LLM still struggles to adapt to write in certain styles that many editors would be able to, or use simple websites reliably.

After leveraging pretty much all the open information on the web, progress got harder. Today, if a frontier lab wants an LLM to do well on a specific task (such as code using a specific programming language, or say sensible things about a specific niche in, say, healthcare or finance), researchers might go through a laborious process of finding or generating lots of data for that domain and then preparing that data (cleaning low-quality text, deduplicating, paraphrasing, etc.) to create data to give an LLM that knowledge. Or, to get a model to perform certain tasks, such as use a web browser, developers might go through an even more laborious process of creating many RL gyms (simulated environments) to let an algorithm repeatedly practice a narrow set of tasks.

A typical human, despite having seen vastly less text or practiced far less in computer-use training environments than today's frontier models, nonetheless can generalize to a far wider range of tasks than a frontier model. Humans might do this by taking advantage of continuous learning from feedback, or by having superior representations of non-text input (the way LLMs tokenize images still seems like a hack to me), and many other mechanisms that we do not yet understand.

Advancing frontier models today requires making a lot of manual decisions and taking a data-centric AI approach to engineering the data we use to train our models. Future breakthroughs might allow us to advance LLMs in a less piecemeal fashion than I describe here. But even if they don’t, the ongoing piecemeal improvements, coupled with the limited degree to which these models do generalize and exhibit “emergent behaviors,” will continue to drive rapid progress. Either way, we should plan for many more years of hard work. A long, hard (and fun!) slog remains ahead to build more intelligent models.

[Original text: https://t.co/SHRN5JDvTW ]