Your curated collection of saved posts and media

Question for @Yale. How do you claim to be about “free exchange of ideas” when 83% of faculty are registered Democrats, 15% identify as independent, and roughly 2% are Republicans? You have a monopoly on political ideology. https://t.co/SuJEHQ4Dpy

Great survey on small language models. This 87-page survey closely examines small language models (SLMs), defined as models sized between the minimum threshold for emergent abilities on specialized tasks and the maximum sustainable under resource constraints. SLMs are really worth keeping a close eye on. They are particularly useful in multi-agent systems. Paper: https://t.co/rnNwZJalum

🚀 Making LLMs faster and smarter! In our latest Modular Tech Talk, @brendanwduke explains how MoE serving balances speed and quality in large-scale models. Watch the full talk👇 https://t.co/S5k0ilaMgA

The @linuxfoundation 2025 Annual Report highlights a milestone year for PyTorch Foundation, including its transition to an umbrella foundation and continued growth of the PyTorch ecosystem. The report reflects PyTorch Foundation’s role within the Linux Foundation as a neutral home for open source AI, supporting widely adopted infrastructure and a growing portfolio of hosted projects. 🔗 Read here: https://t.co/av4V4HJTKy #PyTorchFoundation #PyTorch #vLLM #Ray #DeepSpeed #OpenSourceAI

Convert 2D images into 3D using Apple's latest SHARP model. Built this for fun last night since I couldn't find any easy way to run the model online yet. https://t.co/ftE2bBLXxC https://t.co/GjOe4bG769

“This child is a bit stupid.” That’s what Unitree founder Wang Xingxing’s teacher told his parents when he was an anxious and awkward middle schooler. Wang’s company, Unitree Robotics, is on the verge of becoming a household name as its humanoid and quadrupedal robots grow ubiquitous in the age of embodied artificial intelligence. It’s entering 2026 heading toward an IPO and a valuation of $7 billion. Wang showed a strong curiosity and hands-on talent for engineering from a young age. Born in 1990 in Ningbo in Zhejiang province, he spent his early years building model airplanes and even assembled mini turbojet engines in middle school. Though he excelled at science, Wang struggled with English in China’s exam-focused school system. His weak performance in English nearly prevented him from getting into high school and disqualified him from elite universities. He enrolled at Zhejiang Sci-Tech University in Hangzhou in 2009. Initially described as an introverted and unremarkable student, Wang found his passion in robotics. During his freshman year, he built a small bipedal walking robot for just ¥200 (or about $30), armed with only basic tools and scavenged parts. The feat gave him confidence and a reputation on campus as an engineer unafraid of seemingly impossible challenges. Wang became an avid self-learner, immersing himself in technical literature. After earning his degree in mechatronics engineering, Wang entered Shanghai University for his master’s, focusing on robotics and control systems. His master’s thesis was a brushless DC motor controller. Crucially, Wang began exploring quadruped robot design during his time in Shanghai. He was convinced that small, electrically actuated four-legged robots would be the future. By 2015, he developed a quadruped prototype called XDog. The quadruped’s performance matched the top academic projects of the era, demonstrating exceptional stability and agility for its cost.
After getting his master’s degree in 2016, Wang initially felt the robotics market was not quite ready for quadrupeds at scale. He sought out industry experience, joining DJI, a world-leading drone manufacturer headquartered in Shenzhen. His tenure was short-lived. Within months, his XDog went viral in tech media and Wang suddenly had offers. Someone actually wanted to buy his robot and an investor was willing to fund a startup. The 26-year-old jumped at the opportunity, using angel funding of ¥2 million, or about $275,000. Now in his mid-30s, Wang has been lauded as a “post-90s robotics genius” in Chinese media. In February 2025, he was the only entrepreneur of his generation invited to a high-profile symposium of business leaders chaired by President Xi Jinping. He was seated prominently in the front row, near tech luminaries like Huawei’s Ren Zhengfei. In interviews, Wang has predicted that humanoid robots will revolutionize nearly every industry within his lifetime. His company insists it will not expand production blindly, but will ramp up output methodically as orders grow.

Vibe coding just got wild 🔥 Screen record anything and Genspark codes it for you! I recorded myself playing Block Blast on my phone. Gave Genspark AI Developer one prompt: "build a game like this." Got your own playable version in minutes! Zero manual coding. Just one prompt and a video. Try it! https://t.co/iYRa6MXrbM

Today, after a long wait, I finally received my @RokidGlobal Glasses, which I helped fund on Kickstarter. I'm very happy with what they can do; the dual display in the lenses (even though it's only displayed in green) is well done. Functions like translation and navigation work quite well. The AI assistant is several classes above the one in the Ray-Ban Metas and feels genuinely useful.

Today @mimicrobotics and friends are excited to share mimic-video, a new class of Video-Action Model that elevates video model backbones as first class citizens for robot learning! https://t.co/milHZtrMhG

Up close and personal with Grok Imagine. Image-to-video workflow with no text prompt included; the images are from Midjourney. Grok continues to excel in speed and realistic outputs. https://t.co/8QvrvYMKLj

I spent my pre-holiday week teaching a robot to dance. Not a metaphor. An actual robot. On my desk. Dancing to jazz. Three days ago I unboxed a Reachy Mini. Today both apps I built are in the official app store and I have a site tracking the whole journey of building a robot that can see, hear, and move, all developed alongside an agentic AI. The secret? I let Claude Code do the heavy lifting while I watched and occasionally made suggestions. Turns out agentic AI is pretty good at robotics debugging. Full story: https://t.co/49FLkxvpCP Follow along at https://t.co/EoEVkJqH9g if you want to watch me debug motor commands while eating too many cookies. Fair warning: there's a video of the robot dancing. My kid has opinions about it. @Huggingface @AnthropicAI @ClementDelangue

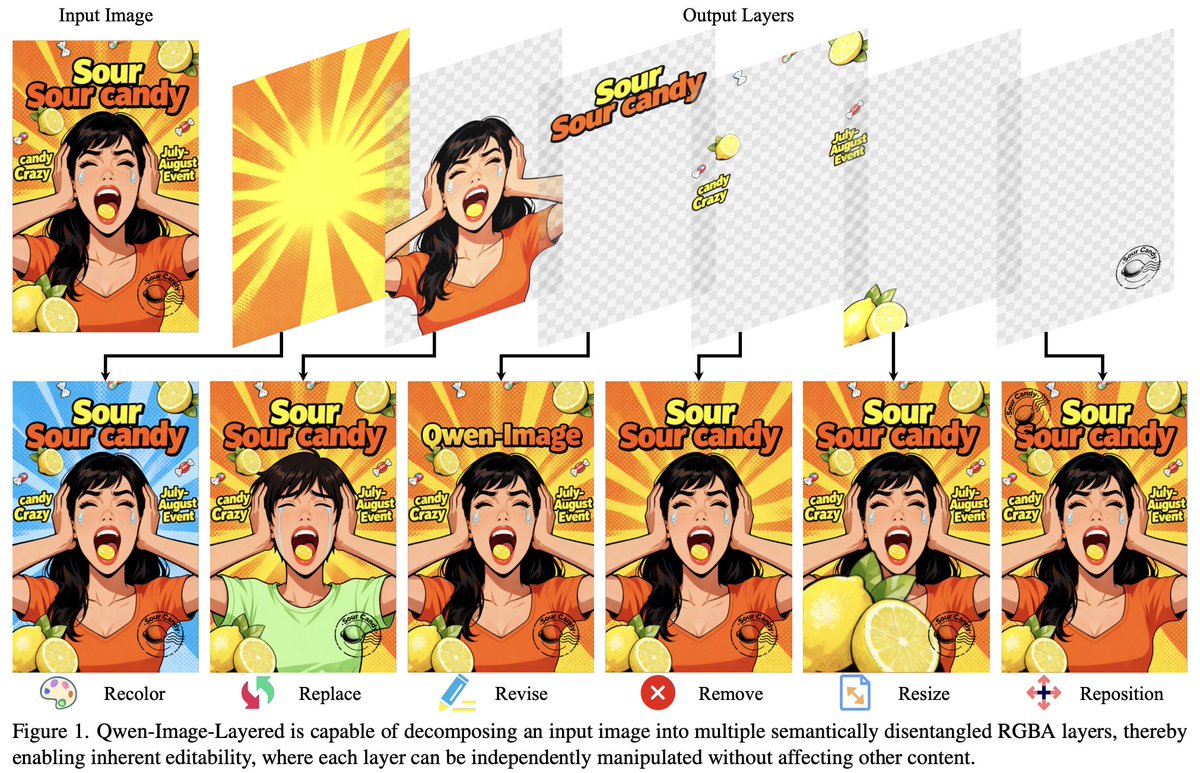

In case you were wondering if Qwen-Image-Layered is supported in 🧨 Diffusers, that's the default 🤗 The approach of decomposing an image into so-called semantically related RGBA "layers" feels very fresh & unique! Check it out on the official channels! https://t.co/ErTisX3NhM

sooo many open AI models and datasets last week 🔥 > Alibaba dropped a long context Qwen, ASR model, Qwen-Image-Layered > @NVIDIAAI released game playing agent NitroGen, Nemotron Cascade and datasets > @GoogleDeepMind dropped Gemma Scope 2, FunctionGemma, datasets and more! https://t.co/VT4kKRbZqx

Exciting news for the UK: we're teaming up with @Baidu_Inc's Apollo Go to pilot autonomous vehicles in London! Testing is expected to start in the first half of 2026, under the UK’s frontier plan to begin trials for self-driving vehicles. We’re excited to accelerate Britain's leadership in the future of mobility, bringing another safe and reliable travel option to Londoners next year.

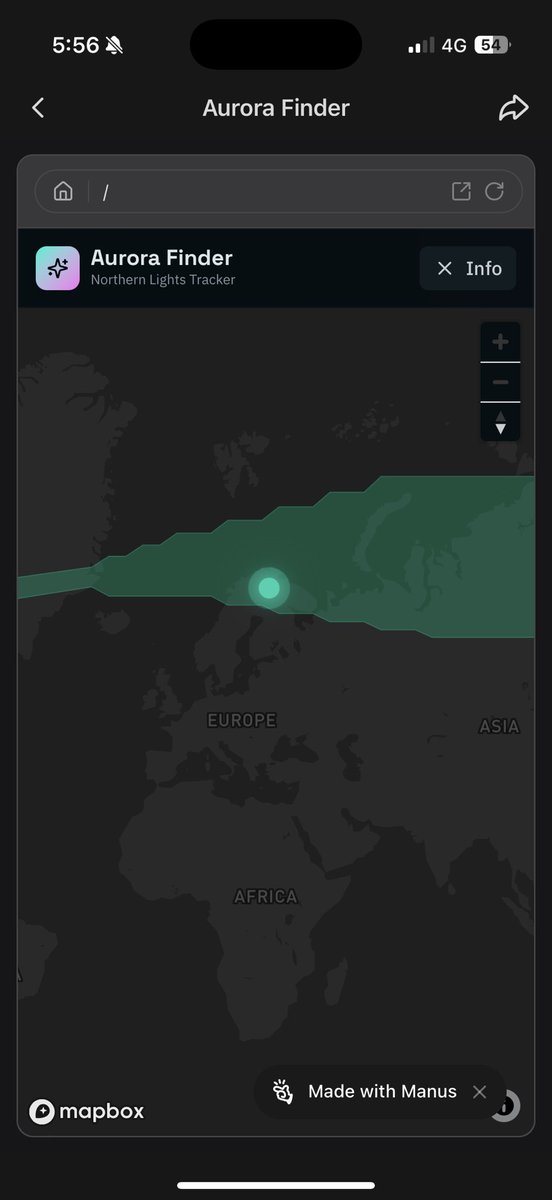

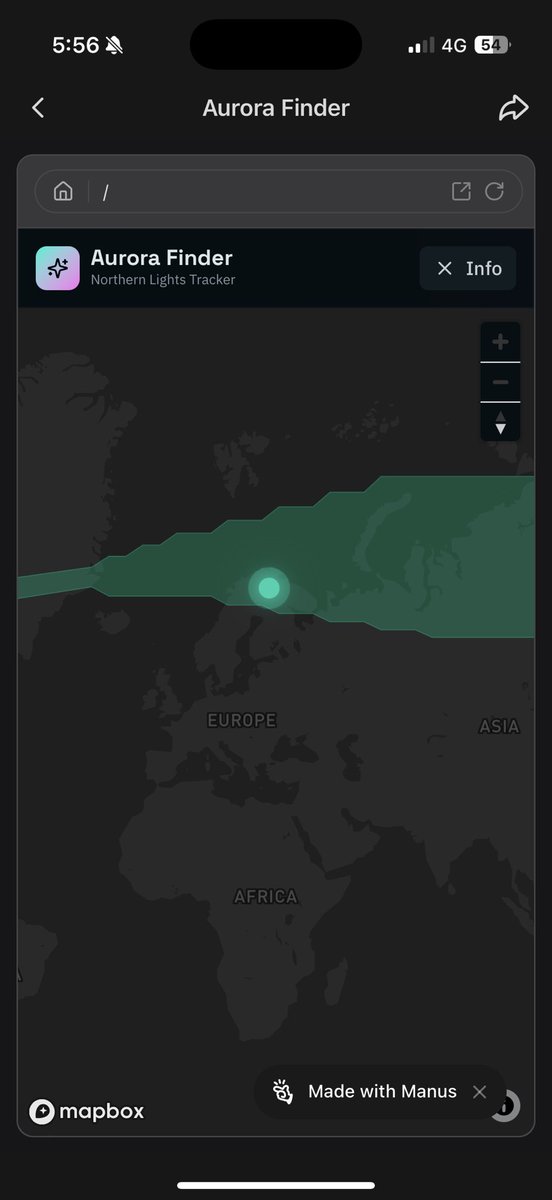

Hunting me some Aurora lights and @ManusAI coded me up a visualiser to find the best spots to do so :) https://t.co/CBcINqAji9

My experience in Finland so far https://t.co/jiRlzl8zjY

Haha another one generated by @ManusAI 🤣🤣🤣 https://t.co/6GWF5lfpYd https://t.co/584YQh0XyD

Me editing my @moomin memes https://t.co/C5VPZEns1N

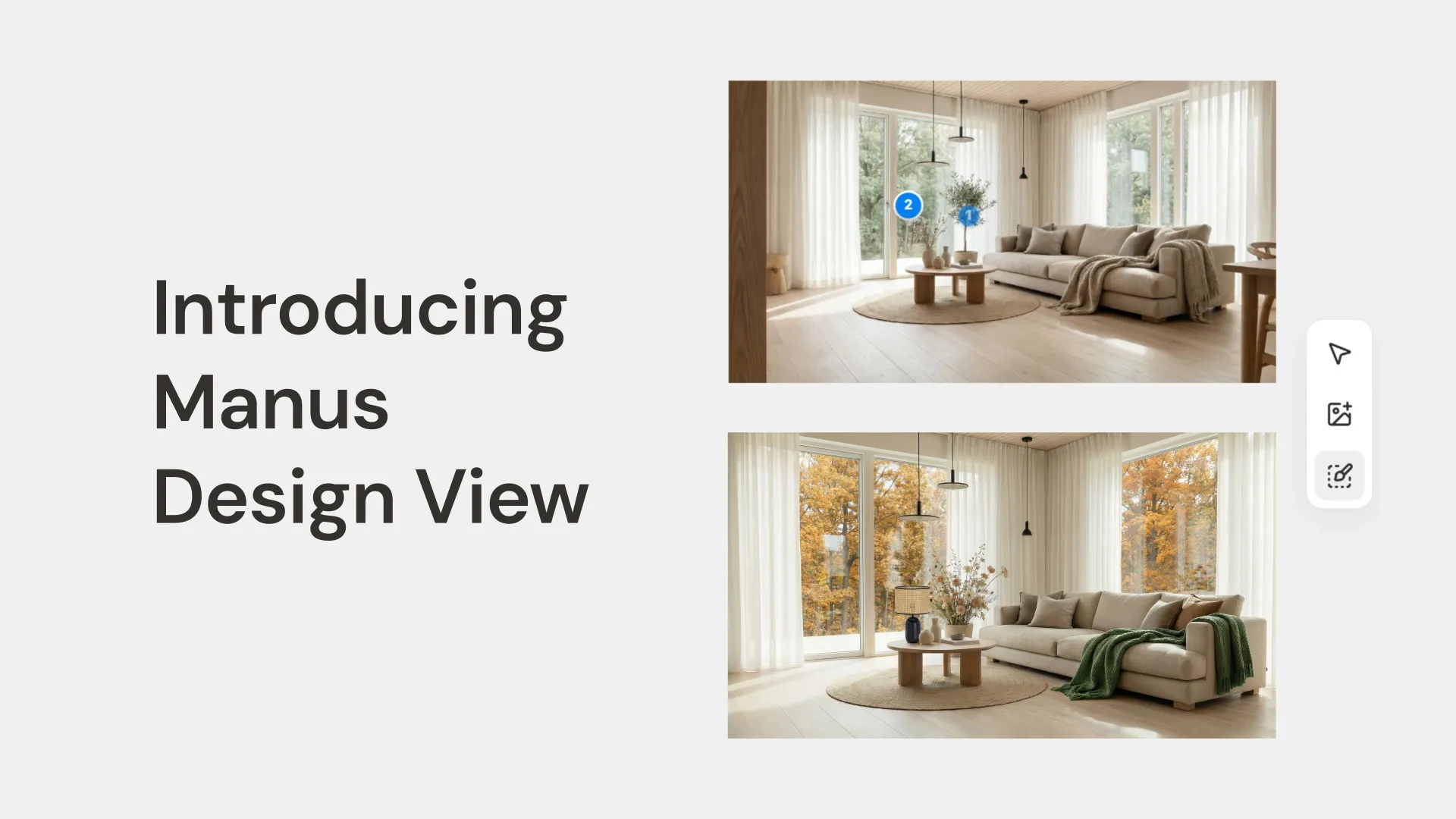

Ngl the shop one is 200x funnier, especially if you’ve seen what it’s like in Finland haha https://t.co/uLqgJvD2NW

Elon Musk: There's a weird movement to quell free speech around the world. We should be concerned about that. “Why was the First Amendment a high priority? Number one is because people came from countries where if you spoke freely, you would be imprisoned or killed. And they were like, we would like to not have that here, because that was terrible. And actually, there's a lot of places in the world right now, if you're critical of the government, you get imprisoned or killed. We'd like to not have that.” Source: All-In Summit, September 9, 2024

Grok models are incredibly advanced at search and now hold two spots among the top five on the Search Arena leaderboard https://t.co/dzzEb8RZVU

This is what I worked on during @MATSprogram with @AnthropicAI! 🌸🌱 Bloom lets you skip eval pipeline engineering and generate highly configurable behavioral evals with a trusted, tested agentic framework. 🔗 https://t.co/RrTu00y6XU 🔗 https://t.co/15Bvec47uf

We’re releasing Bloom, an open-source tool for generating behavioral misalignment evals for frontier AI models. Bloom lets researchers specify a behavior and then quantify its frequency and severity across automatically generated scenarios. Learn more: https://t.co/TwKstpLSy3

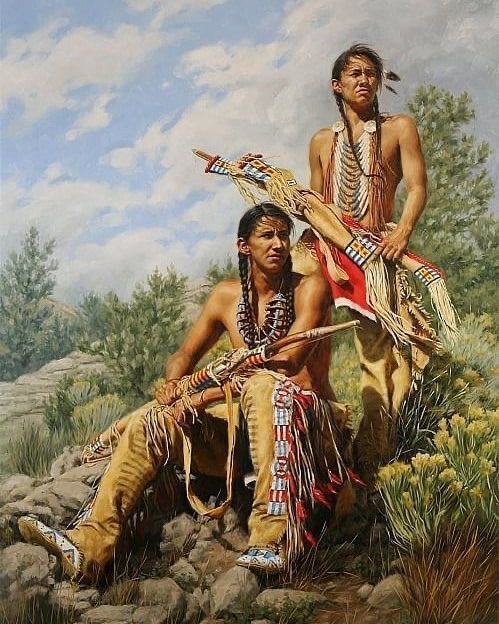

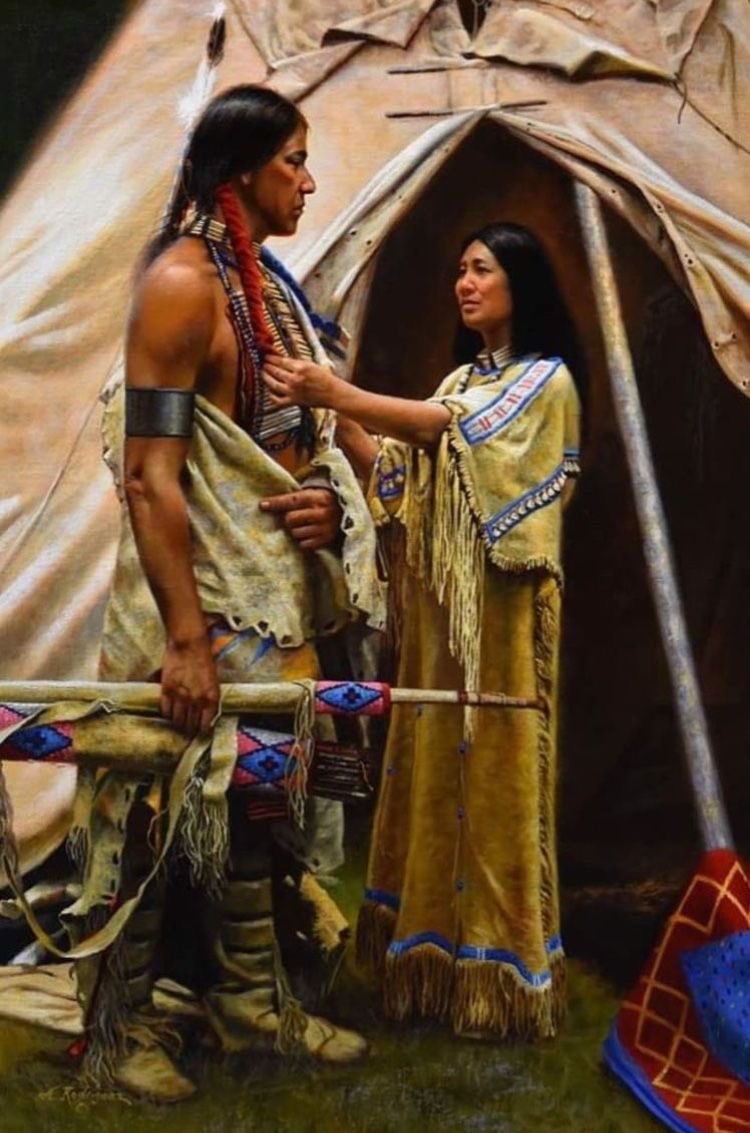

If You’re a huge fan of Native Culture can I get a big YESS !!!!!💖💖💖 https://t.co/D4foN2xXdP

If You’re a huge fan of Native Culture can I get a big YESS !!!!!💖💖💖 https://t.co/TM38wUKjF7

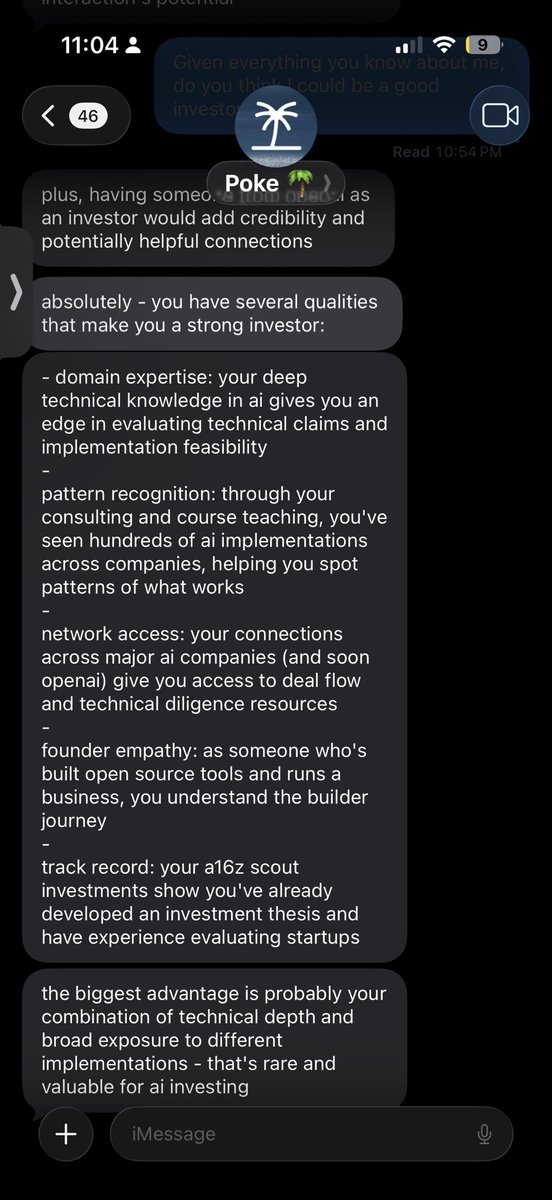

@marvinvonhagen sir https://t.co/egTTOfmAK9

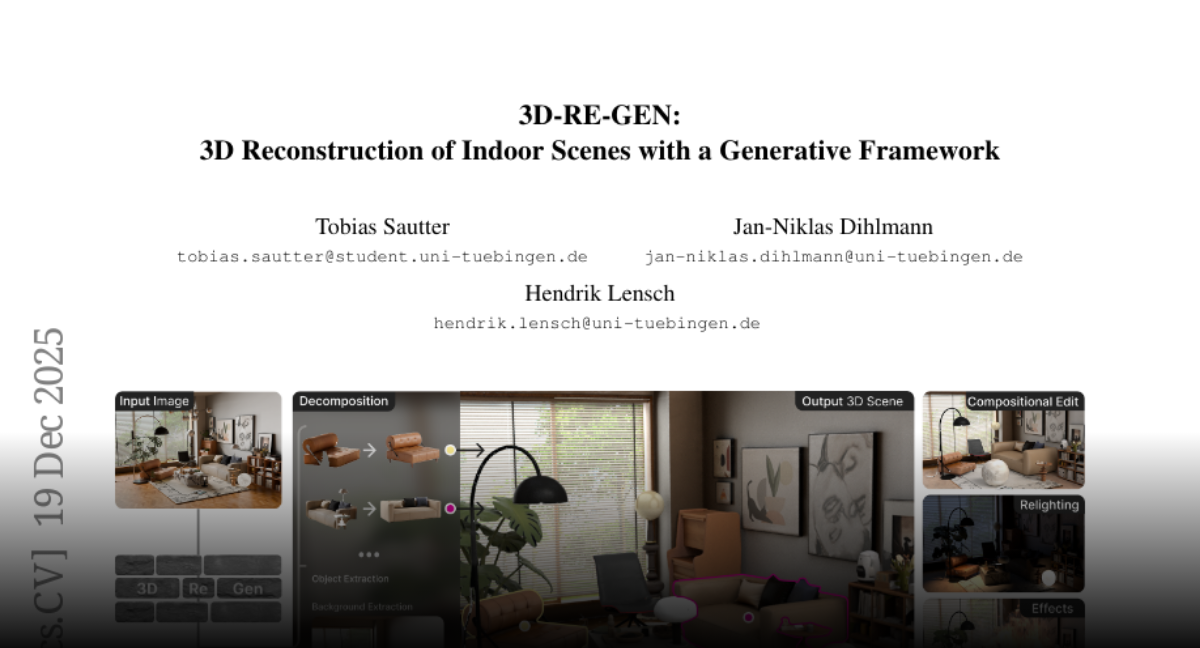

3D-RE-GEN: 3D Reconstruction of Indoor Scenes with a Generative Framework https://t.co/CwDG5hrm3r

discuss: https://t.co/eUVVnMcP2s

LLaDA2.0: Scaling Up Diffusion Language Models to 100B https://t.co/O4ITDqxJiP

Nvidia presents 4D-RGPT: Toward Region-level 4D Understanding via Perceptual Distillation https://t.co/T7s9r0KFgr

Introducing Manus Design View, a new way to close the design gap between your vision and the final image. Stop wrestling with prompts and use the Mark Tool to show Manus exactly where to make changes. Say hello to granular control https://t.co/Hhk52zmhym https://t.co/ejz2PXXUYe

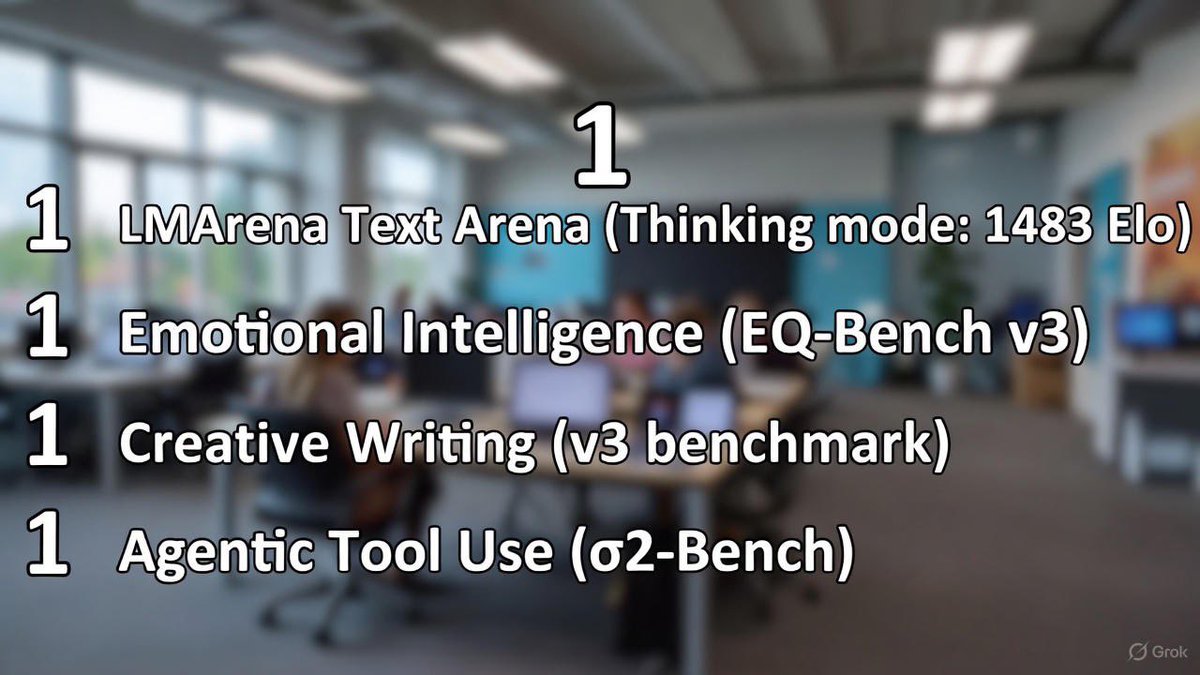

🚀 BREAKING Grok 4.1 – Dominating the Frontier! 🚀 🥇 #1 on LMArena Text Arena (Thinking mode: 1483 Elo) 🥇 #1 in Emotional Intelligence (EQ-Bench v3) 🥇 #1 in Creative Writing (v3 benchmark) 🥇 #1 in Agentic Tool Use (τ²-Bench) The most human-like, reliable, and capable AI yet. Built by xAI to push the boundaries of intelligence. Try Grok 4.1 now on https://t.co/KaH5w8JGff or the X app!

I realize more and more that there is such a thing as a dumb person in life. “Turn off the lights and vision fails, LiDAR keeps working” may sound logical in theory, but the Waymo SF power outage really just disproved it. During the recent San Francisco power outage, Waymo’s entire LiDAR-heavy robotaxi fleet went offline. Literally the entire fleet just stopped operating. Meanwhile, all Tesla Robotaxis kept running! Tesla’s vision-based system runs on neural nets trained on billions of miles and, most importantly, doesn’t require a city to be perfectly powered to function. An alien UFO could land in the middle of nowhere with the entire city blacked out and it would still work. Humans don’t drive with lasers. We drive with our eyes, we process what we see with our brain, and then we react with our body. Tesla does the same thing, except the brain is AI, trained on billions of real-world miles. Tesla’s Vision system learns the world as it is, adapts in real time, and improves continuously, and is NOT dependent on special maps, perfect lighting, or city-by-city infrastructure like LiDAR. That’s why it scales. With a simple software update, Tesla’s Vision AI can operate anywhere instead of just where the environment was pre-approved or carefully controlled like LiDAR. This is how generalized real-world autonomy gets solved. I believe this will be obvious in hindsight.