Your curated collection of saved posts and media

Nearly 30 Yale undergraduate departments have no Republican faculty, Buckley Institute report finds https://t.co/pY4f4MTsEq https://t.co/eTw5QTIWNc

xAI supports local Memphis restaurants and food vendors spending $10M in 2025 providing meals around the clock for its employees and contractors. https://t.co/Il2926AiOU

Grok Rankings Update, Dec 22
Grok Code Fast 1: The Market Dominator
The undisputed heavy-lifter of the global AI agent economy, sustaining overwhelming usage and infrastructure leadership.
#1 Positions
🥇 #1 Overall on OpenRouter Leaderboard: 518B tokens
🥇 #1 in Categories Token Share: 29.9% dominance
🥇 #1 on Kilo Code Leaderboard
🥇 #1 on BLACKBOXAI Leaderboard
🥇 #1 on Roo Code Leaderboard
🥇 #1 on Cline Leaderboard
🥇 #1 on EQ-Bench3: score 1586 (highest recorded emotional intelligence)
🥇 #1 on τ²-Bench Telecom: complex agentic tool use
🥇 #1 on Berkeley Function Calling Benchmark
🥇 #1 on FActScore: lowest error rate in class (~3%)
🥇 #1 on Alpha Arena Season 1.5: +22.38% return on U.S. stock tokens
🥇 #1 in High-Stakes Multi-Step Reasoning: only model profitable through December volatility

Imagine the outrage if any group but White people were banned. Yet discrimination against White people is not only tolerated, it’s normalized. Society treats hostility toward Whites as acceptable, while condemning the same behavior in others. This is not just unfair, it’s an active undermining of White people’s rights, opportunities, and place in society, and it must be stopped.

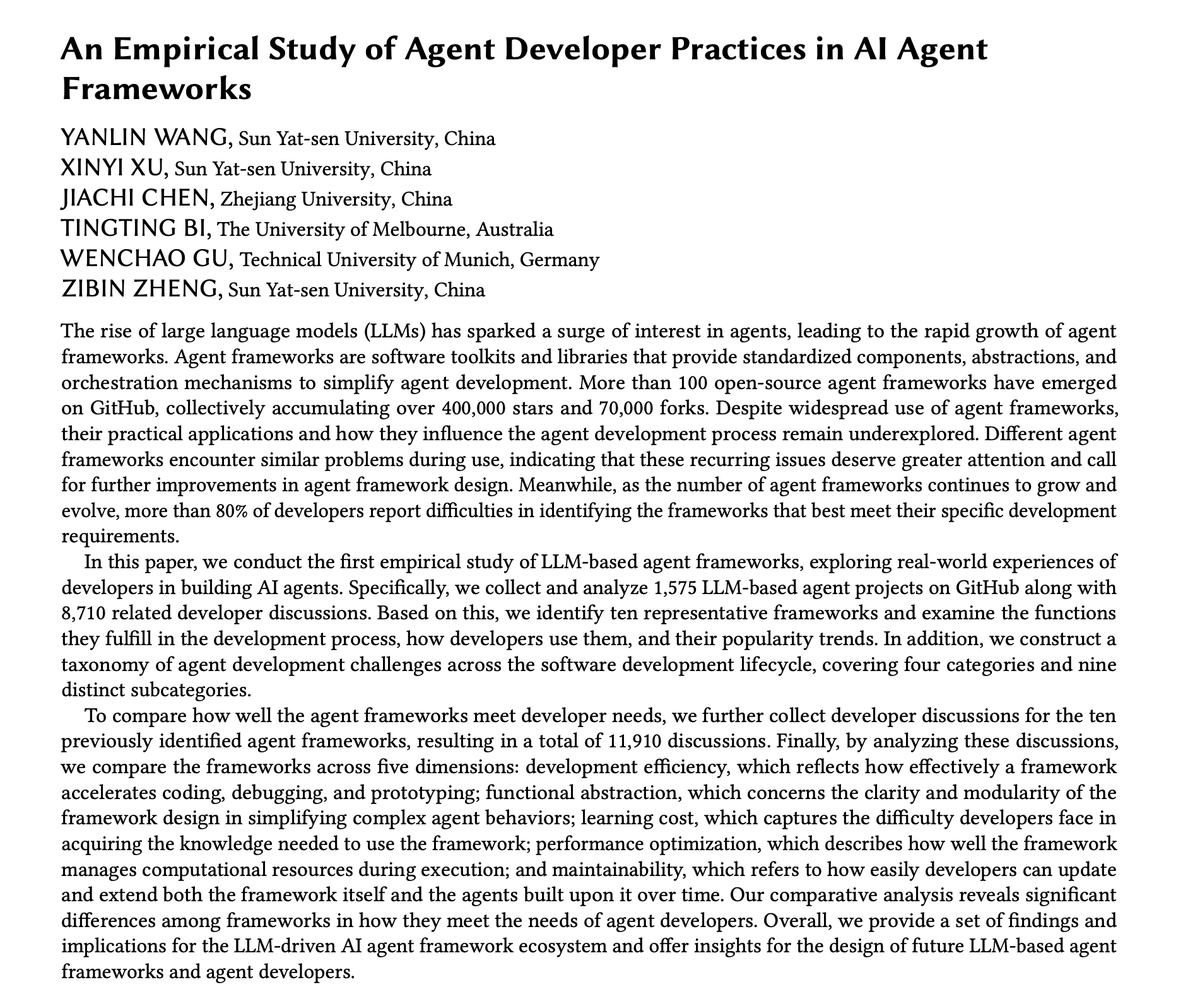

First large-scale empirical study of how developers actually use AI agent frameworks. Over 100 open-source agent frameworks have emerged on GitHub, collectively accumulating 400,000+ stars and 70,000+ forks. But 80% of developers report difficulties identifying which frameworks best meet their needs. Researchers analyzed 1,575 agent projects and 11,910 developer discussions across ten major frameworks, including LangChain, AutoGen, CrewAI, and MetaGPT. Here are the findings: 96% of top-starred projects use multiple frameworks together. Single-framework solutions no longer meet the complex demands of real-world agent applications. The dominant patterns: orchestration + data frameworks (LangChain + LlamaIndex) and multi-agent + orchestration combinations (AutoGen + LangChain). Not surprisingly, GitHub stars don't predict real-world adoption. MetaGPT has 59K stars but appears in only 2 repositories in the dataset. LangGraph has 20K stars but shows the second-highest actual adoption. Ecosystem maturity and maintenance activity matter more than popularity metrics. Where do developers struggle? The study maps 8,710 issues across the software development lifecycle: Logic failures account for 35%+ of problems. Task termination issues represent 21% of all failures. 72% of recursive call failures occur at the agent-tool interaction layer. Missing call-chain state tracking and insufficient termination detection are root causes. Version compatibility creates 23% of technical obstacles. The LangChain Pydantic v1 to v2 migration caused mass build failures. AutoGen's v0.2 to v0.4 refactoring introduced a completely incompatible architecture, splitting the community. Performance optimization is a universal weakness. All frameworks struggle with caching mechanisms, concurrent processing, and resource management. Response latency for retrieval-augmented agents ranges 3.2-5.6 seconds per query, 1.8x slower than direct generation. Framework-specific patterns emerge. 
LangChain and CrewAI lower barriers for beginners with strong documentation. AutoGen and LangChain excel at rapid prototyping, with 78% of developers citing them for quick verification. However, LangChain's deeply nested abstractions pose problems: 42% of developers reported difficulty when dealing with non-standard requirements. Paper: https://t.co/EFLiiT9RUM Learn to build effective AI agents in our academy: https://t.co/zQXQt0PMbG
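
The two root causes named in the post above (missing call-chain state tracking and insufficient termination detection) can be illustrated with a small framework-agnostic sketch. Everything here is hypothetical and not taken from the paper or from any of the frameworks mentioned: `CallChain`, `run_agent`, the step budget, and the repeat limit are illustrative names and values only.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Call = Tuple[str, tuple]  # (tool name, positional arguments)


@dataclass
class CallChain:
    """Hypothetical tracker for the tool calls made during one agent run."""
    max_steps: int = 8      # assumed step budget, not a value from the study
    max_repeats: int = 2    # identical calls tolerated before stopping
    history: List[Call] = field(default_factory=list)

    def record(self, tool: str, args: tuple) -> None:
        self.history.append((tool, args))

    def stop_reason(self) -> Optional[str]:
        """Return why the run should stop, or None to continue."""
        if len(self.history) >= self.max_steps:
            return "step budget exhausted"
        if self.history and self.history.count(self.history[-1]) > self.max_repeats:
            return "repeated identical tool call"
        return None


def run_agent(
    choose_action: Callable[[List[Call]], Optional[Call]],
    tools: Dict[str, Callable],
    chain: CallChain,
) -> str:
    """Run the agent-tool loop until the policy finishes or the chain says stop."""
    while True:
        action = choose_action(chain.history)
        if action is None:          # the policy signals it is done
            return "finished"
        tool, args = action
        chain.record(tool, args)
        reason = chain.stop_reason()
        if reason is not None:      # checked before the tool is executed
            return reason
        tools[tool](*args)


if __name__ == "__main__":
    tools = {"search": lambda query: f"results for {query}"}
    # A policy stuck requesting the same call forever is cut off early.
    print(run_agent(lambda history: ("search", ("x",)), tools, CallChain()))
```

The design choice in this sketch is to check the chain before executing each tool, so a looping agent is stopped without paying for the redundant call.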

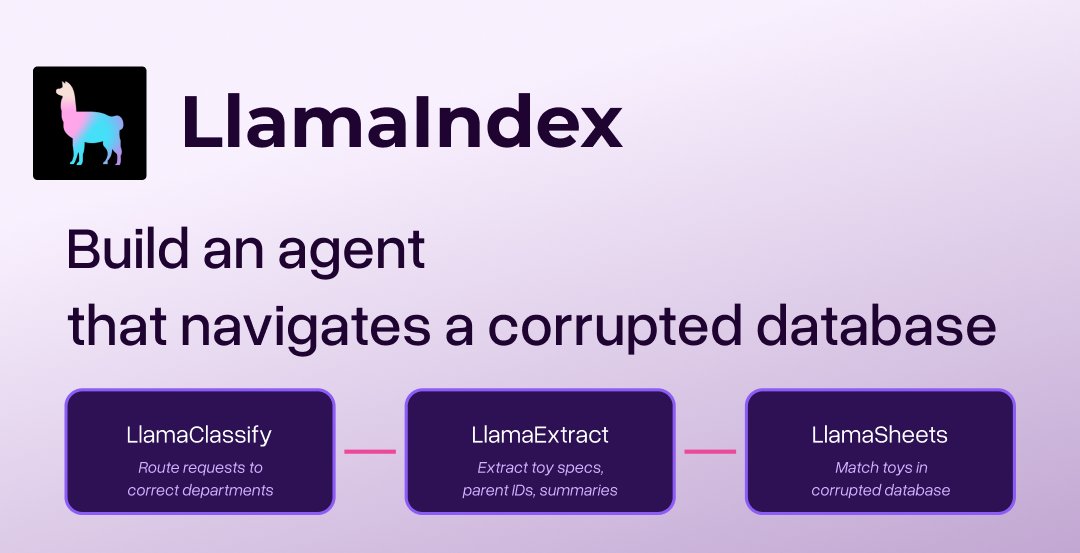

We love seeing all of the out of office emails around the holidays! So we thought we'd keep things light this week. We'll share some fun little demos, great way to get started with LlamaIndex too🎄 Today: check out this example where we build an agent that helps navigate a corrupted database! The challenge: Santa's toy database is corrupted. Parent requests are flooding in, but toy names are gone—just placeholders. The fix: An automated workflow using LlamaClassify (routing), LlamaExtract (data extraction), LlamaSheets (database search), and LlamaIndex Workflows (orchestration) to process thousands of requests without manual work. Try the Colab: https://t.co/J7cnONZQrs

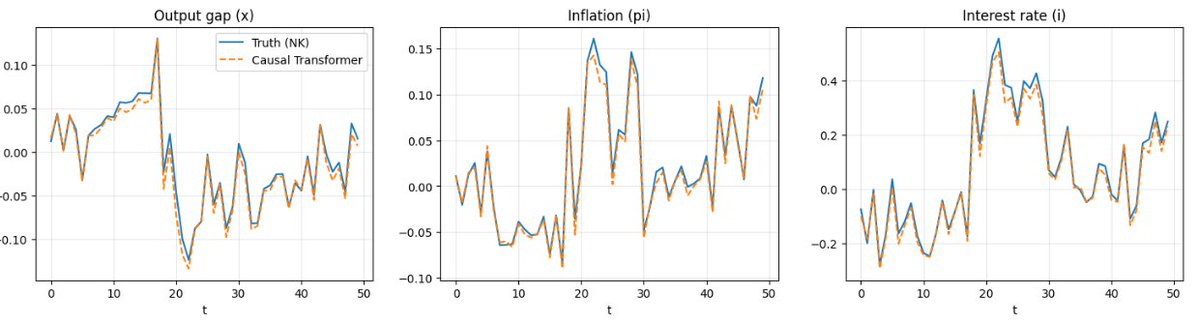

New post: Can a Transformer “Learn” Economic Relationships? Revisiting the Lucas Critique in the age of Transformers, with @arpitrage. We simulated data from an NK model, fit a transformer, and tested out-of-sample fit. How did it do? Pretty well! Link and thread in reply: https://t.co/En3x1zBClF

You can now create personalized AI companions, just like Character AI, with Dreamvibes. Follow these steps to set up a companion: 1/ Create an account on Dreamvibes: https://t.co/M5IeK0eM1D 2/ Start personalizing your companions 3/ Click to proceed to chatting. It takes 2 minutes. This is fully vibecoded with @YouWareAI #YouwareChallenge

You can text your @zocomputer. Consistently one of our most-loved features. Who has the best theory on why we've slept on texting as an AI interface for all these years? https://t.co/ocZoMhIXbm

We partnered with Cursor to launch Hooks. Hooks let Cursor users observe, control, and extend AI agents with custom scripts. Runlayer handles governance for MCP behind the scenes. Engineers just keep shipping. https://t.co/teJT7Wg8m6

Probing Scientific General Intelligence of LLMs with Scientist-Aligned Workflows https://t.co/dQeegQE2RN

PhysBrain: Human Egocentric Data as a Bridge from Vision Language Models to Physical Intelligence https://t.co/HyMrcBvQVP

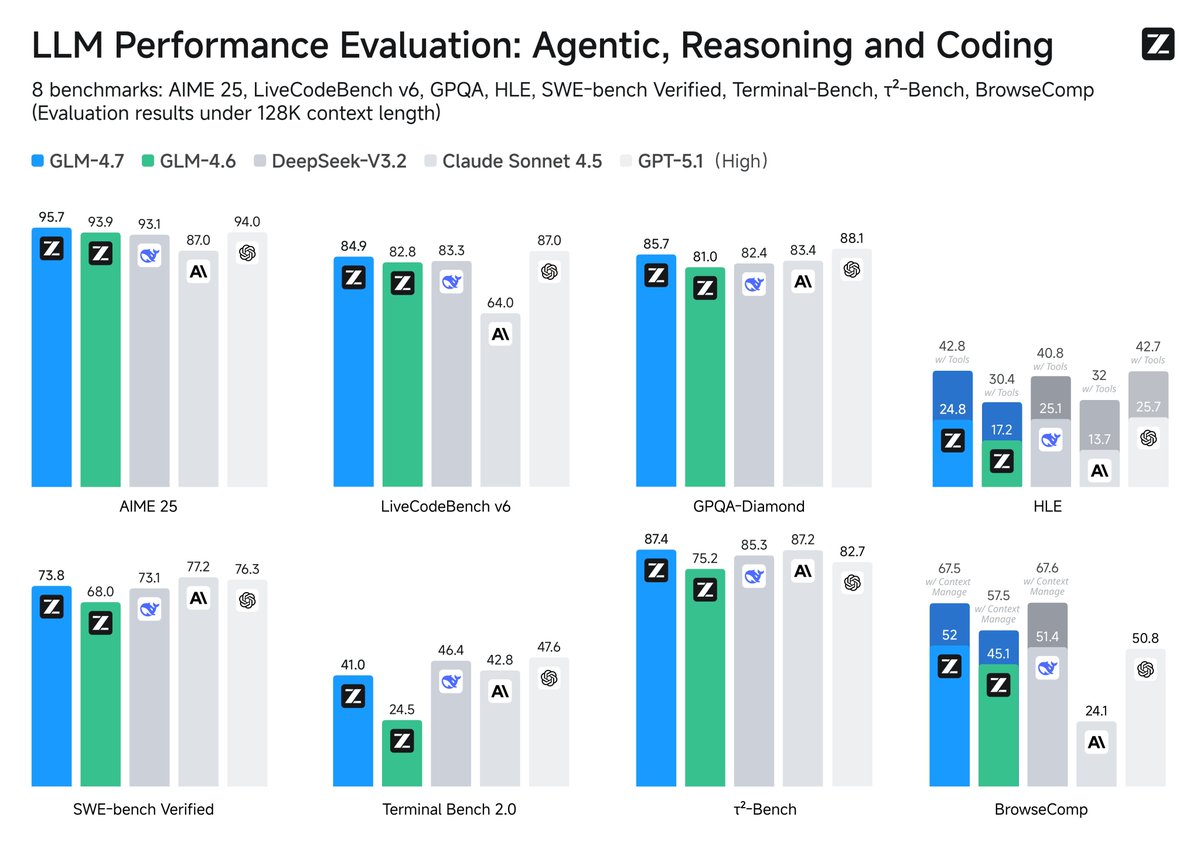

GLM-4.7 just dropped on Hugging Face https://t.co/878BBUW9Dm

When Reasoning Meets Its Laws https://t.co/8zYuZUISsJ

GLM-4.7 is here! GLM-4.7 surpasses GLM-4.6 with substantial improvements in coding, complex reasoning, and tool usage, setting new open-source SOTA standards. It also boosts performance in chat, creative writing, and role-play scenarios. Default Model for Coding Plan: https://t.co/Nk8Y98Il7s Try it now: https://t.co/WCqWT0raFJ Weights: https://t.co/CpKNBpXTWu Tech Blog: https://t.co/CfXPHbrBPm

🤗 Try GLM‑4.7 on @huggingface , supported by Novita. Stronger coding, reasoning, and tool use, with improved chat, creative writing, and role play. https://t.co/6v9jac05Sc

@GlobalUpdates24 Seems to be false unfortunately according to @ManusAI https://t.co/3C8xuQ06KS

@SorryMomDotGov https://t.co/d2OuF4CL35

son i am in fucking tears https://t.co/nu3zUqNF2e

Question for @Yale. How do you claim to be about “free exchange of ideas” when 83% of faculty are registered Democrats, 15% identify as independents, and roughly 2% are Republicans? You have a monopoly on political ideology. https://t.co/SuJEHQ4Dpy

Great survey on small language models. This 87-page survey closely examines small language models (SLMs), defined as models sized between the minimum threshold for emergent abilities on specialized tasks and the maximum sustainable under resource constraints. SLMs are really worth keeping a close eye on. They are particularly useful in multi-agent systems. Paper: https://t.co/rnNwZJalum

🚀 Making LLMs faster and smarter! In our latest Modular Tech Talk, @brendanwduke explains how MoE serving balances speed and quality in large-scale models. Watch the full talk👇 https://t.co/S5k0ilaMgA

The @linuxfoundation 2025 Annual Report highlights a milestone year for PyTorch Foundation, including its transition to an umbrella foundation and continued growth of the PyTorch ecosystem. The report reflects PyTorch Foundation’s role within the Linux Foundation as a neutral home for open source AI, supporting widely adopted infrastructure and a growing portfolio of hosted projects. 🔗 Read here: https://t.co/av4V4HJTKy #PyTorchFoundation #PyTorch #vLLM #Ray #DeepSpeed #OpenSourceAI

Convert 2D images into 3D using Apple's latest SHARP model. Built this for fun last night since I couldn't find any easy way to run the model online yet. https://t.co/ftE2bBLXxC https://t.co/GjOe4bG769

“This child is a bit stupid.” That’s what Unitree founder Wang Xingxing’s teacher told his parents when he was an anxious and awkward middle schooler. Wang’s company, Unitree Robotics, is on the verge of becoming a household name as its humanoid and quadrupedal robots grow ubiquitous in the age of embodied artificial intelligence. It’s entering 2026 heading toward an IPO at a valuation of $7 billion. Wang showed a strong curiosity and hands-on talent for engineering from a young age. Born in 1990 in Ningbo in Zhejiang province, he spent his early years building model airplanes and even assembled mini turbojet engines in middle school. Though he excelled at science, Wang struggled with English in China’s exam-focused school system. His weak performance in English nearly prevented him from getting into high school and disqualified him from elite universities. He enrolled at Zhejiang Sci-Tech University in Hangzhou in 2009. Initially described as an introverted and unremarkable student, Wang found his passion in robotics. During his freshman year, he built a small bipedal walking robot for just ¥200 (or about $30), armed with only basic tools and scavenged parts. The feat gave him confidence and a reputation on campus as an engineer unafraid of seemingly impossible challenges. Wang became an avid self-learner, immersing himself in technical literature. After earning his degree in mechatronics engineering, Wang entered Shanghai University for his master’s, focusing on robotics and control systems. His master’s thesis was on a brushless DC motor controller. Crucially, Wang began exploring quadruped robot design during his time in Shanghai. He was convinced that small, electrically actuated four-legged robots would be the future. By 2015, he had developed a quadruped prototype called XDog. The quadruped’s performance matched the top academic projects of the era, demonstrating exceptional stability and agility for its cost.
After earning his master’s in 2016, Wang initially felt the robotics market was not quite ready for quadrupeds at scale. He sought out industry experience, joining DJI, a world-leading drone manufacturer headquartered in Shenzhen. His tenure was short-lived. Within months, his XDog went viral in tech media and Wang suddenly had offers. Someone actually wanted to buy his robot, and an investor was willing to fund a startup. The 26-year-old jumped at the opportunity, using angel funding of ¥2 million, or about $275,000. Now in his mid-30s, Wang has been lauded as a “post-90s robotics genius” in Chinese media. In February 2025, he was the only entrepreneur of his generation invited to a high-profile symposium of business leaders chaired by President Xi Jinping. He was seated prominently in the front row, near tech luminaries like Huawei’s Ren Zhengfei. In interviews, Wang has predicted that humanoid robots will revolutionize nearly every industry within his lifetime. His company insists it will not expand production blindly, but will ramp up output methodically as orders grow.

Vibe coding just got wild 🔥 Screen record anything and Genspark codes it for you! I recorded myself playing Block Blast on my phone. Gave Genspark AI Developer one prompt: "build a game like this." Get your own playable version in minutes! Zero manual coding. Just one prompt and a video. Try it! https://t.co/iYRa6MXrbM

Today, after a long wait, I finally received my @RokidGlobal Glasses, which I helped fund on Kickstarter. I'm very happy with what they can do; the dual display in the lenses (even though it's only displayed in green) is well done. Functions like translation and navigation work quite well. The AI assistant is several classes above the one in the Ray-Ban Metas and feels genuinely useful.

Today @mimicrobotics and friends are excited to share mimic-video, a new class of Video-Action Model that elevates video model backbones as first class citizens for robot learning! https://t.co/milHZtrMhG

Up close and personal with Grok Imagine. Image to video workflow, with no text prompt included, the images are from Midjourney. Grok continues to excel in speed and realistic outputs. https://t.co/8QvrvYMKLj

I spent my pre-holiday week teaching a robot to dance. Not a metaphor. An actual robot. On my desk. Dancing to jazz. Three days ago I unboxed a Reachy Mini. Today both apps I built are in the official app store and I have a site tracking the whole journey of building a robot that can see, hear, and move, all developed alongside an agentic AI. The secret? I let Claude Code do the heavy lifting while I watched and occasionally made suggestions. Turns out agentic AI is pretty good at robotics debugging. Full story: https://t.co/49FLkxvpCP Follow along at https://t.co/EoEVkJqH9g if you want to watch me debug motor commands while eating too many cookies. Fair warning: there's a video of the robot dancing. My kid has opinions about it. @Huggingface @AnthropicAI @ClementDelangue

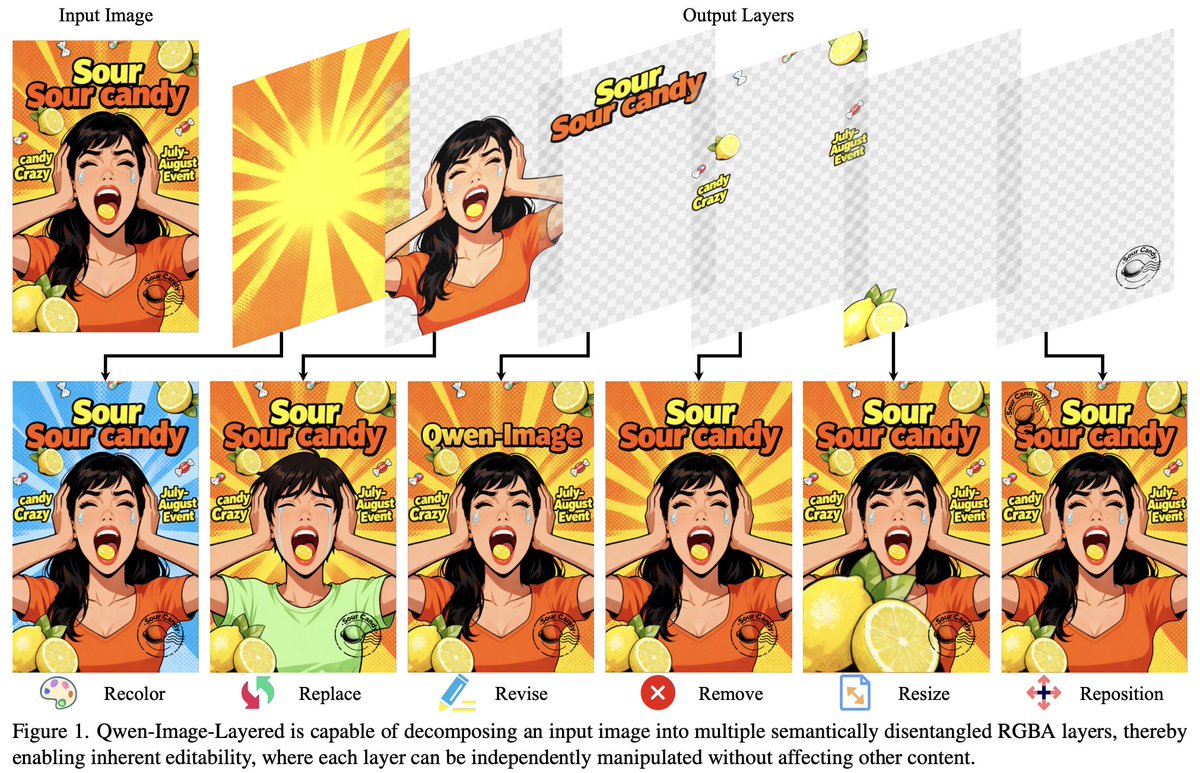

In case you were wondering if Qwen-Image-Layered is supported in 🧨 Diffusers, that's the default 🤗 The approach of decomposing an image into so-called semantically related RGBA "layers" feels very fresh & unique! Check it out on the official channels! https://t.co/ErTisX3NhM