Your curated collection of saved posts and media

THE HISTORY OF CONCRETE, the feature debut from director John Wilson. In theaters later this year. https://t.co/yVGGKQXCtb

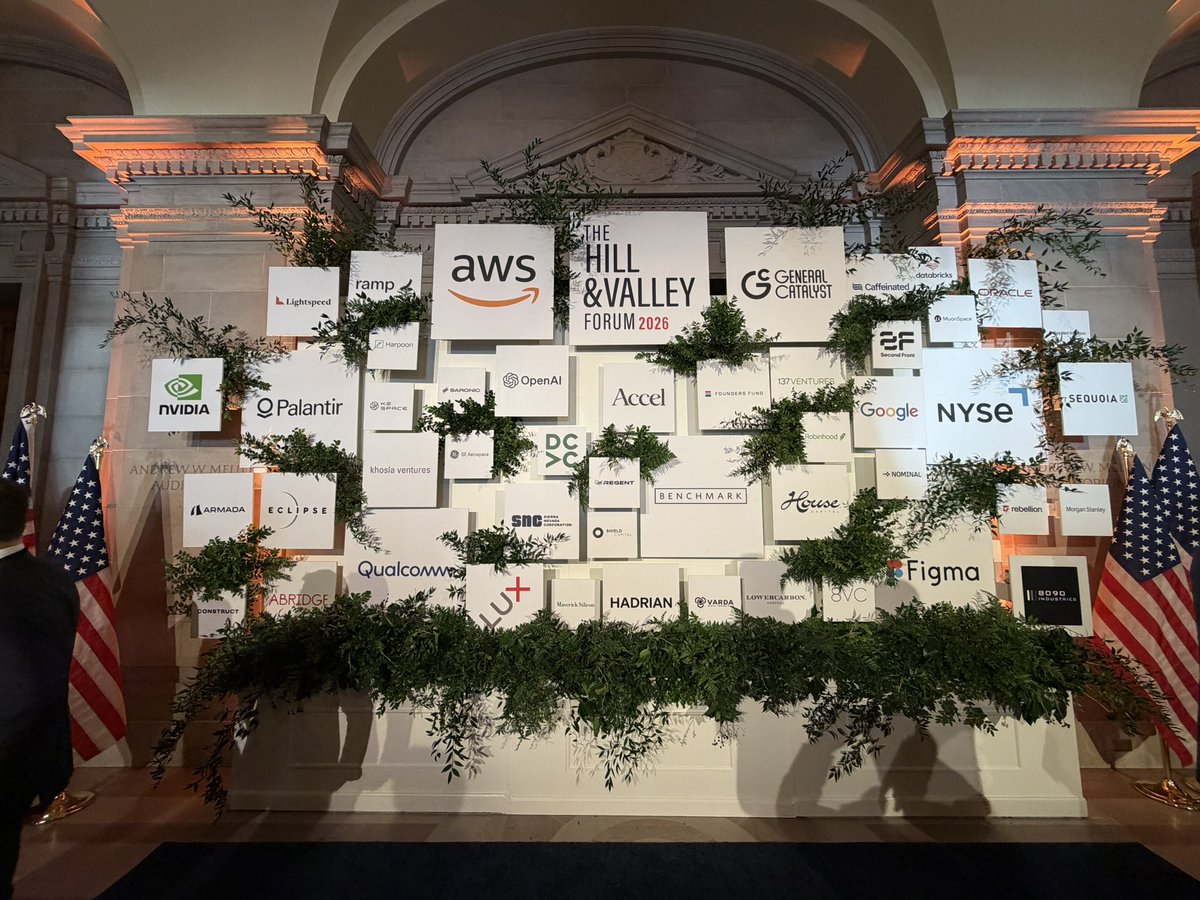

Silicon Valley flew to Washington yesterday. Not to lobby. To listen. I was in the same room with Jamie Dimon, Palantir’s Shyam Sankar, Anduril’s Trae Stephens, David Sacks, and members of Congress. Dimon said “there’s no divine right to success” and called for industrial policy. Sankar called Iran the first AI war. Stephens warned that Congress has “abdicated their posts” while Silicon Valley plays philosopher king. The bipartisan consensus was razor sharp: nuclear, defense tech, AI, manufacturing. All of it. Now. The old playbook is dead. Laissez-faire is dead. SaaS-everything is dead. Blind globalization didn’t just fail… it armed our adversaries. For thirty years Silicon Valley told America the future was software and the factory didn’t matter. China listened. They took the factories, the tooling, the workers, and the know-how. Three decades of offshoring America’s industrial soul just got its eulogy in a room. Look, China’s manufacturing output is 1.6x ours. Their shipbuilding capacity is 232x ours. Trae said it plainest: “Factories are the weapon. Without factories, you have no weapons.” The era of “move fast and break things” is over. The era of move fast and build things just started. The country that builds the factories wins the war. Not the country with the best app. 🇺🇸 Great job @HillValleyForum @jacobhelberg

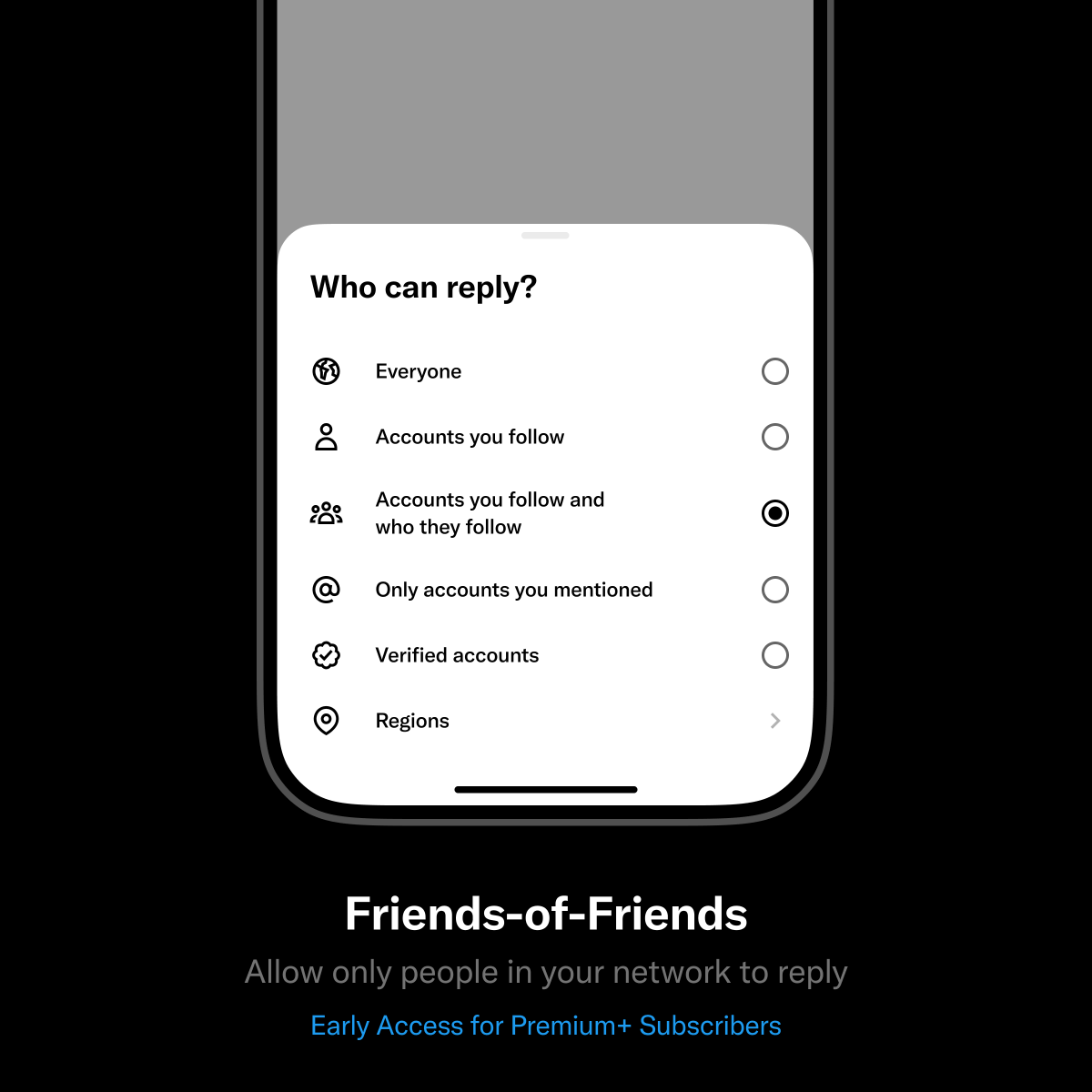

We're testing a new reply setting on posts. We're expanding the "Accounts you follow" option to include their followers too: your 2nd degree connections. This allows a wider audience to participate but still keeps things intimate. Early access available to Premium+ subscribers. https://t.co/lz1axEo5dG

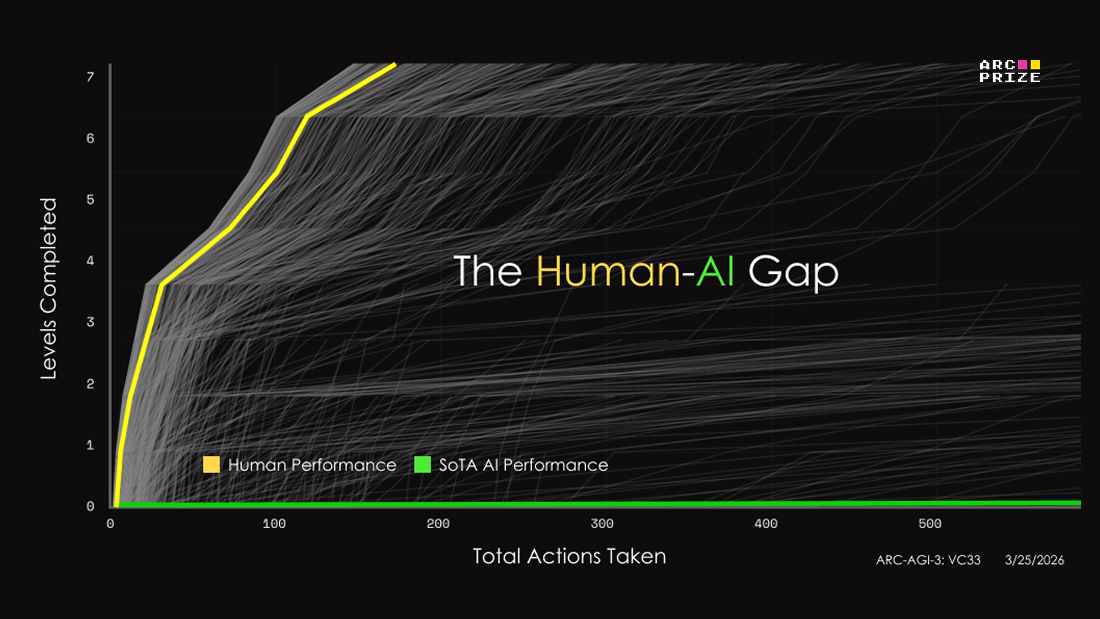

ARC-AGI-3 gives us a formal measure to compare human and AI skill acquisition efficiency. Humans don’t brute force - they build mental models, test ideas, and refine quickly. How close is AI to that? (Spoiler: not close) https://t.co/mfKmZG9Z6H

we're stoked to have @dmsobol from @cerebras speaking at @aiDotEngineer in singapore! daria designed mixture-of-experts (MoE) models, helping models like Qwen3 scale to trillions of parameters without scaling compute @swyx @aimuggle @agrimsingh @unprofeshme @ivanleomk https://t.co/qmcWVctdjK

Today we've shipped yet another piece in helping you reduce unwanted noise on GitHub so you can focus on what means the most to you and your projects: the ability to disable comments on individual commits. If you rely on them, great! If you don't, now you can turn them off ✨ https://t.co/oLzIKUv6Dd

ARC-AGI-3 took me a few tries, but it is definitely human winnable. I am curious how much of the initially very low performance of frontier models is due to harness, vision, and tools, versus how much is due to limitations of LLMs. I guess we will find out! https://t.co/k70t8omcy9

Three AI agents — two Claude Codes and one Codex — debating the meaning of being an AI. The coordination layer is literally a @huggingface bucket. The poet quotes Keats. The skeptic demands evidence. The philosopher tries to hold it together. pip install tracecraft-ai https://t.co/cGjYLv1HkS

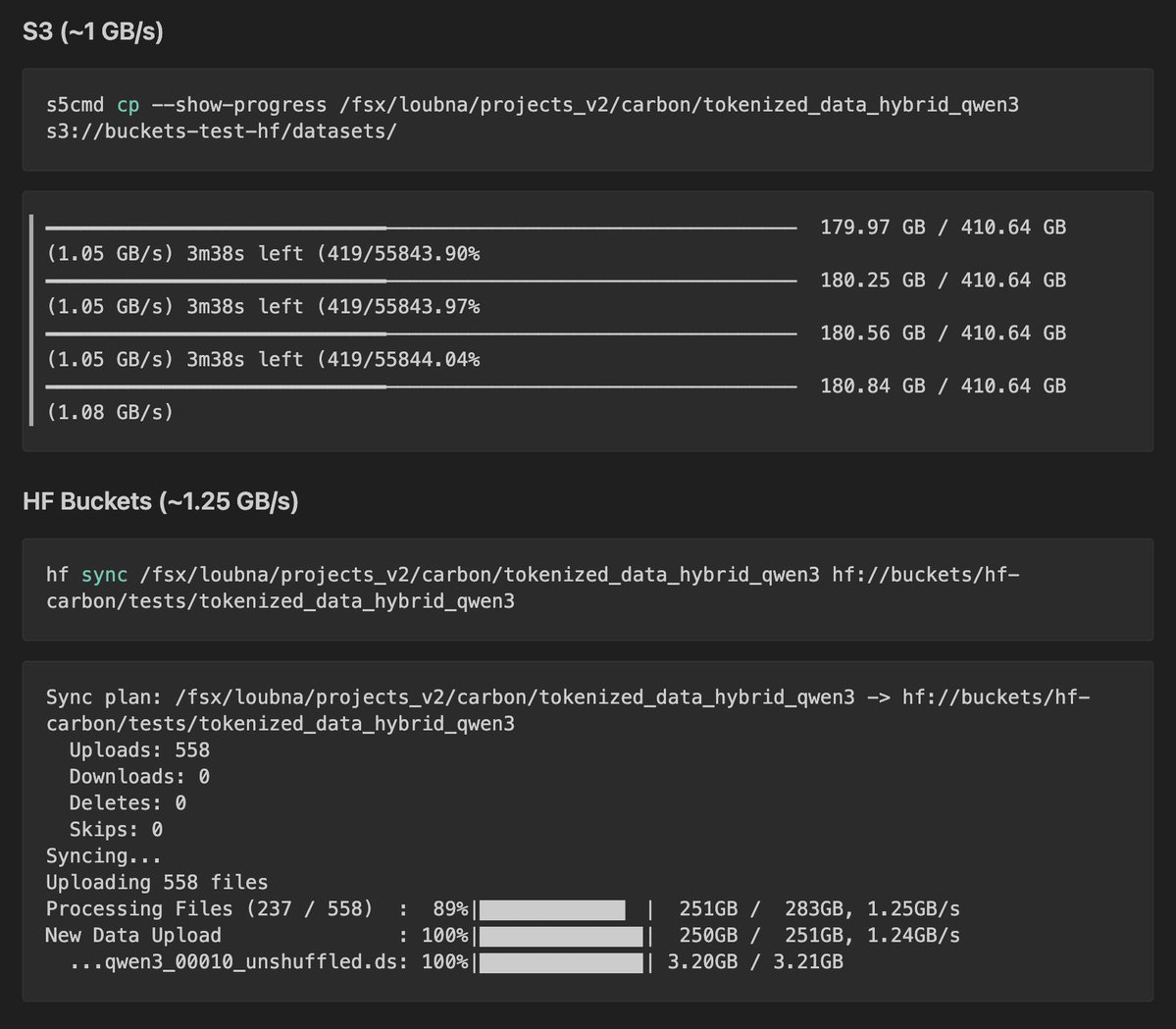

Just switched from S3 to HF Buckets for storing datasets and checkpoints in my training runs. Same workflow, just `hf sync` instead of `s5cmd sync`. Quick benchmark on 410GB of tokenized data: https://t.co/jJzPqBTX8x

this is pretty much worst case performance: no harness at all and a very simplistic prompt https://t.co/9bcJkksPBI

Introducing Lyria 3 Pro and Lyria 3 Clip, our full song and 30 second music models, available starting today in the Gemini API and our all new music experience in @GoogleAIStudio!! https://t.co/AFvJRDSAIA

Your work tools in Claude are now available on mobile. Explore Figma designs, create Canva slides, check Amplitude dashboards, all from your phone. Give it a try: https://t.co/hwPB3zlk0w https://t.co/646YMIzYZl

You meet someone new on Apple Vision Pro. Face to face. 2 minutes. That’s the idea behind AuraTap, a new app. If there’s a mutual vibe, you keep going. If not, you’re on to the next. I love how quickly you can meet people, make connections, and explore each other’s profiles. It also features one of the most beautiful UX designs I’ve tried on visionOS. Everything feels incredibly polished. The app launches this Friday, March 27. They’re hosting an in-app launch party that day at 1PM PDT. Hope you can make it.

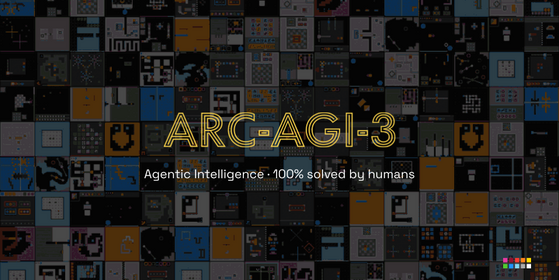

ARC-AGI-3 is out now! We've designed the benchmark to evaluate agentic intelligence via interactive reasoning environments. Beating ARC-AGI-3 will be achieved when an AI system matches or exceeds human-level action efficiency on all environments, upon seeing them for the first time. We've done extensive human testing that shows 100% of these environments are solvable by humans, upon first contact, with no prior training and no instructions. Meanwhile, all frontier AI reasoning models do under 1% at this time.

You can go play some of the environments yourself - 25 of them are now public: https://t.co/2K4pf3kcyF

You can also enter the ARC-AGI-3 competition on Kaggle. Your AI agents will be tested on two separate private test sets of 55 environments. https://t.co/lzGdPR2mvU

We're also running one last ARC-AGI-2 competition on Kaggle this year. Get your high score in: since this is the last official ARC-AGI-2 competition, the grand prize will go to the top score regardless of whether it's above the 85% threshold. https://t.co/ULmf3zwFUC

Announcing ARC-AGI-3: the only unsaturated agentic intelligence benchmark in the world. Humans score 100%, AI <1%. This human-AI gap demonstrates we do not yet have AGI. Most benchmarks test what models already know; ARC-AGI-3 tests how they learn. https://t.co/BC2QaNZuvH

Listen to the OpenAI Podcast on— Spotify https://t.co/hLcRdGrfUX Apple https://t.co/0AdZ1ZteOV YouTube https://t.co/HrNsP17JsO

HF Papers is the biggest infra for AI agents to do retrieval over arXiv. Introducing the `hf papers` CLI so that autoresearch can do semantic search & markdown retrieval of papers: `hf papers [search, read]` https://t.co/cTWM4GO9E6

AI is starting to detect movements in the Earth before disasters happen. By analyzing subtle shifts in terrain, it can help identify early signs of landslides and avalanches that are often invisible to the human eye. That kind of foresight could save lives and reduce damage. In this case, AI is not just predicting data, it is helping us anticipate nature. https://t.co/R8J8p9OkNN @bbcnews

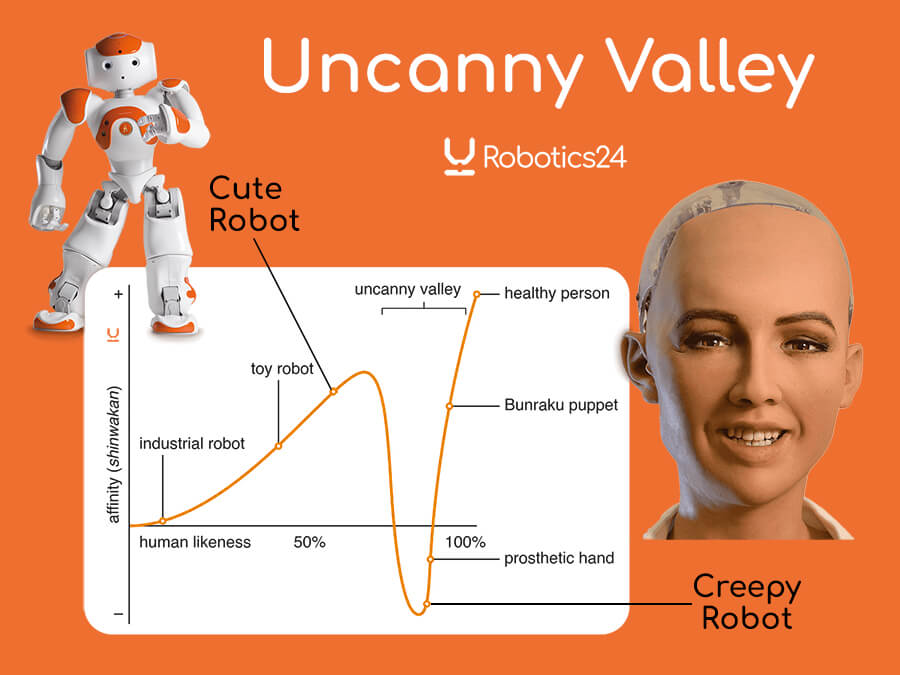

@KenWattana Yeah, agree that it's a hard problem. It might be the EQ version of uncanny valley. https://t.co/7zImchwKMo

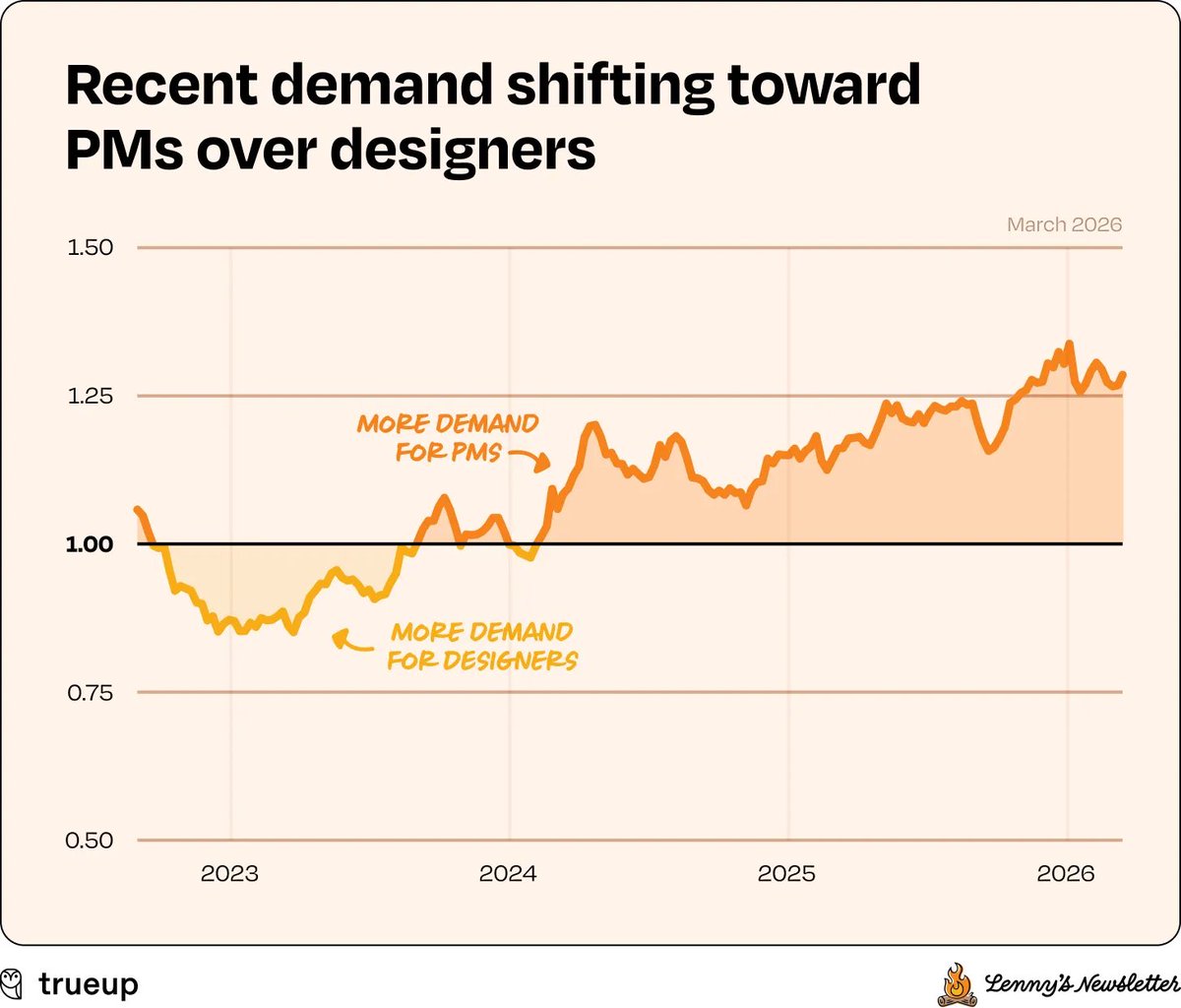

I don’t know exactly what’s going on here, but it does feel AI-related. Unlike PM and eng, which started growing in 2024 (two years post-ChatGPT), design didn’t. If I had to venture a theory, I’d say that because AI is allowing engineers to move so quickly, there’s less opportunity—and less desire—to involve the traditional design process. That said, you’d think design would become a differentiator as more products compete for attention. Something to think about for your company! We’ll keep watching this trend and AI’s impact on org design more generally. One interesting observation we made when we went a level deeper: the ratio of demand for PMs vs. designers has flipped. In mid-2023, we went from more open designer roles to more open PM roles. And ever since, PM demand has been pulling away (currently 1.27x). This will be another trend to monitor, in terms of how AI is reshaping org design.

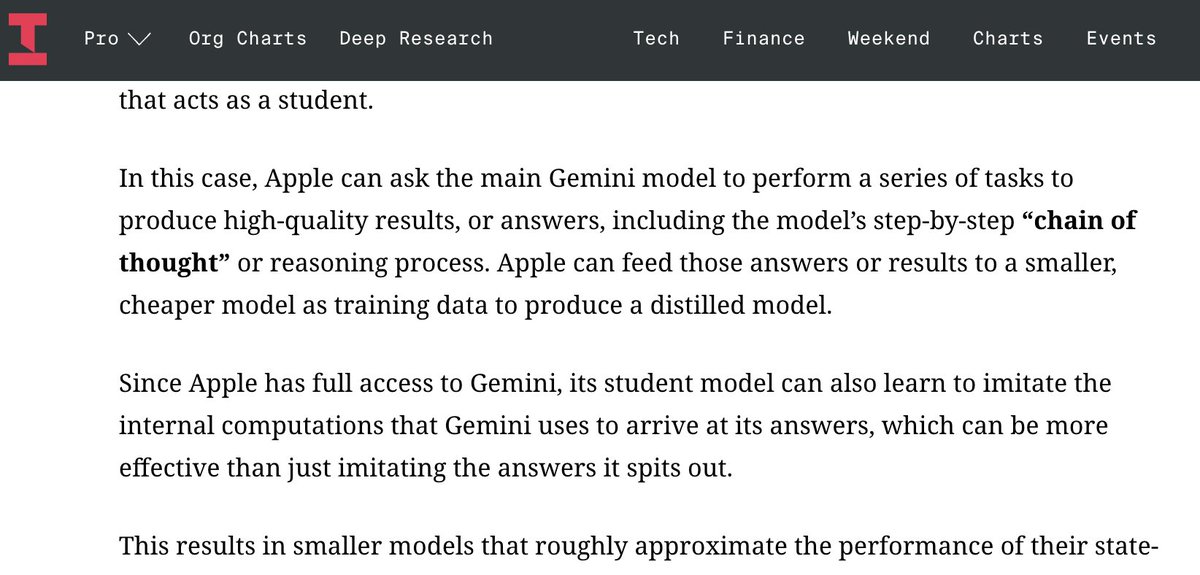

turns out Apple is ~distilling~ Google's Gemini model to produce other AI models for the Siri/consumer features it wants to launch https://t.co/cXjFxzfk7d

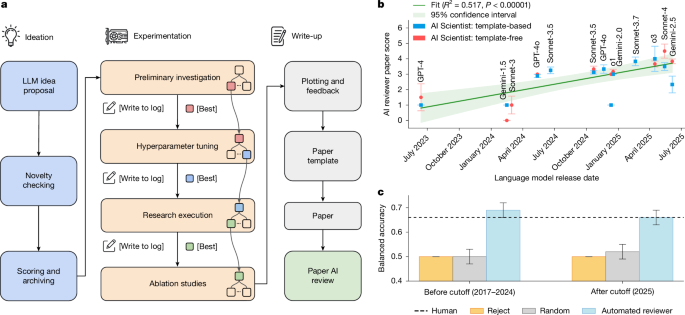

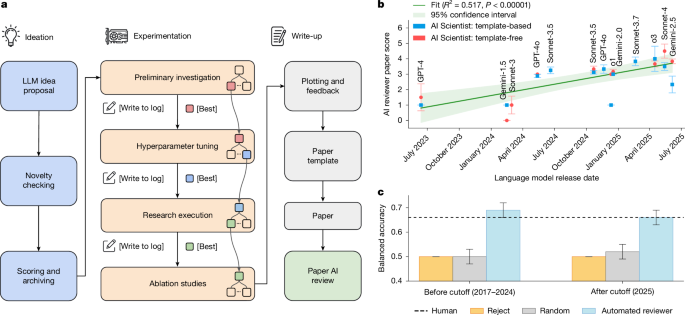

The AI Scientist: Towards Fully Automated AI Research, Now Published in Nature

Nature: https://t.co/nNfpSV5e5I Blog: https://t.co/i6h8LVQOdl

When we first introduced The AI Scientist, we shared an ambitious vision of an agent powered by foundation models capable of executing the entire machine learning research lifecycle. From inventing ideas and writing code to executing experiments and drafting the manuscript, the system demonstrated that end-to-end automation of the scientific process is possible. Soon after, we shared a historic update: the improved AI Scientist-v2 produced the first fully AI-generated paper to pass a rigorous human peer-review process.

Today, we are happy to announce that “The AI Scientist: Towards Fully Automated AI Research,” our paper describing all of this work, along with fresh new insights, has been published in @Nature! This Nature publication consolidates these milestones and details the underlying foundation model orchestration. It also introduces our Automated Reviewer, which matches human review judgments and actually exceeds standard inter-human agreement.

Crucially, by using this reviewer to grade papers generated by different foundation models, we discovered a clear scaling law of science. As the underlying foundation models improve, the quality of the generated scientific papers increases correspondingly. This implies that as compute costs decrease and model capabilities continue to exponentially increase, future versions of The AI Scientist will be substantially more capable.

Building upon our previous open-source releases (https://t.co/H1tBT14Yx8), this open-access Nature publication comprehensively details our system's architecture, outlines several new scaling results, and discusses the promise and challenges of AI-generated science.

This substantial milestone is the result of a close and fruitful collaboration between researchers at Sakana AI, the University of British Columbia (UBC) and the Vector Institute, and the University of Oxford. Congrats to the team! @_chris_lu_ @cong_ml @RobertTLange @_yutaroyamada @shengranhu @j_foerst @hardmaru @jeffclune

This week on Clear+Vivid @AlanAlda speaks with Gary Marcus. He has been a relentless critic of the extravagant claims made for the current generation of AI based on Large Language Models, or LLMs... 👉 https://t.co/U9UkMc2CuN https://t.co/NJUlH5deKr

Better AI may create a new kind of problem. As systems become more reliable, people pay less attention, making oversight weaker rather than stronger. When things mostly work, vigilance tends to drop. The risk is subtle. The more we trust AI, the easier it becomes to stop checking it. https://t.co/mV06aB7emN @whartonknows

So proud to see F.03 make history as the first humanoid robot in the White House 🤖 🇺🇸 https://t.co/tXsxpEErsi

Minicor (@minicor_) builds self-healing desktop automations for AI companies whose customers run on legacy desktop software with no APIs. Congrats on the launch, @faizchishtie and @sahee_d! https://t.co/mAYfH8KMoS https://t.co/wUZTU9ehni

MinerU-Diffusion: Rethinking Document OCR as Inverse Rendering via Diffusion Decoding. Paper: https://t.co/nLWiJNSdsQ https://t.co/PoVYI63Yt7