Your curated collection of saved posts and media

🙌 never been a better time to start yapping! 😆 welcome to the world, gemini 3.1 flash live: https://t.co/686c32EnWc

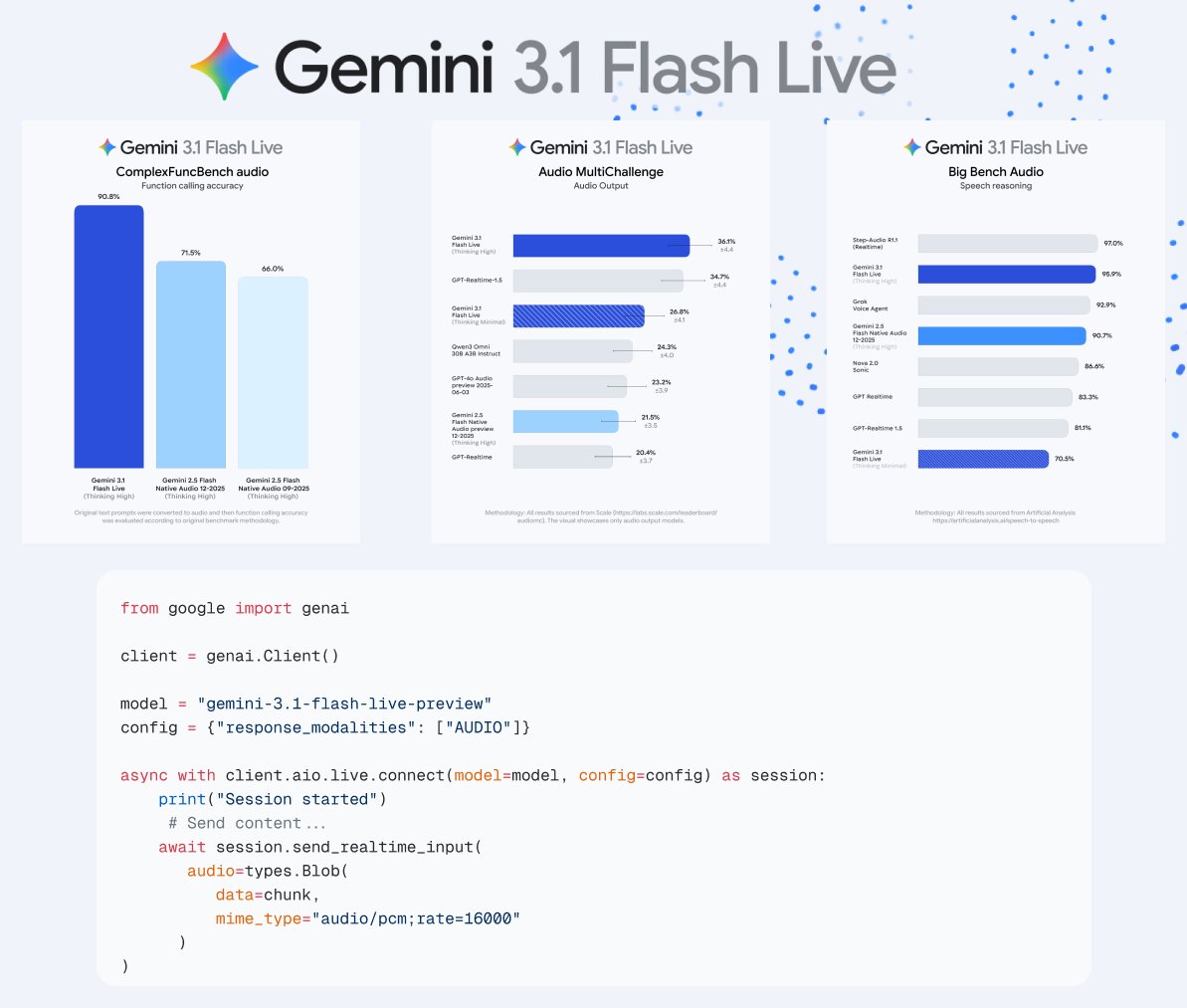

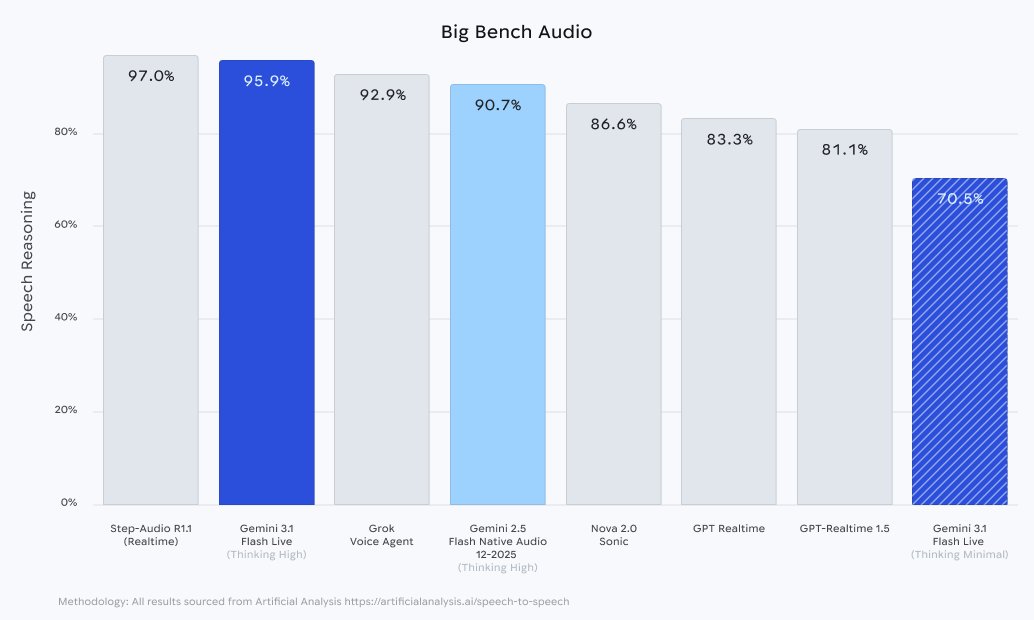

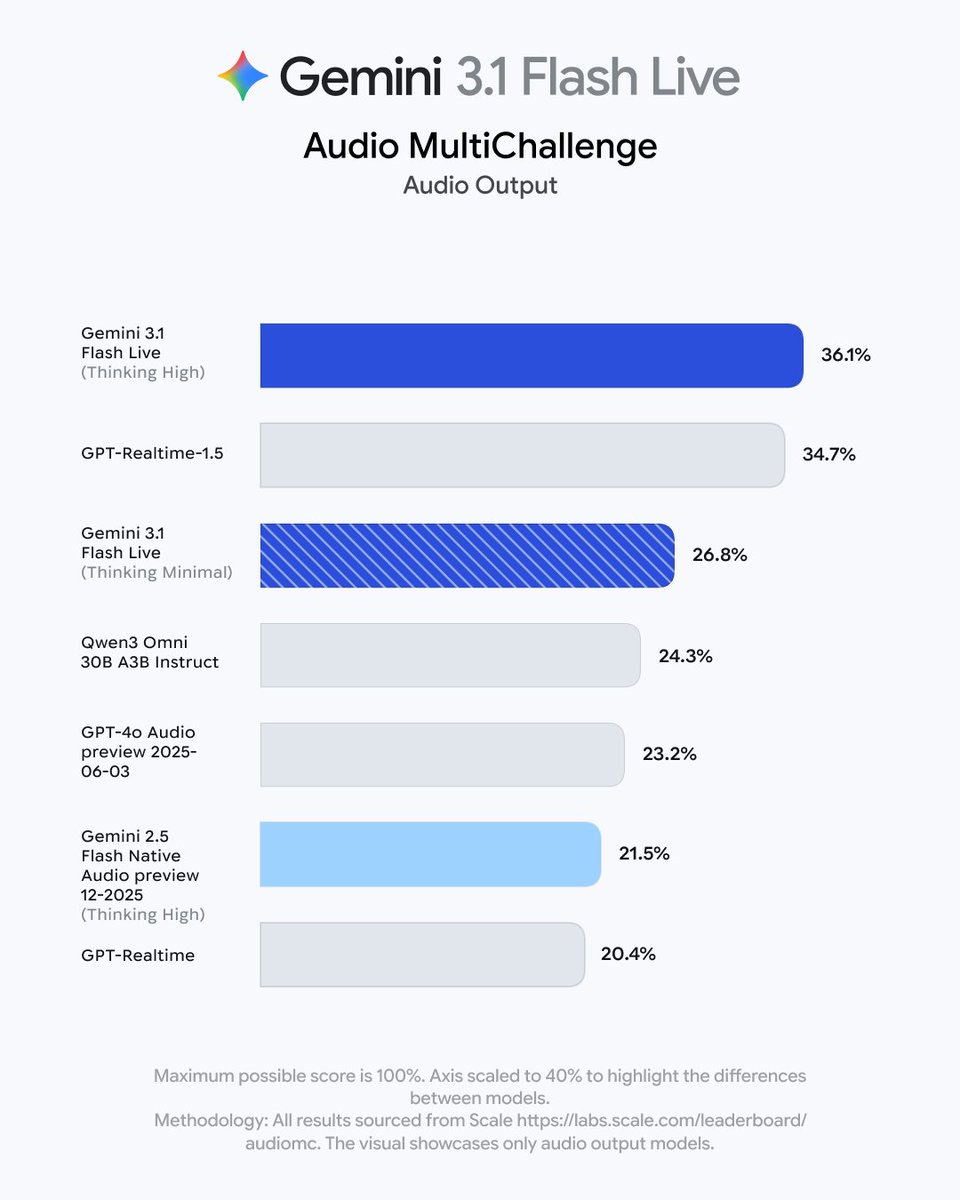

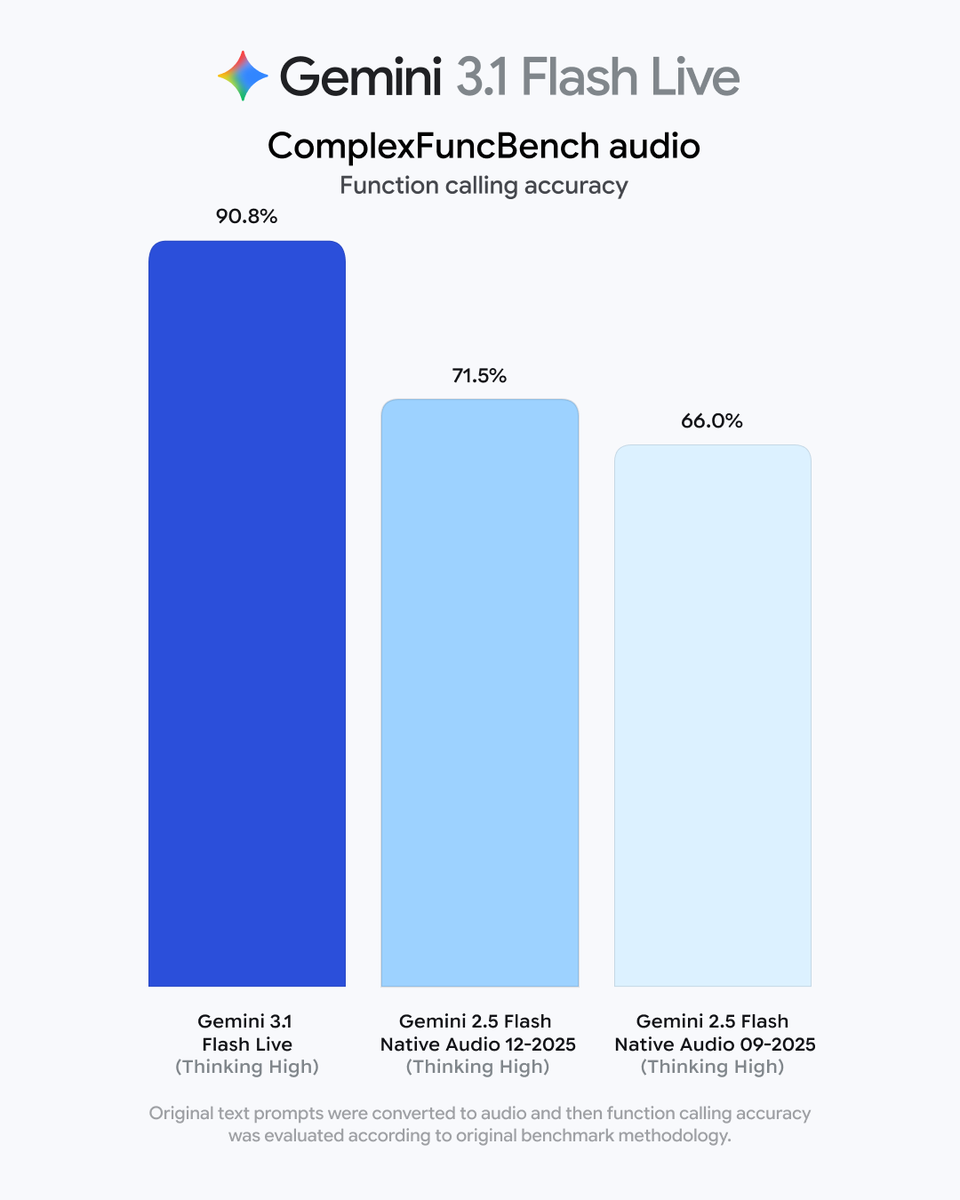

Introducing Gemini 3.1 Flash Live, our new realtime model to build voice and vision agents!! We have spent more than a year improving the model + infra + experience. The results? A step-function improvement in quality, reliability, and latency. https://t.co/0esYpmDy5l

make me a billion dollars, make no mistakes https://t.co/VmfVObzI2Q

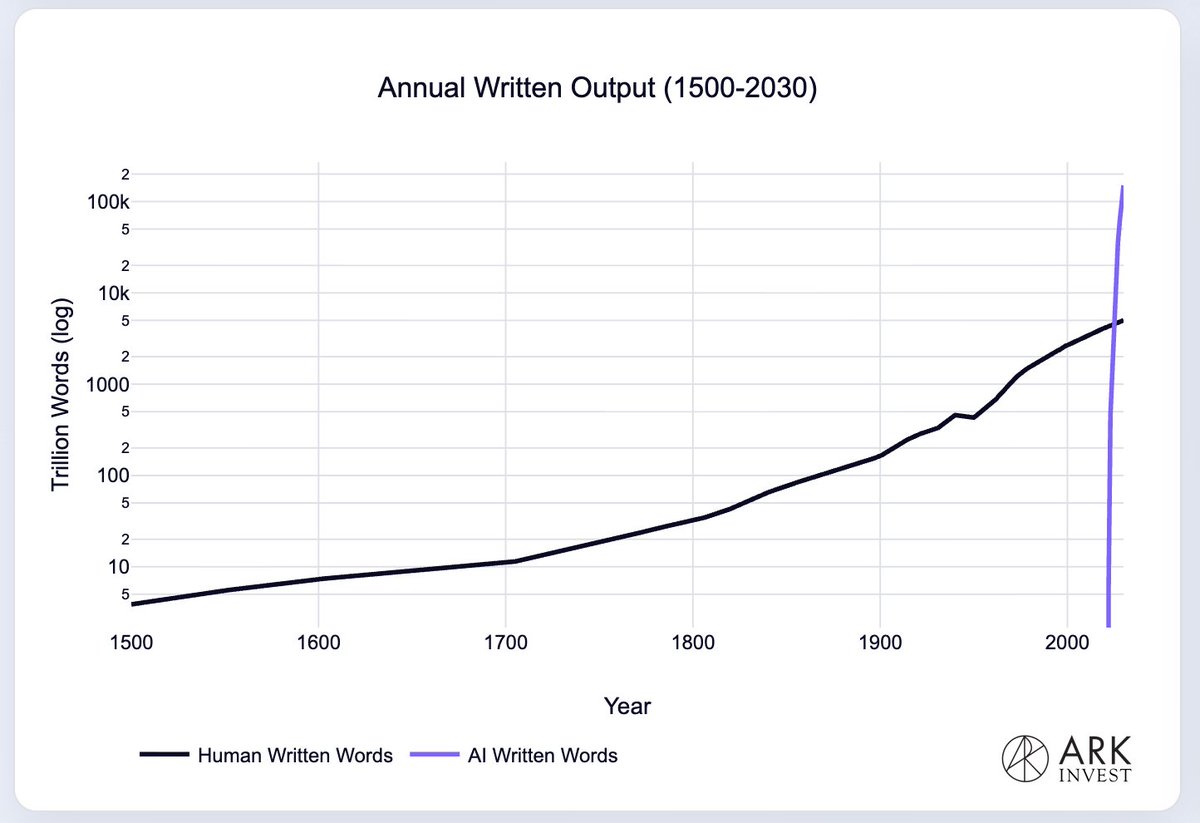

We have been surpassed: AI written output exceeded human written output in 2025 https://t.co/Dv4CNJDMVf

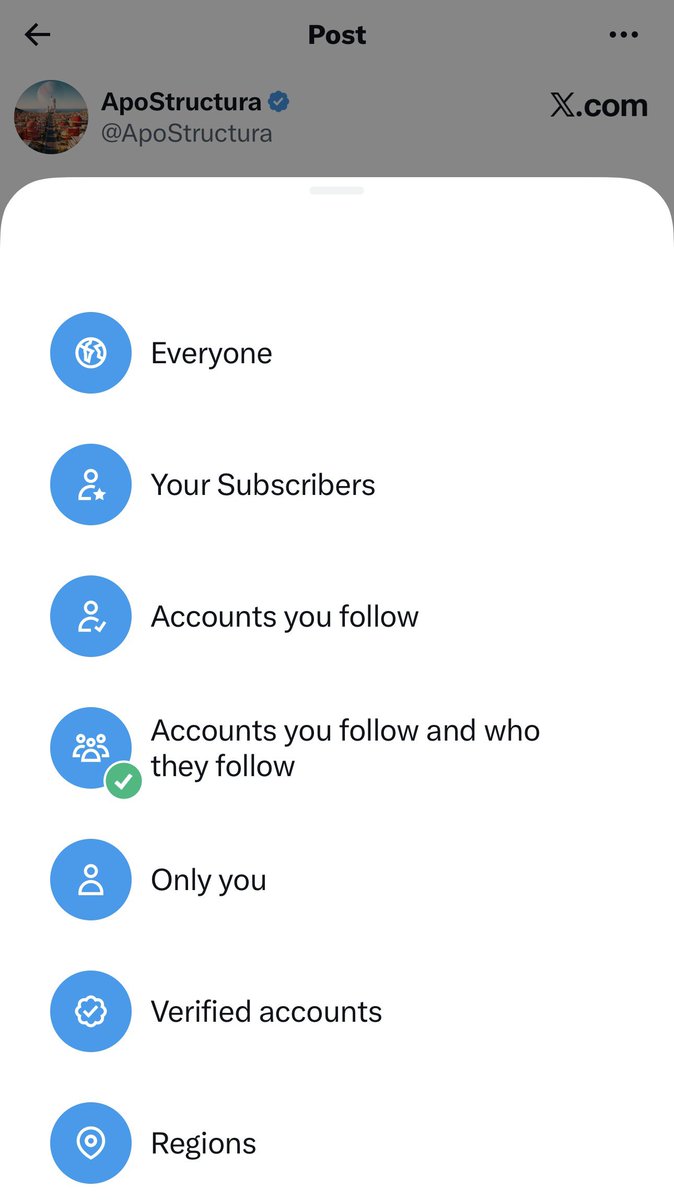

Trying this new “Accounts you follow and who they follow” reply filter on my latest post that Elon reposted. As soon as I turned it on the number of spam replies collapsed. I don’t think I’ll turn it on for all my posts, but for posts like this it looks like a great feature. https://t.co/wVF66GhMhT

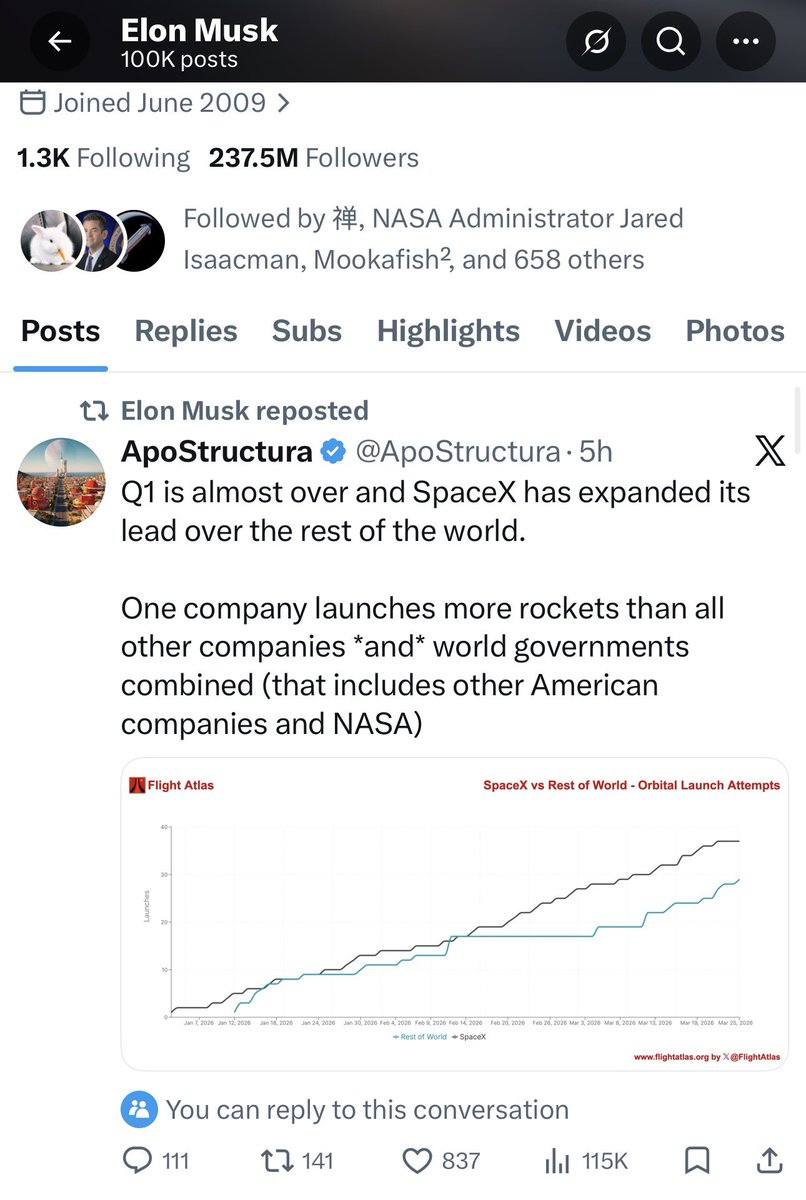

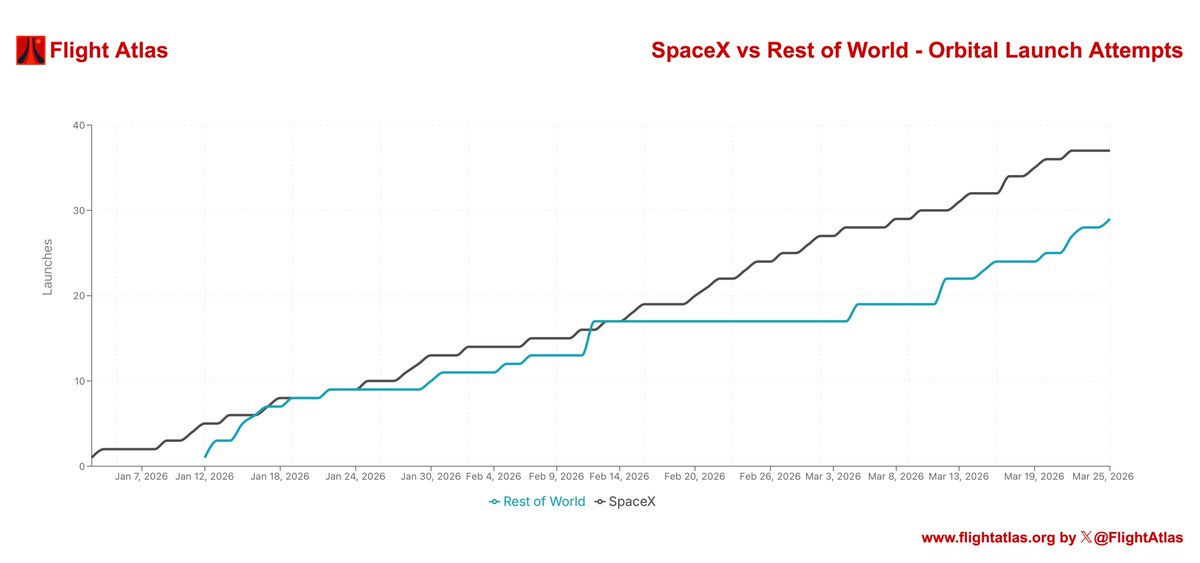

Q1 is almost over and SpaceX has expanded its lead over the rest of the world. One company launches more rockets than all other companies *and* world governments combined (that includes other American companies and NASA) https://t.co/aZZ54ySx1U

GPU MODE (@GPU_MODE) & PyTorch Foundation are organizing an ML systems hackathon in Paris on April 9, immediately following PyTorch Conference Europe 2026. Researchers and engineers will compete across two tracks:
- Distributed training (LLM speedrun) and inference optimization (leaderboard)
- Access to a B300 cluster from Verda and H200 instances from Sesterce
- Cloud credits as prizes, including 48-hour access to a GB300 NVL72 rack
- Talks from PyTorch (Helion), vLLM, Prime Intellect, and more
- Food and refreshments

Doors open at 9:30, with the closing ceremony at 20:00. Attendees can join with a pre-formed team or match on-site. Location details are shared upon registration. Spots are limited. Register: https://t.co/1BYgfGWJIr #OpenSourceAI #PyTorchCon #PyTorch #vLLM #Helion

Building systems that can execute the scientific research lifecycle has been an incredible journey for our team. For those interested in diving in, we released our implementations for both versions of The AI Scientist:
V1: https://t.co/A5NDvfZKLC
V2: https://t.co/gedB5EIMCg

Lyria 3 came out from google today, so naturally I had to use it for generative lullabies. now, when the monitor detects the baby is crying, it can play a 30 sec lullaby pre-generated to the specific baby's tastes (based on what's worked historically) using Lyria 3 Clip. this demo showcases this generative lullaby capability, whereas the last showcased playing soothing phrases in the mother's cloned voice to calm the baby. this is using the @openhome devkit as hardware. more to come!

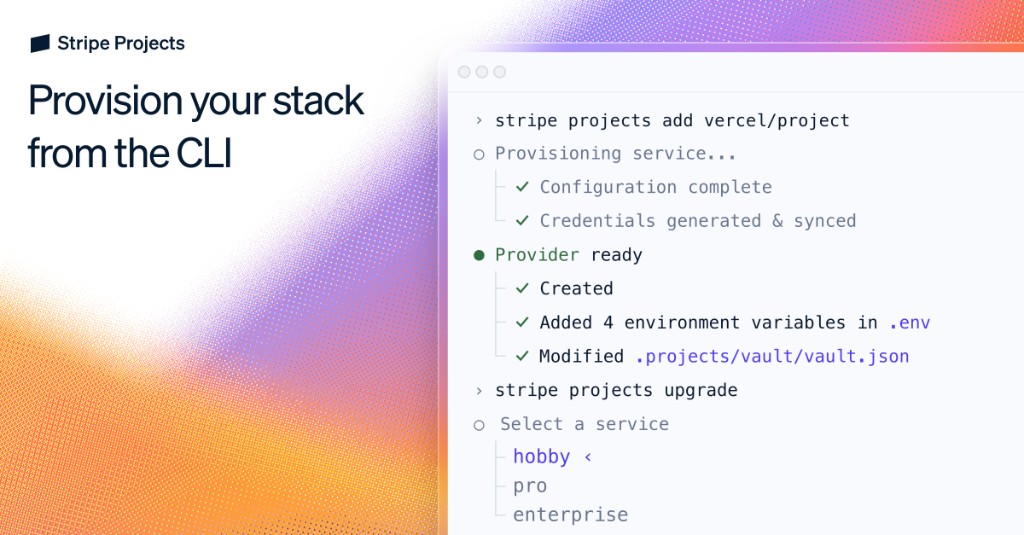

When @karpathy built MenuGen (https://t.co/2OjrUJ3aLS), he said: "Vibe coding menugen was exhilarating and fun escapade as a local demo, but a bit of a painful slog as a deployed, real app. Building a modern app is a bit like assembling IKEA furniture. There are all these services, docs, API keys, configurations, dev/prod deployments, team and security features, rate limits, pricing tiers."

We've all run into this issue when building with agents: you have to scurry off to establish accounts, clicking things in the browser as though it's the antediluvian days of 2023, in order to unblock its superintelligent progress.

So we decided to build Stripe Projects to help agents instantly provision services from the CLI. For example, simply run:

$ stripe projects add posthog/analytics

And it'll create a PostHog account, get an API key, and (as needed) set up billing.

Projects is launching today as a developer preview. You can register for access (we'll make it available to everyone soon) at https://t.co/1tSgGbSLxM. We're also rolling out support for many new providers over the coming weeks. (Get in touch if you'd like to make your service available.) https://t.co/vjRymcVCKI

Say hello to Gemini 3.1 Flash Live. 🗣️ Our latest audio model delivers more natural conversations with improved function calling – making it more useful and informed. Here’s what’s new 🧵

Meet Gemini 3.1 Flash Live 🎙️👁️ Our first Gemini 3-based multimodal, real-time model is officially here. Try the preview now on the Live API and in @GoogleAIStudio 👉 https://t.co/4OxcX7glyG https://t.co/XMp9hIsUfK

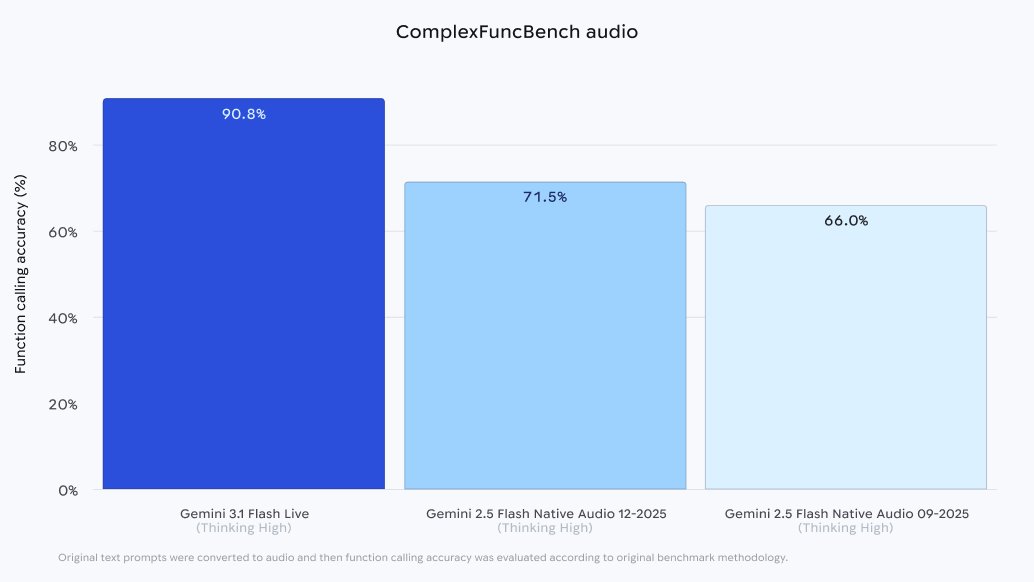

We just launched Gemini 3.1 Flash Live! Our fastest, most natural real-time voice AI model for building Agents.
- Scores 90.8% on ComplexFuncBench Audio for tool use.
- 70 languages, Video streaming, Audio transcriptions, 128k context
- Comes with Agent Skill for building live voice agents.
- All generated audio is watermarked with SynthID.

New Voxtral-4B-TTS is beautiful 💛 it sounds so good that it's whispering at closed models: "your time is over" - Demo available on Hugging Face ⬇️ https://t.co/gI02Jmo9j5

Bounding boxes are key for citations, and we just shipped a new guide showing how to use LiteParse for visual citations! LiteParse is our fast and open-source document parser. Using both bounding box extraction and page screenshots, anyone (including agents) can learn how to associate text with an element on the page. https://t.co/Vauhx5Yh9n
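
To make the idea of a visual citation concrete, here is a minimal sketch of associating a quoted passage with a region of a page screenshot. It does not use LiteParse's actual API: the `TextSpan` structure, `find_citation`, `to_pixels`, and the sample data are all hypothetical, and it assumes the parser reports boxes as (x0, y0, x1, y1) in page units with a top-left origin.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

BBox = Tuple[float, float, float, float]  # (x0, y0, x1, y1), top-left origin (assumed)


@dataclass
class TextSpan:
    """Hypothetical parser output: a run of text plus where it sits on the page."""
    text: str
    page: int
    bbox: BBox


def find_citation(spans: List[TextSpan], quote: str) -> Optional[TextSpan]:
    """Return the first span whose text contains the quote (case-insensitive)."""
    needle = quote.lower()
    for span in spans:
        if needle in span.text.lower():
            return span
    return None


def to_pixels(bbox: BBox, page_size: Tuple[float, float],
              image_size: Tuple[int, int]) -> Tuple[int, int, int, int]:
    """Scale a page-unit box to pixel coordinates of a screenshot of that page."""
    sx = image_size[0] / page_size[0]
    sy = image_size[1] / page_size[1]
    x0, y0, x1, y1 = bbox
    return (round(x0 * sx), round(y0 * sy), round(x1 * sx), round(y1 * sy))


# Made-up sample data for illustration only.
spans = [
    TextSpan("Revenue grew 12% year over year.", 1, (72.0, 100.0, 300.0, 114.0)),
    TextSpan("Operating costs were flat.", 1, (72.0, 120.0, 260.0, 134.0)),
]

hit = find_citation(spans, "operating costs")
box = to_pixels(hit.bbox, page_size=(612.0, 792.0), image_size=(1224, 1584))
print(hit.page, box)
```

The resulting pixel box can then be drawn over the page screenshot so a reader (or an agent) can see exactly where the cited text came from.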

Gemini's audio and voice capabilities just got an upgrade with Gemini 3.1 Flash Live. Our new high-quality audio and voice model comes with:
- ⚡️ Faster response times
- 💬 More helpful, natural dialogue
- 🧵 2x longer conversation memory in Gemini Live
- 🌍 Multilingual support for Search Live in 200+ countries & territories

See what’s new 🧵

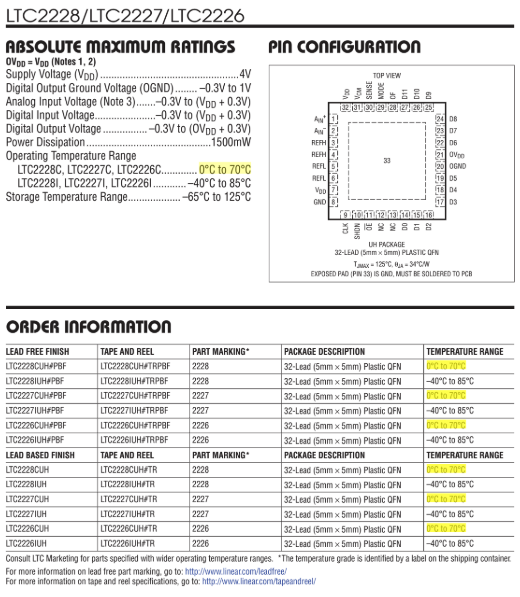

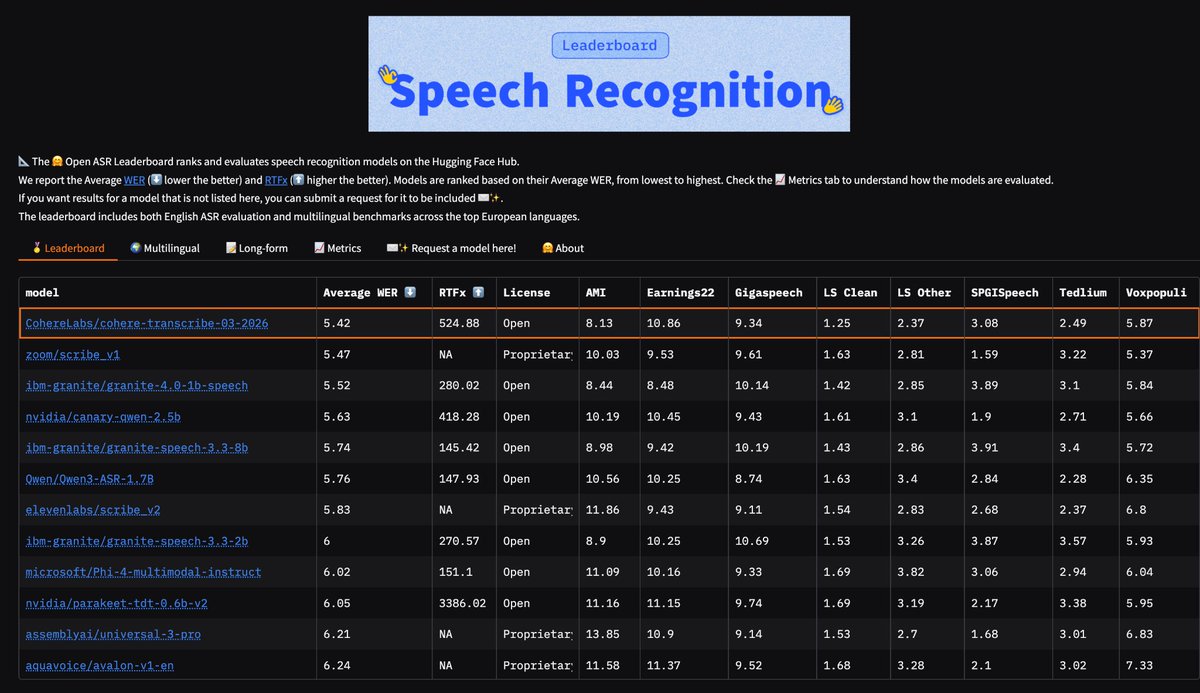

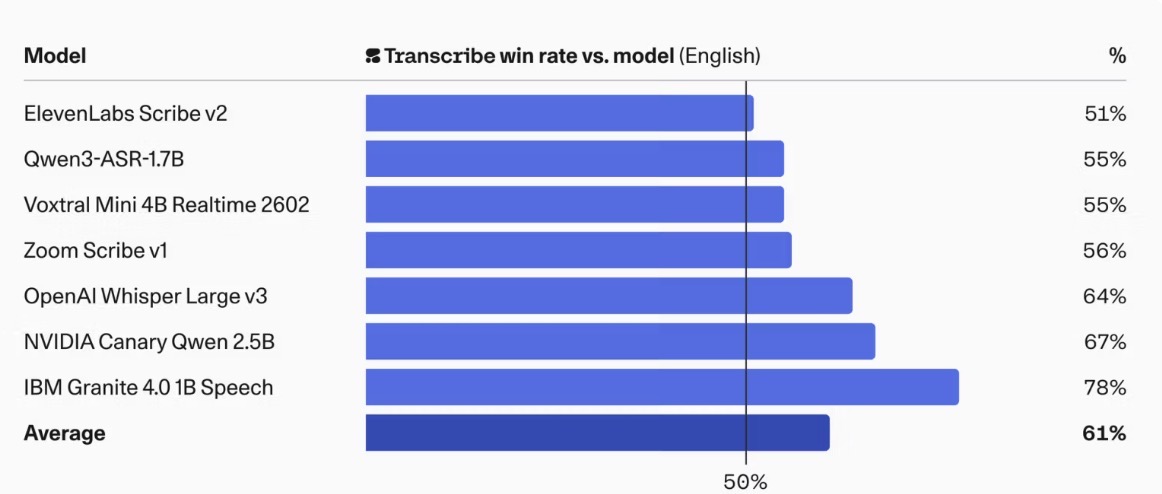

Just dropped on HF: Cohere’s cohere-transcribe-03-2026
- 🥇 #1 on the Open ASR leaderboard
- 🌍 #4 multilingual
- 📄 #6 long-form
- Supports 12+ languages: English, German, French, Italian, Spanish, Portuguese, Greek, Dutch, Polish, Arabic, Vietnamese, Chinese, Japanese, Korean
- Conformer-based encoder + lightweight Transformer decoder for transcription
- And of course: Apache 2.0 license

Cohere has released an Apache 2.0 model on @huggingface. No restricted license. No "research only." Actually open. Respect. Is this a one-off or a direction change for Cohere? https://t.co/i204YRbe98

Introducing: Cohere Transcribe – a new state-of-the-art in open source speech recognition. https://t.co/l87Z6oyQdM

@cohere just released the best speech->text model :) It currently ranks #1 for accuracy on @huggingface Open ASR Leaderboard, setting a new benchmark for real-world transcription performance. Read more 👇 https://t.co/WopGr8Wu9P

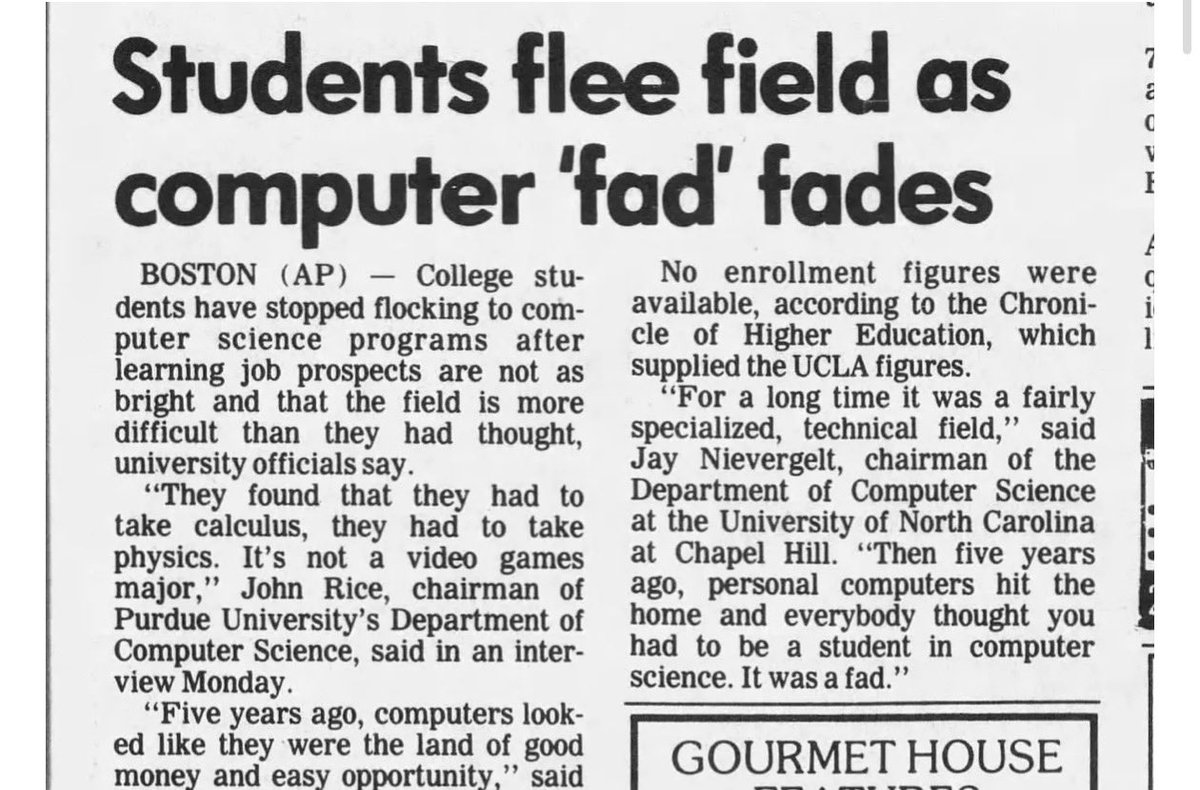

Will people avoiding computer science end up looking like these 1987 studies? “Five years ago, computers looked like they were the land of good money and easy opportunity” https://t.co/APtEshUctt

Jevons paradox is happening in real time. Companies, especially outside of tech, are realizing that they can now afford to take on software projects that they wouldn’t have been able to tackle before because now AI lets them do so. We’re going to start to use software for all n

Realism isn't just power prevailing. Realism also requires looking at the world realistically to recognize the limits of a nation's power and to deploy it only for compelling purposes. Trump's war-of-choice on Iran isn't realism but reckless stupidity. https://t.co/vc2C9Nz034

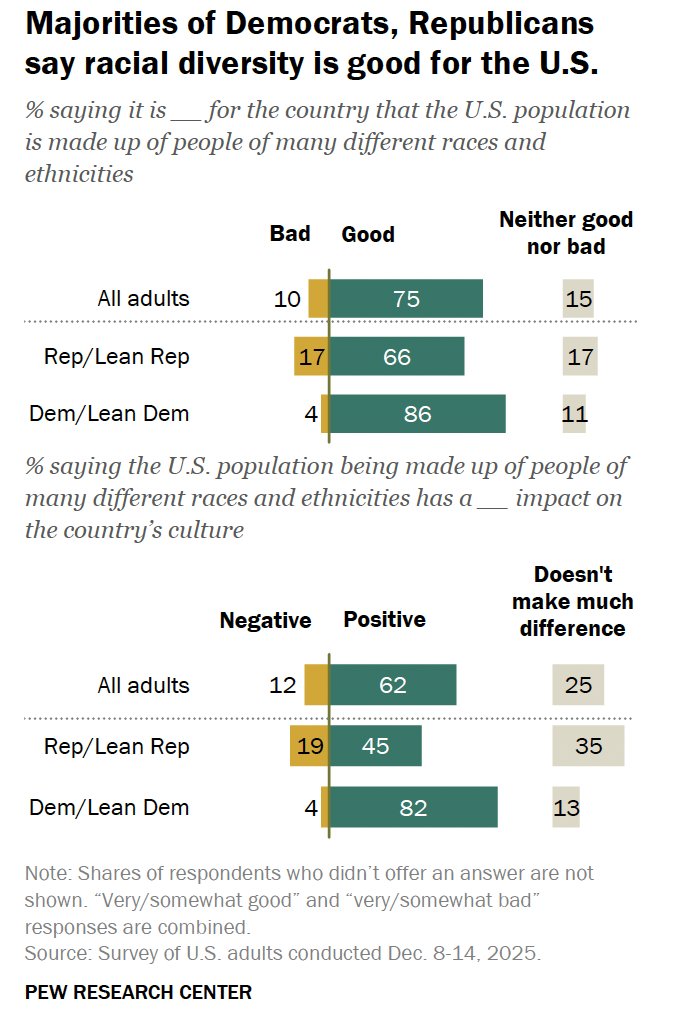

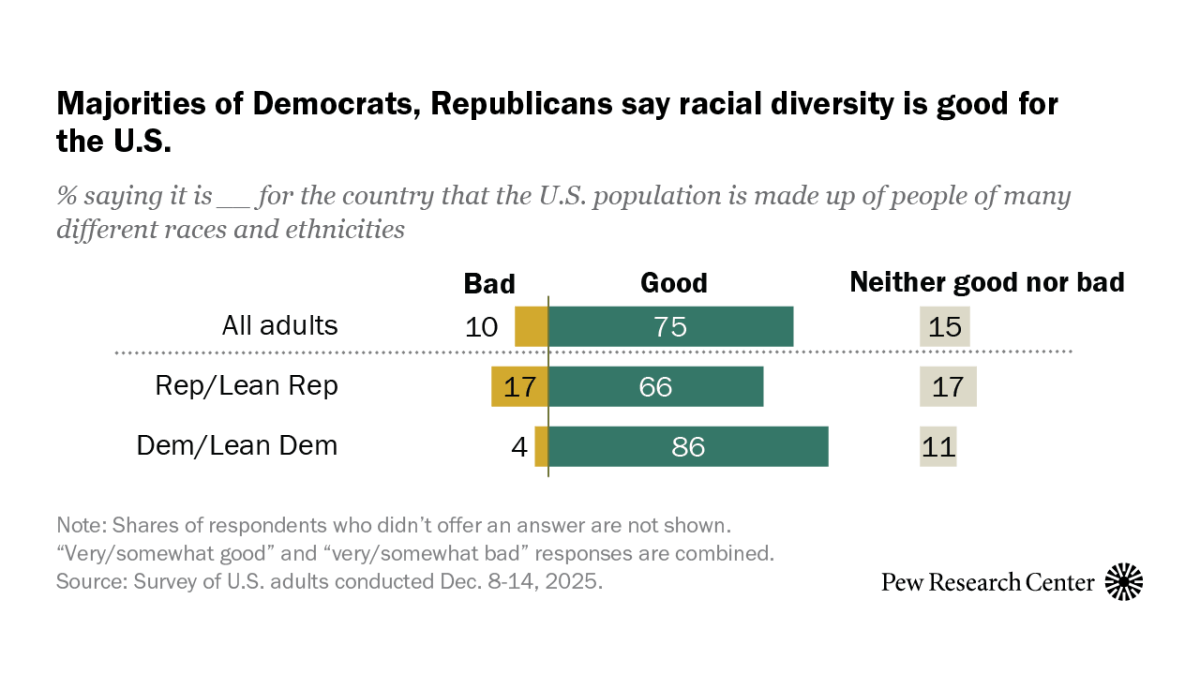

As the United States turns 250, the country’s population is more racially and ethnically diverse than in previous decades. Three-quarters of U.S. adults see racial and ethnic diversity as a good thing for the country. Read more about Americans’ views on diversity here: https://t.co/ngrBuEWe5O

At GTC, @NVIDIAAIDev announced general availability for NuRec, their library for gaussian splatting (uses 3DGUT) for Physical AI. It's now available through @NVIDIAAI NGC Catalog Article: https://t.co/5zK7VAhBkx Access: https://t.co/66PNiUMOb8 https://t.co/LaQQeo0ABf

🔊 Introducing Voxtral TTS: our new frontier open-weight model for natural, expressive, and ultra-fast text-to-speech
- 🎭 Realistic, emotionally expressive speech.
- 🌍 Supports 9 languages and accurately captures diverse dialects.
- ⚡ Very low latency for time-to-first-audio.
- 🔄 Easily adaptable to new voices

BREAKING! Introducing Plus One: A hosted @openclaw that lives in your Slack and comes pre-loaded with @every's best tools, skills, and workflows. Set it up in one click, and use your ChatGPT subscription (or any other API key).

Bring your Plus One to work: https://t.co/7Lo2xHM1B4

Connected to the @every ecosystem

Plus Ones automatically use @every's agent-native apps, no setup required:
- @CoraComputer for searching, sending, and managing email
- @TrySpiral for great writing in your voice
- Proof (https://t.co/NTVY3NgAKy) for agent-native document editing

Custom skills and workflows we use and love

Plus Ones come pre-loaded with skills and workflows we use ourselves at @every: some we've made, and some we think are great.
- Content digest: summarizes the publications you read, starting with @every
- Daily brief: your day's schedule and to-dos sent to you each morning
- Animate: turn any static screenshot into an animation with @Remotion
- Frontend: Anthropic's front-end skill (which we use all the time!)

We also make it fast to connect Google, Notion, Github, and more to your Plus One. Our goal is to give you a capable AI coworker right away, not a vanilla OpenClaw that you have to teach from scratch.

Why we built Plus One

@OpenClaw has changed the way we work at Every. We effectively have a parallel org chart of AI coworkers, each with a name, a manager, and real responsibilities. Because of them our workflows are completely different (our company is different) and we would never go back.

But getting here has been hard. Claws require a significant amount of manual setup and a dedicated machine, like a Mac Mini, running 24/7 to stay responsive. We have learned that the hard part of Claws is the infrastructure around them: the hosting, the integrations, the skills, and the ongoing care. We’ve made them work great for our team, and we want to share everything we’ve learned with you.

We're letting in 20 people a week to start, and scaling invites quickly from there. @Every subscribers get priority.

Bring your Plus One to work: https://t.co/3GRscNf15z

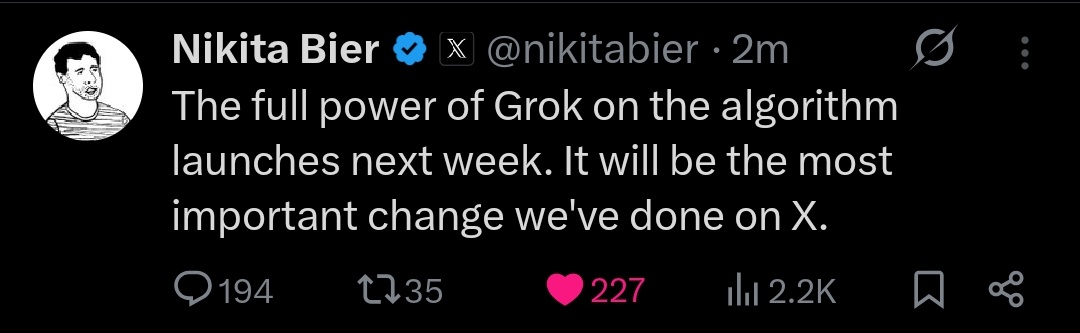

Grok will now power every ranking decision on X. It will "read" posts, watch videos, and score them in real-time based on what you will love. Runs on 20K+ GPUs to analyze hundreds of millions of posts daily in real-time + OPEN SOURCE algorithm. X is basically becoming the most transparent + smartest social app.

We will make the new 𝕏 algorithm, including all code used to determine what organic and advertising posts are recommended to users, open source in 7 days. This will be repeated every 4 weeks, with comprehensive developer notes, to help you understand what changed.

Gemini 3.1 Flash Live, our highest-quality audio and voice model, is launching today! This is how it advances our real-time dialogue capabilities:
- Faster: 3.1 Flash Live powers faster responses than the previous model, which is great for when you need a timely answer (Ex: “Can you help me change this tire in under 5 minutes?!?”)
- Longer: In Gemini Live, the model’s context window is now twice as long as before, so it can keep up with all of the details shared in your conversations (Ex: “I'm back to writing my future bestselling crime novel. Remind me, who is the secret double agent?”)
- Global: 200+ more regions will be able to have real-time, multimodal conversations in their preferred language

Listen up 🔊 Gemini 3.1 Flash Live is launching today, making a big difference for developers who are building real-time voice and vision agents. How, you ask? Well, this model delivers:
- Responses that feel as fast as natural dialogue
- Better task completion in noisy environments
- Improvements in complex-instruction following

Start building with 3.1 Flash Live anywhere it’s available:
- Gemini Live in @GeminiApp + Search Live
- Gemini Live API + @GoogleAIStudio in preview
- Gemini Enterprise for Customer Experience

And learn more about the model in our blog ➡️ https://t.co/RmIDqJ4rP6

Vibe code at the speed of thought with Gemini 3.1 Flash Live. Here’s an example to get you started. Using the model in @GoogleAIStudio, you can build apps as you talk out loud with a pace that keeps up with your brainstorms. Start creating your own app with voice control today, or remix ours: https://t.co/TYubZborBH