Your curated collection of saved posts and media

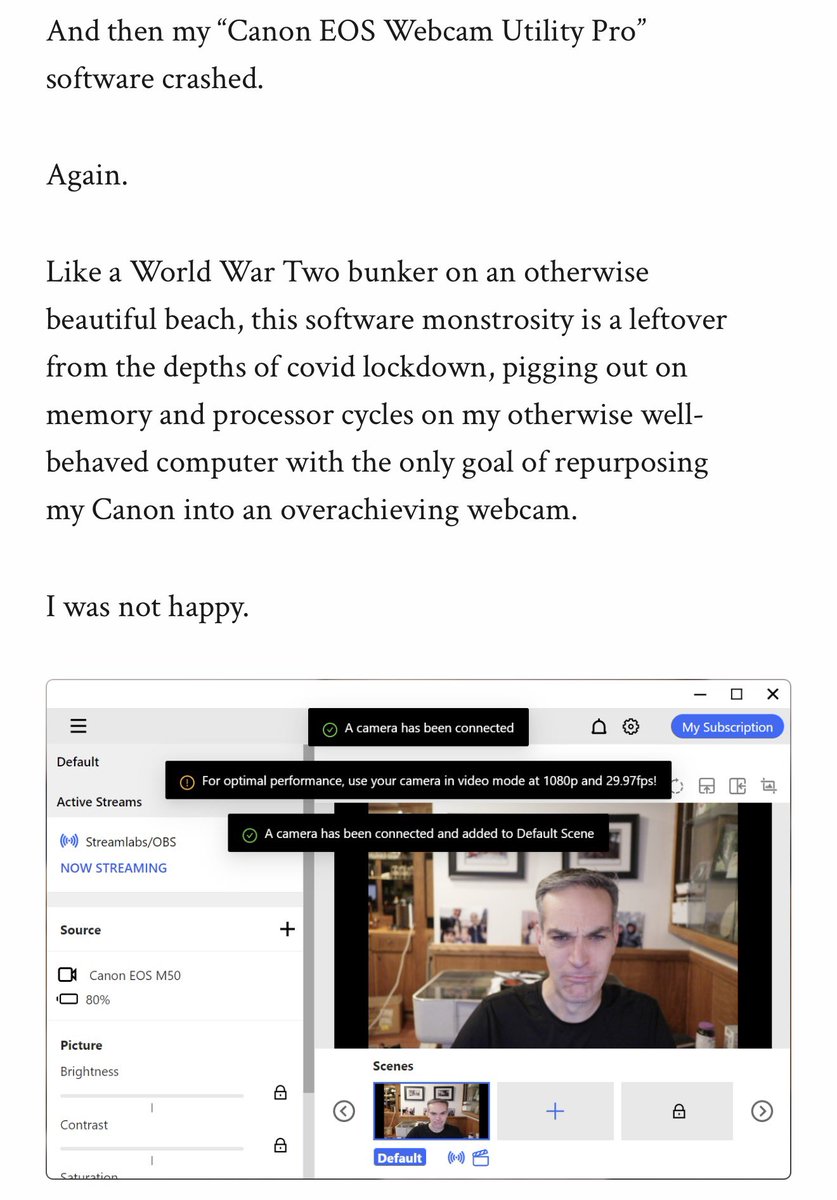

Great little story from @danshapiro about how he asked a coding agent to fix the official webcam software from Canon that kept crashing. He woke up to a new, fully functional Rust webcam app that has worked ever since. https://t.co/uazUapOQb0 https://t.co/3BVb2PU1uN

VERY cool first open release of Chroma on @huggingface, along with a tech report and all the details on training an agentic search agent. Find it here: https://t.co/Ef8vdBZGgy

Introducing Chroma Context-1, a 20B parameter search agent.
- pushes the pareto frontier of agentic search
- order of magnitude faster
- order of magnitude cheaper
- Apache 2.0, open-source

https://t.co/bhAkULyBBn

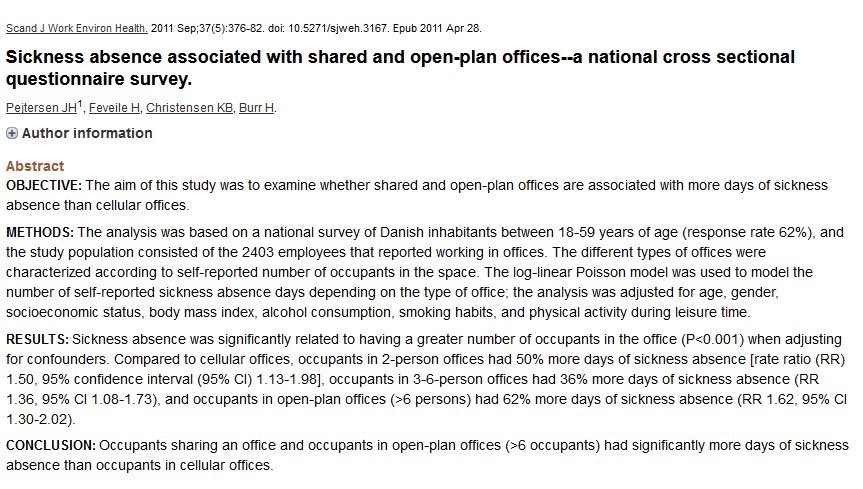

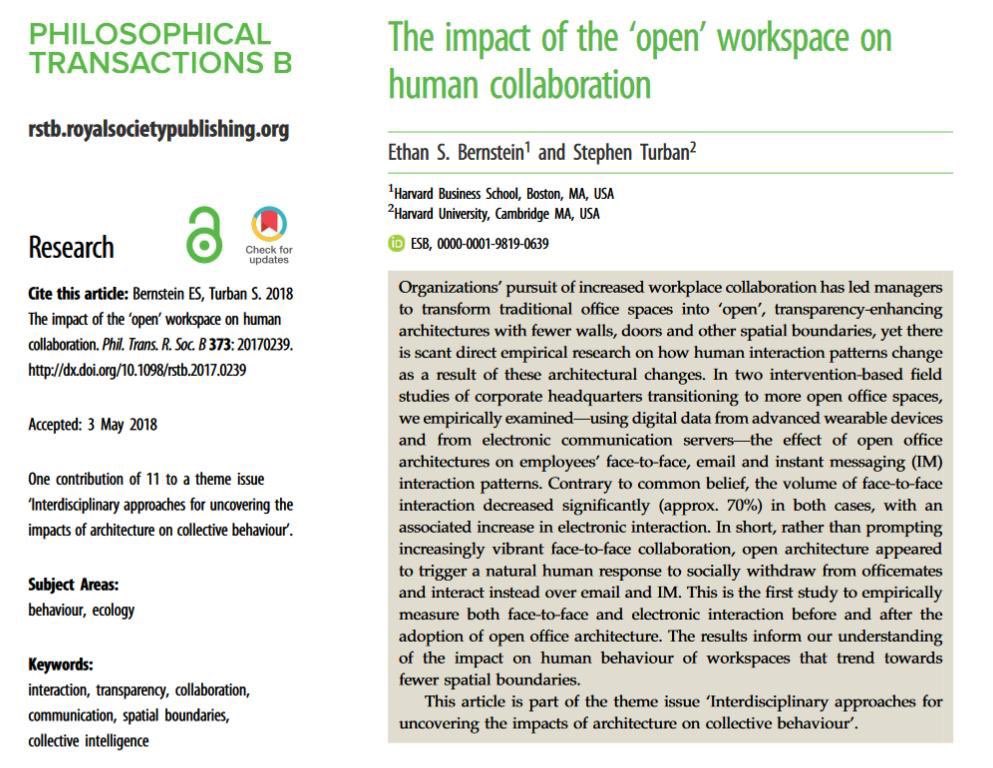

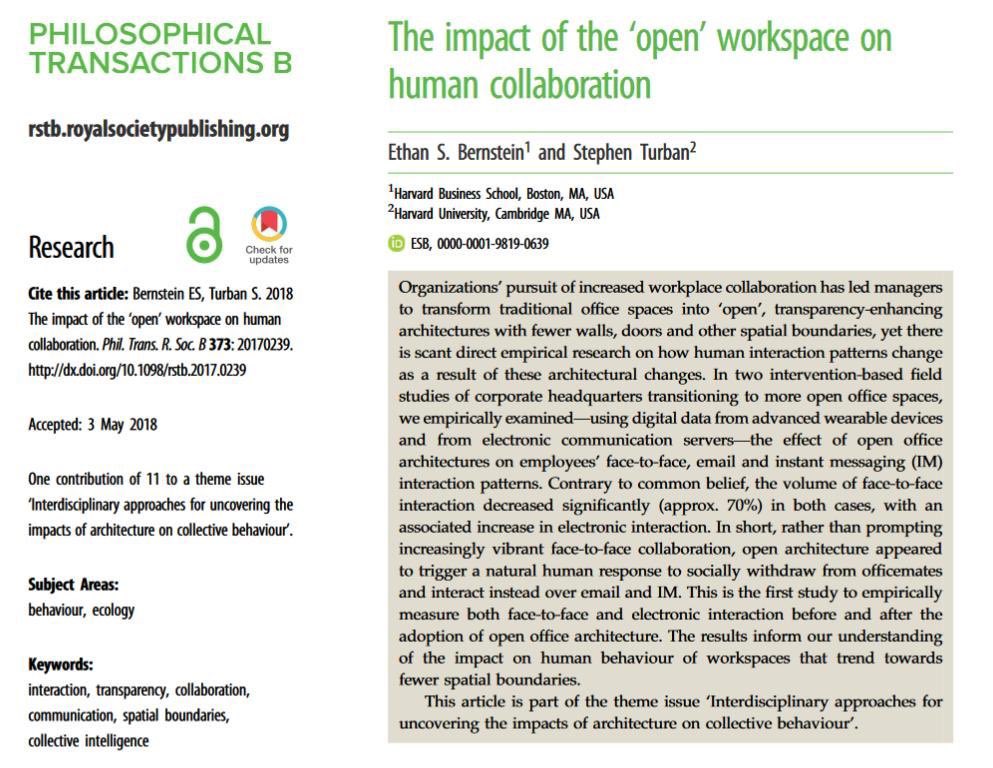

Sick days are 62% higher for open floor plans than individual offices. Even group offices are better: six-person rooms have 30% fewer sick days than open plans. https://t.co/uYqP3kQEtW

We raised $6.5m to build humanity’s platform for uploaded consciousness. Sentience creates one unique model for every person, a digital twin of your mind, to remember everything, recall what matters, and operate as you.

I started this company because I am worried. For the first time, we have intelligence that will fully replace humans across a range of domains within a few years. For those outside of the tech echo chamber, this is a period fraught with confusion and uncertainty about what actually matters anymore. I’ve had numerous conversations with people who aren’t sure if they have any value as a unique human being, or whether their own thinking matters. While Silicon Valley goes full speed ahead towards AGI, many people are left wondering what place they have in this AI future.

If I can communicate one thing to everyone out there with these doubts, it would be this: your unique knowledge, memories, and who you are still matter. In fact, these things matter now more than ever.

The problem is that the course of AI is heading towards a world where all of us will outsource our thinking to the same one-size-fits-all AI models. This is not a hypothetical future. If 100,000 people ask a question to ChatGPT, every single person gets the same answer. You can see the cost of uniform AI models across writing, social media, and even academic papers. This uniformity is more than annoying – it’s dangerous. There’s a genuine chance that we lose the texture and vibrancy that makes us unique as a species.

I founded The Sentience Company to arm real humans against this dystopian outcome.

First, your Sentience lets you collect everything that holds context from your life, learning from what you do across every platform – starting on desktop and mobile. Never forget a detail again.

Second, your Sentience becomes the best recall engine for everything in your life. Never copy/paste context or search across 50 chrome tabs again.

Finally, your Sentience becomes the full simulation of you – an AI model that thinks and acts like you, to scale and share your unique ideas and interact with others. Your Sentience emulates more than your context. It understands your values, emotions, drive, and goals.

We’re creating a world where you can leverage your own Sentience model alongside the models of your colleagues and friends to jam on ideas and access their knowledge 24/7. We’re not building Sentience to scale AGI and replace more human thinking. We’re building Sentience to scale you.

We’re proud that many amazing humans are supporting our mission. Our round was led by @kevinzhang (@BainCapVC), with participation from @ditzikow, @adityaag, @evantana, @AgrawalArian, @gopalkraman, @JPBrebner (@southpkcommons), @rex_woodbury, @tmrohan, @soleio, @anniecase1, and many more.

Introducing Stamp: The AI Secretary that thinks, writes, and works like you. https://t.co/I2WJxsxX4c

PyTorch 2.11 features improvements for distributed training and hardware-specific operator support. Join Andrey Talman and Nikita Shulga on Tuesday, March 31st at 10 am for a live update and Q&A.

Topics:
- Differentiable Collectives for Distributed Training
- FlexAttention: Now includes a FlashAttention-4 backend on Hopper and Blackwell GPUs
- MPS (Apple Silicon): Comprehensive operator expansion
- RNN/LSTM GPU Export Support
- XPU Graph

Register: https://t.co/eJUPu4m4K5 #PyTorch #OpenSource #AIInfrastructure

ai avatars are boring. We built characters that argue, react, and sometimes go completely off-script. Powered by @odysseyml, live at https://t.co/HrVojqaxbZ https://t.co/vxSAkZ60id

CVPR 2026🎥We built a model that lets you fly through a generated video world. Not just generating frames — but maintaining a consistent 3D world under complex camera motion. Code, ckpt, and even the data pipeline are all open-sourced ↓ #AI #worldmodel #videogen #cvpr #drone https://t.co/AjLXPPM359

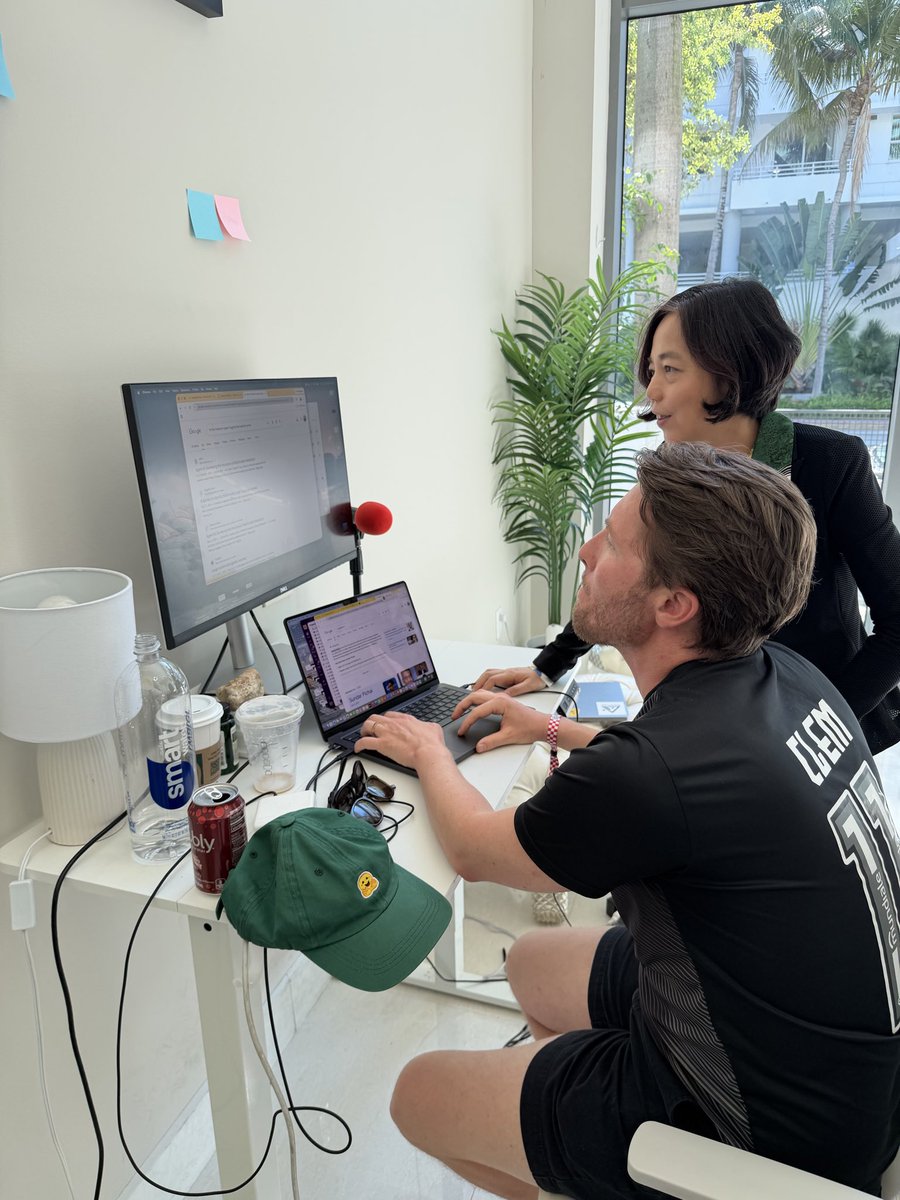

I got no other than the Godmother of AI @drfeifei as a coworker at the @huggingface Miami office today! So inspiring! https://t.co/mdn3xxQcsy https://t.co/AF740DX9zN

The new GitHub repository dashboard is now generally available. You can now find, specify, and save custom views of your projects in seconds. 🔍 Filter by language or org 🔍 Search via the command palette 🔍 Bookmark your top queries Get organized. ⬇️ https://t.co/8K4U4M54ky https://t.co/vNS13R0zap

When Elon mentioned visiting Saturn, it sounded like something from a sci-fi movie. But he is serious: "Now, wouldn’t it be amazing if you could buy a trip to Saturn? Or frankly, if you just have a trip to Saturn. I think things will just be free in the future. It sounds nuts, but you know, if you’ve got an AI robotics economy that is anywhere close to a million times the size of the current Earth economy, literally any need you possibly want can be met. If you can think of it, you can have it."

Our latest LiteParse release gives your AI agent access to text bounding boxes within any PDF 📐 LiteParse is our fast/free open-source document parser that can extract text from any document. Besides the extracted text, we also expose bounding boxes for every text block. This means that if you're building an AI agent over PDFs, it can now trace back to the precise line in the document and highlight it to the user, creating an audit trail for any decision made over this unstructured data. We've created a new guide that shows you how to get bounding boxes (and use them to highlight text on the page, similar to the example below). Come check it out! https://t.co/YJFRFPyWxJ LiteParse: https://t.co/JNER0mVcB8
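The audit-trail idea in this post can be sketched in a few lines. This is a minimal illustration and not LiteParse's API: the `TextBlock` record, its field names, and `find_citation_boxes` are hypothetical, and the text and coordinates are made up. It assumes each text block arrives with a page number and an (x0, y0, x1, y1) box, and returns the boxes of the blocks that contain a quoted snippet, which a viewer could then highlight.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TextBlock:
    # Hypothetical record; real parser output may use different field names.
    page: int
    text: str
    box: tuple[float, float, float, float]  # (x0, y0, x1, y1) in page units


def normalize(s: str) -> str:
    # Collapse whitespace and case so line wraps do not break matching.
    return " ".join(s.split()).lower()


def find_citation_boxes(blocks: list[TextBlock], quote: str):
    """Return (page, box) for every block whose text contains the quote."""
    needle = normalize(quote)
    return [(b.page, b.box) for b in blocks if needle in normalize(b.text)]


# Made-up blocks standing in for parser output.
blocks = [
    TextBlock(1, "Total revenue was $4.2M in Q3.", (72.0, 100.0, 300.0, 114.0)),
    TextBlock(1, "Operating costs fell by 8%.", (72.0, 120.0, 280.0, 134.0)),
    TextBlock(2, "Revenue guidance is unchanged.", (72.0, 90.0, 310.0, 104.0)),
]

hits = find_citation_boxes(blocks, "operating costs  fell")
print(hits)
```

Substring matching on normalized text is the simplest possible lookup; a quote that spans two blocks would need the blocks joined before matching.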

Bounding boxes are key for citations, and we just shipped a new guide showing how to use LiteParse for visual citations! LiteParse is our fast and open-source document parser. Using both bounding box extraction and page screenshots, anyone (including agents) can learn how to ass

@imtiaznabi_ I do: https://t.co/DkQIlgrc5h

Anthropic CEO: “50% of all entry-level Lawyers, Consultants, and Finance Professionals will be completely wiped out within the next 1–5 years.” Grad students and junior hires are cooked. https://t.co/LGpi7kUl1y

You can now enable Claude to use your computer to complete tasks. It opens your apps, navigates your browser, fills in spreadsheets—anything you'd do sitting at your desk. Research preview in Claude Cowork and Claude Code, macOS only. https://t.co/sVymgmtEMI

@dexhorthy @trychroma https://t.co/DMmtHdqmjF

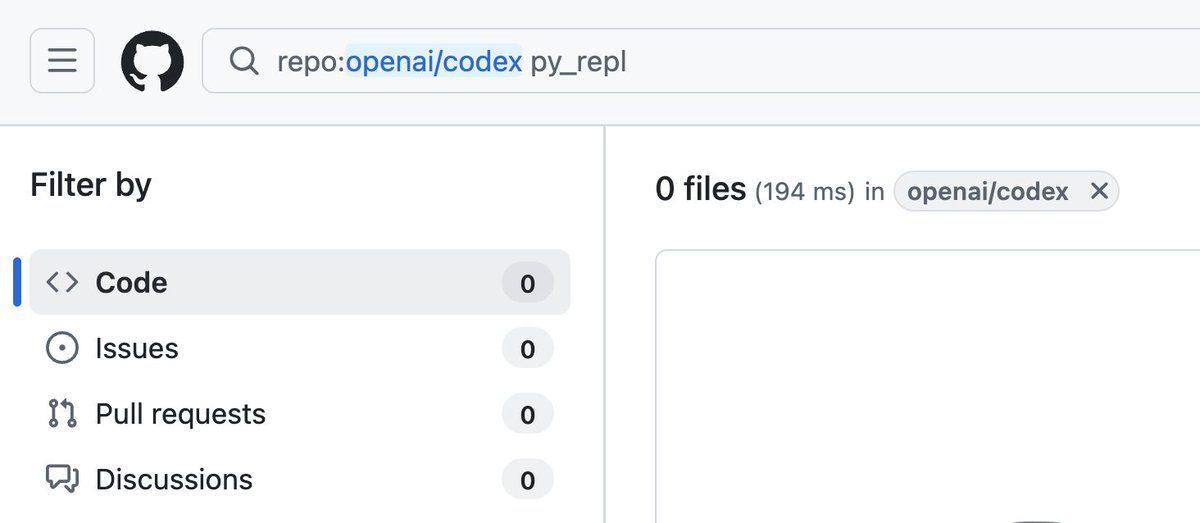

@_xjdr I don't see it in the app or the CLI, and can't find it in the CLI source. Is it coming from a skill or something? https://t.co/JATXyTy2Hn

Weirdly, open offices also kill socialization! Face-to-face communication among office workers actually drops by 70%(!!) in open plan offices, since people hide away from others. https://t.co/t9Xu05PRHI

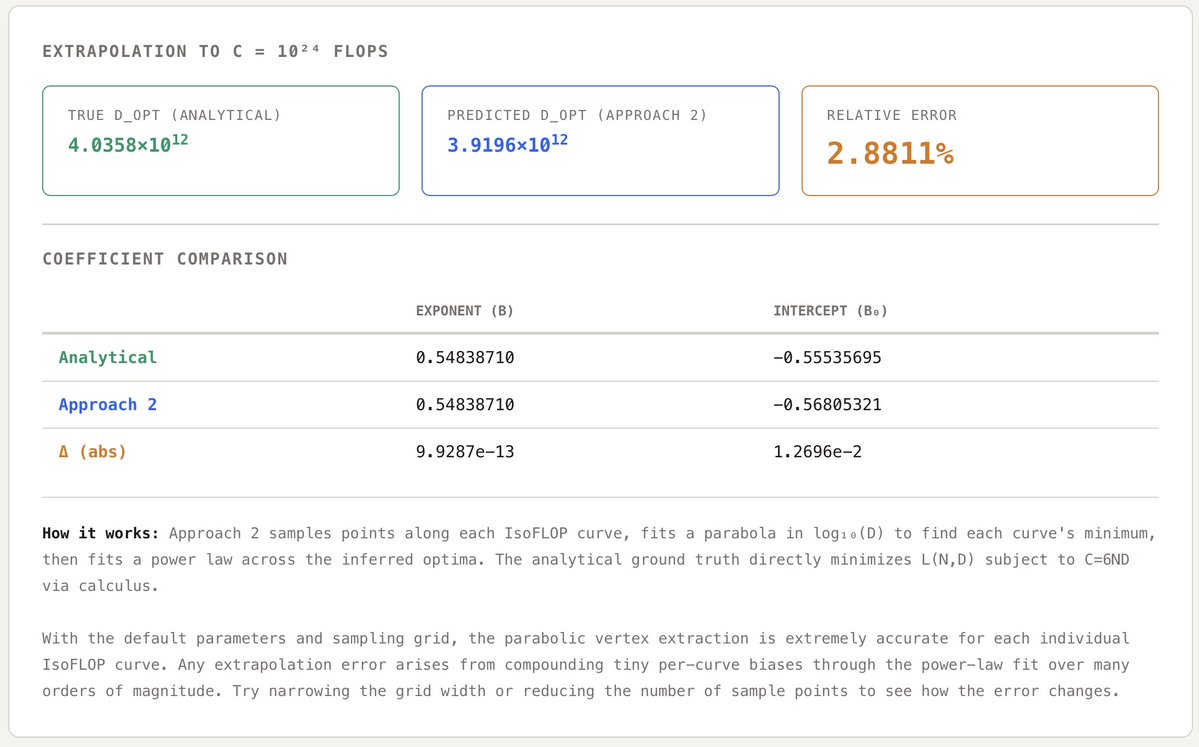

Scaling laws are "just" regressions. But a biased fitting method can quietly misallocate millions of $ of compute at frontier scales. My coworker Eric Czech dug into a bias in parabolic IsoFLOP fits used by Meta, DeepSeek, Microsoft, Waymo, et al. for their scaling laws🧵 https://t.co/JlQO3tEECc
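For readers unfamiliar with the method, a parabolic IsoFLOP fit works roughly like this: at a fixed compute budget, losses are measured for several model sizes, a parabola is fitted to loss versus log model size, and its vertex is read off as the compute-optimal size. The sketch below shows only that basic fit, not the bias analysis from the thread. All names are illustrative and the losses are synthetic, generated from a known parabola.

```python
import math


def fit_parabola(xs, ys):
    """Least-squares fit of y = a*x^2 + b*x + c via the 3x3 normal equations."""
    # Power sums S[k] = sum(x^k) and moment sums T[k] = sum(y * x^k).
    S = [sum(x ** k for x in xs) for k in range(5)]
    T = [sum(y * x ** k for x, y in zip(xs, ys)) for k in range(3)]
    # Augmented matrix for the unknowns (a, b, c).
    m = [
        [S[4], S[3], S[2], T[2]],
        [S[3], S[2], S[1], T[1]],
        [S[2], S[1], S[0], T[0]],
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(3):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [u - f * v for u, v in zip(m[r], m[col])]
    a, b, c = (m[i][3] / m[i][i] for i in range(3))
    return a, b, c


def isoflop_optimum(model_sizes, losses):
    """Vertex of the parabola fitted to loss vs log10(model size)."""
    xs = [math.log10(n) for n in model_sizes]
    mean = sum(xs) / len(xs)
    # Center x to keep the normal equations well conditioned.
    a, b, _ = fit_parabola([x - mean for x in xs], losses)
    if a <= 0:
        raise ValueError("fitted parabola has no minimum")
    return 10 ** (mean - b / (2 * a))


sizes = [1e8, 2e8, 4e8, 8e8, 1.6e9]
# Synthetic losses from a known parabola with its minimum at log10(N) = 8.6.
losses = [0.05 * (math.log10(n) - 8.6) ** 2 + 2.3 for n in sizes]
n_opt = isoflop_optimum(sizes, losses)
print(f"estimated compute-optimal size: {n_opt:.3e} parameters")
```

Because the synthetic losses are exactly parabolic, the fit recovers the planted minimum; real loss curves are only approximately parabolic in log model size, so the vertex is an estimate.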

Meet Suno v5.5: More expressive, more you. Use your voice, your sound, and your taste to make music that's unmistakably yours, in the best and most personal Suno experience yet. https://t.co/fKC7VAFLok

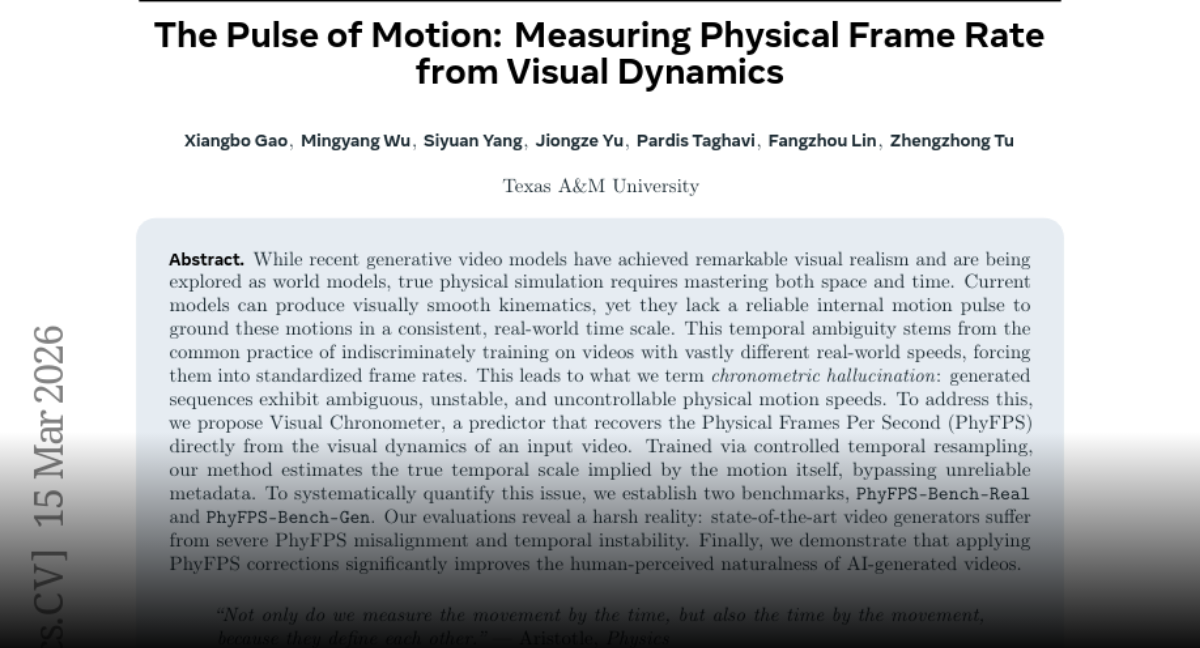

The Pulse of Motion: Measuring Physical Frame Rate from Visual Dynamics. Paper: https://t.co/oQ3KAPx225 https://t.co/Zg86MJt0qW

It's over. Been using Tweetdeck (aka X Pro) to follow several of @Scobleizer's lists, but I guess this is the end. https://t.co/qNPNFtBxED

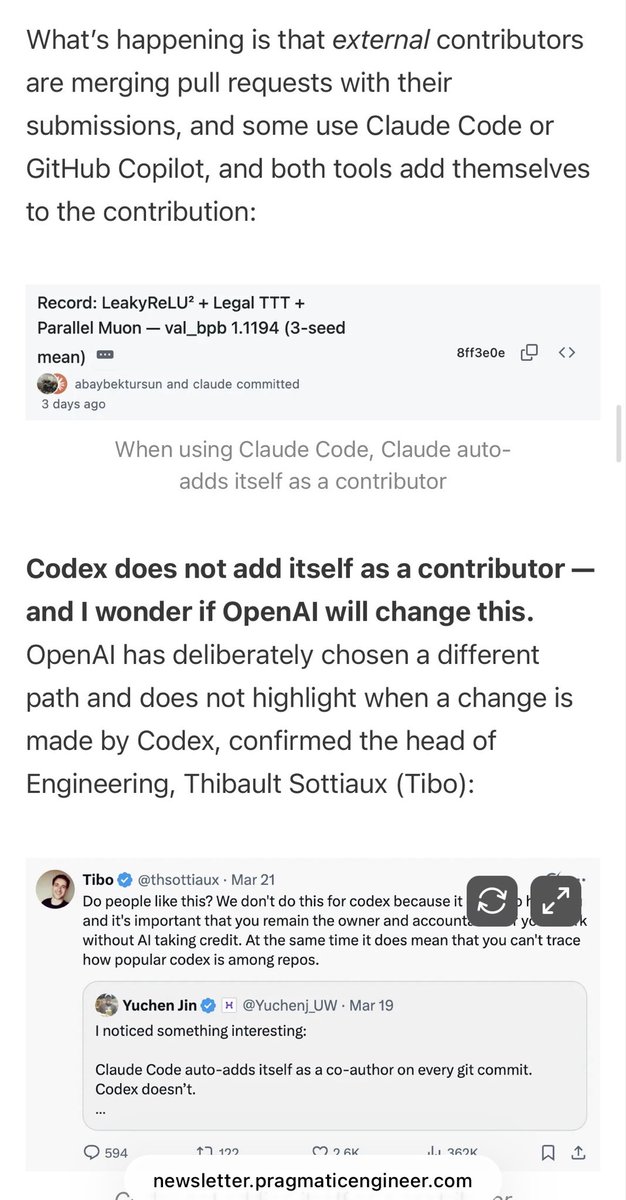

I explained in today’s @Pragmatic_Eng newsletter: That’s a repo where OpenAI is merging external contributions. Some are made by Claude Code, some with GitHub Copilot, some with Codex. Codex doesn’t add itself as a contributor - on purpose - that’s why. https://t.co/11aiShxeAb https://t.co/z68AZ3sfvW

NCCL watchdog timeouts are some of the most commonly misunderstood and difficult-to-debug errors in AI training. In the past, PyTorch released Flight Recorder to make debugging these errors easier, but interpreting its outputs has been tricky. Based on an analysis of countless NCCL watchdog timeouts at Meta, the PyTorch team at Meta has put together a guide on how to effectively use Flight Recorder to debug NCCL watchdog timeouts, and to better understand why they occur (spoiler: it's usually not because of network or NCCL issues). If you've ever spent hours debugging these errors and are looking for a better solution, this blog post is for you: https://t.co/HIBdkYgpv8 ✍ Yifei Liu, Uttam Thakore, Ph.D., Junjie W., Yongzhong Yang #PyTorch #OpenSourceAI #NCCL #FlightRecorder

so stoked to have @hrishioa speaking at @aiDotEngineer singapore! hrishi is the ceo of southbridge ai - data agents that work unsupervised across difficult, messy data across healthcare, finance, scientific research. previously he was cto at greywing (@ycombinator w21). he co-authored "make smart contracts smarter" which was adopted by the @ethereum foundation. @swyx @ivanleomk @agrimsingh @unprofeshme @aimuggle

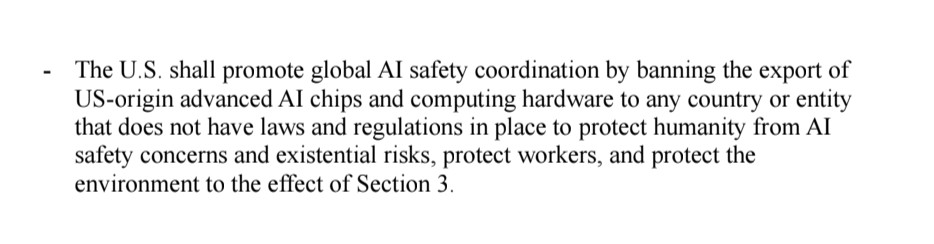

@ESYudkowsky @slatestarcodex It does include export controls. Specifically: https://t.co/iFLwBnhg5Y

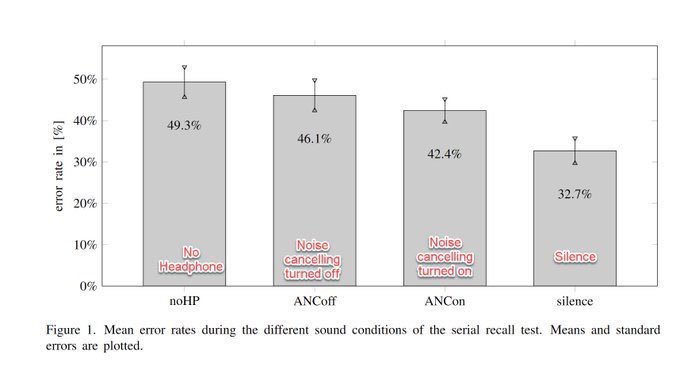

Noise cancelling headphones are apparently a futile solution to office noise… they mostly delude us into thinking they help. This study finds we feel they improve our ability to concentrate, but found no significant improvements in actual concentration. https://t.co/KrX8JIQxuO https://t.co/EoUNyVn9cr

OpenClaw 2026.3.24 🦞 🔌 Improved OpenAI API: talk to sub-agents with @openwebui 🎛️ Skill & tool management Control UI 🎨 Slack interactive reply buttons 💅 Native Microsoft Teams 🧵 Smart Discord auto-thread naming Any client. Any model. One runtime. https://t.co/LhRgNElE0b

The background noise in open offices, in multiple experiments, decreases the ability to do analytical work, increases bugs in software, and decreases the ability to find bugs. Open floor plans are just a bad idea for any solo work. https://t.co/Y2KX5HjIEd

Tech companies pay millions of dollars for their employees and then stick them in open-plan offices that make it nearly impossible to get work done. Best strategy for poaching employees is probably to just offer them an office with a door.

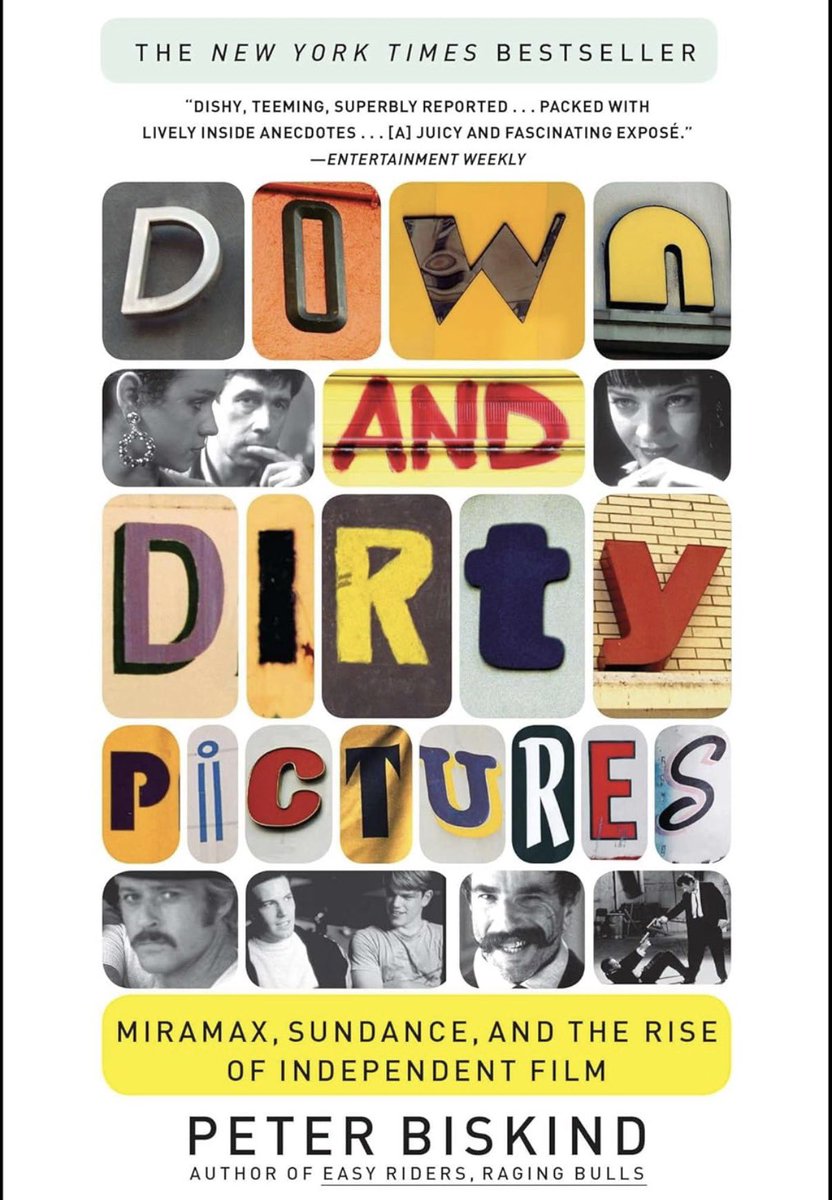

What I’m reading next: https://t.co/HxkByA4ry0

Thrilled to announce Claude Code auto-fix – in the cloud. Web/Mobile sessions can now automatically follow PRs - fixing CI failures and addressing comments so that your PR is always green. This happens remotely so you can fully walk away and come back to a ready-to-go PR. https://t.co/F41RTeymXJ

🚀 The @GoogleDeepMind team just added Gemini 3.1 to the Live API, so we built a small demo showing how Gemini voice agents can plug directly into the document processing ecosystem powered by LlamaIndex.

🔥 In this example, we integrate LiteParse to enable fast, fully-local document parsing. With our TUI-based voice assistant, you can literally talk to your terminal:
- Speak commands
- Trigger live document parsing via tool calls
- Hear the agent read back results in real time 🔊

The assistant can extract content from single files or entire folders, leveraging the lightning-fast local parsing that LiteParse provides ⚡

Take a look at the demo👇
👩💻 GitHub repo https://t.co/ySmenP2HoY
📚 LiteParse docs https://t.co/NlpoI4CqEq